Submitted:

10 February 2026

Posted:

12 February 2026


Abstract

Keywords:

1. Introduction

1.1. Context and Motivation

1.2. Problem Statement

1.3. Gap in Existing Work

- a shared taxonomy that separates measurement from control from state semantics,

- a consistent way to reason about where code executes (hook placement) and what state it can safely maintain,

- a feasibility analysis that treats verifier constraints, synchronization restrictions, and portability as primary design constraints rather than caveats, and

- a structured mapping from workload patterns (skew, scans, phase shifts, and strict tail latency budgets) to the appropriate integration mode.

1.4. Approach and Contributions Overview

1.5. Paper Organization

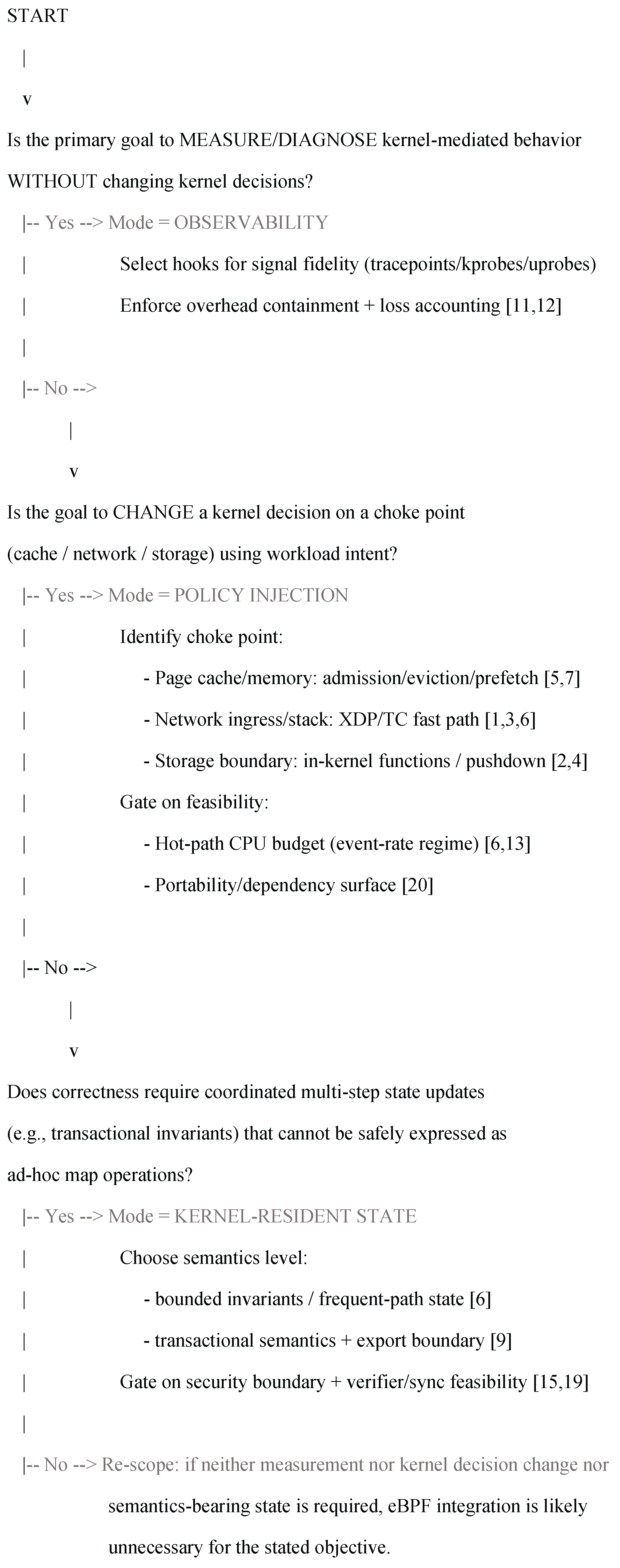

- A three-mode taxonomy of database–kernel integration via eBPF—observability, policy injection, and kernel-resident state—that separates intent and prevents category errors in system comparison.

- A feasibility characterization for each mode, based on hook placement, state model, and verifier/synchronization/portability constraints that bound what can be built and what costs dominate.

- A structured analysis of representative systems (programmable page-cache policies, XDP ingress offload, and kernel-resident transactional state with WAL export) under a single framework, enabling principled comparison across previously siloed lines of work.

- A decision framework that maps workload patterns and performance objectives to the appropriate integration mode, clarifying when to measure, when to specialize kernel policy, and when kernel-resident state is justified for correctness and tail-latency control.

2. Related Work

2.1. eBPF-Based Observability for Storage and Database-Adjacent Diagnosis

2.2. Policy Injection in Kernel Choke Points: Page Cache Specialization

2.3. Policy Injection and Fast Paths: Networking, Protocols, and RPC Handling

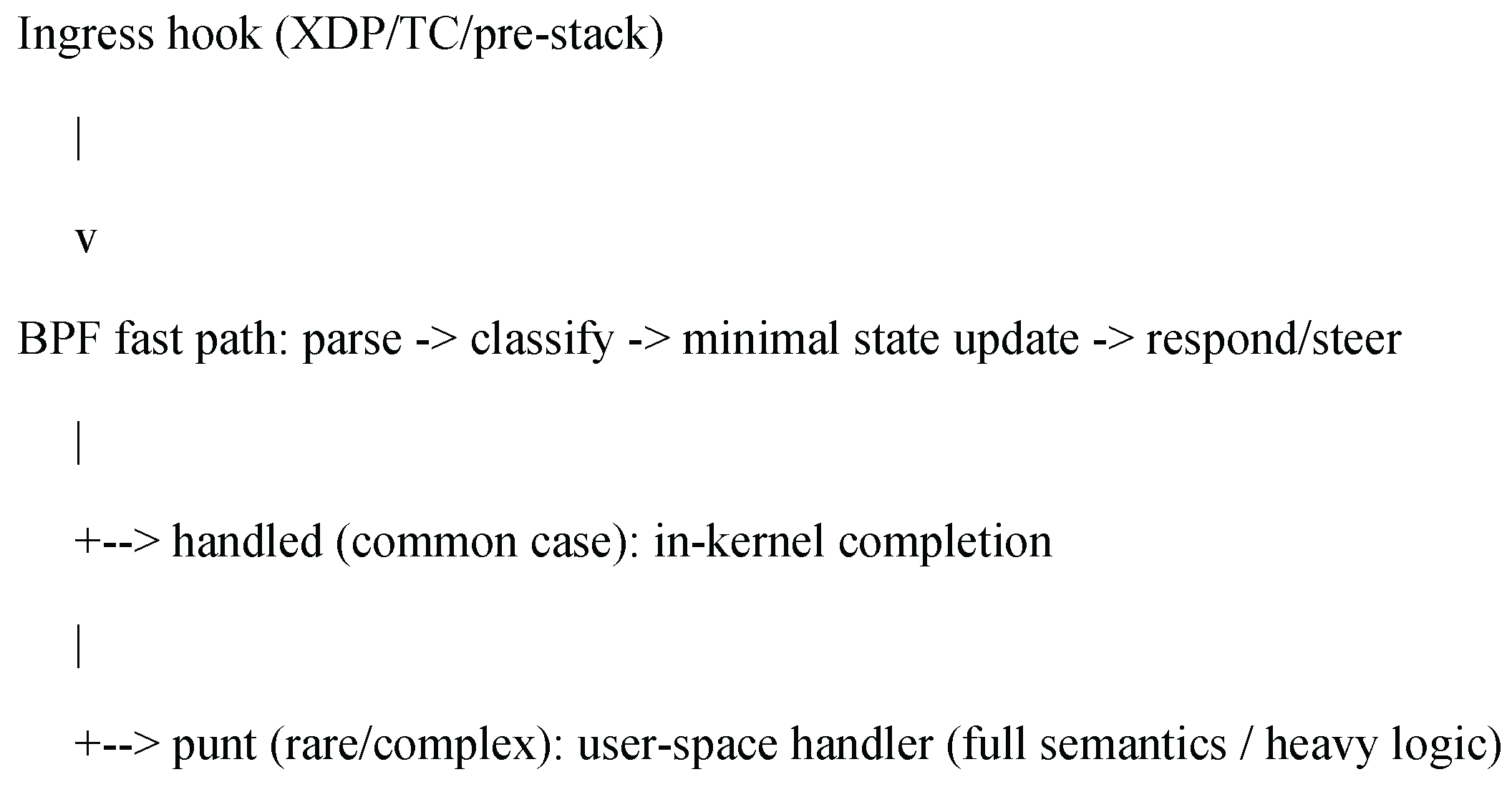

- Frequent-path handling is performed in the kernel, while complexity and exceptions are intentionally punted to user space [6].

- eNetSTL emphasizes that adoption depends on reusable building blocks, not only feasibility proofs [8].

- Fast-path programs typically handle a constrained subset of operations; the quality of the frequent/rare split determines both performance and correctness risk [6].

- As fast paths require richer invariants (multi-object updates, transactional constraints), ad-hoc state in maps becomes fragile and motivates stronger state semantics (Section 2.5) [6].

- Cost attribution depends on hook placement and runtime behavior; without careful decomposition, “eBPF acceleration” results can be difficult to compare across systems [13].
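
The frequent/rare split described in the bullets above can be sketched as a user-space simulation in plain Python (this is not eBPF code). The names `fast_path`, `slow_path`, `handle`, and `PUNT`, and the choice of cache-hit GETs as the only fast-path operation, are hypothetical and chosen purely for illustration; they are not taken from DINT [6] or any other cited system.

```python
from typing import Optional

# Hypothetical kernel-side state, modeled here as a plain dict.
cache: dict[str, str] = {"k1": "v1"}

PUNT = None  # sentinel: the fast path declines and defers to the slow path


def fast_path(op: str, key: str) -> Optional[str]:
    """Handle only the constrained, frequent subset (assumed: cache-hit GETs)."""
    if op == "GET" and key in cache:
        return cache[key]
    return PUNT  # everything else is intentionally punted


def slow_path(op: str, key: str, value: Optional[str] = None) -> str:
    """Full-featured handler standing in for user space."""
    if op == "GET":
        return cache.get(key, "MISS")
    if op == "SET" and value is not None:
        cache[key] = value
        return "STORED"
    return "ERROR"


def handle(op: str, key: str, value: Optional[str] = None) -> tuple[str, str]:
    """Return (path_taken, reply) so the frequent/rare ratio can be measured."""
    reply = fast_path(op, key)
    if reply is not PUNT:
        return ("fast", reply)
    return ("slow", slow_path(op, key, value))
```

Recording which path served each request makes the quality of the split observable: if the share of `"slow"` replies grows under a given workload, the fast path no longer covers the frequent case.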

2.4. Policy Injection for Storage: In-Kernel Storage Functions and Network-Attached Pushdown

2.5. Kernel-Resident State and Transactional Semantics for eBPF Programs

2.6. Foundations: Verification, Security, and Portability as Feasibility Constraints

2.7. Positioning and Novelty Relative to Prior Work

- separates observability, policy injection, and kernel-resident state as distinct intents with distinct feasibility boundaries;

- provides shared comparison axes (hook placement, state model, verifier/synchronization/portability constraints); and

- enables principled cross-system evaluation rather than siloed comparisons within a subcommunity.

3. Methodology: Framework Construction and Analysis Protocol

3.1. Scope and Assumptions

- Practical deployments must tolerate kernel upgrades and heterogeneity; interface instability and dependency mismatches are treated as feasibility constraints, not “engineering details” [20].

- The unit of analysis is a system claim: a paper’s stated objective (e.g., lower tail latency), chosen hook placement, state model, constraint mitigation, and reported evaluation evidence.

3.2. Definitions and Terminology

3.3. Three-Mode Taxonomy

- Objective (what the system is trying to achieve)

- Mechanism (how eBPF is used)

- Typical hook points (where the code runs)

- Allowed actions (observe-only vs. modify policy vs. maintain semantics-bearing state)

- Success criteria (what “works” means)

- Dominant risks (what breaks feasibility in practice)

| Mode | Objective | Typical hook points | Allowed actions | Dominant feasibility boundaries |

|---|---|---|---|---|

| Observability | Derive database-relevant signals about kernel-mediated behavior with minimal perturbation | Tracepoints/kprobes/fentry, uprobes/USDT, perf events, networking and block-layer tracepoints [10,11,12,13] | Read kernel/user-space state; emit events/metrics | Measurement overhead and perturbation [11], tracing-stack variance [12], hook availability and stability [20] |

| Policy Injection | Specialize kernel decisions at choke points using workload-specific intent | Page-cache events and memory/I/O policy points [5,7]; XDP/TC/cgroup networking hooks [1,3,6,8]; storage-path hooks/functions [2,4] | Modify admission/eviction/prefetch decisions; steer/handle packets; execute storage functions | Correctness under mixed workloads [5,7], hot-path CPU budget [6,7], portability/dependency surface [20] |

| Kernel-Resident State | Provide semantics-bearing state transitions for eBPF programs (up to transactional consistency) | Typically paired with fast-path hooks (e.g., XDP) or kernel events requiring coordinated updates [6,9] | Maintain shared state with defined semantics; optionally export logs/state | Verifier and synchronization constraints [15,19], state explosion, security boundary expansion [18,19] |

3.4. Hook Placement Model

| Hook family | Typical context | Event-rate regime | Representative DB-adjacent use | Primary constraints |

|---|---|---|---|---|

| Tracing hooks (tracepoints, kprobes/fentry) | process context or kernel internal contexts | low → very high (workload-dependent) | Storage/network/kernel-path diagnosis [10,11,12,13] | Perturbation and overhead containment [11], fidelity variance across tooling [12], interface instability [20] |

| User-space instrumentation (uprobes/USDT) | user process | low → high | Correlating DB internals with kernel events [10,12] | Sampling bias, overhead, symbol stability |

| XDP | early ingress path | very high | Packet-level fast paths for KV/transactions [6]; network accel analyses [13] | Tight CPU budgets; limited semantics; state access costs [6,13] |

| TC / cgroup networking hooks | networking stack | high | Protocol/request handling and traffic shaping [1,3,8] | Per-packet overhead; interaction with stack behavior |

| Page-cache / memory policy hooks | memory subsystem | high (cache events) | Admission/eviction/prefetch specialization [5,7] | Shared-resource interference; correctness and regressions [5,7] |

| Storage-path hooks / in-kernel storage functions | I/O path | medium → high | In-kernel storage functions and pushdown [2,4] | Heterogeneity; ordering/durability interactions [2,4] |

| Security hooks (LSM) | security decision points | medium | Guardrails and policy enforcement for extensions [18] | Privilege boundaries; policy correctness |

3.5. State Model and Semantics

| State level | Typical mode(s) | Example requirement | Typical risk |

|---|---|---|---|

| Stateless/counters | Observability | attribution of delays to kernel layers [10] | measurement perturbation [11] |

| Keyed state | Observability, Policy Injection | per-connection tracking; per-file hotness | state size and memory overhead |

| Coordinated updates | Policy Injection | eviction structures; fairness across flows [7] | contention; correctness under concurrency |

| Semantics-bearing | Policy Injection, Kernel-Resident State | protocol invariants in fast path [3,6] | verifier/sync limits; complexity |

| Transactional | Kernel-Resident State | atomic multi-key update with isolation [9] | feasibility under eBPF constraints; enlarged security boundary [19] |
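
The state ladder in the table above can be encoded as an ordered enumeration, which makes a system's "position on the ladder" comparable and checkable. The sketch below is a minimal illustration: the numeric ordering simply follows the table's row order (an assumption), and the names `StateLevel`, `TYPICAL_MODES`, and `is_typical` are hypothetical.

```python
from enum import IntEnum


class StateLevel(IntEnum):
    """State ladder; numeric order follows the table's row order (assumed)."""
    STATELESS_COUNTERS = 0
    KEYED = 1
    COORDINATED_UPDATES = 2
    SEMANTICS_BEARING = 3
    TRANSACTIONAL = 4


# Typical mode(s) per level, transcribed from the table above.
TYPICAL_MODES = {
    StateLevel.STATELESS_COUNTERS: {"Observability"},
    StateLevel.KEYED: {"Observability", "Policy Injection"},
    StateLevel.COORDINATED_UPDATES: {"Policy Injection"},
    StateLevel.SEMANTICS_BEARING: {"Policy Injection", "Kernel-Resident State"},
    StateLevel.TRANSACTIONAL: {"Kernel-Resident State"},
}


def is_typical(level: StateLevel, mode: str) -> bool:
    """True if the declared mode is a typical fit for the given state level."""
    return mode in TYPICAL_MODES[level]
```

A system that declares Policy Injection but needs `StateLevel.TRANSACTIONAL` fails this check, which is one simple way to flag a design whose state requirements have outgrown its declared mode.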

3.6. Feasibility Constraint Model

| Constraint | Manifestation | Typical failure mode | Mitigation strategies | Representative work |

|---|---|---|---|---|

| Verifier-bounded computation | bounded loops, restricted pointers, limited stack; verifier reasoning must accept program [14,15] | program rejected; forced simplification; unsafe workarounds | restructure into bounded phases; tail calls; push complex logic to user space; use verified toolchains where applicable [21] | [14,15,21] |

| Synchronization restrictions | limited locking in hot contexts; contention dominates at high event rates [6] | correctness bugs; throughput collapse under contention | per-CPU state; lock-free designs; shard state; minimize shared updates; frequent/rare splitting [6] | [6] |

| Portability / dependency-surface instability | reliance on kernel internals; CO-RE/tooling does not eliminate all mismatches [20] | breakage across kernel versions; silent mismeasurement | minimize internal dependencies; prefer stable hooks; defensive probing; explicit version gating; document kernel/CONFIG requirements | [20] |

| Security boundary and privilege model | loading privileges; attack surface of in-kernel logic; extension governance [16,18,19] | escalation risk; abuse of hooks/state; unsafe extension deployment | privilege restriction; LSM-based guardrails; capability gating; minimize in-kernel semantics; auditing and policy controls [18] | [16,18,19] |

| Resource ceilings | map memory, per-CPU overhead, export bandwidth; runtime overhead [11,12,13] | dropped events; memory pressure; instability under load | bounded sampling; backpressure-aware export; limit state cardinality; report overhead explicitly [11,12] | [11,12,13] |

3.7. Cost Model

- Base overhead of reaching the hook and running the attached programs.

- Instruction path length, branching, and helper/kfunc usage.

- Map lookups/updates, contention, and cache locality.
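
As a rough worked example, the three cost drivers above can be combined into a per-event budget. The sketch assumes a purely additive model (it ignores export/crossing costs and any nonlinear contention effects), and the nanosecond figures are hypothetical placeholders rather than measurements from any cited system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerEventCost:
    hook_ns: float     # reaching the hook and invoking attached programs
    program_ns: float  # instruction path, branching, helper/kfunc calls
    state_ns: float    # map lookups/updates, contention, cache misses

    def total_ns(self) -> float:
        """Additive per-event cost (a simplifying assumption)."""
        return self.hook_ns + self.program_ns + self.state_ns

    def core_fraction(self, events_per_sec: float) -> float:
        """Fraction of one core consumed at the given event rate."""
        return events_per_sec * self.total_ns() / 1e9


# Hypothetical numbers, for illustration only.
cost = PerEventCost(hook_ns=50.0, program_ns=120.0, state_ns=80.0)
```

With these placeholder values, `cost.total_ns()` is 250 ns per event, so two million events per second would consume half of one core under the additive assumption.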

3.8. Correctness, Safety, and Failure Modes

| Mode | Correctness criteria | Safety criteria | Security boundary |

|---|---|---|---|

| Observability | measurement fidelity; correct attribution; bounded perturbation; loss accounting [10,11,12,13] | overhead containment to avoid impacting unrelated processes [11] | who can attach probes; what data is exposed; leakage risk |

| Policy Injection | preserves kernel invariants; improves objective without regressions under mixed workloads; stable under load [1,3,5,6,7,8] | bounded work on hot paths; rollback/disable mechanism; interference awareness [5,7] | privilege to load/attach; risk of policy abuse; isolation between tenants [19] |

| Kernel-Resident State | semantics claims (atomicity/isolation) are defined and evidenced; concurrency correctness; failure handling and export boundary [6,9] | bounded state growth; controlled contention; predictable degradation | expanded attack surface via richer in-kernel services; governance and hardening [18,19] |

3.9. Analysis Rubric and System Profile Sheet

| Field | Description |

|---|---|

| System | Paper/system name and year |

| Claimed objective | Throughput, tail latency, resource isolation, diagnosis fidelity, etc. |

| Mode | Observability / Policy Injection / Kernel-Resident State (Section 3.3) |

| Typical hook points | Specific attachment points (Section 3.4) |

| Event-rate regime | Qualitative (low/medium/high/very high) |

| State model | Position on state ladder (Section 3.5) |

| Semantics claims | Invariants, correctness guarantees, transactional claims [9] |

| Constraints encountered | Verifier, synchronization, portability, resources (Section 3.6) |

| Mitigations | Concrete strategies used (Table 4) |

| Cost drivers | Hook/program/map/export/crossing decomposition (Section 3.7) |

| Evaluation evidence | Workloads, baselines, metrics, variance reporting |

| Portability posture | Kernel/version assumptions; dependency surface notes [20] |

| Security boundary | Privileges required; potential abuse surface [18,19] |

| Reported limitations | Stated coverage gaps, failure modes |

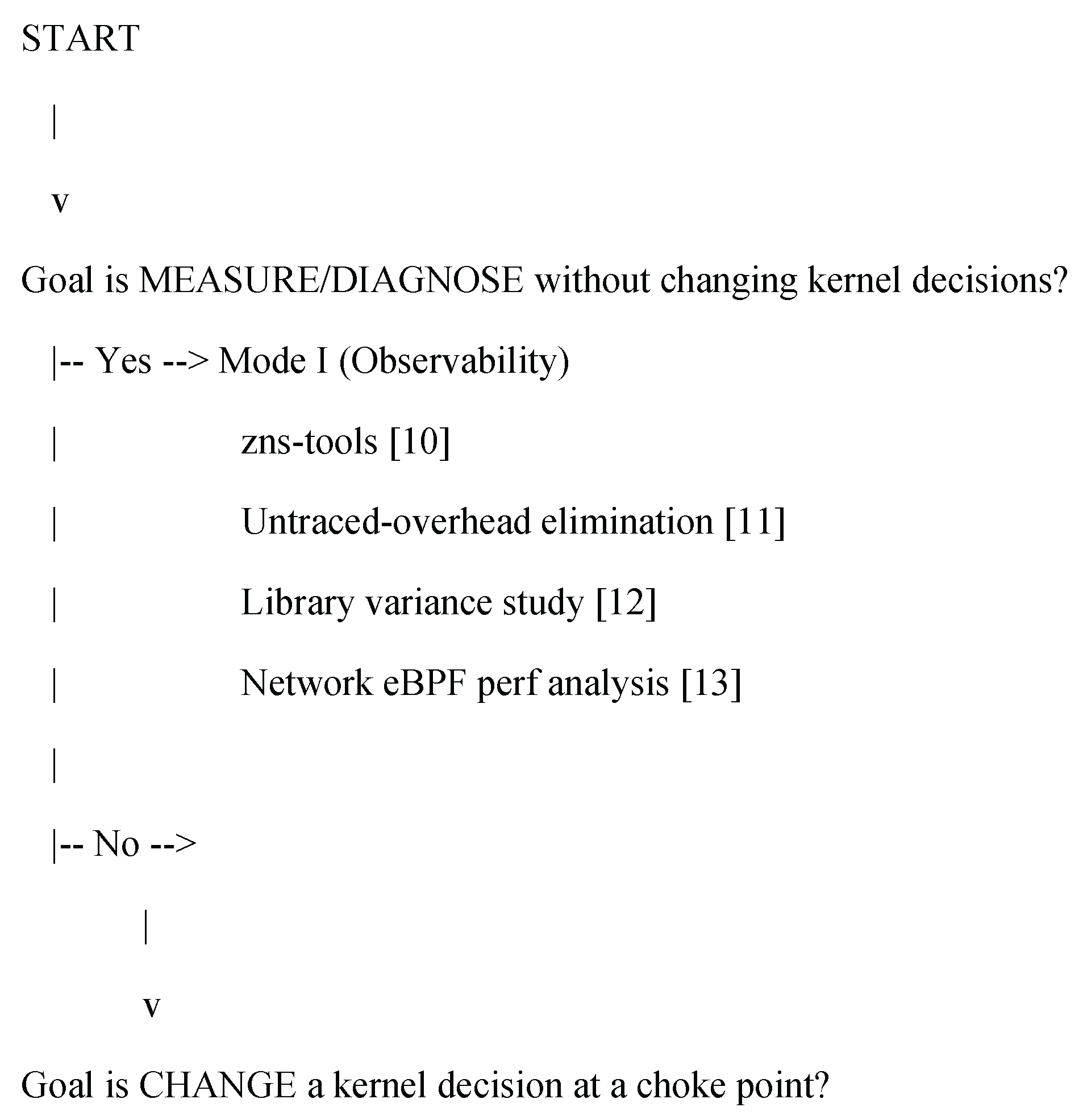

3.10. Workload-Pattern-to-Mode Mapping Procedure

3.11. Case Study Selection and Extraction Protocol

- Explicit use of eBPF to observe or influence a DB-adjacent kernel choke point.

- A stated performance or correctness objective and evaluation with workload evidence.

- Sufficient implementation details to identify hook placement and state model.

- hook points and event-rate regime;

- state ladder position;

- feasibility constraints encountered and mitigations used (Table 4);

- cost decomposition (Section 3.7);

- correctness/safety/security boundary (Table 5); and

- portability posture (dependency surface signals) [20].

3.12. Reproducibility and Reporting Requirements

- kernel version and configuration flags relevant to eBPF and target subsystems

- CPU model, core count, NUMA topology; frequency scaling status

- NIC/storage device model; key offload features enabled/disabled (where relevant)

- hook points used (exact attach types and sites)

- program sizes (instruction count where available) and compilation toolchain

- map types, key/value sizes, cardinality bounds, and memory footprint

- export mechanism (ring buffer/perf events/etc.) and backpressure/drop handling

- CPU pinning/affinity for kernel threads and user processes

- interrupt/NAPI affinity where networking fast paths are evaluated

- sampling rates, aggregation windows, and filtering rules (for observability)

- workload generator and configuration (request mixes, dataset sizes, skew/scan behavior)

- baselines with exact kernel/userspace configuration and tuning knobs

- warm-up procedure and run duration; how steady state is determined

- throughput and tail latency definitions (percentiles, time windows)

- overhead reporting (CPU%, memory overhead, dropped events, regression tests)

- variance reporting (repetitions, confidence intervals or equivalent)

- explicit statement of kernel-version assumptions and known incompatibilities [20]

- which interfaces are assumed stable vs. version-gated

4. Framework Application and System Analysis

4.0. Section Goals and Reading Guide

- System profile sheets (Table 6 template): condensed in-text, full versions assumed to be appended.

- Constraint matrix with mitigation strategies (Table 4): used to interpret feasibility choices rather than listing “limitations” ad hoc.

- Cost decomposition (Section 3.7): used to separate the hook cost, program cost, state-access cost, and export/crossing cost.

- MR-1 reproducibility checklist (Section 3.12): used to judge the interpretability of results, motivated by demonstrated tracing overhead and tooling variance [11,12].

- Decision flowchart (Section 3.10): validated using the case studies in Section 4.5.

| Mode | Systems (this paper’s exemplars) |

|---|---|

| I: Observability | zns-tools [10]; Eliminating overhead on untraced processes [11]; eBPF library performance/fidelity variance [12]; eBPF network application performance demystification [13] |

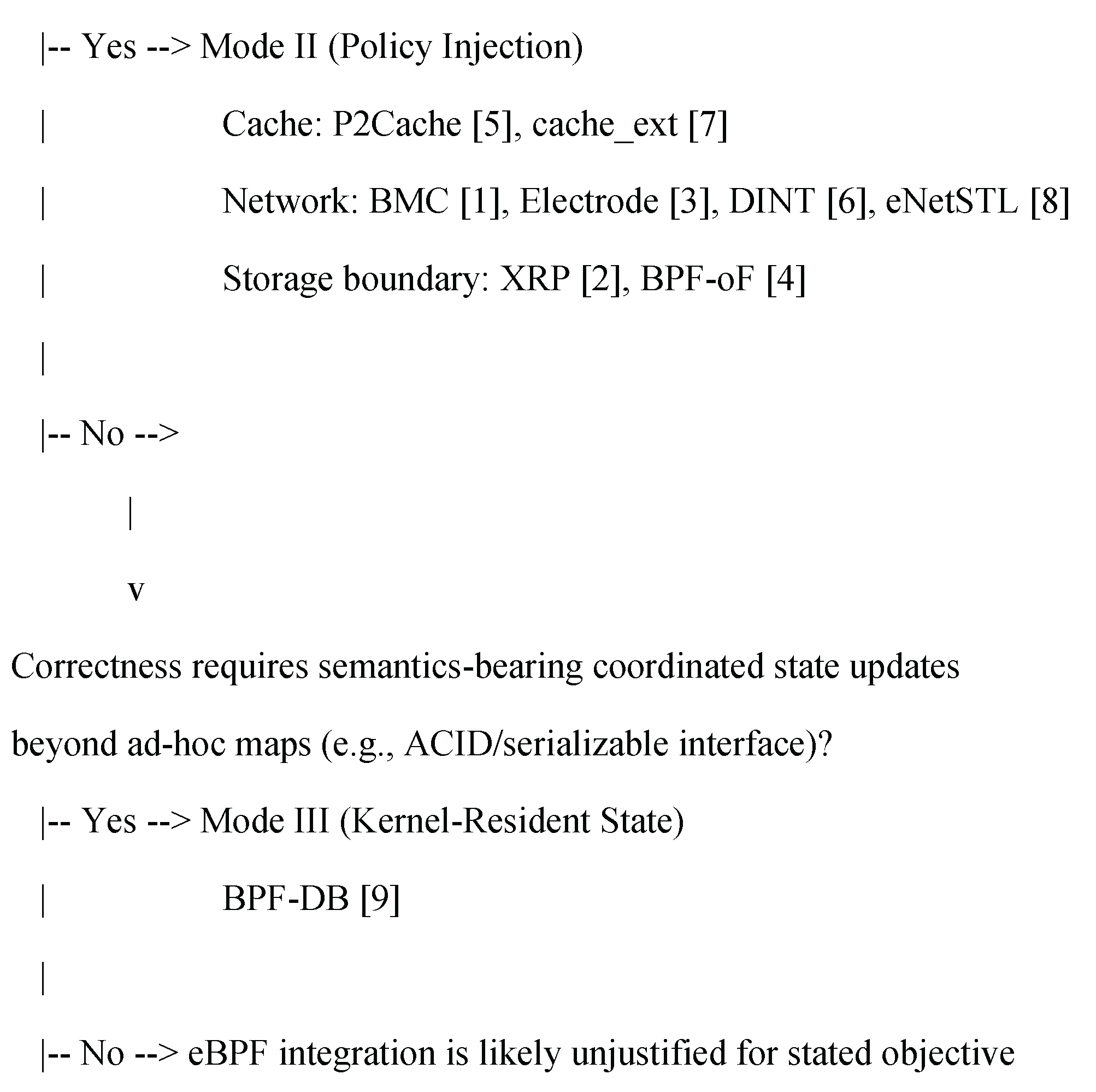

| II: Policy injection | Cache: P2Cache [5], cache_ext [7]. Network: BMC [1], Electrode [3], DINT [6], eNetSTL [8]. Storage boundary: XRP [2], BPF-oF [4] |

| III: Kernel-resident state | BPF-DB [9] with DINT as contrast case for “bounded state + frequent/rare split” [6] |

4.2. Mode I — Observability: What Can Be Measured, at What Cost, and with What Failure Modes

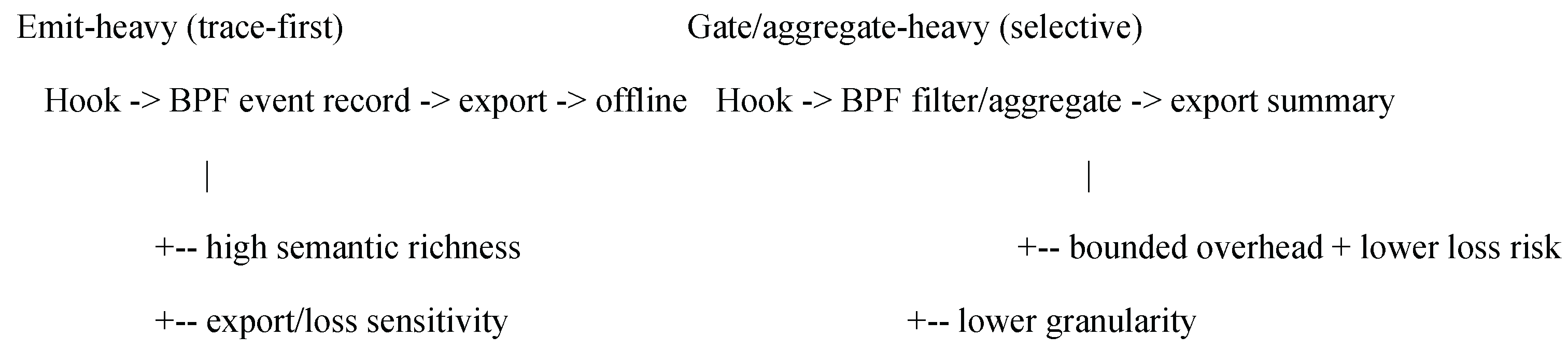

4.2.1. Mode I Overview

- Perturbation: tracing must not materially alter the workload being measured; otherwise, the measurement becomes a closed loop. Craun et al. showed that even per-process tracing strategies can impose non-trivial overheads on untraced processes, making “targeted tracing” an unsolved engineering problem under common approaches [11].

- Fidelity under load: event loss and export path limitations can silently corrupt conclusions. Machado et al. showed that tracing stacks and libraries vary widely in event loss and resource usage; therefore, “eBPF-based observability” is not a single comparable unit unless the toolchain is controlled and reported [12].

- Interpretability: measurements must map onto actionable choke points. zns-tools illustrates the value of cross-layer correlation to bridge abstraction gaps (file name/inode/block address) and expose semantic mismatches across layers [10].

4.2.2. System Profiles (Mode I)

| System | Primary intent | Typical hook points | State level | Dominant cost driver | Core limitation (framework view) |

|---|---|---|---|---|---|

| zns-tools [10] | Cross-layer storage profiling and timeline reconstruction | Kernel probes across VFS/MM/FS/block/NVMe + user-space probes; timestamped event traces | Keyed event traces; offline reconstruction | Export + post-processing (timeline building) | Heavy tracing must manage event volume and semantic translation across layers |

| Eliminating overhead on untraced processes [11] | Make per-process tracing viable without penalizing others | Tracepoints/kprobes; focuses on tracing attachment path and filtering placement | Minimal state (PID sets / gating) | Hook-trigger overhead for unrelated processes | Existing filtering strategies still tax untraced processes; requires deeper runtime changes to remove it |

| No Two Snowflakes Are Alike [12] | Quantify library-level performance/resource/fidelity trade-offs | I/O tracing hooks (storage syscall/I/O events) via different libraries | Keyed tracing + export | Export + user-space runtime/library overhead | Results vary by library; “measurement method” becomes a confounder unless standardized |

| Demystifying performance of eBPF network applications [13] | Identify when eBPF offload helps/hurts; characterize cost drivers and interference | Primarily XDP and kernel↔user comm paths; microbenchmarks of maps, chaining, JIT | Keyed state via maps; controlled experiments | Program complexity + state-access + runtime/JIT effects | eBPF offloads can violate performance isolation; benefits are workload- and codegen-dependent |

4.2.2.1. zns-Tools: Cross-Layer Storage Profiling for ZNS Stacks

- The paper frames tracing not just as “collect events,” but as “collect enough cross-layer identifiers to translate between abstractions” (file descriptor/inode/block/LBA/zone) [10]. This is an explicit instance of the “semantic gap” that Mode I exists to close.

- zns-tools demonstrates that even for the same high-level workload, device utilization and behavior can vary “vastly,” indicating that application-level outcomes can be misleading without cross-layer attribution [10].

- zns-tools does not attempt to change the page cache, filesystem, or device policy. It provides a structured route to localize where mismatches occur (e.g., cross-layer data placement/classification decisions), creating inputs for policy discussions rather than asserting policy changes.

4.2.2.2. Eliminating eBPF Tracing Overhead on Untraced Processes: Observability Cost Containment

4.2.2.3. No Two Snowflakes Are Alike: The Tracing Stack Is Part of the Experimental Condition

- Without explicitly reporting the tracing stack, kernel version, export mechanism, and loss accounting, two Mode I “observability” results are not necessarily comparable [12].

- Event loss can convert causal reasoning into an artifact. For database diagnosis, missing events can invert the interpretations (e.g., attributing latency to one layer because events from another are dropped).

- This work justifies MR-1’s insistence on reporting export mechanisms, drop behavior, and toolchain/runtime choices.
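
The loss accounting called for above can be sketched as a small check that compares events produced in the kernel with events actually received by the consumer. The function name `loss_report` and the default 1% tolerance are assumptions made for illustration; neither comes from [12] or from any tracing library.

```python
def loss_report(produced: int, consumed: int, max_loss_ratio: float = 0.01) -> dict:
    """Summarize event loss between the in-kernel producer and the consumer.

    produced: events emitted by the probe (e.g., counted in-kernel)
    consumed: events actually received by the user-space collector
    max_loss_ratio: assumed tolerance; pick per experiment and report it
    """
    if produced < 0 or consumed < 0 or consumed > produced:
        raise ValueError("expected 0 <= consumed <= produced")
    dropped = produced - consumed
    ratio = dropped / produced if produced else 0.0
    return {
        "dropped": dropped,
        "loss_ratio": ratio,
        "usable": ratio <= max_loss_ratio,
    }
```

Reporting `dropped` and `loss_ratio` alongside every result lets a reader judge whether a latency attribution could be an artifact of missing events.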

4.2.2.4. Demystifying Performance of eBPF Network Applications: Measurement of the Integration Medium

- The paper treats eBPF itself as the object of measurement (maps, chaining mechanisms, kernel↔user communication, and JIT behavior), providing a diagnostic lens for feasibility claims made by Mode II systems [13].

- The authors empirically demonstrate cross-application interference when offloads execute before demultiplexing, violating performance isolation in realistic NIC queue settings [13].

- The results clarify that “offload logic to eBPF” is not a monotonic performance story; it is workload- and mechanism-dependent.

4.2.3. Cross-System Synthesis (Mode I)

4.2.4. Observability Takeaways for DB Researchers

4.3. Mode II — Policy Injection: Cache, Network, and Storage Choke Points

4.3.2. Cache-Layer Policy Injection: Programmable Page-Cache Decisions

4.3.2.1. P2Cache

- Mode: Policy Injection (cache choke point).

- Typical hook points: page-cache/memory/I/O decision points (admission, eviction, prefetch/readahead).

- State ladder: keyed and coordinated state (tracking working sets, access phases, or hint metadata).

- Dominant risks: shared-resource externalities (colocated workload regressions) and kernel coupling (interfaces deep in the memory subsystem).

4.3.2.2. cache_ext

- Mode: Policy Injection (cache choke point).

- Typical hook points: page cache events for admission, access, eviction, and removal decisions.

- State ladder: coordinated shared updates (eviction structures) with strong pressure to shard and bound contention.

- Dominant risks: contention collapse and regressions under mixed tenancy, plus long-term stability issues when interfaces touch internal kernel cache machinery.

4.3.2.3. Cache-Layer Comparison (Normalized)

| Dimension | P2Cache [5] | cache_ext [7] |

|---|---|---|

| Integration intent | Application-directed cache decisions | Generalizable in-kernel cache policy customization |

| Hook regime | Page-cache decision points | Page-cache decision points (high-frequency event regime) |

| Control boundary | Explicit workload intent is part of the design contract | Emphasis on implementable policy space inside kernel |

| State pressure | Keyed hints + policy state | Coordinated eviction/admission structures; contention management |

| Dominant feasibility constraint | Mixed-workload externalities; interface coupling | Hot-path CPU budget + contention; interface coupling |

| Typical mitigation posture | Bound policy scope; rely on intent to reduce misprediction | Keep policy in-kernel to avoid crossings; structure state to reduce contention |

- Mode: Policy Injection (network fast path) with explicit boundary conditions that begin to approach Mode III.

- Hook regime: XDP ingress (very high event rate regime).

- State ladder: semantics-bearing state (transaction request handling) managed under strict bounded-execution constraints.

- Dominant risks: semantic drift between fast and slow paths; state pressure that pushes beyond ad-hoc map updates.

- Mode: Policy Injection enabler (network fast path).

- Hook regime: network function attachment points.

- State ladder: varies; aims to make higher-level constructs feasible without bespoke reinvention.

- Dominant risks: correctness-by-construction and predictable performance under constrained contexts.

4.3.4. Storage-Boundary Policy Injection: In-Kernel Storage Functions and Network-Attached Pushdown

4.3.4.1. XRP

- Mode: Policy Injection (storage boundary).

- Hook regime: storage-path function attachment points (I/O submission/completion-adjacent).

- State ladder: keyed state and bounded semantics-bearing logic constrained by storage-path correctness expectations.

- Dominant risks: Semantic interactions with ordering/durability expectations and stack heterogeneity.

| Dimension | XRP [2] | BPF-oF [4] |

|---|---|---|

| Integration intent | In-kernel storage functions | Storage function pushdown over network |

| Choke point | Local storage/I/O path | Distributed storage boundary |

| Primary value | Reduce overhead; enable bounded I/O-path functions | Reduce data movement; place computation near storage endpoints |

| Dominant constraints | Ordering/durability interactions; device/stack heterogeneity | Endpoint heterogeneity; distributed correctness envelope |

| Portability posture | Sensitive to kernel/storage stack interfaces | Sensitive to network/storage endpoint capabilities and interfa`ces |

4.3.5. Mode II Cross-System Synthesis and Feasibility Gates

- The specialized logic is bounded (small instruction footprint, predictable state access) and can be expressed without escalating to Mode III semantics.

- The injected policy shifts the cost rather than removing it (e.g., state contention, export overhead, per-packet overhead) and saturates cores earlier than expected [13].

- The “policy” becomes an implicit state machine with correctness requirements that exceed ad-hoc maps and bounded execution, creating brittle designs (Mode II drifting into Mode III without acknowledging it) [6].

- Dependency-surface instability and insufficient reporting make it difficult to reproduce or deploy results across kernel versions and fleets [20].

4.4. Mode III - Kernel-Resident State: Semantics-Bearing State and Transactional Consistency

4.4.1. Mode III Overview

- Mode II specializes kernel decisions, whereas Mode III provides semantics-bearing state services so that certain classes of kernel-resident logic remain correct under concurrency.

- Mode II fails as performance regression or interference, whereas Mode III fails as correctness violations, semantic drift, or unacceptable security boundary expansion.

4.4.2. System Profiles (Mode III)

4.4.2.1. BPF-DB

- Mode: Kernel-Resident State.

- Hook regime: paired with arbitrary eBPF trigger points; the key contribution is a semantics-bearing state, not a specific hook.

- State ladder: Transactional.

- Dominant cost drivers: synchronization/coordination overhead, state footprint, and export boundary overhead (WAL export).

4.4.2.2. DINT as a Contrast Case

- Mode: Policy Injection with semantics-bearing state in the fast path.

- State ladder: semantics-bearing but not general transactional substrate.

- Core trade-off: avoids Mode III complexity by limiting coverage and using fallback, at the cost of semantic boundary management between fast and slow paths [6].

4.4.2.3. Normalized Comparison

| Dimension | BPF-DB [9] | DINT (contrast) [6] |

|---|---|---|

| Primary intent | Provide transactional semantics for eBPF programs | Accelerate frequent-path transaction handling |

| Semantics stance | Explicit ACID/transaction interface + export boundary | Frequent/rare split; semantics managed by partitioning |

| State ladder | Transactional | Semantics-bearing (bounded) |

| Where complexity lives | In-kernel state service + WAL/export integration | Boundary management between fast path and user-space fallback |

| Dominant feasibility risk | Sync/verifier feasibility + security boundary expansion [15,19] | Semantic drift; coverage gaps; contention at hot rates |

| When it is justified | Semantic pressure requires coordinated multi-step updates | Frequent-path dominates and can be safely bounded |

4.4.3. Cross-System Synthesis (Mode III)

- Correctness requires atomic multi-step updates that cannot be maintained by ad hoc maps without a defined concurrency model.

- Frequent/rare partitioning no longer isolates complexity because “rare” becomes common under contention, skew, or adversarial patterns, turning the fallback into the dominant path [6].

- Semantic pressure indicators accumulate (Section 4.3.3): isolation, conflict handling, replay safety, cross-core coordination, and explicit log/export requirements.

- The integration must expose a clean user-space boundary for durability or management, making an export model (e.g., WAL export) a part of the design contract [9].
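
The semantic pressure indicators listed above can be tallied mechanically. The sketch below is one hedged way to do so: the identifier names are hypothetical encodings of the five indicators, and the escalation threshold is deliberately a caller-supplied parameter because the text does not define one.

```python
# Hypothetical identifiers for the five indicators named in the list above.
INDICATORS = (
    "isolation",
    "conflict_handling",
    "replay_safety",
    "cross_core_coordination",
    "log_export",
)


def semantic_pressure(requirements: set[str]) -> list[str]:
    """Return the indicators present in a system's stated requirements."""
    return [name for name in INDICATORS if name in requirements]


def suggests_mode_iii(requirements: set[str], threshold: int) -> bool:
    """True if at least `threshold` indicators are present.

    The threshold is an assumption supplied by the caller, not a value
    prescribed by the framework.
    """
    return len(semantic_pressure(requirements)) >= threshold
```

For example, a design that needs isolation, replay safety, and log export would trip a threshold of three, which is a prompt to examine whether ad hoc maps still suffice rather than an automatic verdict.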

4.5. Cross-Mode Comparison and Decision Procedure Validation

| System | Mode | Choke point | Typical hook regime | Event-rate regime | State ladder level | Dominant feasibility boundary | Primary mitigation posture |

|---|---|---|---|---|---|---|---|

| BMC [1] | II | Network | Pre-stack / early packet path | Very high | Keyed / bounded semantics | Per-packet CPU budget; coverage limits | Fast-path subset; bounded parsing/handling |

| XRP [2] | II | Storage boundary | I/O-path storage-function hooks | Medium–High | Keyed / bounded semantics | Heterogeneity; ordering/durability envelope | Restrict function scope; place logic where semantics are exposed |

| Electrode [3] | II | Network/protocol | Packet-processing hooks | High | Semantics-bearing state | Correctness under bounded execution; isolation | Protocol-step bounding; state minimization |

| BPF-oF [4] | II | Storage boundary (distributed) | Network-attached pushdown boundary | Medium–High | Keyed / semantics-bearing (varies) | Endpoint heterogeneity; distributed envelope | Pushdown scoping; capability-aware deployment |

| P2Cache [5] | II | Cache | Page-cache policy points | High | Keyed / coordinated | Mixed-workload externalities; kernel coupling | Explicit intent/hints; bounded policy scope |

| DINT [6] | II (boundary case) | Network (transactions) | XDP ingress | Very high | Semantics-bearing state | Semantics vs bounded execution; crossings | Frequent/rare split; bounded in-kernel work |

| cache_ext [7] | II | Cache | Page-cache policy points | High | Coordinated shared updates | Contention + hot-path CPU; kernel coupling | In-kernel policy to avoid crossings; shard/bound structures |

| eNetSTL [8] | II (enabler) | Network | Network-function hooks | High | Varies | Engineering complexity under constraints | Library-style components to reduce bespoke errors/costs |

| BPF-DB [9] | III | Kernel-resident state service | Paired with triggers (varies) | Varies (often high) | Transactional | Verifier/sync feasibility; security boundary | Structured semantics + explicit export boundary (WAL) |

| zns-tools [10] | I | Observability (storage) | Cross-layer probes + app probes | Medium–High | Keyed traces | Export/fidelity; semantic translation | Cross-layer correlation; offline reconstruction |

| Untraced overhead elimination [11] | I | Observability feasibility | Trace hooks + targeting mechanisms | High | Minimal gating state | System-wide overhead externalities | Target isolation; eliminate untraced overhead paths |

| Library variance study [12] | I | Observability comparability | Multiple tracing stacks | High | Keyed traces | Fidelity variance; toolchain confounding | Treat library/runtime as experimental variable |

| Network eBPF perf analysis [13] | I | Observability (medium analysis) | XDP/comm-path microbenchmarks | Very high | Keyed state | Isolation violations; runtime-dependent costs | Cost decomposition; isolation-sensitive placement analysis |

4.5.2. Validate the Decision Flowchart Against Case Studies

- Cross-layer storage attribution and timeline reconstruction [10].

- Overhead containment is a prerequisite for valid measurement (untraced-process overhead externality) [11].

- Measurement stack variance is a confounder that requires explicit control and reporting [12].

- Characterization of eBPF network application costs and isolation effects as feasibility evidence rather than an acceleration claim [13].

4.5.3. MR-1 Compliance Scan with Compliance Score

- (2 pts) Kernel/platform disclosure

- (2 pts) Attachment disclosure

- (2 pts) State/export disclosure

- (1 pt) Runtime controls

- (2 pts) Workloads/baselines disclosure

- (1 pt) Metrics/variance disclosure

| Title | Year | Kernel/platform (0–2) | Attachment (0–2) | State/export (0–2) | Runtime controls (0–1) | Workloads/baselines (0–2) | Metrics/variance (0–1) | Compliance score (0–10) |

|---|---|---|---|---|---|---|---|---|

| BMC: Accelerating Memcached using Safe In-kernel Caching and Pre-stack Processing | 2021 | 2 | 0 | 1 | 1 | 1 | 0 | 5 |

| XRP: In-Kernel Storage Functions with eBPF | 2022 | 1 | 1 | 0 | 1 | 2 | 0 | 5 |

| Electrode: Accelerating Distributed Protocols with eBPF | 2023 | 2 | 1 | 2 | 1 | 0 | 0 | 6 |

| BPF-oF: Storage Function Pushdown Over the Network | 2023 | 1 | 1 | 1 | 1 | 1 | 0 | 5 |

| P2Cache: Application-Directed Page Cache Optimization | 2023 | 2 | 1 | 0 | 1 | 1 | 0 | 5 |

| DINT: Fast In-Kernel Distributed Transactions with eBPF | 2024 | 2 | 2 | 1 | 1 | 2 | 0 | 8 |

| cache_ext: Customizing the Page Cache with eBPF | 2025 | 1 | 0 | 0 | 0 | 0 | 0 | 1 |

| eNetSTL: Towards an In-kernel Library for High-Performance eBPF-based Network Functions | 2025 | 2 | 2 | 1 | 1 | 1 | 0 | 7 |

| BPF-DB: A Kernel-Embedded Transactional DBMS for eBPF Applications | 2025 | 2 | 0 | 1 | 1 | 1 | 0 | 5 |

| zns-tools: eBPF-Powered Cross-Layer Storage Profiling for ZNS SSDs | 2024 | 1 | 1 | 0 | 1 | 1 | 0 | 4 |

| Eliminating eBPF Tracing Overhead on Untraced Processes | 2024 | 1 | 0 | 0 | 0 | 1 | 1 | 3 |

| No Two Snowflakes Are Alike: Studying eBPF Libraries’ Performance, Fidelity and Resource Usage | 2025 | 2 | 1 | 1 | 1 | 1 | 0 | 6 |

| Demystifying Performance of eBPF Network Applications | 2025 | 2 | 2 | 1 | 1 | 1 | 0 | 7 |

- $CS \ge 8$: 1/13 papers (DINT [6])

- $5 \le CS < 8$: 9/13 papers

- Metrics/variance disclosure: Only 1 of the 13 papers scored on the variance criterion (Table 13). This severely limits cross-paper quantitative synthesis, especially for tail-latency assertions.

- Attachment specificity and state/export details: Several systems score 0 on attach or state/export, which blocks precise cost attribution and complicates the replication of “fast path” vs “control path” effects (Table 13).

- $CS \ge 8$: results are comparable with minimal ambiguity.

- $5 \le CS < 8$: results are usable but require qualification; missing details likely affect cost/fidelity/portability.

- $CS < 5$: results are discussed qualitatively and not used for cross-system quantitative conclusions.
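The three interpretation bands above amount to a simple threshold classification. The sketch below applies those thresholds to the 13 compliance scores listed in Table 13; the function name `tier` and the tier labels are hypothetical shorthand introduced here for illustration, not terminology from the scan itself.

```python
from collections import Counter


def tier(cs):
    """Map a compliance score to one of the three interpretation bands."""
    if cs >= 8:
        return "comparable"   # CS >= 8: comparable with minimal ambiguity
    if cs >= 5:
        return "qualified"    # 5 <= CS < 8: usable with qualification
    return "qualitative"      # CS < 5: qualitative discussion only


# Compliance scores in the row order of Table 13.
TABLE_13_SCORES = [5, 5, 6, 5, 5, 8, 1, 7, 5, 4, 3, 6, 7]

counts = Counter(tier(cs) for cs in TABLE_13_SCORES)
print(counts["comparable"], counts["qualified"], counts["qualitative"])  # 1 9 3
```

Under these thresholds, the scores in Table 13 split into 1, 9, and 3 papers respectively.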

4.6. Consolidated Takeaways as Numbered Decision Rules

5. Discussion

5.1. Why it Worked

5.2. What the Results Imply

5.3. Where it Outperforms Existing Methods

5.4. Practical Implications

- Mode selection is a correctness decision, not a presentation choice. Treat Mode I evidence as diagnosis unless bridge conditions are met; treat Mode II as a kernel behavior change that requires invariant envelopes and externality evaluation; treat Mode III as semantics work that requires explicit guarantees and an export boundary [5,6,7,9,10,11,12,13].

- MR-1 is not optional if the results are meant to be generalized. Table 13 shows that missing variance reporting and underspecified attachment/state/export details are the norm, which blocks rigorous cross-paper quantitative conclusions, even when headline metrics look compelling. This is predictable given the known confounders in tracing overhead and tooling variance [11,12] and known instability risks [20].

- Treat eBPF programs as privileged production code whose risk increases sharply from Mode I to Mode III. The security boundary expands with statefulness and semantics-bearing logic, so governance and least privilege matter more at each step [19].

- Prefer interventions that are bounded, can be disabled, and are version-scoped. Dependency surface instability makes implicit portability assumptions unsafe; operational feasibility requires an explicit kernel/version posture [20].

- Demand disclosure artifacts. A system that cannot disclose its attach sites, state/export budgets, and variance is not operationally characterizable under load.
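One way to make the last two recommendations checkable is to record the disclosures as a small manifest and flag whatever is missing. The following is only an illustrative sketch under stated assumptions: the `DisclosureManifest` class, its field names, and the example values are all hypothetical and are not taken from any surveyed system or from an existing tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DisclosureManifest:
    """Hypothetical record of what an eBPF intervention discloses."""
    kernel_versions: List[str] = field(default_factory=list)  # version scope
    attach_sites: List[str] = field(default_factory=list)     # where code runs
    state_budget_bytes: Optional[int] = None   # bound on kernel-resident state
    export_budget: Optional[str] = None        # how and how much state is exported
    can_disable: bool = False                  # runtime off switch exists
    variance_reported: bool = False            # metrics include variance


def missing_disclosures(m):
    """List the disclosure items that the manifest leaves unspecified."""
    missing = []
    if not m.kernel_versions:
        missing.append("kernel/version scope")
    if not m.attach_sites:
        missing.append("attach sites")
    if m.state_budget_bytes is None:
        missing.append("state budget")
    if m.export_budget is None:
        missing.append("export budget")
    if not m.can_disable:
        missing.append("disable switch")
    if not m.variance_reported:
        missing.append("variance")
    return missing


# Example with placeholder values: only version scope and attach site are given.
partial = DisclosureManifest(kernel_versions=["6.6"], attach_sites=["xdp"])
print(missing_disclosures(partial))
```

An empty result would indicate that every item in this hypothetical checklist has been disclosed; it says nothing about whether the disclosed values are adequate.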

6. Limitations

6.1. What the Framework Does Not Handle

6.3. Data and Deployment Limitations (Grounded in Table 13)

7. Conclusion and Future Work

7.1. Conclusion

7.2. Future Work

Acknowledgments

References

- Y. Ghigoff, J. Sopena, K. Lazri, A. Blin, and G. Muller, “BMC: Accelerating Memcached using Safe In-kernel Caching and Pre-stack Processing,” in Proc. 18th USENIX Symp. Networked Systems Design and Implementation (NSDI ’21), Apr. 2021, pp. 487–501.

- Y. Zhong, H. Li, Y. J. Wu, I. Zarkadas, J. Tao, E. Mesterhazy, M. Makris, J. Yang, A. Tai, R. Stutsman, and A. Cidon, “XRP: In-Kernel Storage Functions with eBPF,” in Proc. 16th USENIX Symp. Operating Systems Design and Implementation (OSDI ’22), Jul. 2022, pp. 375–393.

- Y. Zhou, Z. Wang, S. Dharanipragada, and M. Yu, “Electrode: Accelerating Distributed Protocols with eBPF,” in Proc. 20th USENIX Symp. Networked Systems Design and Implementation (NSDI ’23), Apr. 2023, pp. 1391–1407.

- I. Zarkadas, T. Zussman, J. Carin, S. Jiang, Y. Zhong, J. Pfefferle, H. Franke, J. Yang, K. Kaffes, R. Stutsman, and A. Cidon, “BPF-oF: Storage Function Pushdown Over the Network,” arXiv preprint arXiv:2312.06808, Dec. 2023.

- D. Lee, I. Choi, C. Lee, S. Lee, and J. Kim, “P2Cache: Application-Directed Page Cache Optimization,” in Proc. ACM Workshop on Hot Topics in Storage and File Systems (HotStorage ’23), Jul. 2023.

- Y. Zhou, X. Xiang, M. Kiley, S. Dharanipragada, and M. Yu, “DINT: Fast In-Kernel Distributed Transactions with eBPF,” in Proc. 21st USENIX Symp. Networked Systems Design and Implementation (NSDI ’24), Apr. 2024, pp. 401–417.

- T. Zussman, I. Zarkadas, J. Carin, A. Cheng, H. Franke, J. Pfefferle, and A. Cidon, “cache_ext: Customizing the Page Cache with eBPF,” in Proc. ACM SIGOPS Symp. Operating Systems Principles (SOSP ’25), Oct. 2025.

- B. Yang, D. Shen, J. Zhang, H. Yang, L. Zhao, B. Wang, G. Liu, and K. Chen, “eNetSTL: Towards an In-kernel Library for High-Performance eBPF-based Network Functions,” in Proc. European Conf. on Computer Systems (EuroSys ’25), Mar.–Apr. 2025.

- M. Butrovich, S. Arch, W. S. Lim, W. Zhang, J. M. Patel, and A. Pavlo, “BPF-DB: A Kernel-Embedded Transactional Database Management System for eBPF Applications,” Proc. ACM Manag. Data, vol. 3, no. 3 (SIGMOD), Art. 135, Jun. 2025.

- N. Tehrany, K. Doekemeijer, and A. Trivedi, “zns-tools: eBPF-Powered Cross-Layer Storage Profiling for ZNS SSDs,” in Proc. Workshop on Challenges and Opportunities of Efficient and Performant Storage Systems (CHEOPS ’24), Apr. 2024.

- M. Craun, K. Hussain, U. Gautam, Z. Ji, T. Rao, and D. Williams, “Eliminating eBPF Tracing Overhead on Untraced Processes,” in Proc. Workshop on eBPF and Kernel Extensions (eBPF ’24), Aug. 2024.

- C. Machado, B. Gião, S. Amaro, M. Matos, J. Paulo, and T. Esteves, “No Two Snowflakes Are Alike: Studying eBPF Libraries’ Performance, Fidelity and Resource Usage,” in Proc. Workshop on eBPF and Kernel Extensions (eBPF ’25), Sep. 2025.

- F. Shahinfar, S. Miano, A. Panda, and G. Antichi, “Demystifying Performance of eBPF Network Applications,” Proc. ACM Netw., vol. 3, CoNEXT3, Art. 16, Sep. 2025.

- S. Bhat and H. Shacham, “Formal Verification of the Linux Kernel eBPF Verifier Range Analysis,” Tech. Rep., The University of Texas at Austin, May 2022.

- H. Vishwanathan, M. Shachnai, S. Narayana, and S. Nagarakatte, “Verifying the Verifier: eBPF Range Analysis Verification,” in Proc. Int. Conf. Computer Aided Verification (CAV 2023), LNCS 13966, 2023, pp. 226–251.

- J. Jia, R. Sahu, A. Oswald, D. Williams, M. V. Le, and T. Xu, “Kernel Extension Verification is Untenable,” in Proc. Workshop on Hot Topics in Operating Systems (HotOS ’23), Jun. 2023.

- K. K. Dwivedi, R. Iyer, and S. Kashyap, “Fast, Flexible, and Practical Kernel Extensions,” in Proc. ACM Symp. Operating Systems Principles (SOSP ’24), Nov. 2024.

- K. Huang, J. Sampson, M. Payer, G. Tan, Z. Qian, and T. Jaeger, “SoK: Challenges and Paths Toward Memory Safety for eBPF,” in Proc. IEEE Symp. Security and Privacy (SP), 2025, pp. 848–866.

- H. Lu, S. Wang, Y. Wu, W. He, and F. Zhang, “MOAT: Towards Safe BPF Kernel Extension,” in Proc. 33rd USENIX Security Symp. (USENIX Security ’24), Aug. 2024, pp. 1153–1170.

- S. (Wanxiang) Zhong, J. Liu, A. Arpaci-Dusseau, and R. Arpaci-Dusseau, “Revealing the Unstable Foundations of eBPF-Based Kernel Extensions,” in Proc. European Conf. on Computer Systems (EuroSys ’25), Mar.–Apr. 2025.

- X. Wu, Y. Feng, T. Huang, X. Lu, S. Lin, L. Xie, S. Zhao, and Q. Cao, “VEP: A Two-stage Verification Toolchain for Full eBPF Programmability,” in Proc. 22nd USENIX Symp. Networked Systems Design and Implementation (NSDI ’25), Apr. 2025, pp. 277–299.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).