Submitted: 10 February 2026

Posted: 12 February 2026

Abstract

Keywords:

1. Introduction

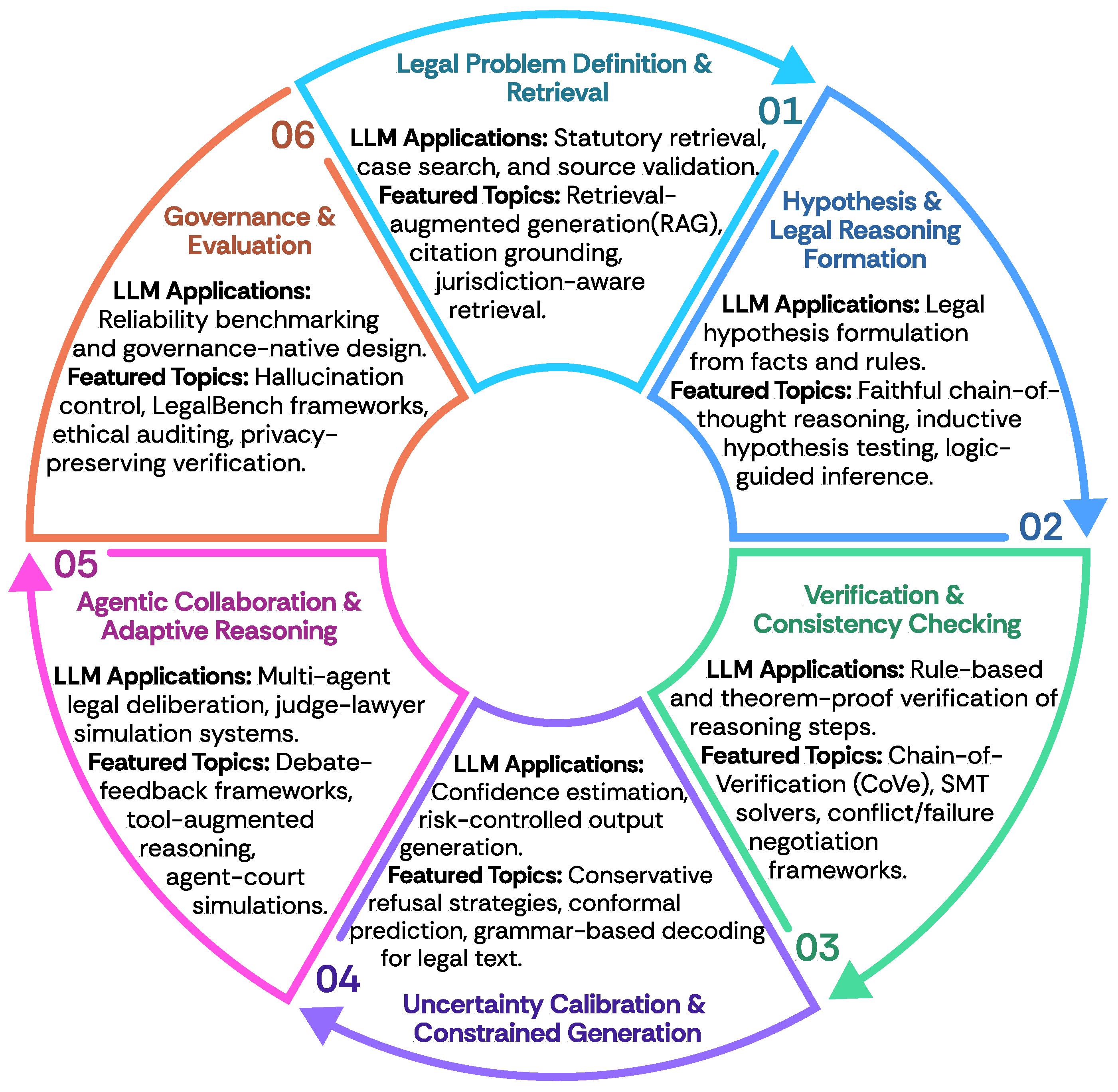

2. Constructions of LegalAI

2.1. Legal Reasoning

Faithfulness and Verifiability

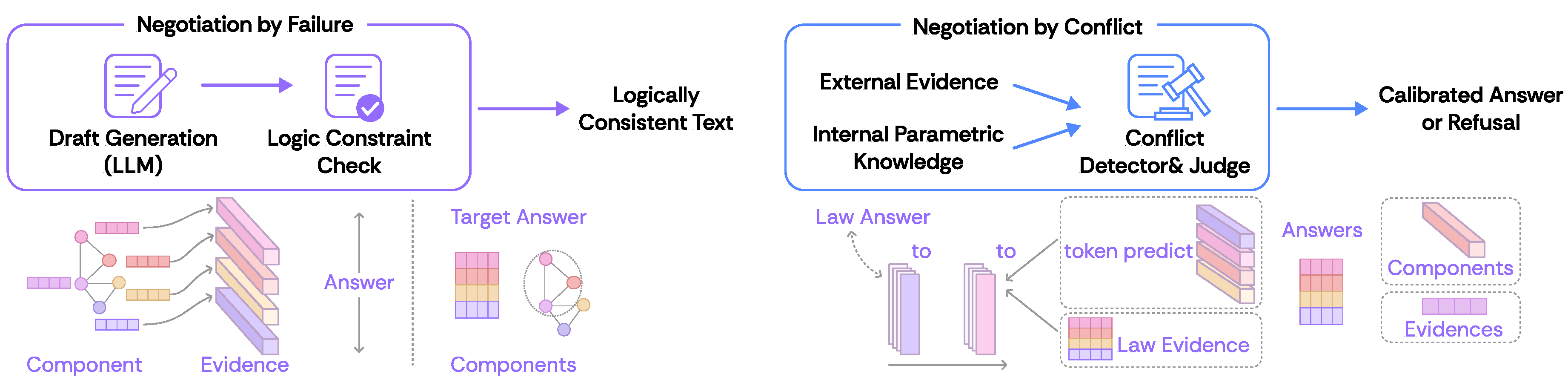

Verifiability and Neuro-Symbolic Integration

Conflict Calibration

Constrained Generation

2.2. Foundation Model

2.3. Retrieval and Agentic Methods

3. Evaluation of LegalAI

3.1. Merits of Legal Reasoning

3.2. Knowledge Alignment

3.3. Hallucination Control

4. Challenge and Future

5. Conclusion

6. Limitations

Appendix A. Related Work: Factuality Verified and Scope-Limited Generation

Appendix B. Formulation of Verified Generation

- is the base LLM probability distribution.

- is the probability assigned by the verification module.

- is a hyperparameter weighing factual adherence against linguistic fluency.
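The three quantities listed above suggest re-weighting the base model's distribution with a verifier's judgment. The snippet below is a minimal sketch of one such combination, assuming a log-linear form, score(y) = log P_LM(y) + λ log P_ver(y), followed by normalization over a candidate set. The names `p_lm`, `p_ver`, `lam`, and `verified_distribution`, the assumed formula, and the toy candidates are illustrative assumptions, not the formulation given in this appendix.

```python
import math

def verified_distribution(p_lm, p_ver, lam, eps=1e-12):
    """Re-weight base-model probabilities by verifier probabilities.

    Assumed form (hypothetical, not taken from the paper):
        score(y) = log p_lm(y) + lam * log p_ver(y)
    Scores are normalized with a softmax over the candidate set.
    """
    scores = {
        y: math.log(max(p_lm[y], eps)) + lam * math.log(max(p_ver[y], eps))
        for y in p_lm
    }
    top = max(scores.values())  # subtract the max for numerical stability
    weights = {y: math.exp(s - top) for y, s in scores.items()}
    total = sum(weights.values())
    return {y: w / total for y, w in weights.items()}

# Toy candidates: the base model prefers a fluent but poorly supported citation.
p_lm = {"Article 12": 0.6, "Article 21": 0.4}
p_ver = {"Article 12": 0.1, "Article 21": 0.9}

fluent = verified_distribution(p_lm, p_ver, lam=0.0)    # verifier ignored
grounded = verified_distribution(p_lm, p_ver, lam=1.0)  # verifier weighted in
```

With `lam=0.0` the base distribution is returned unchanged; larger values shift probability mass toward candidates the verifier supports, which is the trade-off between fluency and factual adherence described by the hyperparameter.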

Appendix C. Detailed Discussions on the Construction of LegalAI

| Model / Framework | Country / Region | Parameters | Training Resources | PT | SFT | RL | Date |
|---|---|---|---|---|---|---|---|
| Legal-BERT [23] | Greece, United Kingdom | LEGAL-BERT-BASE (110M) | US contracts, EU legislation, ECHR cases | √ | - | - | 2020 |
| German BERT Base | Germany | bert-base-cased | Wiki, OpenLegalData, News | √ | √ | - | 2020 |
| CaseLaw-BERT | United States | bert-base-uncased (110M) | ILSI, ISS, ILDC Dataset | √ | √ | - | 2021 |
| CodeGen [112] | United States | CodeGen1/CodeGen2/CodeGen2.5 (350M, 1B, 3B, 7B, 16B) | Jaxformer | √ | - | - | 2022 |
| ChatLaw [34] | China | InternLM (4x7B, MoE) | 93,000 court case decisions | √ | - | - | 2023 |
| DISC-LawLLM [172] | China | Qwen2.5-instruct 7B | DISC-Law-SFT | √ | √ | - | 2023 |
| HanFei | China | HanFei-1.0 (7B) | Cases, regulations, indictments, legal news | √ | √ | - | 2023 |
| JurisLMs | China | LLaMA, GPT2 | Chinese legal corpus | √ | √ | - | 2023 |
| LaWGPT [186] | China | Chinese-alpaca-plus-7B | Awesome Chinese Legal Resources | √ | √ | - | 2023 |
| LexiLaw | China | ChatGLM-6B | BELLE 1.5M | - | √ | - | 2023 |
| WisdomInterrogatory | China | Baichuan-7B | 40G legal-related data | √ | √ | - | 2023 |
| Lawyer LLaMA [60] | China | quzhe/llama_chinese_13B, Chinese-LLaMA-13B | Alpaca-GPT4 (52k Chinese + 52k English) | √ | √ | - | 2023 |
| LAWGPT-zh | China | ChatGLM-6B | CrimeKgAssitant | - | √ | - | 2023 |
| BaoLuo LawAssistant | China | ChatGLM, sftglm-6b | Legal dataset | - | √ | - | 2023 |
| FedJudge [171] | China | baichuan-7b | C3VG, Lawyer LLaMA | - | √ | - | 2023 |
| Law-GLM | Germany, China | GLM-10B | 30GB of Chinese legal data | - | √ | - | 2023 |
| LexLM [26] | Denmark | RoBERTa large | LeXFiles corpus | √ | - | - | 2023 |
| legal-xlm-roberta-large [114] | Switzerland, Denmark, USA | XLM-R large | Multi Legal Pile | √ | - | - | 2023 |
| bert-large-portuguese-cased-legal | Brazil | BERTimbau large | assin, assin2, stsb_multi_mt pt, IRIS STS datasets | √ | √ | - | 2023 |
| SaulLM-7B [31] | United States, France, Portugal | Mistral-7B | Specialized data in the legal field | √ | - | - | 2024 |
| LawLLM [135] | United States | Gemma-7B | American legal data from the case.law platform | - | √ | - | 2024 |
| LegalΔ [35] | China | Qwen2.5-14B-Instruct | Lawbench, Lexeval, Disclaw | - | √ | √ | 2025 |

Appendix C.1. Formal Reasoning for Law: A Taxonomy

| Algorithm: LegalReasoningVerification_SMT |
|---|
| Input: (rules), (facts), c (conclusion), t (time), j (jurisdiction) |
| Output: (validity, counterexample, violations) |
| 1: solver ← new Z3Solver() |
| 2: violations ← ∅ |
| 3: for each rule in do |
| 4: solver.add(ForAll) |
| 5: if or then |
| 6: violations.add |
| 7: if violations then |
| 8: return (False, None, violations) |
| 9: for each fact f in do |
| 10: solver.add(encode_fact) |
| 11: solver.add(Not) |
| 12: result ← solver.check() |
| 13: if result == UNSAT then |
| 14: return (True, None, ∅) |
| 15: else if result == SAT then |
| 16: return (False, solver.model(), {"insufficient reasoning"}) |
| 17: else |
| 18: return (Unknown, None, {"solver timeout"}) |
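The control flow of the algorithm above can be illustrated without an SMT solver. The following is a minimal sketch that swaps Z3 for a brute-force truth-table search over propositional atoms. It keeps the same refutation idea: assert the rules and facts together with the negated conclusion, and treat unsatisfiability as validity. The `Rule` fields, the applicability test in the first loop (time window and jurisdiction), and the example contract rule are assumptions made for illustration, since the corresponding conditions are not legible in the listing. Because the search is exhaustive, the "solver timeout" branch has no counterpart here.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class Rule:
    name: str
    premises: tuple      # atoms that must all hold for the rule to fire
    consequent: str      # atom implied when every premise holds
    valid_from: int      # hypothetical applicability window (years)
    valid_to: int
    jurisdiction: str

def verify(rules, facts, conclusion, t, j):
    """Return (validity, counterexample, violations) as in the algorithm."""
    violations = set()
    for r in rules:
        # Assumed check: rule must be in force at time t and apply in j.
        if not (r.valid_from <= t <= r.valid_to) or r.jurisdiction != j:
            violations.add(r.name)
    if violations:
        return (False, None, violations)

    atoms = sorted(
        {a for r in rules for a in (*r.premises, r.consequent)}
        | set(facts)
        | {conclusion}
    )
    # Search for an assignment satisfying rules, facts, and NOT conclusion.
    for values in product([False, True], repeat=len(atoms)):
        model = dict(zip(atoms, values))
        rules_hold = all(
            (not all(model[p] for p in r.premises)) or model[r.consequent]
            for r in rules
        )
        facts_hold = all(model[f] for f in facts)
        if rules_hold and facts_hold and not model[conclusion]:
            return (False, model, {"insufficient reasoning"})
    return (True, None, set())  # no counterexample: the conclusion is entailed

rules = [Rule("r1", ("contract_signed", "consideration"),
              "contract_binding", 2000, 2030, "HK")]

entailed = verify(rules, {"contract_signed", "consideration"},
                  "contract_binding", t=2025, j="HK")
refuted = verify(rules, {"contract_signed"},
                 "contract_binding", t=2025, j="HK")
out_of_scope = verify(rules, {"contract_signed", "consideration"},
                      "contract_binding", t=2025, j="US")
```

The exhaustive search is exponential in the number of atoms and only handles propositional rules; a real SMT encoding would be needed for quantified rules such as those added with `ForAll` in the listing.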

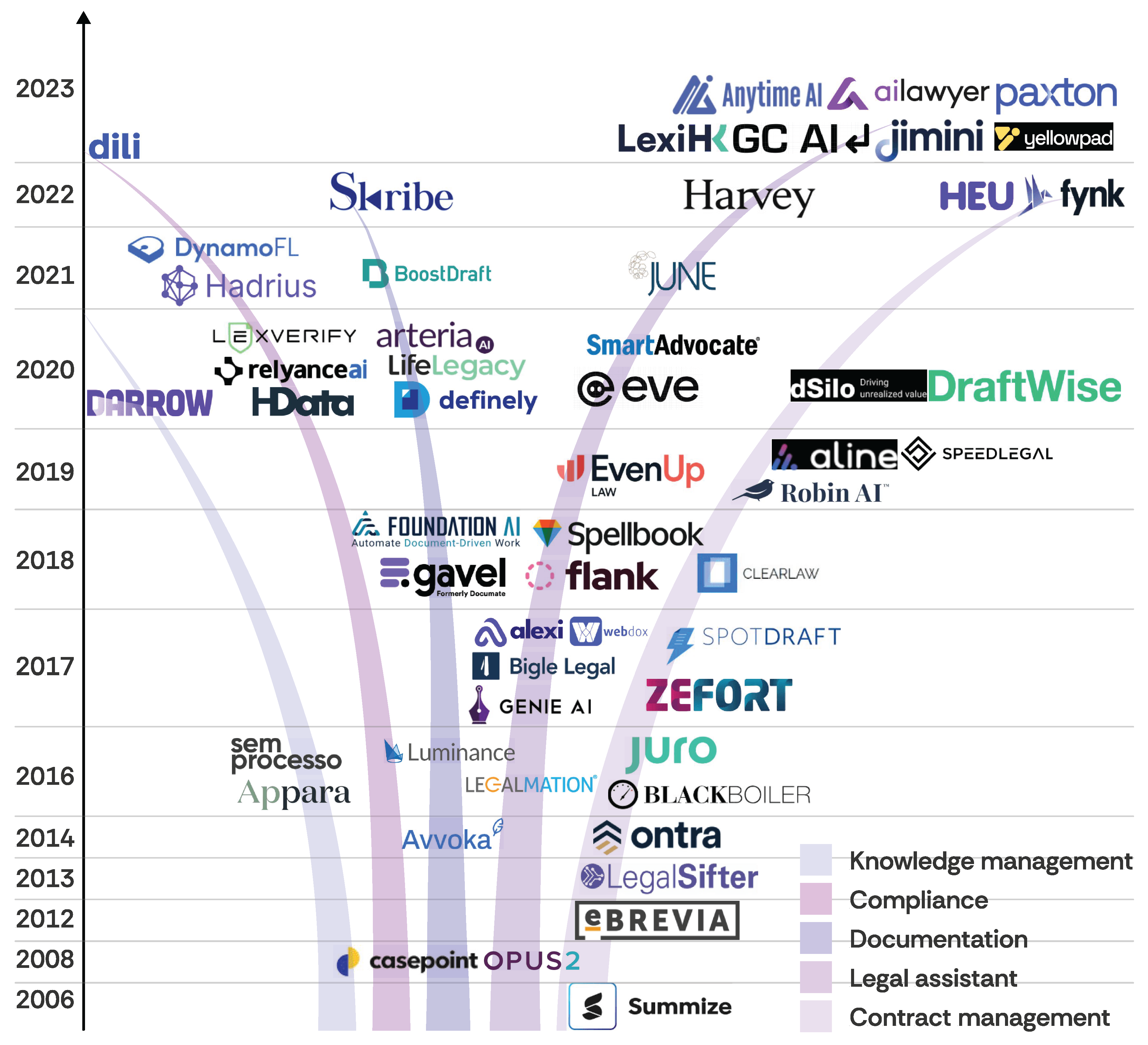

Appendix D. Products of LegalAI

Appendix E. Supplementary Materials

Appendix E.1. Ethical Concerns in Developing LegalAI

Appendix E.2. Differences with Existing Surveys

References

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F. L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. GPT-4 technical report. arXiv 2023, arXiv:2303.08774.

- Agrawal, A.; Suzgun, M.; Mackey, L.; Kalai, A. Do Language Models Know When They’re Hallucinating References? In Findings of the Association for Computational Linguistics: EACL 2024; Graham, Y., Purver, M., Eds.; Association for Computational Linguistics: St. Julian’s, Malta, 2024; pp. 912–928. Available online: https://aclanthology.org/2024.findings-eacl.62/.

- Agrawal, A.; Suzgun, M.; Mackey, L.; Kalai, A. Do language models know when they’re hallucinating references? In Findings of the Association for Computational Linguistics: EACL 2024, 2024; pp. 912–928.

- Alpay, F.; Alakkad, H. Truth-Aware Decoding: A Program-Logic Approach to Factual Language Generation. 2025. Available online: https://arxiv.org/abs/2510.07331.

- Alsagheer, D. R.; Kamal, A.; Kamal, M.; Wu, C. Y.; Shi, W. The Lawyer That Never Thinks: Consistency and Fairness as Keys to Reliable AI. Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 9943–9954.

- Anthropic. The Claude 3 Model Family: Opus, Sonnet, Haiku. 2024. Available online: https://assets.anthropic.com/m/61e7d27f8c8f5919/original/Claude-3-Model-Card.pdf.

- Asai, A.; Wu, Z.; Wang, Y.; Sil, A.; Hajishirzi, H. Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. 2023. Available online: https://arxiv.org/abs/2310.11511.

- Asgari, E.; Montaña-Brown, N.; Dubois, M.; Khalil, S.; Balloch, J.; Yeung, J. A.; Pimenta, D. A Framework to Assess Clinical Safety and Hallucination Rates of LLMs for Medical Text Summarisation. NPJ Digital Medicine 2025, 8 (1), 274.

- Askari, A.; Aliannejadi, M.; Abolghasemi, A.; Kanoulas, E.; Verberne, S. Closer: conversational legal longformer with expertise-aware passage response ranker for long contexts. In Proceedings of the 32nd ACM International Conference on Information and Knowledge Management, 2023; pp. 25–35.

- Bayless, S.; Buliani, S.; Cassel, D.; Cook, B.; Clough, D.; Delmas, R.; Diallo, N.; Erata, F.; Feng, N.; Giannakopoulou, D.; Goel, A.; Gokhale, A.; Hendrix, J.; Hudak, M.; Jovanović, D.; Kent, A. M.; Kiesl-Reiter, B.; Kuna, J. J.; Labai, N.; Yao, J. A Neurosymbolic Approach to Natural Language Formalization and Verification. 2025. Available online: https://arxiv.org/abs/2511.09008.

- Beltagy, I.; Peters, M. E.; Cohan, A. Longformer: The Long-Document Transformer. 2020. Available online: https://arxiv.org/abs/2004.05150.

- Bendová, K.; Knap, T.; Černý, J.; Pour, V.; Savelka, J.; Kvapilíková, I.; Drápal, J. What Are the Facts? Automated Extraction of Court-Established Facts from Criminal-Court Opinions. arXiv 2025, arXiv:2511.05320.

- Birhane, A.; Steed, R.; Ojewale, V.; Vecchione, B.; Raji, I. D. AI auditing: The Broken Bus on the Road to AI Accountability. 2024. Available online: https://arxiv.org/abs/2401.14462.

- Birhane, A.; Steed, R.; Ojewale, V.; Vecchione, B.; Raji, I. D. AI auditing: The broken bus on the road to AI accountability. In 2024 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML), 2024; pp. 612–643.

- Cai, H.; Zhao, S.; Zhang, L.; Shen, X.; Xu, Q.; Shen, W.; Wen, Z.; Ban, T. Unilaw-R1: A Large Language Model for Legal Reasoning with Reinforcement Learning and Iterative Inference. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 18128–18142.

- Cao, C.; Li, M.; Dai, J.; Yang, J.; Zhao, Z.; Zhang, S.; Shi, W.; Liu, C.; Han, S.; Guo, Y. Towards Advanced Mathematical Reasoning for LLMs via First-Order Logic Theorem Proving. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing: EMNLP 2025, 2025.

- Cao, C.; Zhu, H.; Ji, J.; Sun, Q.; Zhu, Z.; Wu, Y.; Dai, J.; Yang, Y.; Han, S.; Guo, Y. SafeLawBench: Towards Safe Alignment of Large Language Models. In Findings of the Association for Computational Linguistics: ACL 2025; Association for Computational Linguistics: Vienna, Austria, 2025; pp. 14015–14048.

- Cao, S.; Wang, L. Verifiable Generation with Subsentence-Level Fine-Grained Citations. In Findings of the Association for Computational Linguistics: ACL 2024; Ku, L.-W., Martins, A., Srikumar, V., Eds.; Association for Computational Linguistics: Bangkok, Thailand, 2024; pp. 15584–15596. Available online: https://aclanthology.org/2024.findings-acl.920/.

- Carlini, N.; Tramer, F.; Wallace, E.; Jagielski, M.; Herbert-Voss, A.; Lee, K.; Roberts, A.; Brown, T.; Song, D.; Erlingsson, U.; et al. Extracting training data from large language models. In 30th USENIX Security Symposium (USENIX Security 21), 2021; pp. 2633–2650.

- Chakraborty, N.; Ornik, M.; Driggs-Campbell, K. Hallucination Detection in Foundation Models for Decision-Making: A Flexible Definition and Review of the State of the Art. ACM Computing Surveys 2025, 57 (7), 1–35.

- Chalkidis, I.; Fergadiotis, M.; Androutsopoulos, I. MultiEURLEX - A multi-lingual and multi-label legal document classification dataset for zero-shot cross-lingual transfer. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing; Moens, M.-F., Huang, X., Specia, L., Yih, S. W.-t., Eds.; Association for Computational Linguistics: Online and Punta Cana, Dominican Republic, 2021; pp. 6974–6996. Available online: https://aclanthology.org/2021.emnlp-main.559/.

- Chalkidis, I.; Fergadiotis, M.; Malakasiotis, P.; Aletras, N.; Androutsopoulos, I. LEGAL-BERT: The muppets straight out of law school. arXiv 2020, arXiv:2010.02559.

- Chalkidis, I.; Fergadiotis, M.; Malakasiotis, P.; Aletras, N.; Androutsopoulos, I. LEGAL-BERT: The Muppets straight out of Law School. 2020. Available online: https://arxiv.org/abs/2010.02559.

- Chalkidis, I.; Fergadiotis, M.; Malakasiotis, P.; Aletras, N.; Androutsopoulos, I. LEGAL-BERT: The Muppets straight out of Law School. In Findings of the Association for Computational Linguistics: EMNLP 2020; Cohn, T., He, Y., Liu, Y., Eds.; Association for Computational Linguistics: Online, 2020; pp. 2898–2904. Available online: https://aclanthology.org/2020.findings-emnlp.261/.

- Chalkidis, I.; Garneau, N.; Goanta, C.; Katz, D. M.; Søgaard, A. LeXFiles and LegalLAMA: Facilitating English multinational legal language model development. arXiv 2023, arXiv:2305.07507.

- Chalkidis, I.; Garneau, N.; Goanta, C.; Katz, D. M.; Søgaard, A. LeXFiles and LegalLAMA: Facilitating English Multinational Legal Language Model Development. 2023. Available online: https://arxiv.org/abs/2305.07507.

- Chalkidis, I.; Jana, A.; Hartung, D.; Bommarito, M.; Androutsopoulos, I.; Katz, D. M.; Aletras, N. LexGLUE: A Benchmark Dataset for Legal Language Understanding in English. 2022. Available online: https://arxiv.org/abs/2110.00976.

- Chen, G.; Fan, L.; Gong, Z.; Xie, N.; Li, Z.; Liu, Z.; Li, C.; Qu, Q.; Alinejad-Rokny, H.; Ni, S.; et al. Agentcourt: Simulating court with adversarial evolvable lawyer agents. In Findings of the Association for Computational Linguistics: ACL 2025, 2025; pp. 5850–5865.

- Chen, X.; Mao, M.; Li, S.; Shangguan, H. Debate-feedback: A multi-agent framework for efficient legal judgment prediction. arXiv 2025, arXiv:2504.05358.

- Cherian, J. J.; Gibbs, I.; Candès, E. J. Large language model validity via enhanced conformal prediction methods. 2024. Available online: https://arxiv.org/abs/2406.09714.

- Colombo, P.; Pires, T. P.; Boudiaf, M.; Culver, D.; Melo, R.; Corro, C.; Martins, A. F. T.; Esposito, F.; Raposo, V. L.; Morgado, S.; Desa, M. SaulLM-7B: A pioneering Large Language Model for Law. 2024. Available online: https://arxiv.org/abs/2403.03883.

- Colombo, P.; Pires, T. P.; Boudiaf, M.; Culver, D.; Melo, R.; Corro, C.; Martins, A. F.; Esposito, F.; Raposo, V. L.; Morgado, S.; et al. SaulLM-7B: A pioneering large language model for law. arXiv 2024, arXiv:2403.03883.

- Cui, J.; Ning, M.; Li, Z.; Chen, B.; Yan, Y.; Li, H.; Ling, B.; Tian, Y.; Yuan, L. Chatlaw: A multi-agent collaborative legal assistant with knowledge graph enhanced mixture-of-experts large language model. arXiv 2023, arXiv:2306.16092.

- Cui, J.; Ning, M.; Li, Z.; Chen, B.; Yan, Y.; Li, H.; Ling, B.; Tian, Y.; Yuan, L. Chatlaw: A Multi-Agent Collaborative Legal Assistant with Knowledge Graph Enhanced Mixture-of-Experts Large Language Model. 2024. Available online: https://arxiv.org/abs/2306.16092.

- Dai, X.; Xu, B.; Liu, Z.; Yan, Y.; Xie, H.; Yi, X.; Wang, S.; Yu, G. LegalΔ: Enhancing Legal Reasoning in LLMs via Reinforcement Learning with Chain-of-Thought Guided Information Gain. 2025. Available online: https://arxiv.org/abs/2508.12281.

- Dai, Y.; Feng, D.; Huang, J.; Jia, H.; Xie, Q.; Zhang, Y.; Han, W.; Tian, W.; Wang, H. LAiW: A Chinese legal large language models benchmark. In Proceedings of the 31st International Conference on Computational Linguistics, 2025; pp. 10738–10766.

- Dathathri, S.; Madotto, A.; Lan, J.; Hung, J.; Frank, E.; Molino, P.; Yosinski, J.; Liu, R. Plug and play language models: A simple approach to controlled text generation. 2019. Available online: https://arxiv.org/abs/1912.02164.

- Dettmers, T.; Pagnoni, A.; Holtzman, A.; Zettlemoyer, L. QLoRA: Efficient finetuning of quantized LLMs. In Proceedings of the 37th International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2023.

- Dettmers, T.; Pagnoni, A.; Holtzman, A.; Zettlemoyer, L. QLoRA: Efficient Finetuning of Quantized LLMs. 2023. Available online: https://arxiv.org/abs/2305.14314.

- DeYoung, J.; Jain, S.; Rajani, N. F.; Lehman, E.; Xiong, C.; Socher, R.; Wallace, B. C. ERASER: A Benchmark to Evaluate Rationalized NLP Models. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Jurafsky, D., Chai, J., Schluter, N., Tetreault, J., Eds.; Association for Computational Linguistics: Online, 2020; pp. 4443–4458. Available online: https://aclanthology.org/2020.acl-main.408/.

- Dhuliawala, S.; Komeili, M.; Xu, J.; Raileanu, R.; Li, X.; Celikyilmaz, A.; Weston, J. Chain-of-Verification Reduces Hallucination in Large Language Models. In Findings of the Association for Computational Linguistics: ACL 2024; Ku, L.-W., Martins, A., Srikumar, V., Eds.; Association for Computational Linguistics: Bangkok, Thailand, 2024; pp. 3563–3578. Available online: https://aclanthology.org/2024.findings-acl.212/.

- Fang, H.; Balakrishnan, A.; Jhamtani, H.; Bufe, J.; Crawford, J.; Krishnamurthy, J.; Pauls, A.; Eisner, J.; Andreas, J.; Klein, D. The Whole Truth and Nothing But the Truth: Faithful and Controllable Dialogue Response Generation with Dataflow Transduction and Constrained Decoding. In Findings of the Association for Computational Linguistics: ACL 2023; Rogers, A., Boyd-Graber, J., Okazaki, N., Eds.; Association for Computational Linguistics: Toronto, Canada, 2023; pp. 5682–5700. Available online: https://aclanthology.org/2023.findings-acl.351/.

- Fei, Z.; Zhang, S.; Shen, X.; Zhu, D.; Wang, X.; Ge, J.; Ng, V. InternLM-Law: An open-sourced Chinese legal large language model. In Proceedings of the 31st International Conference on Computational Linguistics, 2025; pp. 9376–9392.

- Fernsel, L.; Kalff, Y.; Simbeck, K. Assessing the Auditability of AI-integrating Systems: A Framework and Learning Analytics Case Study. arXiv 2024, arXiv:2411.08906.

- Gao, T.; Wettig, A.; Yen, H.; Chen, D. How to train long-context language models (effectively). Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 7376–7399.

- Geng, S.; Josifoski, M.; Peyrard, M.; West, R. Grammar-Constrained Decoding for Structured NLP Tasks without Finetuning. 2024. Available online: https://arxiv.org/abs/2305.13971.

- Guha, N.; Nyarko, J.; Ho, D.; Ré, C.; Chilton, A.; Chohlas-Wood, A.; Peters, A.; Waldon, B.; Rockmore, D.; Zambrano, D.; et al. LegalBench: A collaboratively built benchmark for measuring legal reasoning in large language models. Advances in Neural Information Processing Systems 2023, 36, 44123–44279.

- Guha, N.; Nyarko, J.; Ho, D. E.; Ré, C.; Chilton, A.; Narayana, A.; Chohlas-Wood, A.; Peters, A.; Waldon, B.; Rockmore, D. N.; Zambrano, D.; Talisman, D.; Hoque, E.; Surani, F.; Fagan, F.; Sarfaty, G.; Dickinson, G. M.; Porat, H.; Hegland, J.; Li, Z. LEGALBENCH: a collaboratively built benchmark for measuring legal reasoning in large language models. In Proceedings of the 37th International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2023.

- Guha, N.; Nyarko, J.; Ho, D. E.; Ré, C.; Chilton, A.; Narayana, A.; Chohlas-Wood, A.; Peters, A.; Waldon, B.; Rockmore, D. N.; Zambrano, D.; Talisman, D.; Hoque, E.; Surani, F.; Fagan, F.; Sarfaty, G.; Dickinson, G. M.; Porat, H.; Hegland, J.; Li, Z. LegalBench: A Collaboratively Built Benchmark for Measuring Legal Reasoning in Large Language Models. 2023. Available online: https://arxiv.org/abs/2308.11462.

- Guo, C.; Sablayrolles, A.; Jégou, H.; Kiela, D. Gradient-based Adversarial Attacks against Text Transformers. 2021. Available online: https://arxiv.org/abs/2104.13733.

- Guo, Q.; Dong, Y.; Tian, L.; Kang, Z.; Zhang, Y.; Wang, S. BANER: Boundary-aware LLMs for few-shot named entity recognition. In Proceedings of the 31st International Conference on Computational Linguistics, 2025; pp. 10375–10389.

- Gupta, A.; Rice, D.; O’Connor, B. -Stance: A Large-Scale Real World Dataset of Stances in Legal Argumentation. Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 31450–31467.

- Gururangan, S.; Marasović, A.; Swayamdipta, S.; Lo, K.; Beltagy, I.; Downey, D.; Smith, N. A. Don’t Stop Pretraining: Adapt Language Models to Domains and Tasks. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Jurafsky, D., Chai, J., Schluter, N., Tetreault, J., Eds.; Association for Computational Linguistics: Online, 2020; pp. 8342–8360. Available online: https://aclanthology.org/2020.acl-main.740/.

- Laniqo at WMT25 Terminology Translation Task: A Multi-Objective Reranking Strategy for Terminology-Aware Translation via Pareto-Optimal Decoding. In Proceedings of the Tenth Conference on Machine Translation; Haddow, B., Kocmi, T., Koehn, P., Monz, C., Eds.; Association for Computational Linguistics: Suzhou, China, 2025; pp. 1276–1283. Available online: https://aclanthology.org/2025.wmt-1.107/.

- Han, S.; Guo, Y.-K.; Huang, S. Hong Kong Generative Artificial Intelligence Technical and Application Guideline; Hong Kong SAR Government Press Release, 2025.

- Hepworth, I.; Olive, K.; Dasgupta, K.; Le, M.; Lodato, M.; Maruseac, M.; Meiklejohn, S.; Chaudhuri, S.; Minkus, T. Securing the AI Software Supply Chain; Google, 2024.

- Hong Kong Productivity Council (HKPC). Hong Kong enterprise cyber security readiness index and AI security survey. Press Release. 2024. Available online: https://www.hkpc.org/en/about-us/media-centre/press-releases/2024/hong-kong-enterprise-cyber-security-readiness-index.

- Hu, E. J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. 2021. Available online: https://arxiv.org/abs/2106.09685.

- Huang, L.; Yu, W.; Ma, W.; Zhong, W.; Feng, Z.; Wang, H.; Chen, Q.; Peng, W.; Feng, X.; Qin, B.; Liu, T. A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Questions. ACM Transactions on Information Systems 2025, 43 (2), 1–55.

- Huang, Q.; Tao, M.; Zhang, C.; An, Z.; Jiang, C.; Chen, Z.; Wu, Z.; Feng, Y. Lawyer LLaMA Technical Report. 2023. Available online: https://arxiv.org/abs/2305.15062.

- Hurst, A.; Lerer, A.; Goucher, A. P.; Perelman, A.; Ramesh, A.; Clark, A.; Ostrow, A.; Welihinda, A.; Hayes, A.; Radford, A.; et al. GPT-4o system card. arXiv 2024, arXiv:2410.21276.

- Ji, J.; Chen, X.; Pan, R.; Zhang, C.; Zhu, H.; Li, J.; Hong, D.; Chen, B.; Zhou, J.; Wang, K.; et al. Safe RLHF-V: Safe Reinforcement Learning from Multi-modal Human Feedback. arXiv 2025, arXiv:2503.17682.

- Ji, J.; Hong, D.; Zhang, B.; Chen, B.; Dai, J.; Zheng, B.; Qiu, T. A.; Zhou, J.; Wang, K.; Li, B.; Han, S.; Guo, Y.; Yang, Y. PKU-SafeRLHF: Towards Multi-Level Safety Alignment for LLMs with Human Preference. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Che, W., Nabende, J., Shutova, E., Pilehvar, M. T., Eds.; Association for Computational Linguistics: Vienna, Austria, 2025; pp. 31983–32016. Available online: https://aclanthology.org/2025.acl-long.1544/.

- Ji, J.; Qiu, T.; Chen, B.; Zhang, B.; Lou, H.; Wang, K.; Duan, Y.; He, Z.; Zhou, J.; Zhang, Z.; et al. AI alignment: A comprehensive survey. arXiv 2023, arXiv:2310.19852.

- Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; Ishii, E.; Bang, Y. J.; Madotto, A.; Fung, P. Survey of Hallucination in Natural Language Generation. ACM Computing Surveys 2023, 55 (12), 1–38. Available online: http://dx.doi.org/10.1145/3571730.

- Jiang, Z.; Xu, F. F.; Gao, L.; Sun, Z.; Liu, Q.; Dwivedi-Yu, J.; Yang, Y.; Callan, J.; Neubig, G. Active Retrieval Augmented Generation. 2023. Available online: https://arxiv.org/abs/2305.06983.

- Ju, C.; Shi, W.; Liu, C.; Ji, J.; Zhang, J.; Zhang, R.; Xu, J.; Yang, Y.; Han, S.; Guo, Y. Benchmarking multi-national value alignment for large language models. In Findings of the Association for Computational Linguistics: ACL 2025, 2025; pp. 20042–20058.

- Kalai, A. T.; Nachum, O.; Vempala, S. S.; Zhang, E. Why language models hallucinate. 2025. Available online: https://arxiv.org/abs/2509.04664.

- Kalai, A. T.; Vempala, S. S. Calibrated Language Models Must Hallucinate. In Proceedings of the 56th Annual ACM Symposium on Theory of Computing; Association for Computing Machinery: New York, NY, USA, 2024; pp. 160–171.

- Kaleeswaran, S.; Santhiar, A.; Kanade, A.; Gulwani, S. Semi-supervised verified feedback generation. In Proceedings of the 2016 24th ACM SIGSOFT International Symposium on Foundations of Software Engineering, 2016; pp. 739–750.

- Katz, D. M.; Hartung, D.; Gerlach, L.; Jana, A.; Bommarito, M. J., II. Natural language processing in the legal domain. arXiv 2023, arXiv:2302.12039.

- Khot, T.; Trivedi, H.; Finlayson, M.; Fu, Y.; Richardson, K.; Clark, P.; Sabharwal, A. Decomposed prompting: A modular approach for solving complex tasks. 2022. Available online: https://arxiv.org/abs/2210.02406.

- Khot, T.; Trivedi, H.; Finlayson, M.; Fu, Y.; Richardson, K.; Clark, P.; Sabharwal, A. Decomposed Prompting: A Modular Approach for Solving Complex Tasks. 2023. Available online: https://arxiv.org/abs/2210.02406.

- Klisura, Đ.; Torres, A. R. B.; Gárate-Escamilla, A. K.; Biswal, R. R.; Yang, K.; Pataci, H.; Rios, A. A Multi-Agent Framework for Mitigating Dialect Biases in Privacy Policy Question-Answering Systems. arXiv 2025, arXiv:2506.02998.

- Kornilova, A.; Eidelman, V. BillSum: A corpus for automatic summarization of US legislation. In Proceedings of the 2nd Workshop on New Frontiers in Summarization, 2019; pp. 48–56.

- Kuhn, L.; Gal, Y.; Farquhar, S. Semantic Uncertainty: Linguistic Invariances for Uncertainty Estimation in Natural Language Generation. 2023. Available online: https://arxiv.org/abs/2302.09664.

- kui Sin, K.; Xuan, X.; Kit, C.; yan Chan, C. H.; kin Ip, H. H. Solving the Unsolvable: Translating Case Law in Hong Kong. 2025. Available online: https://arxiv.org/abs/2501.09444.

- Kullback, S. Kullback-Leibler divergence. Tech. Rep. 1951.

- Lee, J.-S. LexGPT 0.1: pre-trained GPT-J models with Pile of Law. arXiv 2023, arXiv:2306.05431.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; K"uttler, H.; Lewis, M.; Yih, W.-t.; Rockt"aschel, T.; et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems Advances in neural information processing systems 2020, 33, 9459–9474. [Google Scholar]

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.-t.; Rocktäschel, T.; Riedel, S.; Kiela, D. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems Advances in neural information processing systems 2020, 33, 9459–9474. [Google Scholar]

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; tau Yih, W.; Rocktäschel, T.; Riedel, S.; Kiela, D. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Retrieval-augmented generation for knowledge-intensive nlp tasks. 2021. Available online: https://arxiv.org/abs/2005.11401.

- Li, C.; Wang, S.; Zhang, J.; Zong, C. 202406. Improving In-context Learning of Multilingual Generative Language Models with Cross-lingual Alignment. K. Duh, H. Gomez, S. Bethard (), Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers) Proceedings of the 2024 conference of the north american chapter of the association for computational linguistics: Human language technologies (volume 1: Long papers) ( 8058–8076). Mexico City, MexicoAssociation for Computational Linguistics. Available online: https://aclanthology.org/2024.naacl-long.445/ (accessed on). [CrossRef]

- Li, H.; Chen, Y.; Ai, Q.; Wu, Y.; Zhang, R.; Liu, Y. Lexeval: A comprehensive chinese legal benchmark for evaluating large language models. Advances in Neural Information Processing Systems 2024, 3725061–25094. [Google Scholar]

- Li, H.; Chen, Y.; Zeng, J.; Peng, H.; Jing, H.; Hu, W.; Yang, X.; Zeng, Z.; Han, S.; Song, Y. 20251. GSPR: Aligning LLM Safeguards as Generalizable Safety Policy Reasoners. Gspr: Aligning llm safeguards as generalizable safety policy reasoners. Available online: https://arxiv.org/abs/2509.24418.

- Li, H.; Chen, Y.; Zeng, J.; Peng, H.; Jing, H.; Hu, W.; Yang, X.; Zeng, Z.; Han, S.; Song, Y. GSPR: Aligning LLM Safeguards as Generalizable Safety Policy Reasoners. arXiv 2025, arXiv:2509.24418. [Google Scholar] [CrossRef]

- Li, H.; Hu, W.; Jing, H.; Chen, Y.; Hu, Q.; Han, S.; Chu, T.; Hu, P.; Song, Y. 20251. Privaci-bench: Evaluating privacy with contextual integrity and legal compliance. Privaci-bench: Evaluating privacy with contextual integrity and legal compliance. Available online: https://arxiv.org/abs/2502.17041.

- Li, H.; Hu, W.; Jing, H.; Chen, Y.; Hu, Q.; Han, S.; Chu, T.; Hu, P.; Song, Y. Privaci-bench: Evaluating privacy with contextual integrity and legal compliance. arXiv 2025, arXiv:2502.17041. [Google Scholar]

- Li, H.; Shao, Y.; Wu, Y.; Ai, Q.; Ma, Y.; Liu, Y. Lecardv2: A large-scale Chinese legal case retrieval dataset. In Proceedings of the 47th International ACM SIGIR Conference on Research and Development in Information Retrieval, 2024; pp. 2251–2260. [Google Scholar]

- Li, L.; Li, D.; Lin, C.; Li, W.; Xue, W.; Han, S.; Guo, Y. AIRA: Activation-Informed Low-Rank Adaptation for Large Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 1729–1739. [Google Scholar]

- Li, L.; Lin, C.; Li, D.; Huang, Y.-L.; Li, W.; Wu, T.; Zou, J.; Xue, W.; Han, S.; Guo, Y. Efficient Fine-Tuning of Large Models via Nested Low-Rank Adaptation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2025; pp. 22252–22262. [Google Scholar]

- Li, X.; Cao, Y.; Pan, L.; Ma, Y.; Sun, A. Towards Verifiable Generation: A Benchmark for Knowledge-aware Language Model Attribution. In Findings of the Association for Computational Linguistics: ACL 2024; Ku, L.-W., Martins, A., Srikumar, V., Eds.; Association for Computational Linguistics: Bangkok, Thailand, August 2024; pp. 493–516. Available online: https://aclanthology.org/2024.findings-acl.28/. [CrossRef]

- Lin, S.; Hilton, J.; Evans, O. Teaching Models to Express Their Uncertainty in Words. 2022. Available online: https://arxiv.org/abs/2205.14334.

- Ling, C.; Zhao, X.; Zhang, X.; Cheng, W.; Liu, Y.; Sun, Y.; Oishi, M.; Osaki, T.; Matsuda, K.; Ji, J.; Bai, G.; Zhao, L.; Chen, H. Uncertainty Quantification for In-Context Learning of Large Language Models. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers); Duh, K., Gomez, H., Bethard, S., Eds.; Association for Computational Linguistics: Mexico City, Mexico, June 2024; pp. 3357–3370. Available online: https://aclanthology.org/2024.naacl-long.184/. [CrossRef]

- Liu, C.; Kang, Y.; Wang, S.; Qing, L.; Zhao, F.; Wu, C.; Sun, C.; Kuang, K.; Wu, F. More than catastrophic forgetting: Integrating general capabilities for domain-specific LLMs. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024; pp. 7531–7548. [Google Scholar]

- Liu, X.; Liang, T.; He, Z.; Xu, J.; Wang, W.; He, P.; Tu, Z.; Mi, H.; Yu, D. Trust, But Verify: A Self-Verification Approach to Reinforcement Learning with Verifiable Rewards. arXiv 2025, arXiv:2505.13445. [Google Scholar] [CrossRef]

- Lu, H.; Fang, L.; Zhang, R.; Li, X.; Cai, J.; Cheng, H.; Tang, L.; Liu, Z.; Sun, Z.; Wang, T.; et al. Alignment and safety in large language models: Safety mechanisms, training paradigms, and emerging challenges. arXiv 2025, arXiv:2507.19672. [Google Scholar] [CrossRef]

- Lu, X.; West, P.; Zellers, R.; Le Bras, R.; Bhagavatula, C.; Choi, Y. NeuroLogic Decoding: (Un)supervised Neural Text Generation with Predicate Logic Constraints. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Toutanova, K., et al., Eds.; Association for Computational Linguistics: Online, June 2021; pp. 4288–4299. Available online: https://aclanthology.org/2021.naacl-main.339/. [CrossRef]

- Luo, J.; Kou, Z.; Yang, L.; Luo, X.; Huang, J.; Xiao, Z.; Peng, J.; Liu, C.; Ji, J.; Liu, X.; et al. FinMME: Benchmark Dataset for Financial Multi-Modal Reasoning Evaluation. arXiv 2025, arXiv:2505.24714. [Google Scholar]

- Luo, K.; Huang, Q.; Jiang, C.; Feng, Y. Automating Legal Interpretation with LLMs: Retrieval, Generation, and Evaluation. arXiv 2025, arXiv:2501.01743. [Google Scholar] [CrossRef]

- Lyu, Q.; Havaldar, S.; Stein, A.; Zhang, L.; Rao, D.; Wong, E.; Apidianaki, M.; Callison-Burch, C. Faithful Chain-of-Thought Reasoning. 2023. Available online: https://arxiv.org/abs/2301.13379.

- Magesh, V.; Surani, F.; Dahl, M.; Suzgun, M.; Manning, C. D.; Ho, D. E. Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools. 2024. Available online: https://arxiv.org/abs/2405.20362.

- Magesh, V.; Surani, F.; Dahl, M.; Suzgun, M.; Manning, C. D.; Ho, D. E. Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools. Journal of Empirical Legal Studies 2025, 22(2), 216–242. [Google Scholar] [CrossRef]

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I. D.; Gebru, T. Model Cards for Model Reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency; ACM, January 2019; pp. 220–229. Available online: http://dx.doi.org/10.1145/3287560.3287596. [CrossRef]

- Mudgal, S.; Lee, J.; Ganapathy, H.; Li, Y.; Wang, T.; Huang, Y.; Chen, Z.; Cheng, H.-T.; Collins, M.; Strohman, T.; Chen, J.; Beutel, A.; Beirami, A. Controlled Decoding from Language Models. 2024. Available online: https://arxiv.org/abs/2310.17022.

- Mündler, N.; He, J.; Jenko, S.; Vechev, M. Self-contradictory Hallucinations of Large Language Models: Evaluation, Detection and Mitigation. 2024. Available online: https://arxiv.org/abs/2305.15852.

- Nguyen, H. T.; Fungwacharakorn, W.; Zin, M. M.; Goebel, R.; Toni, F.; Stathis, K.; Satoh, K. LLMs for legal reasoning: A unified framework and future perspectives. Computer Law & Security Review 2025, 58, 106165. [Google Scholar]

- Nguyen, M.; Gupta, S.; Le, H. CAAD: Context-Aware Adaptive Decoding for Truthful Text Generation. 2025. Available online: https://arxiv.org/abs/2508.02184.

- Ni, A.; Iyer, S.; Radev, D.; Stoyanov, V.; Yih, W.-t.; Wang, S.; Lin, X. V. Lever: Learning to verify language-to-code generation with execution. In International Conference on Machine Learning, 2023; pp. 26106–26128. [Google Scholar]

- Nigam, S. K.; Patnaik, B. D.; Mishra, S.; Thomas, A. V.; Shallum, N.; Ghosh, K.; Bhattacharya, A. NyayaRAG: Realistic Legal Judgment Prediction with RAG under the Indian Common Law System. arXiv 2025, arXiv:2508.00709. [Google Scholar] [CrossRef]

- Nijkamp, E.; Pang, B.; Hayashi, H.; Tu, L.; Wang, H.; Zhou, Y.; Savarese, S.; Xiong, C. CodeGen: An Open Large Language Model for Code with Multi-Turn Program Synthesis. 2023. Available online: https://arxiv.org/abs/2203.13474.

- Niklaus, J.; Matoshi, V.; Stürmer, M.; Chalkidis, I.; Ho, D. E. Multilegalpile: A 689gb multilingual legal corpus. arXiv 2023, arXiv:2306.02069. [Google Scholar]

- Niklaus, J.; Matoshi, V.; Stürmer, M.; Chalkidis, I.; Ho, D. E. MultiLegalPile: A 689GB Multilingual Legal Corpus. 2024. Available online: https://arxiv.org/abs/2306.02069.

- Nye, M.; Andreassen, A. J.; Gur-Ari, G.; Michalewski, H.; Austin, J.; Bieber, D.; Dohan, D.; Lewkowycz, A.; Bosma, M.; Luan, D.; et al. Show your work: Scratchpads for intermediate computation with language models. 2021. [Google Scholar] [CrossRef]

- Oehri, M.; Conti, G.; Pather, K.; Rossi, A.; Serra, L.; Parody, A.; Johannesen, R.; Petersen, A.; Krasniqi, A. Trusted Uncertainty in Large Language Models: A Unified Framework for Confidence Calibration and Risk-Controlled Refusal. 2025. Available online: https://arxiv.org/abs/2509.01455.

- Östling, A.; Sargeant, H.; Xie, H.; Bull, L.; Terenin, A.; Jonsson, L.; Magnusson, M.; Steffek, F. The Cambridge law corpus: A dataset for legal AI research. Advances in Neural Information Processing Systems 2023, 36, 41355–41385. [Google Scholar] [CrossRef]

- Pfeiffer, J.; Kamath, A.; Rücklé, A.; Cho, K.; Gurevych, I. AdapterFusion: Non-Destructive Task Composition for Transfer Learning. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume; Merlo, P., Tiedemann, J., Tsarfaty, R., Eds.; Association for Computational Linguistics: Online, April 2021; pp. 487–503. Available online: https://aclanthology.org/2021.eacl-main.39/. [CrossRef]

- Poesia, G.; Gandhi, K.; Zelikman, E.; Goodman, N. D. Certified deductive reasoning with language models. 2023. Available online: https://arxiv.org/abs/2306.04031.

- Poesia, G.; Gandhi, K.; Zelikman, E.; Goodman, N. D. Certified Reasoning with Language Models. arXiv 2023, abs/2306.04031. Available online: https://api.semanticscholar.org/CorpusID:259095869.

- Rabelo, J.; Kim, M.-Y.; Goebel, R.; Yoshioka, M.; Kano, Y.; Satoh, K. COLIEE 2020: Methods for Legal Document Retrieval and Entailment. In New Frontiers in Artificial Intelligence: JSAI-isAI 2020 Workshops, JURISIN, LENLS 2020 Workshops, Virtual Event, November 15–17, 2020, Revised Selected Papers; Springer-Verlag: Berlin, Heidelberg, 2020; pp. 196–210. Available online: https://doi.org/10.1007/978-3-030-79942-7_13. [CrossRef]

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P. J. Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 2020, 21(1). [Google Scholar]

- Raji, I. D.; Smart, A.; White, R. N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 2020; pp. 33–44.

- Raptopoulos, P.; Filandrianos, G.; Lymperaiou, M.; Stamou, G. PAKTON: A Multi-Agent Framework for Question Answering in Long Legal Agreements. arXiv 2025, arXiv:2506.00608. [Google Scholar] [CrossRef]

- ritun16. Chain-of-Verification Implementation. GitHub repository. 2024. Available online: https://github.com/ritun16/chain-of-verification.

- Ryu, C.; Lee, S.; Pang, S.; Choi, C.; Choi, H.; Min, M.; Sohn, J.-Y. Retrieval-based evaluation for LLMs: a case study in Korean legal QA. In Proceedings of the Natural Legal Language Processing Workshop 2023, 2023; pp. 132–137. [Google Scholar]

- Sanh, V.; Webson, A.; Raffel, C.; Bach, S. H.; Sutawika, L.; Alyafeai, Z.; Chaffin, A.; Stiegler, A.; Scao, T. L.; Raja, A.; Dey, M.; Bari, M. S.; Xu, C.; Thakker, U.; Sharma, S. S.; Szczechla, E.; Kim, T.; Chhablani, G.; Nayak, N.; Rush, A. M. Multitask Prompted Training Enables Zero-Shot Task Generalization. 2022. Available online: https://arxiv.org/abs/2110.08207.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. Advances in Neural Information Processing Systems 2023, 36, 68539–68551. [Google Scholar]

- Schöning, J.; Kruse, N. Compliance of AI Systems. arXiv 2025, arXiv:2503.05571. [Google Scholar] [CrossRef]

- Schweighofer, K.; Brune, B.; Gruber, L.; Schmid, S.; Aufreiter, A.; Gruber, A.; Doms, T.; Eder, S.; Mayer, F.; Stadlbauer, X.-P.; Schwald, C.; Zellinger, W.; Nessler, B.; Hochreiter, S. Safe and Certifiable AI Systems: Concepts, Challenges, and Lessons Learned. 2025. Available online: https://arxiv.org/abs/2509.08852.

- Yue, S.; Huang, T.; Jia, Z.; Wang, S.; Liu, S.; Song, Y.; Huang, X.-J.; Wei, Z. Multi-agent simulator drives language models for legal intensive interaction. Findings of the Association for Computational Linguistics: NAACL 2025, 2025, 6537–6570. [Google Scholar]

- Shi, J.; Guo, Q.; Liao, Y.; Wang, Y.; Chen, S.; Liang, S. Legal-LM: Knowledge graph enhanced large language models for law consulting. In International Conference on Intelligent Computing, 2024; pp. 175–186. [Google Scholar]

- Shi, W.; Zhu, H.; Ji, J.; Li, M.; Zhang, J.; Zhang, R.; Zhu, J.; Xu, J.; Han, S.; Guo, Y. LegalReasoner: Step-wised Verification-Correction for Legal Judgment Reasoning. arXiv 2025, arXiv:2506.07443. [Google Scholar]

- Shin, R.; Lin, C.; Thomson, S.; Chen, C.; Roy, S.; Platanios, E. A.; Pauls, A.; Klein, D.; Eisner, J.; Van Durme, B. Constrained Language Models Yield Few-Shot Semantic Parsers. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing; Moens, M.-F., Huang, X., Specia, L., Yih, S. W.-t., Eds.; Association for Computational Linguistics: Online and Punta Cana, Dominican Republic, November 2021; pp. 7699–7715. Available online: https://aclanthology.org/2021.emnlp-main.608/. [CrossRef]

- Shu, D.; Zhao, H.; Liu, X.; Demeter, D.; Du, M.; Zhang, Y. LawLLM: Law Large Language Model for the US Legal System. In Proceedings of the 33rd ACM International Conference on Information and Knowledge Management; ACM, October 2024; pp. 4882–4889. Available online: http://dx.doi.org/10.1145/3627673.3680020. [CrossRef]

- Smith, J.; Jones, A.; Williams, B. A Comprehensive Overview of the Development and Impact of Large Language Models. Journal of Artificial Intelligence Research 2023, 70, 1–50. [Google Scholar]

- Song, F.; Yu, B.; Lang, H.; Yu, H.; Huang, F.; Wang, H.; Li, Y. Scaling data diversity for fine-tuning language models in human alignment. arXiv 2024, arXiv:2403.11124. [Google Scholar] [CrossRef]

- South, T. Private, Verifiable, and Auditable AI Systems. 2025. Available online: https://arxiv.org/abs/2509.00085.

- Su, W.; Yue, B.; Ai, Q.; Hu, Y.; Li, J.; Wang, C.; Zhang, K.; Wu, Y.; Liu, Y. Judge: Benchmarking judgment document generation for Chinese legal system. In Proceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval, 2025; pp. 3573–3583. [Google Scholar]

- Sun, J.; Dai, C.; Luo, Z.; Chang, Y.; Li, Y. LawLuo: A Multi-Agent Collaborative Framework for Multi-Round Chinese Legal Consultation. 2024. Available online: https://arxiv.org/abs/2407.16252.

- Sun, K.; Wu, J.; Guo, M.; Li, J.; Huang, J. Accurate Target Privacy Preserving Federated Learning Balancing Fairness and Utility. 2025. Available online: https://arxiv.org/abs/2510.26841.

- Surden, H. Artificial intelligence and law: An overview. Ga. St. UL Rev. 2018, 35, 1305. [Google Scholar]

- The Law Society of Hong Kong (LSHK). The impact of artificial intelligence on the legal profession: Position paper. 2024. Available online: https://www.hklawsoc.org.hk/-/media/HKLS/Home/News/2024/LSHK-Position-Paper_AI_EN.pdf.

- Tian, K.; Mitchell, E.; Zhou, A.; Sharma, A.; Rafailov, R.; Yao, H.; Finn, C.; Manning, C. D. Just Ask for Calibration: Strategies for Eliciting Calibrated Confidence Scores from Language Models Fine-Tuned with Human Feedback. 2023. Available online: https://arxiv.org/abs/2305.14975.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.-A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. Llama: Open and efficient foundation language models. arXiv 2023, arXiv:2302.13971. [Google Scholar] [CrossRef]

- Tran, H. T. H.; Chatterjee, N.; Pollak, S.; Doucet, A. DeBERTa beats behemoths: A comparative analysis of fine-tuning, prompting, and PEFT approaches on LegalLensNER. Proceedings of the Natural Legal Language Processing Workshop 2024, 2024, 371–380. [Google Scholar]

- Tuggener, D.; Von Däniken, P.; Peetz, T.; Cieliebak, M. LEDGAR: a large-scale multi-label corpus for text classification of legal provisions in contracts. In Proceedings of the Twelfth Language Resources and Evaluation Conference, 2020; pp. 1235–1241. [Google Scholar]

- European Union. Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation). 2016. Available online: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32016R0679. Official Journal of the European Union: L 119, 4 May 2016, pp. 1–88; applicable from 25 May 2018.

- European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). 2024. Available online: https://www.aiact-info.eu/full-text-and-pdf-download/. Content consistent with Official Journal of the European Union (L 2024/1689, 12 July 2024); Entered into force: 1 August 2024; PDF and full text provided by EU-authorised platform (aiact-info.eu).

- Valmeekam, K.; Stechly, K.; Kambhampati, S. LLMs Still Can’t Plan; Can LRMs? A Preliminary Evaluation of OpenAI’s o1 on PlanBench. 2024. Available online: https://arxiv.org/abs/2409.13373.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30. [Google Scholar]

- Wang, S.; Fan, W.; Feng, Y.; Lin, S.; Ma, X.; Wang, S.; Yin, D. Knowledge Graph Retrieval-Augmented Generation for LLM-based Recommendation. 2025. Available online: https://arxiv.org/abs/2501.02226.

- Wang, T.; Yu, P.; Tan, X. E.; O’Brien, S.; Pasunuru, R.; Dwivedi-Yu, J.; Golovneva, O.; Zettlemoyer, L.; Fazel-Zarandi, M.; Celikyilmaz, A. Shepherd: A critic for language model generation. arXiv 2023, arXiv:2308.04592. [Google Scholar] [CrossRef]

- Wang, X.; Sen, P.; Li, R.; Yilmaz, E. Adaptive Retrieval-Augmented Generation for Conversational Systems. In Findings of the Association for Computational Linguistics: NAACL 2025; Chiruzzo, L., Ritter, A., Wang, L., Eds.; Association for Computational Linguistics: Albuquerque, New Mexico, April 2025; pp. 491–503. Available online: https://aclanthology.org/2025.findings-naacl.30/. [CrossRef]

- Wang, X.; Zhang, X.; Hoo, V.; Shao, Z.; Zhang, X. LegalReasoner: A multi-stage framework for legal judgment prediction via large language models and knowledge integration. IEEE Access. 2024. [Google Scholar] [CrossRef]

- Wang, Y.; Kordi, Y.; Mishra, S.; Liu, A.; Smith, N. A.; Khashabi, D.; Hajishirzi, H. Self-Instruct: Aligning Language Models with Self-Generated Instructions. 2023. Available online: https://arxiv.org/abs/2212.10560.

- Wei, J.; Bosma, M.; Zhao, V. Y.; Guu, K.; Yu, A. W.; Lester, B.; Du, N.; Dai, A. M.; Le, Q. V. Finetuned Language Models Are Zero-Shot Learners. 2022. Available online: https://arxiv.org/abs/2109.01652.

- Welleck, S.; Liu, J.; Lu, X.; Hajishirzi, H.; Choi, Y. NaturalProver: Grounded Mathematical Proof Generation with Language Models. 2022. Available online: https://arxiv.org/abs/2205.12910.

- Welleck, S.; Liu, J.; Lu, X.; Hajishirzi, H.; Choi, Y. Naturalprover: Grounded mathematical proof generation with language models. Advances in Neural Information Processing Systems 2022, 35, 4913–4927. [Google Scholar]

- Wu, Y.; Wang, C.; Gumusel, E.; Liu, X. Knowledge-infused legal wisdom: Navigating llm consultation through the lens of diagnostics and positive-unlabeled reinforcement learning. arXiv 2024, arXiv:2406.03600. [Google Scholar]

- Xie, N.; Bai, Y.; Gao, H.; Xue, Z.; Fang, F.; Zhao, Q.; Li, Z.; Zhu, L.; Ni, S.; Yang, M. Delilaw: A Chinese legal counselling system based on a large language model. In Proceedings of the 33rd ACM International Conference on Information and Knowledge Management, 2024; pp. 5299–5303. [Google Scholar]

- Xu, Q.; Liu, Q.; Fei, H.; Yu, H.; Guan, S.; Wei, X. CLEAR: A Framework Enabling Large Language Models to Discern Confusing Legal Paragraphs. Findings of the Association for Computational Linguistics: EMNLP 2025, 2025, 8937–8953. [Google Scholar]

- Xu, Q.; Wei, X.; Yu, H.; Liu, Q.; Fei, H. Divide and Conquer: Legal Concept-guided Criminal Court View Generation. Findings of the Association for Computational Linguistics: EMNLP 2024, 2024, 3395–3410. [Google Scholar]

- Xu, X.; Zhao, L.; Xu, H.; Chen, C. CLaw: Benchmarking Chinese Legal Knowledge in Large Language Models-A Fine-grained Corpus and Reasoning Analysis. arXiv 2025, arXiv:2509.21208. [Google Scholar]

- Yang, X.-W.; Shao, J.-J.; Guo, L.-Z.; Zhang, B.-W.; Zhou, Z.; Jia, L.-H.; Dai, W.-Z.; Li, Y. Neuro-symbolic artificial intelligence: Towards improving the reasoning abilities of large language models. 2025. Available online: https://arxiv.org/abs/2508.13678.

- Yang, Z.; Dong, L.; Du, X.; Cheng, H.; Cambria, E.; Liu, X.; Gao, J.; Wei, F. Language Models as Inductive Reasoners. 2024. Available online: https://arxiv.org/abs/2212.10923.

- Yao, R.; Wu, Y.; Wang, C.; Xiong, J.; Wang, F.; Liu, X. Elevating legal LLM responses: harnessing trainable logical structures and semantic knowledge with legal reasoning. arXiv 2025, arXiv:2502.07912. [Google Scholar] [CrossRef]

- Ye, F.; Li, S. MileCut: A multi-view truncation framework for legal case retrieval. Proceedings of the ACM Web Conference 2024, 2024, 1341–1349. [Google Scholar]

- Yu, F.; Seedat, N.; Herrmannova, D.; Schilder, F.; Schwarz, J. R. Beyond Pointwise Scores: Decomposed Criteria-Based Evaluation of LLM Responses. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing: Industry Track, 2025; pp. 1931–1954. [Google Scholar]

- Yuan, W.; Cao, J.; Jiang, Z.; Kang, Y.; Lin, J.; Song, K.; Lin, T.; Yan, P.; Sun, C.; Liu, X. Can large language models grasp legal theories? Enhance legal reasoning with insights from multi-agent collaboration. Findings of the Association for Computational Linguistics: EMNLP 2024, 2024, 7577–7597. [Google Scholar]

- Yue, L.; Liu, Q.; Du, Y.; Gao, W.; Liu, Y.; Yao, F. FedJudge: Federated Legal Large Language Model. 2024. Available online: https://arxiv.org/abs/2309.08173.

- Yue, S.; Chen, W.; Wang, S.; Li, B.; Shen, C.; Liu, S.; Zhou, Y.; Xiao, Y.; Yun, S.; Huang, X.; Wei, Z. DISC-LawLLM: Fine-tuning Large Language Models for Intelligent Legal Services. 2023. Available online: https://arxiv.org/abs/2309.11325.

- Zaheer, M.; Guruganesh, G.; Dubey, A.; Ainslie, J.; Alberti, C.; Ontanon, S.; Pham, P.; Ravula, A.; Wang, Q.; Yang, L.; Ahmed, A. Big bird: transformers for longer sequences. In Proceedings of the 34th International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2020. [Google Scholar]

- Zhang, K.; Chen, C.; Wang, Y.; Tian, Q.; Bai, L. Cfgl-lcr: A counterfactual graph learning framework for legal case retrieval. In Proceedings of the 29th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2023; pp. 3332–3341. [Google Scholar]

- Zhang, K.; Xie, G.; Yu, W.; Xu, M.; Tang, X.; Li, Y.; Xu, J. Legal Mathematical Reasoning with LLMs: Procedural Alignment through Two-Stage Reinforcement Learning. arXiv 2025, arXiv:2504.02590. [Google Scholar]

- Zhang, R.; Sullivan, D.; Jackson, K.; Xie, P.; Chen, M. Defense against Prompt Injection Attacks via Mixture of Encodings. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 2: Short Papers); Chiruzzo, L., Ritter, A., Wang, L., Eds.; Association for Computational Linguistics: Albuquerque, New Mexico, April 2025; pp. 244–252. Available online: https://aclanthology.org/2025.naacl-short.21/. [CrossRef]

- Zhang, T.; Patil, S. G.; Jain, N.; Shen, S.; Zaharia, M.; Stoica, I.; Gonzalez, J. E. RAFT: Adapting Language Model to Domain Specific RAG. 2024. Available online: https://arxiv.org/abs/2403.10131.

- Zhang, X.; Ruan, J.; Ma, X.; Zhu, Y.; Zhao, H.; Li, H.; Chen, J.; Zeng, K.; Cai, X. When to continue thinking: Adaptive thinking mode switching for efficient reasoning. arXiv 2025, arXiv:2505.15400. [Google Scholar] [CrossRef]

- Zhao, R.; Li, X.; Joty, S.; Qin, C.; Bing, L. Verify-and-Edit: A Knowledge-Enhanced Chain-of-Thought Framework. 2023. Available online: https://arxiv.org/abs/2305.03268.

- Zhao, W.; Hu, Y.; Deng, Y.; Guo, J.; Sui, X.; Han, X.; Zhang, A.; Zhao, Y.; Qin, B.; Chua, T.-S.; et al. Beware of your Po! Measuring and mitigating AI safety risks in role-play fine-tuning of LLMs. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, Vienna, Austria, 2025; pp. 5131–5157. [Google Scholar]

- Zhao, W. X.; Zhou, K.; Li, J.; Tang, T.; Wang, X.; Hou, Y.; Min, Y.; Zhang, B.; Zhang, J.; Dong, Z.; et al. A survey of large language models. arXiv 2023, arXiv:2303.18223. [Google Scholar] [PubMed]

- Zheng, L.; Guha, N.; Anderson, B. R.; Henderson, P.; Ho, D. E. When does pretraining help? Assessing self-supervised learning for law and the CaseHOLD dataset of 53,000+ legal holdings. In Proceedings of the Eighteenth International Conference on Artificial Intelligence and Law, 2021; pp. 159–168.

- Zhong, H.; Xiao, C.; Tu, C.; Zhang, T.; Liu, Z.; Sun, M. How does NLP benefit legal system: A summary of legal artificial intelligence. arXiv 2020, arXiv:2004.12158. [Google Scholar] [CrossRef]

- Zhou, J. P.; Staats, C.; Li, W.; Szegedy, C.; Weinberger, K. Q.; Wu, Y. Don’t Trust: Verify – Grounding LLM Quantitative Reasoning with Autoformalization. 2024. Available online: https://arxiv.org/abs/2403.18120.

- Zhou, Z.; Shi, J.-X.; Song, P.-X.; Yang, X.-W.; Jin, Y.-X.; Guo, L.-Z.; Li, Y.-F. LawGPT: A Chinese Legal Knowledge-Enhanced Large Language Model. 2024. Available online: https://arxiv.org/abs/2406.04614.

- Zöller, M.-A.; Iurshina, A.; Röder, I. Trustworthy Generative AI for Financial Services (Practitioner Track). In Symposium on Scaling AI Assessments (SAIA 2024), 2025; pp. 2–1.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).