Submitted: 10 February 2026

Posted: 11 February 2026

Abstract

Keywords:

1. Introduction

2. Literature Review

3. Research Motivation

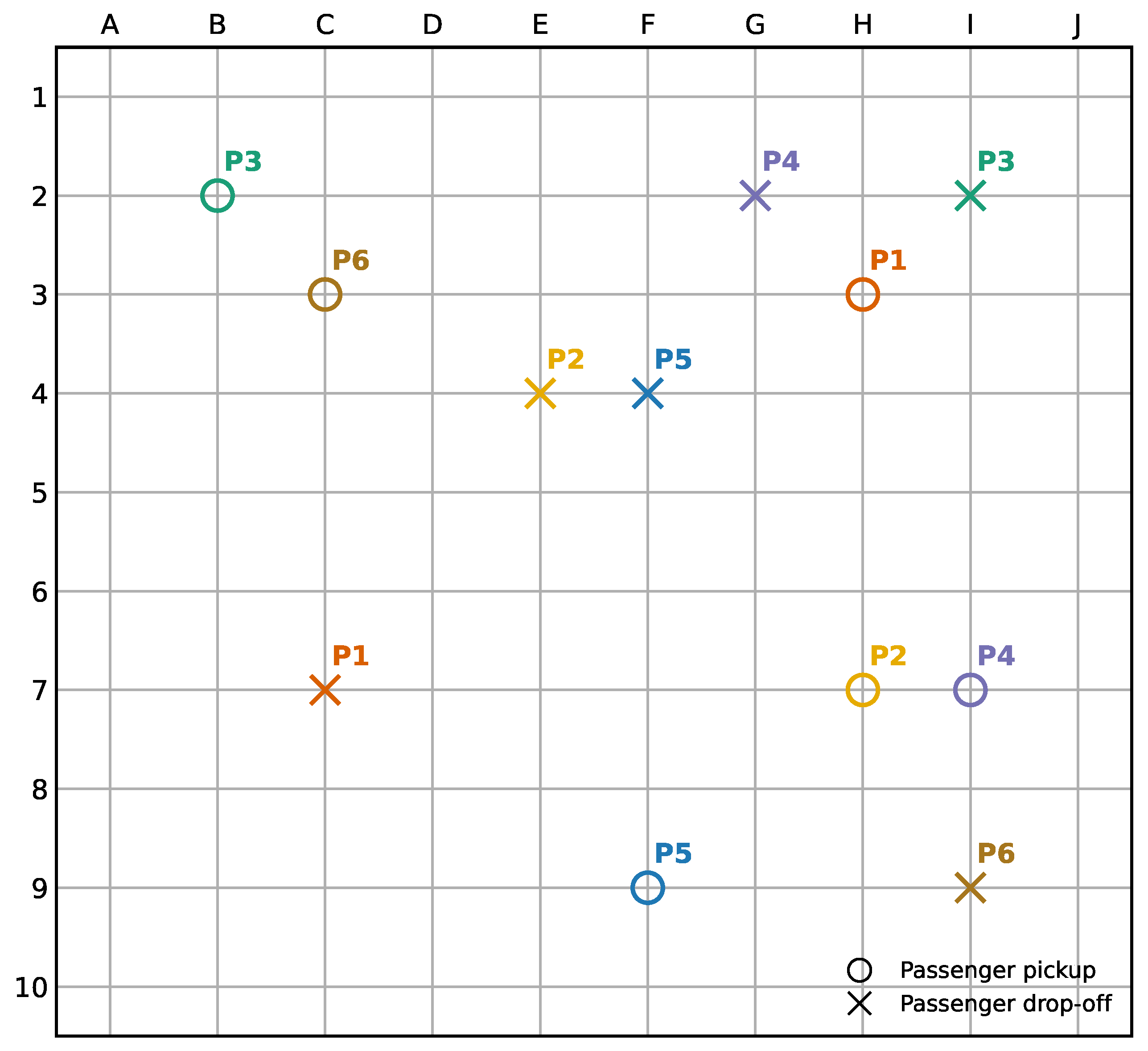

4. Scope and Problem Definition

5. Materials and Methods

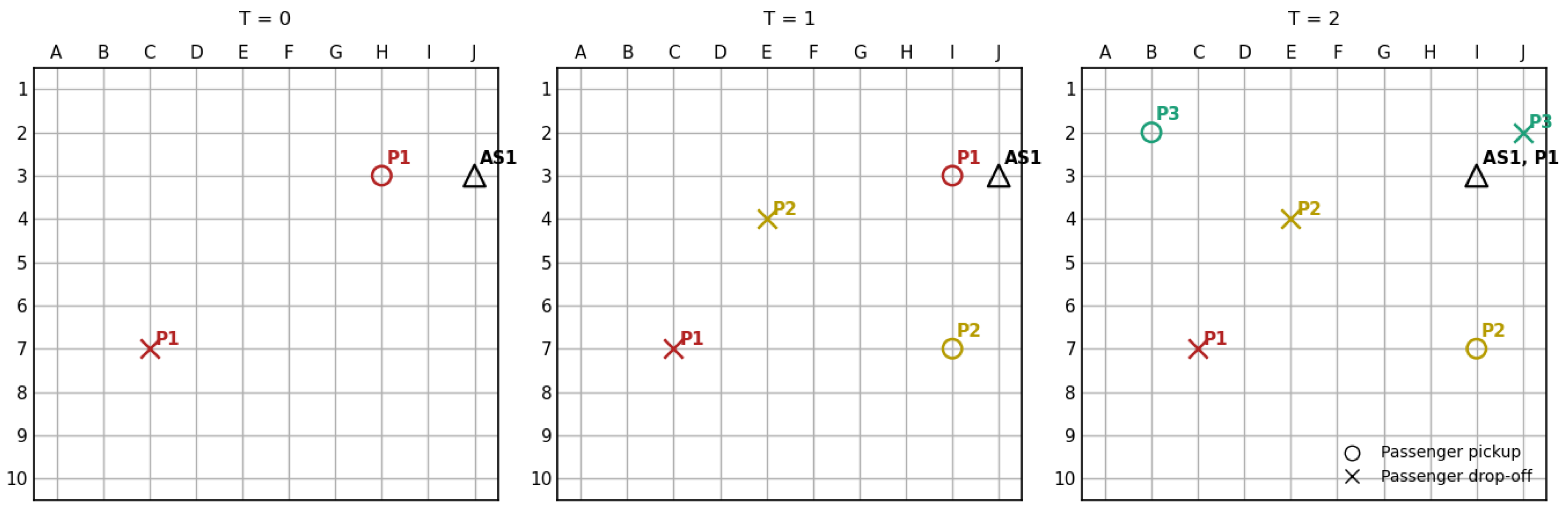

5.1. ASP Problem Formulation as a Markov Decision Process (MDP)

5.2. Proposed Method: Imitation Learning–Assisted Deep Reinforcement Learning

5.2.1. Overview of the Learning Pipeline

5.2.2. Imitation Learning via Generative Adversarial Imitation Learning (GAIL)

5.2.3. Policy Refinement via Proximal Policy Optimization (PPO)

5.3. ASP Environment Setup

5.4. DRL Observation Space

5.5. DRL Action Space

5.6. DRL Reward Function

6. Results

7. Discussion

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ASP | Autonomous Shuttle Problem |
| DRL | Deep Reinforcement Learning |
| GAIL | Generative Adversarial Imitation Learning |
| MDP | Markov Decision Process |
| MSDVRP | Multi-agent Stochastic and Dynamic Vehicle Routing Problem |

| Training Sequence | Training Algorithm | # of Steps | Learning Rate | n Steps | Batch Size | Clip Range | Ent. Coef. |
|---|---|---|---|---|---|---|---|
| 1 | GAIL | 200,000 | | 1024 | 4096 | 0.2 | 0.02 |
| 2 | GAIL | 200,000 | | 1024 | 4096 | 0.2 | 0.01 |
| 3 | GAIL | 400,000 | | 1024 | 4096 | 0.2 | 0.001 |
| 4 | DRL | 200,000 | | 1024 | 4096 | 0.2 | 0.02 |
| 5 | DRL | 200,000 | | 1024 | 4096 | 0.2 | 0.01 |
| 6 | DRL | 400,000 | | 1024 | 4096 | 0.2 | 0.001 |
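The staged schedule in the hyperparameter table can be written down as plain data, which makes the two properties it implies easy to check: each algorithm receives the same step budget, and the entropy coefficient shrinks from stage to stage. The sketch below is illustrative only. It assumes that the values 1024, 4096, 0.2 and the last number in each row correspond to n steps, batch size, clip range and entropy coefficient; the learning rate is not recoverable from the table and is therefore omitted. The names `Stage`, `SCHEDULE`, `total_steps` and `entropy_is_annealed` are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One row of the training schedule (field mapping is an assumption)."""
    sequence: int
    algorithm: str   # "GAIL" or "DRL", as labelled in the table
    total_steps: int
    n_steps: int
    batch_size: int
    clip_range: float
    ent_coef: float


# Values copied from the table; the learning rate column is left out
# because its values are not present in the source.
SCHEDULE = [
    Stage(1, "GAIL", 200_000, 1024, 4096, 0.2, 0.02),
    Stage(2, "GAIL", 200_000, 1024, 4096, 0.2, 0.01),
    Stage(3, "GAIL", 400_000, 1024, 4096, 0.2, 0.001),
    Stage(4, "DRL", 200_000, 1024, 4096, 0.2, 0.02),
    Stage(5, "DRL", 200_000, 1024, 4096, 0.2, 0.01),
    Stage(6, "DRL", 400_000, 1024, 4096, 0.2, 0.001),
]


def total_steps(schedule, algorithm):
    """Sum the step budget over all stages that use the given algorithm."""
    return sum(s.total_steps for s in schedule if s.algorithm == algorithm)


def entropy_is_annealed(schedule, algorithm):
    """True if the entropy coefficient strictly decreases across the stages."""
    coefs = [s.ent_coef for s in schedule if s.algorithm == algorithm]
    return all(a > b for a, b in zip(coefs, coefs[1:]))


if __name__ == "__main__":
    for name in ("GAIL", "DRL"):
        print(name, total_steps(SCHEDULE, name), entropy_is_annealed(SCHEDULE, name))
```

Under this reading, both the GAIL and the DRL phases run for 800,000 steps in total, with the entropy coefficient reduced at each stage.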

| Episode | DRL Avg. Wait Time | DRL Avg. In-Vehicle Time | DRL Episode End Time | DRL Serviced Time (W+IV) | DARP Avg. Wait Time | DARP Avg. In-Vehicle Time | DARP Episode End Time | DARP Serviced Time (W+IV) |
|---|---|---|---|---|---|---|---|---|
| 1 | 30 | 27 | 96 | 57 | 17 | 21 | 59 | 38 |
| 2 | 25 | 8 | 73 | 33 | 8 | 22 | 49 | 30 |
| 3 | 20 | 8 | 70 | 28 | 24 | 17 | 59 | 41 |
| 4 | 34 | 12 | 102 | 46 | 8 | 14 | 43 | 22 |
| 5 | 21 | 11 | 72 | 32 | 16 | 8 | 52 | 24 |
| 6 | 32 | 14 | 98 | 46 | 18 | 22 | 62 | 40 |
| 7 | 27 | 26 | 107 | 53 | 19 | 12 | 58 | 31 |
| 8 | 20 | 16 | 69 | 36 | 22 | 21 | 64 | 43 |
| 9 | 37 | 14 | 101 | 51 | 26 | 11 | 61 | 37 |
| 10 | 24 | 14 | 78 | 38 | 31 | 19 | 73 | 50 |
| 11 | 31 | 14 | 95 | 45 | 28 | 16 | 68 | 44 |
| 12 | 18 | 18 | 65 | 36 | 26 | 17 | 60 | 43 |
| 13 | 17 | 26 | 87 | 43 | 32 | 15 | 67 | 47 |
| 14 | 16 | 49 | 98 | 65 | 9 | 13 | 52 | 22 |
| 15 | 31 | 22 | 84 | 53 | 17 | 22 | 57 | 39 |
| 16 | 19 | 10 | 72 | 29 | 26 | 13 | 61 | 39 |
| 17 | 22 | 30 | 78 | 52 | 25 | 10 | 57 | 35 |
| 18 | 24 | 16 | 77 | 40 | 8 | 24 | 49 | 32 |
| 19 | 16 | 17 | 66 | 33 | 18 | 17 | 58 | 35 |
| 20 | 36 | 13 | 98 | 49 | 12 | 21 | 58 | 33 |

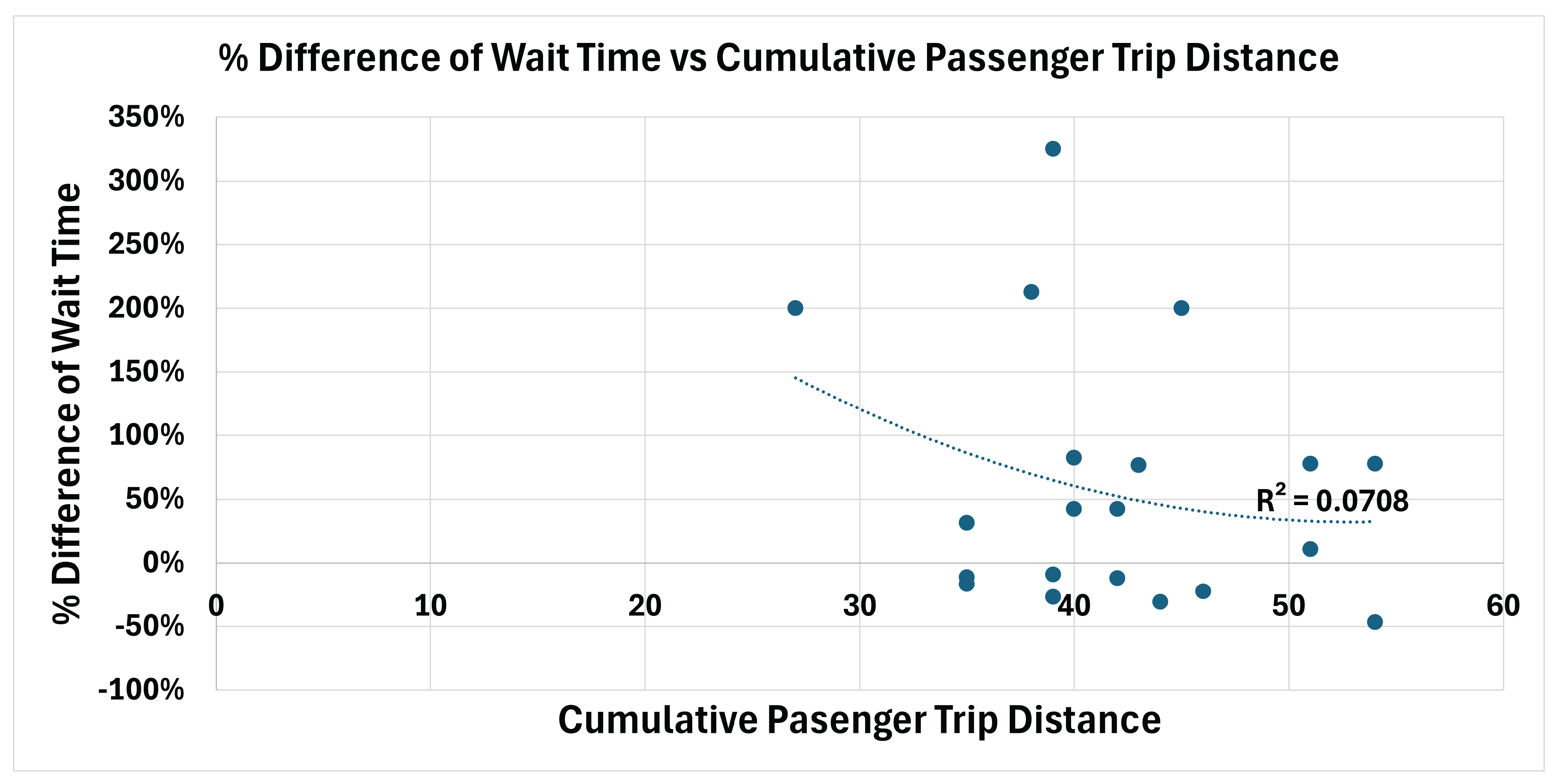

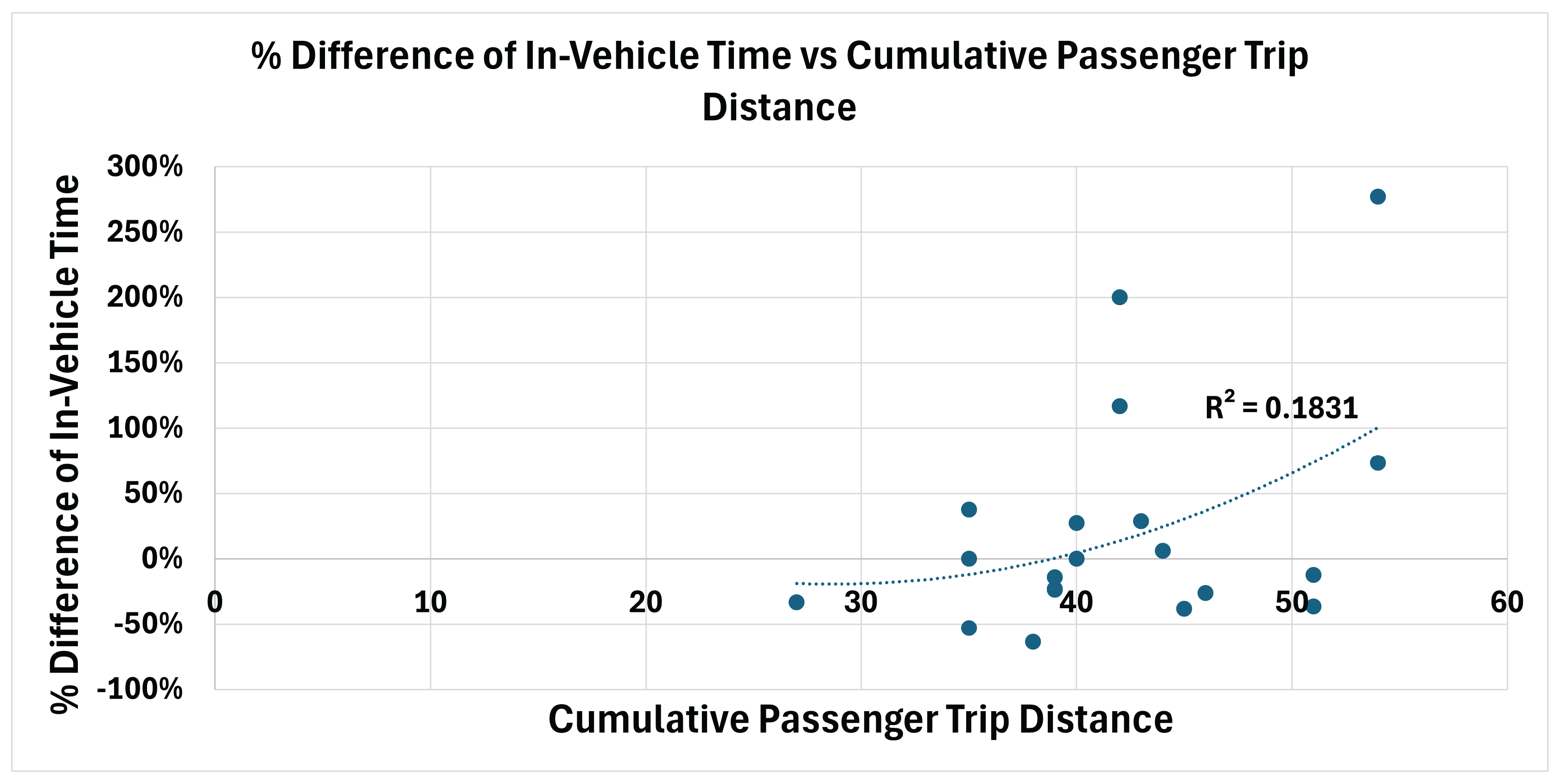

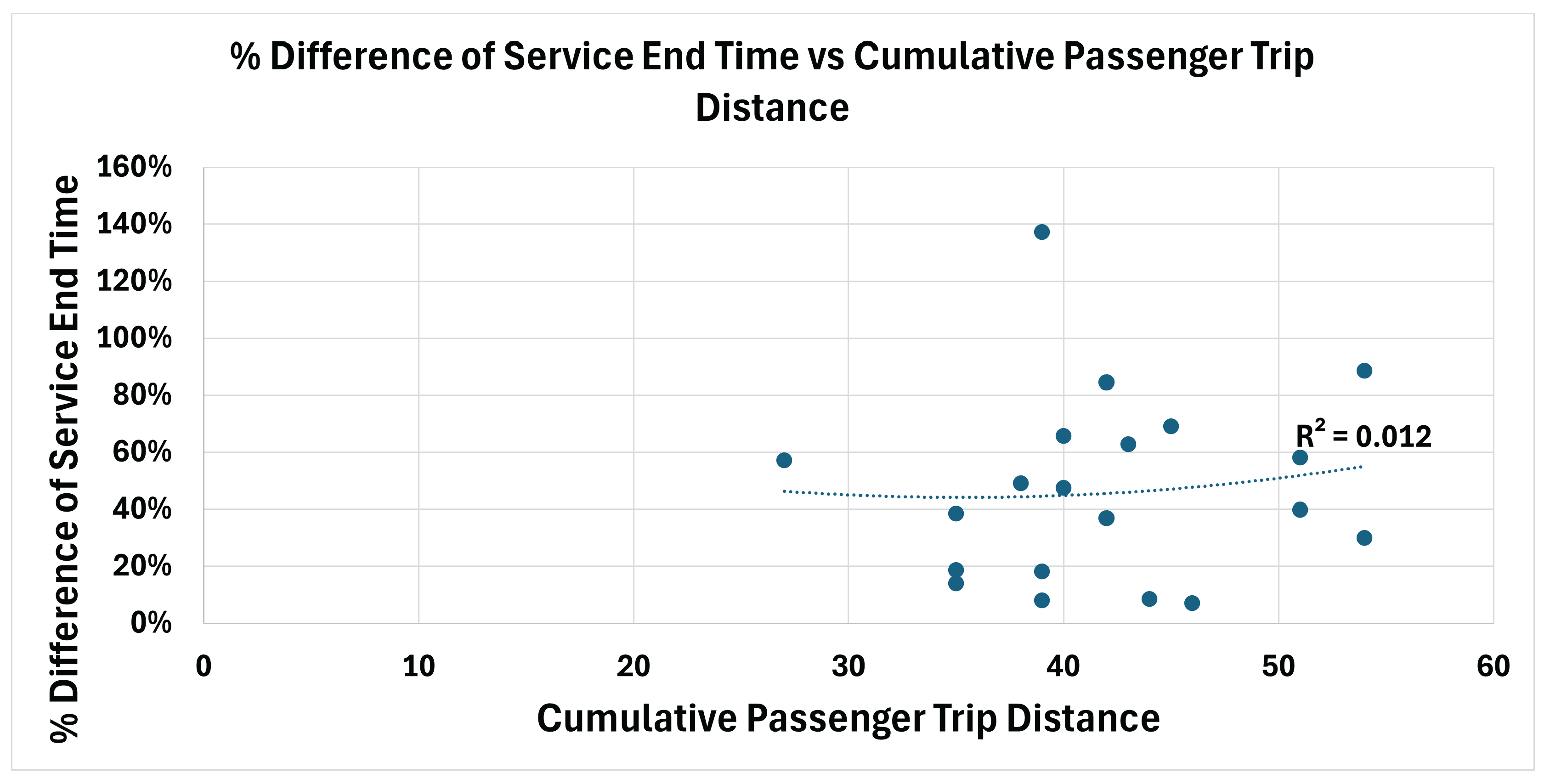

| Episode | % Difference of Wait Time | % Difference of In-Vehicle Time | % Difference of Service End Time | Cumulative Passenger Trip Distance | Avg. Passenger Travel Distance |
|---|---|---|---|---|---|
| 1 | 76% | 29% | 63% | 43 | 7 |
| 2 | 213% | -64% | 49% | 38 | 6 |
| 3 | -17% | -53% | 19% | 35 | 6 |
| 4 | 325% | -14% | 137% | 39 | 7 |
| 5 | 31% | 38% | 38% | 35 | 6 |
| 6 | 78% | -36% | 58% | 51 | 9 |
| 7 | 42% | 117% | 84% | 42 | 7 |
| 8 | -9% | -24% | 8% | 39 | 7 |
| 9 | 42% | 27% | 66% | 40 | 7 |
| 10 | -23% | -26% | 7% | 46 | 8 |
| 11 | 11% | -13% | 40% | 51 | 9 |
| 12 | -31% | 6% | 8% | 44 | 7 |
| 13 | -47% | 73% | 30% | 54 | 9 |
| 14 | 78% | 277% | 88% | 54 | 9 |
| 15 | 82% | 0% | 47% | 40 | 7 |
| 16 | -27% | -23% | 18% | 39 | 7 |
| 17 | -12% | 200% | 37% | 42 | 7 |
| 18 | 200% | -33% | 57% | 27 | 5 |
| 19 | -11% | 0% | 14% | 35 | 6 |
| 20 | 200% | -38% | 69% | 45 | 8 |
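The percentage columns are consistent with a signed difference of the DRL value relative to the DARP value, (DRL − DARP) / DARP, so a positive entry means the DRL policy took longer than the DARP baseline. The following sketch reproduces three rows from the per-episode results as a check. The function names `pct_diff` and `row` are hypothetical, and rounding half up is an assumption chosen because it matches the tabulated figures (for example, 212.5% is reported as 213% in episode 2).

```python
import math


def pct_diff(drl: float, darp: float) -> int:
    """Signed % difference of DRL relative to DARP, rounded half up."""
    return math.floor(100.0 * (drl - darp) / darp + 0.5)


# (avg. wait time, avg. in-vehicle time, episode end time) for a few
# episodes, copied from the per-episode DRL and DARP results.
DRL = {1: (30, 27, 96), 2: (25, 8, 73), 4: (34, 12, 102)}
DARP = {1: (17, 21, 59), 2: (8, 22, 49), 4: (8, 14, 43)}


def row(episode: int) -> tuple:
    """Percentage differences (wait, in-vehicle, end time) for one episode."""
    return tuple(pct_diff(a, b) for a, b in zip(DRL[episode], DARP[episode]))


if __name__ == "__main__":
    for ep in sorted(DRL):
        print(ep, row(ep))
    # 1 (76, 29, 63)
    # 2 (213, -64, 49)
    # 4 (325, -14, 137)
```

Because the baseline sits in the denominator, small DARP values inflate the ratio: in episode 4 a DARP wait time of 8 against a DRL wait time of 34 yields 325%.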

| Statistic | DRL Avg. Wait Time | DRL Avg. In-Vehicle Time | DRL Episode End Time | DRL Serviced Time | DARP Avg. Wait Time | DARP Avg. In-Vehicle Time | DARP Episode End Time | DARP Serviced Time |
|---|---|---|---|---|---|---|---|---|
| Mean | 25 | 18 | 84 | 43 | 20 | 17 | 58 | 36 |
| Median | 24 | 15 | 81 | 44 | 19 | 17 | 59 | 38 |
| Min. | 16 | 8 | 65 | 28 | 8 | 8 | 43 | 22 |
| Max. | 37 | 49 | 107 | 65 | 32 | 24 | 73 | 50 |
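The summary statistics can be recomputed directly from the 20 per-episode values. The sketch below does so for three of the columns using only the Python standard library. The names `round_half_up` and `summarize` are hypothetical, and rounding half up is again an assumption: it is the rule that reproduces the reported DARP wait-time median of 19 from an unrounded value of 18.5.

```python
import math
from statistics import mean, median

# Per-episode values (episodes 1 to 20) copied from the results table.
DRL_WAIT = [30, 25, 20, 34, 21, 32, 27, 20, 37, 24,
            31, 18, 17, 16, 31, 19, 22, 24, 16, 36]
DRL_END = [96, 73, 70, 102, 72, 98, 107, 69, 101, 78,
           95, 65, 87, 98, 84, 72, 78, 77, 66, 98]
DARP_WAIT = [17, 8, 24, 8, 16, 18, 19, 22, 26, 31,
             28, 26, 32, 9, 17, 26, 25, 8, 18, 12]


def round_half_up(x: float) -> int:
    """Round to the nearest integer, with .5 always rounded upwards."""
    return math.floor(x + 0.5)


def summarize(values):
    """Mean, median, minimum and maximum of one column, as integers."""
    return {
        "mean": round_half_up(mean(values)),
        "median": round_half_up(median(values)),
        "min": min(values),
        "max": max(values),
    }


if __name__ == "__main__":
    print(summarize(DRL_WAIT))   # {'mean': 25, 'median': 24, 'min': 16, 'max': 37}
    print(summarize(DRL_END))    # {'mean': 84, 'median': 81, 'min': 65, 'max': 107}
    print(summarize(DARP_WAIT))  # {'mean': 20, 'median': 19, 'min': 8, 'max': 32}
```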

| Statistic | % Diff. Wait Time | % Diff. In-Vehicle Time | % Diff. Episode End Time | % Diff. Serviced Time | Cumulative Trip Distance |
|---|---|---|---|---|---|
| Mean | 60% | 22% | 47% | 28% | 42 |
| Median | 37% | -6% | 44% | 20% | 41 |
| Min. | -47% | -64% | 7% | -32% | 27 |
| Max. | 325% | 277% | 137% | 195% | 54 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).