Submitted: 10 February 2026

Posted: 10 February 2026


Abstract

Keywords:

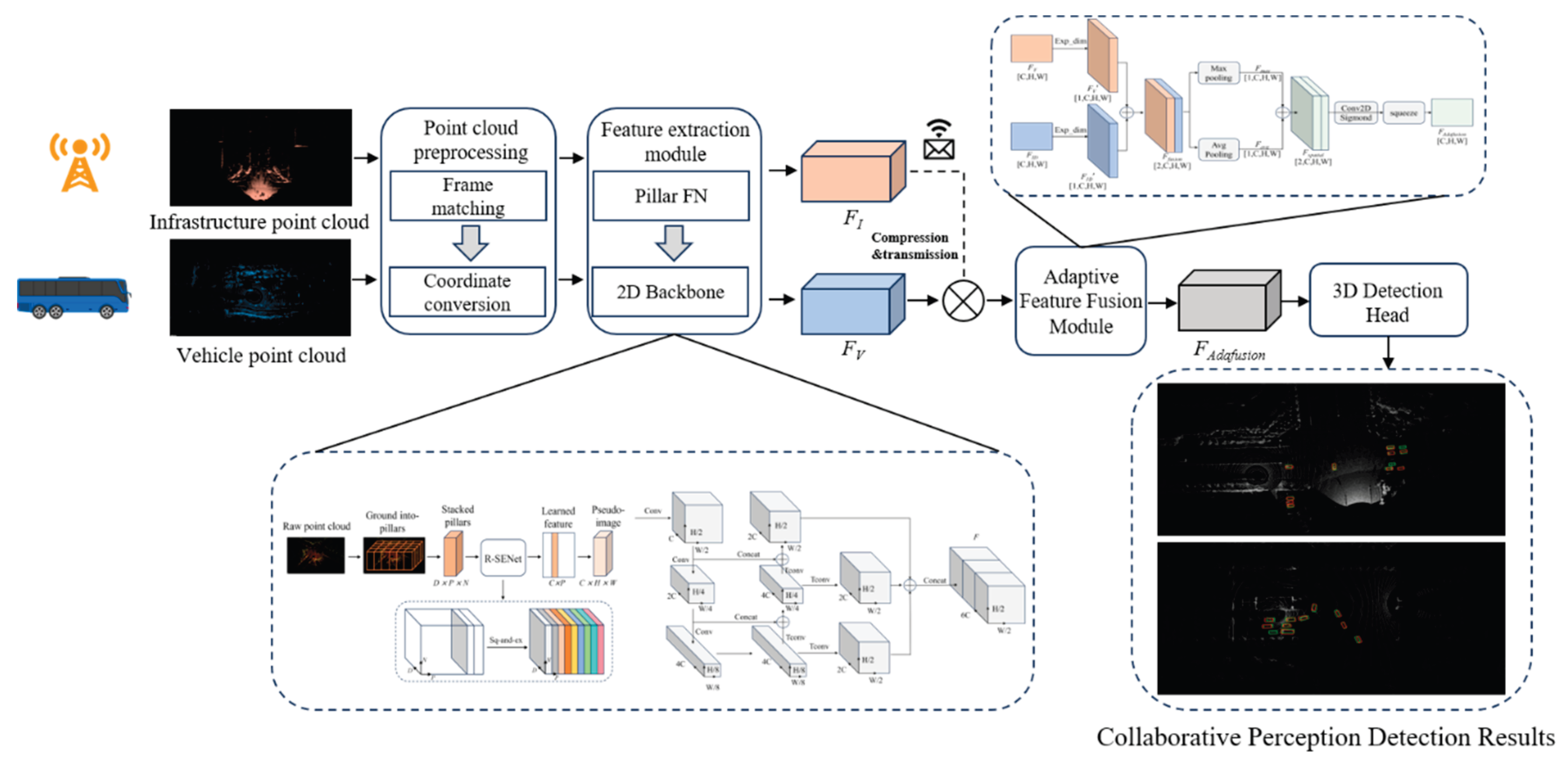

1. Introduction

- We present a point-cloud-enhanced feature modeling approach tailored for vehicle–infrastructure cooperative perception. By integrating a dual-dimension squeeze-and-excitation mechanism with a multi-scale feature pyramid, the proposed method improves feature representation for sparse point clouds, long-range objects, and heavily occluded regions.

- We design a spatially adaptive feature fusion module that explicitly encodes feature sources and generates fusion weights using both max pooling and average pooling. This design enables dynamic and balanced weighting between vehicle-side local features and infrastructure-side global semantics, effectively mitigating fusion bias induced by field-of-view discrepancies.

- Extensive experiments are conducted on the DAIR-V2X dataset and an additional self-collected dataset. On DAIR-V2X, the proposed method achieves the highest 3D detection accuracy among the compared cooperative perception approaches (AP@0.5 of 0.762 and AP@0.7 of 0.617), and it exhibits enhanced robustness for long-range targets, occluded regions, and scenarios with incomplete information.
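The fusion-weight idea in the second contribution can be illustrated with a minimal NumPy sketch. It is not the paper's fusion module: the linear scoring parameters `score_w`, the per-source offset `source_bias` (a stand-in for explicit source encoding), and the softmax over the two sources are all assumptions chosen for illustration. Each bird's-eye-view feature map of shape `(C, H, W)` is summarized by channel-wise max and average pooling, the pooled maps are scored per location, and the two sources are blended with weights that sum to one at every location.

```python
import numpy as np

def spatial_descriptor(feat):
    """Channel-wise max and average pooling: (C, H, W) -> (2, H, W)."""
    return np.stack([feat.max(axis=0), feat.mean(axis=0)], axis=0)

def fusion_weights(veh_feat, inf_feat, score_w, source_bias):
    """Per-location weights over the two sources, shape (2, H, W).

    score_w (shape (2,)) stands in for a learned 1x1 convolution over the
    pooled maps; source_bias (shape (2,)) is a hypothetical per-source
    offset. Both are illustrative assumptions.
    """
    scores = []
    for k, feat in enumerate((veh_feat, inf_feat)):
        desc = spatial_descriptor(feat)                   # (2, H, W)
        score = np.tensordot(score_w, desc, axes=1)       # (H, W)
        scores.append(score + source_bias[k])
    scores = np.stack(scores, axis=0)                     # (2, H, W)
    scores = scores - scores.max(axis=0, keepdims=True)   # stable softmax
    exp = np.exp(scores)
    return exp / exp.sum(axis=0, keepdims=True)

def fuse(veh_feat, inf_feat, score_w, source_bias):
    """Weighted sum of vehicle-side and infrastructure-side features."""
    w = fusion_weights(veh_feat, inf_feat, score_w, source_bias)
    # w[k] has shape (H, W) and broadcasts over the channel axis.
    return w[0] * veh_feat + w[1] * inf_feat

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    veh = rng.normal(size=(4, 3, 5))
    inf = rng.normal(size=(4, 3, 5))
    fused = fuse(veh, inf, np.array([1.0, 1.0]), np.zeros(2))
    print(fused.shape)
```

Because the weights are computed independently at each grid cell, a location covered well by only one sensor can lean on that source, which is the intuition behind balancing vehicle-side and infrastructure-side features.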

2. Related Work

2.1. LiDAR-Based 3D Object Detection

2.2. V2X Collaborative Perception

3. Method

3.1. Point Cloud Data Preprocessing

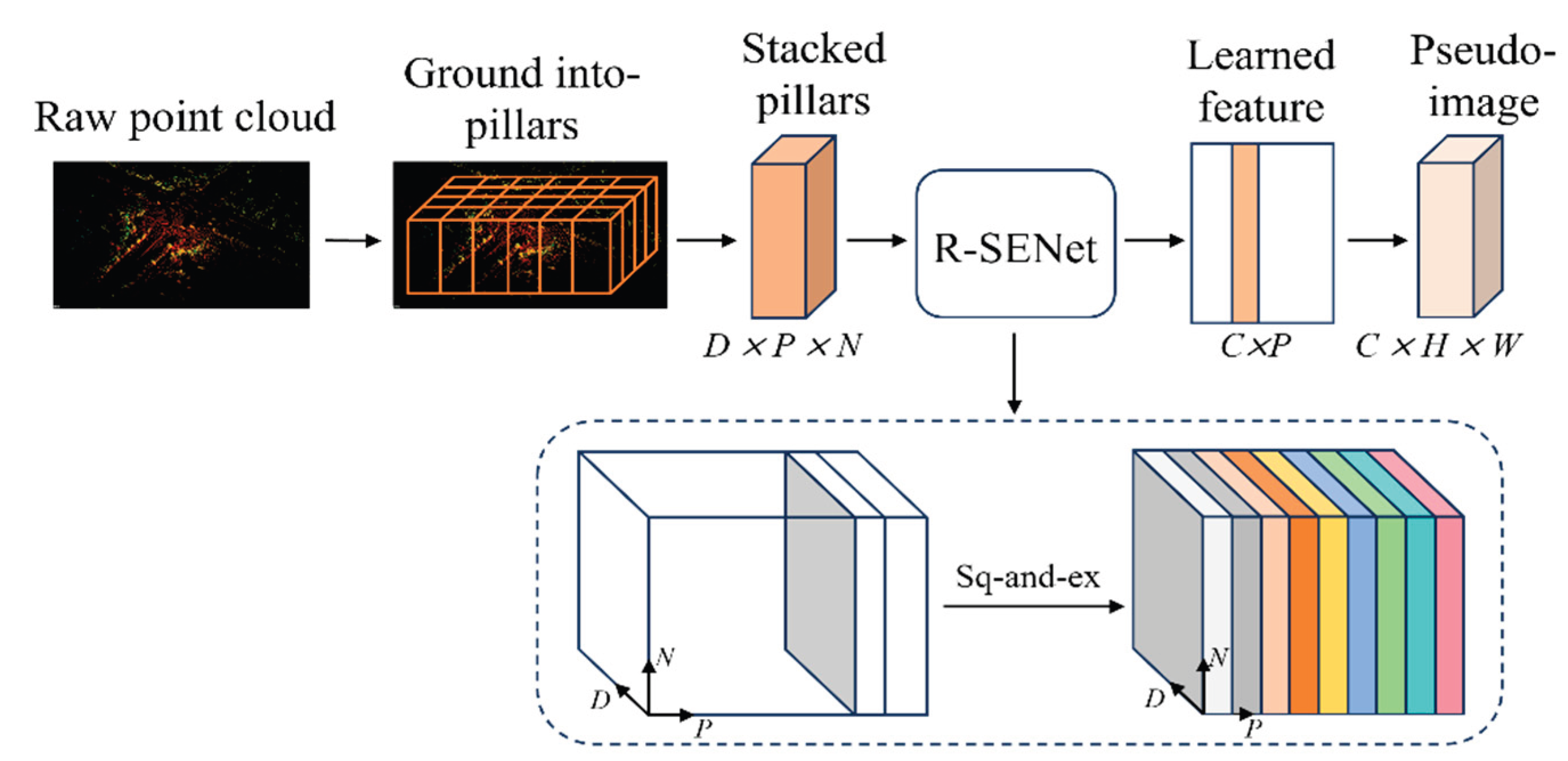

3.2. Point Cloud Feature Extraction

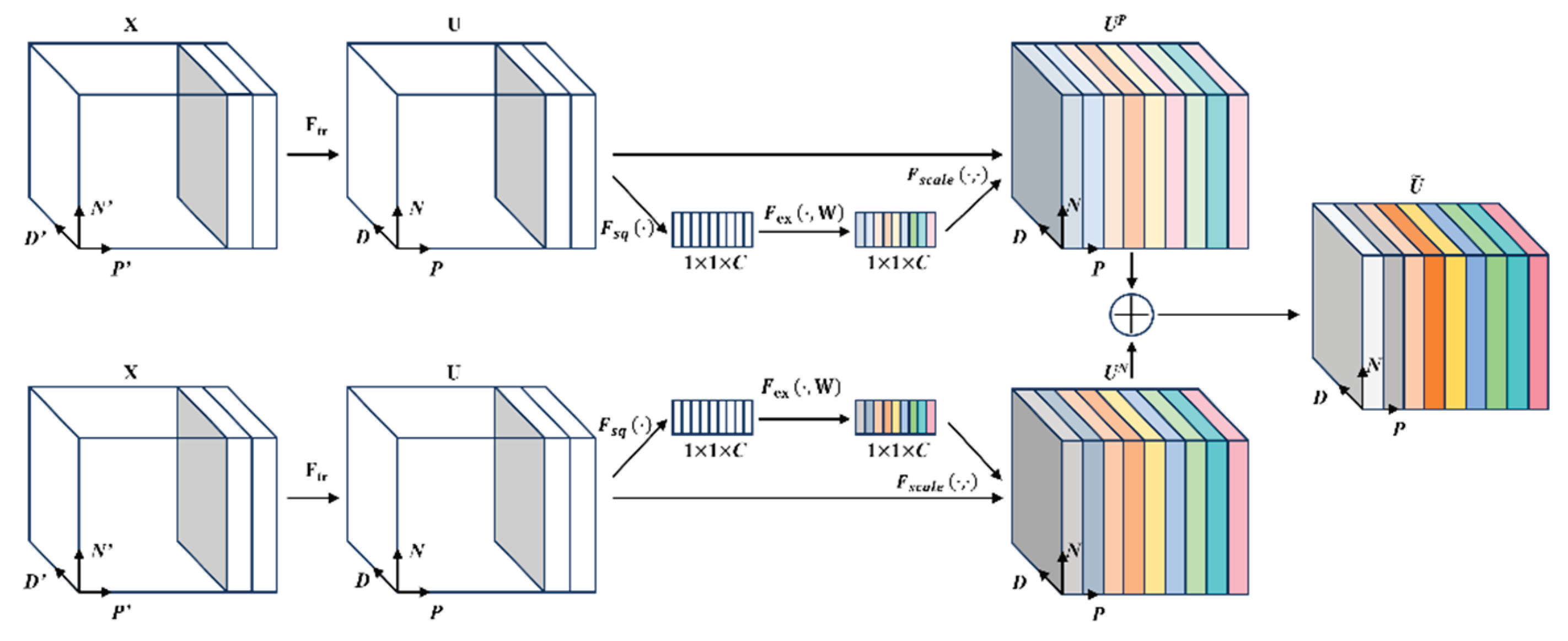

3.2.1. Feature Encoding with the Improved PointPillars Network

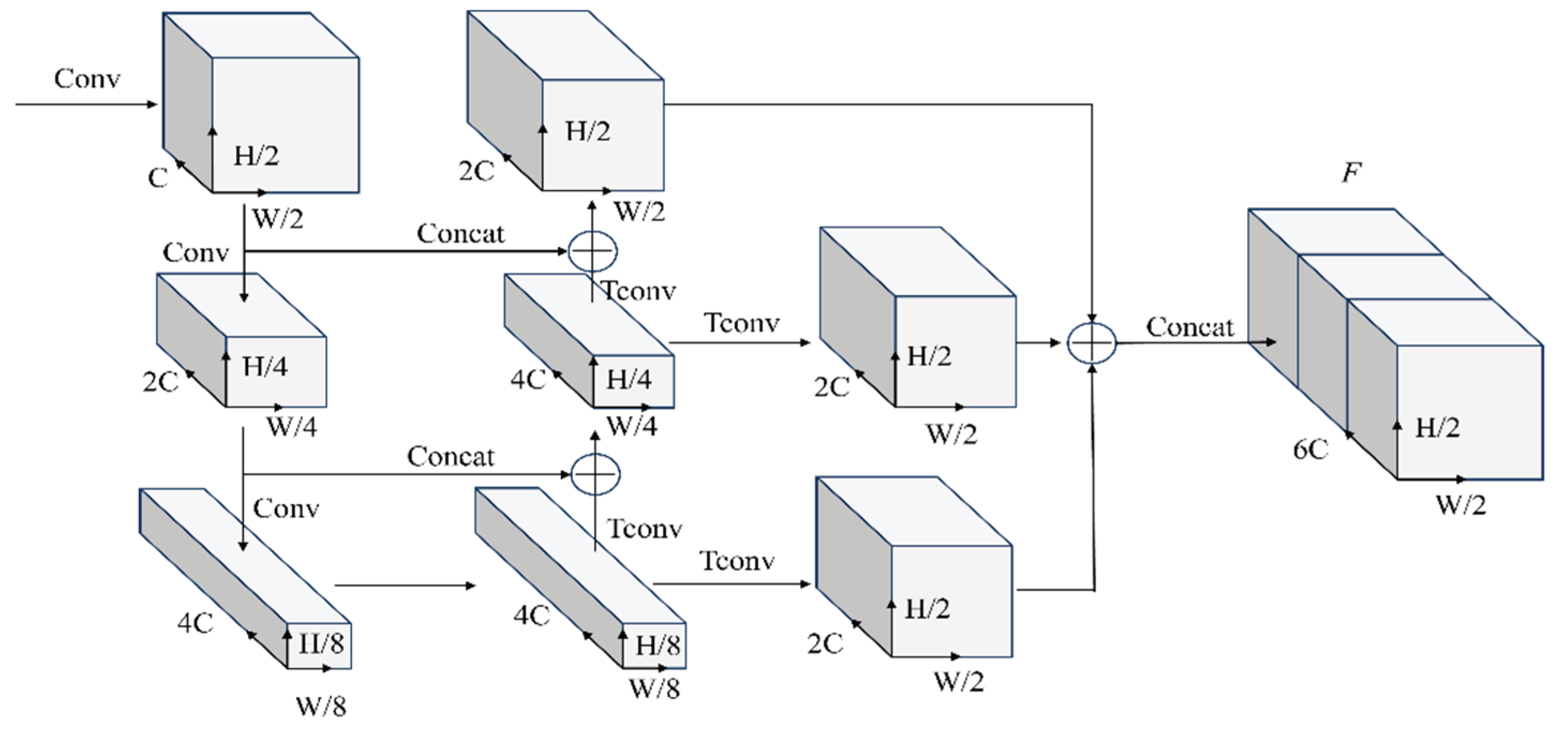

3.2.2. 2D Backbone Network

3.3. Feature Compression and Transmission

3.4. Spatially Adaptive Vehicle–Infrastructure Feature Fusion

3.5. Detection Head

4. Experimental Results

4.1. Device Information

4.2. Experimental Datasets

4.2.1. DAIR-V2X Dataset

4.2.2. Self-Collected Dataset

4.3. Evaluation Metrics

4.3.1. IoU (Intersection over Union)
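IoU is the ratio of the overlap between a predicted box and a ground-truth box to the size of their union; the 0.5 and 0.7 in AP@0.5 and AP@0.7 are IoU thresholds for counting a detection as correct. As a minimal sketch, the function below computes IoU for axis-aligned 3D boxes given as `(x1, y1, z1, x2, y2, z2)`. This box format and the function names are assumptions for illustration; 3D detection benchmarks generally use rotated boxes, and the heading angle is ignored here.

```python
def box_volume(box):
    """Volume of an axis-aligned box (x1, y1, z1, x2, y2, z2); 0 if empty."""
    x1, y1, z1, x2, y2, z2 = box
    return max(0.0, x2 - x1) * max(0.0, y2 - y1) * max(0.0, z2 - z1)

def iou_3d_axis_aligned(a, b):
    """Intersection over union of two axis-aligned 3D boxes."""
    # The intersection is itself a box: max of the minima, min of the maxima.
    inter_box = (
        max(a[0], b[0]), max(a[1], b[1]), max(a[2], b[2]),
        min(a[3], b[3]), min(a[4], b[4]), min(a[5], b[5]),
    )
    inter = box_volume(inter_box)
    union = box_volume(a) + box_volume(b) - inter
    return inter / union if union > 0 else 0.0

if __name__ == "__main__":
    pred = (0.0, 0.0, 0.0, 2.0, 2.0, 2.0)
    gt = (1.0, 0.0, 0.0, 3.0, 2.0, 2.0)
    # Intersection volume 4, union volume 8 + 8 - 4 = 12.
    print(iou_3d_axis_aligned(pred, gt))
```

In this example the IoU is 4/12, so the prediction would not count as a match at either the 0.5 or the 0.7 threshold.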

4.3.2. AP (Average Precision)

4.4. Experimental Setup

4.5. Quantitative Results

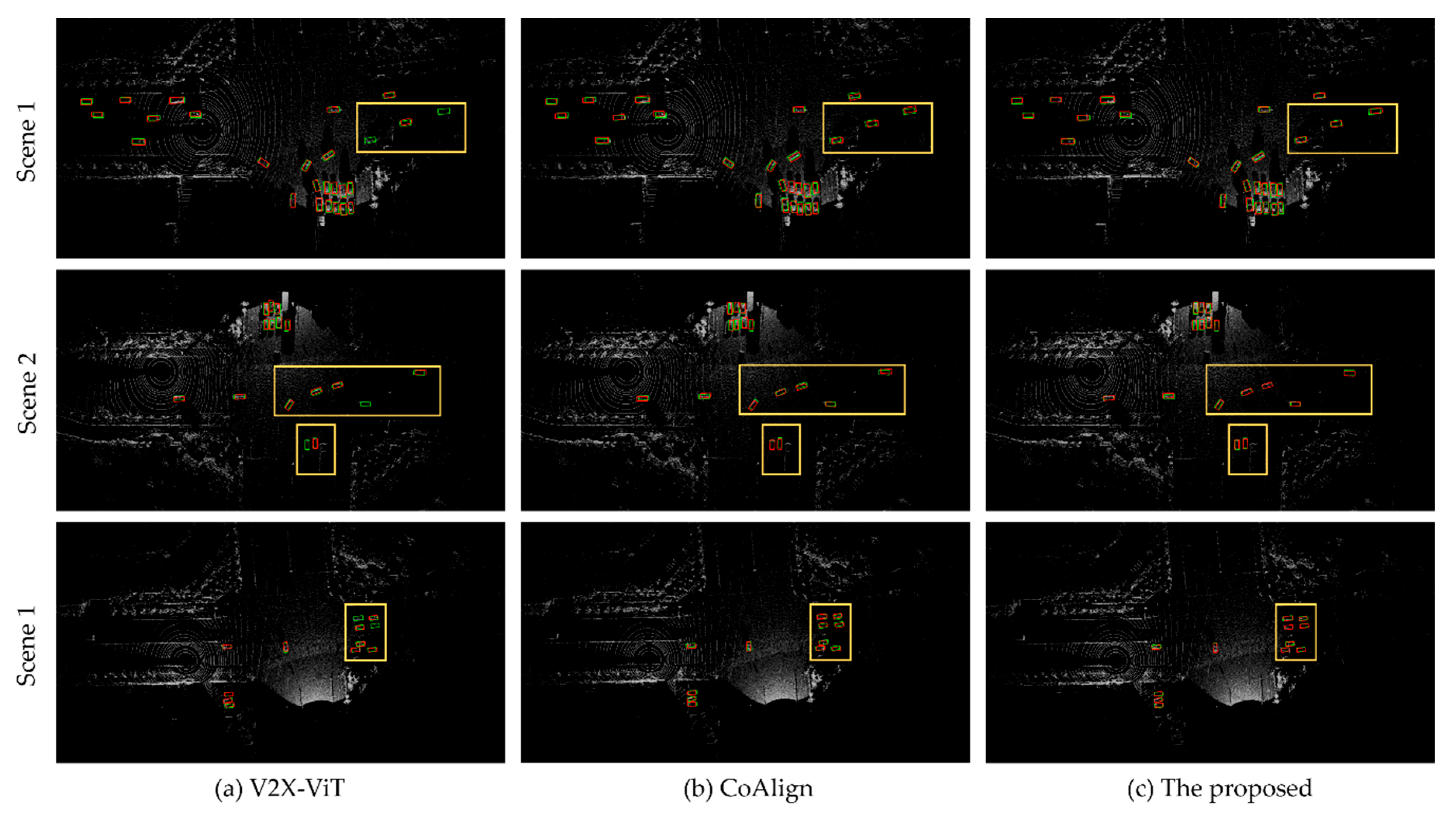

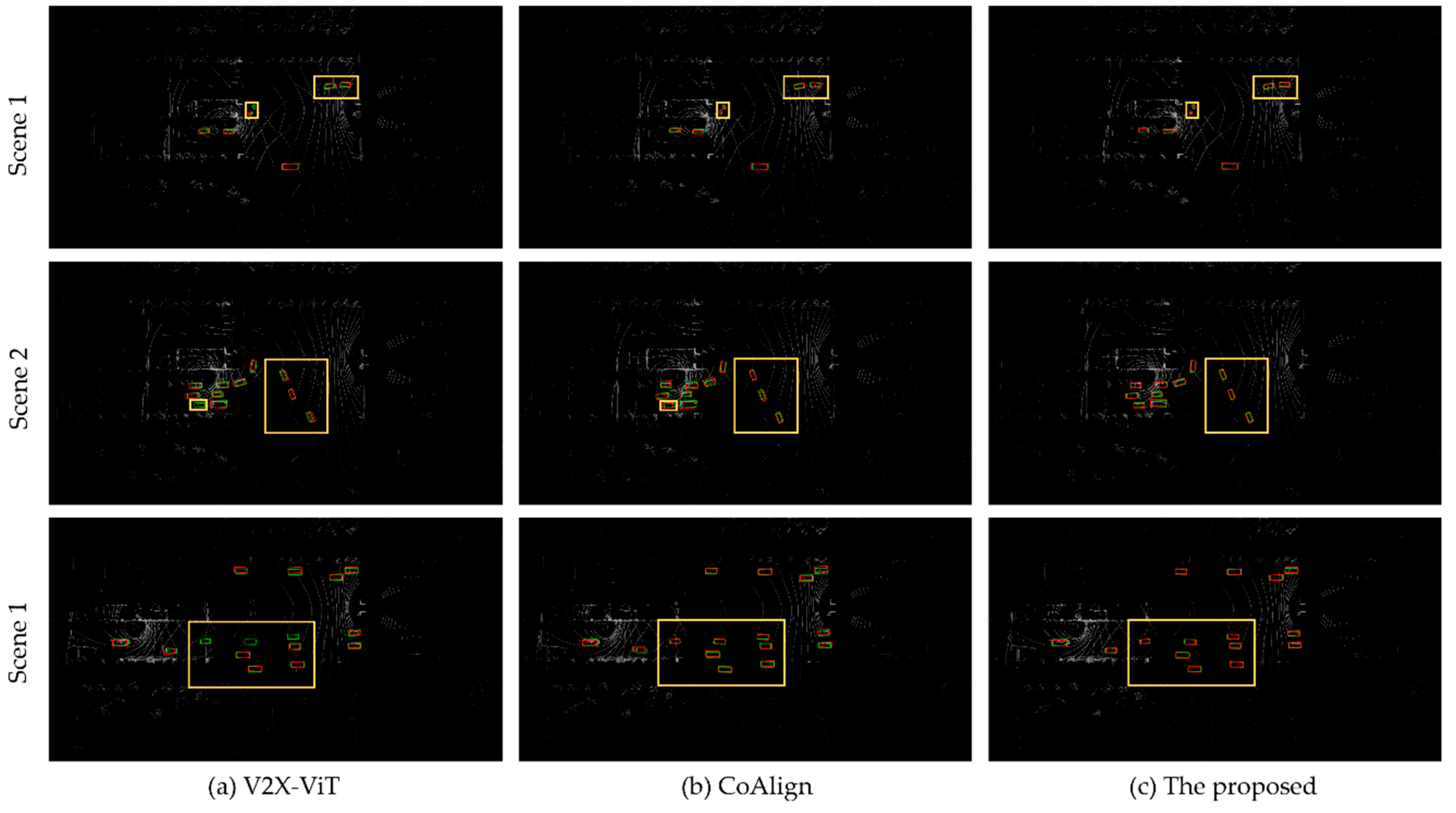

4.6. Qualitative Results

4.7. Ablation Study

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Yurtsever, E.; Lambert, J.; Carballo, A.; Takeda, K. A survey of autonomous driving: Common practices and emerging technologies. IEEE Access 2020, 8, 58443–58469.

- Huang, T.; Liu, J.; Zhou, X.; Nguyen, D.C.; Azghadi, M.R.; Xia, Y.; Sun, S. V2X cooperative perception for autonomous driving: Recent advances and challenges. arXiv 2023, arXiv:2310.03525.

- Noor-A-Rahim, M.; Liu, Z.; Lee, H.; Khyam, M.O.; He, J.; Pesch, D.; Poor, H.V. 6G for vehicle-to-everything (V2X) communications: Enabling technologies, challenges, and opportunities. Proc. IEEE 2022, 110, 712–734.

- Ye, X.; Shu, M.; Li, H.; et al. Rope3D: The roadside perception dataset for autonomous driving and monocular 3D object detection task. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–24 June 2022; pp. 21341–21350.

- Wu, J.; Xu, H.; Tian, Y.; Pi, R.; Yue, R. Vehicle detection under adverse weather from roadside LiDAR data. Sensors 2020, 20, 3433.

- Liu, S.; Gao, C.; Chen, Y.; Peng, X.; Kong, X.; Wang, K.; Wang, M. Towards vehicle-to-everything autonomous driving: A survey on collaborative perception. arXiv 2023, arXiv:2308.16714.

- Chen, Q.; Ma, X.; Tang, S.; Guo, J.; Yang, Q.; Fu, S. F-Cooper: Feature-based cooperative perception for autonomous vehicle edge computing system using 3D point clouds. In Proceedings of the 4th ACM/IEEE Symposium on Edge Computing, Arlington, VA, USA, 7–9 November 2019; pp. 88–100.

- Yu, H.; Tang, Y.; Xie, E.; Mao, J.; Yuan, J.; Luo, P.; Nie, Z. Vehicle–infrastructure cooperative 3D object detection via feature flow prediction. arXiv 2023, arXiv:2303.10552.

- Ren, S.; Lei, Z.; Wang, Z.; Dianati, M.; Wang, Y.; Chen, S.; Zhang, W. Interruption-aware cooperative perception for V2X communication-aided autonomous driving. IEEE Trans. Intell. Veh. 2024, 9, 4698–4714.

- Bai, Z.; Wu, G.; Barth, M.J.; Liu, Y.; Sisbot, E.A.; Oguchi, K. PillarGrid: Deep learning-based cooperative perception for 3D object detection from onboard-roadside LiDAR. In Proceedings of the 2022 IEEE 25th International Conference on Intelligent Transportation Systems (ITSC), Macau, China, 8–12 October 2022; pp. 1743–1749.

- Xiang, C.; Xie, X.; Feng, C.; Bai, Z.; Niu, Z.; Yang, M. V2I-BEVF: Multi-modal fusion based on BEV representation for vehicle–infrastructure perception. In Proceedings of the 2023 IEEE 26th International Conference on Intelligent Transportation Systems (ITSC), Bilbao, Spain, 24–28 September 2023; pp. 5292–5299.

- Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. PointNet: Deep learning on point sets for 3D classification and segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 652–660.

- Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. PointNet++: Deep hierarchical feature learning on point sets in a metric space. Adv. Neural Inf. Process. Syst. 2017, 30.

- Shi, S.; Wang, X.; Li, H. PointRCNN: 3D object proposal generation and detection from point cloud. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 770–779.

- Shi, S.; Guo, C.; Jiang, L.; Wang, Z.; Shi, J.; Wang, X.; Li, H. PV-RCNN: Point-voxel feature set abstraction for 3D object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020; pp. 10529–10538.

- Yang, Z.; Sun, Y.; Liu, S.; Jia, J. 3DSSD: Point-based 3D single-stage object detector. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020; pp. 11040–11048.

- Liu, Z.; Zhang, Z.; Cao, Y.; Hu, H.; Tong, X. Group-free 3D object detection via transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 2949–2958.

- Mao, J.; Xue, Y.; Niu, M.; Bai, H.; Feng, J.; Liang, X.; Xu, C. Voxel transformer for 3D object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 3164–3173.

- Chen, Y.; Liu, J.; Zhang, X.; Qi, X.; Jia, J. VoxelNeXt: Fully sparse VoxelNet for 3D object detection and tracking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 18–23 June 2023; pp. 21674–21683.

- Yan, Y.; Mao, Y.; Li, B. SECOND: Sparsely embedded convolutional detection. Sensors 2018, 18, 3337.

- Yin, T.; Zhou, X.; Krähenbühl, P. Center-based 3D object detection and tracking. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 11784–11793.

- Lang, A.H.; Vora, S.; Caesar, H.; Zhou, L.; Yang, J.; Beijbom, O. PointPillars: Fast encoders for object detection from point clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 12697–12705.

- Xie, Q.; Zhou, X.; Qiu, T.; Zhang, Q.; Qu, W. Soft actor–critic-based multilevel cooperative perception for connected autonomous vehicles. IEEE Internet Things J. 2022, 9, 21370–21381.

- Guo, A.; Zhang, S.; Tang, E.; Gao, X.; Pang, H.; Tian, H.; Chen, Z. When autonomous vehicle meets V2X cooperative perception: How far are we? arXiv 2025, arXiv:2509.24927.

- Chen, Q.; Tang, S.; Yang, Q.; Fu, S. COOPER: Cooperative perception for connected autonomous vehicles based on 3D point clouds. In Proceedings of the 2019 IEEE 39th International Conference on Distributed Computing Systems (ICDCS), Dallas, TX, USA, 7–9 July 2019; pp. 514–524.

- Arnold, E.; Dianati, M.; De Temple, R.; Fallah, S. Cooperative perception for 3D object detection in driving scenarios using infrastructure sensors. IEEE Trans. Intell. Transp. Syst. 2020, 23, 1852–1864.

- Mo, Y.; Zhang, P.; Chen, Z.; Ran, B. A method of vehicle–infrastructure cooperative perception based vehicle state information fusion using improved Kalman filter. Multimed. Tools Appl. 2022, 81, 4603–4620.

- Yu, H.; Luo, Y.; Shu, M.; Huo, Y.; Yang, Z.; Shi, Y.; Nie, Z. DAIR-V2X: A large-scale dataset for vehicle–infrastructure cooperative 3D object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–24 June 2022; pp. 21361–21370.

- Feng, X.; Sun, H.; Zheng, H. LCV2I: Communication-efficient and high-performance collaborative perception framework with low-resolution LiDAR. arXiv 2025, arXiv:2502.17039.

- Wang, T.H.; Manivasagam, S.; Liang, M.; Yang, B.; Zeng, W.; Urtasun, R. V2VNet: Vehicle-to-vehicle communication for joint perception and prediction. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; pp. 605–621.

- Xu, R.; Xiang, H.; Xia, X.; Han, X.; Li, J.; Ma, J. OPV2V: An open benchmark dataset and fusion pipeline for perception with vehicle-to-vehicle communication. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 2583–2589.

- Xu, R.; Xiang, H.; Tu, Z.; Xia, X.; Yang, M.H.; Ma, J. V2X-ViT: Vehicle-to-everything cooperative perception with vision transformer. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 107–124.

- Hu, Y.; Fang, S.; Lei, Z.; Zhong, Y.; Chen, S. Where2Comm: Communication-efficient collaborative perception via spatial confidence maps. arXiv 2022, arXiv:2209.12836.

- Yan, W.; Cao, H.; Chen, J.; Wu, T. FETR: Feature transformer for vehicle–infrastructure cooperative 3D object detection. Neurocomputing 2024, 600, 128147.

- Shao, J.; Li, T.; Zhang, J. Task-oriented communication for vehicle-to-infrastructure cooperative perception. In Proceedings of the 2024 IEEE 34th International Workshop on Machine Learning for Signal Processing (MLSP), London, UK, 25–28 September 2024; pp. 1–6.

- Chen, Z.; Shi, Y.; Jia, J. TransIFF: An instance-level feature fusion framework for vehicle–infrastructure cooperative 3D detection with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 1–8 October 2023; pp. 18205–18214.

- Wang, S.; Yuan, M.; Zhang, C.; He, L.; Xu, Q.; Wang, J. V2X-DGPE: Addressing domain gaps and pose errors for robust collaborative 3D object detection. In Proceedings of the 2025 IEEE Intelligent Vehicles Symposium (IV), Munich, Germany, 4–7 June 2025; pp. 2074–2080.

- Wang, L.; Lan, J.; Li, M. PAFNet: Pillar attention fusion network for vehicle–infrastructure cooperative target detection using LiDAR. Symmetry 2024, 16, 401.

- Yan, J.; Peng, Z.; Yin, H.; Wang, J.; Wang, X.; Shen, Y.; Cremers, D. Trajectory prediction for intelligent vehicles using spatial-attention mechanism. IET Intell. Transp. Syst. 2020, 14, 1855–1863.

- Mushtaq, H.; Deng, X.; Ullah, I.; Ali, M.; Malik, B.H. O2SAT: Object-oriented-segmentation-guided spatial-attention network for 3D object detection in autonomous vehicles. Information 2024, 15, 376.

- Xu, R.; Tu, Z.; Xiang, H.; Shao, W.; Zhou, B.; Ma, J. CoBEVT: Cooperative bird’s-eye-view semantic segmentation with sparse transformers. arXiv 2022, arXiv:2207.02202.

- Lu, Y.; Li, Q.; Liu, B.; Dianati, M.; Feng, C.; Chen, S.; Wang, Y. Robust collaborative 3D object detection in presence of pose errors. arXiv 2022, arXiv:2211.07214.

| Category | Device | Description |
|---|---|---|
| Roadside Equipment | LiDAR Sensor | RoboSense 16-beam, 10 Hz, 360°/30° |
| Vehicle Equipment | LiDAR Sensor | RoboSense 16-beam, 20 Hz, 360°/30° |
| Positioning System | RTK-based high-precision localization | |
| System Integration | Synchronization | Hardware trigger via time server |
| System Integration | Calibration | Precise extrinsic calibration |

| Method | Fusion Type | DAIR-V2X AP@0.5 | DAIR-V2X AP@0.7 | DAIR-V2X Inference Time (ms) | Self-collected AP@0.5 | Self-collected AP@0.7 |
|---|---|---|---|---|---|---|
| PointPillars [22] | None | 0.481 | - | 25.36 | 0.359 | - |
| LateFusion [28] | Late | 0.561 | - | 36.72 | 0.437 | - |
| Cooper [25] | Early | 0.617 | - | 69.86 | 0.561 | - |
| F-Cooper [7] | Intermediate | 0.734 | 0.559 | 35.17 | 0.712 | 0.546 |
| V2VNet [30] | Intermediate | 0.654 | 0.402 | 73.58 | 0.656 | 0.409 |
| CoBEVT [41] | Intermediate | 0.580 | 0.443 | 63.76 | 0.571 | 0.440 |
| V2X-ViT [32] | Intermediate | 0.585 | 0.449 | 161.04 | 0.564 | 0.453 |
| Where2Comm [33] | Intermediate | 0.625 | 0.488 | 82.52 | 0.611 | 0.462 |
| CoAlign [42] | Intermediate | 0.741 | 0.594 | 97.41 | 0.668 | 0.547 |
| Proposed method | Intermediate | 0.762 | 0.617 | 60.67 | 0.694 | 0.563 |

| R-SENet | FBF-Net | SAFF | DAIR-V2X AP@0.5 | DAIR-V2X AP@0.7 | Self-collected AP@0.5 | Self-collected AP@0.7 |
|---|---|---|---|---|---|---|
| × | × | × | 0.689 | 0.531 | 0.607 | 0.488 |
| √ | × | × | 0.728 | 0.579 | 0.649 | 0.526 |
| √ | √ | × | 0.739 | 0.593 | 0.667 | 0.539 |
| √ | × | √ | 0.751 | 0.608 | 0.681 | 0.552 |
| √ | √ | √ | 0.762 | 0.617 | 0.694 | 0.563 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).