Submitted: 09 February 2026

Posted: 10 February 2026


Abstract

Keywords:

1. Introduction

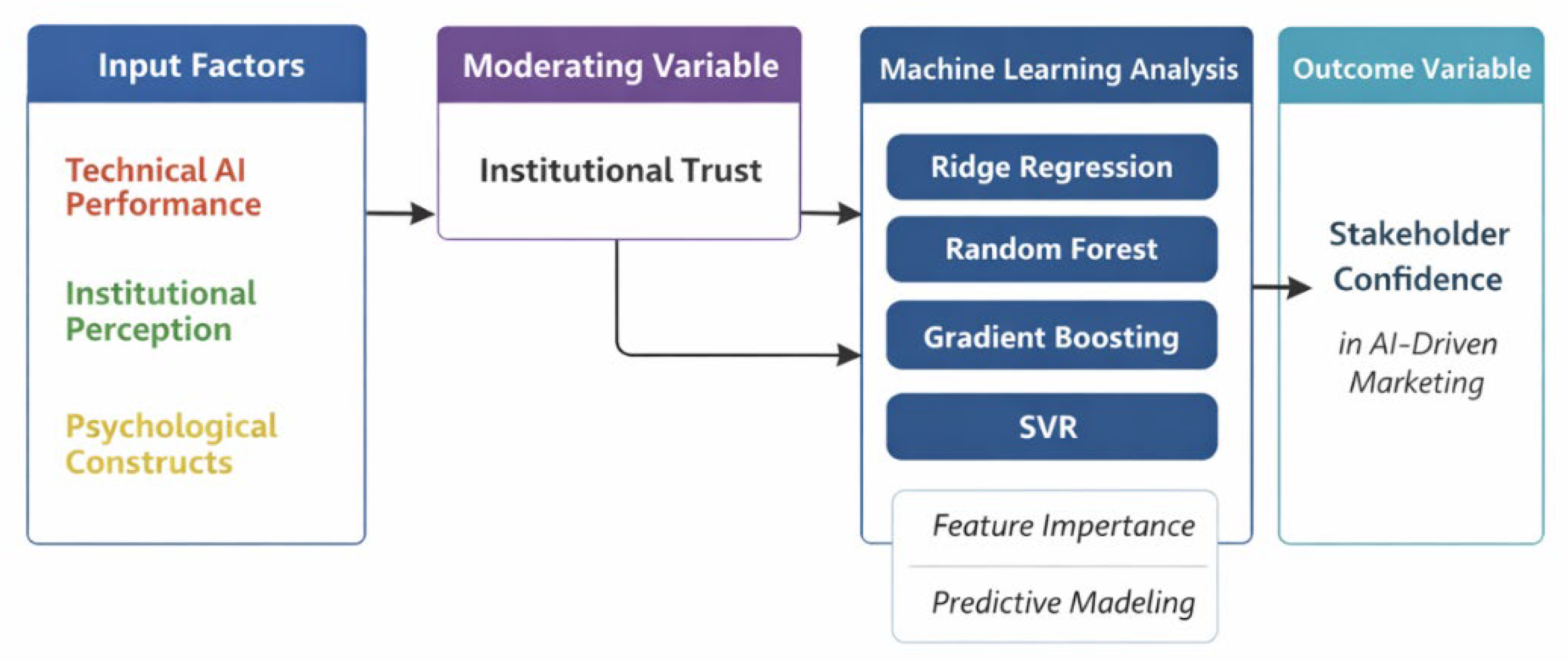

2. Materials and Methods

2.1. Research Design and Data Collection

2.2. Data Augmentation via Covariance-Preserving Sampling

2.3. Machine Learning Models and Benchmarking Strategy

2.4. Model Validation and Performance Evaluation

2.5. Robustness Testing and Model Interpretability

2.6. Ethical Considerations

3. Results

3.1. Diagnostic Assessment and Data Integrity

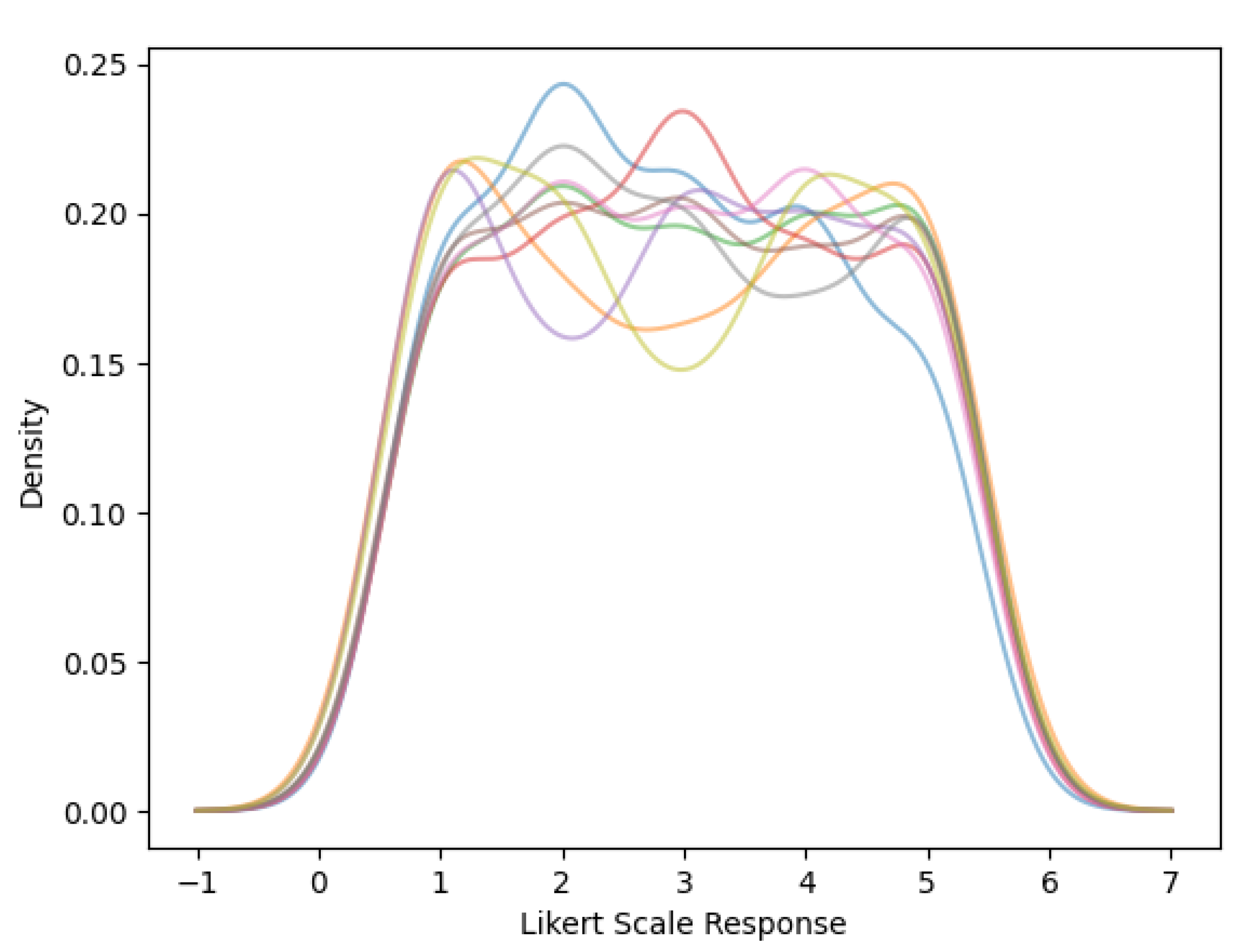

3.2. Distributional Characteristics of Stakeholder Responses

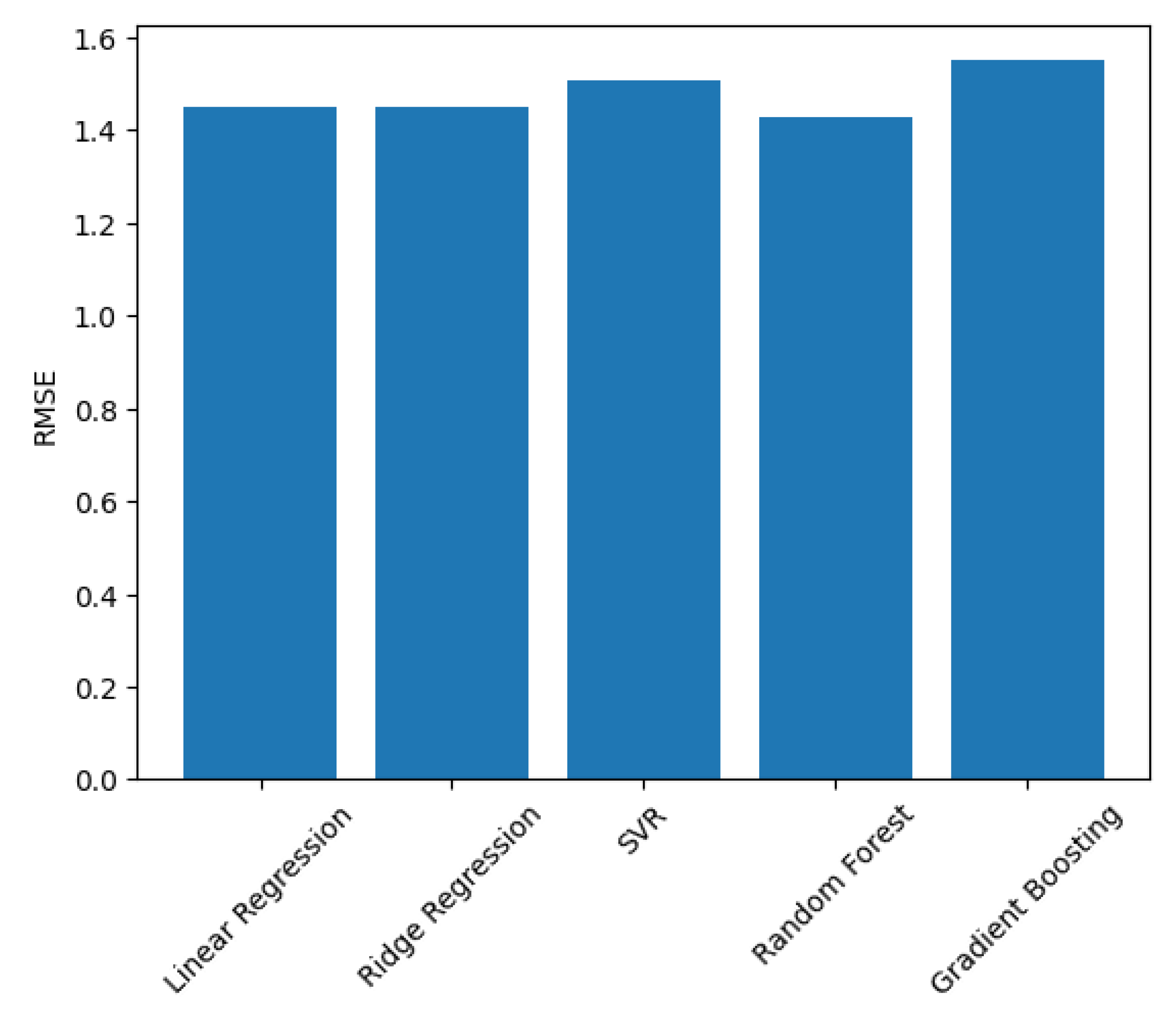

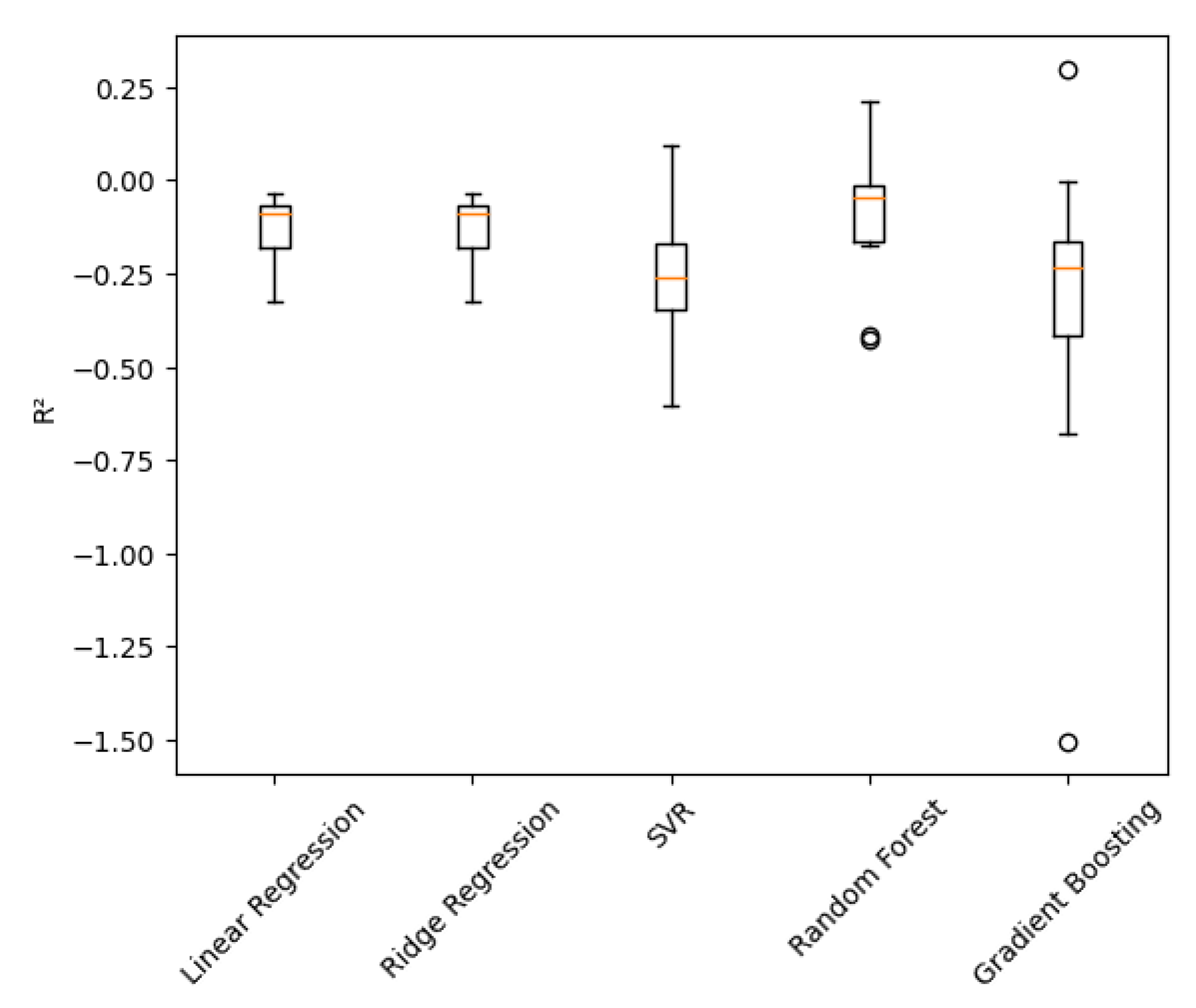

3.3. Predictive Model Benchmarking

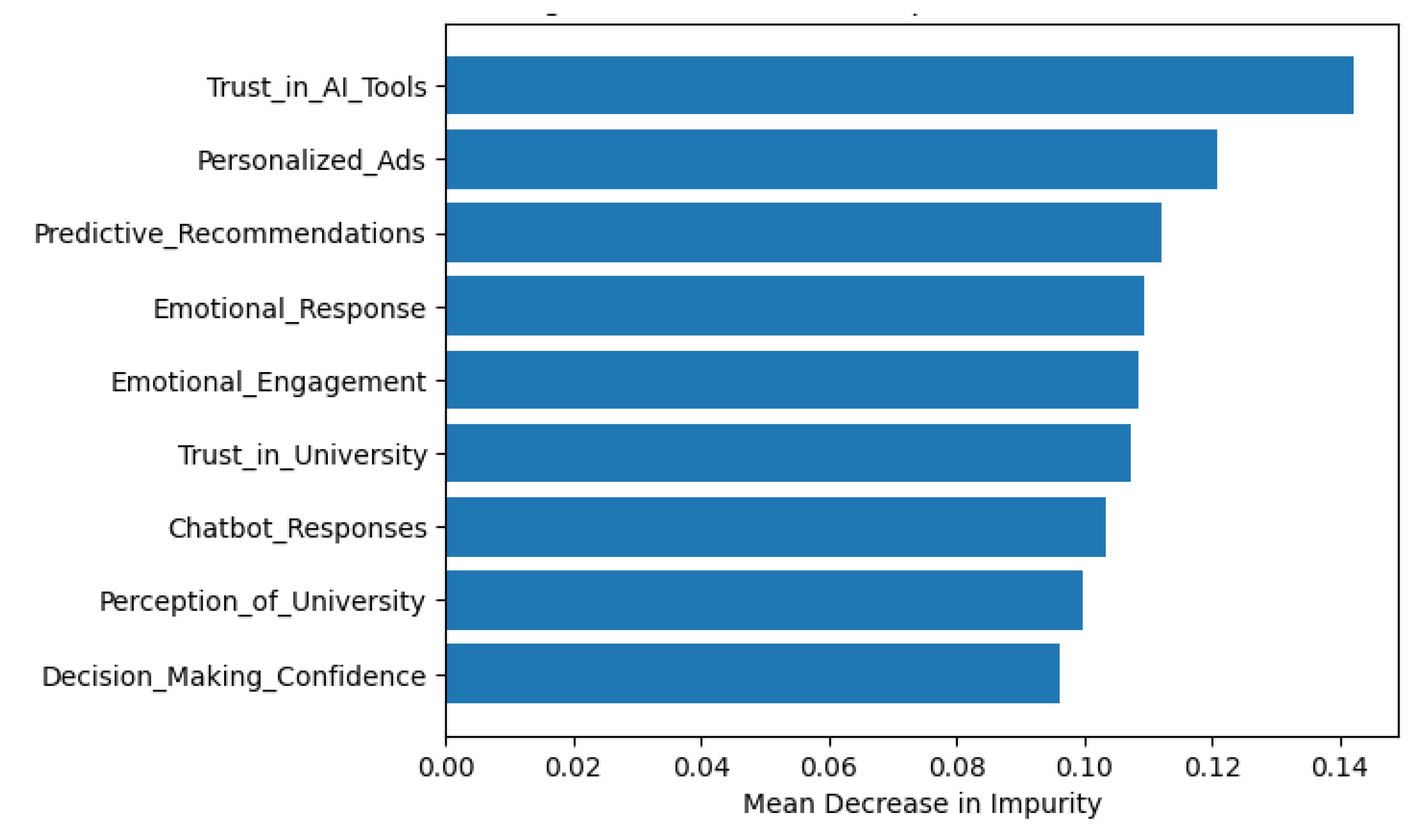

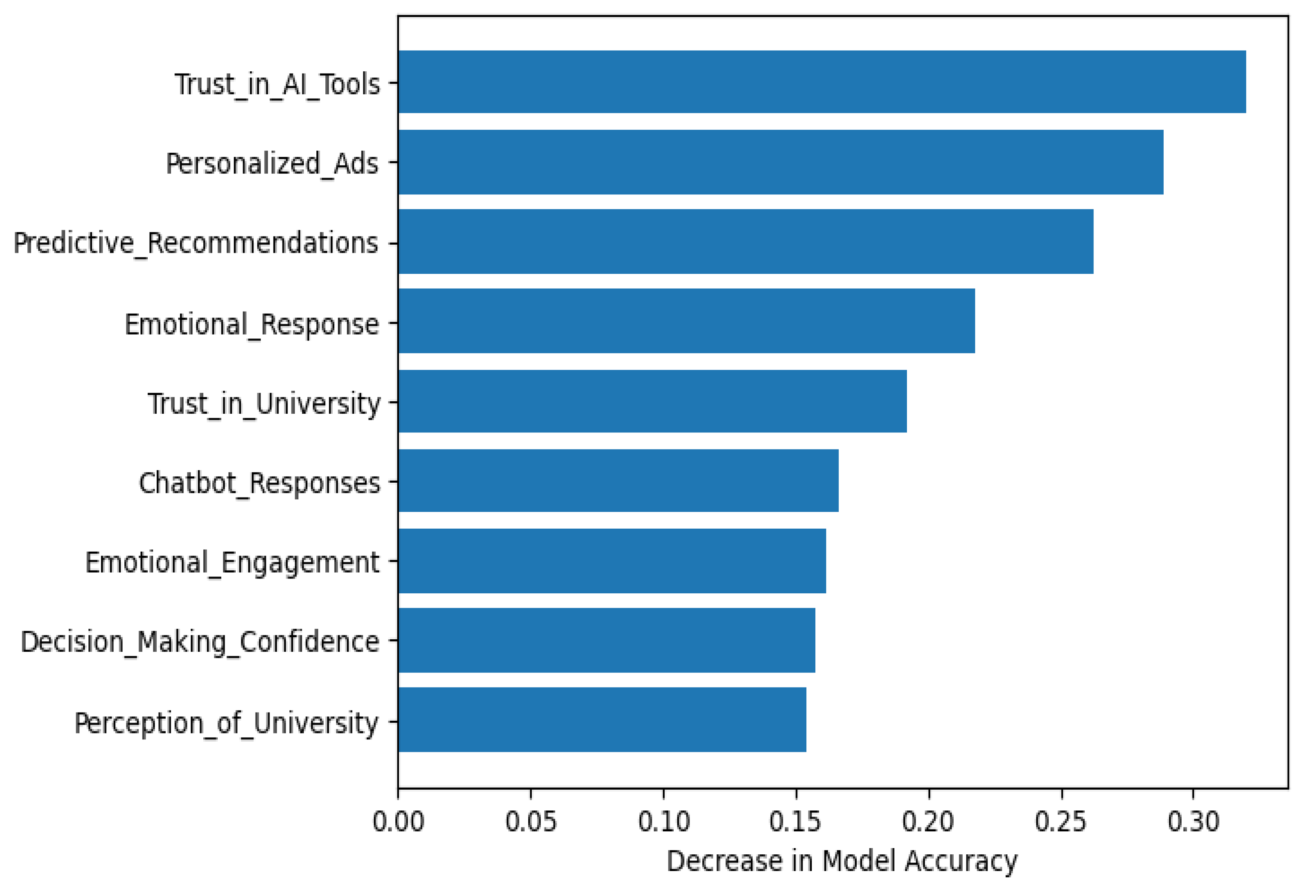

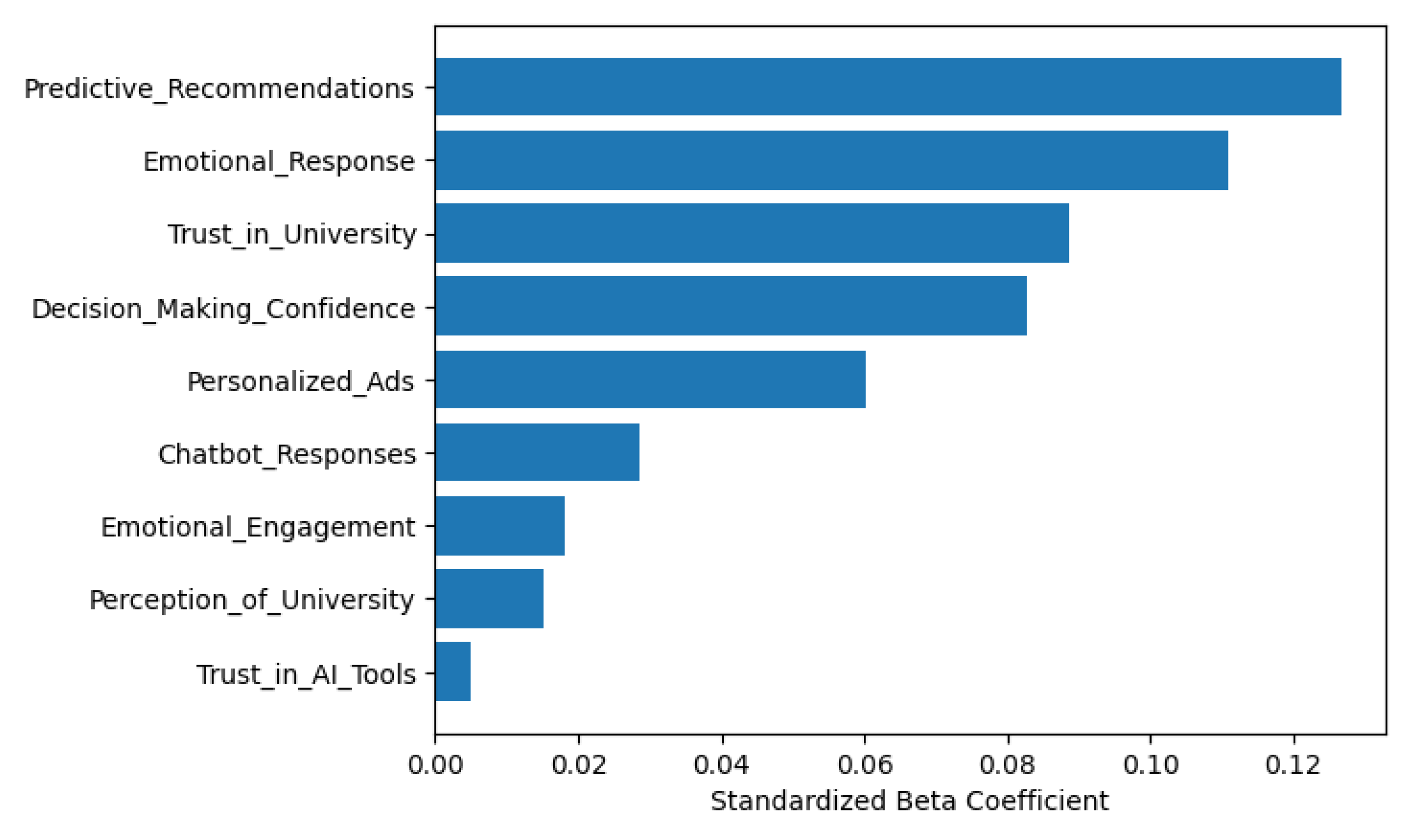

3.4. Predictor Importance and Hierarchical Influence

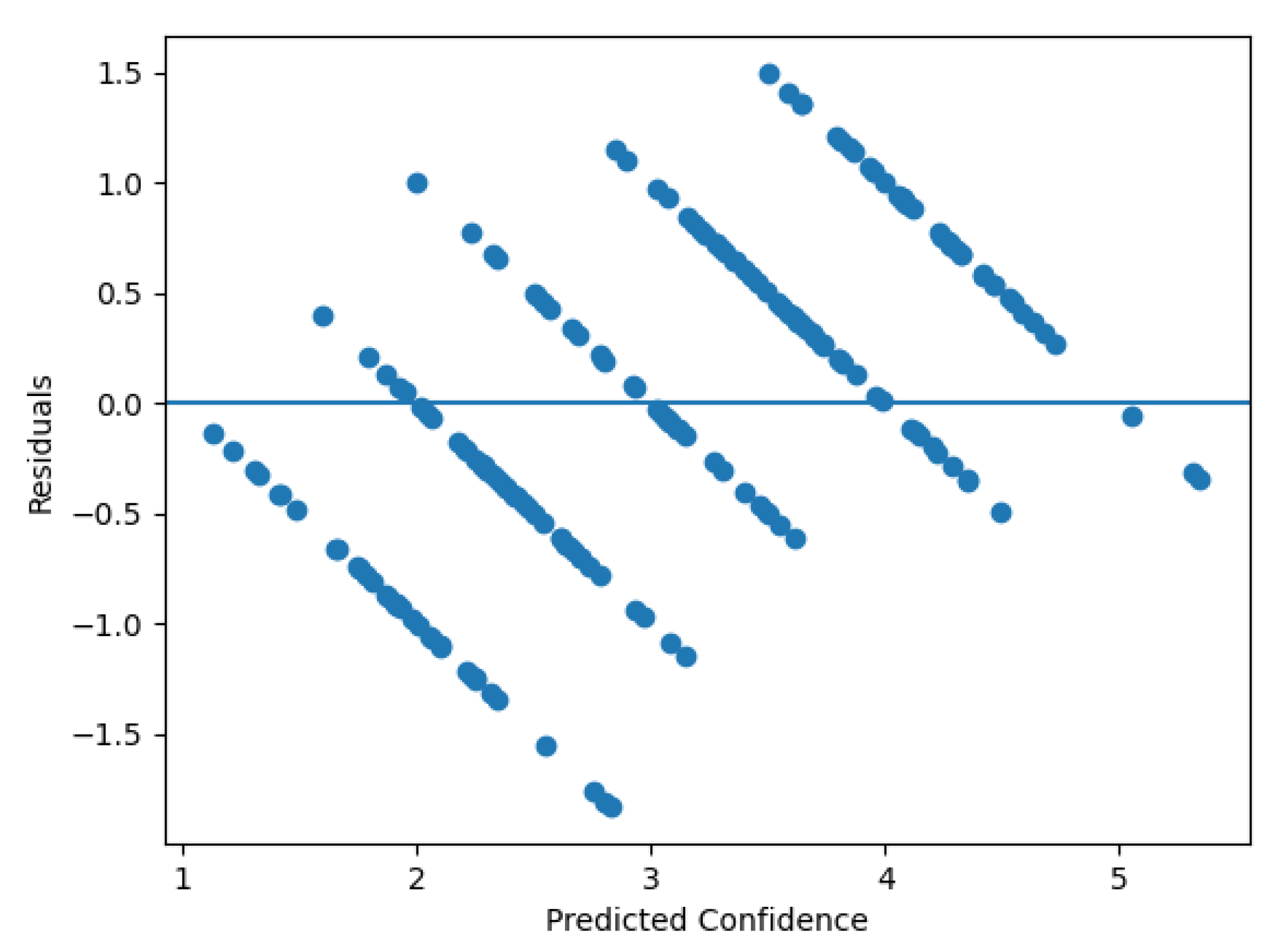

3.5. Model Robustness and Diagnostic Evaluation

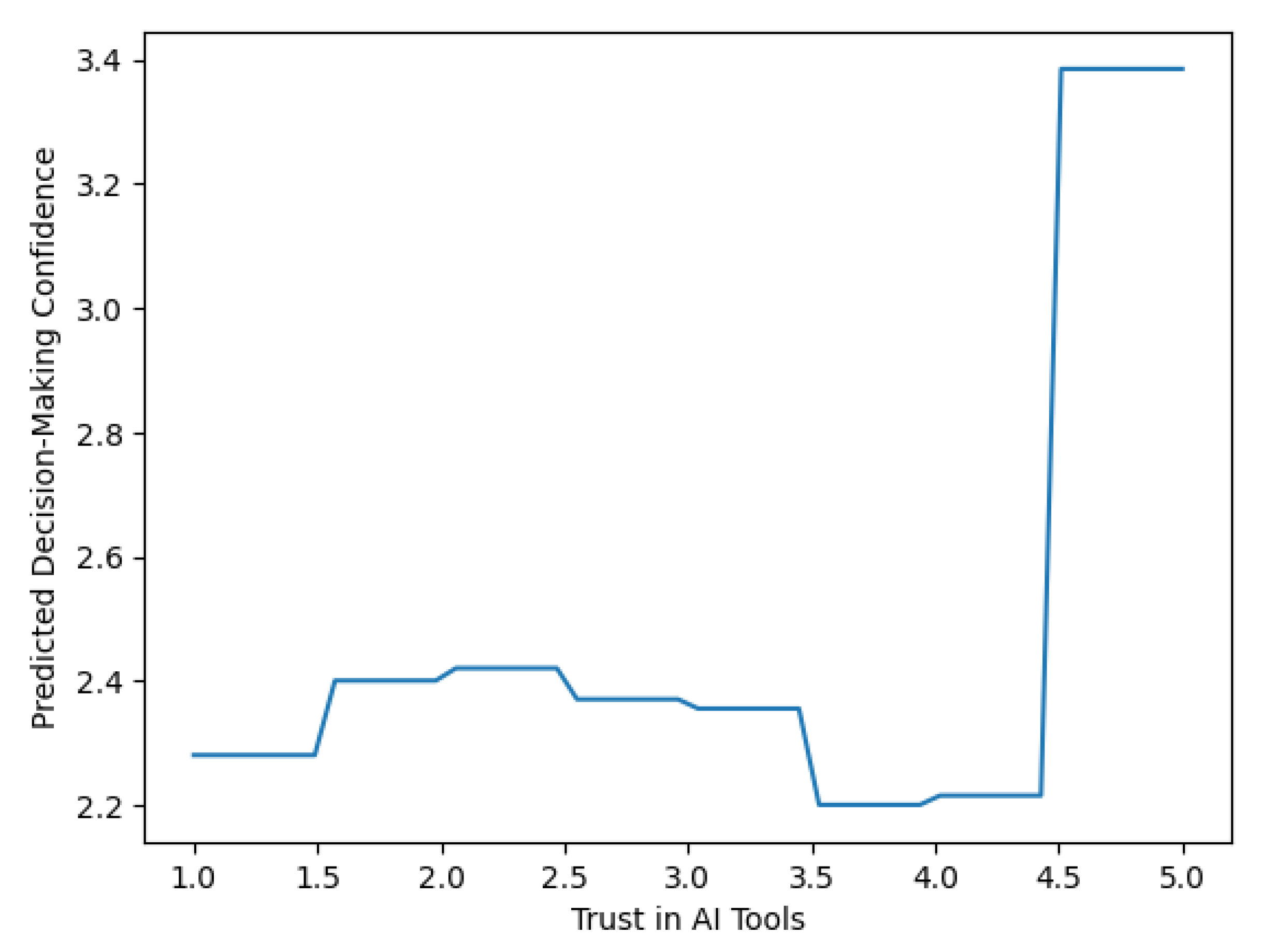

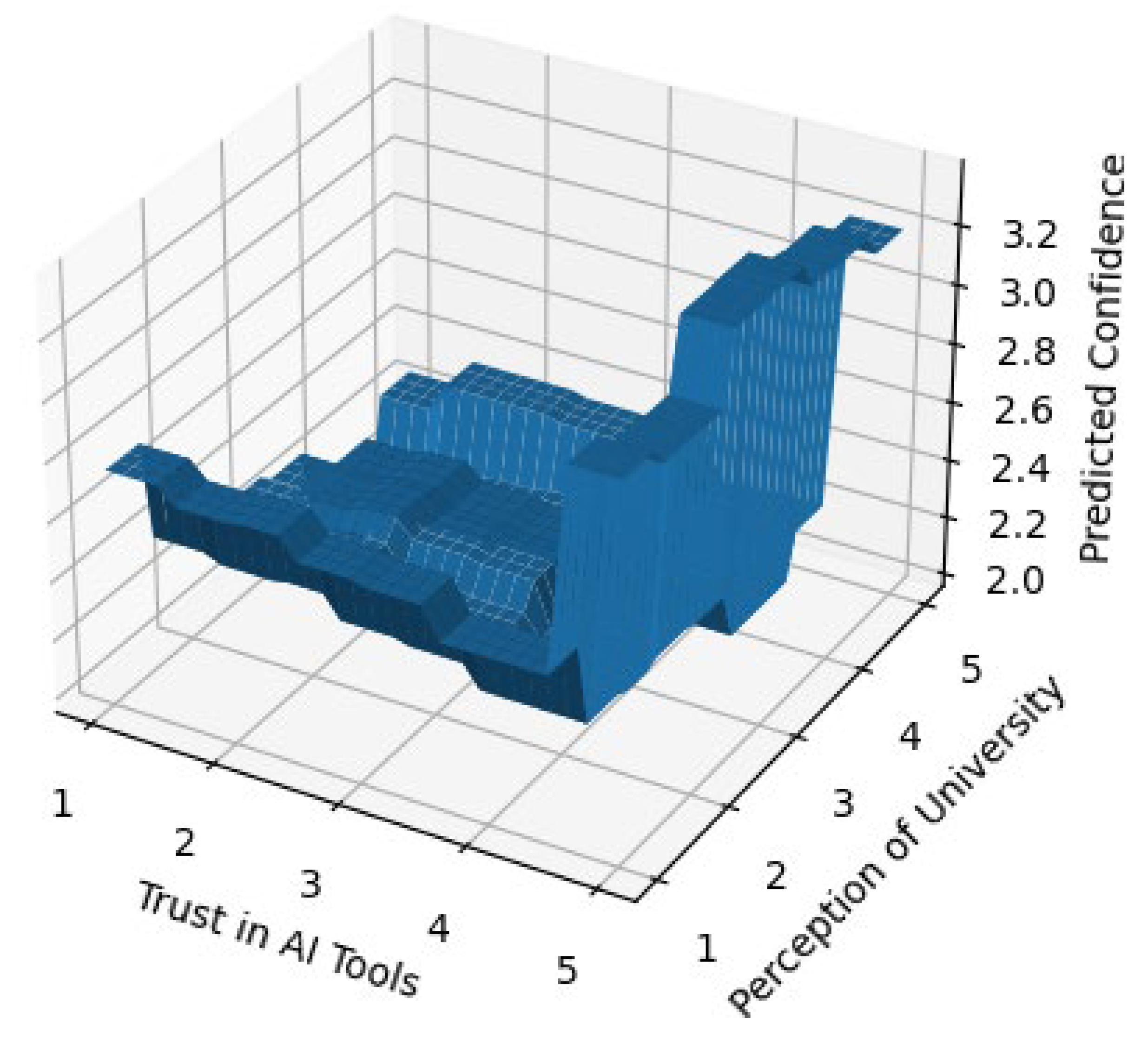

3.6. Sensitivity and Interaction Effects

3.7. Linear Triangulation of Predictive Structure

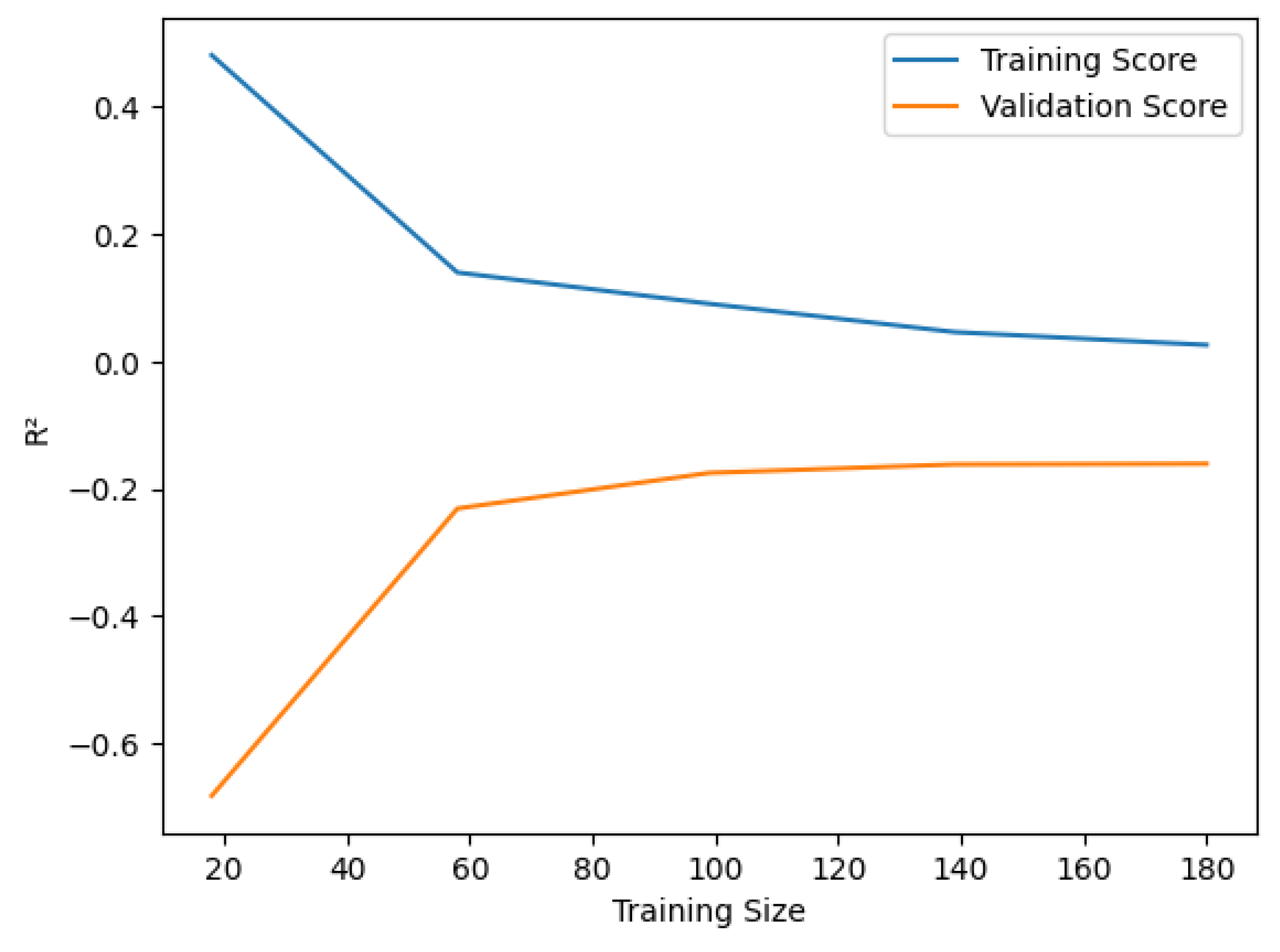

3.8. Sensitivity to Data Augmentation

4. Discussion

4.1. Empirical Validation of the Trust–Tech Nexus

4.2. Extending Technology Acceptance Theory through Trust

4.3. Non-Linearity and Threshold-Based Trust Formation

4.4. Institutional Perception as a Structural Moderator

4.5. Reconciling Predictive Accuracy and Behavioral Insight

4.6. Managerial Implications: From Insights to Decision Rules

4.7. Implications for AI Governance and Responsible AI

4.8. Methodological Contribution

4.9. Boundary Conditions of the Trust–Tech Nexus

4.10. Synthesis

5. Limitations and Future Scope

5.1. Methodological Limitations

5.2. Sample and Contextual Constraints

5.3. Model-Specific and Analytical Boundaries

5.4. Theoretical Scope and Boundary Conditions

5.5. Future Research Directions

6. Conclusion

Ethical Approval and Informed Consent

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Davenport, T.; Guha, A.; Grewal, D.; Bressgott, T. How artificial intelligence will change the future of marketing. Journal of the Academy of Marketing Science 2019, 48, 24–42.

- Dwivedi, Y.K.; Hughes, L.; Ismagilova, E.; Aarts, G.; Coombs, C.; Crick, T.; et al. Artificial Intelligence (AI): Multidisciplinary perspectives on emerging challenges, opportunities, and agenda for research, practice and policy. Int J Inf Manage 2021, 57, 101994.

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Quarterly 1989, 13, 319–40.

- Venkatesh, V.; Bala, H. Technology Acceptance Model 3 and a Research Agenda on Interventions. Decision Sciences 2008, 39, 273–315.

- Dwivedi, Y.K.; Kshetri, N.; Hughes, L.; Slade, E.L.; Jeyaraj, A.; Kar, A.K.; et al. Opinion Paper: “So what if ChatGPT wrote it?” Multidisciplinary perspectives on opportunities, challenges and implications of generative conversational AI for research, practice and policy. Int J Inf Manage 2023, 71, 102642.

- Choung, H.; David, P.; Ross, A. Trust in AI and Its Role in the Acceptance of AI Technologies. Int J Hum Comput Interact 2023, 39, 1727–39.

- Li, Y.; Wu, B.; Huang, Y.; Luan, S. Developing trustworthy artificial intelligence: insights from research on interpersonal, human-automation, and human-AI trust. Front Psychol 2024, 15.

- Markou, V.; Serdaris, P.; Antoniadis, I.; Spinthiropoulos, K. Personalization, Trust, and Identity in AI-Based Marketing: An Empirical Study of Consumer Acceptance in Greece. Adm Sci 2025, 15, 440.

- Vrontis, D.; Christofi, M.; Pereira, V.; Tarba, S.; Makrides, A.; Trichina, E. Artificial intelligence, robotics, advanced technologies and human resource management: a systematic review. The International Journal of Human Resource Management 2022, 33, 1237–66.

- Morgan, R.M.; Hunt, S.D. The Commitment-Trust Theory of Relationship Marketing. J Mark 1994, 58, 20–38.

- Breiman, L. Random Forests. Mach Learn 2001, 45, 5–32.

- Strobl, C.; Malley, J.; Tutz, G. An Introduction to Recursive Partitioning: Rationale, Application, and Characteristics of Classification and Regression Trees, Bagging, and Random Forests. Psychol Methods 2009, 14, 323–48.

- Pardeshi, N.G.; Patil, D.V. Applying Gini Importance and RFE Methods for Feature Selection in Shallow Learning Models for Implementing Effective Intrusion Detection System; Volume 2023, pp. 214–34.

- Guidotti, R.; Monreale, A.; Ruggieri, S.; Turini, F.; Giannotti, F.; Pedreschi, D. A Survey of Methods for Explaining Black Box Models. ACM Comput Surv 2019, 51, 1–42.

- Singh, S.K. Predictive analytics: Transforming historical data into strategic future insights. World Journal of Advanced Engineering Technology and Sciences 2025, 15, 1774–81.

- Gatete, O. Advancing Predictive Analytics: Integrating Machine Learning and Data Modelling for Enhanced Decision-Making. International Journal of Latest Technology in Engineering Management & Applied Science 2025, 14, 169–89.

- Strayhorn, T.L. College Students’ Use and Perceptions of Artificial Intelligence (AI): A Survey Study. J Coll Stud Dev 2025, 66, 319–26.

- Jo, H. Understanding AI tool engagement: A study of ChatGPT usage and word-of-mouth among university students and office workers. Telematics and Informatics 2023, 85, 102067.

- Qin, F.; Li, K.; Yan, J. Understanding user trust in artificial intelligence-based educational systems: Evidence from China. British Journal of Educational Technology 2020, 51, 1693–710.

- Shroff, R.H.; Deneen, C.C.; Ng, E.M.W. Analysis of the technology acceptance model in examining students’ behavioral intention to use an e-portfolio system. Australasian Journal of Educational Technology 2011, 27.

- Dong, Y.; Itoh, M. A modification of technology acceptance model for investigating driver-vehicle interaction systems usage. PLoS One 2025, 20, e0322221.

- Guo, S.; Song, Y.; Chen, G.; Han, H.; Wu, H.; Ma, J. Promoting trust and intention to adopt health information generated by ChatGPT among healthcare customers: An empirical study. Digit Health 2025, 11.

- Tam, L.; Kim, S.; Gong, Y. Support for Businesses’ Use of Artificial Intelligence: Dynamics of Trust, Distrust, and Perceived Benefits. Media Commun 2025, 13.

- Mendes-Moreira, J.; Soares, C.; Jorge, A.M.; De Sousa, J.F. Ensemble approaches for regression. ACM Comput Surv 2012, 45, 1–40.

- Fan, Z.; Yu, Z.; Yang, K.; Chen, W.; Liu, X.; Li, G.; et al. Diverse Models, United Goal: A Comprehensive Survey of Ensemble Learning. CAAI Trans Intell Technol 2025, 10, 959–82.

- Pérez-Rodríguez, J.; Fernández-Navarro, F.; Ashley, T. Estimating ensemble weights for bagging regressors based on the mean–variance portfolio framework. Expert Syst Appl 2023, 229, 120462.

- Gilroy, E.J. Reliability of a Variance Estimate Obtained from a Sample Augmented by Multivariate Regression. Water Resour Res 1970, 6, 1595–600.

- Heine, J.; Fowler, E.E.E.; Berglund, A.; Schell, M.J.; Eschrich, S. Techniques to produce and evaluate realistic multivariate synthetic data. Sci Rep 2023, 13, 12266.

- Leist, A.K.; Klee, M.; Kim, J.H.; Rehkopf, D.H.; Bordas, S.P.A.; Muniz-Terrera, G.; et al. Mapping of machine learning approaches for description, prediction, and causal inference in the social and health sciences. Sci Adv 2022, 8.

- Meyer, P.B.; Asher, K. Augmenting U.S. Census data on industry and occupation of respondents. 2019 IEEE International Conference on Data Science and Advanced Analytics (DSAA), 2019; IEEE; pp. 600–1.

- Lejeune, D.; Javadi, H.; Baraniuk, R.G. The Implicit Regularization of Ordinary Least Squares Ensembles. In Proceedings of the Twenty Third International Conference on Artificial Intelligence and Statistics, PMLR, 2020; pp. 3525–35.

- Nabila, R.; Azizah, N.; Rifai, K. Penerapan Metode Support Vector Regression (SVR) dengan Kernel untuk Data Curah Hujan di Jawa Barat [Application of the Support Vector Regression (SVR) Method with Kernels to Rainfall Data in West Java]. Bandung Conference Series: Statistics 2025, 5.

- Long, N.; Gianola, D.; Rosa, G.J.M.; Weigel, K.A. Application of support vector regression to genome-assisted prediction of quantitative traits. Theoretical and Applied Genetics 2011, 123, 1065–74.

- Ren, H.; Pang, B.; Bai, P.; Zhao, G.; Liu, S.; Liu, Y.; et al. Flood Susceptibility Assessment with Random Sampling Strategy in Ensemble Learning (RF and XGBoost). Remote Sens (Basel) 2024, 16, 320.

- Belsini GladShiya, V.; Sharmila, K.K. Using Ensemble Learning and Random Forest Techniques to Solve Complex Problems; 2023; pp. 388–407.

- Zhao, C.; Wu, D.; Huang, J.; Yuan, Y.; Zhang, H.-T.; Peng, R.; et al. BoostTree and BoostForest for Ensemble Learning. IEEE Trans Pattern Anal Mach Intell 2022, 1–17.

- Lu, H.; Mazumder, R. Randomized Gradient Boosting Machine. SIAM Journal on Optimization 2020, 30, 2780–808.

- Picard, R.R.; Cook, R.D. Cross-Validation of Regression Models. J Am Stat Assoc 1984, 79, 575–83.

- Malakouti, S.M.; Menhaj, M.B.; Suratgar, A.A. The usage of 10-fold cross-validation and grid search to enhance ML methods performance in solar farm power generation prediction. Clean Eng Technol 2023, 15, 100664.

- Hooker, G.; Mentch, L. Bootstrap bias corrections for ensemble methods. Stat Comput 2018, 28, 77–86.

- Wood, M. Bootstrapped Confidence Intervals as an Approach to Statistical Inference. Organ Res Methods 2005, 8, 454–70.

- Xu, L.; Gotwalt, C.; Hong, Y.; King, C.B.; Meeker, W.Q. Applications of the Fractional-Random-Weight Bootstrap. Am Stat 2020, 74, 345–58.

- Yin, G.; Huang, Z.; Fu, C.; Ren, S.; Bao, Y.; Ma, X. Examining active travel behavior through explainable machine learning: Insights from Beijing, China. Transp Res D Transp Environ 2024, 127, 104038.

- Tamim Kashifi, M.; Jamal, A.; Samim Kashefi, M.; Almoshaogeh, M.; Masiur Rahman, S. Predicting the travel mode choice with interpretable machine learning techniques: A comparative study. Travel Behav Soc 2022, 29, 279–96.

- Cho, E.; Kim, S.; Heo, S.-J.; Shin, J.; Ye, B.S.; Lee, J.H.; et al. Machine Learning-Based Predictive Models of Behavioral and Psychological Symptoms of Dementia. Innov Aging 2021, 5, 645–645.

- Lei, J. Cross-Validation With Confidence. J Am Stat Assoc 2020, 115, 1978–97.

- Hoerl, A.E.; Kennard, R.W. Ridge Regression: Biased Estimation for Nonorthogonal Problems. Technometrics 1970, 12, 55–67.

- Wolff, S.M.; Breakwell, G.M.; Wright, D.B. Psychometric evaluation of the Trust in Science and Scientists Scale. R Soc Open Sci 2024, 11.

- Karran, A.J.; Charland, P.; Trempe-Martineau, J.; Ortiz de Guinea Lopez de Arana, A.; Lesage, A.-M.; Sénécal, S.; et al. Multi-stakeholder perspective on responsible artificial intelligence and acceptability in education. NPJ Sci Learn 2025, 10, 44.

- Jankovic, D.; Simic, M.; Herakovic, N. A comparative study of machine learning regression models for production systems condition monitoring. Advances in Production Engineering & Management 2024, 19, 78–92.

- Huang, C.; Li, S.-X.; Caraballo, C.; Masoudi, F.A.; Rumsfeld, J.S.; Spertus, J.A.; et al. Performance Metrics for the Comparative Analysis of Clinical Risk Prediction Models Employing Machine Learning. Circ Cardiovasc Qual Outcomes 2021, 14.

- Wu, Q.; Fokoue, E.; Kudithipudi, D. An Ensemble Learning Approach to the Predictive Stability of Echo State Networks. Journal of Informatics and Mathematical Sciences 2018, 10, 181–99.

- Loecher, M. Unbiased variable importance for random forests. Commun Stat Theory Methods 2022, 51, 1413–25.

- Chang, W.-C.; Gonzalez Garcia, N.; Esmaeili, B.; Hasanzadeh, S. Partial Personalization for Worker-robot Trust Prediction in the Future Construction Environment. 2024.

- Pereira, J.P.B.; Stroes, E.S.G.; Zwinderman, A.H.; Levin, E. Covered Information Disentanglement: Model Transparency via Unbiased Permutation Importance. Proceedings of the AAAI Conference on Artificial Intelligence 2022, 36, 7984–92.

- Zitoune, I.; Arabov, M.K. Comparative Analysis of Ensemble and Linear Machine Learning Models in the Task of House Price Prediction. 2024 International Russian Automation Conference (RusAutoCon), 2024; IEEE; pp. 50–5.

- Cui, Y.L.; Lin Zeng, M.; Ke Du, X.; Miao He, W. What Shapes Learners’ Trust in AI? A Meta-Analytic Review of Its Antecedents and Consequences. IEEE Access 2025, 13, 164008–25.

- Choung, H.; David, P.; Ross, A. Trust in AI and Its Role in the Acceptance of AI Technologies. Int J Hum Comput Interact 2023, 39, 1727–39.

- Latif, I.S.; Saputro, R.E.; Barkah, A.S. Technology Acceptance Model TAM using Partial Least Squares Structural Equation Modeling PLS-SEM. Journal of Information Systems and Informatics 2025, 7, 1376–99.

- Vorm, E.S.; Combs, D.J.Y. Integrating Transparency, Trust, and Acceptance: The Intelligent Systems Technology Acceptance Model (ISTAM). Int J Hum Comput Interact 2022, 38, 1828–45.

- Choung, H.; David, P.; Ross, A. Trust in AI and Its Role in the Acceptance of AI Technologies. Int J Hum Comput Interact 2023, 39, 1727–39.

- Fenneman, A.; Sickmann, J.; Pitz, T.; Sanfey, A.G. Two distinct and separable processes underlie individual differences in algorithm adherence: Differences in predictions and differences in trust thresholds. PLoS One 2021, 16, e0247084.

- Ngo, V.M. Balancing AI transparency: Trust, Certainty, and Adoption. Information Development 2025.

- Lei, A.Z.; Cultrera, C. The Effects of Past Experiences, Trust, and Perception on Decisions to Adopt New AI Technology. Journal of Student Research 2024, 13.

- Long, C.P.; Sitkin, S.B. Contradictions that erode institutional trust & opportunities for addressing them. Behavioral Science & Policy 2023, 9, 1–6.

- Breiman, L. Statistical Modeling: The Two Cultures (with comments and a rejoinder by the author). Statistical Science 2001, 16.

- Breiman, L. Random forests. Mach Learn 2001, 45, 5–32.

- Madhavan, R.; Kerr, J.A.; Corcos, A.R.; Isaacoff, B.P. Toward Trustworthy and Responsible Artificial Intelligence Policy Development. IEEE Intell Syst 2020, 35, 103–8.

- Duan, W.; Zhou, S.; Scalia, M.J.; Yin, X.; Weng, N.; Zhang, R.; et al. Understanding the Evolvement of Trust Over Time within Human-AI Teams. Proc ACM Hum Comput Interact 2024, 8, 1–31.

- Fehlhaber, A.L.; EL-Awad, U. Trust development in online competitive game environments: a network analysis approach. Appl Netw Sci 2024, 9, 7.

| Variable | VIF (Variance Inflation Factor) |
|---|---|
| Personalized Ads | 4.93 |
| Chatbot Responses | 4.68 |
| Predictive Recommendations | 4.95 |
| Trust in AI Tools | 5.27 |
| Emotional Engagement | 4.88 |
| Trust in University | 5.18 |
| Perception of University | 5.14 |
| Emotional Response | 4.61 |
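
Each value above is a variance inflation factor, VIF_j = 1 / (1 − R_j²), where R_j² is the R² from regressing predictor j on all the other predictors. A VIF of 5 therefore means that about 80% of that predictor's variance is shared with the rest, and three of the values here sit just above the commonly used rule-of-thumb cutoff of 5. The sketch below shows the computation with NumPy. It is an illustration under assumptions, not the authors' code: the function name `variance_inflation_factors` is hypothetical, and the demonstration uses synthetic data rather than the survey responses.

```python
import numpy as np


def variance_inflation_factors(X):
    """Return VIF_j = 1 / (1 - R_j^2) for every column j of X (rows = respondents)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    vifs = np.empty(p)
    for j in range(p):
        y = X[:, j]
        # Auxiliary regression of predictor j on an intercept plus all other predictors.
        A = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        resid = y - A @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        vifs[j] = 1.0 / (1.0 - r2)
    return vifs


if __name__ == "__main__":
    # Synthetic illustration only: b is built from a, so both share most of their
    # variance (VIF near 5), while c is independent noise (VIF near 1).
    rng = np.random.default_rng(0)
    a = rng.normal(size=5000)
    b = a + 0.5 * rng.normal(size=5000)
    c = rng.normal(size=5000)
    print(np.round(variance_inflation_factors(np.column_stack([a, b, c])), 2))
```

The same numbers equal the diagonal of the inverse correlation matrix, `np.diag(np.linalg.inv(np.corrcoef(X, rowvar=False)))`, which is a convenient cross-check.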

| Model | RMSE (Mean) | RMSE (SD) | R² (Mean) | R² (SD) |
|---|---|---|---|---|
| Linear Regression | 1.449 | 0.163 | −0.132 | 0.092 |
| Ridge Regression | 1.449 | 0.163 | −0.131 | 0.092 |
| SVR (RBF) | 1.509 | 0.162 | −0.243 | 0.200 |
| Random Forest | 1.427 | 0.180 | −0.107 | 0.185 |
| Gradient Boosting | 1.549 | 0.216 | −0.349 | 0.456 |
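
The table reports the mean and standard deviation of RMSE and R², presumably across cross-validation folds. Out-of-sample R² is 1 − SS_res / SS_tot computed on held-out data, so it turns negative whenever a model's squared error exceeds that of simply predicting the held-out mean; every model above has a negative mean R², which indicates that on average none of them beats that baseline. The sketch below assumes shuffled k-fold cross-validation with k = 10 (an assumption) and covers only the two linear models, fitted in closed form with NumPy; `fit_ridge` and `kfold_scores` are hypothetical names, and SVR, Random Forest and Gradient Boosting would need a library such as scikit-learn.

```python
import numpy as np


def fit_ridge(X, y, alpha):
    """Closed-form ridge regression; alpha = 0 gives ordinary least squares.

    Columns are centred so that the intercept is not penalised.
    """
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w


def kfold_scores(X, y, alpha=0.0, k=10, seed=0):
    """Per-fold held-out RMSE and R^2 from shuffled k-fold cross-validation."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    rmse, r2 = [], []
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        w, b = fit_ridge(X[train_idx], y[train_idx], alpha)
        y_test = y[test_idx]
        resid = y_test - (X[test_idx] @ w + b)
        ss_res = resid @ resid
        ss_tot = np.sum((y_test - y_test.mean()) ** 2)
        rmse.append(np.sqrt(ss_res / len(y_test)))
        # Negative whenever the model does worse than predicting the held-out mean.
        r2.append(1.0 - ss_res / ss_tot)
    return np.array(rmse), np.array(r2)


if __name__ == "__main__":
    # Synthetic data with no signal: mean held-out R^2 typically comes out below zero.
    rng = np.random.default_rng(1)
    X, y = rng.normal(size=(200, 8)), rng.normal(size=200)
    for name, alpha in [("Linear", 0.0), ("Ridge", 10.0)]:
        rmse, r2 = kfold_scores(X, y, alpha=alpha)
        print(f"{name}: RMSE {rmse.mean():.3f} ± {rmse.std(ddof=1):.3f}, "
              f"R2 {r2.mean():.3f} ± {r2.std(ddof=1):.3f}")
```

Reporting the SD across folds alongside the mean, as the table does, shows how much of the gap between models is within fold-to-fold noise.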

| Metric | 95% Confidence Interval |
|---|---|
| RMSE | [1.36, 1.52] |
| R² | [−0.18, 0.02] |
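
The table reports 95% confidence intervals for RMSE and R²; the R² interval includes zero. One common way to obtain such intervals is the percentile bootstrap: resample the (observed, predicted) pairs with replacement many times, recompute the metric on each resample, and take the 2.5th and 97.5th percentiles. The sketch below assumes that resampling scheme and 2,000 resamples; whether the study resampled predictions, folds or respondents is not stated in this section, and `bootstrap_ci`, `rmse` and `r2` are hypothetical helper names.

```python
import numpy as np


def rmse(y, y_hat):
    return float(np.sqrt(np.mean((y - y_hat) ** 2)))


def r2(y, y_hat):
    return float(1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - np.mean(y)) ** 2))


def bootstrap_ci(y, y_hat, metric, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap CI for metric(y, y_hat), resampling (y, y_hat) pairs with replacement."""
    y, y_hat = np.asarray(y, dtype=float), np.asarray(y_hat, dtype=float)
    rng = np.random.default_rng(seed)
    n = len(y)
    stats = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # one bootstrap resample of row indices
        stats[i] = metric(y[idx], y_hat[idx])
    tail = (1.0 - level) / 2.0
    lo, hi = np.quantile(stats, [tail, 1.0 - tail])
    return float(lo), float(hi)


if __name__ == "__main__":
    # Synthetic held-out predictions, for illustration only.
    rng = np.random.default_rng(2)
    y = rng.normal(3.0, 1.4, size=200)
    y_hat = np.full_like(y, 3.0) + rng.normal(0.0, 0.3, size=200)
    print("RMSE 95% CI:", bootstrap_ci(y, y_hat, rmse))
    print("R2   95% CI:", bootstrap_ci(y, y_hat, r2))
```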

| Predictor | Δ Accuracy (Mean) | SD |
|---|---|---|
| Trust in AI Tools | 0.306 | 0.027 |
| Personalized Ads | 0.289 | 0.019 |
| Predictive Recommendations | 0.265 | 0.024 |
| Emotional Response | 0.217 | 0.015 |
| Trust in University | 0.191 | 0.017 |
| Chatbot Responses | 0.165 | 0.014 |
| Emotional Engagement | 0.161 | 0.013 |
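
The Δ values above read as permutation-style importances: the change in predictive performance when one predictor's values are shuffled, averaged over repeated shuffles, with the SD taken across those repeats. The score behind "Δ Accuracy" is not defined in this section, so the sketch below assumes the increase in RMSE as the score and 30 repeats; `permutation_importance` is a hypothetical, model-agnostic helper that only needs a fitted model's prediction function.

```python
import numpy as np


def permutation_importance(predict, X, y, n_repeats=30, seed=0):
    """Mean and SD (over repeats) of the rise in RMSE when each column of X is shuffled.

    `predict` is any fitted model's prediction function: (n, p) array -> n predictions.
    """
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)

    def rmse(y_hat):
        return np.sqrt(np.mean((y - y_hat) ** 2))

    baseline = rmse(predict(X))
    deltas = np.empty((X.shape[1], n_repeats))
    for j in range(X.shape[1]):
        for r in range(n_repeats):
            X_perm = X.copy()
            # Shuffling column j breaks its link to y while keeping its marginal distribution.
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            deltas[j, r] = rmse(predict(X_perm)) - baseline
    return deltas.mean(axis=1), deltas.std(axis=1, ddof=1)


if __name__ == "__main__":
    # Toy example: an OLS fit on synthetic data where only the first two columns matter.
    rng = np.random.default_rng(5)
    X = rng.normal(size=(200, 3))
    y = 2.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(size=200)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    means, sds = permutation_importance(lambda A: A @ coef, X, y)
    print(np.round(means, 3), np.round(sds, 3))
```

Computing the importances on held-out rows rather than training rows avoids rewarding features that the model has merely overfitted to.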

| Metric | Value |
|---|---|
| Trust Threshold (Likert Scale) | 4.43 |
| Confidence Gain (Below → Above Threshold) | +1.11 units |
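
The table reports a single trust threshold on the Likert scale and the change in the outcome between respondents below and above it. How the threshold was located is not described in this section; one simple way, sketched below as an assumption, is an exhaustive search for the cutpoint that minimizes the within-group squared error of the outcome (the criterion a depth-1 regression tree uses), with the gain taken as the difference in group means. The function name `best_threshold` and the `min_group` guard are hypothetical.

```python
import numpy as np


def best_threshold(x, y, min_group=10):
    """Single cutpoint on x minimizing the within-group SSE of y (a depth-1 regression stump).

    Returns (threshold, gain) with gain = mean(y | x > t) - mean(y | x <= t),
    or (None, None) if no split leaves at least min_group rows on each side.
    """
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    values = np.unique(x)
    best = (np.inf, None, None)
    for lo_val, hi_val in zip(values[:-1], values[1:]):
        t = (lo_val + hi_val) / 2.0  # midpoint between adjacent observed values
        below, above = y[x <= t], y[x > t]
        if len(below) < min_group or len(above) < min_group:
            continue
        sse = np.sum((below - below.mean()) ** 2) + np.sum((above - above.mean()) ** 2)
        if sse < best[0]:
            best = (sse, t, above.mean() - below.mean())
    _, threshold, gain = best
    return threshold, gain


if __name__ == "__main__":
    # Synthetic trust scores and outcome with a step at 4.0, for illustration only.
    rng = np.random.default_rng(6)
    trust = rng.uniform(1.0, 5.0, size=300)
    outcome = 3.0 + 1.0 * (trust > 4.0) + rng.normal(0.0, 0.5, size=300)
    print(best_threshold(trust, outcome))
```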

| Dataset | RMSE |
|---|---|
| Original Dataset (N = 200) | 1.479 |
| Augmented Dataset (N = 500) | 1.440 |
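
Section 2.2 names covariance-preserving sampling as the augmentation method, and the table compares RMSE on the original 200 responses with the 500-row augmented set. A minimal version of the idea draws synthetic rows from a multivariate normal distribution with the sample mean vector and covariance matrix, so pairwise covariances are preserved in expectation. The sketch assumes normality and optional rounding and clipping to a bounded Likert range; neither detail is confirmed by the source, and `covariance_preserving_augment` is a hypothetical name.

```python
import numpy as np


def covariance_preserving_augment(X, n_new, seed=0, clip=None):
    """Append n_new synthetic rows drawn from N(mean(X), cov(X)) to the original rows of X.

    If clip=(low, high) is given, synthetic values are rounded to integers and clipped to
    that range, e.g. (1, 5) for five-point items; this slightly distorts the covariance.
    """
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    mean = X.mean(axis=0)
    cov = np.cov(X, rowvar=False)  # p x p sample covariance of the original responses
    synthetic = rng.multivariate_normal(mean, cov, size=n_new)
    if clip is not None:
        synthetic = np.clip(np.rint(synthetic), *clip)
    return np.vstack([X, synthetic])


if __name__ == "__main__":
    # Synthetic stand-in for 200 survey rows with 3 correlated items, for illustration only.
    rng = np.random.default_rng(8)
    original = rng.normal(size=(200, 3)) @ np.array([[1.0, 0.6, 0.0],
                                                     [0.0, 0.8, 0.3],
                                                     [0.0, 0.0, 0.9]])
    augmented = covariance_preserving_augment(original, 300)
    print(augmented.shape)
    print(np.round(np.cov(original, rowvar=False), 2))
    print(np.round(np.cov(augmented[200:], rowvar=False), 2))
```

With 200 original rows, `n_new=300` yields the 500-row set listed in the table; only the first two moments are matched, so higher-order structure of Likert responses is not reproduced.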

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).