Submitted: 07 February 2026

Posted: 09 February 2026

Abstract

Keywords:

1. Introduction

1.1. Motivation and Research Gap

1.2. Objectives and Scope

1.3. Contributions

- Multi-asset empirical evaluation. We present a controlled ablation study spanning five asset classes with distinct market microstructures, volatility profiles, and trading dynamics, enabling us to assess whether findings generalise beyond the single-asset benchmarks common in prior work.

- Statistical rigour. Our experimental design incorporates multiple random seeds, paired hypothesis tests, effect size quantification, and aggregated reporting with standard deviations—accounting for the stochastic variability inherent in neural network training and distinguishing reliable differences from noise.

- Indicator category decomposition. By evaluating momentum, trend, and volatility indicators both individually and in combination, we identify differential impacts across indicator families and provide evidence of redundancy when multiple indicator types are combined.

- Practical guidelines. The findings translate into actionable recommendations for practitioners on feature engineering, baseline selection, and architecture choice in deep learning-based trading systems.

2. Related Work

2.1. Financial Time Series: Challenges and Properties

2.2. Deep Learning for Financial Markets

Recurrent architectures.

Multi-asset and large-scale studies.

Surveys and open questions.

2.3. Technical Indicators in Machine Learning and Deep Learning Models

Classical machine learning with indicators.

Deep learning with indicators.

Synthesis.

2.4. Evaluation Methodology, Ablation Studies, and Statistical Practice

2.5. Closest Related Empirical Studies

Summary of the research gap.

3. Data

3.1. Asset Selection

Commodities.

- Crude Oil (WTI Futures). West Texas Intermediate crude oil, a benchmark for global oil prices, exhibiting high volatility and sensitivity to geopolitical events. Daily data from 2010-01-04 to 2025-12-31 (4,024 trading days).

- Gold (XAU/USD). Spot gold price in U.S. dollars, traditionally considered a safe-haven asset with moderate volatility. Daily data from 2010-01-04 to 2025-12-31 (4,023 trading days).

Cryptocurrencies.

- Bitcoin (BTC/USD). The largest cryptocurrency by market capitalisation, characterised by extreme volatility and 24/7 trading. Daily data from 2014-09-17 to 2025-12-31 (4,124 trading days).

- Ethereum (ETH/USD). The second-largest cryptocurrency, serving as the foundation for decentralised applications. Daily data from 2017-11-09 to 2025-12-31 (2,975 trading days).

Equities.

- Apple Inc. (AAPL). A large-cap technology stock with moderate volatility and high liquidity. Daily data from 2010-01-04 to 2025-12-31 (4,024 trading days).

- Microsoft Corporation (MSFT). A large-cap technology stock with similar characteristics to AAPL. Daily data from 2010-01-04 to 2025-12-31 (4,024 trading days).

Foreign exchange.

- EUR/USD. The most actively traded currency pair globally, exhibiting lower volatility than equities and commodities. Daily data from 2010-01-01 to 2025-12-31 (4,165 trading days).

- USD/JPY. A major currency pair reflecting U.S.–Japan monetary policy dynamics. Daily data from 2010-01-01 to 2025-12-31 (4,165 trading days).

Market indices.

- NASDAQ Composite. A technology-weighted U.S. equity index tracking over 3,000 stocks. Daily data from 2010-01-04 to 2025-12-31 (4,024 trading days).

- S&P 500. A capitalisation-weighted index of 500 large U.S. companies, widely used as a benchmark. Daily data from 2010-01-04 to 2025-12-31 (4,024 trading days).

3.2. Data Format and Source

- Date: Trading date in UTC.

- Open: Opening price for the trading session.

- High: Highest intraday price.

- Low: Lowest intraday price.

- Close: Closing price (adjusted for splits where applicable).

- Volume: Number of shares or contracts traded.

3.3. Feature Configurations

E0: OHLCV only (baseline).

E1: OHLCV + momentum indicators.

- Relative Strength Index (RSI, 14-period)

- Stochastic %K (14-period high/low window, 3-period smoothing)

- Stochastic %D (3-period SMA of %K)
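The momentum features listed above can be sketched with pandas as follows. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, and the Wilder-style exponential smoothing used for RSI is an assumption, since the smoothing variant is not stated here.

```python
import numpy as np
import pandas as pd


def rsi(close: pd.Series, period: int = 14) -> pd.Series:
    """14-period RSI; Wilder-style smoothing is an assumed variant."""
    delta = close.diff()
    gain = delta.clip(lower=0.0)       # upward moves only
    loss = (-delta).clip(lower=0.0)    # downward moves as positive numbers
    avg_gain = gain.ewm(alpha=1.0 / period, adjust=False, min_periods=period).mean()
    avg_loss = loss.ewm(alpha=1.0 / period, adjust=False, min_periods=period).mean()
    rs = avg_gain / avg_loss           # inf when there are no losses -> RSI of 100
    return 100.0 - 100.0 / (1.0 + rs)


def stochastic(high: pd.Series, low: pd.Series, close: pd.Series,
               window: int = 14, smooth: int = 3):
    """Smoothed %K (14-period high/low window) and %D (3-period SMA of %K)."""
    lowest = low.rolling(window).min()
    highest = high.rolling(window).max()
    raw_k = 100.0 * (close - lowest) / (highest - lowest)
    k = raw_k.rolling(smooth).mean()   # 3-period smoothing of raw %K
    d = k.rolling(smooth).mean()       # %D
    return k, d
```

Both functions use only trailing windows, so the value at time t depends on data up to and including t.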

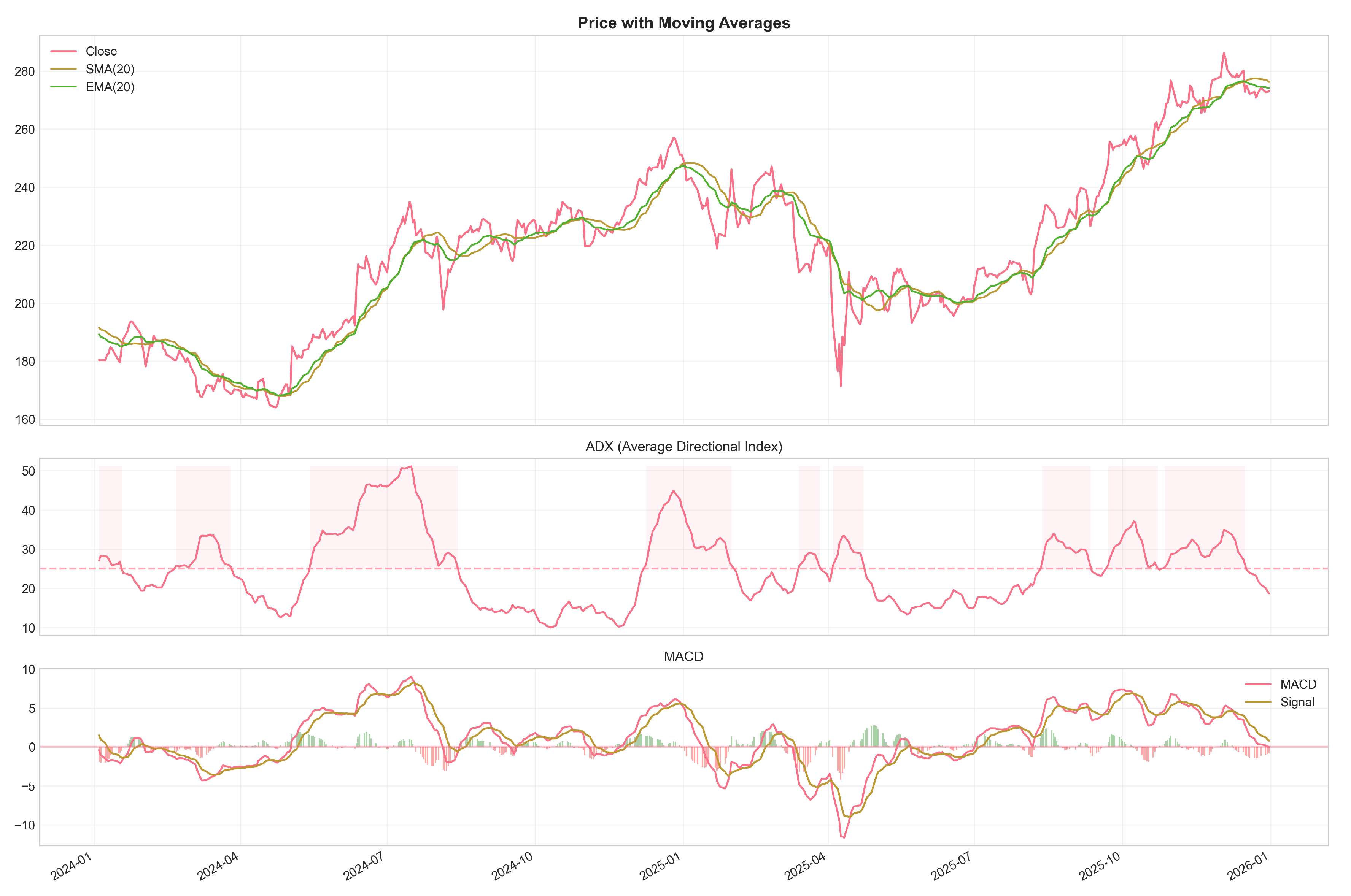

E2: OHLCV + trend indicators.

- Simple Moving Average (SMA, 20-period)

- Exponential Moving Average (EMA, 20-period)

- Average Directional Index (ADX, 14-period)

- MACD line (12-period EMA − 26-period EMA)

- MACD signal line (9-period EMA of MACD)

- MACD histogram (MACD − signal)
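As a rough sketch of the trend features listed above, the moving averages and MACD components can be computed with trailing pandas filters. The function and column names are hypothetical, `adjust=False` is an assumed EMA convention, and ADX is omitted for brevity.

```python
import pandas as pd


def trend_features(close: pd.Series) -> pd.DataFrame:
    """SMA(20), EMA(20) and the three MACD components (ADX not included)."""
    sma20 = close.rolling(20).mean()
    ema20 = close.ewm(span=20, adjust=False).mean()
    # MACD line: 12-period EMA minus 26-period EMA
    macd = (close.ewm(span=12, adjust=False).mean()
            - close.ewm(span=26, adjust=False).mean())
    # Signal line: 9-period EMA of the MACD line
    signal = macd.ewm(span=9, adjust=False).mean()
    return pd.DataFrame({
        "sma20": sma20,
        "ema20": ema20,
        "macd": macd,
        "macd_signal": signal,
        "macd_hist": macd - signal,   # histogram = MACD - signal
    })
```

Because SMA and EMA are both smoothed copies of the same closing price, the columns produced here are strongly correlated by construction.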

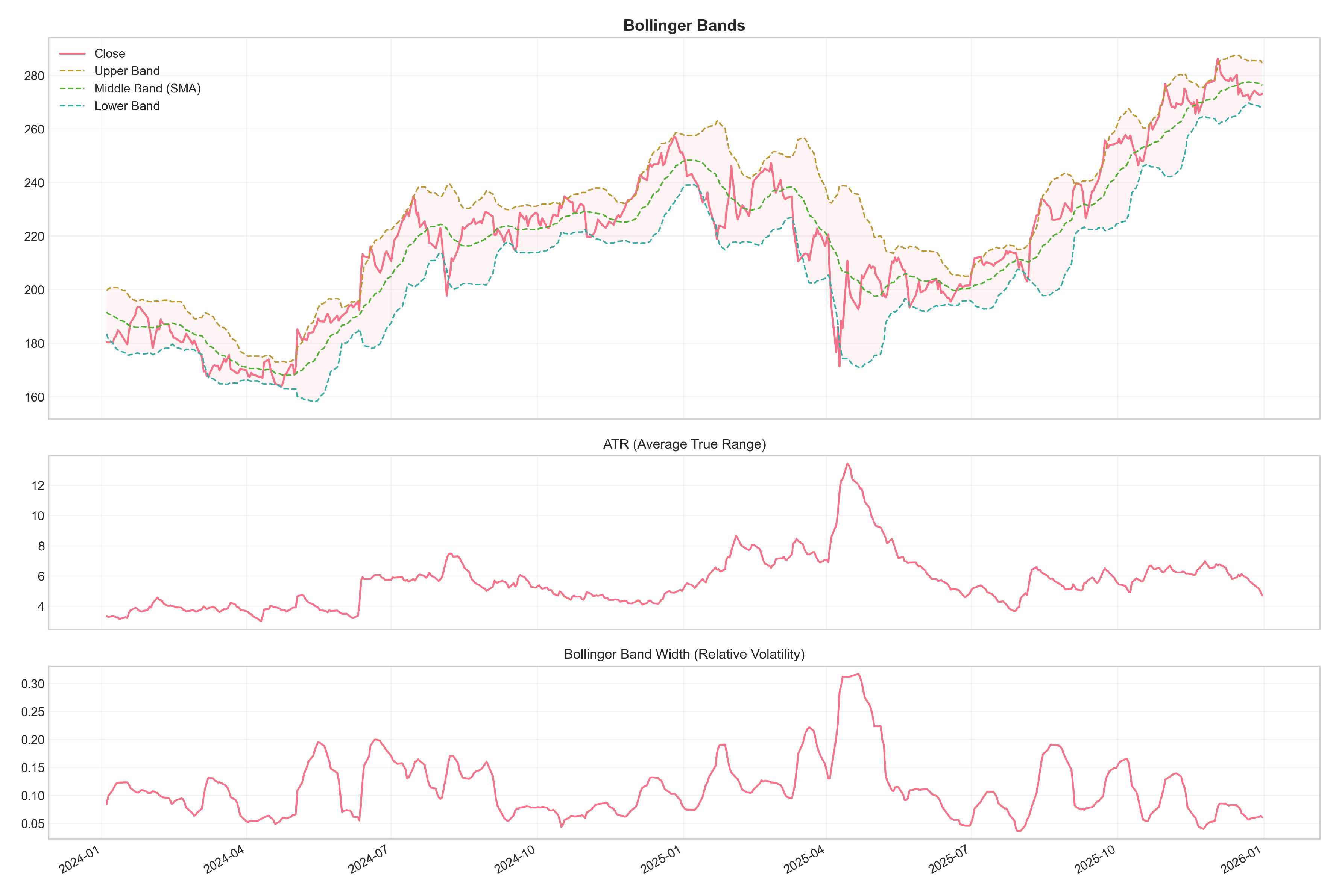

E3: OHLCV + volatility indicators.

- Average True Range (ATR, 14-period)

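- Bollinger Band upper boundary (20-period SMA plus a multiple of the rolling standard deviation)

- Bollinger Band lower boundary (20-period SMA minus the same multiple of the rolling standard deviation)

- Bollinger %B (price position relative to the bands: 0 at the lower band, 1 at the upper band)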
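The volatility features above might be computed along the following lines. This is a sketch under stated assumptions: the function names are hypothetical, ATR uses a simple rolling mean of the true range (Wilder smoothing is a common alternative), and the band multiplier `k=2.0` is the conventional default rather than a value confirmed by the text.

```python
import pandas as pd


def atr(high: pd.Series, low: pd.Series, close: pd.Series,
        period: int = 14) -> pd.Series:
    """Average True Range as a simple rolling mean (assumed variant)."""
    prev_close = close.shift(1)
    true_range = pd.concat(
        [high - low, (high - prev_close).abs(), (low - prev_close).abs()],
        axis=1,
    ).max(axis=1)
    return true_range.rolling(period).mean()


def bollinger(close: pd.Series, window: int = 20, k: float = 2.0) -> pd.DataFrame:
    """Bollinger bands and %B; k is an assumed multiplier."""
    mid = close.rolling(window).mean()
    sd = close.rolling(window).std(ddof=0)
    upper = mid + k * sd
    lower = mid - k * sd
    # %B is 0 at the lower band and 1 at the upper band; it can leave
    # that interval when the price closes outside the bands.
    pct_b = (close - lower) / (upper - lower)
    return pd.DataFrame({"mid": mid, "upper": upper, "lower": lower, "pct_b": pct_b})
```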

E4: OHLCV + all indicators.

3.4. Technical Indicator Calculations

Relative Strength Index (RSI).

Stochastic Oscillator.

Moving Average Convergence Divergence (MACD).

Average True Range (ATR).

Bollinger Bands.

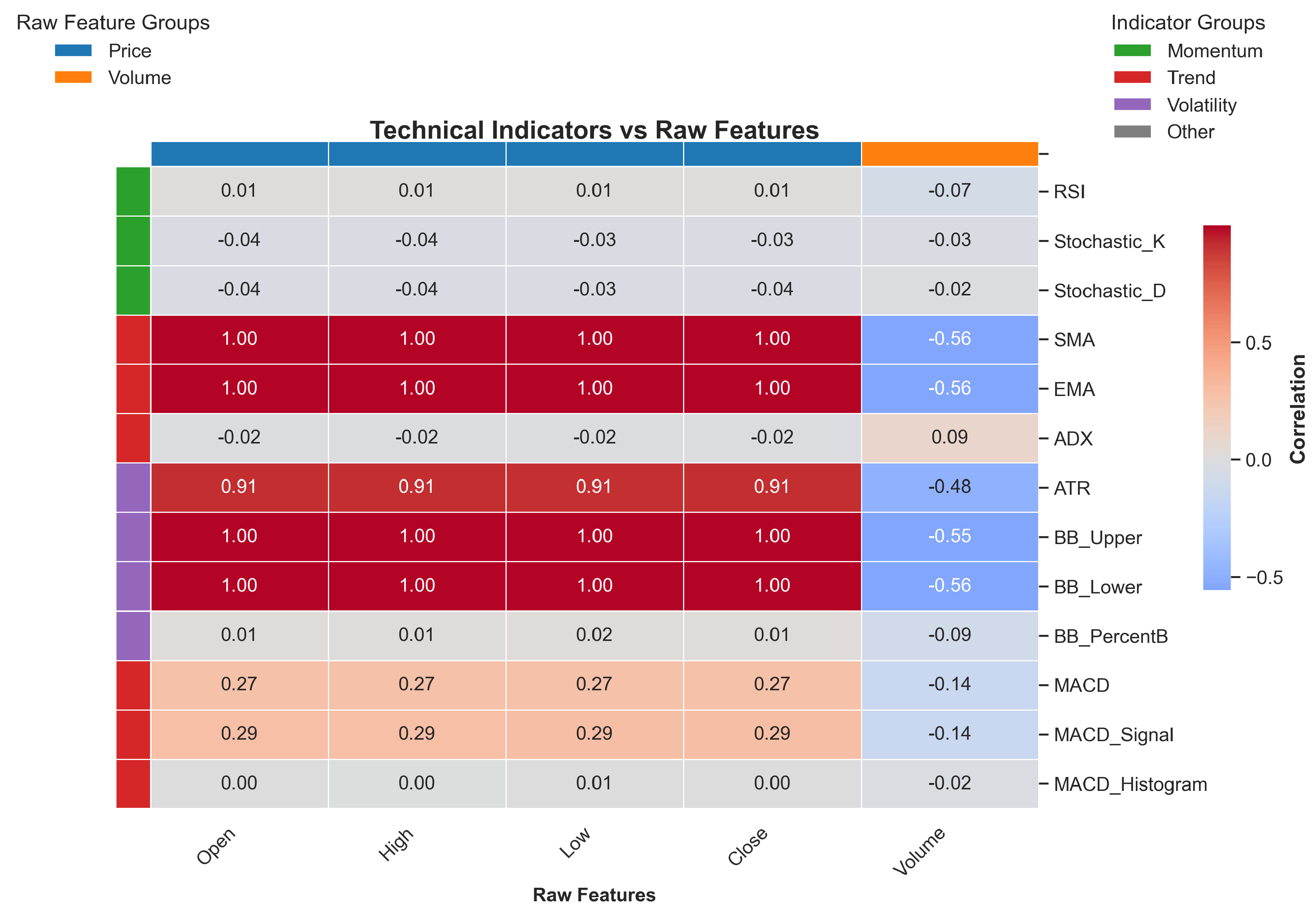

3.5. Feature Analysis

- Strong intra-category correlations: Indicators within the same family are highly correlated (e.g., SMA with EMA, and the MACD components with one another).

- OHLCV relationships: Raw price features correlate moderately with trend indicators but less so with momentum and volatility measures.

- Momentum–volatility linkage: RSI and Stochastic oscillators show moderate correlation with Bollinger Band position, reflecting their shared sensitivity to recent price extremes.

- Limited inter-category correlation: Most cross-category correlation coefficients fall below 0.50, suggesting that different indicator families do capture distinct aspects of market dynamics.

3.6. Problem Formulation

3.7. Data Preprocessing

1. Missing values: forward-fill small gaps; drop rows that remain NaN after indicator warm-up.

2. Indicator computation: compute indicators using only past and present values (causal rolling or exponentially weighted filters).

3. Temporal split: partition chronologically into 80% training, 10% validation, and 10% test sets (no shuffling).

4. Normalisation: apply Min–Max scaling using training-set extrema only:

   $$x' = \frac{x - x_{\min}^{\text{train}}}{x_{\max}^{\text{train}} - x_{\min}^{\text{train}}}$$

   Validation and test sets are transformed with the training-set parameters.

5. Windowing: construct lookback windows of length $L = 60$ with stride 1 (overlap $L - 1$). Each sample has shape $(L, F)$ (batched: $(B, L, F)$), where F is the feature count for the chosen group.

6. Target: the supervised target is the raw next-day closing price (horizon $h = 1$).
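The split, scaling, and windowing steps above can be sketched in NumPy as follows. The helper names are hypothetical and the code is an illustration of the described procedure rather than the study's pipeline; the lookback of 60 follows the hyperparameter table.

```python
import numpy as np


def chronological_split(n_rows: int, train: float = 0.8, val: float = 0.1):
    """Return train/validation/test slices in time order (no shuffling)."""
    i_train = int(n_rows * train)
    i_val = i_train + int(n_rows * val)
    return slice(0, i_train), slice(i_train, i_val), slice(i_val, n_rows)


def fit_minmax(train_rows: np.ndarray):
    """Per-feature minimum and range, estimated on the training split only."""
    lo = train_rows.min(axis=0)
    span = train_rows.max(axis=0) - lo
    span = np.where(span == 0, 1.0, span)  # guard against constant columns
    return lo, span


def apply_minmax(rows: np.ndarray, lo: np.ndarray, span: np.ndarray) -> np.ndarray:
    """Scale any split with the training-set parameters."""
    return (rows - lo) / span


def make_windows(features: np.ndarray, target: np.ndarray, lookback: int = 60):
    """Stride-1 windows of shape (lookback, F) paired with the next-day target."""
    n = len(features) - lookback
    X = np.stack([features[i:i + lookback] for i in range(n)])
    y = target[lookback:]  # value on the day right after each window ends
    return X, y
```

Validation and test values may fall outside [0, 1] after scaling, which is expected when the extrema come from the training split alone.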

3.8. Dataset Statistics

3.9. Stationarity and Modelling Choice

| Asset | Period (samples) | Volatility |

|---|---|---|

| Crude Oil (WTI) | 2010-01-04–2025-12-31 (4,024) | High |

| Gold (XAU/USD) | 2010-01-04–2025-12-31 (4,023) | Moderate |

| Bitcoin (BTC/USD) | 2014-09-17–2025-12-31 (4,124) | Very High |

| Ethereum (ETH/USD) | 2017-11-09–2025-12-31 (2,975) | Very High |

| Apple (AAPL) | 2010-01-04–2025-12-31 (4,024) | Moderate |

| Microsoft (MSFT) | 2010-01-04–2025-12-31 (4,024) | Moderate |

| EUR/USD | 2010-01-01–2025-12-31 (4,165) | Low |

| USD/JPY | 2010-01-01–2025-12-31 (4,165) | Low |

| NASDAQ Composite | 2010-01-04–2025-12-31 (4,024) | Moderate |

| S&P 500 | 2010-01-04–2025-12-31 (4,024) | Moderate |

3.10. Look-Ahead Bias Prevention

- Causal indicator computation: every indicator uses rolling or exponentially weighted filters that depend only on present and past values; no future prices are accessed when computing features for time t.

- Warm-up exclusion: rows within the indicator warm-up window are removed, so that samples only begin once all features are well-defined.

- Train-set-only scaling: normalisation parameters (min/max) are computed exclusively on the training split and then applied to the validation and test sets.

- Alignment checks: input windows and targets are constructed so that the target is never included in the input window; index alignment is verified in the data pipeline.

- Hyperparameter isolation: model selection and hyperparameter tuning use only the validation set; test-set evaluations are reserved for final reporting. When nested evaluation (walk-forward) is used as a robustness check, inner loops draw only on earlier data.

- Pipeline encapsulation: preprocessing objects (imputers, scalers, indicator parameters) are saved from the training pipeline to ensure identical transformations at inference time.
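One way to test the causality requirement above is a perturbation check: alter every observation after a cut-off and confirm that the features up to the cut-off are unchanged. The helper below is a hypothetical sketch of such a check, not a procedure described in the paper.

```python
import numpy as np
import pandas as pd


def is_causal(feature_fn, series: pd.Series, cut: int) -> bool:
    """True if feature values at indices <= cut ignore data after cut."""
    base = feature_fn(series)
    perturbed = series.copy()
    # Change every future observation; a causal feature must not react.
    perturbed.iloc[cut + 1:] = perturbed.iloc[cut + 1:] * 1.5 + 7.0
    changed = feature_fn(perturbed)
    return bool(np.allclose(base.iloc[:cut + 1].to_numpy(),
                            changed.iloc[:cut + 1].to_numpy(),
                            equal_nan=True))


rng = np.random.default_rng(0)
prices = pd.Series(100.0 + rng.normal(size=200).cumsum())

# A trailing mean passes; a centred mean reads future values and fails.
trailing_ok = is_causal(lambda s: s.rolling(20).mean(), prices, cut=100)
centred_ok = is_causal(lambda s: s.rolling(20, center=True).mean(), prices, cut=100)
```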

3.11. Reproducibility Notes

4. Methodology

4.1. Model Architectures

4.1.1. LSTM Architecture

- Input Layer: Accepts sequences of shape (60, F), where F is the number of input features (5–18 depending on the feature configuration).

- LSTM Layer 1: 64 hidden units with return_sequences=True, dropout rate 0.05.

- LSTM Layer 2: 64 hidden units with return_sequences=False, dropout rate 0.05.

- Dense Layer: 32 units with ReLU activation.

- Output Layer: Single unit with linear activation for regression.

4.1.2. GRU Architecture

- Input Layer: Accepts sequences of shape (60, F).

- GRU Layer 1: 64 hidden units with return_sequences=True, dropout rate 0.05.

- GRU Layer 2: 64 hidden units with return_sequences=False, dropout rate 0.05.

- Dense Layer: 32 units with ReLU activation.

- Output Layer: Single unit with linear activation.
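The two layer stacks described above differ only in the recurrent cell, so a single Keras builder can sketch both. This is a hedged illustration: the function name and the `l2_strength` default are hypothetical (the regularisation strength is not visible in this text), and applying dropout as separate `Dropout` layers on the recurrent outputs is one assumed reading of the description.

```python
from tensorflow import keras


def build_recurrent_model(cell: str, n_features: int, lookback: int = 60,
                          l2_strength: float = 1e-4) -> keras.Model:
    """Two stacked recurrent layers (64 units), Dense(32, ReLU), linear output."""
    rnn = {"lstm": keras.layers.LSTM, "gru": keras.layers.GRU}[cell]

    def recurrent(return_sequences: bool):
        return rnn(
            64,
            return_sequences=return_sequences,
            kernel_initializer="glorot_uniform",   # Glorot uniform kernels
            recurrent_initializer="orthogonal",    # orthogonal recurrent weights
            bias_initializer="zeros",
            kernel_regularizer=keras.regularizers.l2(l2_strength),  # assumed strength
        )

    inputs = keras.Input(shape=(lookback, n_features))
    x = recurrent(True)(inputs)
    x = keras.layers.Dropout(0.05)(x)
    x = recurrent(False)(x)
    x = keras.layers.Dropout(0.05)(x)
    x = keras.layers.Dense(32, activation="relu")(x)
    outputs = keras.layers.Dense(1, activation="linear")(x)
    return keras.Model(inputs, outputs)
```

For example, `build_recurrent_model("gru", n_features=5)` would correspond to the GRU variant on the OHLCV baseline (E0).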

4.2. Training Protocol

4.2.1. Optimisation

- Learning rate:

- Exponential decay rates: ,

- Numerical stability constant:

4.2.2. Loss Function

4.2.3. Regularisation

- Dropout (rate 0.05) on recurrent layer outputs.

- L2 weight regularisation () on kernel weights.

4.2.4. Training Configuration

- Batch size: 32 samples.

- Maximum epochs: 100.

- Early stopping: training halts if validation loss fails to decrease by at least for 15 consecutive epochs; the weights corresponding to the minimum validation loss are then restored.

- Learning rate reduction: if validation loss plateaus for 3 consecutive epochs, the learning rate is reduced by a factor of 0.8, subject to a minimum of .
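The interaction of the two validation-loss rules above can be traced with a small framework-free simulation. It is a simplified sketch: the function name is hypothetical, the starting learning rate, `min_lr`, and `min_delta` defaults are placeholders (their values are not visible in this text), and both rules share one "best loss" tracker, which real callback implementations keep separately.

```python
def simulate_callbacks(val_losses, patience_stop=15, patience_lr=3,
                       lr=1e-3, factor=0.8, min_lr=1e-6, min_delta=0.0):
    """Replay early stopping and learning-rate reduction over a loss history."""
    best = float("inf")
    best_epoch = -1
    wait_stop = wait_lr = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # Improvement: remember the epoch whose weights would be restored.
            best, best_epoch = loss, epoch
            wait_stop = wait_lr = 0
            continue
        wait_stop += 1
        wait_lr += 1
        if wait_lr >= patience_lr:
            lr = max(lr * factor, min_lr)  # reduce by factor 0.8, floored at min_lr
            wait_lr = 0
        if wait_stop >= patience_stop:
            return {"stopped_epoch": epoch, "best_epoch": best_epoch, "lr": lr}
    return {"stopped_epoch": len(val_losses) - 1, "best_epoch": best_epoch, "lr": lr}


# Three improving epochs followed by a plateau.
history = [1.0, 0.9, 0.8] + [0.85] * 20
outcome = simulate_callbacks(history)
```

With this history the learning rate is reduced five times before the 15-epoch patience is exhausted, and the weights from epoch 2 would be restored.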

4.2.5. Weight Initialisation

- Kernel weights: Glorot uniform initialisation [39].

- Recurrent weights: orthogonal initialisation.

- Bias terms: zero initialisation.

4.3. Sequence Construction

4.4. Random Seed Protocol

- NumPy random number generation.

- TensorFlow/Keras random state.

- Python’s built-in random module.

- Weight initialisation.

- Mini-batch shuffling order during training.
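A seeding helper covering the sources of randomness listed above might look like the following. The function name is hypothetical, and the TensorFlow call is wrapped so that the sketch also runs where TensorFlow is not installed; the seed values are the five reported in the experimental design.

```python
import random

import numpy as np

SEEDS = (42, 123, 456, 739, 1126)  # seeds reported in the experimental design


def set_global_seed(seed: int) -> None:
    """Seed Python, NumPy and, if available, TensorFlow/Keras."""
    random.seed(seed)
    np.random.seed(seed)
    try:
        import tensorflow as tf
        # Also governs weight initialisation and shuffling in Keras training.
        tf.random.set_seed(seed)
    except ImportError:
        pass  # TensorFlow is optional for this sketch


set_global_seed(SEEDS[0])
first_draw = (random.random(), float(np.random.rand()))
set_global_seed(SEEDS[0])
second_draw = (random.random(), float(np.random.rand()))
```

Re-seeding before each run makes the two draws identical, which is the property the protocol relies on.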

4.5. Evaluation Metrics

4.5.1. Root Mean Squared Error (RMSE)

4.5.2. Mean Absolute Error (MAE)

4.5.3. Mean Absolute Percentage Error (MAPE)

4.5.4. Directional Accuracy (DA)
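The four metrics named in this section can be sketched in NumPy as below. The directional-accuracy definition is an assumption: it compares the sign of the predicted move with the sign of the realised move, both measured from the previous actual close.

```python
import numpy as np


def rmse(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def mae(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    return float(np.mean(np.abs(y_true - y_pred)))


def mape(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    """Mean absolute percentage error in percent; assumes y_true has no zeros."""
    return float(100.0 * np.mean(np.abs((y_true - y_pred) / y_true)))


def directional_accuracy(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    """Share (in percent) of days on which the predicted direction is right."""
    true_move = np.sign(y_true[1:] - y_true[:-1])
    pred_move = np.sign(y_pred[1:] - y_true[:-1])
    return float(100.0 * np.mean(true_move == pred_move))


y = np.array([100.0, 102.0, 101.0, 105.0])
y_hat = np.array([101.0, 101.0, 103.0, 104.0])
```

On this toy example the errors are small in level terms, yet one of the three predicted directions is wrong, which illustrates how a low RMSE can coexist with modest directional accuracy.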

4.6. Statistical Testing

4.6.1. Paired t-Test

4.6.2. Independent t-Test

4.6.3. Cohen’s d Effect Size

4.6.4. Significance Threshold

5. Experiments

5.1. Experimental Design

- Assets: 10 assets from 5 categories (commodities, cryptocurrencies, equities, foreign exchange, indices).

- Feature configurations: 5 configurations (E0: baseline OHLCV; E1–E4: indicator-augmented variants).

- Model architectures: 2 recurrent architectures (LSTM, GRU).

- Random seeds: 5 seeds per configuration (42, 123, 456, 739, 1126).

5.2. Research Hypotheses

5.3. Statistical Methodology

Unit of inference.

Primary comparison.

Multiple comparison correction.

Effect size quantification.

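- Cohen’s d: the standardised mean difference, $d = \bar{\Delta} / s_{\Delta}$, where $\bar{\Delta}$ is the mean paired RMSE difference between configuration i and the baseline and $s_{\Delta}$ is the standard deviation of differences across assets.

- Percentage improvement: $100 \times (\text{RMSE}_{E0} - \text{RMSE}_{i}) / \text{RMSE}_{E0}$.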

- Win rate: the proportion of assets for which configuration i outperforms the baseline.
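The paired comparison and the three effect-size measures above could be computed as in the sketch below. The function name and the toy numbers are illustrative only; the percentage-improvement formula (relative change in mean RMSE against the baseline) is an assumed definition, and `scipy.stats.ttest_rel` performs the paired t-test.

```python
import numpy as np
from scipy import stats


def compare_to_baseline(baseline_rmse, variant_rmse) -> dict:
    """Paired t-test plus effect sizes for one configuration versus the baseline."""
    baseline = np.asarray(baseline_rmse, dtype=float)
    variant = np.asarray(variant_rmse, dtype=float)
    diff = variant - baseline                      # positive = variant is worse
    t_stat, p_value = stats.ttest_rel(variant, baseline)
    return {
        "t": float(t_stat),
        "p": float(p_value),
        # Cohen's d for paired data: mean difference over SD of differences
        "cohens_d": float(diff.mean() / diff.std(ddof=1)),
        "pct_improvement": float(
            100.0 * (baseline.mean() - variant.mean()) / baseline.mean()
        ),
        # Share of assets on which the variant beats the baseline
        "win_rate": float(np.mean(variant < baseline)),
    }


# Illustrative per-asset RMSE values, not results from the study.
result = compare_to_baseline([0.10, 0.20, 0.30, 0.40], [0.12, 0.21, 0.33, 0.38])
```

For paired data the t-statistic equals Cohen's d multiplied by the square root of the number of pairs, which gives a quick consistency check between the two reported quantities.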

Robustness checks.

Cross-asset aggregation.

5.4. Implementation and Reproducibility

5.5. Data Preparation and Model Training Protocol

Temporal validation split.

Lookback window selection.

Feature scaling.

Early stopping mechanism.

Evaluation on held-out test set.

5.6. Hyperparameter Configuration

| Parameter | Value |

|---|---|

| Lookback window | 60 trading days |

| Prediction horizon | 1 trading day |

| Batch size | 32 samples |

| Maximum epochs | 100 |

| Early stopping patience | 15 epochs |

| Learning rate | |

| Dropout rate | 0.05 |

| L2 regularisation | |

| Hidden units per layer | 64 |

| Number of recurrent layers | 2 |

Hyperparameter justification.

5.7. Statistical Power and Sample Size

6. Results

6.1. Experimental Summary

- RMSE range: 0.016–0.867 (normalised scale).

- Mean RMSE:.

- Directional accuracy range: 42.2%–67.5%.

- Mean directional accuracy:.

- Lowest RMSE: Crude Oil with GRU and E4 configuration (0.016).

- Highest RMSE: Gold with LSTM and E4 configuration (0.867).

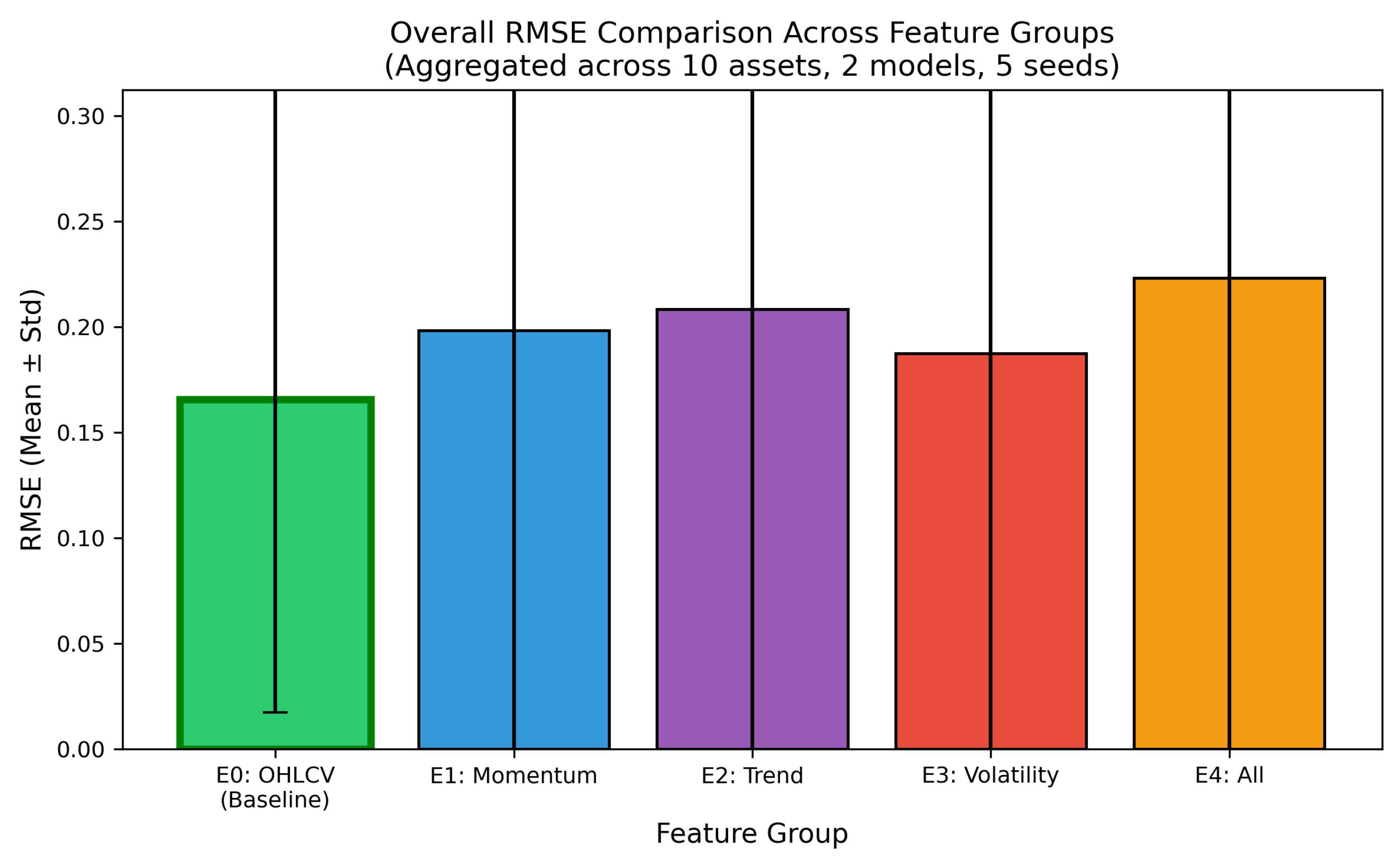

6.2. Feature Configuration Comparison

Main finding.

| Feature Configuration | RMSE | MAE | MAPE (%) | DA (%) |

|---|---|---|---|---|

| E0: OHLCV Only (Baseline) | 0.166 ± 0.148 | 0.151 ± 0.130 | 10.7 ± 4.9 | 55.7 ± 5.5 |

| E1: OHLCV + Momentum | 0.198 ± 0.205 | 0.181 ± 0.179 | 12.3 ± 6.8 | 52.3 ± 4.0 |

| E2: OHLCV + Trend | 0.208 ± 0.220 | 0.190 ± 0.193 | 12.8 ± 7.4 | 52.8 ± 4.3 |

| E3: OHLCV + Volatility | 0.187 ± 0.196 | 0.171 ± 0.170 | 11.6 ± 6.4 | 53.9 ± 4.7 |

| E4: OHLCV + All | 0.223 ± 0.231 | 0.204 ± 0.203 | 13.6 ± 8.0 | 51.1 ± 2.9 |

- E1 (Momentum): RMSE increase (2 of 20 configurations improved, 18 degraded).

- E2 (Trend): RMSE increase (4 of 20 configurations improved, 16 degraded).

- E3 (Volatility): RMSE increase (9 of 20 configurations improved, 11 degraded).

- E4 (All indicators): RMSE increase (4 of 20 configurations improved, 16 degraded).

Statistical significance.

| Comparison | RMSE | t-statistic | p-value | Cohen’s d |
|---|---|---|---|---|
| E1 (Momentum) vs. E0 | | | | |
| E2 (Trend) vs. E0 | | | | |
| E3 (Volatility) vs. E0 | | | | |
| E4 (All) vs. E0 | | | | |

- E1 (Momentum): , .

- E2 (Trend): , .

- E3 (Volatility): , .

- E4 (All indicators): , .

6.3. Indicator Category Ranking

Finding.

1. E3 (Volatility, 4 indicators): smallest degradation (mean RMSE 0.187 versus 0.166 for E0).

2. E1 (Momentum, 3 indicators): mean RMSE 0.198.

3. E2 (Trend, 6 indicators): mean RMSE 0.208.

4. E4 (All indicators, 13 total): largest degradation (mean RMSE 0.223, a 34.6% increase).

6.4. Asset Class Analysis

Finding.

| Asset Class | RMSE | MAE | DA (%) |
|---|---|---|---|
| Commodity | 0.381 ± 0.368 | 0.330 ± 0.320 | 53.3 ± 6.8 |
| Cryptocurrency | 0.158 ± 0.106 | 0.147 ± 0.105 | 55.7 ± 4.4 |
| Equity | 0.213 ± 0.022 | 0.201 ± 0.021 | 53.3 ± 3.6 |
| Foreign Exchange | 0.049 ± 0.027 | 0.045 ± 0.028 | 49.8 ± 1.8 |
| Market Index | 0.182 ± 0.017 | 0.173 ± 0.017 | 53.8 ± 2.9 |
| Aggregated by volatility regime: | | | |
| High volatility (Commodity, Crypto) | 0.270 | | 54.5 |
| Low volatility (Equity, Forex, Index) | 0.148 | | 52.3 |

- Foreign exchange achieves the lowest mean RMSE (0.049 ± 0.027), most likely because of the lower volatility and narrower price ranges of currency pairs. Directional accuracy (49.8%), however, is statistically indistinguishable from random, indicating that while the models predict price levels well, they fail to capture directional movements.

- Cryptocurrencies show moderate RMSE (0.158) coupled with the highest directional accuracy (55.7%), possibly reflecting stronger momentum patterns that recurrent networks are able to exploit.

- Commodities exhibit the highest variance (RMSE standard deviation of 0.368), driven by the large disparity between crude oil (which shows extreme outlier behaviour) and gold.

- High-volatility assets (cryptocurrencies and commodities combined) have 83% higher mean RMSE than low-volatility assets (equities, forex, indices): 0.270 versus 0.148.

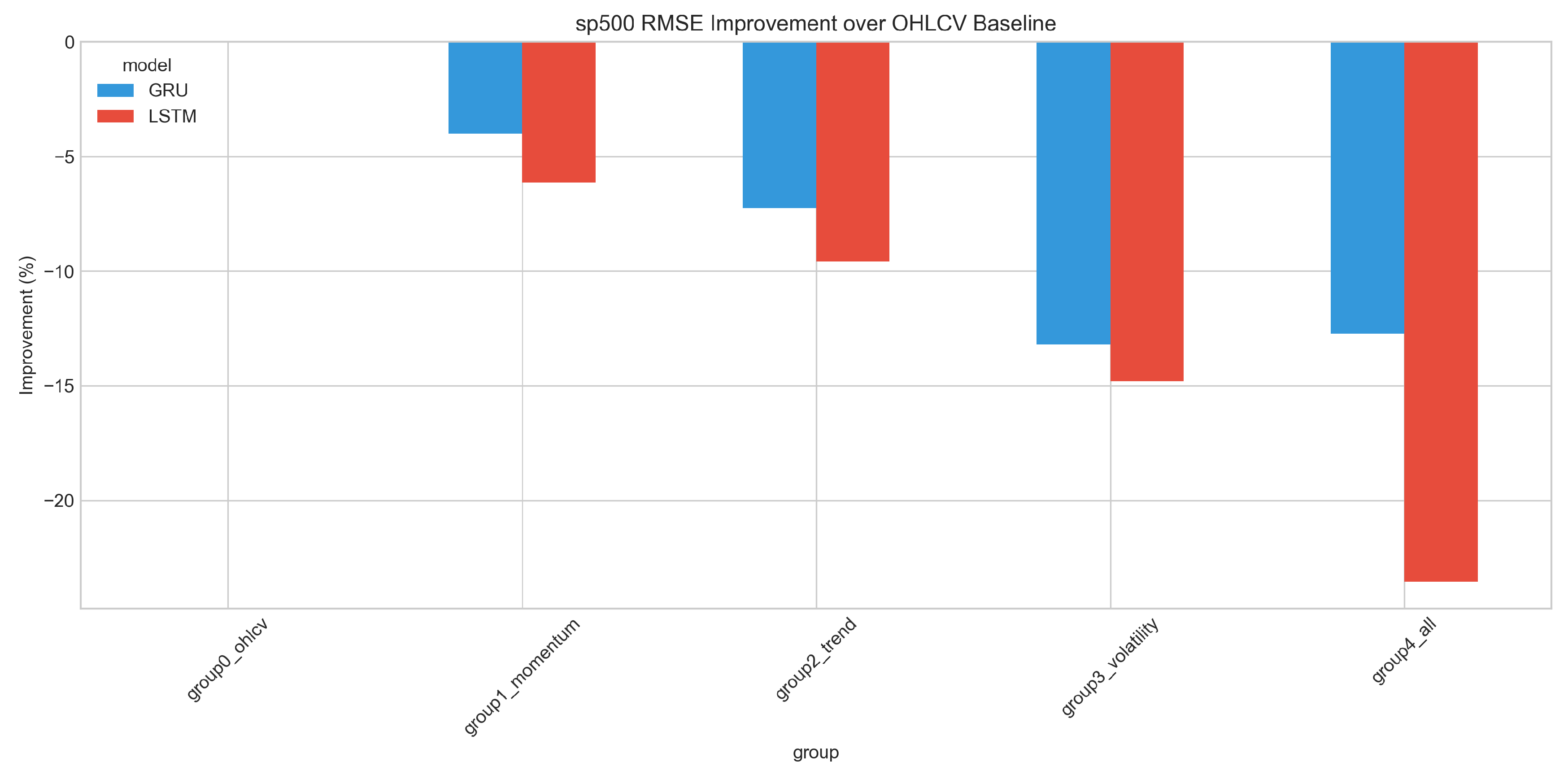

Volatility indicator impact by asset class.

| Asset Class | RMSE change | Improvement? |
|---|---|---|
| Commodity | | No |
| Cryptocurrency | | No |
| Equity | | No |
| Foreign Exchange | −4.2% | Yes |
| Market Index | | No |

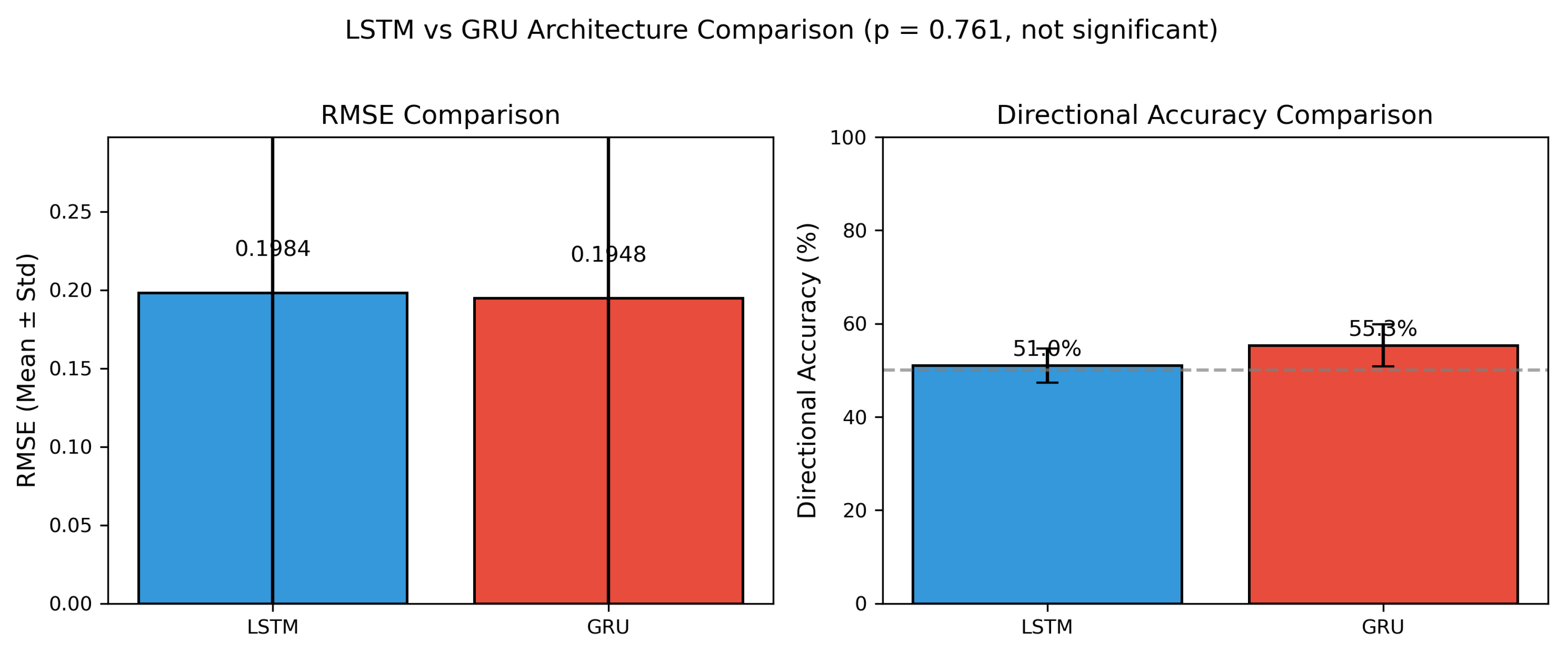

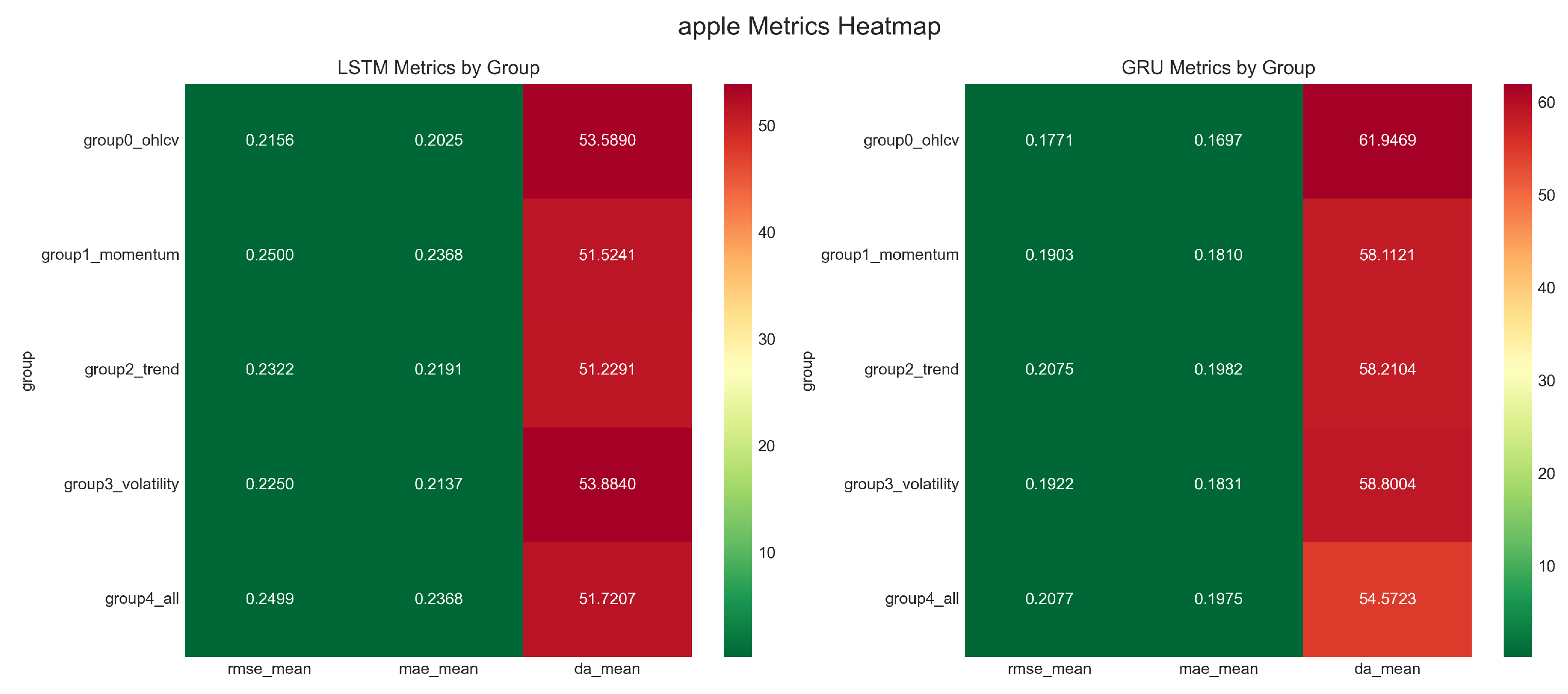

6.5. Architecture Comparison: LSTM versus GRU

Finding.

| Architecture | RMSE | MAE | DA (%) | Parameters |
|---|---|---|---|---|
| LSTM | 0.198 ± 0.204 | 0.179 ± 0.176 | 51.0 ± 3.7 | 26,817 |
| GRU | 0.195 ± 0.202 | 0.180 ± 0.179 | 55.3 ± 4.5 | 20,385 |

Statistical test (independent t-test): RMSE difference not significant; Cohen’s d negligible.

- GRU achieves lower mean RMSE (0.195 ± 0.202 versus 0.198 ± 0.204).

- GRU achieves higher mean directional accuracy (55.3 ± 4.5% versus 51.0 ± 3.7%).

- Independent t-test: the RMSE difference is not statistically significant.

- Effect size: Cohen’s d is negligible.

6.6. Evidence of Feature Redundancy

Finding.

- E3 (4 volatility indicators): mean RMSE rises from 0.166 (E0) to 0.187

- E1 (3 momentum indicators): mean RMSE rises to 0.198

- E2 (6 trend indicators): mean RMSE rises to 0.208

- E4 (13 total indicators): mean RMSE rises to 0.223 (34.6% RMSE degradation)

1. Inter-indicator redundancy: Multiple indicators derived from similar price relationships (e.g., SMA and EMA, or RSI and Stochastic) are highly correlated, providing duplicative rather than complementary information.

2. Noise accumulation: Each indicator introduces additional variance that compounds across the feature set without proportional signal gain.

3. Increased model complexity: Higher-dimensional input spaces require learning more parameters, potentially increasing overfitting risk given fixed training set sizes.

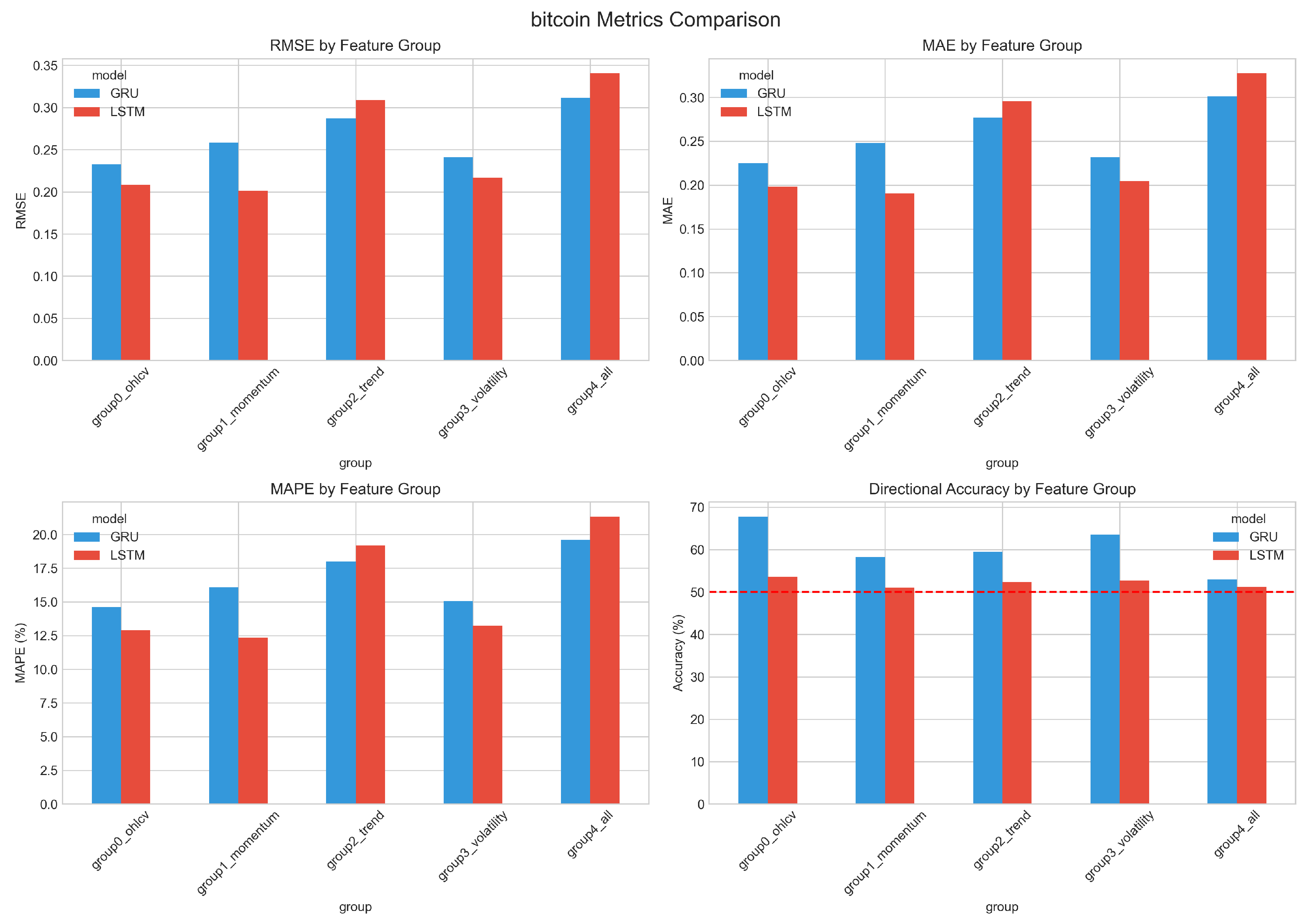

6.7. Best Configurations by Asset

| Category | Asset | Model | Best Config. | RMSE | DA (%) |
|---|---|---|---|---|---|
| Commodity | Crude Oil | GRU | E4: All | 0.016 | 47.8 |
| Commodity | Gold | LSTM | E0: OHLCV | 0.453 | 58.2 |
| Cryptocurrency | Bitcoin | LSTM | E0: OHLCV | 0.181 | 54.2 |
| Cryptocurrency | Ethereum | GRU | E3: Volatility | 0.045 | 58.3 |
| Equity | Apple | GRU | E0: OHLCV | 0.175 | 61.5 |
| Equity | Microsoft | LSTM | E3: Volatility | 0.171 | 50.8 |
| Forex | EUR/USD | GRU | E4: All | 0.019 | 49.7 |
| Forex | USD/JPY | LSTM | E3: Volatility | 0.045 | 50.8 |
| Index | NASDAQ | GRU | E0: OHLCV | 0.152 | 57.5 |
| Index | S&P 500 | GRU | E0: OHLCV | 0.168 | 58.2 |

- Baseline dominance: 5 of 10 assets (Gold, Bitcoin, Apple, NASDAQ, S&P 500) perform best with the raw OHLCV baseline (E0), requiring no technical indicators at all.

- Volatility indicator utility: 3 of 10 assets (Ethereum, Microsoft, USD/JPY) achieve their best results with volatility indicators (E3).

- All-indicators configuration: 2 of 10 assets (Crude Oil, EUR/USD) favour the full indicator set (E4).

- GRU preference: 6 of 10 assets achieve their best results with GRU rather than LSTM.

- Highest directional accuracy: Apple with GRU and baseline features reaches 61.5% directional accuracy—substantially above the 50% random baseline.

6.8. Visualisation

6.9. Summary of Principal Findings

1. Technical indicators do not improve deep learning forecasting performance on average; all four indicator-augmented configurations show statistically significant degradation relative to the baseline.

2. The comprehensive all-indicators configuration exhibits the worst performance (34.6% RMSE increase), providing clear evidence of feature redundancy rather than complementarity.

3. Among augmented configurations, volatility indicators show the smallest degradation overall and are the only indicators that improve performance for a specific asset class, namely foreign exchange (4.2% RMSE reduction).

4. LSTM and GRU perform comparably, with no statistically significant RMSE difference, although GRU achieves higher directional accuracy.

5. Performance differs markedly by asset class: high-volatility assets exhibit 83% higher prediction error than low-volatility assets.

6. The baseline OHLCV configuration achieves optimal or near-optimal performance for the majority of assets tested.

7. Discussion

7.1. Interpretation of Results

7.1.1. Why Do Technical Indicators Degrade Performance?

Information redundancy.

Noise introduction.

Curse of dimensionality.

Implicit feature learning.

Temporal smoothing.

7.1.2. Why Does the All-Indicators Configuration Perform Worst?

- Accumulated redundancy: indicators within the same family (e.g., SMA and EMA; RSI and Stochastic) are highly correlated, contributing duplicative information that inflates parameter counts without proportional signal.

- Noise compounding: measurement noise and indicator-specific artefacts from each feature accumulate rather than cancel, raising the overall variance of the input representation.

- Capacity dilution: with more input dimensions, the model must spread its representational capacity across a larger feature set, potentially diluting attention to the most informative signals.

- Conflicting signals: different indicator categories may suggest contradictory market states—momentum indicators might signal mean-reversion while trend indicators signal continuation—creating confusion during learning.

7.1.3. Why Does Foreign Exchange Benefit from Volatility Indicators?

- Volatility clustering: currency pairs exhibit pronounced volatility persistence (GARCH effects), making explicit volatility measures informative about near-term price dynamics.

- Policy-driven regimes: central bank interventions and monetary policy announcements create distinct volatility regimes that explicit indicators may capture more effectively than raw prices alone.

- Lower baseline volatility: with lower absolute volatility than cryptocurrencies or commodities, the signal-to-noise ratio of volatility indicators is higher in forex markets.

- Mean-reverting dynamics: major currency pairs often exhibit mean-reverting behaviour within trading ranges, and Bollinger Band position indicators (%B) may capture these dynamics effectively.

7.1.4. LSTM versus GRU: Why No Significant Difference?

- Both architectures address the vanishing gradient problem through gating mechanisms.

- The LSTM’s separate cell state provides additional memory capacity that may be unnecessary for the moderate sequence lengths (60 days) and temporal dependencies typical of daily financial data.

- At daily sampling frequency, extreme long-range dependencies spanning months or years are less critical than in domains such as natural language processing.

7.2. Assessment of Prior Expectations

Hypothesis 1: Technical indicators enhance model performance.

Hypothesis 2: Different asset classes benefit from different indicator types.

Hypothesis 3: Volatility indicators benefit high-volatility assets.

Hypothesis 4: Comprehensive indicator combinations achieve superior performance.

Hypothesis 5: LSTM outperforms GRU due to its additional memory capacity.

7.3. Relation to Prior Literature

Bao et al. (2017).

Nelson et al. (2017).

Selvin et al. (2017).

Chong et al. (2017).

Patel et al. (2015).

Methodological positioning.

7.4. Practical Implications

7.4.1. For Trading System Developers

1. Establish baselines first. Raw OHLCV data should serve as the default starting point. Technical indicators should only be added when rigorous validation on held-out data demonstrates consistent improvement.

2. Evaluate on an asset-specific basis. Indicator utility varies by asset class. Volatility indicators may benefit foreign exchange models while offering no advantage for equities or cryptocurrencies.

3. Resist feature proliferation. Adding more indicators generally degrades performance. When using indicators, a small set of uncorrelated features is preferable to a comprehensive indicator suite.

4. Consider GRU for efficiency. With prediction accuracy comparable to LSTM and fewer parameters, GRU offers faster training and may be preferable for deployment.

5. Validate rigorously. Indicator benefits should not be assumed on the basis of prior literature or intuition. Each proposed feature should be tested explicitly against an OHLCV baseline using proper train/validation/test splits and multiple random seeds.

7.4.2. For Researchers

1. Report baseline comparisons. Studies proposing new feature engineering approaches should benchmark against OHLCV baselines, not only against alternative indicator configurations.

2. Use statistical testing. Results should be reported across multiple random seeds, accompanied by significance tests and effect sizes, rather than relying on single-run comparisons.

3. Evaluate across assets. Findings from a single asset may not generalise. Multi-asset studies provide stronger foundations for robust conclusions.

4. Publish null and negative results. Evidence that indicators do not improve performance is scientifically valuable and helps counteract publication bias in the literature.

7.5. Theoretical Implications

Deep learning as feature learner.

Domain knowledge integration.

Market efficiency considerations.

7.6. Limitations

1. Temporal resolution. We analyse daily data exclusively. Intraday markets may exhibit different indicator dynamics, particularly for momentum and volatility measures.

2. Prediction horizon. The study is restricted to one-day-ahead prediction. Longer horizons (weekly, monthly) or multi-step forecasting may favour different feature configurations.

3. Indicator coverage. Although our set covers the major categories, it is not exhaustive. Adaptive indicators, alternative oscillators, or market-specific measures may behave differently.

4. Market regime coverage. The study period (2010–2025) encompasses diverse market conditions but may not include every regime type. Indicator utility may be state-dependent in ways not captured by aggregate analysis.

5. Architecture scope. We evaluate standard LSTM and GRU configurations. Attention-based models (Transformers, Temporal Fusion Transformers) may interact with indicator features differently.

6. Hyperparameter sensitivity. Indicator parameters (lookback periods) are fixed at conventional values. Jointly optimising indicator and model hyperparameters could alter the conclusions.

7. Alternative targets. We predict raw closing prices. Indicators may prove more useful for alternative targets such as volatility forecasting, regime classification, or return prediction.

7.7. Future Research Directions

1. Learnable indicators. Develop indicators whose parameters are optimised jointly with model weights during training, enabling end-to-end learning of feature transformations tailored to the prediction task.

2. Regime-conditional features. Investigate whether indicators provide benefits in specific market regimes (trending versus ranging, high versus low volatility) through conditional or regime-switching models.

3. Attention-based architectures. Evaluate whether Transformer and Temporal Fusion architectures [28] can more effectively leverage indicator features through attention mechanisms that weight feature importance dynamically.

4. Higher-frequency analysis. Extend the methodology to intraday data, where indicator calculation windows, market microstructure, and prediction horizons differ substantially from the daily setting.

5. Multi-task learning. Explore whether indicators benefit auxiliary prediction tasks (volatility forecasting, regime classification) even when they do not improve price prediction.

6. Interpretability analysis. Apply feature attribution methods (SHAP, integrated gradients, attention visualisation) to understand how deep learning models utilise raw versus derived features.

8. Conclusion

8.1. Principal Findings

1. Technical indicators degrade forecasting performance. Contrary to conventional practice, indicator-augmented feature sets increase prediction error relative to raw OHLCV data. The baseline configuration achieves the lowest mean RMSE (0.166 ± 0.148) and the highest mean directional accuracy (55.7 ± 5.5%).

2. All four indicator-augmented configurations produce statistically significant degradation. Momentum, trend, volatility, and the combined configuration all exhibit significantly higher RMSE than the baseline.

3. Combining indicators yields the worst performance. The comprehensive E4 configuration, comprising 13 indicators, incurs a 34.6% RMSE increase, indicating that feature combination introduces redundancy and noise rather than complementary signal.

4. Volatility indicators provide an asset-class-specific benefit. Foreign exchange is the sole asset class where volatility indicators improve performance (4.2% RMSE reduction), plausibly reflecting the importance of volatility regimes in currency markets.

5. High-volatility assets are harder to predict. Cryptocurrencies and commodities show 83% higher mean RMSE than equities, forex, and indices, consistent with the greater difficulty of forecasting volatile price series.

6. LSTM and GRU perform comparably. No statistically significant RMSE difference exists between architectures (negligible Cohen’s d), though GRU achieves modestly higher directional accuracy with fewer parameters.

7. Simple configurations often dominate. Five of ten assets achieve optimal performance with the OHLCV baseline alone, requiring no technical indicators.

8.2. Contributions

Empirical contribution.

Methodological contribution.

Practical contribution.

Theoretical contribution.

8.3. Recommendations

For trading system developers.

- Begin with OHLCV baseline features; add indicators only when validation evidence demonstrates improvement.

- Evaluate volatility indicators specifically for foreign exchange applications.

- Limit indicator count to minimise noise accumulation.

- Consider GRU architecture for comparable accuracy at reduced computational cost.

For researchers.

- Benchmark proposed methods against OHLCV baselines, not only against other indicator configurations.

- Report results from multiple random seeds with statistical significance testing.

- Evaluate across multiple asset classes to assess generalisability.

- Publish null and negative findings to help counteract publication bias.

8.4. Scope and Limitations

8.5. Concluding Remarks

Data Availability Statement

Acknowledgments

References

- Cont, R. Empirical properties of asset returns: Stylized facts and statistical issues. Quantitative Finance 2001, 1, 223–236. [Google Scholar] [CrossRef]

- Sezer, O.B.; Gudelek, M.U.; Ozbayoglu, A.M. Financial time series forecasting with deep learning: A systematic literature review: 2005–2019. Applied Soft Computing 2020, 90, 106181. [Google Scholar] [CrossRef]

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Computation 1997, 9, 1735–1780. [Google Scholar] [CrossRef]

- Cho, K.; Van Merriënboer, B.; Gulcehre, C.; Bahdanau, D.; Bougares, F.; Schwenk, H.; Bengio, Y. Learning phrase representations using RNN encoder-decoder for statistical machine translation. In Proceedings of the Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2014; pp. 1724–1734. [Google Scholar]

- Fischer, T.; Krauss, C. Deep learning with long short-term memory networks for financial market predictions. European Journal of Operational Research 2018, 270, 654–669.

- Murphy, J.J. Technical Analysis of the Financial Markets: A Comprehensive Guide to Trading Methods and Applications; New York Institute of Finance, 1999.

- Achelis, S.B. Technical Analysis from A to Z; McGraw-Hill, 2001.

- Bao, W.; Yue, J.; Rao, Y. A deep learning framework for financial time series using stacked autoencoders and long-short term memory. PLoS ONE 2017, 12, e0180944.

- Nelson, D.M.Q.; Pereira, A.C.M.; de Oliveira, R.A. Stock market’s price movement prediction with LSTM neural networks. In Proceedings of the 2017 International Joint Conference on Neural Networks (IJCNN), 2017; IEEE; pp. 1419–1426.

- Selvin, S.; Vinayakumar, R.; Gopalakrishnan, E.; Menon, V.K.; Soman, K. Stock price prediction using LSTM, RNN and CNN-sliding window model. In Proceedings of the 2017 International Conference on Advances in Computing, Communications and Informatics (ICACCI), 2017; IEEE; pp. 1643–1647.

- Chong, E.; Han, C.; Park, F.C. Deep learning networks for stock market analysis and prediction: Methodology, data representations, and case studies. Expert Systems with Applications 2017, 83, 187–205.

- Cao, J.; Li, Z.; Li, J. Financial time series forecasting model based on CEEMDAN and LSTM. Physica A: Statistical Mechanics and its Applications 2019, 519, 127–139.

- Zhang, L.; Aggarwal, C.; Kong, X.; Philip, S.Y. Stock price prediction via discovering multi-frequency trading patterns. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2017; pp. 2141–2149.

- Nti, I.K.; Adekoya, A.F.; Weyori, B.A. A systematic review of fundamental and technical analysis of stock market predictions. Artificial Intelligence Review 2020, 53, 3007–3057.

- Fama, E.F. Efficient capital markets: A review of theory and empirical work. The Journal of Finance 1970, 25, 383–417.

- Fama, E.F.; Blume, M.E. Filter rules and stock-market trading. The Journal of Business 1966, 39, 226–241.

- Lo, A.W.; Mamaysky, H.; Wang, J. Foundations of technical analysis: Computational algorithms, statistical inference, and empirical implementation. The Journal of Finance 2000, 55, 1705–1765.

- Brock, W.; Lakonishok, J.; LeBaron, B. Simple technical trading rules and the stochastic properties of stock returns. The Journal of Finance 1992, 47, 1731–1764.

- Neely, C.; Weller, P.; Dittmar, R. Is technical analysis in the foreign exchange market profitable? A genetic programming approach. Journal of Financial and Quantitative Analysis 1997, 32, 405–426.

- Lo, A.W. The adaptive markets hypothesis. The Journal of Portfolio Management 2004, 30, 15–29.

- Tsay, R.S. Analysis of Financial Time Series, 3rd ed.; John Wiley & Sons, 2010.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Chung, J.; Gulcehre, C.; Cho, K.; Bengio, Y. Empirical evaluation of gated recurrent neural networks on sequence modeling. In Proceedings of the NIPS 2014 Workshop on Deep Learning, 2014.

- Greff, K.; Srivastava, R.K.; Koutník, J.; Steunebrink, B.R.; Schmidhuber, J. LSTM: A search space odyssey. IEEE Transactions on Neural Networks and Learning Systems 2017, 28, 2222–2232.

- Gu, S.; Kelly, B.; Xiu, D. Empirical asset pricing via machine learning. The Review of Financial Studies 2020, 33, 2223–2273.

- Krauss, C.; Do, X.A.; Huck, N. Deep neural networks, gradient-boosted trees, random forests: Statistical arbitrage on the S&P 500. European Journal of Operational Research 2017, 259, 689–702.

- Sirignano, J.; Cont, R. Universal features of price formation in financial markets: Perspectives from deep learning. Quantitative Finance 2019, 19, 1449–1459.

- Lim, B.; Zohren, S. Time-series forecasting with deep learning: A survey. Philosophical Transactions of the Royal Society A 2021, 379, 20200209.

- Kara, Y.; Boyacioglu, M.A.; Baykan, Ö.K. Predicting direction of stock price index movement using artificial neural networks and support vector machines: The sample of the Istanbul Stock Exchange. Expert Systems with Applications 2011, 38, 5311–5319.

- Patel, J.; Shah, S.; Thakkar, P.; Kotecha, K. Predicting stock and stock price index movement using trend deterministic data preparation and machine learning techniques. Expert Systems with Applications 2015, 42, 259–268.

- Zhong, X.; Enke, D. Forecasting daily stock market return using dimensionality reduction. Expert Systems with Applications 2019, 126, 256–268.

- Hoseinzade, E.; Haratizadeh, S. CNNpred: CNN-based stock market prediction using a diverse set of variables. Expert Systems with Applications 2019, 129, 273–285.

- Dietterich, T.G. Approximate statistical tests for comparing supervised classification learning algorithms. Neural Computation 1998, 10, 1895–1923.

- Henderson, P.; Islam, R.; Bachman, P.; Pineau, J.; Precup, D.; Meger, D. Deep reinforcement learning that matters. In Proceedings of the AAAI Conference on Artificial Intelligence, 2018; Vol. 32.

- Makridakis, S.; Spiliotis, E.; Assimakopoulos, V. Statistical and machine learning forecasting methods: Concerns and ways forward. PLoS ONE 2018, 13, e0194889.

- Tsai, C.F.; Hsiao, Y.C. Combining multiple feature selection methods for stock prediction: Union, intersection, and multi-intersection approaches. Decision Support Systems 2010, 50, 258–269.

- Kim, T.; Kim, H.K. Forecasting stock prices with a feature fusion LSTM-CNN model using different representations of the same data. PLoS ONE 2019, 14, e0212320.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Glorot, X.; Bengio, Y. Understanding the difficulty of training deep feedforward neural networks. In Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics; JMLR Workshop and Conference Proceedings, 2010; pp. 249–256.

- Cohen, J. Statistical Power Analysis for the Behavioral Sciences, 2nd ed.; Lawrence Erlbaum Associates, 1988.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).