Submitted: 06 February 2026

Posted: 09 February 2026


Abstract

Keywords:

1. Introduction

2. Related Work

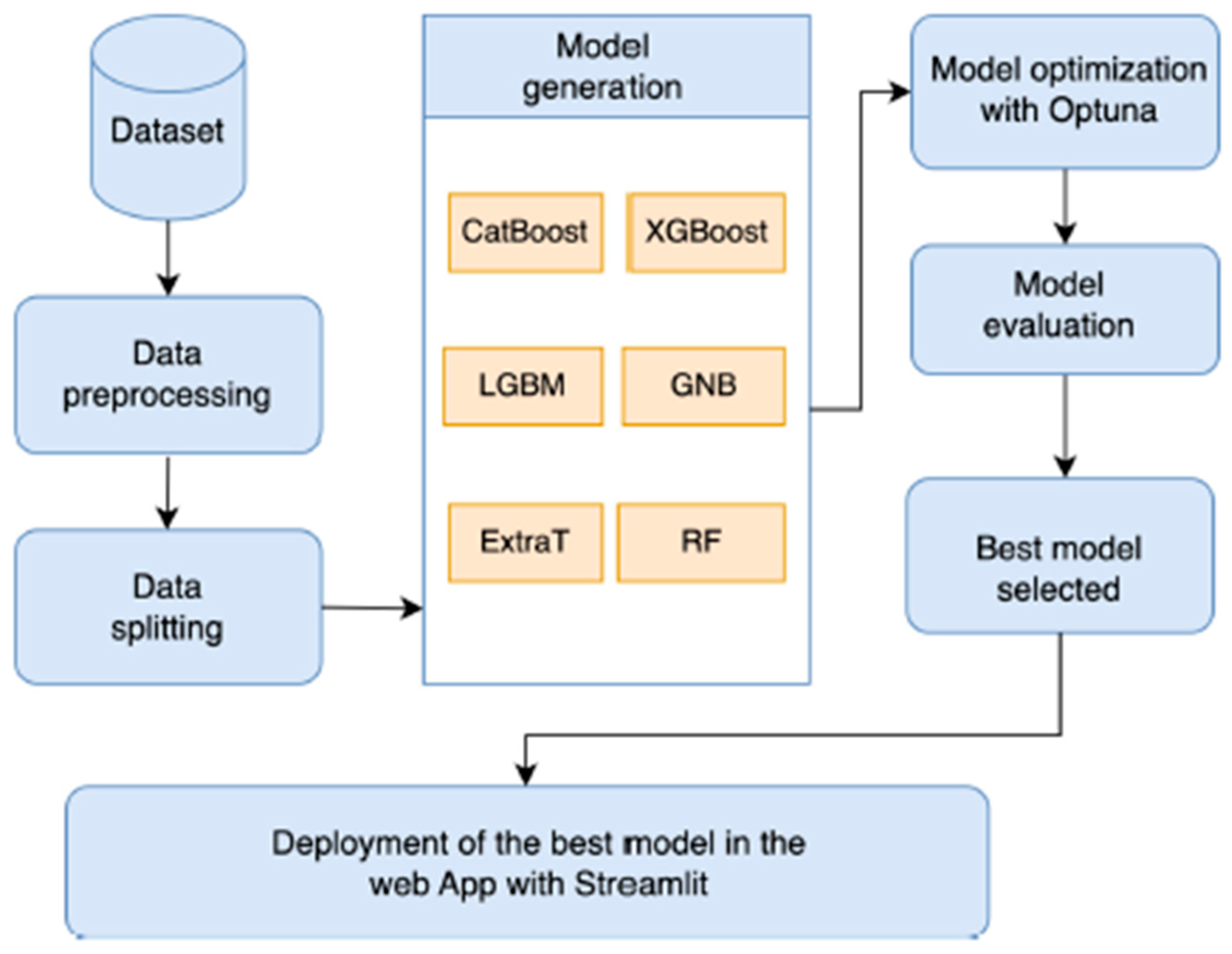

3. Proposed Architecture

4. Methodology

4.1. Dataset

4.2. Machine Learning Algorithms

4.2.1. Random Forest Classifier

4.2.2. Decision Tree

4.2.3. Gaussian Naïve Bayes

4.2.4. Gradient Boosting Classifier

4.2.5. Artificial Neural Networks

5. Results and Discussion

5.1. Results

- Accuracy is one of the most straightforward performance metrics, representing the ratio of correctly classified instances to the total number of instances. It is particularly useful when the dataset is balanced and the numbers of false positives and false negatives are relatively similar [28]. However, accuracy alone may not provide a complete evaluation of a model’s performance, so additional metrics are needed to assess the effectiveness of the proposed model. With TP, TN, FP, and FN denoting the numbers of true positives, true negatives, false positives, and false negatives, accuracy can be expressed as:

  $$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

- Precision is the proportion of correctly predicted positive observations to all predicted positive observations [26]. High precision corresponds to a low false positive rate. Precision is defined as:

  $$\text{Precision} = \frac{TP}{TP + FP}$$

- Recall is the proportion of correctly predicted positive observations to all observations that actually belong to the positive class [26]. Recall is defined as:

  $$\text{Recall} = \frac{TP}{TP + FN}$$

- F1-score is the harmonic mean of precision and recall, and it summarizes the balance between the two in a single value [26]. F1-score is defined as:

  $$\text{F1} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$
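The four metrics described above can be computed directly from confusion-matrix counts. The following is a minimal sketch for the binary case, not the authors' evaluation code: the function name `classification_metrics` and the example counts are hypothetical, and TP, TN, FP, and FN stand for true positives, true negatives, false positives, and false negatives. For a multi-class problem, these quantities would typically be computed per class and then averaged.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts.

    Any ratio with a zero denominator is reported as 0.0 (a convention
    chosen for this sketch).
    """
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical counts, chosen only to illustrate the formulas.
metrics = classification_metrics(tp=40, tn=45, fp=5, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Because F1 is a harmonic mean, it always lies between precision and recall, which gives a quick sanity check when reading a results table.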

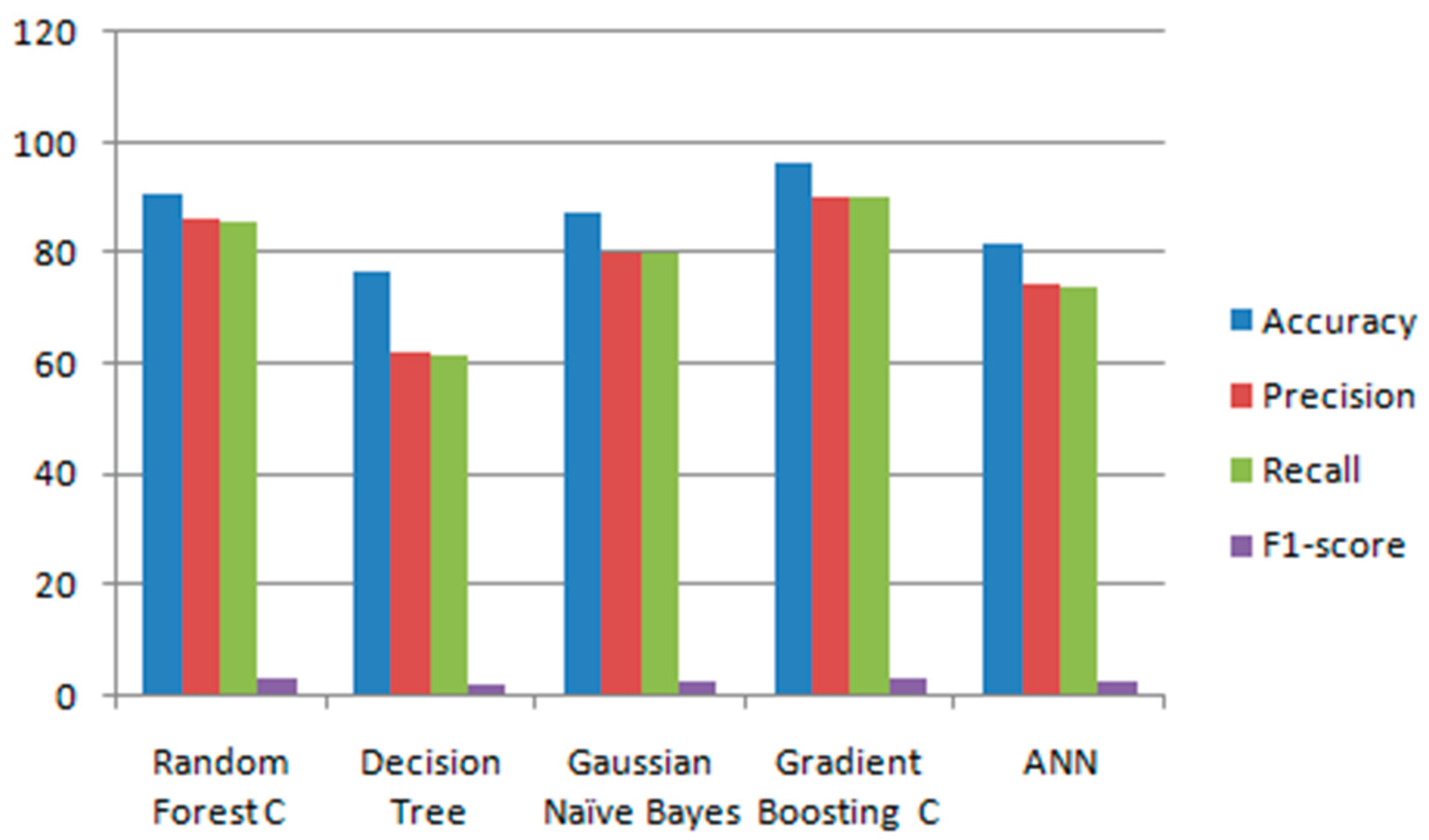

| Model Name | Accuracy | Precision | Recall | F1-score | Training Time (s) |
|---|---|---|---|---|---|
| Random Forest Classifier | 92.83% | 87.00% | 86.77% | 86.77% | 12.4 |
| Decision Tree | 77.74% | 63.24% | 62.41% | 85.20% | 1.2 |
| Gaussian Naïve Bayes | 88.21% | 81.09% | 78.85% | 91.47% | 0.05 |
| Gradient Boosting Classifier | 97.09% | 92.15% | 91.00% | 92.83% | 35.6 |
| ANN | 83.54% | 76.36% | 74.69% | 91.95% | 28.2 |

5.2. Discussion

- Of the five machine learning algorithms examined, Random Forest has the benefit of being user-friendly and simple to comprehend. It is an ensemble of many decision trees, and merging their predictions reduces the overfitting of single trees. Random Forest also trained faster (12.4 s) than Gradient Boosting (35.6 s) and the ANN (28.2 s). It should be mentioned, however, that its accuracy (92.83%) did not match that of Gradient Boosting (97.09%).

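- The Decision Tree method can perform multi-class classification on a dataset, and it can work well even when its assumptions are somewhat violated by the true model that generated the data. However, because of their complexity and poor generalizability, decision trees are prone to overfitting, which is consistent with the Decision Tree achieving the lowest accuracy in this study (77.74%).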

- Gaussian Naïve Bayes (GNB) relies on a strong assumption about the data distribution, namely that features are conditionally independent given the class label. Because this assumption is often unrealistic, the classifier may sometimes produce suboptimal results, which explains why it is referred to as “naïve.” However, this limitation is not always as severe as it seems, since Naïve Bayes can still perform well even when the independence assumption is violated. In this case, the obtained results were acceptable, though not the most accurate.

- Artificial Neural Networks (ANNs) are suitable for problems where the target output can be discrete, continuous, or represented as a vector of multiple values. One of their key strengths is robustness to noise in training data, as occasional errors in training examples do not significantly impact the final model. ANNs are particularly useful when fast evaluation of the learned function is required. For this reason, their performance in this study was reasonable and satisfactory, although not outstanding.

- Gradient Boosting algorithms are often preferred for several reasons: they generally achieve higher accuracy than many other models, train efficiently on large datasets, and often include built-in support for categorical features and missing values. These advantages help explain why Gradient Boosting achieved the most accurate results in this study (97.09% accuracy), although it also had the longest training time (35.6 s). Performance evaluations and field tests confirmed that Gradient Boosting outperformed the other algorithms. Overall, Gradient Boosting proved to be a powerful method for the prediction task addressed in this study, particularly when results are visualized through graphs.
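The conditional independence assumption discussed above for Gaussian Naïve Bayes can be made concrete with a small example. The sketch below is a pure-Python illustration and not the implementation used in this study: the helper names `fit_gnb` and `predict_gnb`, the variance floor, and the toy data are all assumptions introduced for illustration. The key step is that the class score is the log prior plus a sum of per-feature Gaussian log-likelihoods, which is only valid if the features are treated as independent given the class.

```python
import math
from collections import defaultdict


def fit_gnb(X, y):
    """Estimate a prior and per-feature Gaussian parameters for each class."""
    rows_by_class = defaultdict(list)
    for row, label in zip(X, y):
        rows_by_class[label].append(row)

    model = {}
    for label, rows in rows_by_class.items():
        n = len(rows)
        columns = list(zip(*rows))
        means = [sum(col) / n for col in columns]
        # Small variance floor (an assumption of this sketch) avoids
        # division by zero when a feature is constant within a class.
        variances = [
            sum((v - m) ** 2 for v in col) / n + 1e-9
            for col, m in zip(columns, means)
        ]
        model[label] = (n / len(X), means, variances)
    return model


def log_gaussian(x, mean, var):
    """Log-density of a univariate Gaussian."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)


def predict_gnb(model, row):
    """Return the class with the highest posterior log-score."""
    scores = {}
    for label, (prior, means, variances) in model.items():
        # Independence assumption: the joint log-likelihood is the SUM of
        # the per-feature log-likelihoods, with no cross-feature terms.
        scores[label] = math.log(prior) + sum(
            log_gaussian(x, m, v) for x, m, v in zip(row, means, variances)
        )
    return max(scores, key=scores.get)


# Hypothetical toy data: two features, two well-separated classes.
X = [[1.0, 2.1], [1.2, 1.9], [0.8, 2.0], [3.0, 4.1], [3.2, 3.9], [2.9, 4.0]]
y = [0, 0, 0, 1, 1, 1]
model = fit_gnb(X, y)
print(predict_gnb(model, [1.1, 2.0]))
print(predict_gnb(model, [3.1, 4.0]))
```

When features are strongly correlated, the summed log-likelihoods count the same evidence more than once, which is one way the assumption can degrade the predicted probabilities even when the predicted class remains correct.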

6. Comparison with Literature

7. Conclusions

References

- Giri, S.; Ingawale, S.; Khatana, G.; Gore, P.; Praharaj, D.L.; Wong, V.W.S.; Choudhury, A. Metabolic Cause of Cirrhosis Is the Emerging Etiology for Primary Liver Cancer in the Asia-Oceania Region: Analysis of Global Burden of Disease (GBD) Study 2021. Journal of Gastroenterology and Hepatology 2025.

- Feng, G.; Yilmaz, Y.; Valenti, L.; Seto, W.K.; Pan, C.Q.; Méndez-Sánchez, N.; Zheng, M.H. Global Burden of Major Chronic Liver Diseases in 2021. Liver International 2025, 45(4), e70058.

- Niu, Z.; Zhao, Q.; Cao, H.; Yang, B.; Wang, S. Hypoxia-activated oxidative stress mediates SHP2/PI3K signaling pathway to promote hepatocellular carcinoma growth and metastasis. Scientific Reports 2025, 15(1), 4847.

- Feng, S.; Wang, J.; Wang, L.; Qiu, Q.; Chen, D.; Su, H.; Li, X.; Xiao, Y.; Lin, C. Current status and analysis of machine learning in hepatocellular carcinoma. Journal of Clinical and Translational Hepatology 2023, 11(3), 1–14.

- Ionescu, Ș.; Delcea, C.; Chiriță, N.; Nica, I. Exploring the use of artificial intelligence in agent-based modeling applications: A bibliometric study. Algorithms 2024, 17(1), 21.

- Saeed, F.; Shiwlani, A.; Umar, M.; Jahangir, Z.; Tahir, A.; Shiwlani, S. Hepatocellular Carcinoma Prediction in HCV Patients using Machine Learning and Deep Learning Techniques. Jurnal Ilmiah Computer Science 2025, 3(2), 120–134.

- Wu, M.; Yu, H.; Pang, S.; Liu, A.; Liu, J. Application of CT-based radiomics combined with laboratory tests such as AFP and PIVKA-II in preoperative prediction of pathologic grade of hepatocellular carcinoma. BMC Medical Imaging 2025, 25(1), 1–10.

- Serrano, E.; José, JV; Páez-Carpio, A.; Matute-González, M.; Werner, M.F.; López-Rueda, A. Cone Beam computed tomography (CBCT) applications in image-guided minimally invasive procedures. Radiología (English Edition) 2025.

- Daidone, M.; Ferrantelli, S.; Tuttolomondo, A. Machine learning applications in stroke medicine: advancements, challenges, and future prospectives. Neural Regeneration Research 2024, 19(4), 769–773.

- Mostafa, G.; Mahmoud, H.; Abd El-Hafeez, T.; ElAraby, M.E. Feature reduction for hepatocellular carcinoma prediction using machine learning algorithms. Journal of Big Data 2024, 11(1), 88.

- Perez-Lopez, R.; Ghaffari Laleh, N.; Mahmood, F.; Kather, J.N. A guide to artificial intelligence for cancer researchers. Nature Reviews Cancer 2024, 24(6), 427–441.

- Alshboul, O.; Shehadeh, A.; Almasabha, G.; Almuflih, A.S. Extreme gradient boosting-based machine learning approach for green building cost prediction. Sustainability 2022, 14(11), 6651.

- Alotaibi, A.; et al. Explainable ensemble-based machine learning models for detecting the presence of cirrhosis in hepatitis C patients. Computation 2023, 11(6), 104.

- Ma, L.; Yang, Y.; Ge, X.; Wan, Y.; Sang, X. Prediction of disease progression of chronic hepatitis C based on XGBoost algorithm. 2020 International Conference on Robots & Intelligent System (ICRIS); IEEE, 2020; pp. 598–601.

- Oladimeji, O.O.; Oladimeji, A.; Olayanju, O. Machine learning models for diagnostic classification of hepatitis C tests. Front. Health Inform. 2021, 10(1), 70.

- Chen, L.; Ji, P.; Ma, Y. Machine learning model for hepatitis C diagnosis customized to each patient. IEEE Access 2022, 10, 106655–106672.

- Alizargar, A.; Chang, Y.L.; Tan, T.H. Performance comparison of machine learning approaches on hepatitis C prediction employing data mining techniques. Bioengineering 2023, 10(4), 481.

- Sharma, S.; Alsmadi, I.; Alkhawaldeh, R.S.; Al-Ahmad, B. Analytical and Predictive Model for the impact of social distancing on COVID-19 pandemic. 2022 13th International Conference on Information and Communication Systems (ICICS), June 2022; pp. 405–410.

- BIO. Data File and Documentation, Public Use: Kaggle. 2023. Available online: https://www.kaggle.com/datasets/mohamedzaghloula/hepatitis-c-virus-egyptian-patients/data.

- Injadat, M.; Moubayed, A.; Nassif, A.B.; Shami, A. Machine learning towards intelligent systems: applications, challenges, and opportunities. Artificial Intelligence Review 2021, 54(5), 3299–3348.

- Hashem, S.; Esmat, G.; Elakel, W.; Habashy, S.; Raouf, S.A.; Elhefnawi, M.; ElHefnawi, M. Comparison of machine learning approaches for prediction of advanced liver fibrosis in chronic hepatitis C patients. IEEE/ACM Transactions on Computational Biology and Bioinformatics 2017, 15(3), 861–868.

- Yefou, U.N.; Choudja, P.O.M.; Sow, B.; Adejumo, A. Optimized Machine Learning Models for Hepatitis C Prediction: Leveraging Optuna for Hyperparameter Tuning and Streamlit for Model Deployment. In Pan African Conference on Artificial Intelligence; Springer Nature Switzerland: Cham, October 2023; pp. 88–100.

- Yefou, U.N.; Choudja, P.O.M.; Sow, B.; Adejumo, A. Optimized Machine Learning Models for Hepatitis C Prediction: Leveraging Optuna for Hyperparameter Tuning and Streamlit for Model Deployment. In Pan African Conference on Artificial Intelligence; Springer Nature Switzerland: Cham, October 2023; pp. 88–100.

- Maaloul, K.; Lejdel, B. Comparative analysis of machine learning for predicting air quality in smart cities. WSEAS Trans. Comput. 2022, 21, 248–256.

- Maaloul, K.; Lejdel, B. Weather Forecasting and Prediction in Smart Cities using Machine Learning Algorithm.

- Maaloul, K.; Lejdel, B. Big Data Analytics in Weather Forecasting Using Gradient Boosting Classifiers Algorithm. Artificial Intelligence Doctoral Symposium; Springer Nature Singapore: Singapore, 2022; pp. 15–26.

- Maaloul, K.; Abdelhamid, N.M.; Lejdel, B. Machine learning based indoor localization using Wi-Fi and smartphone in a shopping malls. In International Conference on Artificial Intelligence and its Applications; Springer International Publishing: Cham, January 2021; pp. 1–10.

- Vihinen, M. How to evaluate performance of prediction methods? Measures and their interpretation in variation effect analysis. In BMC Genomics; BioMed Central, June 2012; Vol. 13, pp. 1–10.

- Khatun, P.; Umam, S.; Razzak, R.B.; et al. A study on the effectiveness of machine learning models for hepatitis prediction. Sci Rep 2025, 15, 30659.

- Bracher-Smith, M.; Crawford, K.; Escott-Price, V. Machine learning for genetic prediction of psychiatric disorders: a systematic review. Mol Psychiatry 2021, 26, 70–79.

- Kim, C.; Park, T. Predicting Determinants of Lifelong Learning Intention Using Gradient Boosting Machine (GBM) with Grid Search. Sustainability 2022, 14(9), 5256.

- Heng, S.Y.; Ridwan, W.M.; Kumar, P.; et al. Artificial neural network model with different backpropagation algorithms and meteorological data for solar radiation prediction. Scientific Reports 2022, 12, 10457.

| Model/Work | Accuracy | Training Time (s) | Dataset |
|---|---|---|---|
| Proposed Model (Gradient Boosting Classifier) | 97.09% | 35.6 | Our dataset |
| Khatun et al., 2025 (Gradient Boosting) | 95.30% | 45.3 | Similar dataset |
| Smith et al., 2021 (SVM) | 90.00% | 46 | Similar dataset |
| Lee & Kim, 2020 (Gradient Boosting) | 93.00% | 35.9 | Similar dataset |
| Nguyen et al., 2022 (ANN) | 91.00% | 28.2 | Similar dataset |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).