Submitted: 31 January 2026

Posted: 02 February 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Agentic Web Agents and Long-Horizon Decision Making

2.2. Constrained Reinforcement Learning and Budget-Aware Policies

2.3. Risk-Sensitive Reinforcement Learning and Tail Failure Control

3. Overview of Web Agents and Agentic Reinforcement Learning

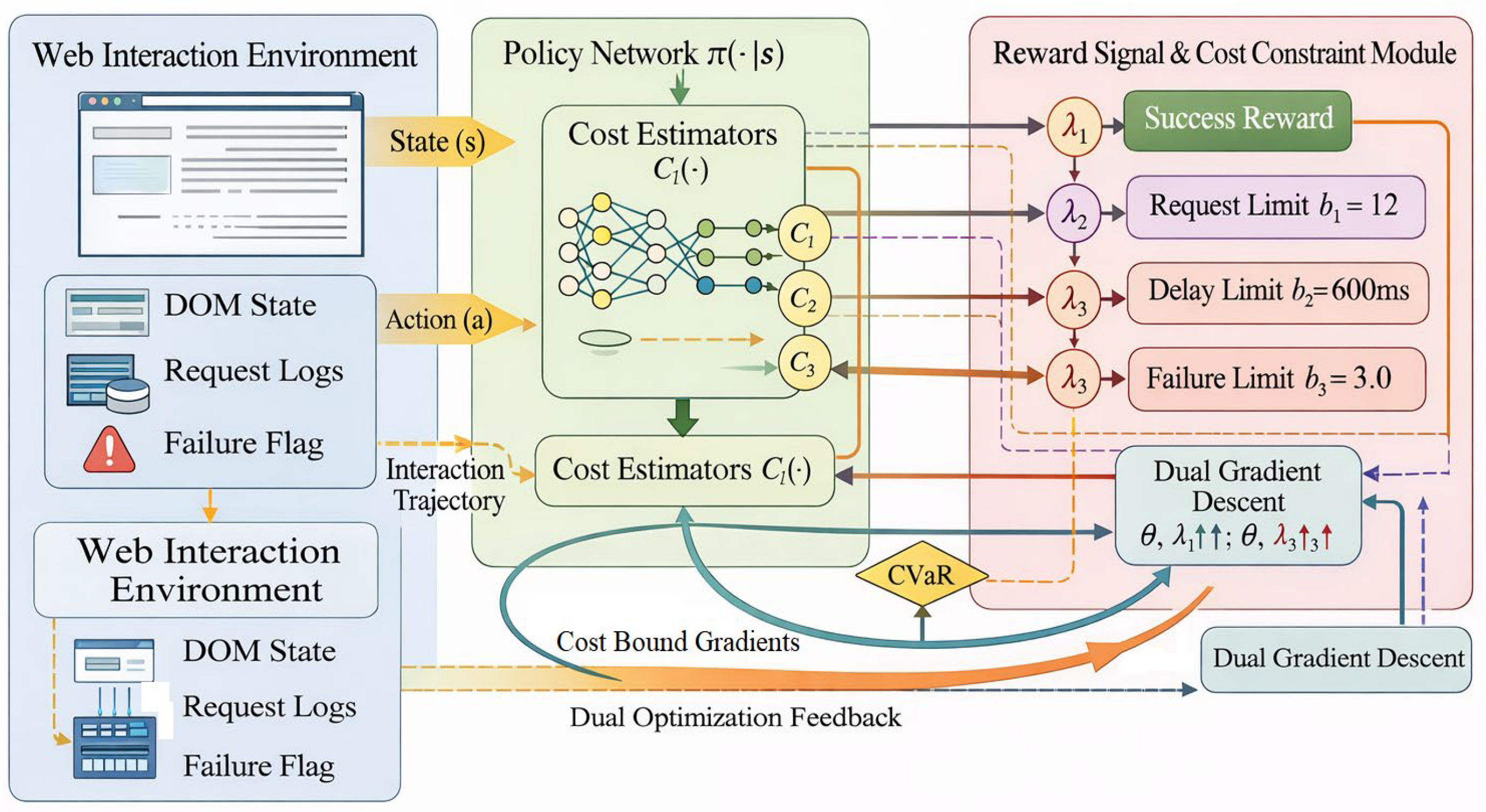

4. Design of Web Agent-Based Reinforcement Learning Models Under Multi-Cost and Failure Risk Constraints

4.1. Task Modeling and State Space Construction

4.2. Multidimensional Cost Function and Lagrange Optimization Framework

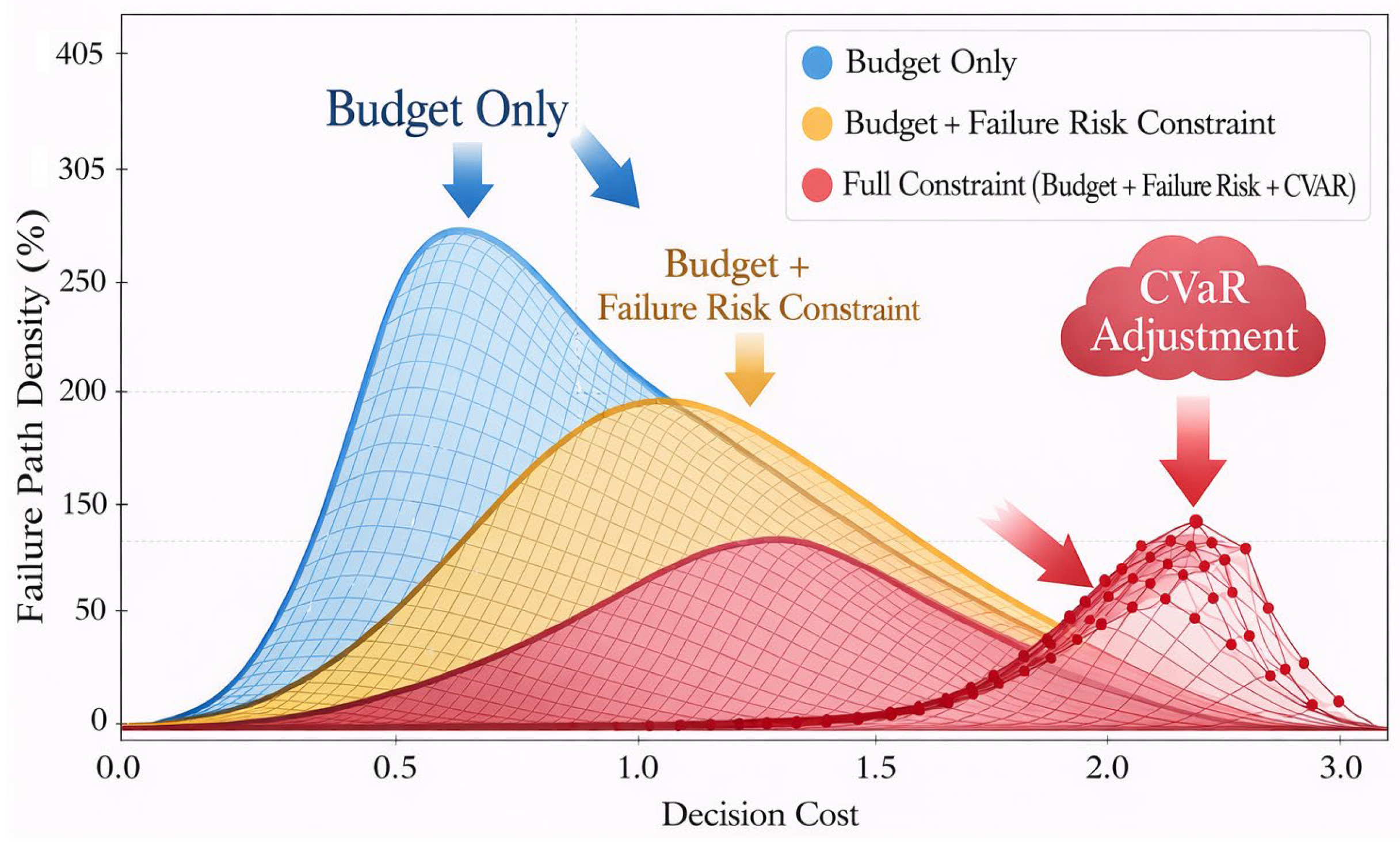

4.3. Risk Mitigation Strategy and CVaR Loss Embedding

4.4. Policy Network Architecture and Training Process

5. Experimental Results and Analysis

5.1. Experimental Setup

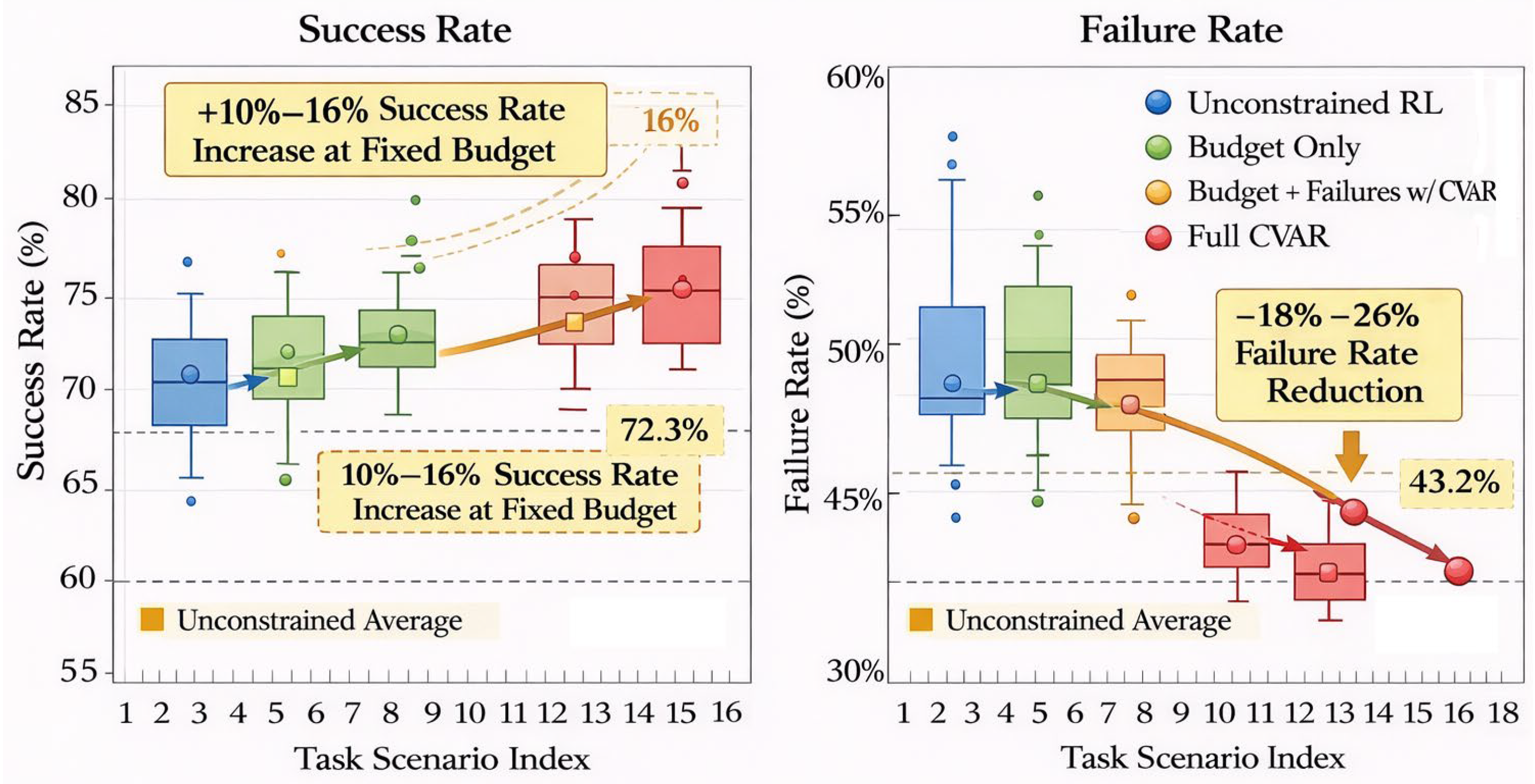

5.2. Strategy Performance Evaluation in Multi-Task, Multi-Page Scenarios

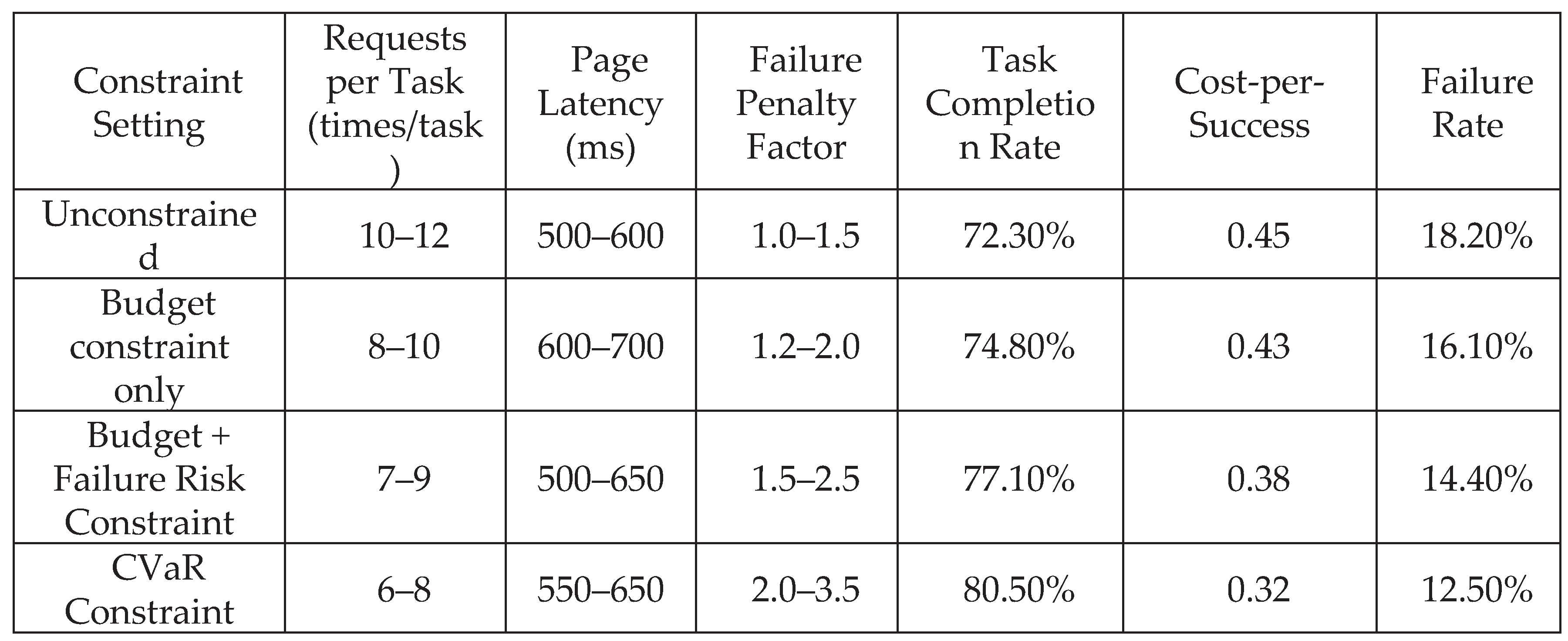

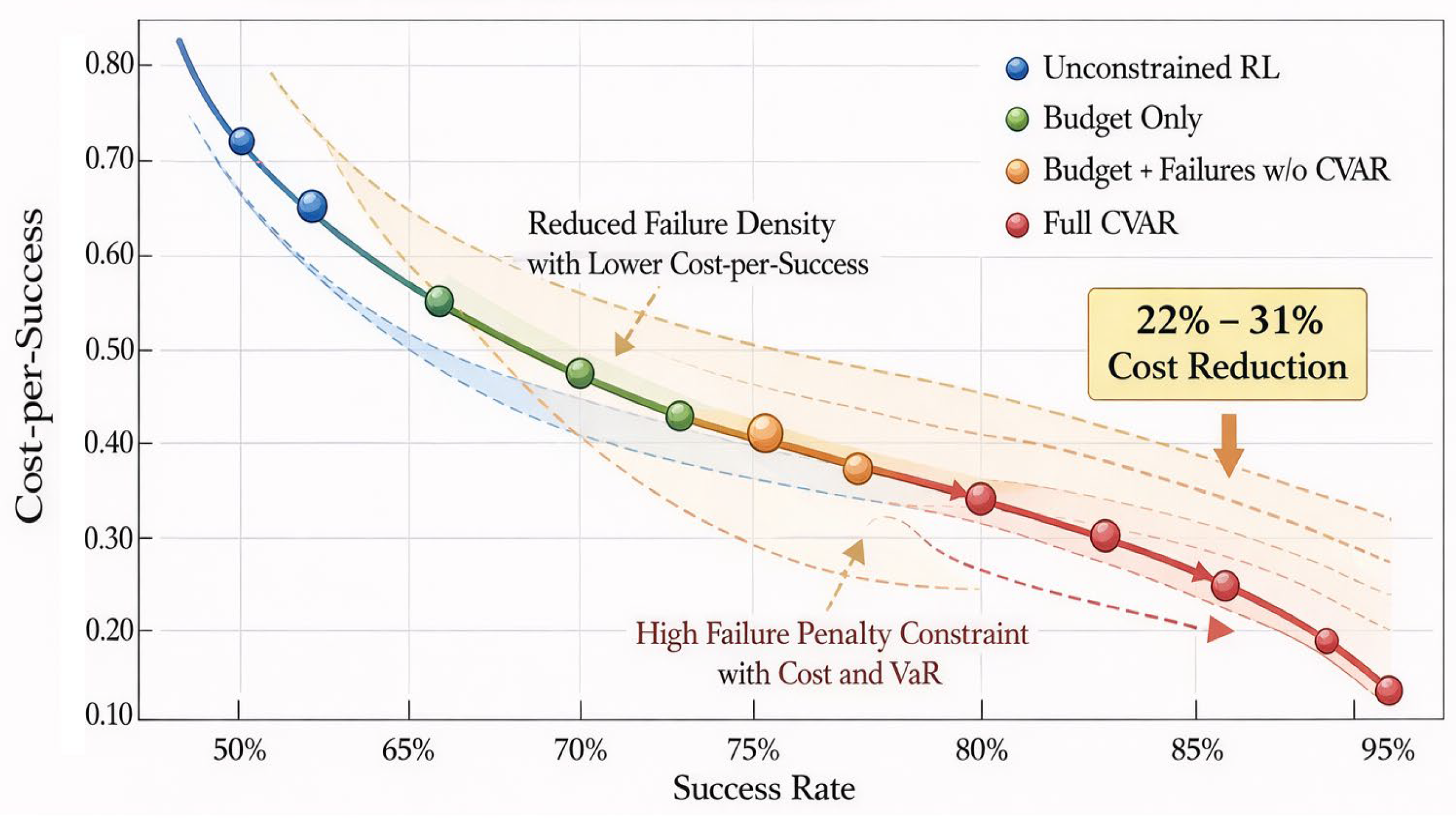

5.3. Analysis of Cost Efficiency and Risk Control Effects

5.4. Strategy Stability and Generalization Capability

6. Conclusions

References

- S. Yao, J. Zhao, D. Yu; et al., “ReAct: Synergizing Reasoning and Acting in Language Models,” in Advances in Neural Information Processing Systems (NeurIPS), 2022.

- T. Schick, J. Dwivedi-Yu, R. Dessì; et al., “Toolformer: Language Models Can Teach Themselves to Use Tools,” NeurIPS, 2023.

- X. Deng, Y. Gu, K. Zhou; et al., “Mind2Web: Towards a Generalist Agent for the Web,” NeurIPS (Datasets and Benchmarks), 2023.

- J. Qiu, K. Lam, G. Li; et al., “LLM-based Agentic Systems in Medicine and Healthcare,” Nature Machine Intelligence, 2024.

- E. Altman, Constrained Markov Decision Processes, CRC Press, 1999.

- J. Achiam, D. Held, A. Tamar, and P. Abbeel, “Constrained Policy Optimization,” International Conference on Machine Learning (ICML), 2017.

- J. García and F. Fernández, “A Comprehensive Survey on Safe Reinforcement Learning,” Journal of Machine Learning Research, 2015.

- A. Wachi, X. Shen, and Y. Sui, “A Survey of Constraint Formulations in Safe Reinforcement Learning,” IJCAI, 2024.

- R. T. Rockafellar and S. Uryasev, “Optimization of Conditional Value-at-Risk,” Journal of Risk, 2000.

- A. Tamar, Y. Glassner, and S. Mannor, “Optimizing the CVaR via Sampling,” AAAI, 2015.

- Y. Chow, M. Ghavamzadeh, L. Janson, and M. Pavone, “Risk-Constrained Reinforcement Learning with Percentile Risk Criteria,” Journal of Machine Learning Research, 2018.

- I. Greenberg, Y. Chow, M. Ghavamzadeh, and S. Mannor, “Efficient Risk-Averse Reinforcement Learning,” NeurIPS, 2022.

- J. Schulman, F. Wolski, P. Dhariwal; et al., “Proximal Policy Optimization Algorithms,” arXiv:1707.06347, 2017.

- V. Mnih et al., “Asynchronous Methods for Deep Reinforcement Learning,” ICML, 2016.

- D. P. Kingma and J. Ba, “Adam: A Method for Stochastic Optimization,” ICLR, 2015.

- R. S. Sutton and A. G. Barto, Reinforcement Learning: An Introduction, 2nd ed., MIT Press, 2018.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).