2. Methodology

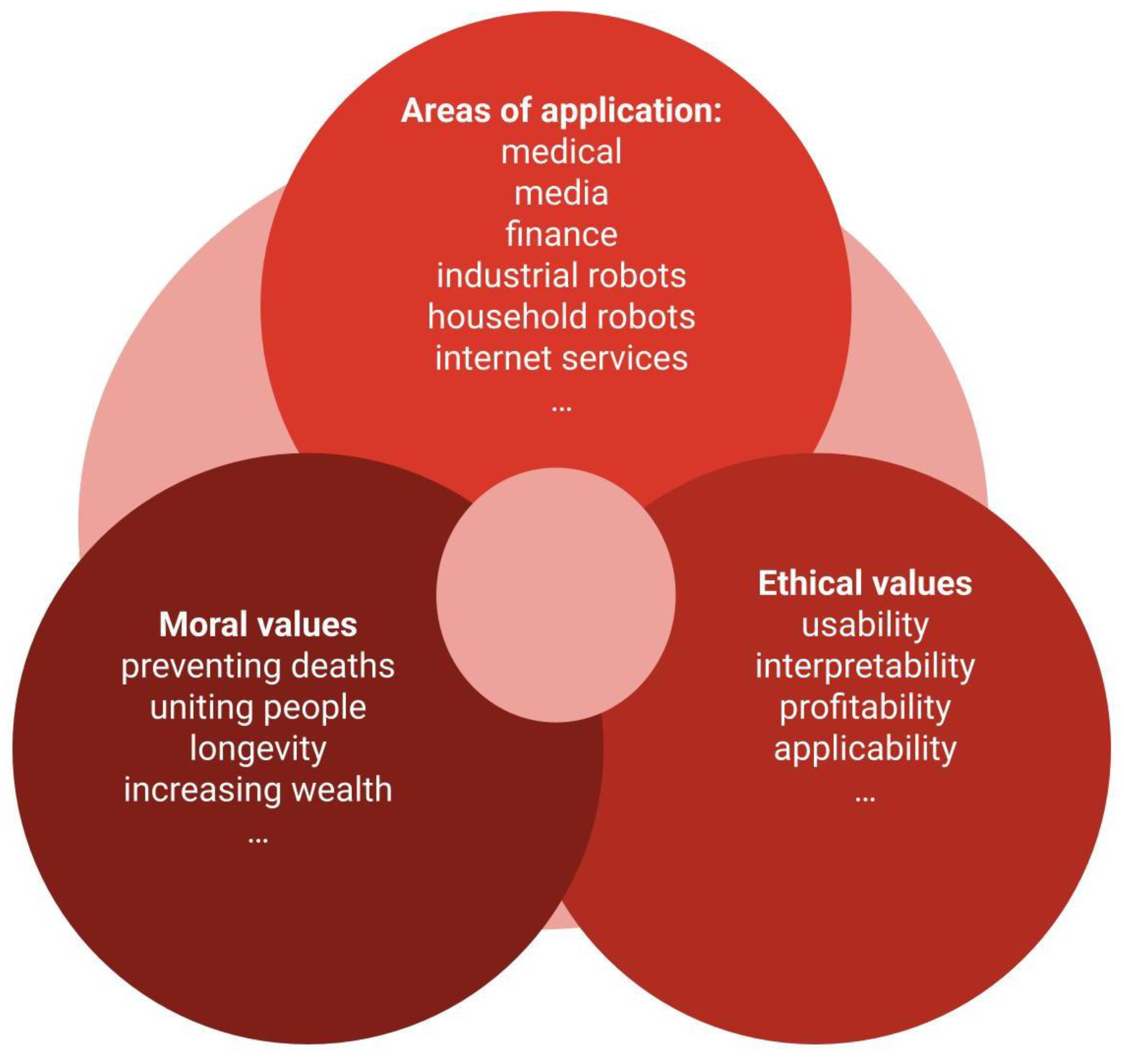

This review explores how to define ethical values and how to transfer these values to technology, especially artificial intelligence. Because of concerns about influence, crime, and security, among others, discussions about artificial intelligence have moved beyond academia into the political, legal, and religious realms. Since the goal of artificial intelligence is to assist or automate decision-making, it is desirable to reintroduce moral values into it; in other words, to implement moral agency. Initially, moral agency (Etzioni et al., 2017) can be planned with a focus on tools that prevent deaths, bring people together at home, save money, improve quality of life at work, increase longevity, and so on. Technically, the implementation of moral agency can be addressed in the development steps, so that human values such as interpretability, applicability, profitability, usability, innovation, and originality are transferred to applications along with other ethical values.

Transferring human intelligence to AI also involves incorporating ethical values during the planning and development of technologies. One example is interpretability: solving mathematical equations is easy for a computer, even when some variables are unknown, but interpreting the results remains difficult for humans. Another example of an ethical value is applicability, which characterizes the terms under which it is worthwhile to apply the technology, such as monitoring, diagnosis, or alarms. Further examples are profitability, that is, an interest in products that help accumulate wealth rather than in superfluous software, and usability, which considers the interests and mental capabilities of users. Defining ethical criteria and values for choosing a method is a task in itself (Michalski et al., 2025).

Figure 1 shows an example of a framework for transferring ethical-moral values to artificial intelligence.

Human moral essence seems to originate from the use of tools, as well as from reading and writing. However, it is only seen in interactions among humans and between humans and other living beings. The tools and language of computers differ from those of humans, so in essence the morality of robots and that of humans are different. What can be done, therefore, is to consider human morality and ethics in the development and use of technology.

In computer science, ethical values can be defined in layers. The first layer, the data layer, defines criteria such as reproducibility, data consistency, and coherence of hypotheses about the data. The second layer, the algorithm layer, assigns values to the techniques themselves, such as monitoring, prediction, and diagnosis. The third layer, the algorithm's interface with the user, encompasses the human values that the algorithm can assimilate, that is, the ethical values that can be translated into numbers. Examples include interpretability, usability, and complexity. Assigning values to techniques allows human intelligence to be applied to the use or development of the technology.
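The three layers described above can be written down as a small lookup structure. The following is only an illustrative sketch: the names `Layer`, `LAYERS`, and `layer_of` are hypothetical, and the criteria listed are just the examples mentioned in the text, not an exhaustive taxonomy.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Layer:
    """One layer of the value framework with its example criteria."""
    name: str
    criteria: Tuple[str, ...]


# Example criteria taken from the text; a real framework would list more.
LAYERS = (
    Layer("data", ("reproducibility", "data consistency", "coherence of hypotheses")),
    Layer("algorithm", ("monitoring", "prediction", "diagnosis")),
    Layer("interface", ("interpretability", "usability", "complexity")),
)


def layer_of(criterion: str) -> Optional[str]:
    """Return the name of the layer that lists the criterion, or None."""
    for layer in LAYERS:
        if criterion in layer.criteria:
            return layer.name
    return None
```

For instance, `layer_of("usability")` returns `"interface"`, while a value that is not assigned to any layer in this sketch, such as `"profitability"`, returns `None`.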

One way to define ethical criteria for machine learning techniques is through literature reviews, as done in Michalski et al. (2025). The proposed review method associates the value terms, the application label, and the technique names when these appear in the same study, and then analyses the frequency of these associations. It is then possible to quantify whether, for example, the "random forest" machine learning method has high usability, that is, whether "random forest" appears in the same studies as the keywords that define usability values.
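The co-occurrence idea described above can be illustrated with a minimal sketch. It is not the procedure of Michalski et al. (2025): the keyword lists, the toy study texts, and the function names `cooccurrence_counts` and `association_share` are assumptions made for illustration, and the application label is omitted for brevity (it could be added as a third element of each counted key).

```python
from collections import Counter
from itertools import product

# Hypothetical keyword lists; a real review would take them from its protocol.
VALUE_KEYWORDS = {
    "usability": ["usability", "user-friendly", "easy to use"],
    "interpretability": ["interpretability", "interpretable", "explainable"],
}
TECHNIQUES = ["random forest", "neural network"]

# Invented toy abstracts, used only to exercise the functions.
STUDIES = [
    "A random forest model with high usability for clinical monitoring.",
    "An interpretable random forest for fault diagnosis.",
    "A neural network for image recognition.",
]


def cooccurrence_counts(studies, value_keywords, techniques):
    """Count, per study, which techniques and values appear together."""
    pair_counts = Counter()       # (technique, value) -> number of studies
    technique_counts = Counter()  # technique -> number of studies
    for text in studies:
        lowered = text.lower()
        # Naive substring matching; real reviews need more careful matching.
        found_techniques = [t for t in techniques if t in lowered]
        found_values = [
            value for value, keywords in value_keywords.items()
            if any(keyword in lowered for keyword in keywords)
        ]
        technique_counts.update(found_techniques)
        pair_counts.update(product(found_techniques, found_values))
    return pair_counts, technique_counts


def association_share(technique, value, pair_counts, technique_counts):
    """Share of the studies naming a technique that also name the value."""
    total = technique_counts[technique]
    return pair_counts[(technique, value)] / total if total else 0.0


pair_counts, technique_counts = cooccurrence_counts(
    STUDIES, VALUE_KEYWORDS, TECHNIQUES
)
```

With these toy texts, `association_share("random forest", "usability", pair_counts, technique_counts)` gives 0.5, because one of the two studies that mention "random forest" also contains a usability keyword. Normalizing by the number of studies per technique is one possible design choice; raw counts or other association measures could be used instead.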

When dealing with a specific application area, such as engineering or medicine, most values are defined by the interests of that area. "Diagnosing," "predicting," "clustering," "recognizing," "monitoring," among others, are terms that can be associated with the values of these applications. Furthermore, various disciplines offer techniques for solving artificial intelligence problems, such as statistics, mathematics, linguistics, and, most naturally, computer science.