Submitted: 25 January 2026

Posted: 27 January 2026

Abstract

Keywords:

1. Introduction

1.1. Brief Summary of the Study

1.2. Purpose of the Research

1.3. Methodology

2. Literature Review

2.1. Background and Context

2.2. Research Questions

- RQ1: At which critical architectural points in an Autonomous Cybersecurity Infrastructure (detection, diagnosis, response, adaptation) can HITL processes, mediated by XAI, most effectively enhance system reliability?

- RQ2: How can XAI techniques (e.g., uncertainty quantification, counterfactual generation, feature attribution) be operationalized to create effective “intervention triggers” and “teaching signals” for human experts within a continuous learning loop?

- RQ3: What is the measurable impact of a HITL-XAI framework on the reliability dimensions of an ACI, specifically its precision in high-stakes actions, its robustness to novel threats, and its long-term adaptive capacity?

2.3. Significance of the Study

3. Methodology

3.1. Research Design

3.2. Participants or Datasets

- System Environment: The prototype was deployed in an isolated segment of a corporate hybrid cloud environment hosting ~50 production-mirrored virtual machines that generated real user and application traffic.

- Threat Data: A curated, ethical red-team exercise was conducted over six months. It introduced a mix of known attacks (drawn from datasets such as CIC-IDS2017 and from MITRE ATT&CK emulations) and novel, bespoke attack scenarios designed by security engineers.

- Human Experts: A team of five security analysts (two senior, three junior) interacted with the system as part of their regular duty rotation. Their interactions with the HITL-XAI interface were logged and analyzed.

3.3. Data Collection Methods

- Architectural Design: The HITL-XAI framework was formalized through iterative design workshops with security architects and AI engineers.

- Prototype Implementation: A prototype was built by extending an open-source Security Orchestration, Automation and Response (SOAR) platform. Key components included:

  - Uncertainty-Aware ML Models: Ensemble models providing prediction confidence scores and measures of epistemic (model) and aleatoric (data) uncertainty.

  - XAI & Trigger Engine: Generated SHAP values, LIME explanations, and counterfactuals. Rule-based triggers were configured (e.g., “If confidence < 85% AND SHAP value divergence is high, escalate to human”).

  - Interactive HITL Interface: A dashboard where analysts saw alerts, the AI’s recommendation, its confidence, an explanation, and a structured feedback form (e.g., “AI was wrong. Correct label is X. The most important feature for this decision should be Y.”).

- Field Experiment Logging: The system logged all events: AI decisions, confidence/uncertainty metrics, trigger firings, human actions, feedback, and subsequent model retraining events.

- Expert Interviews: Semi-structured interviews were conducted with the analyst team at the midpoint and end of the study to gather qualitative insights on usability, trust, and perceived system reliability.
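The uncertainty measures attributed to the ensemble models above can be illustrated with a common entropy-based decomposition. This is a minimal sketch, not the prototype's code: it assumes each ensemble member outputs a class-probability vector, takes total uncertainty as the entropy of the averaged prediction, aleatoric uncertainty as the average per-member entropy, and epistemic uncertainty as their difference. The function names and the sample probabilities are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability classes contribute nothing."""
    return -sum(q * math.log(q) for q in p if q > 0.0)


def decompose_uncertainty(member_probs: Sequence[Sequence[float]]) -> Dict[str, float]:
    """member_probs[i][c] is the probability ensemble member i assigns to class c."""
    n_members = len(member_probs)
    n_classes = len(member_probs[0])
    # Ensemble prediction: average the members' probability vectors.
    mean_p: List[float] = [
        sum(m[c] for m in member_probs) / n_members for c in range(n_classes)
    ]
    total = entropy(mean_p)                                     # overall uncertainty
    aleatoric = sum(entropy(m) for m in member_probs) / n_members  # data noise
    epistemic = max(0.0, total - aleatoric)                     # member disagreement
    return {
        "confidence": max(mean_p),
        "total": total,
        "aleatoric": aleatoric,
        "epistemic": epistemic,
    }


# Hypothetical inputs: three members that agree, then three that disagree.
agree = decompose_uncertainty([[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]])
disagree = decompose_uncertainty([[0.95, 0.05], [0.05, 0.95], [0.5, 0.5]])
```

When members agree, the epistemic term is close to zero even if each member is somewhat unsure. When they disagree, the epistemic term grows, which is the kind of signal a trigger engine could use to escalate a case to an analyst.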

3.4. Data Analysis Procedures

- Quantitative Analysis:

  - Reliability Metric 1 (Operational Stability): Compared the rate of False Positive Operational Actions (FPOA), e.g., unnecessary blocks or isolations, between the HITL-XAI system and the fully automated (non-HITL) baseline from the preceding month.

  - Reliability Metric 2 (Adaptability): Measured the Time-to-Adapt (TTA) for novel attack patterns, defined as the time from the first instance of a novel attack to consistent correct autonomous detection, with and without the HITL feedback loop active.

  - HITL Efficiency: Analyzed the Human Intervention Yield, defined as the percentage of human interventions that resulted in a correction to the AI or a validated new learning example.

- Qualitative Analysis: Interview transcripts and open-ended feedback logs were analyzed using thematic analysis to identify patterns in how explanations were used to make intervention decisions and provide corrective feedback.
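The three quantitative metrics can be computed from the event log along the following lines. This is a hedged sketch: the source does not define what counts as "consistent" correct detection, so the `stable_run` parameter (three consecutive correct detections) is an assumption, as are the function names, the outcome labels, and the sample values, none of which come from the study data.

```python
from datetime import date
from typing import Optional, Sequence, Tuple

# Hypothetical outcome labels for logged human interventions.
USEFUL_OUTCOMES = {"correction", "new_example"}


def fpoa_per_1000(false_positive_actions: int, total_alerts: int) -> float:
    """False Positive Operational Actions per 1000 alerts."""
    if total_alerts <= 0:
        raise ValueError("total_alerts must be positive")
    return 1000.0 * false_positive_actions / total_alerts


def time_to_adapt(
    detections: Sequence[Tuple[date, bool]], stable_run: int = 3
) -> Optional[int]:
    """Days from the first instance of a novel attack to the start of the first
    run of `stable_run` consecutive correct autonomous detections.

    `detections` holds chronologically ordered (day, was_correct) pairs for one
    attack pattern. Returns None if the system never stabilizes.
    """
    if not detections:
        return None
    first_day = detections[0][0]
    run_start, run_len = None, 0
    for day, was_correct in detections:
        if was_correct:
            if run_len == 0:
                run_start = day
            run_len += 1
            if run_len >= stable_run:
                return (run_start - first_day).days
        else:
            run_len = 0  # a miss breaks the streak
    return None


def intervention_yield(outcomes: Sequence[str]) -> float:
    """Percentage of interventions that produced a correction or a new example."""
    if not outcomes:
        raise ValueError("no interventions logged")
    useful = sum(1 for o in outcomes if o in USEFUL_OUTCOMES)
    return 100.0 * useful / len(outcomes)


# Illustrative log for one novel attack pattern (made-up dates and outcomes).
sample_log = [
    (date(2025, 3, 1), False), (date(2025, 3, 3), False), (date(2025, 3, 5), True),
    (date(2025, 3, 6), False), (date(2025, 3, 8), True), (date(2025, 3, 9), True),
    (date(2025, 3, 10), True),
]
sample_tta = time_to_adapt(sample_log)
```

Defining TTA by the start of the stable run, rather than its end, keeps the metric from being inflated by the length of the confirmation window; other definitions are equally defensible.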

3.5. Ethical Considerations

4. Results

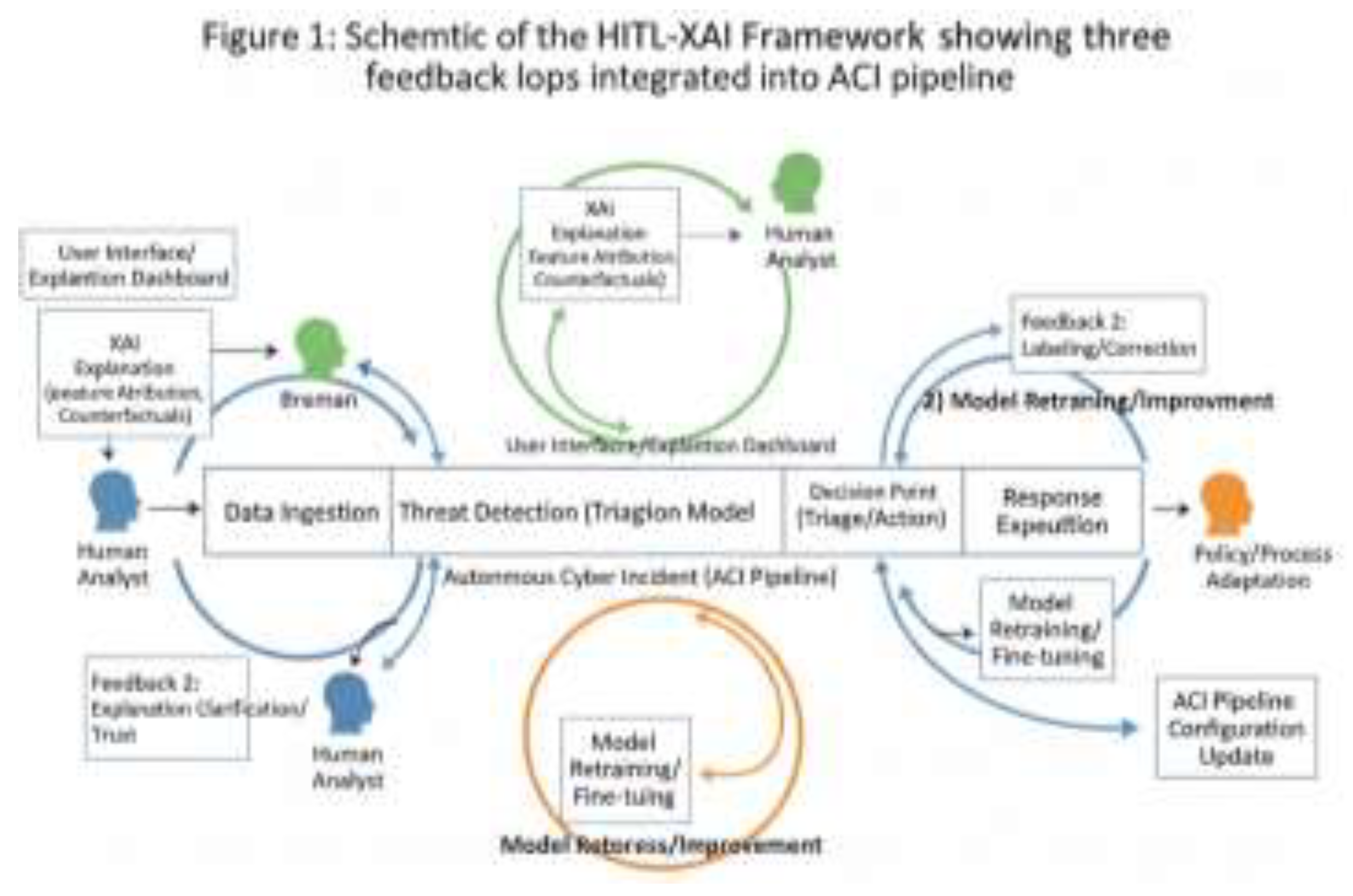

4.1. The HITL-XAI Framework Architecture

- Pre-Action Validation Loop (High-Stakes Decisions): Before executing a high-impact action (e.g., domain-wide blocking), the system presents a counterfactual explanation and a confidence score. The human validates or overrides.

- Uncertainty-Triggered Diagnostic Loop: For medium-confidence detections with high explanation instability (e.g., the top SHAP features vary across similar inputs), the system escalates the case to a human analyst for diagnosis, providing feature attributions and a comparison of the anomaly to past cases.

- Post-Incident Teaching & Adaptation Loop: After any incident (whether a true or a false alarm), analysts can label the AI’s performance and, using an interactive feature importance editor, directly correct the model’s reasoning priorities. This feedback is stored in a curated “teaching dataset” for periodic retraining.
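The routing between autonomous action and the first two loops can be sketched as a small decision function. This is an illustration under stated assumptions, not the prototype's trigger engine: explanation instability is approximated here as one minus the mean pairwise Jaccard similarity of the top-feature sets of similar inputs, the 0.85 confidence floor mirrors the example rule quoted in Section 3.3, and the 0.5 instability ceiling, the class names, and the field names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Sequence, Set


class Route(Enum):
    AUTONOMOUS = "autonomous"
    PRE_ACTION_VALIDATION = "pre_action_validation"
    DIAGNOSTIC_REVIEW = "diagnostic_review"


def top_feature_instability(top_sets: Sequence[Set[str]]) -> float:
    """1 - mean pairwise Jaccard similarity of top-k attribution feature sets.

    0.0 means similar inputs are explained by the same features; 1.0 means the
    explanations share no top features at all.
    """
    sims = []
    for i, a in enumerate(top_sets):
        for b in top_sets[i + 1:]:
            union = a | b
            if union:
                sims.append(len(a & b) / len(union))
    if not sims:
        return 0.0  # fewer than two comparable explanations
    return 1.0 - sum(sims) / len(sims)


@dataclass(frozen=True)
class Detection:
    confidence: float   # model confidence in [0, 1]
    high_impact: bool   # e.g., the proposed action is domain-wide blocking
    instability: float  # output of top_feature_instability


def route(
    d: Detection,
    confidence_floor: float = 0.85,     # from the example rule in Section 3.3
    instability_ceiling: float = 0.5,   # assumed threshold, not from the source
) -> Route:
    """Decide whether a detection needs a human before the system acts."""
    if d.high_impact:
        return Route.PRE_ACTION_VALIDATION
    if d.confidence < confidence_floor and d.instability > instability_ceiling:
        return Route.DIAGNOSTIC_REVIEW
    return Route.AUTONOMOUS
```

For example, `route(Detection(confidence=0.7, high_impact=False, instability=0.8))` yields `Route.DIAGNOSTIC_REVIEW`, while the same detection with stable explanations is handled autonomously. The third loop, post-incident teaching, runs after the fact and is therefore not part of this routing decision.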

4.2. Quantitative Performance Results

| Metric | Fully Automated Baseline (Month Prior) | HITL-XAI Prototype (Study Period) | % Change |
| --- | --- | --- | --- |
| False Positive Operational Actions (FPOA) per 1000 alerts | 8.7 | 5.7 | -34.5% |
| Time-to-Adapt (TTA) for Novel Attacks (avg. in days) | 14.2 | 7.1 | -50.0% |
| Human Intervention Yield (Useful Interventions / Total) | N/A | 78% | N/A |
| Autonomous Decision Rate (System finalizes without human input) | 100% | 92% | -8% |
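The "% Change" column is consistent with a relative change against the baseline, which can be checked with a few lines of arithmetic. The helper name below is hypothetical; the input values are the ones reported in the table.

```python
def relative_change_pct(baseline: float, observed: float) -> float:
    """Relative change of `observed` against `baseline`, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100.0 * (observed - baseline) / baseline


# Values taken from the table above, rounded to one decimal place.
fpoa_change = round(relative_change_pct(8.7, 5.7), 1)          # FPOA per 1000 alerts
tta_change = round(relative_change_pct(14.2, 7.1), 1)          # Time-to-Adapt, days
autonomy_change = round(relative_change_pct(100.0, 92.0), 1)   # Autonomous Decision Rate
```

Because the Autonomous Decision Rate baseline is 100%, its relative change and its change in percentage points coincide at 8.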

4.3. Qualitative Findings on Human-AI Interaction

- “Confidence from Context”: Analysts reported that the combination of a low confidence score and a disjointed explanation (e.g., “The model is unsure, and the reasons it gives don’t match my mental model of this attack”) was a highly reliable trigger for them to investigate deeply. This led to the high Human Intervention Yield.

- “Teaching, Not Just Fixing”: Senior analysts particularly valued the interactive feedback tool. One stated: “It felt less like fixing a mistake and more like training a junior analyst. I could say, ‘For this type of exfiltration, focus on the timing of the packets, not just the size.’” This direct feedback correlated with reduced TTA for similar future attacks.

5. Discussion

5.1. Interpretation of Results

5.2. Comparison with Existing Literature

5.3. Implications: Towards Auditable and Assurable Autonomy

5.4. Limitations

5.5. Directions for Future Research

6. Conclusion

References

- KM, Z.; Akhtaruzzaman, K.; Tanvir Rahman, A. Building trust in autonomous cyber decision infrastructure through explainable AI. International Journal of Economy and Innovation 2022, *29*, 405–428.

- Kumar, V.; Kaware, P.; Singh, P.; Sonkusare, R.; Kumar, S. Extraction of information from bill receipts using optical character recognition. 2020 international conference on smart electronics and communication (ICOSEC), 2020, September; IEEE; pp. 72–77.

- Kumar, V.; Kumar, S.; Sreekar, L.; Singh, P.; Pai, P.; Nimbre, S.; Rathod, S. S. AI powered smart traffic control system for emergency vehicles. In ICDSMLA 2020: Proceedings of the 2nd International Conference on Data Science, Machine Learning and Applications, 2021, November; Springer Singapore: Singapore; pp. 651–663.

- Arrieta, A. B.; Díaz-Rodríguez, N.; Del Ser, J.; Bennetot, A.; Tabik, S.; Barbado, A.; Herrera, F. Explainable Artificial Intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. Information Fusion 2020, *58*, 82–115.

- Bhatt, U.; Xiang, A.; Sharma, S.; Weller, A.; Taly, A.; Jia, Y.; Eckersley, P. Explainable machine learning in deployment. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 2020; pp. 648–657.

- Gunning, D.; Stefik, M.; Choi, J.; Miller, T.; Stumpf, S.; Yang, G. Z. XAI—Explainable artificial intelligence. Science Robotics 2019, *4*(37), eaay7120.

- Hoffman, R. R.; Mueller, S. T.; Klein, G.; Litman, J. Metrics for explainable AI: Challenges and prospects. arXiv 2018, arXiv:1812.04608.

- Lundberg, S. M.; Lee, S. I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems 2017, 4765–4774.

- Miller, T. Explanation in artificial intelligence: Insights from the social sciences. Artificial Intelligence 2019, *267*, 1–38.

- National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0); U.S. Department of Commerce, 2023.

- Ribeiro, M. T.; Singh, S.; Guestrin, C. “Why should I trust you?” Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD international conference on knowledge discovery and data mining, 2016; pp. 1135–1144.

- Rudin, C. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature Machine Intelligence 2019, *1*(5), 206–215.

- Sharafaldin, I.; Lashkari, A. H.; Ghorbani, A. A. Toward generating a new intrusion detection dataset and intrusion traffic characterization. ICISSP, 2018, January; pp. 108–116.

- Slack, D.; Hilgard, S.; Jia, E.; Singh, S.; Lakkaraju, H. Fooling LIME and SHAP: Adversarial attacks on post hoc explanation methods. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society, 2020; pp. 180–186.

- Spring, J.; Hatleback, E.; Householder, A.; Manion, A. The difficulty of proving a negative: The challenge of evaluating autonomous cyber defense systems; Carnegie Mellon University, Software Engineering Institute, 2020.

- Töpfer, M.; Endres, T.; Paschke, A. Explainable artificial intelligence for cybersecurity: A survey. IEEE Access 2022, *10*, 123700–123714.

- Vilone, G.; Longo, L. Notions of explainability and evaluation approaches for explainable artificial intelligence. Information Fusion 2021, *76*, 89–106.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).