Submitted: 24 January 2026

Posted: 27 January 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Samples and Study Scope

2.2. Experimental Design and Control Setup

2.3. Measurement Procedures and Quality Control

2.4. Data Processing and Model Formulation

2.5. Statistical Analysis

3. Results and Discussion

3.1. Alignment Shifts Bias from Text Cues to Visual Priors

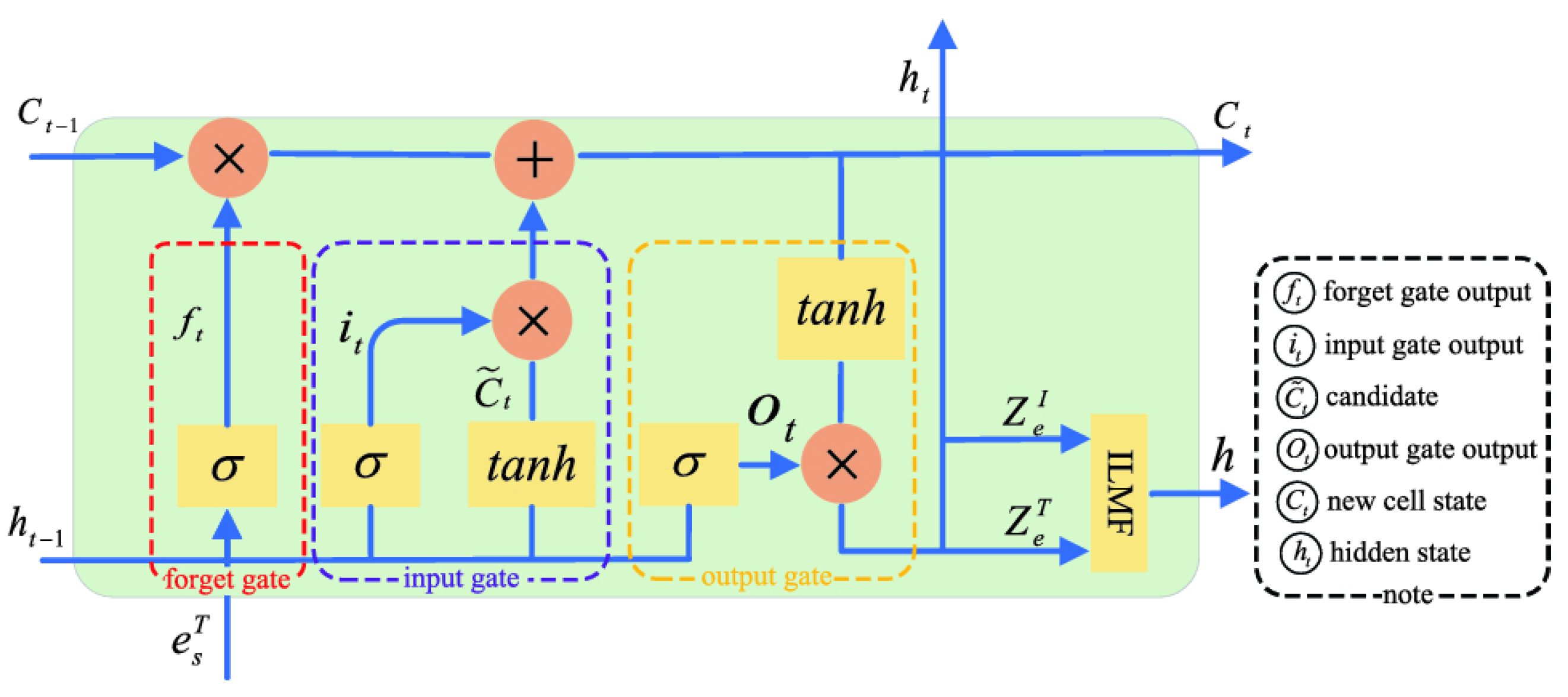

3.2. Cross-Modal Transfer Is Shaped by Fusion and Weighting Mechanisms

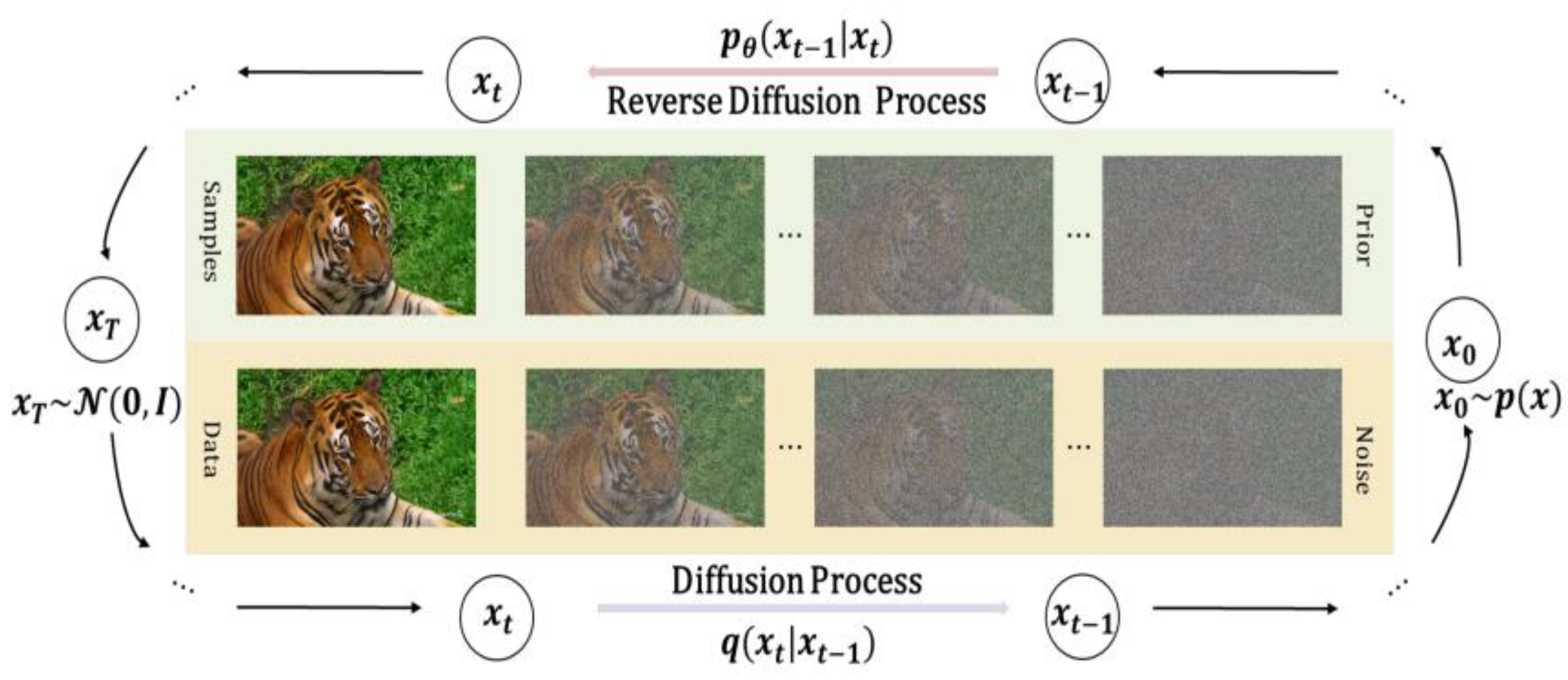

3.3. Temporal Persistence Amplifies Visually Driven Bias Carryover

3.4. Comparison with Prior Work and Implications for Mitigation

4. Conclusion

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).