Submitted: 09 January 2026

Posted: 26 January 2026


Abstract

Keywords:

1. Introduction

1.1. Brief Summary of the Study

1.2. Purpose of the Research

1.3. Methodology

2. Literature Review

2.1. Background and Context

2.2. Research Questions

2.3. Significance of the Study

3. Methodology

3.1. Research Design

3.2. Participants or Datasets

3.3. Data Collection Methods

- Literature Review: 78 relevant papers were identified, screened, and synthesized to map XAI techniques to cyber defense tasks.

- Experimental Data: The CIC-IDS2017 dataset was preprocessed (missing-value handling, normalization, label encoding) and split into 70% training, 15% validation, and 15% test sets. Three model architectures were implemented: a Decision Tree (DT, intrinsically interpretable), a Random Forest (RF, an ensemble black box), and a Deep Neural Network (DNN, a deep black box).
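The 70/15/15 split described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the source does not say whether the split was stratified by class, so stratification, the helper name `stratified_split`, the seed, and the toy label proportions are all assumptions. In practice a library routine such as scikit-learn's `train_test_split` with its `stratify` argument would typically be used instead.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists, split per class (hypothetical helper)."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        # Whatever remains goes to the test set, so no index is lost.
        test += indices[n_train + n_val:]
    return train, val, test


# Toy labels standing in for CIC-IDS2017 classes (proportions are made up).
labels = ["BENIGN"] * 800 + ["DoS"] * 150 + ["PortScan"] * 50
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))
```

Splitting per class keeps rare attack types represented in all three sets, which matters for a heavily imbalanced dataset.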

3.4. Data Analysis Procedures

- Model Training & Baseline Performance: All models were trained, and baseline metrics (Accuracy, Precision, Recall, F1-Score, Inference Time) were recorded.

- XAI Application: Post-hoc explanations were generated for a stratified sample of 1000 test instances (including true positives, false positives, true negatives, false negatives).

  - SHAP (SHapley Additive exPlanations): KernelExplainer for DT and RF; DeepExplainer for DNN.

  - LIME (Local Interpretable Model-agnostic Explanations): Applied to all models.

  - Surrogate Model: A global logistic regression model was trained on the predictions of the RF and DNN models.

- Evaluation Metrics:

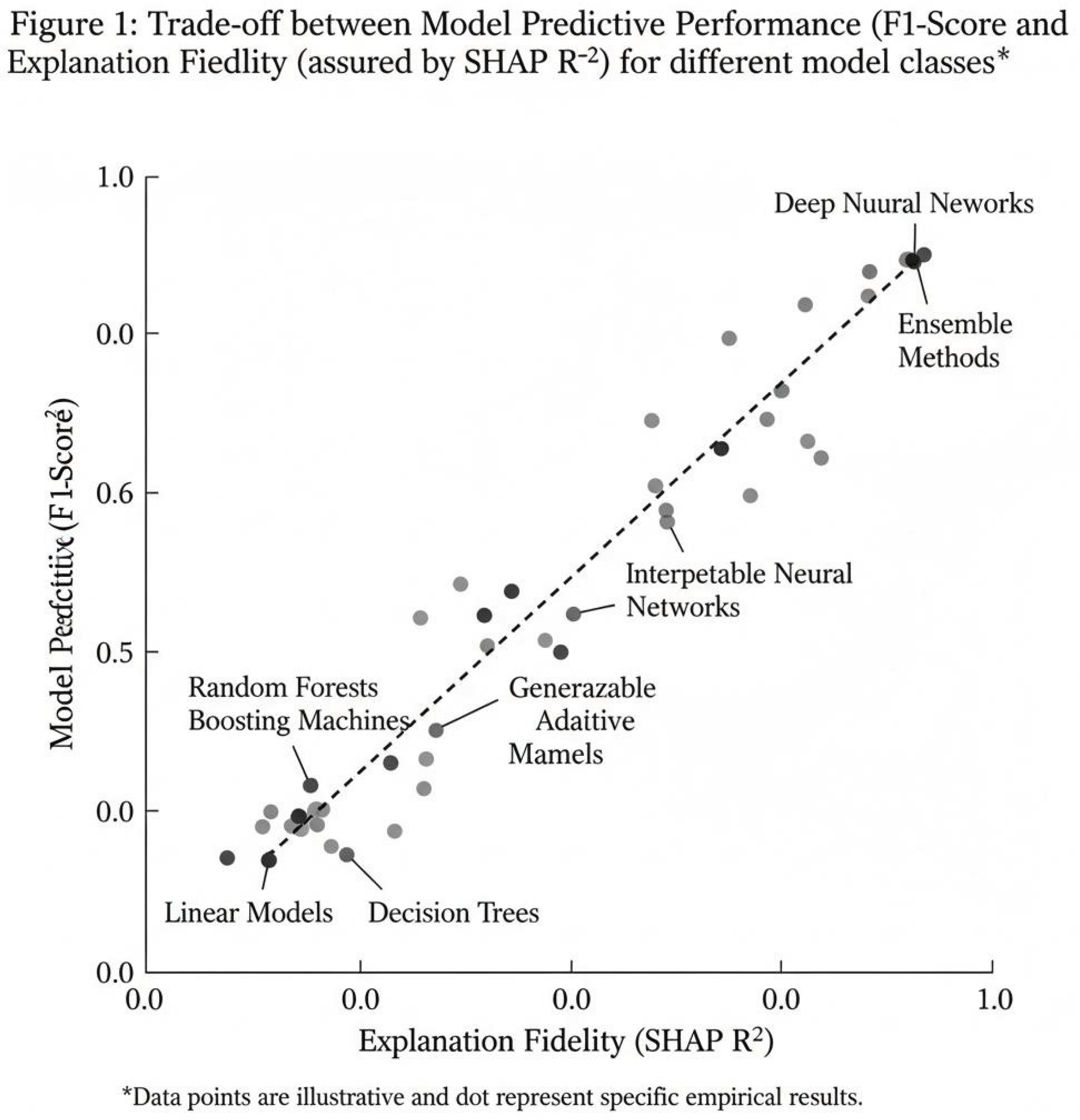

  - Explanation Fidelity: For LIME and the surrogate model, we measured how well the explanation model approximated the black-box model's predictions (using R² for regression tasks and accuracy for classification tasks on the explained instances).

  - Stability: Measured by applying LIME multiple times to the same instance and calculating the Jaccard similarity between the top-k features identified.

  - Computational Overhead: The time added to the inference pipeline to generate an explanation was measured.

- Human-Trust Simulation: In a simplified survey, five expert security analysts were shown model predictions for 10 incident scenarios, both with and without SHAP-generated feature attribution plots, and rated their confidence in the system's decision on a Likert scale (1-5).
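The explanation-fidelity metric in the list above compares an explanation model against the black-box model's own outputs, not against ground-truth labels. A minimal sketch of both variants (agreement accuracy for class labels, R² for continuous scores) might look like this; the function names and the five sample values are hypothetical and are not taken from the study.

```python
def agreement_accuracy(black_box_labels, surrogate_labels):
    """Fraction of instances where the surrogate predicts the black box's class."""
    if len(black_box_labels) != len(surrogate_labels):
        raise ValueError("prediction lists must have the same length")
    matches = sum(b == s for b, s in zip(black_box_labels, surrogate_labels))
    return matches / len(black_box_labels)


def r_squared(black_box_scores, surrogate_scores):
    """Coefficient of determination of surrogate scores against black-box scores."""
    mean_bb = sum(black_box_scores) / len(black_box_scores)
    ss_res = sum((b - s) ** 2 for b, s in zip(black_box_scores, surrogate_scores))
    ss_tot = sum((b - mean_bb) ** 2 for b in black_box_scores)
    if ss_tot == 0:
        raise ValueError("black-box scores are constant; R^2 is undefined")
    return 1.0 - ss_res / ss_tot


# Hypothetical outputs on five explained instances.
bb_labels = [1, 0, 1, 1, 0]
sg_labels = [1, 0, 0, 1, 0]
bb_scores = [0.9, 0.2, 0.6, 0.8, 0.1]
sg_scores = [0.8, 0.3, 0.4, 0.9, 0.2]

label_fidelity = agreement_accuracy(bb_labels, sg_labels)
score_fidelity = r_squared(bb_scores, sg_scores)
print(label_fidelity, round(score_fidelity, 4))
```

Because the reference is the black box rather than the true label, a surrogate can score high fidelity even where the black box itself is wrong.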

3.5. Ethical Considerations

4. Results

4.1. Model Performance Baseline

| Model | Accuracy | F1-Score (Weighted) | Avg. Inference Time (ms) |
|---|---|---|---|
| Decision Tree (DT) | 96.2% | 0.959 | 0.8 |
| Random Forest (RF) | 99.1% | 0.990 | 4.2 |
| Deep Neural Net (DNN) | 98.7% | 0.987 | 3.1 (GPU) |
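For readers unfamiliar with the weighted F1 column, the sketch below shows one common definition: the per-class F1 scores averaged with weights proportional to each class's support in the true labels. Whether the study used exactly this definition is an assumption, and the ten toy labels are invented for illustration.

```python
from collections import Counter


def weighted_f1(y_true, y_pred):
    """Support-weighted mean of per-class F1 scores."""
    support = Counter(y_true)
    total = len(y_true)
    score = 0.0
    for cls, n_cls in support.items():
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = n_cls - tp
        # F1 = 2TP / (2TP + FP + FN); the denominator is positive because n_cls > 0.
        f1 = 2 * tp / (2 * tp + fp + fn)
        score += (n_cls / total) * f1
    return score


# Toy example: 6 benign and 4 attack flows, with one error in each class.
y_true = ["benign"] * 6 + ["attack"] * 4
y_pred = ["benign"] * 5 + ["attack"] + ["attack"] * 3 + ["benign"]
toy_f1 = weighted_f1(y_true, y_pred)
print(round(toy_f1, 3))
```

With imbalanced traffic classes, this weighting lets the majority class dominate the score, so a high weighted F1 does not by itself show good detection of rare attacks.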

4.2. XAI Framework Performance

| XAI Technique | Avg. Explanation Fidelity (R²) | Avg. Stability (Jaccard@5) | Avg. Overhead per Explanation (ms) |
|---|---|---|---|
| SHAP | 0.89 | 0.92 | 320 |
| LIME | 0.76 | 0.71 | 45 |
| Surrogate Model | 0.82 | 1.00 | 5 (after training) |
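The Jaccard@5 stability column can be read as the overlap between the top-5 feature sets produced by repeated explanation runs on the same instance. The sketch below is one way to compute it, assuming the score is the mean pairwise Jaccard similarity across runs; the source does not state how multiple runs were aggregated, and the feature names and attribution values are hypothetical. The example uses k=2 to stay short.

```python
from itertools import combinations


def top_k_features(attributions, k=5):
    """Names of the k features with the largest absolute attribution."""
    ranked = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
    return set(ranked[:k])


def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def stability_at_k(runs, k=5):
    """Mean pairwise Jaccard similarity of top-k feature sets across runs."""
    if len(runs) < 2:
        raise ValueError("need at least two explanation runs")
    top_sets = [top_k_features(run, k) for run in runs]
    pairs = list(combinations(top_sets, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Three hypothetical explanation runs for the same instance.
runs = [
    {"flow_duration": 0.40, "fwd_pkt_len": -0.30, "syn_flags": 0.05},
    {"flow_duration": 0.35, "fwd_pkt_len": 0.02, "syn_flags": -0.25},
    {"flow_duration": 0.42, "fwd_pkt_len": -0.28, "syn_flags": 0.01},
]
toy_stability = stability_at_k(runs, k=2)
print(round(toy_stability, 4))
```

A deterministic explainer returns the same top-k set on every run and therefore scores 1.0 under this definition, which is consistent with the surrogate model's entry in the table.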

4.3. Impact on Human Trust

5. Discussion

5.1. Interpretation of Results

5.2. Comparison with Existing Literature

5.3. Implications

5.4. Limitations

5.5. Directions for Future Research

6. Conclusions


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.