Submitted:

22 January 2026

Posted:

26 January 2026

Abstract

Keywords:

1. Introduction

1.1. Contributions

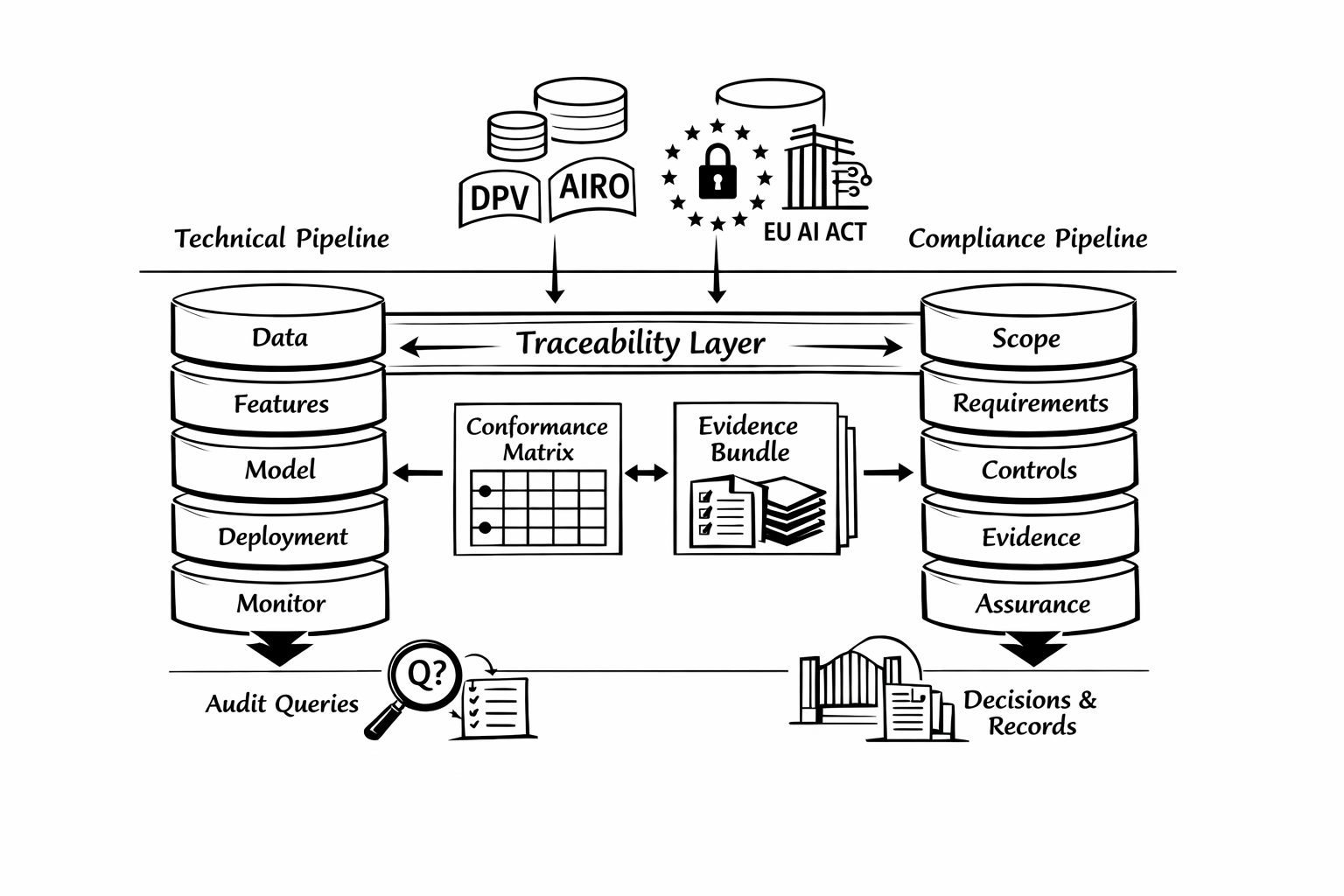

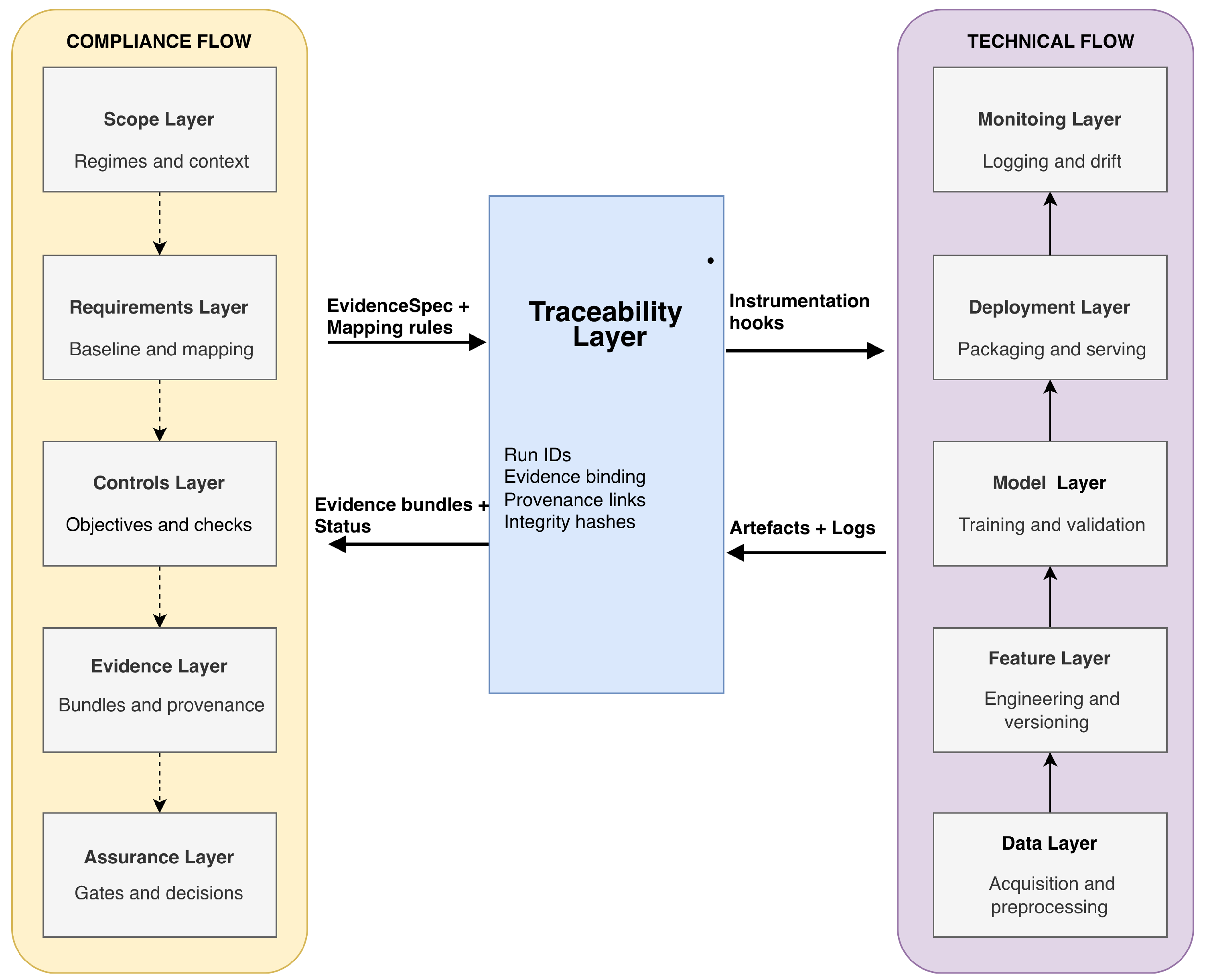

- C1 — Canonical dual-flow framing: We formalise a dual-flow architecture that distinguishes the upstream technical pipeline from a downstream compliance pipeline engineered for operational audit readiness.

- C2 — Ontology-grounded vocabulary: We specify an ontology-aligned interoperability layer, leveraging DPV and AIRO, to support consistent terminology across conformance matrices and evidence records.

- C3 — Compliance-matrix metamodel: We define a metamodel capturing requirements, controls, artefacts, and evidence items with explicit trace links, stable identifiers, and validation constraints.

- C4 — Case-by-case instantiation workflow: We provide an instantiation workflow that produces context-specific conformance matrix instances and supports multi-regime alignment, with a primary instantiation for GDPR and the EU AI Act.

- C5 — Verification and quality checks: We propose competency questions and consistency checks that assess the completeness and traceability of a given matrix instance and its evidence bindings.

1.2. Paper Organisation

2. Canonical Dual-Flow Architecture and Problem Framing

2.1. Dual-Flow, Layered Architecture

- Scope: define the system boundary, intended use, relevant data flows, and the set of applicable regimes and organisational policies (primary instantiation: GDPR and EU AI Act).

- Requirements: derive and record the applicable normative requirements under the defined scope, including applicability conditions and scoping assumptions.

- Controls: translate requirements into verifiable control objectives and implementable controls with stable identifiers, ownership, and verification intent.

- Evidence: specify the minimal evidence required for each control (required artefact types, checks, and acceptance criteria) and bind evidence expectations to lifecycle touchpoints in the technical flow.

- Assurance: operationalise verification through gates and decision records, producing pass/fail/incomplete statuses per control and conditioning lifecycle progression on evidence sufficiency.
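To make the assurance layer concrete, the following is a minimal Python sketch of how per-control statuses could be derived and used to condition lifecycle progression. It is an illustration under stated assumptions, not the paper's implementation: the function names, the evidence item names, and the rule that a failed check takes precedence over missing evidence are all hypothetical.

```python
from enum import Enum


class Status(Enum):
    PASS = "pass"
    FAIL = "fail"
    INCOMPLETE = "incomplete"


def control_status(required: set[str], results: dict[str, bool]) -> Status:
    """Derive one control's status from its evidence checks.

    required: names of the evidence items the control expects (hypothetical).
    results:  check outcome for each evidence item that was actually produced.
    """
    if any(results.get(item) is False for item in required):
        return Status.FAIL        # assumption: a failed check outranks missing evidence
    if not required.issubset(results):
        return Status.INCOMPLETE  # nothing failed, but some evidence is missing
    return Status.PASS


def gate_allows_progression(statuses: dict[str, Status]) -> bool:
    """A gate lets the lifecycle proceed only if every control passed."""
    return all(status is Status.PASS for status in statuses.values())


statuses = {
    "CTRL-GDPR-DATA-001": control_status(
        {"processing_register", "approval_record"},
        {"processing_register": True, "approval_record": True},
    ),
    "CTRL-AIA-RISK-001": control_status(
        {"risk_register", "mitigation_mapping"},
        {"risk_register": True},  # mitigation mapping not yet produced
    ),
}
```

In this example the second control is incomplete, so `gate_allows_progression(statuses)` would block promotion until the missing evidence is supplied.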

3. Background and Related Work

3.1. Governance-by-Design and Assurance Across ML Lifecycles

3.2. Traceability, Provenance, and Conformance Matrices

3.3. Ontologies for Privacy, Risk, and Compliance Interoperability

4. Normative Scope and Requirements

4.1. Scope and Assumptions

4.2. Derived Requirements

4.2.1. Functional Requirements

- FR1. Norm-to-control formalisation. Represent normative obligations as explicit control objectives with unambiguous identifiers, scope conditions, and accountable roles [4].

- FR2. Control-to-artefact mapping. Bind each control objective to concrete pipeline artefacts and evidence items (what must exist, where it is produced, and how it is verified), enabling deterministic trace paths from requirements to artefacts.

- FR3. Evidence specification and gating. For each control, define evidence specifications and gate conditions (pass/fail criteria, decision records, and corrective actions) so that conformance is assessed continuously rather than reconstructed retroactively [14].

- FR4. Matrix instantiation per context. Support case-by-case instantiation that yields different conformance matrix instances for different pipelines, risk contexts, and applicable regimes (multi-regime by design).

- FR5. Queryable audit layer. Enable auditors and assurance stakeholders to query trace links and evidence bundles using stable identifiers (e.g., by requirement, control, run, or lifecycle stage), including support for constrained-access assessment settings [15].

- FR6. Nonconformity handling. Represent nonconformities, exceptions, and remediation actions as first-class entities linked to impacted controls and artefacts, supporting an auditable corrective loop [5].
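As an illustration of nonconformity handling as a first-class concern, the sketch below models nonconformities and corrective actions as linked records and indexes the open ones by affected control. The class and field names are hypothetical, and the closure rule (a nonconformity stays open until every corrective action has been re-verified) is an assumption made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class CorrectiveAction:
    ca_id: str
    description: str
    verified: bool = False  # set once re-verification succeeds


@dataclass
class Nonconformity:
    nc_id: str
    control_ids: list[str]   # impacted controls
    artefact_ids: list[str]  # impacted artefacts
    actions: list[CorrectiveAction] = field(default_factory=list)

    def is_open(self) -> bool:
        # Assumption: no action yet, or any unverified action, keeps the NC open.
        return not self.actions or any(not a.verified for a in self.actions)


def open_nonconformities_by_control(ncs: list[Nonconformity]) -> dict[str, list[str]]:
    """Index open nonconformities by the controls they affect."""
    index: dict[str, list[str]] = {}
    for nc in ncs:
        if nc.is_open():
            for control_id in nc.control_ids:
                index.setdefault(control_id, []).append(nc.nc_id)
    return index


ncs = [
    Nonconformity("NC-RUN7-001", ["CTRL-AIA-RISK-001"], ["AT-RISK-001"],
                  [CorrectiveAction("CA-RUN7-001", "Update risk register", verified=True)]),
    Nonconformity("NC-RUN7-002", ["CTRL-AIA-RISK-001", "CTRL-GDPR-DATA-001"], ["AT-DATA-001"],
                  [CorrectiveAction("CA-RUN7-002", "Re-run lineage capture")]),
    Nonconformity("NC-RUN7-003", ["CTRL-GDPR-DATA-001"], []),
]
open_index = open_nonconformities_by_control(ncs)
```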

4.2.2. Non-Functional Requirements

- NFR1. Interoperability. The vocabulary, mappings, and evidence descriptions should be exportable in interoperable forms, including provenance-aligned representations [8].

- NFR2. Extensibility. The metamodel and mapping rules should admit new regimes and organisational controls without redesigning the core structure (multi-regime by design).

- NFR3

- NFR4

- NFR5. Reproducibility. Re-running a given pipeline stage should reproduce the declared trace structure (even when outcomes change), reflecting established expectations in software engineering for machine learning [5].

5. Ontology Vocabulary, Matrix Metamodel, and Instantiation

5.1. Ontology-Aligned Vocabulary for Interoperability

Minimal Profile and Reuse-First Strategy

DPV and AIRO as Complementary Anchors

5.2. Conformance-Matrix Metamodel and Mapping Rules

Core Entities

- Control Objective (CO): a verifiable objective that operationalises one or more NR items in a technical setting, aligned with assurance-orientated practice [3].

- Control (C): an implementable control specification (policy, process, technical mechanism, or check) that realises a CO.

- Evidence Specification (ES): a definition of the minimal evidence required to assess a control (evidence type, provenance expectations, responsible role, retention, and acceptable variance).

- Pipeline Artefact Type (AT) and Evidence Item (EI): respectively, a class of artefacts produced by the pipeline (e.g., dataset lineage record; model card; risk register entry) and a concrete evidence item generated for a given run or review cycle.

- Gate (G) and Decision Record (DR): an explicit checkpoint that evaluates one or more ES items and records pass/fail decisions with rationale and accountability metadata.
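The core entities listed above could be represented as typed records along the following lines. This is a hedged sketch: the field names, the reduction of each entity to a few attributes, and the `decide` helper are illustrative assumptions rather than the metamodel's normative definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlObjective:
    co_id: str
    statement: str
    requirement_ids: tuple[str, ...]  # NR items this objective operationalises


@dataclass(frozen=True)
class Control:
    control_id: str
    objective_id: str  # the CO this control realises
    kind: str          # policy, process, technical mechanism, or check


@dataclass(frozen=True)
class EvidenceSpec:
    es_id: str
    control_id: str
    artefact_type: str  # AT expected as evidence
    responsible_role: str


@dataclass(frozen=True)
class EvidenceItem:
    ei_id: str
    es_id: str
    run_id: str
    check_passed: bool


@dataclass(frozen=True)
class DecisionRecord:
    dr_id: str
    gate_id: str
    run_id: str
    passed: bool
    rationale: str


def decide(gate_id, run_id, specs, items) -> DecisionRecord:
    """Evaluate a gate: every ES needs a passing EI from the given run."""
    satisfied = {i.es_id for i in items if i.run_id == run_id and i.check_passed}
    missing = sorted(s.es_id for s in specs if s.es_id not in satisfied)
    if missing:
        rationale = "unsatisfied: " + ", ".join(missing)
    else:
        rationale = "all evidence specifications satisfied"
    return DecisionRecord(f"DR-{run_id}-001", gate_id, run_id, not missing, rationale)


specs = [
    EvidenceSpec("EVD-DATA-001", "CTRL-GDPR-DATA-001", "AT-DATA-001", "data steward"),
    EvidenceSpec("EVD-DATA-002", "CTRL-GDPR-DATA-001", "AT-DATA-002", "data steward"),
]
items = [EvidenceItem("EI-RUN7-001", "EVD-DATA-001", "RUN7", True)]
record = decide("GATE-DATA-001", "RUN7", specs, items)
```

Freezing the records is a design choice for the sketch: identifiers and links cannot be mutated after creation, which keeps a decision record consistent with the evidence it was computed from.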

Trace Relations and Completeness Constraints

Deterministic Mapping Rules

- which pipeline stage(s) a control applies to (e.g., data acquisition, training, evaluation, deployment, monitoring);

- which artefact types are required to evidence the control at that stage (AT);

- which minimal evidence items must be produced (EI), with provenance expectations;

- which gate(s) evaluate the evidence, and what decision records must be produced (DR).
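A mapping rule answering the four questions above can be sketched as a small declarative record that is expanded into run-scoped evidence requirements. The rule contents, identifiers, and the `evidence_requirements` function are hypothetical; determinism here simply means that rules are applied in declaration order, so identical inputs yield identical rows.

```python
from typing import NamedTuple


class MappingRule(NamedTuple):
    control_id: str
    stages: tuple[str, ...]          # lifecycle stages the control applies to
    artefact_types: tuple[str, ...]  # AT required to evidence the control
    gates: tuple[str, ...]           # gates that evaluate the evidence


# Hypothetical rules for illustration only.
RULES = (
    MappingRule("CTRL-GDPR-DATA-001", ("data acquisition",),
                ("AT-DATA-001",), ("GATE-DATA-001",)),
    MappingRule("CTRL-AIA-RISK-001", ("training", "evaluation"),
                ("AT-RISK-001", "AT-RISK-002"), ("GATE-MODEL-001",)),
)


def evidence_requirements(stage: str, run_id: str, rules=RULES) -> list[dict]:
    """Expand the rules into run-scoped evidence requirements for one stage."""
    rows = []
    for rule in rules:
        if stage in rule.stages:
            for artefact_type in rule.artefact_types:
                rows.append({
                    "run_id": run_id,
                    "control_id": rule.control_id,
                    "artefact_type": artefact_type,
                    "gates": list(rule.gates),
                })
    return rows


training_rows = evidence_requirements("training", "RUN7")
```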

5.3. Case-by-Case Instantiation Workflow

Step 1: Scope the Context and Select Regimes

Step 2: Derive Atomic Normative Requirements

Step 3: Define Control Objectives and Controls

Step 4: Specify Evidence and Gates

Step 5: Bind Controls to Pipeline Artefacts and Generate the Matrix Instance

Conceptual Validation

6. Evidence Model and Audit Queries

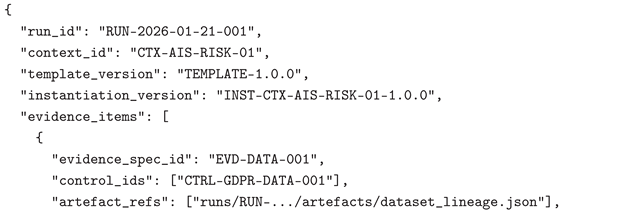

6.1. Evidence Bundles: Structure and Minimum Fields

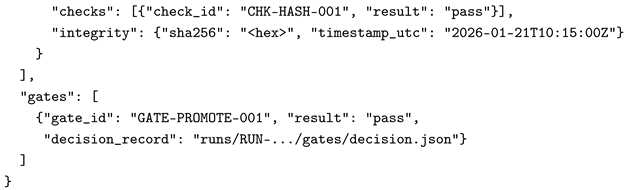

6.2. Run-Level Traceability and Integrity

6.3. Audit Queries and Competency Questions

- Coverage and completeness: Which controls mapped from GDPR and the EU AI Act are currently uncovered or have missing/failed evidence for the latest approved run?

- Gate compliance: For a given deployment, which gates were passed, with which evidence bundles, and under which acceptance criteria?

- Lineage and change impact: For a given requirement, which artefacts and runs support it, and what changed between two approved runs?

- Nonconformities: Which nonconformities are open, which controls do they affect, and which corrective actions are pending or verified?

- Risk-to-control trace: For a given AI risk concept (AIRO-aligned), which mitigation controls are instantiated and what evidence exists for their implementation and verification?
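The first query above (coverage and completeness) can be illustrated with a short Python sketch over in-memory records. The record layout (`run_id`, `approved`, `finished_at`, `check_result`) is an assumption for the example, and timestamps are assumed to be ISO 8601 strings in a single time zone so that they sort correctly as text.

```python
def coverage_gaps(controls, evidence, runs):
    """Return {control_id: reason} for controls that lack passing evidence
    in the latest approved run."""
    approved = [r for r in runs if r["approved"]]
    if not approved:
        return {control_id: "no approved run" for control_id in controls}
    latest = max(approved, key=lambda r: r["finished_at"])["run_id"]
    gaps = {}
    for control_id in controls:
        items = [e for e in evidence
                 if e["run_id"] == latest and e["control_id"] == control_id]
        if not items:
            gaps[control_id] = "missing evidence"
        elif not all(e["check_result"] == "pass" for e in items):
            gaps[control_id] = "failed evidence"
    return gaps


controls = ["CTRL-GDPR-DATA-001", "CTRL-AIA-RISK-001", "CTRL-AIA-MON-001"]
runs = [
    {"run_id": "RUN1", "approved": True, "finished_at": "2026-01-10T09:00:00Z"},
    {"run_id": "RUN2", "approved": True, "finished_at": "2026-01-20T09:00:00Z"},
    {"run_id": "RUN3", "approved": False, "finished_at": "2026-01-21T09:00:00Z"},
]
evidence = [
    {"run_id": "RUN2", "control_id": "CTRL-GDPR-DATA-001", "check_result": "pass"},
    {"run_id": "RUN2", "control_id": "CTRL-AIA-RISK-001", "check_result": "fail"},
    {"run_id": "RUN1", "control_id": "CTRL-AIA-MON-001", "check_result": "pass"},
]
gaps = coverage_gaps(controls, evidence, runs)
```

Here the monitoring control has evidence only in an older run, so it is reported as missing for the latest approved run rather than silently inheriting stale evidence.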

7. Implementation

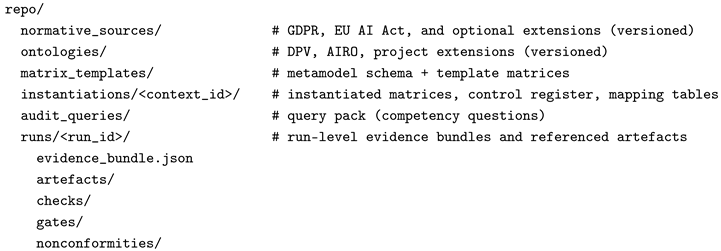

7.1. Representations and Repository Structure

- Ontologies and vocabularies: OWL/RDF serialisations (e.g., Turtle) for the adopted vocabularies (DPV, AIRO) and any project-specific extensions, each with an explicit version identifier.

- Conformance-matrix template and instantiations: (a) a human-auditable tabular view (CSV) and (b) a typed machine-readable view (YAML/JSON). The tabular view supports review and sampling; the structured view enables unambiguous typing and automated validation.

- Evidence bundles: a per-run bundle (JSON/YAML) capturing referenced artefact instances (paths/URIs), verification results, and integrity metadata (hashes, timestamps, tool versions).

- Audit query pack: a simple specification (YAML/JSON) that encodes competency questions, parameters (e.g., control ID, run ID, time window), and retrieval scopes.
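As a hedged illustration of the audit query pack, the sketch below encodes two competency questions as a JSON specification and checks that a caller supplies exactly the declared parameters before a query is run. The key names (`queries`, `parameters`, `scope`) and the `bind_query` helper are hypothetical; JSON is used here only because Python's standard library parses it without extra dependencies.

```python
import json

# Hypothetical query pack; the schema is an assumption made for this example.
QUERY_PACK = json.loads("""
{
  "queries": [
    {"id": "CQ3", "title": "Run-level status",
     "parameters": ["run_id"], "scope": "evidence_bundles"},
    {"id": "CQ5", "title": "Change impact",
     "parameters": ["artefact_id"], "scope": "trace_links"}
  ]
}
""")


def bind_query(pack, query_id, **params):
    """Look up a query by ID and require exactly its declared parameters."""
    for query in pack["queries"]:
        if query["id"] == query_id:
            expected = set(query["parameters"])
            if set(params) != expected:
                raise ValueError(f"{query_id} expects parameters {sorted(expected)}")
            return {"id": query_id, "scope": query["scope"], "params": params}
    raise KeyError(query_id)


bound = bind_query(QUERY_PACK, "CQ3", run_id="RUN7")
```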

7.2. Evidence Bundle Schema and Identifiers

7.3. Matrix Templates, Instantiation, and Integration Hooks

8. Evaluation

8.1. Evaluation Objectives and Protocol

- O1 (Metamodel sufficiency): the metamodel includes the entities and relations required to represent a complete norm-to-evidence trace chain (requirement → control objective → control → evidence specification → evidence items), including gates, decision records, and nonconformity/corrective-action handling.

1. Structural inspection: verify that the metamodel provides explicit entities for normative source, requirement, control, evidence specification, check, gate, run, decision record, nonconformity, and corrective action, enabling end-to-end traceability.

2. Consistency checks: validate that the vocabulary layer and the metamodel do not introduce contradictory typing or relation constraints, and that identifiers are unambiguous and stable (e.g., one evidence specification cannot satisfy incompatible control expectations without an explicit scope rationale).

3. Audit exercises: execute representative audit queries over an illustrative instantiation (AI risk pipeline) to retrieve control coverage, evidence completeness, verification outcomes, and gate decisions. This targets audit demands that are not reliably supported by black-box-only access [15].

4. Change-impact exercise: apply representative changes (dataset update, feature pipeline change, model retraining) and verify that impacted controls and evidence specifications can be identified and re-verified, addressing trace erosion and hidden technical debt commonly observed in machine-learning systems [5,6].
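The change-impact exercise can be read as a reachability query over trace links. The following minimal sketch assumes three hypothetical link tables (artefact derivation, artefact-to-evidence-specification bindings, and evidence-specification-to-control links) and walks downstream from the changed artefact; the artefact names and identifiers are illustrative only.

```python
from collections import deque

# Hypothetical "derived from" edges: upstream artefact -> downstream artefacts.
DERIVES = {
    "dataset": ["features"],
    "features": ["model"],
    "model": [],
}
# Hypothetical bindings: artefact -> evidence specifications that reference it.
EVIDENCED_BY = {
    "dataset": ["EVD-DATA-001"],
    "features": ["EVD-DATA-002"],
    "model": ["EVD-RISK-001"],
}
# Evidence specification -> control it supports.
SUPPORTS = {
    "EVD-DATA-001": "CTRL-GDPR-DATA-001",
    "EVD-DATA-002": "CTRL-GDPR-DATA-002",
    "EVD-RISK-001": "CTRL-AIA-RISK-001",
}


def impacted(changed: str):
    """Breadth-first walk downstream of the changed artefact.

    Returns the evidence specifications and controls that need re-verification.
    """
    seen, queue = {changed}, deque([changed])
    while queue:
        for child in DERIVES.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    specs = sorted(es for artefact in seen for es in EVIDENCED_BY.get(artefact, []))
    controls = sorted({SUPPORTS[es] for es in specs})
    return specs, controls


specs_to_recheck, controls_to_recheck = impacted("features")
```

A feature pipeline change therefore flags both the feature-level and the model-level evidence, while the dataset-level evidence is left untouched.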

8.2. Competency Questions and Audit Tasks

1. CQ1 (Scope and rationale): Which GDPR/EU AI Act-derived controls are in scope for a given system context, which are out of scope, and what scoping assumptions justify this decision?

2. CQ2 (Evidence specification): For a given control, what evidence is minimally required, which artefact types can satisfy it, and which checks must pass?

3. CQ3 (Run-level status): For a selected run, which controls are pass/fail/incomplete, which evidence items support each status, and which gate decisions followed?

4. CQ4 (Nonconformities and corrective actions): Which nonconformities are open, which controls do they affect, which corrective actions were prescribed, and did re-verification close them?

5. CQ5 (Change impact): Given a change in data, features, or model version, which controls and evidence specifications must be re-verified before promotion can proceed?

8.3. Threats to Validity and Limitations

9. Discussion

9.1. Operational Audit Readiness as an Engineering Property

9.2. Interoperability and Multi-Regime Generality

9.3. Limitations and Future Work

10. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. European Parliament and Council of the European Union. Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data (General Data Protection Regulation). Official Journal of the European Union, L 119, 2016.

2. European Parliament and Council of the European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union, 2024.

3. National Institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). Technical Report NIST AI 100-1, U.S. Department of Commerce, 2023.

4. Raji, I.D.; Smart, A.; White, R.N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI Accountability Gap: Defining an End-to-End Framework for Internal Algorithmic Auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAccT ’20). Association for Computing Machinery, 2020, pp. 33–44.

5. Amershi, S.; Begel, A.; Bird, C.; DeLine, R.; Gall, H.; Kamar, E.; Nagappan, N.; Nushi, B.; Zimmermann, T. Software Engineering for Machine Learning: A Case Study. In Proceedings of the 2019 IEEE/ACM 41st International Conference on Software Engineering: Software Engineering in Practice (ICSE-SEIP), 2019, pp. 291–300.

6. Sculley, D.; Holt, G.; Golovin, D.; Davydov, E.; Phillips, T.; Ebner, D.; Chaudhary, V.; Young, M.; Crespo, J.F.; Dennison, D. Hidden Technical Debt in Machine Learning Systems. In Advances in Neural Information Processing Systems, 2015, Vol. 28, pp. 2503–2511.

7. Winkler, S.; von Pilgrim, J. A Survey of Traceability in Requirements Engineering and Model-Driven Development. Software and Systems Modeling 2010, 9, 529–565.

8. Lebo, T.; Sahoo, S.; McGuinness, D. PROV-O: The PROV Ontology. W3C Recommendation, 2013.

9. W3C Data Privacy Vocabularies and Controls Community Group (DPVCG). Data Privacy Vocabulary (DPV), Version 2.0. Specification, 2025.

10. Golpayegani, D.; Pandit, H.J.; Lewis, D. AIRO: An Ontology for Representing AI Risks Based on the Proposed EU AI Act and ISO Risk Management Standards. In Proceedings of the 18th International Conference on Semantic Systems (SEMANTiCS 2022). IOS Press, 2022, pp. 101–108.

11. Gotel, O.; Finkelstein, A. An Analysis of the Requirements Traceability Problem. In Proceedings of the First International Conference on Requirements Engineering. IEEE Computer Society, 1994, pp. 94–101.

12. AI Risk Ontology (AIRO) Community. AIRO: AI Risk Ontology. Ontology specification, 2025.

13. Hartmann, D. Addressing the regulatory gap: moving towards an EU AI audit framework. AI and Ethics 2024.

14. Burr, C.; Leslie, D. Ethical assurance: a practical approach to the responsible design, development, and deployment of data-driven technologies. AI and Ethics 2023, 3, 73–98.

15. Casper, S.; Ezell, C.; Siegmann, C.; Kolt, N.; Curtis, T.L.; Bucknall, B.; Haupt, A.A.; Wei, K.; Scheurer, J.; Hobbhahn, M.; et al. Black-Box Access is Insufficient for Rigorous AI Audits. In Proceedings of the 2024 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’24). Association for Computing Machinery, 2024, pp. 2254–2272.

16. Zarour, M.; Alzabut, H.; Al-Sarayreh, K.T. MLOps Best Practices, Challenges and Maturity Models: A Systematic Literature Review. Information and Software Technology 2025, 183, 107733.

17. Pandit, H.J.; Polleres, A.; Bos, B.; Brennan, R.; Bruegger, B.; Ekaputra, F.J.; Fernández, J.D.; Hamed, R.G.; Kiesling, E.; Lizar, M.; et al. Creating a Vocabulary for Data Privacy: The First-Year Report of Data Privacy Vocabularies and Controls Community Group (DPVCG). In On the Move to Meaningful Internet Systems: OTM 2019 Conferences. Springer, 2019, Vol. 11877, Lecture Notes in Computer Science, pp. 714–730.

18. Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I.D.; Gebru, T. Model Cards for Model Reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency (FAT* ’19). ACM, 2019, pp. 220–229.

19. Gebru, T.; Morgenstern, J.; Vecchione, B.; Vaughan, J.W.; Wallach, H.; Daumé III, H.; Crawford, K. Datasheets for Datasets. Communications of the ACM 2021, 64, 86–92.

| Observed problem | Why the technical pipeline is insufficient | Audit/assurance consequence | Response in this paper |
|---|---|---|---|
| Evidence is dispersed across tools and teams | Normative requirements are not encoded as operational controls with stable trace links | High reconstruction cost; low verifiability | Dual-flow architecture + conformance-matrix metamodel linking requirements–controls–artefacts–evidence |
| Controls are documented but not operationalised | No explicit gates, decision records, or acceptance criteria coupled to lifecycle events | Retroactive compliance; weak assurance claims | Compliance flow with gates, decision records, and a corrective-action loop (assurance layer) |
| Terminology varies across stakeholders and artefacts | Semantic heterogeneity makes mappings brittle and inconsistent | Inconsistent matrices; poor interoperability | Ontology-aligned vocabulary (DPV, AIRO) to stabilise concepts across instantiations |
| Continuous change (data/model/deployment) invalidates prior evidence | Evidence is not run-scoped, versioned, and integrity-anchored | Stale evidence; audit findings hard to reproduce | Run-level evidence bundles, stable identifiers, integrity metadata, and traceability checks |

| Normative anchor (high-level) | Control objective (CO) | Control (C) | Evidence specification (ES) | Gate |
|---|---|---|---|---|
| GDPR (lawful processing / accountability) | Demonstrate lawful basis and processing scope for in-scope data | Maintain processing register + approval record | Processing register entry; approval decision record; versioned scope assumptions | Data approval |
| GDPR (data minimisation / purpose limitation) | Ensure data fields and transformations are justified and documented | Dataset schema control + preprocessing lineage capture | Dataset schema; preprocessing lineage artefact; hash/timestamp; reviewer sign-off | Data release |
| EU AI Act (risk management for high-risk AI) | Maintain a risk register and mitigation trace for the system context | Risk assessment control + mitigation mapping | Risk register entry; mitigation-to-control mapping; review outcome | Model promotion |
| EU AI Act (monitoring / post-market duties) | Detect relevant drift/incidents and trigger corrective actions | Monitoring control + incident workflow control | Monitoring report; incident ticket; corrective-action record; re-verification result | Deployment/rollback |

| Type | Role in the model | Suggested ID pattern | Key trace relations |
|---|---|---|---|
| NR | Atomic normative requirement (regime-specific, scoped) | `NR-<REG>-<NNN>` | NR → CO |
| CO | Verifiable control objective operationalising one/more NR items | `CO-<DOM>-<NNN>` | CO → C; CO → NR |
| C | Implementable control specification (policy/process/technical check) | `CTRL-<REG>-<DOM>-<NNN>` | C → ES; C → AT/EI |
| ES | Evidence specification (minimum evidence, checks, acceptance criteria) | `EVD-<DOM>-<NNN>` | ES → EI; ES → G |
| AT / EI | Artefact type / concrete evidence item (run-scoped instance) | `AT-<DOM>-<NNN>` / `EI-<RUN>-<NNN>` | EI @ RUN → DR; EI ↔ provenance links |
| G / DR | Gate and decision record (pass/fail + rationale + accountability) | `GATE-<STAGE>-<NNN>` / `DR-<RUN>-<NNN>` | G → DR; DR → (C, ES, RUN) |
| NC / CA | Nonconformity / corrective action (auditable remediation loop) | `NC-<RUN>-<NNN>` / `CA-<RUN>-<NNN>` | NC → CA; CA → (C, EI, DR) |
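The suggested ID patterns in the table above lend themselves to mechanical validation. The sketch below is one possible reading, with two assumptions that the table does not state: each placeholder segment (such as the regime, domain, stage, or run) is taken to be upper-case alphanumeric without hyphens, and the sequence number is taken to be exactly three digits.

```python
import re

SEG = r"[A-Z0-9]+"  # assumption: placeholder segments contain no hyphens
NNN = r"\d{3}"      # assumption: three-digit sequence number

ID_PATTERNS = {
    kind: re.compile(pattern)
    for kind, pattern in {
        "NR": f"NR-{SEG}-{NNN}",
        "CO": f"CO-{SEG}-{NNN}",
        "C": f"CTRL-{SEG}-{SEG}-{NNN}",
        "ES": f"EVD-{SEG}-{NNN}",
        "AT": f"AT-{SEG}-{NNN}",
        "EI": f"EI-{SEG}-{NNN}",
        "G": f"GATE-{SEG}-{NNN}",
        "DR": f"DR-{SEG}-{NNN}",
        "NC": f"NC-{SEG}-{NNN}",
        "CA": f"CA-{SEG}-{NNN}",
    }.items()
}


def entity_type(identifier: str):
    """Return the entity type whose pattern matches the whole identifier, or None."""
    for kind, pattern in ID_PATTERNS.items():
        if pattern.fullmatch(identifier):
            return kind
    return None
```

Because every pattern starts with a distinct prefix, an identifier can match at most one entity type, which keeps typing unambiguous when identifiers appear in trace links.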

| Step | Inputs | Outputs | Minimal quality checks |
|---|---|---|---|
| 1 | Context scope (system boundary, data flows, intended use) + selected regimes | Scope specification + assumptions baseline | Scope completeness; explicit assumptions recorded; regime versions fixed |
| 2 | Regime texts + scope specification | Atomic NR set (scoped requirement statements) | Atomicity; non-duplication; applicability conditions explicit |
| 3 | NR set + organisational policies/practices | CO set + control register (C) | Verifiability of CO/C; responsibility and frequency assigned |
| 4 | Controls (C) + lifecycle touchpoints | Evidence specifications (ES) + gate definitions (G) | Evidence sufficiency; acceptance criteria defined; gate coverage over lifecycle events |
| 5 | (NR, CO, C, ES, G) + mapping rules | Matrix instance + run-scoped evidence requirements | Trace completeness (NR→...→EI/DR); stable IDs; consistency constraints |
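The trace-completeness check named for step 5 can be sketched as a walk along the requirement-to-gate chain that reports every missing or dangling link. The dictionary layout of the matrix instance is a hypothetical representation chosen for brevity, and the check covers only the design-time part of the chain (NR → CO → C → ES → G), not run-scoped evidence items or decision records.

```python
CHAIN = [("NR", "CO"), ("CO", "C"), ("C", "ES"), ("ES", "G")]


def trace_gaps(matrix: dict) -> list[str]:
    """List broken links in the NR -> CO -> C -> ES -> G chain."""
    gaps = []
    for source, target in CHAIN:
        links = matrix["links"].get((source, target), {})
        for node in matrix[source]:
            targets = links.get(node, [])
            if not targets:
                gaps.append(f"{node} has no {target}")
            for linked in targets:
                if linked not in matrix[target]:
                    gaps.append(f"{node} links to unknown {target} {linked}")
    return gaps


# Hypothetical matrix instance with two deliberate defects.
matrix = {
    "NR": ["NR-GDPR-001", "NR-AIA-001"],
    "CO": ["CO-DATA-001"],
    "C": ["CTRL-GDPR-DATA-001"],
    "ES": ["EVD-DATA-001"],
    "G": ["GATE-DATA-001"],
    "links": {
        ("NR", "CO"): {"NR-GDPR-001": ["CO-DATA-001"]},
        ("CO", "C"): {"CO-DATA-001": ["CTRL-GDPR-DATA-001"]},
        ("C", "ES"): {"CTRL-GDPR-DATA-001": ["EVD-DATA-001", "EVD-DATA-009"]},
        ("ES", "G"): {"EVD-DATA-001": ["GATE-DATA-001"]},
    },
}
gaps_found = trace_gaps(matrix)
```

An empty result would indicate that every requirement reaches at least one gate through declared links; the example instead surfaces one requirement with no control objective and one link to an undeclared evidence specification.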

| Field | Purpose |
|---|---|
| bundle_id | Stable identifier for the evidence bundle. |
| control_id / requirement_id | Trace to the control(s) and normative requirement(s) the bundle supports. |
| system_instance_id | Binds evidence to a specific system/pipeline instance and operational context. |
| run_id | Links evidence to a concrete execution (training, evaluation, deployment, monitoring). |
| artefact_id, artefact_type | Identifies each artefact instance and its declared type (as per EvidenceSpec). |
| location / pointer | Reference to the artefact payload (file path, object-store key, database pointer). |
| hash / checksum | Integrity anchor for artefact payloads (tamper-evident verification). |
| timestamp | When the artefact/check was produced or last validated. |
| producer_role / agent | Who produced the artefact (role/agent), enabling accountability. |
| check_id, check_result | Verification results and statuses against acceptance criteria. |
| status | Aggregate bundle status (e.g., complete/incomplete; pass/fail; pending review). |
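To show how the fields above fit together, the following sketch assembles a per-run evidence bundle and verifies artefact integrity. It is illustrative only: the nesting of artefact entries, the `sha256:` prefix, the choice of SHA-256, and the aggregate status rule are assumptions, and the identifiers and file path are invented for the example.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_of(payload: bytes) -> str:
    return "sha256:" + hashlib.sha256(payload).hexdigest()


def make_bundle(bundle_id, control_id, requirement_id, system_instance_id,
                run_id, producer_role, artefacts):
    """Build a bundle dict.

    artefacts: list of (artefact_id, artefact_type, location, payload, check_id, passed).
    """
    entries = [
        {
            "artefact_id": artefact_id,
            "artefact_type": artefact_type,
            "location": location,
            "hash": sha256_of(payload),
            "check_id": check_id,
            "check_result": "pass" if passed else "fail",
        }
        for artefact_id, artefact_type, location, payload, check_id, passed in artefacts
    ]
    if not entries:
        status = "incomplete"  # assumption: a bundle without artefacts is incomplete
    else:
        status = "pass" if all(e["check_result"] == "pass" for e in entries) else "fail"
    return {
        "bundle_id": bundle_id,
        "control_id": control_id,
        "requirement_id": requirement_id,
        "system_instance_id": system_instance_id,
        "run_id": run_id,
        "producer_role": producer_role,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "artefacts": entries,
        "status": status,
    }


def verify(entry: dict, payload: bytes) -> bool:
    """Recompute the hash to detect a payload that changed after bundling."""
    return entry["hash"] == sha256_of(payload)


schema = b"field,type\nage,int\n"
bundle = make_bundle(
    "BUNDLE-RUN7-001", "CTRL-GDPR-DATA-001", "NR-GDPR-001", "SYS-001", "RUN7",
    "data steward",
    [("EI-RUN7-001", "AT-DATA-001", "artefacts/schema.csv", schema, "CHK-001", True)],
)
serialised = json.dumps(bundle, indent=2)  # bundle is plain JSON-serialisable data
```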

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).