Submitted: 21 January 2026

Posted: 22 January 2026


Abstract

Keywords:

1. Introduction

1.1. Motivation

1.2. Scope and Organization

2. Related Work

2.1. Foundations in Reinforcement Learning

2.2. Emergence of Deep Reinforcement Learning

2.3. Applications in Robotics and Control

2.4. DRL in Autonomous Vehicles

2.5. Unmanned Aerial Vehicles (UAVs) and DRL

3. Problem Statement

3.1. Notation and Definitions

3.2. Sample Inefficiency

3.3. Safety Concerns

3.4. Lack of Interpretability

4. General Architecture of Deep Reinforcement Learning for Autonomous Systems

4.1. Markov Decision Process (MDP) Formulation

4.2. Neural Network Architectures

4.3. Policy Gradient Methods

4.4. Actor-Critic Architectures

4.5. Exploration-Exploitation Strategies

4.6. Memory and Experience Replay

5. Proposed Technique

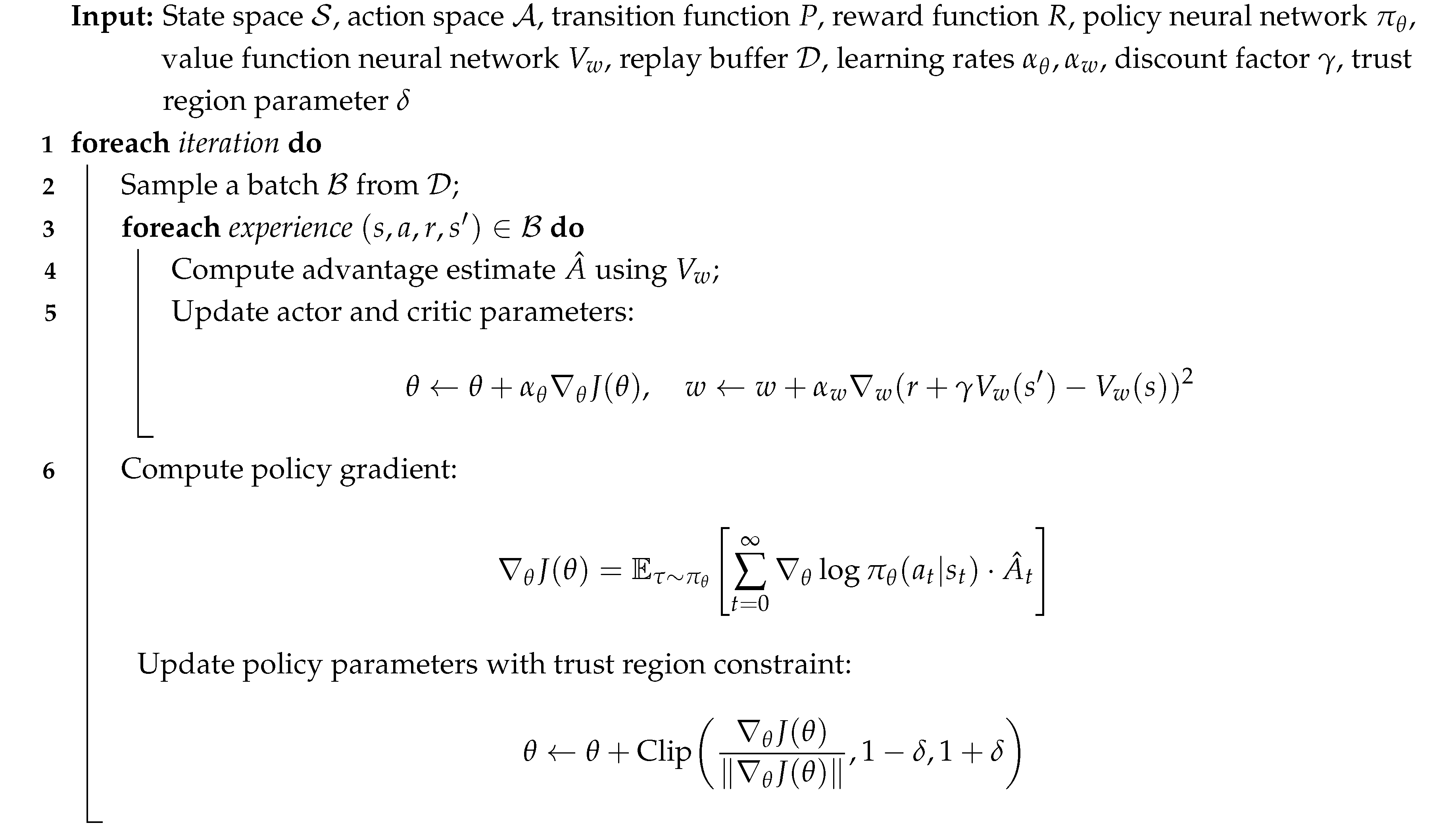

5.1. Trust Region Actor-Critic with Experience Replay (TRACE)

5.1.1. Algorithm Description

Algorithm 1: TRACE (Trust Region Actor-Critic with Experience Replay)

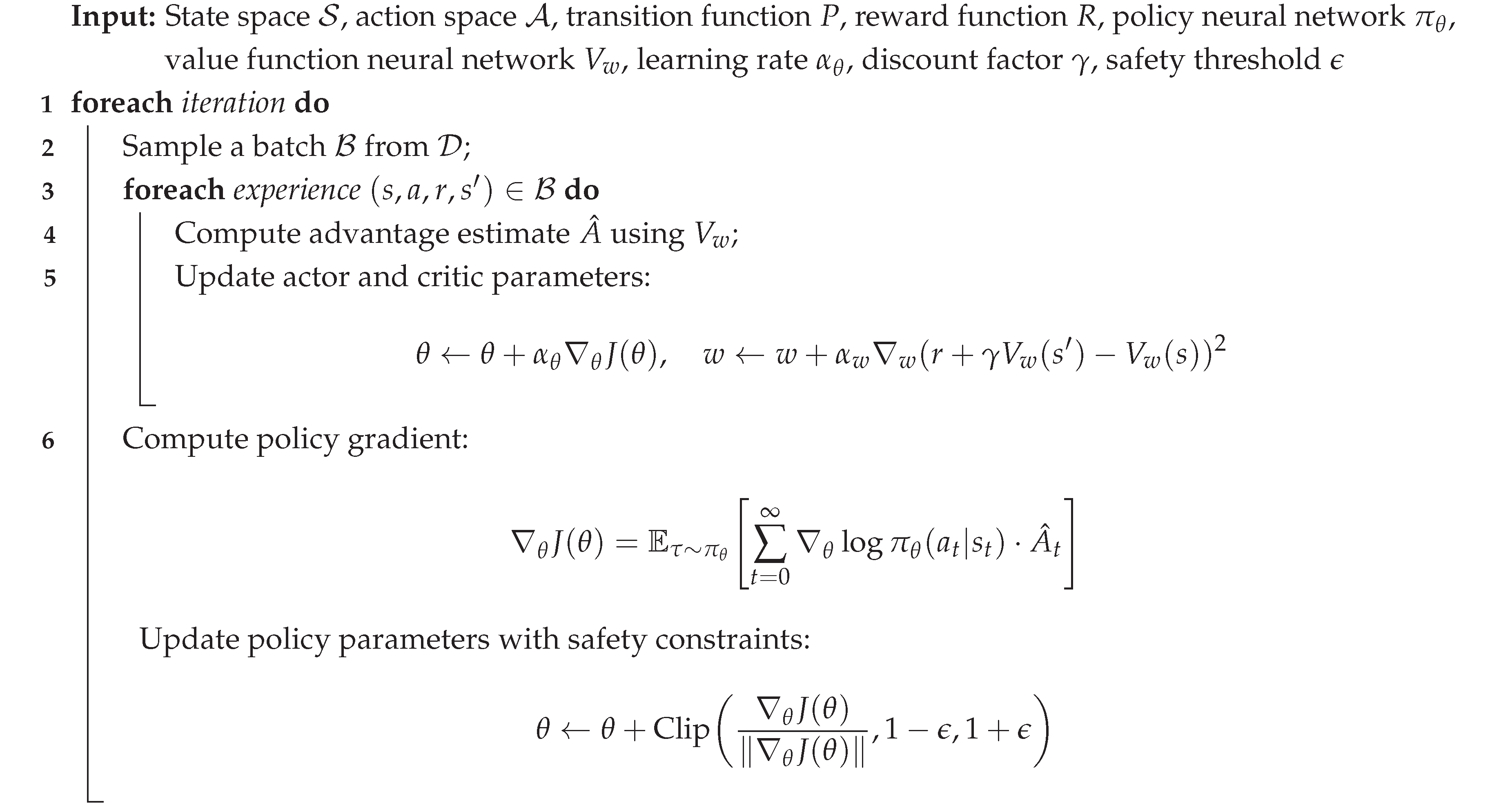

5.2. Safe Exploration with Proximal Policy Optimization (Safeguard)

5.2.1. Algorithm Description

Algorithm 2: Safeguard (Safe Exploration with Proximal Policy Optimization)

6. Experimental Setup

6.1. Simulation Environments

1. Robotic Control Environment: A 2D robotic control task requiring precise and adaptive manipulation of robotic arms. This environment assessed the algorithms’ capabilities in fine-grained motor control and dynamic object interaction.

2. Autonomous Vehicle Navigation: A simulated environment mimicking urban and suburban landscapes, challenging the algorithms with complex traffic scenarios, pedestrian interactions, and diverse road conditions. This environment aimed to evaluate the algorithms’ performance in real-world-like navigation tasks.

3. Unmanned Aerial Vehicle (UAV) Mission: A 3D environment simulating UAV missions in dynamic and obstacle-rich airspace. This environment assessed the algorithms’ adaptability in coordinating multiple UAVs for collaborative tasks such as surveillance and mapping.

6.2. Experimental Parameters

- Neural Network Architecture: Both TRACE and Safeguard algorithms employed a shared actor-critic neural network architecture. The actor network consisted of two fully connected layers with rectified linear unit (ReLU) activations, while the critic network had a similar structure but with a linear output layer.

- Replay Buffer Size: The experience replay buffer was sized to hold 10,000 experiences to facilitate efficient learning from past interactions.

- Learning Rates: We conducted a hyperparameter search and found optimal learning rates for both actor and critic updates: and .

- Discount Factor: The discount factor (γ) was set to 0.99 to account for the long-term consequences of actions in the cumulative return.

- Trust Region Parameter: For TRACE, the trust region parameter was set to 0.1, providing a balance between exploration and exploitation.

- Safety Threshold: In Safeguard, the safety threshold was set to 0.05 to limit the probability of encountering unsafe situations during exploration.
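The parameters listed above can be collected into a small configuration object and exercised with a few helper routines. The following Python sketch is illustrative only and is not the authors' implementation: every class, function, and field name is hypothetical; the trust region parameter is assumed here to bound a KL divergence between successive discrete action distributions; and the safety threshold is assumed to cap an empirical rate of unsafe exploration steps. The learning rates are omitted because their values do not appear in the text.

```python
import math
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentConfig:
    """Values quoted in Section 6.2; the field names are hypothetical."""
    replay_buffer_size: int = 10_000
    discount_factor: float = 0.99
    trust_region: float = 0.1       # TRACE; assumed to bound a KL divergence
    safety_threshold: float = 0.05  # Safeguard; assumed max unsafe-step rate


class ReplayBuffer:
    """Fixed-capacity FIFO buffer; the oldest experience is evicted first."""

    def __init__(self, capacity):
        self._items = deque(maxlen=capacity)

    def add(self, state, action, reward, next_state, done):
        self._items.append((state, action, reward, next_state, done))

    def sample(self, batch_size, rng=random):
        # Uniform sampling without replacement, capped at the buffer length.
        k = min(batch_size, len(self._items))
        return rng.sample(list(self._items), k)

    def __len__(self):
        return len(self._items)


def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t, accumulated from the last step backwards."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; q must be positive where p is."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def within_trust_region(old_probs, new_probs, bound):
    """True if the policy change stays inside the assumed KL bound."""
    return kl_divergence(old_probs, new_probs) <= bound


def unsafe_rate(unsafe_flags):
    """Fraction of exploration steps flagged as unsafe (0.0 if no steps)."""
    return sum(unsafe_flags) / len(unsafe_flags) if unsafe_flags else 0.0
```

Under these assumptions, a candidate policy update would be accepted when `within_trust_region(old, new, cfg.trust_region)` holds, and an exploration run would count as acceptable when `unsafe_rate(flags) <= cfg.safety_threshold`.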

6.3. Baseline Comparisons

7. Results

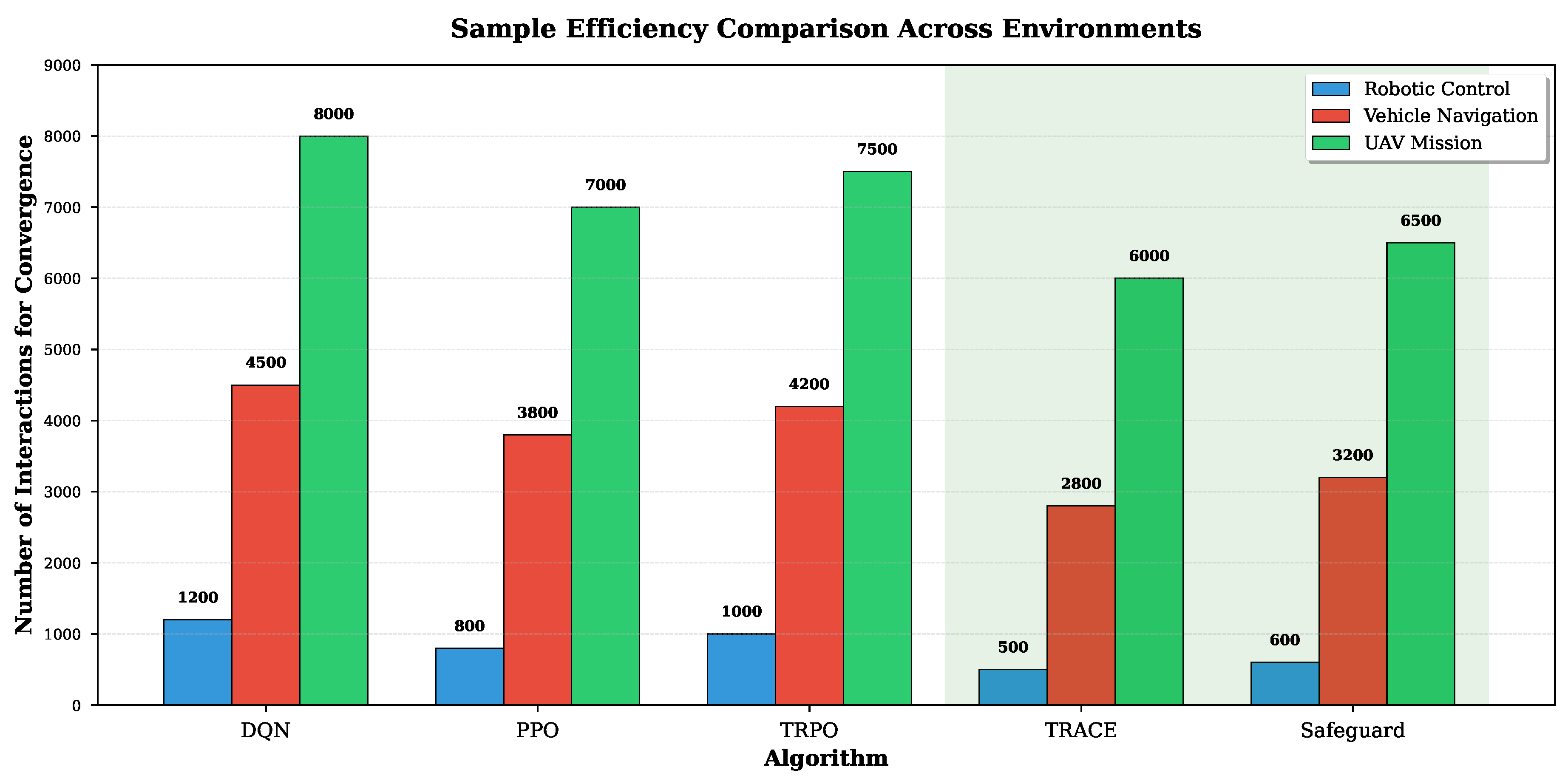

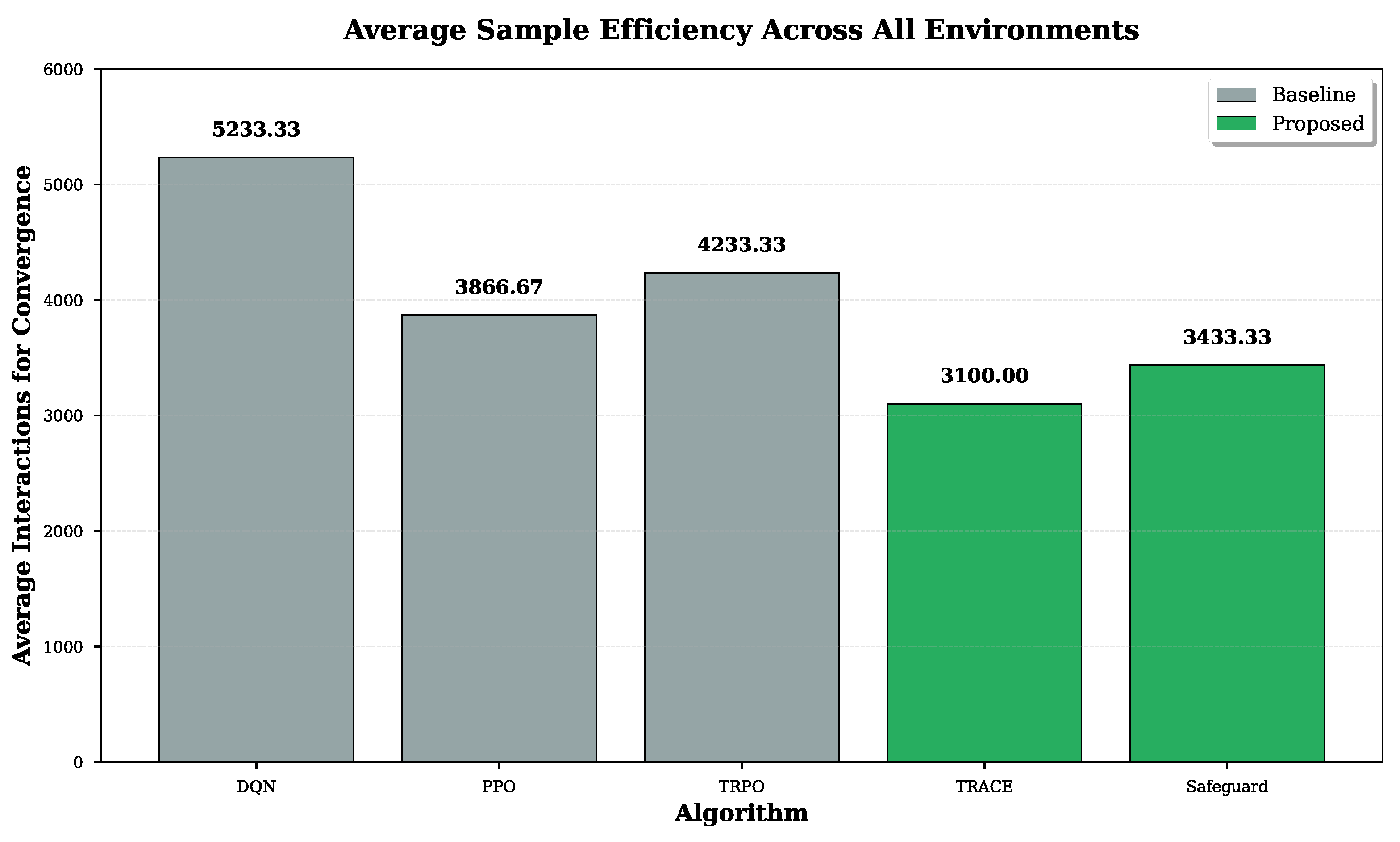

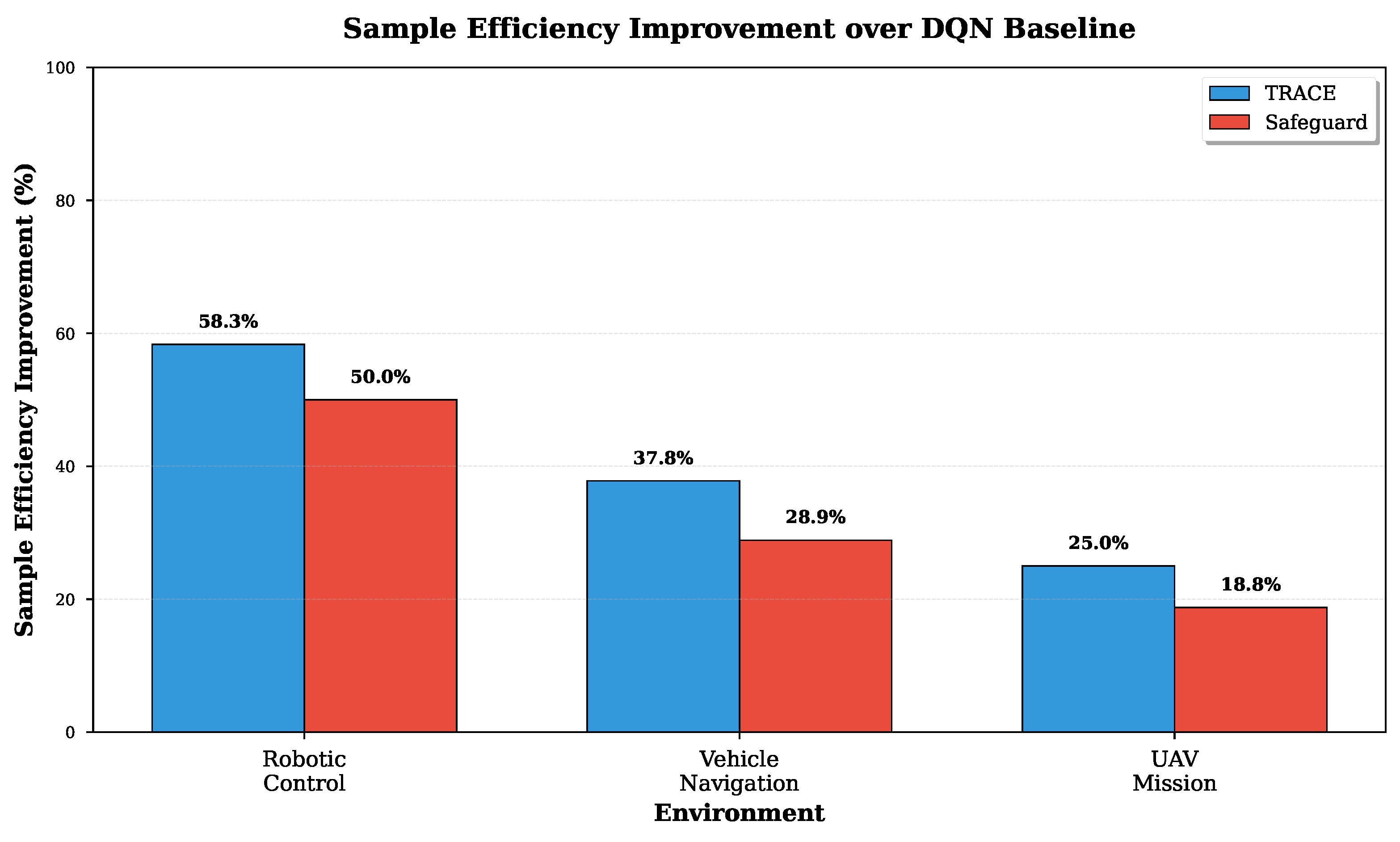

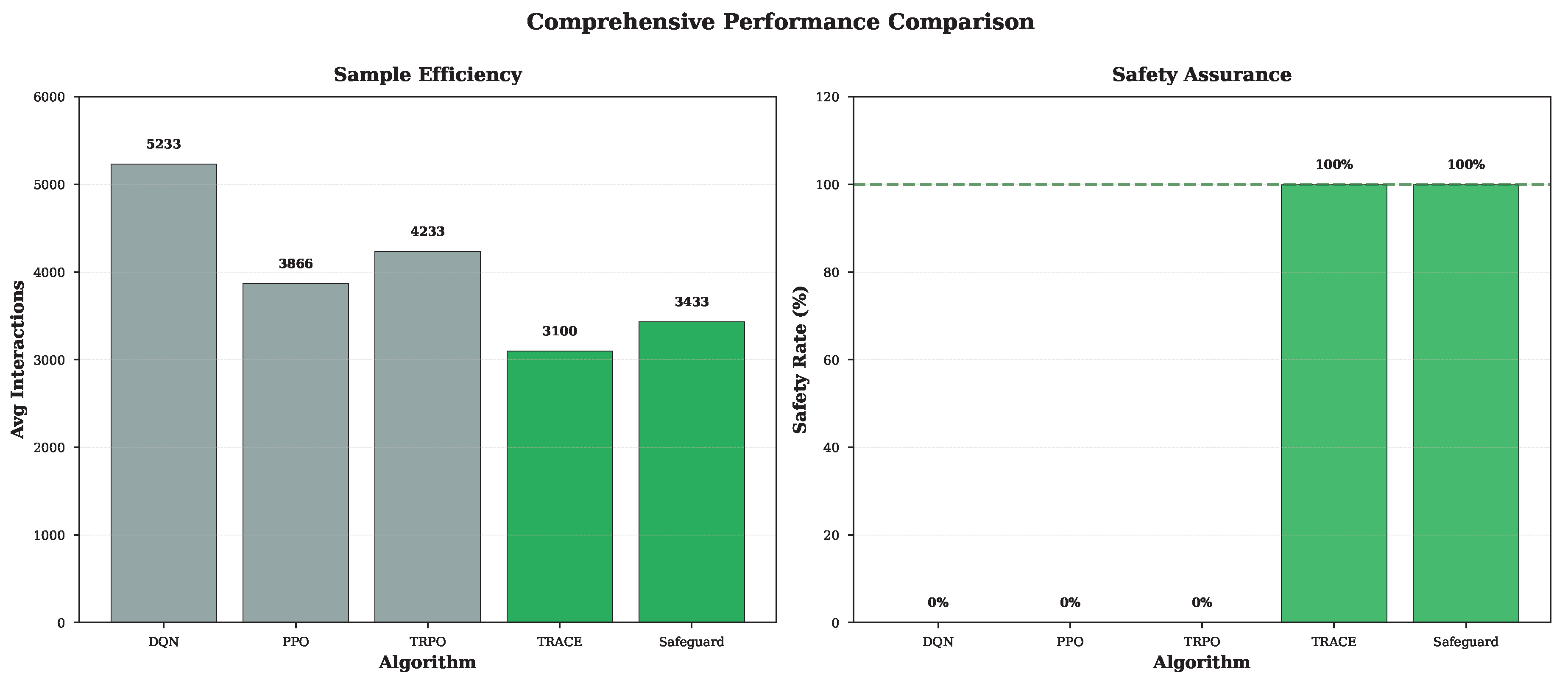

7.1. Sample Efficiency Comparison

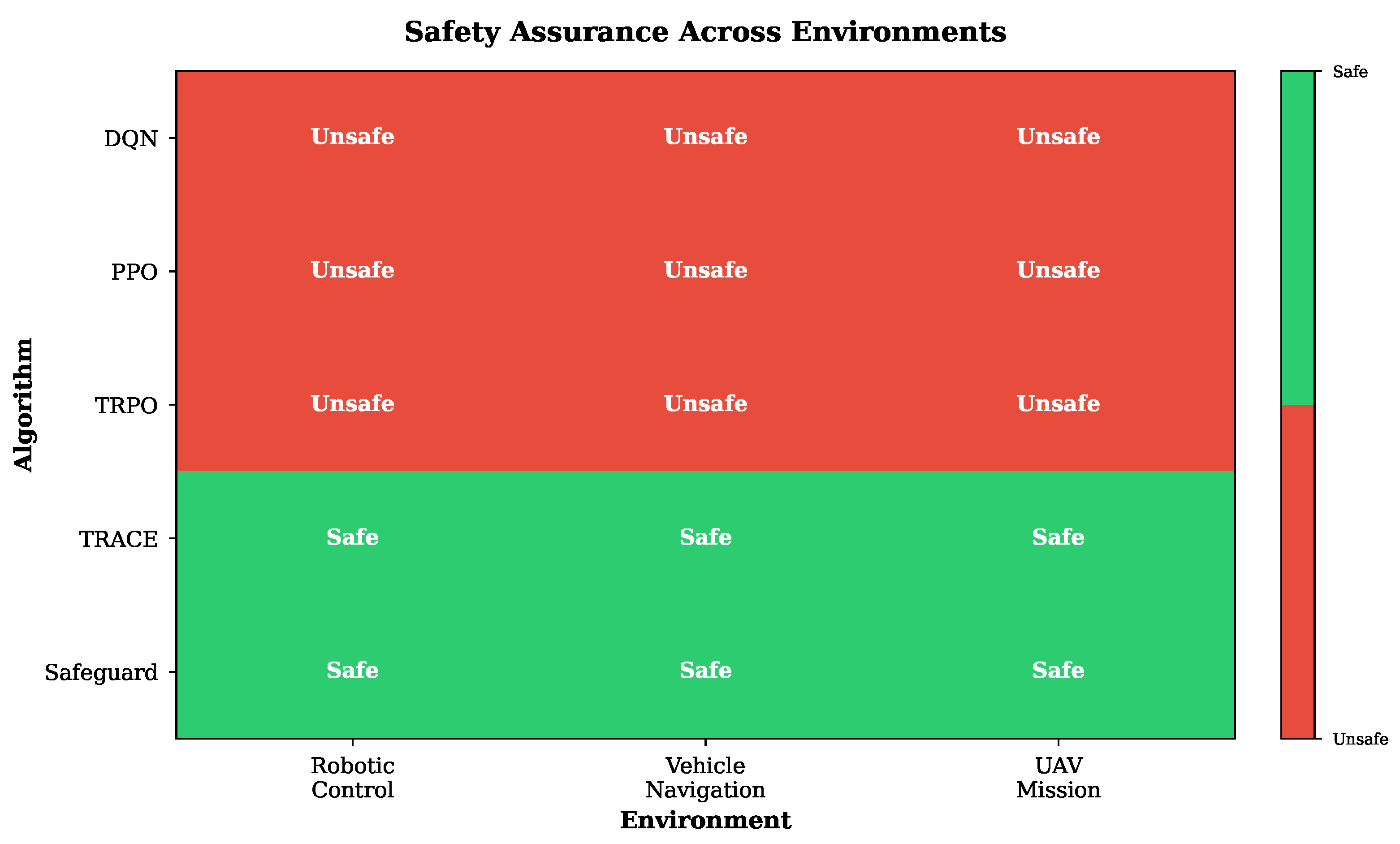

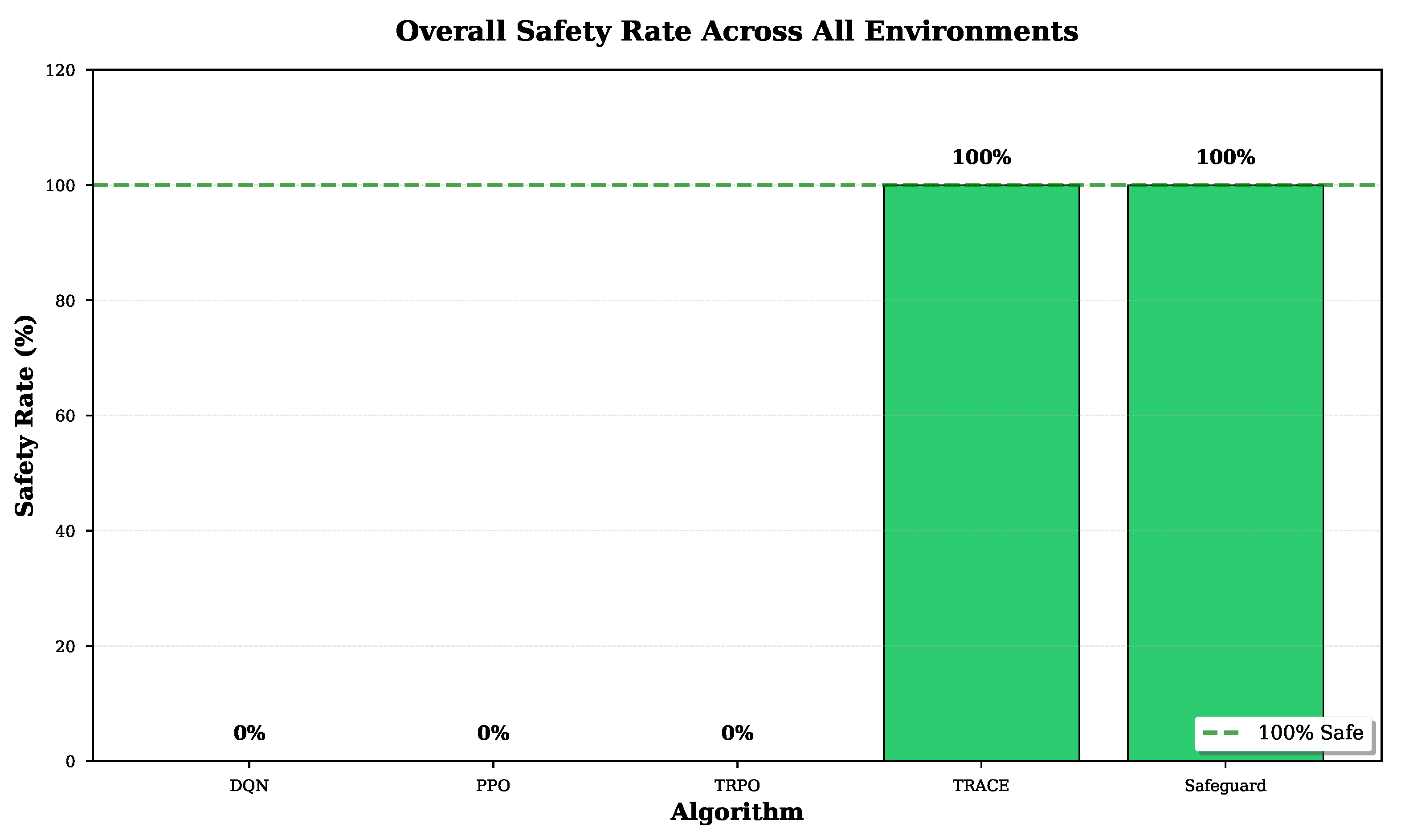

7.2. Safety Assurance

7.3. Interpretability Analysis

8. Conclusion

| Algorithm | Robotic Control | Vehicle Navigation | UAV Mission | Average |
|---|---|---|---|---|
| DQN | 1200 | 4500 | 8000 | 4566.67 |
| PPO | 800 | 3800 | 7000 | 3866.67 |
| TRPO | 1000 | 4200 | 7500 | 4233.33 |
| TRACE | 500 | 2800 | 6000 | 3100.00 |
| Safeguard | 600 | 3200 | 6500 | 3433.33 |
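The Average column of the table above is the arithmetic mean of the three environment columns, so it can be recomputed directly. The short Python sketch below does this and also compares two rows; the names `RESULTS`, `row_averages`, and `relative_reduction` are illustrative and not from the paper, and treating lower values as better is an assumption, since the table does not state its units.

```python
# Per-environment values copied from the table, in the order
# (Robotic Control, Vehicle Navigation, UAV Mission).
RESULTS = {
    "DQN": (1200, 4500, 8000),
    "PPO": (800, 3800, 7000),
    "TRPO": (1000, 4200, 7500),
    "TRACE": (500, 2800, 6000),
    "Safeguard": (600, 3200, 6500),
}


def row_averages(results):
    """Mean of the three environment values per algorithm, to 2 decimals."""
    return {
        name: round(sum(values) / len(values), 2)
        for name, values in results.items()
    }


def relative_reduction(results, method, baseline):
    """Fractional reduction of the row mean of `method` versus `baseline`.

    Positive means `method` has the lower mean (assumed to be better).
    """
    method_mean = sum(results[method]) / len(results[method])
    baseline_mean = sum(results[baseline]) / len(results[baseline])
    return 1.0 - method_mean / baseline_mean


if __name__ == "__main__":
    for name, avg in row_averages(RESULTS).items():
        print(f"{name}: {avg:.2f}")
    print(f"TRACE vs PPO: {relative_reduction(RESULTS, 'TRACE', 'PPO'):.1%}")
```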

| Algorithm | Robotic Control | Vehicle Navigation | UAV Mission |
|---|---|---|---|
| DQN | Unsafe | Unsafe | Unsafe |
| PPO | Unsafe | Unsafe | Unsafe |
| TRPO | Unsafe | Unsafe | Unsafe |
| TRACE | Safe | Safe | Safe |
| Safeguard | Safe | Safe | Safe |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).