Submitted: 14 January 2026

Posted: 15 January 2026


Abstract

Keywords:

1. Introduction

- We propose a secure and verifiable edge federated learning framework for UAV scenarios. The framework mitigates gradient inversion and membership inference risks caused by plaintext exposure in existing HE-based schemes. Device-side updates are protected with homomorphic encryption, and server-side decryption and aggregation are confined to a remotely attested Trusted Execution Environment. Plaintext access to model updates is restricted at the server side.

- We introduce an aggregate-signature-based verification mechanism for federated aggregation. The mechanism addresses the lack of source authentication and update integrity in existing HE-based federated learning schemes. Participant identity and update integrity are enforced before aggregation. Malicious or tampered updates are prevented from entering the aggregation process.

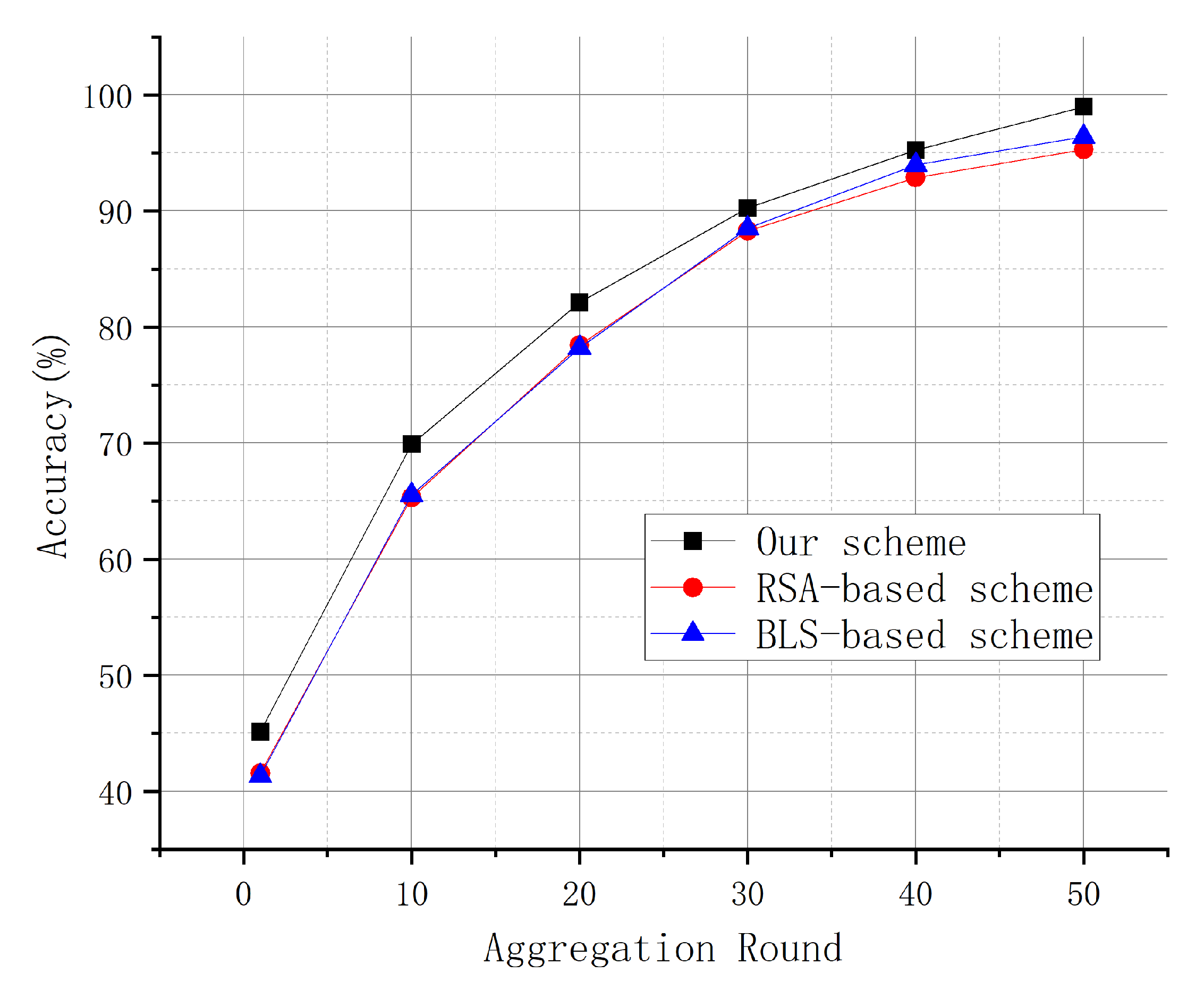

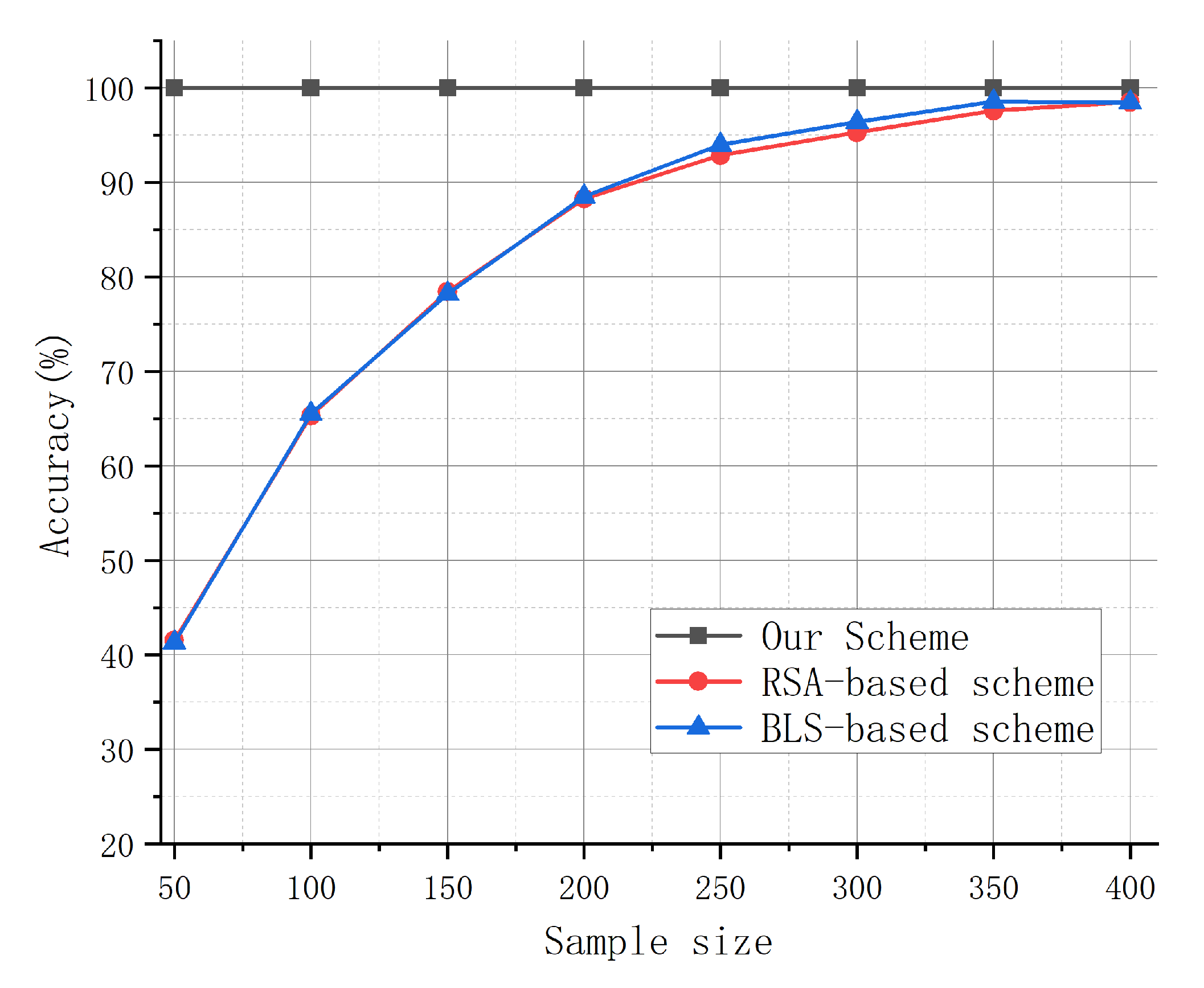

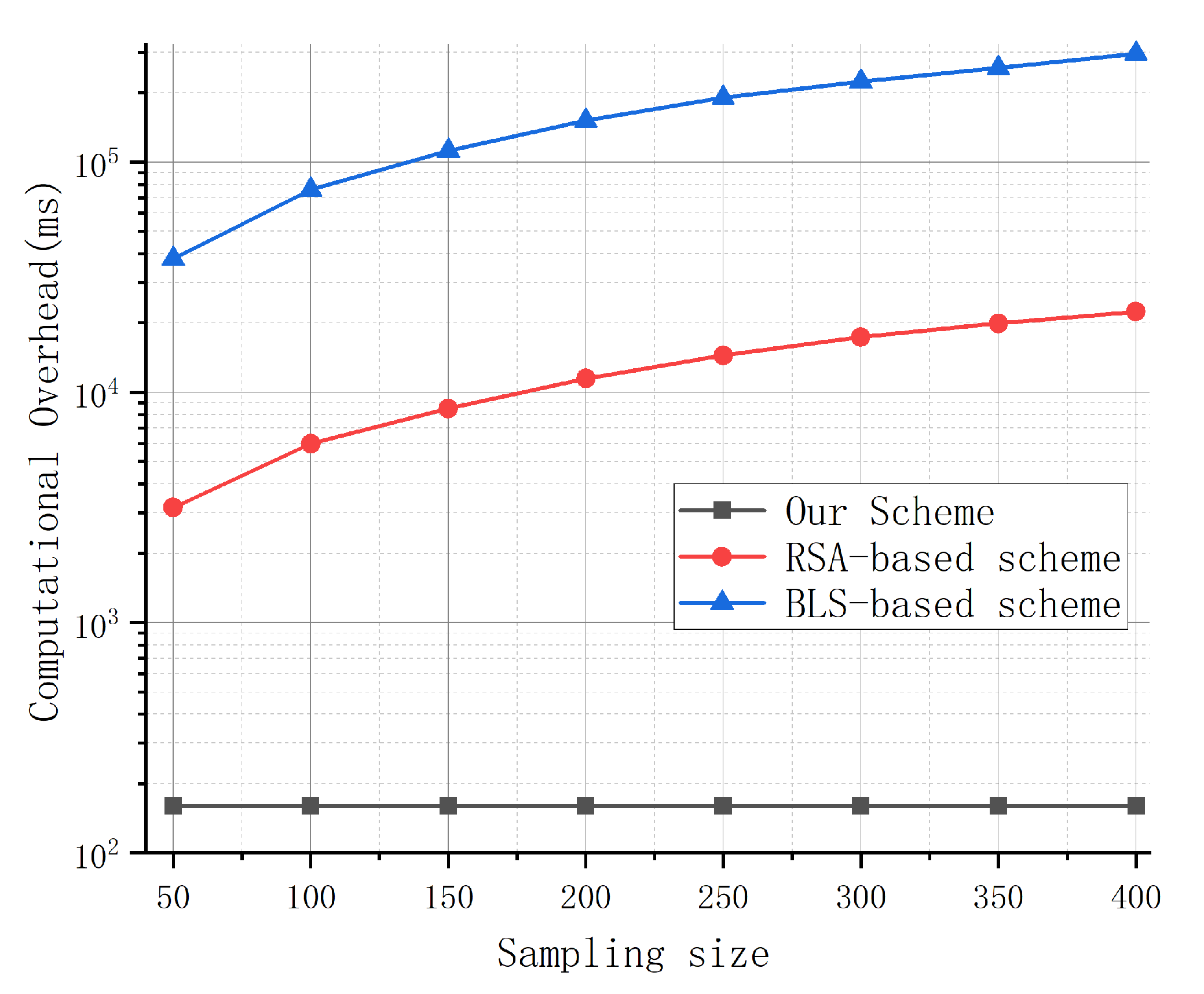

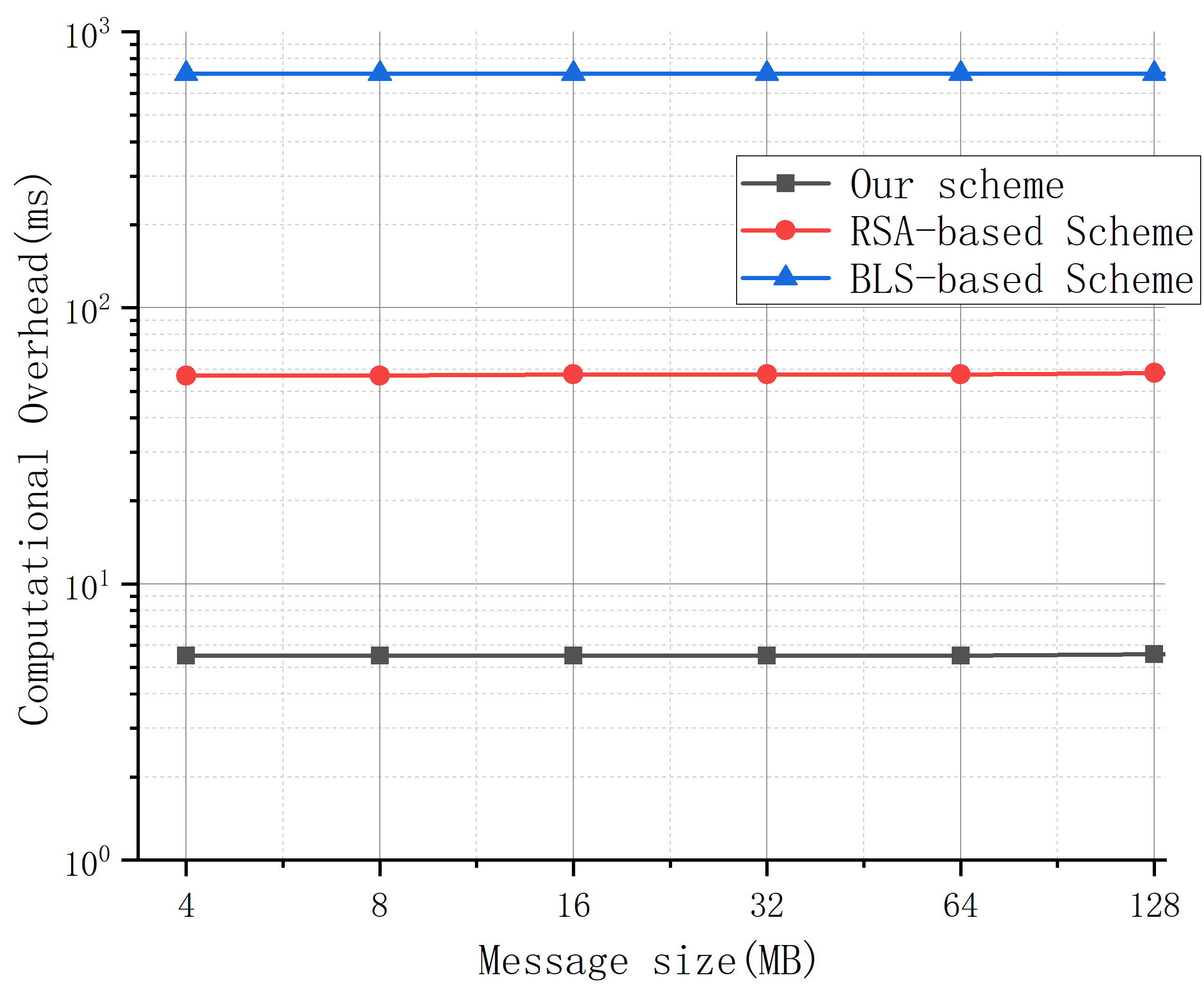

- We evaluate the proposed framework through extensive experimental simulations. The results show an approximately 3% improvement in model accuracy over comparative schemes. Computational overhead is reduced by about 20%. Stable convergence is maintained under packet loss and device heterogeneity.

2. Related Work

3. Preliminary

3.1. Homomorphic Encryption

3.2. Trusted Execution Environment

3.3. Schnorr Aggregated Signature

4. Secure and Verifiable Edge-Federated Learning for UAV Applications with HE and TEE

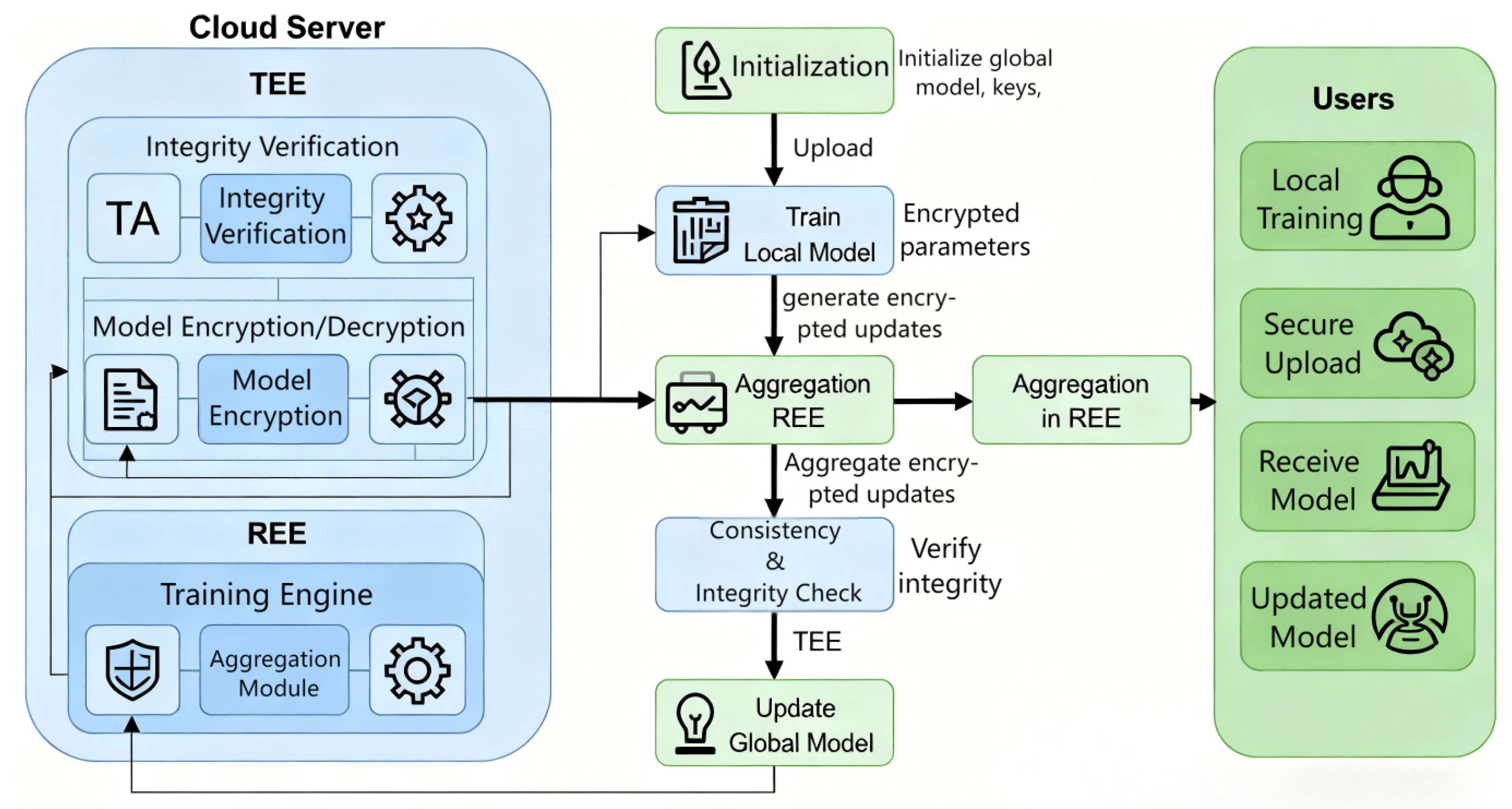

4.1. System Model

4.2. Secure Aggregation and Authentication Mechanism

4.2.1. System Initialization

- First, the TA deployed in the TEE selects a common point G and a collision-resistant hash function (CRHF), and shares these public parameters with all federated learning users. The server and all federated learning users therefore use the same parameters for generating and verifying integrity proofs.

- The TA selects its private key, computes the corresponding public key, and selects a random integer x. The TA then generates a detection request for each of the N federated learning users. Each detection request is a tuple of three elements, one of which is the identity of the federated learning user it is addressed to.

- All participants agree on the HE scheme used to encrypt each user's local model parameters and generate the public and private keys required to perform HE. The server then generates the initial global model parameter and distributes it to each user together with that user's detection request.
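The HE step above can be illustrated with a small example. The text does not name the HE scheme, so the following is only a sketch that assumes a textbook Paillier cryptosystem with toy-sized primes and integer (quantized) model updates; the function names `keygen`, `encrypt`, `decrypt`, and `aggregate` are hypothetical. It shows the property the framework relies on: ciphertexts can be combined without decryption, and only the key holder (in this framework, the TEE) recovers the sum.

```python
import math
import secrets

# Toy primes (the Mersenne primes 2^61-1 and 2^89-1); far too small for real use.
P_PRIME = 2**61 - 1
Q_PRIME = 2**89 - 1

def keygen():
    """Return (public modulus n, secret key (lam, mu)) for textbook Paillier."""
    n = P_PRIME * Q_PRIME
    lam = (P_PRIME - 1) * (Q_PRIME - 1) // math.gcd(P_PRIME - 1, Q_PRIME - 1)
    mu = pow(lam, -1, n)  # valid because the generator is fixed to g = n + 1
    return n, (lam, mu)

def encrypt(n, m):
    """Encrypt a non-negative integer m < n as (1 + n)^m * r^n mod n^2."""
    n2 = n * n
    r = secrets.randbelow(n - 1) + 1  # fresh randomness for every ciphertext
    return (1 + m * n) % n2 * pow(r, n, n2) % n2

def decrypt(n, sk, c):
    """Recover the plaintext with L(c^lam mod n^2) * mu mod n, L(u) = (u - 1) // n."""
    lam, mu = sk
    u = pow(c, lam, n * n)
    return (u - 1) // n * mu % n

def aggregate(n, ciphertexts):
    """Multiplying Paillier ciphertexts adds the underlying plaintexts."""
    acc = 1
    for c in ciphertexts:
        acc = acc * c % (n * n)
    return acc

if __name__ == "__main__":
    n, sk = keygen()
    updates = [12, 30, 7]  # assumed quantized local updates from three users
    total = decrypt(n, sk, aggregate(n, [encrypt(n, m) for m in updates]))
    print(total == sum(updates))
```

A production deployment would use a vetted HE library and parameters of cryptographic size; the sketch is meant only to make the aggregate-then-decrypt flow concrete.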

4.2.2. Local Model Training and Parameter Upload

- The user checks the received detection request by verifying that the user identity it contains is correct. If the identity is correct, the request is considered valid; otherwise, the request is discarded. The integrity proof is generated in a subsequent step.

- The user chooses a random integer and derives the corresponding value R from it. Both are time-sensitive values that are used only once.

- The user generates a signature over the ciphertext of its local model parameters. This signature serves as the integrity proof of the ciphertext.

- The user generates a response and returns it to the TA in reply to the TA's detection request. The response is a tuple of three elements. The user also sends the ciphertext to the server.
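The upload steps above can be sketched in code. This is a minimal sketch that assumes a standard Schnorr-style signature over the secp256k1 curve with SHA-256 as the hash function; the curve choice, the hash input layout, the names `keygen`, `sign`, and `verify`, and the layout of the response tuple are all illustrative assumptions rather than the paper's exact equations.

```python
import hashlib
import secrets

# secp256k1 domain parameters (assumed curve; the text only names a common point G).
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)

def add(a, b):
    """Point addition in affine coordinates; None is the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if a == b:
        lam = 3 * x1 * x1 * pow(2 * y1 % P, -1, P) % P
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P

def mul(k, pt):
    """Point multiplication by double-and-add."""
    acc = None
    while k:
        if k & 1:
            acc = add(acc, pt)
        pt = add(pt, pt)
        k >>= 1
    return acc

def h(R, pub, ciphertext):
    """Hash of (R, public key, ciphertext), reduced modulo the group order."""
    data = b"".join(v.to_bytes(32, "big") for v in (*R, *pub)) + ciphertext
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % N

def keygen():
    x = secrets.randbelow(N - 1) + 1
    return x, mul(x, G)

def sign(x, pub, ciphertext):
    r = secrets.randbelow(N - 1) + 1  # one-time random integer
    R = mul(r, G)                     # its one-time public counterpart
    return R, (r + h(R, pub, ciphertext) * x) % N

def verify(pub, ciphertext, R, s):
    """Check s*G == R + h*pub."""
    return mul(s, G) == add(R, mul(h(R, pub, ciphertext), pub))

if __name__ == "__main__":
    x, pub = keygen()
    ciphertext = b"encrypted local model parameters"  # placeholder bytes
    R, s = sign(x, pub, ciphertext)
    response = ("user-1", R, s)  # hypothetical three-element response
    print(verify(pub, ciphertext, R, s))
```

Because the random integer is drawn afresh in every call to `sign`, reusing a response for a different ciphertext fails verification.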

4.2.3. Local Model Parameter Integrity Validation

- The REE initiates a parameter integrity verification request to the TA and forwards the ciphertexts it has received to the TA.

- The TA aggregates the signatures and the R values of all users in the response set RES.

- The TA evaluates Equation (4) and determines whether it holds. If Equation (4) holds, the ciphertexts of all users' local model parameters received by the server are intact, and the TA notifies the REE. The REE acknowledges the message and aggregates the ciphertexts to obtain the aggregated ciphertext.

- If Equation (4) does not hold, the TA starts a lookup of the corrupted local model parameters. Depending on the size of N, the scheme provides two lookup methods.

  Sequential lookup: If N is small, the TA verifies each element of the response set individually by evaluating Equation (5). If Equation (5) holds for an element, the integrity of the corresponding ciphertext is confirmed. However, as mentioned earlier, this one-by-one approach incurs a high computational overhead when N is large. Aggregate verification can instead be performed on any subset of the response set together with the corresponding subset of the ciphertext set C. This allows the TA to divide the integrity proofs into multiple subsets and verify each subset in aggregate.

  Bisection lookup: When the number of users N is large, the TA borrows the idea of bisection search. It iteratively splits the response set into two subsets of roughly equal size and verifies each subset in aggregate. The process consists of the following basic steps:

- If C contains more than one ciphertext and Equation (4) still does not hold, at least one of the model parameter ciphertexts in C is corrupted. C and the response set are then each partitioned into two corresponding subsets.

- Repeat steps 5 and 6 for each of the two subset pairs until all corrupted model parameter ciphertexts have been located.
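The aggregate check and the bisection lookup described above can be sketched as follows. This is a minimal sketch under stated assumptions: a standard Schnorr-style signature over secp256k1 with SHA-256, where the aggregate check tests whether the sum of the signatures times G equals the sum of all R values plus the sum of the hash-weighted public keys. The names `make_response`, `verify_aggregate`, and `find_corrupted` are hypothetical, and the exact form of Equations (4) and (5) may differ. Naive aggregation of this kind also ignores rogue-key attacks, which a real design would have to address.

```python
import hashlib
import secrets

# secp256k1 domain parameters (assumed curve).
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)

def add(a, b):
    """Point addition in affine coordinates; None is the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if a == b:
        lam = 3 * x1 * x1 * pow(2 * y1 % P, -1, P) % P
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P

def mul(k, pt):
    """Point multiplication by double-and-add."""
    acc = None
    while k:
        if k & 1:
            acc = add(acc, pt)
        pt = add(pt, pt)
        k >>= 1
    return acc

def h(R, pub, ciphertext):
    data = b"".join(v.to_bytes(32, "big") for v in (*R, *pub)) + ciphertext
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % N

def make_response(ciphertext):
    """Simulate one user: fresh key pair, one-time nonce, signature over the ciphertext."""
    x = secrets.randbelow(N - 1) + 1
    r = secrets.randbelow(N - 1) + 1
    pub, R = mul(x, G), mul(r, G)
    return pub, ciphertext, R, (r + h(R, pub, ciphertext) * x) % N

def verify_aggregate(responses):
    """One aggregate check: (sum of s_i)*G == sum of (R_i + h_i*pub_i)."""
    s_sum, rhs = 0, None
    for pub, c, R, s in responses:
        s_sum = (s_sum + s) % N
        rhs = add(rhs, add(R, mul(h(R, pub, c), pub)))
    return mul(s_sum, G) == rhs

def find_corrupted(responses, offset=0):
    """Bisection lookup: split any failing subset until single entries remain."""
    if verify_aggregate(responses):
        return []
    if len(responses) == 1:
        return [offset]
    mid = len(responses) // 2
    return (find_corrupted(responses[:mid], offset)
            + find_corrupted(responses[mid:], offset + mid))

if __name__ == "__main__":
    res = [make_response(f"ciphertext-{i}".encode()) for i in range(8)]
    pub, c, R, s = res[5]
    res[5] = (pub, c + b"tampered", R, s)  # corrupt one upload in transit
    print(find_corrupted(res))
```

When few entries are corrupted, the bisection needs far fewer aggregate checks than verifying all N entries one by one; when many entries are corrupted, it degrades toward the sequential lookup.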

4.2.4. Global Model Parameter Consistency Check

- The TA sends a detection request reqREE to the REE. The request contains the identity of the new global model parameter W.

- After confirming that the received reqREE is valid, the REE selects its private key and the corresponding public key. It then selects a random integer, from which it derives a corresponding value.

- The REE generates an integrity proof of W, which is used to perform the consistency check of the global model parameters.

- After a user receives W and its integrity proof from the REE, the user first verifies the integrity proof of W by evaluating Equation (7). If Equation (7) holds, the user concludes that the received W is intact and sends the signature to the TA for consistency verification.

- Consider the signatures that the TA receives from any two different users. Since both users obtain the same global model parameters in the normal case, the TA concludes that the two users received the same global model parameters if the two signatures are equal.
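The final comparison at the TA can be sketched as follows. This is a minimal sketch that treats each forwarded signature as an opaque value and only models the equality test; the function name `check_consistency` is hypothetical, and reporting the users that deviate from the most common signature is an added assumption, since the text only states the pairwise equality condition.

```python
from collections import Counter

def check_consistency(forwarded):
    """Compare the signatures of W forwarded by the users.

    `forwarded` maps a user id to the signature that user forwarded.
    Returns (consistent, outliers), where outliers are the users whose
    signature differs from the most common one.
    """
    if not forwarded:
        return True, []
    majority, _ = Counter(forwarded.values()).most_common(1)[0]
    outliers = sorted(u for u, sig in forwarded.items() if sig != majority)
    return not outliers, outliers

if __name__ == "__main__":
    # Placeholder signature values; real ones would come from the REE's proof of W.
    sigs = {"u1": ("R", 41), "u2": ("R", 41), "u3": ("R", 99)}
    print(check_consistency(sigs))
```

The comparison is only meaningful if the REE issues a single proof per global model, so that honest users forward identical values.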

5. Security Analysis

5.1. Correctness

5.1.1. Correctness of the Individual Verification Method

5.1.2. Correctness of the Aggregate Verification Method

5.2. Anti-Forgery Attacks

- Scenario 1: The adversary obtains forged ciphertext data, either by corrupting a legitimate ciphertext or by generating one itself, and sends it to the server. According to Equation (5), the forged ciphertext passes authentication at the TA only if Equation (10) holds. Since the forged ciphertext differs from the original one, Equation (10) would imply that the hash function produces the same hash value for two different inputs, which contradicts the collision resistance of the hash function.

- Scenario 2: The adversary generates its own public-private key pair, intercepts a valid proof of a user, and forges an integrity proof based on it. According to Equations (3) and (5), the forged proof passes verification at the TA only if it satisfies the same verification equation as the valid proof. Because the hash function is one-way, the adversary cannot change the hash value or R_k in Equation (3). To forge the integrity proof, the adversary must therefore know s and the second private key involved. However, as mentioned earlier, these private keys are held separately and are never disclosed, so the attacker can only obtain the corresponding public keys. If the adversary had a non-negligible advantage in recovering the private keys from the public keys, this would violate the ECDLP hardness assumption. In summary, our scheme guarantees security against forgery attacks.

5.3. Anti-Collusion Attack

- Key query. On receiving such a query from A for a user, B runs the key generation algorithm to generate a key pair and returns it to A.

- Forgery. Using the received keys of each user, A forges individual integrity proofs for a set of users over a ciphertext set. A then generates the forged aggregate integrity proofs.

- Aggregate verification query. A generates a response set RES using the key pairs received for each user and sends RES together with the ciphertext set C to B for an aggregate verification query. B receives the query and simulates the aggregate verification algorithm to determine whether RES is valid. B then returns the verification result to A.

6. Experiments and Analysis

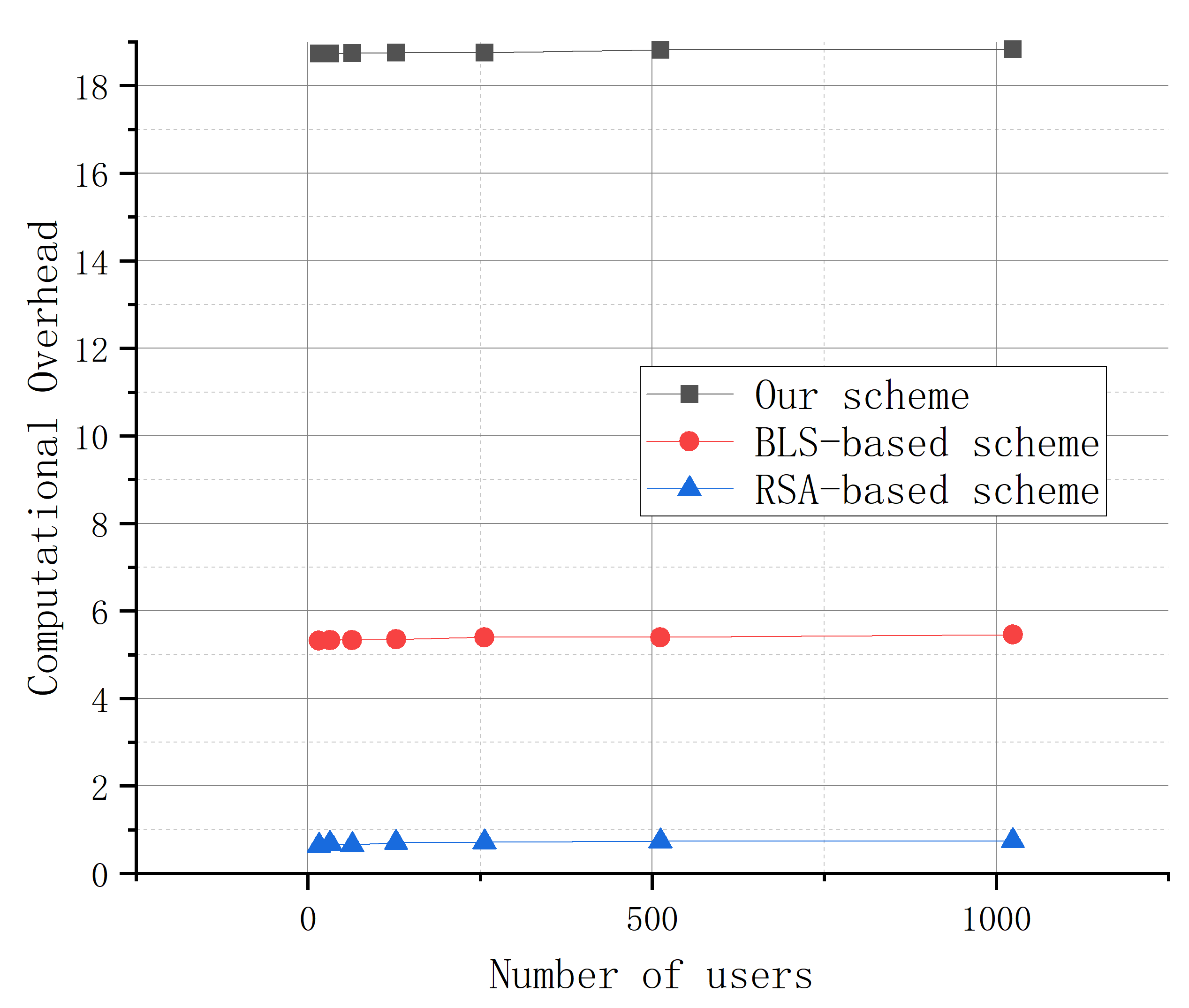

6.1. Efficiency Analysis

6.2. Experiment

6.2.1. Experimental Setup

6.2.2. Model Accuracy Analysis

6.2.3. Experimental Analysis of Program Availability

6.2.4. Experimental Analysis of Program Efficiency

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Stage | Point Addition | Point Multiplication | Hash Operation |
|---|---|---|---|
| Initialization Stage | 0 | 1 | 0 |
| Parameter Upload Stage | 1 | 2 | 1 |
| Individual Verification (IVS) | n | 2n | n |
| Aggregate Verification (IVS) | 2n | n+1 | n |
| Localization (IVS) | 2 | 2n | n |
| Consistency Check Phase | 2 | 5 | 2 |
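To make the verification rows of the table concrete, the following sketch evaluates the operation counts for a given number of users n. The counts are copied from the table; the function name `verification_cost` and the dictionary keys are hypothetical. Because point multiplication is typically far more expensive than point addition or hashing, the multiplication count is the most informative figure.

```python
def verification_cost(n):
    """Operation counts for verifying n users, copied from the table above."""
    individual = {"add": n, "mul": 2 * n, "hash": n}
    aggregate = {"add": 2 * n, "mul": n + 1, "hash": n}
    return {"individual": individual, "aggregate": aggregate}

if __name__ == "__main__":
    cost = verification_cost(100)
    # Individual verification needs 200 multiplications, aggregate verification 101.
    print(cost["individual"]["mul"], cost["aggregate"]["mul"])
```

For n = 100 users, aggregate verification roughly halves the number of multiplications (101 instead of 200) at the price of 100 extra additions.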

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).