Submitted: 14 January 2026

Posted: 15 January 2026


Abstract

Keywords:

1. Introduction

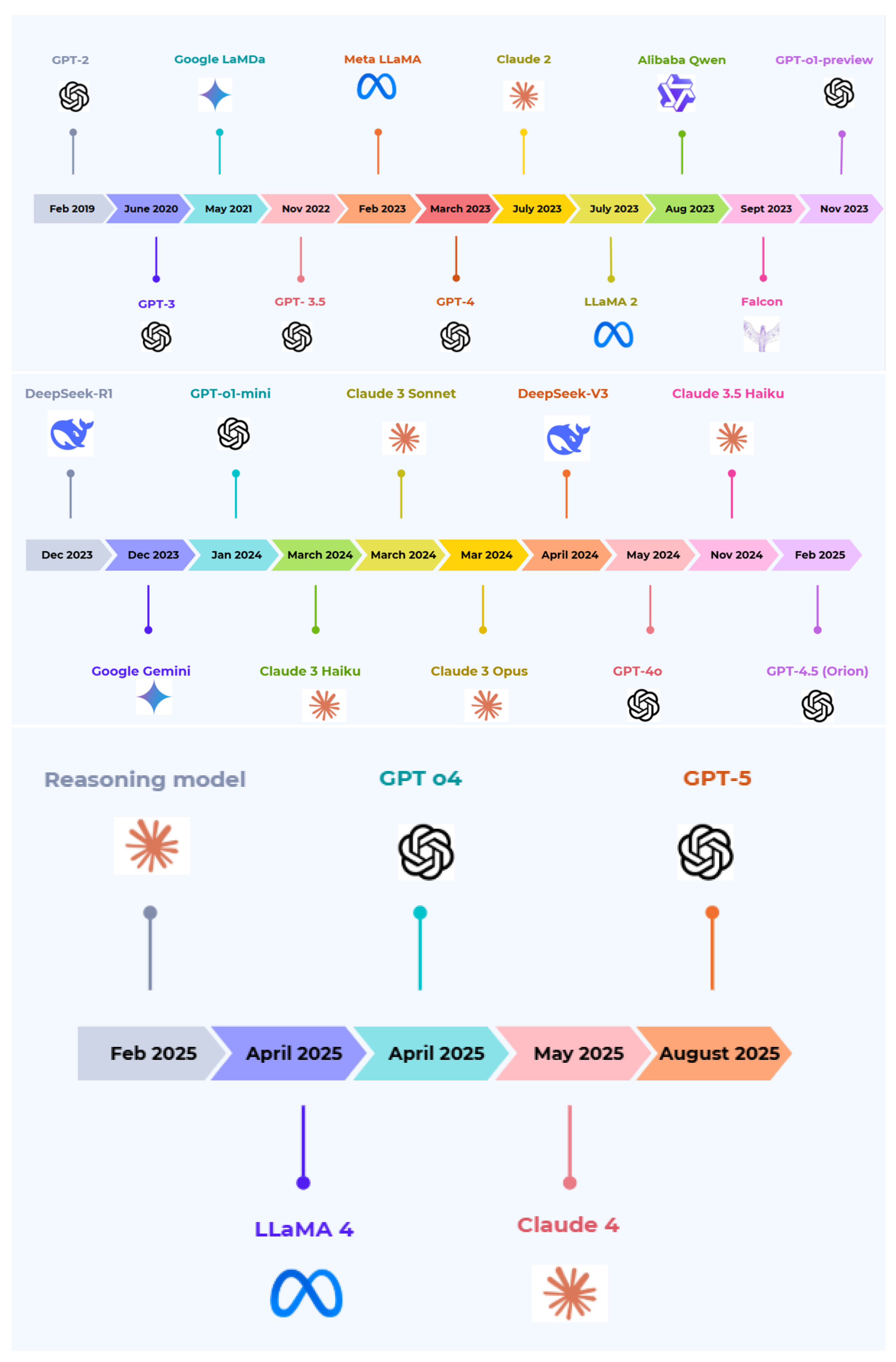

1.1. Historical Background: From GPT-2 to Modern Multimodal LLMs

1.2. Goals of This Paper

- A systematic consolidation of existing literature into a coherent taxonomy of contemporary LLM architectures spanning proprietary and open-source ecosystems.

- Reconstruction and comparative analysis of over 50 representative LLM architectures, abstracted from technical reports and model documentation to enable consistent cross-model comparison.

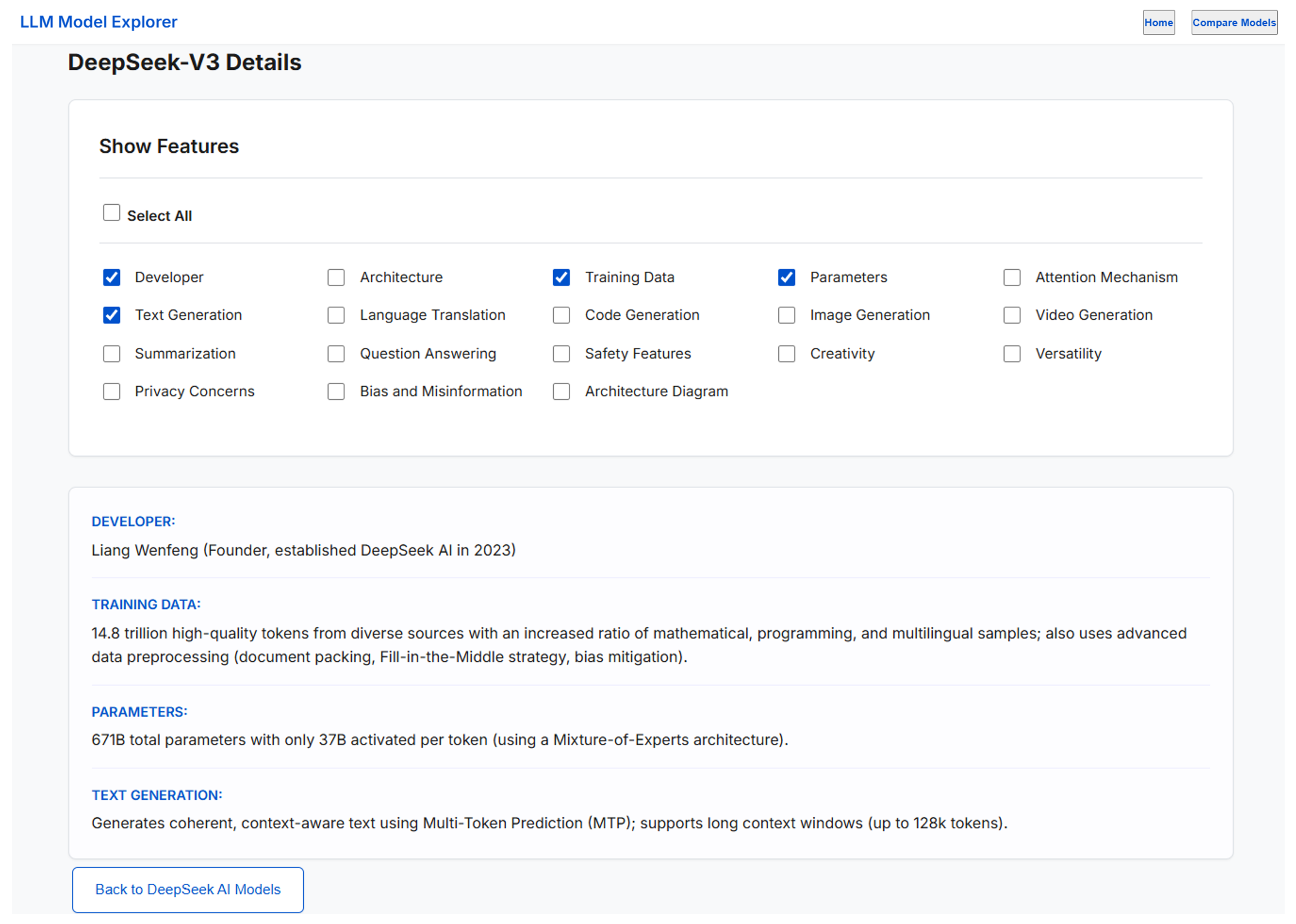

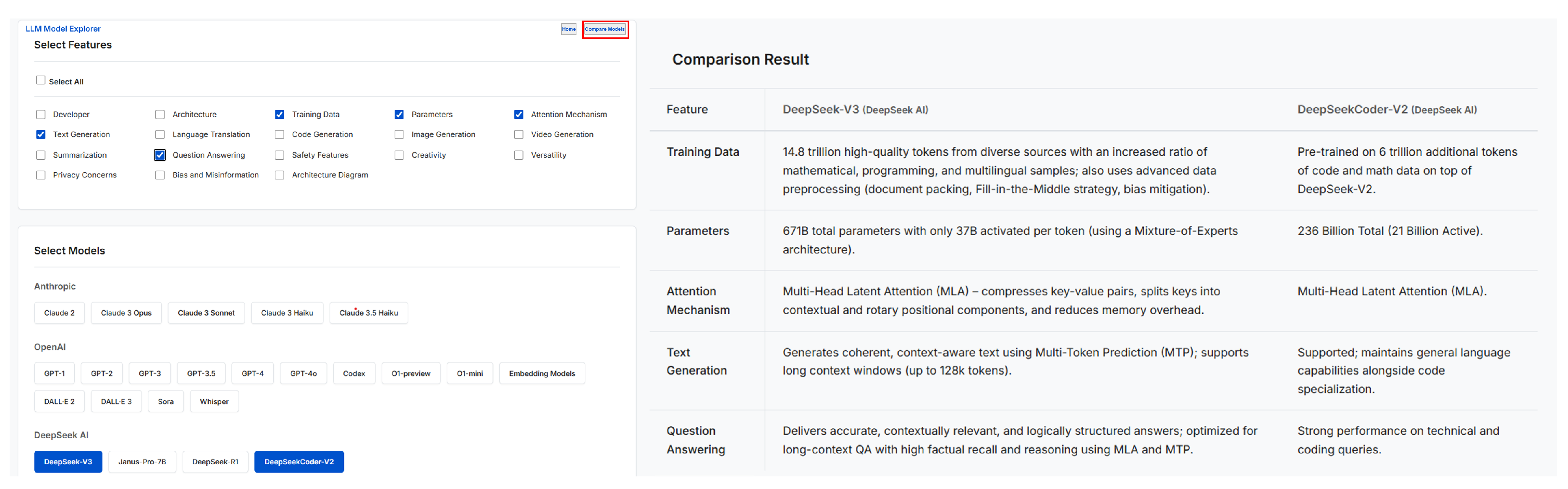

- An interactive, feature-driven visualization interface that operationalizes the proposed taxonomy, enabling selective inspection and side-by-side comparison across architectural, training, capability, and safety dimensions.

- Release of an open-source implementation and live web-based deployment of the LLM Model Explorer to support transparency, reproducibility, and continued community-driven exploration.

- Comparative analysis of core design choices, including attention mechanisms, normalization strategies, activation functions, context-length scaling, and efficiency-oriented optimizations.

- Examination of alignment and safety methodologies, including Reinforcement Learning from Human Feedback (RLHF) and constitutional approaches, with emphasis on instruction adherence and hallucination mitigation.

- Identification of open challenges related to transparency, computational cost, data governance, and responsible deployment.

2. Related Work

2.1. Foundational Transformer Models and LLMs

2.2. Surveys on Generative AI, Alignment, and Multimodal Learning

2.3. Positioning of This Work

3. Taxonomy of Large Language Model (LLM) Architectures

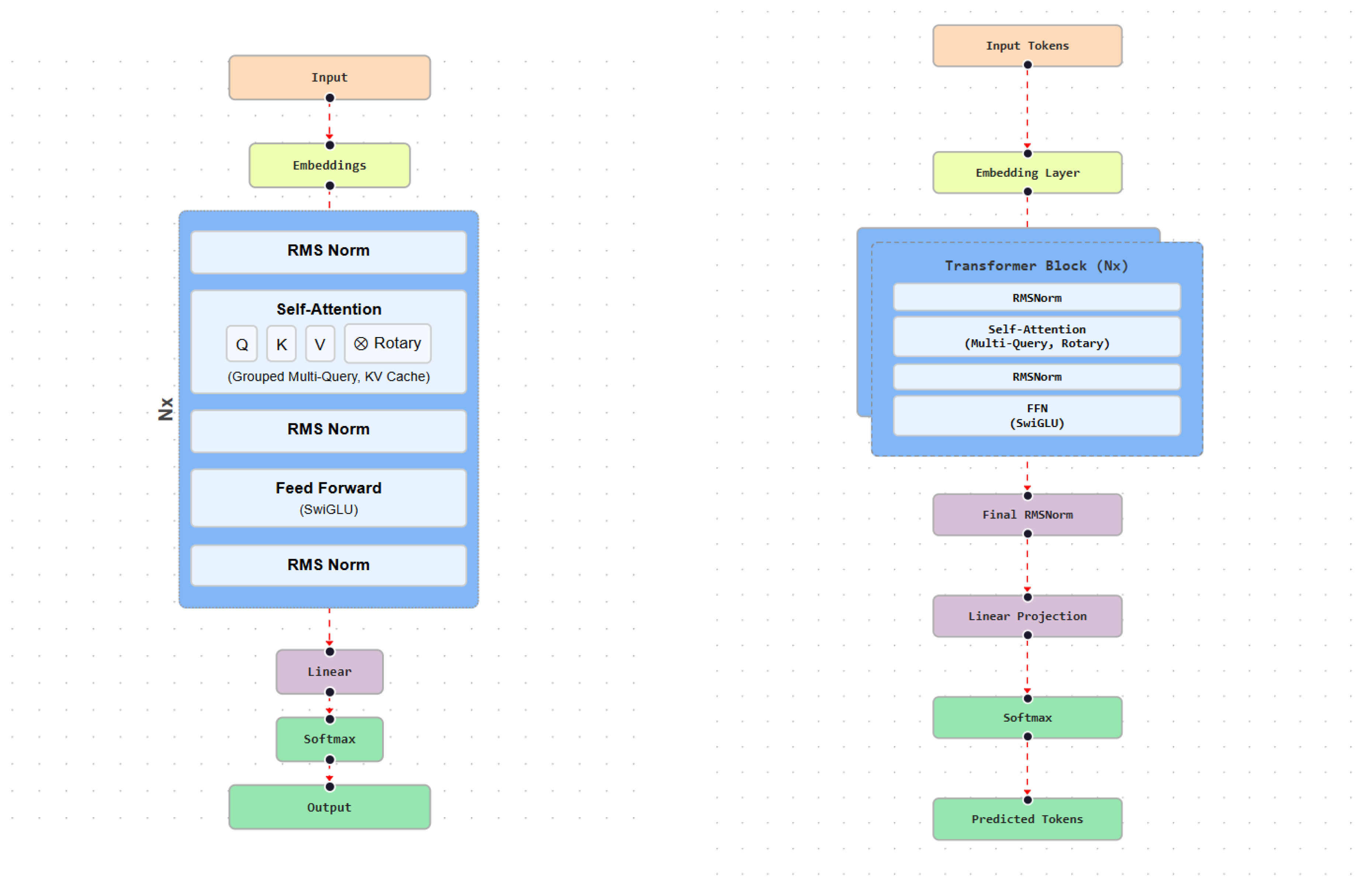

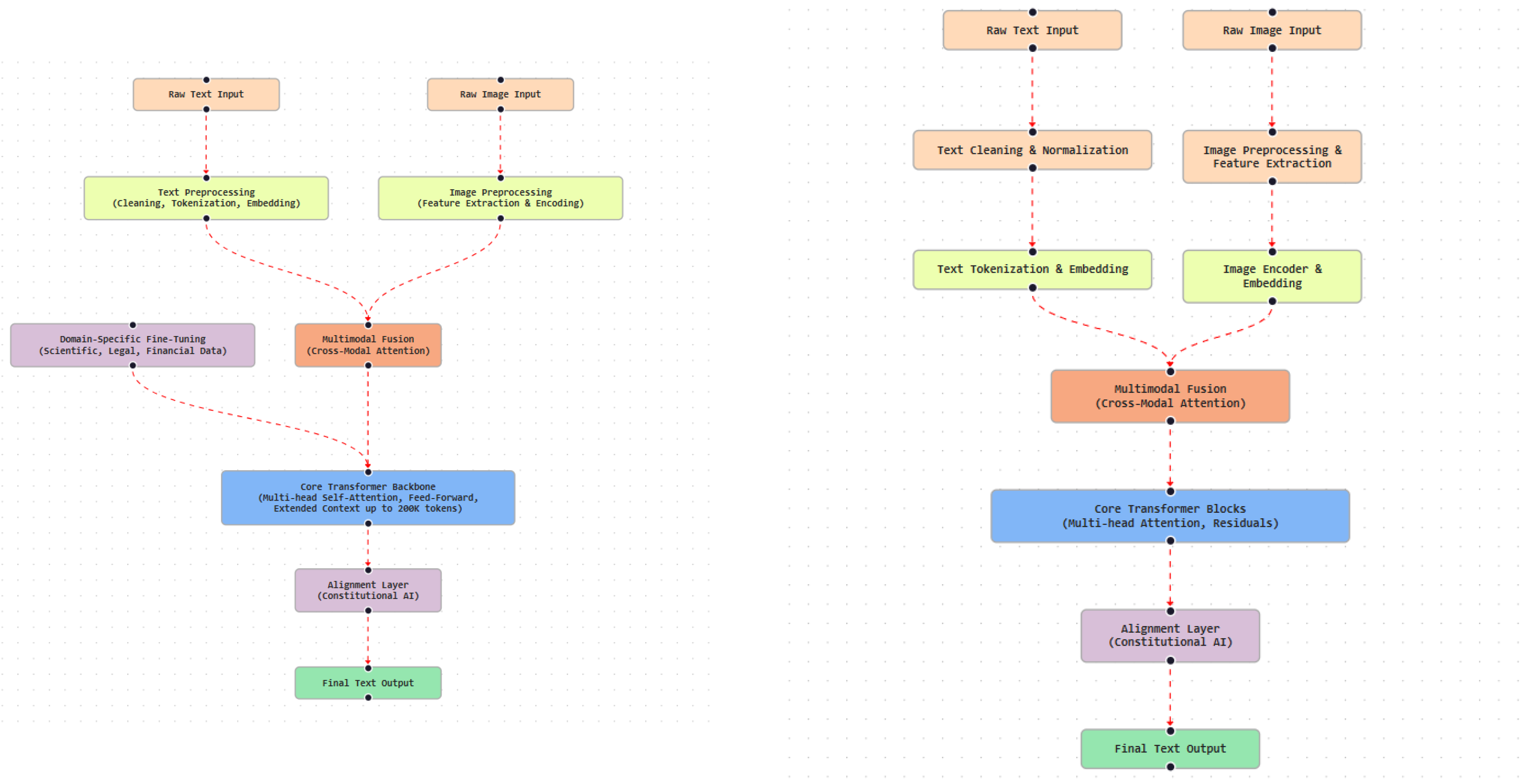

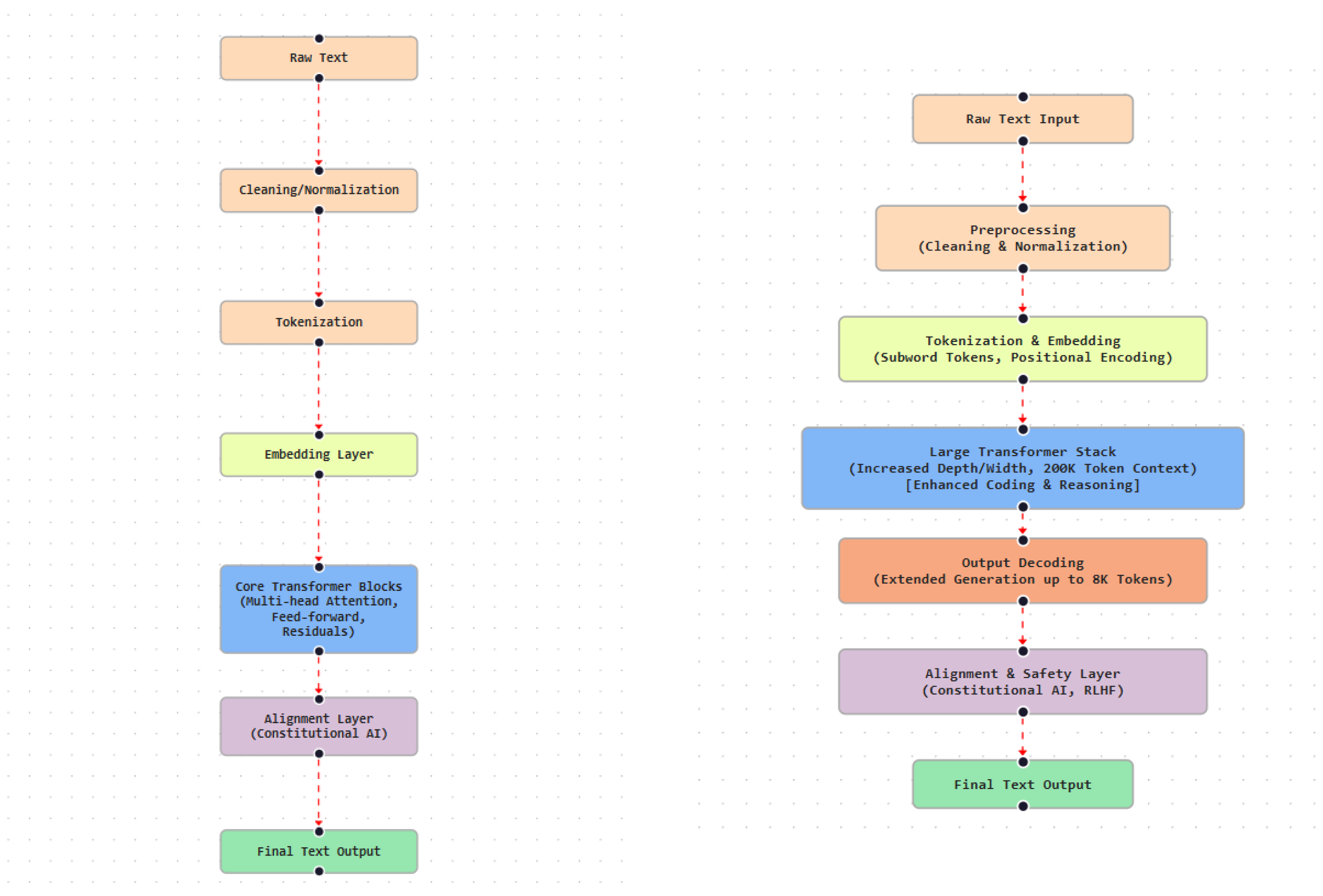

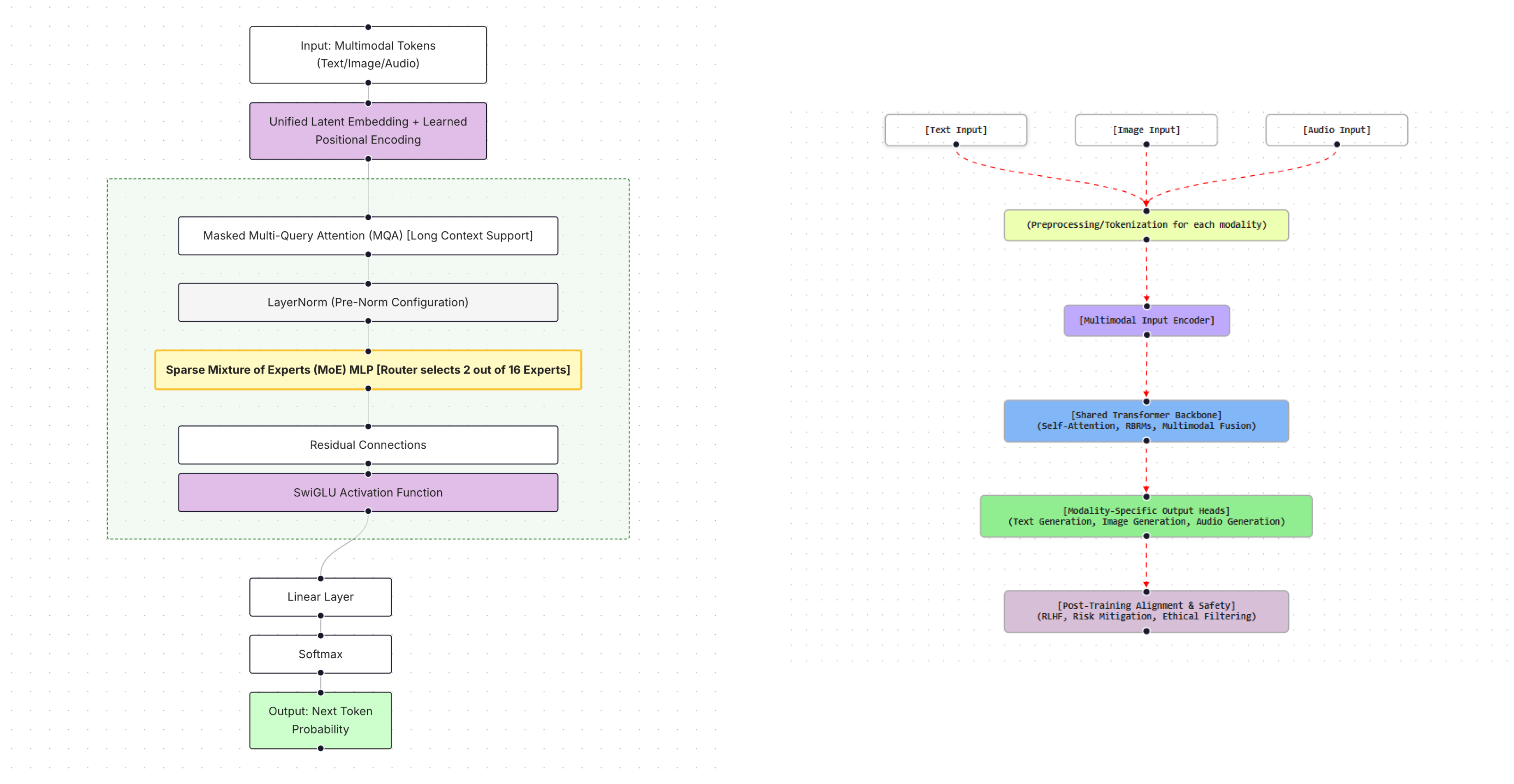

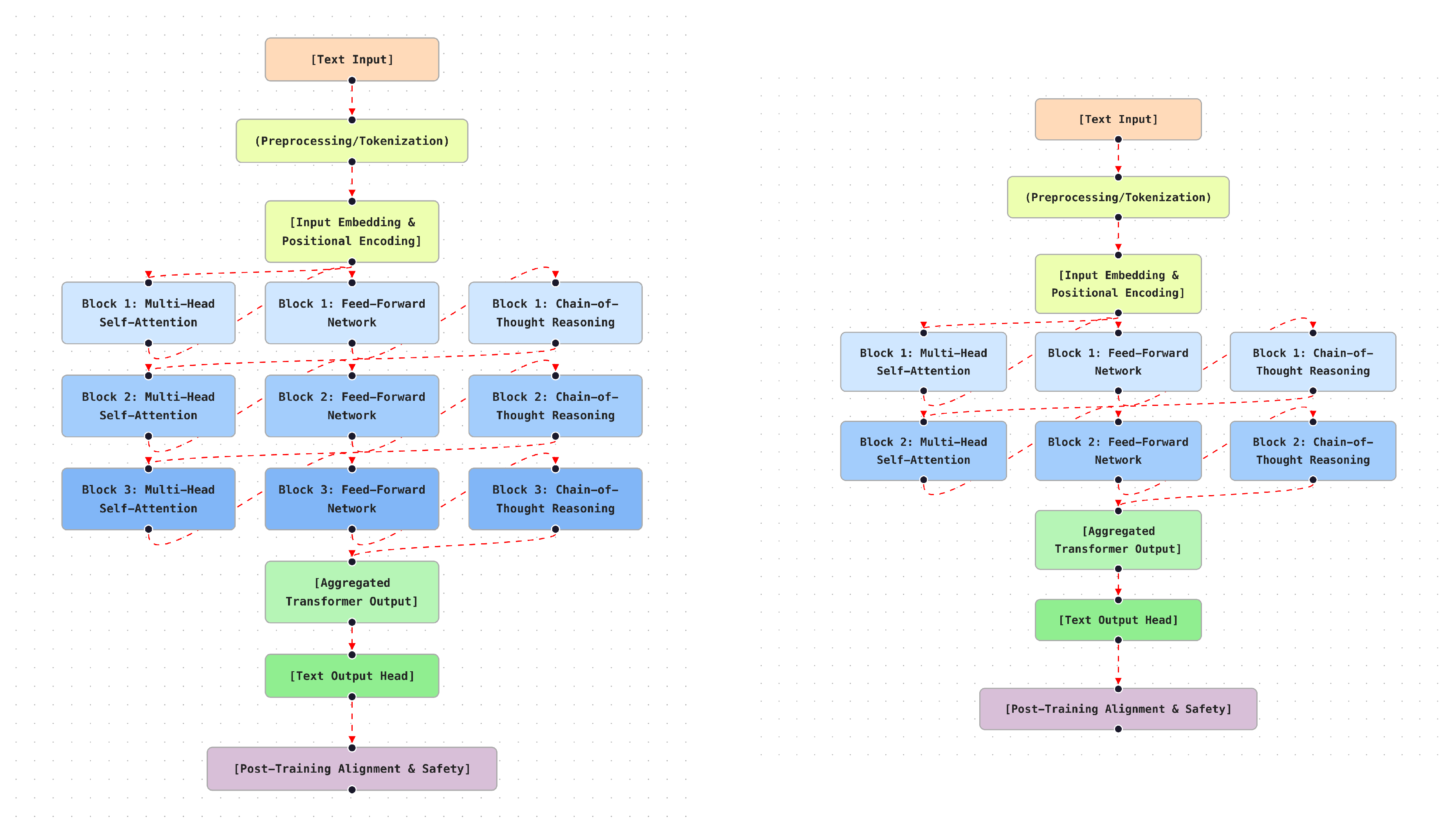

3.1. Transformer Evolution and Architectural Enhancements

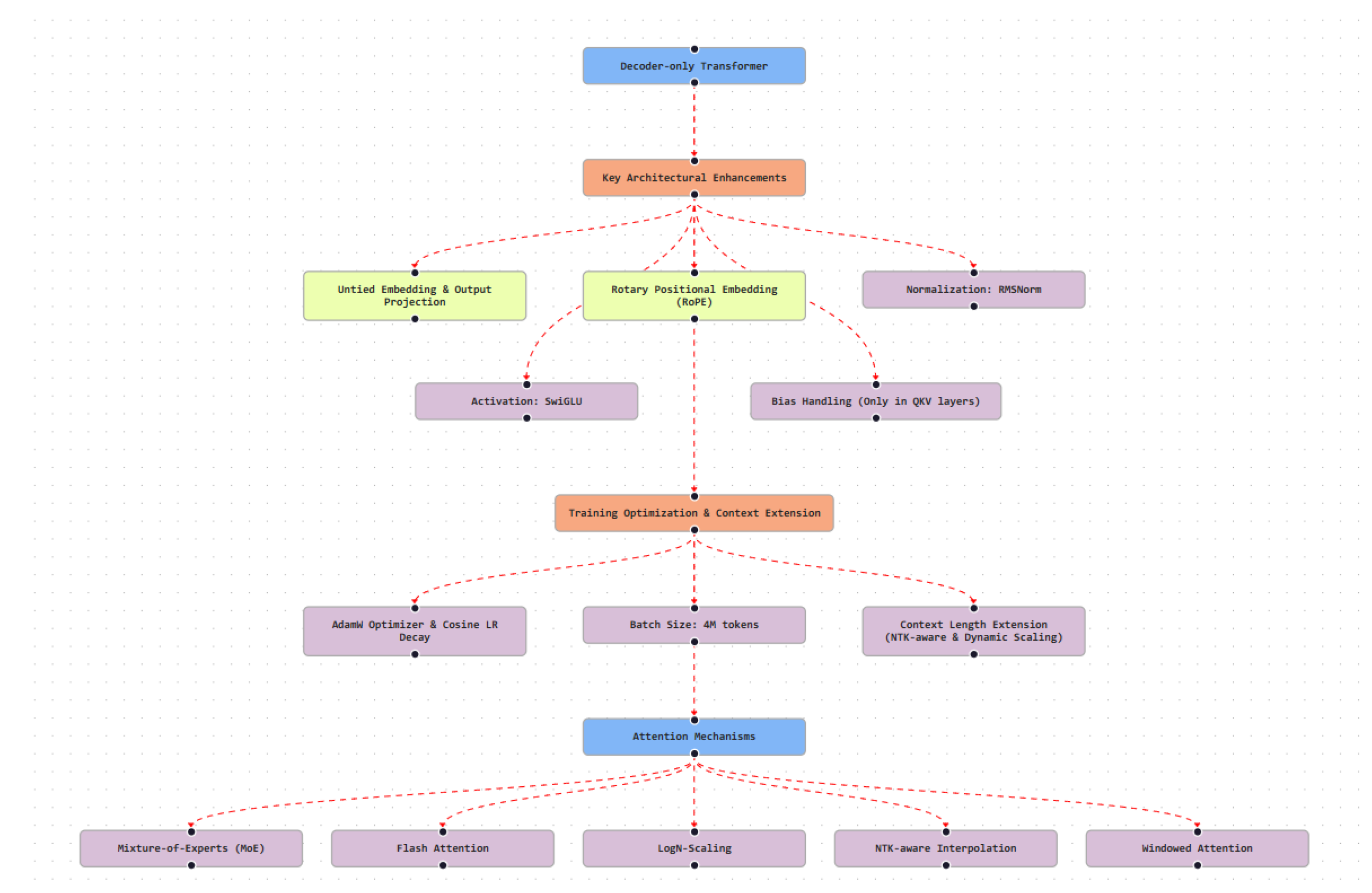

- Grouped Query Attention (GQA), adopted in LLaMA 2 [3], which reduces inference cost by sharing key–value projections across groups of attention heads.

- Mixture-of-Experts (MoE) routing, employed in Gemini [4], which activates only a small subset of expert subnetworks per token so that total parameter count can grow without a proportional increase in per-token compute.

- Multiquery and Multigroup Attention, used in Falcon [18], which lowers key–value cache memory requirements during inference.

- Multi-Head Latent Attention (MLA), introduced in DeepSeek-V2 and carried into DeepSeek-V3 and DeepSeek-R1 [14], which applies low-rank compression to the key–value representations to reduce cache storage and memory traffic during inference.
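The sparse-activation idea behind MoE routing can be illustrated with a minimal top-k gating sketch. This is a generic illustration, not the router of Gemini or any other specific model, whose internals are not public; the function names (`top_k_route`, `moe_forward`), the choice of `k=2`, and the toy experts are all assumptions made for the example.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts for one token.

    Returns [(expert_index, gate_weight), ...]. Gate weights are the
    softmax probabilities of the selected experts, renormalized to sum to 1.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Gate-weighted sum of the outputs of the selected experts only.

    Experts that are not selected are never called, which is where the
    compute saving of sparse activation comes from.
    """
    out = [0.0] * len(x)
    for idx, gate in top_k_route(router_logits, k):
        y = experts[idx](x)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out

# Toy experts (hypothetical): each one scales its input by a constant.
experts = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, -1.0, 2.0]          # router scores for one token
routes = top_k_route(logits, k=2)        # experts 1 and 3 tie for the top score
y = moe_forward([1.0, 1.0], experts, logits, k=2)
print(routes, y)
```

Production routers typically add a load-balancing term so that tokens are spread across experts; that part is omitted here for brevity.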

3.2. Core Architectural Components

3.2.1. Positional Encodings

3.2.2. Self-Attention (SA) Variants

- Multiquery Attention, used in Falcon and Qwen, which shares key and value projections across heads to reduce memory usage.

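- GQA, employed in LLaMA 2, which shares each key–value head across a group of query heads and thereby sits between full multi-head attention and Multiquery Attention in both memory cost and representational capacity.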

- Cross-Modal Attention, implemented in Gemini, which integrates textual, visual, and auditory inputs within a unified attention framework.
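The memory effect of sharing key and value projections can be checked with simple arithmetic. The sketch below estimates the key–value cache size for one sequence and shows how query heads map onto shared key–value heads. The configuration (32 layers, 32 query heads, head dimension 128, 4096 tokens, 2 bytes per value) is an illustrative assumption and does not describe any specific model listed above; both function names are hypothetical.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Approximate KV-cache size in bytes for a single sequence.

    The leading factor 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

def kv_head_for_query_head(q_head, n_q_heads, n_kv_heads):
    """Index of the key-value head that a given query head reads from."""
    if n_q_heads % n_kv_heads != 0:
        raise ValueError("n_q_heads must be a multiple of n_kv_heads")
    group_size = n_q_heads // n_kv_heads
    return q_head // group_size

MIB = 2 ** 20
# Same hypothetical model, three attention variants that differ only
# in the number of key-value heads.
for name, n_kv in [("multi-head", 32), ("grouped-query", 8), ("multiquery", 1)]:
    size = kv_cache_bytes(n_layers=32, n_kv_heads=n_kv, head_dim=128, seq_len=4096)
    print(f"{name:>13}: {size // MIB} MiB")
# multi-head: 2048 MiB, grouped-query: 512 MiB, multiquery: 64 MiB
```

With 8 key–value heads the cache is a quarter of the multi-head size, and with a single shared head it is 1/32, which is why these variants matter most for long contexts and large batch sizes.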

3.2.3. Activation Functions

3.2.4. Normalization Strategies

3.3. Optimization and Alignment Techniques

- AdamW optimization, typically combined with cosine learning-rate decay and linear warm-up schedules [21].

- Mixed-precision training, using formats such as bfloat16 or FP8 to improve training efficiency while maintaining numerical stability.

- Gradient clipping and Z-loss regularization, applied in large-scale models such as Falcon-180B to stabilize optimization under large batch sizes [18].

- Instruction tuning and RLHF, employed by models including GPT-4, Claude, and DeepSeek to align outputs with human preferences and safety constraints [16].
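Two of the items above have compact closed forms: the warm-up plus cosine-decay learning-rate schedule and the Z-loss regularizer. The following is a minimal sketch under stated assumptions: the function names, the default coefficient `1e-4`, and the example hyperparameters are illustrative choices, not values taken from any of the cited models. Z-loss is written here as a penalty on the squared log-partition function of the output logits, which is the commonly used formulation.

```python
import math

def lr_at_step(step, peak_lr, warmup_steps, total_steps, min_lr=0.0):
    """Linear warm-up to peak_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        # Ramp linearly so that the last warm-up step reaches peak_lr.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

def z_loss(logits, coeff=1e-4):
    """Auxiliary penalty coeff * (log Z)^2, where Z = sum(exp(logits)).

    It discourages the softmax normalizer from drifting far from 1,
    which helps keep logits in a numerically well-behaved range.
    """
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return coeff * log_z ** 2

# Hypothetical run: 100 steps, 10 of them warm-up, peak learning rate 1e-3.
schedule = [lr_at_step(s, 1e-3, 10, 100) for s in range(101)]
print(schedule[0], schedule[9], schedule[55], schedule[100])
print(z_loss([0.0, 0.0], coeff=1.0))
```

In practice the Z-loss term is added to the cross-entropy loss, and the schedule value is passed to the optimizer at every step.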

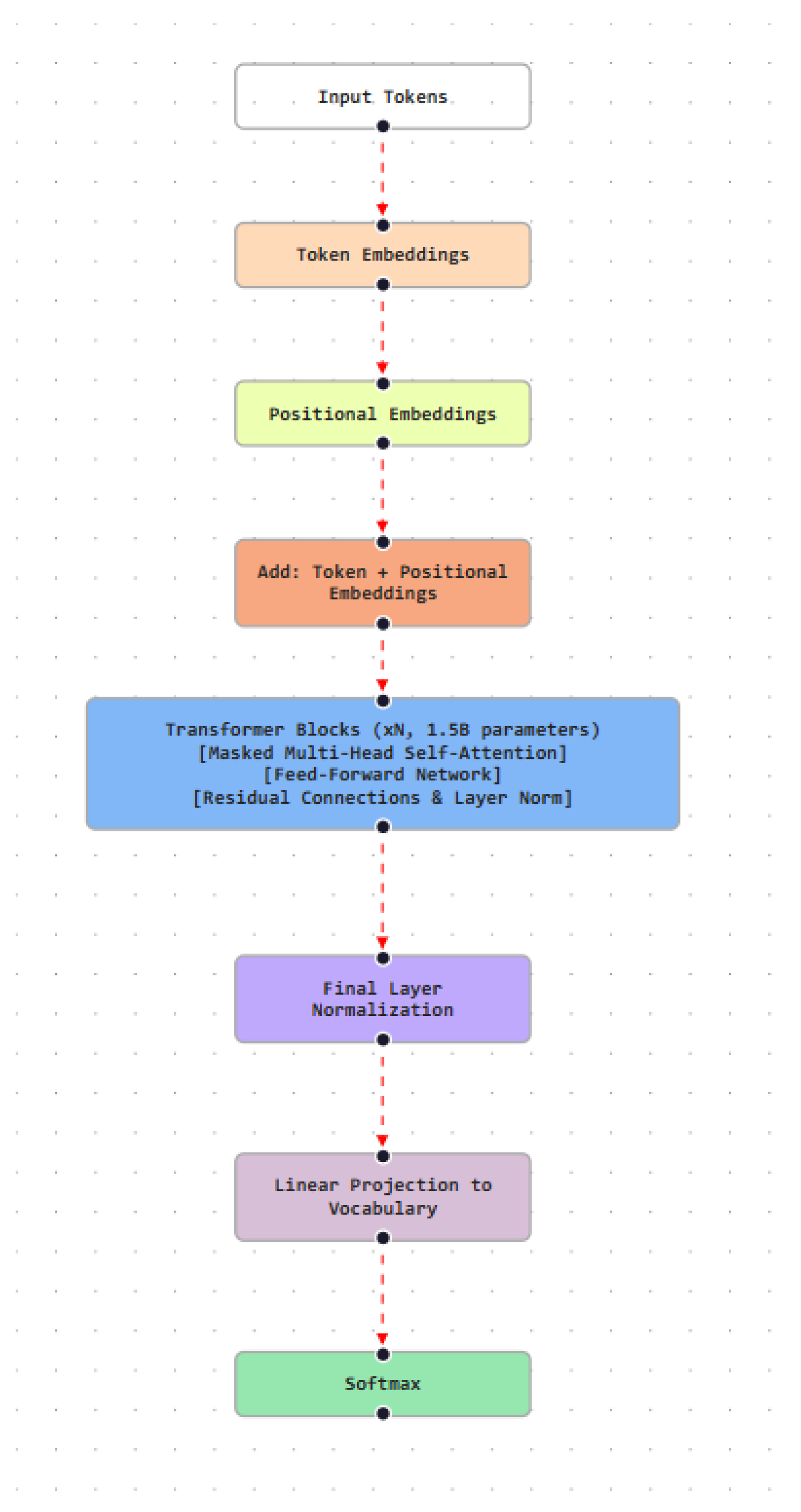

4. GPT-2 Model

4.1. Model Architecture

4.2. Data and Preprocessing

4.3. Training and Alignment

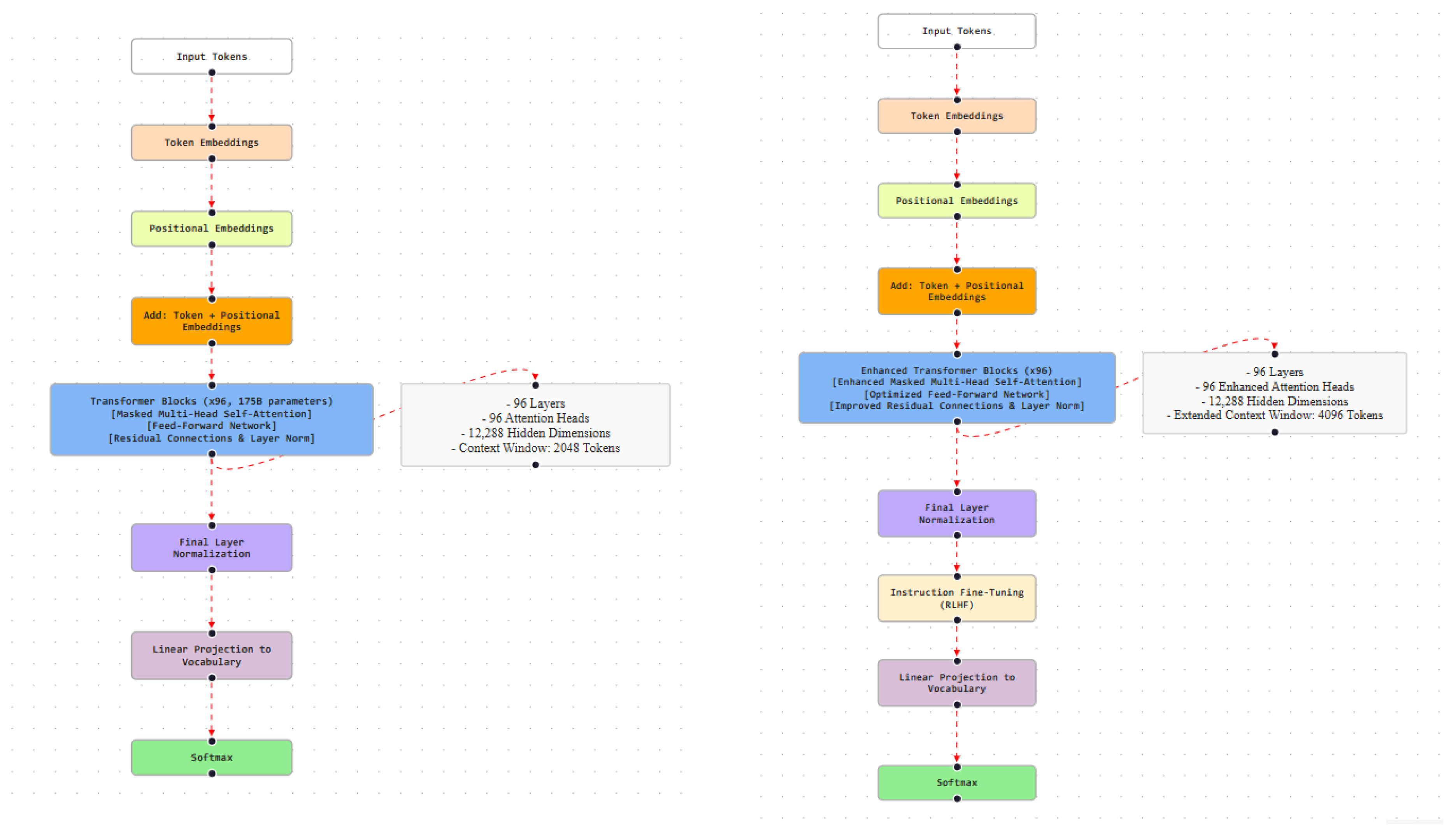

5. GPT-3 Model

5.1. Model Architecture

5.2. Data and Preprocessing

5.3. Training and Alignment

6. GPT-3.5 Model

6.1. Model Architecture

6.2. Training and Alignment

7. GPT-4 Model

7.1. Model Architecture

7.2. Data and Preprocessing

7.3. Training and Alignment

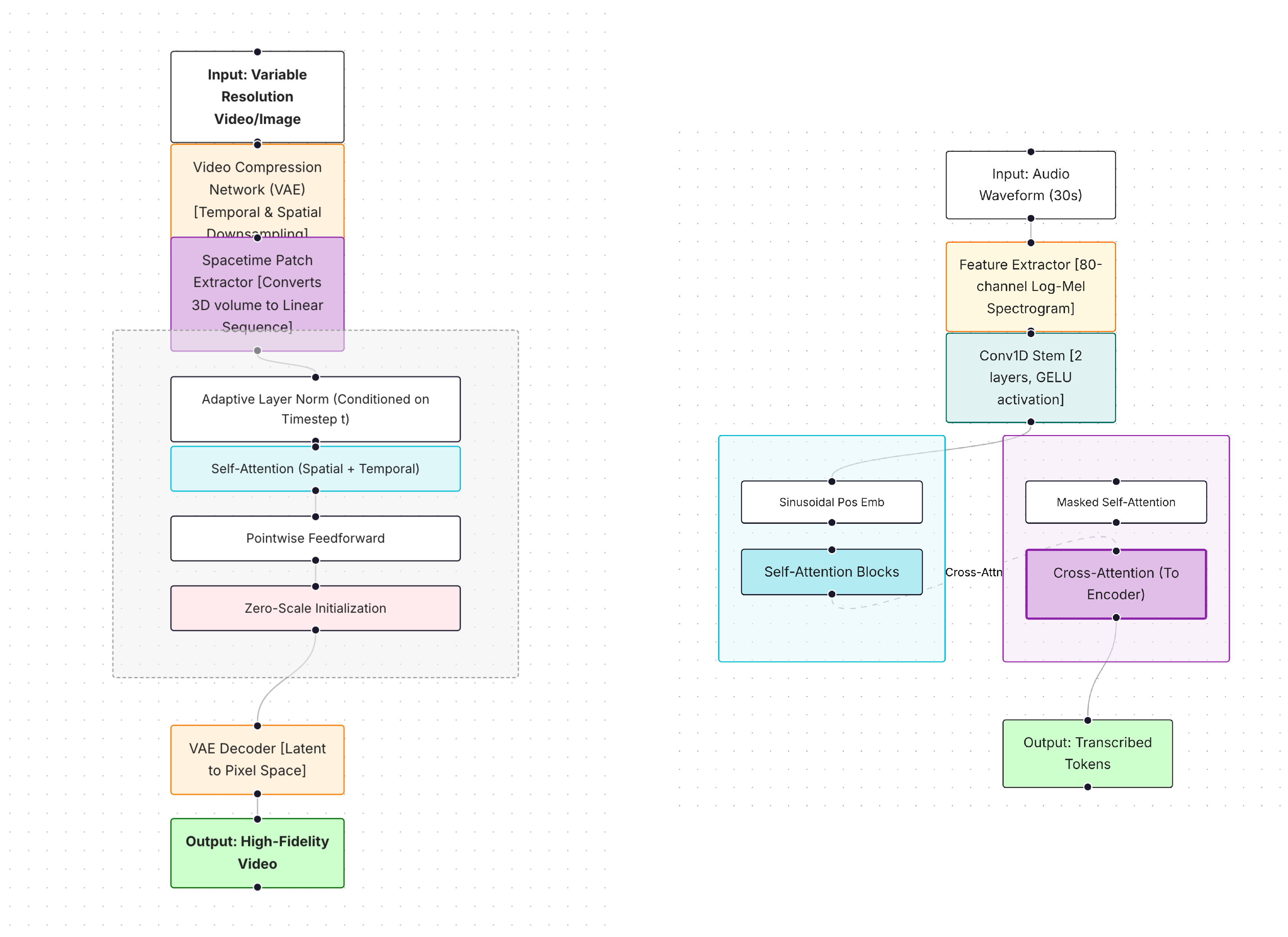

8. GPT-4o Model

8.1. Training and Alignment

8.2. Safety and Compliance Measures

9. GPT-O1 Model

9.1. Model Architecture

9.2. Training and Alignment

9.3. Data Processing and Safety

9.4. Safety and Model Variants

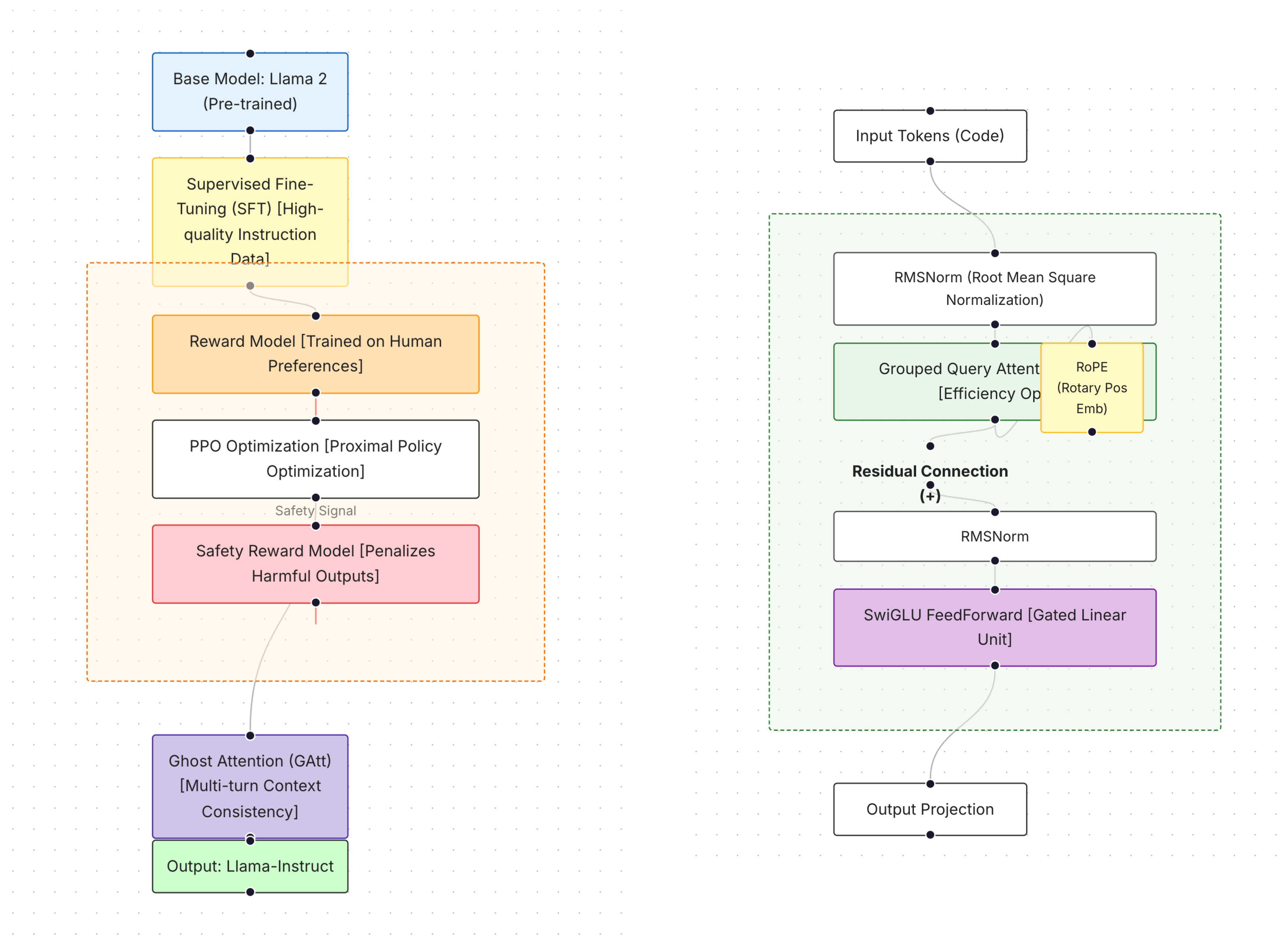

10. LLaMA 2 Model

10.1. Model Architecture and Innovations

10.2. Model Configurations

10.3. Training Data and Optimization

10.4. Alignment and Instruction Retention

10.5. Applications

10.6. Safety, Bias, and Trust

11. Google’s Gemini

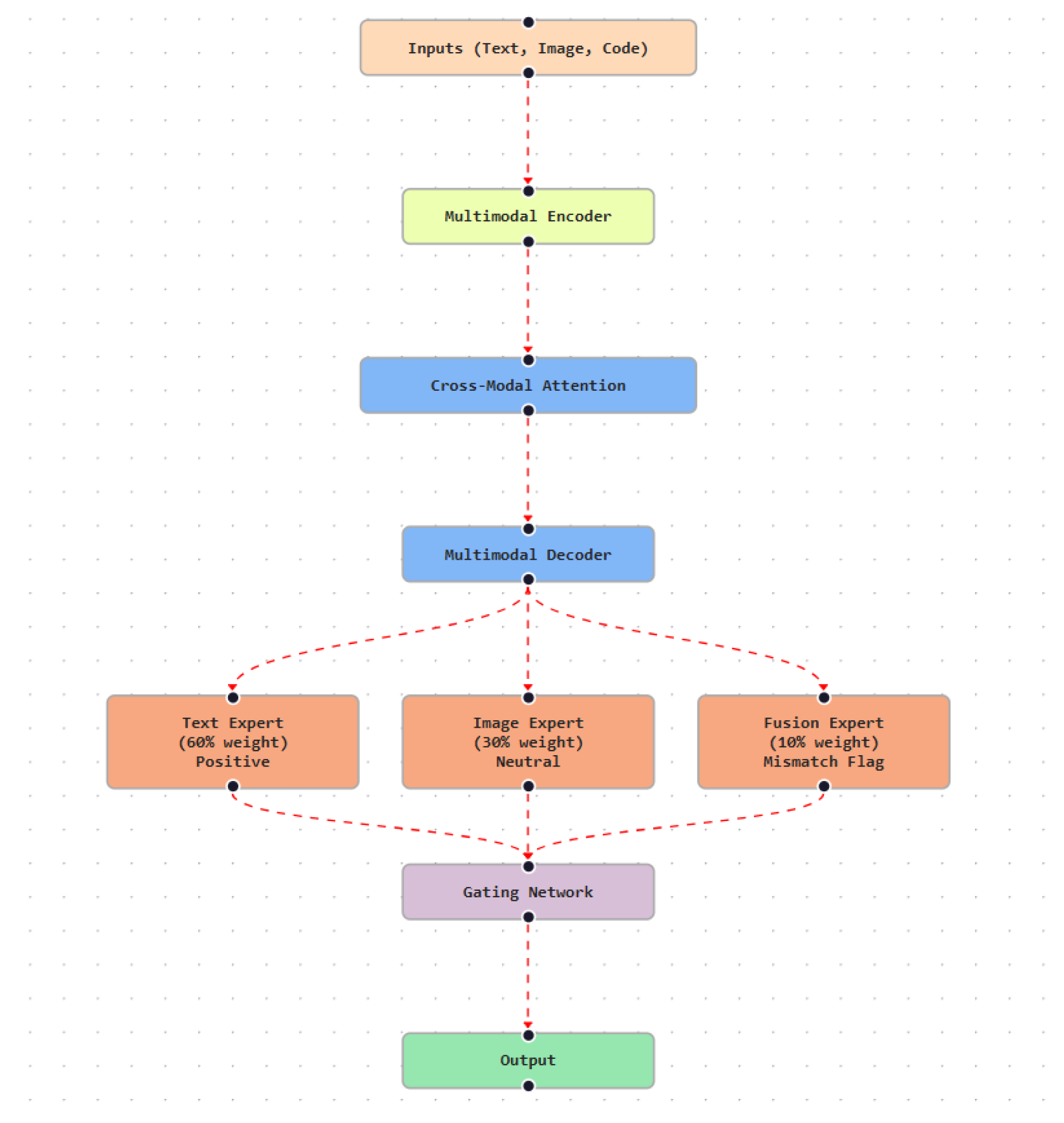

11.1. Model Architecture and Modular Design

11.2. Model Configurations and Training Data

11.3. Attention Mechanisms and Efficiency Optimizations

11.4. Capabilities and Applications

11.5. Safety, Privacy, and Trust

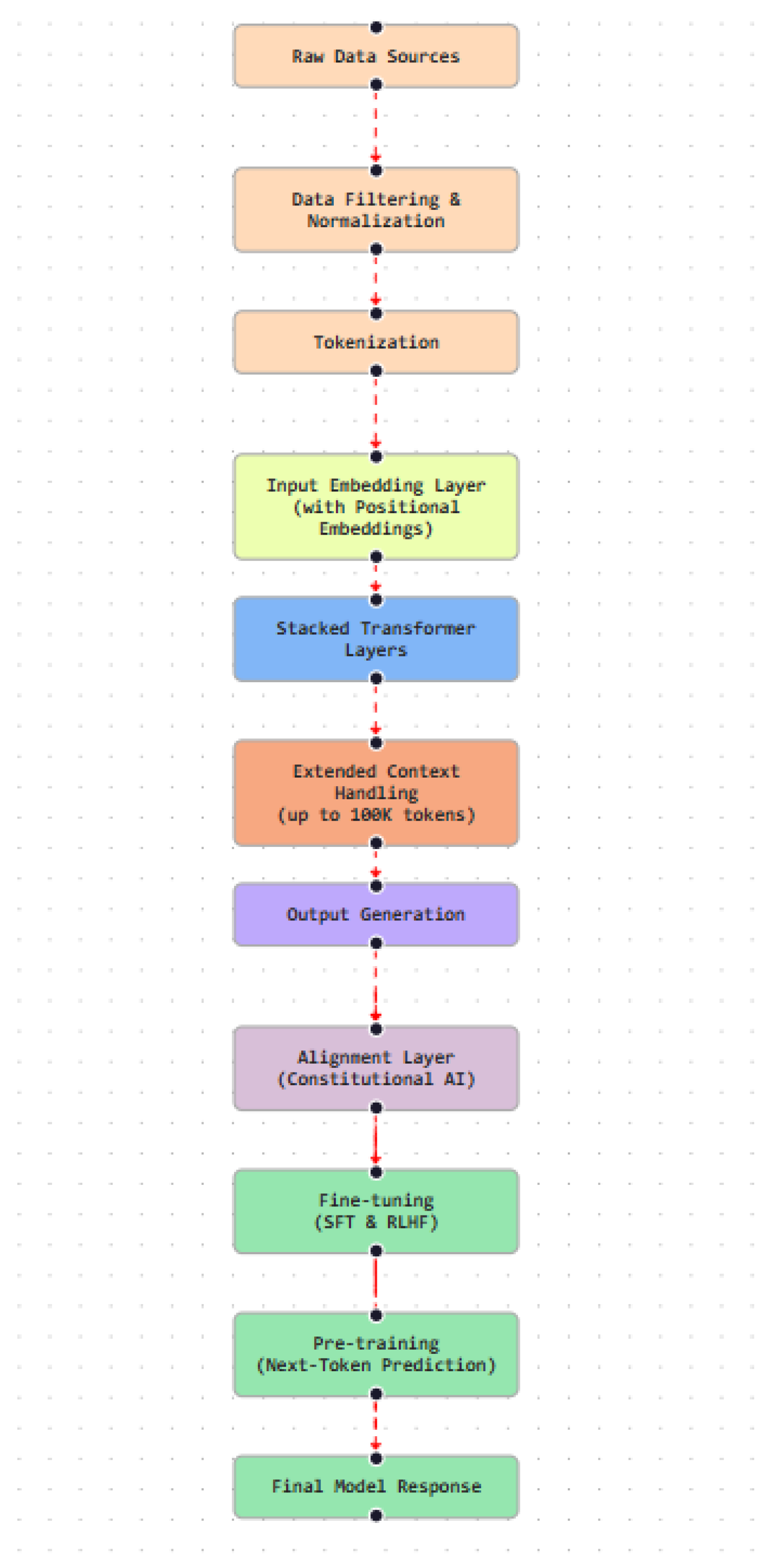

12. Claude

12.1. Claude 2

12.2. Claude 3 Model Family

12.3. Claude 3.5 and Capabilities

12.4. Capabilities and Applications

12.5. Safety, Alignment, and Trust

13. Falcon AI

13.1. Falcon-7B

13.2. Falcon-40B

13.3. Falcon-180B

14. Falcon 2 Series

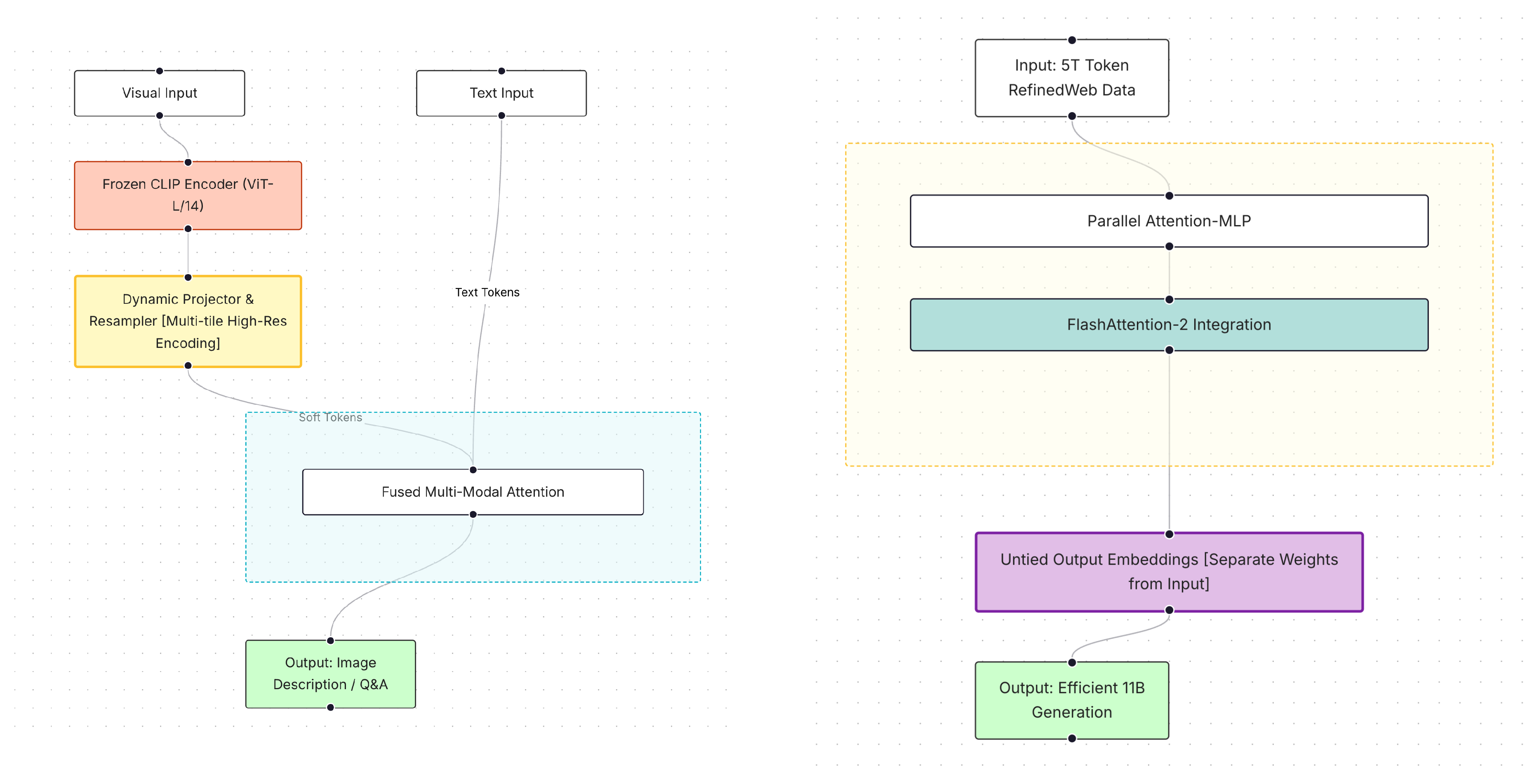

14.1. Falcon2-11B

14.2. Falcon2-11B VLM

15. Falcon Mamba 7B

15.1. Data and Training

16. Falcon 3 Series

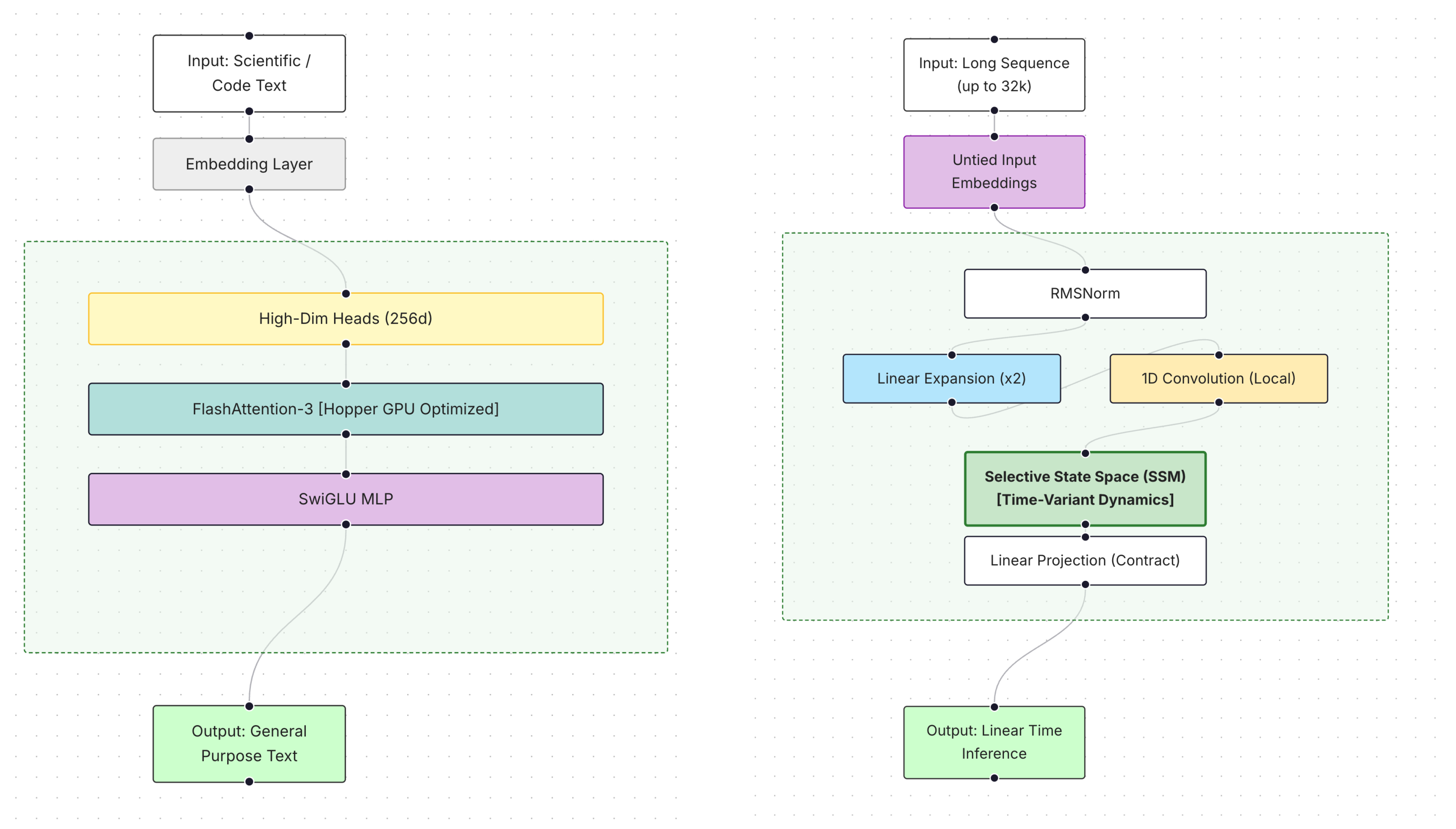

16.1. Falcon3-1B

16.2. Falcon3-3B-Base

16.3. Falcon3-Mamba-7B-Base

16.4. Falcon3-7B-Base

16.5. Falcon3-10B-Base

16.6. Deployment and Privacy Considerations

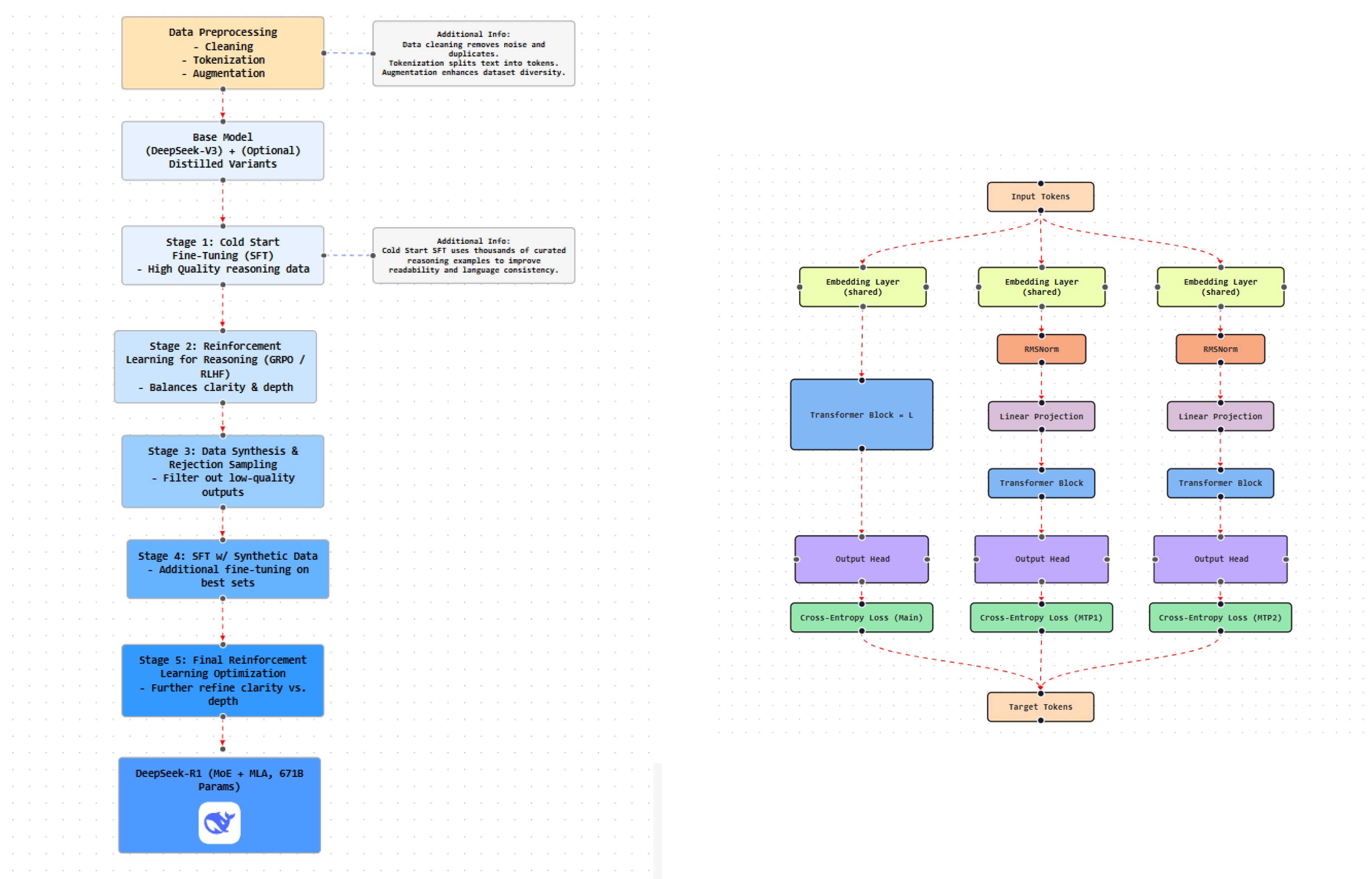

17. DeepSeek-R1

17.1. Model Architecture and Training Pipeline

17.2. Model Evolution and Performance

17.3. Data Processing and Optimization

17.4. MoEs and Attention Design

17.5. Generation Characteristics

18. DeepSeek-V3

18.1. Architectural Innovations

18.2. Training Methodology

18.3. Data Pipeline

- Enhanced coverage of programming, mathematics, and multilingual content.

- Byte-level BPE with a 128K vocabulary and optimized pretokenization.

- Fill-in-the-Middle (FIM) training to improve interpolation.

- Token boundary randomization to reduce few-shot bias.
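Fill-in-the-Middle training can be sketched as a data transformation: a document is cut into a prefix, a middle, and a suffix, and then rearranged so that the model sees the prefix and suffix before it has to produce the middle. The example below is a character-level illustration only. The sentinel strings `<PRE>`, `<SUF>`, and `<MID>`, the function name, and the default `fim_rate` are placeholders chosen for this sketch and are not the actual special tokens or settings of DeepSeek-V3.

```python
import random

def to_fim_example(text, rng, fim_rate=0.1, pre="<PRE>", suf="<SUF>", mid="<MID>"):
    """With probability fim_rate, rewrite text in prefix-suffix-middle order.

    Otherwise the text is returned unchanged, so most examples remain
    ordinary left-to-right training data.
    """
    if len(text) < 2 or rng.random() >= fim_rate:
        return text
    # Two distinct cut points split the text into prefix / middle / suffix.
    i, j = sorted(rng.sample(range(len(text) + 1), 2))
    prefix, middle, suffix = text[:i], text[i:j], text[j:]
    # The middle goes last, so standard next-token prediction learns infilling.
    return f"{pre}{prefix}{suf}{suffix}{mid}{middle}"

rng = random.Random(0)
source = "def add(a, b): return a + b"
print(to_fim_example(source, rng, fim_rate=1.0))
```

Because only the ordering of the text changes, the same autoregressive objective and model architecture can be used for both regular and infilling examples.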

18.4. Performance and Capabilities

18.5. Safety and Alignment

19. Qwen AI

19.1. Architecture and Core Design

19.2. Training Strategy and Data Pipeline

19.3. Model Variants and Configurations

19.4. Capabilities and Performance

19.5. Safety, Privacy, and Bias

20. Comparative Analysis of LLMs

21. Discussion

21.1. Trends in Scaling, Architecture, and Safety

21.2. Trade-Offs Between Model Complexity and Performance

21.3. Open-Source and Proprietary Development Paradigms

22. Future Directions

22.1. Transparent and Standardized Evaluation

22.2. Cross-Lingual Generalization and Low-Resource Settings

22.3. Long-Context Reasoning and Memory-Augmented Models

22.4. Governance and Responsible Deployment

23. Conclusions

References

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I.; et al. Language models are unsupervised multitask learners. OpenAI blog 2019, 1, 9.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in neural information processing systems 2020, 33, 1877–1901.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Lample, G.; et al. LLaMA 2: Open Foundation and Fine-Tuned Chat Models. arXiv preprint arXiv:2307.09288 2023. [CrossRef]

- Rane, A.; Choudhary, P.; Rane, P. Gemini 1.5 Technical Overview. https://arxiv.org/abs/2403.05530, 2024.

- Anthropic. Claude 3 Model Family. Anthropic Blog 2024.

- AI, D. DeepSeek-R1: Reinforcement Learning Powered Reasoning. https://www.deepseek.com/blog/deepseek-r1, 2024.

- AI, D. DeepSeek-V3: Multimodal Capabilities and Efficient Memory Use. https://www.deepseek.com/blog/deepseek-v3, 2024.

- Cloud, A. Qwen: Language Models for Multilingual AI. https://www.alibabacloud.com/help/en/model-studio/what-is-qwen-llm, 2024.

- (TII), T.I.I. Falcon: Open-Source Large Language Models. TII Technical Report 2023.

- OpenAI. GPT-4o: OpenAI’s Multimodal Flagship. https://openai.com/index/hello-gpt-4o/, 2024.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems, 2017.

- OpenAI. GPT-4 Technical Report. arXiv preprint arXiv:2303.08774 2023. [CrossRef]

- Bai, Y.; Kadavath, S.; Kundu, S.; Askell, A.; Kernion, J.; Jones, A.; Kaplan, J.; et al. Constitutional AI: Harmlessness from AI feedback. arXiv preprint arXiv:2212.08073 2022. [CrossRef]

- Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; Zhu, Q.; Ma, S.; Wang, P.; Bi, X.; et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 2025.

- Bommasani, R.; Hudson, D.A.; Adeli, E.; Altman, R.; Arora, S.; von Arx, S.; Bernstein, M.S.; Bohg, J.; Bosselut, A.; Brunskill, E.; et al. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258 2021. [CrossRef]

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training language models to follow instructions with human feedback. arXiv preprint arXiv:2203.02155 2022. [CrossRef]

- Alayrac, J.B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; Lenc, K.; Mensch, A.; Millican, K.; Reynolds, M.; et al. Flamingo: a visual language model for few-shot learning. Advances in neural information processing systems 2022, 35, 23716–23736.

- Almazrouei, E.; Alobeidli, H.; Alshamsi, A.; Cappelli, A.; Cojocaru, R.; Hesslow, D.; Launay, J.; Malartic, Q.; Mazzotta, D.; Noune, B.; et al. The Falcon Series of Open Language Models. ArXiv (Cornell University) 2023. [CrossRef]

- Su, J.; Lu, Y.; Pan, S.; Murtadha, A.; Wen, B.; Liu, Y. RoFormer: Enhanced Transformer with Rotary Position Embedding 2021. [CrossRef]

- Zhang, B.; Sennrich, R. Root mean square layer normalization. Advances in Neural Information Processing Systems 2019, 32.

- Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. arXiv preprint arXiv:1711.05101 2017.

- Roumeliotis, K.I.; Tselikas, N.D. Chatgpt and open-ai models: A preliminary review. Future Internet 2023, 15, 192. [CrossRef]

- Achiam, J.; et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774 2023. [CrossRef]

- OpenAI. GPT-4 Research. https://openai.com/index/gpt-4-research/, 2023.

- OpenAI. GPT-4o System Card. https://openai.com/index/gpt-4o-system-card/, 2024.

- OpenAI. OpenAI O1 System Card. https://cdn.openai.com/o1-system-card.pdf#page=16, 2024. Accessed: 2024-10-30.

- Bhati, D.; Neha, F.; Guercio, A.; Amiruzzaman, M.; Kasturiarachi, A.B. Diffusion Model and Generative AI for Images. In A Beginner’s Guide to Generative AI: An Introductory Path to Diffusion Models, ChatGPT, and LLMs; Springer Nature Switzerland: Cham, 2026; pp. 135–184. [CrossRef]

- Meta. Introducing LLaMA: A foundational, 65-billion-parameter large language model. https://ai.meta.com/blog/large-language-model-llama-meta-ai/, 2023.

- Meta. Meta and Microsoft Introduce the Next Generation of Llama. https://ai.meta.com/blog/llama-2/, 2023.

- Portakal, E. LLaMA 2 Use Cases. https://textcortex.com/post/llama-2-use-cases, 2023.

- Xu, H.; Kim, Y.J.; Sharaf, A.; Awadalla, H.H. Advanced Language Model-based Translator (ALMA): A Fine-tuning Approach for Translation Using LLaMA-2. arXiv preprint arXiv:2309.11674 2023.

- Rozière, B.; Gehring, J.; Gloeckle, F.; Sootla, S.; Gat, I.; Tan, X.E.; Adi, Y.; Liu, J.; Sauvestre, R.; Remez, T.; et al. Code Llama: Open Foundation Models for Code. arXiv preprint arXiv:2308.12950 2023.

- Ahmed, W. Meta’s Llama 2 Model & Its Capabilities. https://www.linkedin.com/pulse/metas-llama-2-model-its-capabilities-waqas-ala1f/, 2023.

- Meta. Code Llama: An AI model for code generation and discussion. https://about.fb.com/news/2023/08/code-llama-ai-for-coding/, 2024.

- Schmid, P.; Sanseviero, O.; Cuenca, P.; Tunstall, L.; von Werra, L.; Ben Allal, L.; Zucker, A.; Gante, J. Code Llama: Llama 2 learns to code. https://huggingface.co/blog/codellama, 2023.

- Van Veen, D.; Van Udekemeier, L.; Delbrouck, J.B.; Aali, A.; Bluethgen, C.; et al. Clinical Text Summarization: Adapting Large Language Models Can Outperform Human Experts. Nature Medicine 2023.

- Google. Introducing Gemini: Google DeepMind’s Next-Generation AI. https://blog.google/technology/ai/google-gemini-ai/#sundar-note, 2023.

- Unite.ai. Google’s Multimodal AI Gemini: A technical deep dive. https://www.unite.ai/googles-multimodal-ai-gemini-a-technical-deep-dive/, 2023.

- Google Cloud. Essentials of Gemini: The new era of AI. https://medium.com/google-cloud/essentials-of-gemini-the-new-era-of-ai-efca53293341, 2023.

- Swipe Insight. Google’s Gemini training data sources: A deep dive into tech companies’ transparency. https://web.swipeinsight.app/posts/google-s-gemini-training-data-sources-a-deep-dive-into-tech-companies-transparency-5846, 2023.

- Islam, R.; Ahmed, I. Gemini: The most powerful LLM—Myth or truth. In Proceedings of the 5th Information Communication Technologies Conference, 2024. [CrossRef]

- Pande, A.; Patil, R.; Mukkemwar, R.; Panchal, R.; Bhoite, S. Comprehensive study of Google Gemini and text generating models: Understanding capabilities and performance. Grenze International Journal of Engineering and Technology 2024. June Issue.

- Evonence. Gemini: The unrivaled platform for language translation. https://www.evonence.com/blog/gemini-the-unrivaled-platform-for-language-translation, 2024.

- Anthropic. Model Card and Evaluations for Claude Models. https://www-cdn.anthropic.com/bd2a28d2535bfb0494cc8e2a3bf135d2e7523226/Model-Card-Claude-2.pdf, 2023.

- Bai, Y.; et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv preprint arXiv:2204.05862 2022. [CrossRef]

- Anthropic. The Claude 3 Model Family: Opus, Sonnet, Haiku. https://www-cdn.anthropic.com/de8ba9b01c9ab7cbabf5c33b80b7bbc618857627/Model_Card_Claude_3.pdf, 2024.

- Penedo, G.; et al. The RefinedWeb dataset for Falcon LLM: outperforming curated corpora with web data, and web data only. arXiv preprint arXiv:2306.01116 2023. [CrossRef]

- Tian, X. Fine-tuning Falcon LLM 7B/40B. Lambda.ai Blog, 2023. https://lambda.ai/blog/fine-tuning-falcon-llm-7b/40b.

- Malartic, Q.; et al. Falcon2-11b technical report. arXiv preprint arXiv:2407.14885 2024. [CrossRef]

- Zuo, J.; et al. Falcon mamba: The first competitive attention-free 7b language model. arXiv preprint arXiv:2410.05355 2024. [CrossRef]

- Team, F.L. The Falcon 3 Family of Open Models, 2024.

- Stattelman, M. FALCONS.AI. https://falcons.ai/privacypolicy.html, 2021.

- Wenfeng, L. DeepSeek AI founder Liang Wenfeng: The entrepreneur behind China’s AI ambitions. https://apnews.com/article/deepseek-founder-liang-wenfeng-china-ai-0673d5c39d90108189cc31b88d85b9f8, 2024.

- Neha, F.; Bhati, D. A Survey of DeepSeek Models. Authorea Preprints.

- Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; He, Y. Deepseek-R1: Incentivizing Reasoning Capability in LLMs via reinforcement learning. arXiv preprint arXiv:2501.12948 2025.

- Mumuni, A.; Mumuni, F. Automated data processing and feature engineering for deep learning and big data applications: A survey. arXiv preprint arXiv:2403.11395 2024. [CrossRef]

- NVIDIA. DeepSeek-R1 Model Card. https://build.nvidia.com/deepseek-ai/deepseek-r1/modelcard, 2025.

- AWS. DeepSeek-R1 Model Now Available in Amazon Bedrock Marketplace and Amazon SageMaker JumpStart. https://aws.amazon.com/blogs/machine-learning/deepseek-r1-model-now-available-in-amazon-bedrock-marketplace-and-amazon-sagemaker-jumpstart/, 2025.

- Lu, H.; Liu, W.; Zhang, B.; Wang, B.; Dong, K.; Liu, B.; Sun, J.; Ren, T.; Li, Z.; Yang, H.; et al. Deepseek-vl: towards real-world vision-language understanding. arXiv preprint arXiv:2403.05525 2024.

- Infosecurity Magazine. DeepSeek-R1 and AI Security: How DeepSeek Ensures Safe AI Deployment. https://www.infosecurity-magazine.com/news/deepseek-r1-security/, 2025.

- Alibaba Cloud. Qwen: Generative AI Model by Alibaba Cloud. https://www.alibabacloud.com/en/solutions/generative-ai/qwen, 2024.

| Model | Architecture | Purpose | Input/Output | Parameters | Special Features |
|---|---|---|---|---|---|
| GPT-2 | Transformer | Text generation | Text/Text | 1.5B | Early OpenAI decoder-only transformer (successor to GPT-1) |
| GPT-3 | Transformer | Text generation | Text/Text | 175B | Large-scale autoregressive pretraining |
| GPT-3.5 | Transformer | Enhanced generation | Text/Text | 175B | Instruction tuning and better reasoning |
| GPT-4 | Transformer-Multimodal | Text + vision | Txt+Img/Txt+Img | ~1.76T (unofficial estimate) | Larger context window, multimodal |
| DALL-E 2 | Enc-Dec Transformer | Text-to-image | Text/Image | 3.5B | CLIP-based latent inversion, photo-quality |
| DALL-E 3 | Transformer | Image generation | Text/Image | – | Better captions, prompt alignment |
| Sora | Diffusion Transformer | Text-to-video | Txt+Img/Vid | – | 3D coherence, simulation, editing |
| Whisper | Transformer (Audio) | Speech recognition | Audio/Text | 798M | Multilingual, VAD, translation |
| Codex | GPT-3 variant | Code generation | Text/Code | 12B | Multi-language programming |
| GPT-4o | Multimodal Transformer | Unified multimodal | Txt+Img+Aud/All | – | Emotion, real-time audio-vision-text |
| O1-preview | Transformer (CoT) | Reasoning + safety | Text/Text | – | Chain-of-thought, jailbreak resistance |
| O1-mini | Optimized Transformer | Fast reasoning | Text/Text | – | Low-latency, coding-focused |

| LLaMA 1 | LLaMA 2 |
|---|---|
| LLaMA 7B | LLaMA 2 7B |
| LLaMA 13B | LLaMA 2 13B |
| LLaMA 33B | LLaMA 2 70B |
| LLaMA 65B | – |

| Feature | DeepSeek-R1-Zero | DeepSeek-R1 |
|---|---|---|
| Training Paradigm | RL-only (GRPO) | Hybrid RL + SFT + Synthetic Data |
| Reasoning Quality | High but unstable | Optimized and consistent |
| Language Coherence | Limited | Structured and fluent |
| Data Sources | RL-generated | Human-labeled + synthetic |
| Output Style | Variable | Normalized |
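The GRPO entry in the table refers to Group Relative Policy Optimization, in which several responses are sampled for the same prompt and each response is scored relative to the others in its group rather than by a separate value network. A minimal sketch of that group-relative advantage is given below. The function name, the use of the population standard deviation, and the `eps` stabilizer are assumptions made for illustration; the clipped policy-gradient update and the KL penalty that complete the method are omitted.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize the rewards of one prompt's sampled responses.

    Each advantage is (reward - group mean) / (group std + eps), so responses
    better than the group average get a positive learning signal and worse
    ones a negative signal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical rule-based rewards for four sampled answers to one prompt
# (1.0 = correct final answer, 0.0 = incorrect).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

When every response in a group receives the same reward, all advantages are zero and the group contributes no gradient, which is one reason reward design and sampling diversity matter for this kind of training.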

| Model | Training Techniques | Context Length / Tokenization | Performance Benchmarks | Multimodal Capabilities | Safety & Alignment |
|---|---|---|---|---|---|
| GPT-4 / GPT-4o | SFT, RLHF, PPO, feedback loops | 128K (GPT-4o), BPE | SOTA on MMLU, HumanEval, DROP | Text, image, audio, video | RLHF, moderation APIs, content filters, human evaluation |
| GPT-O1 (preview/mini) | SFT, RLHF, chain-of-thought | 8K–32K, BPE | Logical reasoning, code pass@1 | Text | Reward models, jailbreak resistance, policy optimization |
| LLaMA 2 | SFT, RLHF, Ghost Attention | 4K, RoPE | BLEU/COMET, QA, summarization | Text | Safety reward models, RLHF, ToxiGen, TruthfulQA |
| Gemini 1.5 | SFT, RLHF, MoE routing | Up to 1M, hybrid tokenizer | HumanEval, MMLU, Natural2Code | Text, image, audio, video, code | Expert gating, moderation filters, on-device privacy |
| Claude 2 / Claude 3 | SFT, RLHF, Constitutional AI | 100K–200K, BPE | SWE-bench, MathVista, ChartQA | Text, image (Claude 3) | Self-critique, RLAIF, red teaming |
| Falcon Series (180B/2/3) | Gradient clipping, z-loss, distillation | 4K–32K, RoPE | BBH, GSM8K, ARC, MBPP | Text (VLM in Falcon2) | Safety classifiers, LoRA-based alignment |
| DeepSeek (R1 / V3) | RL-only (Zero), SFT + RL + synthetic data | 32K–128K, RoPE / MLA | Logical reasoning, math, CoT QA | Text | Reward filtering, rejection sampling |
| Qwen AI | SFT, RLHF, preference optimization | 32K+, RoPE, untied embeddings | Multilingual QA, code, summarization | Text | Fairness filtering, RLHF, bias mitigation |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).