Submitted: 08 January 2026

Posted: 09 January 2026


Abstract

Keywords:

1. Introduction

2. Related Work

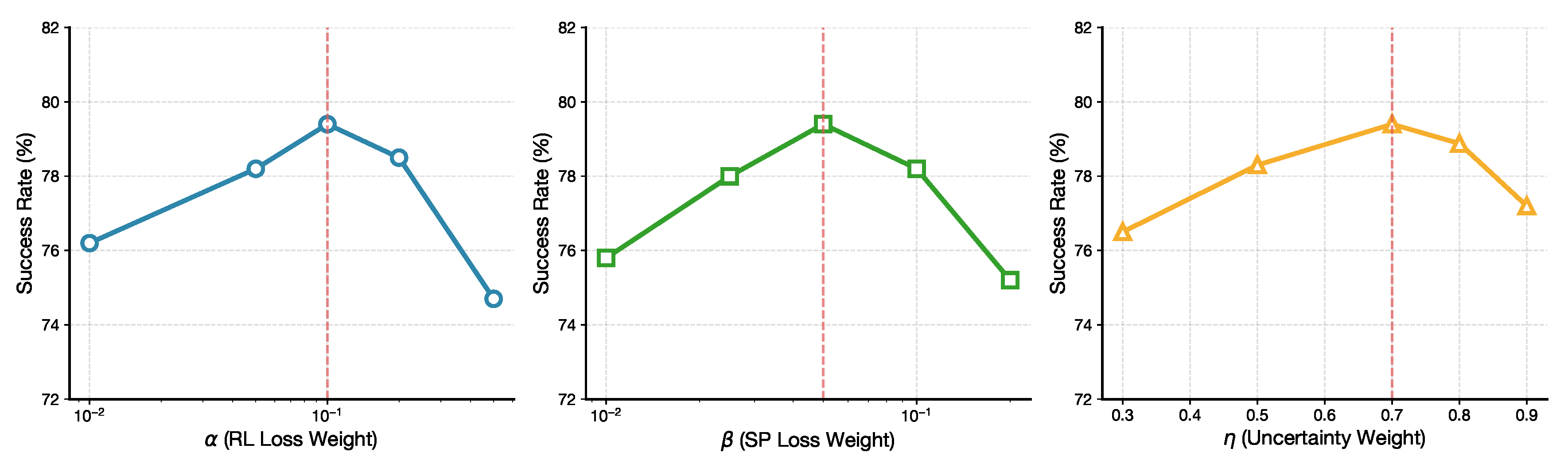

2.1. Vision–Language–Action Models from a Symmetry Perspective

2.2. Low-Cost Robotic Manipulators and Hardware Symmetry

3. Method

3.1. Symmetry Formalization

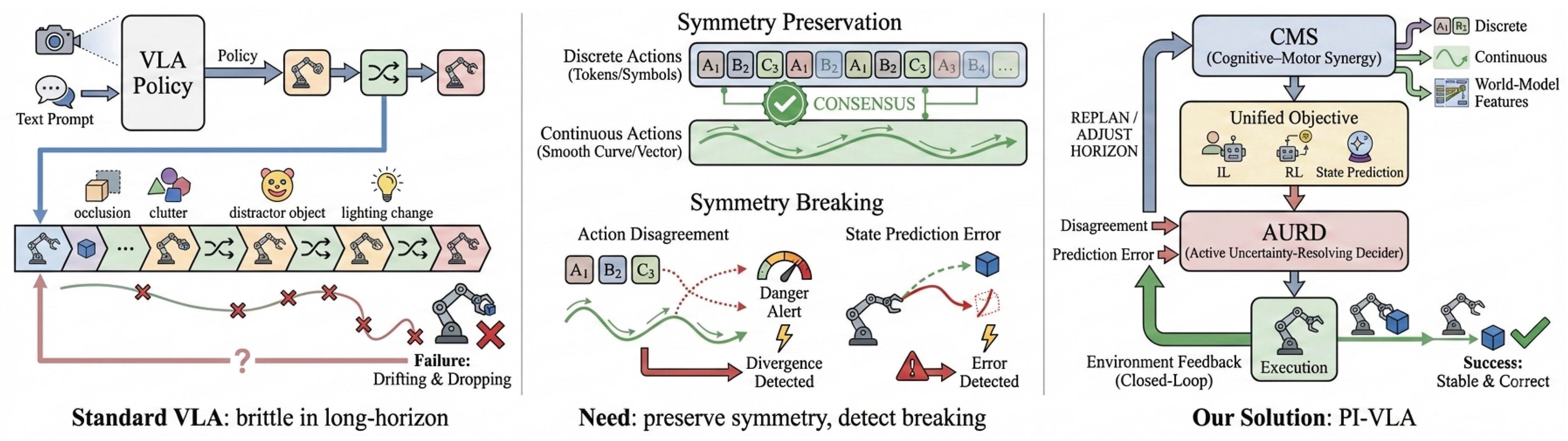

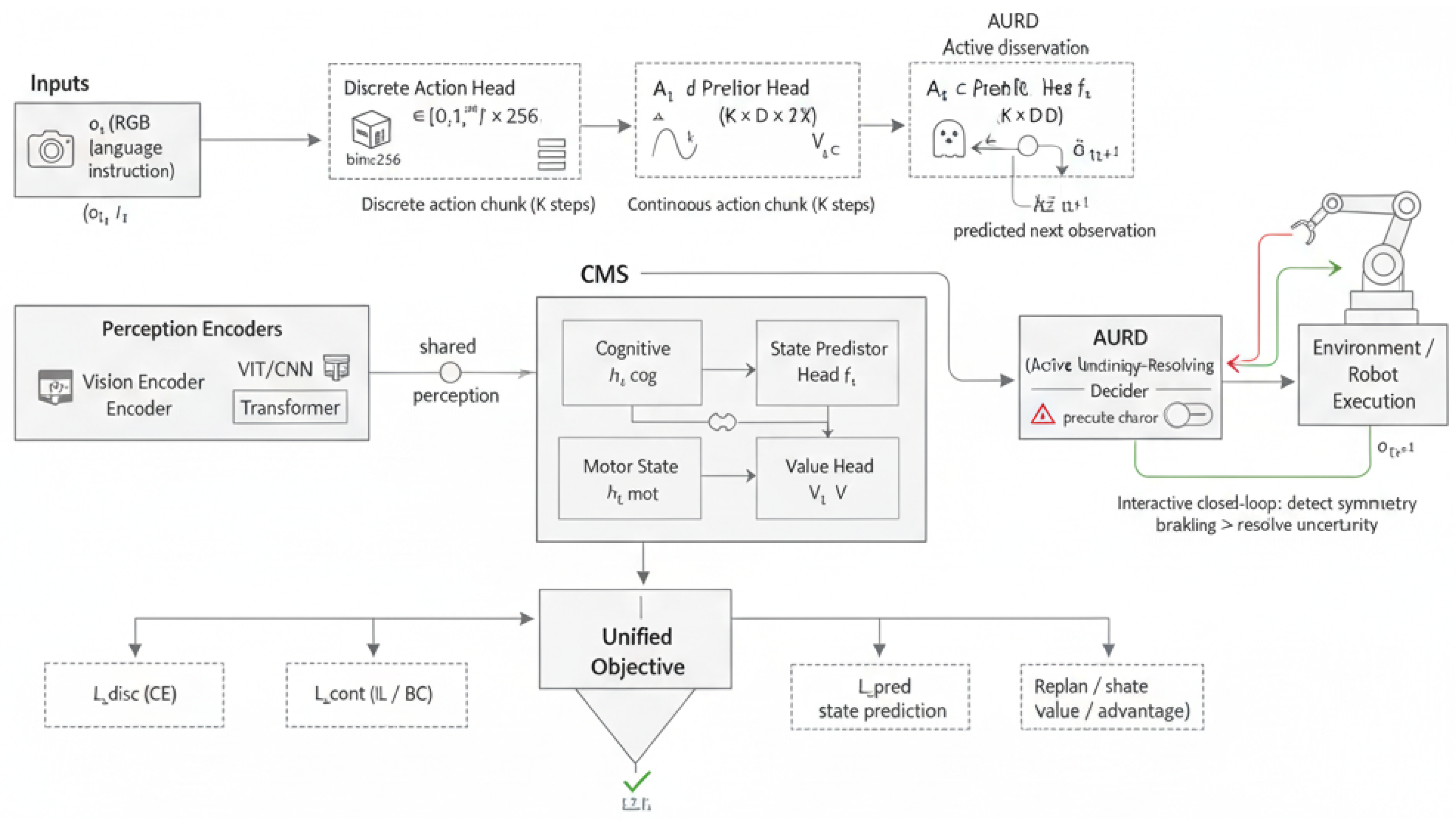

3.2. Overview of PI-VLA

3.3. Cognitive–Motor Synergy (CMS) Architecture

3.4. Unified Training Objective

3.4.1. Imitation Learning Loss

3.4.2. Reinforcement Learning Loss

3.4.3. State Prediction Loss

3.5. Active Uncertainty-Resolving Decider (AURD)

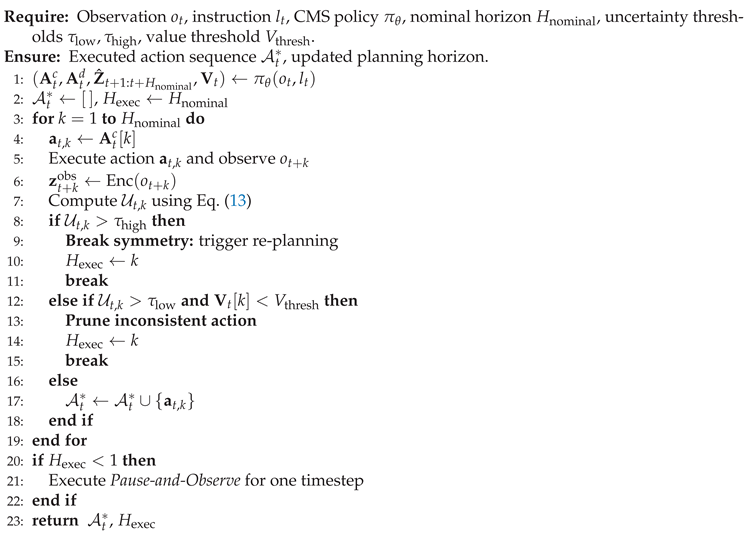

Algorithm 1: Active Uncertainty-Resolving Decider (AURD)

3.6. Hardware Setup

4. Experiments

4.1. Dataset

4.2. Implementation Details

4.3. Baselines

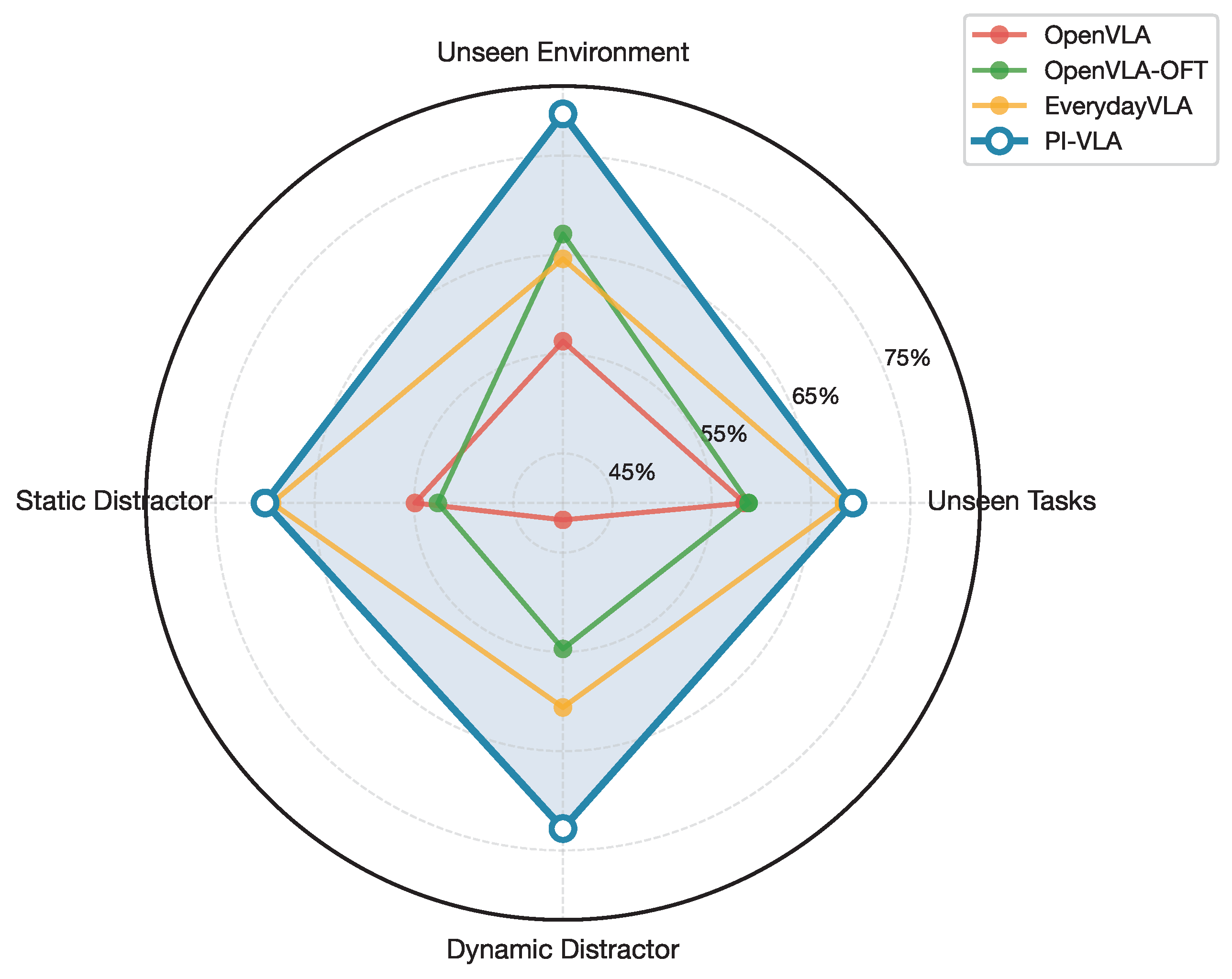

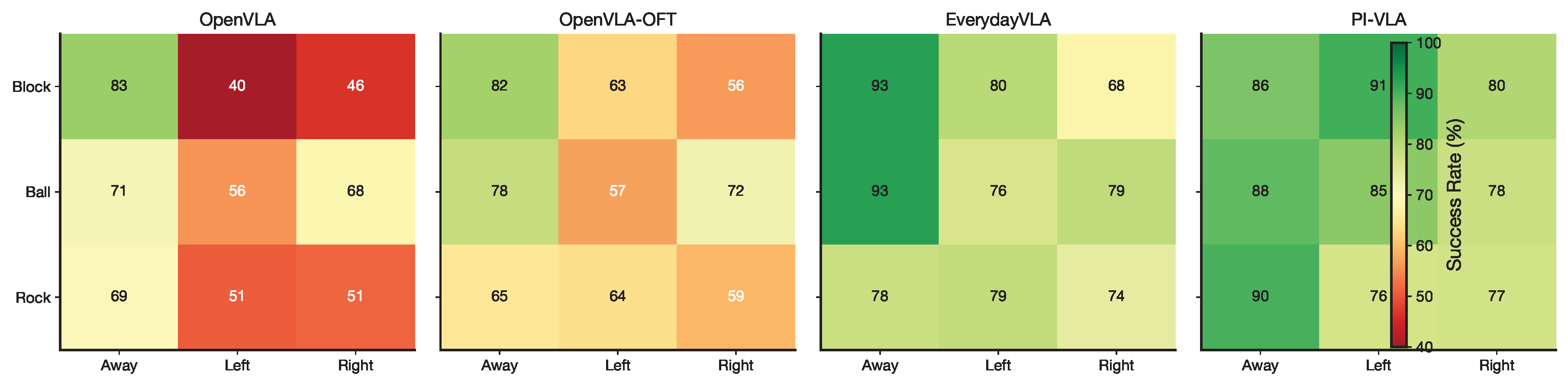

4.4. Symmetry-Centric Evaluation

5. Results

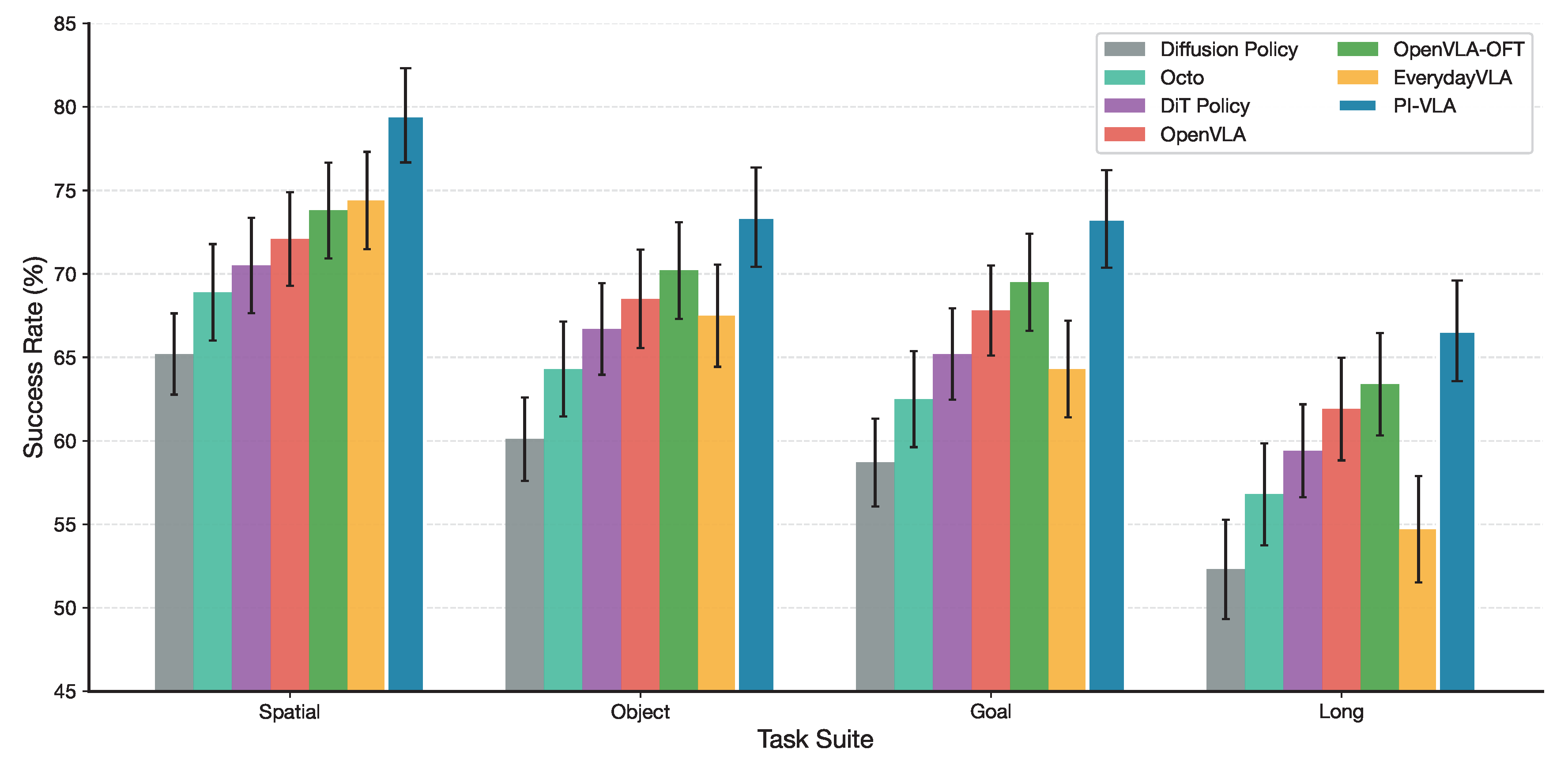

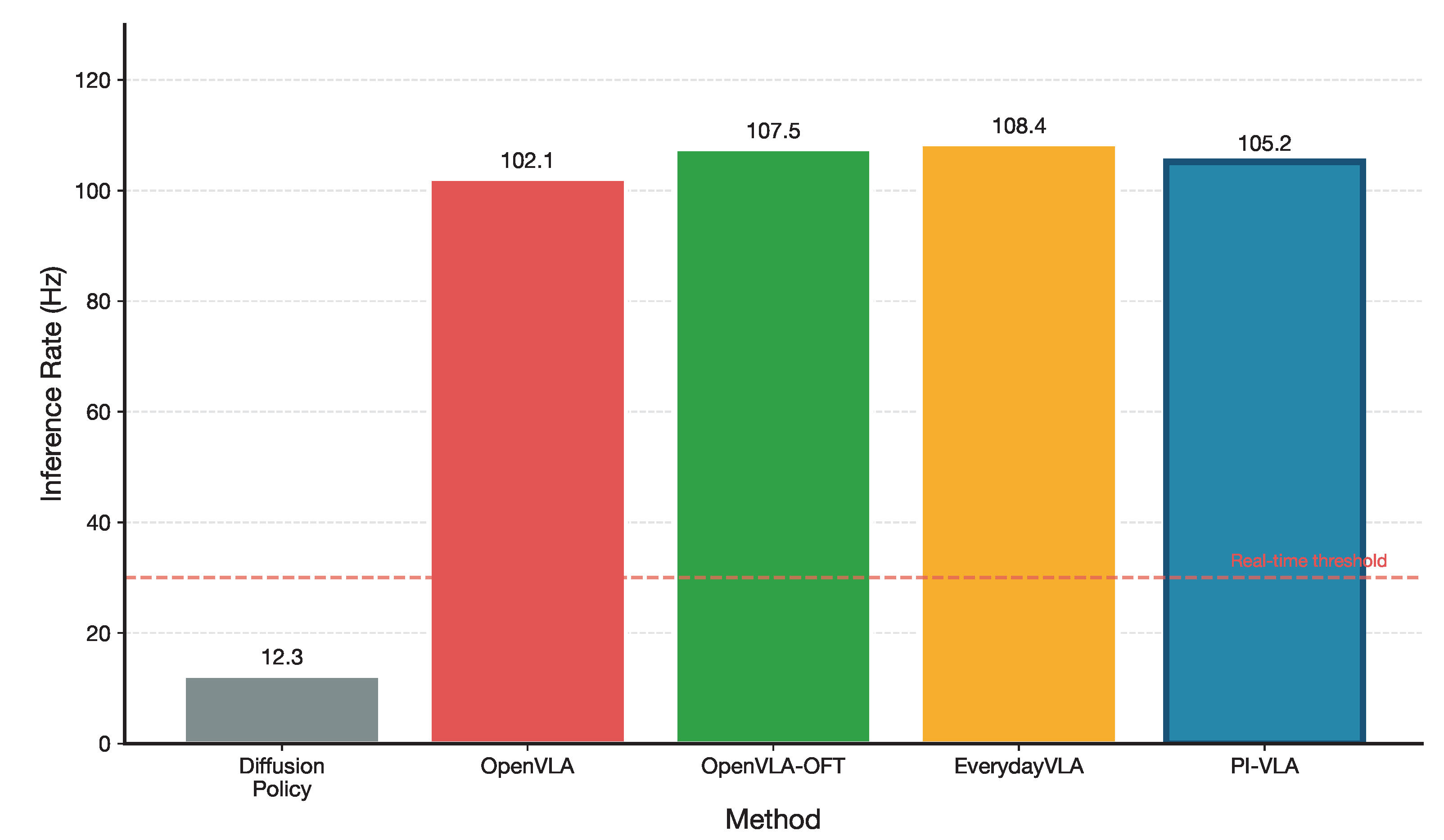

5.1. Results on the LIBERO Simulation Benchmark

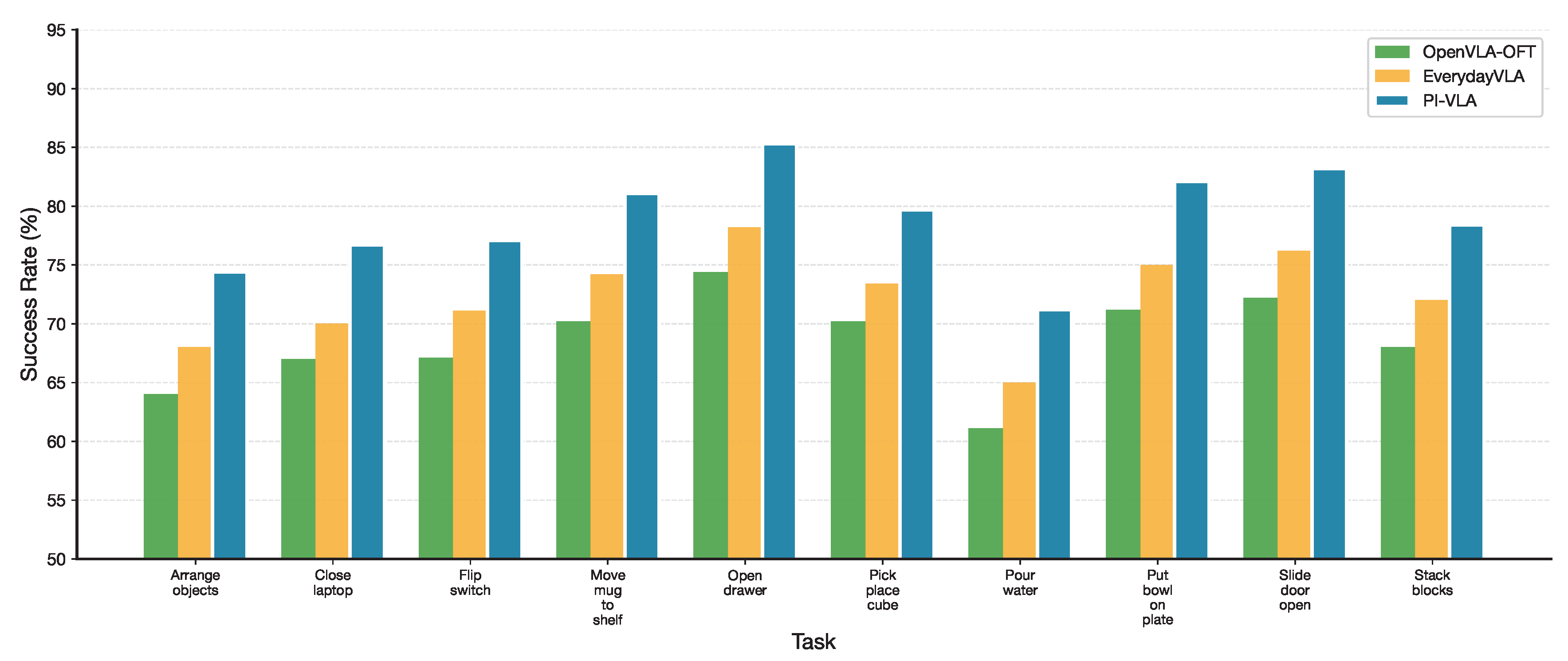

5.2. Results on Real-World Tests

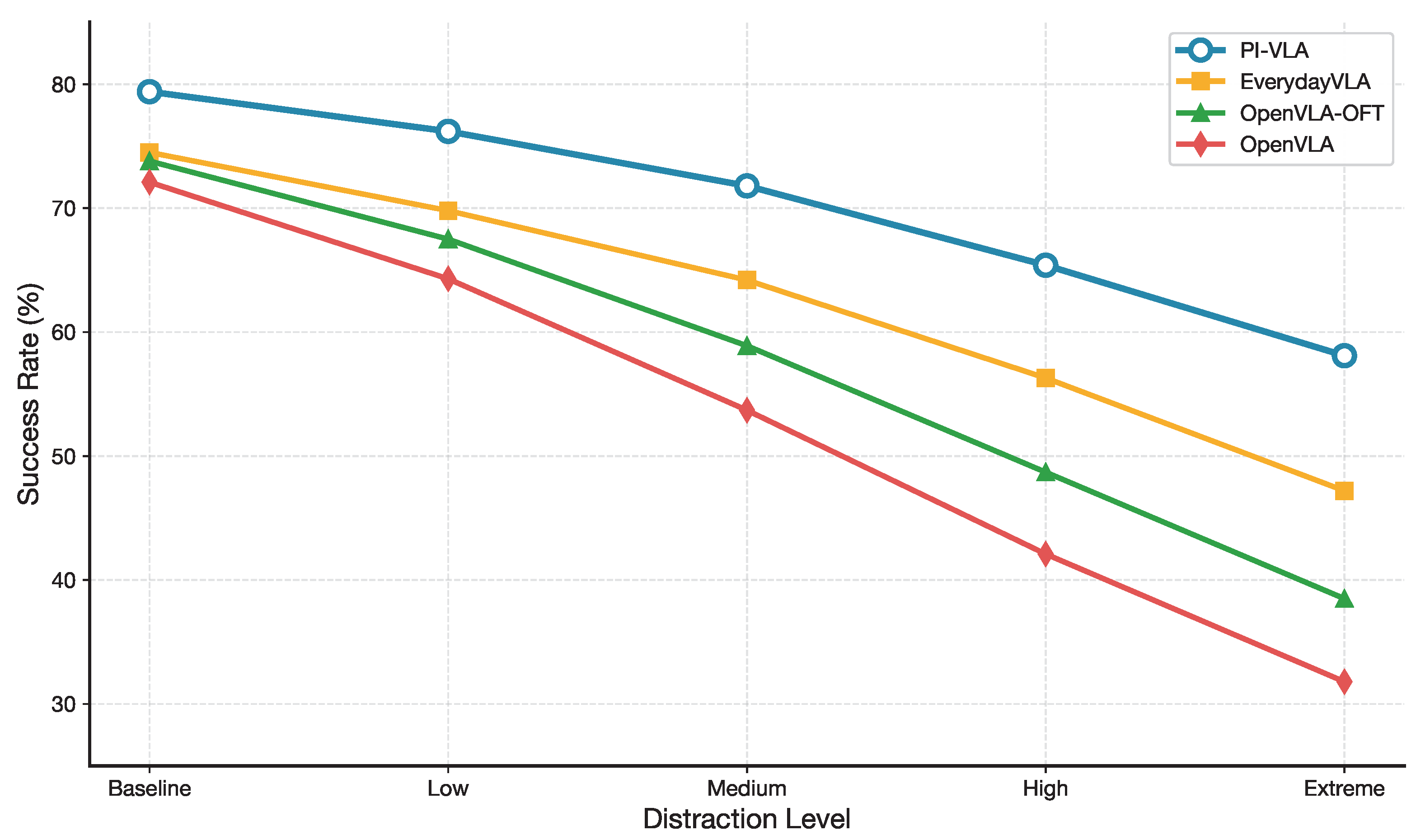

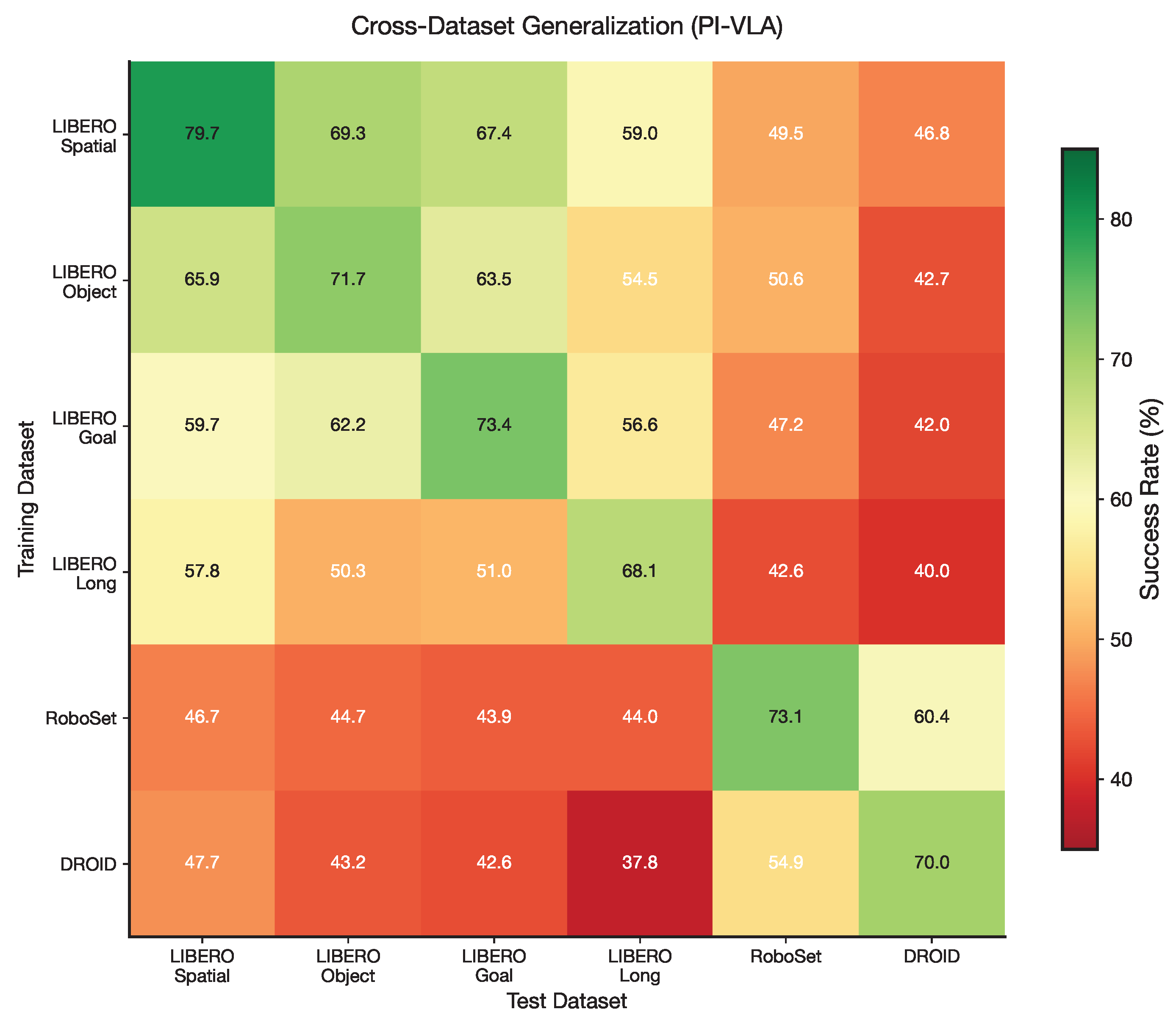

5.3. Generalization and Robustness

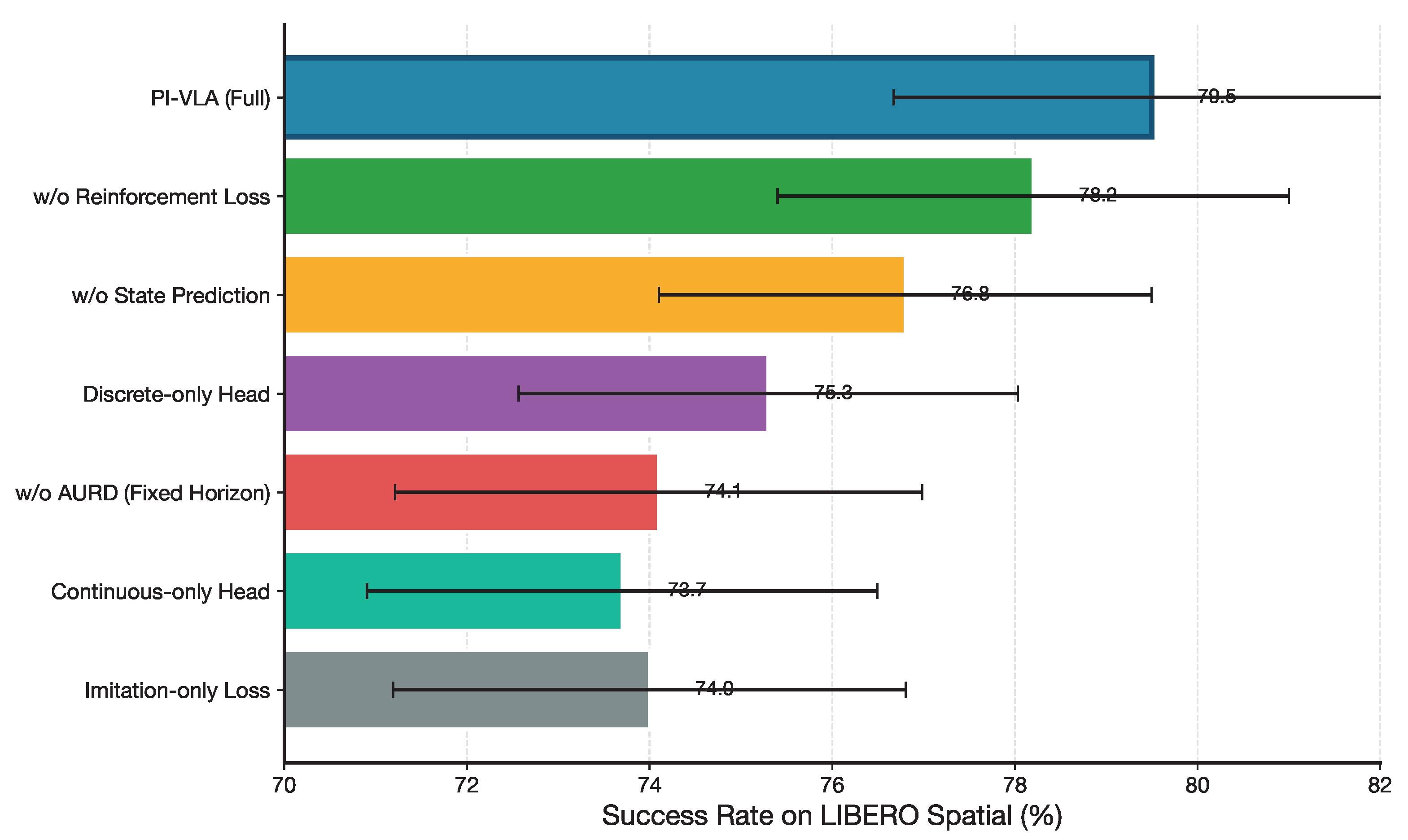

6. Ablation Studies

6.1. Ablation on CMS Action Heads

6.2. Ablation on Unified Training Objective

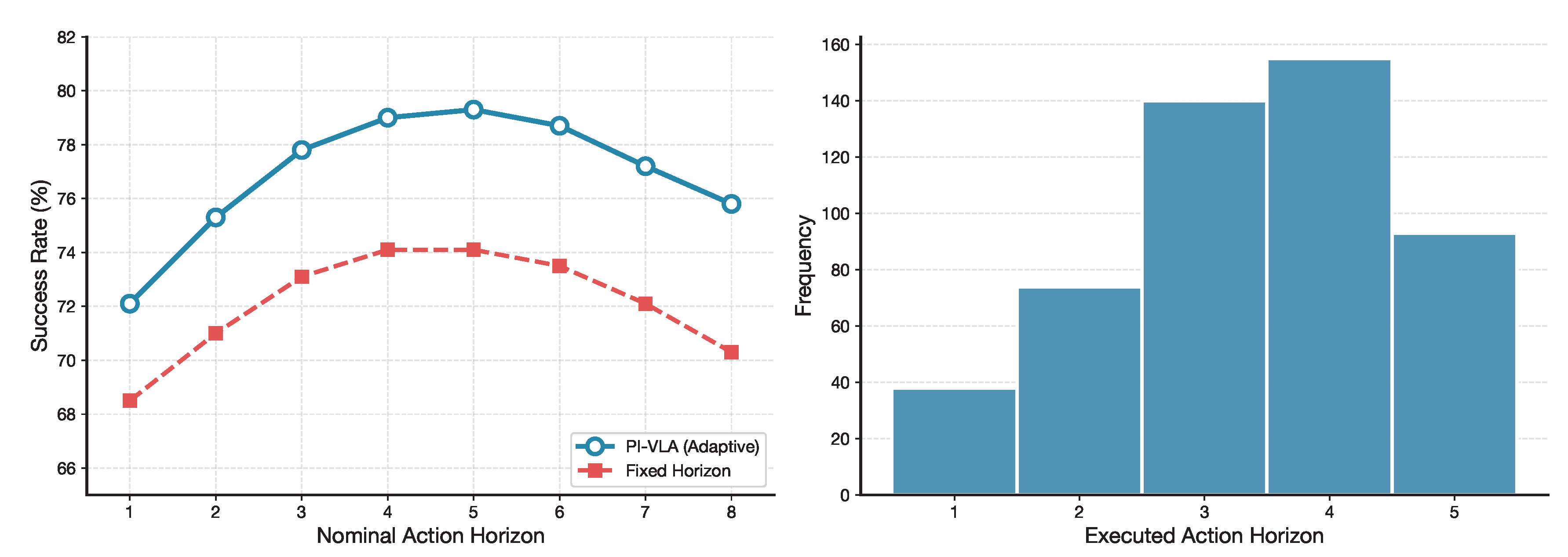

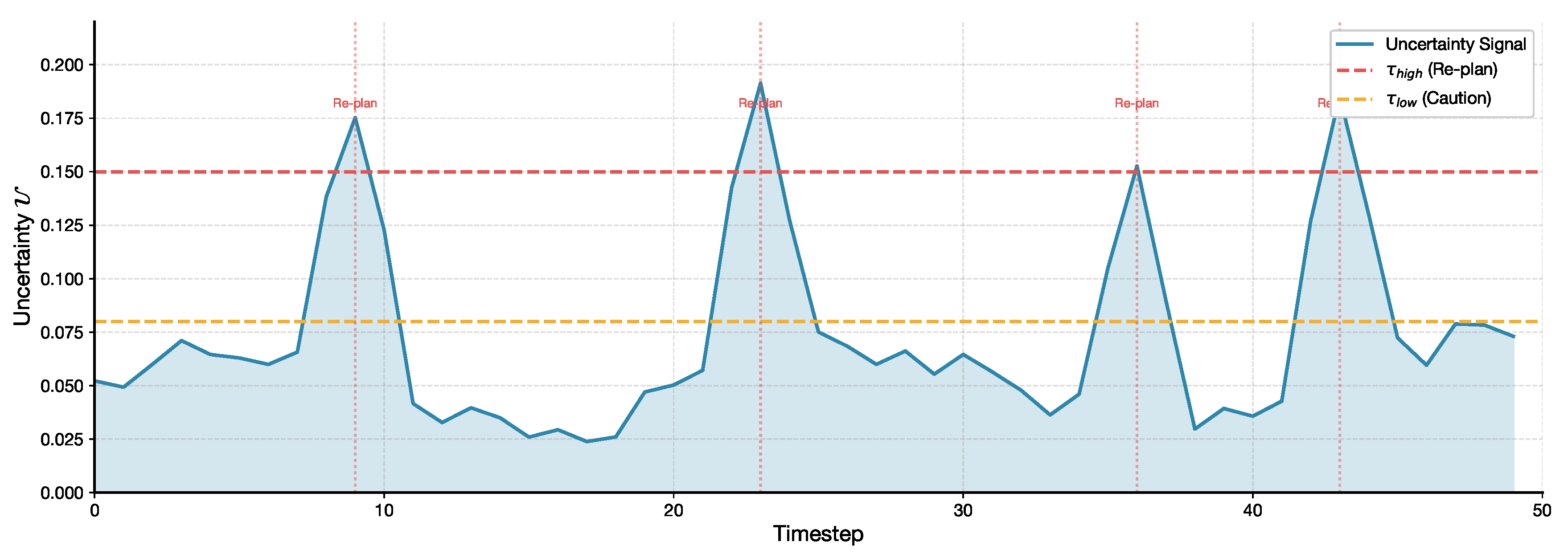

6.3. Ablation on Active Uncertainty-Resolving Decider (AURD)

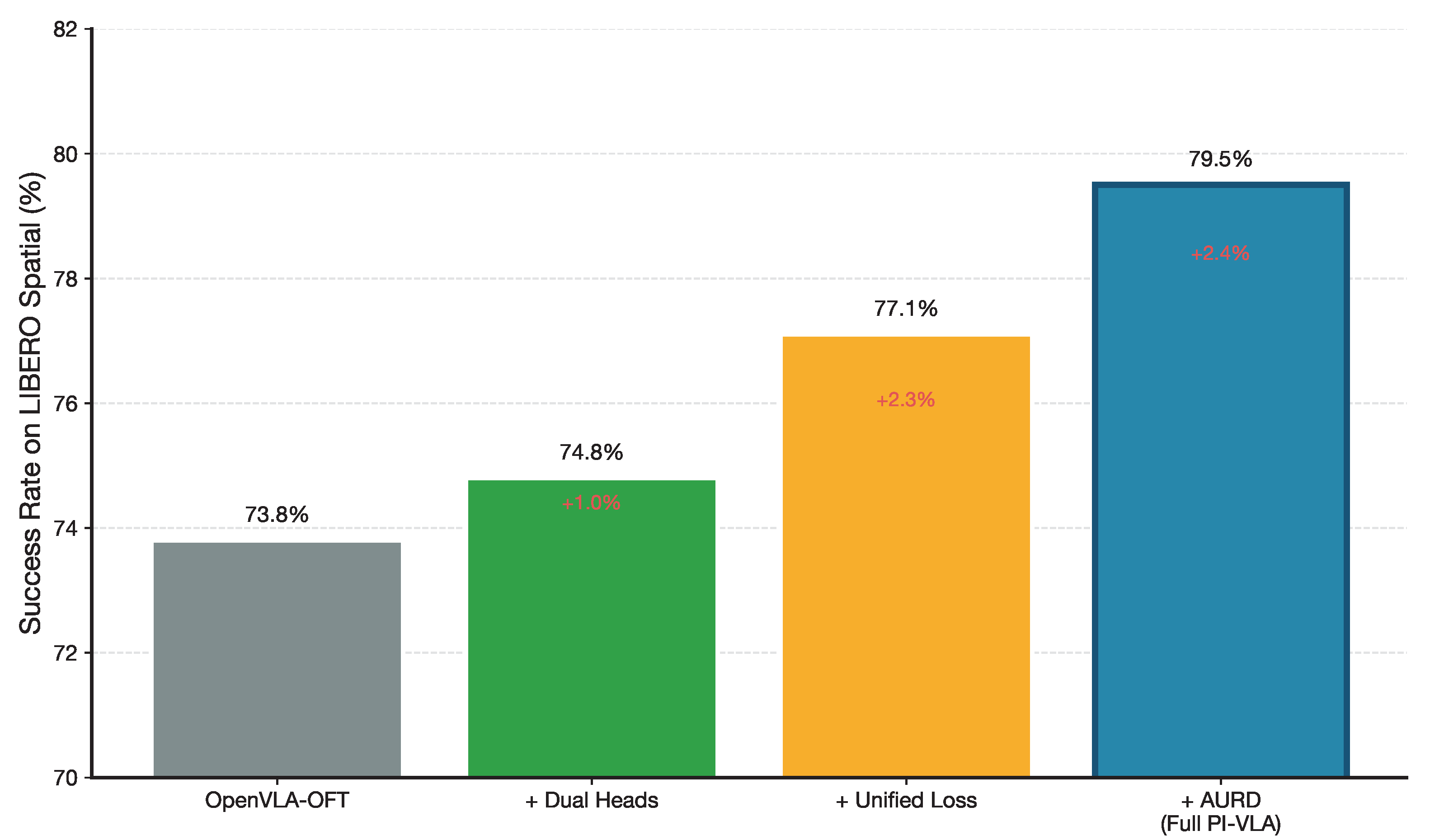

6.4. Comprehensive Component Analysis

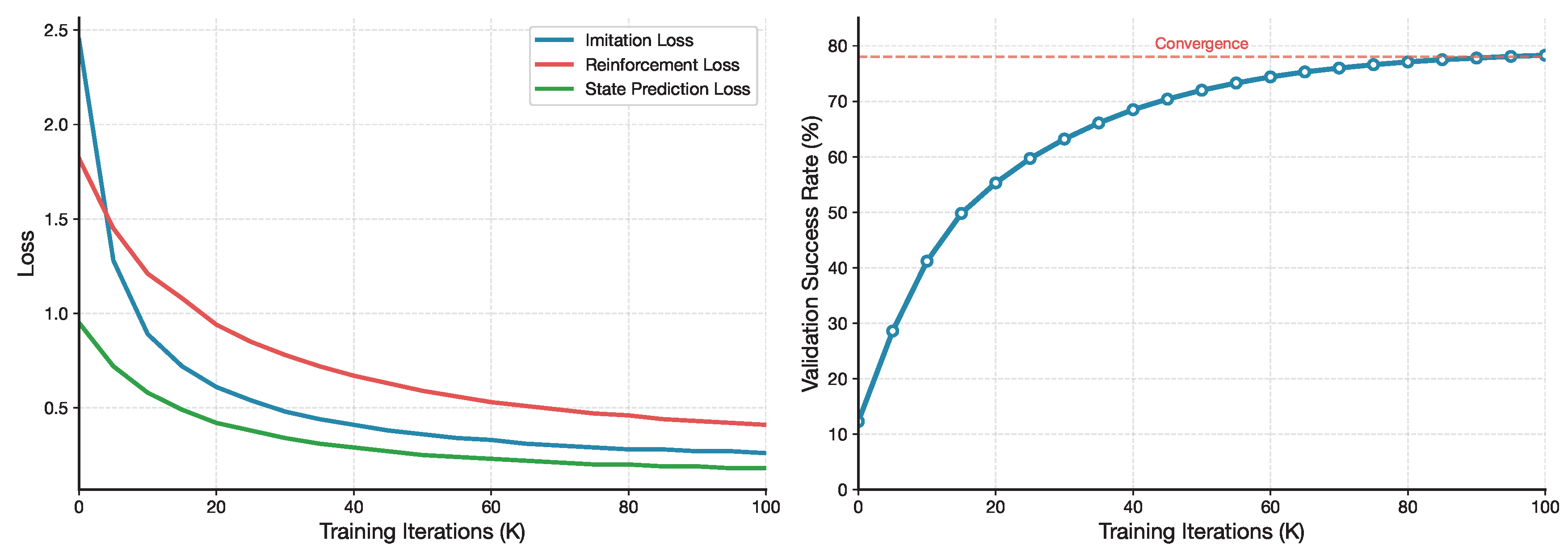

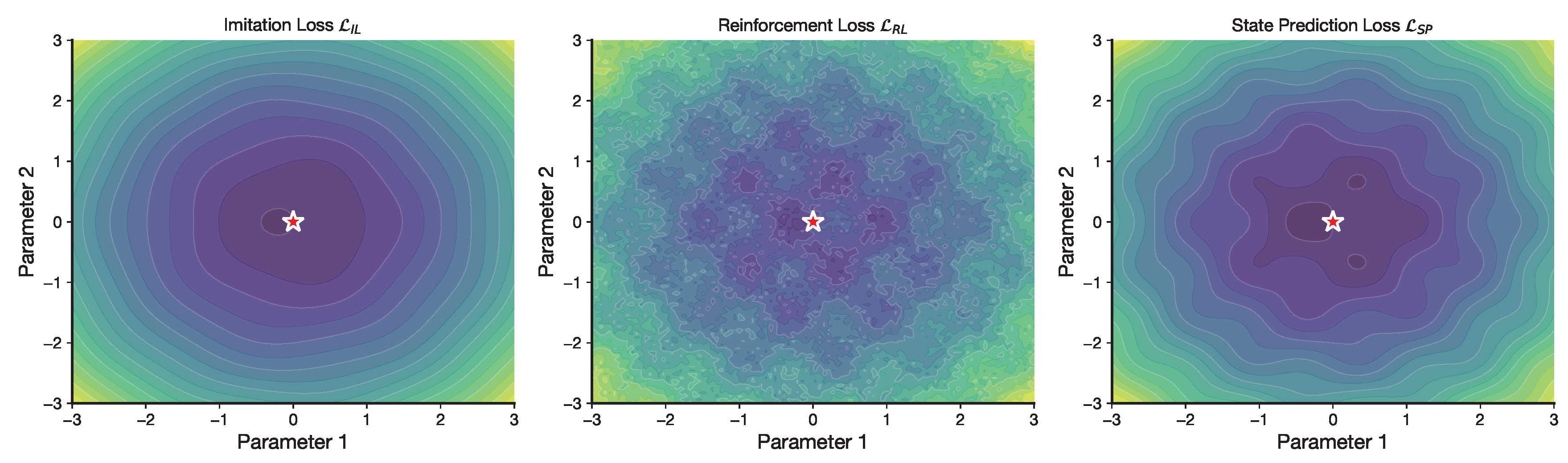

7. Training Dynamics Analysis

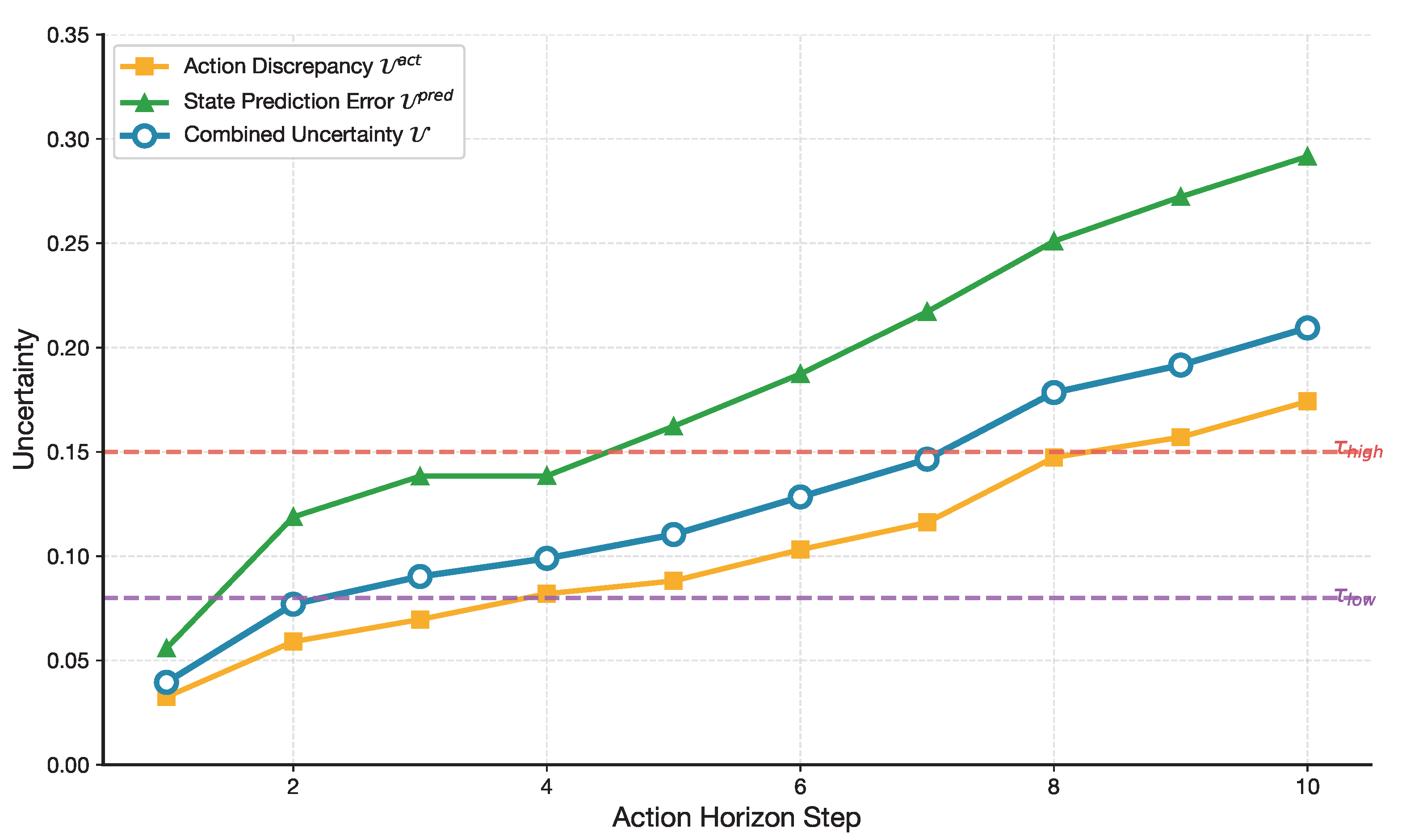

8. Uncertainty Analysis

9. Hyperparameter Sensitivity

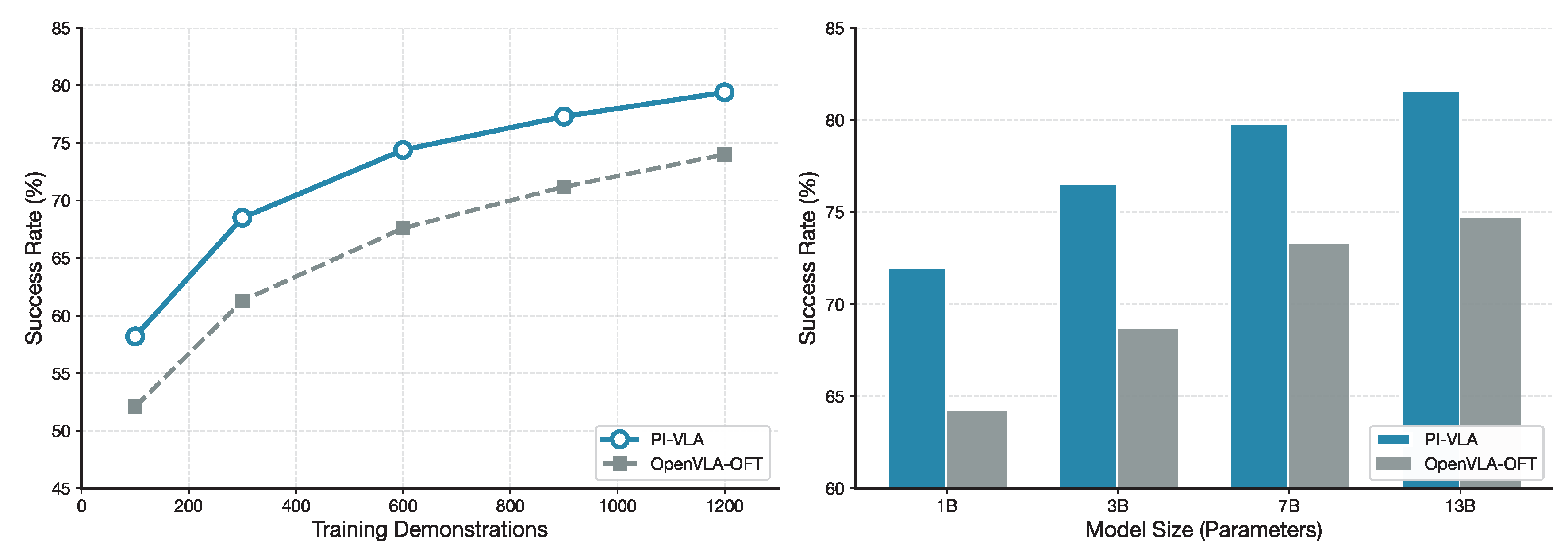

10. Scalability Analysis

11. Conclusion

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Method | Spatial | Object | Goal | Long | Avg. |
|---|---|---|---|---|---|
| Diffusion Policy | 65.2 | 60.1 | 58.7 | 52.3 | 59.1 |
| Octo | 68.9 | 64.3 | 62.5 | 56.8 | 63.1 |
| DiT Policy | 70.5 | 66.7 | 65.2 | 59.4 | 65.5 |
| OpenVLA | 72.1 | 68.5 | 67.8 | 61.9 | 67.6 |
| OpenVLA-OFT | 73.8 | 70.2 | 69.5 | 63.4 | 69.2 |
| EverydayVLA | 74.4 | 67.5 | 64.3 | 54.7 | 65.2 |
| PI-VLA (Ours) | 79.5 | 73.4 | 73.3 | 66.6 | 73.2 |
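The Avg. column of the LIBERO table is consistent with a plain, unweighted mean of the four suite scores. The short Python check below recomputes it from the table rows under that assumption; it is only an arithmetic sanity check written for this text, not the authors' evaluation code, and the helper name `suite_mean` is hypothetical.

```python
# Sanity check for the LIBERO table. Assumption: "Avg." is the unweighted
# mean of the Spatial, Object, Goal and Long suite success rates (%).
libero = {
    # method: ([Spatial, Object, Goal, Long], reported Avg.)
    "Diffusion Policy": ([65.2, 60.1, 58.7, 52.3], 59.1),
    "Octo": ([68.9, 64.3, 62.5, 56.8], 63.1),
    "DiT Policy": ([70.5, 66.7, 65.2, 59.4], 65.5),
    "OpenVLA": ([72.1, 68.5, 67.8, 61.9], 67.6),
    "OpenVLA-OFT": ([73.8, 70.2, 69.5, 63.4], 69.2),
    "EverydayVLA": ([74.4, 67.5, 64.3, 54.7], 65.2),
    "PI-VLA (Ours)": ([79.5, 73.4, 73.3, 66.6], 73.2),
}


def suite_mean(scores):
    """Unweighted mean over the four LIBERO suites (hypothetical helper)."""
    return sum(scores) / len(scores)


for method, (scores, reported) in libero.items():
    mean = suite_mean(scores)
    # Reported values have one decimal place, so allow a 0.05 rounding tolerance
    # (plus a tiny epsilon for floating-point error).
    ok = abs(mean - reported) <= 0.05 + 1e-9
    print(f"{method:15s} mean={mean:.3f} reported={reported} match={ok}")
```

Every row agrees with its reported average to within rounding; DiT Policy, at 65.45, sits exactly on the rounding boundary.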

| Method | Block (Away) | Block (Left) | Block (Right) | Ball (Away) | Ball (Left) | Ball (Right) | Rock (Away) | Rock (Left) | Rock (Right) | Avg. |
|---|---|---|---|---|---|---|---|---|---|---|
| OpenVLA | 80 | 45 | 50 | 75 | 60 | 70 | 70 | 50 | 55 | 61.7 |
| OpenVLA-OFT | 85 | 60 | 65 | 80 | 65 | 75 | 75 | 60 | 65 | 70.0 |
| EverydayVLA | 90 | 80 | 75 | 90 | 85 | 80 | 85 | 80 | 75 | 82.2 |
| PI-VLA (Ours) | 95 | 90 | 85 | 95 | 90 | 85 | 90 | 85 | 80 | 88.3 |
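In the real-world table, each method has nine scores (three objects, each tested in three directions), and the Avg. column matches the plain mean of those nine cells. The sketch below recomputes it under that assumption; the object and direction labels come straight from the table header, while the variable names are illustrative only.

```python
from itertools import product

# Column layout of the real-world table: objects outer, directions inner.
objects = ["Block", "Ball", "Rock"]
directions = ["Away", "Left", "Right"]
columns = [f"{o} ({d})" for o, d in product(objects, directions)]

# method: (nine per-cell scores in column order, reported Avg.)
real_world = {
    "OpenVLA": ([80, 45, 50, 75, 60, 70, 70, 50, 55], 61.7),
    "OpenVLA-OFT": ([85, 60, 65, 80, 65, 75, 75, 60, 65], 70.0),
    "EverydayVLA": ([90, 80, 75, 90, 85, 80, 85, 80, 75], 82.2),
    "PI-VLA (Ours)": ([95, 90, 85, 95, 90, 85, 90, 85, 80], 88.3),
}

for method, (scores, reported) in real_world.items():
    # Expect exactly one score per object/direction cell.
    assert len(scores) == len(columns), method
    # Assumption: Avg. is the unweighted mean over all nine cells.
    avg = sum(scores) / len(scores)
    print(f"{method:15s} mean={avg:.2f} reported={reported}")
```

All four rows agree with the reported averages to within rounding (for example, 795 / 9 ≈ 88.33 for PI-VLA).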

| Method | Unseen Tasks | Unseen Env. | Static Dist. | Dynamic Dist. |
|---|---|---|---|---|
| OpenVLA | 55.2 | 58.7 | 50.1 | 45.3 |
| OpenVLA-OFT | 60.5 | 63.4 | 55.8 | 50.2 |
| EverydayVLA | 65.8 | 68.9 | 64.2 | 63.5 |
| PI-VLA (Ours) | 72.4 | 75.1 | 71.8 | 70.1 |

| Variant | Spatial Suite (%) |
|---|---|
| PI-VLA (Full CMS) | 79.5 |
| w/ Discrete-only head | 75.3 |
| w/ Continuous-only head | 73.9 |

| Training Objective Variant | Spatial Suite (%) |
|---|---|
| Full | 79.5 |
| w/o State Prediction Loss | 76.8 |
| w/o Reinforcement Loss | 78.2 |
| Imitation-only | 74.0 |

| Execution Strategy | Spatial Suite (%) |
|---|---|
| PI-VLA (Full with AURD) | 79.5 |
| w/o AURD (Fixed Horizon = 5) | 74.1 |
| Replace AURD with AdaHorizon | 76.4 |
| Replace AURD with ACT (Temporal Ens.) | 73.2 |

| Model Configuration | Spatial Suite (%) |
|---|---|
| OpenVLA-OFT | 73.8 |
| + Dual-Heads (Discrete + Continuous) | 74.8 |
| + Unified Loss | 77.1 |
| + AURD (Full PI-VLA) | 79.5 |
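The component analysis can be read as a sequence of additive steps on top of the OpenVLA-OFT baseline. The snippet below is a simple arithmetic reading of that table, assuming each row adds exactly one component to the configuration in the row before it; it computes the per-step and cumulative gains in percentage points. The function name `incremental_gains` is hypothetical.

```python
# Rows of the component-analysis table, in order. Assumption: each row adds
# one component on top of the previous configuration.
steps = [
    ("OpenVLA-OFT", 73.8),
    ("+ Dual-Heads (Discrete + Continuous)", 74.8),
    ("+ Unified Loss", 77.1),
    ("+ AURD (Full PI-VLA)", 79.5),
]


def incremental_gains(rows):
    """Return (name, gain over the previous row) in percentage points,
    rounded to one decimal to match the table's precision."""
    return [
        (name, round(score - prev, 1))
        for (_, prev), (name, score) in zip(rows, rows[1:])
    ]


gains = incremental_gains(steps)
total = round(steps[-1][1] - steps[0][1], 1)

for name, gain in gains:
    print(f"{name:40s} +{gain:.1f} pp")
print(f"{'Total over OpenVLA-OFT':40s} +{total:.1f} pp")
```

Under this reading, the three additions contribute +1.0, +2.3 and +2.4 points, for +5.7 points in total; the table alone does not show whether the individual gains would be the same if the components were added in a different order.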

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).