Submitted: 07 January 2026

Posted: 08 January 2026


Abstract

Keywords:

1. Introduction

2. Background

2.1. The LLM Agent Framework

2.2. Taxonomy

3. Evolutionary Drivers

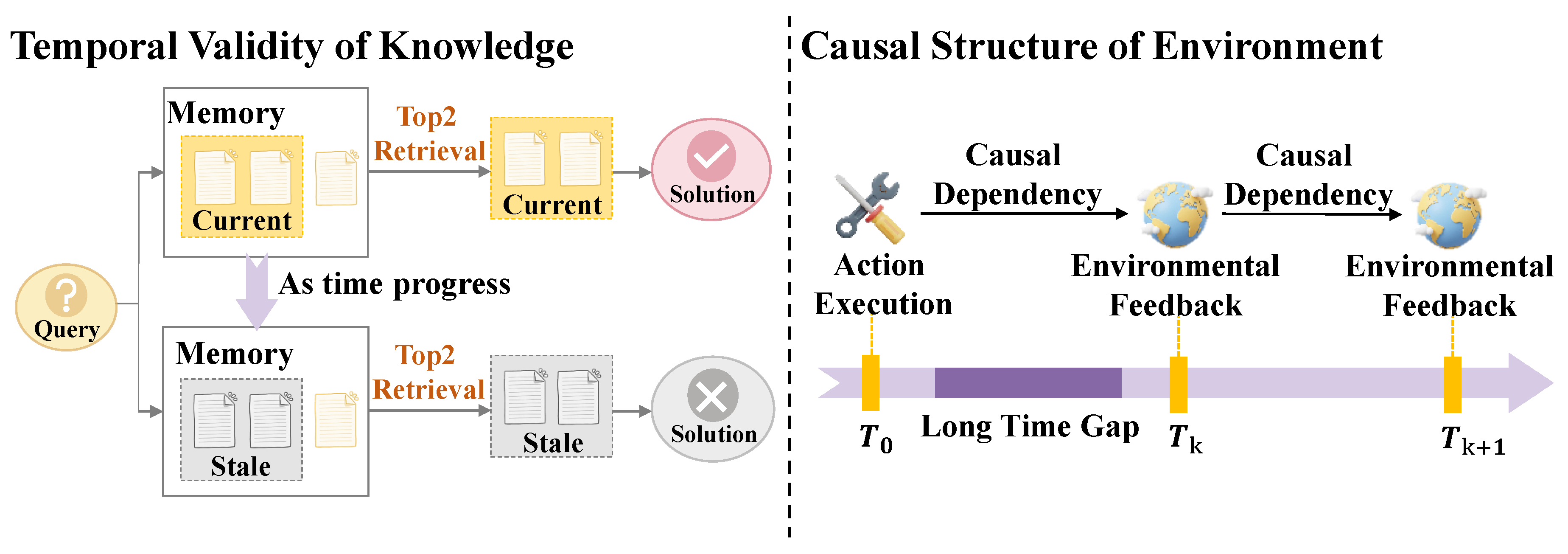

3.1. Long-Term Consistency

3.2. Dynamic Environments

3.3. Continual Learning

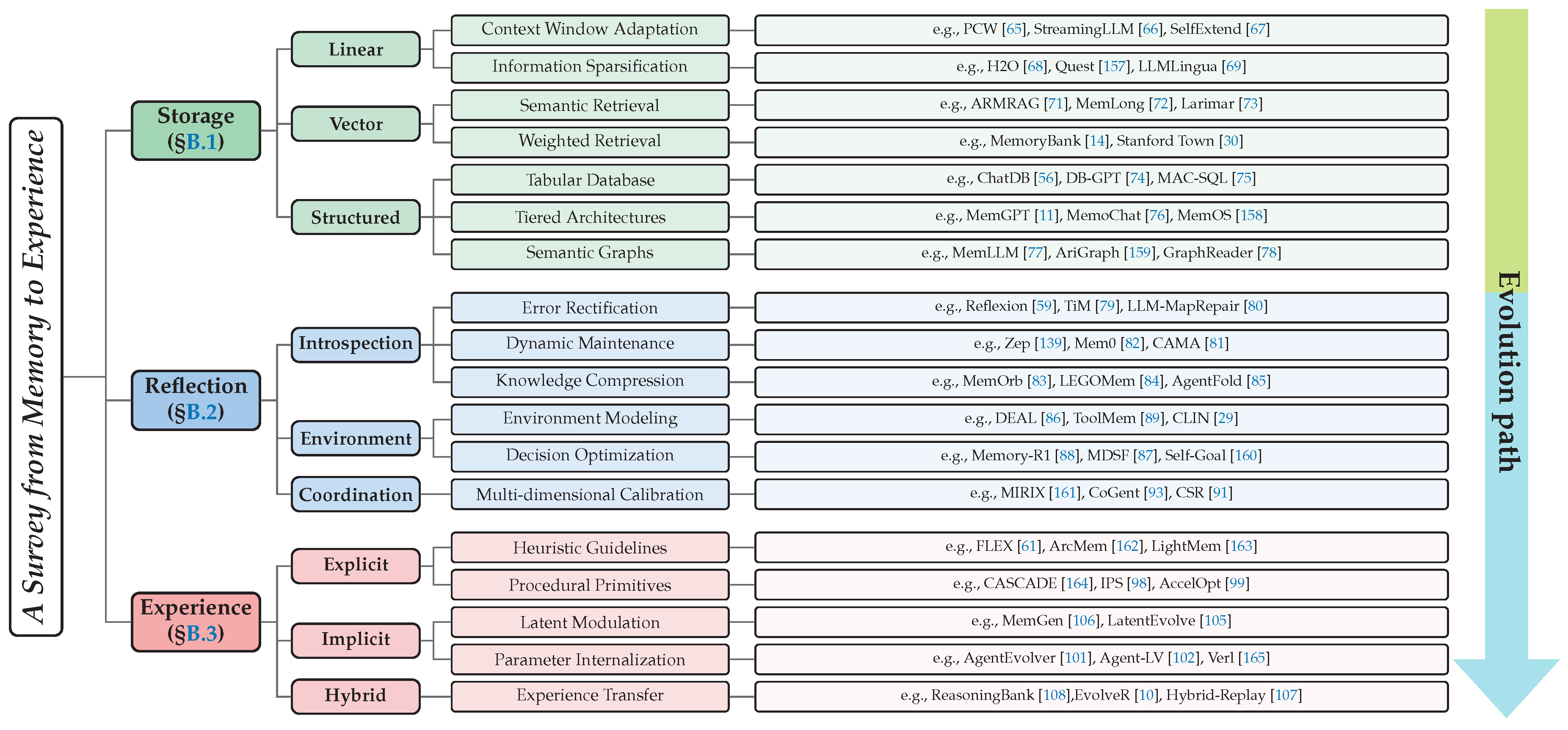

4. Evolutionary Path

4.1. Storage

4.2. Reflection

4.3. Experience

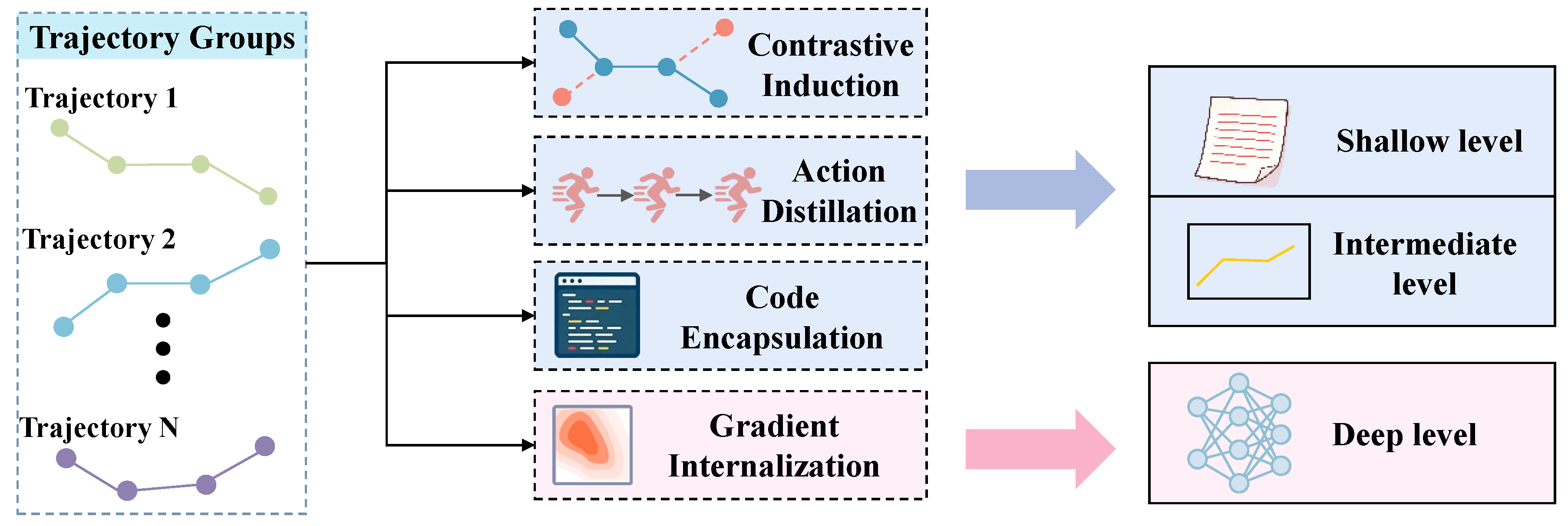

5. Transformative Experience

5.1. Active Exploration

5.2. Cross-Trajectory Abstraction

- In the Experience stage, the newest phase of memory mechanisms, active exploration and cross-trajectory abstraction are the two primary features.

- The feedback loop between exploration and abstraction is the central engine driving the autonomous, continuous evolution of LLM agents in the Experience stage.

6. Future Directions

7. Conclusion

8. Limitations

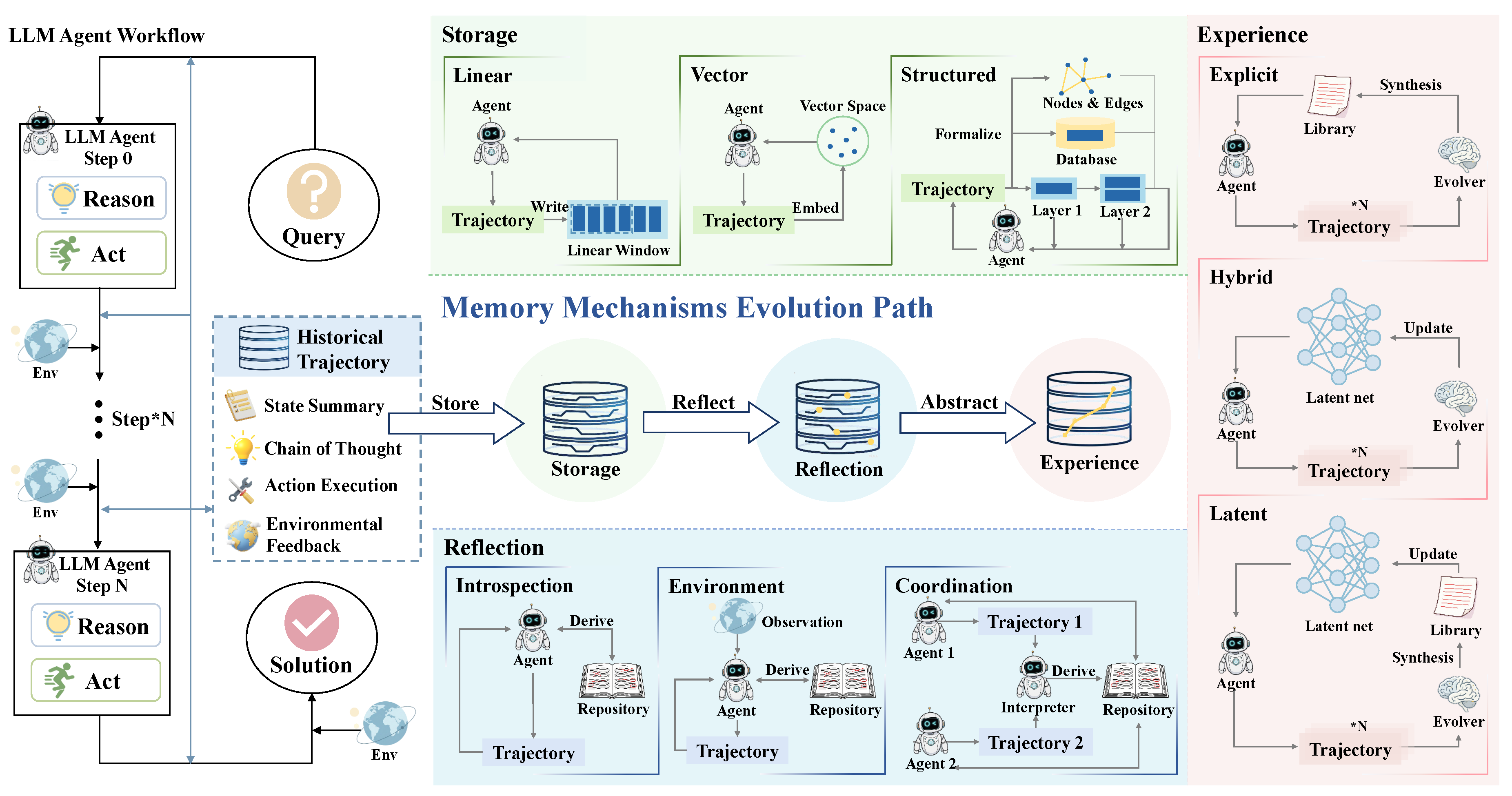

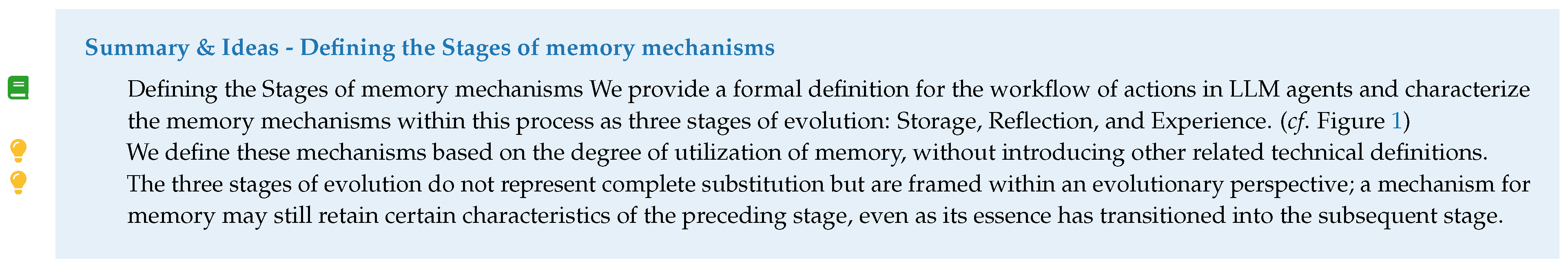

Appendix A. Overview

- Storage: As the foundational layer of evolution, this stage faithfully preserves trajectories from long-duration interactions, addressing the limited memory capacity of LLM agents.

- Reflection: By introducing dynamic evaluation loops, this stage turns the memory mechanism from a passive recorder of information into an evaluator, mitigating hallucinations and logical errors in the memory of LLM agents.

- Experience: Representing the highest level of cognition, this stage abstracts across multiple trajectories to extract higher-order behavioral patterns, compressing redundant memory into transferable, reusable heuristic strategies.

- Scope & Coverage: To address the lack of an evolutionary perspective and the marked fragmentation of current research on memory mechanisms, this survey provides a comprehensive, forward-looking overview. It covers previously overlooked work, the most recent advances, and broader theoretical perspectives.

- Organization & Structure: The manuscript is organized around a three-stage evolutionary framework. On this basis, we systematically delineate the drivers and pathways of memory-mechanism development, as well as the characteristics of the current frontier, offering new insights for research in this domain.

- Insights & Critical Analysis: This survey offers original interpretations and an in-depth analysis of the existing literature. For instance, we propose an evolutionary taxonomy whose organizing criterion is the degree to which past interaction trajectories are utilized. Furthermore, we summarize two pivotal characteristics of memory mechanisms in the Experience stage and identify several issues that remain underexplored or unresolved.

- Timeliness & Relevance: In the inaugural year of LLM agents, this work is the first survey to systematically examine memory mechanisms from an evolutionary perspective, covering frontier research through 2025. It addresses the urgent need for adaptation and learning as agents encounter the real world for the first time. By synthesizing the existing literature, we provide a new foundation for further exploration and innovation in this critical field.

Appendix B. Details of the Evolutionary Path

Appendix B.1. Storage

- Context Window Adaptation: Context window adaptation techniques seek to extend the usable input length of LLMs by modifying attention mechanisms, positional encoding schemes, or input structures. Representative approaches include optimizing intrinsic attention computation [66], remapping positional encodings to enable longer sequences [67], and restructuring inputs to mitigate length constraints [65]. These methods expand raw storage capacity but do not alter the semantics of stored trajectories.

- Information Sparsification: Information sparsification treats memory compression as a mechanical denoising process independent of agent reflection. Methods typically rely on statistical or attention-based heuristics to remove low-utility tokens. For example, Zhang et al. [68] evicts tokens based on cumulative attention scores, while Tang et al. [157] and Xiao et al. [70] retrieve salient memory blocks via query–key similarity. Jiang et al. [69] further identifies redundant segments through perplexity estimation. While effective for efficiency, these methods operate without semantic abstraction.

- Semantic Retrieval: Semantic retrieval constitutes the foundational approach to vector memory, where relevance is determined by geometric proximity in embedding space. Melz [71] retrieves historical reasoning chains via semantic alignment, while Liu et al. [72] integrates fine-grained retrieval-attention during decoding to sustain long-context reasoning. Das et al. [73] further internalizes episodic memory into a latent matrix, enabling one-shot read–write operations. Despite improved recall, these methods treat retrieved content as flat historical evidence.

- Weighted Retrieval: Weighted retrieval extends semantic similarity by assigning differentiated importance to memories using multi-dimensional scoring signals. Zhong et al. [14] models temporal decay via the Ebbinghaus Forgetting Curve, while Park et al. [30] retrieves memories based on a weighted combination of relevance, recency, and importance. Such mechanisms improve prioritization but remain retrieval-centric rather than abstraction-driven.

- Tabular Database: Database-backed memory systems leverage mature relational databases to store agent knowledge in structured tabular form. Early work frames databases as symbolic memory [56], while subsequent approaches translate natural language queries into SQL via specialized controllers for secure and efficient access [74]. Multi-agent extensions further distribute database construction and maintenance across specialized roles [75].

- Tiered Architectures: Tiered memory architectures draw inspiration from computer storage hierarchies and human cognition to balance capacity and access latency. MemGPT [11] introduces a dual-layer design separating main and external context, enabling virtual context expansion. Cognitive-inspired systems such as SWIFT–SAGE [167] dynamically adjust retrieval intensity, while streaming-update architectures maintain long-term stability without exhaustive retrieval [76,168].

- Semantic Graphs: Graph memory represents interaction histories as networks of entities and relations, enabling structured reasoning beyond flat storage. Triplet-based extraction supports precise updates and retrieval [77], while neuro-symbolic approaches integrate logical constraints into graph representations [169]. Graph-based world models further support environment-centric reasoning [159], and coarse-to-fine traversal over text graphs enables efficient long-context retrieval [76,168].
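As a minimal illustration of the positional-remapping approach under Context Window Adaptation, the sketch below implements linear position interpolation for rotary position embeddings (RoPE), one common instance of the idea: position indices are scaled by the ratio of trained to target length so that a longer input reuses the angle range seen in training. The choice of RoPE, the base of 10000, the 4096/16384 lengths, and the function names are assumptions for illustration, not the method of [67].

```python
import math

def rope_angles(position, dim, base=10000.0, scale=1.0):
    """Rotation angles that RoPE applies at `position` for a head of size `dim`.

    scale < 1 gives linear position interpolation: positions are compressed so
    that a longer input maps back into the position range seen during training.
    """
    return [(position * scale) / (base ** (2 * i / dim)) for i in range(dim // 2)]

def apply_rope(vec, position, base=10000.0, scale=1.0):
    """Rotate each consecutive (even, odd) pair of `vec` by its RoPE angle."""
    out = []
    for i, theta in enumerate(rope_angles(position, len(vec), base, scale)):
        x, y = vec[2 * i], vec[2 * i + 1]
        out.extend([x * math.cos(theta) - y * math.sin(theta),
                    x * math.sin(theta) + y * math.cos(theta)])
    return out

# Hypothetical lengths: a model trained on 4096 positions, run on 16384.
TRAINED_LEN, TARGET_LEN = 4096, 16384
scale = TRAINED_LEN / TARGET_LEN  # 0.25
q = [0.3, -1.2, 0.8, 0.5]
# Position 16000 under interpolation is rotated exactly like position 4000 without it.
print(apply_rope(q, 16000, scale=scale) == apply_rope(q, 4000))  # True
```

Because only the angles change, vector norms are preserved; the trade-off is that neighbouring positions become harder to distinguish, which is why interpolated models are usually fine-tuned briefly.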
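The attention-based eviction described under Information Sparsification can be sketched as follows, assuming a cache that always keeps a small window of recent tokens and fills the rest of a fixed budget with the positions that have accumulated the most attention. The budget, window size, function name, and toy attention weights are hypothetical; real systems apply this per layer and per head inside the KV cache.

```python
def evict_by_cumulative_attention(attn_rows, budget, recent=2):
    """Choose which cached token positions to keep under a fixed budget.

    attn_rows: one attention distribution per decoding step, each a list of
        weights over the cached positions available at that step.
    budget: total number of cached positions to keep.
    recent: number of most recent positions that are always kept.
    """
    n = max(len(row) for row in attn_rows)
    scores = [0.0] * n
    for row in attn_rows:
        for pos, weight in enumerate(row):
            scores[pos] += weight  # cumulative attention received by each position
    recent_pos = set(range(max(0, n - recent), n))
    # Rank older positions by accumulated attention and keep the top ones.
    older = sorted((p for p in range(n) if p not in recent_pos),
                   key=lambda p: scores[p], reverse=True)
    keep = recent_pos | set(older[:max(0, budget - len(recent_pos))])
    return sorted(keep)

attn = [
    [0.7, 0.1, 0.1, 0.1, 0.0, 0.0],
    [0.5, 0.0, 0.3, 0.1, 0.1, 0.0],
    [0.6, 0.0, 0.1, 0.0, 0.1, 0.2],
]
print(evict_by_cumulative_attention(attn, budget=4))  # [0, 2, 4, 5]
```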
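For Semantic Retrieval, treating relevance as geometric proximity reduces to ranking stored items by cosine similarity to a query embedding. In this sketch the three-dimensional vectors are hand-written stand-ins for the output of an embedding model, which is an assumption; `retrieve` is a hypothetical helper name.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def retrieve(query_vec, memory, k=2):
    """Return the k memory texts whose embeddings are closest to the query."""
    ranked = sorted(memory, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

# (text, embedding) pairs; toy vectors in place of real embeddings.
memory = [
    ("user prefers Python", [0.9, 0.1, 0.0]),
    ("meeting moved to Friday", [0.0, 0.2, 0.9]),
    ("user dislikes Java", [0.8, 0.3, 0.1]),
]
print(retrieve([1.0, 0.2, 0.0], memory))  # ['user prefers Python', 'user dislikes Java']
```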
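A sketch of Weighted Retrieval, assuming a simple scoring scheme: relevance, recency, and importance are min-max normalized and summed with configurable weights, with recency modeled by an Ebbinghaus-style retention R = exp(-t/S). Combining the Ebbinghaus term with the three-factor score, the strength value S = 24, and all field names are illustrative assumptions rather than the exact formulas of [14] or [30].

```python
import math

def retention(hours_elapsed, strength):
    """Ebbinghaus-style retention R = exp(-t / S); larger S means slower forgetting."""
    return math.exp(-hours_elapsed / strength)

def minmax(xs):
    lo, hi = min(xs), max(xs)
    return [(x - lo) / (hi - lo) if hi > lo else 0.0 for x in xs]

def weighted_rank(memories, weights=(1.0, 1.0, 1.0)):
    """Order memory texts by a weighted sum of normalized relevance, recency, importance."""
    rel = minmax([m["relevance"] for m in memories])
    rec = minmax([retention(m["hours"], m["strength"]) for m in memories])
    imp = minmax([m["importance"] for m in memories])
    a, b, c = weights
    scored = [(a * r + b * t + c * i, m["text"])
              for r, t, i, m in zip(rel, rec, imp, memories)]
    return [text for _, text in sorted(scored, reverse=True)]

memories = [
    {"text": "project uses PostgreSQL", "relevance": 0.9, "hours": 48, "strength": 24, "importance": 3},
    {"text": "user is allergic to peanuts", "relevance": 0.5, "hours": 1, "strength": 24, "importance": 8},
    {"text": "it rained last week", "relevance": 0.2, "hours": 100, "strength": 24, "importance": 2},
]
print(weighted_rank(memories))
# ['user is allergic to peanuts', 'project uses PostgreSQL', 'it rained last week']
```

With relevance alone, the PostgreSQL memory would rank first; recency and importance lift the recent, high-stakes allergy fact above it.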
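To illustrate Tabular Database memory, the sketch below stores facts in an in-memory SQLite table through Python's standard `sqlite3` module. The schema and helper names are hypothetical, and the hand-written parameterized query stands in for the natural-language-to-SQL controller described in [74]; parameter binding is one simple way to keep such access safe from injected SQL.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE facts (subject TEXT, attribute TEXT, value TEXT, turn INTEGER)")

def remember(subject, attribute, value, turn):
    """Append a fact observed at a given dialogue turn."""
    conn.execute("INSERT INTO facts VALUES (?, ?, ?, ?)", (subject, attribute, value, turn))

def recall_latest(subject, attribute):
    """Return the most recent value stored for (subject, attribute), or None."""
    row = conn.execute(
        "SELECT value FROM facts WHERE subject = ? AND attribute = ? "
        "ORDER BY turn DESC LIMIT 1",
        (subject, attribute),
    ).fetchone()
    return row[0] if row else None

remember("user", "city", "Berlin", 3)
remember("user", "city", "Munich", 17)
print(recall_latest("user", "city"))  # Munich
```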
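The dual-layer idea behind Tiered Architectures can be sketched as a bounded main context that pages its oldest entries out to an unbounded archive, which remains searchable. Measuring capacity in messages rather than tokens and using keyword matching for archival search are simplifying assumptions; the class and method names are hypothetical and are not MemGPT's API.

```python
from collections import deque

class TieredMemory:
    """Bounded main context backed by an unbounded, searchable archive."""

    def __init__(self, main_capacity):
        self.main_capacity = main_capacity  # max messages kept in the prompt
        self.main = deque()
        self.archive = []

    def append(self, message):
        self.main.append(message)
        # On overflow, page the oldest messages out of the prompt into the archive.
        while len(self.main) > self.main_capacity:
            self.archive.append(self.main.popleft())

    def search_archive(self, keyword):
        """Keyword search standing in for retrieval over external context."""
        return [m for m in self.archive if keyword.lower() in m.lower()]

    def context(self):
        return list(self.main)

mem = TieredMemory(main_capacity=2)
for msg in ["My dog is named Rex", "I live in Oslo", "What is the weather?"]:
    mem.append(msg)
print(mem.context())              # ['I live in Oslo', 'What is the weather?']
print(mem.search_archive("dog"))  # ['My dog is named Rex']
```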
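For Semantic Graphs, a minimal triplet store supports the two operations the bullet highlights: precise updates (overwriting the object of a subject-relation pair) and structured, multi-hop retrieval by following relations. The class name, relation labels, and the one-object-per-relation rule are assumptions made for brevity.

```python
from collections import defaultdict

class TripletGraph:
    """Entity-relation memory holding one object per (subject, relation) pair."""

    def __init__(self):
        self.edges = defaultdict(dict)  # subject -> {relation: object}

    def upsert(self, subject, relation, obj):
        # Overwriting in place gives a precise update instead of appending new text.
        self.edges[subject][relation] = obj

    def query(self, subject, relation):
        return self.edges.get(subject, {}).get(relation)

    def follow(self, start, relations):
        """Multi-hop lookup: follow a chain of relations from `start`."""
        node = start
        for relation in relations:
            node = self.query(node, relation)
            if node is None:
                return None
        return node

g = TripletGraph()
g.upsert("Alice", "works_at", "Acme")
g.upsert("Acme", "located_in", "Paris")
print(g.follow("Alice", ["works_at", "located_in"]))  # Paris
g.upsert("Alice", "works_at", "Globex")  # the fact changes; the old edge is replaced
print(g.follow("Alice", ["works_at", "located_in"]))  # None
```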

Appendix B.2. Reflection

- Error Rectification: Error rectification targets hallucinations and multi-step reasoning errors by verifying and repairing stored trajectories through self-critique. Shinn et al. [59] introduces Reflexion, which prompts agents to reflect on failed trajectories and distill corrective feedback into textual memory. This mechanism enables systematic error correction and sustained performance improvement across episodes, establishing introspective reflection as a central mechanism rather than a peripheral heuristic. Building on this paradigm, Liu et al. [79] introduces a post-reasoning verification stage that retains only validated memories, while Zhang et al. [80] detects contradictory or erroneous segments through introspective consistency checks, thereby limiting error accumulation and propagation.

- Dynamic Maintenance: Dynamic maintenance focuses on lifecycle management of memory content. Li et al. [81] incrementally updates internal knowledge schemas via clustering, while Rasmussen et al. [139] and Chhikara et al. [82] maintain continuity by parsing and updating structured entity relations. At the system level, rule-based controllers inspired by operating systems strategically update and persist core memories [11,13,173].

- Knowledge Compression: Knowledge compression distills high-dimensional trajectories into compact and reusable representations. Huang et al. [83] generates structured reflections to extract coherent character profiles, while Han et al. [84] decomposes interaction sequences into modular procedural memories. Multi-granularity abstraction further aligns distilled memories with task demands [17,140], and context-folding techniques preserve working-context efficiency during reasoning [28,85,174].

- Environment Modeling: Environmental modeling aligns internal memory with dynamic external conditions such as environments, tools, and user preferences. Sun et al. [86] enables agents to infer and validate world rules from demonstrations, while Xiao et al. [89] summarizes tool behavior from execution outcomes. Preference-aware updates integrate short-term variation with long-term trends [90], and EM-based formulations ensure memory consistency under distribution shifts [175].

- Decision Optimization: Decision optimization treats memory management as a learnable policy guided by environmental rewards or execution feedback. Yan et al. [88] learns discrete actions from outcome-based rewards, while Yan et al. [87] refines memory quality using value-annotated decision trajectories. For complex planning, interaction feedback is used to validate and prune goal hierarchies [160].

- Multi-dimensional Calibration: Multi-dimensional calibration realizes distributed memory management through heterogeneous agent societies. Wang and Chen [161] coordinates core, episodic, and semantic memory modules to process multimodal long contexts. Wang et al. [93] decomposes graph reasoning into perception, caching, and execution roles to reduce context loss. Narrative-level coherence is achieved by integrating episodic and semantic memories across agents [92]. Furthermore, the frameworks of Ozer et al. [94] and Bo et al. [91] enhance reasoning consistency and collaboration efficiency in agent societies by implementing collaborative reflection across diverse roles and personalized feedback mechanisms.
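The Reflexion-style loop described under Error Rectification can be sketched as a retry loop that stores one verbal reflection per failed trial and feeds all accumulated reflections to the next attempt. In Reflexion the actor, evaluator, and reflection steps are performed by language models; here they are plain callables, and the toy arithmetic task is hypothetical.

```python
def reflexion_loop(task, act, evaluate, reflect, max_trials=3):
    """Retry a task, carrying verbal self-reflections from failed trials forward.

    act(task, reflections) -> attempt
    evaluate(attempt) -> (success, feedback)
    reflect(attempt, feedback) -> lesson text
    Returns (attempt or None, reflections, trials used).
    """
    reflections = []  # textual memory that persists across trials
    for trial in range(1, max_trials + 1):
        attempt = act(task, reflections)
        success, feedback = evaluate(attempt)
        if success:
            return attempt, reflections, trial
        reflections.append(reflect(attempt, feedback))
    return None, reflections, max_trials

# Hypothetical toy task: the actor errs until it has a reflection to condition on.
def act(task, reflections):
    return 5 if reflections else 6

def evaluate(attempt):
    return attempt == 5, f"expected 5, got {attempt}"

def reflect(attempt, feedback):
    return f"Answer {attempt} was wrong ({feedback}); recompute 2 + 3 carefully."

answer, memory, trials = reflexion_loop("add 2 and 3", act, evaluate, reflect)
print(answer, trials)  # 5 2
print(memory[0])
```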
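Dynamic Maintenance can be illustrated with a simple four-operation scheme (ADD, UPDATE, DELETE, NOOP) applied to a key-value memory. This is a sketch under the assumption that incoming facts arrive already keyed; in the cited systems a language model or rule-based controller decides which operation to apply by comparing new information against retrieved memories. The function name and key format are hypothetical.

```python
def maintain(memory, key, new_value):
    """Apply one maintenance operation to a key-value memory and return its name.

    new_value=None means the incoming evidence retracts the stored fact.
    """
    if new_value is None:
        if key in memory:
            del memory[key]
            return "DELETE"
        return "NOOP"
    if key not in memory:
        memory[key] = new_value
        return "ADD"
    if memory[key] == new_value:
        return "NOOP"
    memory[key] = new_value
    return "UPDATE"

memory = {}
ops = [maintain(memory, "user.city", v) for v in ["Berlin", "Berlin", "Munich", None, None]]
print(ops)     # ['ADD', 'NOOP', 'UPDATE', 'DELETE', 'NOOP']
print(memory)  # {}
```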
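Context folding, mentioned under Knowledge Compression, can be sketched as replacing the finished steps of a sub-task with a single summary entry so the working context stays short. The summarizer here is a hypothetical stand-in that keeps only the step count and final outcome; a real system would typically have a language model write the summary.

```python
def fold_context(context, start, end, summarize):
    """Replace context[start:end] (a finished sub-task) with one summary entry."""
    summary = summarize(context[start:end])
    return context[:start] + [f"[folded] {summary}"] + context[end:]

def naive_summary(steps):
    # Hypothetical stand-in for a model-written summary: step count and final outcome.
    return f"{len(steps)} steps; outcome: {steps[-1]}"

context = ["goal: fix failing test", "open file", "run tests",
           "test fails at line 40", "patch line 40", "tests pass", "write report"]
folded = fold_context(context, 1, 6, naive_summary)
print(folded)
# ['goal: fix failing test', '[folded] 5 steps; outcome: tests pass', 'write report']
```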
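One way to integrate short-term variation with long-term trends, as the Environment Modeling bullet describes for user preferences, is to track two exponential moving averages at different rates and blend them. The rates (0.5 and 0.05), the 0.3 mixing weight, and the class name are assumptions for illustration, not the formulation of [90].

```python
class PreferenceTracker:
    """Blend a fast-moving (short-term) and slow-moving (long-term) preference estimate."""

    def __init__(self, fast_rate=0.5, slow_rate=0.05, mix=0.3):
        self.fast_rate, self.slow_rate, self.mix = fast_rate, slow_rate, mix
        self.short = None
        self.long = None

    def observe(self, signal):
        """Update both moving averages with a new preference signal in [0, 1]."""
        if self.short is None:
            self.short = self.long = signal
        else:
            self.short += self.fast_rate * (signal - self.short)
            self.long += self.slow_rate * (signal - self.long)

    def estimate(self):
        # Mostly the long-term trend, nudged by recent variation.
        return self.mix * self.short + (1 - self.mix) * self.long

tracker = PreferenceTracker()
for s in [1.0] * 10 + [0.0, 0.0]:  # long-standing liking, then two recent dislikes
    tracker.observe(s)
print(round(tracker.short, 4), round(tracker.long, 4), round(tracker.estimate(), 3))
# 0.25 0.9025 0.707
```

The short-term average reacts within two observations, while the long-term one barely moves, so the blended estimate drops only moderately.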
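Decision Optimization treats memory management as a learnable policy; the sketch below shrinks that idea to an epsilon-greedy bandit that learns which memory operation earns reward in each situation. The situation labels, reward rule, and tabular value estimates are hypothetical simplifications; the cited work [88] trains language-model policies with reinforcement learning rather than a lookup table.

```python
import random

OPS = ["ADD", "UPDATE", "DELETE", "NOOP"]

class MemoryOpPolicy:
    """Epsilon-greedy value estimates for memory operations, learned from outcome rewards."""

    def __init__(self, epsilon=0.2, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.value = {}  # (situation, op) -> running mean reward
        self.count = {}

    def choose(self, situation):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(OPS)  # explore
        return max(OPS, key=lambda op: self.value.get((situation, op), 0.0))  # exploit

    def update(self, situation, op, reward):
        key = (situation, op)
        self.count[key] = self.count.get(key, 0) + 1
        old = self.value.get(key, 0.0)
        self.value[key] = old + (reward - old) / self.count[key]  # incremental mean

# Hypothetical toy environment: reward 1 when the operation fits the situation.
CORRECT = {"new_fact": "ADD", "changed_fact": "UPDATE",
           "retracted_fact": "DELETE", "repeated_fact": "NOOP"}

policy = MemoryOpPolicy()
env_rng = random.Random(1)
for _ in range(2000):
    situation = env_rng.choice(list(CORRECT))
    op = policy.choose(situation)
    policy.update(situation, op, 1.0 if op == CORRECT[situation] else 0.0)

policy.epsilon = 0.0  # act greedily after training
print({s: policy.choose(s) for s in CORRECT})
```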

Appendix B.3. Experience

- Heuristic Guidelines: Heuristic guidelines serve to crystallize implicit intuition into explicit natural language strategies. In this domain, researchers focus on distilling experience into textual rules: Ouyang et al. [108] abstracts key decision principles through contrastive analysis of successful and failed trajectories, while Suzgun et al. [180] proposes dynamically generated "prompt lists" for real-time heuristic guidance. Xu et al. [181] and Hassell et al. [96] investigate rule induction from supervisory signals, achieving textual experience transfer via "cross-domain knowledge diffusion" and "semantic task guidance," respectively. To transcend linear text limitations in modeling complex dependencies, recent work shifts toward structured schemas. Ho et al. [162] and Zhang et al. [95] abstract multi-turn reasoning traces into experience graphs, leveraging topological structures to capture logical dependencies and enable effective storage and reuse of collaboration patterns and high-level cognitive principles. Moreover, Cai et al. [61] organizes heuristic knowledge into modular and compositional units, enabling systematic reuse across tasks.

- Procedural Primitives: Procedural primitives represent the abstraction of complex reasoning chains into executable entities, designed to significantly reduce planning overhead. Wang et al. [98] proposes a skill induction mechanism that encapsulates high-frequency action sequences into functions, enabling agents to invoke complex skills as atomic actions. Zhang et al. [99] extends this executable paradigm to hardware optimization, enabling agents to accumulate kernel optimization skills that iteratively enhance accelerator performance. In this line of work, Huang et al. [164] enables the composition and cascading execution of such procedural primitives, allowing agents to construct complex behaviors through structured skill invocation.

- Latent Modulation: Latent modulation operates on the cognitive stream within continuous high-dimensional latent space. By encoding experience into latent variables or activation states, this paradigm "weaves" historical insights into current reasoning in a parameter-free manner, circumventing expensive parameter updates. Zhang et al. [106] introduces the MemGen framework, employing a "Memory Weaver" to dynamically generate and inject latent token sequences conditioned on current reasoning state. Zhang et al. [105] achieves smooth transfer from historical experience to current decision-making without altering static parameters, using alternating Fast Retrieval and Slow Integration within latent space.

- Parameter Internalization: Parameter internalization transforms explicit trajectories into intrinsic capabilities within model weights. Through gradient updates, this mechanism instills adaptive priors into LLM agents, enabling effective navigation of complex environments. For context distillation, Alakuijala et al. [63] proposes iterative distillation to internalize corrective hints into model weights. Liu et al. [182] converts business rules into model priors, alleviating retrieval overload in RAG systems, while Zhai et al. [101] introduces "Experience Stripping," which removes retrieval segments during training to force the internalization of explicit experience into autonomous reasoning capabilities independent of external auxiliaries. For reinforcement learning, Zhang et al. [102] proposes a pioneering early-experience paradigm, leveraging implicit world modeling and self-reflection to internalize trial-and-error experience into policy priors without extrinsic rewards. Lyu et al. [183] achieves a strategic transition from Reflection to Experience by applying RL to student-generated reflections. Feng et al. [184] proposes group-based policy optimization for fine-grained experience internalization across multi-turn interactions. Jiang et al. [165] establishes standardized alignment between RL and tool invocation, enhancing agents' capacity to transmute tool-use experience into intrinsic strategies.

- Experience Transfer: Experience transfer internalizes capabilities by progressively decoupling agents from reliance on external retrieval. Wu et al. [10] employs offline distillation to abstract complex trajectories into structured experience for inference guidance, then uses these experiences to generate high-quality trajectories for policy optimization. By transferring knowledge from explicit experience pools into model parameters via gradient updates, this approach eliminates dependence on external retrieval systems. The frameworks in [107,108] maintain an explicit experience replay buffer that preserves high-value exploration trajectories. Through a hybrid on-policy and off-policy update strategy, they use explicit memory for immediate exploration while encoding successful experiences into network parameters via offline updates, so that agents sustain strong performance through internalized "intuition" without external support.
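The contrastive analysis described under Heuristic Guidelines can be approximated, for illustration, with set operations over action labels: actions present in every successful trajectory but in no failed one become "do" rules, and actions seen only in failures become "avoid" rules. Replacing the language-model analysis of [108] with set differences, the rule templates, and the shopping-task trajectories are all assumptions.

```python
def contrastive_rules(successes, failures):
    """Turn action differences between successful and failed trajectories into text rules."""
    in_every_success = set.intersection(*(set(t) for t in successes))
    in_any_success = set.union(*(set(t) for t in successes))
    in_any_failure = set.union(*(set(t) for t in failures))
    do = sorted(in_every_success - in_any_failure)
    avoid = sorted(in_any_failure - in_any_success)
    return [f"Always {a}." for a in do] + [f"Avoid: {a}." for a in avoid]

successes = [["search item", "check stock", "add to cart", "pay"],
             ["search item", "check stock", "pay"]]
failures = [["search item", "add to cart", "pay"],
            ["search item", "pay twice"]]
print(contrastive_rules(successes, failures))
# ['Always check stock.', 'Avoid: pay twice.']
```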
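Skill induction under Procedural Primitives can be sketched by counting contiguous action sequences across trajectories and wrapping the most frequent one into a single callable that the agent invokes as an atomic action. Frequency counting over fixed-length windows is an assumed heuristic, not the induction procedure of [98], and `execute` is a hypothetical executor.

```python
from collections import Counter

def induce_skill(trajectories, length=3):
    """Return the most frequent contiguous action sequence of the given length."""
    counts = Counter(
        tuple(t[i:i + length])
        for t in trajectories
        for i in range(len(t) - length + 1)
    )
    sequence, _ = counts.most_common(1)[0]
    return sequence

def make_skill(sequence, execute):
    """Wrap an action sequence into one callable the agent can invoke atomically."""
    def skill():
        return [execute(action) for action in sequence]
    skill.__name__ = "skill_" + "_".join(a.replace(" ", "_") for a in sequence)
    return skill

trajectories = [
    ["open site", "login", "go to cart", "checkout"],
    ["open site", "login", "go to cart", "apply coupon"],
    ["search", "open site", "login", "go to cart"],
]
seq = induce_skill(trajectories)
skill = make_skill(seq, execute=lambda action: f"done: {action}")  # hypothetical executor
print(skill.__name__)  # skill_open_site_login_go_to_cart
print(skill())         # ['done: open site', 'done: login', 'done: go to cart']
```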
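The explicit replay buffer described under Experience Transfer can be sketched as a structure that retains only the k highest-return trajectories and samples from them for off-policy updates. The top-k retention rule, the buffer interface, and the toy returns are assumptions; the hybrid on-policy/off-policy update itself is not shown.

```python
import heapq
import itertools
import random

class TopKReplayBuffer:
    """Retain only the k highest-return trajectories for off-policy replay."""

    def __init__(self, k, seed=0):
        self.k = k
        self.heap = []  # min-heap of (return, insertion index, trajectory)
        self._order = itertools.count()  # tie-breaker so trajectories are never compared
        self.rng = random.Random(seed)

    def add(self, trajectory, ret):
        item = (ret, next(self._order), trajectory)
        if len(self.heap) < self.k:
            heapq.heappush(self.heap, item)
        elif ret > self.heap[0][0]:
            heapq.heapreplace(self.heap, item)  # evict the lowest-return entry

    def sample(self, n):
        """Draw up to n stored trajectories for an off-policy update."""
        picks = self.rng.sample(self.heap, min(n, len(self.heap)))
        return [trajectory for _, _, trajectory in picks]

    def returns(self):
        return sorted(ret for ret, _, _ in self.heap)

buffer = TopKReplayBuffer(k=2)
for trajectory, ret in [("traj-a", 0.1), ("traj-b", 0.9), ("traj-c", 0.5)]:
    buffer.add(trajectory, ret)
print(buffer.returns())          # [0.5, 0.9]
print(sorted(buffer.sample(5)))  # ['traj-b', 'traj-c']
```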

Appendix C. Datasets

- Extreme Context: Extreme context benchmarks probe the physical limits of memory in LLM agents, specifically their capacity to extract and process minute facts buried in massive volumes of distracting information. For instance, these benchmarks measure a model's actual effective context window through multi-needle retrieval [185], embed reasoning tasks in million-word backgrounds [186], assess memory reliability within long contexts [187], or extend these challenges to vision [188]. The core of this category is evaluating the model's genuine memory capacity.

- Interactive Consistency: Interactive consistency benchmarks are built on cross-session interaction and require LLM agents to keep their memory consistent throughout. Examples include frameworks for coherent dialogue at the scale of ten million words [189], direct evaluation of knowledge updating and abstention during continuous interaction [190], and tests of whether persona consistency and accuracy are maintained over long histories [14,191]. The core of this category is assessing whether memory stays consistent over long distances.

- Relational Fact: Relational fact benchmarks primarily evaluate the capacity of LLM agents for semantic association and multi-hop reasoning. This involves testing a model's ability to integrate facts across documents and to reason in multiple steps, including over personal trivia [192,193]. Furthermore, certain frameworks focus on emotional support and interactive scenarios to evaluate a model's memory recall under proactive and passive paradigms [194].

- Error Correction: Error correction benchmarks primarily evaluate whether errors or hallucinations emerge during the lifecycle of a memory system, for instance by testing memory search, editing, and matching operations [195] and by examining hallucinations during the extraction or update stages [196].

- Personalization: Personalization benchmarks focus on extracting deep personalization from an agent's history, including mining latent information through reflection to identify implicit preferences [197,198], user traits [199,200], key information [201,202], and shared components [203,204].

- Dynamic Reasoning: Dynamic reasoning benchmarks emphasize the critical role of memory in multi-step reasoning and in perceiving highly complex environments. They cover selective forgetting [205], decision backtracking [206], real-world scenarios [207,208], and summarization and transition mechanisms [190].
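A multi-needle probe of the kind used by Extreme Context benchmarks can be sketched as inserting several key facts ("needles") at random positions in filler text and scoring how many of their values a model's answer recovers. The filler sentences, needle wording, function names, and substring-based scoring are simplifying assumptions; RULER [185] and related benchmarks use their own templates and metrics.

```python
import random

def build_haystack(needles, n_filler, seed=0):
    """Insert each needle sentence at a random position among filler sentences."""
    rng = random.Random(seed)
    sentences = [f"Filler sentence {i} says nothing important." for i in range(n_filler)]
    for needle in needles:
        sentences.insert(rng.randrange(len(sentences) + 1), needle)
    return " ".join(sentences)

def needle_recall(answer, expected_values):
    """Fraction of expected needle values that appear verbatim in the answer."""
    hits = sum(1 for value in expected_values if value in answer)
    return hits / len(expected_values)

needles = ["The access code for vault red is 4821.",
           "The access code for vault blue is 7730."]
haystack = build_haystack(needles, n_filler=1000)
# `haystack` plus a question would be sent to the model under test; here we
# score a hypothetical answer that recovered only one of the two codes.
print(needle_recall("Vault red uses 4821.", ["4821", "7730"]))  # 0.5
```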

| Stage | Dataset | Reference | Size | Description |
|---|---|---|---|---|
| Storage Stage Benchmark | LongBench | Bai et al. [212] | 4.7k | Evaluate faithful memory preservation and retrieval via information extraction and reasoning across multiple tasks with sequences of up to 32k tokens. |
| | LongBenchv2 | Bai et al. [213] | 503 | Answer complex multiple-choice questions over extremely long sequences (8k to 2M words) to evaluate memory capacity and reasoning precision. |
| | RULER | Hsieh et al. [185] | Scalable | Evaluate retrieval and synthesis within long contexts through tasks such as multi-needle extraction and multi-hop reasoning to identify true memory capacity. |
| | MMNeedle | Wang et al. [188] | 280k | Locate a target sub-image within a large collection of images using textual descriptions and visual content to measure the limits of multimodal retrieval. |
| | HotpotQA | Yang et al. [193] | 113k | Answer questions via multi-hop reasoning over information scattered across Wikipedia documents, providing both answers and supporting facts. |
| | MemoryBank | Zhong et al. [14] | 194 | Answer questions by recalling relevant information and summarizing user traits across ten days of interaction history, evaluating retrieval precision and user-portrait maintenance in long-term dialogue. |
| | BABILong | Kuratov et al. [186] | Scalable | Answer questions by reasoning over facts scattered across extremely long natural-language documents, testing memory and retrieval limits for contexts of up to one million tokens. |
| | DialSim | Kim et al. [214] | 1.3k | Evaluate memory retrieval precision by answering spontaneous questions across long, multi-party, multi-session dialogues. |
| | LongMemEval | Maharana et al. [190] | 500 | Answer questions by extracting information from multi-session chat histories, evaluating retrieval and reasoning over long-range dependencies. |
| | BEAM | Tavakoli et al. [189] | 100 | Evaluate memory capacity and retrieval precision by answering questions about coherent, topically diverse conversations of up to ten million tokens. |
| | MPR | Zhang et al. [192] | 108k | Answer complex questions via multi-hop reasoning over user-specific facts held in explicit or implicit memory, evaluating retrieval precision. |
| | LOCCO | Jia et al. [191] | 1.6k | Evaluate memory persistence and recall of historical facts in chronological conversations over extended periods, measuring information decay. |
| | MADial-Bench | He et al. [194] | 160 | Evaluate retrieval and recognition of past events across multi-turn interaction by simulating passive and proactive recall in emotional-support scenarios. |
| | HELMET | Yen et al. [187] | Scalable | Evaluate long-context models via information extraction and reasoning across seven diverse categories with sequences of up to 128k tokens, giving a thorough assessment of memory capacity. |
| Reflection Stage Benchmark | Minerva | Xia et al. [195] | Scalable | Analyze how well LLMs use and manipulate context memory through a programmable framework of atomic and composite tasks, pinpointing specific functional deficiencies and offering actionable insights. |
| | HaluMem | Chen et al. [196] | 3.5k | Evaluate memory fidelity by quantifying fabrication and omission during storage and retrieval across multi-turn dialogues. |
| | MABench | Hu et al. [205] | 2.1k | Evaluate accurate retrieval and test-time learning across incremental multi-turn interactions. |
| | PRM | Yuan et al. [201] | 700 | Evaluate whether personalized assistants maintain a dynamic memory bank that preserves evolving user knowledge and experiences across long-term dialogues to generate tailored responses. |
| | PersonMemv2 | Jiang et al. [197] | 1k | Generate personalized responses by extracting implicit personas from long-context interactions covering thousands of user preferences, evaluating agent adaptation. |
| | LoCoMo | Maharana et al. [190] | 50 | Evaluate memory reliability through question answering and event summarization over conversations of up to nine thousand tokens spanning thirty-five sessions. |
| | WebChoreArena | Miyai et al. [208] | 451 | Analyze memory performance for information retrieval and complex aggregation through multi-step navigation and reasoning across hundreds of web pages. |
| | MT-Mind2Web | Deng et al. [207] | 720 | Evaluate conversational web navigation via multi-turn instruction following across sequential interactions with users and the environment, stressing context dependency and limited memory space. |
| | StoryBench | Wan and Ma [206] | 80 | Evaluate reflection and sequential reasoning by navigating hierarchical decision trees in interactive fiction games, where agents must independently trace back and revise earlier choices across multiple turns. |
| | PerLTQA | Du et al. [199] | 8.6k | Answer personalized questions by retrieving and synthesizing semantic and episodic information from a memory bank of thirty characters, evaluating memory retrieval accuracy. |
| | ImplexConv | Li et al. [202] | 2.5k | Evaluate implicit reasoning in personalized dialogues by retrieving and synthesizing subtle or semantically distant information from histories of 100 sessions, testing the efficiency of hierarchical memory structures. |
| | Share | Kim et al. [204] | 3.2k | Improve long-term interaction quality by extracting persona data and shared-history memories from movie scripts to sustain a consistent relationship between two individuals. |
| | Mem-PAL | Huang et al. [198] | 100 | Evaluate personalization in service-oriented assistants by identifying users' subjective traits and preferences from multi-session dialogue histories and behavioral logs to generate tailored responses. |
| | PrefEval | Zhao et al. [200] | 3k | Quantify the robustness of proactive preference following by testing whether models infer and satisfy explicit or implicit user traits amid long-context distractions of up to 100k tokens. |
| | LIDB | Tsaknakis et al. [203] | 210 | Discover latent user preferences and generate personalized responses through multi-turn interactions in a three-agent framework, evaluating elicitation and adaptation efficiency. |
| Experience Stage Benchmark | StreamBench | Wu et al. [209] | 9.7k | Evaluate continuous improvement and online learning via iterative feedback across diverse task streams, measuring post-deployment adaptation. |
| | MemoryBench | Ai et al. [210] | 20k | Evaluate continual learning in LLM systems by simulating accumulated user feedback across multiple domains, measuring the effectiveness of procedural memory. |
| | Evo-Memory | Wei et al. [129] | 3.7k | Evaluate test-time learning and memory evolution on continuous task streams, focusing on experience reuse across diverse scenarios. |
| | LABench | Zheng et al. [211] | 1.4k | Evaluate lifelong learning and knowledge transfer across sequences of interdependent tasks in dynamic environments, measuring skill acquisition and retention. |

References

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and Efficient Foundation Language Models. CoRR 2023, abs/2302.13971.

- Hurst, A.; Lerer, A.; Goucher, A.P.; Perelman, A.; Ramesh, A.; Clark, A.; Ostrow, A.; Welihinda, A.; Hayes, A.; Radford, A.; et al. GPT-4o System Card. CoRR 2024, abs/2410.21276.

- Yang, A.; Li, A.; Yang, B.; Zhang, B.; Hui, B.; Zheng, B.; Yu, B.; Gao, C.; Huang, C.; Lv, C.; et al. Qwen3 Technical Report. CoRR 2025, abs/2505.09388.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. ArXiv 2022, abs/2210.03629.

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; Lin, Y.; Cong, X.; Tang, X.; Qian, B.; et al. ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs. In Proceedings of the Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024; OpenReview.net, 2024.

- Luo, Z.; Shen, Z.; Yang, W.; Zhao, Z.; Jwalapuram, P.; Saha, A.; Sahoo, D.; Savarese, S.; Xiong, C.; Li, J. MCP-Universe: Benchmarking Large Language Models with Real-World Model Context Protocol Servers. CoRR 2025, abs/2508.14704.

- Huang, J.; Chen, X.; Mishra, S.; Zheng, H.S.; Yu, A.W.; Song, X.; Zhou, D. Large Language Models Cannot Self-Correct Reasoning Yet. ArXiv 2023.

- Xiong, Z.; Lin, Y.; Xie, W.; He, P.; Tang, J.; Lakkaraju, H.; Xiang, Z. How Memory Management Impacts LLM Agents: An Empirical Study of Experience-Following Behavior. ArXiv 2025.

- Wang, L.; Ma, C.; Feng, X.; Zhang, Z.; Yang, H.R.; Zhang, J.; Chen, Z.Y.; Tang, J.; Chen, X.; Lin, Y.; et al. A survey on large language model based autonomous agents. Frontiers of Computer Science 2023, 18.

- Wu, R.; Wang, X.; Mei, J.; Cai, P.; Fu, D.; Yang, C.; Wen, L.; Yang, X.; Shen, Y.; Wang, Y.; et al. EvolveR: Self-Evolving LLM Agents through an Experience-Driven Lifecycle. ArXiv 2025.

- Packer, C.; Fang, V.; Patil, S.G.; Lin, K.; Wooders, S.; Gonzalez, J. MemGPT: Towards LLMs as Operating Systems. ArXiv 2023, abs/2310.08560.

- Hu, M.; Chen, T.; Chen, Q.; Mu, Y.; Shao, W.; Luo, P. HiAgent: Hierarchical Working Memory Management for Solving Long-Horizon Agent Tasks with Large Language Model. ArXiv 2024.

- Kang, J.; Ji, M.; Zhao, Z.; Bai, T. Memory OS of AI Agent. ArXiv 2025.

- Zhong, W.; Guo, L.; Gao, Q.F.; Ye, H.; Wang, Y. MemoryBank: Enhancing Large Language Models with Long-Term Memory. ArXiv 2023.

- Hou, Y.; Tamoto, H.; Miyashita, H. "My agent understands me better": Integrating Dynamic Human-like Memory Recall and Consolidation in LLM-Based Agents. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems; 2024.

- Xu, W.; Liang, Z.; Mei, K.; Gao, H.; Tan, J.; Zhang, Y. A-MEM: Agentic Memory for LLM Agents. ArXiv 2025.

- Yang, W.; Xiao, J.; Zhang, H.; Zhang, Q.; Wang, Y.; Xu, B. Coarse-to-Fine Grounded Memory for LLM Agent Planning. ArXiv 2025.

- Zhang, Z.; Dai, Q.; Chen, X.; Li, R.; Li, Z.; Dong, Z. MemEngine: A Unified and Modular Library for Developing Advanced Memory of LLM-based Agents. In Companion Proceedings of the ACM on Web Conference; 2025.

- Wu, Y.; Liang, S.; Zhang, C.; Wang, Y.; Zhang, Y.; Guo, H.; Tang, R.; Liu, Y. From Human Memory to AI Memory: A Survey on Memory Mechanisms in the Era of LLMs. ArXiv 2025.

- Du, Y.; Huang, W.; Zheng, D.; Wang, Z.; Montella, S.; Lapata, M.; Wong, K.F.; Pan, J.Z. Rethinking Memory in AI: Taxonomy, Operations, Topics, and Future Directions. ArXiv 2025, abs/2505.00675.

- Wu, S.; Shu, K. Memory in LLM-based Multi-agent Systems: Mechanisms, Challenges, and Collective Intelligence. 2025.

- Cao, Z.; Deng, J.; Yu, L.; Zhou, W.; Liu, Z.; Ding, B.; Zhao, H. Remember Me, Refine Me: A Dynamic Procedural Memory Framework for Experience-Driven Agent Evolution. 2025.

- Zhang, Z.; Dai, Q.; Bo, X.; Ma, C.; Li, R.; Chen, X.; Zhu, J.; Dong, Z.; Wen, J.R. A Survey on the Memory Mechanism of Large Language Model-based Agents. ACM Transactions on Information Systems 2024, 43, 1–47.

- Hu, Y.; Liu, S.; Yue, Y.; Zhang, G.; Liu, B.; Zhu, F.; Lin, J.; Guo, H.; Dou, S.; Xi, Z.; et al. Memory in the Age of AI Agents. 2025.

- Huang, Y.; Xu, J.; Jiang, Z.; Lai, J.; Li, Z.; Yao, Y.; Chen, T.; Yang, L.; Xin, Z.; Ma, X. Advancing Transformer Architecture in Long-Context Large Language Models: A Comprehensive Survey. ArXiv 2023.

- Sumers, T.R.; Yao, S.; Narasimhan, K.; Griffiths, T.L. Cognitive Architectures for Language Agents. Trans. Mach. Learn. Res. 2023, 2024.

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.L.; Cao, Y.; Narasimhan, K. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. ArXiv 2023.

- Sun, W.; Lu, M.; Ling, Z.; Liu, K.; Yao, X.; Yang, Y.; Chen, J. Scaling Long-Horizon LLM Agent via Context-Folding. ArXiv 2025, abs/2510.11967.

- Majumder, B.P.; Dalvi, B.; Jansen, P.A.; Tafjord, O.; Tandon, N.; Zhang, L.; Callison-Burch, C.; Clark, P. CLIN: A Continually Learning Language Agent for Rapid Task Adaptation and Generalization. ArXiv 2023.

- Park, J.S.; O’Brien, J.C.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative Agents: Interactive Simulacra of Human Behavior. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology; 2023.

- Westhäußer, R.; Minker, W.; Zepf, S. Enabling Personalized Long-term Interactions in LLM-based Agents through Persistent Memory and User Profiles. ArXiv 2025, abs/2510.07925.

- Liang, J.; Li, H.; Li, C.; Zhou, J.; Jiang, S.; Wang, Z.; Ji, C.; Zhu, Z.; Liu, R.; Ren, T.; et al. AI Meets Brain: Memory Systems from Cognitive Neuroscience to Autonomous Agents. 2025.

- Huang, X.; Liu, W.; Chen, X.; Wang, X.; Wang, H.; Lian, D.; Wang, Y.; Tang, R.; Chen, E. Understanding the planning of LLM agents: A survey. ArXiv 2024.

- Everitt, T.; Garbacea, C.; Bellot, A.; Richens, J.; Papadatos, H.; Campos, S.; Shah, R. Evaluating the Goal-Directedness of Large Language Models. ArXiv 2025.

- Li, Z.; Chang, Y.; Yu, G.; Le, X. HiPlan: Hierarchical Planning for LLM-Based Agents with Adaptive Global-Local Guidance. ArXiv 2025.

- Gao, D.; Li, Z.; Kuang, W.; Pan, X.; Chen, D.; Ma, Z.; Qian, B.; Yao, L.; Zhu, L.; Cheng, C.; et al. AgentScope: A Flexible yet Robust Multi-Agent Platform. ArXiv 2024.

- Liu, J.; Kong, Z.; Yang, C.; Yang, F.; Li, T.; Dong, P.; Nanjekye, J.; Tang, H.; Yuan, G.; Niu, W.; et al. RCR-Router: Efficient Role-Aware Context Routing for Multi-Agent LLM Systems with Structured Memory. ArXiv 2025, abs/2508.04903.

- Lazaridou, A.; Kuncoro, A.; Gribovskaya, E.; Agrawal, D.; Liska, A.; Terzi, T.; Giménez, M.; de Masson d’Autume, C.; Kočiský, T.; Ruder, S.; et al. Mind the Gap: Assessing Temporal Generalization in Neural Language Models. In Proceedings of the Neural Information Processing Systems, 2021. [Google Scholar]

- Jang, J.; Ye, S.; Lee, C.; Yang, S.; Shin, J.; Han, J.; Kim, G.; Seo, M. TemporalWiki: A Lifelong Benchmark for Training and Evaluating Ever-Evolving Language Models. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2022. [Google Scholar]

- Ko, D.; Kim, J.; Choi, H.; Kim, G. GrowOVER: How Can LLMs Adapt to Growing Real-World Knowledge? In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2024. [Google Scholar]

- Luu, K.; Khashabi, D.; Gururangan, S.; Mandyam, K.; Smith, N.A. Time Waits for No One! Analysis and Challenges of Temporal Misalignment. arXiv 2022, arXiv:2111.07408. [Google Scholar] [CrossRef]

- Kalai, A.T.; Vempala, S.S. Calibrated Language Models Must Hallucinate. In Proceedings of the 56th Annual ACM Symposium on Theory of Computing; 2024. [Google Scholar]

- Kasai, J.; Sakaguchi, K.; Takahashi, Y.; Bras, R.L.; Asai, A.; Yu, X.; Radev, D.; Smith, N.A.; Choi, Y.; Inui, K. RealTime QA: What’s the Answer Right Now? ArXiv 2024. [Google Scholar]

- Zhang, S.; Xue, Y.; Zhang, Y.; Wu, X.; Luu, A.T.; Chen, Z. MRAG: A Modular Retrieval Framework for Time-Sensitive Question Answering. ArXiv 2024. [Google Scholar]

- Salama, R.; Cai, J.; Yuan, M.; Currey, A.; Sunkara, M.; Zhang, Y.; Benajiba, Y. MemInsight: Autonomous Memory Augmentation for LLM Agents. ArXiv 2025. [Google Scholar]

- Du, X.; Li, L.; Zhang, D.; Song, L. MemR3: Memory Retrieval via Reflective Reasoning for LLM Agents. 2025. [Google Scholar]

- Houichime, T.; Souhar, A.; Amrani, Y.E. Memory as Resonance: A Biomimetic Architecture for Infinite Context Memory on Ergodic Phonetic Manifolds. 2025. [Google Scholar]

- Joshi, A.; Ahmad, A.; Modi, A. COLD: Causal reasOning in cLosed Daily activities. ArXiv 2024. abs/2411.19500. [Google Scholar]

- Cui, S.; Mouchel, L.; Faltings, B. Uncertainty in Causality: A New Frontier. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2025. [Google Scholar]

- Liu, X.; Xu, P.; Wu, J.; Yuan, J.; Yang, Y.; Zhou, Y.; Liu, F.; Guan, T.; Wang, H.; Yu, T.; et al. Large Language Models and Causal Inference in Collaboration: A Comprehensive Survey. ArXiv 2025. [Google Scholar]

- Du, Y.; Wang, B.; Xiang, Y.; Wang, Z.; Huang, W.; Xue, B.; Liang, B.; Zeng, X.; Mi, F.; Bai, H.; et al. Memory-T1: Reinforcement Learning for Temporal Reasoning in Multi-session Agents. 2025. [Google Scholar]

- Raman, V.; VijaiAravindh, R.; Ragav, A. REMI: A Novel Causal Schema Memory Architecture for Personalized Lifestyle Recommendation Agents. ArXiv 2025. abs/2509.06269. [Google Scholar]

- Tang, H.; Key, D.; Ellis, K. WorldCoder, a Model-Based LLM Agent: Building World Models by Writing Code and Interacting with the Environment. ArXiv 2024. [Google Scholar]

- Kim, M.; Hwang, S.-w. CoEx - Co-evolving World-model and Exploration. ArXiv 2025. [Google Scholar]

- Bohnet, B.; Kamienny, P.; Sedghi, H.; Gorur, D.; Awasthi, P.; Parisi, A.T.; Swersky, K.; Liu, R.; Nova, A.; Fiedel, N. Enhancing LLM Planning Capabilities through Intrinsic Self-Critique. 2025. [Google Scholar] [CrossRef]

- Hu, C.; Fu, J.; Du, C.; Luo, S.; Zhao, J.J.; Zhao, H. ChatDB: Augmenting LLMs with Databases as Their Symbolic Memory. ArXiv 2023. abs/2306.03901. [Google Scholar]

- Srivastava, S.S.; He, H. MemoryGraft: Persistent Compromise of LLM Agents via Poisoned Experience Retrieval. arXiv 2025, arXiv:2512.16962. [Google Scholar] [CrossRef]

- Liu, S.; Yang, J.; Jiang, B.; Li, Y.; Guo, J.; Liu, X.; Dai, B. Context as a Tool: Context Management for Long-Horizon SWE-Agents. 2025. [Google Scholar] [CrossRef]

- Shinn, N.; Cassano, F.; Labash, B.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language Agents with Verbal Reinforcement Learning. In Proceedings of the Neural Information Processing Systems, 2023. [Google Scholar]

- Tang, X.; Qin, T.; Peng, T.; Zhou, Z.; Shao, Y.; Du, T.; Wei, X.; Xia, P.; Wu, F.; Zhu, H.; et al. Agent KB: Leveraging Cross-Domain Experience for Agentic Problem Solving. ArXiv 2025. abs/2507.06229. [Google Scholar]

- Cai, Z.; Guo, X.; Pei, Y.; Feng, J.; Chen, J.; Zhang, Y.Q.; Ma, W.Y.; Wang, M.; Zhou, H. FLEX: Continuous Agent Evolution via Forward Learning from Experience. 2025. [Google Scholar] [CrossRef]

- Xia, P.; Zeng, K.; Liu, J.; Qin, C.; Wu, F.; Zhou, Y.; Xiong, C.; Yao, H. Agent0: Unleashing Self-Evolving Agents from Zero Data via Tool-Integrated Reasoning. 2025. [Google Scholar]

- Alakuijala, M.; Gao, Y.; Ananov, G.; Kaski, S.; Marttinen, P.; Ilin, A.; Valpola, H. Memento No More: Coaching AI Agents to Master Multiple Tasks via Hints Internalization. ArXiv 2025. [Google Scholar]

- Guo, J.; Yang, L.; Chen, P.; Xiao, Q.; Wang, Y.; Juan, X.; Qiu, J.; Shen, K.; Wang, M. GenEnv: Difficulty-Aligned Co-Evolution Between LLM Agents and Environment Simulators. 2025. [Google Scholar]

- Ratner, N.; Levine, Y.; Belinkov, Y.; Ram, O.; Magar, I.; Abend, O.; Karpas, E.; Shashua, A.; Leyton-Brown, K.; Shoham, Y. Parallel Context Windows for Large Language Models. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2023. [Google Scholar]

- Xiao, G.; Tian, Y.; Chen, B.; Han, S.; Lewis, M. Efficient Streaming Language Models with Attention Sinks. ArXiv 2023. [Google Scholar]

- Jin, H.; Han, X.; Yang, J.; Jiang, Z.; Liu, Z.; yuan Chang, C.; Chen, H.; Hu, X. LLM Maybe LongLM: Self-Extend LLM Context Window Without Tuning. ArXiv 2024. abs/2401.01325.

- Zhang, Z.A.; Sheng, Y.; Zhou, T.; Chen, T.; Zheng, L.; Cai, R.; Song, Z.; Tian, Y.; Ré, C.; Barrett, C.W.; et al. H2O: Heavy-Hitter Oracle for Efficient Generative Inference of Large Language Models. ArXiv 2023. [Google Scholar]

- Jiang, H.; Wu, Q.; Lin, C.Y.; Yang, Y.; Qiu, L. LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2023. [Google Scholar]

- Xiao, C.; Zhang, P.; Han, X.; Xiao, G.; Lin, Y.; Zhang, Z.; Liu, Z.; Han, S.; Sun, M. InfLLM: Training-Free Long-Context Extrapolation for LLMs with an Efficient Context Memory. Advances in Neural Information Processing Systems 2024. [Google Scholar]

- Melz, E. Enhancing LLM Intelligence with ARM-RAG: Auxiliary Rationale Memory for Retrieval Augmented Generation. ArXiv 2023. [Google Scholar]

- Liu, W.; Tang, Z.; Li, J.; Chen, K.; Zhang, M. MemLong: Memory-Augmented Retrieval for Long Text Modeling. ArXiv 2024. [Google Scholar]

- Das, P.; Chaudhury, S.; Nelson, E.; Melnyk, I.; Swaminathan, S.; Dai, S.; Lozano, A.C.; Kollias, G.; Chenthamarakshan, V.; Navrátil, J.; et al. Larimar: Large Language Models with Episodic Memory Control. ArXiv 2024. [Google Scholar]

- Xue, S.; Jiang, C.; Shi, W.; Cheng, F.; Chen, K.; Yang, H.; Zhang, Z.; He, J.; Zhang, H.; Wei, G.; et al. DB-GPT: Empowering Database Interactions with Private Large Language Models. ArXiv 2023. [Google Scholar]

- Lee, S.; Ko, H. Training a Team of Language Models as Options to Build an SQL-Based Memory. Applied Sciences 2025. [Google Scholar]

- Lu, J.; An, S.; Lin, M.; Pergola, G.; He, Y.; Yin, D.; Sun, X.; Wu, Y. MemoChat: Tuning LLMs to Use Memos for Consistent Long-Range Open-Domain Conversation. ArXiv 2023. abs/2308.08239. [Google Scholar]

- Modarressi, A.; Köksal, A.; Imani, A.; Fayyaz, M.; Schütze, H. MemLLM: Finetuning LLMs to Use An Explicit Read-Write Memory. ArXiv 2024. [Google Scholar]

- Li, S.; He, Y.; Guo, H.; Bu, X.; Bai, G.; Liu, J.; Liu, J.; Qu, X.; Li, Y.; Ouyang, W.; et al. GraphReader: Building Graph-based Agent to Enhance Long-Context Abilities of Large Language Models. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2024. [Google Scholar]

- Liu, L.; Yang, X.; Shen, Y.; Hu, B.; Zhang, Z.; Gu, J.; Zhang, G. Think-in-Memory: Recalling and Post-thinking Enable LLMs with Long-Term Memory. ArXiv 2023. abs/2311.08719. [Google Scholar]

- Zhang, P.; Chen, X.; Feng, Y.; Jiang, Y.; Meng, L. Constructing coherent spatial memory in LLM agents through graph rectification. ArXiv 2025. [Google Scholar]

- Li, R.; Zhang, Z.; Bo, X.; Tian, Z.; Chen, X.; Dai, Q.; Dong, Z.; Tang, R. CAM: A Constructivist View of Agentic Memory for LLM-Based Reading Comprehension. ArXiv 2025. abs/2510.05520. [Google Scholar]

- Chhikara, P.; Khant, D.; Aryan, S.; Singh, T.; Yadav, D. Mem0: Building Production-Ready AI Agents with Scalable Long-Term Memory. ArXiv 2025. [Google Scholar]

- Huang, Y.; Liu, Y.; Zhao, R.; Zhong, X.; Yue, X.; Jiang, L. MemOrb: A Plug-and-Play Verbal-Reinforcement Memory Layer for E-Commerce Customer Service. ArXiv 2025. [Google Scholar]

- Han, D.; Couturier, C.; Diaz, D.M.; Zhang, X.; Rühle, V.; Rajmohan, S. LEGOMem: Modular Procedural Memory for Multi-agent LLM Systems for Workflow Automation. ArXiv 2025. abs/2510.04851. [Google Scholar]

- Ye, R.; Zhang, Z.; Li, K.; Yin, H.; Tao, Z.; Zhao, Y.; Su, L.; Zhang, L.; Qiao, Z.; Wang, X.; et al. AgentFold: Long-Horizon Web Agents with Proactive Context Management. ArXiv 2025. [Google Scholar]

- Sun, Z.; Shi, H.; Côté, M.A.; Berseth, G.; Yuan, X.; Liu, B. Enhancing Agent Learning through World Dynamics Modeling. ArXiv 2024. [Google Scholar]

- Yan, X.; Ou, Z.; Yang, M.; Song, Y.; Zhang, H.; Li, Y.; Wang, J. Memory-Driven Self-Improvement for Decision Making with Large Language Models. ArXiv 2025. abs/2509.26340. [Google Scholar]

- Yan, S.; Yang, X.; Huang, Z.; Nie, E.; Ding, Z.; Li, Z.; Ma, X.; Schütze, H.; Tresp, V.; Ma, Y. Memory-R1: Enhancing Large Language Model Agents to Manage and Utilize Memories via Reinforcement Learning. ArXiv 2025, abs/2508.19828.

- Xiao, Y.; Li, Y.; Wang, H.; Tang, Y.; Wang, Z.Z. ToolMem: Enhancing Multimodal Agents with Learnable Tool Capability Memory. ArXiv 2025. abs/2510.06664. [Google Scholar]

- Sun, H.; Zhang, Z.; Zeng, S. Preference-Aware Memory Update for Long-Term LLM Agents. ArXiv 2025. abs/2510.09720. [Google Scholar]

- Bo, X.; Zhang, Z.; Dai, Q.; Feng, X.; Wang, L.; Li, R.; Chen, X.; Wen, J.R. Reflective Multi-Agent Collaboration based on Large Language Models. Advances in Neural Information Processing Systems 2024. [Google Scholar]

- Balestri, R.; Pescatore, G. Narrative Memory in Machines: Multi-Agent Arc Extraction in Serialized TV. ArXiv 2025. [Google Scholar]

- Wang, R.; Liang, S.; Chen, Q.; Huang, Y.; Li, M.; Ma, Y.; Zhang, D.; Qin, K.; Leung, M.F. GraphCogent: Mitigating LLMs’ Working Memory Constraints via Multi-Agent Collaboration in Complex Graph Understanding. 2025. [Google Scholar]

- Ozer, O.; Wu, G.; Wang, Y.; Dosti, D.; Zhang, H.; Rue, V.D.L. MAR: Multi-Agent Reflexion Improves Reasoning Abilities in LLMs. 2025. [Google Scholar]

- Zhang, G.M.; Fu, M.; Wan, G.; Yu, M.; Wang, K.; Yan, S. G-Memory: Tracing Hierarchical Memory for Multi-Agent Systems. ArXiv 2025. [Google Scholar]

- Hassell, J.; Zhang, D.; Kim, H.J.; Mitchell, T.; Hruschka, E. Learning from Supervision with Semantic and Episodic Memory: A Reflective Approach to Agent Adaptation. ArXiv 2025, abs/2510.19897. [Google Scholar]

- Wan, C.; Dai, X.; Wang, Z.; Li, M.; Wang, Y.; Mao, Y.; Lan, Y.; Xiao, Z. LoongFlow: Directed Evolutionary Search via a Cognitive Plan-Execute-Summarize Paradigm. 2025. [Google Scholar]

- Wang, Z.Z.; Gandhi, A.; Neubig, G.; Fried, D. Inducing Programmatic Skills for Agentic Tasks. ArXiv 2025. [Google Scholar]

- Zhang, G.; Zhu, S.; Wei, A.; Song, Z.; Nie, A.; Jia, Z.; Vijaykumar, N.; Wang, Y.; Olukotun, K. AccelOpt: A Self-Improving LLM Agentic System for AI Accelerator Kernel Optimization. 2025. [Google Scholar]

- Shi, Y.; Cai, Y.; Cai, S.; Xu, Z.; Chen, L.; Qin, Y.; Zhou, Z.; Fei, X.; Qiu, C.; Tan, X.; et al. Youtu-Agent: Scaling Agent Productivity with Automated Generation and Hybrid Policy Optimization. 2025. [Google Scholar] [CrossRef]

- Zhai, Y.; Tao, S.; Chen, C.; Zou, A.; Chen, Z.; Fu, Q.; Mai, S.; Yu, L.; Deng, J.; Cao, Z.; et al. AgentEvolver: Towards Efficient Self-Evolving Agent System. 2025. [Google Scholar]

- Zhang, K.; Chen, X.; Liu, B.; Xue, T.; Liao, Z.; Liu, Z.; Wang, X.; Ning, Y.; Chen, Z.; Fu, X.; et al. Agent Learning via Early Experience. ArXiv 2025. abs/2510.08558. [Google Scholar]

- Tandon, A.; Dalal, K.; Li, X.; Koceja, D.; Rod, M.; Buchanan, S.; Wang, X.; Leskovec, J.; Koyejo, S.; Hashimoto, T.; et al. End-to-End Test-Time Training for Long Context. 2025. [Google Scholar]

- Yu, W.; Liang, Z.; Huang, C.; Panaganti, K.; Fang, T.; Mi, H.; Yu, D. Guided Self-Evolving LLMs with Minimal Human Supervision. 2025. [Google Scholar] [CrossRef]

- Zhang, G.M.; Meng, F.; Wan, G.; Li, Z.; Wang, K.; Yin, Z.; Bai, L.; Yan, S. LatentEvolve: Self-Evolving Test-Time Scaling in Latent Space. ArXiv 2025. [Google Scholar]

- Zhang, G.M.; Fu, M.; Yan, S. MemGen: Weaving Generative Latent Memory for Self-Evolving Agents. ArXiv 2025. [Google Scholar]

- Anonymous. Exploratory Memory-Augmented LLM Agent via Hybrid On- and Off-Policy Optimization. Submitted to the Fourteenth International Conference on Learning Representations (under review), 2025. [Google Scholar]

- Ouyang, S.; Yan, J.; Hsu, I.H.; Chen, Y.; Jiang, K.; Wang, Z.; Han, R.; Le, L.T.; Daruki, S.; Tang, X.; et al. ReasoningBank: Scaling Agent Self-Evolving with Reasoning Memory. ArXiv 2025. abs/2509.25140. [Google Scholar]

- Zheng, Q.; Henaff, M.; Zhang, A.; Grover, A.; Amos, B. Online Intrinsic Rewards for Decision Making Agents from Large Language Model Feedback. ArXiv 2024. [Google Scholar]

- Pan, Y.; Liu, Z.; Wang, H. Wonder Wins Ways: Curiosity-Driven Exploration through Multi-Agent Contextual Calibration. ArXiv 2025. [Google Scholar]

- Sun, H.; Chai, Y.; Wang, S.; Sun, Y.; Wu, H.; Wang, H. Curiosity-Driven Reinforcement Learning from Human Feedback. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2025. [Google Scholar]

- Wei, Z.; Yao, W.; Liu, Y.; Zhang, W.; Lu, Q.; Qiu, L.; Yu, C.; Xu, P.; Zhang, C.; Yin, B.; et al. WebAgent-R1: Training Web Agents via End-to-End Multi-Turn Reinforcement Learning. ArXiv 2025. [Google Scholar]

- Ahn, M.; Brohan, A.; Brown, N.; Chebotar, Y.; Cortes, O.; David, B.; Finn, C.; Gopalakrishnan, K.; Hausman, K.; Herzog, A.; et al. Do As I Can, Not As I Say: Grounding Language in Robotic Affordances. In Proceedings of the Conference on Robot Learning, 2022. [Google Scholar]

- Wang, Z.; Wang, K.; Wang, Q.; Zhang, P.; Li, L.; Yang, Z.; Yu, K.; Nguyen, M.N.; Liu, L.; Gottlieb, E.; et al. RAGEN: Understanding Self-Evolution in LLM Agents via Multi-Turn Reinforcement Learning. ArXiv 2025. [Google Scholar]

- Qi, Z.; Liu, X.; Iong, I.L.; Lai, H.; Sun, X.; Zhao, W.; Yang, Y.; Yang, X.; Sun, J.; Yao, S.; et al. WebRL: Training LLM Web Agents via Self-Evolving Online Curriculum Reinforcement Learning. ArXiv 2025. [Google Scholar]

- Cheng, J.; Kumar, A.; Lal, R.; Rajasekaran, R.; Ramezani, H.; Khan, O.Z.; Rokhlenko, O.; Chiu-Webster, S.; Hua, G.; Amiri, H. WebATLAS: An LLM Agent with Experience-Driven Memory and Action Simulation. 2025. [Google Scholar]

- Liu, J.; Xiong, K.; Xia, P.; Zhou, Y.; Ji, H.; Feng, L.; Han, S.; Ding, M.; Yao, H. Agent0-VL: Exploring Self-Evolving Agent for Tool-Integrated Vision-Language Reasoning. 2025. [Google Scholar]

- Shi, Z.; Fang, M.; Chen, L. Monte Carlo Planning with Large Language Model for Text-Based Game Agents. ArXiv 2025, abs/2504.16855.

- Bidochko, A.; Vyklyuk, Y. Thought Management System for long-horizon, goal-driven LLM agents. Journal of Computational Science 2026, 93, 102740. [Google Scholar] [CrossRef]

- Forouzandeh, S.; Peng, W.; Moradi, P.; Yu, X.; Jalili, M. Learning Hierarchical Procedural Memory for LLM Agents through Bayesian Selection and Contrastive Refinement. 2025. [Google Scholar] [CrossRef]

- He, K.; Zhang, M.; Yan, S.; Wu, P.; Chen, Z. IDEA: Enhancing the Rule Learning Ability of Large Language Model Agent through Induction, Deduction, and Abduction. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2024. [Google Scholar]

- Fang, R.; Liang, Y.; Wang, X.; Wu, J.; Qiao, S.; Xie, P.; Huang, F.; Chen, H.; Zhang, N. Memp: Exploring Agent Procedural Memory. ArXiv 2025. abs/2508.06433. [Google Scholar]

- Latimer, C.; Boschi, N.; Neeser, A.; Bartholomew, C.; Srivastava, G.; Wang, X.; Ramakrishnan, N. Hindsight is 20/20: Building Agent Memory that Retains, Recalls, and Reflects. 2025. [Google Scholar] [CrossRef]

- Yang, Y.; Kang, S.; Lee, J.; Lee, D.; Yun, S.; Lee, K. Automated Skill Discovery for Language Agents through Exploration and Iterative Feedback. ArXiv 2025. abs/2506.04287. [Google Scholar]

- Zhang, G.; Ren, H.; Zhan, C.; Zhou, Z.; Wang, J.; Zhu, H.; Zhou, W.; Yan, S. MemEvolve: Meta-Evolution of Agent Memory Systems. 2025. [Google Scholar]

- Ding, B.; Chen, Y.; Lv, J.; Yuan, J.; Zhu, Q.; Tian, S.; Zhu, D.; Wang, F.; Deng, H.; Mi, F.; et al. Rethinking Expert Trajectory Utilization in LLM Post-training. 2025. [Google Scholar] [CrossRef]

- Chen, S.; Zhu, T.; Wang, Z.; Zhang, J.; Wang, K.; Gao, S.; Xiao, T.; Teh, Y.W.; He, J.; Li, M. Internalizing World Models via Self-Play Finetuning for Agentic RL. ArXiv 2025. abs/2510.15047. [Google Scholar]

- Chen, S.; Lin, S.; Gu, X.; Shi, Y.; Lian, H.; Yun, L.; Chen, D.; Sun, W.; Cao, L.; Wang, Q. SWE-Exp: Experience-Driven Software Issue Resolution. ArXiv 2025. [Google Scholar]

- Wei, T.; Sachdeva, N.; Coleman, B.; He, Z.; Bei, Y.; Ning, X.; Ai, M.; Li, Y.; He, J.; Chi, E.H.; et al. Evo-Memory: Benchmarking LLM Agent Test-time Learning with Self-Evolving Memory. 2025. [Google Scholar]

- Hayashi, H.; Pang, B.; Zhao, W.; Liu, Y.; Gokul, A.; Bansal, S.; Xiong, C.; Yavuz, S.; Zhou, Y. Self-Abstraction from Grounded Experience for Plan-Guided Policy Refinement. 2025. [Google Scholar]

- Wang, Z.Z.; Mao, J.; Fried, D.; Neubig, G. Agent Workflow Memory. ArXiv 2024. [Google Scholar]

- Liu, Y.; Si, C.; Narasimhan, K.R.; Yao, S. Contextual Experience Replay for Self-Improvement of Language Agents. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2025. [Google Scholar]

- Yu, S.; Li, G.; Shi, W.; Qi, P. PolySkill: Learning Generalizable Skills Through Polymorphic Abstraction. ArXiv 2025. [Google Scholar]

- Cheng, M.; Jie, O.; Yu, S.; Yan, R.; Luo, Y.; Liu, Z.; Wang, D.; Liu, Q.; Chen, E. Agent-R1: Training Powerful LLM Agents with End-to-End Reinforcement Learning. 2025. [Google Scholar]

- Luo, X.; Zhang, Y.; He, Z.; Wang, Z.; Zhao, S.; Li, D.; Qiu, L.K.; Yang, Y. Agent Lightning: Train ANY AI Agents with Reinforcement Learning. ArXiv 2025. abs/2508.03680. [Google Scholar]

- Wang, J.; Yan, Q.; Wang, Y.; Tian, Y.; Mishra, S.S.; Xu, Z.; Gandhi, M.; Xu, P.; Cheong, L.L. Reinforcement Learning for Self-Improving Agent with Skill Library. 2025. [Google Scholar] [CrossRef]

- Gharat, H.; Agrawal, H.; Patro, G.K. From Personalization to Prejudice: Bias and Discrimination in Memory-Enhanced AI Agents for Recruitment. 2025. [Google Scholar] [CrossRef]

- Wang, P.; Tian, M.; Li, J.; Liang, Y.; Wang, Y.; Chen, Q.; Wang, T.; Lu, Z.; Ma, J.; Jiang, Y.E.; et al. O-Mem: Omni Memory System for Personalized, Long Horizon, Self-Evolving Agents. 2025. [Google Scholar]

- Rasmussen, P.; Paliychuk, P.; Beauvais, T.; Ryan, J.; Chalef, D. Zep: A Temporal Knowledge Graph Architecture for Agent Memory. ArXiv 2025, abs/2501.13956.

- Tan, Z.; Yan, J.; Hsu, I.H.; Han, R.; Wang, Z.; Le, L.T.; Song, Y.; Chen, Y.; Palangi, H.; Lee, G.; et al. Prospect and Retrospect: Reflective Memory Management for Long-term Personalized Dialogue Agents. ArXiv 2025. abs/2503.08026. [Google Scholar]

- Du, X.; Li, L.; Zhang, D.; Song, L. MemR3: Memory Retrieval via Reflective Reasoning for LLM Agents. ArXiv 2025. [Google Scholar]

- Luo, H.; Morgan, N.; Li, T.; Zhao, D.; Ngo, A.V.; Schroeder, P.; Yang, L.; Ben-Kish, A.; O’Brien, J.; Glass, J.R. Beyond Context Limits: Subconscious Threads for Long-Horizon Reasoning. ArXiv 2025. [Google Scholar]

- Zhang, Y.; Shu, J.; Ma, Y.; Lin, X.; Wu, S.; Sang, J. Memory as Action: Autonomous Context Curation for Long-Horizon Agentic Tasks. arXiv 2025, arXiv:2510.12635. [Google Scholar] [CrossRef]

- Nan, J.; Ma, W.; Wu, W.; Chen, Y. Nemori: Self-Organizing Agent Memory Inspired by Cognitive Science. ArXiv 2025. [Google Scholar]

- Behrouz, A.; Zhong, P.; Mirrokni, V.S. Titans: Learning to Memorize at Test Time. ArXiv 2024. [Google Scholar]

- Wu, S.; et al. Memory in LLM-based Multi-agent Systems: Mechanisms, Challenges, and Collective Intelligence. TechRxiv 2025. [Google Scholar]

- Tran, K.T.; Dao, D.; Nguyen, M.D.; Pham, Q.V.; O’Sullivan, B.; Nguyen, H.D. Multi-Agent Collaboration Mechanisms: A Survey of LLMs. ArXiv 2025. abs/2501.06322. [Google Scholar]

- Liao, C.C.; Liao, D.; Gadiraju, S.S. AgentMaster: A Multi-Agent Conversational Framework Using A2A and MCP Protocols for Multimodal Information Retrieval and Analysis. ArXiv 2025. [Google Scholar]

- Zou, J.; Yang, X.; Qiu, R.; Li, G.; Tieu, K.; Lu, P.; Shen, K.; Tong, H.; Choi, Y.; He, J.; et al. Latent Collaboration in Multi-Agent Systems. 2025. [Google Scholar] [CrossRef]

- Yuen, S.; Medina, F.G.; Su, T.; Du, Y.; Sobey, A.J. Intrinsic Memory Agents: Heterogeneous Multi-Agent LLM Systems through Structured Contextual Memory. ArXiv 2025. abs/2508.08997. [Google Scholar]

- Rezazadeh, A.; Li, Z.; Lou, A.; Zhao, Y.; Wei, W.; Bao, Y. Collaborative Memory: Multi-User Memory Sharing in LLM Agents with Dynamic Access Control. ArXiv 2025. [Google Scholar]

- Liu, J.; Sun, Y.; Cheng, W.; Lei, H.; Chen, Y.; Wen, L.; Yang, X.; Fu, D.; Cai, P.; Deng, N.; et al. MemVerse: Multimodal Memory for Lifelong Learning Agents. 2025. [Google Scholar] [CrossRef]

- Zhou, Y.; Li, X.; Liu, Y.; Zhao, Y.; Wang, X.; Li, Z.; Tian, J.; Xu, X. M2PA: A Multi-Memory Planning Agent for Open Worlds Inspired by Cognitive Theory. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2025. [Google Scholar]

- He, X.; Zhang, Y.; Gao, S.; Li, W.; Hong, L.; Chen, M.; Jiang, K.; Fu, J.; Zhang, W. RSAgent: Learning to Reason and Act for Text-Guided Segmentation via Multi-Turn Tool Invocations. 2025. [Google Scholar]

- Feng, T.; Wang, X.; Jiang, Y.G.; Zhu, W. Embodied AI: From LLMs to World Models. ArXiv 2025, abs/2509. [Google Scholar]

- Long, X.; Zhao, Q.; Zhang, K.; Zhang, Z.; Wang, D.; Liu, Y.; Shu, Z.; Lu, Y.; Wang, S.; Wei, X.; et al. A Survey: Learning Embodied Intelligence from Physical Simulators and World Models. ArXiv 2025. abs/2507.00917. [Google Scholar]

- Tang, J.; Zhao, Y.; Zhu, K.; Xiao, G.; Kasikci, B.; Han, S. Quest: Query-Aware Sparsity for Efficient Long-Context LLM Inference. ArXiv 2024. [Google Scholar]

- Li, Z.; Song, S.; Xi, C.; Wang, H.; Tang, C.; Niu, S.; Chen, D.; Yang, J.; Li, C.; Yu, Q.; et al. MemOS: A Memory OS for AI System. ArXiv 2025. [Google Scholar]

- Anokhin, P.; Semenov, N.; Sorokin, A.Y.; Evseev, D.; Burtsev, M.; Burnaev, E. AriGraph: Learning Knowledge Graph World Models with Episodic Memory for LLM Agents. In Proceedings of the International Joint Conference on Artificial Intelligence, 2024. [Google Scholar]

- Yang, R.; Chen, J.; Zhang, Y.; Yuan, S.; Chen, A.; Richardson, K.; Xiao, Y.; Yang, D. SelfGoal: Your Language Agents Already Know How to Achieve High-level Goals. ArXiv 2024. [Google Scholar]

- Wang, Y.; Chen, X. MIRIX: Multi-Agent Memory System for LLM-Based Agents. ArXiv 2025. [Google Scholar]

- Ho, M.; Si, C.; Feng, Z.; Yu, F.; Yang, Y.; Liu, Z.; Hu, Z.; Qin, L. ArcMemo: Abstract Reasoning Composition with Lifelong LLM Memory. ArXiv 2025. abs/2509.04439. [Google Scholar]

- Fang, J.; Deng, X.; Xu, H.; Jiang, Z.; Tang, Y.; Xu, Z.; Deng, S.; Yao, Y.; Wang, M.; Qiao, S.; et al. LightMem: Lightweight and Efficient Memory-Augmented Generation. ArXiv 2025. [Google Scholar]

- Huang, X.; Chen, J.; Fei, Y.; Li, Z.; Schwaller, P.; Ceder, G. CASCADE: Cumulative Agentic Skill Creation through Autonomous Development and Evolution. 2025. [Google Scholar] [CrossRef]

- Jiang, D.; Lu, Y.; Li, Z.; Lyu, Z.; Nie, P.; Wang, H.; Su, A.; Chen, H.; Zou, K.; Du, C.; et al. VerlTool: Towards Holistic Agentic Reinforcement Learning with Tool Use. ArXiv 2025. abs/2509.01055. [Google Scholar]

- Xi, Z.; Chen, W.; Guo, X.; He, W.; Ding, Y.; Hong, B.; Zhang, M.; Wang, J.; Jin, S.; Zhou, E.; et al. The Rise and Potential of Large Language Model Based Agents: A Survey. ArXiv 2023. [Google Scholar] [CrossRef]

- Lin, B.Y.; Fu, Y.; Yang, K.; Ammanabrolu, P.; Brahman, F.; Huang, S.; Bhagavatula, C.; Choi, Y.; Ren, X. SwiftSage: A Generative Agent with Fast and Slow Thinking for Complex Interactive Tasks. ArXiv 2023. [Google Scholar]

- Zhou, W.; Jiang, Y.E.; Cui, P.; Wang, T.; Xiao, Z.; Hou, Y.; Cotterell, R.; Sachan, M. RecurrentGPT: Interactive Generation of (Arbitrarily) Long Text. ArXiv 2023. [Google Scholar]

- Wang, S.; Wei, Z.; Choi, Y.; Ren, X. Symbolic Working Memory Enhances Language Models for Complex Rule Application. ArXiv 2024. [Google Scholar]

- Zhang, M.; Press, O.; Merrill, W.; Liu, A.; Smith, N.A. How Language Model Hallucinations Can Snowball. ArXiv 2023. [Google Scholar]

- Ghasemabadi, A.; Niu, D. Can LLMs Predict Their Own Failures? Self-Awareness via Internal Circuits. 2025. [Google Scholar] [CrossRef]

- Zhang, K.; Yao, Q.; Liu, S.; Zhang, W.; Cen, M.; Zhou, Y.; Fang, W.; Zhao, Y.; Lai, B.; Song, M. Replay Failures as Successes: Sample-Efficient Reinforcement Learning for Instruction Following. 2025. [Google Scholar] [CrossRef]

- Zhou, Z.; Qu, A.; Wu, Z.; Kim, S.; Kim, S.; Prakash, A.; Rus, D.; Zhao, J.; Low, B.K.H.; Liang, P. MEM1: Learning to Synergize Memory and Reasoning for Efficient Long-Horizon Agents. ArXiv 2025. [Google Scholar]

- Li, X.; Jiao, W.; Jin, J.; Dong, G.; Jin, J.; Wang, Y.; Wang, H.; Zhu, Y.; Wen, J.R.; Lu, Y.; et al. DeepAgent: A General Reasoning Agent with Scalable Toolsets. ArXiv 2025. [Google Scholar]

- Yin, Z.; Sun, Q.; Guo, Q.; Zeng, Z.; Cheng, Q.; Qiu, X.; Huang, X. Explicit Memory Learning with Expectation Maximization. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2024. [Google Scholar]

- Renze, M.; Guven, E. Self-Reflection in Large Language Model Agents: Effects on Problem-Solving Performance. In Proceedings of the 2024 2nd International Conference on Foundation and Large Language Models (FLLM); 2024; pp. 516–525. [Google Scholar]

- Hong, C.; He, Q. Enhancing memory retrieval in generative agents through LLM-trained cross attention networks. Frontiers in Psychology 2025, 16. [Google Scholar] [CrossRef]

- Zhu, K.; Liu, Z.; Li, B.; Tian, M.; Yang, Y.; Zhang, J.; Han, P.; Xie, Q.; Cui, F.; Zhang, W.; et al. Where LLM Agents Fail and How They can Learn From Failures. ArXiv 2025. abs/2509.25370. [Google Scholar]

- Fu, D.; He, K.; Wang, Y.; Hong, W.; Gongque, Z.; Zeng, W.; Wang, W.; Wang, J.; Cai, X.; Xu, W. AgentRefine: Enhancing Agent Generalization through Refinement Tuning. ArXiv 2025. abs/2501.01702. [Google Scholar]

- Suzgun, M.; Yüksekgönül, M.; Bianchi, F.; Jurafsky, D.; Zou, J. Dynamic Cheatsheet: Test-Time Learning with Adaptive Memory. ArXiv 2025. abs/2504.07952. [Google Scholar]

- Xu, H.; Hu, J.; Zhang, K.; Yu, L.; Tang, Y.; Song, X.; Duan, Y.; Ai, L.; Shi, B. SEDM: Scalable Self-Evolving Distributed Memory for Agents. ArXiv 2025. [Google Scholar]

- Liu, J.; Wang, Z.; Huang, X.; Li, Y.; Fan, X.; Li, X.; Guo, C.; Sarikaya, R.; Ji, H. Analyzing and Internalizing Complex Policy Documents for LLM Agents. ArXiv 2025, abs/2510.11588.

- Lyu, Y.; Wang, C.; Huang, J.; Xu, T. From Correction to Mastery: Reinforced Distillation of Large Language Model Agents. ArXiv 2025. [Google Scholar]

- Feng, L.; Xue, Z.; Liu, T.; An, B. Group-in-Group Policy Optimization for LLM Agent Training. ArXiv 2025, abs/2505.10978.

- Hsieh, C.P.; Sun, S.; Kriman, S.; Acharya, S.; Rekesh, D.; Jia, F.; Ginsburg, B. RULER: What’s the Real Context Size of Your Long-Context Language Models? ArXiv 2024.

- Kuratov, Y.; Bulatov, A.; Anokhin, P.; Rodkin, I.; Sorokin, D.; Sorokin, A.Y.; Burtsev, M. BABILong: Testing the Limits of LLMs with Long Context Reasoning-in-a-Haystack. ArXiv 2024.

- Yen, H.; Gao, T.; Hou, M.; Ding, K.; Fleischer, D.; Izsak, P.; Wasserblat, M.; Chen, D. HELMET: How to Evaluate Long-Context Language Models Effectively and Thoroughly. ArXiv 2024.

- Wang, H.; Shi, H.; Tan, S.; Qin, W.; Wang, W.; Zhang, T.; Nambi, A.U.; Ganu, T.; Wang, H. Multimodal Needle in a Haystack: Benchmarking Long-Context Capability of Multimodal Large Language Models. In Proceedings of the North American Chapter of the Association for Computational Linguistics, 2024.

- Tavakoli, M.; Salemi, A.; Ye, C.; Abdalla, M.; Zamani, H.; Mitchell, J.R. Beyond a Million Tokens: Benchmarking and Enhancing Long-Term Memory in LLMs. ArXiv 2025.

- Maharana, A.; Lee, D.H.; Tulyakov, S.; Bansal, M.; Barbieri, F.; Fang, Y. Evaluating Very Long-Term Conversational Memory of LLM Agents. ArXiv 2024.

- Jia, Z.; Liu, Q.; Li, H.; Chen, Y.; Liu, J. Evaluating the Long-Term Memory of Large Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2025; Vienna, Austria, Che, W., Nabende, J., Shutova, E., Pilehvar, M.T., Eds.; 2025; pp. 19759–19777.

- Zhang, Z.; Zhang, Y.; Tan, H.; Li, R.; Chen, X. Explicit v.s. Implicit Memory: Exploring Multi-hop Complex Reasoning Over Personalized Information. ArXiv 2025.

- Yang, Z.; Qi, P.; Zhang, S.; Bengio, Y.; Cohen, W.W.; Salakhutdinov, R.; Manning, C.D. HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 2018.

- He, J.; Zhu, L.; Wang, R.; Wang, X.; Haffari, G.; Zhang, J. MADial-Bench: Towards Real-world Evaluation of Memory-Augmented Dialogue Generation. In Proceedings of the North American Chapter of the Association for Computational Linguistics, 2024.

- Xia, M.; Ruehle, V.; Rajmohan, S.; Shokri, R. Minerva: A Programmable Memory Test Benchmark for Language Models. ArXiv 2025. abs/2502.03358.

- Chen, D.; Niu, S.; Li, K.; Liu, P.; Zheng, X.; Tang, B.; Li, X.; Xiong, F.; Li, Z. HaluMem: Evaluating Hallucinations in Memory Systems of Agents. ArXiv 2025. abs/2511.03506.

- Jiang, B.; Yuan, Y.; Shen, M.; Hao, Z.; Xu, Z.; Chen, Z.; Liu, Z.; Vijjini, A.R.; He, J.; Yu, H.; et al. PersonaMem-v2: Towards Personalized Intelligence via Learning Implicit User Personas and Agentic Memory. 2025.

- Huang, Z.; Dai, Q.; Wu, G.; Wu, X.; Chen, K.; Yu, C.; Li, X.; Ge, T.; Wang, W.; Jin, Q. Mem-PAL: Towards Memory-based Personalized Dialogue Assistants for Long-term User-Agent Interaction. 2025.

- Du, Y.; Wang, H.; Zhao, Z.; Liang, B.; Wang, B.; Zhong, W.; Wang, Z.; Wong, K.F. PerLTQA: A Personal Long-Term Memory Dataset for Memory Classification, Retrieval, and Synthesis in Question Answering. ArXiv 2024.

- Zhao, S.; Hong, M.; Liu, Y.; Hazarika, D.; Lin, K. Do LLMs Recognize Your Preferences? Evaluating Personalized Preference Following in LLMs. ArXiv 2025. abs/2502.09597.

- Yuan, R.; Sun, S.; Wang, Z.; Cao, Z.; Li, W. Personalized Large Language Model Assistant with Evolving Conditional Memory. In Proceedings of the International Conference on Computational Linguistics, 2023.

- Li, X.; Bantupalli, J.; Dharmani, R.; Zhang, Y.; Shang, J. Toward Multi-Session Personalized Conversation: A Large-Scale Dataset and Hierarchical Tree Framework for Implicit Reasoning. ArXiv 2025.

- Tsaknakis, I.C.; Song, B.; Gan, S.; Kang, D.; García, A.; Liu, G.; Fleming, C.; Hong, M. Do LLMs Recognize Your Latent Preferences? A Benchmark for Latent Information Discovery in Personalized Interaction. ArXiv 2025.

- Kim, E.; Park, C.; Chang, B. SHARE: Shared Memory-Aware Open-Domain Long-Term Dialogue Dataset Constructed from Movie Script. ArXiv 2024.

- Hu, Y.; Wang, Y.; McAuley, J. Evaluating Memory in LLM Agents via Incremental Multi-Turn Interactions. ArXiv 2025.

- Wan, L.; Ma, W. StoryBench: A Dynamic Benchmark for Evaluating Long-Term Memory with Multi Turns. ArXiv 2025.

- Deng, Y.; Zhang, X.; Zhang, W.; Yuan, Y.; Ng, S.K.; Chua, T.S. On the Multi-turn Instruction Following for Conversational Web Agents. In Proceedings of the Annual Meeting of the Association for Computational Linguistics, 2024.

- Miyai, A.; Zhao, Z.; Egashira, K.; Sato, A.; Sunada, T.; Onohara, S.; Yamanishi, H.; Toyooka, M.; Nishina, K.; Maeda, R.; et al. WebChoreArena: Evaluating Web Browsing Agents on Realistic Tedious Web Tasks. ArXiv 2025. abs/2506.01952.

- Wu, C.K.; Tam, Z.R.; Lin, C.Y.; Chen, Y.N.; Lee, H.Y. StreamBench: Towards Benchmarking Continuous Improvement of Language Agents. ArXiv 2024. abs/2406.08747.

- Ai, Q.; Tang, Y.; Wang, C.; Long, J.; Su, W.; Liu, Y. MemoryBench: A Benchmark for Memory and Continual Learning in LLM Systems. ArXiv 2025. abs/2510.17281.

- Zheng, J.; Cai, X.; Li, Q.; Zhang, D.; Li, Z.; Zhang, Y.; Song, L.; Ma, Q. LifelongAgentBench: Evaluating LLM Agents as Lifelong Learners. ArXiv 2025.

- Bai, Y.; Lv, X.; Zhang, J.; Lyu, H.; Tang, J.; Huang, Z.; Du, Z.; Liu, X.; Zeng, A.; Hou, L.; et al. LongBench: A Bilingual, Multitask Benchmark for Long Context Understanding. ArXiv 2023.

- Bai, Y.; Tu, S.; Zhang, J.; Peng, H.; Wang, X.; Lv, X.; Cao, S.; Xu, J.; Hou, L.; Dong, Y.; et al. LongBench v2: Towards Deeper Understanding and Reasoning on Realistic Long-context Multitasks. ArXiv 2024.

- Kim, J.; Chay, W.; Hwang, H.; Kyung, D.; Chung, H.; Cho, E.; Jo, Y.; Choi, E. DialSim: A Real-Time Simulator for Evaluating Long-Term Dialogue Understanding of Conversational Agents. ArXiv 2024.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).