Submitted:

07 January 2026

Posted:

08 January 2026

Abstract

Keywords:

1. Introduction

- To identify the needs, expectations, challenges, and perspectives of key stakeholders—quality engineers, production managers, suppliers, auditors/regulators, and customers—regarding the integration of generative AI into QA and auditing activities.

- To propose practical measures and guidelines for the responsible use of generative AI in regulated QM contexts, including human-in-the-loop approvals, data governance, traceability, and alignment with recognized quality standards.

- To design a conceptual framework that enables systematic, function-sensitive integration of generative AI across supplier performance, in-process control, and post-market feedback, structured along the Plan–Execute–Improve cycle.

- To validate the proposed framework in practice across the three core QM functions using process-quality thresholds and AI-governance controls.

- To provide role-specific recommendations for different stakeholder groups on the adoption, governance, and oversight of generative AI in QA and auditing.

2. State-of-the-Art: Generative AI Tools and Their Applications for Manufacturing Quality Assurance

2.1. Generative AI in Quality Assurance Contexts

2.2. Key Quality Metrics, Indices, Models and Standards in Manufacturing Enterprises

- First Pass Yield (FPY)—measures the percentage of products manufactured correctly without rework [14].

- Process Capability Indices (Cp/Cpk)—reflect how well a process meets specification limits [18].

- Overall Equipment Effectiveness (OEE)—evaluates availability, performance, and quality effectiveness of equipment [19].

- Cost of Poor Quality (CoPQ)—aggregates costs from scrap, rework, and warranty claims [20].

- Six Sigma—uses the DMAIC (Define–Measure–Analyse–Improve–Control) framework to reduce defects. AI and Machine Learning (ML) methods increasingly support analysis and diagnosis [23].

- Lean and Lean Six Sigma (LSS)—applies tools such as 5S, value stream mapping, and error-proofing (poka-yoke) to reduce waste and variability [24].

- Statistical Process Control (SPC)—maintains stability via control charts; ML-enhanced SPC supports dynamic anomaly detection [25].

- Failure Mode and Effects Analysis (FMEA)—a core tool for proactive risk management; generative AI can support failure identification and action prioritization [26].

- Total Quality Management (TQM) and Root Cause Analysis (RCA)—long-established approaches that are increasingly augmented by digital analytics and data-driven systems [27].
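The metrics listed above follow standard textbook definitions, which can be sketched in a few lines. The example below is a minimal illustration and not the authors' implementation: the function names and the sample measurements are hypothetical, Cp and Cpk are estimated from the sample mean and sample standard deviation, and CoPQ is reduced to the three cost components named in the list.

```python
from statistics import mean, stdev


def first_pass_yield(units_started: int, units_good_first_time: int) -> float:
    """FPY: share of units completed correctly without rework."""
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    return units_good_first_time / units_started


def process_capability(samples, lsl: float, usl: float):
    """Return (Cp, Cpk) estimated from sample mean and sample std deviation."""
    mu = mean(samples)
    sigma = stdev(samples)
    if sigma == 0:
        raise ValueError("samples show no variation; Cp/Cpk are undefined")
    cp = (usl - lsl) / (6 * sigma)                 # potential capability
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)    # penalizes off-center processes
    return cp, cpk


def oee(availability: float, performance: float, quality: float) -> float:
    """OEE as the product of its three factors, each expressed in [0, 1]."""
    return availability * performance * quality


def cost_of_poor_quality(scrap: float, rework: float, warranty: float) -> float:
    """CoPQ as a simple sum of the cost components named in the text."""
    return scrap + rework + warranty


if __name__ == "__main__":
    # Hypothetical measurements of a 10.0 mm feature with limits 9.9 to 10.1 mm.
    diameters = [9.98, 10.02, 10.00, 10.01, 9.99]
    cp, cpk = process_capability(diameters, lsl=9.9, usl=10.1)
    print(f"FPY  = {first_pass_yield(1000, 950):.1%}")
    print(f"Cp   = {cp:.2f}, Cpk = {cpk:.2f}")
    print(f"OEE  = {oee(0.90, 0.95, 0.98):.1%}")
    print(f"CoPQ = {cost_of_poor_quality(1200.0, 800.0, 450.0):.2f}")
```

Because Cpk takes the smaller of the two one-sided margins, it never exceeds Cp; the two coincide only when the process is centered between the specification limits.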

2.3. Taxonomy of Generative AI Tools for Quality Assurance in Manufacturing Companies

- Authoring and compliance documentation tools (document assistants; CAPA narrators) support structured QMS records and reporting. Because these outputs can affect compliance, human-in-the-loop review remains essential to confirm accuracy, traceability, and standards alignment.

- Supplier-facing tools (generative AI-enabled supplier QA portals) improve submission consistency and first-time-right rates by guiding suppliers through required evidence and checks. Their deployment requires strong validation and data-protection controls due to proprietary supplier inputs.

- Operational guidance tools (interactive inspection agents; anomaly explainers) assist frontline personnel with procedure retrieval, deviation interpretation, and context-aware troubleshooting. Their effectiveness depends on reliable integration, low latency, and user trust calibrated by clear guardrails.
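The human-in-the-loop requirement described for these tool categories can be illustrated as a simple release gate. The sketch below is a hypothetical data model, not a feature of any specific eQMS product: the class `AIDraft`, the functions `approve` and `release`, and the rule that a draft must cite at least one approved source are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIDraft:
    """An AI-generated QMS document awaiting human review (hypothetical model)."""
    doc_id: str
    text: str
    sources: List[str] = field(default_factory=list)  # IDs of approved source records
    approved_by: Optional[str] = None


def approve(draft: AIDraft, reviewer: str) -> AIDraft:
    """Record a human sign-off; refuse drafts that cite no approved source."""
    if not draft.sources:
        raise ValueError("draft cites no approved sources and cannot be approved")
    draft.approved_by = reviewer
    return draft


def release(draft: AIDraft) -> str:
    """Release a draft only after a named human reviewer has approved it."""
    if draft.approved_by is None:
        raise PermissionError("human sign-off is required before release")
    return f"{draft.doc_id} released (approved by {draft.approved_by})"


if __name__ == "__main__":
    draft = AIDraft(doc_id="CP-004", text="Draft control plan", sources=["SPEC-17"])
    try:
        release(draft)  # blocked: no reviewer has signed off yet
    except PermissionError as err:
        print("Blocked:", err)
    approve(draft, reviewer="quality.engineer")
    print(release(draft))
```

Keeping approval and release as separate steps means the audit trail can record who signed off independently of when the document was published.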

3. Related Work

3.1. Applications of Generative AI Tools for Quality Assurance in Manufacturing Companies

- QMS/MES/PLM-embedded copilots (controlled drafting and evidence linkage): Copilots embedded in eQMS, MES, and PLM can generate initial drafts of PFMEA sections, control-plan updates, PPAP summaries, and work instructions by grounding on CTQs, specifications, SPC/MSA results, and historical NCR/CAPA records. Reported benefits include shorter revision cycles and more consistent documentation, while key controls include enforced citations to approved sources, versioned audit trails, and human sign-off prior to release [42].

- Vision–language assistants for automated optical inspection (AOI) and in-process diagnostics: Multimodal models can interpret AOI imagery (or line-camera outputs) together with SPC signals to produce operator-facing explanations (e.g., a defect pattern combined with a chart shift that suggests paste-volume instability). Evidence from industrial anomaly-detection research, including recent surveys and diffusion-based methods, indicates substantial gains in image-based detection performance; in QA settings, these models are typically positioned as advisory and require MSA-aligned validation and periodic requalification [43,44].

- Retrieval-augmented generation (RAG) systems: These systems answer free-text questions (e.g., about reaction plans after a rule violation) by retrieving approved work instructions, control plans, and customer standards, then generating responses with explicit citations. Recent smart-manufacturing studies show that hybrid RAG designs, which combine metadata/knowledge-graph structure with vector retrieval, improve precision and traceability, making them suitable for controlled QA knowledge support [45].

- Supplier quality and incoming-inspection copilots: Assistants can summarize supplier defect histories (e.g., NCR patterns and PPM trends), flag documentation gaps, and propose sampling adjustments based on risk indicators. While these functions combine conventional analytics with text generation, governance typically requires that dispositions remain under Supplier Quality Engineer responsibility, supported by access control and traceable rationales [42].

- CAPA and audit-evidence assemblers: Generative tools can draft 8D narratives, map containment/correction/prevention actions to PFMEA causes, and compile evidence packs (logs, test results, photos) using templates and schema validation with explicit role approvals. Related conformance-checking and process-mining research demonstrates how event logs can be transformed into objective audit evidence and KPIs for audit readiness, providing structures that generative systems can leverage to improve documentation completeness and speed [46].

- Metrology and laboratory reporting aides (ISO/IEC 17025 contexts): In testing and calibration laboratories, assistants can support drafting of method descriptions, uncertainty narratives, and result interpretation summaries. However, these outputs must remain consistent with validated methods, documented uncertainty budgets, impartiality requirements, and authorized sign-off practices expected in accredited environments [47].

- Simulation and digital-twin scenario generators: By coupling process models with generative AI, organizations can explore “what-if” scenarios for inspection planning (e.g., changing sampling regimes) and generate stress-test cases before releasing process changes. Recent work also shows LLM-enabled digital-twin approaches that learn temporal features from production data, supporting rapid hypothesis testing, confirmation runs, and evidence-backed parameter updates [41].
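The retrieval step of the RAG pattern described in this list can be sketched with a toy example. The code below is a simplified stand-in, not the hybrid design reported in [45]: it replaces knowledge-graph structure with a plain metadata filter on document status, replaces learned embeddings with bag-of-words cosine similarity, and stubs the generation step by quoting the retrieved text with its citation. The document IDs and contents are hypothetical.

```python
import re
from collections import Counter
from math import sqrt

# Hypothetical controlled documents; only "approved" ones may be cited.
DOCS = [
    {"id": "WI-012 rev C", "status": "approved",
     "text": "Reaction plan: if a control chart rule is violated, stop the line, "
             "quarantine suspect lots and notify the quality engineer."},
    {"id": "CP-004 rev B", "status": "approved",
     "text": "Control plan for solder paste printing: inspect paste volume "
             "with SPI on every board."},
    {"id": "WI-099 draft", "status": "draft",
     "text": "Draft reaction plan: operators may continue production after "
             "a control chart rule violation."},
]


def tokens(text: str) -> Counter:
    """Lower-cased bag of words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bags of words."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs, k: int = 1):
    """Metadata filter first (approved only), then rank by text similarity."""
    query = tokens(question)
    approved = [d for d in docs if d["status"] == "approved"]
    ranked = sorted(approved, key=lambda d: cosine(query, tokens(d["text"])),
                    reverse=True)
    return ranked[:k]


def answer_with_citations(question: str, docs, k: int = 1) -> str:
    """Stub for the generation step: quote each source with an explicit citation."""
    hits = retrieve(question, docs, k)
    if not hits:
        return "No approved source found."
    return " ".join(f'{d["text"]} [{d["id"]}]' for d in hits)


if __name__ == "__main__":
    q = "What is the reaction plan after a control chart rule violation?"
    print(answer_with_citations(q, DOCS))
```

Filtering on approval status before ranking ensures that an unapproved draft can never be cited, even when its wording matches the question closely.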

3.2. Frameworks for Application of Generative AI in Manufacturing QA

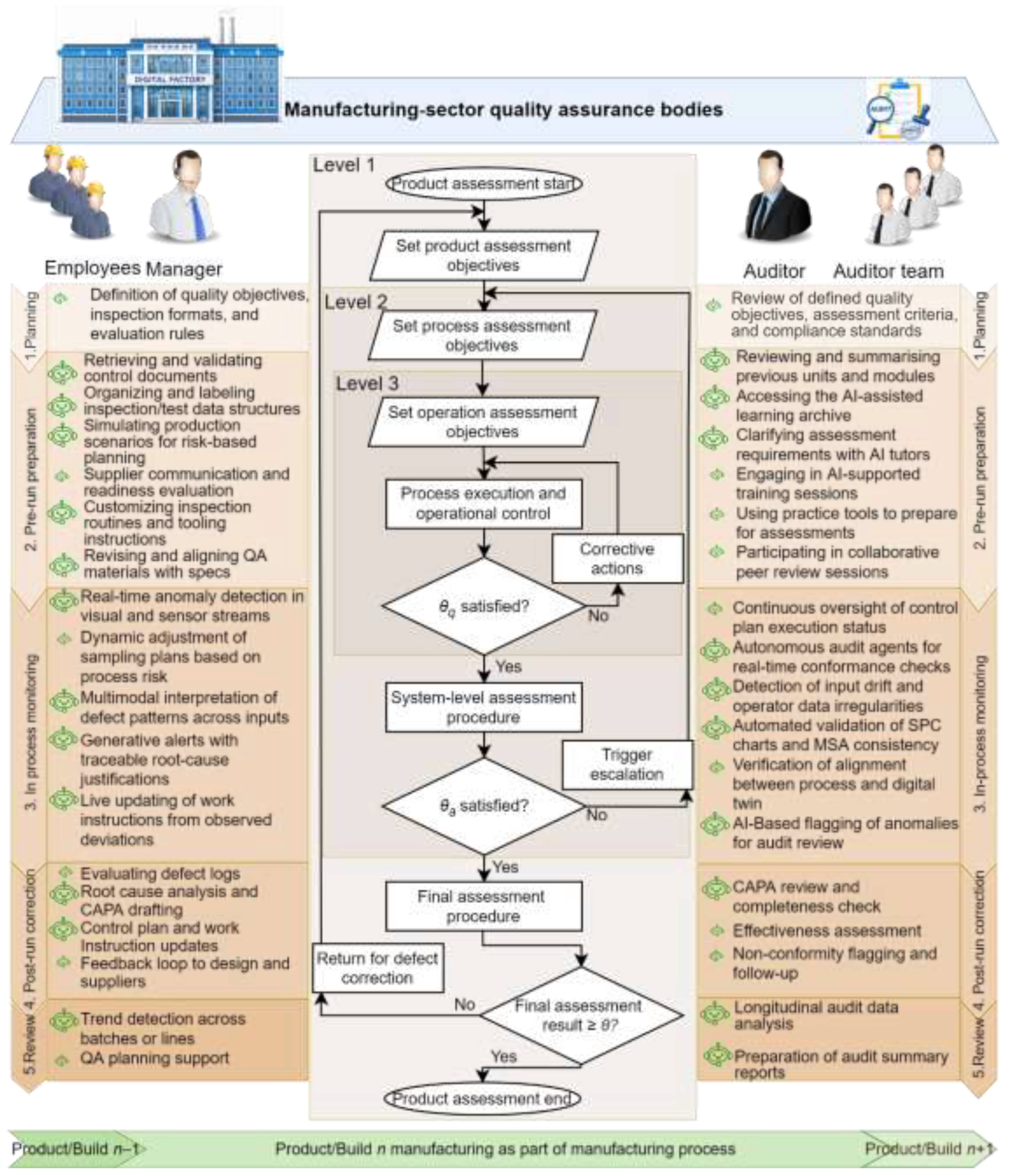

4. Framework for Generative AI-Supported Quality Assurance in Manufacturing Enterprises

5. Validation of the Proposed Generative AI-Based Quality Assurance Framework

5.1. Case Study: NPI Quality Planning for Smart Thermostat ST-200

5.2. Expert Team Solution

5.3. GPT-5-Thinking Solution (Generative AI-Driven Planning)

5.4. Comparative Evaluation

5.5. Discussion

6. Conclusions and Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| 8D | Eight Disciplines Problem Solving |

| AOI | Automated Optical Inspection |

| APQP | Advanced Product Quality Planning |

| BOM | Bill of Materials |

| CAPA | Corrective and Preventive Actions |

| CoPQ | Cost of Poor Quality |

| Cp | Process Potential Capability Index |

| Cpk | Process capability index |

| CRC | Cyclic Redundancy Check |

| CTQ | Critical-to-Quality |

| DC | Direct Current |

| DMAIC | Define-Measure-Analyse-Improve-Control |

| DOE | Design of Experiments |

| DPMO | Defects per Million Opportunities |

| DPU | Defects per Unit |

| FMEA | Failure Mode and Effects Analysis |

| FPY | First Pass Yield |

| FT | Functional Test |

| GRR | Gauge Repeatability & Reproducibility |

| IPC | Acceptability Standard for Electronic Devices |

| LIMS | Laboratory Information Management System |

| LQC | Lean Quality Control |

| LSS | Lean Six Sigma |

| MSA | Measurement System Analysis |

| NCR | Non-Conformance Report |

| NPI | New Product Introduction |

| OEE | Overall Equipment Effectiveness |

| OEM | Original Equipment Manufacturer |

| PDCA | Plan-Do-Check-Act |

| PFMEA | Process Failure Mode and Effects Analysis |

| PLM | Product Lifecycle Management |

| PMIC | Power Management Integrated Circuit |

| PPAP | Production Part Approval Process |

| PPM | Parts Per Million |

| QA | Quality Assurance |

| QM | Quality Management |

| QMS | Quality Management System |

| RCA | Root Cause Analysis |

| SMT | Surface-Mount Technology |

| SPC | Statistical Process Control |

| S/O/D/RPN | Severity/Occurrence/Detection/Risk Priority Number |

| SOPs | Standard Operating Procedures |

| SPI | Solder Paste Inspection |

| SQE | Supplier Quality Engineer |

| SQM | Supplier Quality Management |

| TQM | Total Quality Management |

| V&V | Verification & Validation |

| XAI | Explainable AI |

References

- Escobar, C.A.; McGovern, M.E.; Morales-Menendez, R. Quality 4.0: A review of big data challenges in manufacturing. J. Intell. Manuf. 2021, 32, 2319–2334.

- Mahin, M.; Kadasah, N.; Alsabban, A.; Albliwi, S. Exploring the landscape of Quality 4.0: A comprehensive review of its benefits, challenges, and critical success factors. Prod. Manuf. Res. 2024, 12, 2373739.

- Khinvasara, T.; Ness, S.; Shankar, A. Leveraging AI for enhanced quality assurance in medical device manufacturing. Asian J. Res. Comput. Sci. 2024, 17, 13–35.

- Li, Y.; Zhao, H.; Jiang, H.; Pan, Y.; Liu, Z.; Wu, Z.; Shu, P.; Tian, J.; Yang, T.; Xu, S. Large language models for manufacturing. arXiv 2024, arXiv:2410.21418.

- Mokander, J.; Sheth, M.; Gersbro-Sundler, M.; Blomgren, P.; Floridi, L. Challenges and best practices in corporate AI governance: Lessons from the biopharmaceutical industry. Front. Comput. Sci. 2022, 4, 1068361.

- Chhetri, K.B. Applications of artificial intelligence and machine learning in food quality control and safety assessment. Food Eng. Rev. 2024, 16, 1–21.

- National Institute of Standards and Technology (NIST). Artificial Intelligence Risk Management Framework (AI RMF 1.0) (NIST AI 100-1); U.S. Department of Commerce: Gaithersburg, MD, USA, 2023.

- ISO/IEC 42001:2023; Information Technology—Artificial Intelligence—Management System. International Organization for Standardization and International Electrotechnical Commission: Geneva, Switzerland, 2023. Available online: https://www.iso.org/standard/81230.html (accessed on 1 January 2026).

- European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Off. J. Eur. Union OJ L 2024, 2024/1689, 12.7.2024. Available online: https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng (accessed on 1 January 2026).

- Cassoli, B.B.; Jourdan, N.; Nguyen, P.H.; Sen, S.; Garcia-Ceja, E.; Metternich, J. Frameworks for data-driven quality management in cyber-physical systems for manufacturing: A systematic review. Procedia CIRP 2022, 112, 567–572.

- Ejjami, R.; Boussalham, K. Industry 5.0 in manufacturing: Enhancing resilience and responsibility through AI-driven predictive maintenance, quality control, and supply chain optimization. Int. J. Multidiscip. Res. 2024, 6, 1–31.

- NIST AI. Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile (NIST AI 600-1); National Institute of Standards and Technology: Gaithersburg, MD, USA, 2024.

- Verma, H.; Padh, K.; Thelisson, E. Can AI be Auditable? arXiv 2025, arXiv:2509.00575.

- Raj Mohan, R.; Thiruppathi, K.; Venkatraman, R.; Raghuraman, S. Quality improvement through first pass yield using statistical process control approach. J. Appl. Sci. 2012, 12, 985–991.

- Verna, E.; Genta, G.; Galetto, M.; Franceschini, F. Defects-per-unit control chart for assembled products based on defect prediction models. Int. J. Adv. Manuf. Technol. 2022, 119, 2835–2846.

- Coskun, A.; Serteser, M.; Unsal, I. Sigma metric revisited: True known mistakes. Biochem. Med. 2019, 29, 010902.

- Steiner, S.H.; MacKay, R.J. Effective monitoring of processes with parts per million defective: A hard problem! In Frontiers in Statistical Quality Control 7; Lenz, H.J., Wilrich, P., Eds.; Physica-Verlag: Heidelberg, Germany, 2004; pp. 140–149.

- ISO 22514-2:2017; Statistical Methods in Process Management—Capability and Performance—Part 2: Process Capability and Performance Measures. International Organization for Standardization: Geneva, Switzerland, 2017. Available online: https://www.iso.org/standard/71617.html (accessed on 31 August 2025).

- Muchiri, P.; Pintelon, L. Performance measurement using overall equipment effectiveness (OEE): Literature review and practical application discussion. Int. J. Prod. Res. 2008, 46, 3517–3535.

- Schiffauerova, A.; Thomson, V. A review of research on cost of quality models and best practices. Int. J. Qual. Reliab. Manag. 2006, 23, 647–669.

- Isack, H.D.; Mutingi, M.; Kandjeke, H.; Vashishth, A.; Chakraborty, A. Exploring the adoption of Lean principles in medical laboratory industry: Empirical evidences from Namibia. Int. J. Lean Six Sigma 2018, 9, 133–155.

- Gueorguiev, T. An approach to integrate artificial intelligence in ISO 9001-based quality management systems. Meas. Sens. 2025, 38, 101787.

- Sood, A.C.; Dhull, K.S. The future of Six Sigma—Integrating AI for continuous improvement. Int. J. Innov. Res. Eng. Manag. 2024, 11, 8–15.

- Abdullah, R.; Lee, H.K.; Abdul Rasib, A.H.; Mansoor, H.O. Lean Six Sigma framework to improve the assembly process at a printer manufacturing company. J. Adv. Manuf. Technol. 2023, 17, 33–46.

- Tanuska, P.; Spendra, L.; Kebisek, M.; Duris, R.; Stremy, M. Smart anomaly detection and prediction for assembly process maintenance in compliance with Industry 4.0. Sensors 2021, 21, 2376.

- El Hassani, I.; Masrour, T.; Kourouma, N.; Tavcar, J. AI-driven FMEA: Integration of large language models for faster and more accurate risk analysis. Des. Sci. 2025, 11, e10.

- Liu, H.C.; Liu, R.; Gu, X.; Yang, M. From total quality management to Quality 4.0: A systematic literature review and future research agenda. Front. Eng. Manag. 2023, 10, 191–205.

- ISO 9001:2015; Quality Management Systems—Requirements. International Organization for Standardization: Geneva, Switzerland, 2015. Available online: https://www.iso.org/standard/62085.html (accessed on 1 January 2026).

- IATF 16949:2016; Quality Management System Requirements for Automotive Production and Relevant Service Parts Organizations. International Automotive Task Force: Southfield, MI, USA, 2016. Available online: https://www.iatfglobaloversight.org/iatf-169492016/about (accessed on 1 January 2026).

- Doshi, J.A.; Desai, D. Overview of automotive core tools: Applications and benefits. J. Inst. Eng. India Ser. C 2017, 98, 515–526.

- ISO/IEC 17025:2017; General Requirements for the Competence of Testing and Calibration Laboratories. International Organization for Standardization and International Electrotechnical Commission: Geneva, Switzerland, 2017. Available online: https://www.iso.org/standard/66912.html (accessed on 1 January 2026).

- ISO 10012:2003; Measurement Management Systems—Requirements for Measurement Processes and Measuring Equipment. International Organization for Standardization: Geneva, Switzerland, 2003. Available online: https://www.iso.org/standard/26033.html (accessed on 1 January 2026).

- ISO 19011:2018; Guidelines for Auditing Management Systems. International Organization for Standardization: Geneva, Switzerland, 2018. Available online: https://www.iso.org/standard/70017.html (accessed on 1 January 2026).

- Arunagiri, T.; Kannaiah, K.P.; Vasanthan, M. Enhancing pharmaceutical product quality with a comprehensive corrective and preventive actions (CAPA) framework: From reactive to proactive. Cureus 2024, 16, e69762.

- Holloway, S. The role of natural language processing in streamlining supply chain communication. Preprints 2024, 2024112303.

- Scarton, G.; Formentini, M.; Romano, P. Automating quality control through an expert system. Electron. Mark. 2025, 35, 14.

- Friday, S.C.; Lawal, C.I.; Ayodeji, D.C.; Sobowale, A. Reviewing the effectiveness of digital audit tools in enhancing corporate transparency. Int. J. Adv. Multidiscip. Res. Stud. 2024, 6, 1679–1689.

- Kluska, R.A.; Rocha Loures, E.; Deschamps, F.; Camilotti, L.; Zanetti Freire, R.; Rotondo, R. Intelligent dashboard for asset management and maintenance with generative AI: A case study in maintenance engineering. In Intelligent Production and Industry 5.0 with Human Touch, Resilience, and Circular Economy; Lecture Notes in Production Engineering; Sormaz, D.N., Bidanda, B., Alhawari, O., Geng, Z., Eds.; Springer: Cham, Switzerland, 2025.

- Moosavi, S.; Farajzadeh-Zanjani, M.; Razavi-Far, R.; Palade, V.; Saif, M. Explainable AI in manufacturing and industrial cyber-physical systems: A survey. Electronics 2024, 13, 3497.

- Tercan, H.; Meisen, T. Machine learning and deep learning based predictive quality in manufacturing: A systematic review. J. Intell. Manuf. 2022, 33, 1879–1905.

- Sun, Y.; Zhang, Q.; Bao, J.; Lu, Y.; Liu, S. Empowering digital twins with large language models for global temporal feature learning. J. Manuf. Syst. 2024, 74, 83–99.

- Gao, R.X.; Kruger, J.; Merklein, M.; Kitzig-Frank, H.; Vancza, J. Artificial intelligence in manufacturing: State of the art, perspectives, and future directions. CIRP Ann.—Manuf. Technol. 2024, 73, 723–749.

- Liu, J.; Xie, G.; Wang, J.; Li, S.; Wang, C.; Zheng, F.; Jin, Y. Deep industrial image anomaly detection: A survey. Mach. Intell. Res. 2024, 21, 104–135.

- Xu, H.; Xu, S.; Yang, W. Unsupervised industrial anomaly detection with diffusion models. J. Vis. Commun. Image Represent. 2023, 97, 103983.

- Wan, Y.; Chen, Z.; Liu, Y.; Chen, C.; Packianather, M. Empowering LLMs by hybrid retrieval-augmented generation for domain-centric Q&A in smart manufacturing. Adv. Eng. Inform. 2025, 65, 103212.

- Jans, M.; Alles, M.G.; Vasarhelyi, M.A. A field study on the use of process mining of event logs as an analytical procedure in auditing. Account. Rev. 2014, 89, 1751–1773.

- Rab, S.; Wan, M.; Sharma, R.K.; Kumar, L.; Zafer, A.; Saeed, K.; Yadav, S. Digital avatar of metrology. Mapan 2023, 38, 561–568.

- Picard, C.; Cozot, R.; Boissieux, L.; Segovia, B. Evaluating vision-language models for engineering design (including manufacturing and inspection tasks). Artif. Intell. Rev. 2025, 58, 288.

- Rydzi, S.; Zahradnikova, B.; Sutova, Z.; Ravas, M.; Hornacek, D.; Tanuska, P. A predictive quality inspection framework for the manufacturing process in the context of Industry 4.0. Sensors 2024, 24, 5644.

- Nguyen, H.T.T.; Nguyen, L.P.T.; Cao, H. XEdgeAI: A human-centered industrial inspection framework with data-centric explainable edge AI approach. Inf. Fusion 2025, 116, 102782.

- Lin, Y.Z.; Shi, Q.; Yang, Z.; Latibari, B.S.; Shao, S.; Salehi, S.; Satam, P. DDD-gendt: Dynamic data-driven generative digital twin framework. IEEE Trans. Artif. Intell. 2025, 1, 1–15.

- Shafiee, S. Generative AI in manufacturing: A literature review of recent applications and future prospects. Procedia CIRP 2025, 132, 1–6.

- Thomas, D. Revolutionizing failure modes and effects analysis with ChatGPT: Unleashing the power of AI language models. J. Fail. Anal. Prev. 2023, 23, 911–913.

- Alsaif, K.M.; Albeshri, A.A.; Khemakhem, M.A.; Eassa, F.E. Multimodal large language model-based fault detection and diagnosis in context of Industry 4.0. Electronics 2024, 13, 4912.

- Wang, T.; Zhang, B.; Jiang, D.; Li, D. A multimodal large language model framework for intelligent perception and decision-making in smart manufacturing. Sensors 2025, 25, 3072.

- Shadid, N.; Hamad, K.; Andi, O.; Alamassi, I. Revolutionizing quality management: Exploring Quality 4.0 frameworks in the context of Industry 4.0. In Big Data in Finance: Transforming the Financial Landscape; Springer: Cham, Switzerland, 2025; Volume 2, pp. 177–192.

- Alvaro, J.A.H.; Gonzalez Barreda, J. An advanced retrieval-augmented generation system for manufacturing quality control. Adv. Eng. Inform. 2025, 64, 103007.

- Wan, Y.; Chen, Z.; Liu, Y.; Packianather, M.; Wang, R. Empowering LLMs by hybrid retrieval-augmented generation for domain-centric Q&A in smart manufacturing. Adv. Eng. Inform. 2025, 65, 103212.

- Sun, Y.; Zhang, Q.; Bao, J.; Lu, Y.; Liu, S. Empowering digital twins with large language models for global temporal feature learning. J. Manuf. Syst. 2024, 74, 83–99.

- Mata, O.; Ponce, P.; Perez, C.; Ramirez, M.; Anthony, B.; Russel, B.; Apte, P.; MacCleery, B.; Molina, A. Digital twin designs with generative AI: Crafting a comprehensive framework for manufacturing systems. J. Intell. Manuf. 2025.

- ISO 22514-2:2017; Process Capability and Performance of Time-Dependent Process Models. International Organization for Standardization: Geneva, Switzerland, 2018. Available online: https://www.iso.org/standard/71617.html (accessed on 1 January 2026).

| Tool Category | Primary Function | Primary Users | Representative Applications |

|---|---|---|---|

| Document Generation Assistants | Create and update QA/QMS documentation (control plans, SOPs, WIs) | Quality engineers; QA managers | Draft control plans or work instructions using prior versions and product specifications |

| CAPA Narrative Generators | Generate structured narratives for NCRs and CAPA records | Compliance officers; auditors | Produce RCA/CAPA narratives linked to inspection evidence and traceable quality records |

| Generative AI-enabled Supplier QA Portals | Standardize supplier submissions and guide required documentation | Suppliers; supplier quality/procurement teams | Validate PPAP completeness and flag inconsistencies with specifications |

| Interactive Inspection Agents | Provide real-time guidance during inspections and quality checks | Line operators; floor supervisors | Voice/chat assistants that guide steps, answer procedure questions, and log results |

| Anomaly Explanation Engines | Explain anomalies and propose corrective actions | Process engineers; QA analysts | Summarize abnormal trends from sensor/SPC data and suggest likely causes |

| Compliance/Audit Review Tools | Identify gaps and compile audit-ready compliance evidence | QA leads; internal auditors | Review QMS records against standards and generate checklists or regulator-ready summaries |

| Governance Dashboards | Monitor AI outputs, approvals, and traceability for oversight | QA directors; risk managers | Aggregate AI recommendations, human approvals, and open issues for management review and audits |

| Category | Typical QA Tasks | Primary Data | Integrations | Example Outputs | Key Assurance Controls | Where it Fits |

|---|---|---|---|---|---|---|

| QMS/MES/PLM co-pilot [42] | Draft PFMEA, control plans, PPAP packages, work instructions, summarize NCRs | CTQs, specifications, NCR/CAPA history | eQMS, MES, PLM | Drafted sections with citations, change logs | Human approval, version control, source-locked RAG | Supplier quality, in-process QA |

| Vision–language AOI aide [43,44] | Explain defects, recommend inspection checks | AOI/line images, SPC signals | AOI, MES | Defect rationales, operator checklists | MSA-aligned validation, advisory-only use | In-process QA |

| RAG QA assistant [45] | Line-side Q&A with citations, retrieve reaction plans | Approved work instructions, control plans, standards | eQMS, DMS | Grounded answers with links | Access control, citation enforcement | In-process QA, audit support |

| Supplier quality co-pilot [42] | PPAP completeness checks, risk-based sampling proposals | Supplier performance history, specifications | ERP, SQM, eQMS | Risk-stratified sampling plans, scorecards | SQE approval, auditable rationale trail | Supplier quality, incoming inspection |

| CAPA/audit assembler [46] | Draft 8D/CAPA narratives, compile evidence packs | NCRs, test results, event logs | eQMS, LIMS | Structured reports, clause-to-evidence matrices | Schema validation, role-based sign-off | Cross-functional (QA, production, audit) |

| Metrology aide [47] | Draft method descriptions and uncertainty narratives | Lab methods, measurement data, uncertainty budgets | LIMS, QMS | ISO/IEC 17025-aligned narratives | Impartiality controls, authorized signatories | Testing, release decision support |

| Simulation and digital-twin aide [41] | What-if studies, DOE support, Cp/Cpk and yield forecasts | CTQs/specs, SPC, NCR/CAPA, sensor/process data | MES, SPC/eQMS, PLM/CAD | CTQ/yield predictions, sensitivity drivers, operating limits | V&V, input lineage, human approval | Pre-production, qualification, in-process optimization |

| Reference | GAI Tool/Component | Target QA Feature | Methodological Approach |

|---|---|---|---|

| Rydzi et al. (2024) [49] | Predictive inspection triage (ML, GenAI proposed) | End-of-line defect prediction | ML-based triage with planned GenAI extension |

| Nguyen et al. (2024) [50] | XEdgeAI (XAI + LVLMs) | Edge-based visual inspection | Modular XAI system with data augmentation |

| Lin et al. (2025) [51] | DDD-GenDT (LLM-augmented DT) | Real-time process monitoring | LLM ensemble in adaptive digital twin |

| Shafiee (2025) [52] | GAN/VAE for defect synthesis | Visual model training augmentation | Synthetic data generation |

| Thomas (2023) [53] | ChatGPT for FMEA drafting | Failure mode analysis | Prompt-based failure scenario generation |

| Alsaif et al. (2024) [54] | Multimodal LLM | Fault detection and diagnosis | Signal-text LLM fusion for anomaly diagnosis |

| Wang et al. (2025) [55] | LLM-based Q&A assistant | Real-time decision support | Shop-floor assistant with multimodal input |

| Álvaro & González (2025) [57] | RAG assistant for QA | Line-side Q&A over controlled knowledge | Controlled retrieval with versioned sources |

| Wan et al. (2025) [58] | Hybrid KG+RAG | Factory Q&A and guidance | Ontology-constrained RAG for QA |

| Sun et al. (2024) [59], Mata et al. (2025) [60] | LLM, LLM-enhanced digital twins | Dynamic inspection and confirmation | LLM integration into live simulation pipelines |

| Step | Failure Mode | Effect | Cause | S/O/D/RPN | Current Control | Action |

|---|---|---|---|---|---|---|

| SMT Print | Solder bridging (RF area) | No connection, high draw | Excess paste, stencil wear | 8/4/4/128 | SPI, AOI | Reduce aperture, retrain operator |

| Placement | Tombstoning of 0402s | Intermittent RF issues | Offset, thermal stress | 7/3/5/105 | AOI, placement program verification; feeder/nozzle checks | Adjust design layout, feeder check |

| Reflow | Voids under PMIC | Thermal instability | Poor soak profile | 9/2/5/90 | X-ray, FT | DOE on profile, SPC control |

| Display Bond | Misalignment > 0.2 mm | Light-bleed | Fixture drift, excess glue | 6/4/5/120 | Visual check | Add vision inspection, GRR validation |

| Firmware Flash | Wrong image | Bricked unit | Config control lapse | 9/2/6/108 | CRC, manual log | Auto flash check, signed build required |

| Functional Test | Temp offset > ±0.5 °C | Inaccurate user comfort | Sensor miscalibration | 8/3/4/96 | Calibration, FT | Cp/Cpk monitoring on calibration CTQ, drift alarms, supplier sensor CoC/verification |

| Step | Failure Mode | Effect | Cause | S/O/D/RPN | Current Control | Recommended Action | Owner | rRPN |

|---|---|---|---|---|---|---|---|---|

| SMT Print | Solder bridging | Electrical failure | Overpaste, stencil wear | 8/4/4/128 | 100% SPI, AOI confirmation | Optimize aperture design, enforce stencil life/cleaning, retrain operator | ME-D3 | 72 |

| Reflow | Voids on PMIC pad | Power instability/shutoff | Inadequate thermal soak | 9/2/5/90 | Profile verification, X-ray sampling, FT correlation | DOE to optimize soak/reflow profile, add SPC limits on key reflow parameters, define reaction plan for void-rate drift | PE-D5 | 54 |

| AOI | False negative (fine-pitch) | Latent field failure | Glare, poor contrast, suboptimal thresholds | 8/6/2/96 | AOI program control, golden board checks, periodic verification | Standardize illumination/contrast calibration, add rule-based “high-risk” recheck (e.g., X-ray/ICT sampling), perform MSA/GRR on AOI setup changes | VisEng-D6 | 48 |

| Firmware Flash | Wrong firmware version | Bricked device | Versioning/control lapse | 9/2/6/108 | Automated version check, CRC verification, release-controlled builds | Require signed firmware and locked release pipeline, block manual override, log hash/version to device ID for traceability | SW-D4 | 27 |

| Functional Test | Temperature drift > 0.5 °C | Comfort complaints/returns | Calibration error, sensor drift | 9/2/6/108 | Calibration routine, FT limits, periodic verification | Add Cp/Cpk monitoring for calibration CTQ, introduce drift alarms and recalibration triggers, tighten supplier verification for sensors | QE-D7 | 42 |
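The S/O/D/RPN column in the table above follows the usual FMEA convention in which the Risk Priority Number is the product of the severity, occurrence, and detection ratings. The sketch below recomputes the RPN for each row and the relative reduction implied by the rRPN column. It assumes that rRPN denotes the residual RPN expected after the recommended action, which the abbreviation list does not define, and the function names are hypothetical.

```python
# (step, severity, occurrence, detection, rRPN) copied from the table above.
ROWS = [
    ("SMT Print", 8, 4, 4, 72),
    ("Reflow", 9, 2, 5, 54),
    ("AOI", 8, 6, 2, 48),
    ("Firmware Flash", 9, 2, 6, 27),
    ("Functional Test", 9, 2, 6, 42),
]


def rpn(severity: int, occurrence: int, detection: int) -> int:
    """Risk Priority Number: product of the three ratings."""
    return severity * occurrence * detection


def reduction(initial: int, residual: int) -> float:
    """Relative RPN reduction, assuming rRPN is the residual RPN after actions."""
    return (initial - residual) / initial


# List the failure modes from highest to lowest initial risk.
for step, s, o, d, rrpn in sorted(ROWS, key=lambda r: rpn(r[1], r[2], r[3]),
                                  reverse=True):
    initial = rpn(s, o, d)
    print(f"{step:16s} RPN={initial:3d}  rRPN={rrpn:3d}  "
          f"reduction={reduction(initial, rrpn):.0%}")
```

Under this reading, the firmware-flash actions yield the largest relative reduction (108 to 27, or 75%), while the reflow actions yield the smallest (90 to 54, or 40%).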

| Index | Expert Team | GPT-5-Thinking | Rationale |

|---|---|---|---|

| DQ | 3.0 | 3.8 | GPT-5 provides traceable, audit-ready packs with clause links |

| DC | 2.6 | 3.0 | GPT-5 includes anomaly synthesis, expert solution lacks pre-pilot hold logic |

| PCS | 3.0 | 3.4 | 1.33; GPT-5 adds degradation alarms |

| GP | 2.9 | 3.9 | GPT-5 supports versioning, ownership, change-trace |

| TEI | 3.2 | 3.7 | GPT-5 auto-generates audits, triggers; expert solution requires manual integration |
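One simple way to summarize the comparison above is an aggregate score per solution. The sketch below uses equal weights across the five indices, which is an assumption made for illustration only; the table does not specify an aggregation rule, and the helper `weighted_mean` is hypothetical.

```python
# (expert team score, GPT-5-Thinking score) per index, copied from the table above.
SCORES = {
    "DQ": (3.0, 3.8),
    "DC": (2.6, 3.0),
    "PCS": (3.0, 3.4),
    "GP": (2.9, 3.9),
    "TEI": (3.2, 3.7),
}


def weighted_mean(values, weights):
    """Weighted average; the weights need not sum to one."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(v * w for v, w in zip(values, weights)) / total


# Assumption: all indices count equally.
weights = [1.0] * len(SCORES)
expert = weighted_mean([e for e, _ in SCORES.values()], weights)
genai = weighted_mean([g for _, g in SCORES.values()], weights)

print(f"Expert team mean:    {expert:.2f}")
print(f"GPT-5-Thinking mean: {genai:.2f}")
print(f"Difference:          {genai - expert:+.2f}")
```

With equal weights the means are approximately 2.94 and 3.56; different weights, for example emphasizing governance indices in regulated settings, would change the size of the gap.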

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).