2. Review of Related Literature

Artificial intelligence (AI) has increasingly become a focal point of educational research as schools and universities explore new ways to enhance teaching and learning processes. AI refers to computer systems capable of performing tasks that typically require human intelligence, such as reasoning, learning, decision-making, and language processing. In education, AI is commonly embedded in adaptive learning platforms, automated assessment systems, intelligent tutoring systems, and data-driven analytics tools (Holmes et al., 2019). The growing body of literature reflects both optimism and caution regarding AI’s role in shaping educational practices.

One major theme in the literature is the potential of AI to support personalized learning. Personalized or adaptive learning systems use algorithms to analyze students’ performance data and adjust instructional content to suit individual learning needs. Studies suggest that such systems can help learners progress at their own pace, address learning gaps, and improve academic performance, particularly in mathematics and science subjects (Pane et al., 2017). Researchers argue that AI-driven personalization moves education away from a one-size-fits-all approach toward more inclusive learning environments.

The integration of technology in education has reshaped teaching and learning practices in the 21st century. Among these innovations, artificial intelligence (AI) presents significant opportunities for enhancing student engagement, personalization, and academic performance, but these benefits come with notable challenges and ethical considerations that require careful attention. Several recent studies of educational technology and digital media use, although not focused on AI itself, offer a foundation for understanding AI’s role in contemporary learning environments.

Celada et al. (2025) explored the effects of media exposure on young children’s social development, noting that excessive engagement with digital media could hinder meaningful social interactions and limit critical cognitive and emotional growth. Although the study focused on early childhood, its implications may extend to AI-assisted learning tools in higher education, where digital content may similarly influence students’ social and cognitive habits. In the context of AI in education, overreliance on automated learning systems or AI-generated feedback may reduce opportunities for peer interaction, collaborative learning, and the development of critical thinking skills.

Expanding on the role of digital platforms in learning, Genelza (2024) examined the integration of TikTok as an academic aid, highlighting its potential to enhance student engagement and provide alternative avenues for knowledge acquisition. The study reported that short-form, interactive content could support personalized learning experiences and improve students’ motivation. This aligns with AI applications in education, where intelligent systems can adapt content to individual learning needs, provide immediate feedback, and create interactive learning environments. However, as with TikTok, ethical and practical challenges such as distraction, content reliability, and equitable access remain significant considerations.

Peralta et al. (2025) conducted a systematic review on the effects of video games, finding that interactive digital experiences could positively influence problem-solving skills, motivation, and engagement when used strategically. Conversely, excessive or unstructured use could result in negative outcomes such as reduced attention spans or social disengagement. This dual nature mirrors the opportunities and challenges posed by AI in education: while AI can enhance engagement and provide adaptive learning experiences, its misuse or overreliance may inadvertently undermine learning quality or student autonomy. These findings highlight the need for balanced and intentional implementation of AI-driven educational technologies.

Finally, Cedeño et al. (2025) conducted a systematic review of the Quipper learning management system, demonstrating its effectiveness in blended learning environments. The study underscored how technology can facilitate personalized instruction, monitor student progress, and support flexible learning pathways. These findings parallel the capabilities of AI-enabled educational tools, which can similarly enhance teaching and learning through intelligent feedback, adaptive learning paths, and data-driven instructional support. At the same time, concerns regarding student privacy, equity, and ethical usage remain central, emphasizing the need for policies and guidelines to govern AI implementation.

Collectively, these studies highlight the complex interplay of opportunities, challenges, and ethical considerations associated with technology in education. While AI promises personalized learning, improved engagement, and enhanced instructional efficiency, its use must be balanced with attention to social development, equitable access, and ethical responsibility. Understanding these dimensions is critical for educators, administrators, and policymakers to maximize AI’s potential while mitigating risks. In this context, research into AI in education provides valuable insights into how emerging technologies can be harnessed to support learning, foster critical thinking, and uphold ethical standards in contemporary educational settings.

In addition to personalization, AI has been found to enhance student engagement and motivation. Intelligent tutoring systems and conversational agents, such as chatbots, provide immediate feedback and continuous support, which can increase learners’ sense of autonomy and confidence (Woolf et al., 2013). Several studies indicate that students are more willing to engage with learning tasks when AI tools offer real-time assistance without the fear of judgment often associated with traditional classroom interactions.

AI also plays a significant role in supporting teachers’ instructional practices. Automated grading systems, for example, have been shown to reduce teachers’ workload, particularly in large classes, by efficiently assessing objective tests and written responses (Luckin et al., 2016). Learning analytics tools further assist educators by providing insights into students’ learning behaviors, enabling data-informed instructional decisions. These tools are widely viewed as supportive mechanisms rather than replacements for teachers.

Despite these opportunities, the literature consistently highlights challenges related to AI integration in education. One of the most frequently cited concerns is the lack of adequate infrastructure and resources, particularly in developing countries. Williamson and Eynon (2020) emphasize that unequal access to technology may deepen educational inequalities, as students in well-resourced schools benefit more from AI innovations than those in underprivileged settings. This digital divide remains a significant barrier to equitable AI adoption.

Teacher readiness and competence represent another critical challenge. Studies reveal that many educators have limited understanding of AI concepts and lack the pedagogical training necessary to integrate AI tools effectively (Zawacki-Richter et al., 2019). Without proper professional development, teachers may struggle to align AI technologies with curriculum goals, resulting in superficial or ineffective implementation.

The literature also raises concerns about students’ overreliance on AI systems. While AI tools can support learning, excessive dependence may reduce learners’ critical thinking, problem-solving, and independent learning skills (Selwyn, 2019). Some researchers argue that AI should function as a scaffold rather than a substitute for cognitive effort, emphasizing the importance of maintaining balance between technological support and human intellectual engagement.

Ethical considerations form a substantial portion of existing research on AI in education. Data privacy and security are among the most pressing ethical issues. AI systems often collect large amounts of sensitive student data, including academic records and behavioral patterns. Slade and Prinsloo (2013) caution that inadequate data governance policies may expose learners to privacy violations and unauthorized data use.

Closely related to data privacy is the issue of informed consent. Several scholars argue that students and parents are often unaware of how their data are collected, processed, and stored by AI systems (Holmes et al., 2019). This lack of transparency raises ethical concerns regarding students’ rights and autonomy, particularly for minors in basic education.

Algorithmic bias is another ethical issue highlighted in the literature. AI systems are trained on existing datasets, which may reflect social and cultural biases. O’Neil (2016) warns that biased algorithms can lead to unfair academic tracking, misclassification of learners, and discriminatory educational outcomes. Such risks underscore the need for ethical oversight and inclusive system design.

Genelza (2022) critically examined why schools are often slow to adopt innovative technologies, pointing to systemic barriers such as limited resources, resistance to change, and inadequate teacher training. These challenges are particularly relevant for AI integration, which requires not only technological infrastructure but also pedagogical expertise and ethical frameworks. The study emphasizes that successful adoption of AI tools depends on both institutional readiness and the capacity of educators to use technology responsibly, ensuring that AI complements rather than replaces effective teaching practices.

The rise of generative AI has also intensified discussions on academic integrity. Recent studies note that AI-powered writing and problem-solving tools challenge traditional assessment practices and notions of originality (Bernal et al., 2025). While these tools offer learning support, they may also facilitate plagiarism or academic dishonesty if institutional policies are unclear or outdated.

Several scholars emphasize the importance of developing ethical frameworks and governance policies to guide AI use in education. These frameworks often advocate for transparency, accountability, fairness, and human oversight in AI systems (Floridi et al., 2018). Ethical AI, according to these scholars, should align with educational values and prioritize learners’ well-being over efficiency alone.

Human-centered perspectives on AI in education further stress that teaching and learning are fundamentally relational processes. Biesta (2015) argues that education involves moral, social, and emotional dimensions that cannot be fully replicated by machines. From this viewpoint, AI should enhance, not replace, the human elements of education, such as empathy, mentorship, and professional judgment.

Recent literature also highlights the need for contextualized research on AI in education. Much of the existing research is concentrated in higher education and technologically advanced regions, leaving gaps in understanding AI implementation in basic education and developing contexts (Zawacki-Richter et al., 2019). Context-sensitive studies are therefore necessary to inform policy and practice.

Overall, the literature suggests that AI in education presents a complex interplay of opportunities, challenges, and ethical considerations. While AI has the potential to transform learning experiences and instructional practices, its effectiveness depends on thoughtful integration, adequate teacher preparation, and strong ethical safeguards. A balanced and critical approach is essential to ensure that AI serves educational goals rather than undermining them.

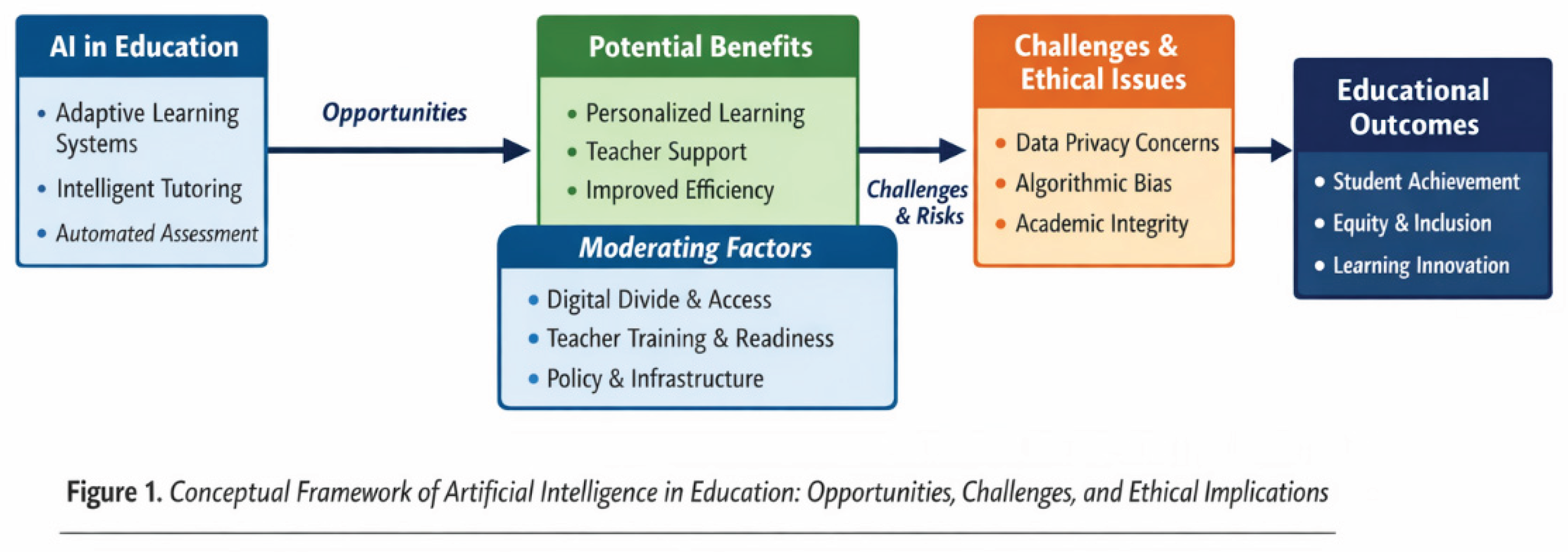

Figure 1.

Conceptual Framework of Artificial Intelligence in Education: Opportunities, Challenges, and Ethical Implications.