Submitted: 05 January 2026

Posted: 06 January 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Attention Mechanisms in NMT

2.2. The Transformer Architecture

2.3. Subword Tokenization

3. Methodology

3.1. Data Sources

- OPUS-100 [13]: A massively multilingual corpus derived from the OPUS collection. For our English-Spanish experiments, we use 1 million parallel sentence pairs for training and combine the original development and test splits into a 4,000-pair development set.

- FLORES+ [8]: A high-quality benchmark dataset specifically designed for evaluating machine translation systems. We use 2,009 sentences for our blind test set. Unlike OPUS-100, FLORES+ contains professionally translated Wikipedia content, providing a challenging out-of-domain test of generalization.

3.2. Preprocessing

3.3. Model Architectures

3.3.1. Baseline: Vanilla LSTM

3.3.2. BiLSTM + Bahdanau Attention

3.3.3. Transformer

3.4. Hyperparameters

3.5. Evaluation Metrics

- BLEU [5]: Measures clipped n-gram precision against the reference, combined with a brevity penalty for overly short outputs.

- chrF: Character n-gram F-score, providing robustness to morphological variations.
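As a rough illustration of the two metrics listed above, the following is a minimal pure-Python sketch of a sentence-level BLEU and a character n-gram F-score. It is a simplification under stated assumptions, not the scoring tool used for the reported numbers: the function names, the whitespace tokenization, the absence of smoothing, and the defaults `max_n=4` for BLEU and `max_n=6`, `beta=2.0` for chrF are all choices made for this example. Scores are returned in [0, 1], whereas the results tables use a 0 to 100 scale for BLEU and chrF.

```python
from collections import Counter
import math


def ngrams(seq, n):
    """Return a Counter of all contiguous n-grams in seq (list or string)."""
    return Counter(tuple(seq[i:i + n]) for i in range(len(seq) - n + 1))


def bleu(hypothesis, reference, max_n=4):
    """Simplified, unsmoothed sentence-level BLEU in [0, 1] (illustrative only)."""
    hyp, ref = hypothesis.split(), reference.split()
    if not hyp:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        hyp_counts, ref_counts = ngrams(hyp, n), ngrams(ref, n)
        # Clip each hypothesis n-gram count by its count in the reference.
        matches = sum(min(c, ref_counts[g]) for g, c in hyp_counts.items())
        total = sum(hyp_counts.values())
        if matches == 0 or total == 0:
            return 0.0  # without smoothing, one empty order zeroes the score
        log_precisions.append(math.log(matches / total))
    # Brevity penalty: only hypotheses shorter than the reference are penalized.
    bp = 1.0 if len(hyp) >= len(ref) else math.exp(1 - len(ref) / len(hyp))
    return bp * math.exp(sum(log_precisions) / max_n)


def chrf(hypothesis, reference, max_n=6, beta=2.0):
    """Simplified chrF in [0, 1]: F-beta over averaged char n-gram P and R."""
    # Assumption for this sketch: whitespace is dropped before counting.
    hyp, ref = hypothesis.replace(" ", ""), reference.replace(" ", "")
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp_counts, ref_counts = ngrams(hyp, n), ngrams(ref, n)
        if not hyp_counts or not ref_counts:
            continue  # skip orders longer than either string
        matches = sum((hyp_counts & ref_counts).values())
        precisions.append(matches / sum(hyp_counts.values()))
        recalls.append(matches / sum(ref_counts.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    return (1 + beta ** 2) * p * r / (beta ** 2 * p + r)


if __name__ == "__main__":
    hyp = "Es crucial que ella llega a tiempo."
    ref = "Es crucial que ella llegue a tiempo."
    print(f"BLEU={bleu(hyp, ref):.3f}  chrF={chrf(hyp, ref):.3f}")
```

On a pair that differs only in one verb ending, such as "llega" versus "llegue", the word-level score loses every n-gram that spans the changed token, while the character-level score still credits the shared stem. This is the sense in which chrF is more tolerant of morphological variation.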

4. Experimental Results

4.1. Quantitative Analysis

4.2. Embedding Compatibility

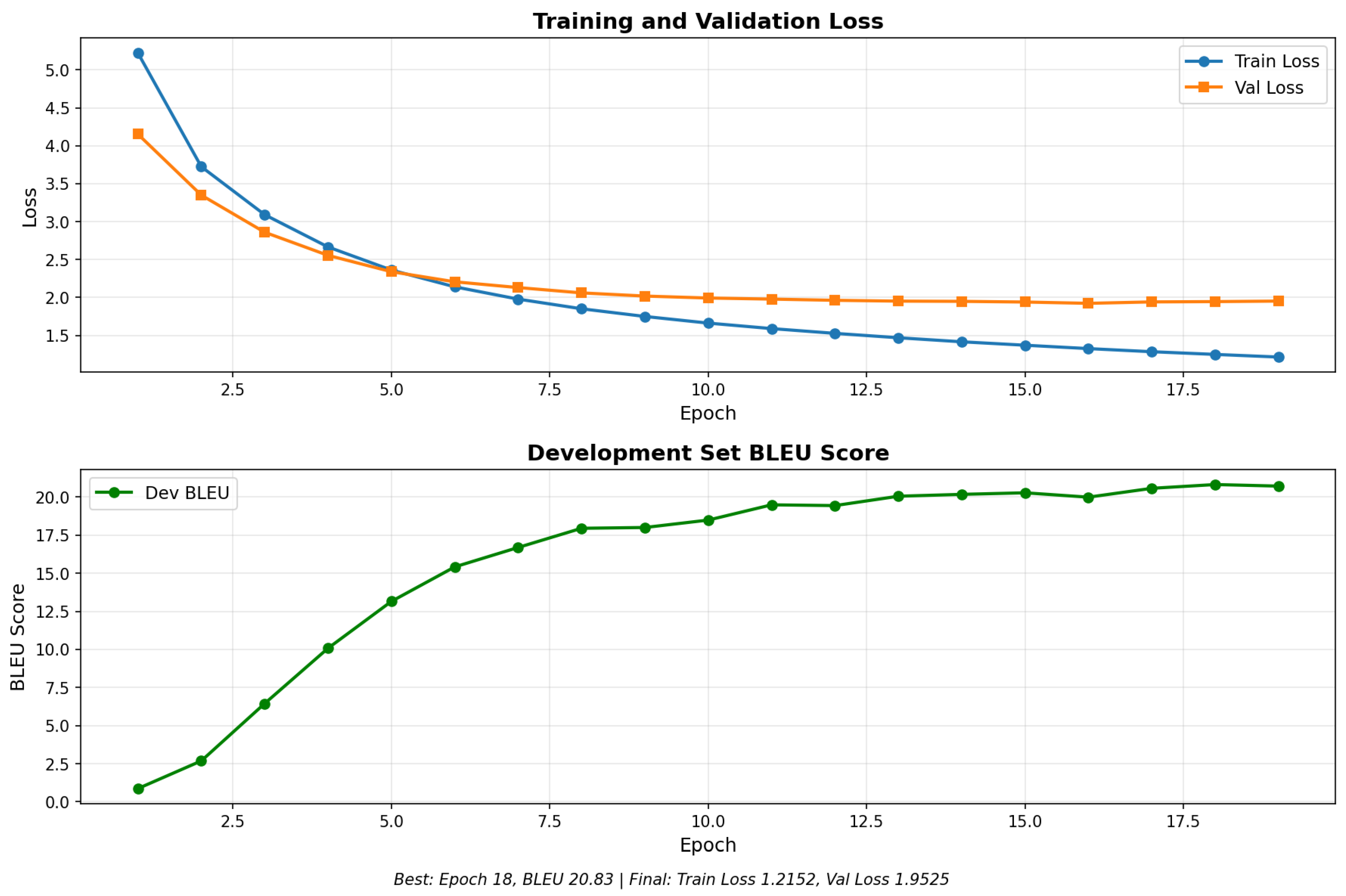

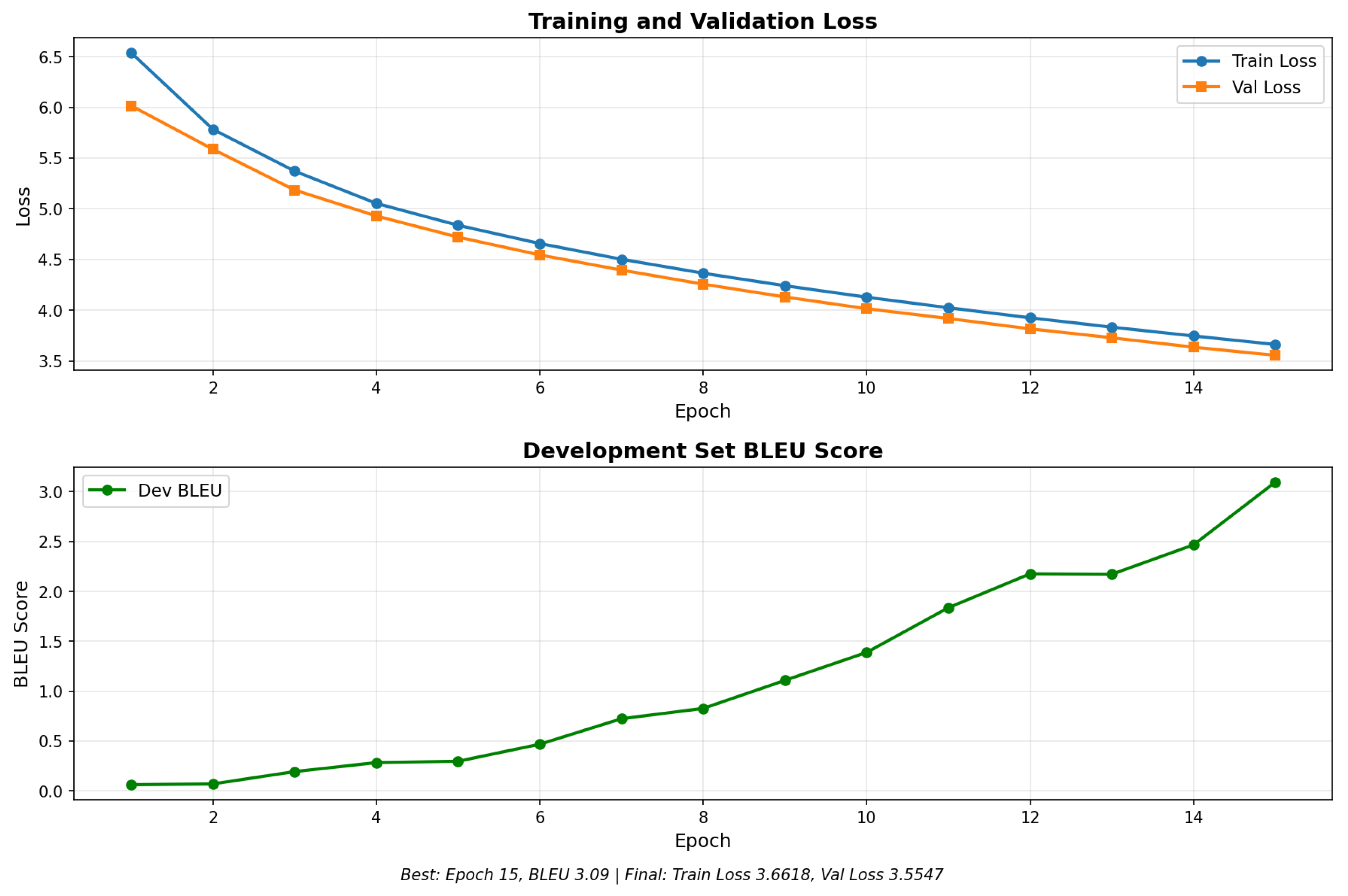

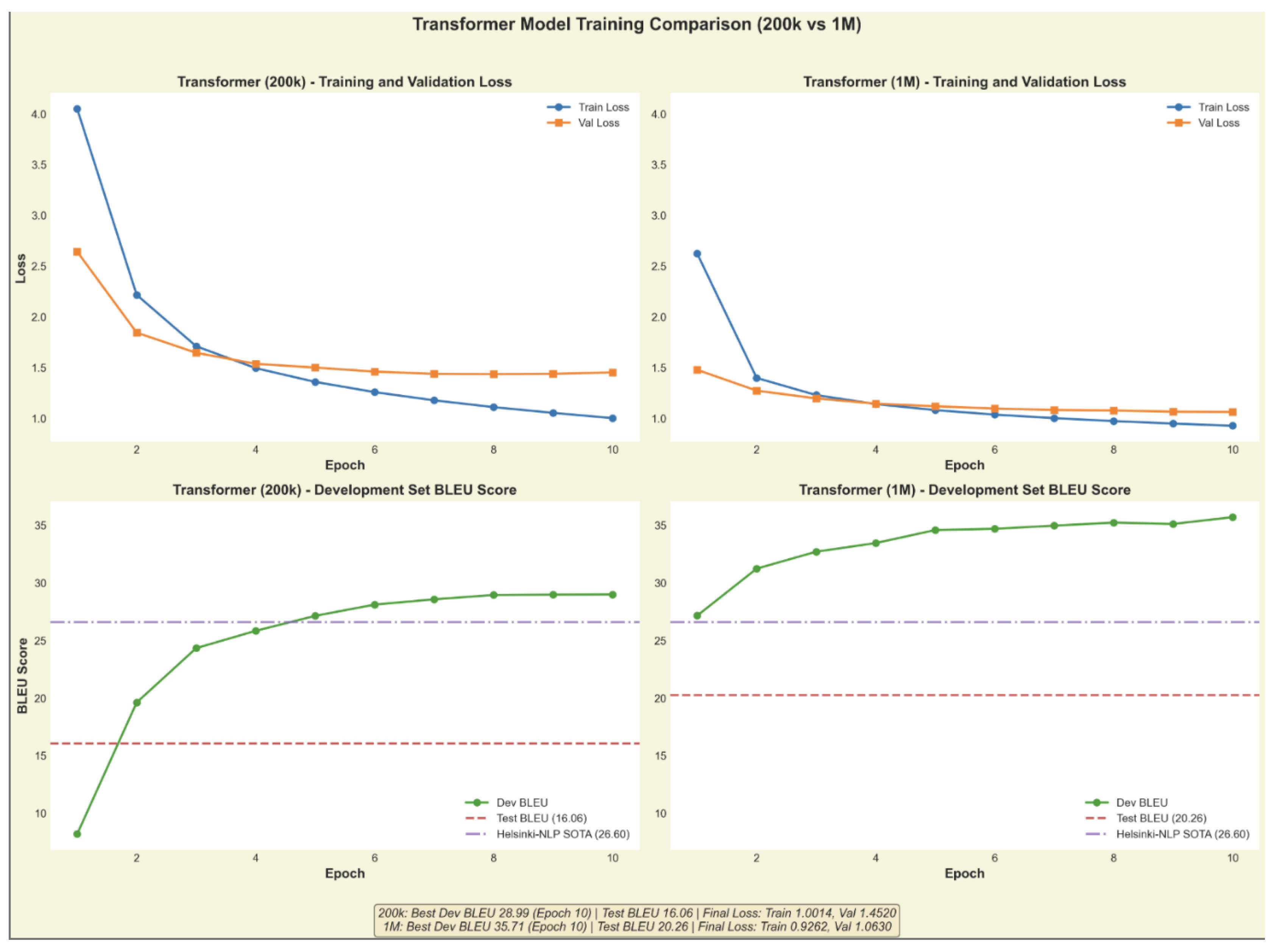

4.3. Training Dynamics

5. Discussion and Error Analysis

5.1. LSTM Failure Modes

5.2. Transformer vs. State-of-the-Art

| Source (English) | Our Transformer (1M) | SOTA (Helsinki-NLP) |
|---|---|---|
| The judge decided to reduce the sentence. | El juez decidió reducir la frase. | El juez decidió reducir la sentencia. |
| It is crucial that she arrive on time. | Es crucial que ella llega a tiempo. | Es crucial que ella llegue a tiempo. |
| The orthodontist checked her teeth. | El ortodoxo revisó sus dientes. | El ortodoncista revisó sus dientes. |

6. Conclusion

Acknowledgments

References

1. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Proc. NeurIPS, 2017; pp. 5998–6008.

2. Bahdanau, D.; Cho, K.; Bengio, Y. Neural machine translation by jointly learning to align and translate. Proc. ICLR, 2015.

3. Sutskever, I.; Vinyals, O.; Le, Q. V. Sequence to sequence learning with neural networks. Proc. NeurIPS, 2014; pp. 3104–3112.

4. Sennrich, R.; Haddow, B.; Birch, A. Neural machine translation of rare words with subword units. Proc. ACL, 2016; pp. 1715–1725.

5. Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.-J. BLEU: A method for automatic evaluation of machine translation. Proc. ACL, 2002; pp. 311–318.

6. Jiao, W.; Wang, W.; Huang, J.-T.; Wang, X.; Tu, Z. Is ChatGPT a good translator? A preliminary study. arXiv 2023, arXiv:2301.08745.

7. Hendy, A.; Abdelrehim, M.; Sharaf, A.; Raunak, V.; Gabr, M.; Matsue, H.; Lobban, S. Y.; Awadalla, H. H. How good are GPT models at machine translation? A comprehensive evaluation. arXiv 2023, arXiv:2302.09210.

8. Costa-jussà, M. R.; Cross, J.; NLLB Team. No language left behind: Scaling human-centered machine translation. arXiv 2022, arXiv:2207.04672.

9. Rei, R.; de Souza, J. G. C.; Alves, D.; Zerva, P.; Farinha, A. C.; Glushkova, T.; Lavie, A.; Coheur, L.; Martins, A. F. COMET-22: Unifying translation evaluation. Proc. WMT, 2022; pp. 585–597.

10. Kocmi, T.; Bawden, R.; Bojar, O.; Dvorkovich, A.; Federmann, C.; Fishel, M.; Gowda, T.; Graham, Y.; Grundkiewicz, R.; Haddow, B. Findings of the 2022 conference on machine translation (WMT22). Proc. WMT, 2022; pp. 1–45.

11. Stahlberg, F. Neural machine translation: A review. J. Artif. Intell. Res. 2021, 69, 343–418.

12. Moslem, Y.; Haque, R.; Kelleher, J. D. Domain-specific text generation for machine translation. Proc. AACL-IJCNLP, 2022; pp. 14–30.

13. Zhang, B.; Williams, P.; Titov, I.; Sennrich, R. Improving massively multilingual neural machine translation and zero-shot translation. Proc. ACL, 2020; pp. 1628–1639.

| Parameter | BiLSTM | Transformer |
|---|---|---|
| Embedding Dimension | 512 | 512 |
| Hidden Dimension | 512 | 512 |
| Feed-forward Dimension | - | 2048 |
| Encoder/Decoder Layers | 2 | 6 |
| Attention Heads | - | 8 |
| Dropout | 0.2 | 0.1 |
| Optimizer | Adam | AdamW |
| Learning Rate | | |
| Batch Size | 64 | 64 |
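The hyperparameter table above can be captured as a small configuration object, which makes it easier to keep the two setups side by side. The sketch below is only illustrative: `ModelConfig`, `BILSTM`, `TRANSFORMER`, and `head_dim` are hypothetical names introduced here, and the learning rate is left as `None` because the table does not give a value for it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical container for the settings listed in the table."""
    embedding_dim: int
    hidden_dim: int
    num_layers: int                        # encoder/decoder layers
    dropout: float
    optimizer: str
    batch_size: int
    feedforward_dim: Optional[int] = None  # Transformer only
    attention_heads: Optional[int] = None  # Transformer only
    learning_rate: Optional[float] = None  # not specified in the table


# Values copied from the table; nothing here is tuned or inferred.
BILSTM = ModelConfig(
    embedding_dim=512, hidden_dim=512, num_layers=2,
    dropout=0.2, optimizer="Adam", batch_size=64,
)
TRANSFORMER = ModelConfig(
    embedding_dim=512, hidden_dim=512, num_layers=6,
    dropout=0.1, optimizer="AdamW", batch_size=64,
    feedforward_dim=2048, attention_heads=8,
)


def head_dim(cfg: ModelConfig) -> int:
    """Per-head width when the hidden dimension is split evenly across heads."""
    if cfg.attention_heads is None:
        raise ValueError("this configuration has no attention heads")
    if cfg.hidden_dim % cfg.attention_heads != 0:
        raise ValueError("hidden_dim must be divisible by attention_heads")
    return cfg.hidden_dim // cfg.attention_heads


if __name__ == "__main__":
    print(head_dim(TRANSFORMER))
```

With a hidden dimension of 512 and 8 heads, each head works on a 64-dimensional slice, assuming the usual even split of the model dimension across heads.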

| Model | BLEU ↑ | chrF ↑ | COMET ↑ |
|---|---|---|---|
| Dictionary Baseline | 3.05 | 33.27 | 0.435 |
| Vanilla LSTM | 0.92 | 19.09 | 0.325 |
| BiLSTM + Attention | 10.66 | 38.09 | 0.467 |
| LSTM + Pretrained Emb. | 1.04 | 18.15 | 0.327 |
| Transformer (200K) | 16.06 | 45.97 | 0.674 |
| Transformer (1M) | 20.26 | 50.07 | 0.779 |
| SOTA (Helsinki-NLP) | 26.60 | 54.95 | 0.850 |
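To make the differences between rows of the results table above easier to read, the following sketch computes per-metric deltas between any two systems. The numbers are copied from the table; the `RESULTS` dictionary and the `delta` helper are hypothetical names introduced for this example, and the rounding to three decimals is only there to suppress floating-point noise.

```python
# Scores copied from the results table: (BLEU, chrF, COMET).
RESULTS = {
    "Dictionary Baseline": (3.05, 33.27, 0.435),
    "Vanilla LSTM": (0.92, 19.09, 0.325),
    "BiLSTM + Attention": (10.66, 38.09, 0.467),
    "LSTM + Pretrained Emb.": (1.04, 18.15, 0.327),
    "Transformer (200K)": (16.06, 45.97, 0.674),
    "Transformer (1M)": (20.26, 50.07, 0.779),
    "SOTA (Helsinki-NLP)": (26.60, 54.95, 0.850),
}

METRICS = ("BLEU", "chrF", "COMET")


def delta(system, baseline):
    """Return {metric: system score minus baseline score}, rounded to 3 decimals."""
    return {
        metric: round(s - b, 3)
        for metric, s, b in zip(METRICS, RESULTS[system], RESULTS[baseline])
    }


if __name__ == "__main__":
    # Effect of growing the training set from 200K to 1M sentence pairs.
    print(delta("Transformer (1M)", "Transformer (200K)"))
    # Remaining distance to the pretrained Helsinki-NLP system.
    print(delta("Transformer (1M)", "SOTA (Helsinki-NLP)"))
```

Read this way, moving from 200K to 1M training pairs adds 4.20 BLEU, 4.10 chrF, and 0.105 COMET, while the 1M model remains 6.34 BLEU, 4.88 chrF, and 0.071 COMET below the Helsinki-NLP system.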

| Source (English) | Vanilla LSTM Output | BiLSTM + Attention Output |
|---|---|---|
| On Monday, scientists from the Stanford University... | s de los Juegos de la ciudad de la Universidad de la Universidad... | ...luego de la Escuela Primagrafía de la Uni... |
| Local media reports an airport fire vehicle... | s servicios de la empresa de la empresa lleva... | ...locales informes de un medio de aeropu... |
| 28-year-old Vidal had joined Barça three seasons... | años de ese edificio de él había llegado a la... | ...ce a dos metros Veh-F. Había unido a Barça... |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).