Submitted: 05 January 2026

Posted: 06 January 2026


Abstract

Keywords:

1. Introduction

- RQ1: What cue families are described in the empirical literature as distinguishing AI vs human text?

- RQ2: How stable are these cues across tasks, genres, lengths, and revision conditions?

2. Methods

2.1. Protocol and Registration

2.2. Eligibility Criteria

2.3. Information Sources

2.4. Search

3. Results

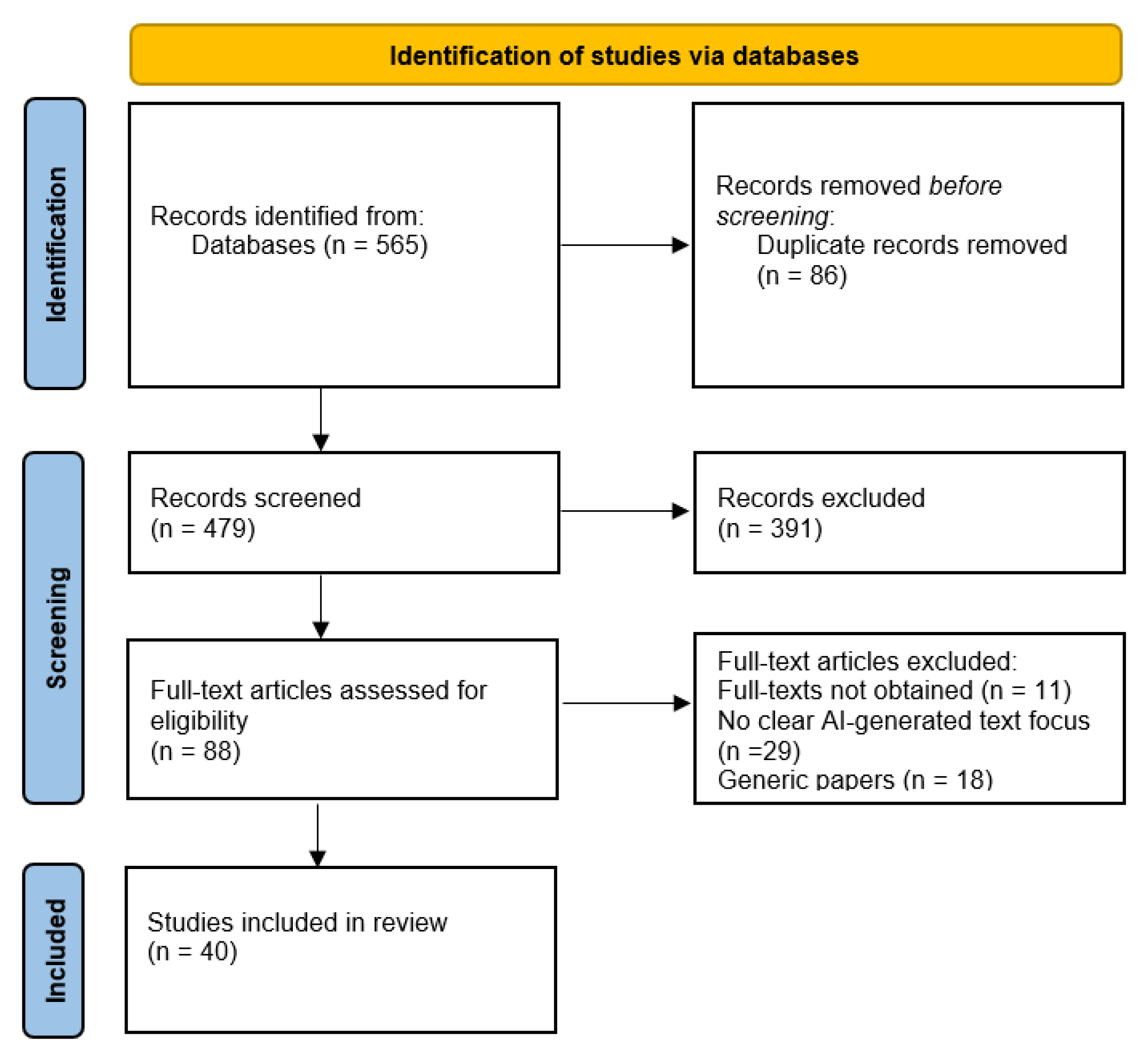

3.1. Selection of Sources of Evidence

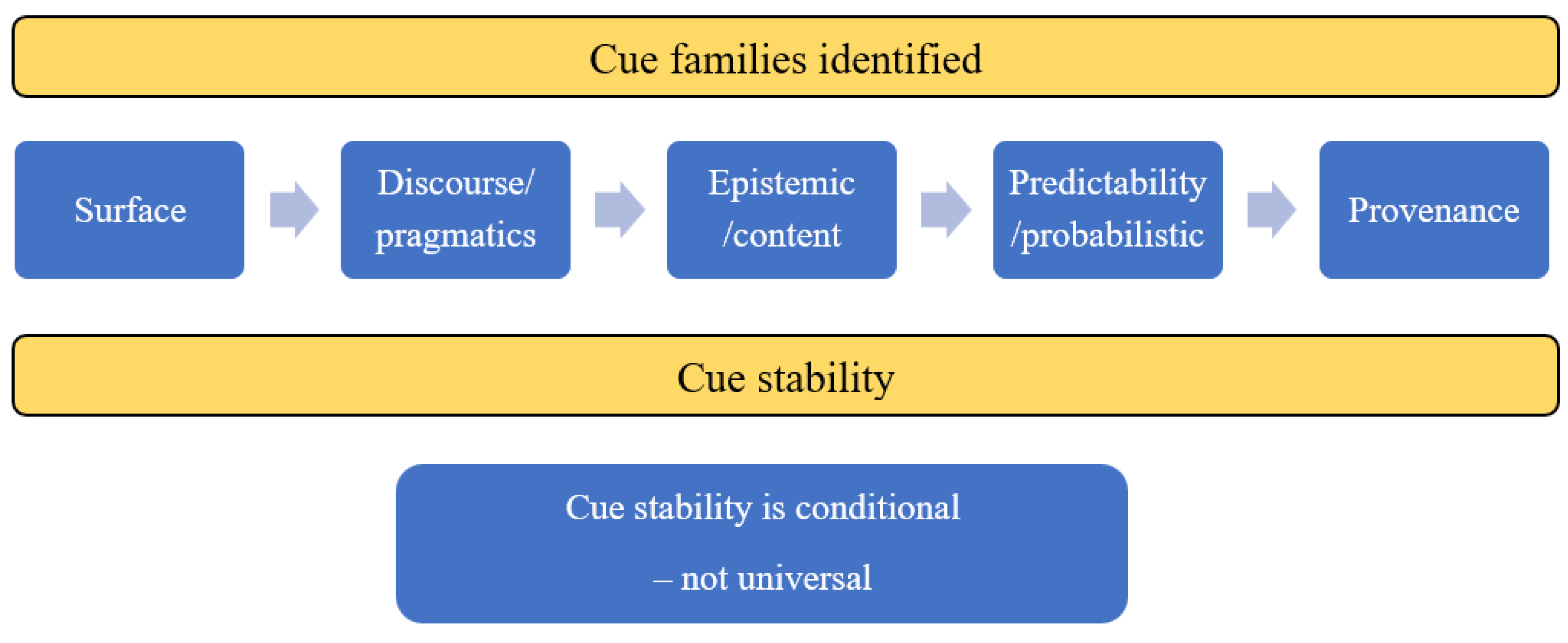

3.2. RQ1: Cue Families Distinguishing AI- vs Human-Authored Text

3.2.1. Overview of Cue Families in the Qualified Literature

3.2.2. Surface Cues

3.2.3. Discourse/Pragmatic Cues

3.2.4. Epistemic/Content Cues

3.2.5. Predictability/Probabilistic Cues

3.2.6. Provenance Cues

3.3. RQ2: Cue Stability Across Tasks, Genres, Lengths, and Revision Conditions

3.3.1. Overview of the Qualified Studies Regarding Cue Stability

3.3.2. Stability Across Tasks, Genres, and Registers

3.3.3. Stability Under Length Constraints and Short Samples

3.3.4. Stability Under Revision, Paraphrasing, and Mixed Authorship

4. Discussion

4.1. Cue Families Distinguishing AI- vs Human-Authored Text

4.2. Stability of Cues Across Contexts, Length, and Revision/Paraphrasing

4.3. Limitations and Future Work

5. Conclusions

Supplementary Materials

Acknowledgments

Conflicts of Interest

References

- Neumann, A. T., Yin, Y., Sowe, S., Decker, S., & Jarke, M. (2025). An LLM-driven chatbot in higher education for databases and information systems. IEEE Transactions on Education, 68(1), 103–116.

- Rane, N. L., Tawde, A., Choudhary, S. P., & Rane, J. (2023). Contribution and performance of ChatGPT and other Large Language Models (LLM) for scientific and research advancements: a double-edged sword. International Research Journal of Modernization in Engineering Technology and Science, 5(10), 875-899.

- Cheng, S. (2025). When Journalism meets AI: Risk or opportunity? Digital Government: Research and Practice, 6(1), 1-12.

- Chkirbene, Z., Hamila, R., Gouissem, A., & Devrim, U. (2024, December). Large language models (LLM) in industry: A survey of applications, challenges, and trends. In 2024 IEEE 21st International Conference on Smart Communities: Improving Quality of Life using AI, Robotics and IoT (HONET) (pp. 229-234). IEEE.

- Tengler, K., & Brandhofer, G. (2025). Exploring the difference and quality of AI-generated versus human-written texts. Discover Education, 4(1), 113.

- Liang, W., Yuksekgonul, M., Mao, Y., Wu, E., & Zou, J. (2023). GPT detectors are biased against non-native English writers. Patterns, 4(7).

- Wu, J., Yang, S., Zhan, R., Yuan, Y., Chao, L. S., & Wong, D. F. (2025). A survey on LLM-generated text detection: Necessity, methods, and future directions. Computational Linguistics, 51(1), 275-338.

- Kirchner, J. H., Ahmad, L., Aaronson, S., & Leike, J. (2023, January 31). New AI classifier for indicating AI-written text. OpenAI. https://openai.com/index/new-ai-classifier-for-indicating-ai-written-text/.

- Ippolito, D., Duckworth, D., Callison-Burch, C., & Eck, D. (2020). Automatic detection of generated text is easiest when humans are fooled. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (pp. 1808-1822).

- Krishna, K., Song, Y., Karpinska, M., Wieting, J., & Iyyer, M. (2023). Paraphrasing evades detectors of AI-generated text, but retrieval is an effective defense. Advances in Neural Information Processing Systems, 36, 27469-27500.

- Wang, Y., Mansurov, J., Ivanov, P., Su, J., Shelmanov, A., Tsvigun, A., ... & Nakov, P. (2024). SemEval-2024 task 8: Multidomain, multimodel and multilingual machine-generated text detection. arXiv preprint arXiv:2404.14183.

- Dathathri, S., See, A., Ghaisas, S., Huang, P. S., McAdam, R., Welbl, J., ... & Kohli, P. (2024). Scalable watermarking for identifying large language model outputs. Nature, 634(8035), 818-823.

- Fiedler, A., & Döpke, J. (2025). Do humans identify AI-generated text better than machines? Evidence based on excerpts from German theses. International Review of Economics Education, 49, 100321.

- Stevens, A., Hersi, M., Garritty, C., Hartling, L., Shea, B. J., Stewart, L. A., ... & Tricco, A. C. (2025). Rapid review method series: interim guidance for the reporting of rapid reviews. BMJ evidence-based medicine, 30(2), 118-123.

- Hamel, C., Michaud, A., Thuku, M., Skidmore, B., Stevens, A., Nussbaumer-Streit, B., & Garritty, C. (2021). Defining rapid reviews: a systematic scoping review and thematic analysis of definitions and defining characteristics of rapid reviews. Journal of clinical epidemiology, 129, 74-85.

- Tricco, A. C., Straus, S. E., Ghaffar, A., & Langlois, E. V. (2022). Rapid reviews for health policy and systems decision-making: more important than ever before. Systematic Reviews, 11(1), 153.

- El-Dakhs, D. A. S., Afzaal, M., & Siyanova-Chanturia, A. (2026). A Genre-Based Comparison of Chat-GPT-Generated Abstracts Versus Human-Authored Abstracts: Focus on Applied Linguistics Research Articles. Corpus Pragmatics, 10(1), 1.

- Culda, L. C., Nerişanu, R. A., Cristescu, M. P., Mara, D. A., Bâra, A., & Oprea, S. V. (2025). Comparative linguistic analysis framework of human-written vs. machine-generated text. Connection Science, 37(1), 2507183.

- Salnikov, E., & Bonch-Osmolovskaya, A. (2025). Detecting LLM-Generated Text with Trigram–Cosine Stylometric Delta: An Unsupervised and Interpretable Approach. Journal of Language and Education, 11(3), 138-151.

- Berriche, L., & Larabi-Marie-Sainte, S. (2024). Unveiling ChatGPT text using writing style. Heliyon, 10(12).

- Schaaff, K., Schlippe, T., & Mindner, L. (2024). Classification of human-and AI-generated texts for different languages and domains. International Journal of Speech Technology, 27(4), 935-956.

- Zhang, M., & Zhang, J. (2025). Human-written vs. ChatGPT-generated texts: Stance in English research article abstracts. System, 134, 103842.

- AbdAlgane, M., Ali, R., Othman, K., Ibrahim, I. Z. A., Alhaj, M. K. M., MT, E., & Ali, F. S. A. (2026). Exploring AI-generated texts vs. human-written texts in EFL academic writing: A case study of Qassim University in Saudi Arabia. World, 16(2), 114-131.

- Emara, I. F. (2025). A linguistic comparison between ChatGPT-generated and nonnative student-generated short story adaptations: a stylometric approach. Smart Learning Environments, 12(1), 36.

- Georgiou, G. P. (2025). Differentiating Between Human-Written and AI-Generated Texts Using Automatically Extracted Linguistic Features. Information, 16(11), 979.

- Herbold, S., Hautli-Janisz, A., Heuer, U., Kikteva, Z., & Trautsch, A. (2023). A large-scale comparison of human-written versus ChatGPT-generated essays. Scientific reports, 13(1), 18617.

- Amirjalili, F., Neysani, M., & Nikbakht, A. (2024, March). Exploring the boundaries of authorship: A comparative analysis of AI-generated text and human academic writing in English literature. In Frontiers in Education (Vol. 9, p. 1347421). Frontiers Media SA.

- Zhu, H., & Lei, L. (2025). Detecting authorship between generative AI models and humans: a Burrows’s Delta approach. Digital Scholarship in the Humanities, fqaf048.

- Zaitsu, W., Jin, M., Ishihara, S., Tsuge, S., & Inaba, M. (2025). Stylometry can reveal artificial intelligence authorship, but humans struggle: A comparison of human and seven large language models in Japanese. PLoS One, 20(10), e0335369.

- Zaitsu, W., & Jin, M. (2023). Distinguishing ChatGPT (-3.5,-4)-generated and human-written papers through Japanese stylometric analysis. PLoS One, 18(8), e0288453.

- Przystalski, K., Argasiński, J. K., Grabska-Gradzińska, I., & Ochab, J. K. (2026). Stylometry recognizes human and LLM-generated texts in short samples. Expert Systems with Applications, 296.

- Kayabas, A., Topcu, A. E., Alzoubi, Y. I., & Yıldız, M. (2025). A deep learning approach to classify AI-generated and human-written texts. Applied Sciences, 15(10), 5541.

- Yan, S., Wang, Z., & Dobolyi, D. (2025). An explainable framework for assisting the detection of AI-generated textual content. Decision Support Systems, 114498.

- Najjar, A. A., Ashqar, H. I., Darwish, O., & Hammad, E. (2025). Leveraging explainable AI for LLM text attribution: Differentiating human-written and multiple LLM-generated text. Information, 16(9), 767.

- Tudino, G., & Qin, Y. (2024). A corpus-driven comparative analysis of AI in academic discourse: Investigating ChatGPT-generated academic texts in social sciences. Lingua, 312, 103838.

- Zhang, M., & Zhang, J. (2025). Disciplinary variation of metadiscourse: A comparison of human-written and ChatGPT-generated English research article abstracts. Journal of English for Academic Purposes, 76, 101540.

- Yao, G., & Liu, Z. (2025). Can AI simulate or emulate human stance? Using metadiscourse to compare GPT-generated and human-authored academic book reviews. Journal of Pragmatics, 247, 103-115.

- Wen, Y., & Laporte, S. (2025). Experiential narratives in marketing: A comparison of generative AI and human content. Journal of Public Policy & Marketing, 44(3), 392-410.

- Markowitz, D. M., Hancock, J. T., & Bailenson, J. N. (2024). Linguistic markers of inherently false AI communication and intentionally false human communication: Evidence from hotel reviews. Journal of Language and Social Psychology, 43(1), 63-82.

- Zhao, Y., Tang, S., Zhang, H., & Lyu, L. (2025). AI vs. human: A large-scale analysis of AI-generated fake reviews, human-generated fake reviews and authentic reviews. Journal of Retailing and Consumer Services, 87, 104400.

- Abdalla, M. H. I., Malberg, S., Dementieva, D., Mosca, E., & Groh, G. (2023). A benchmark dataset to distinguish human-written and machine-generated scientific papers. Information, 14(10), 522.

- Holzmann, U., Anand, S., & Payumo, A. Y. (2025). The ChatGPT Fact-Check: exploiting the limitations of generative AI to develop evidence-based reasoning skills in college science courses. Advances in Physiology Education, 49(1), 191-196.

- Idan, D., Ben-Shitrit, I., Volevich, M., Binyamin, Y., Nassar, R., Nassar, M., ... & Einav, S. (2025). Evaluating the performance of large language models versus human researchers on real world complex medical queries. Scientific Reports, 15(1), 37824.

- Matalon, J., Spurzem, A., Ahsan, S., White, E., Kothari, R., & Varma, M. (2024). Reader’s digest version of scientific writing: comparative evaluation of summarization capacity between large language models and medical students in analyzing scientific writing in sleep medicine. Frontiers in Artificial Intelligence, 7, 1477535.

- Sadasivan, V. S., Kumar, A., Balasubramanian, S., Wang, W., & Feizi, S. (2025). Can AI-generated text be reliably detected? Stress testing AI text detectors under various attacks. Transactions on Machine Learning Research.

- Lu, N., Liu, S., He, R., & Tang, K. (2024). Large language models can be guided to evade AI-generated text detection. Transactions on Machine Learning Research.

- Malik, M. A., & Amjad, A. I. (2025). AI vs AI: How effective are Turnitin, ZeroGPT, GPTZero, and Writer AI in detecting text generated by ChatGPT, Perplexity, and Gemini? Journal of Applied Learning and Teaching, 8(1), 91-101.

- Pratama, A. R. (2025). The accuracy-bias trade-offs in AI text detection tools and their impact on fairness in scholarly publication. PeerJ Computer Science, 11, e2953.

- Li, X., Ruan, F., Wang, H., Long, Q., & Su, W. J. (2025). A statistical framework of watermarks for large language models: Pivot, detection efficiency and optimal rules. The Annals of Statistics, 53(1), 322-351.

- Li, X., Liu, X., & Li, G. (2025). Adaptive Testing for Segmenting Watermarked Texts From Language Models. Stat, 14(4), e70118.

- Al Hosni, J. K. (2025). Preserving Authorial Voice in Academic Texts in the Age of Generative AI: A Thematic Literature Review. Arab World English Journal (AWEJ), 16.

- Pividori, M., & Greene, C. S. (2024). A publishing infrastructure for Artificial Intelligence (AI)-assisted academic authoring. Journal of the American Medical Informatics Association, 31(9), 2103-2113.

- Hashemi, A., Shi, W., & Corriveau, J. P. (2024). AI-generated or AI touch-up? Identifying AI contribution in text data. International Journal of Data Science and Analytics, 1-12.

- Alam, M. S., Asmawi, A., Haque, M. H., Patwary, M. N., Ullah, M. M., & Fatema, S. (2024). Distinguishing between Student-Authored and ChatGPT-Generated Texts: A Preliminary Exploration of Human Evaluation Techniques. Iraqi Journal for Computer Science and Mathematics, 5(3), 40.

- Ali, E. H. F., Kottaparamban, M., Ahmed, F. E. Y., Usmani, S., Hamd, M. A. A., Ibrahim, M. A. E. S., & Hamed, S. O. E. (2025). Beyond the human pen: The role of artificial intelligence in literary creation. Humanities, 6(4).

- Lau, H. T., & Zubiaga, A. (2025). Understanding the effects of human-written paraphrases in LLM-generated text detection. Natural Language Processing Journal, 100151.

- Burrows, J. (2002). ‘Delta’: a measure of stylistic difference and a guide to likely authorship. Literary and linguistic computing, 17(3), 267-287.

- Stamatatos, E. (2009). A survey of modern authorship attribution methods. Journal of the American Society for information Science and Technology, 60(3), 538-556.

- Hyland, K. (2005). Stance and engagement: A model of interaction in academic discourse. Discourse studies, 7(2), 173-192.

- Biber, D. (2006). Stance in spoken and written university registers. Journal of English for academic purposes, 5(2), 97-116.

- Ji, Z., Lee, N., Frieske, R., Yu, T., Su, D., Xu, Y., ... & Fung, P. (2023). Survey of hallucination in natural language generation. ACM computing surveys, 55(12), 1-38.

- Asgari, E., Montaña-Brown, N., Dubois, M., Khalil, S., Balloch, J., Yeung, J. A., & Pimenta, D. (2025). A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. npj Digital Medicine, 8(1), 274.

- Gehrmann, S., Strobelt, H., & Rush, A. M. (2019). GLTR: Statistical detection and visualization of generated text. arXiv preprint arXiv:1906.04043.

- Mitchell, E., Lee, Y., Khazatsky, A., Manning, C. D., & Finn, C. (2023). DetectGPT: Zero-shot machine-generated text detection using probability curvature. In International Conference on Machine Learning (pp. 24950-24962). PMLR.

- Kirchenbauer, J., Geiping, J., Wen, Y., Katz, J., Miers, I., & Goldstein, T. (2023). A watermark for large language models. In International Conference on Machine Learning (pp. 17061-17084). PMLR.

- Shportko, A., & Verbitsky, I. (2025). Paraphrasing Attack Resilience of Various Machine-Generated Text Detection Methods. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 4: Student Research Workshop) (pp. 474-484).

- Macko, D., Moro, R., Uchendu, A., Lucas, J., Yamashita, M., Pikuliak, M., ... & Bielikova, M. (2023, December). MULTITuDE: Large-scale multilingual machine-generated text detection benchmark. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing (pp. 9960-9987).

| Cue family | What it includes | What the qualified studies most consistently report | Representative references |
| --- | --- | --- | --- |
| Surface cues | Lexical diversity/complexity, POS/parse patterns, readability indices, function words, punctuation, character/word/POS n-grams, distance-based stylometry (e.g., Delta), short-sample stylometry | Most pervasive family; AI–human separation frequently achievable via distributional style signals; often framed as stylometric distance or learnable feature regularities | [19,20,21,23,24,25,26,27,28,29,30,31,32] |
| Discourse/pragmatic cues | Rhetorical moves, metadiscourse resources, stance/engagement markers, reader guidance, disciplinary “fit,” register/genre conformity | Strong discriminators within academic/discipline-bound genres; AI can approximate genre templates but still differs in stance/metadiscourse distributions and rhetorical packaging | [17,18,22,35,36,37] |
| Epistemic/content cues | Evidentiality/grounding, plausibility, content authenticity, hallucination-like ungroundedness, deception/intent contrasts (esp. reviews) | Salient where truthfulness/experience claims matter (reviews, narratives, science/health contexts); treated as cues but not uniquely diagnostic (humans can also err/deceive) | [38,39,40,41,42,43,44] |
| Predictability/probabilistic cues | Detector outputs; probability-rank regularities; robustness under perturbations; evasion sensitivity; ML probability decisions | Central in detector benchmarking; powerful but fragile under paraphrase/attacks/evasion; interacts with distribution shift and fairness/bias concerns | [32,33,34,45,46,47,48] |
| Provenance cues | Watermark detectability, statistical decision rules, detection efficiency; segmenting/localizing signal in mixed text | Distinct paradigm: explicit origin signal rather than inference from style; key issue becomes robust detection under editing/mixing and span localization | [49,50] (plus robustness intersections in [45]) |
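To make the "distance-based stylometry (e.g., Delta)" entry in the surface-cue row more concrete, the following is a minimal sketch of a Burrows-style Delta between two texts in a small corpus. It is illustrative only and is not the procedure of any included study: the whitespace tokenizer, the use of the population standard deviation, and the names `relative_freqs` and `burrows_delta` are assumptions made for this example.

```python
from collections import Counter
from statistics import mean, pstdev


def relative_freqs(text, vocab):
    """Relative frequency of each vocabulary word in a text (naive whitespace tokens)."""
    tokens = text.lower().split()
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero for empty texts
    return [counts[w] / total for w in vocab]


def burrows_delta(texts, vocab, i, j):
    """Mean absolute difference of z-scored word frequencies between texts i and j.

    Assumption: z-scores use the population standard deviation over `texts`;
    words with zero variance in the corpus are skipped.
    """
    freqs = [relative_freqs(t, vocab) for t in texts]
    diffs = []
    for k in range(len(vocab)):
        column = [f[k] for f in freqs]
        sd = pstdev(column)
        if sd == 0:
            continue  # this word carries no contrast in this corpus
        mu = mean(column)
        z_i = (freqs[i][k] - mu) / sd
        z_j = (freqs[j][k] - mu) / sd
        diffs.append(abs(z_i - z_j))
    return mean(diffs) if diffs else 0.0


# Hypothetical toy corpus and function-word list, for illustration only.
texts = [
    "the cat and the dog",
    "the the the of of",
    "a cat of the town and a dog",
]
vocab = ["the", "of", "and", "a"]
print(burrows_delta(texts, vocab, 0, 1))
```

In practice the vocabulary would typically be the most frequent words of a much larger reference corpus, and smaller Delta values would be read as greater stylistic similarity.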

| Cue stability | Concentrated finding on stability | Cue families most implicated | Representative included refs |
| --- | --- | --- | --- |
| Stability across tasks, genres, and registers | Stability is conditional and genre-/register-bounded: cues can remain discriminative within constrained contexts (e.g., academic genres), but their patterns shift with genre norms, disciplinary conventions, and task ecologies, limiting cross-genre generalization. | Surface; Discourse/pragmatic; Epistemic/content (task-dependent) | [17,18,22,23,24,35,36,37] |
| Stability under length constraints and short samples | Length affects stability unevenly: surface/stylometric cues can persist in short samples, while provenance (and often probability-based decisions) becomes more fragile when signal is sparse, turning detection into a signal-density and localization problem. | Surface/stylometric; Provenance; Predictability/probabilistic | [19,31,50] |
| Stability under revision, paraphrasing, and mixed authorship | Revision is a major destabilizer: paraphrasing/post-editing and hybrid AI touch-up workflows attenuate or redistribute cues, and detector-relevant probabilistic signals are especially vulnerable under perturbation/evasion; stability therefore must be evaluated under realistic editing/mixing assumptions. | Predictability/probabilistic; Surface; Discourse/pragmatic; Provenance | [45,46,50,52,53,56] |
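The observation that provenance signals become fragile when signal is sparse can be illustrated with a simplified version of the green-list z-test described by Kirchenbauer et al. (2023) in the reference list. This is a sketch under stated assumptions rather than a detector: the green-list fraction `gamma = 0.25`, the token counts, and the function name `green_list_z` are hypothetical, and the keyed hashing that assigns tokens to the green list is omitted.

```python
import math


def green_list_z(green_count, total_tokens, gamma=0.25):
    """One-proportion z-statistic for the number of 'green' tokens in a text.

    Under the null hypothesis (no watermark), each token is assumed to be green
    with probability `gamma`, so the count has mean gamma * T and variance
    T * gamma * (1 - gamma).
    """
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    expected = gamma * total_tokens
    sd = math.sqrt(total_tokens * gamma * (1 - gamma))
    return (green_count - expected) / sd


# Same observed green fraction (50%), different lengths (hypothetical counts).
long_z = green_list_z(green_count=100, total_tokens=200)
short_z = green_list_z(green_count=10, total_tokens=20)
print(round(long_z, 2), round(short_z, 2))
```

With an identical green-token fraction, the statistic grows with the square root of the text length, so a short or partially edited span yields much weaker evidence than a long one. This matches the table's framing of short-sample provenance detection as a signal-density problem.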

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).