1. Introduction

In recent years, machine learning methods, particularly artificial neural networks (ANNs), have found widespread application beyond traditional data analysis tasks, entering the realm of scientific computing [

1]. One promising direction is the use of ANNs for the numerical solution of differential equations, opening new possibilities for modeling complex physical and engineering systems [

2]. Compared to classical numerical methods (such as the finite difference or finite element method), neural network approaches offer several potential advantages: they allow obtaining a solution in the form of a continuous and differentiable function over the entire domain, can work efficiently in high-dimensional spaces, and are well-suited for problems with inverse parameters or incomplete data.

Various neural network architectures are used to solve ordinary differential equations (ODEs). The most common are multilayer networks, such as the classic multilayer perceptron (MLP) [

3] or specialized Physics-Informed Neural Networks (PINNs), which explicitly incorporate governing laws into the loss function [

4]. However, these architectures often require significant computational resources for training due to the large number of tunable parameters and can suffer from the vanishing gradient problem. An alternative is offered by single-layer neural networks based on the functional link principle (Functional Link Artificial Neural Network, FLANN) [

6]. In the FLANN model, the hidden layer is replaced by a functional expansion block that increases the dimensionality of the input data through decomposition into orthogonal polynomial bases (e.g., Chebyshev, Legendre). This significantly reduces the number of network parameters and the number of iterations required for convergence, making FLANN a computationally efficient model with a high training speed.

FLANN networks based on Chebyshev (ChNN) and Legendre (LeNN) polynomials have already been successfully applied to solve various ODEs [

7,

8,

9], demonstrating competitive accuracy. However, the literature lacks a systematic investigation and direct comparison of the effectiveness of these two approaches. Moreover, the potential of neural networks based on other classical orthogonal polynomials, particularly Laguerre polynomials (LaNN), for solving initial and boundary value problems for ODEs remains virtually unexplored. Thus, there is a clear knowledge gap: there is no comparative analysis within the entire family of polynomial FLANNs, nor an assessment of their advantages compared to standard deep architectures.

The aim of this work is to fill this gap through a comprehensive study of the performance of the family of single-layer polynomial neural networks ChNN, LeNN, and LaNN. The Cauchy problem and the boundary value problem for a nonlinear second-order ODE of the Lane-Emden type, for which an exact analytical solution is known, are chosen as test examples. This allows for an objective assessment of the accuracy of each method. The paper conducts a comparative analysis of the polynomial networks among themselves, as well as with the reference multilayer neural network MLP, based on two key criteria: the accuracy of the solution approximation and the algorithm execution time. It is demonstrated that properly configured polynomial networks can outperform MLP in computational efficiency while maintaining high accuracy, making them a promising tool for rapid numerical integration of differential equations.

2. Preliminaries

Orthogonal polynomials are widely used in approximation theory, in particular for constructing quadrature formulas for approximate calculation of integrals or function approximation [

10].

Let and be real functions belonging to the class .

Definition 1.

Legendre polynomials of degree n for form an orthogonal system and can be computed by the recurrence formula:

Definition 2.

Chebyshev polynomials of degree n for form an orthogonal system and can be computed by the recurrence formula:

Definition 3.

Laguerre polynomials of degree n for form an orthogonal system and can be computed by the recurrence formula:

Recall that orthogonality of systems (

1)-(

3) means that the scalar product of any two functions of this system is equal to zero. More details about the properties of orthogonal polynomials can be found in [

10].

For simplicity, we introduce the notation

, which defines the family of orthogonal polynomials (

1)-(

3).

3. Neural Network Model Based on Orthogonal Polynomials

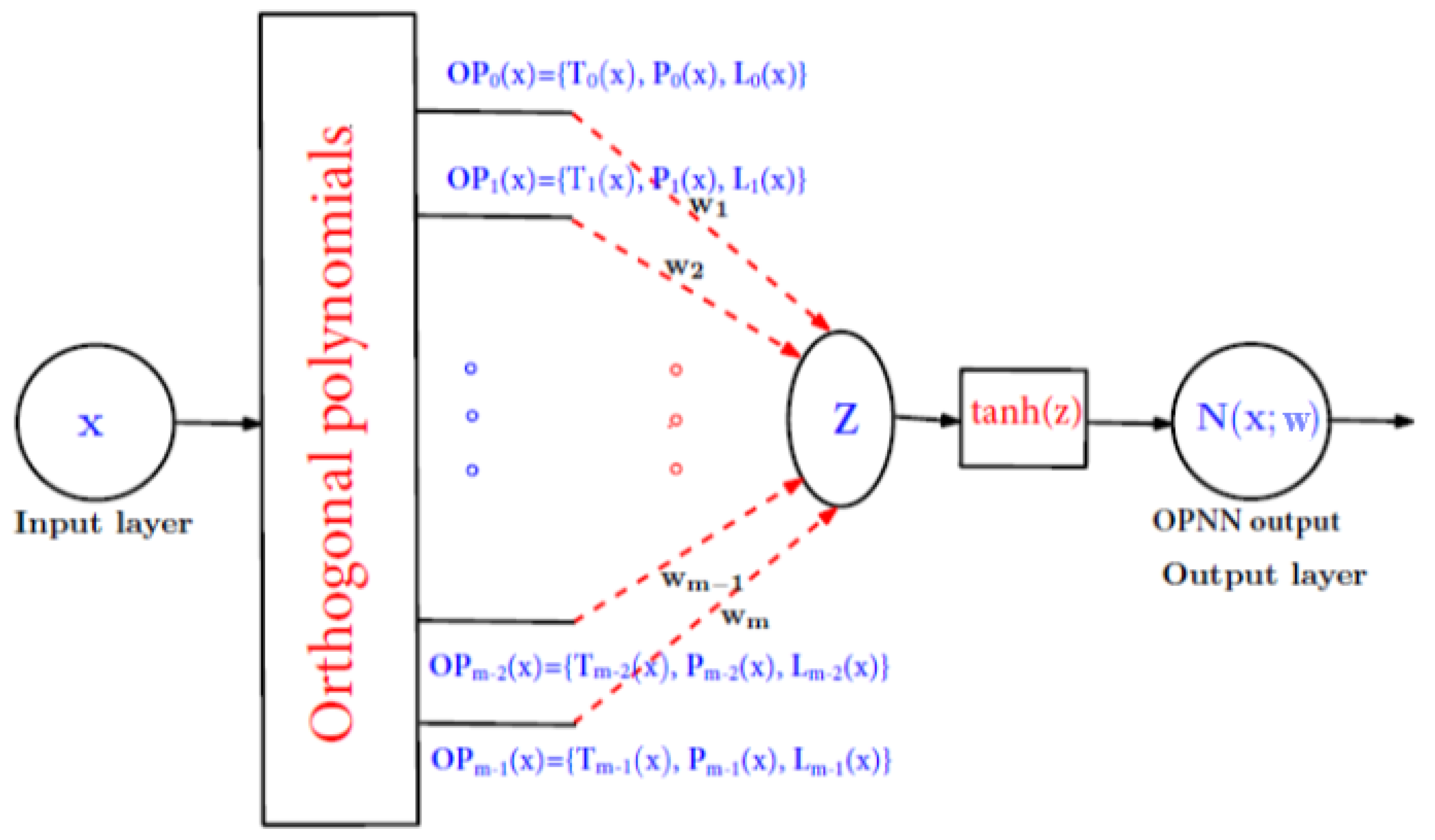

Consider a single-layer neural network based on the functional-link principle (

Figure 1).

Figure 1 shows the structure of a single-layer neural network, which consists of one input node, one output layer and a functional expansion block based on Legendre, Chebyshev and Laguerre orthogonal polynomials. Here, by the notation OPNN we will mean one of the three neural networks LeNN, LaNN or ChNN. The hidden layer is eliminated by transforming the input pattern into a higher-dimensional space using polynomials (

1)-(

3).

As input data, we consider a vector

of dimension

n. The enhanced pattern is obtained using orthogonal polynomials (

1)-(

3):

According to (

4), n-dimensional input

x is extended into

m-dimensional improved polynomials. Then a weighted sum

z of the expanded input data is formed, which is written as:

To update the weights

of the neural network, the error backpropagation learning algorithm is used. Thus, the gradient of the error function with respect to the tunable parameters

is determined. The nonlinear hyperbolic tangent function

is considered as the activation function. For training, the gradient descent algorithm is used, and the weights are updated using the negative gradient at each iteration. The weights are initialized randomly and then updated:

where

is the learning parameter taking values from 0 to 1,

k is the iteration step used to update the weights, as usual in neural networks, and

is the error function,

.

The output layer

is determined by the input vector

x and the tunable parameters

of the selected neural network by the formula:

The activation function (

7) can be chosen differently depending on the problem.

4. Problem Statement

Consider the following problem for a second order ODE for

:

Problem (

8) is a Cauchy problem.

Definition 4.

The trial solution of the Cauchy problem (8) we will call the function:

Consider the following boundary value problem for a second order ODE for

:

Definition 5.

The trial solution of the boundary value problem (10) we will call the function:

The error function for recalculating the weights according to formula (

6) taking into account representations (

9) or (

11) is given as a residual of the following form:

The calculation of derivatives (gradients) in formula (

12) is performed taking into account formulas (

9) and (

11). In the algorithms for implementing polynomial networks, gradient computation was performed automatically using the Python library [

11].

5. Algorithms for Implementing Polynomial Neural Networks

The polynomial neural network algorithms were implemented in the Python programming language [

11] in the PyCharm environment [

12] in the OPNN computer program. The OPNN program flowchart is shown in

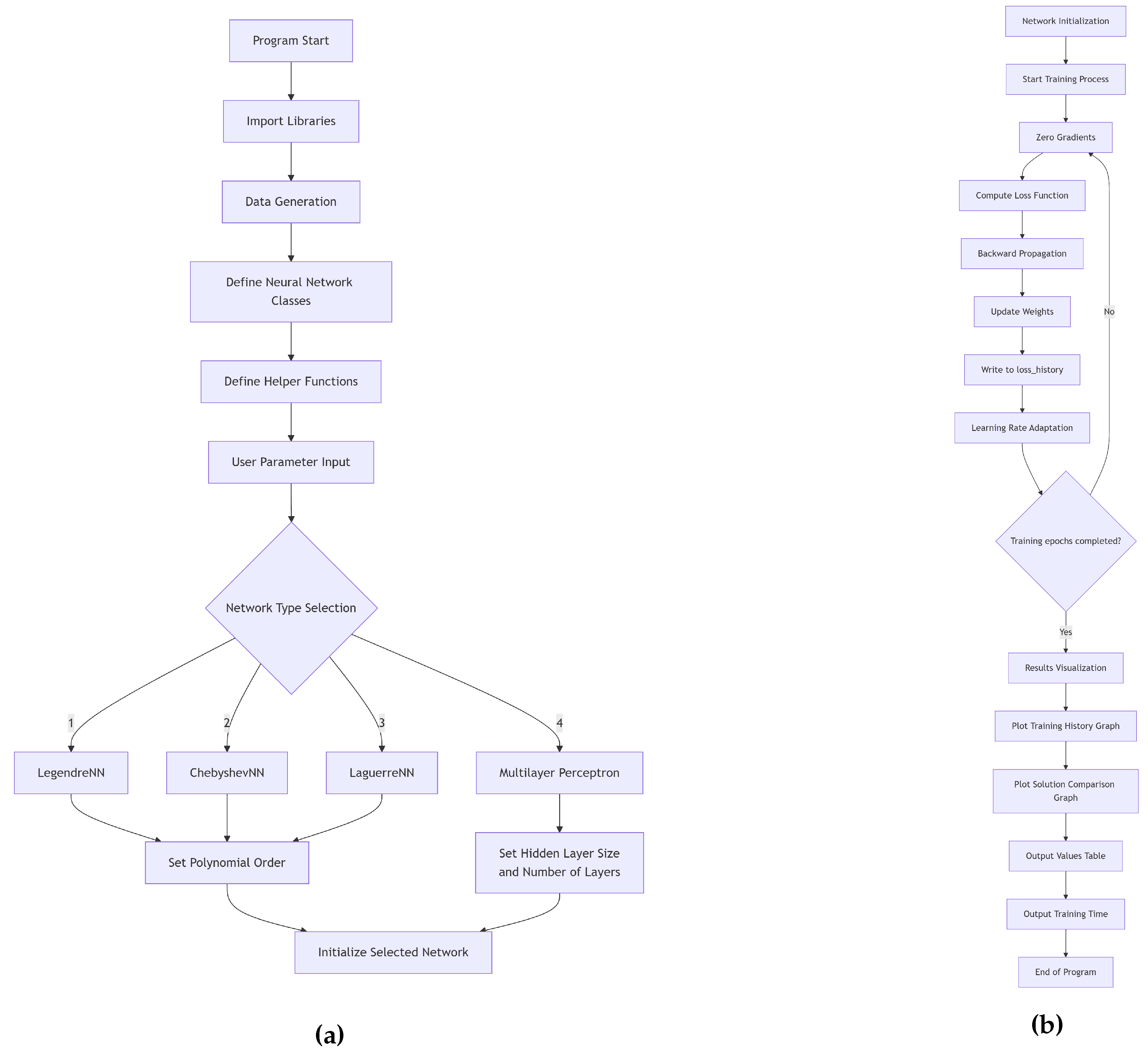

Figure 2. It consists of two stages: the first stage defines the input parameters and selects the appropriate polynomial neural network, while the second stage contains the procedures for training it and inferring the obtained results. The main procedures in the algorithms are presented as pseudocode (Algorithms 1-7).

Note that Algorithms 1-5 are the same for the Cauchy problem and the boundary value problem, but the differences lie in the definition of the trial function (

9) and (

11), i.e., Algorithms 6 and 7.

|

Algorithm 1 Neural Network Training for ODE Solution |

- 1:

procedure MainTraining(, ) - 2:

Generate training data

- 3:

Define exact solution:

- 4:

- 5:

if then

- 6:

- 7:

else if then

- 8:

- 9:

else if then

- 10:

- 11:

else

- 12:

- 13:

end if

- 14:

- 15:

- 16:

- 17:

- 18:

- 19:

Visualize loss history and results - 20:

Output comparative value table - 21:

return

- 22:

end procedure |

|

Algorithm 2 Model Training Procedure |

- 1:

procedure TrainModel(, , , ) - 2:

Initialize Adam optimizer with learning rate

- 3:

Initialize ReduceLROnPlateau scheduler - 4:

- 5:

- 6:

for to do

- 7:

- 8:

- 9:

- 10:

- 11:

- 12:

- 13:

if then

- 14:

Output current loss and learning rate - 15:

end if

- 16:

end for

- 17:

- 18:

return

- 19:

end procedure |

|

Algorithm 3 Loss Function Computation |

- 1:

procedure ComputeLoss(, x) - 2:

Set

- 3:

- 4:

- 5:

Compute equation components: - 6:

- 7:

- 8:

- 9:

if then

- 10:

- 11:

else

- 12:

- 13:

end if

- 14:

- 15:

- 16:

- 17:

- 18:

- 19:

return

- 20:

end procedure |

|

Algorithm 4 Trial Solution Derivative Computation |

- 1:

procedure ComputeDerivatives(x, ) - 2:

(with ) - 3:

- 4:

- 5:

Compute first derivative: - 6:

(using automatic differentiation) - 7:

- 8:

Compute second derivative: - 9:

(using automatic differentiation) - 10:

- 11:

return

- 12:

end procedure |

|

Algorithm 5 Polynomial Neural Network Forward Pass |

- 1:

procedure Forward(x, ) - 2:

- 3:

- 4:

return

- 5:

end procedure |

PolynomialBasis(x) is a procedure for calculating a polynomial basis for a given input

x. Depending on the type of neural network, it invokes different procedures according to definitions (

1)-(

3).

A trial solution to the Cauchy problem according to formula (

9) is calculated using Algorithm 6.

|

Algorithm 6 Trial Solution with Boundary Conditions (The Cauchy Problem) |

- 1:

procedure TrialSolution(x, ) - 2:

- 3:

- 4:

return y

- 5:

end procedure |

A trial solution to the boundary value problem according to formula (

11) is calculated using Algorithm 7.

|

Algorithm 7 Trial Solution with Boundary Conditions (Boundary value problem) |

- 1:

procedure TrialSolution(x, ) - 2:

- 3:

- 4:

return y

- 5:

end procedure |

6. Research Results

Consider the operation of orthogonal polynomial networks on the example of solving the Cauchy problem for the singular nonlinear Lane-Emden differential equation:

as well as for the boundary value problem:

Remark 1. The Lane-Emden equation is often encountered in astrophysics, in the theory of stellar structure, in the theory of thermal behavior of a spherical gas cloud and the theory of thermionic currents [8,13].

Remark 2.

It is known from works [8,13] that the Cauchy problem (13) has an exact solution of the form:

Based on Definition 4 by formula (

9), the trial solution for the Cauchy problem (

13) is written as:

and for the boundary value problem (

14), also valid is the exact solution (

15) and taking into account representation (

11) in the form:

The parameters of the orthogonal polynomial neural networks were set as follows: order of polynomials

, number of training points 11 from the segment

, number of epochs 10000, learning rate

. The calculation results for solving the Cauchy problem (

13) are given in

Table 1.

From

Table 1 it can be seen that the polynomial neural networks give approximately the same result.

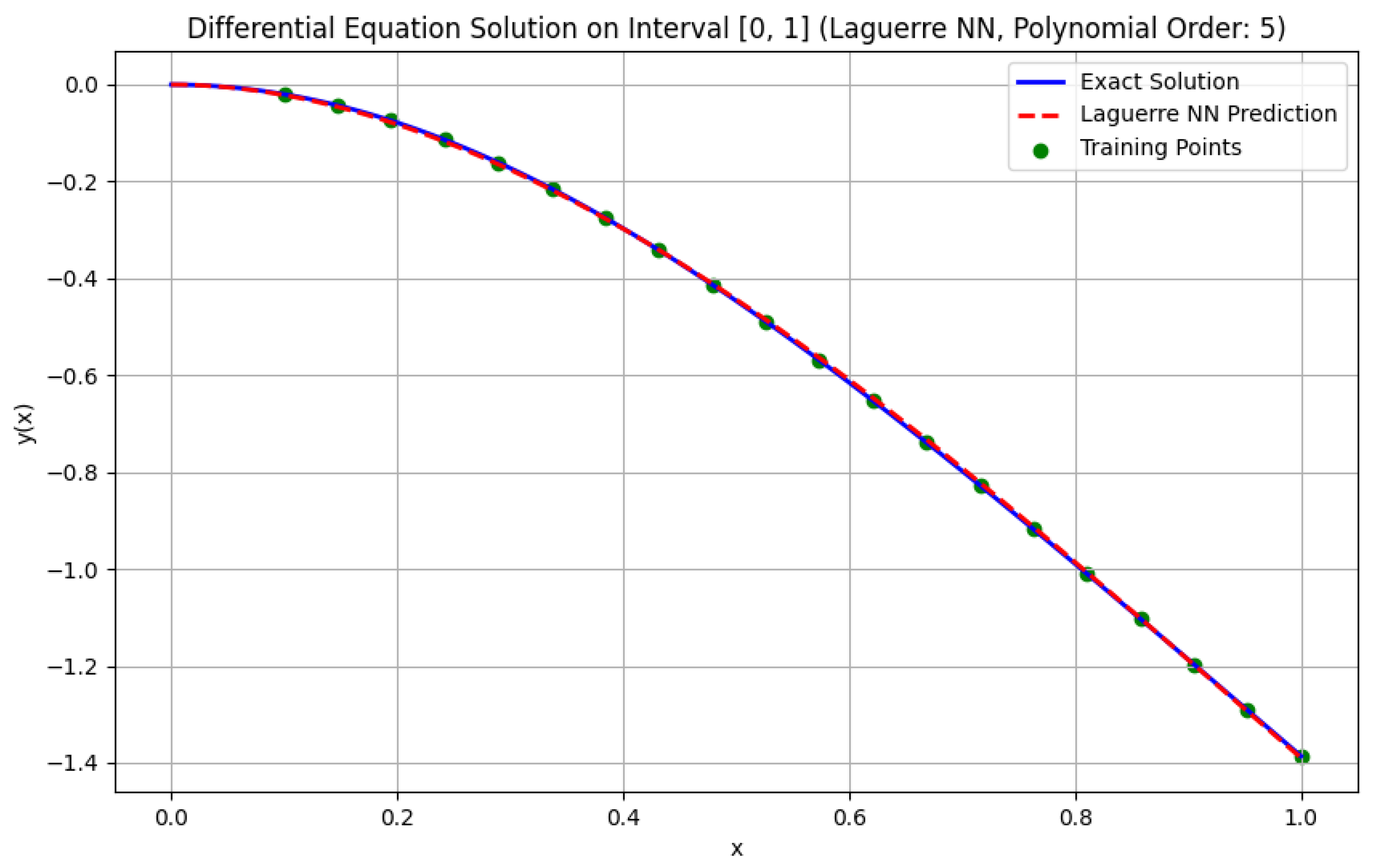

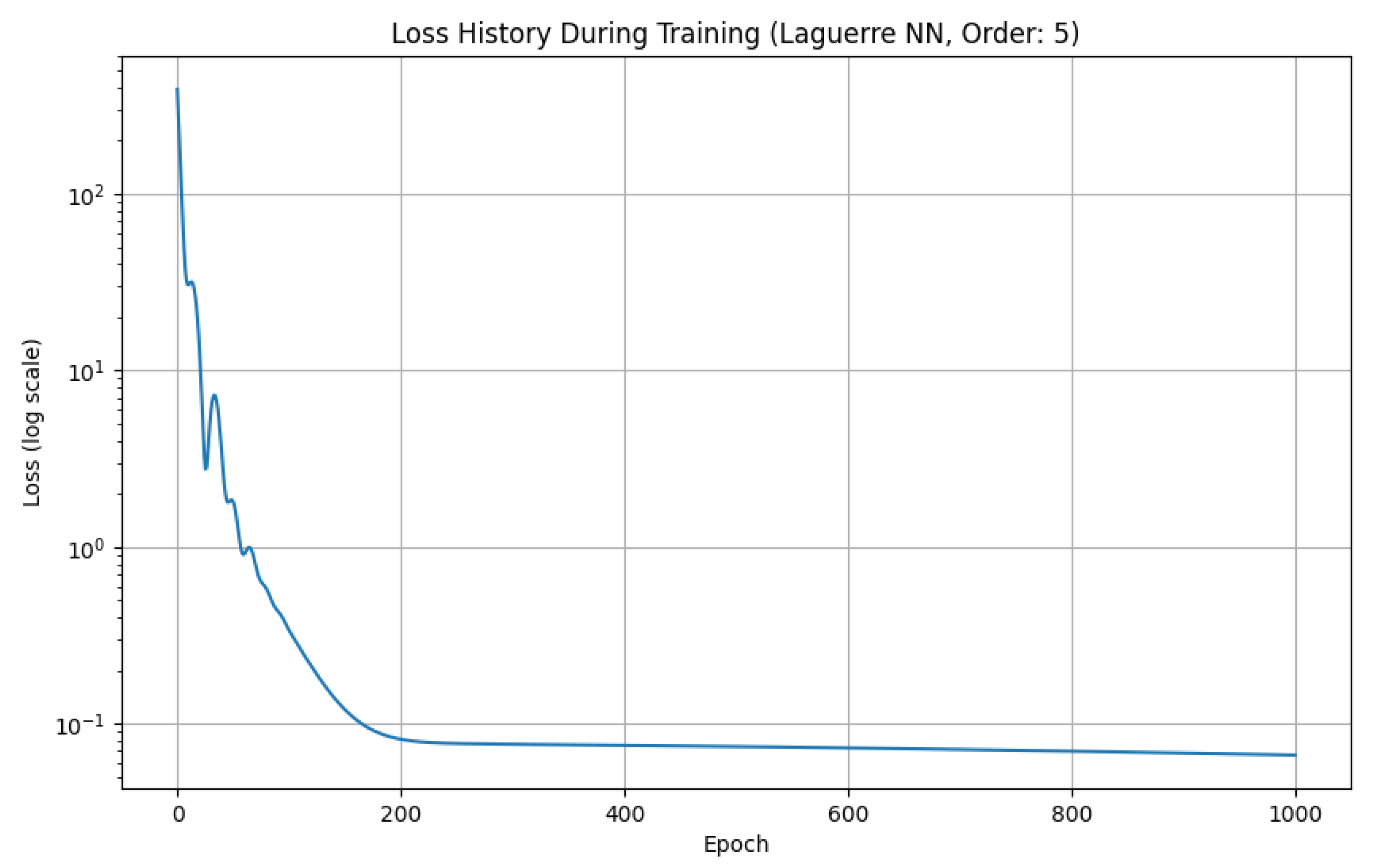

Figure 3 shows the solution graph obtained by the LaNN neural network, and

Figure 4 shows the loss graph during training.

The graph in

Figure 3 demonstrates the efficiency of the Laguerre NN neural network in solving the Cauchy problem (

13). The loss function graph on a logarithmic scale is shown in

Figure 4.

From

Figure 4 it can be seen that the loss decreases with an increase in the number of epochs, which indicates successful model training. At the beginning of training (first 200 epochs), a rapid decrease in loss is observed, then the rate of decrease slows down, which is typical for the neural network training process.

The loss graph allows adjusting the hyperparameters of the neural network: learning rate, number of epochs, etc.

The solution of the boundary value problem (

14) is given in

Table 2.

Here it can be noted that the LaNN neural network solved the problem more accurately than the ChNN network, but was less accurate than the LeNN network.

Table 3 shows the work of the multilayer neural network MLP with two hidden layers of 20 and 40 neurons respectively for solving the Cauchy problem (

13).

It can be seen from

Table 3 that to achieve acceptable accuracy in MLP, it is necessary to increase the number of neurons in each layer. This leads, as we will show below, to an increase in the algorithm execution time.

Table 4 shows the solution of the boundary value problem (

14) using the MLP network.

From

Table 4 it can be seen that increasing the number of neurons in each hidden layer leads to a deterioration in the accuracy of solving the boundary value problem (

14). Let us show how the execution time of algorithms for solving problems (

13) and (

14) depends on the choice of neural network architecture. Neural network parameters: learning rate

, number of epochs – 1000, order of polynomials

.

Table 5 shows the times spent on executing the algorithm for solving the Cauchy problem (

13).

From

Table 5 it can be seen that

. Here it should be noted that the neural network based on Laguerre orthogonal polynomials coped with the task faster than the neural network based on Legendre orthogonal polynomials. Moreover, the LaNN and ChNN neural networks were faster in solving the Cauchy problem (

13) than the multilayer MLP network.

The results of solving the boundary value problem (

14) are given in

Table 6.

From

Table 6 it follows that

, and therefore the trend in the execution times of algorithms for solving the boundary value problem (

14) remains as in

Table 5. Here, a multilayer neural network MLP with three hidden layers of 20 neurons in each layer was used. From

Table 6 it follows that single-layer orthogonal polynomial neural networks can work faster than multilayer neural networks of the MLP type, for example, the LeNN neural network.

It should be noted that increasing the order of the polynomial

n can also lead to a deterioration in the result. Let us take the parameters for the LeNN neural network from the previous example (

Table 3) and find solutions to problems (

13) and (

14) for polynomial orders

and

. The research results are given in

Table 7 and

Table 8.

From

Table 7 we see that with an increase in the order of the polynomial, the calculation error increased, although the algorithm execution time decreased from 10.01 s for

to 8.92 s for

.

From

Table 8 we see that increasing the order of the Legendre polynomial leads to a deterioration in accuracy, and also to a decrease in the algorithm execution time for

Table 8 at

– 6.54 s, and at

– 8.66 s. Therefore, the order of the polynomial must be chosen optimally.

7. Overfitting Issues

It should be noted that the issue of overfitting remains relevant when using neural networks to solve differential equations. Overfitting here in the context of the problem of solving a differential equation is understood differently. Here, the training sample is the differential equation itself, which essentially defines an infinite set of constraints that the solution function must satisfy. The neural network here tries to approximate this function that satisfies these constraints everywhere in a given domain. It should also be said that there is no division of data into test and training samples here. We are looking for a single solution for the entire domain. The concept of "new data" from the same domain is erased — we want the network to work well on the entire domain at once. Thus, pure "memorization of noise in the training data" is not applicable here, since there are no discrete data initially. The concept of overfitting is understood mainly as overfitting at collocation points. We cannot check the fulfillment of the equation on the entire infinite domain. Therefore, we choose a finite set of points (collocation points) at which we calculate the residual of the equation and minimize it. The neural network between collocation points can behave incorrectly, for example, oscillate. The residual at the training collocation points will be very low (sometimes almost zero), but if we check the solution on another, denser grid of points, the residual will be huge. This is a sign of overfitting. Similarly, the concept of overfitting can arise at boundary (initial) points of the domain.

Here, overfitting can be combated by various methods similar to solving the issue of classical overfitting. For example, known methods are L1/L2 regularization, adaptive weights for the loss function, etc. However, in our opinion, the most effective and common method is dynamic selection of collocation points. Instead of using a fixed set of points, at each iteration (or after several epochs) collocations are selected anew (often randomly). Here it is necessary to remember about the non-optimal choice of collocation points. If the points at which the equation residual is calculated are chosen unsuccessfully (for example, uniformly over the entire domain), the network may learn well in some areas and poorly in others (for example, in areas of high gradients) [

14]. This approach does not allow the network to "memorize" their location and forces it to learn to satisfy the equation in the entire domain. We also note article [

4], in which the authors study the issues of overfitting of the multilayer PINN caused by gradient imbalance.

8. Conclusions

The work for the first time investigated the approximate solution of the Cauchy problem and the boundary value problem for the Lane-Emden differential equation using three single-layer neural networks based on Legendre, Chebyshev and Laguerre orthogonal polynomials. It is shown that the new neural network based on Laguerre polynomials gives acceptable results, and in some cases can be more accurate than the ChNN neural network and faster than the LeNN neural network. All three polynomial neural networks were also compared with the multilayer MLP neural network. Research has shown that single-layer polynomial neural networks can work better than the multilayer MLP neural network. Key parameters for polynomial neural networks, in addition to choosing the number of training epochs and learning rate, is the order of the polynomial. However, this order must be chosen optimally; its further increase or decrease can lead to a deterioration in results. Note that in multilayer neural networks it is necessary to adjust the number of hidden layers and the number of neurons in each of them. The latter means that single-layer polynomial neural networks have a simpler architecture than multilayer neural networks and, accordingly, they are easier to implement on a computer. The choice of neural network architecture depends on the specific task for an ordinary differential equation.

In the future, it is planned to apply polynomial neural networks to solve fractional differential equations and their systems [

15,

16,

17,

18,

19], as well as to partial differential equations by analogy with work [

20].

Funding

The work was carried out within the framework of the state assignment of IKIR FEB RAS (reg. No. 124012300245-2).

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Abbreviations

The following abbreviations are used in this manuscript:

| ChNN |

Chebyshev Neural Network |

| FLANN |

Functional Link Artificial Neural Network |

| LeNN |

Legendre Neural Network |

| LaNN |

Laguerre Neural Network |

| MLP |

Multilayer Perceptron |

| PINN |

Physics-Informed Neural Networks |

References

- Abdolrasol, M.G.M.; Hussain, S.M.S.; Ustun, T.S.; Sarker, M.R.; Hannan, M.A.; Mohamed, R.; Ali, J.A.; Mekhilef, S.; Milad, A. Artificial Neural Networks Based Optimization Techniques: A Review. Journal Abbreviation 2021, 10, 2689. [Google Scholar] [CrossRef]

- Tan, L. S.; Zainuddin, Z.; Ong, P. Solving ordinary differential equations using neural networks. AIP Conference Proceedings 2018, 1974, 020070. [Google Scholar] [CrossRef]

- Riedmiller, M.; Lernen, A. Multi layer perceptron. Machine learning lab special lecture, University of Freiburg 2014, 24, 11-60.

- Wang, S.; Teng, Y.; Perdikaris, P. Understanding and mitigating gradient flow pathologies in physics-informed neural networks. SIAM Journal on Scientific Computing 2021, 43, A3055–A3081. [Google Scholar] [CrossRef]

- Fan, Q.; Zhang, X.; Wen, Z.; Xu, L.; Zhang, Q. Nonlinear Compensation of the Linear Variable Differential Transducer Using an Advanced Snake Optimization Integrated with Tangential Functional Link Artificial Neural Network. Sensors 2025, 25, 1074. [Google Scholar] [CrossRef] [PubMed]

- Pao, Y.H.; Phillips, S.M. The functional link net and learning optimal control. Neurocomputing 1995, 9, 149–164. [Google Scholar] [CrossRef]

- Chaharborj, S. S.; Chaharborj, S. S.; See, P. P. Application of Chebyshev neural network to solve Van der Pol equations. International Journal of Basic and Applied Sciences 2021, 10, 7–19. [Google Scholar] [CrossRef]

- Mall, S.; Chakraverty, S. Application of Legendre neural network for solving ordinary differential equations. Applied Soft Computing 2016, 43, 347–356. [Google Scholar] [CrossRef]

- Yang, Y.; Hou, M.; Luo, J. A novel improved extreme learning machine algorithm in solving ordinary differential equations by Legendre neural network methods. Advances in Difference Equations 2018, 2018, 469. [Google Scholar] [CrossRef]

- Chihara, T. S. An introduction to orthogonal polynomials; Courier Corporation: Mineola, USA, 2011. [Google Scholar]

- Shaw, Z.A. Learn Python the Hard Way; Addison-Wesley Professional: Boston, USA, 2024. [Google Scholar]

- Van Horn, B. M.; Nguyen, Q. Hands-on application development with PyCharm: Build applications like a Pro with the ultimate Python development tool; Packt Publishing Ltd: Bermingham, UK, 2023. [Google Scholar]

- Yildirim, A.; Ozis, T. Solutions of singular IVPs of Lane–Emden type by the variational iteration method. Nonlinear Anal. 2009, 70, 2480–2484. [Google Scholar] [CrossRef]

- Nabian, M. A.; Gladstone, R. J.; Meidani, H. Efficient training of physics-informed neural networks via importance sampling. Computer-Aided Civil and Infrastructure Engineering 2021, 36, 962–977. [Google Scholar] [CrossRef]

- Mall, S.; Chakraverty, S. Single layer Chebyshev neural network model for solving elliptic partial differential equations. Neural Processing Letters 2017, 45, 825–840. [Google Scholar] [CrossRef]

- Tverdyi, D.; Parovik, R. Application of the Fractional Riccati Equation for Mathematical Modeling of Dynamic Processes with Saturation and Memory Effect. Fractal and Fractional 2022, 6, 163. [Google Scholar] [CrossRef]

- Allahviranloo, T.; Jafarian, A.; Saneifard, R.; Ghalami, N.; Nia; Measoomy, S.; Kiani, F.; Fernandez-Gamiz, U.; Noeiaghdam, S. An application of artificial neural networks for solving fractional higher-order linear integro-differential equations. Bound. Value Probl. 2023, 2023, 74. [Google Scholar] [CrossRef]

- Tverdiy, D. A.; Makarov, E.O.; Parovik, R.I. Hereditary Mathematical Model of the Dynamics of Radon Accumulation in the Accumulation Chamber. Mathematics 2023, 11, 850. [Google Scholar] [CrossRef]

- Nguyen, T. D. Neural network method for solving the boundary value problem for fractional differential equations. Computational methods and programming 2025, 26, 245–253. [Google Scholar]

- Ali, I. Advanced machine learning technique for solving elliptic partial differential equations using Legendre spectral neural networks. Electronic Research Archive 2025, 33, 826–848. [Google Scholar] [CrossRef]

|

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).