Submitted: 19 December 2025

Posted: 22 December 2025

Abstract

Keywords:

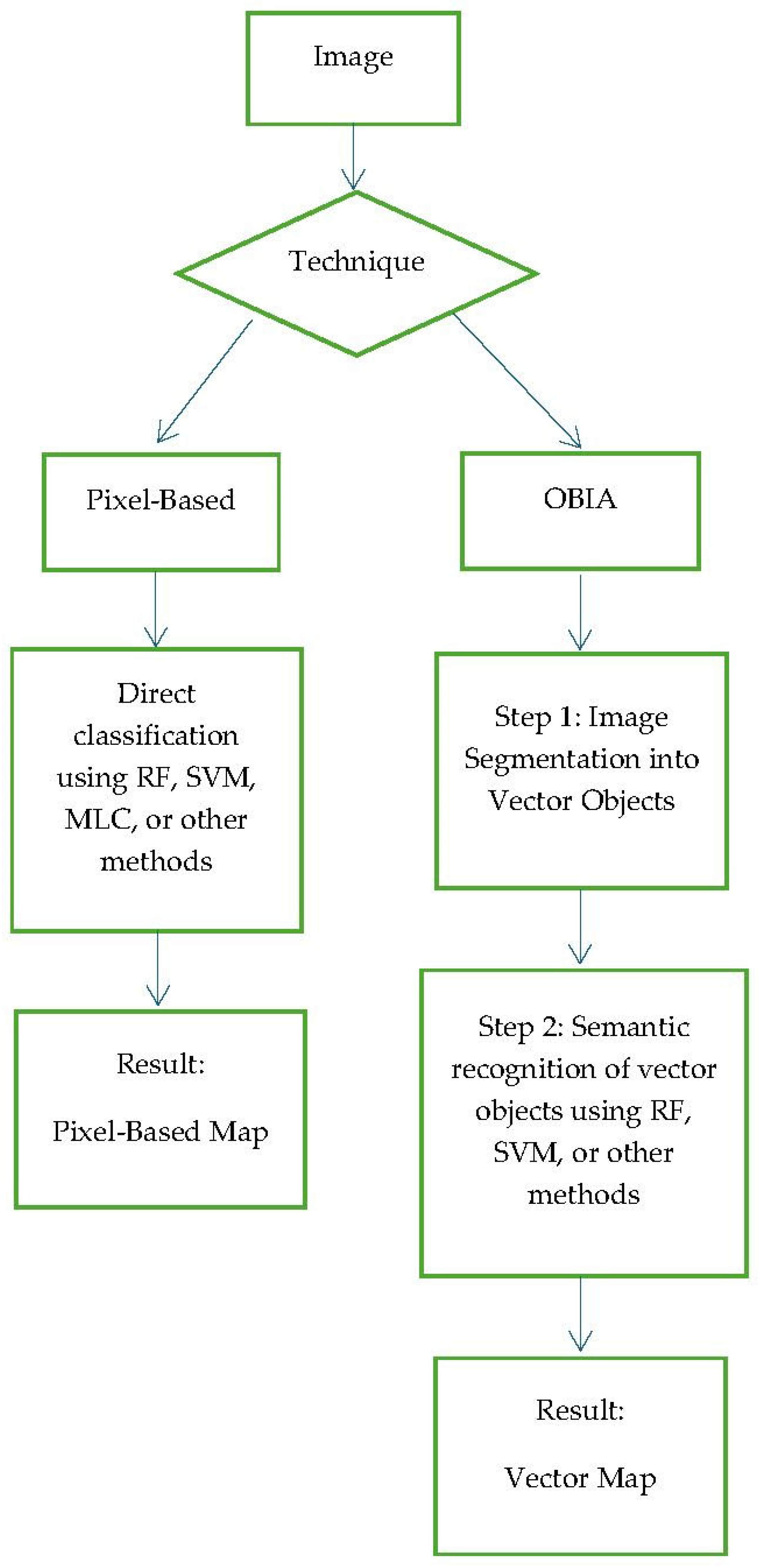

1. Introduction

2. Methods

2.1. Formatting of Mathematical Components

- Internal energy, which maintains the curve’s regularity.

- External energy, which attracts the curve towards the edges in the image.
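For reference, these two terms are usually combined as in the classical active-contour (snake) energy. The expression below is the standard textbook form, given here as an assumption about the notation and not as the authors' exact equation: $\mathbf{c}(s)$ denotes the parametrised curve, $\alpha$ and $\beta$ the elasticity and rigidity weights, and $I$ the image.

```latex
E_{\text{snake}}
  = \int_0^1
    \underbrace{\tfrac{1}{2}\left(\alpha\,\lvert \mathbf{c}'(s)\rvert^{2}
                + \beta\,\lvert \mathbf{c}''(s)\rvert^{2}\right)}_{\text{internal energy}}
    \;+\;
    \underbrace{E_{\text{ext}}\bigl(\mathbf{c}(s)\bigr)}_{\text{external energy}}
    \, ds,
\qquad
E_{\text{ext}} = -\lvert \nabla I \rvert^{2} \quad \text{(one common choice)}
```

The first-derivative term penalises stretching and the second-derivative term penalises bending, which is what keeps the curve regular; the external term is lowest where the image gradient is strongest, which is what pulls the curve towards edges.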

2.2. Algorithmic Implementation

- a)

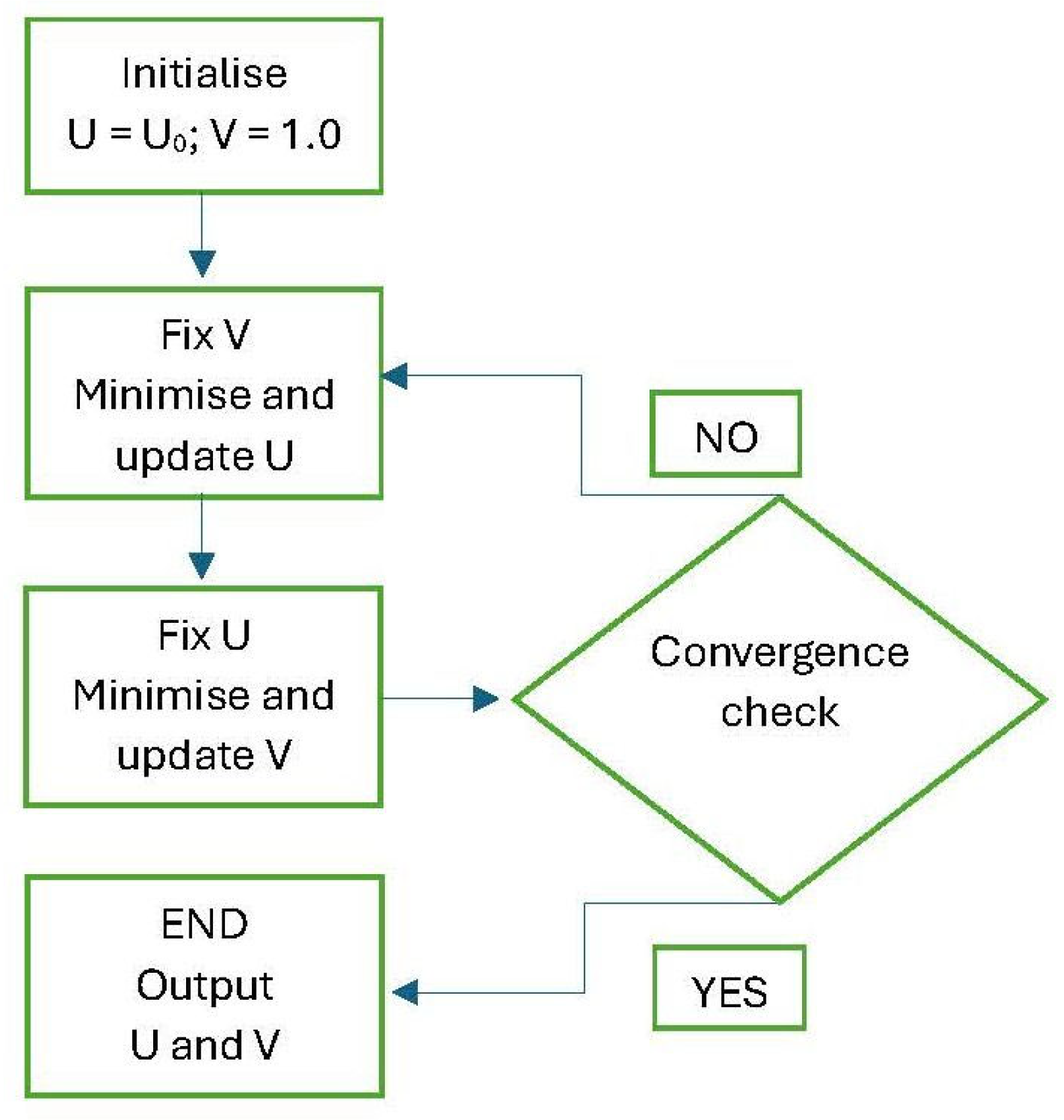

- Initialisation:

- A matrix u(x,y) is created to approximate the original image g(x,y) as closely as possible. The ideal initial solution is therefore to initialise u(x,y) with the exact numerical values of g(x,y).

- For the function v, which will contain the edge map, a matrix of the same dimensions as u(x,y) is initialised, with all its elements assigned the value v=1. Starting with the value 1 everywhere means assuming, in the initial phase, that there are no edges in the image—a neutral starting hypothesis. In subsequent iterations, the algorithm will update this matrix, pushing values towards the threshold 0 to identify discontinuities (i.e., the edges of the sought objects).

- b) Iterative minimisation: once the matrices are initialised, the functional is minimised through an iterative process:

  - Minimise with respect to u: the values in the matrix u(x,y) are updated using the current v matrix (initially all 1s).

  - Minimise with respect to v: using the u(x,y) matrix computed in the previous step, the functional is minimised again, this time with respect to v. This yields an updated edge map matrix v.

- c) Convergence: these alternating minimisations between the u and v matrices are repeated until the changes in the respective updated matrices are deemed negligible.
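Steps a) to c) can be sketched in a few lines of NumPy. This is a minimal illustration and not the authors' implementation: it assumes the commonly used Ambrosio-Tortorelli functional (data term, plus μ times the v²-weighted smoothness of u, plus ν times the edge-map term controlled by ε), a unit pixel spacing, and simple Jacobi sweeps for each sub-problem. The function names, the `inner` sweep count and the tolerance are hypothetical choices made for the example.

```python
import numpy as np

def neighbours(a):
    """Up, down, left and right neighbours of every pixel (edges replicated)."""
    p = np.pad(a, 1, mode="edge")
    return [p[:-2, 1:-1], p[2:, 1:-1], p[1:-1, :-2], p[1:-1, 2:]]

def ambrosio_tortorelli(g, mu=1.0, nu=50.0, eps=0.5,
                        tol=1e-4, max_iter=200, inner=5):
    """Alternating minimisation sketch for the (assumed) functional
    sum (u-g)^2 + mu * v^2 |grad u|^2 + nu * (eps |grad v|^2 + (1-v)^2 / (4 eps)).
    """
    g = np.asarray(g, dtype=float)
    u = g.copy()              # a) u starts as an exact copy of the image
    v = np.ones_like(g)       # a) v = 1 everywhere: no edges assumed yet

    for _ in range(max_iter):
        u_prev, v_prev = u.copy(), v.copy()

        # b) minimise w.r.t. u with v fixed: weighted-average (Jacobi) sweeps.
        w = v ** 2
        w_nb = [0.5 * (w + wn) for wn in neighbours(w)]   # weight on each link
        for _ in range(inner):
            num = g + mu * sum(wn * un for wn, un in zip(w_nb, neighbours(u)))
            u = num / (1.0 + mu * sum(w_nb))

        # b) minimise w.r.t. v with the new u fixed.
        gy, gx = np.gradient(u)
        grad2 = gx ** 2 + gy ** 2
        for _ in range(inner):
            v = (nu / (4 * eps) + nu * eps * sum(neighbours(v))) / (
                mu * grad2 + nu / (4 * eps) + 4 * nu * eps)

        # c) stop when neither matrix changes appreciably.
        change = max(np.abs(u - u_prev).max(), np.abs(v - v_prev).max())
        if change < tol:
            break
    return u, v

# Usage: a synthetic two-region image with one vertical edge.
g = np.full((16, 16), 50.0)
g[:, 8:] = 200.0
u, v = ambrosio_tortorelli(g)
print(np.round(v[8], 2))   # values near 0 mark the edge columns
```

Thresholding `v` (for example `v < 0.5`) would give a binary edge map; the threshold is again an illustrative assumption.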

- If the contour-length weight ν is LOW: the “cost” of a long contour is low. The algorithm is not heavily penalised for creating jagged, complex contours that follow every small detail or variation in the data.

- If ν is HIGH: the “cost” of a long contour is high. The algorithm is strongly penalised if it creates a complex contour, so it seeks the solution with the shortest and simplest possible contour, even if this means approximating the data slightly less accurately. The resulting edges are smoother, more regular, and “minimal”. In other words, the algorithm suppresses small details and noise but may also cut off genuine corners or fine details.
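The trade-off above can be read off the functional itself. The block below writes out a commonly used form of the Ambrosio-Tortorelli functional; it is an assumption that the paper's symbols match it, with $\mu$, $\nu$ and $\varepsilon$ playing the roles listed in the parameter table and $K$ denoting the edge set.

```latex
E_{\varepsilon}(u, v)
  = \int_{\Omega} (u - g)^{2}\, dx
  + \mu \int_{\Omega} v^{2}\, \lvert \nabla u \rvert^{2}\, dx
  + \nu \int_{\Omega} \Bigl( \varepsilon\, \lvert \nabla v \rvert^{2}
        + \frac{(1 - v)^{2}}{4\varepsilon} \Bigr)\, dx,
\qquad
\nu \int_{\Omega} \Bigl( \varepsilon\, \lvert \nabla v \rvert^{2}
        + \frac{(1 - v)^{2}}{4\varepsilon} \Bigr)\, dx
  \;\xrightarrow[\;\varepsilon \to 0\;]{}\;
  \nu \cdot \operatorname{length}(K)
```

Because the last term tends to the contour length multiplied by its weight, doubling that weight doubles the price of every unit of contour, while the data term $(u-g)^2$ is left unchanged; this is why a high weight favours short, simple contours.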

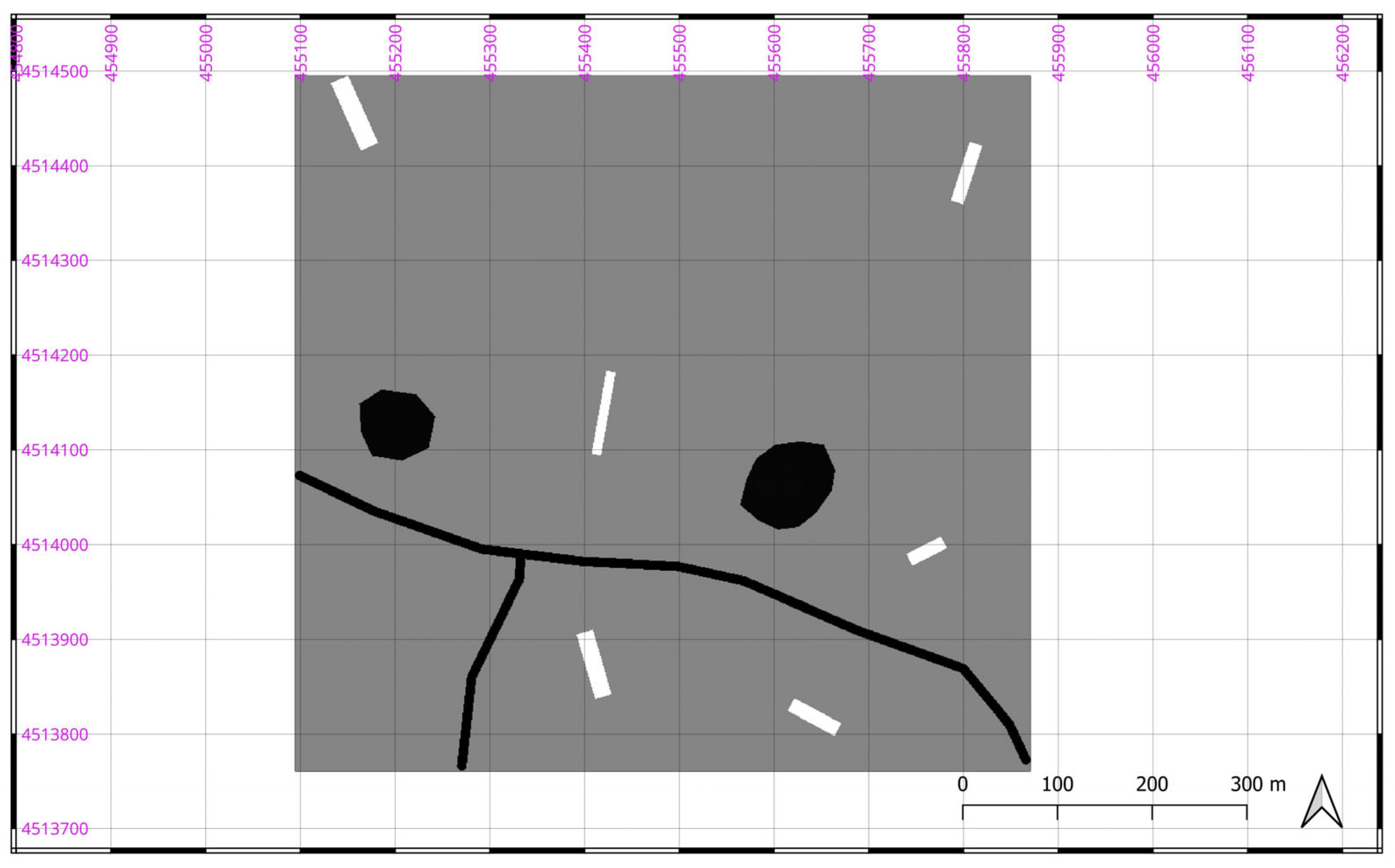

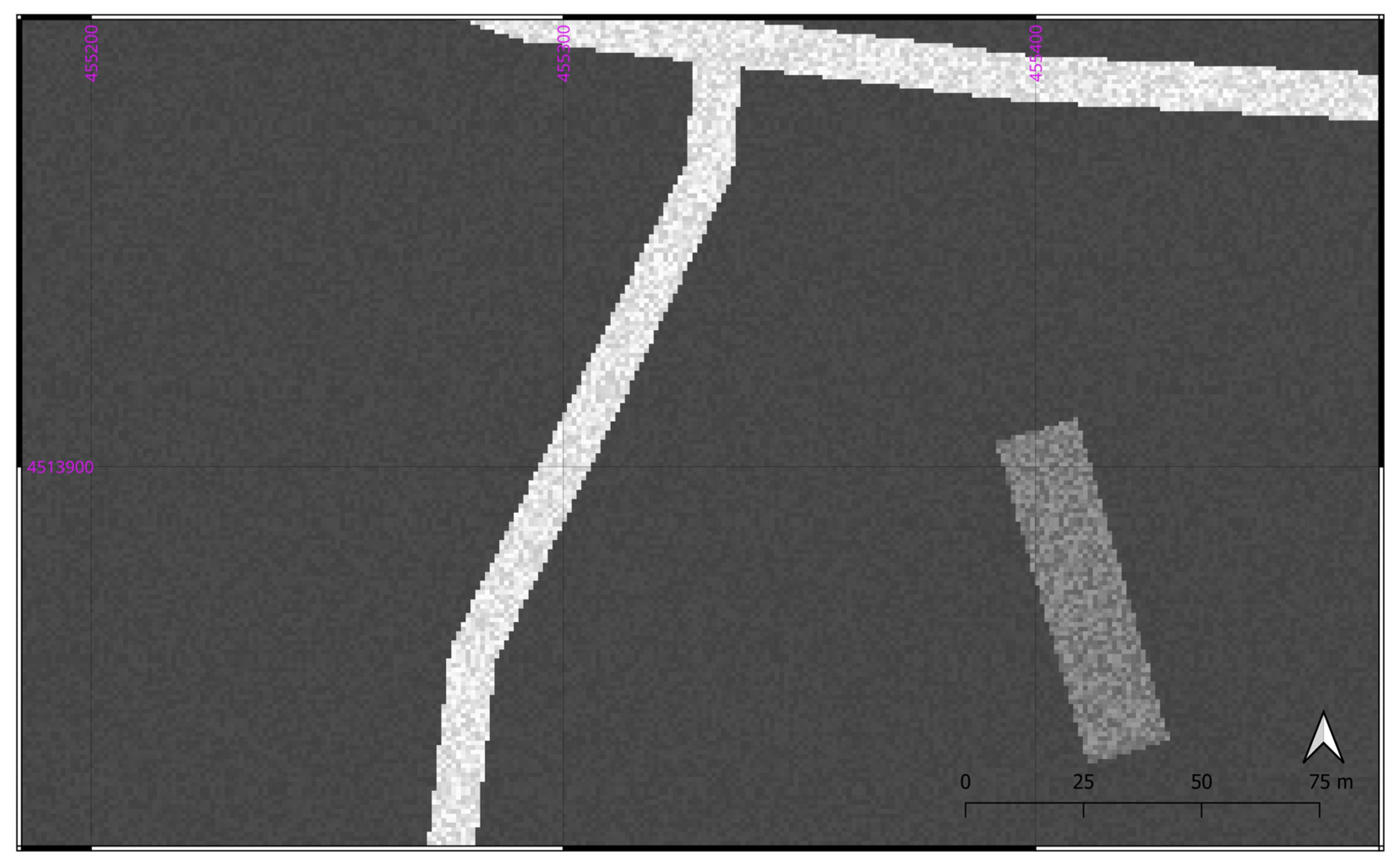

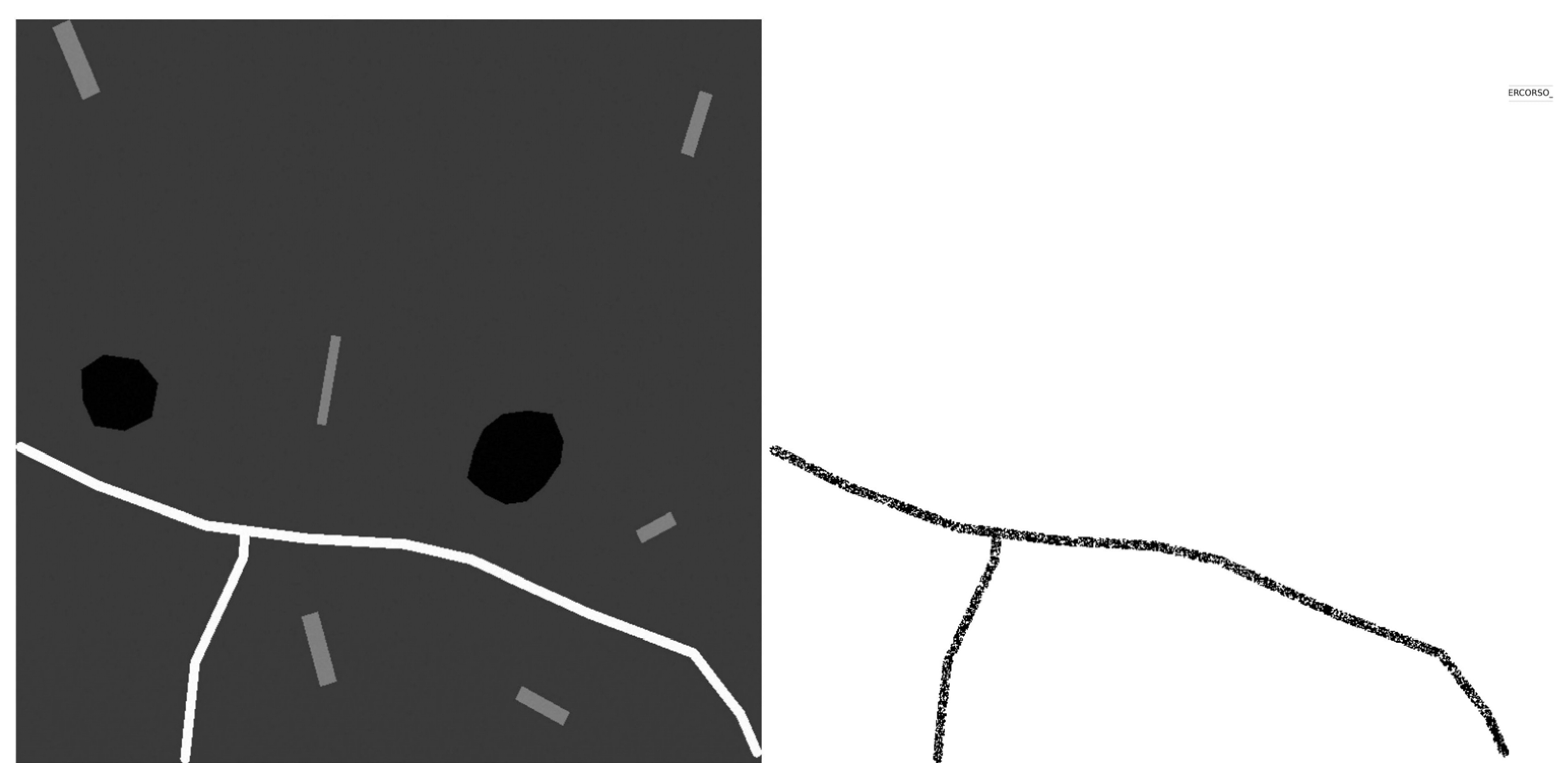

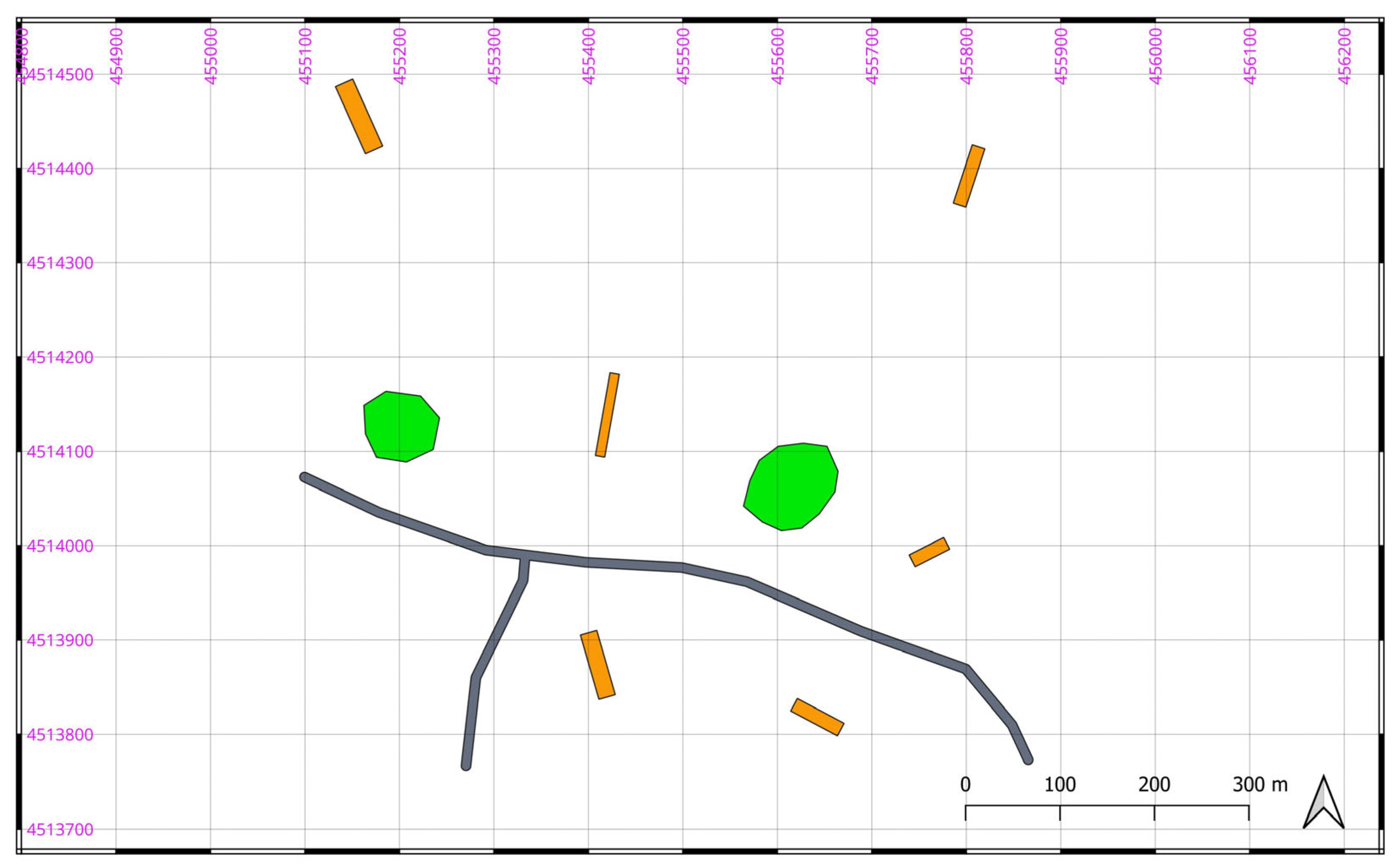

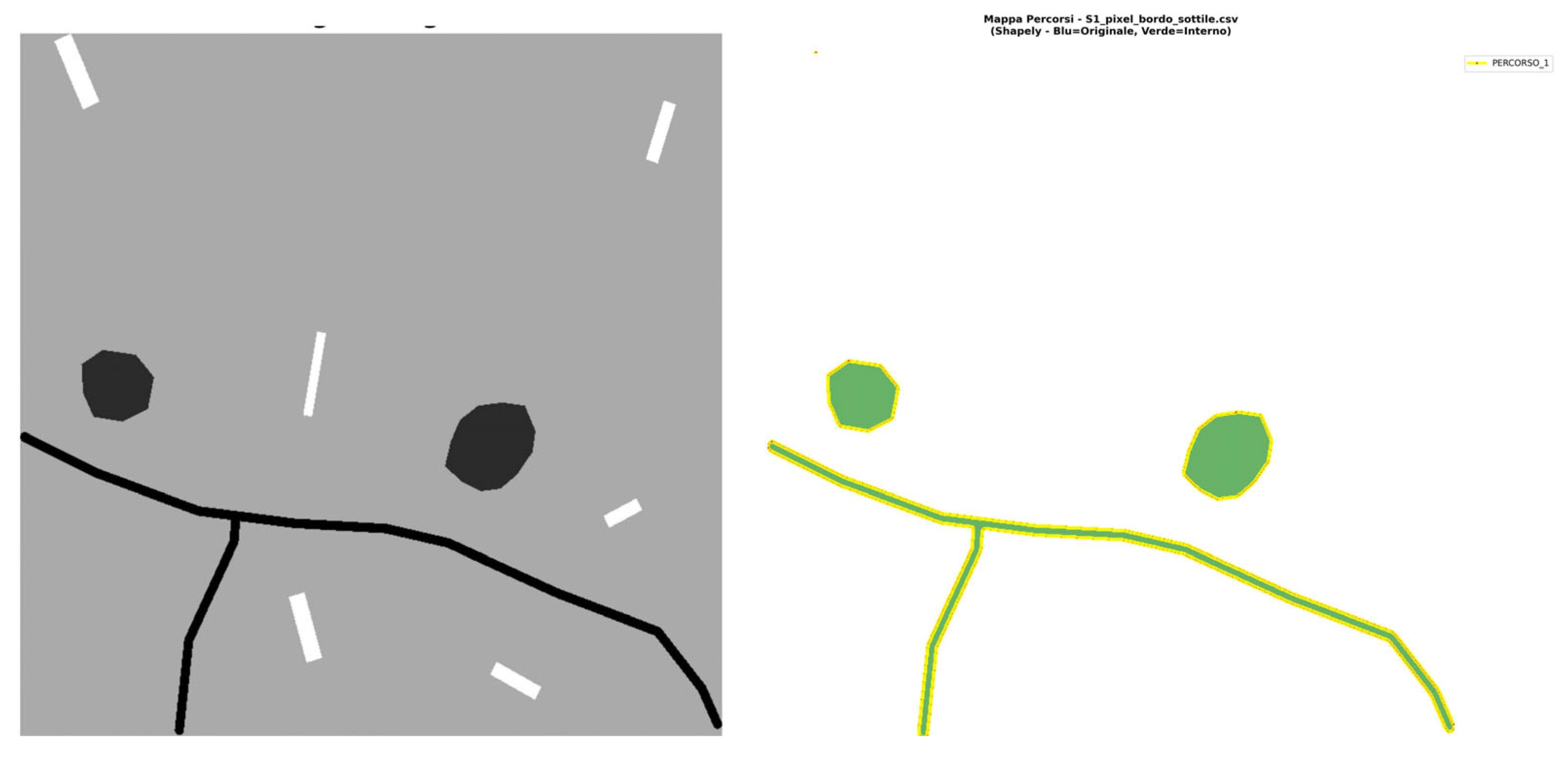

3. Material, Experiment and Results

| Scenario | Trees | Buildings | Roads | Background | Global Variance |
|---|---|---|---|---|---|
| S1 | 67 | 77 | 65 | 73 | 12 |
| S2 | 62 | 82 | 60 | 73 | 25 |
| S3 | 57 | 87 | 55 | 73 | 45 |
| S4 | 52 | 92 | 50 | 73 | 85 |
| S5 | 47 | 97 | 45 | 73 | 165 |
| S6 | 42 | 102 | 40 | 73 | 330 |
| S7 | 37 | 107 | 35 | 73 | 660 |
| S8 | 32 | 112 | 30 | 73 | 1230 |
| S9 | 27 | 117 | 25 | 73 | 2640 |
| S10 | 22 | 122 | 20 | 73 | 5280 |

| Scenario | Trees (range) | Buildings (range) | Roads (range) | Background (range) | Global Variance |
|---|---|---|---|---|---|
| S1_R | 28-32 | 118-122 | 208-212 | 69-71 | 450-500 |
| S2_R | 25-35 | 115-125 | 205-215 | 68-72 | 550-650 |
| S3_R | 20-40 | 110-130 | 200-220 | 66-74 | 800-1000 |
| S4_R | 15-45 | 100-140 | 190-230 | 63-77 | 1500-2000 |
| S5_R | 8-50 | 90-150 | 180-240 | 60-80 | 2500-3500 |
| S6_R | 18-42 | 105-135 | 195-215 | 69-71 | 700-900 |
| S7_R | 22-38 | 112-128 | 205-215 | 68-72 | 600-750 |
| S8_R | 24-36 | 116-124 | 202-218 | 67-73 | 500-600 |
| S9_R | 26-34 | 116-124 | 206-214 | 68-72 | 480-520 |

4. Discussion

5. Patents

Conflicts of Interest

| | Parametric | Non-parametric |
|---|---|---|
| Supervised | Maximum Likelihood Classifier (MLC); Logistic Regression | Random Forest (RF); Support Vector Machine (SVM); K-Nearest Neighbours (KNN); Neural Networks (ANN) |
| Unsupervised | Gaussian Mixture Models (GMM) | K-Means; ISODATA; DBSCAN; Hierarchical Clustering |

| Parameter | Interpretation | Range | Parameter Selection Criteria |
|---|---|---|---|
| μ | Controls the “importance” or weighting of the regularization on u. | 1 | Typically set to 1.0 to normalize the other parameters. |
| ν | Controls the “importance” or weighting of the regularization on v (and consequently of the contour length). | 10-100 | Depends on the scale of the image pixel values. For images in the range [0,255], a value between 20 and 100 serves as a practical starting point. |
| ε | Controls the “width” of the transition of function v from 0 to 1. A smaller ε results in sharper transitions. | 0.1-1.0 | Depends on the scale of the features one aims to capture. A value of 0.5 or 1.0 is often used as an initial estimate. |

| Alpha value | Effect on the snake’s behaviour | Analogy |
|---|---|---|
| 1.0 | Very RIGID snake, equidistant points | Taut string / cable: does not bend easily |
| 0.4 | Elastic snake, adapts to contours | Elastic band: stretches but retains its form |
| 0.1 | Highly FLEXIBLE snake, irregular points | Slack rope: deforms easily |

| Beta value | Effect on the snake’s behaviour | Analogy |
|---|---|---|
| 0.5 | Very smooth snake, fewer details | Train rail: very broad, sweeping curves |
| 0.2 | Balanced snake, good detail | Flexible hose: natural curves |
| 0.05 | Highly jagged snake, very detailed | Wool thread: follows every irregularity |
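The qualitative behaviour in the alpha and beta tables can be reproduced with a small experiment. The sketch below is an illustration under stated assumptions, not the authors' code: it uses the classical semi-implicit snake update with the internal (elasticity and rigidity) forces only, no image force, a closed contour, and hypothetical helper names `internal_matrix`, `relax` and `roughness`.

```python
import numpy as np

def internal_matrix(n, alpha, beta):
    """Circulant pentadiagonal matrix of the internal forces of a closed snake."""
    row = np.zeros(n)
    row[0] = 2 * alpha + 6 * beta
    row[1] = row[-1] = -(alpha + 4 * beta)
    row[2] = row[-2] = beta
    return np.stack([np.roll(row, i) for i in range(n)])

def relax(points, alpha, beta, gamma=1.0, steps=10):
    """Apply `steps` semi-implicit updates driven by internal forces only."""
    pts = np.asarray(points, dtype=float)
    n = len(pts)
    step = np.linalg.inv(internal_matrix(n, alpha, beta) + gamma * np.eye(n))
    for _ in range(steps):
        pts = step @ (gamma * pts)
    return pts

def roughness(points):
    """Sum of squared second differences along the closed contour."""
    d2 = np.roll(points, -1, axis=0) - 2 * points + np.roll(points, 1, axis=0)
    return float((d2 ** 2).sum())

# Usage: a deliberately jagged circle (radius alternating between 9 and 11).
n = 40
t = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
r = 10.0 + np.where(np.arange(n) % 2 == 0, 1.0, -1.0)
jagged = np.stack([r * np.cos(t), r * np.sin(t)], axis=1)

for beta in (0.5, 0.2, 0.05):
    out = relax(jagged, alpha=0.4, beta=beta)
    print(beta, round(roughness(out), 4))
```

With alpha held fixed, the larger beta values leave a smoother contour after the same number of steps, matching the “train rail” versus “wool thread” analogy. In a full snake the image force would counteract this smoothing near edges.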

| Scenario (S) | Ambrosio-Tortorelli: Cohen’s κ | Ambrosio-Tortorelli: Jaccard | Snake: Cohen’s κ | Snake: Jaccard |
|---|---|---|---|---|
| S1 | 0.8502 | 0.7498 | 0.0000 | 0.0500 |
| S2 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S3 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S4 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S5 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S6 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S7 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S8 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S9 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
| S10 | 0.9657 | 0.9371 | 1.0000 | 1.0000 |
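For readers who want to reproduce this kind of comparison, the two agreement measures in the table can be computed from a pair of binary masks as sketched below. The definitions are the standard ones for Cohen's kappa and the Jaccard index; the function names and the toy masks are illustrative assumptions, and the paper's own evaluation pipeline may differ in detail.

```python
import numpy as np

def cohen_kappa(reference, predicted):
    """Cohen's kappa for two binary masks of equal shape."""
    a = np.asarray(reference, dtype=bool).ravel()
    b = np.asarray(predicted, dtype=bool).ravel()
    p_observed = np.mean(a == b)
    # Chance agreement from the marginal frequencies of classes 1 and 0.
    p_chance = a.mean() * b.mean() + (1 - a.mean()) * (1 - b.mean())
    if p_chance == 1.0:          # both masks constant and identical
        return 1.0
    return float((p_observed - p_chance) / (1.0 - p_chance))

def jaccard(reference, predicted):
    """Jaccard index (intersection over union) of the class-1 pixels."""
    a = np.asarray(reference, dtype=bool)
    b = np.asarray(predicted, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:               # no class-1 pixels in either mask
        return 1.0
    return float(np.logical_and(a, b).sum() / union)

# Usage on a toy example: one of the two reference edge pixels is missed.
reference = np.array([1, 1, 0, 0])
predicted = np.array([1, 0, 0, 0])
print(cohen_kappa(reference, predicted))  # 0.5
print(jaccard(reference, predicted))      # 0.5
```

Kappa corrects the raw agreement for what two masks with the same class proportions would agree on by chance, whereas the Jaccard index ignores the pixels that both masks label 0, so the two measures can diverge when edge pixels are rare.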

| Scenario (S) | Ambrosio-Tortorelli: agreement for ‘1’ | Ambrosio-Tortorelli: agreement for ‘0’ | Snake: agreement for ‘1’ | Snake: agreement for ‘0’ |
|---|---|---|---|---|
| S1 | 78.30% | 99.77% | 100.00% | 0.00% |
| S2 | 99.29% | 99.69% | 100.00% | 100.00% |
| S3 | 99.29% | 99.69% | 100.00% | 100.00% |
| S4 | 99.29% | 99.69% | 100.00% | 100.00% |
| S5 | 99.29% | 99.69% | 100.00% | 100.00% |
| S6 | 99.29% | 99.69% | 100.00% | 100.00% |
| S7 | 99.29% | 99.69% | 100.00% | 100.00% |
| S8 | 99.29% | 99.69% | 100.00% | 100.00% |
| S9 | 99.29% | 99.69% | 100.00% | 100.00% |
| S10 | 99.29% | 99.69% | 100.00% | 100.00% |

| Scenario (S) | Ambrosio-Tortorelli: Cohen’s κ | Ambrosio-Tortorelli: Jaccard | Snake: Cohen’s κ | Snake: Jaccard |
|---|---|---|---|---|
| S1_R | 0.5255 | 0.3694 | 0.5431 | 0.3848 |
| S2_R | 0.5255 | 0.3694 | 0.4810 | 0.3279 |
| S3_R | 0.5255 | 0.3694 | 0.4178 | 0.2742 |
| S4_R | 0.5255 | 0.3694 | 0.2410 | 0.1432 |
| S5_R | 0.0617 | 0.0351 | 0.1209 | 0.0675 |
| S6_R | 0.5255 | 0.3694 | 0.4567 | 0.3068 |
| S7_R | 0.5255 | 0.3694 | 0.4189 | 0.2750 |
| S8_R | 0.5255 | 0.3694 | 0.4500 | 0.3010 |
| S9_R | 0.5253 | 0.3992 | 0.1724 | 0.0988 |

| Scenario (S) | Ambrosio-Tortorelli: agreement for ‘1’ | Ambrosio-Tortorelli: agreement for ‘0’ | Snake: agreement for ‘1’ | Snake: agreement for ‘0’ |
|---|---|---|---|---|
| S1_R | 38.24% | 99.81% | 38.48% | 100.00% |
| S2_R | 38.24% | 99.81% | 32.79% | 100.00% |
| S3_R | 38.24% | 99.81% | 27.42% | 100.00% |
| S4_R | 38.24% | 99.81% | 14.32% | 100.00% |
| S5_R | 3.63% | 99.82% | 6.75% | 100.00% |
| S6_R | 38.24% | 99.81% | 30.68% | 100.00% |
| S7_R | 38.24% | 99.81% | 27.50% | 100.00% |
| S8_R | 38.24% | 99.81% | 30.10% | 100.00% |
| S9_R | 38.23% | 99.81% | 9.88% | 100.00% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.