Submitted: 21 December 2025

Posted: 22 December 2025

Abstract

Keywords:

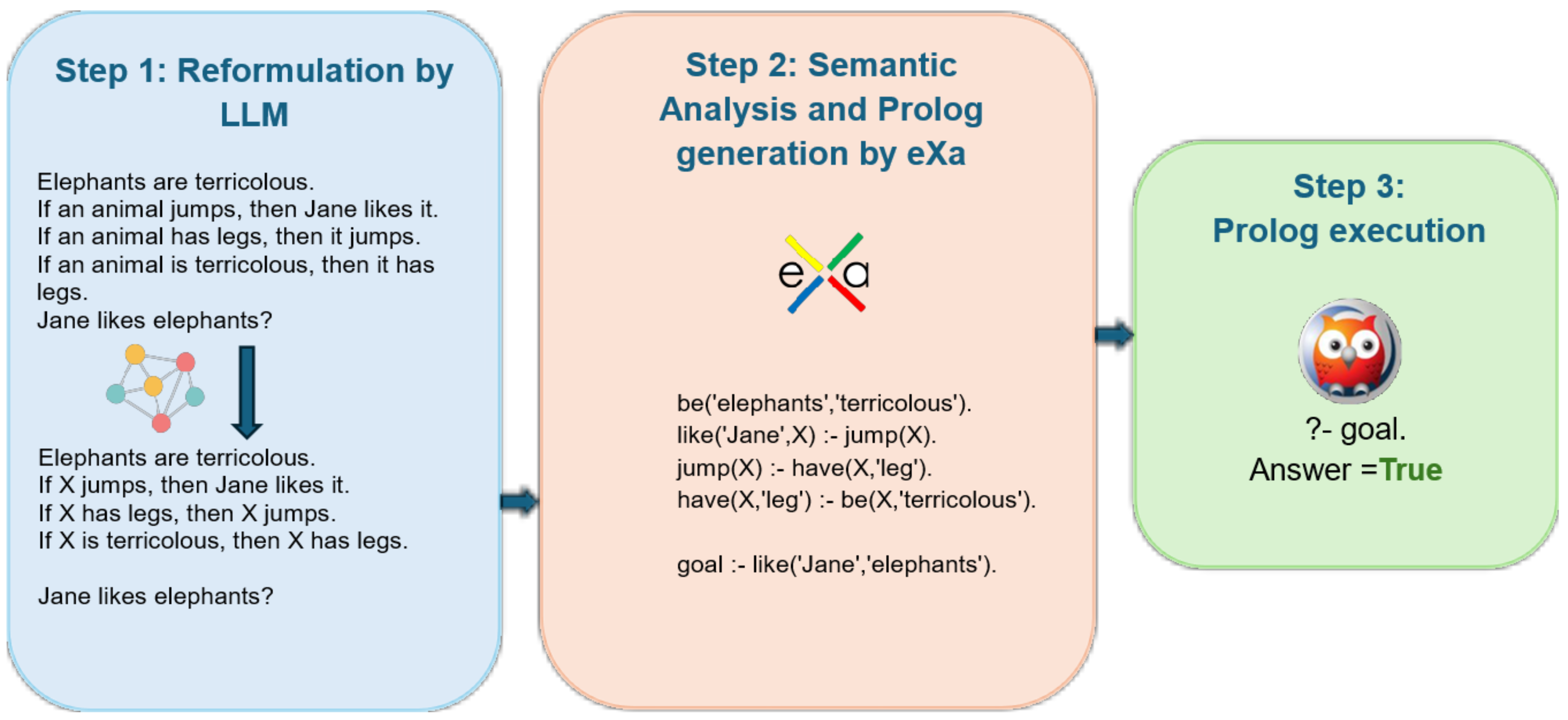

1. Introduction

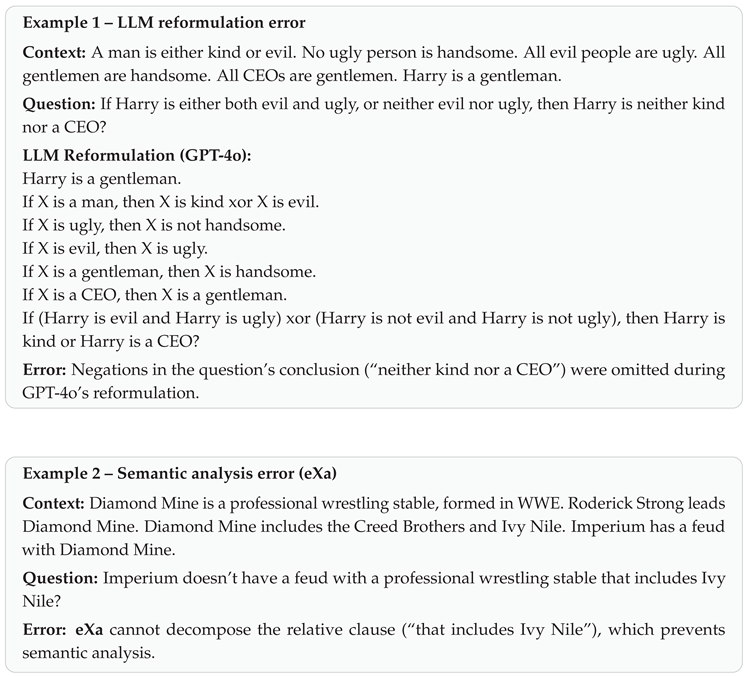

2. Related Work

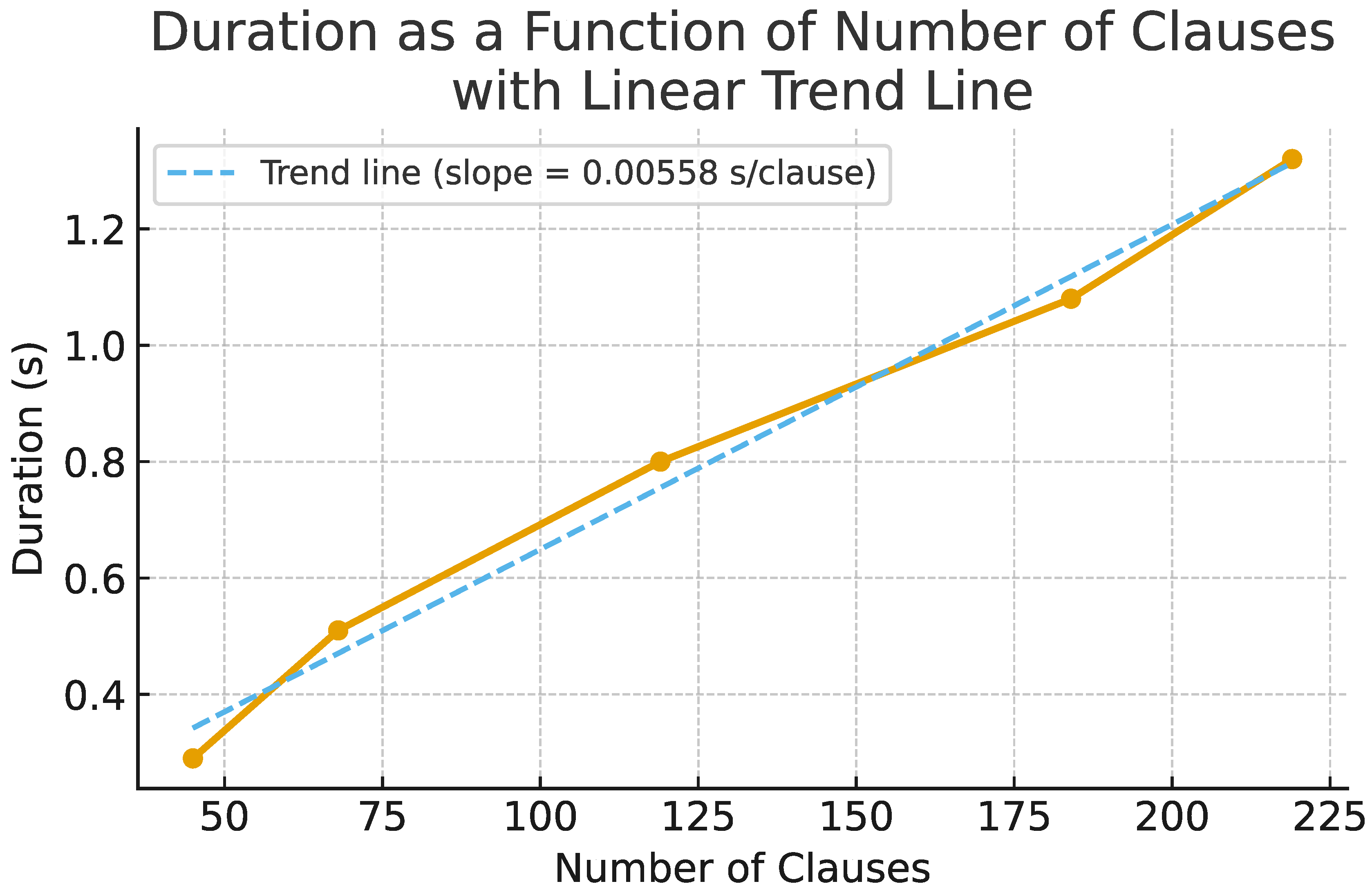

3. Reformulation Prompt

4. eXa

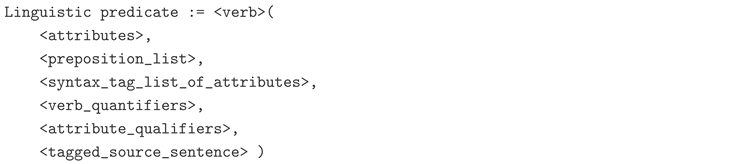

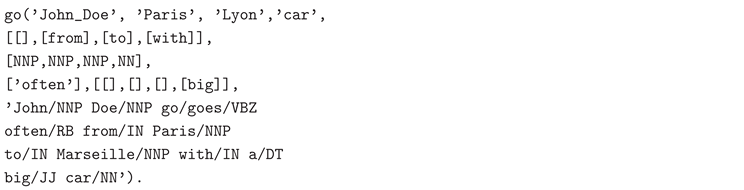

4.1. eXaSem

4.2. eXaLog

| Original rule | Transformed form(s) |
|---|---|
| If | |

4.3. eXaMeta

4.4. eXaGen

4.5. eXaGol

5. Experiments

6. Results and Discussion

| Dataset | GPT-4 (*) Std. | GPT-4 (*) CoT | GPT-4 (*) LINC | GPT-4 (*) Logic-LM | GPT-4o Std. | GPT-4o CoT | GPT-4o eXa (ours) |
|---|---|---|---|---|---|---|---|
| PrOntoQA | 77.40 | 98.79 | – | 83.20 | 85.45 | 99.80 | 100.00 (1) (+0.20) |
| ProofWriter | 52.67 | 68.11 | 98.30 | 79.66 | 65.71 | 74.29 | 100.00 (1) (+1.70) |
| FOLIO | 69.11 | 70.58 | 72.50 | 78.92 | 79.78 | 91.85 | 92.90 (2) (+1.05) |
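The parenthesized deltas in the results table are consistent with the gain of eXa over the strongest baseline in each row. That reading is an inference from the numbers, not something the table states. A minimal sketch that recomputes the deltas under this assumption (the dictionary layout and the function name `gain_over_best` are illustrative only):

```python
# Accuracy values (percent) copied from the results table.
# None marks a result that the table does not report.
results = {
    "PrOntoQA": {"baselines": [77.40, 98.79, None, 83.20, 85.45, 99.80], "exa": 100.00},
    "ProofWriter": {"baselines": [52.67, 68.11, 98.30, 79.66, 65.71, 74.29], "exa": 100.00},
    "FOLIO": {"baselines": [69.11, 70.58, 72.50, 78.92, 79.78, 91.85], "exa": 92.90},
}


def gain_over_best(row):
    """Return the eXa accuracy minus the best reported baseline accuracy."""
    best = max(v for v in row["baselines"] if v is not None)
    return round(row["exa"] - best, 2)


gains = {name: gain_over_best(row) for name, row in results.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain:.2f}")
```

Under this reading, the strongest baseline is GPT-4o CoT for PrOntoQA and FOLIO, and LINC for ProofWriter.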

7. Impact of Closed-World Conversion (CWA)

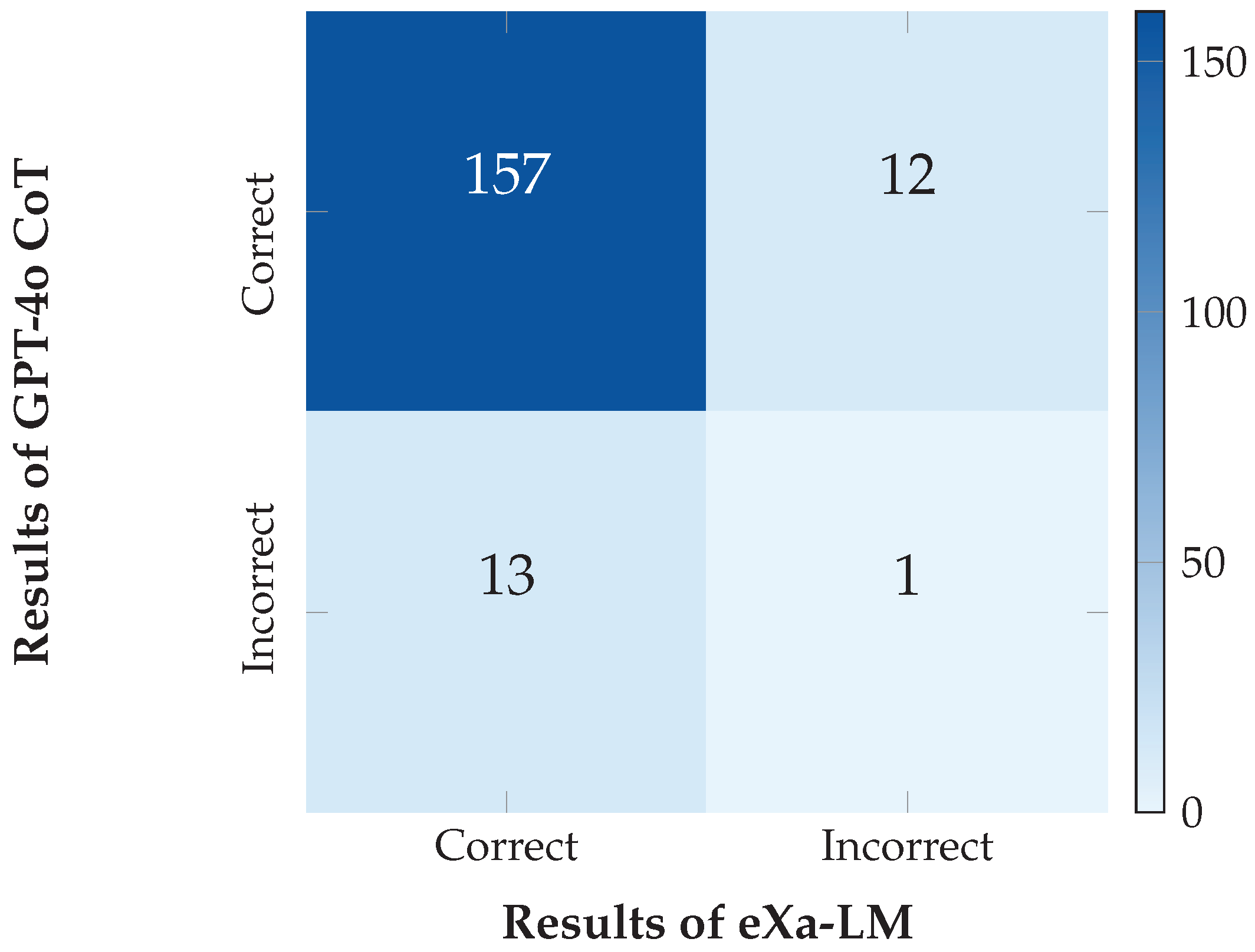

8. Error Analysis

| Error Source | Count |
|---|---|
| LLM reformulation | 1 |
| eXa semantic analysis | 5 |
| Missing explicit information in input | 8 |
| Total | 13 |

9. Ablation Study

| Model / Setting | Accuracy (%) |
|---|---|
| GPT-4o CoT (baseline) | 91.85 |
| Reformulation + GPT-4o CoT (ablation) | 90.76 |
| eXa-LM (ours) | 92.90 |

10. Independence of the LLM

11. Computational Costs

| Dataset | Average execution time (s) |
|---|---|
| PrOntoQA | 0.21 |
| ProofWriter | 0.27 |
| FOLIO | 0.21 |

| Metric | GPT-4o CoT | eXa-LM |
|---|---|---|
| Prompt + Task tokens | 350 | 1,100 |
| Output tokens | 850 | 1,200 |
| Total tokens | 1,200 | 2,300 |
| Execution time (s) | ∼3 | ∼5 |
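The token figures in the cost table can be checked with a few lines of arithmetic. The sketch below only re-derives the totals from the two component rows and computes the relative overhead of eXa-LM; the variable names are illustrative and the numbers are those of the table.

```python
# Token counts copied from the cost comparison table.
costs = {
    "GPT-4o CoT": {"prompt": 350, "output": 850},
    "eXa-LM": {"prompt": 1100, "output": 1200},
}

# Total tokens per approach: prompt/task tokens plus output tokens.
totals = {name: c["prompt"] + c["output"] for name, c in costs.items()}

# Relative token overhead of eXa-LM with respect to GPT-4o CoT.
ratio = totals["eXa-LM"] / totals["GPT-4o CoT"]

print(totals)
print(f"eXa-LM uses about {ratio:.2f}x the tokens of GPT-4o CoT")
```

The totals match the table (1,200 and 2,300), so eXa-LM consumes roughly 1.9 times as many tokens per query as GPT-4o CoT.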

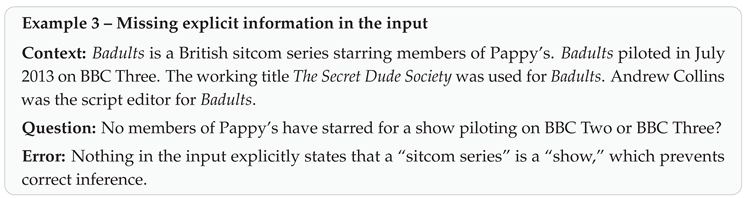

12. Scalability

13. Limitations and Future Work

- Limited linguistic coverage and expressivity. The CNL grammar is deliberately narrow, excluding nested relatives and complex passives; linguistic predicates do not encode tense or grammatical gender. Future work: Extend grammar via semi-supervised learning and enrich predicates.

- Absence of abductive and inductive reasoning. eXa-LM implements deduction and some hypothetical reasoning; abduction is not implemented, and induction is present but unused. Future work: Leverage eXaGol for rule induction and implement abduction.

- Limits of logical representation. eXaLog extends Prolog but does not cover full FOL (nested quantifiers, multiple quantifier scopes). Future work: Support richer FOL constructs and partial skolemization strategies.

- Closed-world assumption (CWA). Simplifies reasoning but limits inference in partially known contexts. Future work: Study mixed CWA/OWA variants.

- Extraction of implicit knowledge from LLMs. Some FOLIO errors stem from missing intermediate relations. Future work: Design prompting methods to surface missing intermediate relations.

- Robustness evaluation. Current evaluation uses inference-focused datasets. Future work: Test on broader QA datasets (SQuAD [21], Natural Questions [22], BoolQ [23], DROP [24], HotpotQA [25]).

- Portability and multilingualism. eXa is French-first. Future work: Implement a native English CNL and assess transferability.
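The closed-world limitation listed above can be illustrated with a toy example. This is a minimal sketch in Python, not eXa's Prolog-based implementation: the fact base, the tuple encoding of atoms, and the functions `holds_cwa` and `holds_owa` are all hypothetical. It shows only how the two assumptions differ on a query that the knowledge base does not mention.

```python
from typing import FrozenSet, Optional, Tuple

Atom = Tuple[str, str]  # (predicate, argument), e.g. ("bird", "tweety")

# Hypothetical knowledge base: known positive and explicitly negative facts.
FACTS: FrozenSet[Atom] = frozenset({("bird", "tweety"), ("cat", "tom")})
NEGATED: FrozenSet[Atom] = frozenset({("bird", "tom")})


def holds_cwa(query: Atom, facts: FrozenSet[Atom] = FACTS) -> bool:
    """Closed world: whatever is not known to be true is taken to be false."""
    return query in facts


def holds_owa(
    query: Atom,
    facts: FrozenSet[Atom] = FACTS,
    negated: FrozenSet[Atom] = NEGATED,
) -> Optional[bool]:
    """Open world: True if known, False if explicitly negated, else None (unknown)."""
    if query in facts:
        return True
    if query in negated:
        return False
    return None


# ("cat", "tweety") is absent from both sets.
print(holds_cwa(("cat", "tweety")))  # False: absence is treated as falsity
print(holds_owa(("cat", "tweety")))  # None: the answer is unknown
```

A mixed CWA/OWA variant, as suggested in the future work, could apply the closed-world rule only to predicates declared complete and fall back to the three-valued answer elsewhere.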

14. Conclusion

Institutional Review Board Statement

References

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.H.; Le, Q.V.; Zhou, D. Chain-of-thought prompting elicits reasoning in large language models. In Proceedings of the 36th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2022; NIPS ’22.

- Tafjord, O.; Dalvi, B.; Clark, P. ProofWriter: Generating Implications, Proofs, and Abductive Statements over Natural Language. In Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021; Online, Zong, C., Xia, F., Li, W., Navigli, R., Eds.; 2021; pp. 3621–3634.

- Han, S.; Schoelkopf, H.; Zhao, Y.; Qi, Z.; Riddell, M.; Zhou, W.; Coady, J.; Peng, D.; Qiao, Y.; Benson, L.; et al. FOLIO: Natural Language Reasoning with First-Order Logic. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing; Miami, Florida, USA, Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; 2024; pp. 22017–22031.

- Valmeekam, K.; Sreedharan, S.; Kambhampati, S. Can LLMs Really Reason and Plan? In Proceedings of AAAI 2023, 2023.

- Pan, L.; Albalak, A.; Wang, X.; Wang, W. Logic-LM: Empowering Large Language Models with Symbolic Solvers for Faithful Logical Reasoning. In Findings of the Association for Computational Linguistics: EMNLP 2023; Bouamor, H., Pino, J., Bali, K., Eds.; Singapore, 2023; pp. 3806–3824.

- Olausson, T.; Gu, A.; Lipkin, B.; Zhang, C.; Solar-Lezama, A.; Tenenbaum, J.; Levy, R. LINC: A Neurosymbolic Approach for Logical Reasoning by Combining Language Models with First-Order Logic Provers. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing; Singapore, Bouamor, H., Pino, J., Bali, K., Eds.; 2023; pp. 5153–5176.

- Schwitter, R. Controlled natural languages for knowledge representation. In Proceedings of Coling 2010: Posters, 2010; pp. 1113–1121.

- Saparov, A.; He, H. Language Models Are Greedy Reasoners: A Systematic Formal Analysis of Chain-of-Thought. arXiv 2023, arXiv:2210.01240.

- OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

- OpenAI. GPT-4o System Card. arXiv 2024, arXiv:2410.21276.

- Fuchs, N.; Kaljurand, K.; Kuhn, T. Attempto Controlled English for Knowledge Representation. Proceedings of the Reasoning Web 2008, Lecture Notes in Computer Science 2008, Vol. 5224, 104–124.

- Kuhn, T. A Survey and Classification of Controlled Natural Languages. Computational Linguistics 2014, 40, 121–170.

- Dong, L.; Lapata, M. Coarse-to-Fine Decoding for Neural Semantic Parsing. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics; Melbourne, Australia, Gurevych, I., Miyao, Y., Eds.; 2018; Volume 1, pp. 731–742.

- Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large language models are zero-shot reasoners. In Proceedings of the 36th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2022; NIPS ’22.

- Sadeddine, Z.; Suchanek, F.M. Verifying the Steps of Deductive Reasoning Chains. In Findings of the Association for Computational Linguistics: ACL 2025; Che, W., Nabende, J., Shutova, E., Pilehvar, M.T., Eds.; Vienna, Austria, 2025; pp. 456–475.

- Wielemaker, J.; Schrijvers, T.; Triska, M.; Lager, T. SWI-Prolog. Theory Pract. Log. Program. 2012, 12, 67–96.

- Chrupala, G.; Dinu, G.; van Genabith, J. Learning Morphology with Morfette. In Proceedings of the Sixth International Conference on Language Resources and Evaluation (LREC’08); Marrakech, Morocco, Calzolari, N., Choukri, K., Maegaard, B., Mariani, J., Odijk, J., Piperidis, S., Tapias, D., Eds.; 2008.

- Feneyrol, C. La segmentation automatique de la phrase dans le cadre de l’analyse du français. In Proceedings of the Actes du Congrès international informatique et sciences humaines – L.A.S.L.A., Université de Liège, 1981.

- Muggleton, S. Inductive logic programming. New Gen. Comput. 1991, 8, 295–318.

- Mistral AI. Mistral Large 2 (v24.07); Paris, France, 2024.

- Rajpurkar, P.; Zhang, J.; Lopyrev, K.; Liang, P. SQuAD: 100,000+ Questions for Machine Comprehension of Text. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing; Austin, Texas, Su, J., Duh, K., Carreras, X., Eds.; 2016; pp. 2383–2392.

- Kwiatkowski, T.; Palomaki, J.; Redfield, O.; Collins, M.; Parikh, A.; Alberti, C.; Epstein, D.; Polosukhin, I.; Devlin, J.; Lee, K.; et al. Natural Questions: A Benchmark for Question Answering Research. Transactions of the Association for Computational Linguistics 2019, 7, 452–466.

- Clark, C.; Lee, K.; Chang, M.W.; Kwiatkowski, T.; Collins, M.; Toutanova, K. BoolQ: Exploring the Surprising Difficulty of Natural Yes/No Questions. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Minneapolis, Minnesota, Burstein, J., Doran, C., Solorio, T., Eds.; 2019; Volume 1, pp. 2924–2936.

- Dua, D.; Wang, Y.; Dasigi, P.; Stanovsky, G.; Singh, S.; Gardner, M. DROP: A Reading Comprehension Benchmark Requiring Discrete Reasoning Over Paragraphs. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Minneapolis, Minnesota, Burstein, J., Doran, C., Solorio, T., Eds.; 2019; Volume 1, pp. 2368–2378.

- Yang, Z.; Qi, P.; Zhang, S.; Bengio, Y.; Cohen, W.; Salakhutdinov, R.; Manning, C.D. HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing; Brussels, Belgium, Riloff, E., Chiang, D., Hockenmaier, J., Tsujii, J., Eds.; 2018; pp. 2369–2380.

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Modern Deep Learning Research. 2020, Vol. 34, 13693–13696.

Footnote 1: eXa is a monolingual system originally designed for French. All examples in this paper have been translated into English for ease of understanding.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).