Submitted: 16 December 2025

Posted: 17 December 2025


Abstract

Keywords:

1. Introduction

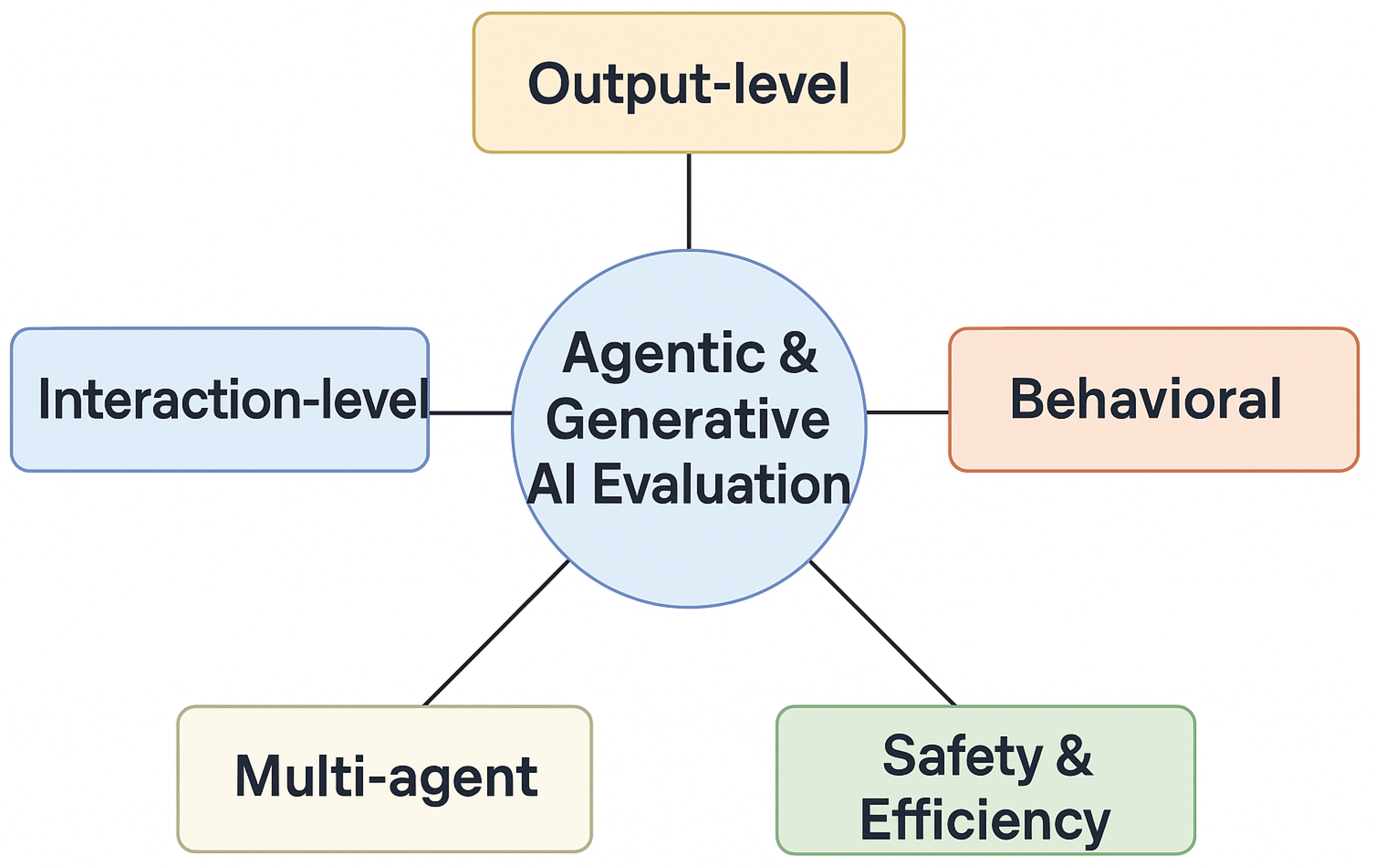

2. Taxonomy of Evaluation Dimensions

2.1. Output-Level Evaluation

2.2. Behavioural Evaluation

2.3. Interaction-Level Evaluation

2.4. Multi-Agent Evaluation

2.5. Safety and Robustness

3. Benchmarks for Generative AI

4. Benchmarks for Agentic AI

4.1. Tool-Use Benchmarks

4.2. Planning and Long-Horizon Tasks

4.3. Memory Benchmarks

4.4. Multi-Agent Benchmarks

4.5. Self-Evaluation and Meta-Cognition

5. Evaluation Methodologies

5.1. Human Evaluation

5.2. Automated Evaluation

5.3. Simulation-Based Evaluation

5.4. Real-World Evaluation

6. Challenges and Gaps

- Limited Realism. Many benchmarks use synthetic tasks that do not reflect complex enterprise workflows. Realistic multi-step tasks with noisy data, ambiguous instructions and external dependencies are rare.

- Environment Diversity. Few benchmarks cover multiple domains or multi-agent settings. AgentBench is a notable exception, but it is still limited in scope.

- Process-Level Evaluation. Existing metrics focus on final outcomes. There is no consensus on how to evaluate planning traces, decision chains or intermediate tool calls. Pass@k metrics reveal reliability issues, because they show how often repeated attempts at the same task succeed, but they do not explain why an attempt failed.

- Safety and Alignment. Safety benchmarks often measure text toxicity but neglect tool misuse, prompt injection attacks and emergent behaviours. Cost, latency and policy compliance are seldom reported.

- Standardization. Benchmark definitions and scoring methods vary widely. Lack of standard metrics hinders comparison across studies.
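To make the Pass@k point above concrete, the following is a minimal sketch of one common way to compute it: the unbiased estimator popularized by the HumanEval benchmark, which estimates the probability that at least one of k sampled attempts succeeds. The source does not state which Pass@k definition it has in mind, so this choice is an assumption, and the function names `pass_at_k` and `mean_pass_at_k` are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one task from n sampled attempts, c of which succeeded.

    Uses 1 - C(n - c, k) / C(n, k): one minus the probability that a random
    subset of k attempts contains no successful attempt.
    """
    if n <= 0 or not 0 <= c <= n:
        raise ValueError("need n > 0 and 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k attempts failed, giving 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over tasks; each item is (attempts, successes) for one task."""
    if not results:
        raise ValueError("results must not be empty")
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Hypothetical data: three tasks, ten attempts each.
    runs = [(10, 3), (10, 0), (10, 10)]
    for k in (1, 5):
        print(f"pass@{k} = {mean_pass_at_k(runs, k):.3f}")
```

A large gap between pass@1 and pass@5 on the same task set signals that the agent can solve the tasks but does not do so consistently. The number alone says nothing about which step of the trace went wrong, which is the gap the process-level item describes.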

7. Future Directions

- Multi-Domain Agentic Benchmark Suites. Develop unified benchmark suites spanning web tasks, embodied environments, enterprise workflows, knowledge-intensive tasks and cooperative games. Such suites should provide diverse tasks with clear metrics.

- Continuous and Long-Term Evaluation. Assess agents over days or weeks to capture robustness to environment drift, memory retention and evolving instructions.

- Safety Stress Testing. Incorporate adversarial prompts, jailbreak attempts, harmful tool scenarios and policy compliance checks. Evaluate both textual and action-level safety.

- Multi-Agent Ecosystem Simulations. Create large-scale simulations of economies, supply chains or social systems to study emergent collaboration and competition. Metrics should evaluate coordination efficiency, fairness and emergent behaviours.

- Self-Evaluation Benchmarks. Design tasks that require agents to estimate confidence, detect errors and correct themselves. Investigate how self-reflection improves reliability and safety.

- Enterprise-Oriented Metrics. Adopt frameworks like CLEAR that integrate cost, latency, reliability, assurance and compliance. Provide tools to measure these metrics on real workloads.
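As an illustration of the self-evaluation direction above, one possible way to score an agent's confidence estimates is expected calibration error (ECE), which compares stated confidence with observed success rate inside confidence bins. The source does not prescribe this metric; it is swapped in here as a familiar example, and the function name, the equal-width binning and the sample data are all assumptions.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    outcomes: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Return ECE for self-reported confidences in [0, 1] and task outcomes.

    Each episode is placed in one of n_bins equal-width confidence bins.
    ECE is the size-weighted mean of |mean confidence - success rate| per bin.
    """
    if len(confidences) != len(outcomes):
        raise ValueError("confidences and outcomes must have the same length")
    if not confidences:
        raise ValueError("at least one episode is required")
    if n_bins < 1:
        raise ValueError("n_bins must be at least 1")

    bins: list[list[tuple[float, float]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, outcomes):
        if not 0.0 <= conf <= 1.0:
            raise ValueError("confidence values must lie in [0, 1]")
        # A confidence of exactly 1.0 falls into the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, 1.0 if ok else 0.0))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(conf for conf, _ in members) / len(members)
        success_rate = sum(hit for _, hit in members) / len(members)
        ece += (len(members) / total) * abs(mean_conf - success_rate)
    return ece


if __name__ == "__main__":
    # Hypothetical episodes: the agent reports 0.9 confidence but succeeds 3 of 4 times.
    print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, True, False]))
```

A value near zero means the agent's stated confidence tracks its actual success rate. A benchmark built on this idea could report the same score before and after a self-correction step to see whether reflection improves calibration.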

8. Balanced Framework and Adaptive Monitoring

9. Conclusions

References

- Shukla, M. A. Evaluating Agentic AI Systems: A Balanced Framework for Performance, Robustness, Safety and Beyond. TechRxiv 2025.

- Shukla, M. Adaptive Monitoring and Real-World Evaluation of Agentic AI Systems. arXiv preprint. 2025. Available online: https://arxiv.org/abs/2509.00115.

- Shukla, M.; Yemi Reddy, J. Agentic Sign Language: Balanced Evaluation and Adaptive Monitoring for Inclusive Multimodal Communication. Preprints.org 2025.

- Mehta, S.; et al. CLEAR: A Holistic Evaluation Framework for Enterprise Agentic AI. arXiv preprint 2025, arXiv:2511.14136.

- Tan, X.; et al. MemBench: Evaluating Memory in Large Language Model Agents. arXiv preprint 2025, arXiv:2506.21605.

- Liu, B.; et al. AgentBench: Benchmarking LLMs as Agents. arXiv preprint 2023, arXiv:2308.03688.

- Valmeekam, K.; et al. PlanBench: A Benchmark for Reasoning about Change Using LLMs. arXiv preprint 2022, arXiv:2206.10498.

- Guo, D.; et al. StableToolBench: Enabling Stable Tool Learning for Large Language Models. arXiv preprint 2025, arXiv:2403.07714.

- Qian, J.; et al. ChatDev: Collaborating with Large Language Model Agents for Software Development. arXiv preprint 2023, arXiv:2307.07924.

- Liang, P.; et al. Holistic Evaluation of Language Models. arXiv preprint 2022, arXiv:2211.09110.

- Shridhar, M.; et al. ALFWorld: Aligning Text and Embodied Environments for Interactive Instruction Following. arXiv preprint 2020, arXiv:2010.03768.

- Yao, A.; et al. WebArena: A Realistic Web Environment for Building Autonomous Agents. arXiv preprint 2023, arXiv:2307.09288.

- Ma, W.; et al. AutoMeco: Evaluating and Improving Intrinsic Meta-Cognition in Large Language Models. arXiv preprint 2025, arXiv:2506.08410.

- Wan, Y.; Ma, W. StoryBench: A Dynamic Benchmark for Long-Term Memory Reasoning. arXiv preprint 2025, arXiv:2506.13356.

- Anonymous. Reflection-Bench: Evaluating Epistemic Agency in Large Language Models. Submitted manuscript 2025.

- Manakul, P.; et al. SelfCheckGPT: Zero-Resource Hallucination Detection for Generative Language Models. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP 2023), 2023.

- Hendrycks, D.; et al. Measuring Massive Multitask Language Understanding. arXiv preprint 2020, arXiv:2009.03300.

- Cobbe, K.; et al. Training Verifiers to Solve Math Word Problems. arXiv preprint 2021, arXiv:2110.14168.

- Srivastava, A.; et al. Beyond the Imitation Game: Quantifying and Extrapolating the Capabilities of Language Models. Proceedings of the AAAI Conference on Artificial Intelligence 2023, 37(13), 12474–12485.

| Category | Example Benchmarks | Key Metrics |
| --- | --- | --- |
| Reasoning | MMLU, GSM8K, BigBench/BBH, ARC Challenge | Subject accuracy, reasoning depth |
| Language Understanding | GLUE, SuperGLUE, SQuAD | Accuracy, F1 score |
| Summarization | XSum, CNN/Daily Mail | ROUGE, BERTScore |
| Multimodal | MMMU, SEED, MathVista, ChartQA | Accuracy across modalities |
| Coding | HumanEval, MBPP, RepoBench | Pass@k, functional correctness |
| Safety | HELM Safety, AIR-Bench, FACTS | Toxicity rates, factuality |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).