Submitted:

11 December 2025

Posted:

12 December 2025


Abstract

Keywords:

MSC: 94-02

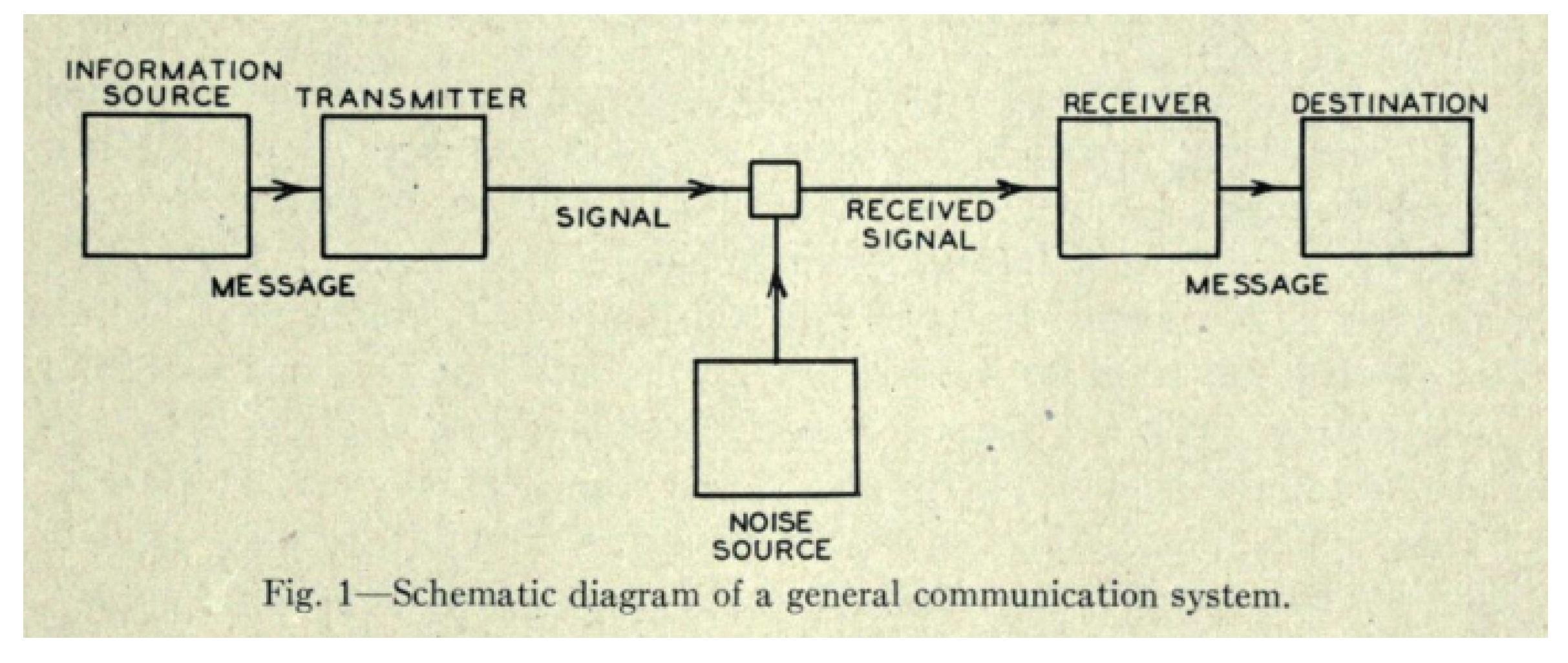

1. Introduction

1.1. Aim and Scope

1.2. Genesis & Historical Lineage

1.3. Paper Structure

- We start by reviewing the material needed to understand the so-called Information Theory Laws,

- Second, we linger on the two main flavors of information inequalities, i.e., Shannon-type and non-Shannon-type inequalities, before focusing on the Information Theory Laws and bringing the attention to

- Third, we offer two retrospectives: 1) on the machine-assisted verification of information inequalities, 2) on how research has evolved with respect to the complexity of verifying these inequalities.

- Finally, we close this study with the impressions left by our work in, and exploration of, this field.

2. Preliminaries and Main Concepts

2.1. Definitions: Shannon Information Measures

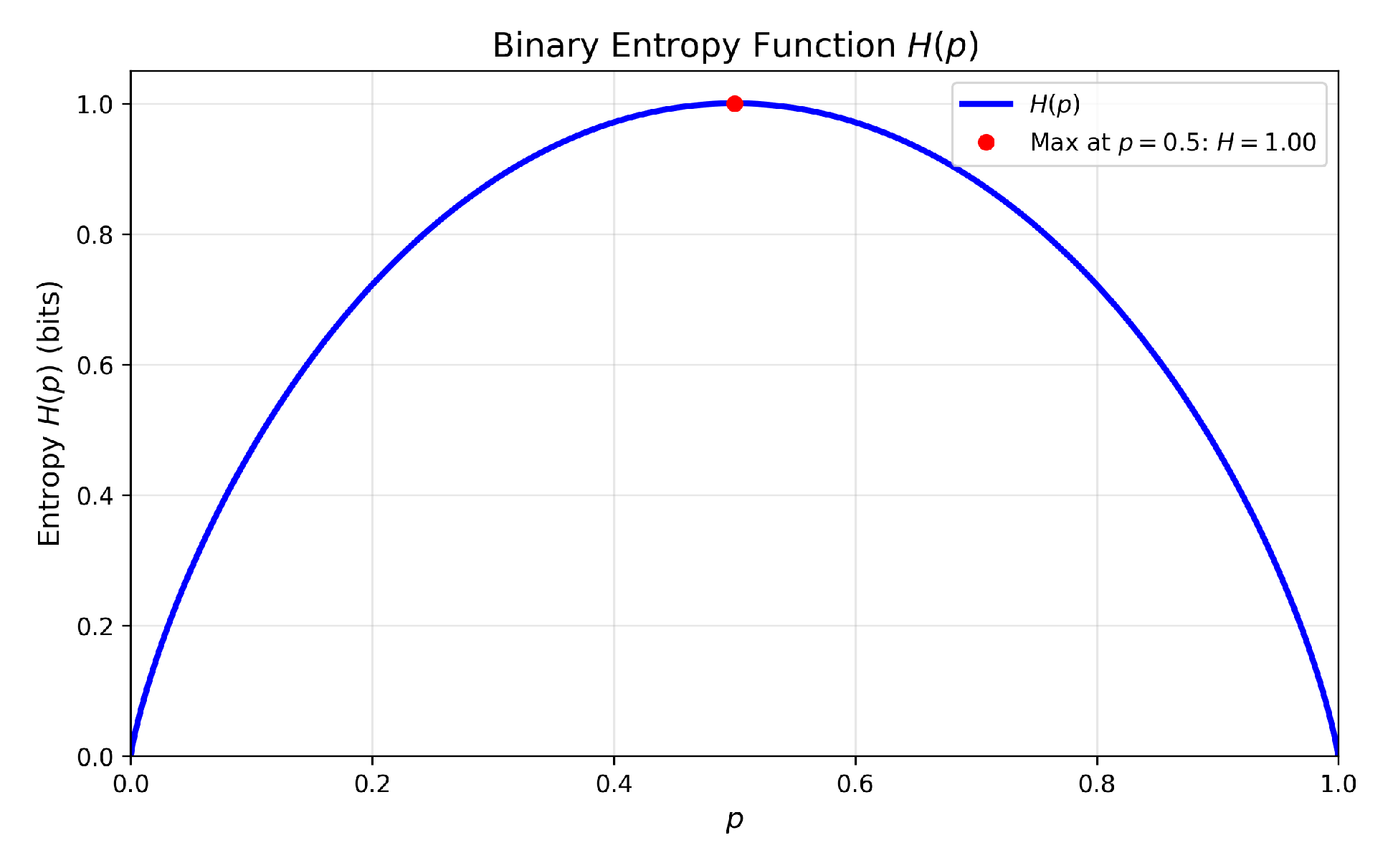

2.1.1. Shannon Entropy

2.1.2. Entropy Function –

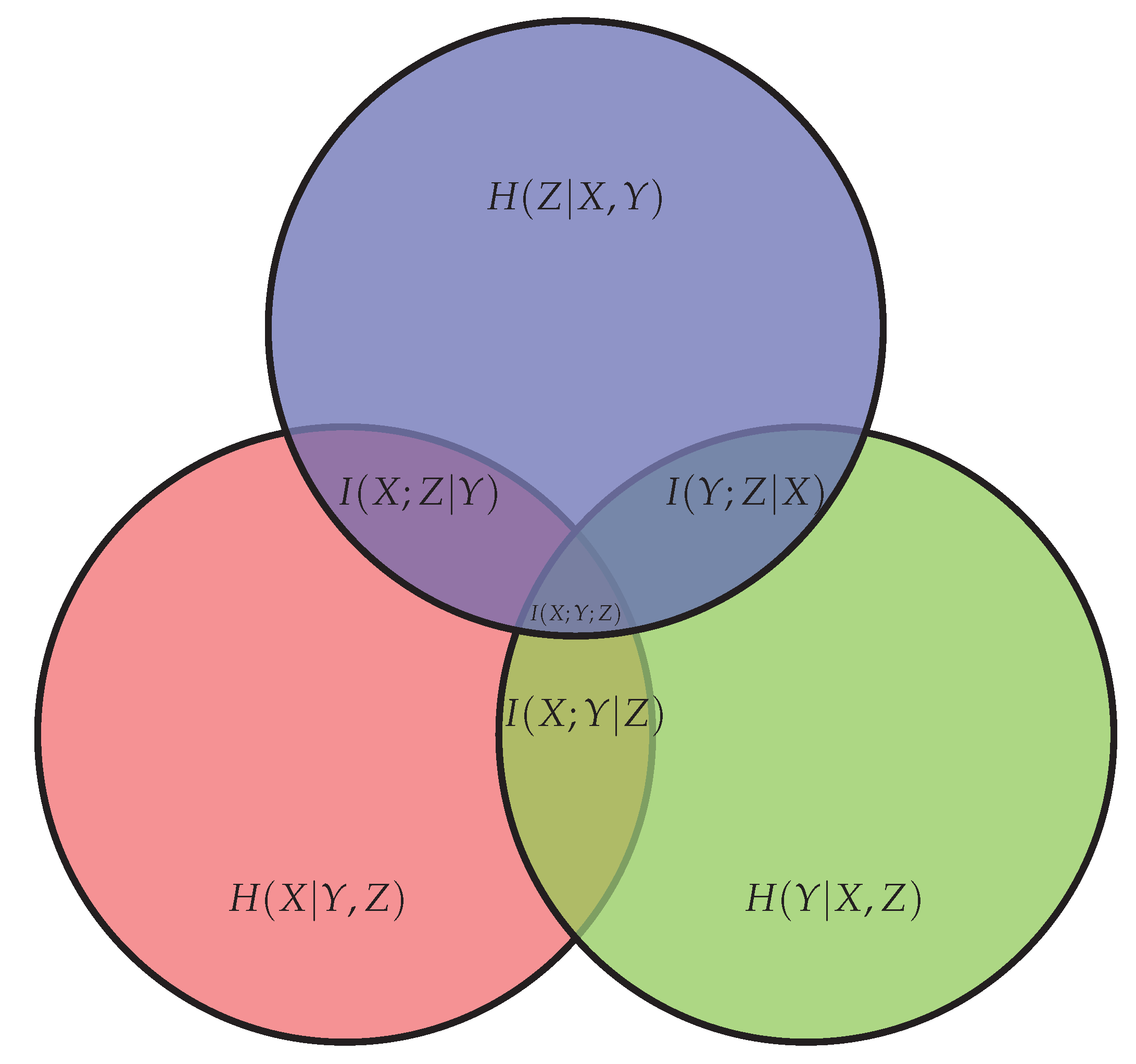

2.1.3. Conditional Entropy, Conditional and Mutual Information

- (3) – Conditional Entropy,

- (4) – Mutual Information,

- (5) – Conditional Mutual Information.
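The three measures listed above can be sketched numerically. The snippet below is an illustration only: it assumes the standard textbook identities H(A|B) = H(A,B) - H(B), I(A;B) = H(A) + H(B) - H(A,B) and I(A;B|C) = H(A,C) + H(B,C) - H(A,B,C) - H(C), which may be written differently in Equations (3)–(5). The function names and the XOR example are hypothetical choices made for this sketch.

```python
from collections import defaultdict
from math import log2

def marginal(joint, idx):
    """Marginalize a joint pmf {outcome tuple: prob} onto the positions in idx."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in idx)] += p
    return dict(out)

def H(joint, idx):
    """Shannon entropy (in bits) of the variables at positions idx."""
    return -sum(p * log2(p) for p in marginal(joint, idx).values() if p > 0)

def cond_entropy(joint, a, b):
    """H(A|B) = H(A,B) - H(B)."""
    return H(joint, a + b) - H(joint, b)

def mutual_info(joint, a, b):
    """I(A;B) = H(A) + H(B) - H(A,B)."""
    return H(joint, a) + H(joint, b) - H(joint, a + b)

def cond_mutual_info(joint, a, b, c):
    """I(A;B|C) = H(A,C) + H(B,C) - H(A,B,C) - H(C)."""
    return H(joint, a + c) + H(joint, b + c) - H(joint, a + b + c) - H(joint, c)

# Illustrative example: X and Y are independent fair bits, Z = X XOR Y.
xor = {(x, y, x ^ y): 0.25 for x in (0, 1) for y in (0, 1)}

print(H(xor, (0,)))                              # 1.0
print(cond_entropy(xor, (2,), (0,)))             # 1.0
print(mutual_info(xor, (0,), (1,)))              # 0.0
print(cond_mutual_info(xor, (0,), (1,), (2,)))   # 1.0
```

In this example X and Y are independent, yet they become fully dependent once Z is known, which is why the mutual information is 0 while the conditional mutual information is 1 bit.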

2.1.4. Entropy Space –

2.1.5. {Entropy, Shannon} Regions –

2.2. Linear Inequalities

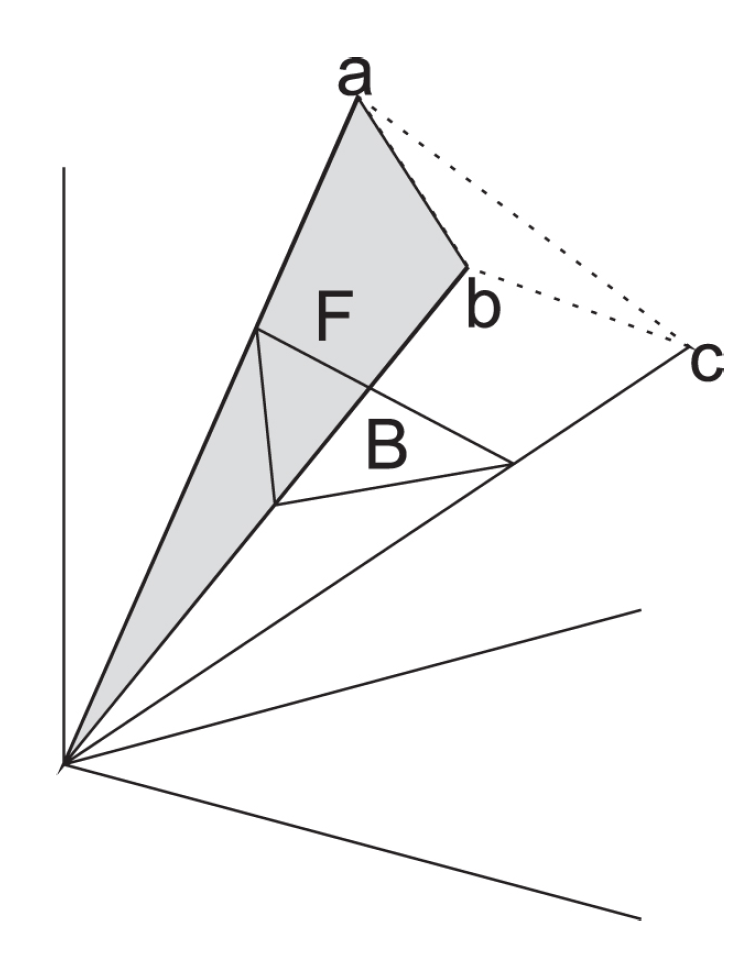

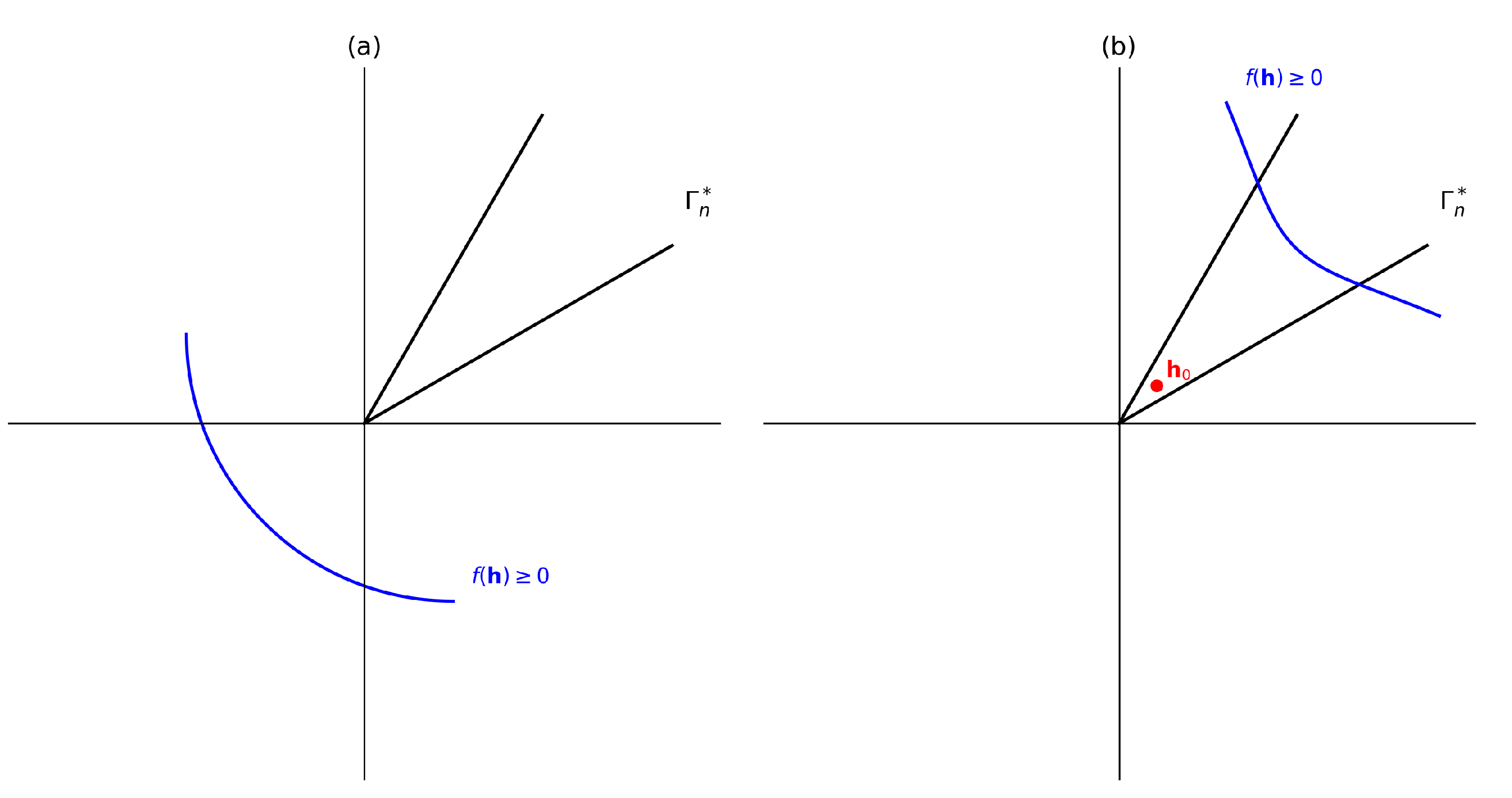

2.3. Geometric Framework

- The identification of Shannon-type inequalities through a geometric form and an interactive theorem verifier, i.e., an automated way to generate, verify, and prove inequalities built from the canonical form,

- Being able to prove its correctness, or;

- Disproving it by providing a counterexample.

- The inequality is true but is not of Shannon type: it belongs to the non-Shannon-type (3.2) information inequalities, of which there are infinitely many, or;

- The inequality is not true in general, i.e., there exist counterexamples that disprove it.
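The "disprove by counterexample" branch can be illustrated with a small random search. This is a minimal sketch under stated assumptions, not how ITIP or its successors work: those tools rely on linear programming over the Shannon region, whereas the code below simply samples random joint distributions of binary variables. All function names are hypothetical.

```python
import random
from collections import defaultdict
from math import log2

def entropy(joint, idx):
    """Entropy (bits) of the variables at positions idx of a joint pmf."""
    marg = defaultdict(float)
    for outcome, p in joint.items():
        marg[tuple(outcome[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marg.values() if p > 0)

def random_joint(n, rng):
    """Random pmf over n binary variables (illustrative sampling scheme)."""
    outcomes = [tuple((k >> i) & 1 for i in range(n)) for k in range(2 ** n)]
    weights = [rng.random() for _ in outcomes]
    total = sum(weights)
    return {o: w / total for o, w in zip(outcomes, weights)}

def find_counterexample(expr, n, trials=500, seed=0, tol=1e-9):
    """Look for a pmf with expr(joint) < 0, i.e. one that violates 'expr >= 0'."""
    rng = random.Random(seed)
    for _ in range(trials):
        joint = random_joint(n, rng)
        if expr(joint) < -tol:
            return joint
    return None  # no violation found; this is NOT a proof of validity

# Valid Shannon-type inequality: I(X;Y) >= 0.
def mi(j):
    return entropy(j, (0,)) + entropy(j, (1,)) - entropy(j, (0, 1))

# Invalid candidate: H(X,Y) - H(X) - H(Y) >= 0 (it equals -I(X;Y)).
def bad(j):
    return entropy(j, (0, 1)) - entropy(j, (0,)) - entropy(j, (1,))

print(find_counterexample(mi, 2) is None)
print(find_counterexample(bad, 2) is not None)
```

A returned distribution is a genuine counterexample, but an empty search only suggests that the inequality may hold. Establishing validity requires a proof, for instance the linear-programming argument used for Shannon-type inequalities.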

2.4. Polymatroid Axioms


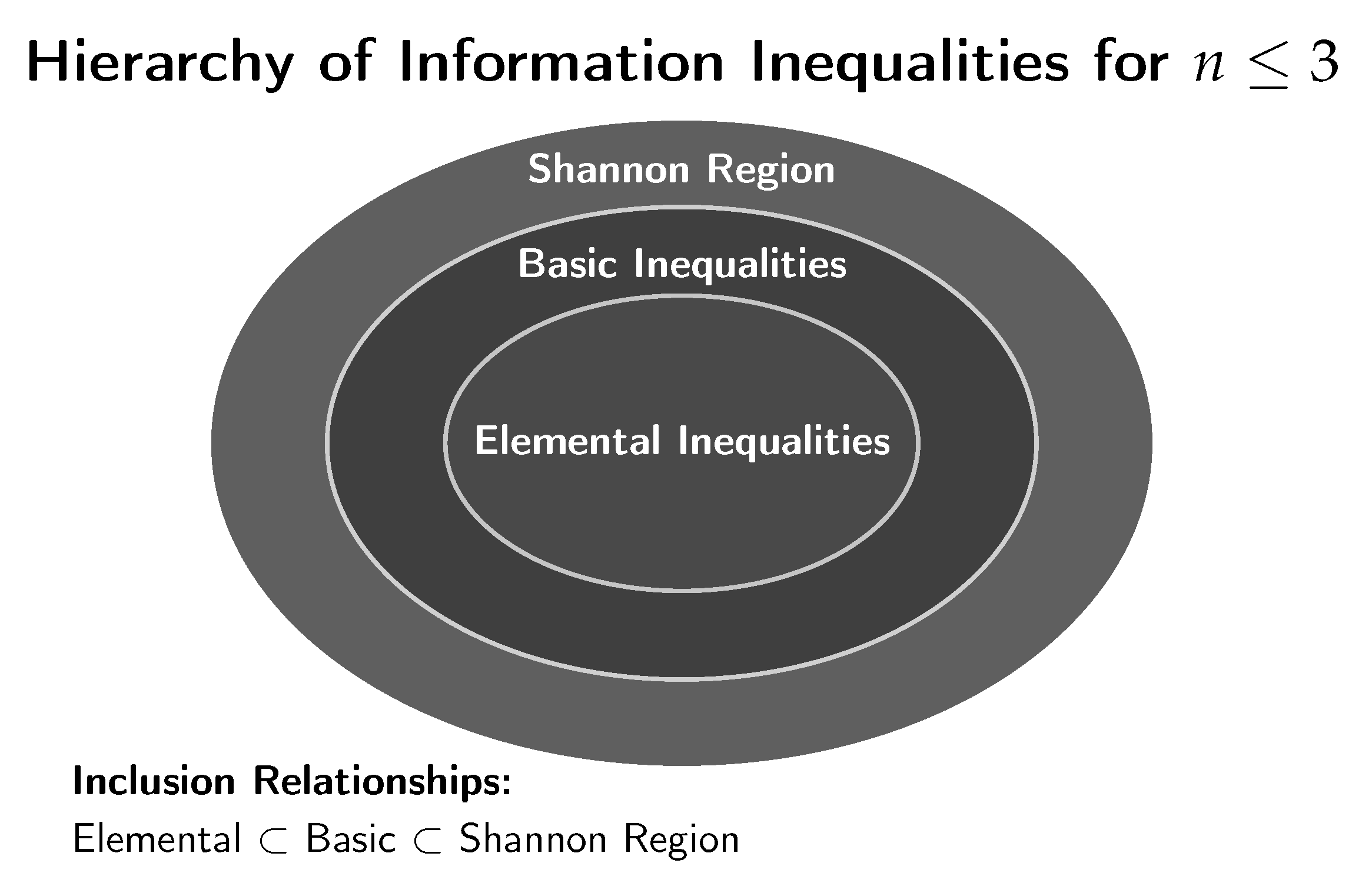

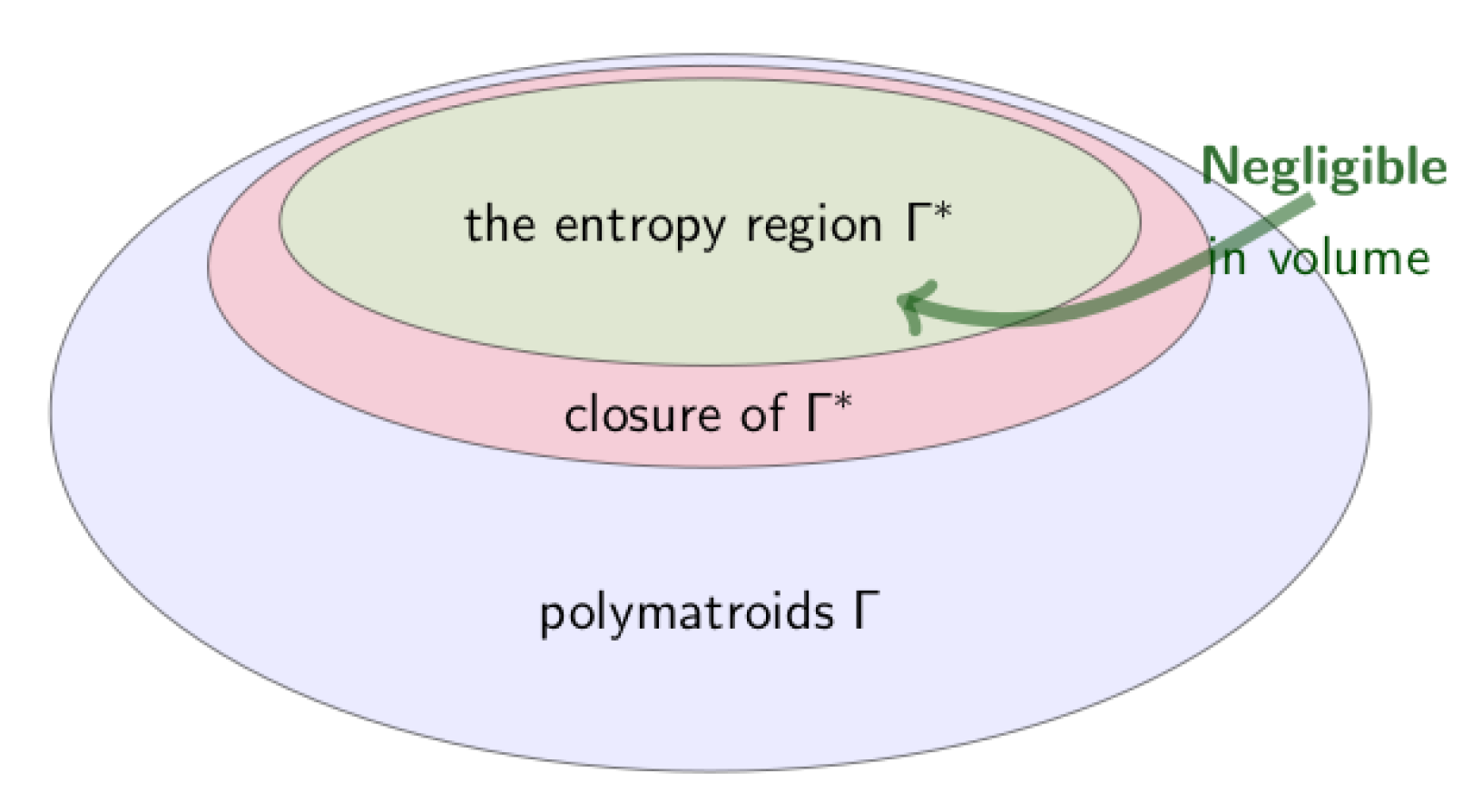

- The region $\Gamma_n$ (Shannon region) consists of all vectors that satisfy the elemental inequalities,

- The region $\Gamma_n^*$ describes the space of entropic vectors, i.e., those that correspond to actual entropy functions for some collection of d.r.v. A vector is called entropic if and only if it belongs to $\Gamma_n^*$.

- The region $\overline{\Gamma}_n^*$ is the closure of $\Gamma_n^*$, also called the almost entropic region.
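To make the elemental inequalities that define the Shannon region concrete, the sketch below enumerates them for n variables. It assumes the usual two families from Yeung's framework: H(X_i | all other variables) >= 0 and I(X_i; X_j | X_K) >= 0 for i < j and K a subset of the remaining variables. The function name and the string output format are hypothetical.

```python
from itertools import combinations

def elemental_inequalities(n):
    """Return the elemental inequalities for variables 1..n as readable strings."""
    ground = range(1, n + 1)
    out = []
    # Family 1: H(X_i | all other variables) >= 0.
    for i in ground:
        rest = [str(j) for j in ground if j != i]
        cond = "|" + ",".join(rest) if rest else ""
        out.append(f"H({i}{cond}) >= 0")
    # Family 2: I(X_i; X_j | X_K) >= 0 for i < j, K a subset of the others.
    for i, j in combinations(ground, 2):
        others = [k for k in ground if k not in (i, j)]
        for r in range(len(others) + 1):
            for K in combinations(others, r):
                cond = "|" + ",".join(map(str, K)) if K else ""
                out.append(f"I({i};{j}{cond}) >= 0")
    return out

for n in (2, 3, 4):
    print(n, len(elemental_inequalities(n)))  # 3, 9 and 28 inequalities
```

The count follows n + C(n,2) * 2^(n-2), so the number of constraints describing the Shannon region grows exponentially with n.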

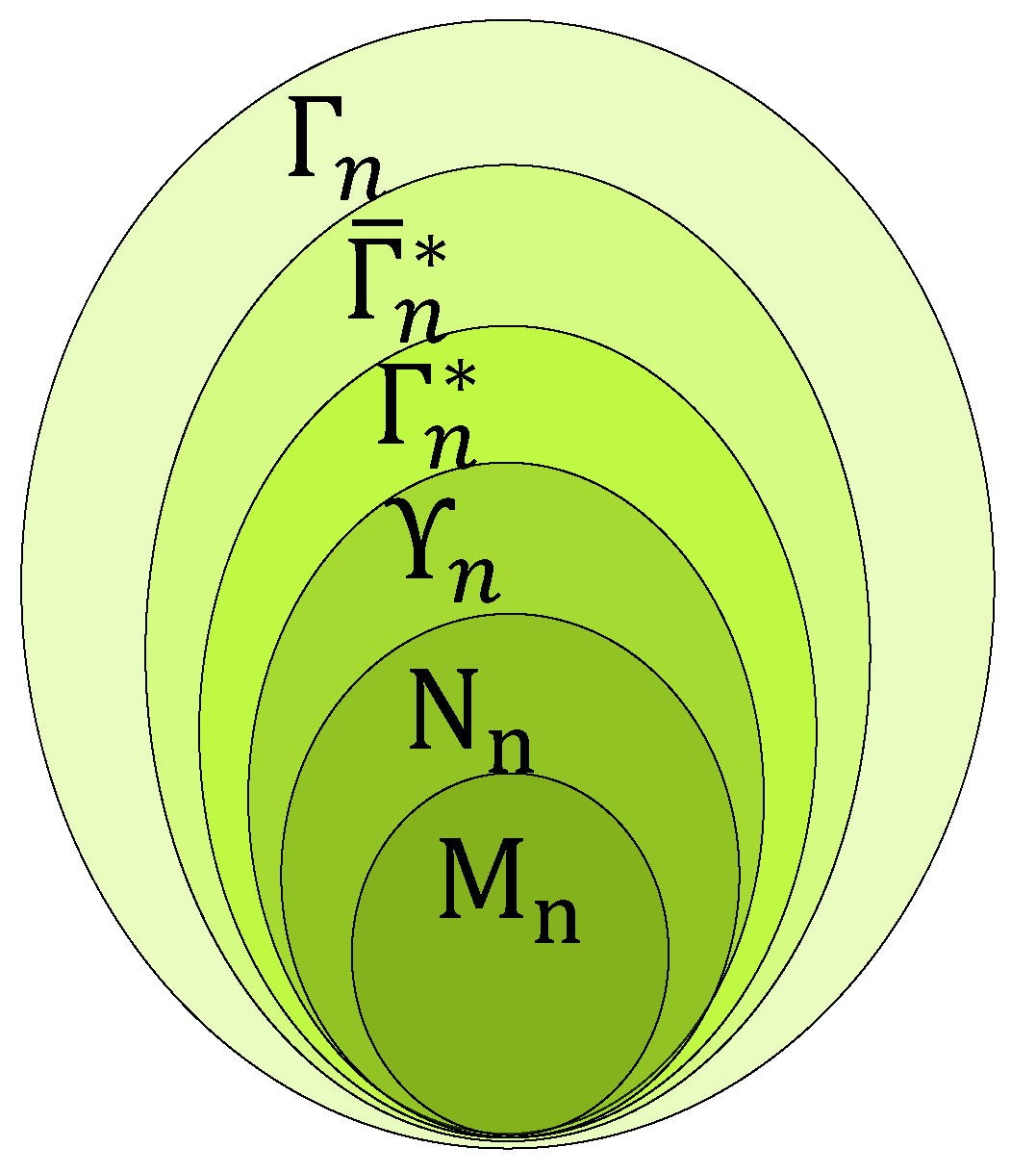

| Region Symbol | Meaning |
|---|---|
| | Modular Polymatroids |
| | Normal Polymatroids |
| | Group Realizable |
| | Entropic |
| | Almost-Entropic |
| | Polymatroid |


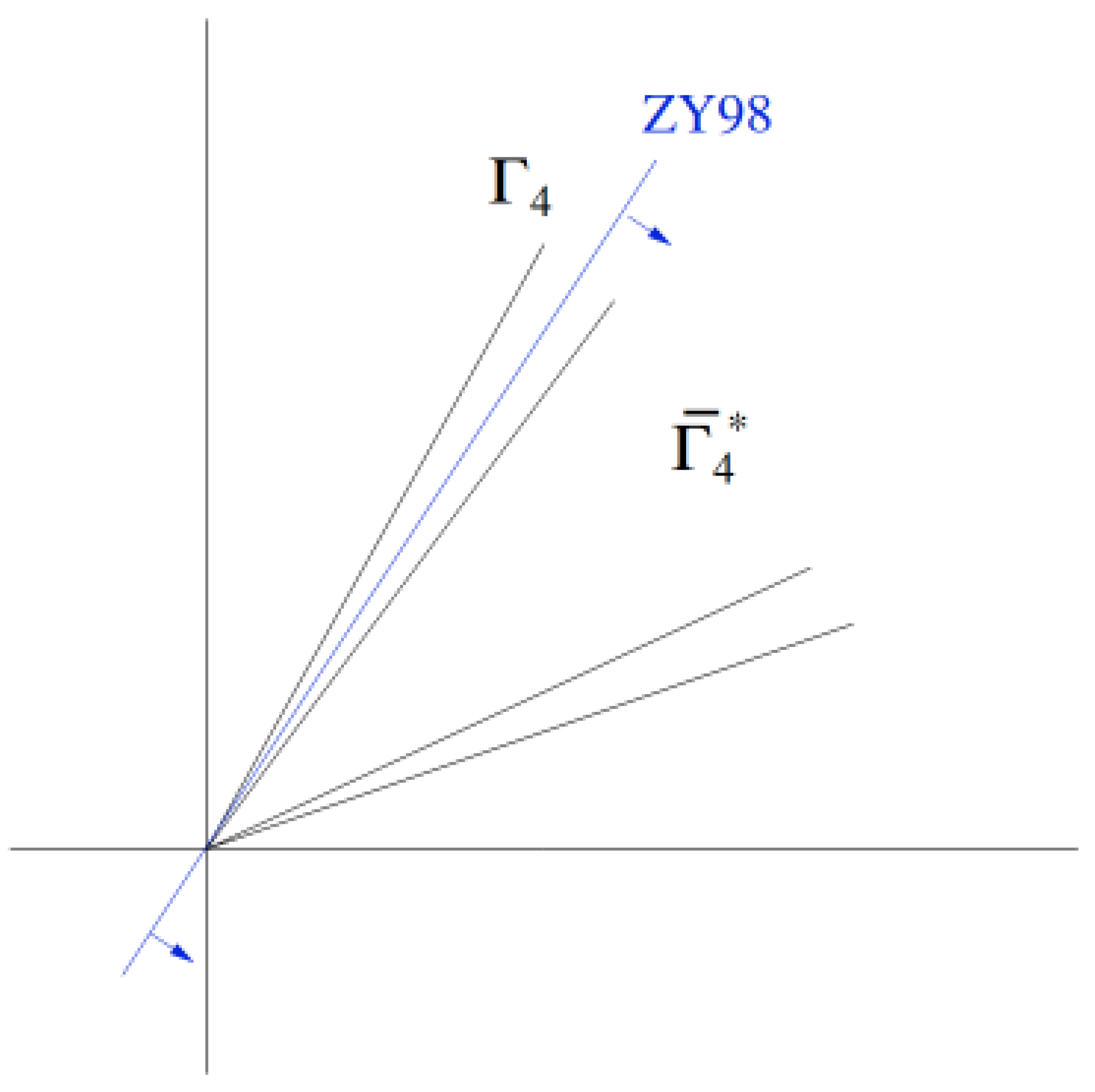

- but i.e. is the smallest cone containing (),

- Little is known for behavior for and for given ,

- In general, is a convex cone,

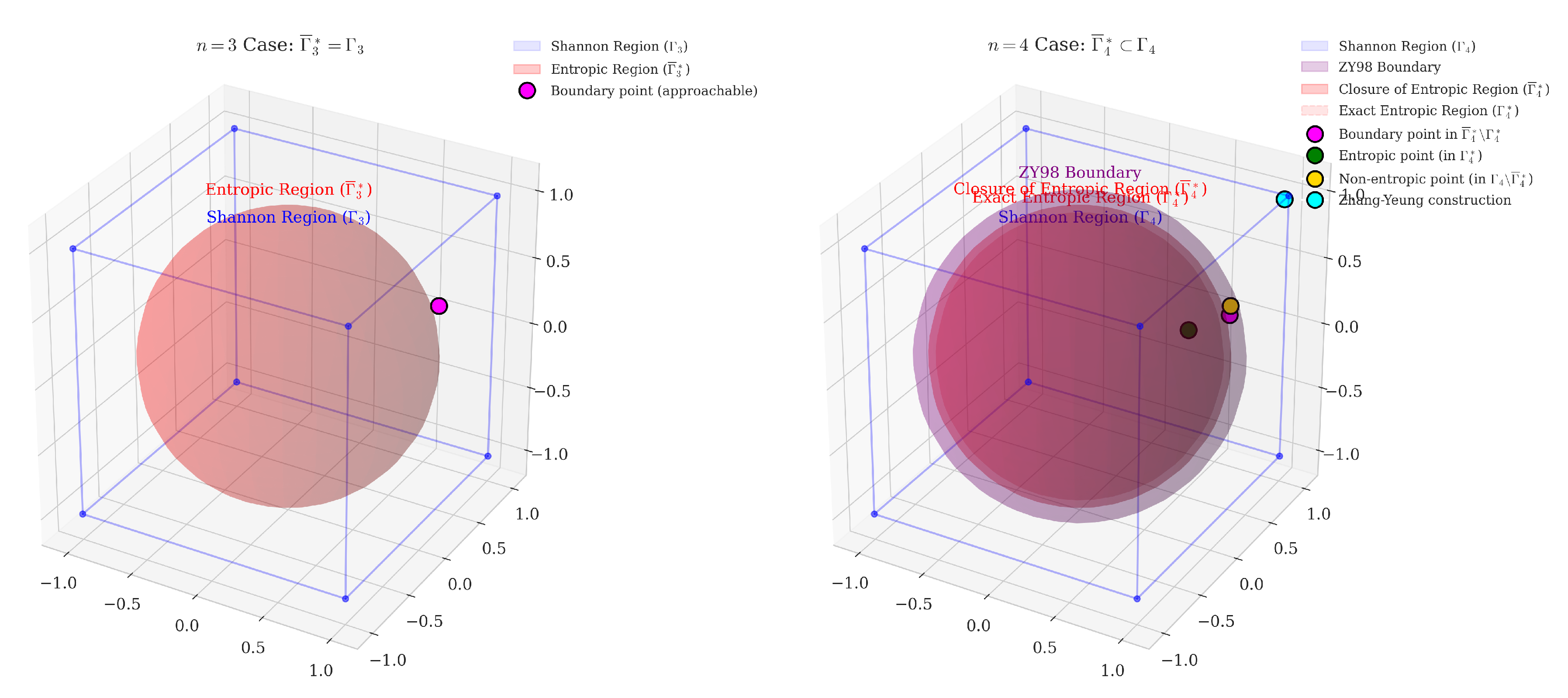

2.4.1. Polymatroids Visualizations

- A polymatroid convex cone in and the concept of facet,

- The hierarchy relation between elemental inequalities, basic inequalities and the Shannon region () for d.r.v.,

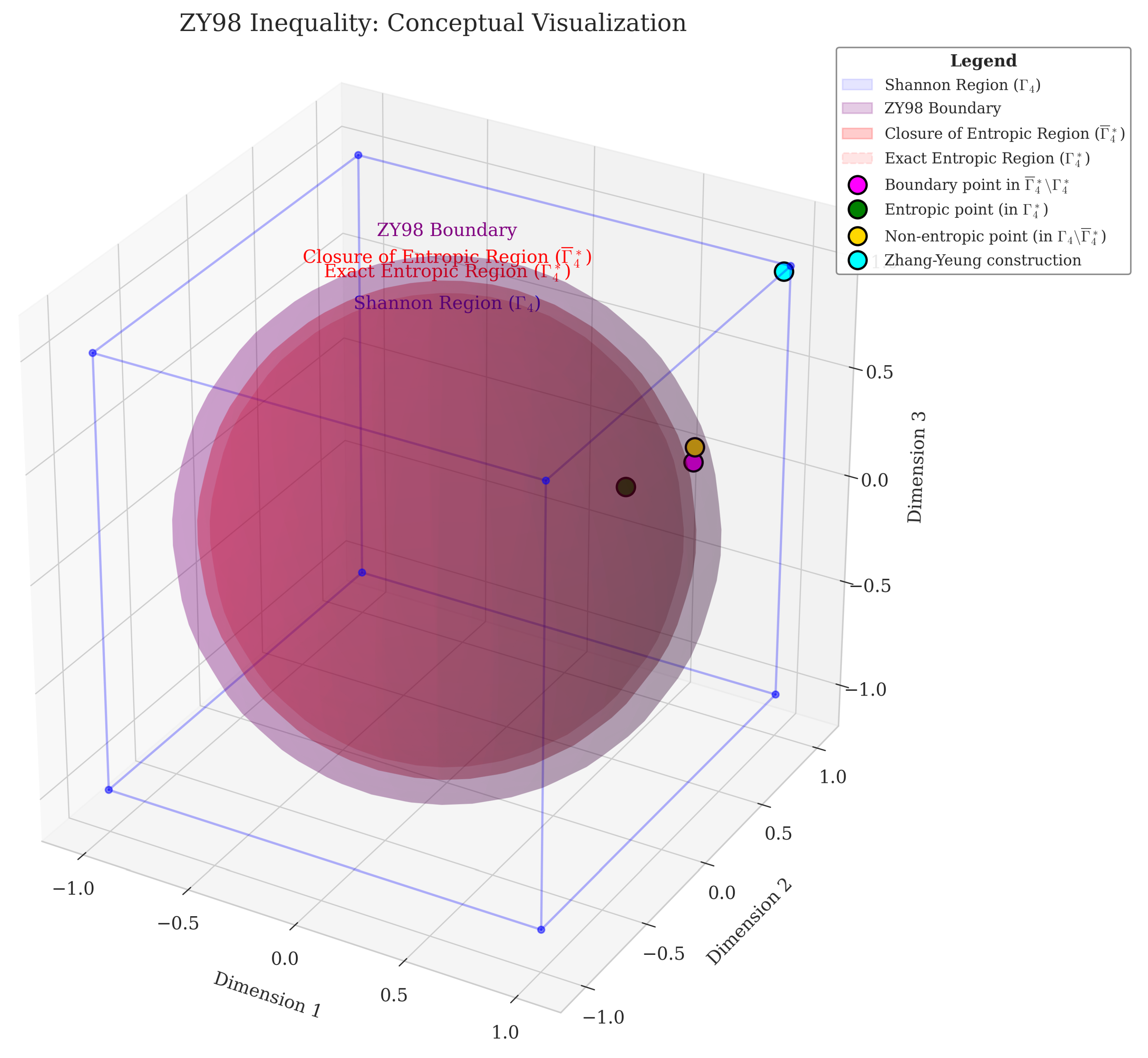

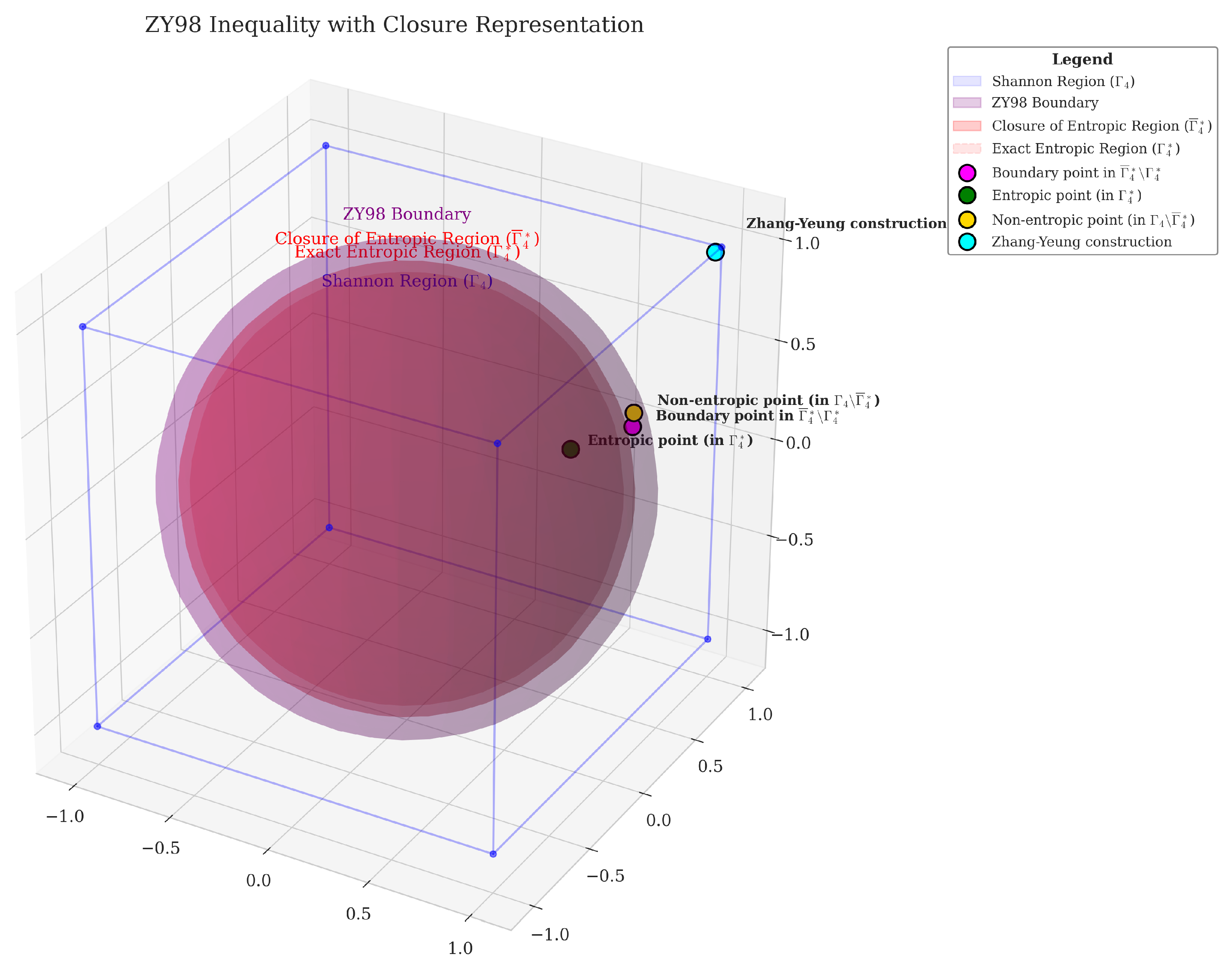

- Notes – Figure 8:

- (Exact Entropic Region) is the sphere in red,

- The closure of Exact Entropic Region ,

- The purple surface is the ZY98 boundary defined by the ZY98 inequality,

- (Shannon region) is the cube in blue – points satisfying the basic inequalities,

- Magenta point(s): boundary points in ,

- Green point(s): entropic points in ,

- Gold point(s): non-entropic points in (satisfying basic inequalities but non-entropic),

- Cyan point(s): Zhang-Yeung construction along the extreme ray [20].

2.4.2. Remarks – Visualizations “hacks” cube/sphere

2.4.3. Polymatroid Properties

| Property | Meaning |
|---|---|
| Nonnegativity | |
| Monotonicity | |
| Submodularity | |
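The three properties can be checked mechanically on a candidate set function. The sketch below assumes the standard formulation of the polymatroid axioms, with h(∅) = 0: nonnegativity h(A) >= 0, monotonicity h(A) <= h(B) whenever A ⊆ B, and submodularity h(A) + h(B) >= h(A ∪ B) + h(A ∩ B). The dictionary representation and the function names are hypothetical choices for illustration.

```python
from itertools import combinations

def subsets(ground):
    """All subsets of the ground set, as frozensets."""
    items = sorted(ground)
    return [frozenset(c) for r in range(len(items) + 1)
            for c in combinations(items, r)]

def is_polymatroid(h, ground, tol=1e-9):
    """Check the polymatroid axioms for h: {frozenset: value} over all subsets."""
    all_sets = subsets(ground)
    if abs(h[frozenset()]) > tol:          # normalization h(empty set) = 0
        return False
    for a in all_sets:
        if h[a] < -tol:                     # nonnegativity
            return False
        for b in all_sets:
            if a <= b and h[a] > h[b] + tol:                  # monotonicity
                return False
            if h[a] + h[b] + tol < h[a | b] + h[a & b]:       # submodularity
                return False
    return True

ground = {1, 2, 3}
independent_bits = {s: len(s) for s in subsets(ground)}   # h(S) = |S|
squared = {s: len(s) ** 2 for s in subsets(ground)}       # supermodular, fails

print(is_polymatroid(independent_bits, ground))  # True
print(is_polymatroid(squared, ground))           # False
```

The brute-force check compares every pair of subsets, which is fine for small n; for larger ground sets one would test only the elemental inequalities.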

2.5. Notation

| Symbol | Meaning |
|---|---|
| | Entropy Space (in ) |
| | Entropy Vector |
| f | (Linear) Information Expression (IE) |
| | Linear Combination on IE |
| or | Entropic Region |
| | Almost Entropic Region (closure of ) |
| | Shannon Region |
| ZY98 | Unconstrained Non-Shannon-type Zhang-Yeung Inequality (2) |
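As an illustration of how a linear information expression reduces to a linear combination of joint entropies (the canonical form), the sketch below expands entropies and mutual informations into coefficient dictionaries keyed by variable subsets. The representation and the helper names `combine`, `H` and `I` are hypothetical; only the standard identities H(A|C) = h(A ∪ C) - h(C) and I(A;B|C) = h(A ∪ C) + h(B ∪ C) - h(A ∪ B ∪ C) - h(C) are assumed.

```python
def combine(*terms):
    """Sum (coefficient, expression) pairs and drop zero coefficients."""
    out = {}
    for coeff, expr in terms:
        for subset, c in expr.items():
            out[subset] = out.get(subset, 0) + coeff * c
    return {s: c for s, c in out.items() if c != 0}

def H(a, given=()):
    """Canonical form of H(A|C) as {subset: coefficient}; h(empty set) is omitted."""
    a, c = frozenset(a), frozenset(given)
    return combine((1, {a | c: 1}), (-1, {c: 1} if c else {}))

def I(a, b, given=()):
    """Canonical form of I(A;B|C)."""
    a, b, c = frozenset(a), frozenset(b), frozenset(given)
    return combine((1, H(a | c)), (1, H(b | c)),
                   (-1, H(a | b | c)), (-1, H(c) if c else {}))

# I(X;Y|Z) expands to h(XZ) + h(YZ) - h(XYZ) - h(Z).
print(I({"X"}, {"Y"}, {"Z"}))

# The identity H(X|Y) + I(X;Y) - H(X) = 0 has an all-zero canonical form.
print(combine((1, H({"X"}, {"Y"})), (1, I({"X"}, {"Y"})), (-1, H({"X"}))))  # {}
```

Once every expression is in this form, checking an identity amounts to checking that all coefficients vanish, and checking an inequality becomes a question about a single coefficient vector.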

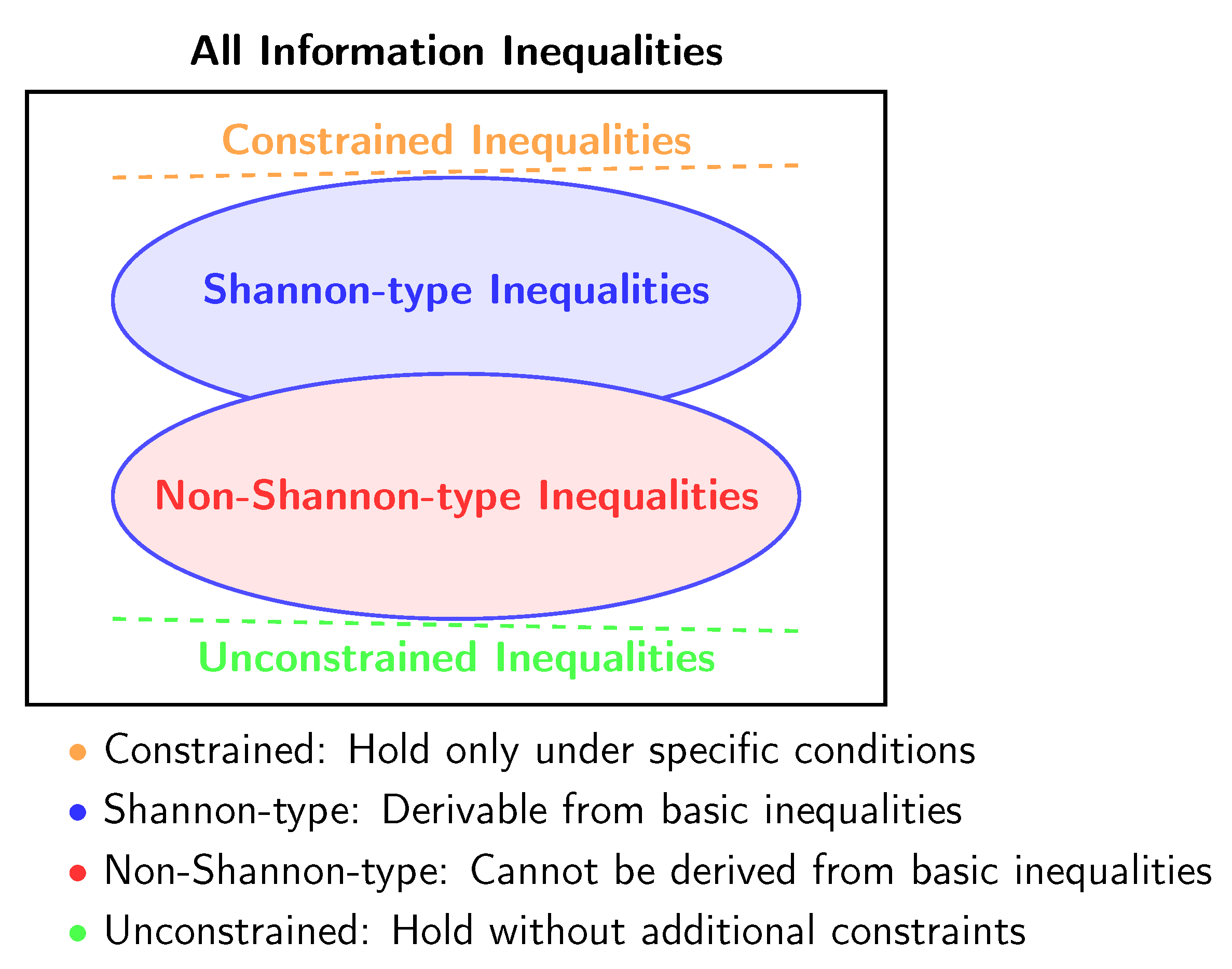

3. Spectrum of Information Inequalities

3.1. Shannon-type Inequalities

Constrained and Unconstrained Shannon-type Inequalities

3.2. Non-Shannon-type Inequalities

Constrained and Unconstrained Non-Shannon-type Inequalities:

3.3. Laws of Information Theory

3.4. Except Lin as Laws 7

The first law of information theory: the total amount of data L (the sum of entropy and information, L = S + I ) of an isolated system remains unchanged.

The second law of information theory: Information (I) of an isolated system decreases to a minimum at equilibrium.

The third law of information theory: For a solid structure of perfect symmetry (e.g., a perfect crystal), the information I is zero and the (information theory) entropy (called by me as static entropy for solid state) S is at the maximum.

4. Information Inequalities – Timelines

- We start by highlighting the inflection point(s) from where the use of “mechanization” becomes required (4.1).

- Then, we present a timeline of the main machine-assisted software for information inequality verification.

- Finally, we give a historical/broader overview of these evolutions.

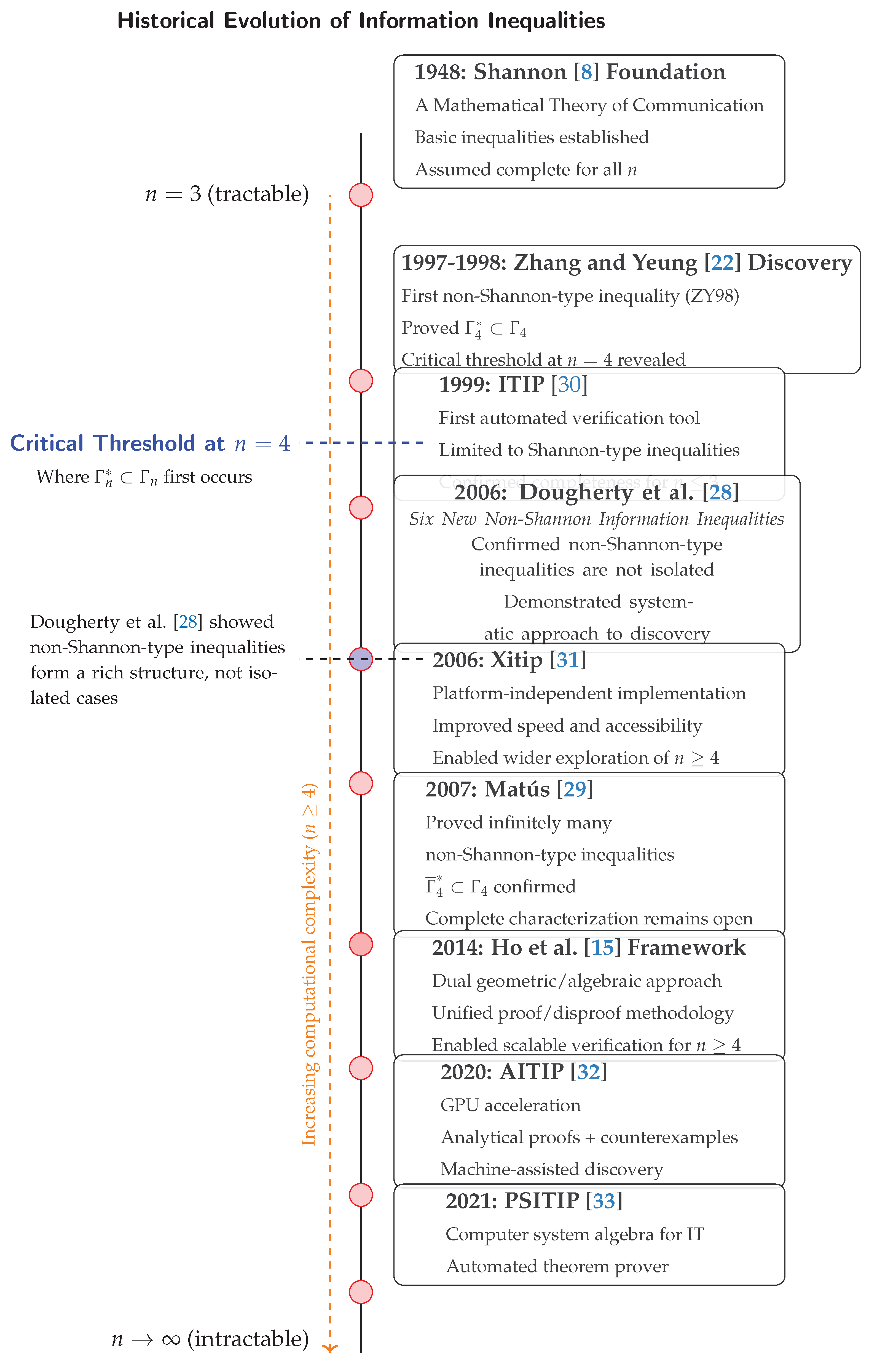

4.1. The Critical Threshold: and

- For $n = 2$: $\Gamma_2^* = \Gamma_2$, the basic inequalities are complete; the space of entropic vectors is completely characterized by the Shannon region ($\Gamma_2$).

- For $n = 3$: $\Gamma_3^* \subsetneq \Gamma_3$ (proper subset), but $\overline{\Gamma}_3^* = \Gamma_3$; all information inequalities are of Shannon-type, yet the space of entropic vectors ($\Gamma_3^*$) is strictly contained within the Shannon region, though its closure equals the Shannon region ($\Gamma_3$).

- For $n \geq 4$: $\Gamma_n^* \subsetneq \Gamma_n$ and $\overline{\Gamma}_n^* \subsetneq \Gamma_n$; the basic inequalities are insufficient because there exist non-Shannon-type inequalities that cannot be derived from them; the space of entropic vectors ($\Gamma_n^*$) is strictly contained within the Shannon region ($\Gamma_n$), with additional constraints beyond Shannon-type inequalities.

- n=2 variables: the simple case, where the basic inequalities completely characterize all possible information relationships.

- n=3 variables: still theoretically manageable, which is why early researchers could believe that the basic inequalities were universally sufficient.

- n=4 variables: the critical threshold where everything changed. The Zhang-Yeung discovery [22] showed that: 1) the basic inequalities are no longer complete, 2) non-Shannon-type inequalities exist, 3) the verification complexity increases drastically.
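The gap that opens at four variables can be illustrated numerically. The sketch below assumes the ZY98 inequality in the form commonly stated in the literature (e.g., Yeung [12]), 2I(X3;X4) <= I(X1;X2) + I(X1;X3,X4) + 3I(X3;X4|X1) + I(X3;X4|X2); the labeling of the variables may differ from Equation (2). The vector `h_bad` is an illustrative choice: it satisfies the polymatroid (Shannon) axioms yet violates ZY98, so it cannot be almost entropic. Function names are hypothetical.

```python
from itertools import combinations

GROUND = (1, 2, 3, 4)
SUBSETS = [frozenset(c) for r in range(5) for c in combinations(GROUND, r)]

def I(h, a, b, c=()):
    """I(A;B|C) = h(A∪C) + h(B∪C) - h(A∪B∪C) - h(C)."""
    a, b, c = frozenset(a), frozenset(b), frozenset(c)
    return h[a | c] + h[b | c] - h[a | b | c] - h[c]

def satisfies_shannon(h):
    """h(∅) = 0, monotone and submodular, i.e. h lies in the Shannon region."""
    return (h[frozenset()] == 0
            and all(h[a] <= h[b] for a in SUBSETS for b in SUBSETS if a <= b)
            and all(h[a] + h[b] >= h[a | b] + h[a & b]
                    for a in SUBSETS for b in SUBSETS))

def zy98_slack(h):
    """Right-hand side minus left-hand side of ZY98; negative means violated."""
    rhs = (I(h, {1}, {2}) + I(h, {1}, {3, 4})
           + 3 * I(h, {3}, {4}, {1}) + I(h, {3}, {4}, {2}))
    return rhs - 2 * I(h, {3}, {4})

def candidate(s):
    """Illustrative polymatroid: singletons 2, pair {1,2} 4, other pairs 3, rest 4."""
    if len(s) == 0:
        return 0
    if len(s) == 1:
        return 2
    if len(s) == 2:
        return 4 if s == frozenset({1, 2}) else 3
    return 4

h_bad = {s: candidate(s) for s in SUBSETS}
h_indep = {s: len(s) for s in SUBSETS}  # four independent fair bits, entropic

print(satisfies_shannon(h_bad), zy98_slack(h_bad))      # True -1
print(satisfies_shannon(h_indep), zy98_slack(h_indep))  # True 0
```

The first vector passes every Shannon-type test but fails ZY98, which is exactly the situation that cannot occur for two or three variables.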

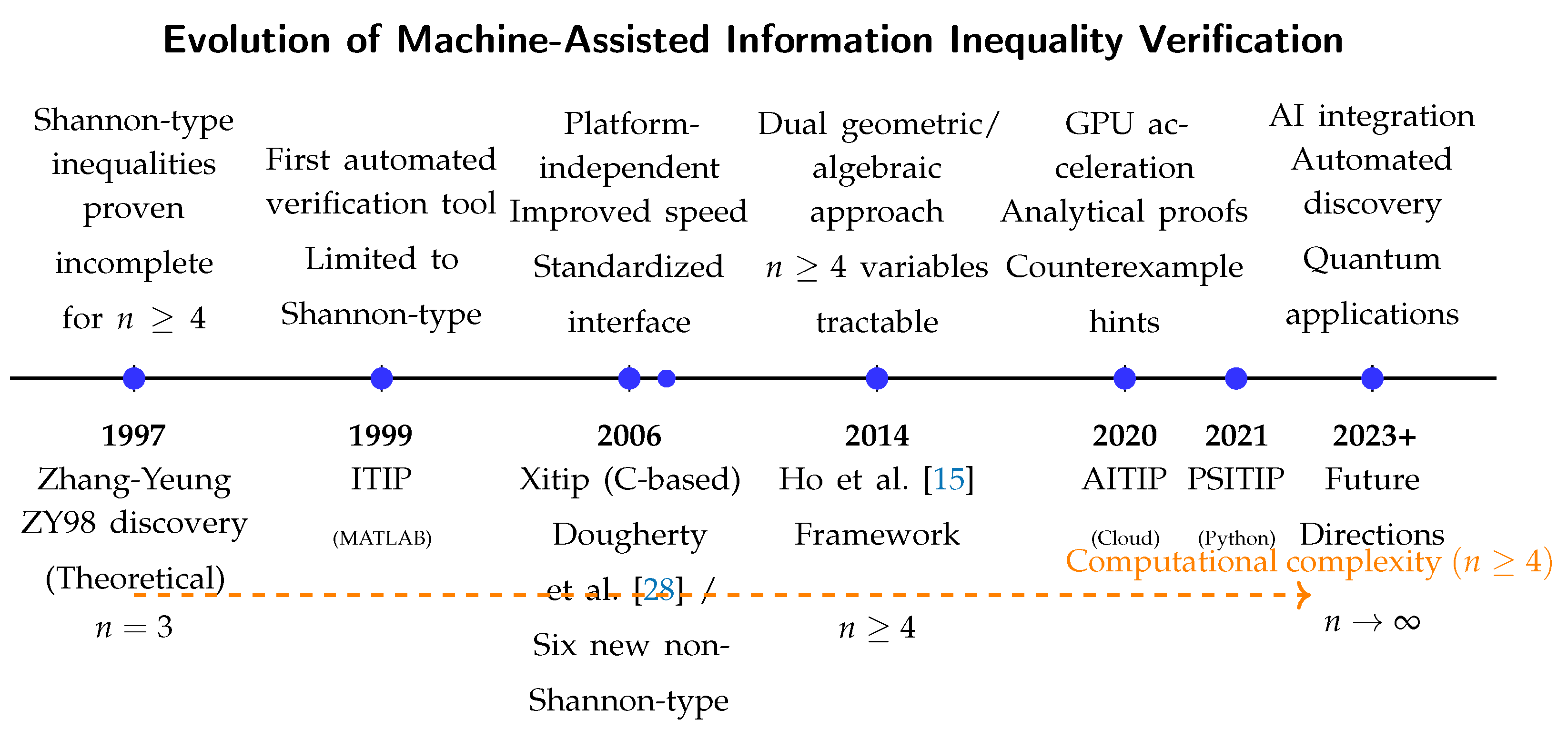

4.2. Machine-Assisted Information Inequality Verification

- 1948-1997: Since Shannon’s foundational paper [8], researchers believed that all information inequalities were of the Shannon-type. The open question was: do inequalities exist that are not of the Shannon-type?

- 1997-1998: Zhang and Yeung’s landmark paper [22] demonstrated the existence of an unconstrained non-Shannon-type inequality (ZY98). This proved that $\overline{\Gamma}_4^*$ (the closure of the space of entropic vectors) is strictly contained within the Shannon region ($\Gamma_4$), which is defined by the Shannon inequalities: $\overline{\Gamma}_4^* \subsetneq \Gamma_4$, in contrast to $n = 3$, where $\overline{\Gamma}_3^* = \Gamma_3$. This showed that information has a deeper structure than was commonly thought.

- 2006: Dougherty et al. [28] expanded the list of non-Shannon-type inequalities with six additional independent inequalities.

- 2007: Matús [29] showed that there are infinitely many non-Shannon-type inequalities for $n \geq 4$ random variables.

- 2007-present: As shown in the timeline, computational methods emerged and are now required to deal with the “complexity explosion” for $n \geq 4$ and to verify the validity of these inequalities.

4.3. Historical Evolution of Information Inequalities

5. Discussion and Conclusion

Author Contributions

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| IT | Information Theory |
| IE | Information Expression |
| d.r.v. | Discrete Random Variable |
| DNA | Deoxyribonucleic Acid |
| ITIP | Information Theoretic Inequality Prover |
| AITIP | Automated Information Theoretic Inequality Prover |
| PSITIP | Python Symbolic Information Theoretic Inequality Prover |

Appendix A. Information Expressions

Appendix A.1. Information Identities

Appendix B. Information Theory

Appendix B.1. Information Identities

Appendix B.2. Chain Rules

References

1. Parcalabescu, L.; Trost, N.; Frank, A. What is Multimodality?, 2021, [arXiv:cs.AI/2103.06304].

2. Cropper, W.H. Rudolf Clausius and the road to entropy. American Journal of Physics 1986, 54, 1068–1074. [CrossRef]

3. Brush, S.G. The Kind of Motion We Call Heat: A History of the Kinetic Theory of Gases in the 19th Century; Vol. 6, Studies in Statistical Mechanics, North-Holland Publishing Company: Amsterdam, 1976. Published in two volumes: Book 1 (Physics and the Atomists) and Book 2 (Statistical Physics and Irreversible Processes).

4. von Neumann, J. Mathematical Foundations of Quantum Mechanics: New Edition; Princeton University Press: Princeton, 2018. [CrossRef]

5. Pippenger, N. The inequalities of quantum information theory. IEEE Trans. Inf. Theory 2003, 49, 773–789. [CrossRef]

6. Brillouin, L. Science and Information Theory; Courier Corporation, 2013.

7. Rényi, A. On measures of entropy and information. Proc. 4th Berkeley Symp. Math. Stat. Probab. 1961, 1, 547–561.

8. Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 623–656. [CrossRef]

9. Shannon, C.E. The Bell System Technical Journal 1948. 27. Includes seminal papers such as C. E. Shannon’s "A Mathematical Theory of Communication" (Parts I and II in Issues 3 and 4). Available at the Internet Archive.

10. Hartnett, K. With Category Theory, Mathematics Escapes From Equality. Quanta Magazine 2019.

11. Yeung, R.W. Facets of entropy. Commun. Inf. Syst. 2015, 15, 87–117. [CrossRef]

12. Yeung, R.W. Information theory and network coding, 2008 ed.; Information Technology: Transmission, Processing and Storage, Springer: New York, NY, 2008.

13. Guo, L.; Yeung, R.W.; Gao, X.S. Proving Information Inequalities and Identities With Symbolic Computation. IEEE Trans. Inf. Theory 2023, 69, 4799–4811. [CrossRef]

14. Yeung, R.W. A framework for linear information inequalities. IEEE Trans. Inf. Theory 1997, 43, 1924–1934. [CrossRef]

15. Ho, S.; Ling, L.; Tan, C.W.; Yeung, R.W. Proving and Disproving Information Inequalities: Theory and Scalable Algorithms. IEEE Trans. Inf. Theory 2020, 66, 5522–5536. [CrossRef]

16. Fujishige, S. Polymatroidal dependence structure of a set of random variables. Information and Control 1978, 39, 55–72. [CrossRef]

17. Csirmaz, L. Around entropy inequalities. https://seafile.lirmm.fr/f/1a837bfc0063408f934b/, 12 Oct 2022. Accessed: 2025-11-23.

18. Suciu, D. Applications of Information Inequalities to Database Theory Problems, 2024, [arXiv:cs.DB/2304.11996].

19. Yeung, R.W. A framework for information inequalities. Proceedings of IEEE International Symposium on Information Theory 1997, pp. 268–.

20. Chen, Q.; Yeung, R.W. Characterizing the entropy function region via extreme rays. In Proceedings of the 2012 IEEE Information Theory Workshop, Lausanne, Switzerland, September 3-7, 2012. IEEE, 2012, pp. 272–276. [CrossRef]

21. Yeung, R.W.; Li, C.T. Machine-Proving of Entropy Inequalities. IEEE BITS the Information Theory Magazine 2021, 1, 12–22. [CrossRef]

22. Zhang, Z.; Yeung, R. On characterization of entropy function via information inequalities. IEEE Transactions on Information Theory 1998, 44, 1440–1452. [CrossRef]

23. Chaves, R.; Luft, L.; Gross, D. Causal structures from entropic information: geometry and novel scenarios. New Journal of Physics 2014, 16, 043001. [CrossRef]

24. Tiwari, H.; Thakor, S. On Characterization of Entropic Vectors at the Boundary of Almost Entropic Cones. In Proceedings of the 2019 IEEE Information Theory Workshop, ITW 2019, Visby, Sweden, August 25-28, 2019. IEEE, 2019, pp. 1–5. [CrossRef]

25. Zhang, Z.; Yeung, R. A non-Shannon-type conditional inequality of information quantities. IEEE Transactions on Information Theory 1997, 43, 1982–1986. [CrossRef]

26. Matús, F. Piecewise linear conditional information inequality. IEEE Transactions on Information Theory 2006, 52, 236–238. [CrossRef]

27. Lin, S.K. Gibbs Paradox and the Concepts of Information, Symmetry, Similarity and Their Relationship. Entropy 2008, 10, 1–5. [CrossRef]

28. Dougherty, R.; Freiling, C.F.; Zeger, K. Six New Non-Shannon Information Inequalities. In Proceedings of the 2006 IEEE International Symposium on Information Theory, ISIT 2006, The Westin Seattle, Seattle, Washington, USA, July 9-14, 2006. IEEE, 2006, pp. 233–236. [CrossRef]

29. Matús, F. Infinitely Many Information Inequalities. In Proceedings of the IEEE International Symposium on Information Theory, ISIT 2007, Nice, France, June 24-29, 2007. IEEE, 2007, pp. 41–44. [CrossRef]

30. Yeung, R.W.; Yan, Y.O. ITIP—Information Theoretic Inequality Prover. http://user-www.ie.cuhk.edu.hk/~ITIP/, 1996.

31. Pulikkoonattu, R.; Perron, E.; Diggavi, S. Xitip Information Theoretic Inequalities Prover. http://xitip.epfl.ch, 2008.

32. Ho, S.W.; Ling, L.; Tan, C.W.; Yeung, R.W. AITIP. https://aitip.org, 2020.

33. Li, C.T. An Automated Theorem Proving Framework for Information-Theoretic Results 2021. pp. 2750–2755. [CrossRef]

| 1 | Within a closer timeframe: Pippenger [5]. |

| 2 | Let us recall the prior foundational work of R. V. R. Hartley. |

| 3 | Incidentally, the canonical symbol defining equality (=) is depicted using two superposed segments; the nature of “equality” is questioned: [10] |

| 4 | In this case: ITIP (Information Theoretic Inequality Prover), but the approach is generally applicable. |

| 5 | |

| 6 | The reader interested in a thorough mathematical description can consult Yeung [12]. |

| 7 | This is a wink to a Python exception handling statement / design pattern. |

| 8 | That is, in the context of Science. |

| 9 | This is not meant in the sense of Computational Complexity Theory (CCT) as a field, though some connections could be drawn. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).