Submitted: 10 December 2025

Posted: 10 December 2025


Abstract

Keywords:

1. Introduction

2. Materials

3. Methods

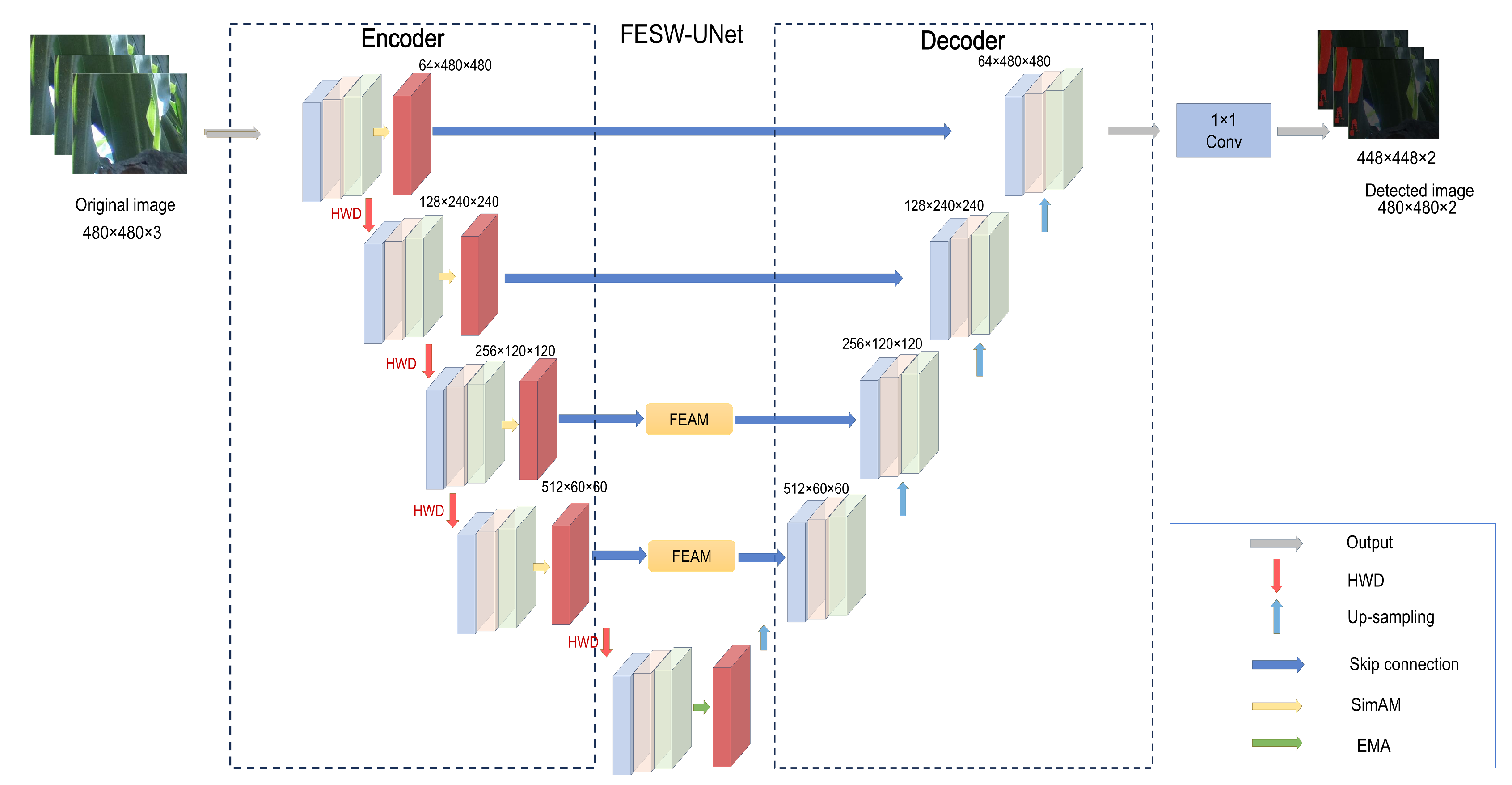

3.1. Overall Architecture of FESW-UNet

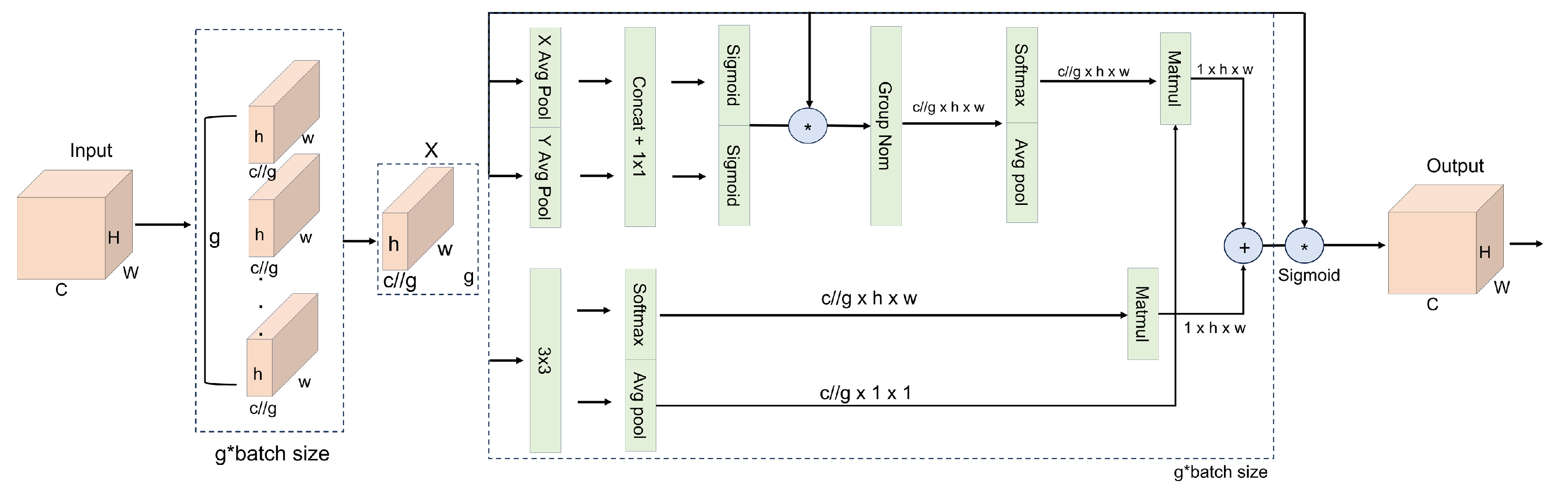

3.2. EMA Module

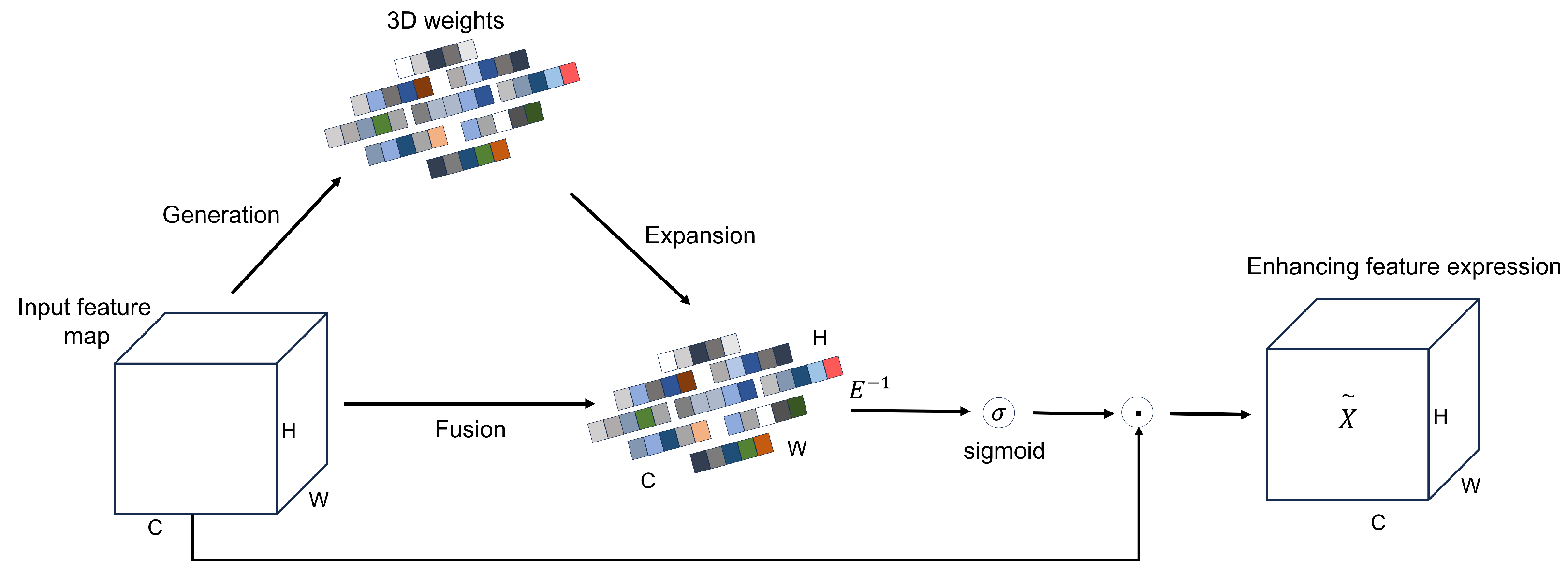

3.3. SimAM Module
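Only the heading of this subsection survives here. As a point of reference, the sketch below follows the formulation of the original SimAM module (Yang et al., ICML 2021), which the cited work [26] applies to speaker verification. It is not taken from FESW-UNet's code, and the default λ = 1e-4 is an assumption borrowed from common SimAM implementations. Each activation t in a channel is weighted by sigmoid(1/e_t), where 1/e_t = (t - μ)² / (4(σ² + λ)) + 1/2, and μ and σ² are the mean and variance of that channel. Because nothing is learned, this is consistent with the ablation table, where adding SimAM to UNet leaves the parameter count at 31.03 M.

```python
import math


def simam(feature_map, lam=1e-4):
    """SimAM-style reweighting of one channel given as an H x W list of lists.

    Each activation t is multiplied by sigmoid(1 / e_t), where
    1 / e_t = (t - mu)^2 / (4 * (var + lam)) + 0.5, and mu and var are the
    channel mean and variance (variance divided by H*W - 1, as in SimAM).
    `lam` is an assumed default, not a value stated for FESW-UNet.
    """
    values = [v for row in feature_map for v in row]
    n = len(values) - 1  # SimAM normalises the variance by M - 1
    mu = sum(values) / len(values)
    var = sum((v - mu) ** 2 for v in values) / n
    out = []
    for row in feature_map:
        new_row = []
        for t in row:
            inv_energy = (t - mu) ** 2 / (4 * (var + lam)) + 0.5  # 1 / e_t
            weight = 1.0 / (1.0 + math.exp(-inv_energy))          # sigmoid
            new_row.append(t * weight)
        out.append(new_row)
    return out
```

Activations that deviate strongly from their channel mean get a lower energy and therefore a larger weight. In a PyTorch model the same expression is normally evaluated per channel over the spatial dimensions of an (N, C, H, W) tensor instead of with Python loops.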

3.4. HWD Downsampling

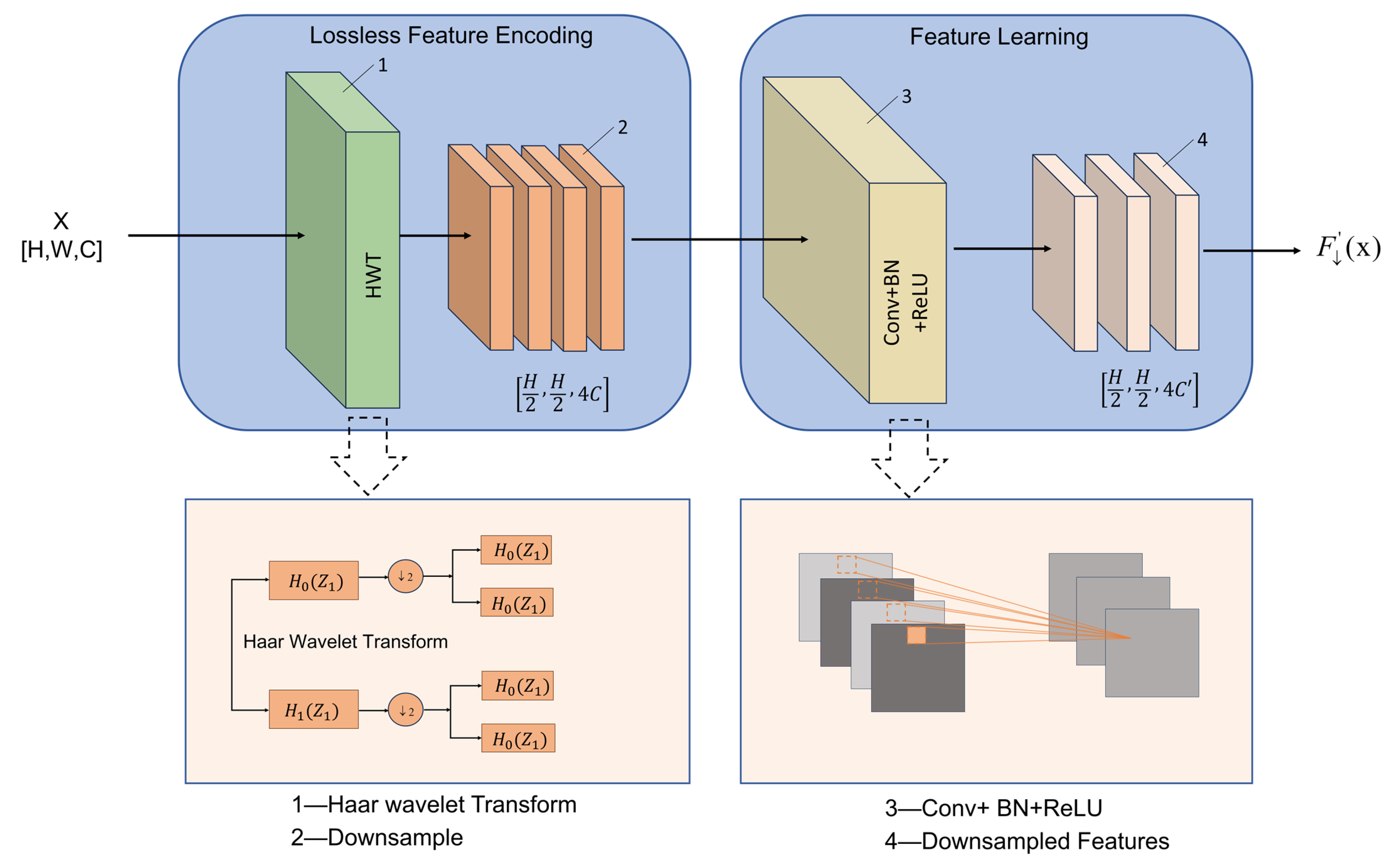

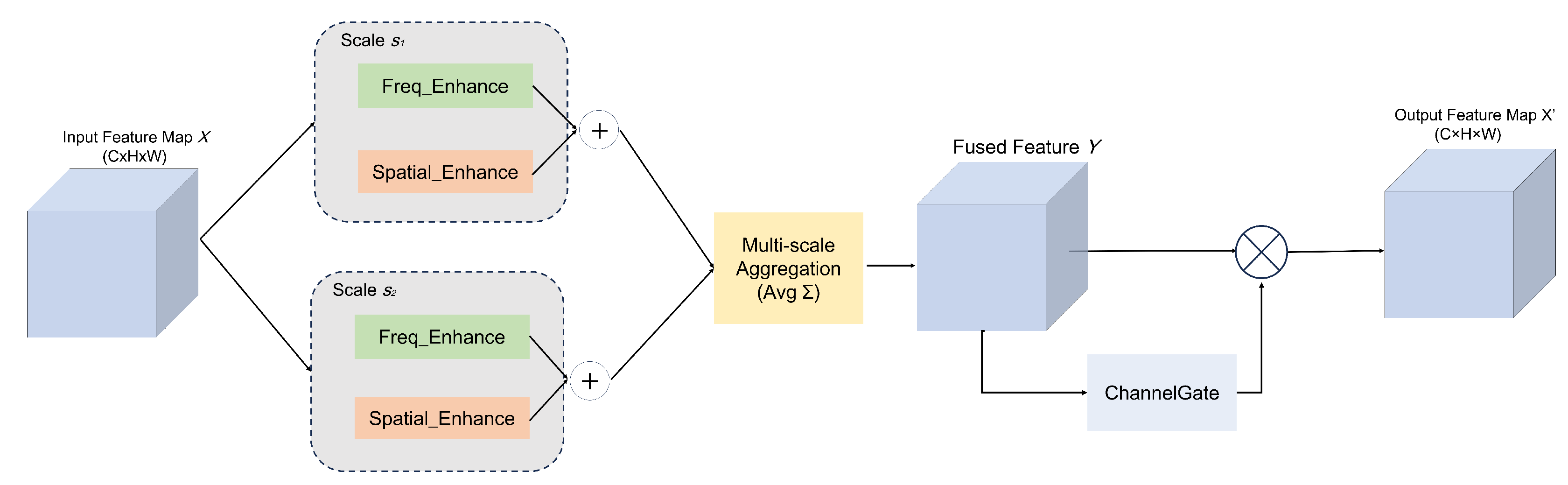
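The Haar wavelet downsampling of Xu et al. [27] replaces strided convolution or pooling with a one-level 2-D Haar transform, which turns each C-channel feature map into four half-resolution sub-bands (stacked to 4C channels), followed by a 1×1 convolution, batch normalization and ReLU that reduce the channel count. The sketch below covers only the Haar step on a single channel and omits the learned 1×1 convolution. The 1/2 (orthonormal) scaling and the sub-band names are assumptions, since conventions differ between wavelet libraries. The inverse is included to show that, unlike max pooling, this step discards no information.

```python
def _zeros(rows, cols):
    return [[0.0] * cols for _ in range(rows)]


def haar_downsample(x):
    """One-level 2-D Haar transform of an H x W map into four H/2 x W/2 sub-bands."""
    h, w = len(x), len(x[0])
    if h % 2 or w % 2:
        raise ValueError("height and width must both be even")
    ll, lh, hl, hh = (_zeros(h // 2, w // 2) for _ in range(4))
    for i in range(0, h, 2):
        for j in range(0, w, 2):
            a, b = x[i][j], x[i][j + 1]          # top-left, top-right
            c, d = x[i + 1][j], x[i + 1][j + 1]  # bottom-left, bottom-right
            r, s = i // 2, j // 2
            ll[r][s] = (a + b + c + d) / 2  # low-frequency approximation
            lh[r][s] = (a + b - c - d) / 2  # top minus bottom: horizontal edges
            hl[r][s] = (a - b + c - d) / 2  # left minus right: vertical edges
            hh[r][s] = (a - b - c + d) / 2  # diagonal detail
    return ll, lh, hl, hh


def haar_upsample(ll, lh, hl, hh):
    """Exact inverse of haar_downsample, showing the transform is lossless."""
    h, w = len(ll), len(ll[0])
    x = _zeros(2 * h, 2 * w)
    for r in range(h):
        for s in range(w):
            low, hor, ver, diag = ll[r][s], lh[r][s], hl[r][s], hh[r][s]
            x[2 * r][2 * s] = (low + hor + ver + diag) / 2
            x[2 * r][2 * s + 1] = (low + hor - ver - diag) / 2
            x[2 * r + 1][2 * s] = (low - hor + ver - diag) / 2
            x[2 * r + 1][2 * s + 1] = (low - hor - ver + diag) / 2
    return x
```

For the 2 × 4 map `[[1, 2, 3, 4], [5, 6, 7, 8]]`, the low-frequency band is `[[7.0, 11.0]]`, and `haar_upsample` returns the original values exactly. Detail that a stride-2 convolution or pooling layer would drop is instead moved into extra channels, which the subsequent 1×1 convolution can weigh.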

3.5. FEAM Module

4. Experimental Results

4.1. Experimental Settings

| Category | Component | Specification |
|---|---|---|
| Hardware environment | CPU | Intel(R) Xeon(R) Silver 4210R @ 2.40 GHz |
| | GPU | NVIDIA GeForce RTX 3080 (10 GB VRAM) |
| | RAM | 32 GB |
| Software environment | Operating system | Ubuntu 20.04.6 |
| | Deep learning framework | PyTorch 2.0.1 |
| | Python | 3.8 |
| | CUDA Toolkit | CUDA 12.2 |
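The FPS columns in the result tables are not accompanied by a stated timing protocol in this section. A common protocol, sketched below under that assumption, times batch-size-1 inference after a few warm-up passes; `measure_fps` and its `warmup` parameter are hypothetical names. For a PyTorch model on the RTX 3080 listed above, `infer` would run the model under `torch.no_grad()` and call `torch.cuda.synchronize()` before returning, because CUDA kernels launch asynchronously and the timer would otherwise stop before the GPU has finished.

```python
import time


def measure_fps(infer, inputs, warmup=5):
    """Images per second for `infer` over `inputs`, excluding warm-up calls.

    Hypothetical helper: `infer` takes one input (batch size 1) and must block
    until the result is ready, otherwise asynchronous work is not timed.
    """
    if not inputs:
        raise ValueError("need at least one input to time")
    for x in inputs[:warmup]:
        infer(x)  # warm-up: lazy initialisation, caches, kernel autotuning
    start = time.perf_counter()
    for x in inputs:
        infer(x)
    elapsed = time.perf_counter() - start
    return len(inputs) / elapsed
```

Because FPS depends on the GPU, driver, input resolution and batch size, values measured this way are only comparable across models timed on the same machine with the same settings.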

4.2. Evaluation Metrics

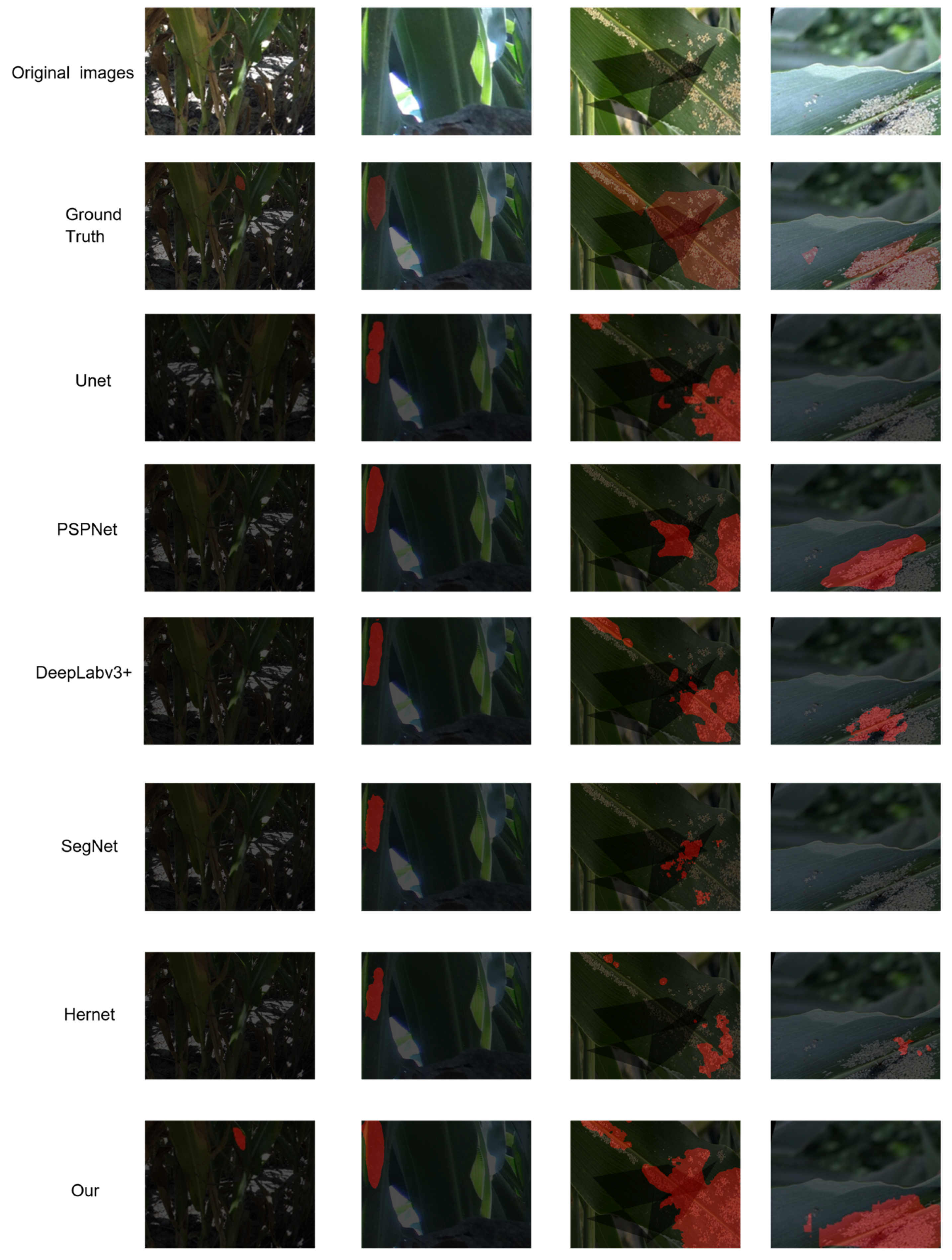

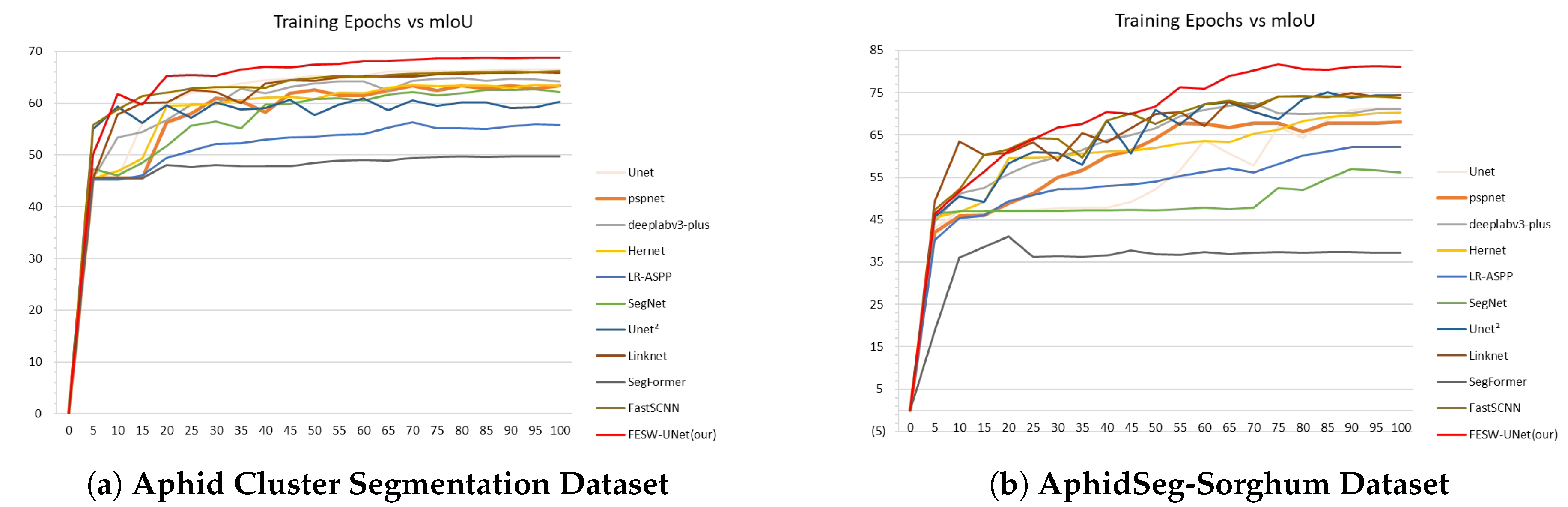
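The metric definitions are not reproduced in this section, so the sketch below assumes the standard confusion-matrix definitions: IoU_c = TP / (TP + FP + FN), per-class pixel accuracy PA_c = TP / (TP + FN), and overall accuracy = correctly labelled pixels / all pixels, with mIoU and mPA averaged over classes. Under these definitions PA_c is exactly the per-class recall, which would explain why the mPA and mRecall columns in the result tables are identical up to rounding. The function names are illustrative.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """cm[i][j] counts pixels whose ground truth is class i and prediction is class j."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def segmentation_metrics(cm):
    """Accuracy, mIoU, mPA and mRecall from a square confusion matrix.

    Assumes every class occurs in the ground truth (no empty rows).
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    tp = [cm[c][c] for c in range(k)]
    gt = [sum(cm[c]) for c in range(k)]                          # TP + FN
    pred = [sum(cm[i][c] for i in range(k)) for c in range(k)]   # TP + FP
    iou = [tp[c] / (gt[c] + pred[c] - tp[c]) for c in range(k)]  # TP / (TP + FP + FN)
    pa = [tp[c] / gt[c] for c in range(k)]                       # TP / (TP + FN)
    return {
        "accuracy": sum(tp) / total,
        "mIoU": sum(iou) / k,
        "mPA": sum(pa) / k,
        "mRecall": sum(pa) / k,  # identical to mPA under this definition
    }
```

For the two-class matrix `[[50, 10], [5, 35]]` (background, aphid), this gives accuracy 0.85, mIoU ≈ 0.7346 and mPA = mRecall ≈ 0.8542. Accuracy is dominated by the majority background class, which is one reason the tables report class-averaged metrics alongside it.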

4.3. Comparative Experiments

4.4. The Effect of Different Numbers of FEAM Modules on FESW-UNet

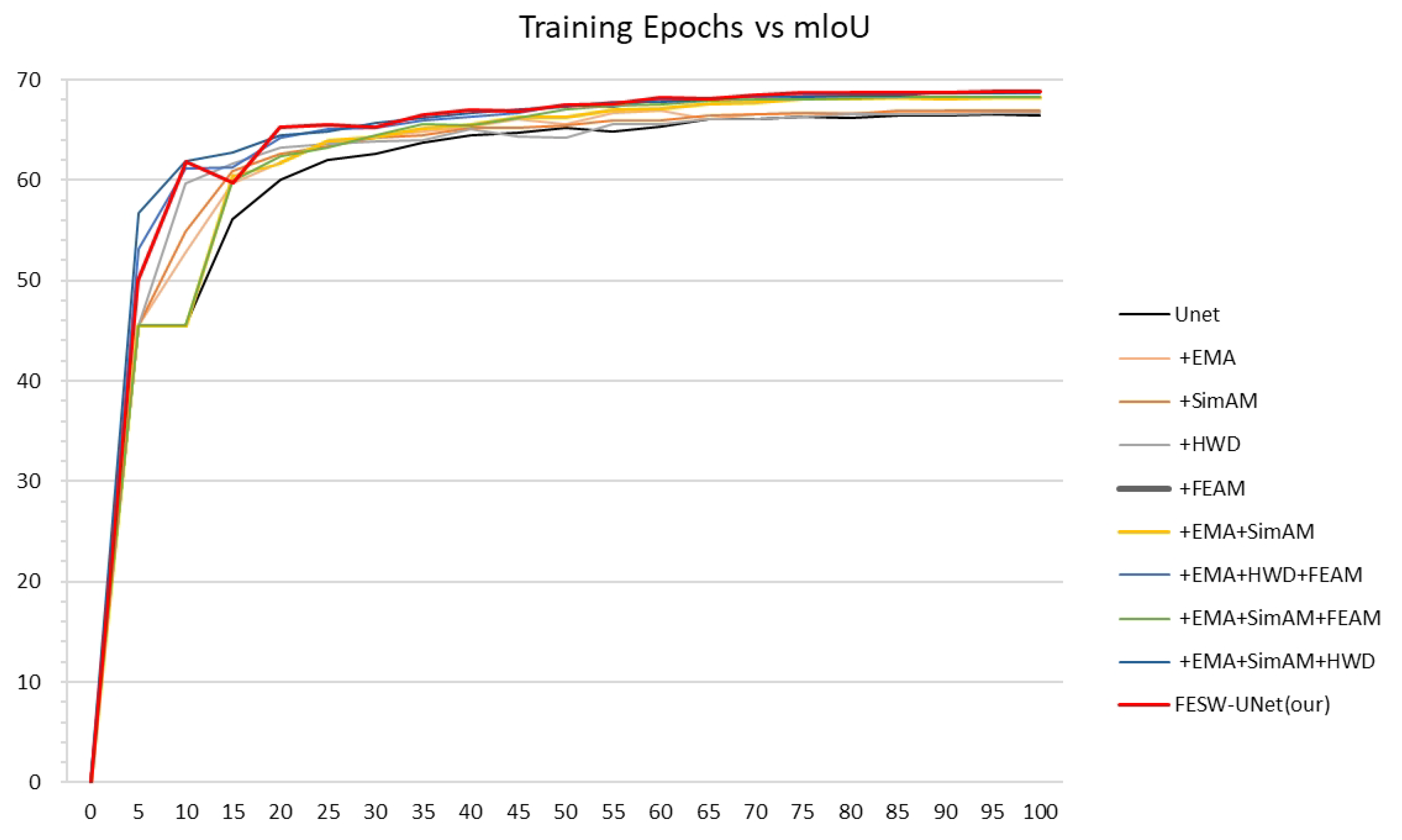

4.5. Ablation Study

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Zander, A.; Lofton, J.; Harris, C.; Kezar, S. Grain sorghum production: Influence of planting date, hybrid selection, and insecticide application. Agrosystems, Geosciences & Environment 2021, 4, e20162.

2. Li, W.; Zheng, T.; Yang, Z.; Li, M.; Sun, C.; Yang, X. Classification and detection of insects from field images using deep learning for smart pest management: A systematic review. Ecological Informatics 2021, 66, 101460.

3. Ye, J.; Yu, Z.; Wang, Y.; Lu, D.; Zhou, H. PlantBiCNet: A new paradigm in plant science with bi-directional cascade neural network for detection and counting. Engineering Applications of Artificial Intelligence 2024, 130, 107704.

4. Kasinathan, T.; Singaraju, D.; Uyyala, S.R. Insect classification and detection in field crops using modern machine learning techniques. Information Processing in Agriculture 2021, 8, 446–457.

5. Teng, Y.; Zhang, J.; Dong, S.; Zheng, S.; Liu, L. MSR-RCNN: A multi-class crop pest detection network based on a multi-scale super-resolution feature enhancement module. Frontiers in Plant Science 2022, 13, 810546.

6. Wang, F.; Wang, R.; Xie, C.; Yang, P.; Liu, L. Fusing multi-scale context-aware information representation for automatic in-field pest detection and recognition. Computers and Electronics in Agriculture 2020, 169, 105222.

7. Yu, Z.; Ye, J.; Li, C.; Zhou, H.; Li, X. TasselLFANet: A novel lightweight multi-branch feature aggregation neural network for high-throughput image-based maize tassels detection and counting. Frontiers in Plant Science 2023, 14, 1158940.

8. Kumar, Y.; Dubey, A.K.; Jothi, A. Pest detection using adaptive thresholding. In Proceedings of the 2017 International Conference on Computing, Communication and Automation (ICCCA), 2017; IEEE; pp. 42–46.

9. Zhang, J.; Feng, W.; Hu, C.; Luo, Y. Image segmentation method for forestry unmanned aerial vehicle pest monitoring based on composite gradient watershed algorithm. Transactions of the Chinese Society of Agricultural Engineering 2017, 33, 93–99.

10. Deng, L.; Wang, Z.; Wang, C.; He, Y.; Huang, T.; Dong, Y.; Zhang, X. Application of agricultural insect pest detection and control map based on image processing analysis. Journal of Intelligent & Fuzzy Systems 2020, 38, 379–389.

11. Liu, L.; Wang, R.; Xie, C.; Yang, P.; Wang, F.; Sudirman, S.; Liu, W. PestNet: An end-to-end deep learning approach for large-scale multi-class pest detection and classification. IEEE Access 2019, 7, 45301–45312.

12. Wang, R.; Jiao, L.; Xie, C.; Chen, P.; Du, J.; Li, R. S-RPN: Sampling-balanced region proposal network for small crop pest detection. Computers and Electronics in Agriculture 2021, 187, 106290.

13. Wang, F.; Wang, R.; Xie, C.; Yang, P.; Liu, L. Fusing multi-scale context-aware information representation for automatic in-field pest detection and recognition. Computers and Electronics in Agriculture 2020, 169, 105222.

14. Domingues, T.; Brandão, T.; Ferreira, J.C. Machine learning for detection and prediction of crop diseases and pests: A comprehensive survey. Agriculture 2022, 12, 1350.

15. Feiyan, Z.; Linpeng, J.; Jun, D. A review of research on convolutional neural networks. J. Comput. Sci 2017, 40, 1229–1251.

16. Shao, Y.; Zhang, D.; Chu, H.; Zhang, X.; Rao, Y. A review of deep learning-based YOLO target detection. Journal of Electronics and Information 2022, 44.

17. Jin, L.; Liu, G. An approach on image processing of deep learning based on improved SSD. Symmetry 2021, 13, 495.

18. Huang, J.; Shi, Y.; Gao, Y. Multi-scale faster RCNN detection algorithm for small targets. Journal of Computer Research and Development 2019, 56, 319–327.

19. Zhao, K.; Shan, Y.; Yuan, J.; Zhao, Y. Research on maize pest detection based on instance segmentation. Journal of Henan Agricultural Sciences 2022, 51, 153.

20. Shen, Y.; Zhou, H.; Li, J.; Jian, F.; Jayas, D.S. Detection of stored-grain insects using deep learning. Computers and Electronics in Agriculture 2018, 145, 319–325.

21. Kumar, R.; Gupta, M.; Kathait, R.; et al. Deep Learning Based Analysis and Detection of Potato Leaf Disease. NEU Journal for Artificial Intelligence and Internet of Things 2023, 2.

22. Ranftl, R.; Bochkovskiy, A.; Koltun, V. Vision transformers for dense prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 12179–12188.

23. Xu, C.; Yu, C.; Zhang, S.; Wang, X. Multi-scale convolution-capsule network for crop insect pest recognition. Electronics 2022, 11, 1630.

24. Rahman, R.; Indris, C.; Bramesfeld, G.; Zhang, T.; Li, K.; Chen, X.; Grijalva, I.; McCornack, B.; Flippo, D.; Sharda, A.; et al. A new dataset and comparative study for aphid cluster detection and segmentation in sorghum fields. Journal of Imaging 2024, 10, 114.

25. Ouyang, D.; He, S.; Zhang, G.; Luo, M.; Guo, H.; Zhan, J.; Huang, Z. Efficient multi-scale attention module with cross-spatial learning. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023; IEEE; pp. 1–5.

26. Qin, X.; Li, N.; Weng, C.; Su, D.; Li, M. Simple attention module based speaker verification with iterative noisy label detection. In Proceedings of the ICASSP 2022-2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2022; IEEE; pp. 6722–6726.

27. Xu, G.; Liao, W.; Zhang, X.; Li, C.; He, X.; Wu, X. Haar wavelet downsampling: A simple but effective downsampling module for semantic segmentation. Pattern Recognition 2023, 143, 109819.

28. Patro, B.N.; Namboodiri, V.P.; Agneeswaran, V.S. SpectFormer: Frequency and attention is what you need in a vision transformer. In Proceedings of the 2025 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2025; IEEE; pp. 9543–9554.

29. Brigham, E.; Morrow, R. The fast Fourier transform. IEEE Spectrum 2009, 4, 63–70.

30. Yang, Z.; Zhu, L.; Wu, Y.; Yang, Y. Gated channel transformation for visual recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 11794–11803.

31. Long, X.; Zhang, W.; Zhao, B. PSPNet-SLAM: A semantic SLAM detect dynamic object by pyramid scene parsing network. IEEE Access 2020, 8, 214685–214695.

32. Peng, H.; Xue, C.; Shao, Y.; Chen, K.; Xiong, J.; Xie, Z.; Zhang, L. Semantic segmentation of litchi branches using DeepLabV3+ model. IEEE Access 2020, 8, 164546–164555.

33. Chandra, D.S.; Varshney, S.; Srijith, P.; Gupta, S. Continual learning with dependency preserving hypernetworks. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2023; pp. 2339–2348.

34. Chu, X.; Zhang, B.; Xu, R. MoGA: Searching beyond MobileNetV3. In Proceedings of the ICASSP 2020-2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2020; IEEE; pp. 4042–4046.

35. Hassan, B.; Ahmed, R.; Hassan, T.; Werghi, N. SIP-SegNet: A deep convolutional encoder-decoder network for joint semantic segmentation and extraction of sclera, iris and pupil based on periocular region suppression. arXiv 2020, arXiv:2003.00825.

36. Qin, X.; Zhang, Z.; Huang, C.; Dehghan, M.; Zaiane, O.R.; Jagersand, M. U²-Net: Going deeper with nested U-structure for salient object detection. Pattern Recognition 2020, 106, 107404.

37. Sulaiman, A.; Anand, V.; Gupta, S.; Al Reshan, M.S.; Alshahrani, H.; Shaikh, A.; Elmagzoub, M. An intelligent LinkNet-34 model with EfficientNetB7 encoder for semantic segmentation of brain tumor. Scientific Reports 2024, 14, 1345.

38. Xie, E.; Wang, W.; Yu, Z.; Anandkumar, A.; Alvarez, J.M.; Luo, P. SegFormer: Simple and efficient design for semantic segmentation with transformers. Advances in Neural Information Processing Systems 2021, 34, 12077–12090.

39. Chen, L.J.; Zou, J.M.; Zhou, Y.; Hao, G.Z.; Wang, Y. Real-Time Semantic Segmentation of Maritime Navigation Scene Based on Improved Fast-SCNN. In Proceedings of the International Conference on Artificial Intelligence and Autonomous Transportation, 2024; Springer; pp. 346–362.

| Dataset | Source | No. of images | Annotation | Augmentation | Split (train/test) |
|---|---|---|---|---|---|
| Aphid Cluster Segmentation Dataset | Rahman et al. [24] | 7,720 | Pixel-level aphid regions | None (original images) | 70% / 30% |
| AphidSeg-Sorghum | This study | 1,224 | Pixel-level aphid regions | Flip, brightness adjustment, noise injection | 70% / 30% |
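The table specifies a 70/30 train/test split and, for AphidSeg-Sorghum, flip, brightness and noise augmentation, but not their parameters. The sketch below is one hedged way to realise them: `split_train_test`, `augment_pair`, the 0.5 flip probability, the ±20% brightness range and the noise standard deviation of 5 grey levels are all hypothetical choices. Two design points hold regardless of the exact settings: a geometric transform such as a flip must be applied to the image and its pixel-level mask together, while brightness and noise change only the image; and splitting before augmenting keeps augmented copies of a test image out of the training set. Whether the 1,224 images are counted before or after augmentation is not stated here.

```python
import random


def split_train_test(items, train_frac=0.7, seed=0):
    """Shuffle a copy of `items` with a fixed seed and split it into train/test lists."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    cut = round(train_frac * len(shuffled))
    return shuffled[:cut], shuffled[cut:]


def hflip(grid):
    """Mirror a 2-D grid (list of rows) left to right."""
    return [row[::-1] for row in grid]


def augment_pair(image, mask, rng, max_brightness=0.2, noise_std=5.0):
    """Hypothetical augmentation of one grayscale image and its label mask.

    The flip is applied to image and mask together so labels stay aligned;
    brightness scaling and Gaussian noise change the image only, and the
    result is clipped to the 0..255 range.
    """
    if rng.random() < 0.5:
        image, mask = hflip(image), hflip(mask)
    factor = 1.0 + rng.uniform(-max_brightness, max_brightness)
    image = [
        [min(255.0, max(0.0, p * factor + rng.gauss(0.0, noise_std))) for p in row]
        for row in image
    ]
    return image, mask
```

With the 1,224 AphidSeg-Sorghum images, `split_train_test(range(1224))` yields 857 training and 367 test indices; `augment_pair` would then be applied to the training images only.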

| Model | mIoU (%) | mPA (%) | Accuracy (%) | mRecall (%) | FPS | Params (M) |
|---|---|---|---|---|---|---|
| UNet | 66.31 | 75.79 | 92.65 | 75.79 | 214.714 | 31.03 |
| PSPNet [31] | 63.20 | 68.92 | 92.99 | 68.92 | 175.847 | 2.38 |
| DeepLabv3+ [32] | 63.52 | 69.91 | 92.83 | 69.91 | 272.790 | 5.81 |
| Hernet [33] | 63.63 | 69.65 | 92.98 | 69.65 | 266.132 | 9.39 |
| LR-ASPP [34] | 55.80 | 60.95 | 91.40 | 60.96 | 99.36 | 3.22 |
| SegNet [35] | 62.68 | 71.69 | 91.74 | 71.69 | 242.162 | 29.44 |
| U²-Net [36] | 61.62 | 68.67 | 92.13 | 68.67 | 241.693 | 9.16 |
| LinkNet [37] | 65.97 | 74.90 | 92.71 | 74.90 | 234.002 | 11.53 |
| SegFormer [38] | 49.73 | 55.60 | 88.78 | 55.60 | 241.295 | 0.40 |
| FastSCNN [39] | 66.23 | 76.75 | 92.38 | 76.75 | 243.574 | 1.14 |
| FESW-UNet (ours) | 68.76 | 78.19 | 93.32 | 78.19 | 254.780 | 32.76 |

| Model | mIoU (%) | mPA (%) | Accuracy (%) | mRecall (%) | FPS | Params (M) |
|---|---|---|---|---|---|---|
| UNet | 71.43 | 77.42 | 98.04 | 77.42 | 75.697 | 31.03 |
| PSPNet [31] | 69.85 | 75.30 | 97.82 | 75.30 | 73.850 | 2.38 |
| DeepLabv3+ [32] | 70.12 | 76.08 | 97.90 | 76.08 | 78.460 | 5.81 |
| Hernet [33] | 70.25 | 75.86 | 97.93 | 75.86 | 76.310 | 9.39 |
| LR-ASPP [34] | 61.20 | 66.95 | 96.90 | 66.95 | 70.120 | 3.22 |
| SegNet [35] | 55.23 | 59.08 | 96.57 | 59.08 | 74.200 | 29.44 |
| U²-Net [36] | 74.55 | 80.05 | 98.32 | 80.05 | 74.630 | 9.16 |
| LinkNet [37] | 74.76 | 82.25 | 98.22 | 82.25 | 75.050 | 11.53 |
| SegFormer [38] | 37.14 | 45.13 | 72.77 | 45.13 | 74.500 | 0.40 |
| FastSCNN [39] | 73.67 | 79.50 | 98.23 | 79.50 | 76.200 | 1.14 |
| FESW-UNet (ours) | 81.22 | 87.97 | 98.75 | 87.97 | 75.348 | 32.76 |

| Model | mIoU (%) | mPA (%) | Accuracy (%) | mRecall (%) | FPS | Params (M) |
|---|---|---|---|---|---|---|
| UNet-SimAM-EMA-HWD-FEAM×1 | 68.69 | 77.86 | 93.36 | 77.86 | 254.127 | 32.723 |
| UNet-SimAM-EMA-HWD-FEAM×2 | 68.76 | 78.19 | 93.32 | 78.19 | 254.780 | 32.756 |
| UNet-SimAM-EMA-HWD-FEAM×3 | 68.60 | 77.44 | 93.40 | 77.44 | 251.407 | 32.765 |
| UNet-SimAM-EMA-HWD-FEAM×4 | 68.49 | 77.97 | 93.24 | 77.97 | 250.062 | 32.767 |

| Model | mIoU (%) | mPA (%) | Accuracy (%) | mRecall (%) | FPS | Params (M) |
|---|---|---|---|---|---|---|
| UNet | 66.31 | 75.79 | 92.65 | 75.79 | 214.71 | 31.03 |
| + EMA | 66.77 | 75.83 | 92.96 | 75.83 | 219.16 | 31.20 |
| + SimAM | 66.75 | 75.71 | 92.90 | 75.71 | 236.64 | 31.03 |
| + HWD | 66.61 | 75.98 | 92.77 | 75.98 | 236.17 | 32.43 |
| + FEAM | 66.75 | 76.29 | 92.76 | 76.29 | 236.87 | 31.20 |
| + EMA + SimAM | 68.05 | 76.91 | 93.26 | 76.91 | 240.51 | 31.20 |
| + EMA + HWD + FEAM | 68.72 | 78.07 | 93.32 | 78.07 | 238.59 | 32.76 |
| + EMA + SimAM + FEAM | 68.03 | 77.01 | 93.23 | 77.01 | 239.75 | 31.36 |
| + EMA + SimAM + HWD | 68.62 | 77.67 | 93.36 | 77.67 | 242.62 | 32.59 |
| FESW-UNet | 68.76 | 78.19 | 93.32 | 78.19 | 254.78 | 32.76 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).