Submitted: 09 December 2025

Posted: 10 December 2025


Abstract

Keywords:

1. Introduction

2. Functional LARE Regression and Its Estimation

3. The Strong Consistency of the Kernel Estimator

- (HM1) The small-ball probability satisfies for all , with

- (HM2) For all , the functions are of class (with respect to s) and satisfy

- (HM3) The kernel M is measurable, supported on , and bounded: .

- (HM4) The small-ball probability satisfies

- (HM5) The response variable has bounded inverse moments:
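Conditions (HM1) and (HM4) are stated in terms of the small-ball probability, that is, the probability that the functional covariate falls in a ball of radius h around a fixed curve x. Purely as an illustration, the sketch below estimates this quantity empirically as the share of sample curves lying within distance h of x. The discretized L2 distance, the toy sine-curve sample and the function names (`discretized_l2`, `small_ball_probability`) are assumptions made for the example, not the semi-metric or data used in the paper.

```python
import numpy as np

def discretized_l2(X, x):
    """Distance from curve x to each row of X.

    Assumed semi-metric: the L2 norm on [0, 1] approximated by averaging
    squared differences over a common uniform sampling grid.
    """
    return np.sqrt(np.mean((X - x[None, :]) ** 2, axis=1))

def small_ball_probability(x, X, h):
    """Empirical estimate of phi_x(h) = P(d(x, X) <= h)."""
    return float(np.mean(discretized_l2(X, x) <= h))

# Hypothetical sample: sine curves with a random amplitude in [0.5, 2].
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 100)
X = rng.uniform(0.5, 2.0, size=(500, 1)) * np.sin(2 * np.pi * t)[None, :]
x = X[0]  # centre of the ball: one of the sample curves

radii = [0.01, 0.05, 0.1, 0.5, 2.0]
phis = [small_ball_probability(x, X, h) for h in radii]
print(dict(zip(radii, phis)))
```

Because these toy curves are indexed by a single uniformly distributed amplitude, the estimate shrinks roughly linearly as h decreases; for richer curve families the small-ball probability can decay much faster, which is the behaviour that conditions of this type are meant to control.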

4. Smoothing Parameter Selection and Random Matrix Theory for Metric Approximation

4.1. Leave-One-Out Cross-Validation Principle
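The leave-one-out principle selects the bandwidth whose estimator, fitted without observation i, best predicts Y_i on average over the sample. A minimal sketch follows, under assumptions that are ours rather than the paper's: the local LARE estimator at a curve x is taken to minimise the kernel-weighted criterion of Chen et al. (2010), Σ_i M(d(x, X_i)/h) (|Y_i − c|/Y_i + |Y_i − c|/c) over c > 0, which requires positive responses; M is a quadratic kernel on [0, 1]; d is a discretized L2 distance; and the leave-one-out loss is the mean absolute relative error. The code relies on the identity |Y − c|/Y + |Y − c|/c = 2 sinh(|log Y − log c|), which makes the criterion convex in log c, so a ternary search on log c is enough. All function names are hypothetical.

```python
import numpy as np

def l2_distances(A, B):
    """Pairwise distances between curves (rows) sampled on a common uniform grid of [0, 1].
    Assumed semi-metric: a Riemann-sum approximation of the L2 norm."""
    return np.sqrt(np.mean((A[:, None, :] - B[None, :, :]) ** 2, axis=2))

def quadratic_kernel(u):
    """Hypothetical choice of kernel M: quadratic kernel supported on [0, 1]."""
    return np.where((u >= 0) & (u <= 1), 1.5 * (1.0 - u ** 2), 0.0)

def lare_from_weights(W, y, n_iter=100):
    """For each row k, minimise sum_i W[k, i] * (|y_i - c|/y_i + |y_i - c|/c) over c > 0.

    Each term equals 2 sinh(|log y_i - log c|), convex in log c, so a ternary
    search on log c between min(log y) and max(log y) finds a minimiser.
    Rows without any positive weight return nan. Requires y > 0.
    """
    logy = np.log(y)
    lo = np.full(W.shape[0], logy.min())
    hi = np.full(W.shape[0], logy.max())

    def loss(s):
        return (W * np.sinh(np.abs(s[:, None] - logy[None, :]))).sum(axis=1)

    for _ in range(n_iter):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        go_left = loss(m1) < loss(m2)  # a minimiser lies in [lo, m2]
        hi = np.where(go_left, m2, hi)
        lo = np.where(go_left, lo, m1)
    est = np.exp(0.5 * (lo + hi))
    est[W.sum(axis=1) <= 0] = np.nan
    return est

def loocv_bandwidth(X, y, bandwidths):
    """Pick the bandwidth minimising the leave-one-out mean absolute relative error (assumed loss)."""
    D = l2_distances(X, X)
    scores = np.empty(len(bandwidths))
    for j, h in enumerate(bandwidths):
        W = quadratic_kernel(D / h)
        np.fill_diagonal(W, 0.0)  # leave (X_i, Y_i) out of its own fit
        pred = lare_from_weights(W, y)
        scores[j] = np.inf if np.isnan(pred).any() else np.mean(np.abs(y - pred) / y)
    return bandwidths[int(np.argmin(scores))], scores

# Hypothetical toy data: positive responses driven by the curve amplitude.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 50)
a = rng.uniform(0.5, 2.0, size=80)
X = a[:, None] * np.sin(2 * np.pi * t)[None, :]
y = (1.0 + a ** 2) * np.exp(0.1 * rng.standard_normal(80))
bandwidths = np.array([0.05, 0.1, 0.2, 0.4, 0.8])
h_loo, loo_scores = loocv_bandwidth(X, y, bandwidths)
print(h_loo, loo_scores)
```

A bandwidth that leaves some curve with no neighbour receives an infinite score, so it can never be selected.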

4.2. Bootstrap Approach

- Step 1: Choose an arbitrary bandwidth and compute .

- Step 2: Compute the residual .

- Step 3: Generate a bootstrap residual from the distribution , where d denotes the Dirac measure.

- Step 4: Construct a bootstrap sample .

- Step 5: Compute the estimators using the sample .

- Step 6: Repeat Steps 3 to 5 for each bootstrap replication, and denote by the estimator obtained at replication r.

- Step 7: Choose the optimal bandwidth m according to the following criterion:
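Steps 1 to 7 can be sketched as a wild bootstrap. Two ingredients that are elided in the text are filled with assumptions here: the resampling law of Step 3 is taken to be Mammen's two-point distribution, which puts mass (5 + √5)/10 on (1 − √5)/2 and mass (5 − √5)/10 on (1 + √5)/2 (mean 0, variance 1); and the Step 7 criterion is taken to be the mean squared distance between the bootstrap refit and the pilot fit, averaged over replications. The estimator itself is also a hypothetical choice: the kernel-weighted least absolute relative error criterion of Chen et al. (2010), minimised in log scale via |Y − c|/Y + |Y − c|/c = 2 sinh(|log Y − log c|), with a quadratic kernel on [0, 1] and a discretized L2 distance. Bootstrap responses are floored at a small positive value so that relative errors stay defined; this is an ad hoc safeguard, not part of the described procedure.

```python
import numpy as np

def l2_distances(A, B):
    # Riemann-sum L2 distance between curves on a common uniform grid (assumed semi-metric).
    return np.sqrt(np.mean((A[:, None, :] - B[None, :, :]) ** 2, axis=2))

def quadratic_kernel(u):
    # Hypothetical kernel M supported on [0, 1].
    return np.where((u >= 0) & (u <= 1), 1.5 * (1.0 - u ** 2), 0.0)

def lare_fit(D, y, h, n_iter=60):
    # Kernel LARE fit at each row of D (distances to the sample): minimise
    # sum_i K(D[k, i] / h) * 2 sinh(|log y_i - log c|) by ternary search on log c.
    # Here D is sample-to-sample, so every row has the self weight K(0) > 0.
    W = quadratic_kernel(D / h)
    logy = np.log(y)
    lo = np.full(D.shape[0], logy.min())
    hi = np.full(D.shape[0], logy.max())
    loss = lambda s: (W * np.sinh(np.abs(s[:, None] - logy[None, :]))).sum(axis=1)
    for _ in range(n_iter):
        m1, m2 = lo + (hi - lo) / 3, hi - (hi - lo) / 3
        go_left = loss(m1) < loss(m2)
        lo, hi = np.where(go_left, lo, m1), np.where(go_left, m2, hi)
    return np.exp(0.5 * (lo + hi))

def mammen_weights(n, rng):
    # Two-point law: (1 - sqrt5)/2 w.p. (5 + sqrt5)/10, else (1 + sqrt5)/2; mean 0, variance 1.
    s5 = np.sqrt(5.0)
    return np.where(rng.random(n) < (5 + s5) / 10, (1 - s5) / 2, (1 + s5) / 2)

def bootstrap_bandwidth(X, y, bandwidths, h0, n_boot=50, seed=0, floor=1e-3):
    rng = np.random.default_rng(seed)
    D = l2_distances(X, X)
    fitted = lare_fit(D, y, h0)                          # Step 1: pilot fit with bandwidth h0
    resid = y - fitted                                   # Step 2: residuals
    crit = np.zeros(len(bandwidths))
    for _ in range(n_boot):                              # Step 6: replications
        eps_star = resid * mammen_weights(len(y), rng)   # Step 3: wild bootstrap residuals
        y_star = np.maximum(fitted + eps_star, floor)    # Step 4 (floor keeps y* > 0; ad hoc)
        for j, h in enumerate(bandwidths):               # Step 5: refit for each candidate h
            crit[j] += np.mean((lare_fit(D, y_star, h) - fitted) ** 2)
    crit /= n_boot                                       # Step 7 (assumed criterion)
    return bandwidths[int(np.argmin(crit))], crit

# Hypothetical toy data with positive responses.
rng = np.random.default_rng(1)
t = np.linspace(0.0, 1.0, 40)
a = rng.uniform(0.5, 2.0, size=60)
X = a[:, None] * np.cos(2 * np.pi * t)[None, :]
y = (1.0 + a ** 2) * np.exp(0.1 * rng.standard_normal(60))
boot_bandwidths = np.array([0.05, 0.1, 0.2, 0.4])
h_boot, boot_crit = bootstrap_bandwidth(X, y, boot_bandwidths, h0=0.2, n_boot=20)
print(h_boot, boot_crit)
```

The two-point law is a common choice for wild bootstrap residuals because it matches the first three moments of the residual (mean 0, and the same second and third moments); whether the paper uses exactly this law cannot be confirmed from the text.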

4.3. Random Matrix Metric

- Step 1: Create the sample covariance matrix . Here, the data matrix is assumed to have been column-centered. The covariance matrix gives the observed covariances between all pairs of sampling points.

- Step 2: The Marčenko-Pastur law describes the limiting eigenvalue distribution of a sample covariance matrix computed from pure noise. We compare the eigenvalue spectrum of our empirical covariance matrix with the spectrum predicted by the Marčenko-Pastur distribution for a random matrix of the same dimensions and variance.

- Perform the eigen-decomposition: , where is the diagonal matrix of eigenvalues .

- Identify the eigenvalues lying outside the support of the Marčenko-Pastur distribution; these are treated as signal eigenvalues. Eigenvalues within the Marčenko-Pastur bounds are treated as noise.

- Step 3: Create a cleaned covariance matrix by retaining only the signal components. A common method is to replace the noise eigenvalues with a constant value: , where is a representative value for the noise eigenvalues .

- Step 4: With the cleaned covariance matrix, a stable metric can be defined, and the Mahalanobis distance is a natural choice. For two curves represented as vectors and , the RMT-metric is: . This metric is more stable and reliable than one calculated from the noisy covariance matrix , which is distorted by spurious correlations when the number of sampling points is not small relative to the number of curves.

In conclusion, RMT acts as a filter that denoises the covariance structure of the dataset. This cleaned structure yields a more accurate metric for comparing any two curves within that dataset.
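The four steps of the random matrix metric can be sketched in NumPy as follows. Several choices are assumptions rather than details given in the text: the covariance uses a 1/n normalisation; the noise variance σ² entering the Marčenko-Pastur edges σ²(1 ± √(p/n))² is estimated crudely by trace(S)/p; following the common eigenvalue-clipping practice, only eigenvalues above the upper edge are kept as signal, which departs from the literal rule of Step 2 (that rule would also keep eigenvalues below the lower edge, whose inverses can make the distance unstable); and the noise eigenvalues are replaced by their mean, which preserves the trace. The function names and the one-factor toy data are hypothetical.

```python
import numpy as np

def mp_bounds(n, p, sigma2):
    """Edges of the Marchenko-Pastur support for a p x p sample covariance of
    n i.i.d. noise vectors with entry variance sigma2 (ratio q = p / n)."""
    q = p / n
    return sigma2 * (1 - np.sqrt(q)) ** 2, sigma2 * (1 + np.sqrt(q)) ** 2

def rmt_clean_covariance(X, sigma2=None):
    """Eigenvalue clipping: returns (cleaned covariance, number of signal eigenvalues)."""
    n, p = X.shape
    Xc = X - X.mean(axis=0)                   # Step 1: column-centre the n x p data matrix
    S = Xc.T @ Xc / n                         # sample covariance (1/n normalisation assumed)
    evals, V = np.linalg.eigh(S)              # Step 2: S = V diag(evals) V^T
    if sigma2 is None:
        sigma2 = np.trace(S) / p              # crude noise-variance proxy (assumption)
    _, lam_plus = mp_bounds(n, p, sigma2)
    noise = evals <= lam_plus                 # inside the MP bulk: treated as noise
    cleaned = evals.copy()
    if noise.any():
        cleaned[noise] = evals[noise].mean()  # Step 3: one common value keeps the trace
    return (V * cleaned) @ V.T, int((~noise).sum())

def rmt_distance(x, y, S_clean):
    """Step 4: Mahalanobis-type distance sqrt((x - y)^T S_clean^{-1} (x - y))."""
    diff = x - y
    return float(np.sqrt(diff @ np.linalg.solve(S_clean, diff)))

# Hypothetical data: one strong common factor along u plus unit-variance noise.
rng = np.random.default_rng(0)
n, p = 500, 50
u = np.ones(p) / np.sqrt(p)
X = 5.0 * rng.standard_normal((n, 1)) * u[None, :] + rng.standard_normal((n, p))
S_clean, n_signal = rmt_clean_covariance(X)
print(n_signal, rmt_distance(X[0], X[1], S_clean))
```

On this toy data a single eigenvalue clears the upper edge, and a unit displacement along the factor direction u is measured as much shorter than a unit displacement orthogonal to it, which is the down-weighting of high-variance directions expected from a Mahalanobis distance.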

5. Data-Driven Analysis

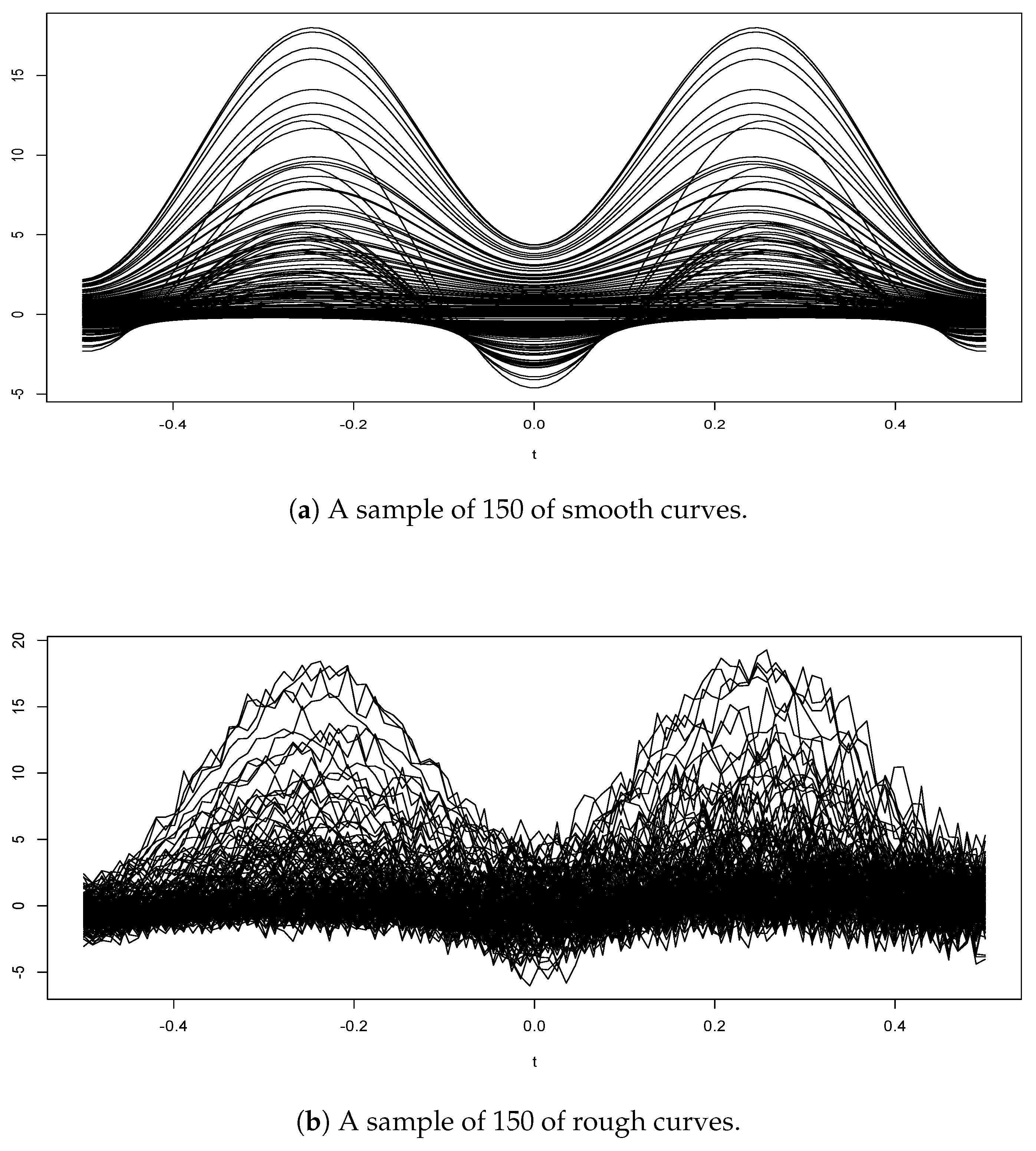

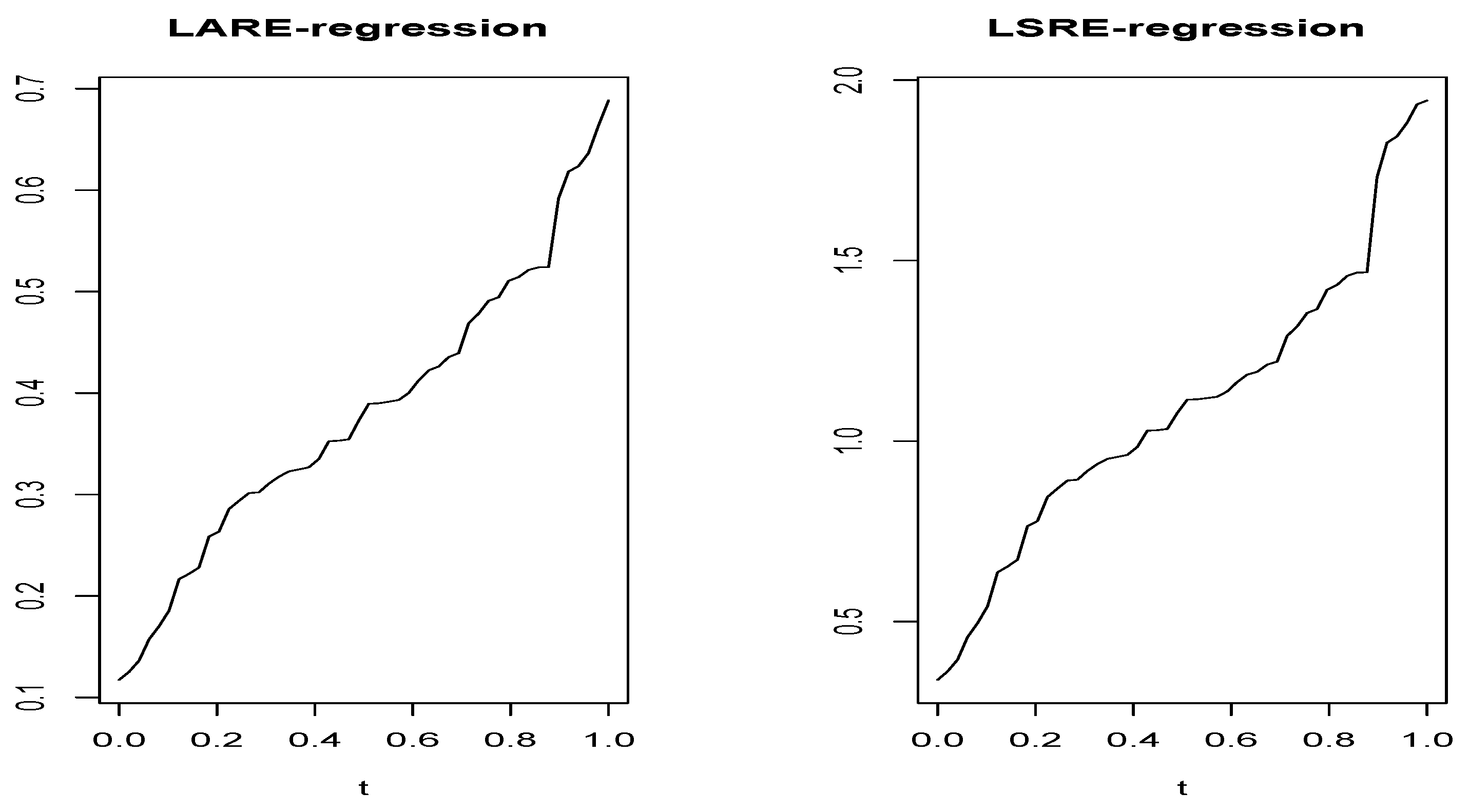

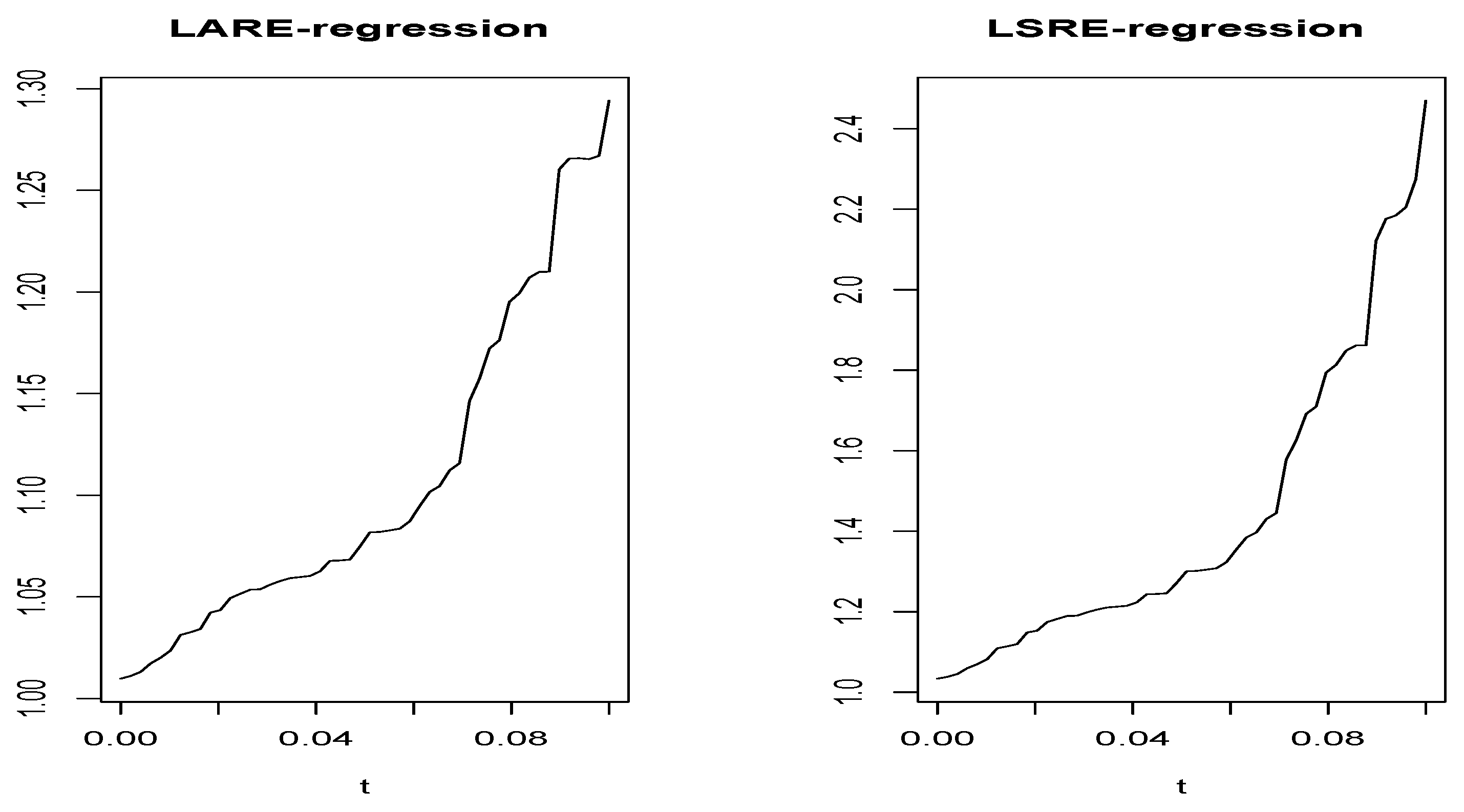

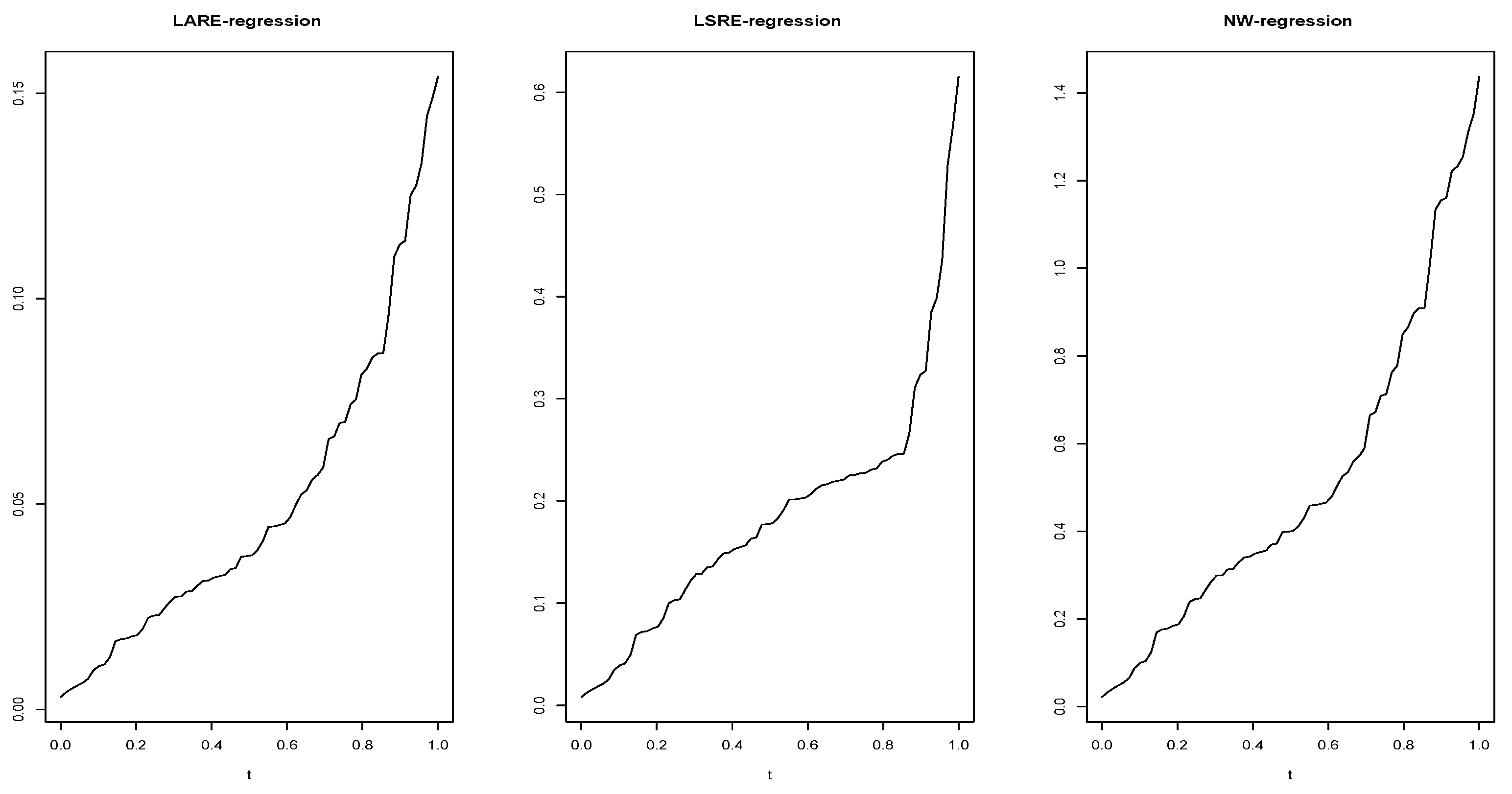

5.1. A Simulated Data Case

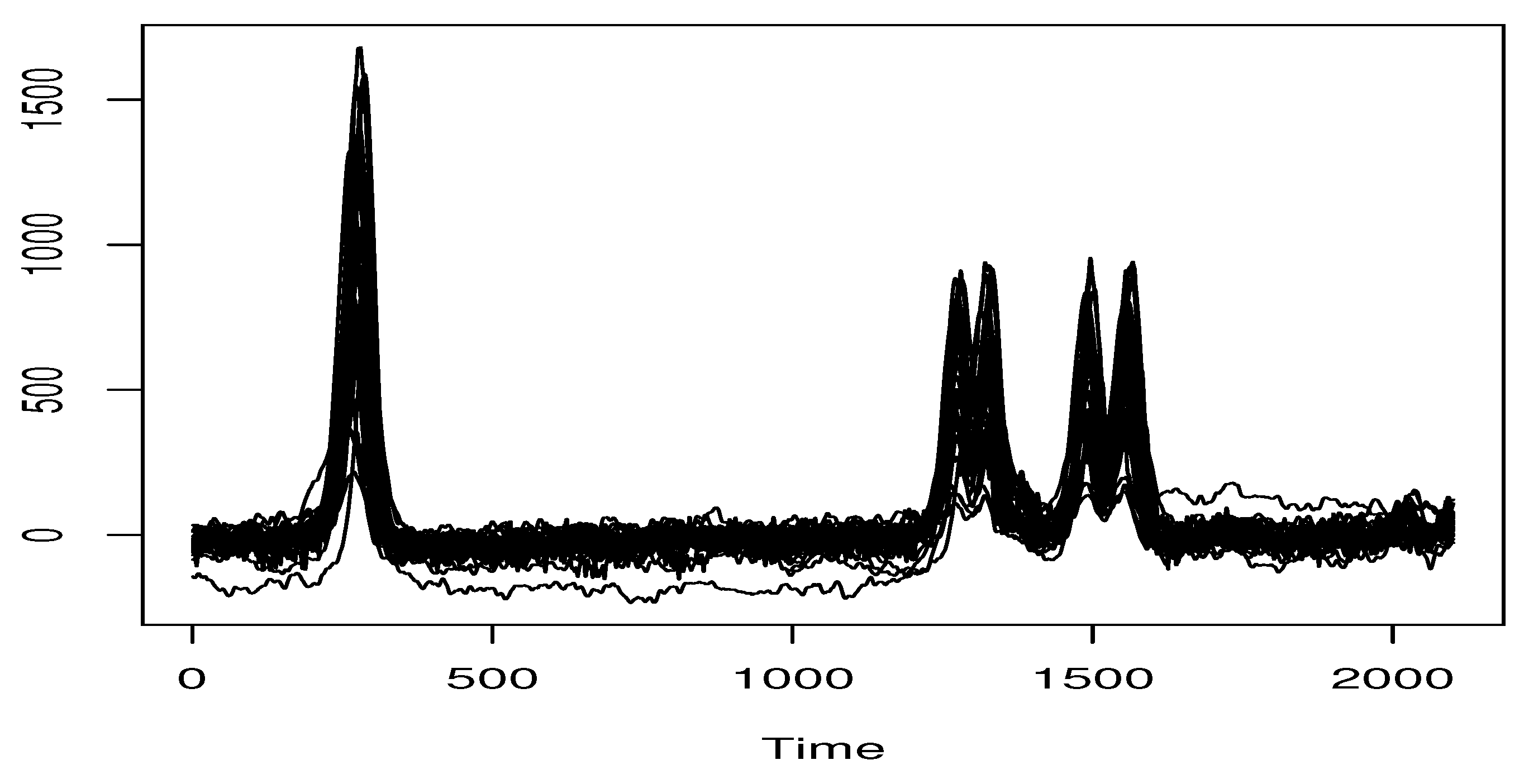

5.2. Real Data Application

6. Conclusions and Prospects

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A


| Curves | Distribution | LOOCV selector | Bootstrap selector |
|---|---|---|---|
| Smooth curves | S.N.D. | 0.83 | 0.97 |
| | S.U.D. | 0.98 | 1.23 |
| | S.B.D. | 1.12 | 1.37 |
| | C.D. | 1.18 | 1.23 |
| Rough curves | S.N.D. | 1.17 | 1.84 |
| | S.U.D. | 1.26 | 1.74 |
| | S.B.D. | 1.29 | 2.18 |
| | C.D. | 1.52 | 1.57 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).