Submitted: 09 December 2025

Posted: 12 December 2025


Abstract

Keywords:

I. Introduction

II. Related Work

III. Threat Model

IV. Synthetic Dataset and Evaluation Design

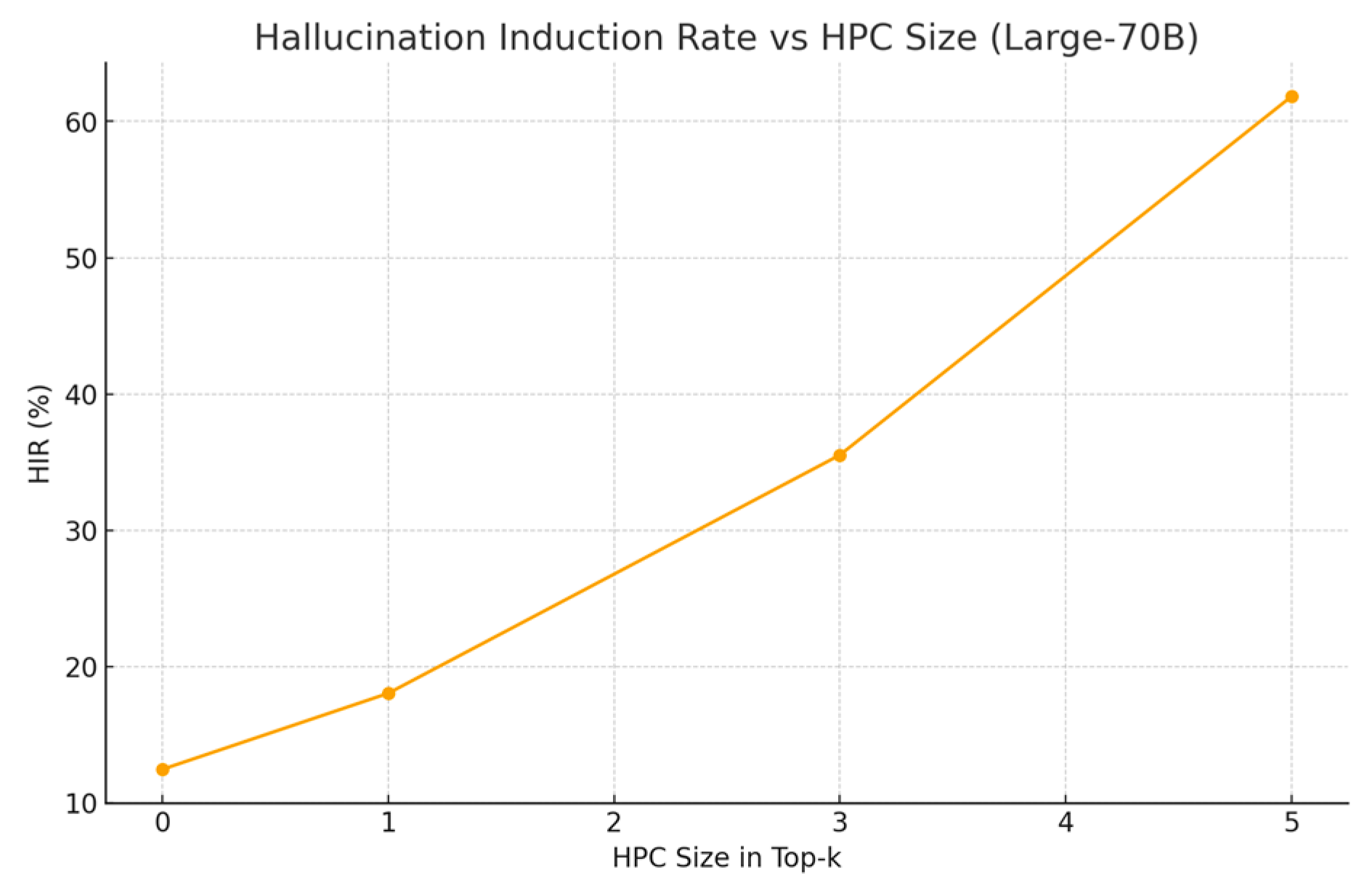

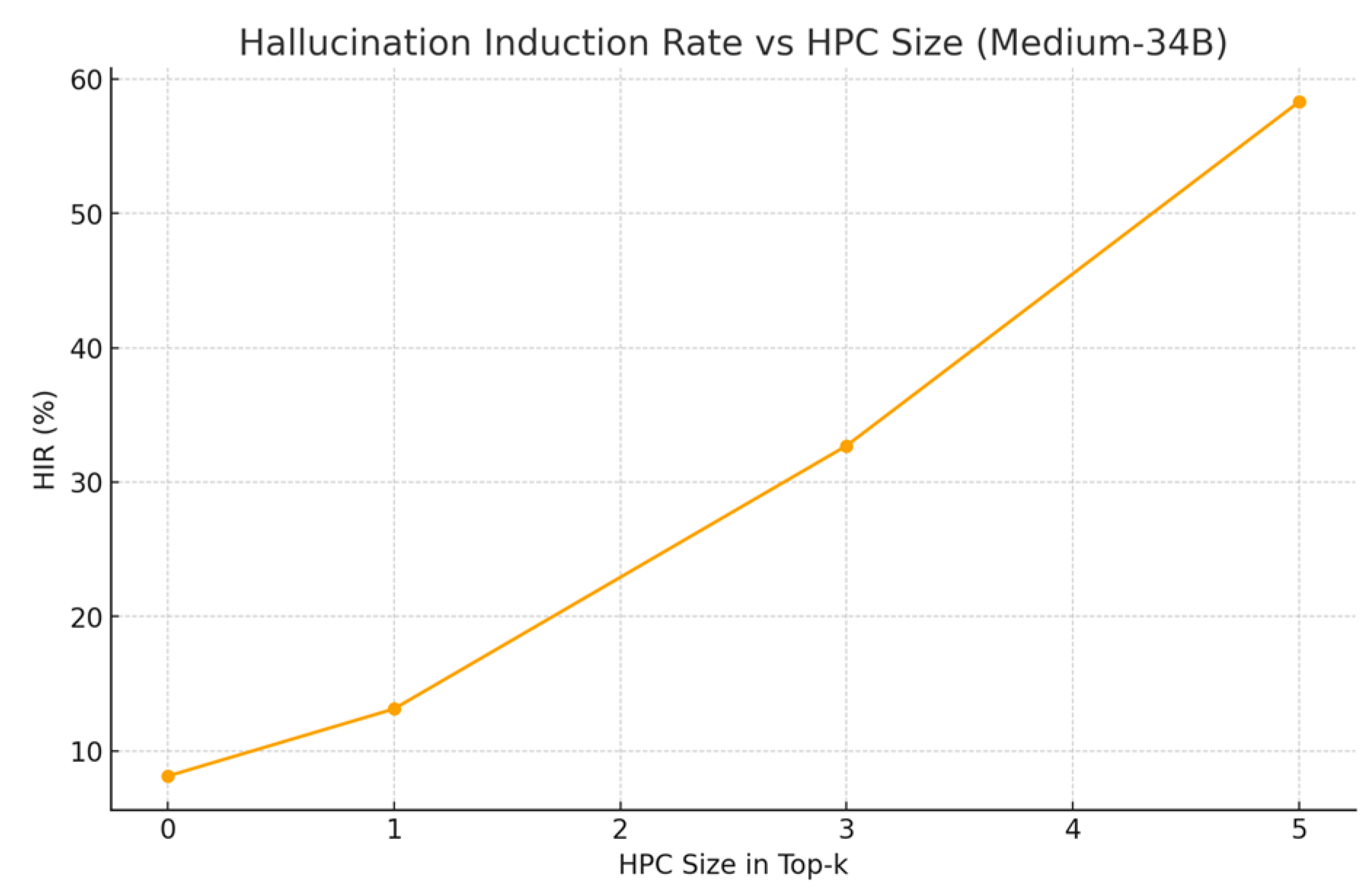

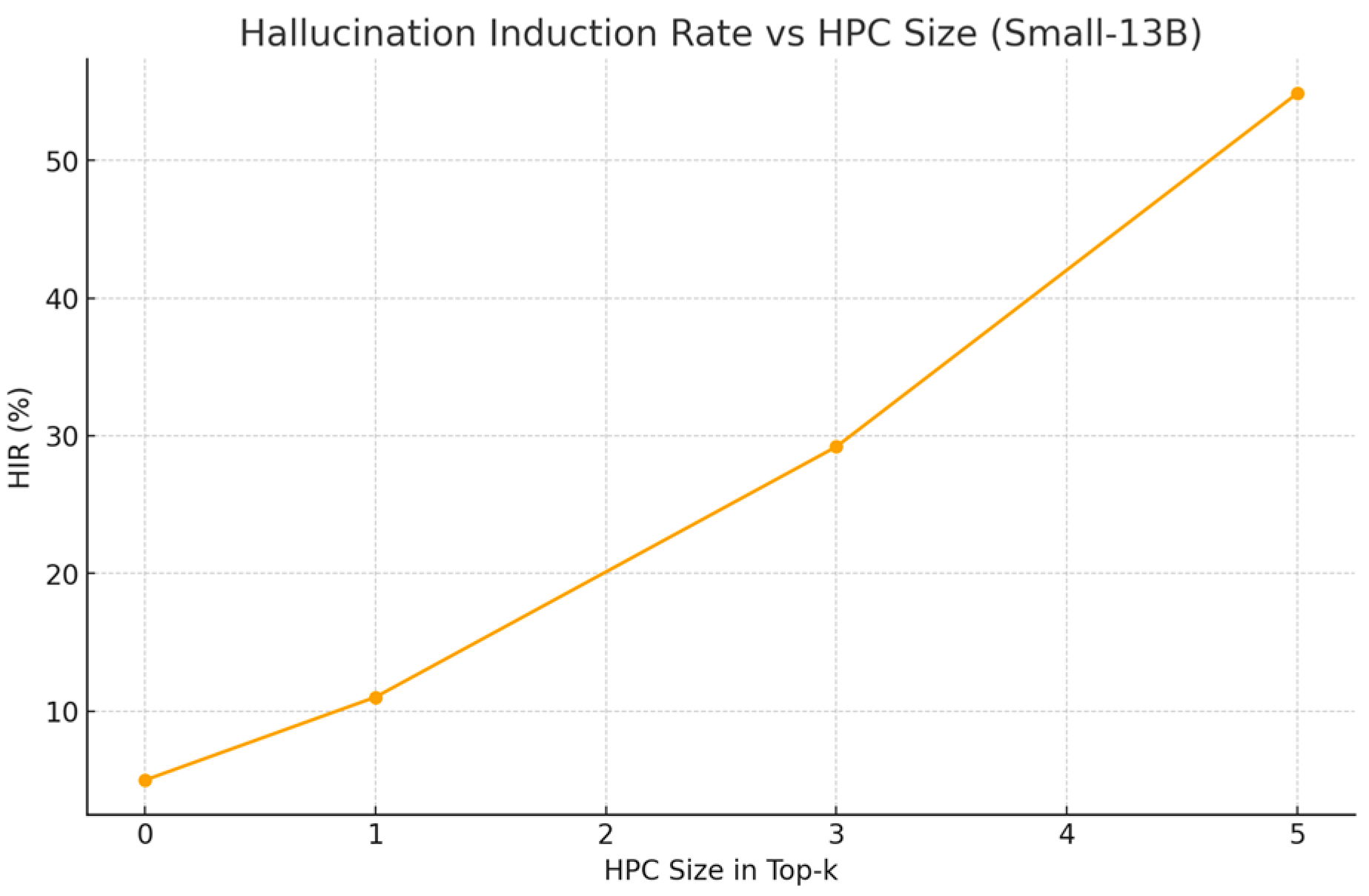

V. Results

| Condition | AMR (%) |
| --- | --- |
| HPC-3 | 17.71 |
| HPC-5 | 38.18 |
| No Attack | 3.23 |
| Single Poisoned Doc | 6.21 |
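
As a reading aid for the AMR table above, the following Python sketch expresses each attack condition relative to the "No Attack" row, both as a percentage-point difference and as a ratio. The values are copied from the table; the function name `uplift_over_baseline` and the choice of "No Attack" as the baseline are assumptions made for illustration, not part of the paper's method, and the acronym AMR is used as given because this excerpt does not expand it.

```python
# AMR values (%) copied from the table above.
amr = {
    "HPC-3": 17.71,
    "HPC-5": 38.18,
    "No Attack": 3.23,
    "Single Poisoned Doc": 6.21,
}


def uplift_over_baseline(rates, baseline_key="No Attack"):
    """Map each non-baseline condition to (percentage-point delta, ratio).

    Hypothetical helper: treating `baseline_key` as the reference row is an
    assumption of this sketch, not something stated in the source.
    """
    base = rates[baseline_key]
    return {
        name: (round(value - base, 2), round(value / base, 2))
        for name, value in rates.items()
        if name != baseline_key
    }


amr_uplift = uplift_over_baseline(amr)
for name, (delta_pp, ratio) in amr_uplift.items():
    print(f"{name}: +{delta_pp:.2f} pp, {ratio:.2f}x baseline")
```

Reporting both figures is a deliberate choice: the percentage-point difference shows the absolute change, while the ratio shows how it scales against a small baseline rate.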

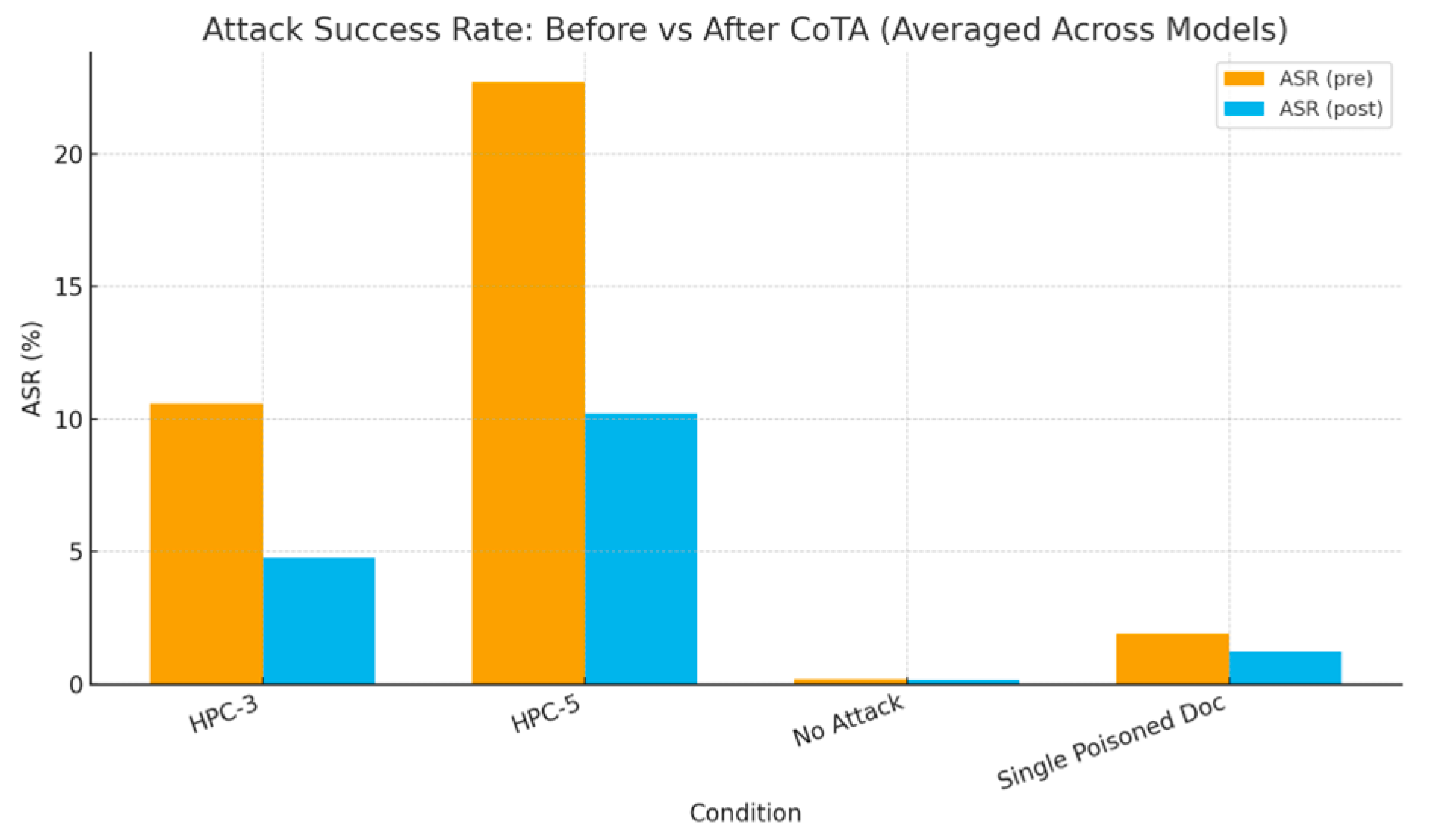

| Condition | ASR (pre) % | ASR (post) % |
| --- | --- | --- |
| HPC-3 | 10.58 | 4.76 |
| HPC-5 | 22.71 | 10.22 |
| No Attack | 0.17 | 0.15 |
| Single Poisoned Doc | 1.88 | 1.22 |
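
The pre/post ASR columns above can also be summarized as a relative reduction per condition. The Python sketch below does that arithmetic on the tabulated values only. The helper name `relative_reduction` is hypothetical, and the excerpt does not say what intervention separates "pre" from "post", so the sketch makes no assumption about it beyond the column labels.

```python
# (pre, post) ASR values in percent, copied from the table above.
asr = {
    "HPC-3": (10.58, 4.76),
    "HPC-5": (22.71, 10.22),
    "No Attack": (0.17, 0.15),
    "Single Poisoned Doc": (1.88, 1.22),
}


def relative_reduction(pre, post):
    """Return the fractional drop from `pre` to `post` (0.5 means halved).

    Hypothetical helper for illustration; a zero `pre` rate would make the
    ratio undefined, so that case is rejected explicitly.
    """
    if pre == 0:
        raise ValueError("relative reduction is undefined when pre is 0")
    return (pre - post) / pre


asr_reduction = {
    name: relative_reduction(pre, post) for name, (pre, post) in asr.items()
}
for name, frac in asr_reduction.items():
    print(f"{name}: {frac * 100:.1f}% relative reduction")
```

On these numbers, both HPC conditions work out to a relative reduction of roughly 55%, while the "No Attack" row changes very little in absolute terms, so its relative figure should be read with caution.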

VII. Conclusion


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).