Submitted: 05 December 2025

Posted: 08 December 2025


Abstract

Keywords:

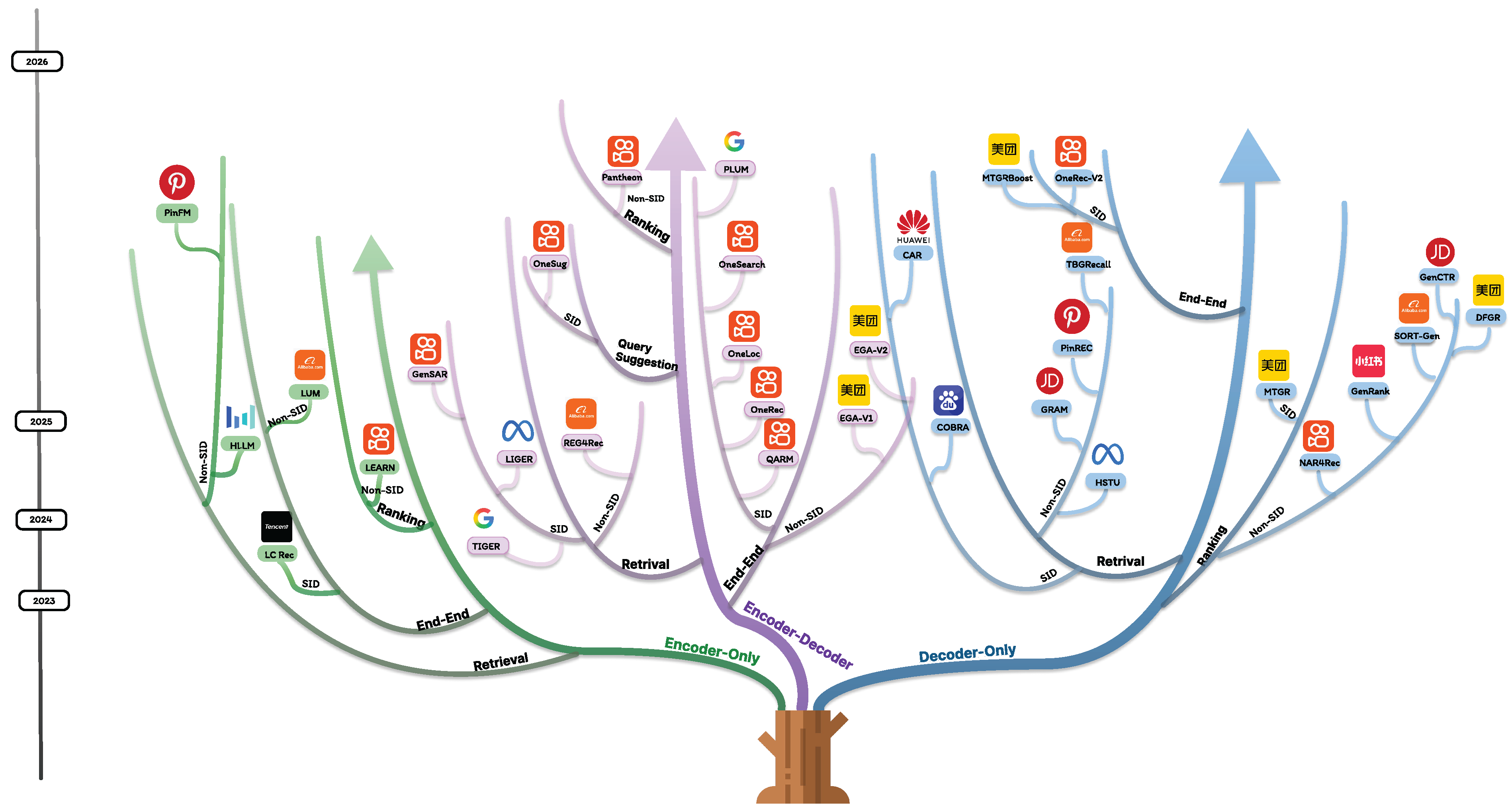

1. Introduction

- Dynamic Representation Learning: Unlike static two-tower retrievers, generative approaches such as TIGER [11] and LIGER [20] employ adaptive tokenization strategies that learn cascaded sparse-dense representations in a unified space. This enables simultaneous handling of semantic matching (through dense embeddings) and exact matching (via sparse lexical features), as shown in COBRA [21].

- Industrial-Scale Optimization: Frameworks such as HSTU [22], EGA-V1/V2 [3,23], and Meituan’s MTGRBoost [37] demonstrate how generative models achieve superior computational efficiency through distributed parallelism, mixed precision, and model sharding, scaling to hundreds of GPUs while maintaining low inference latency.

- Architectural Unification: Modern generative recommenders such as GRAM [12] and COBRA [21] bridge the semantic gap between retrieval and ranking through transformer-based architectures that jointly optimize for both breadth (recall) and precision (ranking). The MTGR framework [24] demonstrates how trillion-parameter sequential transducers [22] can replace multi-stage pipelines with single-model solutions.

2. From Discriminative to Generative Recommendation

2.1. Discriminative Recommendation (DR)

- Contextual generalization. Discriminative models rely on fixed feature spaces and limited contextual windows. The scoring function typically operates on static embeddings derived from short-term behavior and cannot reason over high-level intents or open-ended goals. For example, given a query such as “gift ideas for a 10-year-old”, a DR model trained on co-click patterns cannot generalize to unseen combinations of age, context, and semantic intent.

- Decoupled stages of retrieval and ranking. Industrial pipelines typically separate candidate retrieval from ranking. This modularization, while scalable, breaks the end-to-end optimization of user satisfaction: the retrieval model is optimized for recall, whereas the ranking model focuses on precision, which often leads to sub-optimal joint performance.

- Token compositionality and representation bottlenecks. DR systems treat item IDs as atomic categorical variables, which prevents compositional reasoning over attributes such as color, brand, or visual style. Multimodal signals (text, image, or video embeddings) are often appended post hoc rather than integrated into the underlying modeling process, resulting in limited cross-modal understanding.

- Limited reasoning and language grounding. Since DR models predict scalar scores rather than structured outputs, they cannot naturally explain or verbalize recommendations. In contrast, generative models can express explicit reasoning paths, enabling interpretability and human-aligned feedback. This gap restricts the deployment of DR systems in conversational or interactive settings.

- Sparse supervision and cold-start sensitivity. DR models depend on observed user–item interactions with engagement labels y. The learning signal is extremely sparse, since only positive clicks or purchases are observed, which makes generalization to cold-start or long-tail items difficult. Without pretraining on large textual or multimodal corpora, embeddings for new users and items remain uninformative.

- Inflexibility to multi-task or multi-objective learning. In industrial applications, recommendation must optimize heterogeneous objectives (CTR, GMV, diversity, fairness, latency). Traditional DR models require explicit multi-tower or multi-head architectures to approximate joint optimization, but gradients across tasks often conflict, leading to unstable or biased optimization. Generative frameworks, on the other hand, can encode objectives as instructions or natural-language prompts, achieving more flexible multi-intent modeling.
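The cost of decoupling retrieval from ranking, noted in the list above, can be illustrated with a toy two-stage pipeline. This is a minimal sketch rather than any production design: the item names, the scores, and the helper functions `retrieve` and `rank` are all hypothetical. It shows an item that the ranker would have placed first being discarded because the retriever, which uses a different score, never surfaced it.

```python
# Toy two-stage pipeline. All item names and scores are made up for illustration.

def retrieve(retrieval_scores, k):
    """Stage 1: keep the k items with the highest retrieval score."""
    ordered = sorted(retrieval_scores, key=retrieval_scores.get, reverse=True)
    return ordered[:k]

def rank(candidates, ranking_scores):
    """Stage 2: reorder only the retrieved candidates by the ranker's score."""
    return sorted(candidates, key=ranking_scores.get, reverse=True)

# The two stages are trained separately, so their scores can disagree.
retrieval_scores = {"item_a": 0.9, "item_b": 0.8, "item_c": 0.4, "item_d": 0.3}
ranking_scores = {"item_a": 0.2, "item_b": 0.5, "item_c": 0.95, "item_d": 0.1}

candidates = retrieve(retrieval_scores, k=2)
final_list = rank(candidates, ranking_scores)

# The item the ranker likes best overall, ignoring the retrieval cut-off.
best_overall = max(ranking_scores, key=ranking_scores.get)

print(candidates)                  # ['item_a', 'item_b']
print(final_list)                  # ['item_b', 'item_a']
print(best_overall in final_list)  # False
```

Because the ranker only sees what the retriever passes on, no amount of ranking quality recovers `item_c` here; a jointly optimized model would not have this hard cut between the two objectives.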

2.2. Generative Recommendation (GR)

3. Generative Recommendation Models in Industry

3.1. Modeling Paradigm

3.1.1. Encoder-Only Models

3.1.2. Encoder–Decoder Models

3.1.3. Decoder-Only Models

3.2. Functional Scope

3.2.1. Generative Retrieval

3.2.2. Generative Reranking

3.2.3. Unified End-to-End Recommendation

3.2.4. Generative Augmentation and Auxiliary Tasks

3.3. Representation Space

3.3.1. Semantic ID and Codebook Learning

3.3.1.1. Hierarchical and Residual Quantization

3.3.1.2. Codebook-based Discretization

3.3.1.3. Training Stability and Codebook Utilization

3.3.2. Dense and Hybrid Representations

3.4. Training and Alignment Objectives

3.4.1. No Alignment (Next-Token Prediction)

3.4.2. Alignment via Reinforcement Learning and Preference Optimization

Examples from Generative Recommender Systems

Practical Guidelines

4. Scaling Law in Generative Recommendation Systems

- Architectural Heterogeneity: Recommendation models exhibit far greater structural diversity (e.g., Wide & Deep, DIN, graph neural networks) than the Transformer-dominated NLP landscape, making it unclear how to systematically scale capacity across different paradigms.

- Extreme Data Scale: User–item interaction logs span hundreds of millions of users and billions of items, yielding token counts roughly two orders of magnitude larger than typical LLM training corpora, which complicates both storage and computation.

- Strict Latency Requirements: Online recommendation services demand end-to-end response times under ∼30 ms, imposing severe constraints on model complexity and making deployment of large-scale models significantly more challenging than in NLP applications.

4.1. Scaling Traditional Discriminative Models

4.2. Scaling Generative Recommendation Models

4.3. Synthesis and Open Challenges

| Paper | Online A/B Test | Offline Data | Scaling Law | Domain |
|---|---|---|---|---|
| TIGER [11] | N | Amazon reviews | N | Web-scale search |
| LC Rec [25] | N | Amazon reviews | N | Social media |
| HSTU [22] | Y | Amazon reviews & MovieLens | Y | Social media |
| QARM [28] | Y | Kuaishou internal data | N | Short video / multimodal |
| GenSAR [15] | N | Amazon reviews & Chinese app | N | Short video / social feed |
| LEARN [29] | Y | Amazon reviews & Kuaishou internal data | N | Short video / social feed |
| HLLM [30] | Y | PixelRec & Amazon reviews | N | Short video / social feed |
| LUM [31] | Y | MovieLens & Amazon Books | Y | E-commerce / search |
| LIGER [20] | N | Amazon & Steam [57] | N | Social media / cross-domain |
| GRAM [12] | Y (5%) | JD internal data | N | E-commerce |
| COBRA [21] | Y (10%) | Amazon | N | Web-scale search |
| PinRec [32] | Y | Pinterest internal data | N | Visual content discovery |
| Pantheon [33] | Y | Kuaishou internal data | N | Multi-objective ranking |
| TBGRecall [34] | Y (5%) | RecFlow & Taobao internal data | Y | E-commerce |
| REG4Rec [35] | Y | Amazon & Internal | N | E-commerce |
| NAR4Rec [13] | Y | Avito & Internal | N | Short video |
| GenRank [36] | Y (10%) | Xiaohongshu | N | Visual content discovery |
| MTGR [24] | Y (2%) | Meituan internal data | Y | Food delivery, local services |
| MTGRBoost [37] | N | Meituan internal data | N | Food delivery, local services |
| SORT-Gen [38] | Y | Taobao internal data | N | E-commerce |
| GenCTR [39] | Y | JD internal data | N | E-commerce advertising |
| DFGR [40] | N | RecFlow & KuaiSAR & Trec | Y | Food delivery, local services |
| OneRec [16] | Y (1%) | Kuaishou internal data | Y | Short video |
| OneRec-V2 [41] | Y (5%) | Kuaishou internal data | Y | Short video |
| EGA-V1 [3] | Y | Meituan internal data | Y | Food delivery, local services |
| EGA-V2 [23] | N | Meituan internal data | N | Food delivery, local services |
| OneLoc [42] | Y (10%) | Kuaishou internal data | Y | Local-life services |
| OneSearch [27] | Y | Kuaishou internal data | N | Search / e-commerce |
| OneSug [43] | Y | Kuaishou internal data | N | Query suggestion |
| CAR [44] | Y (5%) | Amazon reviews | Y | E-commerce / app recommendation |
| PinFM [45] | Y | Pinterest internal data | Y | Visual content discovery |
| PLUM [46] | Y | YouTube internal data | Y | Video recommendation |

5. Scalability

5.1. Model-Architecture Scalability

5.2. System-Level Scalability

Distributed Training and Parallelism

Low-Latency Inference and Efficient Serving

6. Evaluation

6.1. Generative Retrieval Models

6.2. Generative (Re)ranking Models

6.3. End-to-End Generative Recommendation

7. Challenges and Future Directions

7.1. Current Challenges in Generative Recommendation Systems

Current Technical Limitations and Challenges

Evaluation and Benchmarking Challenges

Scalability and Resource Constraints

7.2. Future Directions

Integration of Multimodal Large Language Models

Foundation Models and Cross-Domain Transfer

Ethical AI and Responsible Recommendation

Technical Innovation Frontiers

Industry Adoption and Standardization

Long-term Vision and Transformative Potential

8. Conclusions

References

- Schafer, J.B.; Frankowski, D.; Herlocker, J.; Sen, S. Collaborative Filtering Recommender Systems. In The Adaptive Web: Methods and Strategies of Web Personalization; Springer: Berlin, Heidelberg, 2007; Vol. 4321, Lecture Notes in Computer Science, chapter 9, pp. 291–324.

- Zhou, G.; Zhu, X.; Song, C.; Fan, Y.; Zhu, H.; Ma, X.; Yan, Y.; Jin, J.; Li, H.; Gai, K. Deep Interest Network for Click-Through Rate Prediction. In Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining. ACM, 2018, pp. 1059–1068.

- Qiu, J.; Wang, Z.; Zhang, F.; Zheng, Z.; Zhu, J.; Fan, J.; Zhang, T.; Wang, H.; Wang, Y.; Wang, X. EGA-V1: Unifying Online Advertising with End-to-End Learning, 2025, [arXiv:cs.IR/2505.19755].

- Koren, Y.; Bell, R.; Volinsky, C. Matrix Factorization Techniques for Recommender Systems. IEEE Computer 2009, 42, 30–37.

- Naumov, M.; Mudigere, D.; Shi, H.J.M.; Huang, J.; Sundaraman, N.; Park, J.; Wang, X.; Gupta, U.; Wu, C.J.; Azzolini, A.G.; et al. Deep Learning Recommendation Model for Personalization and Recommendation Systems, 2019, [arXiv:cs.IR/1906.00091].

- Bromley, J.; Guyon, I.; LeCun, Y.; Säckinger, E.; Shah, R. Signature verification using a "Siamese" time delay neural network. In Proceedings of the 7th International Conference on Neural Information Processing Systems, San Francisco, CA, USA, 1993; NIPS’93, pp. 737–744.

- Kim, Y. Applications and Future of Dense Retrieval in Industry. In Proceedings of the 45th International ACM SIGIR Conference on Research and Development in Information Retrieval, New York, NY, USA, 2022; SIGIR ’22, pp. 3373–3374. [CrossRef]

- Wang, R.; Fu, B.; Fu, G.; Wang, M. Deep & Cross Network for Ad Click Predictions. In Proceedings of the ADKDD’17. ACM, 2017.

- Bottou, L.; Bousquet, O. The Tradeoffs of Large Scale Learning. In Proceedings of the Advances in Neural Information Processing Systems; Platt, J.; Koller, D.; Singer, Y.; Roweis, S., Eds. Curran Associates, Inc., 2007, Vol. 20.

- Xi, Y.; Liu, W.; Dai, X.; Tang, R.; Zhang, W.; Liu, Q.; He, X.; Yu, Y. Context-aware Reranking with Utility Maximization for Recommendation, 2022, [arXiv:cs.IR/2110.09059].

- Rajput, S.; Mehta, N.; Singh, A.; Keshavan, R.H.; Vu, T.; Heldt, L.; Hong, L.; Tay, Y.; Tran, V.Q.; Samost, J.; et al. Recommender Systems with Generative Retrieval, 2023, [arXiv:cs.IR/2305.05065].

- Pang, M.; Yuan, C.; He, X.; Fang, Z.; Xie, D.; Qu, F.; Jiang, X.; Peng, C.; Lin, Z.; Luo, Z.; et al. Generative Retrieval and Alignment Model: A New Paradigm for E-commerce Retrieval, 2025, [arXiv:cs.IR/2504.01403].

- Ren, Y.; Yang, Q.; Wu, Y.; Xu, W.; Wang, Y.; Zhang, Z. Non-autoregressive Generative Models for Reranking Recommendation, 2025, [arXiv:cs.IR/2402.06871].

- Xu, Z.; Zhang, Y. LLM-Enhanced Reranking for Complementary Product Recommendation, 2025, [arXiv:cs.IR/2507.16237].

- Shi, T.; Xu, J.; Zhang, X.; Zang, X.; Zheng, K.; Song, Y.; Yu, E. Unified Generative Search and Recommendation, 2025, [arXiv:cs.IR/2504.05730].

- Deng, J.; Wang, S.; Cai, K.; Ren, L.; Hu, Q.; Ding, W.; Luo, Q.; Zhou, G. OneRec: Unifying Retrieve and Rank with Generative Recommender and Iterative Preference Alignment, 2025, [arXiv:cs.IR/2502.18965].

- Liang, S.; Zhang, Y.; Wang, Y. C-TLSAN: Content-Enhanced Time-Aware Long- and Short-Term Attention Network for Personalized Recommendation, 2025, [arXiv:cs.LG/2506.13021].

- Liang, S.; Zhang, Y.; Guo, Y. PersonaAgent with GraphRAG: Community-Aware Knowledge Graphs for Personalized LLM, 2025, [arXiv:cs.LG/2511.17467].

- Wu, L.; Zheng, Z.; Qiu, Z.; Wang, H.; Gu, H.; Shen, T.; Qin, C.; Zhu, C.; Zhu, H.; Liu, Q.; et al. A Survey on Large Language Models for Recommendation, 2024, [arXiv:cs.IR/2305.19860].

- Yang, L.; Paischer, F.; Hassani, K.; Li, J.; Shao, S.; Li, Z.G.; He, Y.; Feng, X.; Noorshams, N.; Park, S.; et al. Unifying Generative and Dense Retrieval for Sequential Recommendation, 2024, [arXiv:cs.IR/2411.18814].

- Yang, Y.; Ji, Z.; Li, Z.; Li, Y.; Mo, Z.; Ding, Y.; Chen, K.; Zhang, Z.; Li, J.; Li, S.; et al. Sparse Meets Dense: Unified Generative Recommendations with Cascaded Sparse-Dense Representations, 2025, [arXiv:cs.IR/2503.02453].

- Zhai, J.; Liao, L.; Liu, X.; Wang, Y.; Li, R.; Cao, X.; Gao, L.; Gong, Z.; Gu, F.; He, J.; et al. Actions Speak Louder than Words: Trillion-Parameter Sequential Transducers for Generative Recommendations. In Proceedings of the 41st International Conference on Machine Learning; Salakhutdinov, R.; Kolter, Z.; Heller, K.; Weller, A.; Oliver, N.; Scarlett, J.; Berkenkamp, F., Eds. PMLR, 21–27 Jul 2024, Vol. 235, Proceedings of Machine Learning Research, pp. 58484–58509.

- Zheng, Z.; Wang, Z.; Yang, F.; Fan, J.; Zhang, T.; Wang, Y.; Wang, X. EGA-V2: An End-to-end Generative Framework for Industrial Advertising, 2025, [arXiv:cs.IR/2505.17549].

- Han, R.; Yin, B.; Chen, S.; Jiang, H.; Jiang, F.; Li, X.; Ma, C.; Huang, M.; Li, X.; Jing, C.; et al. MTGR: Industrial-Scale Generative Recommendation Framework in Meituan, 2025, [arXiv:cs.IR/2505.18654].

- Zheng, B.; Hou, Y.; Lu, H.; Chen, Y.; Zhao, W.X.; Chen, M.; Wen, J.R. Adapting Large Language Models by Integrating Collaborative Semantics for Recommendation. In Proceedings of the 2024 IEEE 40th International Conference on Data Engineering (ICDE), 2024, pp. 1435–1448. [CrossRef]

- Zhang, B.; Luo, L.; Chen, Y.; Nie, J.; Liu, X.; Guo, D.; Zhao, Y.; Li, S.; Hao, Y.; Yao, Y.; et al. Wukong: Towards a Scaling Law for Large-Scale Recommendation, 2024, [arXiv:cs.LG/2403.02545].

- Chen, B.; Guo, X.; Wang, S.; Liang, Z.; Lv, Y.; Ma, Y.; Xiao, X.; Xue, B.; Zhang, X.; Yang, Y.; et al. OneSearch: A Preliminary Exploration of the Unified End-to-End Generative Framework for E-commerce Search, 2025, [arXiv:cs.IR/2509.03236].

- Luo, X.; Cao, J.; Sun, T.; Yu, J.; Huang, R.; Yuan, W.; Lin, H.; Zheng, Y.; Wang, S.; Hu, Q.; et al. Qarm: Quantitative alignment multi-modal recommendation at kuaishou. arXiv preprint arXiv:2411.11739 2024.

- Jia, J.; Wang, Y.; Li, Y.; Chen, H.; Bai, X.; Liu, Z.; Liang, J.; Chen, Q.; Li, H.; Jiang, P.; et al. LEARN: Knowledge Adaptation from Large Language Model to Recommendation for Practical Industrial Application. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025, Vol. 39, pp. 11861–11869.

- Chen, J.; Chi, L.; Peng, B.; Yuan, Z. Hllm: Enhancing sequential recommendations via hierarchical large language models for item and user modeling. arXiv preprint arXiv:2409.12740 2024.

- Yan, B.; Liu, S.; Zeng, Z.; Wang, Z.; Zhang, Y.; Yuan, Y.; Liu, L.; Liu, J.; Wang, D.; Su, W.; et al. Unlocking Scaling Law in Industrial Recommendation Systems with a Three-step Paradigm based Large User Model. arXiv preprint arXiv:2502.08309 2025.

- Badrinath, A.; Agarwal, P.; Bhasin, L.; Yang, J.; Xu, J.; Rosenberg, C. PinRec: Outcome-Conditioned, Multi-Token Generative Retrieval for Industry-Scale Recommendation Systems, 2025, [arXiv:cs.IR/2504.10507].

- Cao, J.; Xu, P.; Cheng, Y.; Guo, K.; Tang, J.; Wang, S.; Leng, D.; Yang, S.; Liu, Z.; Niu, Y.; et al. Pantheon: Personalized Multi-objective Ensemble Sort via Iterative Pareto Policy Optimization. arXiv preprint arXiv:2505.13894 2025.

- Liang, Z.; Wu, C.; Huang, D.; Sun, W.; Wang, Z.; Yan, Y.; Wu, J.; Jiang, Y.; Zheng, B.; Chen, K.; et al. TBGRecall: A Generative Retrieval Model for E-commerce Recommendation Scenarios. arXiv preprint arXiv:2508.11977 2025.

- Xing, H.; Deng, H.; Mao, Y.; Hu, J.; Xu, Y.; Zhang, H.; Wang, J.; Wang, S.; Zhang, Y.; Zeng, X.; et al. REG4Rec: Reasoning-Enhanced Generative Model for Large-Scale Recommendation Systems, 2025, [arXiv:cs.IR/2508.15308].

- Huang, Y.; Chen, Y.; Cao, X.; Yang, R.; Qi, M.; Zhu, Y.; Han, Q.; Liu, Y.; Liu, Z.; Yao, X.; et al. Towards Large-scale Generative Ranking, 2025, [arXiv:cs.IR/2505.04180].

- Wang, Y.; Yan, X.; Ma, C.; Huang, M.; Li, X.; Yu, L.; Liu, C.; Han, R.; Jiang, H.; Yin, B.; et al. MTGRBoost: Boosting Large-scale Generative Recommendation Models in Meituan, 2025, [arXiv:cs.DC/2505.12663].

- Meng, Y.; Guo, C.; Cao, Y.; Liu, T.; Zheng, B. A Generative Re-ranking Model for List-level Multi-objective Optimization at Taobao, 2025, [arXiv:cs.IR/2505.07197].

- Kong, L.; Wang, L.; Peng, C.; Lin, Z.; Law, C.; Shao, J. Generative Click-through Rate Prediction with Applications to Search Advertising, 2025, [arXiv:cs.LG/2507.11246].

- Guo, H.; Xue, E.; Huang, L.; Wang, S.; Wang, X.; Wang, L.; Wang, J.; Chen, S. Action is All You Need: Dual-Flow Generative Ranking Network for Recommendation, 2025, [arXiv:cs.IR/2505.16752].

- Zhou, G.; Hu, H.; Cheng, H.; Wang, H.; Deng, J.; Zhang, J.; Cai, K.; Ren, L.; Ren, L.; Yu, L.; et al. OneRec-V2 Technical Report, 2025, [arXiv:cs.IR/2508.20900].

- Wei, Z.; Cai, K.; She, J.; Chen, J.; Chen, M.; Zeng, Y.; Luo, Q.; Zeng, W.; Tang, R.; Gai, K.; et al. OneLoc: Geo-Aware Generative Recommender Systems for Local Life Service, 2025, [arXiv:cs.IR/2508.14646].

- Guo, X.; Chen, B.; Wang, S.; Yang, Y.; Lei, C.; Ding, Y.; Li, H. OneSug: The Unified End-to-End Generative Framework for E-commerce Query Suggestion, 2025, [arXiv:cs.IR/2506.06913].

- Wang, Y.; Gan, W.; Xiao, L.; Zhu, J.; Chang, H.; Wang, H.; Zhang, R.; Dong, Z.; Tang, R.; Li, R. Act-With-Think: Chunk Auto-Regressive Modeling for Generative Recommendation, 2025, [arXiv:cs.IR/2506.23643].

- Chen, X.; Rajesh, K.; Lawhon, M.; Wang, Z.; Li, H.; Li, H.; Joshi, S.V.; Eksombatchai, P.; Yang, J.; Hsu, Y.P.; et al. PinFM: Foundation Model for User Activity Sequences at a Billion-scale Visual Discovery Platform. In Proceedings of the Nineteenth ACM Conference on Recommender Systems, 2025, pp. 381–390.

- He, R.; Heldt, L.; Hong, L.; Keshavan, R.; Mao, S.; Mehta, N.; Su, Z.; Tsai, A.; Wang, Y.; Wang, S.C.; et al. PLUM: Adapting Pre-trained Language Models for Industrial-scale Generative Recommendations. arXiv preprint arXiv:2510.07784 2025.

- Liu, Z.; Wang, S.; Wang, X.; Zhang, R.; Deng, J.; Bao, H.; Zhang, J.; Li, W.; Zheng, P.; Wu, X.; et al. OneRec-Think: In-Text Reasoning for Generative Recommendation. arXiv preprint arXiv:2510.11639 2025.

- Oord, A.v.d.; Vinyals, O.; Kavukcuoglu, K. Neural Discrete Representation Learning. In Proceedings of the NeurIPS, 2017.

- Esser, P.; Rombach, R.; Ommer, B. Taming Transformers for High-Resolution Image Synthesis. In Proceedings of the CVPR, 2021.

- Wang, X.; Chen, K.; Zha, Z.J.; Luo, J. Learning Image-Adaptive Codebooks for Class-Agnostic Image Restoration. In Proceedings of the CVPR, 2023.

- Zeghidour, N.; Luebs, A.; Omran, A.; Skoglund, J.; Tagliasacchi, M. SoundStream: An End-to-End Neural Audio Codec. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2021. [CrossRef]

- Jégou, H.; Douze, M.; Schmid, C. Product Quantization for Nearest Neighbor Search. IEEE Transactions on Pattern Analysis and Machine Intelligence 2011, 33, 117–128. [CrossRef]

- Ju, C.M.; Collins, L.; Neves, L.; Kumar, B.; Wang, L.Y.; Zhao, T.; Shah, N. Generative Recommendation with Semantic IDs: A Practitioner’s Handbook, 2025, [arXiv:cs.IR/2507.22224].

- Rafailov, R.; Sharma, A.; Mitchell, E.; Ermon, S.; Manning, C.D.; Finn, C. Direct Preference Optimization: Your Language Model is Secretly a Reward Model. arXiv preprint arXiv:2305.18290 2023.

- Xiong, W.; Dong, H.; Ye, C.; Wang, Z.; Zhong, H.; Ji, H.; Jiang, N.; Zhang, T. Iterative Preference Learning from Human Feedback: Bridging Theory and Practice for RLHF under KL-constraint. In Proceedings of the 41st International Conference on Machine Learning (ICML/PMLR), 2024, pp. 54715–54754.

- OpenAI. GPT-4 Technical Report. https://openai.com/research/gpt-4, 2023. Accessed: 2025-08-04.

- Kang, W.C.; McAuley, J. Self-Attentive Sequential Recommendation, 2018, [arXiv:cs.IR/1808.09781].

- Ivchenko, D.; Van Der Staay, D.; Taylor, C.; Liu, X.; Feng, W.; Kindi, R.; Sudarshan, A.; Sefati, S. TorchRec: a PyTorch Domain Library for Recommendation Systems. In Proceedings of the 16th ACM Conference on Recommender Systems, New York, NY, USA, 2022; RecSys ’22, pp. 482–483. [CrossRef]

- Cai, H.; Li, Y.; Yuan, R.; Wang, W.; Zhang, Z.; Li, W.; Chua, T.S. Exploring Training and Inference Scaling Laws in Generative Retrieval. In Proceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval, New York, NY, USA, 2025; SIGIR ’25, pp. 1339–1349. [CrossRef]

- Lin, X.; Yang, C.; Wang, W.; Li, Y.; Du, C.; Feng, F.; Ng, S.K.; Chua, T.S. Efficient Inference for Large Language Model-based Generative Recommendation. In Proceedings of the 13th International Conference on Learning Representations (ICLR), 2025.

- Hou, Y.; Li, J.; He, Z.; Yan, A.; Chen, X.; McAuley, J. Bridging Language and Items for Retrieval and Recommendation. arXiv preprint arXiv:2403.03952 2024.

- Liu, Q.; Zheng, K.; Huang, R.; Li, W.; Cai, K.; Chai, Y.; Niu, Y.; Hui, Y.; Han, B.; Mou, N.; et al. RecFlow: An Industrial Full Flow Recommendation Dataset. arXiv preprint arXiv:2410.20868 2024.

- Sun, Z.; Si, Z.; Zang, X.; Leng, D.; Niu, Y.; Song, Y.; Zhang, X.; Xu, J. KuaiSAR: A Unified Search And Recommendation Dataset. In Proceedings of the 32nd ACM International Conference on Information and Knowledge Management, New York, NY, USA, 2023; CIKM ’23, pp. 5407–5411. [CrossRef]

- Jain, R.; Bhasi, V.M.; Jog, A.; Sivasubramaniam, A.; Kandemir, M.T.; Das, C.R. Pushing the Performance Envelope of DNN-based Recommendation Systems Inference on GPUs. In Proceedings of the 2024 57th IEEE/ACM International Symposium on Microarchitecture (MICRO). IEEE, 2024, pp. 1217–1232.

- Jiang, C.; Wang, J.; Ma, W.; Clarke, C.L.; Wang, S.; Wu, C.; Zhang, M. Beyond Utility: Evaluating LLM as Recommender. In Proceedings of the ACM on Web Conference 2025, 2025, pp. 3850–3862.

- Shevchenko, V.; Belousov, N.; Vasilev, A.; Zholobov, V.; Sosedka, A.; Semenova, N.; Volodkevich, A.; Savchenko, A.; Zaytsev, A. From Variability to Stability: Advancing RecSys Benchmarking Practices. arXiv preprint 2024.

- Geng, S.; Liu, S.; Fu, Z.; Ge, Y.; Zhang, Y. Recommendation as Language Processing (RLP): A Unified Pretrain, Personalized Prompt & Predict Paradigm (P5). arXiv preprint arXiv:2203.13366 2022.

- Cui, Z.; Ma, J.; Zhou, C.; Zhou, J.; Yang, H. M6-Rec: Generative Pretrained Language Models are Open-Ended Recommender Systems. arXiv preprint arXiv:2205.08084 2022.

| | DLRM | GR |
|---|---|---|
| Model | Uses pointwise samples. The model structure emphasizes a large cross-module to combine user and item features. | Uses listwise samples. The model focuses on the User module to learn long-term behavioral patterns. |
| Training | Each exposure corresponds to one sample; repeated user interactions lead to redundant user feature computation. Negative sampling is required to reduce training cost. | Each exposure is treated as a token; all exposures from a user are packed into a single sequence sample. User features are computed once. Negative sampling is not required, and the cost of full-data training is negligible. |
| Inference | Inference targets differ across services, and the large Cross module must often be recomputed per request, leading to low computational reusability. | Cross module is computed independently per candidate, while User module computation is shared across candidates. KV cache can be applied to reduce attention costs across requests, improving throughput and enabling large-scale deployment. |
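The Training row of the comparison above can be made concrete with a small sketch. It contrasts one-sample-per-exposure data with per-user packed sequences and counts how often user features would have to be computed in each layout. The exposure log, field names, and helper functions are hypothetical and chosen only for illustration; real systems add truncation, padding, and batching that are omitted here.

```python
from collections import defaultdict

# Hypothetical exposure log: (user_id, item_id, clicked). Values are illustrative only.
exposures = [
    ("u1", "i1", 1),
    ("u1", "i2", 0),
    ("u2", "i3", 1),
    ("u1", "i4", 1),
    ("u2", "i5", 0),
]

def pointwise_samples(log):
    """DLRM-style layout: one training sample per exposure."""
    return [{"user": u, "item": i, "label": y} for u, i, y in log]

def packed_sequences(log):
    """GR-style layout: each exposure is a token in a single sequence per user."""
    sequences = defaultdict(list)
    for u, i, y in log:
        sequences[u].append((i, y))
    return dict(sequences)

pointwise = pointwise_samples(exposures)
packed = packed_sequences(exposures)

# User features are needed once per sample in each layout.
user_feature_passes_pointwise = len(pointwise)  # once per exposure
user_feature_passes_packed = len(packed)        # once per user sequence

print(user_feature_passes_pointwise)  # 5
print(user_feature_passes_packed)     # 2
print(packed["u1"])                   # [('i1', 1), ('i2', 0), ('i4', 1)]
```

In this toy log the packed layout touches user features twice instead of five times, and the saving grows with the number of exposures per user.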

| Paper | Venue | Model Type | Scope | Representation Space | Company |
|---|---|---|---|---|---|
| TIGER [11] | NeurIPS 2023 | Encoder-decoder | Retrieval | SID | |
| LC Rec [25] | ICDE 2024 | Encoder only | End-to-end | SID | Tencent |
| HSTU [22] | ICML 2024 | Decoder only | Ranking/Retrieval | Non-SID | Meta |
| QARM [28] | arXiv 2024 | Encoder-decoder | End-to-end | SID | Kuaishou |
| GenSAR [15] | arXiv 2025 | Encoder-decoder | Retrieval | SID | Kuaishou |
| LEARN [29] | AAAI 2025 | Encoder only | Ranking | Non-SID | Kuaishou |
| HLLM [30] | RecSys 2025 | Encoder only | Ranking/Retrieval | Non-SID | ByteDance |
| LUM [31] | arXiv 2025 | Encoder only | End-to-end | Non-SID | Alibaba |
| LIGER [20] | TMLR 2025 | Encoder-decoder | Retrieval | SID | Meta |
| GRAM [12] | WWW 2025 | Decoder only | Retrieval | Non-SID | JD.com |
| COBRA [21] | arXiv 2025 | Decoder only | Retrieval | SID | Baidu |
| PinRec [32] | arXiv 2025 | Decoder only | Retrieval | Non-SID | |
| Pantheon [33] | CoRR 2025 | Encoder-decoder | Reranking | Non-SID | Kuaishou |
| TBGRecall [34] | arXiv 2025 | Decoder only | Retrieval | Non-SID | Taobao |
| REG4Rec [35] | arXiv 2025 | Encoder-decoder | Retrieval | Non-SID | Alibaba |
| NAR4Rec [13] | KDD 2024 | Decoder only | Ranking | Non-SID | Kuaishou |
| GenRank [36] | arXiv 2025 | Decoder only | Reranking | Non-SID | Xiaohongshu |
| MTGR [24] | CIKM 2025 | Decoder only | Ranking | SID | Meituan |
| MTGRBoost [37] | arXiv 2025 | Decoder only | End-to-end | Non-SID | Meituan |
| SORT-Gen [38] | SIGIR 2025 | Decoder only | (Re)Ranking | Non-SID | Taobao |
| GenCTR [39] | arXiv 2025 | Decoder only | Ranking | Non-SID | JD.com |
| DFGR [40] | arXiv 2025 | Decoder only | Ranking | Non-SID | Meituan |
| OneRec [16] | arXiv 2025 | Encoder-decoder | End-to-end | SID | Kuaishou |
| OneRec-V2 [41] | arXiv 2025 | Decoder only | End-to-end | SID | Kuaishou |
| EGA-V1 [3] | arXiv 2025 | Encoder-decoder | End-to-end | Non-SID | Meituan |
| EGA-V2 [23] | CIKM 2025 | Encoder-decoder | End-to-end | Non-SID | Meituan |
| OneLoc [42] | arXiv 2025 | Encoder-decoder | End-to-end | SID | Kuaishou |
| OneSearch [27] | arXiv 2025 | Encoder-decoder | End-to-end | SID | Kuaishou |
| OneSug [43] | arXiv 2025 | Encoder-decoder | Query suggestion | SID | Kuaishou |
| CAR [44] | arXiv 2025 | Decoder only | Retrieval | SID | Huawei |
| PinFM [45] | RecSys 2025 | Encoder only | Retrieval | Non-SID | |
| PLUM [46] | arXiv 2025 | Encoder-decoder | End-to-end | SID | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).