6. Abduction, counter-abduction, and confirmation strength

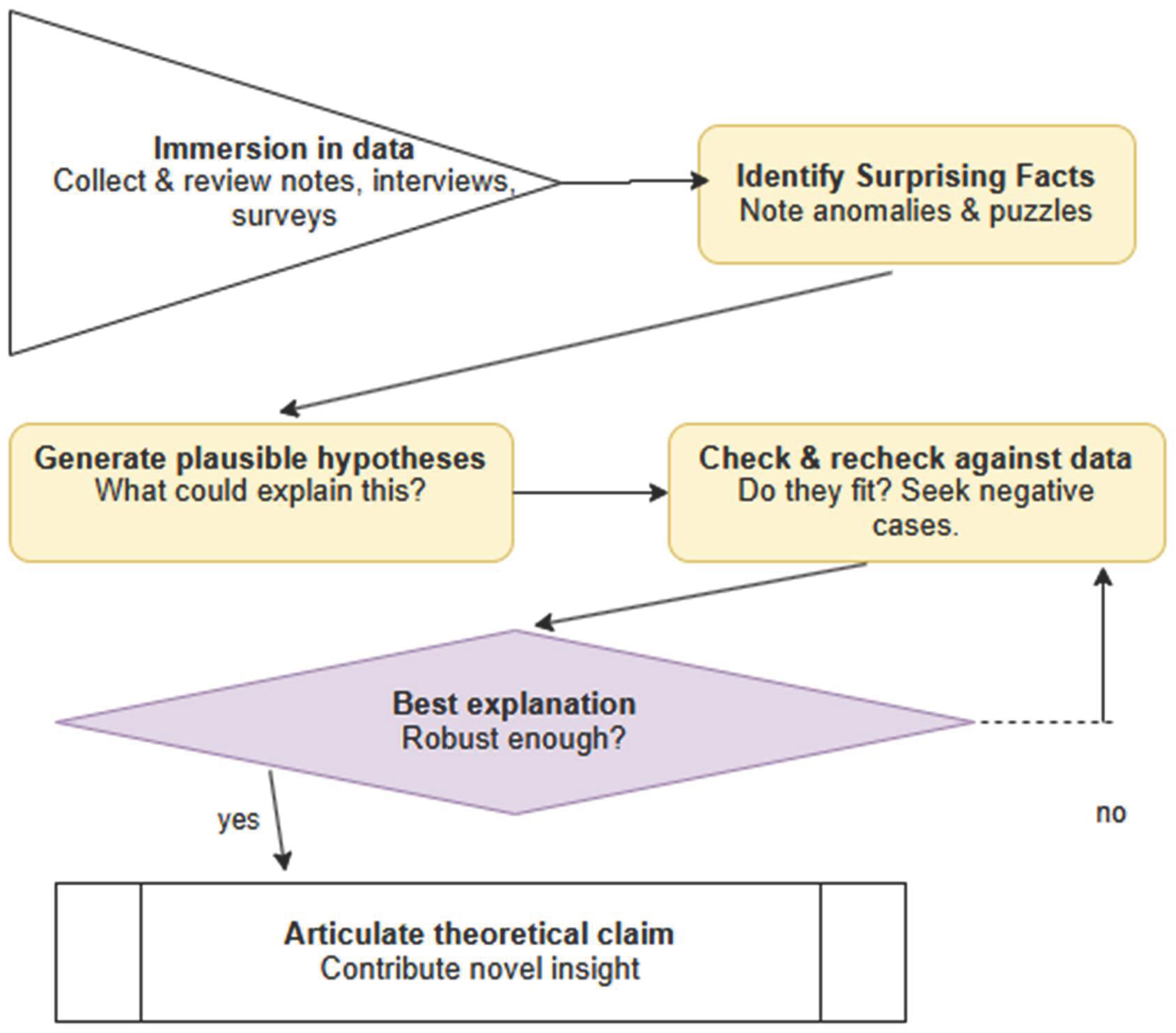

The role of counter-abduction in neuro-symbolic reasoning is best understood by tracing its origins to classical accounts of abductive inference and modern theories of confirmation. Abduction, originally formulated by Charles Sanders Peirce (1878; 1903), denotes the inferential move in which a reasoner proposes a hypothesis H that, if true, would render a surprising observation E intelligible. Peirce emphasized that abduction is neither deductively valid nor inductively warranted; its justification lies in explanatory plausibility rather than certainty. Subsequent philosophers of science, including Harman (1965) and Lipton (2004), elaborated abduction as “inference to the best explanation”—a process by which agents preferentially select hypotheses that most effectively make sense of the evidence.

However, in both human and machine reasoning, the first abductive hypothesis is often not the most reliable. This motivates the introduction of counter-abduction, a concept developed implicitly in sociological methodology (Timmermans & Tavory 2012; Tavory & Timmermans 2014) and more formally in abductive logic programming (Kakas, Kowalski & Toni 1992). Counter-abduction refers to the generation of alternative hypotheses that likewise explain the evidence, thereby challenging the primacy of the initial explanation. For example, while an explosion may abductively explain a loud bang and visible smoke, counter-abductive alternatives—such as a car backfire combined with smoke from a barbecue—demonstrate that multiple explanations can account for the same phenomena (Haig 2005; Haig 2014).

To evaluate these competing hypotheses, the framework draws on confirmation theory, which provides probabilistic and logical tools for assessing evidential support (Carnap 1962; Earman 1992). In Bayesian terms, evidence E confirms hypothesis H if it increases its probability, i.e., if P(H∣E)>P(H). Probability-increase measures such as d(H,E)=P(H∣E)−P(H) and ratio-based measures such as r(H,E)=P(H∣E)/P(H) quantify the extent of confirmation (Crupi, Tentori & González 2007). Likelihood-based measures, including the likelihood ratio P(E∣H)/P(E∣¬H), further assess how much more expected the evidence is under the hypothesis than under alternatives (Hacking 1965). These tools allow structured comparison of hypotheses {H1, H2,… } generated via abduction and counter-abduction.
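The measures above can be computed directly once the relevant probabilities are available. The following is a minimal sketch; the function names and the numbers in the usage lines are illustrative assumptions, not values from the framework.

```python
def posterior(p_h: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """P(H|E) via Bayes' rule for a binary hypothesis H."""
    p_e = p_e_given_h * p_h + p_e_given_not_h * (1.0 - p_h)
    return p_e_given_h * p_h / p_e


def difference_measure(p_h_given_e: float, p_h: float) -> float:
    """d(H,E) = P(H|E) - P(H); positive when E confirms H."""
    return p_h_given_e - p_h


def ratio_measure(p_h_given_e: float, p_h: float) -> float:
    """r(H,E) = P(H|E) / P(H); greater than 1 when E confirms H."""
    return p_h_given_e / p_h


def likelihood_ratio(p_e_given_h: float, p_e_given_not_h: float) -> float:
    """P(E|H) / P(E|not H); how much more expected E is under H."""
    return p_e_given_h / p_e_given_not_h


# Illustrative numbers only (assumed): H = "it rained", E = "the grass is wet".
p_h = 0.2
post = posterior(p_h, p_e_given_h=0.9, p_e_given_not_h=0.1)
print(difference_measure(post, p_h), ratio_measure(post, p_h), likelihood_ratio(0.9, 0.1))
```

Computing the same three values for each counter-abductive alternative gives a common scale on which the hypotheses can be ranked.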

Cross-domain examples illustrate how this comparison unfolds. Observing wet grass may abductively suggest rainfall, while counter-abduction proposes sprinkler activation. Background information such as weather priors or irrigation schedules feeds the confirmation metrics that determine which explanation is better supported. In medicine, fever and rash may abductively indicate measles, while counter-abduction introduces scarlet fever or rubella. Prevalence, symptom specificity, and conditional likelihoods (Gillies 1991; Lipton 2004) allow systematic ranking of hypotheses. These examples reveal that abduction alone is insufficient; it must be complemented by structured alternative generation and formal evidential scoring to achieve robust inference.

The abductive–counter-abductive process naturally adopts a dialogical structure (Dung 1995; Prakken & Vreeswijk 2002). Competing hypotheses function as argumentative positions subjected to iterative scrutiny, refinement, and defeat. Dialogue is the mechanism through which hypotheses confront counterarguments, are evaluated using confirmation metrics, and are revised or abandoned. Such adversarial exchange mirrors the epistemic practices of scientific communities, legal proceedings, clinical differential diagnosis, and multi-agent AI reasoning systems (Haig 2014; Timmermans & Tavory 2012).

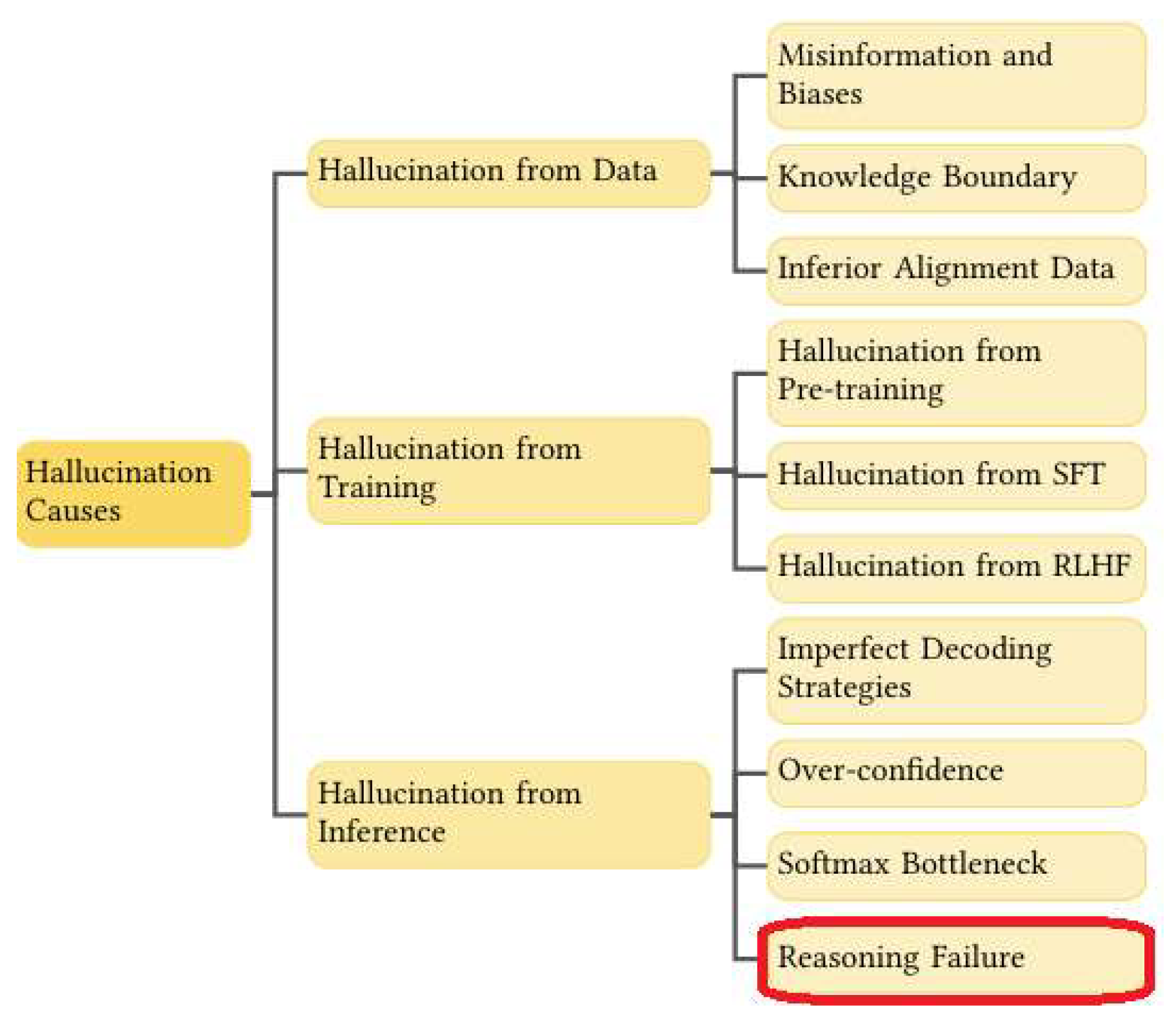

Nevertheless, challenges persist. Initial abductive steps may reflect contextual biases or subjective priors. Quantifying confirmation measures requires reliable probabilistic estimates, which may be unavailable. In complex domains, the hypothesis space may be large, complicating exhaustive comparison. Moreover, confirmation strengths must be dynamically updated as new evidence emerges (Earman 1992). Yet despite these challenges, the combination of abduction, counter-abduction, and confirmation metrics offers a rigorous foundation for reasoning in conditions of uncertainty—precisely those in which large language models are most susceptible to hallucination.

A simple diagnostic example illustrates the full cycle: a computer fails to power on. Abduction suggests a faulty power supply; counter-abduction proposes an unplugged cable or damaged motherboard. Prior probabilities and likelihoods (e.g., frequency of cable issues) inform confirmation scores. Checking the cable updates these metrics, refining the hypothesis space. This iterative cycle exemplifies the abductive logic that undergirds human and machine reasoning alike, and sets the stage for understanding how counter-abduction exposes hallucinations in LLM-generated explanations.
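The cycle in this example can be sketched as a Bayesian update over the competing hypotheses. The priors and likelihoods below are invented for illustration, and `bayes_update` is a hypothetical helper name.

```python
from __future__ import annotations


def bayes_update(priors: dict[str, float], likelihoods: dict[str, float]) -> dict[str, float]:
    """Return posterior probabilities given per-hypothesis likelihoods P(E|H)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError("the evidence is impossible under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Assumed priors for "the computer fails to power on".
priors = {
    "faulty_power_supply": 0.3,
    "unplugged_cable": 0.5,
    "damaged_motherboard": 0.2,
}

# New evidence: the cable was checked and found plugged in.
# Assumed likelihoods of that finding under each hypothesis.
cable_is_plugged_in = {
    "faulty_power_supply": 1.0,
    "unplugged_cable": 0.0,
    "damaged_motherboard": 1.0,
}

posteriors = bayes_update(priors, cable_is_plugged_in)
for hypothesis, probability in sorted(posteriors.items(), key=lambda kv: -kv[1]):
    print(f"{hypothesis}: {probability:.2f}")
```

Each further check (for example, swapping the power supply) would be another call to `bayes_update` with the previous posteriors as the new priors.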

The next section will demonstrate how this classical abductive framework becomes a core mechanism for hallucination detection and correction in neuro-symbolic CoT reasoning.

6.1. Counter-abduction and information gain

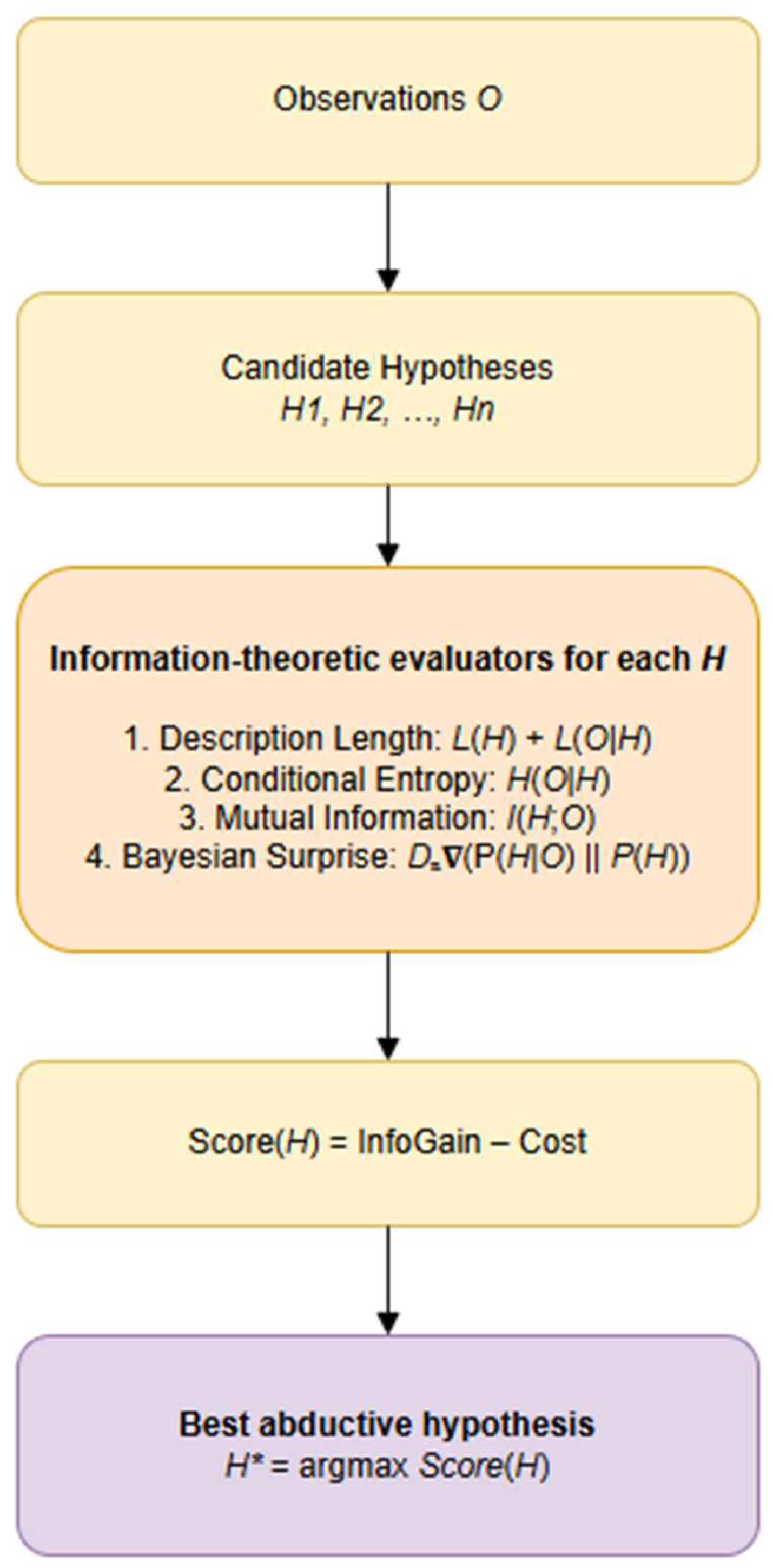

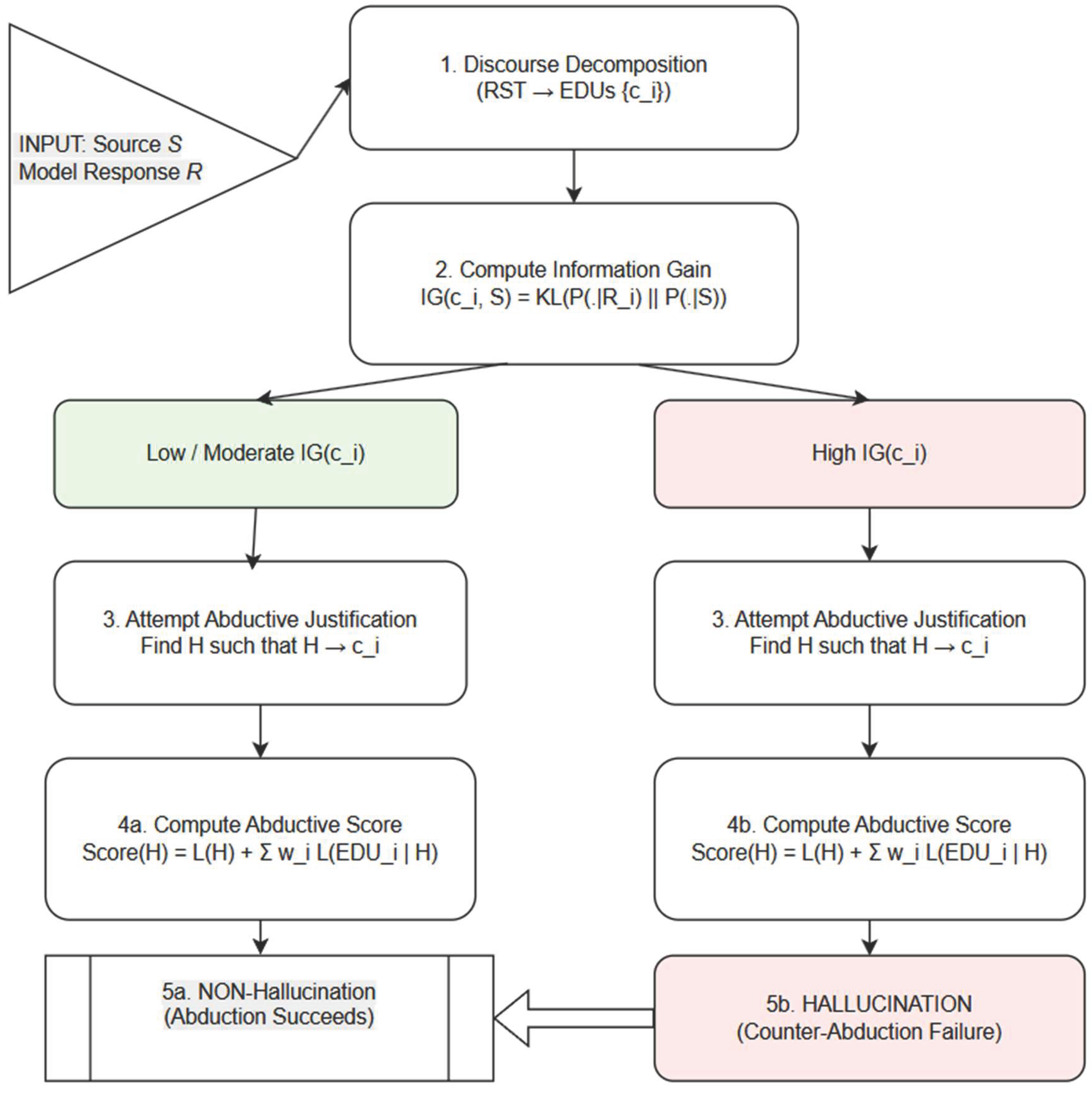

While abduction identifies hypotheses that best explain an observation, counter-abduction addresses the complementary problem: determining when a candidate explanation should not be accepted because it introduces excessive uncertainty, complexity, or informational divergence. If abduction seeks “the simplest hypothesis that makes the observation unsurprising,” counter-abduction identifies cases where no reasonable hypothesis can make the observation sufficiently unsurprising without incurring prohibitive explanatory cost. This mechanism plays a crucial role in hallucination detection, particularly in generative models where plausible-sounding but unsupported claims frequently arise.

Information theory provides a natural mathematical foundation for counter-abduction. A claim is counter-abducted—that is, rejected as a viable explanation—when incorporating it into the hypothesis space results in a net increase in informational cost relative to the explanatory benefit it provides.

Counter-abduction occurs when every possible H that supports the claim produces a score larger than the score obtained by explaining the observation without the claim. In such cases, adopting the explanatory hypothesis increases overall bit-cost and therefore violates abductive optimality.

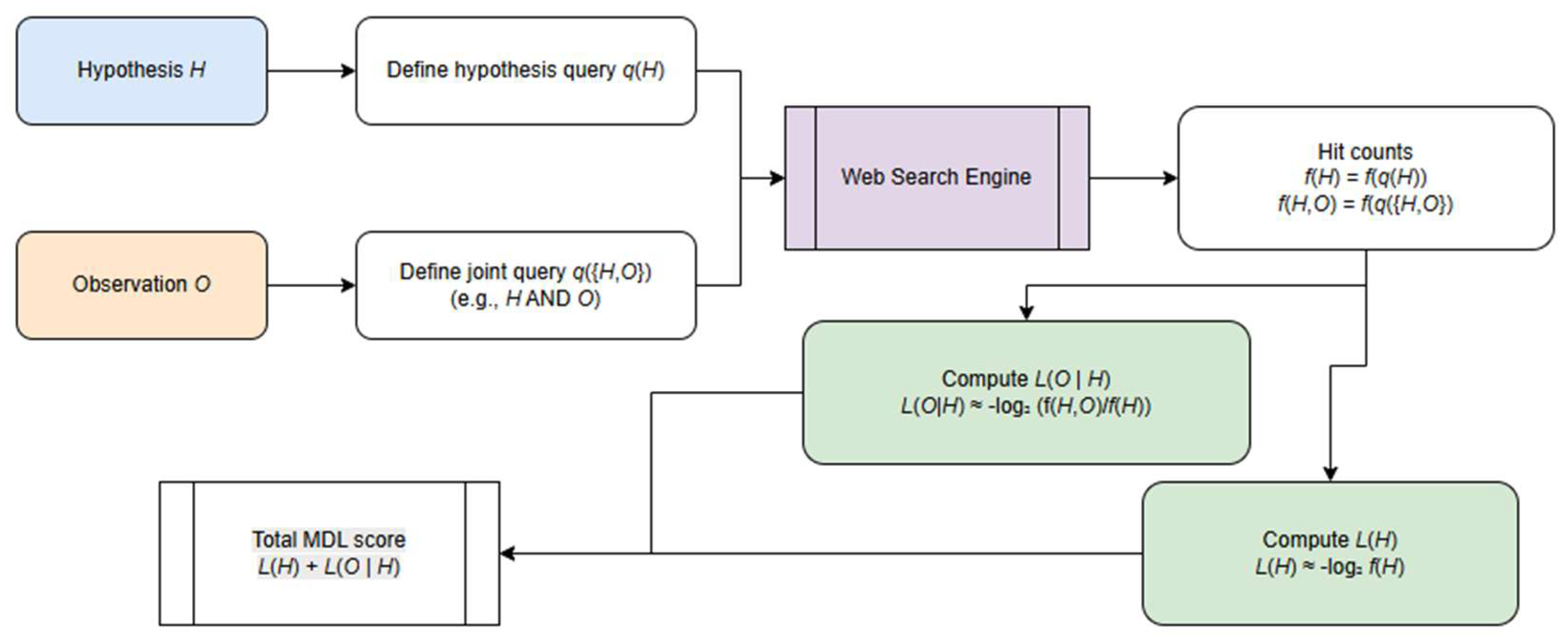

This evaluation can be expressed in terms of IG. For an observation O and a response-generated claim c, IG measures the divergence between the distribution over world states conditioned on the source and the distribution conditioned on the response (formula (1)):

A claim with high information gain significantly shifts the system’s belief state away from what the source supports. Counter-abduction leverages this: if the claim’s IG cannot be reduced through any admissible hypothesis H (i.e., L(EDUi∣H) remains high, or L(H) grows excessively), the system concludes that the claim is not abductively repairable. In other words, the claim’s informational “cost” outweighs the benefits of explanatory consistency, and it is rejected as a hallucination.
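As a rough illustration of how such a divergence could be computed, the sketch below assumes a finite set of world states and takes the divergence to be Kullback-Leibler divergence from the source-conditioned distribution to the response-conditioned one. Both the choice of KL and its direction are assumptions made for this example, as are the function and variable names.

```python
from __future__ import annotations

import math


def information_gain(
    p_response: dict[str, float],
    p_source: dict[str, float],
    eps: float = 1e-12,
) -> float:
    """KL(P(.|R=c) || P(.|S)) in bits over a shared discrete set of world states.

    `eps` floors source probabilities so that states the source rules out
    yield a large but finite divergence instead of a division by zero.
    """
    gain = 0.0
    for state, p in p_response.items():
        if p > 0.0:
            q = max(p_source.get(state, 0.0), eps)
            gain += p * math.log2(p / q)
    return gain


# The source leaves two world states equally open; the claim commits to one.
source = {"w1": 0.5, "w2": 0.5}
claim = {"w1": 1.0, "w2": 0.0}
print(information_gain(claim, source))
```

A claim that leaves the distribution unchanged has zero gain under this sketch, while a claim that concentrates mass on states the source considers unlikely has high gain.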

Thus, counter-abduction is the abductive analogue of falsification: it identifies claims that cannot be integrated into the reasoning system without violating principles of informational economy. Combining counter-abduction with IG results in a two-sided evaluation: abduction selects explanations that minimize informational surprise, while counter-abduction detects claims whose informational divergence cannot be justified even by creating new hypotheses. This dual mechanism is essential for robust hallucination detection, especially in generative models that often produce coherent but abductively unsupported statements.

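Let c be a claim generated by a model, and let ℋ denote the space of admissible abductive hypotheses. For each H∈ℋ we evaluate the discourse-aware information-theoretic score Score(H), which charges for the description length L(H) of the hypothesis and for the cost L(EDUi∣H) of encoding each elementary discourse unit under H.

We define the baseline score for explaining the source-supported content (i.e., without endorsing claim c):

$$\mathrm{Score}_0 = \min_{H \in \mathcal{H}} \mathrm{Score}(H)$$

Let ℋ(c)⊆ℋ be the subset of hypotheses that support claim c, meaning c is entailed or rendered probabilistically unsurprising under H. Then the best explanation for the discourse including the claim is:

$$H^{*}_{c} = \arg\min_{H \in \mathcal{H}(c)} \mathrm{Score}(H)$$

A claim c exhibits counter-abductive failure if:

$$\mathrm{Score}(H) > \mathrm{Score}_0 \qquad (***)$$

and this inequality holds strictly for all H∈ℋ(c).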

Intuitively, a claim fails abductively when no admissible hypothesis can incorporate it without increasing the total informational cost relative to the best explanation that excludes it.
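This criterion can be sketched as a comparison of scores. The sketch assumes each admissible hypothesis has already been assigned a numeric cost in bits (lower is better); the function name and the treatment of an empty supporting set are assumptions of this example.

```python
from __future__ import annotations


def counter_abductive_failure(baseline_score: float, supporting_scores: list[float]) -> bool:
    """True when no claim-supporting hypothesis matches or beats the baseline.

    baseline_score:    cost of the best explanation that excludes the claim.
    supporting_scores: costs of the admissible hypotheses that support the claim.
    """
    if not supporting_scores:
        # Assumption: with no admissible supporting hypothesis, the claim
        # cannot be abductively repaired at all.
        return True
    return min(supporting_scores) > baseline_score


# Every supporting hypothesis costs more than the baseline: the claim fails.
print(counter_abductive_failure(10.0, [12.5, 11.0]))
# One supporting hypothesis is cheaper than the baseline: abduction succeeds.
print(counter_abductive_failure(10.0, [9.5, 14.0]))
```

Checking only the minimum is enough, since the strict inequality holds for every supporting hypothesis exactly when it holds for the cheapest one.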

The information-gain interpretation is as follows. Let the claim-conditioned and source-conditioned distributions be P(⋅∣R=c) and P(⋅∣S). Counter-abductive failure corresponds to claims with irreducibly high information gain, the expression (IG) above.

A claim exhibits counter-abductive failure precisely when:

for some threshold τ derived from , meaning the claim’s divergence from the source cannot be reduced by any reasonable hypothesis.

Counter-abductive failure is therefore the formal criterion for hallucination. If there exists a simple, coherent hypothesis that reduces the claim’s informational cost, abduction succeeds. If no such hypothesis exists, and every attempt to justify the claim increases description length, entropy, or divergence, counter-abduction rejects the claim and marks it as hallucinated. This makes counter-abduction the negative counterpart to abductive inference and an essential mechanism for robust hallucination detection.

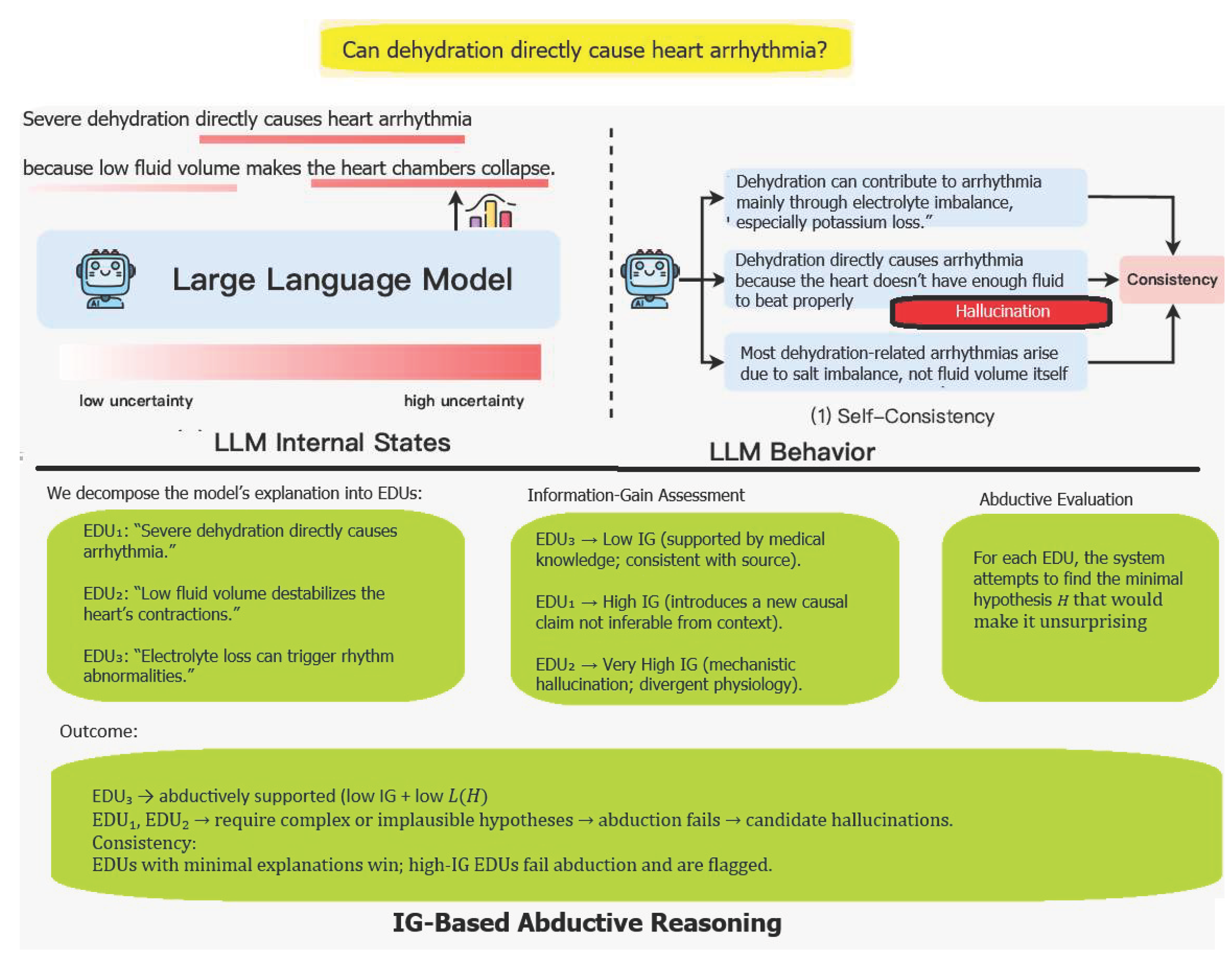

6.2. Counter-abduction for detecting oversimplified explanatory hallucinations

A distinctive class of hallucinations (Huang et al. 2025) addressed in this work concerns situations in which a model generates a claim that appears easily explainable from the given premises, yet the explanation it relies upon is incorrect or excessively superficial. In such cases, the claim itself may well be true, but the inferential route leading to it is flawed. This phenomenon arises when the model identifies a causally appealing but domain-inadequate explanatory shortcut: an abductive leap driven more by intuitive simplicity than by the underlying domain mechanisms.

Consider the common misconception that a gout attack can be caused by walking in cold water. On the surface, the abductive pathway is straightforward: cold exposure → uric acid crystallization → gout flare. This explanation is compact, causally intuitive, and readily generated by an LLM. However, it is medically incorrect. Gout flares depend primarily on systemic urate load, metabolic triggers, dietary factors, and local inflammatory processes; cold exposure may modulate symptoms but is not itself a causal trigger. Thus, while the event (“a gout flare occurred after walking in cold water”) may be true, the explanation is invalid precisely because it is too easy relative to the domain’s real causal structure.

Counter-abduction provides a principled mechanism for identifying such errors. Whereas standard abduction seeks the most plausible explanation consistent with the premises, counter-abduction introduces explicit competition among explanations. The system generates not only a candidate abductive explanation but also alternative counter-explanations that challenge its plausibility. These counter-abductions encode more accurate or more domain-coherent mechanisms for the same phenomenon and thereby serve as defeaters for oversimplified reasoning.

Operationally, counter-abduction proceeds in three steps. First, an abductive explanation is produced for why the claim might hold. Second, the system constructs counter-hypotheses that demonstrate either (a) how the same premises do not support the claim under correct causal interpretation, or (b) how the claim, if true, would more plausibly arise from mechanisms absent from the premises. Third, the abductive explanation is evaluated against these counter-hypotheses. If a counter-abduction offers a better, richer, or more medically grounded account, it defeats the original explanation, indicating that the model relied on an invalid or overly convenient reasoning path.
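The three steps can be sketched as a simple defeat check. The sketch assumes each explanation carries a single numeric `domain_support` score in [0, 1], which stands in for whatever "better, richer, or more grounded" means in a given domain. The class, the function names, and the scores in the gout example are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Explanation:
    mechanism: str
    domain_support: float  # assumed score in [0, 1]; higher means better grounded


def defeaters(
    candidate: Explanation,
    counter_hypotheses: list[Explanation],
    margin: float = 0.0,
) -> list[Explanation]:
    """Step 3: return the counter-hypotheses that beat the candidate by more than `margin`."""
    return [
        h for h in counter_hypotheses
        if h.domain_support > candidate.domain_support + margin
    ]


def is_defeated(candidate: Explanation, counter_hypotheses: list[Explanation]) -> bool:
    return bool(defeaters(candidate, counter_hypotheses))


# Step 1: the abductive explanation produced for the claim (illustrative score).
candidate = Explanation("cold exposure -> uric acid crystallization -> flare", 0.2)
# Step 2: counter-hypotheses grounded in domain mechanisms (illustrative scores).
counters = [
    Explanation("systemic urate load with a dietary trigger", 0.8),
    Explanation("local inflammatory process unrelated to temperature", 0.6),
]
print(is_defeated(candidate, counters))
```

A nonzero `margin` would make the check more conservative, so that a candidate is only defeated by clearly stronger alternatives.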

This defeat relation is central for hallucination detection. Unlike approaches that focus solely on factual contradictions or fabricated content, counter-abduction targets flawed explanatory structures. It allows us to flag answers in which the claim is not the problem—but the justification is. In safety-critical domains such as medicine or law, these explanation-level hallucinations are particularly dangerous, as they may persuade users with coherent yet incorrect causal narratives.

By requiring explanations to withstand competition from counter-explanations, counter-abduction mitigates the tendency of LLMs to prefer low-complexity, heuristically salient causal links. It ensures that abductive reasoning is not accepted merely because it looks plausible but only if it remains valid when confronted with alternative, domain-informed reasoning paths. In doing so, counter-abduction offers a structurally grounded approach for identifying and defeating “too-easy” explanations that underlie a subtle but important form of hallucination.

6.3. Intra-LLM abduction for Retrieval Augmented Generation

Given a natural-language query Q and a retrieved evidence set ℰ={e1,e2,…,en}, a conventional Retrieval Augmented Generation (RAG) pipeline conditions the LLM directly on (Q,ℰ) to generate an answer A. When ℰ is incomplete or exhibits weak discourse agreement, the model may either fail to produce an answer or hallucinate unsupported content. In our framework, abductive reasoning addresses this gap by introducing a hypothesized missing premise p drawn from the space of discourse-weighted abducibles. Abductive completion is thus formalized as identifying a premise p such that

ℰ ∧ p ⊢ A,

where ⊢ denotes entailment under our weighted abductive logic program. Crucially, the premise p is not supplied by the retrieval stage; it must be generated, ranked, and validated through abductive and counter-abductive search over candidate hypotheses.

We first evaluate whether the retrieved evidence set ℰ provides sufficient support for answering Q. A lightweight LLM-based reasoning and rhetoric sufficiency classifier or an NLI model estimates

rhetoric_sufficiency(Q,ℰ)= Pr(supportive∣ Q,ℰ)

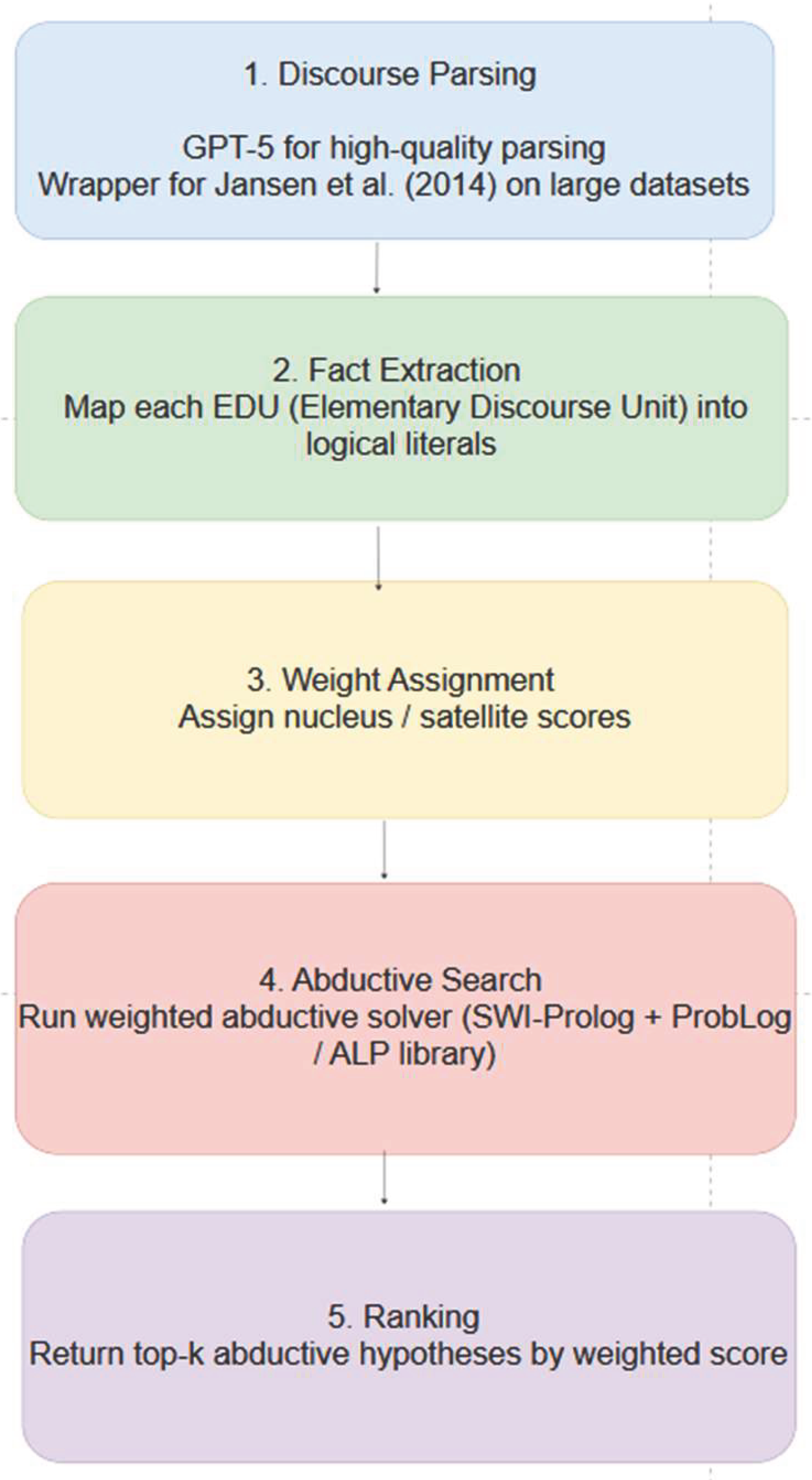

If rhetoric_sufficiency(Q,ℰ)<τ, where τ is a predefined threshold and ℰ is the retrieved evidence set, the system enters the abductive completion stage of our D-ALP pipeline.

We prompt the LLM to generate a set of discourse-compatible abductive hypotheses 𝒫={p1,p2,…,pn} conditioned on the query Q and the evidence set ℰ:

𝒫=LLM(Q,ℰ, “What missing assumption would make the reasoning valid?”).

In the discourse-aware variant, each candidate pi is also assigned a nucleus–satellite weight derived from its rhetorical role, yielding an initial abductive weight wi. To reduce hallucination, we may apply retrieval-augmented prompting, retrieving passages semantically aligned with each candidate premise before evaluation.

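Each candidate premise pi undergoes a two-stage validation procedure grounded in our abductive logic program: a logical entailment check, scored as entail(ℰ, pi), and an empirical support check against retrieved passages, scored as retrieve(pi).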

We compute an overall validation score extending (Lin 2025):

score(pi)=α⋅entail(ℰ, pi)+β⋅retrieve(pi)+γ⋅wi

where wi is the discourse-weight (nucleus/satellite factor) assigned to the hypothesis, and α,β,γ control the contribution of logical entailment, empirical support, and discourse salience. The highest-scoring premise p* is selected.
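The weighted combination and the selection of the highest-scoring premise can be sketched as follows. The entailment, retrieval, and discourse values are assumed to be precomputed numbers in [0, 1]; the default coefficients and the example candidates are illustrative assumptions, not tuned values.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    premise: str
    entail: float    # logical entailment score for the premise
    retrieve: float  # empirical support from retrieved passages
    weight: float    # discourse weight (nucleus/satellite factor)


def validation_score(
    c: Candidate, alpha: float = 0.5, beta: float = 0.3, gamma: float = 0.2
) -> float:
    """score = alpha * entail + beta * retrieve + gamma * weight."""
    return alpha * c.entail + beta * c.retrieve + gamma * c.weight


def select_premise(candidates: list[Candidate], **coefficients: float) -> Candidate:
    """Return the highest-scoring candidate premise."""
    if not candidates:
        raise ValueError("no candidate premises to select from")
    return max(candidates, key=lambda c: validation_score(c, **coefficients))


candidates = [
    Candidate("the patient has elevated serum urate", entail=0.9, retrieve=0.4, weight=1.0),
    Candidate("the patient was exposed to cold", entail=0.6, retrieve=0.9, weight=0.5),
]
print(select_premise(candidates).premise)
```

Raising `beta` relative to `alpha` would favor premises with strong retrieved support over premises that merely complete the logical chain.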

The enriched abductive context (Q, ℰ, p*), consisting of the query, the retrieved evidence set, and the selected premise, is then supplied to the LLM:

Final answer A=LLM(Q, ℰ, p*),

yielding an answer whose justification reflects both retrieved evidence and the abductively inferred missing premise. Combined with counter-abductive filtering, this mechanism mitigates unsupported reasoning chains and substantially reduces hallucination risk.
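The overall control flow of this section can be sketched with the model-dependent parts passed in as callables. The sufficiency classifier, hypothesis generator, scorer, and answer generator are all stand-ins here, the parameter names are hypothetical, and counter-abductive filtering is omitted for brevity.

```python
from __future__ import annotations

from typing import Callable, Optional, Sequence


def answer_with_abduction(
    query: str,
    evidence: Sequence[str],
    sufficiency: Callable[[str, Sequence[str]], float],
    propose: Callable[[str, Sequence[str]], Sequence[str]],
    score: Callable[[str], float],
    generate: Callable[[str, Sequence[str], Optional[str]], str],
    tau: float = 0.5,
) -> str:
    """Answer directly if the evidence suffices; otherwise add the best missing premise."""
    # Sufficiency gate: skip abduction when the evidence already supports an answer.
    if sufficiency(query, evidence) >= tau:
        return generate(query, evidence, None)

    # Abductive completion: generate candidate premises and keep the best one.
    candidates = propose(query, evidence)
    if not candidates:
        # Assumption: with no candidates, fall back to the plain RAG answer.
        return generate(query, evidence, None)
    best_premise = max(candidates, key=score)
    return generate(query, evidence, best_premise)
```

In a real system each callable would wrap an LLM or NLI call; keeping them as parameters makes the control flow testable without a model.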

6.4. Conditional abduction

In the entropy-based account of hallucination detection, a model’s response is evaluated in terms of how sharply it shifts the probability distribution over plausible world states relative to what is supported by the source. High information gain signals that the response introduces content that is not inferable from the given evidence. While this provides a quantitative measure of informational inconsistency, it does not determine whether the new content may nevertheless be justified by a plausible explanatory hypothesis. Integrating computational abduction into the entropy framework provides a principled mechanism for distinguishing between unsupported hallucinations and legitimate abductive extensions.

Within computational reasoning, abduction is best understood as conditional inference: for an observation O, the task is to identify or construct a condition H such that H→O. This perspective aligns naturally with the role of hallucination detection: a model-generated claim is acceptable if (i) its information gain is low, or (ii) it has high information gain but can be abductively justified by a minimal, coherent, computationally valid hypothesis set. The absence of such hypotheses marks a claim as a genuine hallucination.

Three operational classes of abduction contribute differently within the hallucination-detection pipeline:

1. Selective abduction corresponds to classical abductive logic programming: the system selects an existing rule H→O whose consequent matches the claim. In hallucination detection, if a claim c has high information gain but matches the consequent of a known rule in the knowledge base, the antecedent H acts as an abductive justification, reducing the hallucination severity. For example, a model may introduce a fact absent from the source but derivable from domain rules; selective abduction recognizes such cases as legitimate extrapolations rather than hallucinations.

2. Conditional-creative abduction supports hypotheses where the system constructs a new rule linking an existing antecedent to the observed claim. In entropy terms, such claims typically carry moderate IG: they are not fully supported by the source but can be justified by positing a missing causal or definitional dependency. Within the hallucination framework, the rule induction step must be constrained by minimal description length or complexity penalties (e.g., Bayes factors, rule weights, information-theoretic priors). A claim is considered hallucinated if creating such a rule incurs a prohibitive cost relative to the IG introduced by the claim.

3. Propositional-conditional-creative abduction corresponds to the creation of a new proposition H and a new rule H→O. This mechanism is particularly important in open-world or discovery-oriented tasks but poses the greatest risk of hallucination in LLM outputs. Claims of high information gain accompanied by high abductive creation cost—because the antecedent is novel and the rule is invented—are typically classified as hallucinations unless strong structural, ontological, or probabilistic evidence supports the introduction of the new concept. This subtype maps directly onto cases where LLMs fabricate entities, relations, or events (e.g., non-existent persons, impossible chemical reactions).
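The distinction between the three classes can be sketched against a toy knowledge base. The sketch assumes rules are stored as (antecedent, consequent) pairs and that a proposed antecedent is supplied alongside the claim; the names and the example facts are hypothetical.

```python
from __future__ import annotations

from enum import Enum


class AbductionType(Enum):
    SELECTIVE = "selective"
    CONDITIONAL_CREATIVE = "conditional-creative"
    PROPOSITIONAL_CREATIVE = "propositional-conditional-creative"


def classify_abduction(
    claim: str,
    proposed_antecedent: str,
    rules: set[tuple[str, str]],
    propositions: set[str],
) -> AbductionType:
    """Decide which kind of abduction is needed to justify `claim`."""
    # Selective: an existing rule already has the claim as its consequent.
    if any(consequent == claim for _, consequent in rules):
        return AbductionType.SELECTIVE
    # Conditional-creative: the antecedent is known, but the rule must be created.
    if proposed_antecedent in propositions:
        return AbductionType.CONDITIONAL_CREATIVE
    # Otherwise both the antecedent and the rule would have to be invented.
    return AbductionType.PROPOSITIONAL_CREATIVE


rules = {("rain", "wet_grass")}
propositions = {"rain", "sprinkler_on"}
print(classify_abduction("wet_grass", "rain", rules, propositions))
print(classify_abduction("wet_pavement", "rain", rules, propositions))
print(classify_abduction("wet_pavement", "burst_pipe", rules, propositions))
```

The ordering of the checks mirrors the increasing creation cost of the three classes.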

In the abduction-penalized information gain (formula (1)), L(Hc) quantifies the complexity of the hypothesis Hc invoked to justify claim c (selective < conditional-creative < propositional-creative), and λ modulates the strength of the abductive penalty. Claims falling into selective abduction require minimal or no penalty, whereas claims requiring complex or novel hypothesis formation yield large L(Hc), amplifying their effective hallucination score.

This combined measure distinguishes between:

- Faithful claims: low IG, no abductive penalty.
- Legitimate abductive elaborations: high IG, but low L(Hc).
- Speculative abductive leaps: high IG, moderate L(Hc).
- Hallucinations proper: high IG and prohibitively high (or undefined) L(Hc).

In practice, this yields a unified neuro-symbolic verification pipeline: entropy quantifies informational deviation, while abduction evaluates whether a computationally minimal, logically coherent hypothesis could reconcile that deviation with the source. A claim is labeled hallucinated precisely when no such hypothesis exists or when the abductive cost vastly outweighs the informational benefit of allowing the claim.
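The four-way distinction can be sketched as a small decision function. The sketch assumes the penalty enters additively, as IG + λ·L(Hc), and uses arbitrary thresholds; both the additive form and the threshold values are assumptions made for illustration.

```python
from __future__ import annotations

import math
from typing import Optional


def penalized_ig(ig: float, hypothesis_cost: Optional[float], lam: float = 1.0) -> float:
    """Assumed additive form: IG + lambda * L(Hc); infinite when no hypothesis exists."""
    if hypothesis_cost is None:
        return math.inf
    return ig + lam * hypothesis_cost


def classify_claim(
    ig: float,
    hypothesis_cost: Optional[float],
    ig_threshold: float = 1.0,  # assumed boundary between low and high IG (bits)
    low_cost: float = 2.0,      # assumed upper bound for a "low" L(Hc)
    high_cost: float = 8.0,     # assumed lower bound for a "prohibitive" L(Hc)
) -> str:
    if ig <= ig_threshold:
        return "faithful"
    if hypothesis_cost is None or hypothesis_cost > high_cost:
        return "hallucination"
    if hypothesis_cost <= low_cost:
        return "legitimate abductive elaboration"
    return "speculative abductive leap"


print(classify_claim(ig=0.3, hypothesis_cost=0.0))
print(classify_claim(ig=3.0, hypothesis_cost=1.0))
print(classify_claim(ig=3.0, hypothesis_cost=5.0))
print(classify_claim(ig=3.0, hypothesis_cost=None))
```

Passing `None` for the hypothesis cost models the case where L(Hc) is undefined because no admissible hypothesis could be constructed.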