Submitted: 05 December 2025

Posted: 08 December 2025

Abstract

Keywords:

1. Introduction

1.1. Motivation and Problem Statement

1.2. Regulatory Landscape: GDPR and AI Act

1.3. Objectives and Contributions

1.4. Article Structure

2. Background and Related Work

2.1. Explainability, Governance and Compliance

2.2. Existing Frameworks and Their Limitations

2.3. Need for a Unified Technical–Regulatory Mapping

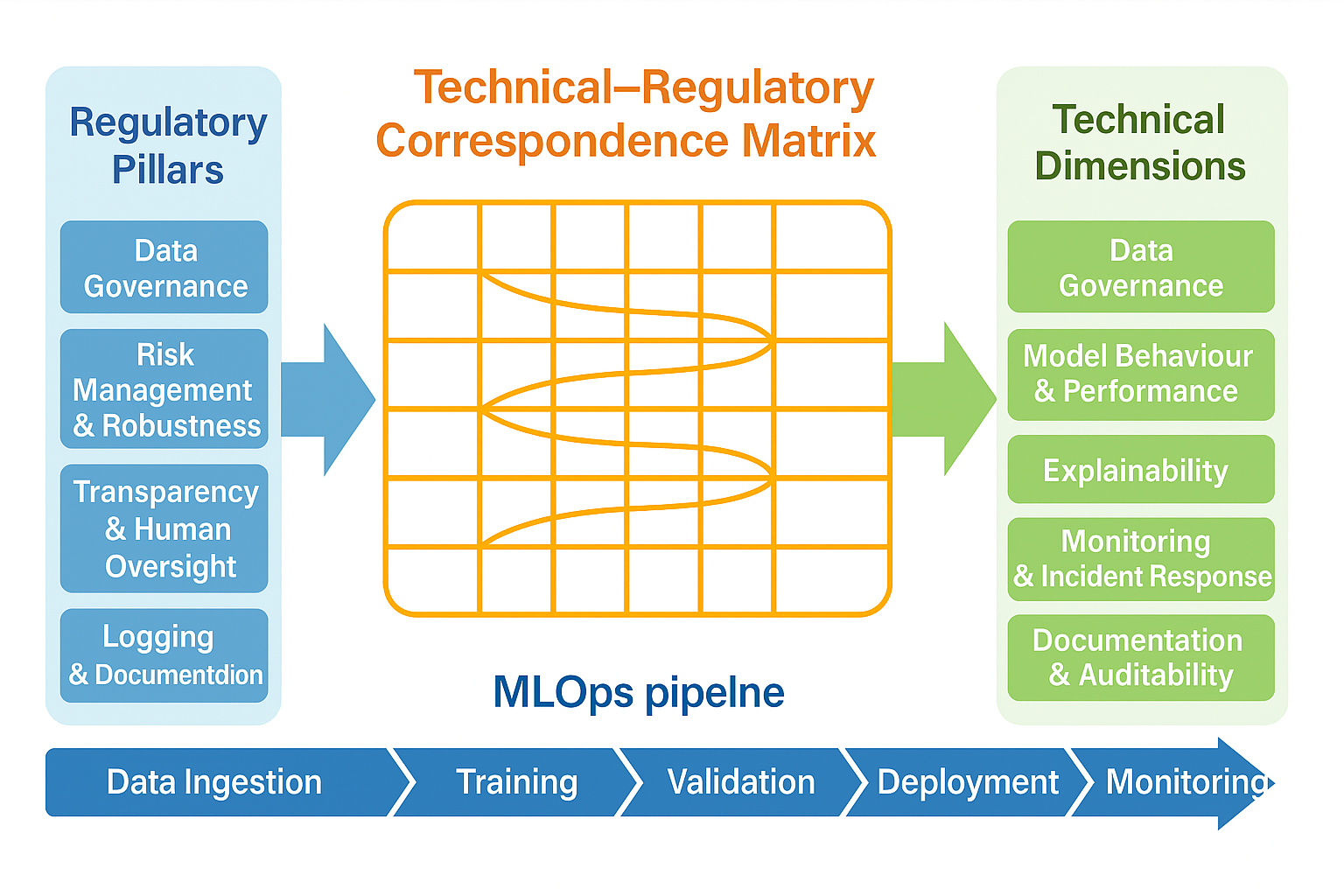

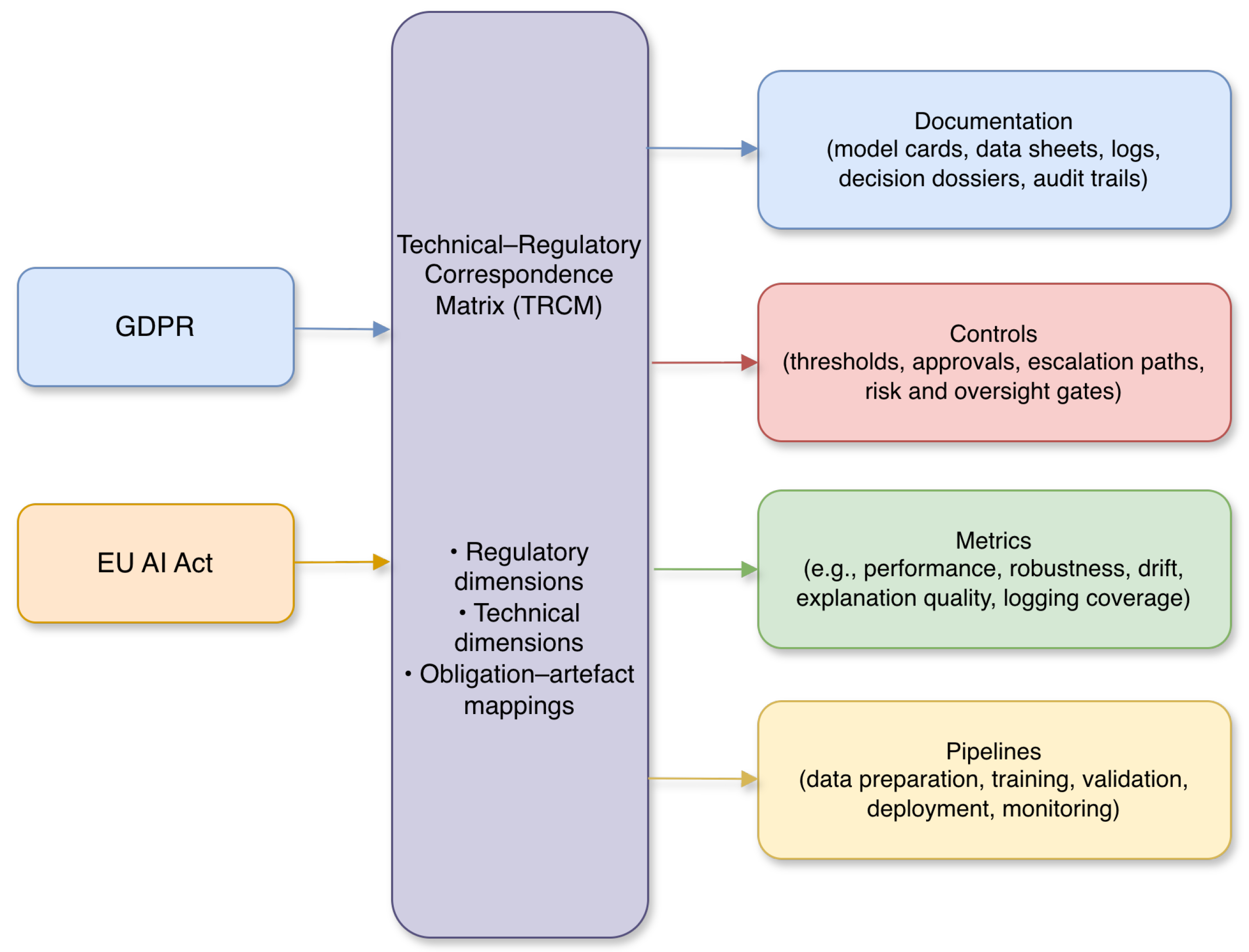

3. The Technical–Regulatory Correspondence Matrix

3.1. Conceptual Overview

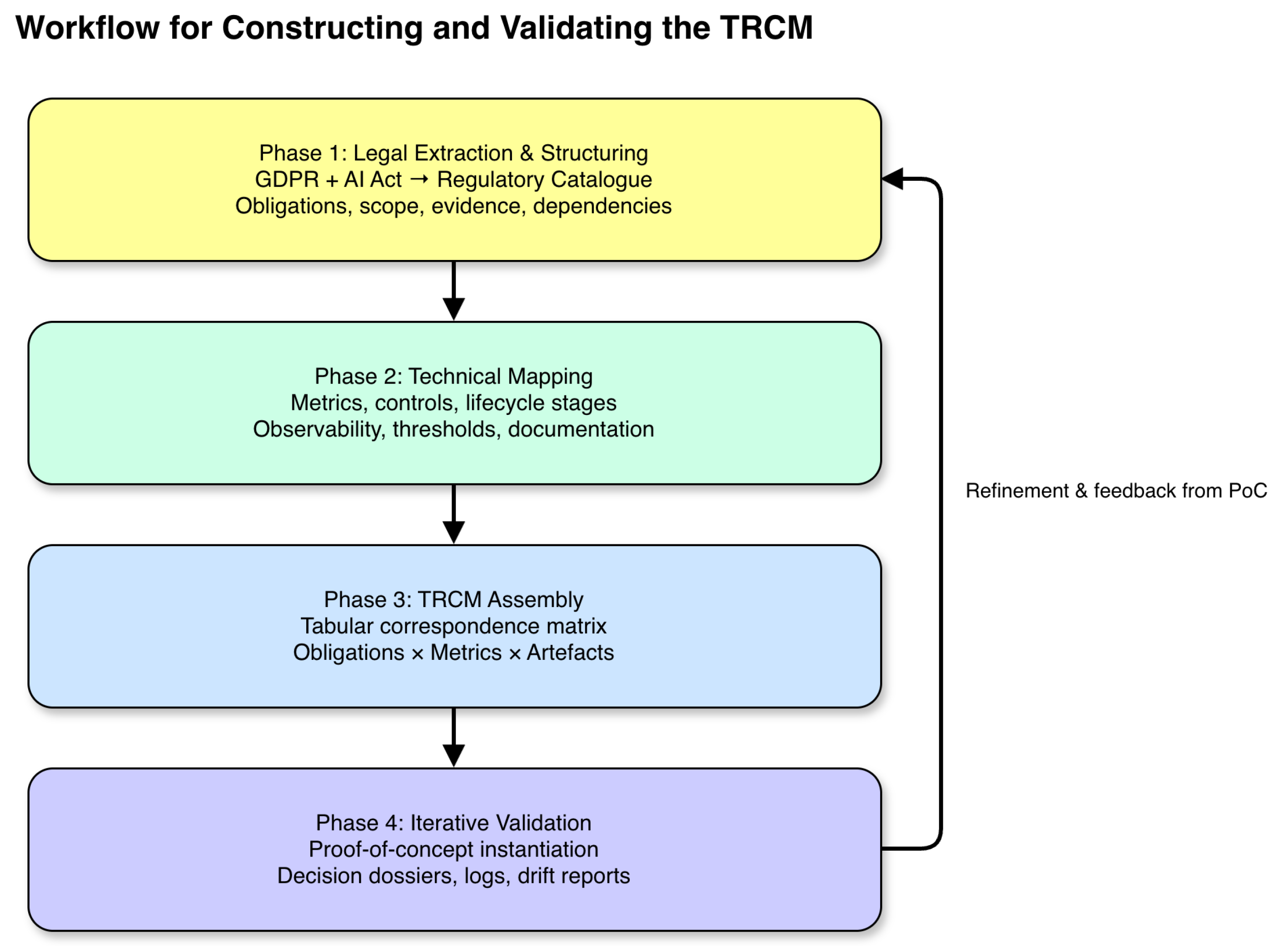

3.2. Matrix Construction Methodology

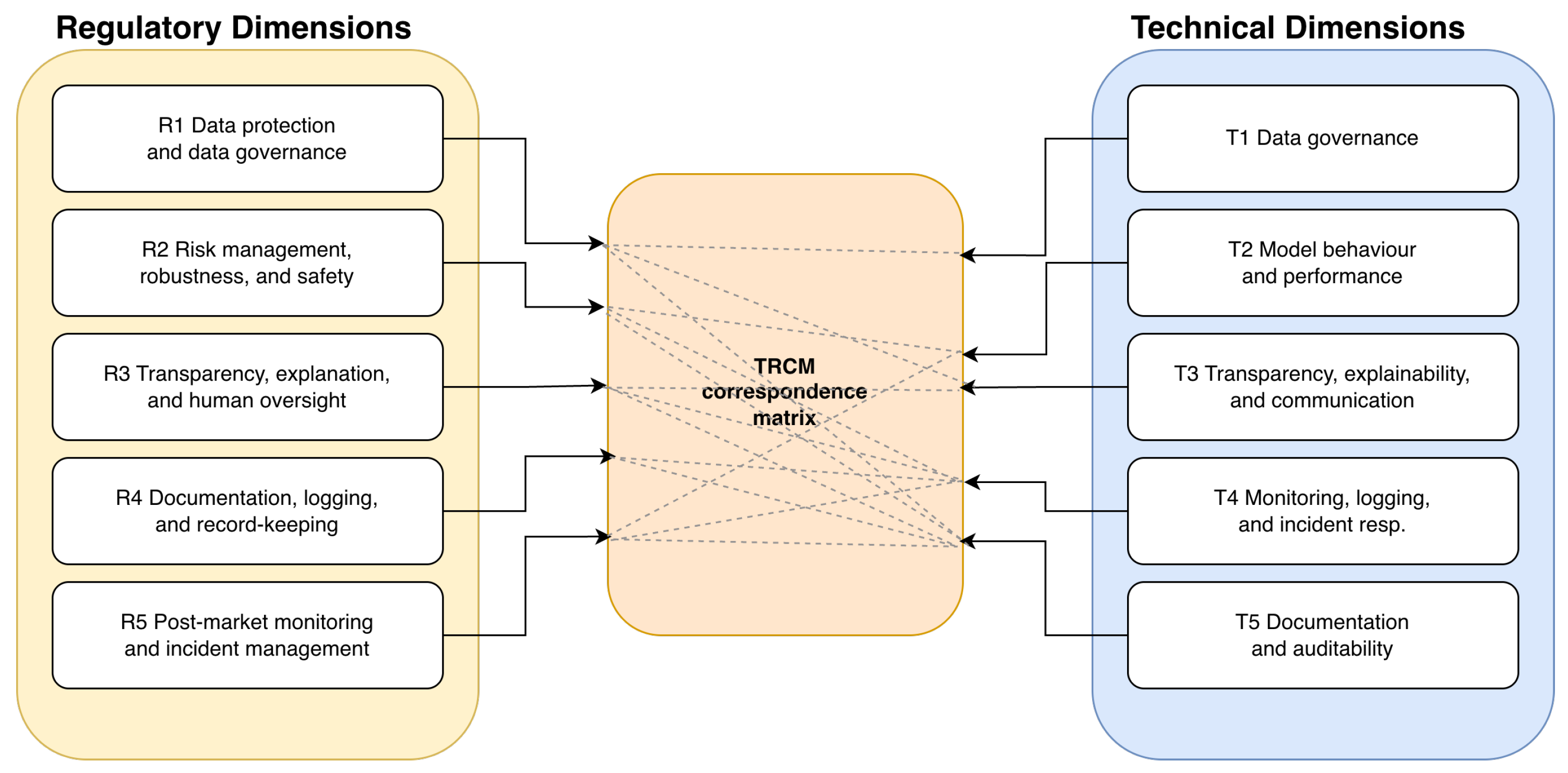

3.3. Regulatory Dimensions

3.4. Technical Dimensions

3.5. Linking Legal and Technical Dimensions

4. Application of the TRCM to High-Risk AI Systems

4.1. Selecting a High-Risk Use Case

4.2. Mapping Technical Controls to Legal Requirements

4.3. Operational Implications

5. Discussion

5.1. Positioning the TRCM in the AI Governance Landscape

5.2. Implications for Engineering Practice and Compliance

5.3. Limitations and Threats to Validity

5.4. Directions for Future Work

6. Conclusion and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Almeida, F.; Calistru, C. Artificial Intelligence and Its Impact on the Public Sector. Administrative Sciences 2021, 11, 75.

2. Taeihagh, A. Governance of artificial intelligence. Policy and Society 2021, 40, 137–157.

3. Rodriguez, J.; Farooq, B. Connecting Algorithmic Transparency and Accountability: A Systematic Review. AI and Ethics 2023, 3, 567–586.

4. Justo-Hanani, R. The politics of Artificial Intelligence regulation and governance reform in the European Union. Policy Sciences 2022.

5. Papagiannidis, E.; Mikalef, P.; Conboy, K. Responsible artificial intelligence governance: A review and research framework. J. Strateg. Inf. Syst. 2025.

6. Rodríguez, N.D.; Ser, J.; Coeckelbergh, M.; de Prado, M.L.; Herrera-Viedma, E.; Herrera, F. Connecting the Dots in Trustworthy Artificial Intelligence: From AI Principles, Ethics, and Key Requirements to Responsible AI Systems and Regulation. Information Fusion 2023, 91, 1–25.

7. Seger, E. In Defence of Principlism in AI Ethics and Governance. Philosophy & Technology 2022, 35, 1–8.

8. Lu, Q.; Zhu, L.; Xu, X.; Whittle, J.; Zowghi, D.; Jacquet, A. Responsible AI Pattern Catalogue: A Collection of Best Practices for AI Governance and Engineering. ACM Computing Surveys 2022, 55, 1–38.

9. Birkstedt, T.; Minkkinen, M.; Tandon, A.; Mäntymäki, M. AI governance: themes, knowledge gaps and future agendas. Internet Research 2023, 33, 135–170.

10. Siala, H.; Wang, Y. SHIFTing artificial intelligence to be responsible in healthcare: A systematic review. Social Science & Medicine 2022, 301, 114927.

11. Nasir, S.; Khan, R.A.; Bai, S. Ethical Framework for Harnessing the Power of AI in Healthcare and Beyond. IEEE Access 2023, 11, 110073–110093.

12. Stogiannos, N.; Malik, R.; Kumar, A.; Barnes, A.; Pogose, M.; Harvey, H.; McEntee, M.; Malamateniou, C. Black box no more: a scoping review of AI governance frameworks to guide procurement and adoption of AI in medical imaging and radiotherapy in the UK. The British Journal of Radiology 2023, 96, 20230744.

13. Cheng, L.; Varshney, K.R.; Liu, H. Socially Responsible AI Algorithms: Issues, Purposes, and Challenges. Journal of Artificial Intelligence Research 2021, 70, 1177–1217.

14. Sharma, S. Benefits or Concerns of AI: A Multistakeholder Responsibility. Futures 2024, 154, 103277.

15. Ntoutsi, E.; Fafalios, P.; Gadiraju, U.; Iosifidis, V.; Nejdl, W.; Vidal, M.E.; Ruggieri, S.; Turini, F.; Papadopoulos, S.; Krasanakis, E.; et al. Bias in data-driven artificial intelligence systems—An introductory survey. Wiley Interdisciplinary Reviews: Data Mining and Knowledge Discovery 2020, 10, e1356.

16. Schwalbe, G.; Finzel, B. A comprehensive taxonomy for explainable artificial intelligence: a systematic survey of surveys on methods and concepts. Data Mining and Knowledge Discovery 2021, 35, 973–1042.

17. Rong, Y.; Leemann, T.; trang Nguyen, T.; Fiedler, L.; Qian, P.; Unhelkar, V.; Seidel, T.; Kasneci, G.; Kasneci, E. Towards Human-Centered Explainable AI: A Survey of User Studies for Model Explanations. IEEE Transactions on Pattern Analysis and Machine Intelligence 2022.

18. Abgrall, G.; Holder, A.L.; Dagdia, Z.C.; Zeitouni, K.; Monnet, X. Should AI models be explainable to clinicians? Critical Care 2024.

19. Martins, T.; Almeida, A.M.D.; Cardoso, E.; Nunes, L. Explainable Artificial Intelligence (XAI): A Systematic Literature Review on Taxonomies and Applications in Finance. IEEE Access 2022.

20. Outeda, C.C. The EU’s AI act: A framework for collaborative governance. Internet of Things 2024, 25, 101949.

21. Li, B.; Qi, P.; Liu, B.; Di, S.; Liu, J.; Pei, J.; Yi, J.; Zhou, B. Trustworthy AI: From Principles to Practices. ACM Computing Surveys 2021, 54, 1–38.

22. Schneider, J.; Abraham, R.; Meske, C.; Brocke, J. Artificial Intelligence Governance For Businesses. Information Systems Management 2020, 37, 314–329.

23. Corrêa, N.; Galvão, C.; Santos, J.; Pino, C.; Pinto, E.P.; Barbosa, C.; Massmann, D.; Mambrini, R.; Galvao, L.; Terem, E. Worldwide AI ethics: A review of 200 guidelines and recommendations for AI governance. Patterns 2022, 3, 100550.

24. Kuziemski, M.; Misuraca, G. AI governance in the public sector: Three tales from the frontiers of automated decision-making in democratic settings. Telecommunications Policy 2020, 44, 101976.

25. Janssen, M.; Brous, P.; Estevez, E.; Barbosa, L.; Janowski, T. Data governance: Organizing data for trustworthy Artificial Intelligence. Government Information Quarterly 2020, 37, 101493.

26. Lazcoz, G.; Hert, P. Humans in the GDPR and AIA governance of automated and algorithmic systems. Essential pre-requisites against abdicating responsibilities. Computer Law & Security Review 2023, 51, 105824.

27. Zaidan, E.; Ibrahim, I.A. AI Governance in a Complex and Rapidly Changing Regulatory Landscape: A Global Perspective. Humanities and Social Sciences Communications 2024, 11, 1–12.

28. Bouderhem, R. Shaping the future of AI in healthcare through ethics and governance. Humanities and Social Sciences Communications 2024, 11, 1–10.

29. Morley, J.; Floridi, L.; Kinsey, L.A.; Elhalal, A. From What to How: Guidelines for Responsible AI Governance through a Bidirectional and Iterative Oversight Model. AI & Society 2021, 36, 715–729.

30. Hartmann, D. Observability-Driven AI Governance: A Framework for Compliance and Audit Readiness Under the EU AI Act. AI & Society 2024.

| Regulatory dimension | Primary legal focus | Illustrative technical evidence |
|---|---|---|
| Data protection and data governance | GDPR data protection principles (lawfulness, purpose limitation, minimisation, accuracy, storage limitation, accountability; Arts. 5–6), special categories and safeguards (Art. 9), information duties and data subject rights (Arts. 13–15), and AI Act data governance requirements for training, validation and testing data (Art. 10).[1,25] | Dataset documentation, lawful-basis and purpose records, data minimisation and pseudonymisation logs, data quality and representativeness reports, access and retention policies.[6,15] |
| Risk management, robustness, and safety | AI Act obligations to establish and maintain a risk management system and to ensure accuracy, robustness and cybersecurity of high-risk AI systems (Arts. 9 and 15), together with related safety and risk-mitigation duties.[2,20] | Risk registers linked to use cases, scenario-based tests, robustness and stress-testing reports, security assessment and penetration-testing evidence.[10,12,21] |
| Transparency, explanation, and human oversight | GDPR duties to provide meaningful information about automated decision-making and enable rights of access, explanation and contestation (Arts. 12–15, 22), and AI Act transparency and human-oversight requirements for high-risk AI systems (Arts. 13–14).[3,26] | User-facing notices, explanation logs, explanation quality metrics, records of human review and overrides, decision dossiers documenting human-in-the-loop interventions.[16,17,18] |
| Documentation, logging, and record-keeping | GDPR accountability obligations and record-keeping duties (Arts. 5(2), 24, 30, 35–36) and AI Act requirements on technical documentation, logging and conformity assessment files (Arts. 11–12, 18–21).[1,22] | Model and dataset cards, pipeline and configuration records, event logs and logging schemas, conformity assessment documentation, audit trails.[6,24] |
| Post-market monitoring and incident management | AI Act obligations for post-market monitoring, reporting of serious incidents and malfunctioning, and updating of risk management and mitigation measures (Arts. 72–73 of Regulation (EU) 2024/1689, numbered Arts. 61–62 in the 2021 Commission proposal, and related provisions).[20,27] | Monitoring strategies and dashboards, alerting rules, incident tickets and reports, post-mortem analyses, change logs and updated risk and conformity documentation.[8,10,13] |

| Technical dimension | Primary focus | Illustrative artefacts |
|---|---|---|
| Data governance | Data provenance, quality, representativeness, bias and lifecycle management.[15,25] | Dataset documentation, lineage records, preprocessing logs, bias and drift reports, access and retention policies.[1,6] |
| Model behaviour and performance | Accuracy, robustness, calibration and evaluation under risk-relevant scenarios.[10,12] | Validation reports, robustness tests, performance dashboards, stress-test scenarios, model versioning records.[11,21] |
| Transparency, explainability and communication | Provision of meaningful information about automated decisions and support for human understanding and contestability.[16,17] | Explanation configuration files, explanation logs, stability and fidelity metrics, decision dossiers linking inputs, models, explanations and human interventions.[3,18,26] |
| Monitoring, logging and incident response | Continuous surveillance of system behaviour, detection of anomalies and handling of incidents.[8,10] | Runtime logs, drift and anomaly indicators, alert rules, incident tickets, remediation playbooks, post-mortem reports.[13,20] |
| Documentation and auditability | Integration of technical evidence into coherent, audit-ready documentation.[6,22] | Model and dataset cards, DPIA and risk assessment summaries, conformity assessment files, governance records, holistic audit trails.[5,23] |
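
The "decision dossier" artefact listed under transparency, explainability and communication can be pictured as one record that ties an automated decision to its inputs, model version, explanation and any human intervention. The following is a minimal sketch under assumptions: the class names, field names and example values are hypothetical illustrations and are not taken from the article.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass
class HumanIntervention:
    """One human review action on an automated decision (hypothetical schema)."""
    reviewer_role: str
    action: str      # assumed vocabulary: "confirmed", "overridden", "escalated"
    rationale: str


@dataclass
class DecisionDossier:
    """Links inputs, model, explanation and human interventions for one decision."""
    decision_id: str
    model_version: str
    input_reference: str   # pointer to the stored input, not the raw data itself
    outcome: str
    explanation: dict      # e.g. feature contributions produced by an explainer
    interventions: list = field(default_factory=list)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def record_intervention(self, intervention: HumanIntervention) -> None:
        self.interventions.append(intervention)

    def was_overridden(self) -> bool:
        return any(i.action == "overridden" for i in self.interventions)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so the dossier serialises whole.
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative values only.
dossier = DecisionDossier(
    decision_id="alert-0001",
    model_version="anomaly-detector-1.4.2",
    input_reference="telemetry/batch-17",
    outcome="alert_raised",
    explanation={"bytes_out": 0.61, "failed_logins": 0.27},
)
dossier.record_intervention(
    HumanIntervention("security analyst", "overridden", "Known backup job")
)
print(dossier.was_overridden())
```

Storing a reference to the input rather than the input itself is one way to keep the dossier compatible with data minimisation; whether that is sufficient depends on the deployment.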

| Regulatory dimension | Main technical dimensions | Rationale for the linkage |
|---|---|---|
| Data protection and data governance | Data governance; Documentation and auditability | Data protection principles and AI Act data governance requirements are instantiated through dataset documentation, lineage and preprocessing logs, bias and drift analyses, and retention and access policies, which are then consolidated into audit-ready documentation. |
| Risk management, robustness, and safety | Model behaviour and performance; Monitoring, logging and incident response; Documentation and auditability | Risk management and robustness duties require systematic evaluation under risk-relevant scenarios, runtime monitoring for anomalies and failures, and documentation of risk registers, tests and mitigation measures that can be inspected during audits and conformity assessments. |
| Transparency, explanation, and human oversight | Transparency, explainability and communication; Monitoring, logging and incident response; Documentation and auditability | Duties on meaningful information, contestability and human oversight are supported by explanation artefacts and metrics, logging of explanations and human actions, and decision dossiers that preserve a complete record of how decisions were produced, explained and reviewed. |
| Documentation, logging, and record-keeping | Documentation and auditability; Monitoring, logging and incident response; Data governance | Accountability, logging and conformity assessment requirements depend on consistent logging across the lifecycle, structured documentation of models, data and pipelines, and the ability to reconstruct how data were collected, processed and used in decision-making. |
| Post-market monitoring and incident management | Monitoring, logging and incident response; Documentation and auditability; Model behaviour and performance | Post-market monitoring and incident-reporting obligations rely on continuous observability of system behaviour, incident tickets and post-mortems, and updated documentation that links changes in models, data and controls to revised risk and conformity records. |
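
The linkage between regulatory and technical dimensions can also be read as a simple lookup structure. The sketch below transcribes the links into a Python dictionary and inverts it; regulatory dimension names follow the regulatory-dimension table and technical dimension names follow the technical-dimension table. The variable and function names (`TRCM_LINKS`, `technical_to_regulatory`) are assumptions made for illustration, not part of the article.

```python
from collections import defaultdict

# Regulatory dimension -> linked technical dimensions (transcribed from the linkage table).
TRCM_LINKS = {
    "Data protection and data governance": [
        "Data governance",
        "Documentation and auditability",
    ],
    "Risk management, robustness, and safety": [
        "Model behaviour and performance",
        "Monitoring, logging and incident response",
        "Documentation and auditability",
    ],
    "Transparency, explanation, and human oversight": [
        "Transparency, explainability and communication",
        "Monitoring, logging and incident response",
        "Documentation and auditability",
    ],
    "Documentation, logging, and record-keeping": [
        "Documentation and auditability",
        "Monitoring, logging and incident response",
        "Data governance",
    ],
    "Post-market monitoring and incident management": [
        "Monitoring, logging and incident response",
        "Documentation and auditability",
        "Model behaviour and performance",
    ],
}


def technical_to_regulatory(links):
    """Invert the mapping: which regulatory dimensions rely on each technical one."""
    inverse = defaultdict(list)
    for regulatory, technical_dims in links.items():
        for technical in technical_dims:
            inverse[technical].append(regulatory)
    return dict(inverse)


inverse = technical_to_regulatory(TRCM_LINKS)
for technical, regulatory_dims in sorted(inverse.items(), key=lambda kv: -len(kv[1])):
    print(f"{technical}: supports {len(regulatory_dims)} regulatory dimension(s)")
```

Read this way, "Documentation and auditability" is linked to all five regulatory dimensions in the table, and "Monitoring, logging and incident response" to four of them.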

| Aspect | Description |
|---|---|
| Application domain | Network monitoring and anomaly detection in an operator of essential services, with potential impact on critical infrastructures and service continuity. |
| AI Act category (Annex III) | AI systems used for the management and operation of critical infrastructures, triggering high-risk obligations (risk management, data governance, robustness, transparency, human oversight, logging and post-market monitoring). |
| Data processed (GDPR) | Traffic metadata, event logs and other telemetry that can be directly or indirectly linked to natural persons (for example, internal users, administrators, customers). |
| Main decisions supported | Security alerts, incident prioritisation, triggering of automatic or semi-automatic responses (traffic blocking, host isolation, escalation to incident response teams). |
| Key risks | False negatives (undetected incidents), massive false positives (analyst overload and alert fatigue), automated actions that may affect rights and freedoms (excessive monitoring, intrusive profiling of internal users). |
| Salient GDPR obligations | Lawfulness of processing and DPIA, data minimisation and purpose limitation, transparency towards relevant data subjects, integrity and confidentiality of data, management of data subject rights (access, rectification, objection, restriction). |
| Salient AI Act obligations | Risk management system across the lifecycle, data and data governance, documentation and technical file, robustness and accuracy requirements, transparency and human oversight mechanisms, logging and post-market monitoring, conformity assessment documentation. |

| Regulatory pillar | TRCM technical dimension | Illustrative technical control | Evidence artefact |
|---|---|---|---|
| Data protection and data governance (GDPR, AI Act) | Data governance | Inventory of data sources (logs, flows, telemetry) and mapping of personal/pseudonymous data; retention and deletion policies aligned with GDPR storage limitation. | Data asset register, lineage records, retention policies, DPIA annexes. |
| Data protection and data governance | Documentation and auditability | Systematic recording of datasets used for training, validation and testing; explicit description of sampling and exclusion criteria. | Data sheets, dataset descriptions, quality and representativeness assessment reports. |
| Risk management, robustness and safety (AI Act) | Model behaviour and performance | Performance tests under different traffic loads, noise levels and drift scenarios; definition of target metrics (for example, minimum detection rate, false positive limits). | Test reports, scenario-by-scenario result grids, documentation of thresholds and acceptance criteria. |
| Risk management, robustness and safety | Monitoring, logging and incident response | Continuous performance monitoring in production; automatic alerts for degradation beyond predefined limits; documented procedures for model rollback and emergency reconfiguration. | Monitoring dashboards, incident tickets, post-incident review minutes, deployment and rollback logs. |
| Transparency and human oversight (GDPR, AI Act) | Documentation and auditability | Preparation of model cards and system cards describing goals, assumptions, limitations and acceptable use conditions. | Approved technical datasheets, attached to conformity documentation and operational manuals. |
| Transparency and human oversight | Model behaviour and performance | Generation of local explanations for alerts (for example, feature contributions, similar past examples) and aggregated views for analysts (explainability dashboards). | Explainability reports, dashboard snapshots, archived examples of explanations linked to incident tickets. |
| Transparency and human oversight | Human–AI interaction design | Definition of workflows that allow analysts to contest, comment on or override alerts; clear escalation and override rules. | Standard operating procedures, records of human decisions, ticket templates capturing human adjudication. |
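
The controls on target metrics and on alerts for degradation beyond predefined limits amount to comparing observed performance against documented acceptance criteria. A minimal sketch of such a check follows; the threshold values, the confusion-matrix counts and all names (`AcceptanceCriteria`, `evaluate`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Documented limits for a detection model (illustrative values only)."""
    min_detection_rate: float
    max_false_positive_rate: float


def evaluate(tp: int, fn: int, fp: int, tn: int, criteria: AcceptanceCriteria) -> dict:
    """Compute detection and false positive rates and list breached criteria."""
    detection_rate = tp / (tp + fn) if (tp + fn) else 0.0
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0

    breaches = []
    if detection_rate < criteria.min_detection_rate:
        breaches.append("detection_rate")
    if false_positive_rate > criteria.max_false_positive_rate:
        breaches.append("false_positive_rate")

    return {
        "detection_rate": detection_rate,
        "false_positive_rate": false_positive_rate,
        "breaches": breaches,
    }


# Hypothetical evaluation window: 100 true incidents, 1000 benign events.
criteria = AcceptanceCriteria(min_detection_rate=0.90, max_false_positive_rate=0.05)
result = evaluate(tp=80, fn=20, fp=30, tn=970, criteria=criteria)
print(result["breaches"])
```

In this example the detection rate (0.80) falls below the assumed 0.90 minimum while the false positive rate (0.03) stays within its limit, so only the detection criterion is reported as breached. The returned dictionary could be archived as part of a test report or attached to an incident ticket.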

| Stakeholder | Main operational implications of TRCM adoption |
|---|---|
| Development and MLOps teams | Integration of regulatory obligations as engineering requirements from the outset; need to parameterise pipelines (training, validation, deployment, monitoring) so that evidence artefacts are generated automatically; stronger discipline in versioning models, data and configurations. |
| Data protection officer (DPO) and compliance teams | Access to a shared technical–regulatory artefact for discussing risks and controls; ability to reuse TRCM profiles and DPIA annex templates across similar projects; improved traceability between GDPR/AI Act obligations and the controls actually implemented in systems. |
| Risk management and internal audit | More systematic mapping between identified risks, implemented controls and monitoring arrangements; clearer visibility of residual risks and outstanding remediation actions recorded directly in the matrix; easier preparation of internal audit work programmes focused on high-risk cells of the TRCM. |
| Business owners and product managers | Clearer understanding of the compliance impact of design choices; ability to plan budgets and timelines based on the set of technical dimensions and artefacts required by the filtered TRCM; more informed trade-offs between model complexity, operational flexibility and regulatory burden. |
| Operators and end-users (for example, security analysts) | More transparent and contestable AI behaviour, supported by explanation artefacts and documented escalation paths; clearer articulation of roles and responsibilities in human–AI decision-making loops; better alignment between tool design and operational practices. |
| Regulators and external auditors | Availability of a structured, verifiable account of the system’s lifecycle and control environment; direct traceability from legal provisions to technical controls and evidence artefacts; more efficient conformity assessments grounded in the TRCM rather than in ad hoc document collections. |
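
For development and MLOps teams, generating evidence artefacts automatically could mean that each pipeline stage emits a small, tamper-evident record tagged with the matrix cell it supports. The sketch below shows one possible shape for such a record; the function name, field names and example payload are assumptions for illustration and do not describe the article's implementation.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(stage: str, artefact: str, payload: dict,
                    regulatory_dimension: str, technical_dimension: str) -> dict:
    """Build an evidence record whose hash fingerprints the artefact content."""
    # Canonical JSON (sorted keys) so the same content always yields the same hash.
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {
        "stage": stage,
        "artefact": artefact,
        "regulatory_dimension": regulatory_dimension,
        "technical_dimension": technical_dimension,
        "sha256": hashlib.sha256(body).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical validation-stage output.
record = evidence_record(
    stage="validation",
    artefact="validation_report",
    payload={"detection_rate": 0.93, "false_positive_rate": 0.02},
    regulatory_dimension="Risk management, robustness, and safety",
    technical_dimension="Model behaviour and performance",
)
print(record["artefact"], record["sha256"][:12])
```

Tagging each record with its regulatory and technical dimension lets the records be grouped by matrix cell later, for instance when assembling conformity documentation.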

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).