Submitted: 03 February 2026

Posted: 04 February 2026


Abstract

Keywords:

1. Introduction

1.1. Related Work

2. Materials and Methods

2.1. Data

2.2. SURE Pipeline

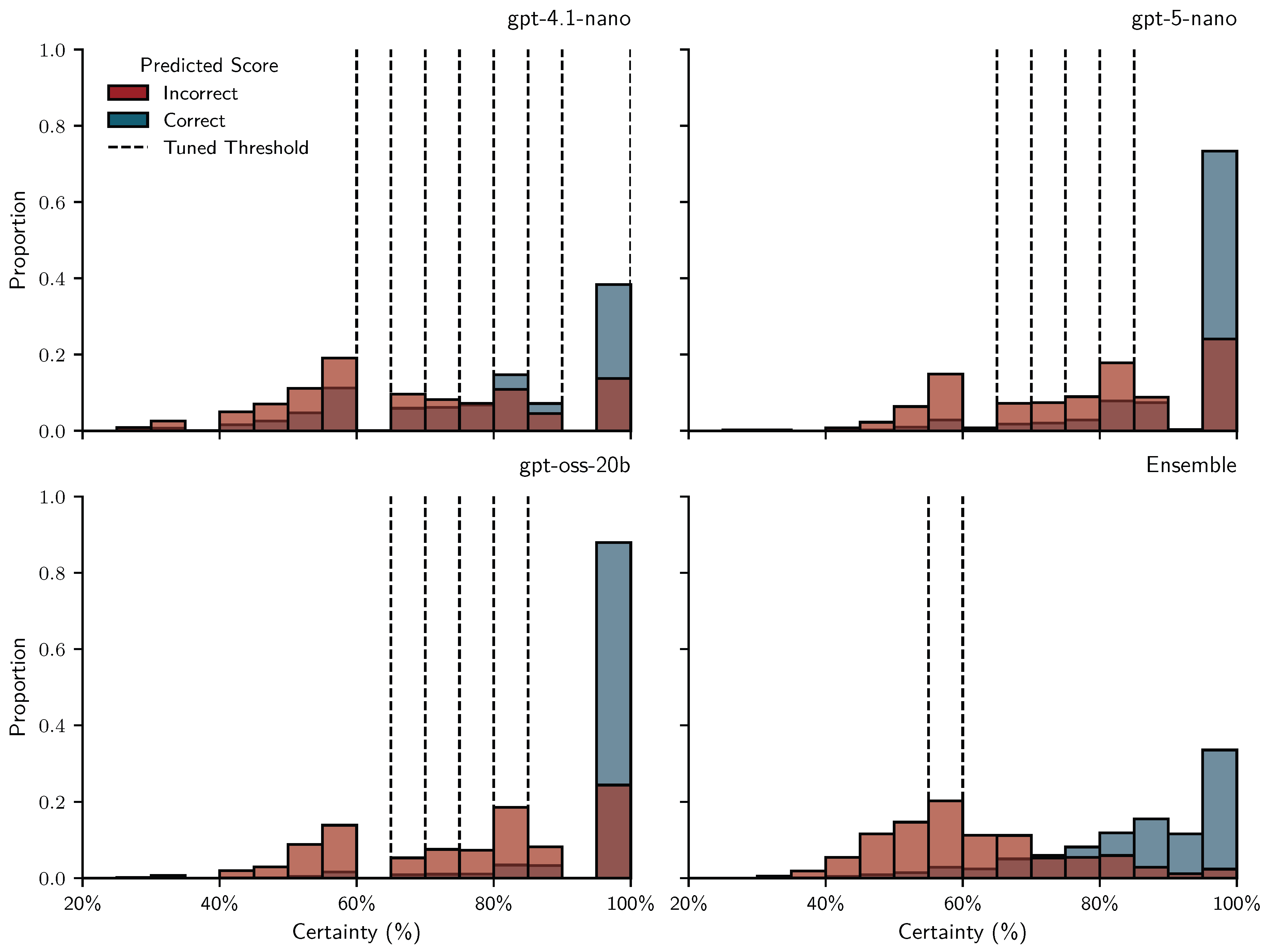

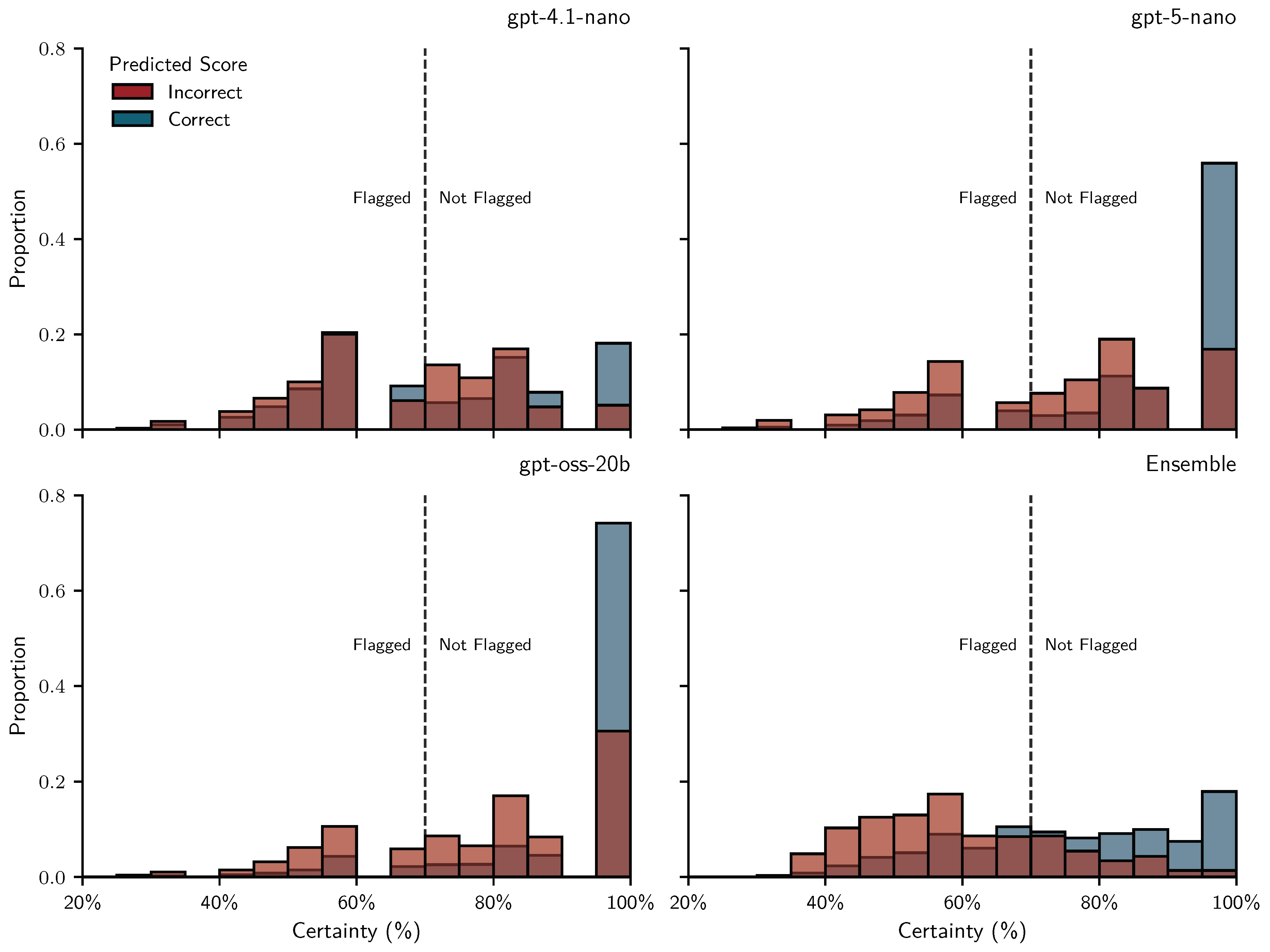

2.2.1. Repeated Prompting for Score Prediction and Uncertainty Estimation

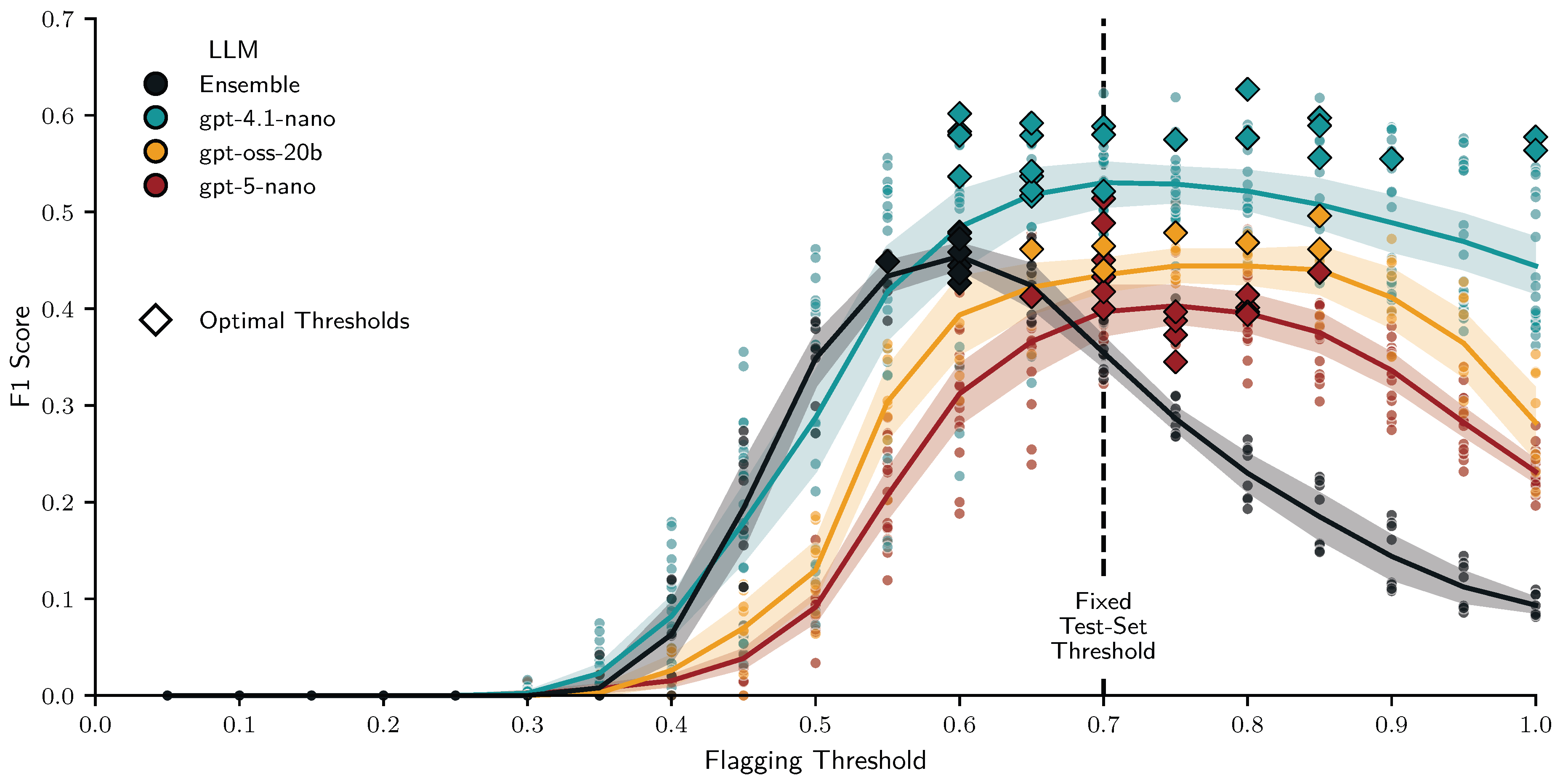

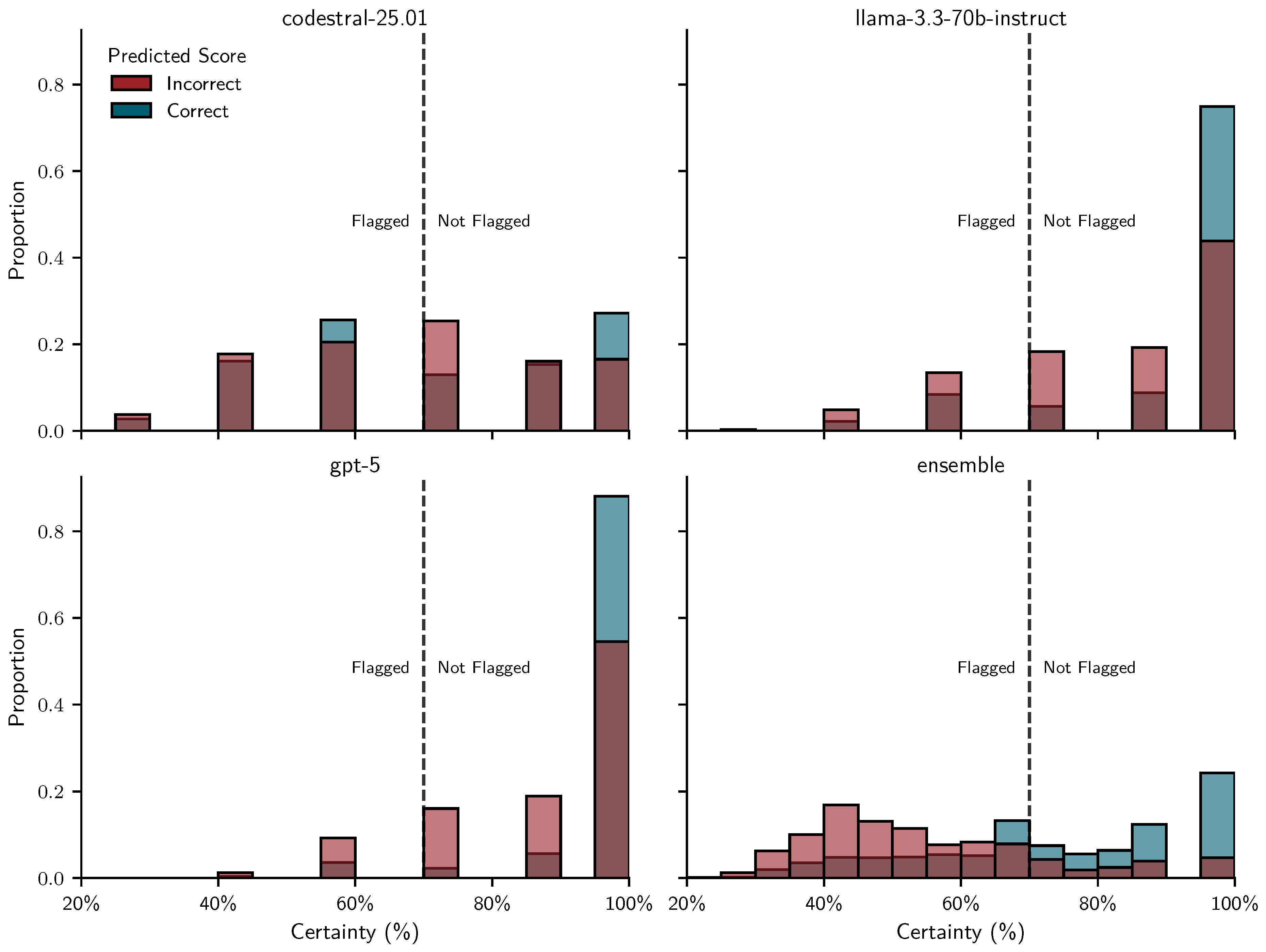

2.2.2. Flagging Low-Certainty Scores and Simulating Human Regrading

- TP: LLM is uncertain (flagged) and incorrect — a useful flag

- FP: LLM is uncertain (flagged) but correct — unnecessary teacher effort

- TN: LLM is confident (unflagged) and correct — ideal automatic grading

- FN: LLM is confident (unflagged) but incorrect — undetected error
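Each of the four outcomes depends on only two facts about an answer: whether its majority-voted score was flagged, and whether that score was correct. The Python sketch below assumes that "correct" means the LLM score equals a human reference score. The function names are hypothetical.

```python
def classify_flag(flagged: bool, correct: bool) -> str:
    """Map one LLM score to the TP/FP/TN/FN categories defined above."""
    if flagged:
        # Flagged scores go to the teacher: useful if wrong, wasted effort if right.
        return "FP" if correct else "TP"
    # Unflagged scores are accepted automatically: ideal if right, undetected error if wrong.
    return "TN" if correct else "FN"


def flag_outcomes(flags, llm_scores, reference_scores):
    """Count TP/FP/TN/FN over all student answers (assumption: correct = exact match)."""
    if not (len(flags) == len(llm_scores) == len(reference_scores)):
        raise ValueError("inputs must have equal length")
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for flagged, llm, ref in zip(flags, llm_scores, reference_scores):
        counts[classify_flag(flagged, llm == ref)] += 1
    return counts


flags = [True, True, False, False]
llm_scores = [1.0, 0.5, 2.0, 1.5]
human_scores = [0.5, 0.5, 2.0, 1.0]
print(flag_outcomes(flags, llm_scores, human_scores))
```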

2.3. LLM Configurations and Diversification Strategies

2.3.1. Parameter Variations

2.3.2. Prompt Perturbations

- 1.

- Shuffled rubrics – Each rubric consisted of a list of subtractive grading criteria [1]. When this intervention was active, we randomly sampled 20 criteria orderings from all possible permutations. For rubrics with fewer than four criteria (), permutations were repeated equally until reaching 20. Otherwise, criteria followed the original order.

- 2.

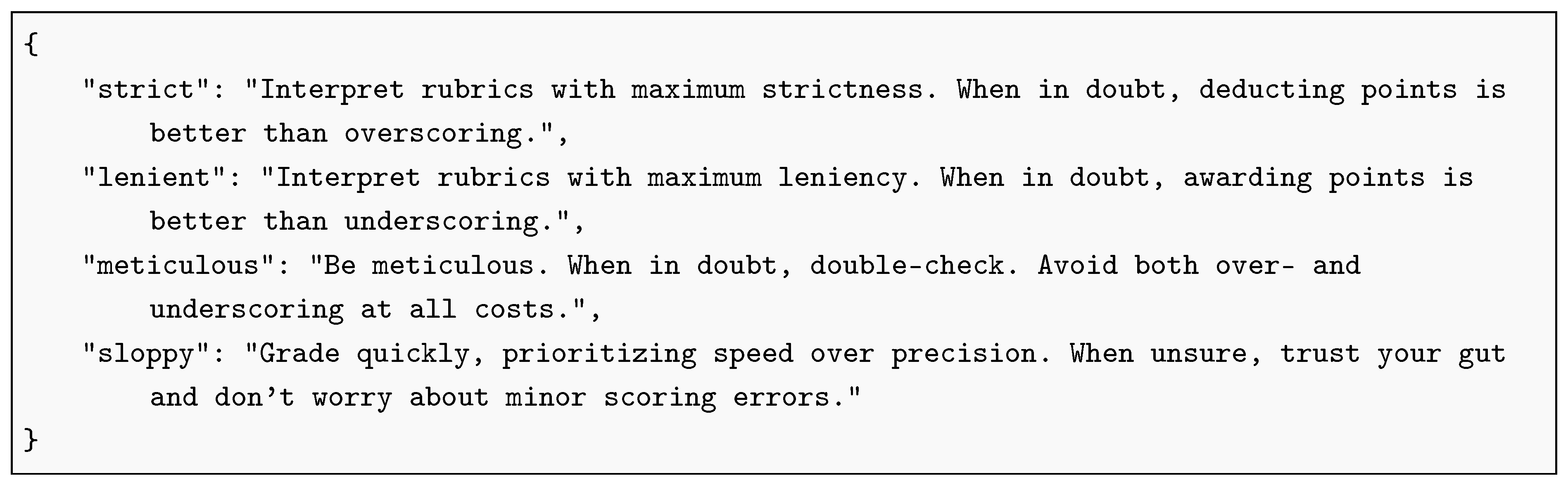

- Grader personas – We defined four personas: strict, lenient, meticulous, and sloppy (see Listing A3). When enabled, we sampled each persona 5 times to add a persona to each of the 20 prompts. Otherwise, prompts contained no persona.

- 3.

- Multilingual prompting – We used gpt-5-nano to translate all prompt components (base prompt, questions, rubrics, and persona snippets) into German, Spanish, French, Japanese, and Chinese, and verified the translations by back-translating to English via DeepL [68]. When this intervention was active, we sampled equally across the six languages (including English). Otherwise, all 20 prompts were in English.
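The Python sketch below shows one way to assemble the 20 prompt variants for a single question from the three perturbation switches. It rests on several assumptions. The helper names are hypothetical. Distinct orderings are drawn by rejection sampling, so the code never enumerates every permutation. When a rubric has fewer than four criteria, the code cycles through its permutations. This is as close to equal repetition as 20 prompts allow: three criteria give 6 orderings, so some appear 4 times and others 3 times. Languages are also spread as evenly as possible, because 20 is not divisible by 6. The prompt texts and translations themselves are not shown.

```python
import itertools
import math
import random

N_PROMPTS = 20  # repeated grading prompts per student answer
PERSONAS = ["strict", "lenient", "meticulous", "sloppy"]
LANGUAGES = ["English", "German", "Spanish", "French", "Japanese", "Chinese"]


def sample_rubric_orderings(criteria, n=N_PROMPTS, rng=random):
    """Return n orderings of the rubric criteria."""
    k = len(criteria)
    if math.factorial(k) < n:
        # Fewer than four criteria: too few permutations, so cycle through all of them.
        perms = list(itertools.permutations(criteria))
        rng.shuffle(perms)
        return [list(perms[i % len(perms)]) for i in range(n)]
    # Otherwise draw n distinct random permutations without enumerating all k! of them.
    seen, orderings = set(), []
    while len(orderings) < n:
        perm = tuple(rng.sample(list(criteria), k))
        if perm not in seen:
            seen.add(perm)
            orderings.append(list(perm))
    return orderings


def balanced_assignment(options, n=N_PROMPTS, rng=random):
    """Spread options over n prompts as evenly as possible, in random order."""
    assigned = [options[i % len(options)] for i in range(n)]
    rng.shuffle(assigned)
    return assigned


def build_prompt_variants(criteria, shuffle=False, personas=False, multilingual=False, seed=0):
    """Combine the three optional perturbations into N_PROMPTS prompt specifications."""
    rng = random.Random(seed)
    rubrics = (sample_rubric_orderings(criteria, rng=rng) if shuffle
               else [list(criteria) for _ in range(N_PROMPTS)])
    persona_list = balanced_assignment(PERSONAS, rng=rng) if personas else [None] * N_PROMPTS
    language_list = (balanced_assignment(LANGUAGES, rng=rng) if multilingual
                     else ["English"] * N_PROMPTS)
    return [
        {"rubric": r, "persona": p, "language": lang}
        for r, p, lang in zip(rubrics, persona_list, language_list)
    ]


variants = build_prompt_variants(["C1", "C2", "C3", "C4", "C5"],
                                 shuffle=True, personas=True, multilingual=True)
print(variants[0])
```

Each switch falls back to the unperturbed setting when it is off: the original criteria order, no persona, or English only. A condition is therefore fully described by the three booleans.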

2.3.3. Post-Hoc Ensembles

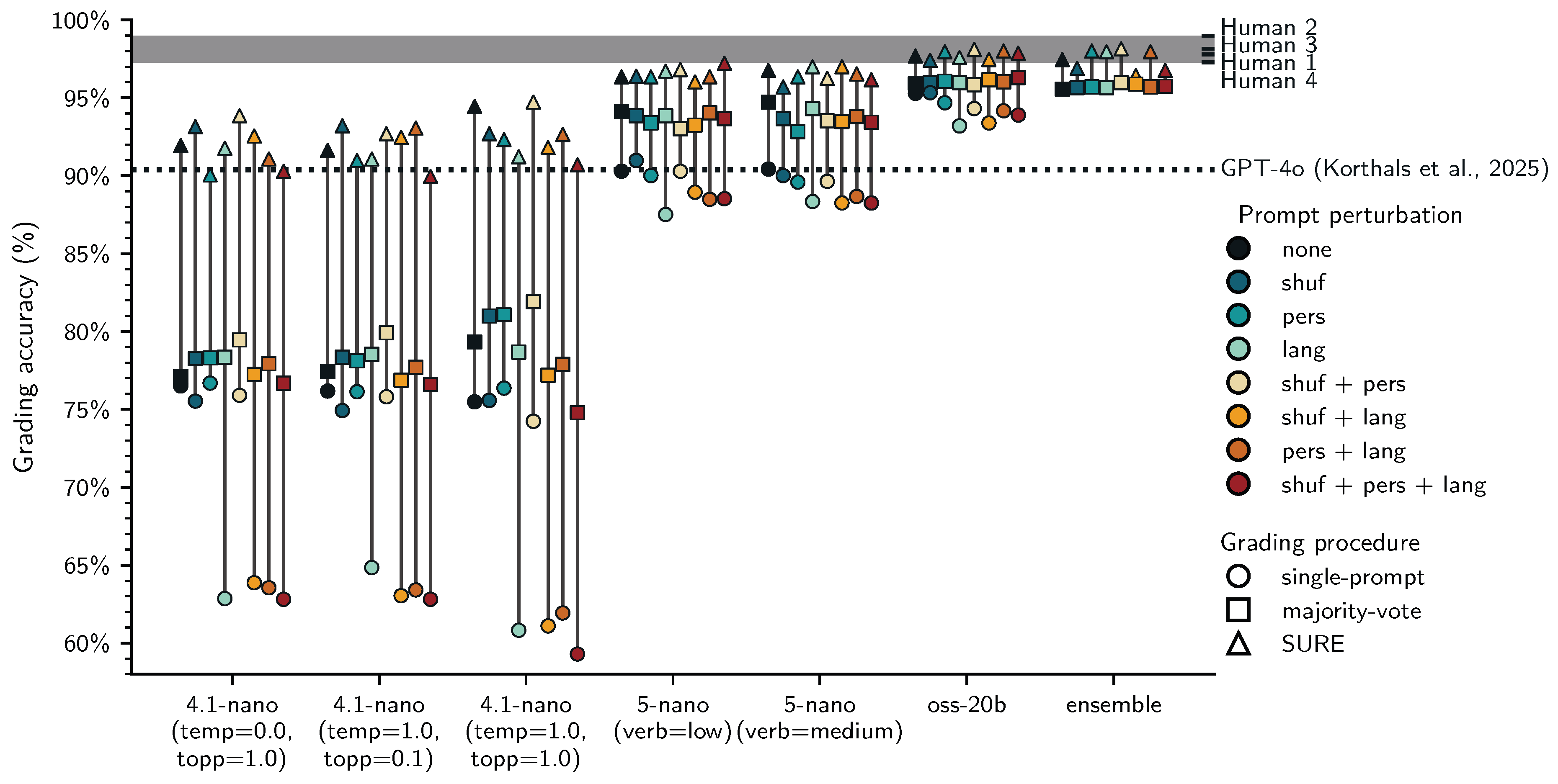

2.4. Grading Procedures

- Majority-voting (MV): Fully automated grading based on the most frequent score assigned to each student answer across 20 repeated grading iterations. We computed majority-voted scores for all 56 conditions (prompting + post-hoc ensembles).

- Single-prompt (SP): Fully automated grading based on a single score sampled from the 20 iterations for each student answer. We applied this only to the 48 prompting conditions, because ensembles by definition aggregate the outputs of multiple prompts.

- SURE: Human-in-the-loop grading based on majority-voting, with simulated human regrading of flagged scores. We assessed SURE for all 56 conditions (prompting + post-hoc ensembles). In the training set, we tuned a separate uncertainty threshold for each of the 56 conditions by maximizing the score. In the test set, we used the median of these 56 thresholds as a fixed certainty threshold.
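The three procedures can be sketched in Python. One assumption matters most here: certainty is taken to be the share of the 20 iterations that agree with the modal score, and a score is flagged when that share falls below the threshold. The paper's own certainty measure is defined in Section 2.2.1 and may differ. Ties in the vote are broken in favor of the first-seen score, which is also an assumption. The threshold values below are hypothetical, and the tuning objective is not reproduced.

```python
import random
import statistics
from collections import Counter


def majority_vote(scores):
    """MV: modal score across the repeated iterations, plus the share of
    iterations agreeing with it (used here as the certainty value)."""
    score, n_votes = Counter(scores).most_common(1)[0]  # ties: first-seen score wins
    return score, n_votes / len(scores)


def single_prompt(scores, rng=random):
    """SP: one score drawn at random from the repeated iterations."""
    return rng.choice(scores)


def sure_grade(scores, human_score, threshold):
    """SURE: keep the majority vote unless its certainty is below the threshold;
    flagged answers receive the (simulated) human regrade instead."""
    score, certainty = majority_vote(scores)
    flagged = certainty < threshold
    return (human_score if flagged else score), flagged


# Hypothetical thresholds tuned per condition on the training set;
# the test set uses their median as a single fixed threshold.
tuned_thresholds = [0.6, 0.7, 0.9, 0.95]
fixed_threshold = statistics.median(tuned_thresholds)

scores = [2, 2, 2, 1.5] * 5  # 20 iterations for one student answer
print(majority_vote(scores))
print(single_prompt(scores, random.Random(0)))
print(sure_grade(scores, human_score=1.5, threshold=fixed_threshold))
```

In this toy case, 15 of the 20 iterations agree, which gives a certainty of 0.75. That falls below the median threshold of about 0.8, so SURE replaces the majority vote with the human score.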

2.5. Research Questions

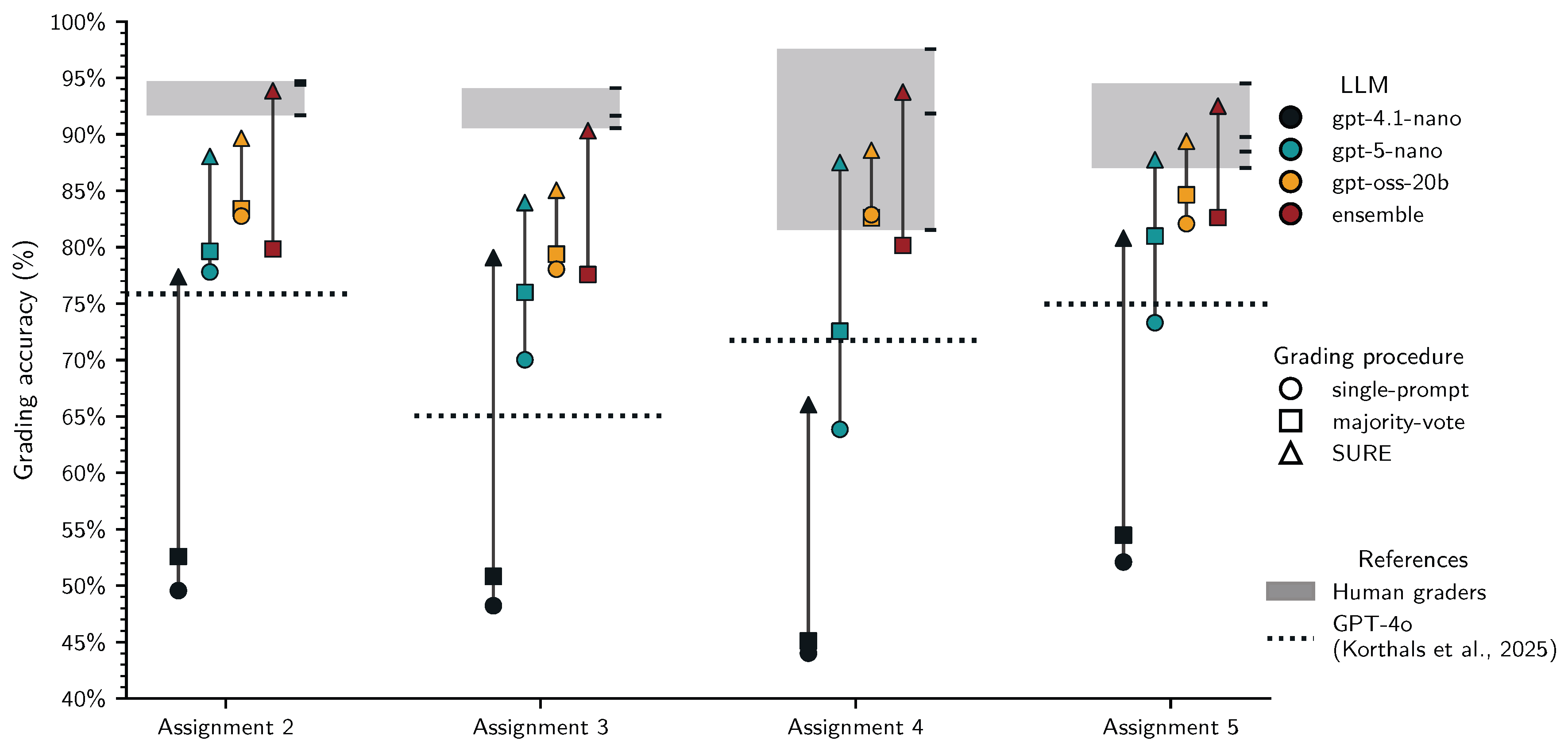

- 1.

- RQ1: Can majority-voting improve the accuracy of fully automated LLM grading?

- 2.

- RQ2: Can SURE improve the accuracy over fully automated LLM grading?

- 3.

- RQ3: Can diversification strategies (token sampling, prompt perturbations, LLM ensembles) improve the SURE protocol?

- 4.

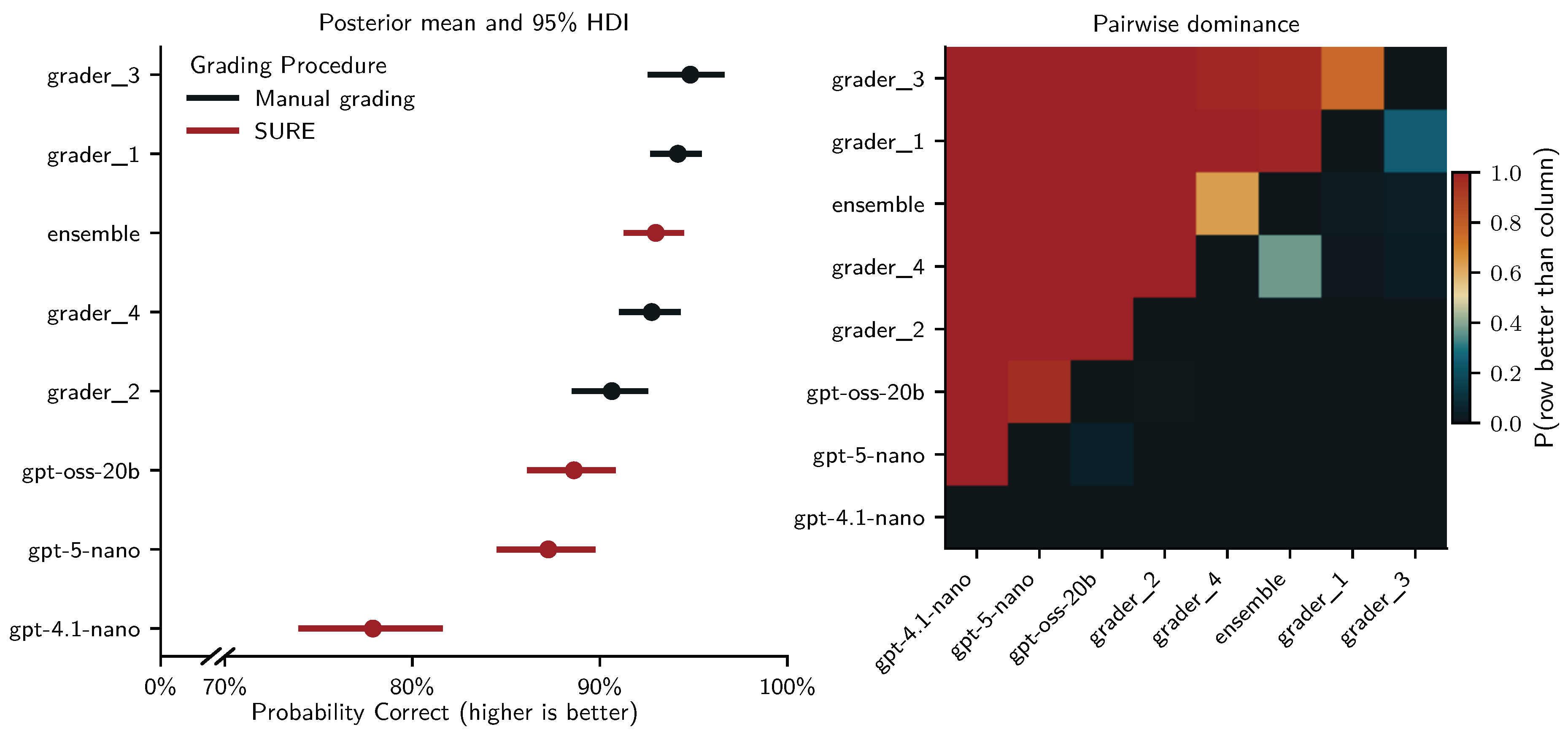

- RQ4: How effective (accuracy) and efficient (time spent grading) is SURE compared to fully manual grading?

2.6. Exploratory Analyses on the Training Set

2.6.1. Grading Procedures and Diversification Strategies

- Because majority-voting serves as the reference level, a negative coefficient for single-prompt grading would indicate that majority-voting improves fully automated grading (RQ1).

- A positive coefficient for SURE that is larger than those for SP and MV would indicate that the proposed pipeline improves accuracy over automated grading (RQ2).

- Positive coefficients for any of the diversification strategies – particularly in combination with SURE – would indicate that diversification strategies are beneficial (RQ3).
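Suppose the model behind the coefficient table is a Bernoulli (logistic) mixed model of the binary `correct` outcome, with majority-voting and gpt-4.1-nano as reference levels. The coefficients are then log-odds, and the inverse logit turns them into probabilities of a correct grade. The sketch below plugs in the posterior means for the intercept and the two procedure coefficients. It sets all other predictors and the random effects to zero and ignores posterior uncertainty. The link function and this coding are assumptions, so the output should be treated as an illustrative reading only.

```python
import math


def inv_logit(x: float) -> float:
    """Convert log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


# Posterior means from the coefficient table (training set).
INTERCEPT = 1.601         # reference: majority-voting with gpt-4.1-nano (assumed coding)
B_SINGLE_PROMPT = -0.230  # procedure[single-prompt]
B_SURE = 1.311            # procedure[SURE]

p_mv = inv_logit(INTERCEPT)
p_sp = inv_logit(INTERCEPT + B_SINGLE_PROMPT)
p_sure = inv_logit(INTERCEPT + B_SURE)
print(f"MV {p_mv:.3f} | SP {p_sp:.3f} | SURE {p_sure:.3f}")
```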

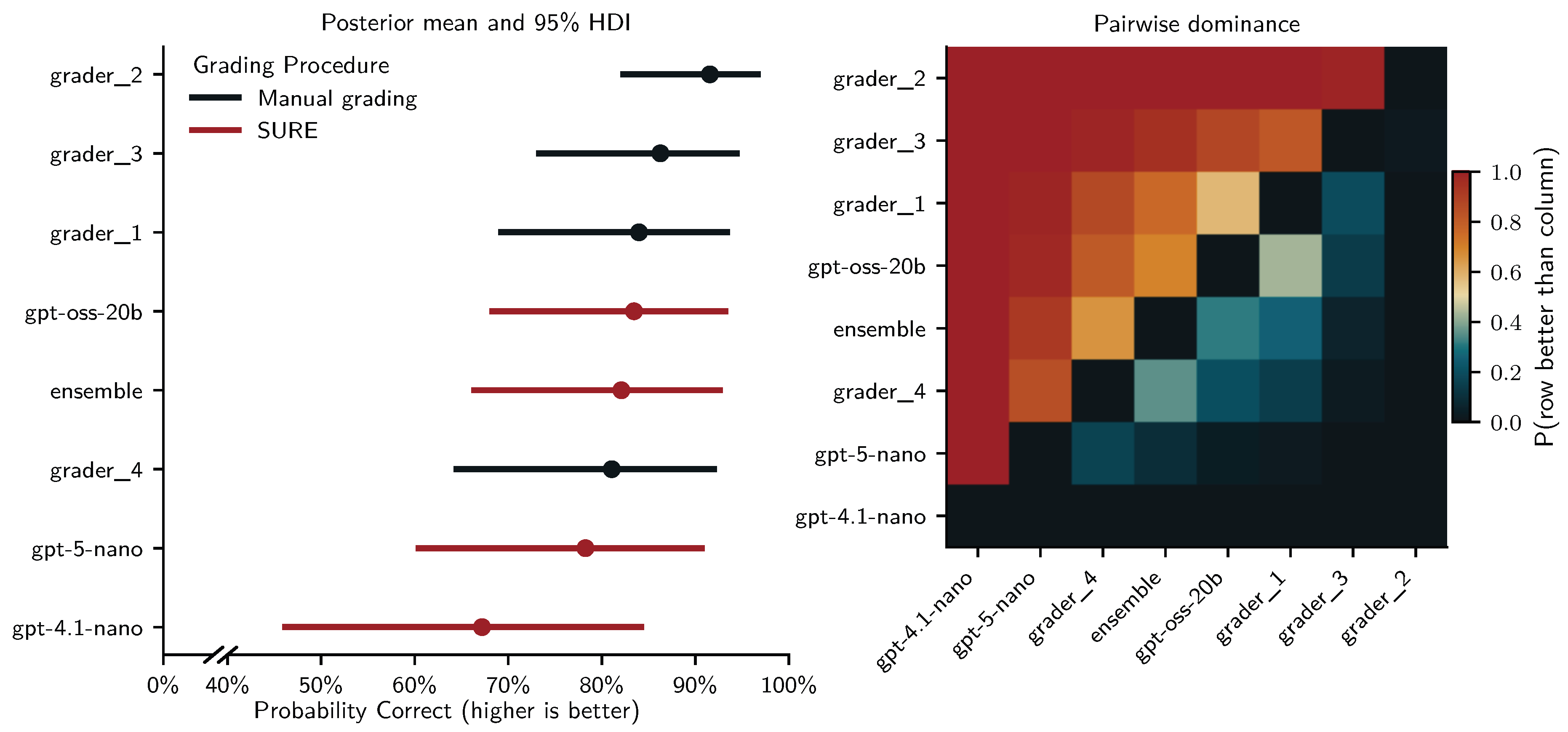

2.6.2. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with text_verbosity set to medium.

- gpt-oss-20b with text_verbosity set to medium.

- ensemble based on the three selected LLM configurations.

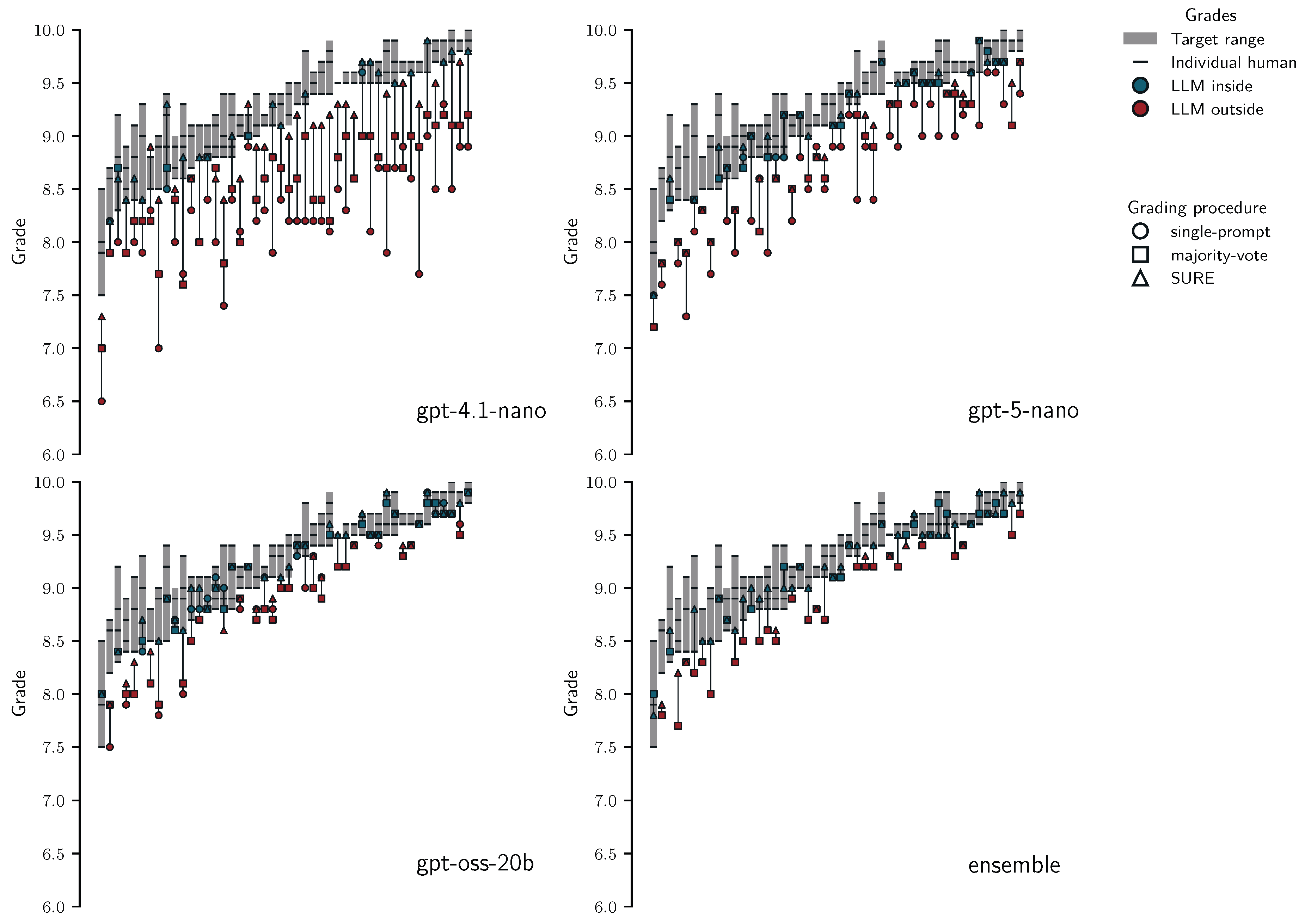

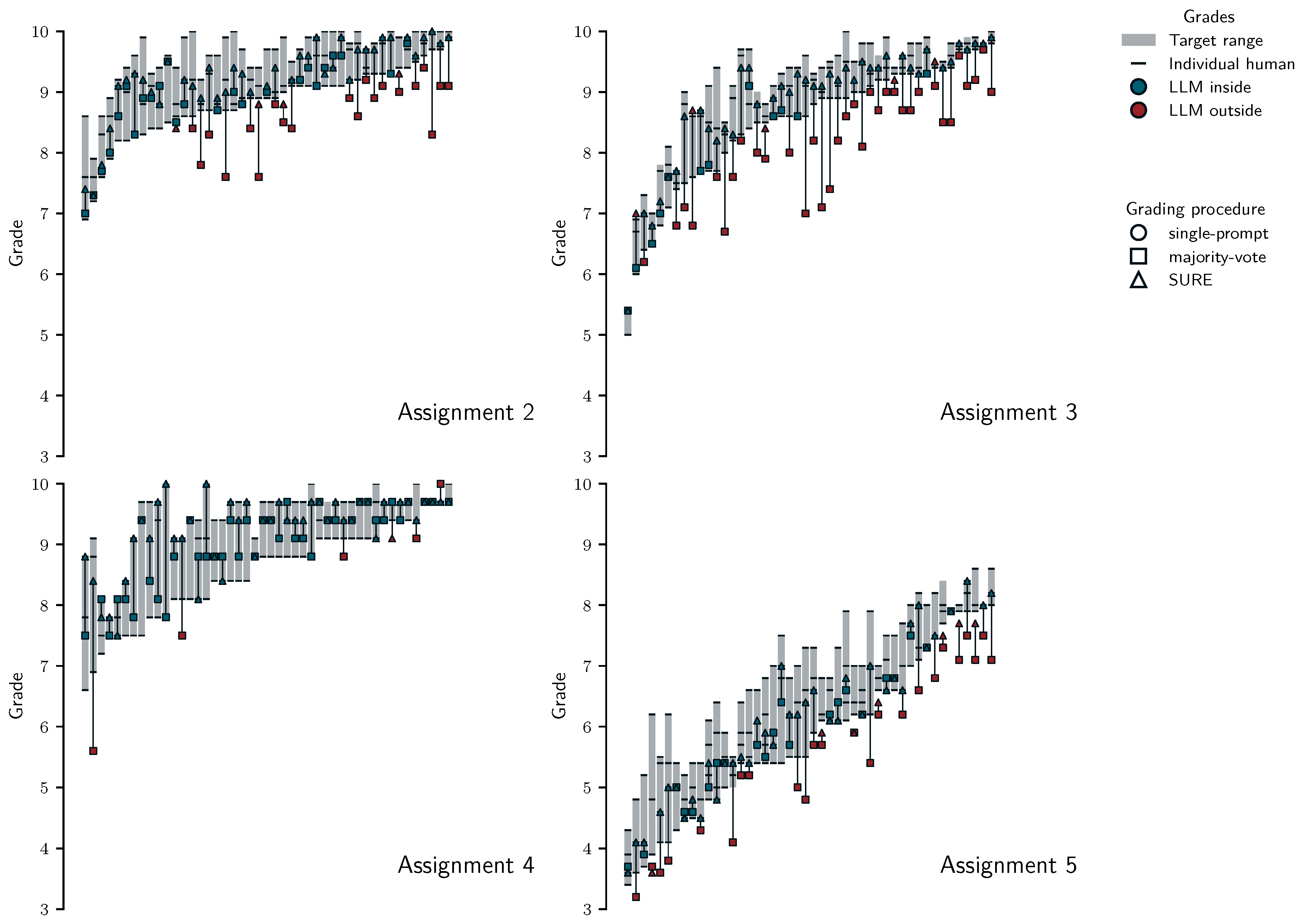

- If majority-voted grades were more closely aligned with the target ranges than single-prompt grades, this would support the claim that majority-voting improves fully automated grading (RQ1).

- If SURE grades were more closely aligned with the target ranges than fully automated grades (SP and MV), this would indicate a benefit of SURE (RQ2).

- By examining the proportion of SURE grades that fall inside the target ranges, together with the maximum and median deviations from the target range boundaries, we assess whether SURE may be suitable to replace manual grading (RQ4).
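The three alignment metrics can be computed as shown below. This is a minimal Python sketch. It assumes each student's target range is given as a (low, high) pair, for example the lowest and highest grade assigned by the human graders, and that deviation means the distance to the nearest range boundary. The range definition and the toy data are illustrative assumptions.

```python
import statistics


def range_deviation(grade, low, high):
    """Distance from a grade to the nearest boundary of its target range (0 if inside)."""
    if grade < low:
        return low - grade
    if grade > high:
        return grade - high
    return 0.0


def summarize_alignment(grades, target_ranges):
    """% of grades inside their target range, plus maximum and median deviation."""
    deviations = [range_deviation(g, lo, hi) for g, (lo, hi) in zip(grades, target_ranges)]
    pct_inside = 100 * sum(d == 0 for d in deviations) / len(deviations)
    return pct_inside, max(deviations), statistics.median(deviations)


# Toy example: three students, target range = (lowest, highest) human grade.
grades = [7.0, 8.5, 6.0]
target_ranges = [(6.5, 7.5), (7.0, 8.0), (6.0, 6.0)]
print(summarize_alignment(grades, target_ranges))
```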

2.7. Planned Analyses on the Test Set

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with text_verbosity set to medium.

- gpt-oss-20b with text_verbosity set to medium.

- ensemble based on the three selected LLM configurations.

2.8. Additional Exploratory Analyses

3. Results

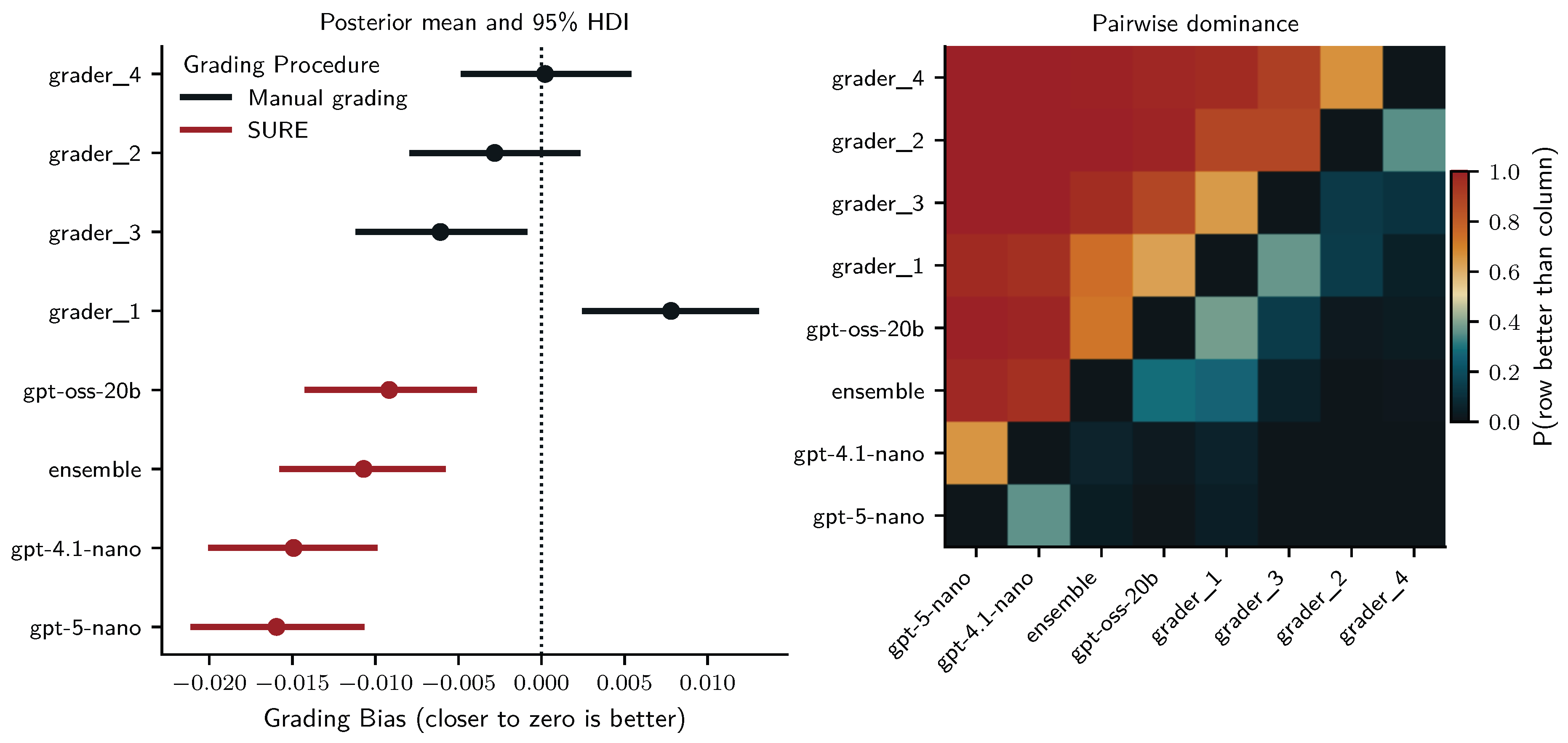

3.1. Interrater Reliability of Human Graders

3.2. Exploratory Findings on the Training Set

3.2.1. Descriptive Findings

3.2.2. Grading Procedures and Diversification Strategies

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with default "medium" text_verbosity.

- gpt-oss-20b with default "medium" text_verbosity.

- ensemble based on the three selected LLM configurations.

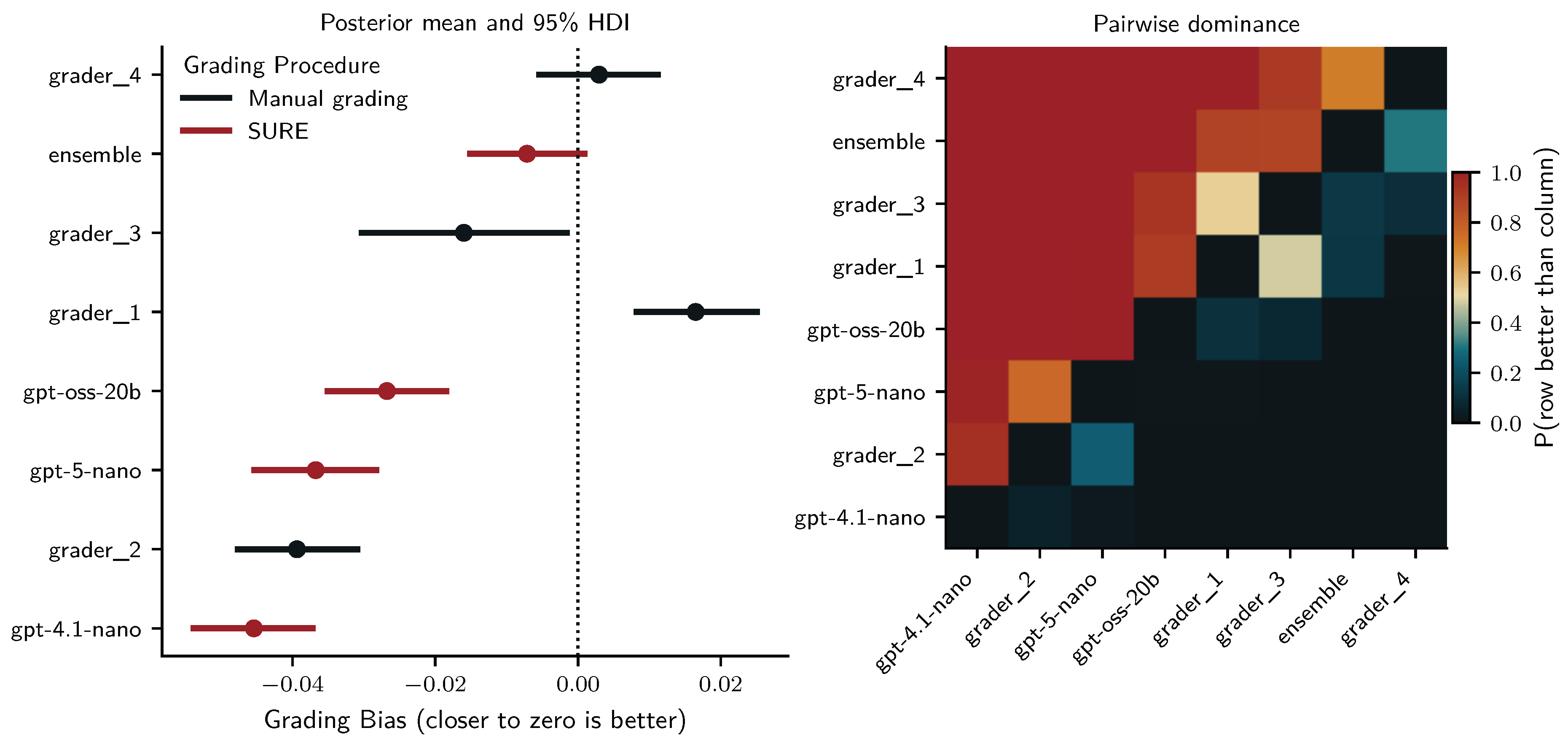

3.2.3. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

3.2.4. Summary of Training Set Results

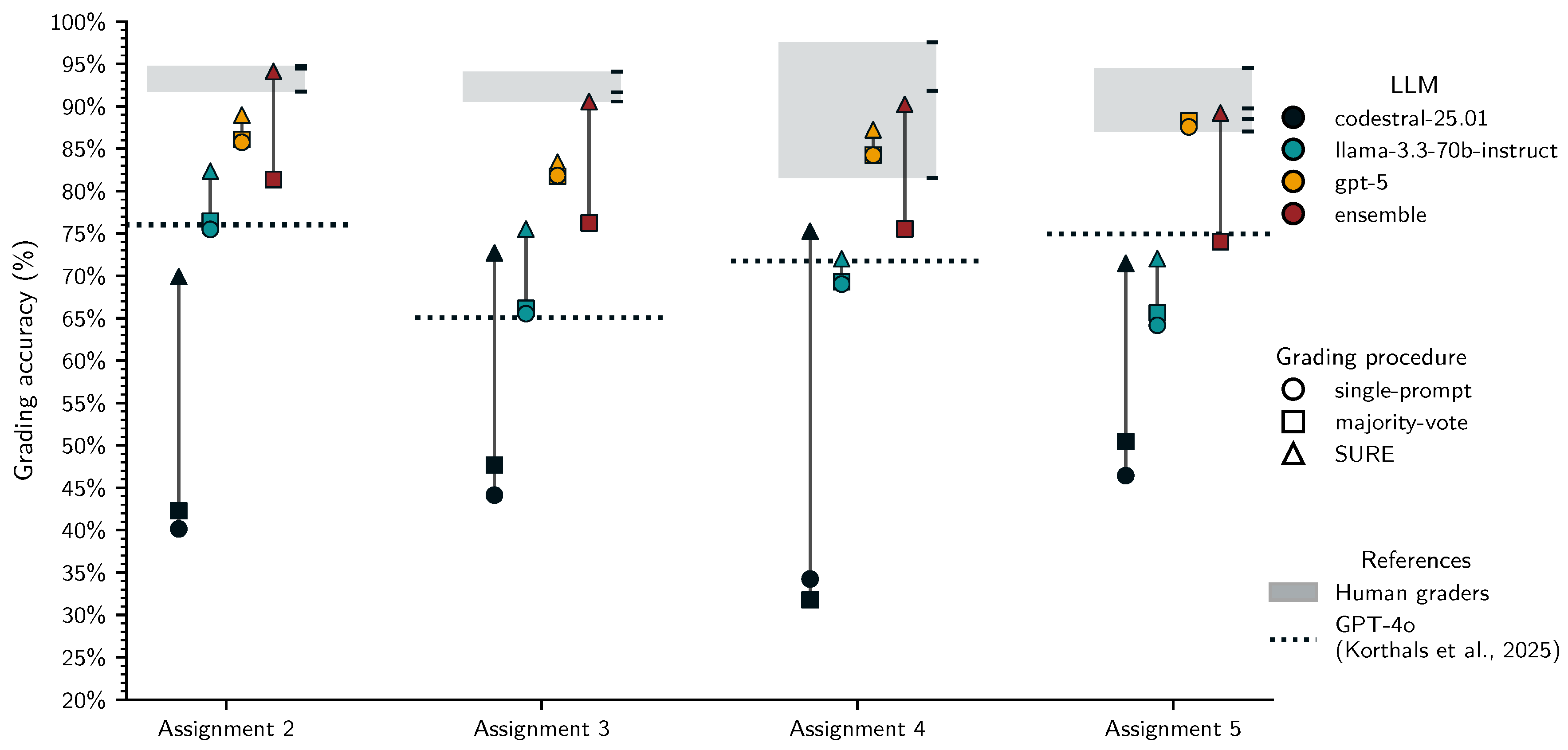

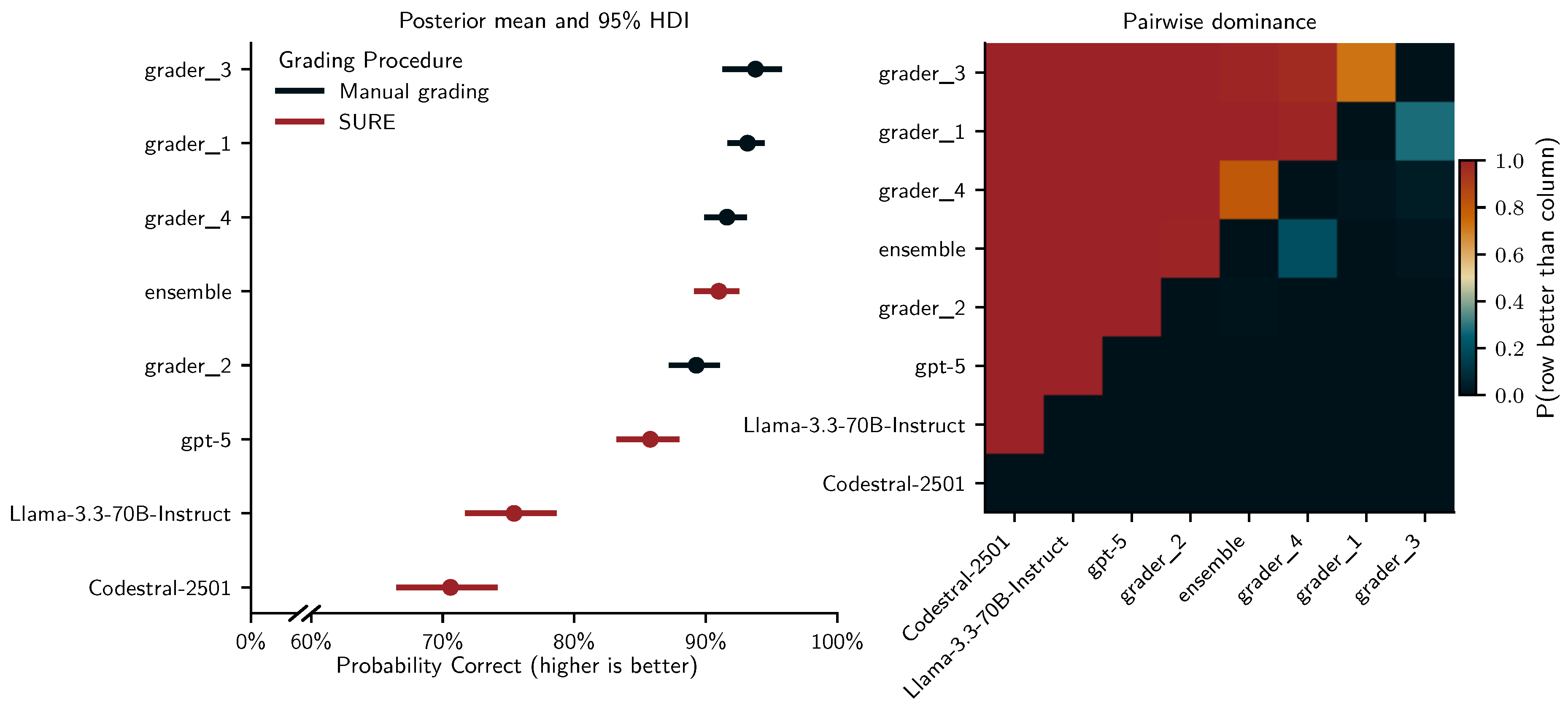

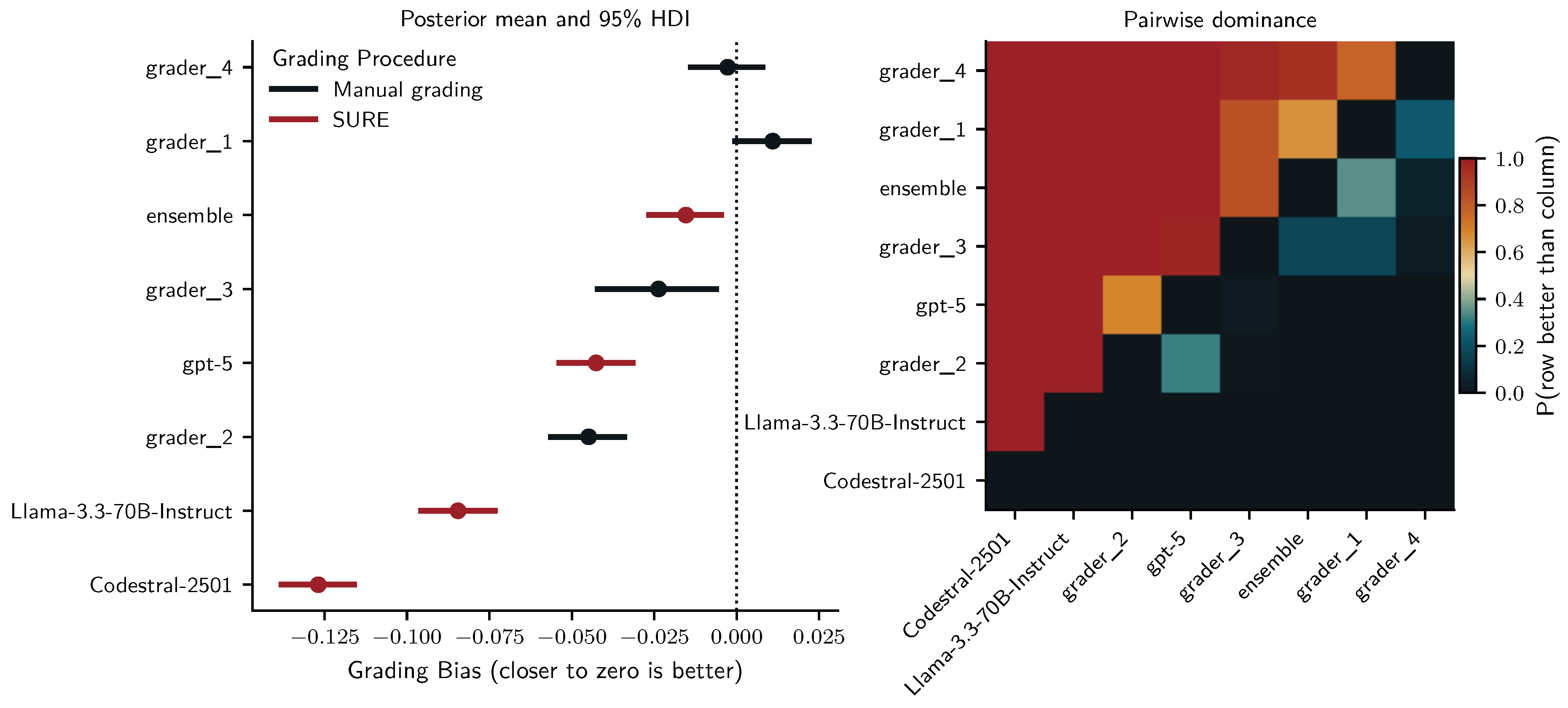

3.3. Test Set Validation

3.3.1. Descriptive Findings

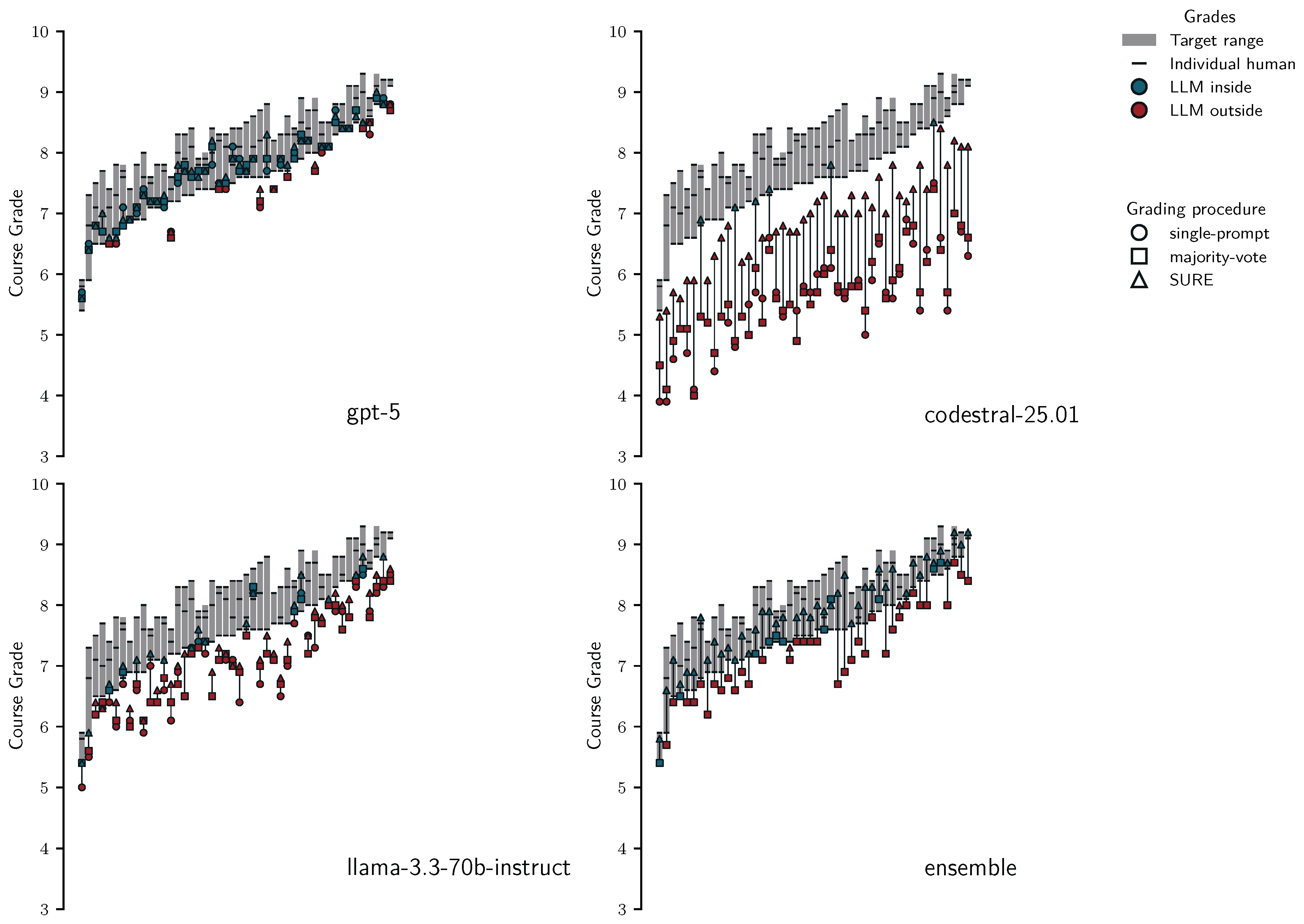

3.3.2. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

3.4. Additional Exploratory Analyses

| LLM | Grading Procedure | % in Target Range | Maximum Grade Deviation | Median Grade Deviation |
|---|---|---|---|---|
| Overall Course Grade | | | | |
| gpt-5 | SP | 73.913 | 0.5 | 0.1 |
| | MV | 78.261 | 0.6 | 0.1 |
| | SURE | 86.957 | 0.5 | 0.1 |
| codestral-25.01 | SP | 0 | 3.2 | 1.9 |
| | MV | 0 | 2.9 | 1.85 |
| | SURE | 13.043 | 1.0 | 0.6 |
| llama-3.3-70b-instruct | SP | 10.87 | 1.2 | 0.4 |
| | MV | 17.391 | 1 | 0.4 |
| | SURE | 39.13 | 0.9 | 0.2 |
| new ensemble | MV | 23.913 | 0.9 | 0.2 |
| | SURE | 95.652 | 0.3 | 0.1 |

Regrading (min) and time savings (%)

| Grader | Manual (min) | gpt-5 | codestral-25.01 | llama-3.3-70b-instruct | New Ensemble |
|---|---|---|---|---|---|
| Assignments 2-5 Cumulated | | | | | |
| Grader 1 | 748 | 52 (93%) | 372 (50%) | 111 (85%) | 432 (42%) |
| Grader 2 | 934 | 67 (93%) | 471 (50%) | 137 (85%) | 556 (40%) |
| Grader 3² | 298 | 12 (96%) | 51 (55%) | 51 (83%) | 181 (39%) |
| Grader 4 | 734 | 55 (93%) | 354 (52%) | 113 (85%) | 436 (41%) |
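The percentages in the regrading table match the reading savings = (manual - regrading) / manual, rounded to whole percent. For example, Grader 1 with gpt-5 gives (748 - 52) / 748 ≈ 93%. The short Python check below applies that formula to the Grader 1 row. The function name is hypothetical.

```python
def time_savings(manual_min, regrading_min):
    """Percent of manual grading time saved when only flagged answers are regraded."""
    return round(100 * (manual_min - regrading_min) / manual_min)


# Grader 1, assignments 2-5 cumulated (minutes).
manual = 748
regrading = {"gpt-5": 52, "codestral-25.01": 372, "llama-3.3-70b-instruct": 111, "New Ensemble": 432}
print({llm: time_savings(manual, minutes) for llm, minutes in regrading.items()})
```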

3.5. Summary of Results

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LLMs | Large language models |
| AI | Artificial intelligence |
| SURE | Selective Uncertainty-based Re-Evaluation |
| IRT | Item Response Theory |
| TP | True positive |
| FP | False positive |
| TN | True negative |
| FN | False negative |
| SP | Single-prompt |
| MV | Majority-voting |

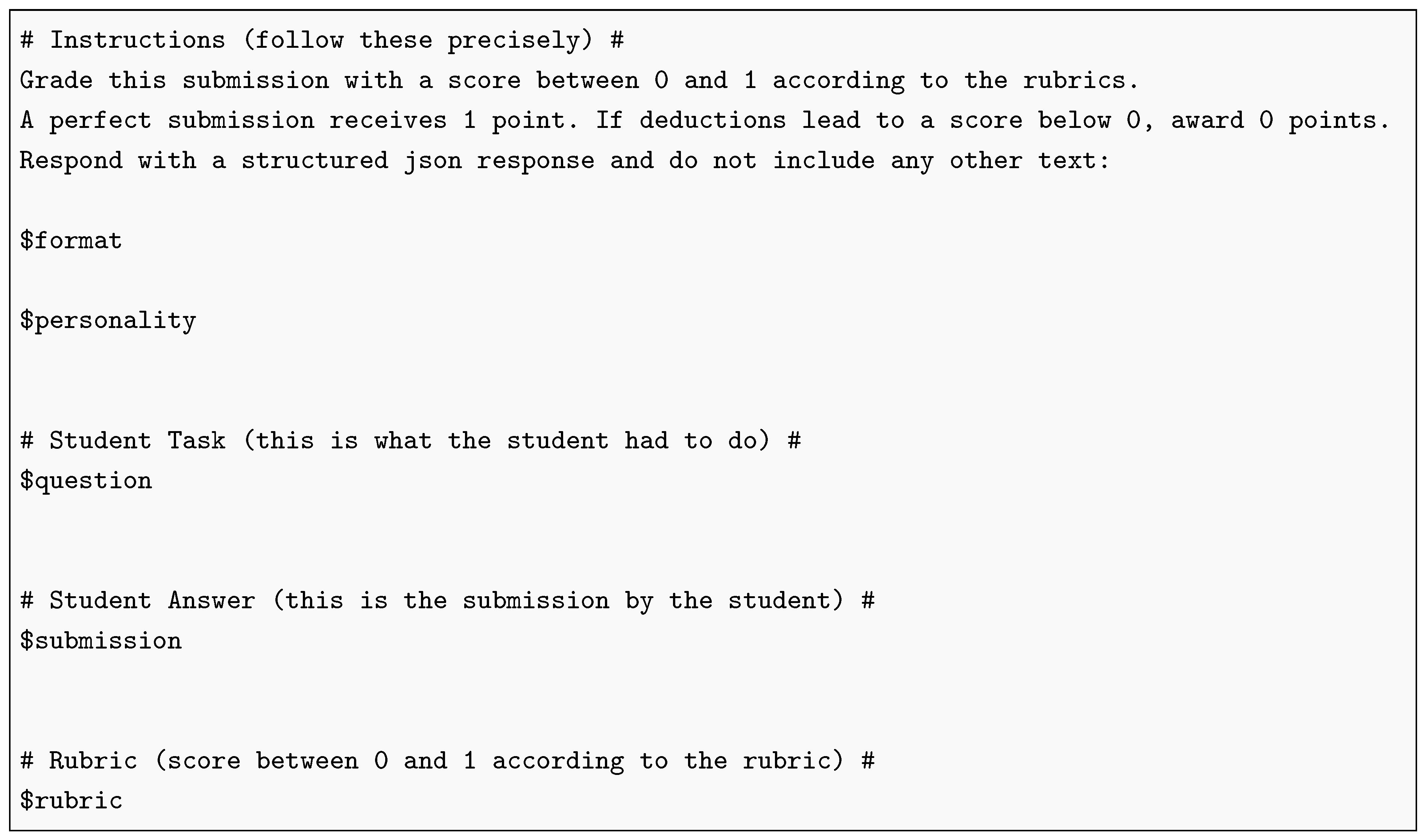

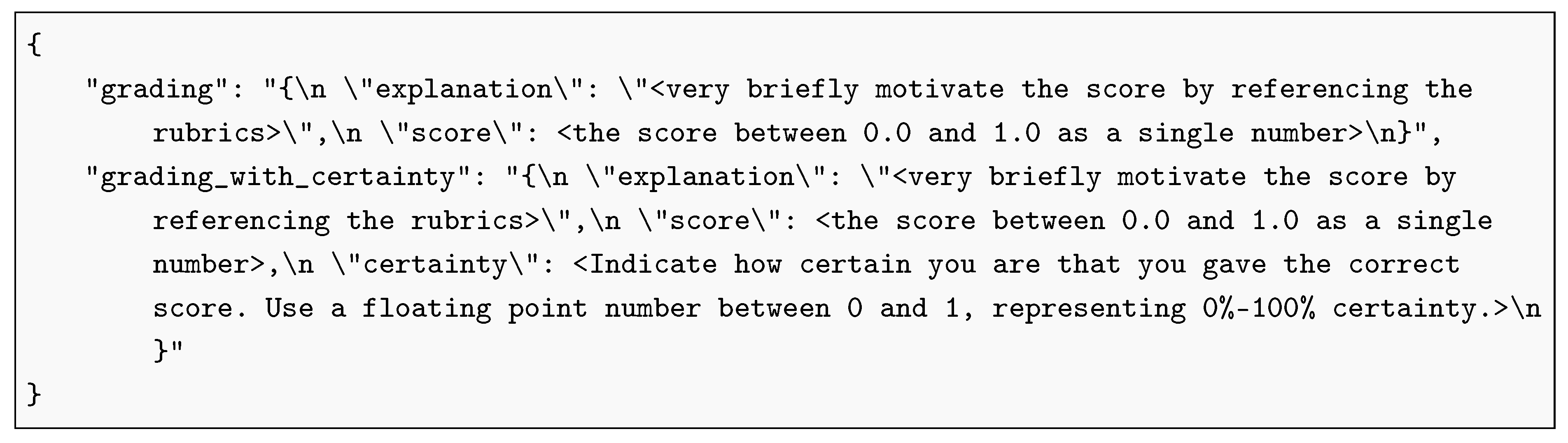

Appendix A. LLM Prompts

Listing 1: Base prompt for LLM grading

Listing 2: JSON response format used in the format placeholder

Listing 3: Grading personas used for the personality placeholder

References

- Korthals, L.; Rosenbusch, H.; Grasman, R.; Visser, I. Grading University Students with LLMs: Performance and Acceptance of a Canvas-Based Automation. In Proceedings of the Artificial Intelligence in Education. Posters and Late Breaking Results, Workshops and Tutorials, Industry and Innovation Tracks, Practitioners, Doctoral Consortium, Blue Sky, and WideAIED; Cristea, A.I., Walker, E., Lu, Y., Santos, O.C., Isotani, S., Eds.; Cham, 2025; pp. 36–43.

- Grévisse, C. LLM-based automatic short answer grading in undergraduate medical education. BMC Medical Education 2024, 24, 1060.

- Flodén, J. Grading exams using large language models: A comparison between human and AI grading of exams in higher education using ChatGPT. British Educational Research Journal 2025, 51, 201–224.

- Ishida, T.; Liu, T.; Wang, H.; Cheung, W.K. Large Language Models as Partners in Student Essay Evaluation. arXiv 2024, arXiv:2405.18632.

- Yavuz, F.; Çelik, O.; Yavaş Çelik, G. Utilizing large language models for EFL essay grading: An examination of reliability and validity in rubric-based assessments. British Journal of Educational Technology 2025, 56, 150–166. Available online: https://bera-journals.onlinelibrary.wiley.com/doi/pdf/10.1111/bjet.13494.

- Polat, M. Analysis of Multiple-Choice versus Open-Ended Questions in Language Tests According to Different Cognitive Domain Levels. In Novitas-ROYAL (Research on Youth and Language); Children’s Research Center-Turkey ERIC Number: EJ1272114, 2020; Volume 14, pp. 76–96.

- Schneider, J.; Schenk, B.; Niklaus, C. Towards LLM-based Autograding for Short Textual Answers. arXiv 2024, arXiv:2309.11508.

- Kortemeyer, G.; Nöhl, J. Assessing confidence in AI-assisted grading of physics exams through psychometrics: An exploratory study. In Physical Review Physics Education Research; American Physical Society, 2025; Volume 21, p. 010136.

- European Parliament and Council of the European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act) (Text with EEA relevance). Official Journal of the European Union 2024, 2024/1689.

- Bhandari, S.; Pardos, Z. Can Language Models Grade Algebra Worked Solutions? Evaluating LLM-Based Autograders Against Human Grading. In Proceedings of the 18th International Conference on Educational Data Mining, 2025; pp. 554–558.

- Chen, Z.; Wan, T. Grading Explanations of Problem-Solving Process and Generating Feedback Using Large Language Models at Human-Level Accuracy. Physical Review Physics Education Research 2025, 21, 010126.

- Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.; Chi, E.; Narang, S.; Chowdhery, A.; Zhou, D. Self-Consistency Improves Chain of Thought Reasoning in Language Models. arXiv 2023, arXiv:2203.11171.

- Portillo Wightman, G.; Delucia, A.; Dredze, M. Strength in Numbers: Estimating Confidence of Large Language Models by Prompt Agreement. In Proceedings of the 3rd Workshop on Trustworthy Natural Language Processing (TrustNLP 2023); Ovalle, A., Chang, K.W., Mehrabi, N., Pruksachatkun, Y., Galstyan, A., Dhamala, J., Verma, A., Cao, T., Kumar, A., Gupta, R., Eds.; Toronto, Canada, 2023; pp. 326–362.

- Kruse, M.; Afshar, M.; Khatwani, S.; Mayampurath, A.; Chen, G.; Gao, Y. Simple Yet Effective: An Information-Theoretic Approach to Multi-LLM Uncertainty Quantification. Proceedings of the Conference on Empirical Methods in Natural Language Processing. Conference on Empirical Methods in Natural Language Processing 2025, 2025, 30481–30492.

- Hamidieh, K.; Thost, V.; Gerych, W.; Yurochkin, M.; Ghassemi, M. Complementing Self-Consistency with Cross-Model Disagreement for Uncertainty Quantification. In Proceedings of the NeurIPS 2025 Workshop: Reliable ML from Unreliable Data, 2025.

- Horton, P.; Florea, A.; Stringfield, B. Conformal validation: A deferral policy using uncertainty quantification with a human-in-the-loop for model validation. Machine Learning with Applications 2025, 22, 100733.

- Strong, J.; Men, Q.; Noble, A. Trustworthy and Practical AI for Healthcare: A Guided Deferral System with Large Language Models. arXiv 2025, arXiv:2406.07212.

- Alves, J.V.; Leitão, D.; Jesus, S.; Sampaio, M.O.P.; Liébana, J.; Saleiro, P.; Figueiredo, M.A.T.; Bizarro, P. A benchmarking framework and dataset for learning to defer in human-AI decision-making. In Scientific Data; Nature Publishing Group, 2025; Volume 12, p. 506.

- OpenAI. How Should I Set the Temperature Parameter? Available online: https://platform.openai.com/docs/faq/how-should-i-set-the-temperature-parameter.

- Peeperkorn, M.; Kouwenhoven, T.; Brown, D.; Jordanous, A. Is Temperature the Creativity Parameter of Large Language Models? arXiv 2024, arXiv:2405.00492.

- Holtzman, A.; Buys, J.; Du, L.; Forbes, M.; Choi, Y. The Curious Case of Neural Text Degeneration. 2019.

- OpenAI. Using GPT-5. 2025. Available online: https://platform.openai.com.

- Lu, Y.; Bartolo, M.; Moore, A.; Riedel, S.; Stenetorp, P. Fantastically Ordered Prompts and Where to Find Them: Overcoming Few-Shot Prompt Order Sensitivity. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Muresan, S., Nakov, P., Villavicencio, A., Eds.; Dublin, Ireland, 2022; pp. 8086–8098.

- Wang, Q.; Pan, S.; Linzen, T.; Black, E. Multilingual Prompting for Improving LLM Generation Diversity. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing; Christodoulopoulos, C., Chakraborty, T., Rose, C., Peng, V., Eds.; Suzhou, China, 2025; pp. 6378–6400.

- Fröhling, L.; Demartini, G.; Assenmacher, D. Personas with Attitudes: Controlling LLMs for Diverse Data Annotation. In Proceedings of the 9th Workshop on Online Abuse and Harms (WOAH); Calabrese, A., de Kock, C., Nozza, D., Plaza-del Arco, F.M., Talat, Z., Vargas, F., Eds.; Vienna, Austria, 2025; pp. 468–481.

- Chen, J.; Mueller, J. Quantifying Uncertainty in Answers from any Language Model and Enhancing their Trustworthiness. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers); Ku, L.W., Martins, A., Srikumar, V., Eds.; Bangkok, Thailand, 2024; pp. 5186–5200.

- Hastie, T.; Tibshirani, R.; Friedman, J. Ensemble Learning. In The Elements of Statistical Learning: Data Mining, Inference, and Prediction; Hastie, T., Tibshirani, R., Friedman, J., Eds.; Springer: New York, NY, 2009; pp. 605–624.

- Tekin, S.F.; Ilhan, F.; Huang, T.; Hu, S.; Liu, L. LLM-TOPLA: Efficient LLM Ensemble by Maximising Diversity. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024; Al-Onaizan, Y., Bansal, M., Chen, Y.N., Eds.; Miami, Florida, USA, 2024; pp. 11951–11966.

- Wang, J.; Wang, J.; Athiwaratkun, B.; Zhang, C.; Zou, J. Mixture-of-Agents Enhances Large Language Model Capabilities. arXiv 2024, arXiv:2406.04692.

- Yang, H.; Li, M.; Zhou, H.; Xiao, Y.; Fang, Q.; Zhou, S.; Zhang, R. Large Language Model Synergy for Ensemble Learning in Medical Question Answering: Design and Evaluation Study. Journal of Medical Internet Research 2025, 27, e70080.

- Lewkowycz, A.; Andreassen, A.; Dohan, D.; Dyer, E.; Michalewski, H.; Ramasesh, V.; Slone, A.; Anil, C.; Schlag, I.; Gutman-Solo, T.; et al. Solving Quantitative Reasoning Problems with Language Models. In Proceedings of the Advances in Neural Information Processing Systems; Koyejo, S., Mohamed, S., Agarwal, A., Belgrave, D., Cho, K., Oh, A., Eds.; Curran Associates, Inc., 2022; Vol. 35, pp. 3843–3857.

- OpenAI; Hurst, A.; Lerer, A.; Goucher, A.P.; Perelman, A.; Ramesh, A.; et al. GPT-4o System Card, 2024.

- Valenti, S.; Neri, F.; Cucchiarelli, A. An Overview of Current Research on Automated Essay Grading. Journal of Information Technology Education: Research 2003, 2, 319–330.

- Dikli, S. An Overview of Automated Scoring of Essays. The Journal of Technology, Learning and Assessment 2006, 5.

- Ifenthaler, D. Automated Essay Scoring Systems. In Handbook of Open, Distance and Digital Education; Zawacki-Richter, O., Jung, I., Eds.; Springer Nature Singapore: Singapore, 2023; pp. 1057–1071.

- Page, E.B. The Use of the Computer in Analyzing Student Essays. International Review of Education 1968, 14, 210–225.

- Mohler, M.; Mihalcea, R. Text-to-Text Semantic Similarity for Automatic Short Answer Grading. In Proceedings of the 12th Conference of the European Chapter of the ACL (EACL 2009); Lascarides, A., Gardent, C., Nivre, J., Eds.; Athens, Greece, 2009; pp. 567–575.

- Dong, F.; Zhang, Y. Automatic Features for Essay Scoring – An Empirical Study. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, Austin, Texas, 2016; pp. 1072–1077.

- Taghipour, K.; Ng, H.T. A Neural Approach to Automated Essay Scoring. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, Austin, Texas, 2016; pp. 1882–1891.

- Uto, M. A Review of Deep-Neural Automated Essay Scoring Models. Behaviormetrika 2021, 48, 459–484.

- Foltz, P.; Laham, D.; Landauer, T. The Intelligent Essay Assessor: Applications to Educational Technology. Interactive Multimedia Electronic Journal of Computer-Enhanced Learning 1999.

- Leacock, C.; Chodorow, M. C-Rater: Automated Scoring of Short-Answer Questions. Computers and the Humanities 2003, 37, 389–405.

- Attali, Y.; Burstein, J. Automated Essay Scoring with E-Rater® V.2. The Journal of Technology, Learning and Assessment 2006, 4.

- Messer, M.; Brown, N.C.C.; Kölling, M.; Shi, M. Automated Grading and Feedback Tools for Programming Education: A Systematic Review. ACM Transactions on Computing Education 2024, 24, 1–43.

- Ihantola, P.; Ahoniemi, T.; Karavirta, V.; Seppälä, O. Review of Recent Systems for Automatic Assessment of Programming Assignments. In Proceedings of the 10th Koli Calling International Conference on Computing Education Research, Koli, Finland, 2010; pp. 86–93.

- Rodríguez, J.F.; Fernández-García, A.J.; Verdú, E. Grading Open-Ended Questions Using LLMS and RAG. Expert Systems 2026, 43, e70174.

- Jukiewicz, M. A Systematic Comparison of Large Language Models for Automated Assignment Assessment in Programming Education: Exploring the Importance of Architecture and Vendor. arXiv 2025, arXiv:2509.26483.

- Yewon, A.; Sang-Ki, L. The Impact of Prompt Engineering on GPT-4o’s Scoring Reliability in English Writing Assessment. 2025.

- Golchin, S.; Garuda, N.; Impey, C.; Wenger, M. Large Language Models As MOOCs Graders. 2024.

- Ferreira Mello, R.; Pereira Junior, C.; Rodrigues, L.; Pereira, F.D.; Cabral, L.; Costa, N.; Ramalho, G.; Gasevic, D. Automatic Short Answer Grading in the LLM Era: Does GPT-4 with Prompt Engineering Beat Traditional Models? In Proceedings of the 15th International Learning Analytics and Knowledge Conference, New York, NY, USA, 2025; LAK ’25, pp. 93–103.

- Qiu, H.; White, B.; Ding, A.; Costa, R.; Hachem, A.; Ding, W.; Chen, P. SteLLA: A Structured Grading System Using LLMs with RAG. arXiv 2025, arXiv:2501.09092.

- Latif, E.; Zhai, X. Fine-Tuning ChatGPT for Automatic Scoring. Computers and Education: Artificial Intelligence 2024, 6, 100210.

- Jonsson, A.; Svingby, G. The Use of Scoring Rubrics: Reliability, Validity and Educational Consequences. Educational Research Review 2007, 2, 130–144.

- Messer, M.; Brown, N.C.C.; Kölling, M.; Shi, M. How Consistent Are Humans When Grading Programming Assignments? ACM Transactions on Computing Education 2025, 25, 1–37.

- Team, R.C. R: A Language and Environment for Statistical Computing, 2022.

- Van Rossum, G.; Drake, F.L., Jr. Python reference manual; Centrum voor Wiskunde en Informatica Amsterdam, 1995.

- Hossain, S. Visualization of Bioinformatics Data with Dash Bio. SciPy 2019.

- Shrout, P.E.; Fleiss, J.L. Intraclass Correlations: Uses in Assessing Rater Reliability. 86, 420–428.

- Revelle, W. Psych: Procedures for Psychological, Psychometric, and Personality Research, 2025.

- Bates, D.; Mächler, M.; Bolker, B.; Walker, S. Fitting Linear Mixed-Effects Models Using Lme4. 67, 1–48.

- Simpson, E.H. Measurement of Diversity. Nature 1949, 163, 688–688.

- Akaike, H. A New Look at the Statistical Model Identification. IEEE Transactions on Automatic Control 1974, 19, 716–723.

- Schwarz, G. Estimating the Dimension of a Model. The Annals of Statistics 1978, 6, 461–464.

- OpenAI. GPT-4.1 Nano - OpenAI API. Available online: https://platform.openai.com/docs/models/gpt-4.1-nano.

- OpenAI. GPT-5 Nano - OpenAI API. Available online: https://platform.openai.com/docs/models/gpt-5-nano.

- OpenAI. Gpt-Oss-20b - OpenAI API. Available online: https://platform.openai.com/docs/models/gpt-oss-20b.

- Microsoft. Azure Machine Learning - ML as a Service | Microsoft Azure. Available online: https://azure.microsoft.com/en-us/products/machine-learning.

- DeepL. DeepL Translate: The World’s Most Accurate Translator. Available online: https://www.deepl.com/translator.

- Capretto, T.; Piho, C.; Kumar, R.; Westfall, J.; Yarkoni, T.; Martin, O.A. Bambi: A simple interface for fitting Bayesian linear models in Python. arXiv 2022, arXiv:2012.10754.

- Westfall, J. Statistical details of the default priors in the Bambi library. arXiv 2017, arXiv:1702.01201.

- OpenAI. Introducing GPT-5. 2025. Available online: https://openai.com/index/introducing-gpt-5/.

- Grattafiori, A.; Dubey, A.; Jauhri, A.; Pandey, A.; Kadian, A.; Al-Dahle, A.; Letman, A.; Mathur, A.; Schelten, A.; Vaughan, A.; et al. The Llama 3 Herd of Models. arXiv 2024, arXiv:2407.21783.

- Mistral AI Team. Codestral 25.01 | Mistral AI. 2025. Available online: https://mistral.ai/news/codestral-2501?utm_source=chatgpt.com.

- Universiteit van Amsterdam. UvA AI Chat. 2025. Available online: https://www.uva.nl/over-de-uva/over-de-universiteit/ai/ai-in-het-onderwijs/uva-ai-chat/uva-ai-chat.html.

- Wang, Y.; Huang, J.; Du, L.; Guo, Y.; Liu, Y.; Wang, R. Evaluating large language models as raters in large-scale writing assessments: A psychometric framework for reliability and validity. Computers and Education: Artificial Intelligence 2025, 9, 100481.

- Johnson, M.; Zhang, M. Examining the responsible use of zero-shot AI approaches to scoring essays. In Scientific Reports; Nature Publishing Group, 2024; Volume 14, p. 30064.

- Cohn, C.; S, A.T.; Mohammed, N.; Biswas, G. CoTAL: Human-in-the-Loop Prompt Engineering for Generalizable Formative Assessment Scoring. 2025.

- Armfield, D.; Chen, E.; Omonkulov, A.; Tang, X.; Lin, J.; Thiessen, E.; Koedinger, K. Avalon: A Human-in-the-Loop LLM Grading System with Instructor Calibration and Student Self-assessment. In Proceedings of the Artificial Intelligence in Education. Posters and Late Breaking Results, Workshops and Tutorials, Industry and Innovation Tracks, Practitioners, Doctoral Consortium, Blue Sky, and WideAIED; Cristea, A.I., Walker, E., Lu, Y., Santos, O.C., Isotani, S., Eds.; Cham, 2025; pp. 111–118.

- Rodrigues, L.; Xavier, C.; Costa, N.; Gasevic, D.; Mello, R.F. Is GPT-4 Fair? An Empirical Analysis in Automatic Short Answer Grading. Computers and Education: Artificial Intelligence 2025, 8, 100428.

- An, J.; Huang, D.; Lin, C.; Tai, M. Measuring Gender and Racial Biases in Large Language Models: Intersectional Evidence from Automated Resume Evaluation. PNAS Nexus 2025, 4, pgaf089.

- Hattie, J.; Timperley, H. The Power of Feedback. Review of Educational Research 2007, 77, 81–112.

- Morris, R.; Perry, T.; Wardle, L. Formative Assessment and Feedback for Learning in Higher Education: A Systematic Review. Review of Education 2021, 9, e3292.

- Dai, W.; Lin, J.; Jin, H.; Li, T.; Tsai, Y.S.; Gašević, D.; Chen, G. Can Large Language Models Provide Feedback to Students? A Case Study on ChatGPT. In Proceedings of the 2023 IEEE International Conference on Advanced Learning Technologies (ICALT), 2023; pp. 323–325.

- Jia, Q.; Cui, J.; Du, H.; Rashid, P.; Xi, R.; Li, R.; Gehringer, E. LLM-generated Feedback in Real Classes and Beyond: Perspectives from Students and Instructors. In Proceedings of the 17th International Conference on Educational Data Mining, 2024; pp. 862–867.

- Meyer, J.; Jansen, T.; Schiller, R.; Liebenow, L.W.; Steinbach, M.; Horbach, A.; Fleckenstein, J. Using LLMs to Bring Evidence-Based Feedback into the Classroom: AI-generated Feedback Increases Secondary Students’ Text Revision, Motivation, and Positive Emotions. Computers and Education: Artificial Intelligence 2024, 6, 100199.

- Nieminen, J.H.; Boud, D. Student Self-Assessment: A Meta-Review of Five Decades of Research. Assessment in Education: Principles, Policy & Practice 2025, 32, 127–151.

- Canvas. Available online: https://www.instructure.com/canvas.

| LLM | temperature | top_p | text verbosity | shuffled rubrics | varied personas | varied languages | n conditions |
|---|---|---|---|---|---|---|---|
| Prompting conditions | | | | | | | |
| gpt-4.1-nano | 0 / 1 | 0.1 / 1 | - | no / yes | no / yes | no / yes | 24 |
| gpt-5-nano | - | - | low / medium | no / yes | no / yes | no / yes | 16 |
| gpt-oss-20b | - | - | medium | no / yes | no / yes | no / yes | 8 |
| Post-hoc conditions | | | | | | | |
| ensemble | 1 (gpt-4.1-nano) | 1 (gpt-4.1-nano) | medium (gpt-5-nano & gpt-oss-20b) | no / yes | no / yes | no / yes | 8 |
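The condition counts in this table follow from fully crossing the three binary prompt perturbations (2 × 2 × 2 = 8) with each model's parameter settings. The 24 gpt-4.1-nano conditions imply three temperature/top_p combinations (24 / 8). This fits the coefficient label topp(llm=gpt-4.1-nano; temp=1), which nests top_p within temperature 1. The sketch below enumerates the design in Python. It assumes that top_p is 1 at temperature 0, and the dictionary layout is hypothetical.

```python
from collections import Counter
from itertools import product

# Three binary prompt perturbations: shuffled rubrics, varied personas, varied languages.
PERTURBATIONS = list(product([False, True], repeat=3))

# Model-specific settings; assumption: top_p stays at 1 when temperature is 0.
SETTINGS = {
    "gpt-4.1-nano": [
        {"temperature": 0, "top_p": 1},
        {"temperature": 1, "top_p": 0.1},
        {"temperature": 1, "top_p": 1},
    ],
    "gpt-5-nano": [{"text_verbosity": "low"}, {"text_verbosity": "medium"}],
    "gpt-oss-20b": [{"text_verbosity": "medium"}],
    # Post-hoc ensemble built from already collected outputs (no new prompting).
    "ensemble": [{"members": ("gpt-4.1-nano, temperature=1, top_p=1",
                              "gpt-5-nano, text_verbosity=medium",
                              "gpt-oss-20b, text_verbosity=medium")}],
}

conditions = [
    {"llm": llm, **params, "shuffle_rubrics": shuf, "personas": pers, "languages": lang}
    for llm, settings in SETTINGS.items()
    for params in settings
    for shuf, pers, lang in PERTURBATIONS
]
per_llm = Counter(c["llm"] for c in conditions)
print(dict(per_llm), len(conditions))
```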

| student | question | condition | procedure | correct | error |
|---|---|---|---|---|---|
| 1 | #R23 | 1 | SP | 0 | -0.5 |
| 1 | #R23 | 1 | MV | 0 | 0.25 |
| 1 | #R23 | 1 | SURE | 1 | 0 |
| 2000 | #R23 | 1 | MV | 0 | 0.25 |
| 2000 | #R23 | 1 | SURE | 1 | 0 |

| condition | llm | temp | topp | verb | shuf | pers | lang |
|---|---|---|---|---|---|---|---|
| 1 | gpt-4.1-nano | 0 | 1 | 0 | 0 | 0 | 0 |
| 2 | gpt-4.1-nano | 1 | 1 | 0 | 0 | 0 | 0 |
| 3 | gpt-4.1-nano | 1 | 1 | 0 | 1 | 0 | 0 |
| 2000 | ensemble | 1 | 1 | 1 | 0 | 0 | 0 |

| student | question | grader | correct | error |
|---|---|---|---|---|
| 1 | #R23 | grader-1 | 0 | 0.25 |
| 1 | #R23 | grader-2 | 0 | -0.5 |
| 1 | #R23 | grader-3 | 1 | 0 |
| 1 | #R23 | grader-4 | 1 | 0 |
| 1 | #R23 | gpt-4.1-nano | 0 | -0.75 |
| 1 | #R23 | gpt-5-nano | 0 | 0.25 |
| 1 | #R23 | gpt-oss-20b | 1 | 0 |
| 1 | #R23 | ensemble | 1 | 0 |

| Coefficient | Mean | 2.5% HDI | 97.5% HDI |
|---|---|---|---|
| HDI excludes zero | | | |
| Intercept | 1.601 | 1.199 | 1.980 |
| procedure[single-prompt] | -0.230 | -0.300 | -0.161 |
| procedure[SURE] | 1.311 | 1.219 | 1.400 |
| llm[gpt-5-nano] | 1.437 | 1.319 | 1.557 |
| llm[gpt-oss-20b] | 1.958 | 1.821 | 2.100 |
| llm[ensemble] | 1.989 | 1.830 | 2.147 |
| topp(llm=gpt-4.1-nano; temp=1) | 0.176 | 0.075 | 0.275 |
| languages | -0.080 | -0.167 | -0.002 |
| procedure[SURE] : llm[ensemble] | -0.742 | -0.880 | -0.601 |
| procedure[SURE] : llm[gpt-5-nano] | -0.632 | -0.751 | -0.513 |
| procedure[SURE] : llm[gpt-oss-20b] | -0.686 | -0.831 | -0.542 |
| topp(llm=gpt-4.1-nano; temp=1) : procedure[single-prompt] | -0.158 | -0.233 | -0.082 |
| languages : procedure[single-prompt] | -0.527 | -0.580 | -0.475 |
| languages : llm[gpt-5-nano] | 0.295 | 0.192 | 0.397 |
| languages : llm[gpt-oss-20b] | 0.283 | 0.160 | 0.397 |
| languages : shuffle_rubrics | -0.082 | -0.140 | -0.024 |
| languages : topp(llm=gpt-4.1-nano; temp=1) | -0.169 | -0.260 | -0.073 |
| 1\|student_sigma | 0.355 | 0.282 | 0.435 |
| 1\|question_sigma | 1.253 | 1.004 | 1.531 |
| HDI includes zero | | | |
| temp(llm=gpt-4.1-nano) | -0.002 | -0.100 | 0.099 |
| verb(llm=gpt-5-nano) | 0.067 | -0.076 | 0.195 |
| shuffle_rubrics | 0.067 | -0.013 | 0.152 |
| personalities | -0.003 | -0.086 | 0.077 |
| procedure[single-prompt] : llm[ensemble-3.5] | -0.018 | -1.987 | 1.846 |
| procedure[single-prompt] : llm[gpt-5-nano] | -0.098 | -0.189 | 0.004 |
| procedure[single-prompt] : llm[gpt-oss-20b] | 0.111 | -0.002 | 0.230 |
| temp(llm=gpt-4.1-nano) : procedure[single-prompt] | -0.003 | -0.072 | 0.075 |
| temp(llm=gpt-4.1-nano) : procedure[SURE] | 0.010 | -0.078 | 0.113 |
| temp(llm=gpt-4.1-nano) : shuffle_rubrics | -0.034 | -0.125 | 0.060 |
| temp(llm=gpt-4.1-nano) : personalities | 0.011 | -0.085 | 0.101 |
| temp(llm=gpt-4.1-nano) : languages | 0.021 | -0.076 | 0.111 |
| topp(llm=gpt-4.1-nano; temp=1) : procedure[SURE] | 0.035 | -0.065 | 0.130 |
| topp(llm=gpt-4.1-nano; temp=1) : shuffle_rubrics | -0.030 | -0.125 | 0.063 |
| topp(llm=gpt-4.1-nano; temp=1) : personalities | 0.000 | -0.099 | 0.088 |
| verb(llm=gpt-5-nano) : procedure[single-prompt] | -0.048 | -0.161 | 0.070 |
| verb(llm=gpt-5-nano) : procedure[SURE] | -0.035 | -0.175 | 0.112 |
| verb(llm=gpt-5-nano) : shuffle_rubrics | -0.081 | -0.196 | 0.037 |
| verb(llm=gpt-5-nano) : personalities | -0.062 | -0.173 | 0.062 |
| verb(llm=gpt-5-nano) : languages | 0.047 | -0.065 | 0.164 |
| shuffle_rubrics : procedure[SURE] | 0.032 | -0.032 | 0.099 |
| shuffle_rubrics : procedure[single-prompt] | -0.014 | -0.065 | 0.036 |
| shuffle_rubrics : llm[ensemble-3.5] | -0.127 | -0.269 | 0.022 |
| shuffle_rubrics : llm[gpt-5-nano] | 0.004 | -0.099 | 0.121 |
| shuffle_rubrics : llm[gpt-oss-20b] | -0.030 | -0.153 | 0.092 |
| shuffle_rubrics : personalities | -0.007 | -0.062 | 0.051 |
| personalities : procedure[SURE] | -0.009 | -0.077 | 0.053 |
| personalities : procedure[single-prompt] | 0.002 | -0.053 | 0.053 |
| personalities : llm[ensemble-3.5] | 0.123 | -0.023 | 0.260 |
| personalities : llm[gpt-5-nano] | 0.015 | -0.093 | 0.121 |
| personalities : llm[gpt-oss-20b] | 0.064 | -0.059 | 0.181 |
| personalities : languages | -0.023 | -0.080 | 0.037 |
| languages : procedure[SURE] | -0.036 | -0.106 | 0.022 |
| languages : llm[ensemble-3.5] | 0.101 | -0.046 | 0.240 |
| 1\|condition_sigma | 0.027 | 0.000 | 0.052 |

| LLM | Grading Procedure | % in Target Range | Maximum Grade Deviation | Median Grade Deviation |
|---|---|---|---|---|
| Assignment 1 | | | | |
| gpt-4.1-nano | SP | 6.522 | 1.9 | 0.85 |
| | MV | 8.696 | 1.2 | 0.5 |
| | SURE | 60.870 | 0.4 | 0.1 |
| gpt-5-nano | SP | 19.565 | 1.1 | 0.40 |
| | MV | 47.826 | 0.7 | 0.1 |
| | SURE | 60.870 | 0.5 | 0.1 |
| gpt-oss-20b | SP | 54.348 | 0.7 | 0.1 |
| | MV | 52.174 | 0.6 | 0.1 |
| | SURE | 73.913 | 0.3 | 0.0 |
| ensemble | MV | 45.652 | 0.7 | 0.1 |
| | SURE | 73.913 | 0.4 | 0.0 |
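For each LLM and grading procedure, these three columns summarize how far the resulting grades deviate from the reference grades. The sketch below shows one way to compute such summaries from paired grade lists. It assumes that a grade counts as "in target range" when its absolute deviation from the human grade does not exceed a tolerance. The helper `grade_metrics` and its `tolerance` parameter are hypothetical; the actual target range is defined elsewhere in the paper. With an even number of students, the median averages the two middle deviations. This is how medians such as 0.85 can arise even when individual deviations are multiples of 0.1.

```python
from statistics import median


def grade_metrics(llm_grades, human_grades, tolerance):
    """Summarize deviations between automatic grades and human (reference) grades.

    Hypothetical helper: `tolerance` stands in for the target range, i.e. a
    grade is "in range" if |llm - human| <= tolerance.
    """
    if not llm_grades or len(llm_grades) != len(human_grades):
        raise ValueError("expected two non-empty lists of equal length")
    # Round to strip binary float noise (e.g. 4.5 - 4.3 is slightly above 0.2).
    deviations = [round(abs(a - b), 6) for a, b in zip(llm_grades, human_grades)]
    in_range = sum(d <= tolerance for d in deviations)
    return {
        "pct_in_target_range": 100 * in_range / len(deviations),
        "max_deviation": max(deviations),
        # For an even count, median() averages the two middle values.
        "median_deviation": median(deviations),
    }


# Toy data (not from the study): four students, tolerance 0.25
metrics = grade_metrics([5.0, 4.3, 3.7, 2.0], [5.0, 4.5, 3.4, 2.1], tolerance=0.25)
print(metrics["pct_in_target_range"], metrics["max_deviation"])  # 75.0 0.3
```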

Regrading (min) and time savings (%)

| Grader | Manual (min) | gpt-4.1-nano | gpt-5-nano | gpt-oss-20b | Ensemble |
|---|---|---|---|---|---|
| Assignment 1 | | | | | |
| Grader 1 | 186 | 85 (54%) | 22 (88%) | 19 (90%) | 22 (88%) |
| Grader 2 | 195 | 95 (51%) | 25 (87%) | 24 (88%) | 26 (87%) |
| Grader 3 | 399 | 203 (49%) | 56 (87%) | 57 (86%) | 68 (83%) |
| Grader 4 | 238 | 115 (52%) | 30 (87%) | 33 (86%) | 38 (84%) |
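The percentages in parentheses appear to express the regrading time as a saving relative to fully manual grading, that is, savings = 100 × (1 − regrading / manual). The sketch below shows that arithmetic under this assumed formula. The helper name `time_savings` is hypothetical.

```python
def time_savings(manual_min: float, regrading_min: float) -> float:
    """Percent of manual grading time saved when a grader only regrades flagged answers."""
    if manual_min <= 0:
        raise ValueError("manual grading time must be positive")
    return 100 * (1 - regrading_min / manual_min)


# Grader 1, Assignment 1: 186 min fully manual vs. 85 min regrading with gpt-4.1-nano
print(round(time_savings(186, 85)))  # 54
```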

| LLM | Grading Procedure | % in Target Range | Maximum Grade Deviation | Median Grade Deviation |
|---|---|---|---|---|
| Assignment 2 | | | | |
| gpt-4.1-nano | SP | 4.444 | 2.8 | 1.1 |
| | MV | 8.889 | 2.8 | 1.0 |
| | SURE | 42.222 | 1.2 | 0.2 |
| gpt-5-nano | SP | 33.333 | 1.8 | 0.3 |
| | MV | 46.667 | 1.7 | 0.3 |
| | SURE | 73.333 | 1.2 | 0.1 |
| gpt-oss-20b | SP | 55.556 | 1.1 | 0.2 |
| | MV | 57.778 | 1.2 | 0.2 |
| | SURE | 75.556 | 1.0 | 0.1 |
| ensemble | MV | 55.556 | 1.4 | 0.2 |
| | SURE | 91.111 | 0.4 | 0.1 |
| Assignment 3 | | | | |
| gpt-4.1-nano | SP | 4.348 | 2.8 | 0.85 |
| | MV | 15.217 | 2.4 | 0.7 |
| | SURE | 71.739 | 0.6 | 0.1 |
| gpt-5-nano | SP | 8.696 | 2.7 | 0.8 |
| | MV | 13.043 | 1.8 | 0.4 |
| | SURE | 50.000 | 1.2 | 0.2 |
| gpt-oss-20b | SP | 26.087 | 1.5 | 0.3 |
| | MV | 32.609 | 1.7 | 0.3 |
| | SURE | 52.174 | 1.4 | 0.1 |
| ensemble | MV | 26.087 | 1.8 | 0.3 |
| | SURE | 89.130 | 0.3 | 0.1 |
| Assignment 4 | | | | |
| gpt-4.1-nano | SP | 4.348 | 3.1 | 1.55 |
| | MV | 2.174 | 2.5 | 1.3 |
| | SURE | 19.565 | 1.6 | 0.6 |
| gpt-5-nano | SP | 19.565 | 4.4 | 0.8 |
| | MV | 34.783 | 3.8 | 0.3 |
| | SURE | 73.913 | 1.9 | 0.0 |
| gpt-oss-20b | SP | 54.348 | 3.5 | 0.3 |
| | MV | 47.826 | 3.2 | 0.3 |
| | SURE | 60.870 | 1.3 | 0.0 |
| ensemble | MV | 54.348 | 3.2 | 0.3 |
| | SURE | 91.304 | 0.4 | 0.0 |
| Assignment 5 | | | | |
| gpt-4.1-nano | SP | 21.739 | 2.1 | 0.65 |
| | MV | 10.870 | 2.3 | 0.65 |
| | SURE | 54.348 | 0.9 | 0.2 |
| gpt-5-nano | SP | 32.609 | 2.6 | 0.45 |
| | MV | 47.826 | 1.2 | 0.2 |
| | SURE | 69.565 | 0.9 | 0.1 |
| gpt-oss-20b | SP | 58.696 | 1.5 | 0.2 |
| | MV | 76.087 | 0.8 | 0.1 |
| | SURE | 80.435 | 0.4 | 0.0 |
| ensemble | MV | 47.826 | 0.9 | 0.2 |
| | SURE | 84.783 | 0.4 | 0.0 |
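To compare procedures within an assignment, one can compute, for each LLM, the percentage-point difference between SURE and the better of the other procedures (SP or MV). The sketch below does this for Assignment 2, using "% in Target Range" values copied from the table. The helper `sure_gain` is hypothetical. SURE includes simulated human regrading of flagged answers, so this gain comes at the cost of the regrading time reported for each grader.

```python
# "% in Target Range" for Assignment 2, copied from the table above
ASSIGNMENT_2 = {
    "gpt-4.1-nano": {"SP": 4.444, "MV": 8.889, "SURE": 42.222},
    "gpt-5-nano": {"SP": 33.333, "MV": 46.667, "SURE": 73.333},
    "gpt-oss-20b": {"SP": 55.556, "MV": 57.778, "SURE": 75.556},
    "ensemble": {"MV": 55.556, "SURE": 91.111},  # the table has no SP row for the ensemble
}


def sure_gain(procedures: dict) -> float:
    """Percentage-point gain of SURE over the best non-SURE procedure for one LLM."""
    baseline = max(value for name, value in procedures.items() if name != "SURE")
    return round(procedures["SURE"] - baseline, 3)


for llm, procedures in ASSIGNMENT_2.items():
    print(f"{llm}: +{sure_gain(procedures)} pp")
```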

Regrading (min) and time savings (%)

| Grader | Manual (min) | gpt-4.1-nano | gpt-5-nano | gpt-oss-20b | Ensemble |
|---|---|---|---|---|---|
| Assignment 2 | | | | | |
| Grader 1 | 137 | 59 (57%) | 34 (75%) | 22 (84%) | 62 (55%) |
| Grader 2 | 224 | 102 (54%) | 50 (78%) | 30 (87%) | 95 (58%) |
| Grader 4 | 194 | 93 (52%) | 39 (80%) | 27 (86%) | 82 (58%) |
| Assignment 3 | | | | | |
| Grader 1 | 323 | 168 (48%) | 70 (78%) | 51 (84%) | 163 (50%) |
| Grader 2 | 380 | 214 (44%) | 91 (76%) | 67 (82%) | 201 (47%) |
| Grader 4 | 253 | 147 (42%) | 67 (74%) | 49 (81%) | 142 (44%) |
| Assignment 4 | | | | | |
| Grader 1 | 125 | 57 (54%) | 66 (47%) | 27 (78%) | 89 (29%) |
| Grader 2 | 145 | 65 (55%) | 87 (40%) | 30 (79%) | 107 (26%) |
| Grader 4 | 94 | 39 (59%) | 50 (47%) | 21 (78%) | 69 (27%) |
| Assignment 5 | | | | | |
| Grader 1 | 162 | 91 (44%) | 44 (73%) | 31 (81%) | 89 (45%) |
| Grader 2 | 185 | 96 (48%) | 58 (69%) | 37 (80%) | 99 (46%) |
| Grader 3 | 294 | 160 (46%) | 89 (70%) | 51 (83%) | 161 (45%) |
| Grader 4 | 192 | 99 (48%) | 55 (71%) | 36 (81%) | 105 (45%) |
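Per-grader percentages can also be pooled into a single figure per assignment and LLM. Summing minutes before dividing weights each grader by their manual grading time, rather than averaging the percentages. The sketch below assumes the same savings formula that the per-grader percentages appear to follow. The helper `pooled_savings` is hypothetical, and the pooled figure is an illustration rather than a value taken from the paper.

```python
def pooled_savings(manual_min, regrading_min):
    """Percent of total manual time saved across several graders.

    Summing minutes first weights each grader by their manual grading time.
    """
    if not manual_min or len(manual_min) != len(regrading_min):
        raise ValueError("expected two non-empty lists of equal length")
    total_manual = sum(manual_min)
    if total_manual <= 0:
        raise ValueError("total manual time must be positive")
    return 100 * (1 - sum(regrading_min) / total_manual)


# Assignment 2, gpt-oss-20b column, Graders 1, 2 and 4
manual = [137, 224, 194]
regrading = [22, 30, 27]
print(f"{pooled_savings(manual, regrading):.1f}%")  # 85.8%
```

Here the pooled value (about 85.8%) is close to the simple mean of the three per-grader percentages (about 85.7%). The two diverge more when graders' manual times differ widely.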

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).