Submitted: 26 November 2025

Posted: 26 November 2025


Abstract

Keywords:

1. Introduction

- Extension of the R4VR-framework by defining a reliable data collection process

- Translation of the R4VR-framework into practical recommendations that allow practitioners from different domains to adopt the framework

- Demonstration of the framework on a use case that is relevant to many industries

- Creation of a code base that can be adapted to diverse contexts

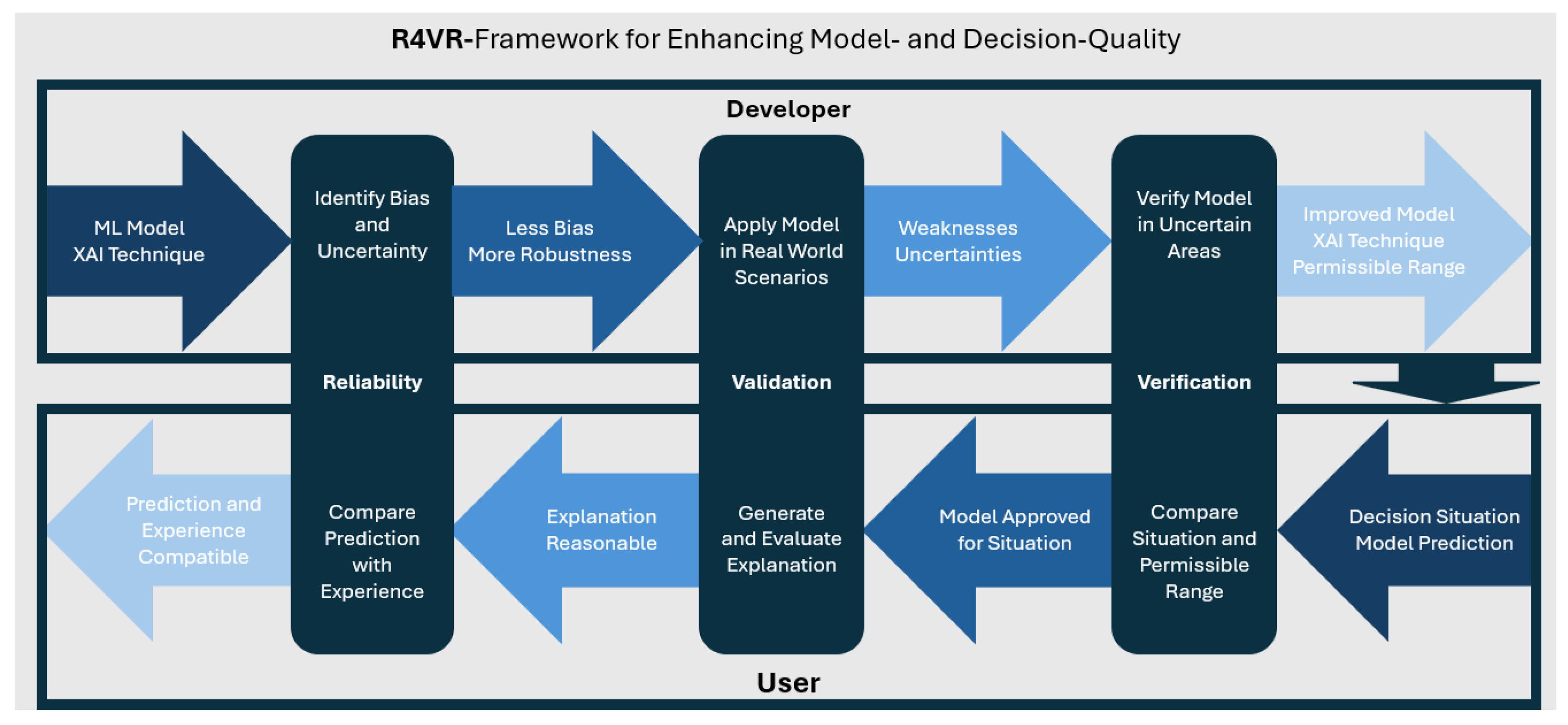

2. The R4VR-Framework and Its Relation to the EU AI Act

1. Reliability: XAI can help increase the reliability of decisions made by ML models or with their help. On the one hand, XAI can contribute to the creation of more reliable models; on the other hand, it can provide human decision makers with additional information for assessing a model prediction and for making a decision based on it.

2. Validation: XAI techniques can help validate a model’s prediction because they make it possible to check whether the explanation is stringent, meaning that the features highlighted as relevant in the explanation actually change the model prediction when they are altered.

3. Verification: Finally, XAI techniques can serve to verify a model’s behavior within certain ranges. Although it is not possible to verify that a model predicts correctly for every conceivable data point, XAI techniques can help assess how a model behaves within certain ranges and reduce the probability of wrong predictions there.
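The stringency criterion can be tested with a simple perturbation (deletion) check: if the features an explanation ranks highest really drive the prediction, masking them should change the output far more than masking the lowest-ranked ones. The dependency-free sketch below illustrates this on a toy linear model; the functions `mask_features` and `stringency_gap`, the masking baseline of 0.0 and `top_k` are hypothetical choices for illustration, not the authors’ implementation. For image models, the features would be pixels or superpixels and the relevance values would come from a saliency method.

```python
from __future__ import annotations
from typing import Callable, Sequence


def mask_features(x: Sequence[float], idx: Sequence[int], baseline: float = 0.0) -> list[float]:
    """Return a copy of x in which the features at positions idx are set to baseline."""
    masked = list(x)
    for i in idx:
        masked[i] = baseline
    return masked


def stringency_gap(model: Callable[[Sequence[float]], float],
                   x: Sequence[float],
                   relevance: Sequence[float],
                   top_k: int) -> float:
    """Output change when the top_k most relevant features are masked, minus the
    change when the top_k least relevant features are masked. A clearly positive
    gap suggests the explanation highlights features the model actually uses."""
    order = sorted(range(len(x)), key=lambda i: relevance[i], reverse=True)
    y = model(x)
    drop_top = abs(y - model(mask_features(x, order[:top_k])))
    drop_bottom = abs(y - model(mask_features(x, order[-top_k:])))
    return drop_top - drop_bottom


# Toy example: a linear "model" whose true feature importance is known.
weights = [3.0, 0.1, 2.0, 0.0]


def toy_model(v: Sequence[float]) -> float:
    return sum(w * xi for w, xi in zip(weights, v))


x = [1.0, 1.0, 1.0, 1.0]
good_relevance = [w * xi for w, xi in zip(weights, x)]  # gradient x input, exact for a linear model
bad_relevance = [0.0, 1.0, 0.0, 2.0]                    # an explanation pointing at unused features

good_gap = stringency_gap(toy_model, x, good_relevance, top_k=2)  # about 4.9
bad_gap = stringency_gap(toy_model, x, bad_relevance, top_k=2)    # about -4.9
```

A positive gap for the faithful explanation and a negative gap for the misleading one is the intended behavior; in practice, a threshold on this gap (or on a full deletion curve) would have to be chosen for each use case.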

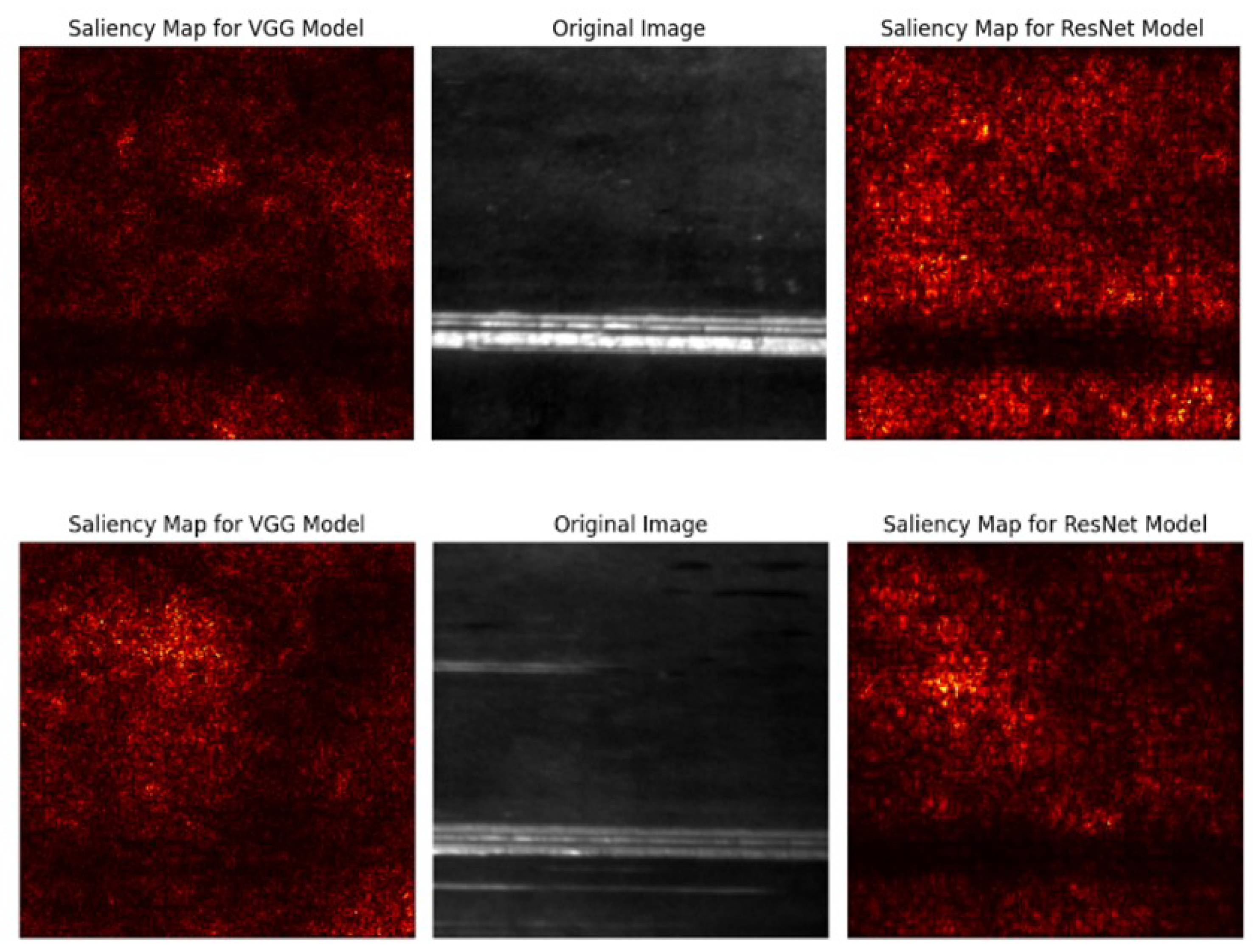

2.1. Explainable Artificial Intelligence as the First Pillar of the R4VR-Framework

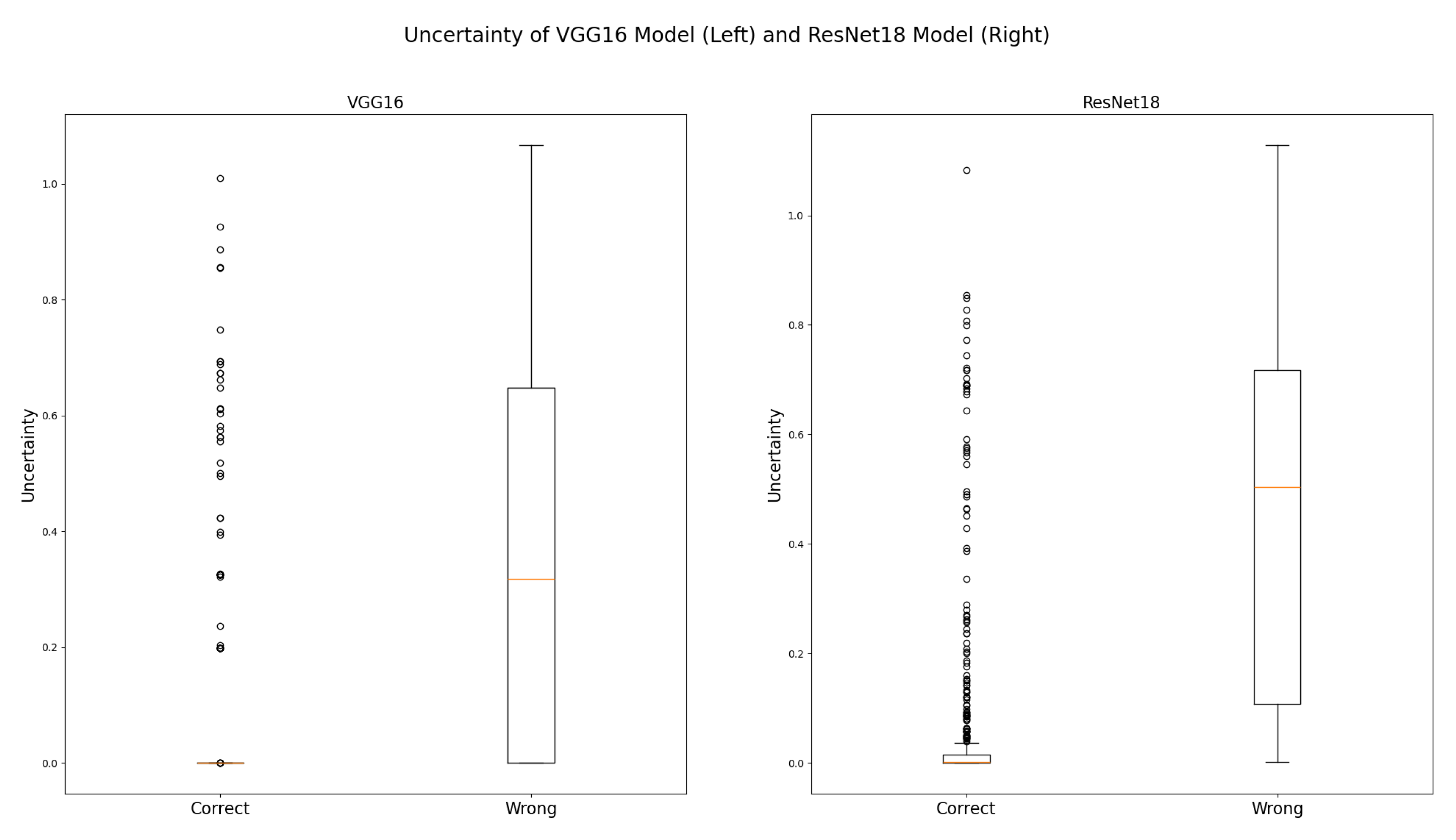

2.2. Uncertainty Quantification as the Second Pillar of the R4VR-Framework

2.3. EU AI Act for High-Risk Applications

3. R4VR-Framework for Visual Inspection

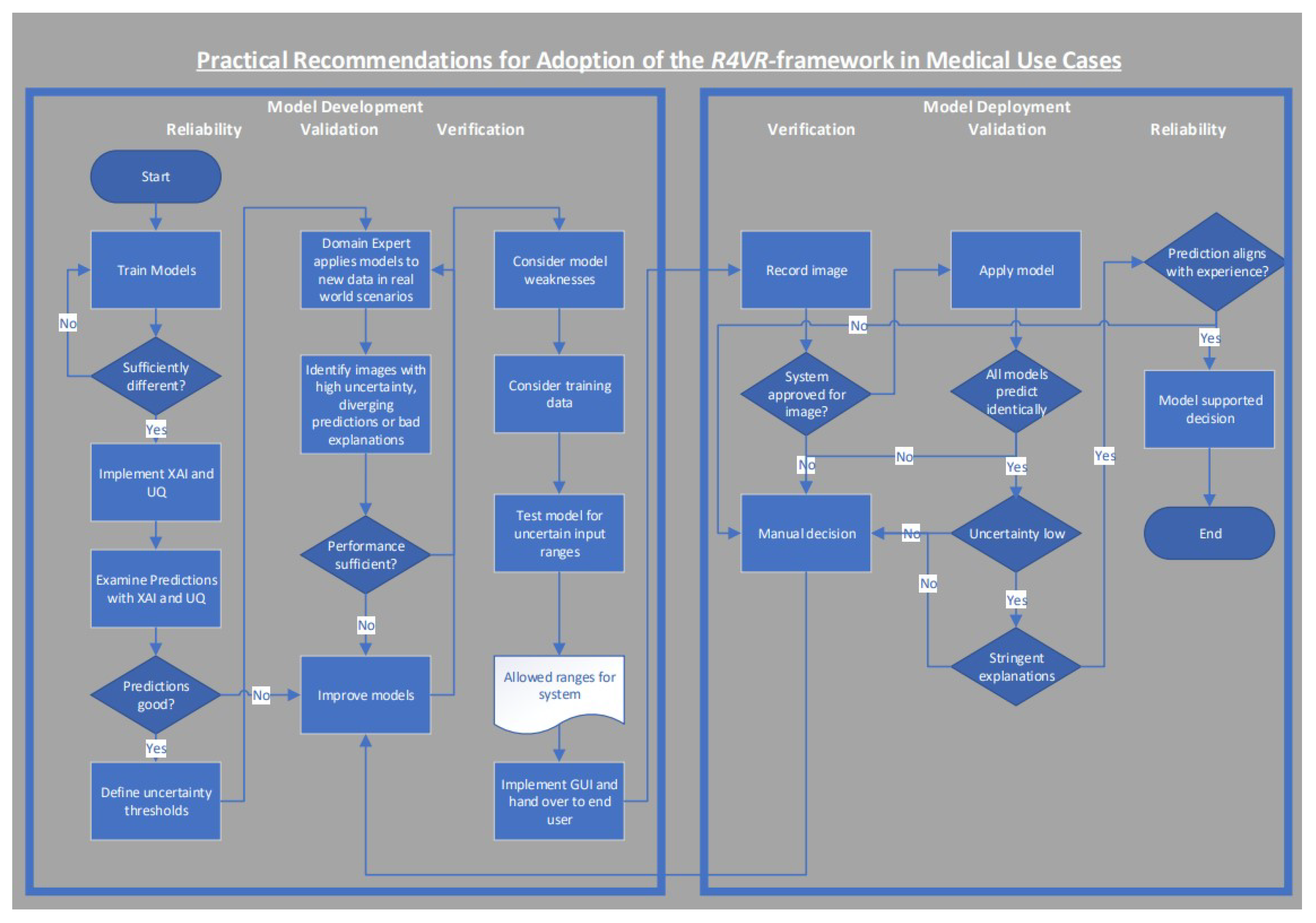

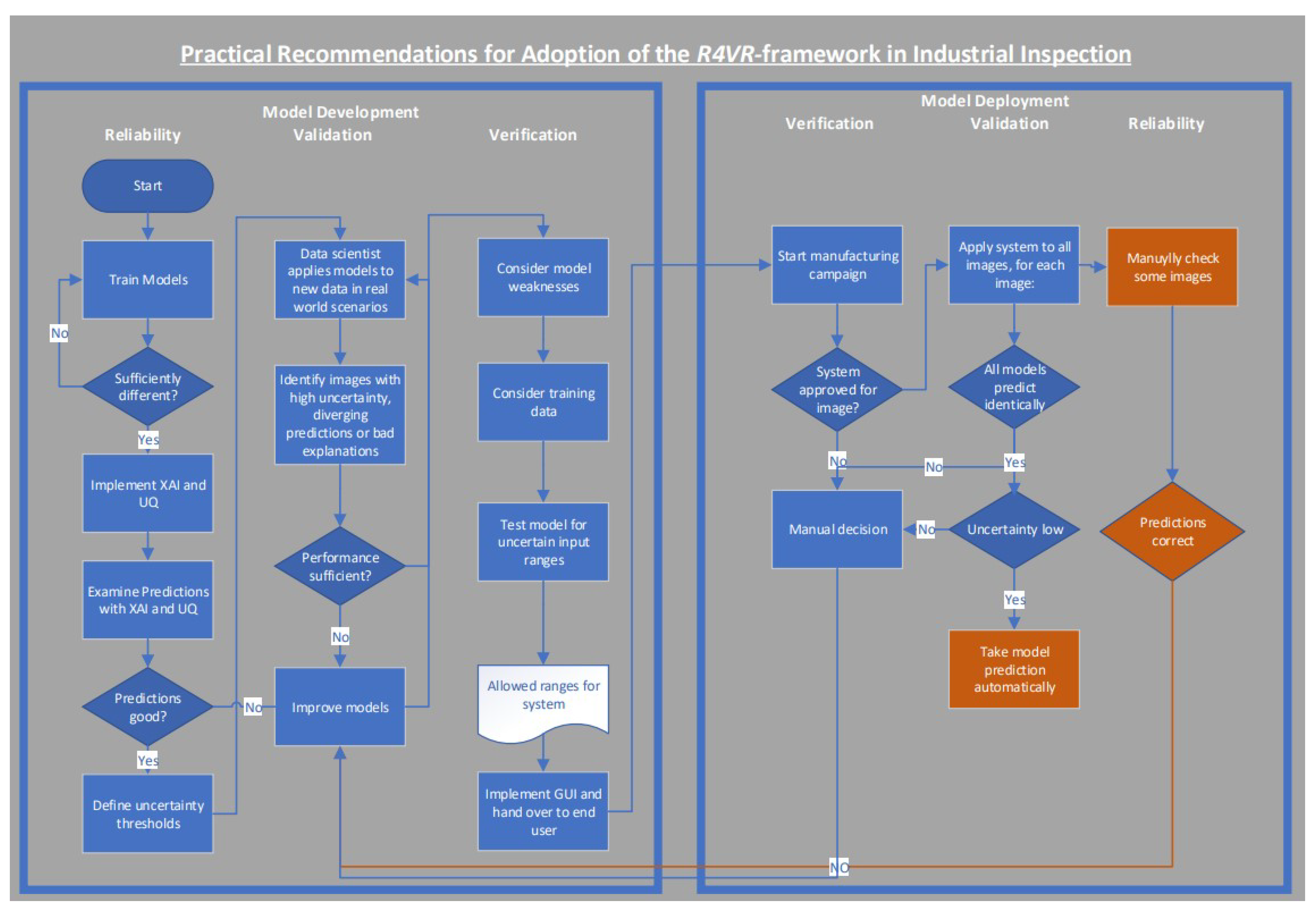

3.1. Practical Implications from the R4VR-Framework

3.2. R4VR-Framework in Medical Use Cases

- Type of allowed images (MRI, CT, ...)

- Shape of images

- Institution and/or device where the images were recorded

- Population for which the model makes good predictions (e.g., a breast cancer detection model for MRI images may only be approved for female patients)
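The scope items above can be encoded as an explicit, machine-checkable application scope that is evaluated before a model is applied. The sketch below is one possible encoding under stated assumptions: the class `ApplicationScope`, the function `scope_violations`, the metadata keys (`modality`, `shape`, `device`, `population`) and all example values are hypothetical; in practice they would be taken from the model’s approval documentation.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass(frozen=True)
class ApplicationScope:
    """Conditions under which a model is approved (hypothetical fields and values)."""
    modalities: frozenset[str]      # allowed image types, e.g. {"MRI"}
    image_shape: tuple[int, int]    # expected (height, width) in pixels
    devices: frozenset[str]         # institutions/devices covered by the training data
    populations: frozenset[str]     # patient groups the model is approved for


def scope_violations(scope: ApplicationScope, meta: dict) -> list[str]:
    """List every reason why an image lies outside the approved scope.
    An empty list means the model may be applied to this image."""
    problems = []
    if meta.get("modality") not in scope.modalities:
        problems.append(f"modality {meta.get('modality')!r} not approved")
    if tuple(meta.get("shape", ())) != scope.image_shape:
        problems.append(f"shape {meta.get('shape')} differs from {scope.image_shape}")
    if meta.get("device") not in scope.devices:
        problems.append(f"device {meta.get('device')!r} not approved")
    if meta.get("population") not in scope.populations:
        problems.append(f"population {meta.get('population')!r} not approved")
    return problems


breast_mri = ApplicationScope(
    modalities=frozenset({"MRI"}),
    image_shape=(512, 512),
    devices=frozenset({"scanner_A"}),
    populations=frozenset({"female"}),
)

ok = scope_violations(breast_mri, {"modality": "MRI", "shape": (512, 512),
                                   "device": "scanner_A", "population": "female"})
rejected = scope_violations(breast_mri, {"modality": "CT", "shape": (512, 512),
                                         "device": "scanner_A", "population": "male"})
```

Returning all violations rather than a single boolean lets the user interface tell the end user exactly why a model must not be used for a given image.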

- Allows the user to upload images and obtain a prediction

- Incorporates a visual representation of the models’ uncertainty, allowing the user to see immediately whether one of the models is too uncertain

- Visualizes the models’ explanations

- Allows the end user to mark images where uncertainty is high, an explanation is not stringent, or at least one of the models predicts a result the user does not agree with

1. Verification: First, the end user has to make sure that the model is approved for the use case. For example, if a model is only approved for female patients, it should not be used to diagnose male patients.

2. Validation: Next, the end user should apply the model to images (e.g., MRI images of a female patient) and validate the model prediction by making sure that:
   - (a) all models predict the same result;
   - (b) no model exceeds its predefined uncertainty threshold;
   - (c) the models’ explanations are stringent, meaning they do not rely on irrelevant image features such as the background.

3. Reliability: Finally, the physician should compare the models’ predictions with their own experience. To that end, they should inspect the image themselves and check whether they agree with the system’s prediction. In this step, the models’ outputs, in particular the explanations, can already help locate important features in the image more efficiently and thus support a better decision.
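The three validation checks (a) to (c) can be combined into a single gate that tells the physician whether a case needs closer scrutiny. The sketch assumes that each model returns a label, a scalar uncertainty score and a non-negative saliency map, and that a foreground mask of the relevant anatomy is available; check (c) is approximated as "at most 30% of the relevance may fall on the background". The names `ModelOutput`, `background_share`, `validation_issues`, the thresholds and the toy values are all hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass
class ModelOutput:
    name: str
    label: str
    uncertainty: float              # e.g. predictive entropy; the scale depends on the UQ method
    saliency: list[list[float]]     # non-negative relevance map with the image's height and width


def background_share(saliency: list[list[float]], foreground: list[list[int]]) -> float:
    """Fraction of the total relevance that falls outside the foreground mask."""
    total = sum(sum(row) for row in saliency)
    if total == 0:
        return 1.0  # an empty explanation cannot be checked, so treat it as not stringent
    background = sum(s for s_row, f_row in zip(saliency, foreground)
                     for s, f in zip(s_row, f_row) if not f)
    return background / total


def validation_issues(outputs: list[ModelOutput], foreground: list[list[int]],
                      thresholds: dict[str, float], max_background_share: float = 0.3) -> list[str]:
    """Apply checks (a)-(c); an empty list means no check failed."""
    issues = []
    labels = {o.label for o in outputs}
    if len(labels) > 1:                                   # (a) models must agree
        issues.append(f"(a) models disagree: {sorted(labels)}")
    for o in outputs:
        if o.uncertainty > thresholds[o.name]:            # (b) per-model uncertainty threshold
            issues.append(f"(b) {o.name}: uncertainty {o.uncertainty:.2f} > {thresholds[o.name]:.2f}")
        share = background_share(o.saliency, foreground)
        if share > max_background_share:                  # (c) relevance should lie on the anatomy
            issues.append(f"(c) {o.name}: {share:.0%} of relevance on background")
    return issues


# Toy 2x2 example: the right column is tissue (1), the left column background (0).
foreground = [[0, 1], [0, 1]]
outputs = [
    ModelOutput("model_1", "malignant", 0.20, [[0.0, 0.9], [0.1, 0.8]]),
    ModelOutput("model_2", "malignant", 0.70, [[0.6, 0.1], [0.5, 0.2]]),
]
issues = validation_issues(outputs, foreground, {"model_1": 0.5, "model_2": 0.5})
```

Here both models agree, but `model_2` is flagged twice: its uncertainty exceeds the threshold and most of its relevance lies on the background, so the physician would treat its output with caution.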

3.3. R4VR-Framework in Industrial Image Recognition

- Automatically makes a prediction for each new image recorded at the inspection system

- Incorporates a visual representation of the models’ uncertainty, allowing the user to see immediately whether one of the models is too uncertain

- Visualizes the models’ explanations

- Allows the end user to mark images and annotate them with bounding boxes and the true class when uncertainty is high, an explanation is not stringent, or at least one of the models predicts a result the user does not agree with

- Warns the operator when:
  - model uncertainty exceeds a predefined threshold
  - two models predict differently
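Marking and annotating flagged images creates new training data, and the data collection phase calls for logging every annotation together with uncertainty and data source metadata. A minimal sketch of such an append-only log in JSON Lines format follows; the `Annotation` schema (`image_id`, `boxes`, `flag_reason`, `source`, and so on) and the helper functions are hypothetical and would have to be aligned with the actual inspection system.

```python
from __future__ import annotations
import io
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class Annotation:
    """One operator annotation of a flagged image (hypothetical schema)."""
    image_id: str
    true_class: str
    boxes: list[tuple[int, int, int, int]]   # (x_min, y_min, x_max, y_max) in pixels
    flag_reason: str                         # e.g. "high_uncertainty" or "models_disagree"
    predictions: dict[str, str]              # model name -> predicted class
    uncertainties: dict[str, float]          # model name -> uncertainty score
    source: dict[str, str]                   # data source metadata, e.g. station and settings
    annotator: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_annotation(log, ann: Annotation) -> None:
    """Append one record to an open text stream as a single JSON line."""
    log.write(json.dumps(asdict(ann)) + "\n")


def read_annotations(log) -> list[dict]:
    """Read all records back; tuples come back as JSON lists."""
    return [json.loads(line) for line in log if line.strip()]


buf = io.StringIO()  # stands in for a file opened in append mode
append_annotation(buf, Annotation(
    image_id="img_0001", true_class="scratches", boxes=[(10, 20, 60, 40)],
    flag_reason="models_disagree",
    predictions={"vgg": "scratches", "resnet": "inclusion"},
    uncertainties={"vgg": 0.12, "resnet": 0.48},
    source={"station": "line_3"}, annotator="operator_7"))
buf.seek(0)
records = read_annotations(buf)
```

An append-only, line-oriented format keeps every annotation traceable and makes it easy to rebuild a training set later from all records with a given flag reason.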

1. Verification: Whenever settings at the inspection system are changed or a new product is manufactured, the end user has to verify that the model is approved for the new situation.

2. Validation: Validation is required when:
   - model uncertainty is high
   - models predict differently

   The end user is warned automatically when validation is required. In that case, the end user should decide manually which class the image belongs to. Beyond that, the image can be relabeled in the user interface, which creates a new training example.

3. Reliability: To detect performance degradation (e.g., due to data shift), the end user should regularly check a certain number of images manually and make sure the system still classifies reliably.
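The reliability step leaves open how many images to check and when to raise an alarm. One simple option, sketched below, is to route a random sample of automatically classified images to the operator and track how often the operator agrees with the system over a sliding window. The class `SpotCheckMonitor` and its parameters (2% audit rate, window of 200 audits, alarm below 95% agreement after at least 50 audits) are hypothetical and illustrative, not values from the study.

```python
from __future__ import annotations
import random
from collections import deque


class SpotCheckMonitor:
    """Randomly selects images for manual audit and tracks operator agreement
    over a sliding window of recent audits (hypothetical design)."""

    def __init__(self, audit_rate: float = 0.02, window: int = 200,
                 min_accuracy: float = 0.95, min_checks: int = 50, seed: int | None = None):
        self.audit_rate = audit_rate
        self.min_accuracy = min_accuracy
        self.min_checks = min_checks
        self.results: deque[bool] = deque(maxlen=window)  # oldest audits drop out automatically
        self.rng = random.Random(seed)

    def select_for_audit(self) -> bool:
        """Decide for a newly classified image whether the operator should check it."""
        return self.rng.random() < self.audit_rate

    def record(self, predicted: str, operator_label: str) -> None:
        self.results.append(predicted == operator_label)

    def accuracy(self) -> float | None:
        return sum(self.results) / len(self.results) if self.results else None

    def degraded(self) -> bool:
        """True once enough audits exist and the audited accuracy is below the limit."""
        if len(self.results) < self.min_checks:
            return False
        return self.accuracy() < self.min_accuracy


monitor = SpotCheckMonitor(seed=0)
for _ in range(60):
    monitor.record("scratches", "scratches")
healthy = monitor.degraded()          # 60 of 60 audits agree
for _ in range(10):
    monitor.record("scratches", "crazing")
alarm = monitor.degraded()            # 60 of 70 audits agree, below 0.95
n_audit = sum(monitor.select_for_audit() for _ in range(10_000))
```

A sliding window lets the alarm react to recent degradation such as data shift instead of being diluted by a long history of correct predictions.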

4. Implementation of R4VR-Framework for Visual Steel Surface Inspection

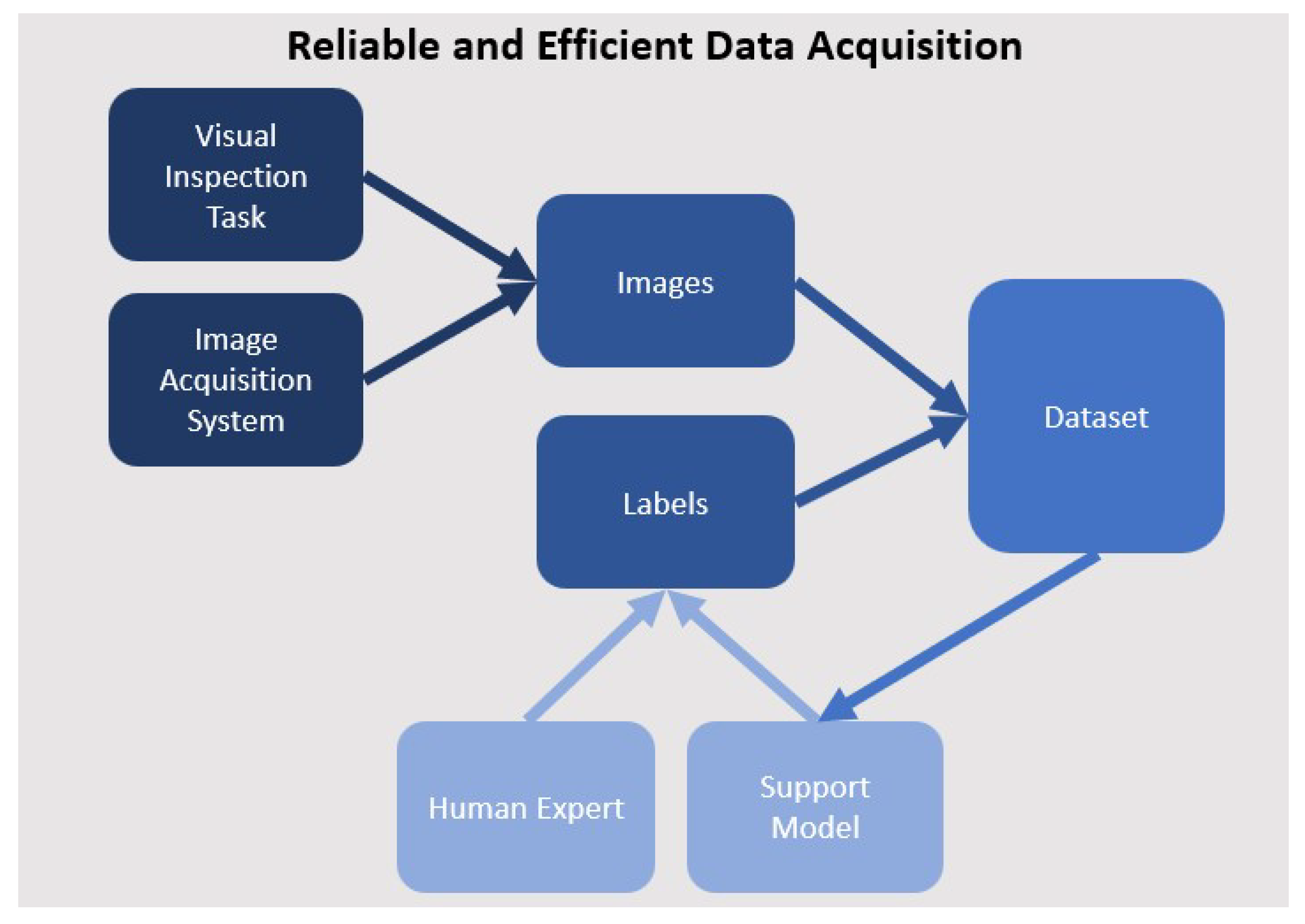

4.1. Data Collection

4.2. Reliability - Creating Strong Baselines

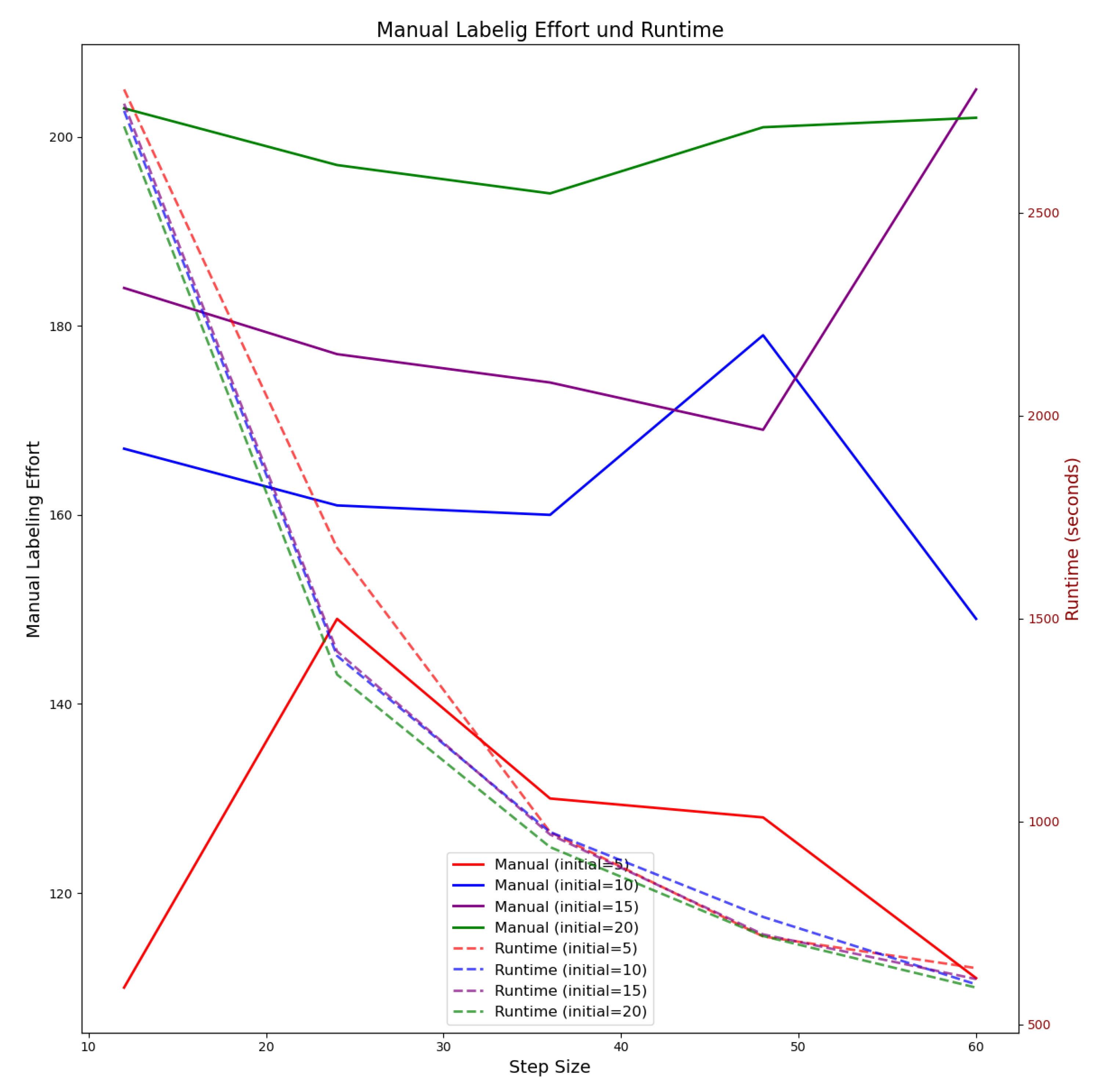

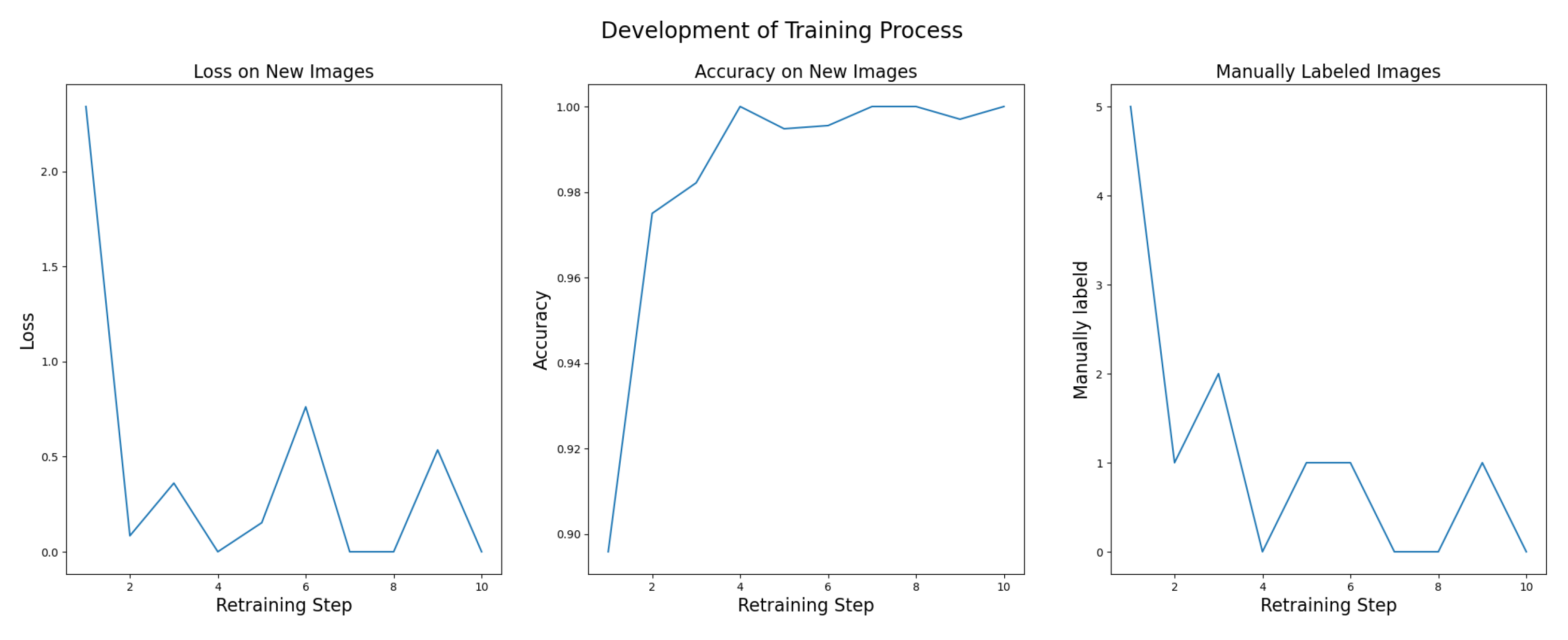

4.3. Validation - Iteratively Improving the Baseline

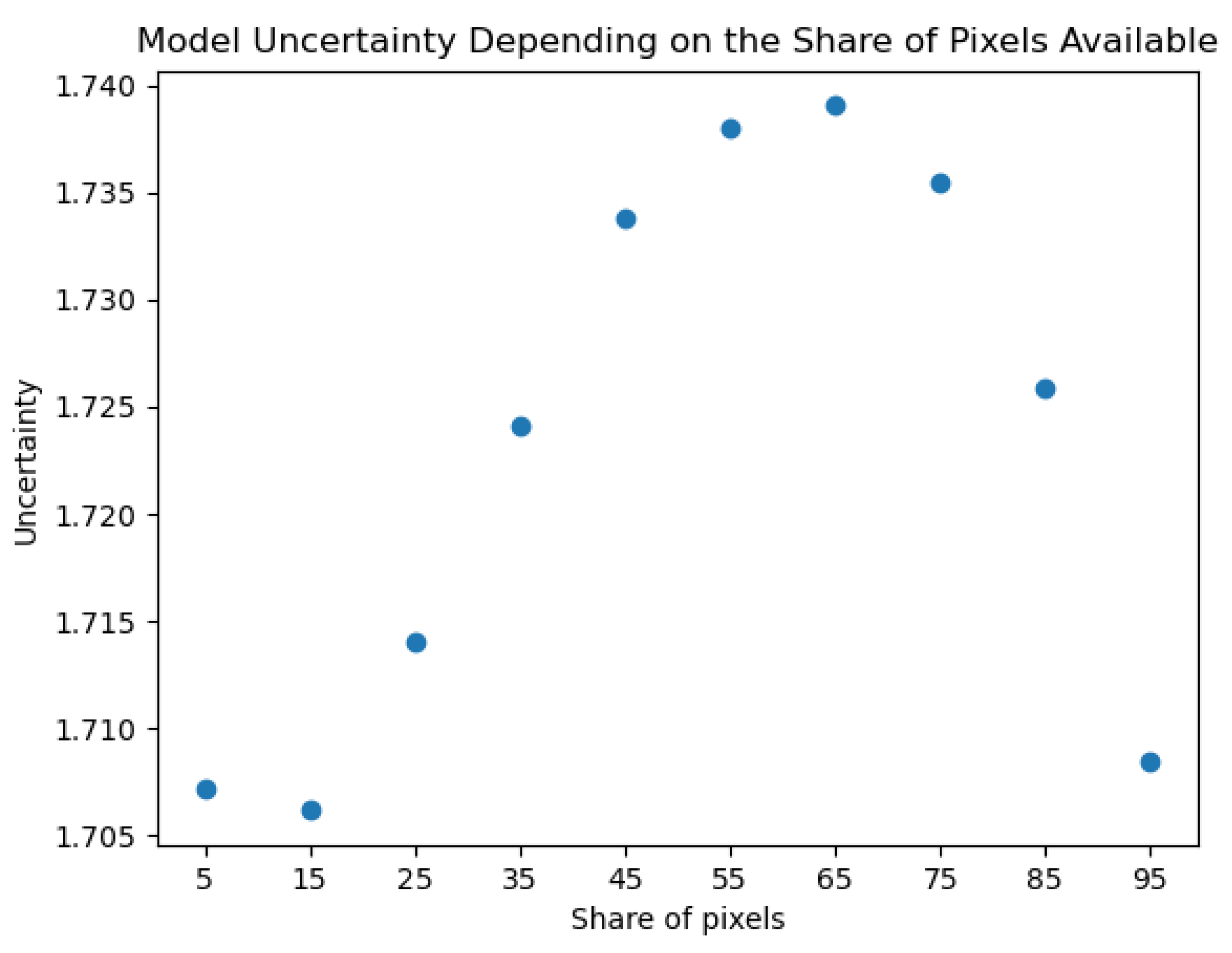

4.4. Verification - What Is the Model Capable of?

4.5. Guidelines for Model Deployment & Monitoring

5. Discussion

5.1. Discussion of Methodological Approach

5.2. Discussion of Results

5.3. Implications for Practice

6. Conclusions


| Phase | Objective | Core Activities | Roles | Regulatory Alignment |
|---|---|---|---|---|
| Data Collection | Generate traceable and representative data | Implement labeling interface; log all annotations; include uncertainty and data source metadata | Domain expert labels and corrects uncertain cases | Article 10 |
| Reliability | Build robust models with intrinsic safety | Train diverse models; integrate XAI (e.g., Grad-CAM) and UQ (e.g., MC Dropout); detect bias and overfitting | Developer examines explanations to identify bias; developer defines uncertainty thresholds for the validation phase | Articles 13, 15 |
| Validation | Iteratively improve models via expert feedback | Identify samples with high uncertainty / conflicting predictions; retrain using expert-verified data | Domain expert reviews flagged samples and refines labels | Articles 14, 15 |
| Verification | Define and verify model application scope | Determine operational ranges (image type, brightness, device); define confidence thresholds; provide instructions for application | Developer validates model under defined operating conditions | Articles 9, 11 |
| Deployment & Monitoring | Continuous assurance and improvement | Deploy model with uncertainty-based alerts; conduct regular operator audits; log all interactions | Operator supervises automated decisions | Articles 12, 13, 14 |
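MC Dropout, listed as an uncertainty quantification option for the Reliability phase, keeps dropout active at inference time, runs several stochastic forward passes and uses the spread of the averaged softmax output as the uncertainty signal. The dependency-free sketch below applies this idea to a hypothetical one-layer classifier with made-up weights, using predictive entropy as the score; in a real project the same loop would wrap the trained CNN with its dropout layers left active, and `p`, `T` and the review threshold (here, half of the maximum entropy) would be tuned on validation data.

```python
from __future__ import annotations
import math
import random


def softmax(z: list[float]) -> list[float]:
    m = max(z)                        # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def forward_with_dropout(x: list[float], weights: list[list[float]], p: float,
                         rng: random.Random) -> list[float]:
    """One stochastic pass of a toy linear classifier with inverted dropout on its
    inputs: each feature is zeroed with probability p, survivors are scaled by 1/(1-p)."""
    kept = [xi / (1.0 - p) if rng.random() >= p else 0.0 for xi in x]
    logits = [sum(w * v for w, v in zip(row, kept)) for row in weights]
    return softmax(logits)


def mc_dropout_predict(x: list[float], weights: list[list[float]], p: float = 0.2,
                       T: int = 50, seed: int = 0) -> tuple[list[float], float]:
    """Average T dropout-perturbed softmax outputs; return the mean class
    probabilities and their predictive entropy in nats."""
    if not 0.0 <= p < 1.0:
        raise ValueError("dropout probability must be in [0, 1)")
    rng = random.Random(seed)
    samples = [forward_with_dropout(x, weights, p, rng) for _ in range(T)]
    mean = [sum(s[c] for s in samples) / T for c in range(len(weights))]
    entropy = -sum(q * math.log(q) for q in mean if q > 0)
    return mean, entropy


# Hypothetical 3-class, 3-feature classifier and input.
weights = [[2.0, -1.0, 0.5], [-1.0, 2.0, 0.5], [0.0, 0.0, 1.0]]
x = [1.0, 0.2, 0.3]
mean, h = mc_dropout_predict(x, weights)
predicted = max(range(len(mean)), key=mean.__getitem__)
# Hypothetical rule: send to expert review above half of the maximum entropy log(K).
needs_review = h > 0.5 * math.log(len(weights))
```

With `p=0.0` every pass is identical and the result reduces to an ordinary deterministic prediction, which is a convenient sanity check when wiring MC Dropout into an existing model.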

| Defect Class | Scratches | Rolled | Pitted | Patches | Inclusion | Crazing | Total |
|---|---|---|---|---|---|---|---|
| VGG uncertain | 8 | 5 | 1 | 0 | 0 | 0 | 14 |
| ResNet uncertain | 6 | 0 | 3 | 2 | 0 | 1 | 12 |
| Deviation | 4 | 0 | 2 | 0 | 0 | 0 | 6 |
| Total | 9 | 5 | 5 | 2 | 0 | 1 | 22 |
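In the defect-class table, the Total row is smaller than the sum of the three rows above it (e.g., 9 scratches versus 8 + 6 + 4), which is consistent with counting each flagged image only once even when it meets several criteria. Assuming that reading, such a tally could be produced from per-image flags as sketched below; the function `tally`, the record layout and the toy records are hypothetical and are not the study’s data.

```python
from __future__ import annotations
from collections import Counter
from typing import Iterable

CRITERIA = ("VGG uncertain", "ResNet uncertain", "Deviation")


def tally(records: Iterable[tuple[str, set[str]]]) -> dict[str, Counter]:
    """records: (defect_class, criteria met by that image).
    Each criterion row counts images meeting it; 'Total' counts each flagged image once."""
    counts: dict[str, Counter] = {c: Counter() for c in CRITERIA}
    total: Counter = Counter()
    for defect_class, met in records:
        for criterion in met:
            counts[criterion][defect_class] += 1
        if met:                                  # unflagged images are not counted
            total[defect_class] += 1
    counts["Total"] = total
    return counts


toy = [
    ("Scratches", {"VGG uncertain", "ResNet uncertain", "Deviation"}),
    ("Scratches", {"VGG uncertain"}),
    ("Pitted", {"ResNet uncertain"}),
    ("Rolled", set()),
]
t = tally(toy)
```

Counting per criterion and per distinct image separately explains why the criterion rows can add up to more than the Total row.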

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).