Submitted: 24 November 2025

Posted: 25 November 2025

Abstract

Keywords:

1. Introduction

- We propose SCD-Seg, a novel self-calibrating dual-stream semi-supervised segmentation framework that effectively combines a 3D CNN-based structural stream and a 3D Transformer-based contextual stream for robust 3D medical image segmentation.

- We introduce an Uncertainty-Guided Pseudo-Label Refinement (UGPR) module that leverages predicted uncertainty to generate high-quality pseudo-labels, significantly mitigating the issue of pseudo-label noise.

- We develop an Adaptive Feature Integration (AFI) module that dynamically fuses multi-scale features from both network streams, enabling the model to optimally balance local details and global context for enhanced segmentation accuracy.

- We demonstrate that SCD-Seg outperforms state-of-the-art semi-supervised methods, including Diff-CL, on the LA, BraTS, and Pancreas 3D segmentation tasks, particularly when labeled data are scarce. For example, with 8 labeled LA volumes it reaches a Dice of 91.35% versus 91.00% for Diff-CL.
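To make the idea behind the UGPR contribution concrete, here is a minimal sketch under stated assumptions, not the authors' implementation. It assumes that uncertainty is measured as the normalized entropy of each voxel's predicted class probabilities and that voxels above a threshold are excluded from pseudo-label supervision. The names `normalized_entropy`, `refine_pseudo_labels`, `IGNORE`, and the threshold `tau` are hypothetical.

```python
import math

IGNORE = -1  # hypothetical marker for voxels excluded from the pseudo-label loss


def normalized_entropy(probs):
    """Shannon entropy of a probability vector, scaled to [0, 1]."""
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return h / math.log(len(probs))


def refine_pseudo_labels(voxel_probs, tau=0.5):
    """Keep the argmax label only where uncertainty is below tau (assumed rule)."""
    labels = []
    for probs in voxel_probs:
        if normalized_entropy(probs) < tau:
            labels.append(max(range(len(probs)), key=probs.__getitem__))
        else:
            labels.append(IGNORE)
    return labels


# Three voxels of a binary (background / foreground) prediction.
voxels = [[0.95, 0.05], [0.55, 0.45], [0.02, 0.98]]
print(refine_pseudo_labels(voxels))  # [0, -1, 1]
```

The ambiguous middle voxel is dropped, while the two confident voxels keep their argmax labels. A soft weighting by `1 - uncertainty` would be an equally plausible variant.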

2. Related Work

2.1. Semi-Supervised Learning for 3D Medical Image Segmentation

2.2. Deep Learning Architectures for 3D Medical Image Segmentation

3. Method

3.1. Overall Framework

3.2. Structural Stream (SS)

3.3. Contextual Stream (CS)

3.4. Cross-Consistency Supervision (CCS)

3.5. Uncertainty-Guided Pseudo-Label Refinement (UGPR) Module

3.6. Adaptive Feature Integration (AFI) Module

3.7. Loss Functions

3.7.1. Supervised Loss

3.7.2. Cross-Consistency Loss

3.7.3. Uncertainty-Guided Pseudo-Label Loss

3.7.4. Adaptive Feature Integration Loss

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets

4.1.2. Data Partitioning and Labeling Ratios

- LA: We evaluated two semi-supervised settings: 4 labeled volumes and 76 unlabeled volumes (5% labeled), and 8 labeled volumes and 72 unlabeled volumes (10% labeled).

- BraTS: For this dataset, we used 25 labeled volumes and 225 unlabeled volumes (10% labeled), and 50 labeled volumes and 200 unlabeled volumes (20% labeled).

- Pancreas: We experimented with 6 labeled volumes and 56 unlabeled volumes (approximately 10% labeled), and 12 labeled volumes and 50 unlabeled volumes (approximately 20% labeled).
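The labeling ratios quoted above follow directly from the volume counts. The short script below recomputes them as a sanity check; the dictionary layout and the function name `labeled_percent` are illustrative choices.

```python
# (labeled, unlabeled) volume counts for each setting, taken from the list above.
splits = {
    "LA": [(4, 76), (8, 72)],
    "BraTS": [(25, 225), (50, 200)],
    "Pancreas": [(6, 56), (12, 50)],
}


def labeled_percent(labeled, unlabeled):
    """Share of labeled volumes in the training pool, in percent."""
    return 100.0 * labeled / (labeled + unlabeled)


for dataset, settings in splits.items():
    for labeled, unlabeled in settings:
        pct = labeled_percent(labeled, unlabeled)
        print(f"{dataset}: {labeled}/{unlabeled} -> {pct:.1f}% labeled")
```

The LA and BraTS ratios are exact, while the Pancreas settings come out at about 9.7% and 19.4%, which is why they are described as approximate.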

4.1.3. Evaluation Metrics

4.1.4. Implementation Details

4.1.5. Baseline Methods

4.2. Quantitative Results

4.2.1. Results on LA Dataset

4.2.2. Results on BraTS Dataset

4.2.3. Results on Pancreas Dataset

4.3. Ablation Study

4.4. Human Evaluation

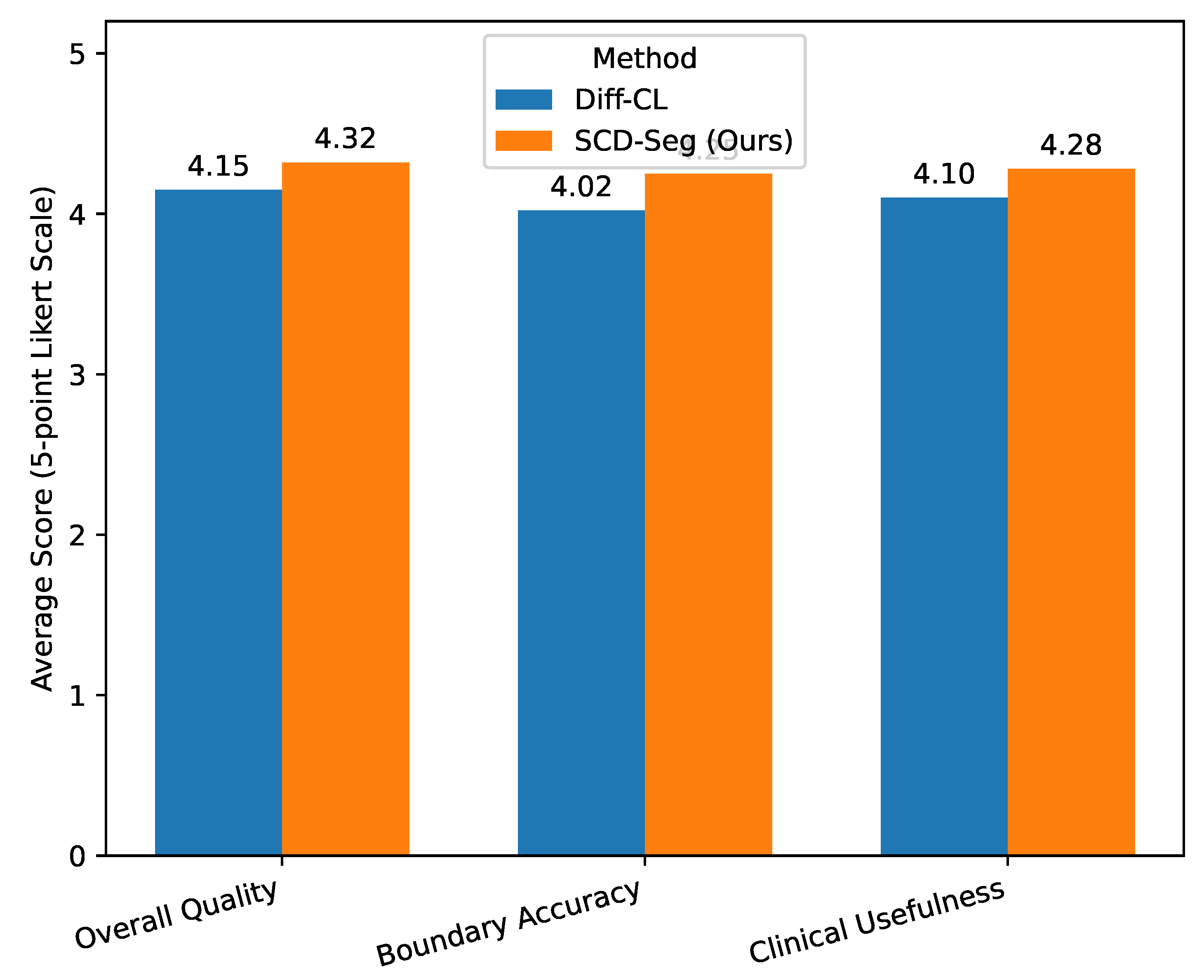

- SCD-Seg consistently received higher average scores across all subjective criteria compared to Diff-CL [23].

- For Overall Quality, SCD-Seg achieved an average score of 4.32, indicating that radiologists perceived its segmentations as generally superior.

- In terms of Boundary Accuracy, SCD-Seg scored 4.25, suggesting that the refined pseudo-labels and adaptive feature integration lead to more precise and anatomically plausible boundaries, which is critical for diagnosis and treatment planning.

- SCD-Seg's higher Clinical Usefulness score (4.28) indicates that the expert radiologists found its segmentation outputs more reliable and more directly applicable in a clinical context.

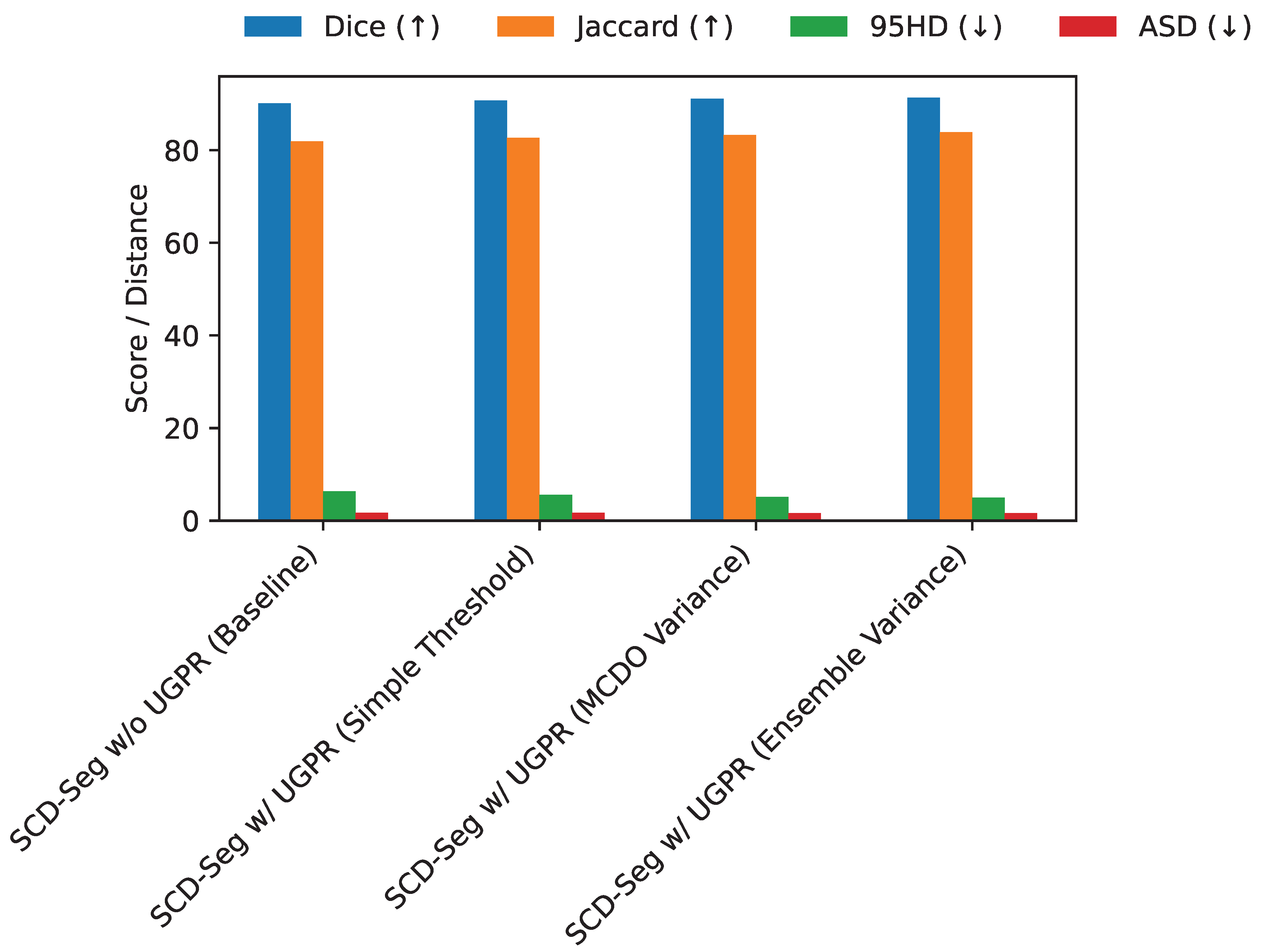

4.5. Analysis of Uncertainty-Guided Pseudo-Label Refinement (UGPR)

4.6. Adaptive Feature Integration (AFI) Mechanism Variants

4.7. Sensitivity to Loss Weight Parameters

- Cross-Consistency Supervision weight: Reducing this weight from the default 0.5 to 0.1 lowers Dice from 91.35% to 90.80%, the largest drop among the tested settings, indicating that strong consistency between the two streams is vital for effective semi-supervised learning. Increasing it to 1.0 also gives a slight drop (Dice 91.10%), suggesting that a balanced influence works best.

- Uncertainty-Guided Pseudo-Label Loss weight: This term is also impactful. Lowering the weight to 0.5 leads to a noticeable drop (Dice 90.95%), underlining the importance of robust pseudo-label supervision. A higher weight (2.0) performs better than the lower one (Dice 91.20%) but remains slightly below the optimum at 1.0, which suggests that too much emphasis on pseudo-labels can reintroduce noise.

- Adaptive Feature Integration Loss weight: This term helps refine the integrated features. Both a lower (0.1) and a higher (0.5) weight give slightly lower Dice (91.15% and 91.25%) than the default 0.2. This suggests that the AFI module benefits from moderate auxiliary supervision, which keeps its features well-formed without dominating the primary segmentation task.
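As a rough illustration of how these three weights could enter training, the sketch below assumes the overall objective is the supervised loss plus a weighted sum of the other three terms. That additive form, the unit weight on the supervised loss, and the names `LossWeights` and `total_loss` are assumptions. Only the default values 0.5, 1.0, and 0.2 come from the reported optimum.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LossWeights:
    """Weights for the three unsupervised terms (defaults from the reported optimum)."""
    ccs: float = 0.5   # cross-consistency loss weight
    ugpr: float = 1.0  # uncertainty-guided pseudo-label loss weight
    afi: float = 0.2   # adaptive feature integration loss weight


def total_loss(l_sup, l_ccs, l_ugpr, l_afi, w=LossWeights()):
    """Assumed form: supervised loss plus a weighted sum of the other terms."""
    return l_sup + w.ccs * l_ccs + w.ugpr * l_ugpr + w.afi * l_afi


# Placeholder per-batch loss values, chosen only to exercise the function.
print(total_loss(0.40, 0.10, 0.20, 0.05))
print(total_loss(0.40, 0.10, 0.20, 0.05, LossWeights(ccs=0.1)))
```

Each configuration in the sensitivity study corresponds to changing one field of `LossWeights` while holding the other two at their defaults.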

4.8. Computational Performance Analysis

- Parameters: SCD-Seg has 42.5 million parameters, which is comparable to other dual-branch or teacher-student models like MC-Net (38.8M) and Diff-CL (45.1M). The slightly higher parameter count compared to MC-Net is due to the additional complexity of the Transformer-based CS and the AFI module, but it is still within a reasonable range for 3D medical image analysis.

- Training Time: Training SCD-Seg takes approximately 26.0 hours, which is slightly less than Diff-CL (28.5 hours) despite SCD-Seg’s intricate self-calibration mechanisms. This indicates that the efficient design of the Transformer-based CS and the streamlined interaction between modules prevent an excessive increase in training overhead.

- Inference Time: For inference, SCD-Seg processes each 3D volume in approximately 0.38 seconds. This is faster than Diff-CL (0.42 seconds) and only marginally slower than MC-Net (0.35 seconds). The efficient forward pass of the dual-stream architecture and the optimized AFI module ensure that the model remains practical for real-time or near real-time clinical applications.
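To put the quoted figures on a common scale, the snippet below converts them to relative differences. It uses only the numbers stated above; the helper name `relative_change` is illustrative.

```python
def relative_change(value, reference):
    """Signed percentage difference of value with respect to reference."""
    return 100.0 * (value - reference) / reference


# SCD-Seg versus Diff-CL and MC-Net, using the figures quoted above.
print(f"Parameters vs MC-Net:  {relative_change(42.5, 38.8):+.1f}%")
print(f"Parameters vs Diff-CL: {relative_change(42.5, 45.1):+.1f}%")
print(f"Training vs Diff-CL:   {relative_change(26.0, 28.5):+.1f}%")
print(f"Inference vs Diff-CL:  {relative_change(0.38, 0.42):+.1f}%")
print(f"Inference vs MC-Net:   {relative_change(0.38, 0.35):+.1f}%")
```

In these terms, SCD-Seg has roughly 9.5% more parameters than MC-Net, trains in about 8.8% less time than Diff-CL, and is about 8.6% slower per volume than MC-Net at inference.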

5. Conclusions

References

- Ningyu Zhang, Mosha Chen, Zhen Bi, Xiaozhuan Liang, Lei Li, Xin Shang, Kangping Yin, Chuanqi Tan, Jian Xu, Fei Huang, Luo Si, Yuan Ni, Guotong Xie, Zhifang Sui, Baobao Chang, Hui Zong, Zheng Yuan, Linfeng Li, Jun Yan, Hongying Zan, Kunli Zhang, Buzhou Tang, and Qingcai Chen. CBLUE: A Chinese biomedical language understanding evaluation benchmark. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 7888–7915. Association for Computational Linguistics, 2022.

- Cui Xuehao, Wen Dejia, and Li Xiaorong. Integration of immunometabolic composite indices and machine learning for diabetic retinopathy risk stratification: Insights from NHANES 2011–2020. Ophthalmology Science, page 100854, 2025.

- Huijun Zhou, Jingzhi Wang, and Xuehao Cui. Causal effect of immune cells, metabolites, cathepsins, and vitamin therapy in diabetic retinopathy: a Mendelian randomization and cross-sectional study. Frontiers in Immunology, 15:1443236, 2024.

- Chen Zhou, Bing Wang, Zihan Zhou, Tong Wang, Xuehao Cui, and Yuanyin Teng. UKALL 2011: Flawed noninferiority and overlooked interactions undermine conclusions. Journal of Clinical Oncology, 43(28):3135–3136, 2025.

- Luqing Ren. AI-powered financial insights: Using large language models to improve government decision-making and policy execution. Journal of Industrial Engineering and Applied Science, 3(5):21–26, 2025.

- Jingyi Huang, Zelong Tian, and Yujuan Qiu. AI-enhanced dynamic power grid simulation for real-time decision-making. In 2025 4th International Conference on Smart Grids and Energy Systems (SGES), pages 15–19, 2025.

- Yi Fung, Christopher Thomas, Revanth Gangi Reddy, Sandeep Polisetty, Heng Ji, Shih-Fu Chang, Kathleen McKeown, Mohit Bansal, and Avi Sil. InfoSurgeon: Cross-media fine-grained information consistency checking for fake news detection. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 1683–1698. Association for Computational Linguistics, 2021.

- Xuming Hu, Chenwei Zhang, Fukun Ma, Chenyao Liu, Lijie Wen, and Philip S. Yu. Semi-supervised relation extraction via incremental meta self-training. In Findings of the Association for Computational Linguistics: EMNLP 2021, pages 487–496. Association for Computational Linguistics, 2021.

- Lisa Anne Hendricks and Aida Nematzadeh. Probing image-language transformers for verb understanding. In Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, pages 3635–3644. Association for Computational Linguistics, 2021.

- Zhen Tian, Zhihao Lin, Dezong Zhao, Wenjing Zhao, David Flynn, Shuja Ansari, and Chongfeng Wei. Evaluating scenario-based decision-making for interactive autonomous driving using rational criteria: A survey. arXiv preprint arXiv:2501.01886, 2025.

- Zhihao Lin, Jianglin Lan, Christos Anagnostopoulos, Zhen Tian, and David Flynn. Safety-critical multi-agent MCTS for mixed traffic coordination at unsignalized intersections. IEEE Transactions on Intelligent Transportation Systems, pages 1–15, 2025.

- Zesheng Shi, Yucheng Zhou, Jing Li, Yuxin Jin, Yu Li, Daojing He, Fangming Liu, Saleh Alharbi, Jun Yu, and Min Zhang. Safety alignment via constrained knowledge unlearning. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 25515–25529, 2025.

- Yucheng Zhou, Xiang Li, Qianning Wang, and Jianbing Shen. Visual in-context learning for large vision-language models. In Findings of the Association for Computational Linguistics, ACL 2024, Bangkok, Thailand and virtual meeting, August 11-16, 2024, pages 15890–15902. Association for Computational Linguistics, 2024.

- Zhitao Wang, Yirong Xiong, Roberto Horowitz, Yanke Wang, and Yuxing Han. Hybrid perception and equivariant diffusion for robust multi-node rebar tying. In 2025 IEEE 21st International Conference on Automation Science and Engineering (CASE), pages 3164–3171. IEEE, 2025.

- Luqing Ren et al. Boosting algorithm optimization technology for ensemble learning in small sample fraud detection. Academic Journal of Engineering and Technology Science, 8(4):53–60, 2025.

- Yucheng Zhou, Jianbing Shen, and Yu Cheng. Weak to strong generalization for large language models with multi-capabilities. In The Thirteenth International Conference on Learning Representations, 2025.

- Dongfang Lou, Zhilin Liao, Shumin Deng, Ningyu Zhang, and Huajun Chen. MLBiNet: A cross-sentence collective event detection network. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 4829–4839. Association for Computational Linguistics, 2021.

- Hu Cao, Yueyue Wang, Joy Chen, Dongsheng Jiang, Xiaopeng Zhang, Qi Tian, and Manning Wang. Swin-Unet: Unet-like pure transformer for medical image segmentation. arXiv preprint arXiv:2105.05537v1, 2021.

- Zhitao Wang, Jiangtao Wen, and Yuxing Han. EP-SAM: An edge-detection prompt SAM based efficient framework for ultra-low light video segmentation. In ICASSP 2025-2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 1–5. IEEE, 2025.

- Matt Gardner, William Merrill, Jesse Dodge, Matthew Peters, Alexis Ross, Sameer Singh, and Noah A. Smith. Competency problems: On finding and removing artifacts in language data. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 1801–1813. Association for Computational Linguistics, 2021.

- Fenglin Liu, Shen Ge, and Xian Wu. Competence-based multimodal curriculum learning for medical report generation. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 3001–3012. Association for Computational Linguistics, 2021.

- William Timkey and Marten van Schijndel. All bark and no bite: Rogue dimensions in transformer language models obscure representational quality. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 4527–4546. Association for Computational Linguistics, 2021.

- Zixuan Ke, Hu Xu, and Bing Liu. Adapting BERT for continual learning of a sequence of aspect sentiment classification tasks. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 4746–4755. Association for Computational Linguistics, 2021.

- Zesheng Shi and Yucheng Zhou. Topic-selective graph network for topic-focused summarization. In Pacific-Asia Conference on Knowledge Discovery and Data Mining, pages 247–259. Springer, 2023.

- Zhitao Wang, Weinuo Jiang, Wenkai Wu, and Shihong Wang. Reconstruction of complex network from time series data based on graph attention network and Gumbel softmax. International Journal of Modern Physics C, 34(05):2350057, 2023.

- Xikun Wu, Mingyao Lin, Peng Wang, Lun Jia, and Xinghe Fu. Off-line stator resistance identification for PMSM with pulse signal injection avoiding the dead-time effect. In 2019 22nd International Conference on Electrical Machines and Systems (ICEMS), pages 1–5. IEEE, 2019.

- Feng Wang, Zesheng Shi, Bo Wang, Nan Wang, and Han Xiao. ReaderLM-v2: Small language model for HTML to Markdown and JSON. arXiv preprint arXiv:2503.01151, 2025.

- Zhihao Lin, Jianglin Lan, Christos Anagnostopoulos, Zhen Tian, and David Flynn. Multi-agent Monte Carlo tree search for safe decision making at unsignalized intersections. 2025.

- ZQ Zhu, Peng Wang, NMA Freire, Ziad Azar, and Ximeng Wu. A novel rotor position-offset injection-based online parameter estimation of sensorless controlled surface-mounted PMSMs. IEEE Transactions on Energy Conversion, 39(3):1930–1946, 2024.

- Peng Wang, ZQ Zhu, and Dawei Liang. Improved position-offset based online parameter estimation of PMSMs under constant and variable speed operations. IEEE Transactions on Energy Conversion, 39(2):1325–1340, 2024.

- Jingyi Huang and Yujuan Qiu. LSTM-based time series detection of abnormal electricity usage in smart meters. In 2025 5th International Symposium on Computer Technology and Information Science (ISCTIS), pages 272–276, 2025.

| Method | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| V-Net | 8 / 0 | 78.57 | 66.96 | 21.20 | 6.07 |
| MC-Net | 8 / 72 | 88.99 | 80.32 | 7.92 | 1.76 |
| CAML | 8 / 72 | 89.44 | 81.01 | 10.10 | 2.09 |
| ML-RPL | 8 / 72 | 87.35 | 77.72 | 8.99 | 2.17 |
| Diff-CL [23] | 8 / 72 | 91.00 | 83.54 | 5.08 | 1.68 |
| SCD-Seg (Ours) | 8 / 72 | 91.35 | 83.90 | 4.95 | 1.60 |
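The Dice and Jaccard columns in the table above are overlap metrics between a predicted and a reference mask. As a reminder of their standard definitions, the sketch below computes both for binary masks flattened to lists of 0/1. The function name `dice_and_jaccard` and the toy masks are illustrative, and the distance-based metrics (95HD, ASD) are omitted.

```python
def dice_and_jaccard(pred, target):
    """Overlap metrics for two binary masks given as flat sequences of 0/1."""
    intersection = sum(p and t for p, t in zip(pred, target))
    pred_sum, target_sum = sum(pred), sum(target)
    union = pred_sum + target_sum - intersection
    if union == 0:  # both masks empty: treat as perfect agreement (a convention)
        return 1.0, 1.0
    dice = 2.0 * intersection / (pred_sum + target_sum)
    jaccard = intersection / union
    return dice, jaccard


pred = [1, 1, 1, 0, 0, 0, 1, 0]
target = [1, 1, 0, 0, 0, 1, 1, 0]
dice, jaccard = dice_and_jaccard(pred, target)
print(f"Dice = {dice:.3f}, Jaccard = {jaccard:.3f}")  # Dice = 0.750, Jaccard = 0.600
```

For a single volume the two are linked by Jaccard = Dice / (2 - Dice). Averages over a test set need not satisfy this identity exactly.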

| Method | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| V-Net | 4 / 0 | 72.10 | 60.12 | 28.50 | 7.80 |
| MC-Net | 4 / 76 | 85.15 | 75.30 | 10.85 | 2.51 |
| CAML | 4 / 76 | 86.02 | 76.40 | 11.50 | 2.65 |
| ML-RPL | 4 / 76 | 84.70 | 74.50 | 12.00 | 2.78 |
| Diff-CL [23] | 4 / 76 | 88.55 | 79.50 | 7.10 | 2.05 |
| SCD-Seg (Ours) | 4 / 76 | 89.10 | 80.20 | 6.85 | 1.95 |

| Method | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| V-Net | 25 / 0 | 70.20 | 56.70 | 15.10 | 4.50 |
| MC-Net | 25 / 225 | 78.50 | 67.45 | 10.20 | 3.10 |
| Diff-CL [23] | 25 / 225 | 80.15 | 69.80 | 8.50 | 2.85 |
| SCD-Seg (Ours) | 25 / 225 | 81.20 | 71.30 | 7.90 | 2.70 |
| V-Net | 50 / 0 | 74.50 | 61.50 | 12.80 | 3.90 |
| MC-Net | 50 / 200 | 81.30 | 70.80 | 9.10 | 2.95 |
| Diff-CL [23] | 50 / 200 | 83.05 | 72.80 | 7.30 | 2.50 |
| SCD-Seg (Ours) | 50 / 200 | 84.10 | 74.40 | 6.80 | 2.35 |

| Method | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| V-Net | 6 / 0 | 65.80 | 50.00 | 18.50 | 5.10 |
| MC-Net | 6 / 56 | 73.10 | 59.90 | 13.00 | 3.80 |
| Diff-CL [23] | 6 / 56 | 75.05 | 62.50 | 11.50 | 3.50 |
| SCD-Seg (Ours) | 6 / 56 | 76.30 | 64.00 | 10.80 | 3.20 |
| V-Net | 12 / 0 | 69.90 | 53.70 | 16.20 | 4.70 |
| MC-Net | 12 / 50 | 76.50 | 63.80 | 11.00 | 3.30 |
| Diff-CL [23] | 12 / 50 | 78.80 | 66.50 | 9.80 | 3.00 |
| SCD-Seg (Ours) | 12 / 50 | 80.05 | 68.00 | 9.10 | 2.85 |

| Method Configuration | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| SS only (Supervised) | 8 / 0 | 78.57 | 66.96 | 21.20 | 6.07 |
| SS + CS (w/o CCS, UGPR, AFI) | 8 / 72 | 89.21 | 80.50 | 7.55 | 1.79 |
| SCD-Seg w/o UGPR | 8 / 72 | 90.15 | 81.88 | 6.32 | 1.71 |
| SCD-Seg w/o AFI | 8 / 72 | 90.58 | 82.50 | 5.80 | 1.65 |
| SCD-Seg (Full Model) | 8 / 72 | 91.35 | 83.90 | 4.95 | 1.60 |

| AFI Mechanism | Labeled/Unlabeled | Dice (↑) | Jaccard (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|
| SCD-Seg w/o AFI (Concatenation only) | 8 / 72 | 90.58 | 82.50 | 5.80 | 1.65 |
| SCD-Seg w/ AFI (Simple Summation) | 8 / 72 | 90.75 | 82.75 | 5.55 | 1.64 |
| SCD-Seg w/ AFI (Cross-Attention) | 8 / 72 | 91.05 | 83.20 | 5.20 | 1.61 |
| SCD-Seg w/ AFI (Learned Gating) | 8 / 72 | 91.35 | 83.90 | 4.95 | 1.60 |
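To illustrate the difference between two of the fusion variants compared above, the sketch below contrasts simple summation with a gated combination on a single feature vector. It is a toy illustration and not the authors' AFI module. The convex-combination form of the gate, the function names, and passing the gate logits in directly (instead of predicting them from the features with learned layers) are all assumptions.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def fuse_sum(f_ss, f_cs):
    """Simple summation: both streams contribute equally."""
    return [a + b for a, b in zip(f_ss, f_cs)]


def fuse_gated(f_ss, f_cs, gate_logits):
    """Gated fusion (assumed form): g * f_ss + (1 - g) * f_cs per channel.

    In a trained model the gate logits would be predicted from the features;
    here they are passed in directly to keep the sketch small.
    """
    gates = [sigmoid(z) for z in gate_logits]
    return [g * a + (1.0 - g) * b for g, a, b in zip(gates, f_ss, f_cs)]


f_ss = [1.0, 2.0, 3.0]  # structural-stream (CNN) features, illustrative values
f_cs = [3.0, 2.0, 1.0]  # contextual-stream (Transformer) features
print(fuse_sum(f_ss, f_cs))
print(fuse_gated(f_ss, f_cs, gate_logits=[0.0, 4.0, -4.0]))
```

A gate logit of 0 averages the two streams, while large positive or negative logits let one stream dominate a channel. That per-channel freedom is what summation and plain concatenation lack.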

| Configuration | CCS weight | UGPR weight | AFI weight | Dice (↑) | 95HD (↓) | ASD (↓) |
|---|---|---|---|---|---|---|
| Default Optimal | 0.5 | 1.0 | 0.2 | 91.35 | 4.95 | 1.60 |
| Low CCS | 0.1 | 1.0 | 0.2 | 90.80 | 5.50 | 1.69 |
| High CCS | 1.0 | 1.0 | 0.2 | 91.10 | 5.20 | 1.63 |
| Low UGPR | 0.5 | 0.5 | 0.2 | 90.95 | 5.35 | 1.66 |
| High UGPR | 0.5 | 2.0 | 0.2 | 91.20 | 5.10 | 1.61 |
| Low AFI | 0.5 | 1.0 | 0.1 | 91.15 | 5.18 | 1.62 |
| High AFI | 0.5 | 1.0 | 0.5 | 91.25 | 5.05 | 1.61 |

| Method | Parameters (M) (↓) | Training Time (hours) (↓) | Inference Time (s/volume) (↓) |
|---|---|---|---|
| V-Net | 19.4 | 10.5 | 0.18 |
| MC-Net | 38.8 | 21.0 | 0.35 |
| Diff-CL [23] | 45.1 | 28.5 | 0.42 |
| SCD-Seg (Ours) | 42.5 | 26.0 | 0.38 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).