Submitted: 23 November 2025

Posted: 24 November 2025

Abstract

Keywords:

1. Introduction

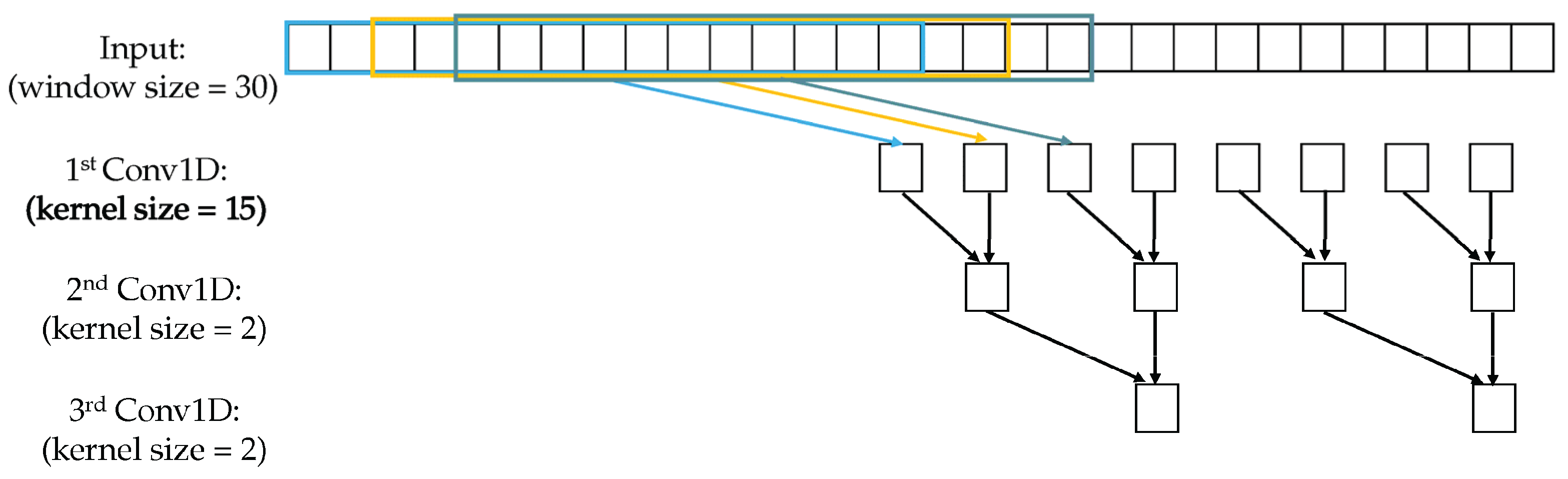

2. Materials and Methods

2.1. Sample Description and Data Sources

2.2. Experimental Design and Baseline Models

2.3. Measurement Methods and Quality Control

2.4. Data Processing and Model Equations

2.5. Statistical Analysis and Model Validation

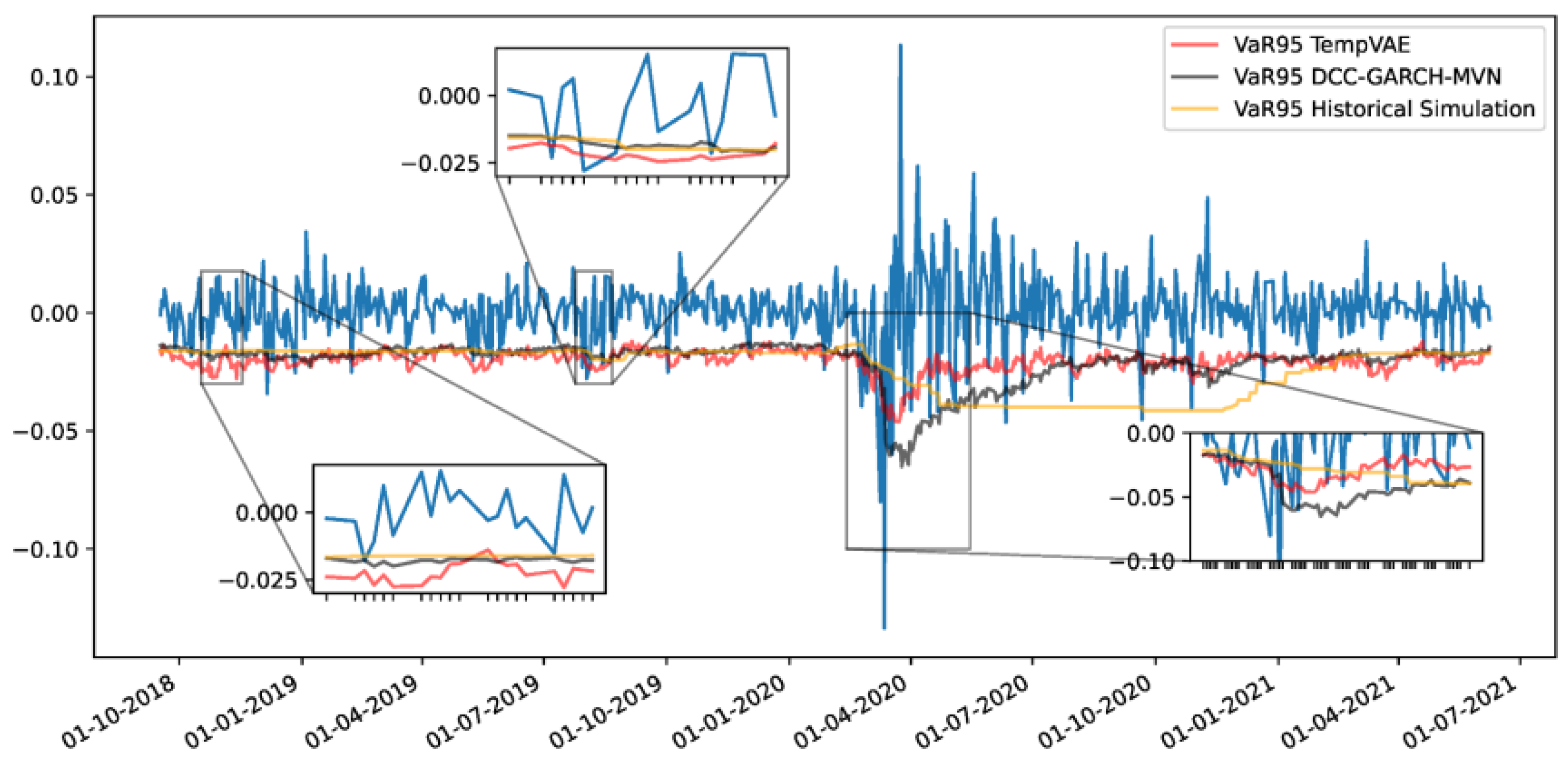

3. Results and Discussion

3.1. Model Accuracy and Feature Contribution

3.2. Comparison with Linear Dimensional Reduction

3.3. Robustness Under Market Shifts

3.4. Comparison with Other Hybrid Prediction Methods

4. Conclusions

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).