This section presents findings from learning analytics and student survey data collected over three academic years (2022/23–2024/25), evaluating the effectiveness of the integrated design framework in supporting student engagement, content quality, learning outcomes, and experiences. Results are organised into four subsections: engagement and completion patterns from learning analytics, quantitative survey findings across four dimensions (satisfaction, content quality, engagement, learning outcomes), longitudinal trends, and qualitative insights from open-ended responses.

5.1. Engagement and Completion Patterns

Over the three-year implementation period, cumulative enrolment exceeded 5,220 students across more than 15 academic programs and five faculties, demonstrating the module’s extensive reach and transdisciplinary scope.

Table 3 presents enrolment, engagement, and completion data for each of the seven DLAT microcurricula across the three cohorts.

Engagement rates, calculated as the percentage of enrolled students who attempted final assessments, varied across microcurricula and years. In 2022/23, DLAT2, DLAT3, and DLAT7 showed lower engagement (47.15–48.82%) due to several programs deferring these topics to subsequent semesters, resulting in inflated enrolment figures relative to active participation. This administrative artefact does not reflect student disengagement but rather programmatic scheduling decisions. In subsequent years, engagement rates for these microcurricula normalised, with the overall average increasing from 68.58% (2022/23) to 74.38% (2023/24) and 78.09% (2024/25).

It is important to note that engagement rates are calculated using total enrolled students as the denominator, including those who dropped courses, became inactive, or deferred participation—a conservative approach that yields lower percentages than calculations based solely on active students. This method provides a realistic assessment of actual participation relative to initial registrations. The engagement metric is reliable because, by mastery learning design, students must complete core content and activities to access final assessments; reaching assessment indicates substantive engagement with instructional materials rather than merely superficial access.
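The effect of the denominator choice can be illustrated with a minimal Python sketch. The counts below are hypothetical and are not taken from Table 3; the function name `engagement_rate` is likewise an illustrative assumption rather than part of the study's analysis pipeline.

```python
def engagement_rate(attempted: int, denominator: int) -> float:
    """Percentage of students in `denominator` who attempted the final assessment."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100.0 * attempted / denominator


# Hypothetical counts for one microcurriculum (NOT values from Table 3).
enrolled = 400              # all registered students, incl. dropped/inactive/deferred
inactive_or_deferred = 80   # students who never actively participated
attempted = 280             # students who attempted the final assessment

# Conservative rate used in this section: total enrolment as the denominator.
conservative = engagement_rate(attempted, enrolled)

# Alternative rate based solely on active students, shown for comparison only.
active_only = engagement_rate(attempted, enrolled - inactive_or_deferred)

print(f"Enrolment-based rate: {conservative:.2f}%")
print(f"Active-only rate:     {active_only:.2f}%")
```

With these illustrative counts the enrolment-based rate is lower than the active-only rate, which is the sense in which the reported figures are conservative.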

Pass rates, calculated as the percentage of students who attempted assessments and successfully completed them, were consistently high across all years and microcurricula, exceeding 95% in nearly all cases. Average pass rates increased from 95.70% (2022/23) to 96.92% (2023/24) and 99.27% (2024/25), with several microcurricula achieving 100% pass rates in later years. These rates substantially exceed typical completion rates for voluntary non-credit online courses and MOOCs, which often range from 5% to 15% [54, 55], and compare favourably even with mandatory credit-bearing online courses in higher education.
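As a hedged illustration of the pass-rate calculation, the sketch below computes per-microcurriculum rates from attempt counts and then aggregates them in two ways. The text does not state whether the yearly averages are unweighted means of the per-microcurriculum rates or pooled rates, so both are shown as assumptions; all counts are hypothetical and not drawn from Table 3.

```python
def pass_rate(passed: int, attempted: int) -> float:
    """Percentage of students who attempted the assessment and passed it."""
    if attempted <= 0:
        raise ValueError("attempted must be positive")
    return 100.0 * passed / attempted


# Hypothetical (attempted, passed) counts per microcurriculum (NOT Table 3 data).
counts = {"DLAT1": (200, 196), "DLAT2": (50, 50), "DLAT3": (100, 94)}

per_module = {name: pass_rate(p, a) for name, (a, p) in counts.items()}

# Option 1 (assumption): unweighted mean of the per-microcurriculum rates.
unweighted = sum(per_module.values()) / len(per_module)

# Option 2 (assumption): pooled rate, total passes over total attempts.
total_attempted = sum(a for a, _ in counts.values())
total_passed = sum(p for _, p in counts.values())
pooled = pass_rate(total_passed, total_attempted)

print(per_module)
print(f"Unweighted mean: {unweighted:.2f}%  Pooled: {pooled:.2f}%")
```

The two aggregates differ whenever microcurricula have unequal numbers of attempts, so the aggregation rule matters when comparing averages across years.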

While direct comparisons require caution due to differences in context, incentive structures, and completion definitions, the DLAT module’s sustained high completion rates are notable. Similar high rates (≥90%) have been reported for mandatory faculty development programs with strong institutional support [56], suggesting that credit-bearing status, assessment requirements, and embedded support mechanisms (all features of the DLAT design) contribute significantly to completion. The combination of microcurricula modularity, extensive formative assessment with feedback, interactive content, mastery learning support, and constructive alignment likely accounts for these exceptionally strong outcomes.

5.2. Survey Responses: Quantitative Findings

The end-of-module survey yielded 1,743 complete responses across three years, representing a 33% response rate relative to total enrolment. The following subsections present findings organised by the four survey dimensions: Satisfaction, Content Quality, Engagement, and Learning Outcomes.
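The reported response rate can be checked with simple arithmetic. The sketch below uses the two figures stated in the text; because cumulative enrolment is reported as a lower bound (more than 5,220), the computed value should be read as an approximate upper bound on the true rate.

```python
complete_responses = 1743     # complete survey responses reported in this section
cumulative_enrolment = 5220   # lower bound on enrolment reported in Section 5.1

# Response rate relative to total enrolment, as a percentage.
response_rate = 100.0 * complete_responses / cumulative_enrolment

print(f"Response rate: {response_rate:.1f}%")
```

Rounded to the nearest whole percentage, this agrees with the 33% stated above.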

5.2.1. Satisfaction with Module Design and Delivery

Students consistently reported high satisfaction with the module’s design, clarity of learning outcomes, and alignment between stated objectives and actual instruction (Table 4). In 2022/23, the inaugural year with smaller cohorts and response samples, mean satisfaction across the three items was 3.85 (between “Neutral” and “Agree”). Satisfaction increased in subsequent years to means of 3.94 (2023/24) and 4.08 (2024/25), with the aggregated three-year mean reaching 4.02 (“Agree”).

The progressive improvement in satisfaction scores across years likely reflects iterative refinements to module design based on ongoing evaluation and student feedback. By 2024/25, over 85% of respondents agreed or strongly agreed that learning outcomes were clearly stated (Q1), expectations were clear (Q2), and outcomes aligned with instruction (Q3). These findings suggest that the constructive alignment framework—explicitly linking outcomes, activities, and assessments—was effectively communicated to students and perceived as coherent.
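For readers unfamiliar with the two summary statistics used throughout this section (the item mean and the share of respondents who agreed or strongly agreed), the following minimal sketch shows how both can be derived from raw Likert responses. It assumes a five-point coding from 1 (“Strongly disagree”) to 5 (“Strongly agree”); the response list is hypothetical and the function name `likert_summary` is illustrative.

```python
def likert_summary(responses: list[int]) -> tuple[float, float]:
    """Return (mean score, percentage of responses coded 4 or 5)."""
    if not responses:
        raise ValueError("no responses")
    if any(r not in range(1, 6) for r in responses):
        raise ValueError("responses must be integers from 1 to 5")
    mean = sum(responses) / len(responses)
    # "Agree" (4) and "Strongly agree" (5) are counted together.
    agree_pct = 100.0 * sum(1 for r in responses if r >= 4) / len(responses)
    return mean, agree_pct


# Hypothetical responses to a single survey item (NOT study data).
responses = [5, 4, 4, 3, 5, 4, 2, 4, 5, 4]
mean_score, agree_pct = likert_summary(responses)

print(f"Mean = {mean_score:.2f}, agree or strongly agree = {agree_pct:.0f}%")
```

The two statistics are complementary: the mean summarises the whole distribution, while the agreement share shows how many respondents fall at the positive end of the scale.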

5.2.2. Content Quality, Diversity, and Relevance

Students evaluated content positively across all quality dimensions, including preparation, quality, format diversity, and disciplinary relevance (Table 5). Mean scores increased from 3.74 (2022/23) to 3.99 (2023/24) and 4.03 (2024/25), with the aggregated three-year mean of 3.99 indicating consistent agreement that content met quality standards.

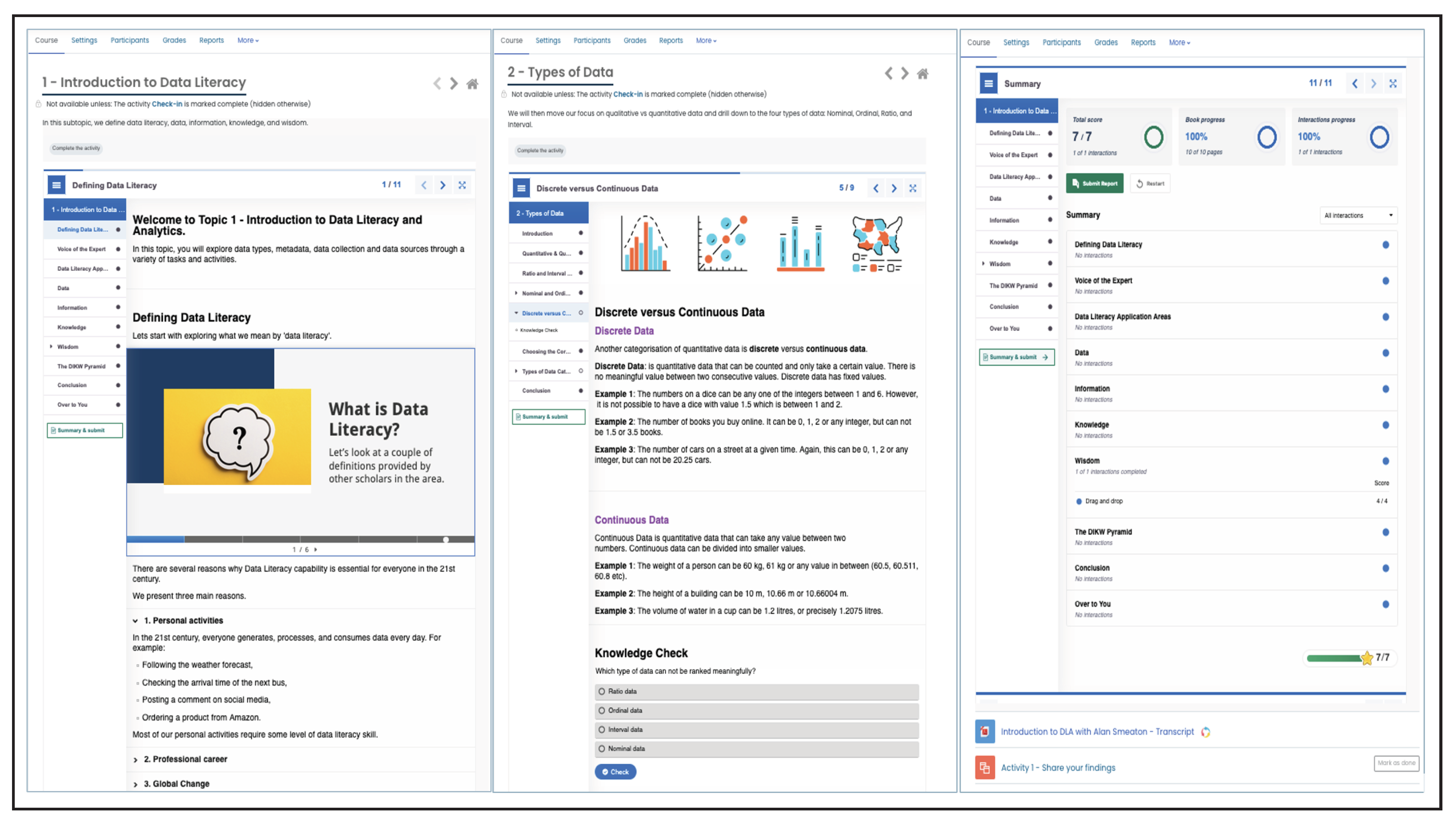

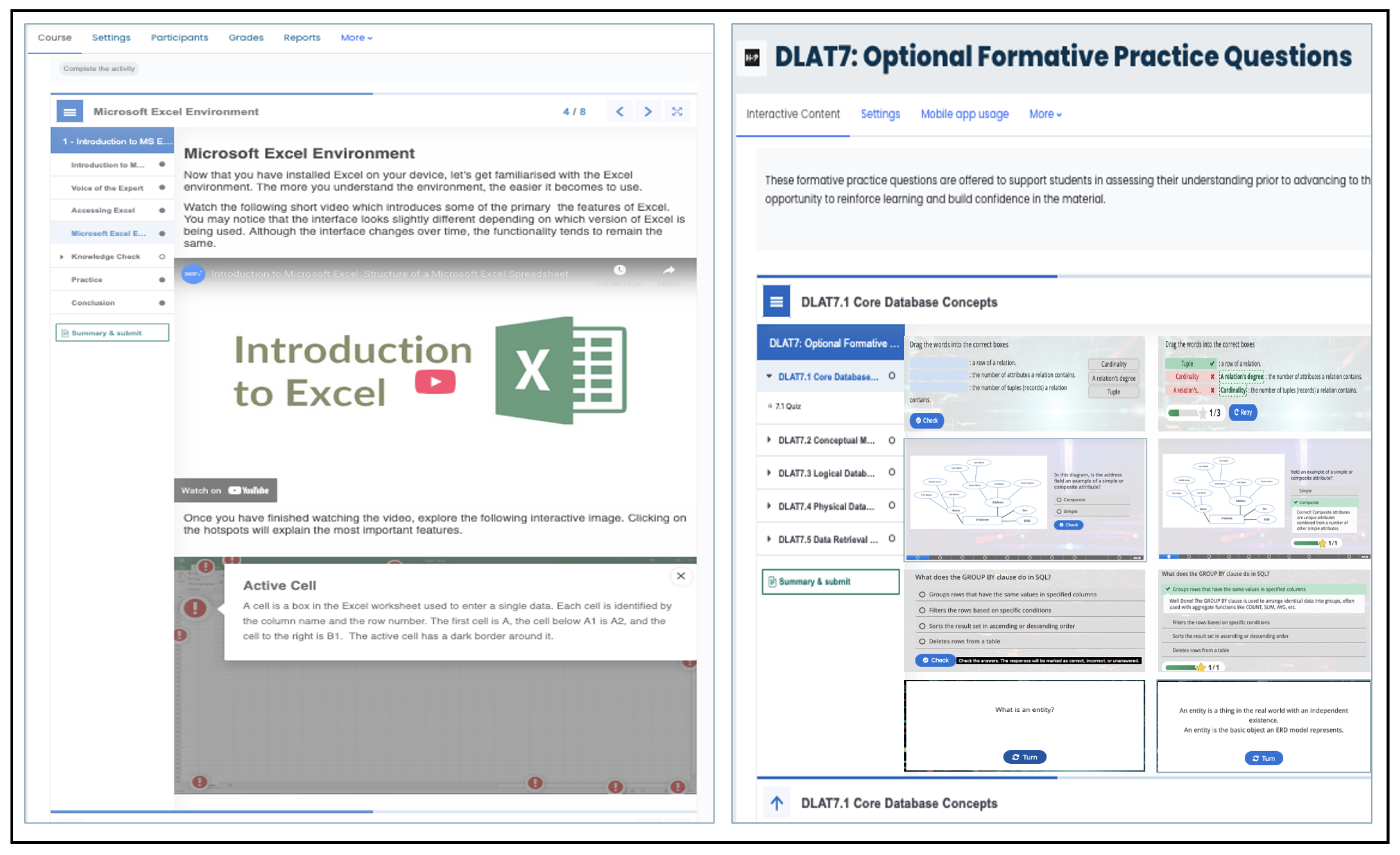

Particularly strong agreement emerged for items regarding material preparation (Q4, M=4.09) and quality (Q5, M=4.07), suggesting that the H5P interactive books, multimodal resources, and structured content design met students’ expectations. Format diversity (Q7, M=3.96) was also positively evaluated, reflecting the UDL principle of multiple means of representation through varied content types including text, videos, interactive elements, and expert interviews (“fireside chats”).

Disciplinary relevance (Q8, M=3.87) showed the lowest mean among content items but still reflected agreement. This is notable given the module’s transdisciplinary nature—serving 15+ programs with diverse content emphases. That students across varied disciplines generally found content relevant speaks to the effectiveness of the microcurricula pathway structure (Spreadsheets, R, Python) and the incorporation of domain-agnostic foundational topics alongside tool-specific skills development.

Qualitative feedback corroborated these findings, with students praising “the structure, the mix between text, slides, questions, practical tasks, and quizzes.” However, some students reported challenges navigating multiple course pages—an artefact of the microcurricula modular structure—suggesting an area for interface design improvement. Additionally, in 2022/23, some students found the 80% passing threshold challenging for certain assessments, prompting subsequent adjustments to assessment difficulty calibration and provision of additional formative practice opportunities.

5.2.3. Engagement and Intellectual Stimulation

Student engagement with the module, as measured by perceptions of content presentation effectiveness and intellectual stimulation, showed progressive improvement across years (Table 6). Mean engagement scores increased from 3.11 (2022/23) to 3.70 (2023/24) and 3.82 (2024/25), with the aggregated mean of 3.75 indicating agreement that the module engaged attention and provided intellectual challenge.

The lower engagement scores in 2022/23, particularly for intellectual stimulation (Q10, M=3.00, “Neutral”), likely reflect multiple factors: the module’s novelty for both students and instructors, minor technical issues during initial deployment (particularly in the first semester), and students’ unfamiliarity with fully asynchronous online learning formats. The substantial improvement in subsequent years (Q6: +0.68 points; Q10: +0.75 points from 2022/23 to 2024/25) aligns with the introduction of enhanced interactive H5P activities, refined formative assessment integration, and increased peer collaboration opportunities through discussion forums and peer-learning activities.

Qualitative responses provided additional context. While many students praised interactive elements and engagement features, a recurring challenge was the absence of face-to-face instruction—a preference expressed by students accustomed to synchronous, lecture-based formats. Time management emerged as the most frequently reported challenge, particularly for students new to asynchronous learning who struggled with self-pacing and deadlines. Students noted difficulties including “too much new information”, “lack of face-to-face interaction,” and “text-heavy content” in some sections. However, almost all students indicated experiencing no major difficulties completing the module, suggesting these concerns represented minority perspectives rather than systemic barriers.

5.2.4. Learning Outcomes and Knowledge Gains

Students consistently reported strong perceived learning outcomes, including knowledge transferability to their disciplines and increased data literacy competencies (Table 7). Mean scores were 3.64 (2022/23), 4.01 (2023/24), and 4.06 (2024/25), with an aggregated mean of 4.03 indicating agreement that the module enhanced their knowledge and skills.

Students endorsed knowledge increase particularly strongly (Q11, M=4.10), with over 80% agreeing or strongly agreeing across all years. Transferability to disciplinary contexts (Q9, M=3.95) also received strong endorsement, though slightly lower, likely reflecting variability in how directly different programs apply data literacy skills. These self-reported learning gains align with the objective pass rates (>95%) discussed in Section 5.1, suggesting convergence between perceived and demonstrated competency achievement.

Qualitative responses reinforced these findings. Students frequently cited practical skills—particularly spreadsheet functions, data visualisation, and programming basics—as valuable learning outcomes with clear applicability: “The EXCEL functions were pretty useful”, “Learning the broad variety of uses Python has”, “It was very informative while providing supportive services for us as first years.” The integration of formative assessments with immediate feedback was repeatedly highlighted as supporting learning: “I enjoyed the formative assessments as they gave you an indication of your level of knowledge.”

However, students reported cognitive load challenges: “The amount of new terminology took some time to process” and “There was too much to do.” These comments suggest that for some learners, particularly those with limited prior technical experience, the breadth of content represented a significant challenge despite the scaffolding and support mechanisms provided through weekly synchronous drop-in sessions. This points to a tension inherent in comprehensive data literacy education: balancing thorough coverage with manageable cognitive demands for diverse learners.

5.3. Longitudinal Trends Across Three Years

Aggregating data across all survey dimensions reveals consistent positive trends over the three-year implementation. Satisfaction scores increased modestly from 3.85 to 4.08 (+0.23 points), reflecting iterative design improvements and clarification of learning expectations. Content quality ratings improved from 3.66 to 3.99 (+0.33 points), suggesting refinements to materials, enhanced multimodal integration, and improved disciplinary relevance through pathway development.

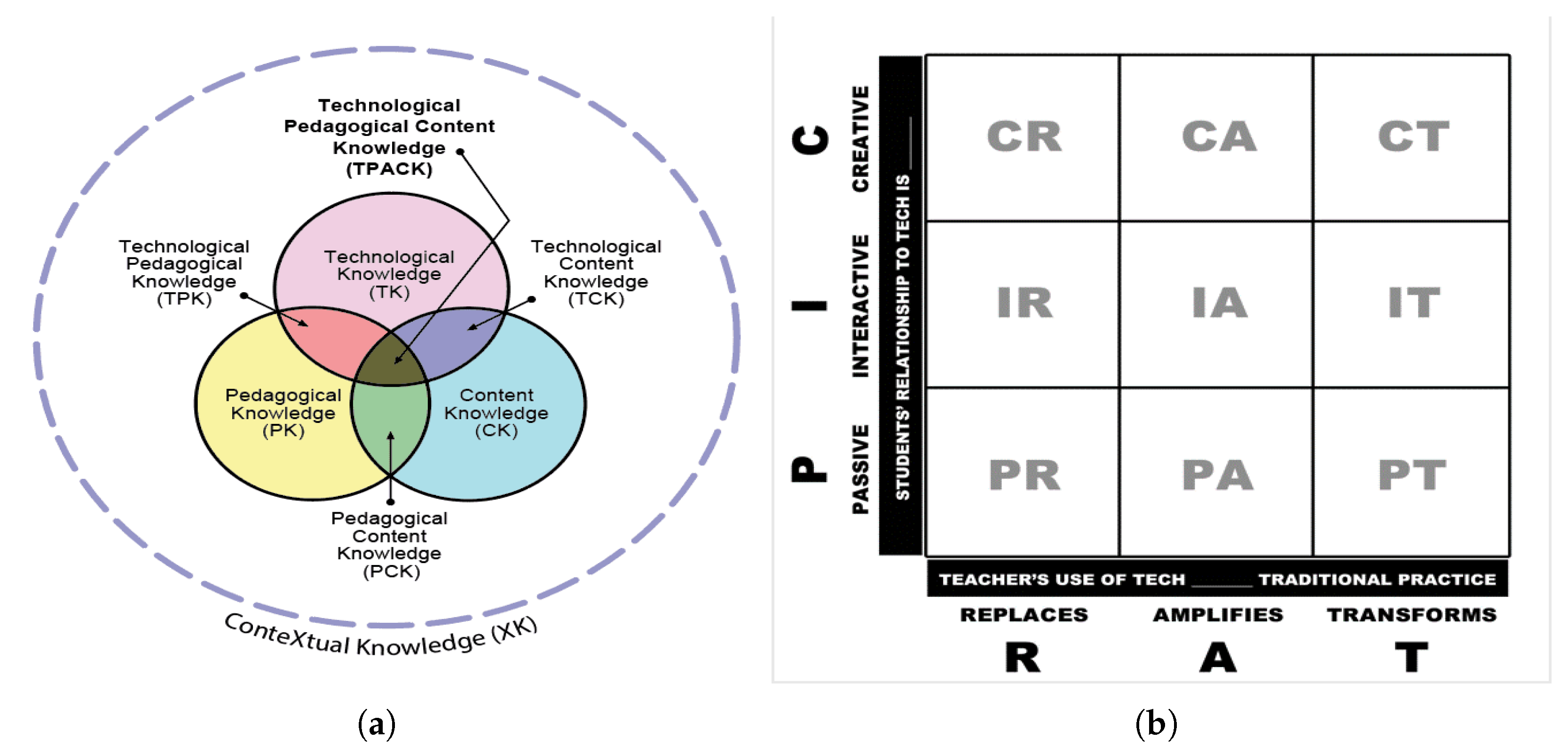

Engagement demonstrated the most substantial improvement, increasing from 3.11 to 3.82 (+0.71 points)—a notable shift from neutral to agreement. This improvement aligns temporally with the expansion of interactive H5P content types, introduction of additional collaborative peer-learning activities, and inclusion of several formative assessments co-created with students. The engagement gains suggest that deliberate application of the PICRAT framework to enhance interactivity and creativity in technology use translated into measurably improved student perceptions.
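The reported three-year changes for satisfaction and engagement follow directly from the yearly means given in Sections 5.2.1 and 5.2.3. The sketch below reproduces that arithmetic; the dictionary layout and the helper name `change` are illustrative assumptions, while the means themselves are the values stated in the text.

```python
# Yearly mean scores as reported in Sections 5.2.1 and 5.2.3.
yearly_means = {
    "satisfaction": {"2022/23": 3.85, "2023/24": 3.94, "2024/25": 4.08},
    "engagement": {"2022/23": 3.11, "2023/24": 3.70, "2024/25": 3.82},
}


def change(means: dict[str, float], start: str, end: str) -> float:
    """Difference in mean score between two academic years, to two decimals."""
    return round(means[end] - means[start], 2)


for dimension, means in yearly_means.items():
    delta = change(means, "2022/23", "2024/25")
    print(f"{dimension}: {delta:+.2f} points")
```

Rounding to two decimals avoids floating-point noise in the subtraction and matches the precision at which the means are reported.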

Learning outcome perceptions remained consistently high throughout, indicating sustained effectiveness of the pedagogical approach regardless of iterative refinements. The stability of learning outcome scores, combined with increasing satisfaction, content, and engagement ratings, suggests that the foundational design (grounded in UDL, constructive alignment, and active learning) was sound from inception, with improvements addressing experience quality rather than fundamental effectiveness.

Collectively, these longitudinal patterns demonstrate that ongoing refinements guided by the integrated framework and informed by continuous evaluation contributed to improved learner experiences while maintaining consistent learning outcomes. The positive trajectory across all dimensions provides evidence that the systematic approach to integrating pedagogy, technology, and content produced a sustainable, improvable design rather than a static implementation.

5.4. Qualitative Insights from Open-Ended Responses

Analysis of open-ended responses (excluding short non-informative entries such as “No” or “None”) revealed predominantly positive sentiment (approximately 82% positive polarity), with neutral comments accounting for most remaining responses and negative comments representing a small minority.

5.4.1. Valued Features and Effective Design Elements

Students consistently praised several features aligned with the integrated framework’s design principles:

Interactivity and Immediate Feedback: The extensive formative assessment questions (more than 350, distributed across the seven DLATs) with immediate, detailed feedback were frequently cited as particularly valuable: “I enjoyed the formative assessments as they gave you an indication of your level of knowledge”, “The immediate feedback helped me understand where I went wrong.” This aligns with the framework’s emphasis on Interactive-Amplification (PICRAT), where technology enables rapid feedback loops impossible at scale without automation.

Multimodal Content and Accessibility: Students appreciated the variety of content formats (interactive books, videos, expert interviews, quizzes, etc.), reflecting successful implementation of UDL’s multiple means of representation: “The mix between text, slides, practical tasks, and quizzes”, “Different styles such as videos and fireside chats made topics more engaging.” The ability to access content flexibly across devices and revisit materials supported diverse learning preferences and schedules.

Tool Choice and Practical Application: Students valued the ability to select tool pathways (Spreadsheets, R, Python) aligned with their program requirements and interests, exemplifying UDL’s multiple means of engagement: “Being able to choose Python was great for my major”, “Excel skills will be directly useful in my career.” The emphasis on practical, applicable skills resonated strongly: “Learning the broad variety of uses Python has”, “The EXCEL functions were pretty useful in the lab.”

Self-Paced Asynchronous Structure: Many students appreciated the flexibility of asynchronous access and self-pacing, particularly beneficial for managing diverse schedules: “I could work through content when it suited my timetable”, “The ability to go at my own pace helped me really understand concepts”. However, this same flexibility posed challenges for some students (discussed below), illustrating the double-edged nature of learner autonomy.

5.4.2. Challenges and Suggested Improvements

Students identified several challenges, providing valuable insights for ongoing refinement:

Time Management and Self-Regulation: The most frequently mentioned challenge was managing time effectively in an asynchronous format, particularly for students new to fully online learning: “Time management was difficult without set lecture times”, “I left things too late because there weren’t weekly deadlines.” This suggests that while asynchronous flexibility benefits many learners, some require additional scaffolding for self-regulation—an area for potential enhancement through progress reminders or optional milestone structures.

Cognitive Load and Content Volume: Some students found the breadth and depth of content overwhelming, particularly when tackling multiple microcurricula simultaneously: “Too much new terminology took time to process”, “There was too much to do.” These comments highlight a tension between comprehensive coverage and manageable cognitive demands, suggesting potential value in clearer guidance about content prioritisation or staged engagement strategies.

Absence of Face-to-Face Interaction: A recurring request was for synchronous lecture components or optional face-to-face sessions, reflecting some students’ preference for traditional instructional formats: “I missed having lectures to ask questions in real-time”, “Face-to-face would help with difficult concepts.” While the module’s fully asynchronous design serves scaling and accessibility needs, optional synchronous support sessions (office hours, drop-in clinics) were added to address this concern without compromising the asynchronous core.

Navigation and Module Structure: Some students found navigating across multiple microcurricula course pages challenging: “It was confusing having so many separate pages”, “Navigation between topics could be clearer.” This feedback points to potential improvements in interface design, course organisation, or provision of clearer navigation aids—addressing usability without altering pedagogical fundamentals.

Requests for Domain-Specific Examples: Students across disciplines requested more examples directly tied to their specific fields: “More business-related data examples would help”, “Case studies from health sciences would make it more relevant”. The module’s transdisciplinary nature necessitates balance: pathway-specific case studies and optional discipline-aligned examples were already included, but additional examples might enhance perceived relevance without fragmenting core content.

5.4.3. Synthesis of Qualitative Findings

The convergence between positive sentiment and constructive feedback demonstrates students’ reflective engagement with the module and perceived relevance to their learning goals. The features students valued most—interactivity, feedback, multimodality, flexibility, practical application—directly map onto the integrated framework’s design principles (PICRAT Interactive/Creative uses, UDL multiple means, constructive alignment of authentic tasks). Conversely, challenges identified—time management, cognitive load, desire for synchronous interaction, navigation complexity—reveal areas where additional scaffolding, clearer guidance, or optional supplementary support might enhance the experience for learners who struggle with fully asynchronous, self-directed formats.

Importantly, the qualitative data contextualise the quantitative findings: high pass rates and positive survey responses do not imply universal ease or absence of struggle. Rather, they suggest that the design successfully supports diverse learners in achieving outcomes despite varied challenges. The minority of students who found content overwhelming or missed face-to-face interaction still generally completed successfully, indicating that the extensive formative support, interactive elements, and flexible pacing provided sufficient scaffolding even when the learning experience felt demanding.

The qualitative insights also validate the framework’s theoretical foundations. Students’ explicit appreciation for features aligned with UDL principles (choice, multimodality, flexibility), active learning strategies (practical application, problem-solving), and technology integration (interactivity, immediate feedback, creative production) provides direct evidence that these pedagogical approaches were not merely implemented but meaningfully experienced and valued by learners. The alignment between design intentions and student perceptions suggests fidelity of implementation and validates the framework’s applicability to large-scale online education contexts.

5.5. Summary of Results

The results from three years of implementation demonstrate that the integrated design framework successfully supported interactive and inclusive online learning at substantial scale. Learning analytics revealed high engagement rates (69–78% of enrolled students attempting assessments) and exceptional pass rates (95–99%), substantially exceeding typical online course completion rates. Quantitative survey data showed consistently positive student perceptions across all dimensions—satisfaction (M=4.02), content quality (M=3.96), engagement (M=3.75), and learning outcomes (M=4.03)—with notable improvements over time, particularly in engagement (+0.71 points). Qualitative feedback corroborated these findings, with students praising interactive features, multimodal content, practical applicability, and flexibility while identifying time management, cognitive load, and preference for synchronous interaction as challenges for some learners.

The longitudinal trends demonstrate not only initial effectiveness but also sustained improvement through iterative refinement guided by ongoing evaluation and the integrated framework’s principles. The convergence of objective completion data, self-reported perceptions, and qualitative insights provides robust triangulated evidence that the systematic integration of pedagogy, technology, and content design produced measurable benefits for diverse student populations in an online asynchronous environment.