Submitted: 13 November 2025

Posted: 19 November 2025

Abstract

Keywords:

1. Introduction

2. Methods

2.1. Research Design

2.2. Dataset

2.3. Baseline CNN

| Component | Output Shape | Parameters |
|---|---|---|
| Input + Augmentation | (224, 224, 3) | 0 |
| Conv2D (32 filters) + BN + MaxPool | (112, 112, 32) | 9,200 |
| Conv2D (64 filters) + BN + MaxPool | (56, 56, 64) | 37,900 |
| Conv2D (128 filters) + BN + MaxPool | (28, 28, 128) | 150,600 |
| Conv2D (256 filters) + BN + MaxPool | (14, 14, 256) | 295,200 |
| Flatten | (50176) | 0 |
| Dense (512, ReLU, L2 0.01) + Dropout (0.7) | (512) | 25,690,000 |
| Dense (3, Softmax) | (3) | 1,539 |
| Total Parameters | 26,185,439 | |
| Trainable Parameters | 26,185,439 | |
| Non-trainable Parameters | 0 | |
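The Flatten and Dense rows of the table can be checked by hand with the standard weight-plus-bias formulas. The sketch below is only such a check, not the authors' code. The helper names are illustrative, and the 3x3 kernel size used for the last convolution is an assumption, since the table does not state it.

```python
def conv2d_params(kernel, c_in, c_out):
    """Weights plus biases of a Conv2D layer with a square kernel."""
    return kernel * kernel * c_in * c_out + c_out


def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


flatten_size = 14 * 14 * 256                     # 50176, as in the Flatten row
hidden_dense = dense_params(flatten_size, 512)   # 25690624, listed as 25,690,000
output_dense = dense_params(512, 3)              # 1539, as in the output row

# Assumption: 3x3 kernels (the kernel size is not given in the table).
last_conv = conv2d_params(3, 128, 256)           # 295168, listed as 295,200

print(flatten_size, hidden_dense, output_dense, last_conv)
```

Under these formulas the 512-unit dense layer alone holds roughly 98% of the listed total, which suggests that most of the baseline's capacity sits in the classifier head rather than in the convolutional blocks.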

- Optimizer: RMSprop with a learning rate of 0.001 ensures stable and efficient training.

- Batch Size: 32 balances memory usage and training speed, suitable for the dataset size.

- Epochs: Up to 20, with early stopping after 10 epochs if validation performance plateaus.

- Callbacks:

  - EarlyStopping: Tracks validation loss, stopping training after 10 epochs without improvement and restoring the best weights.

  - ModelCheckpoint: Saves the model with the highest validation accuracy.

  - ReduceLROnPlateau: Reduces the learning rate by half if validation loss stalls for 3 epochs, with a minimum of 0.000001.
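How the learning-rate reduction and early stopping interact over a run can be illustrated with a small plain-Python simulation. This is a simplified sketch of the behavior described in the list, not the Keras implementation: it ignores details such as minimum-delta thresholds and cooldown, and the function name and the loss sequence are hypothetical.

```python
def simulate_schedule(val_losses, lr=1e-3, factor=0.5, lr_patience=3,
                      min_lr=1e-6, stop_patience=10):
    """Return (best_epoch, last_epoch_run, final_lr) for a validation-loss trace."""
    best_loss = float("inf")
    best_epoch = None
    lr_wait = 0     # epochs without improvement since the last LR change
    stop_wait = 0   # epochs without improvement, for early stopping
    last_epoch = len(val_losses) - 1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            lr_wait = stop_wait = 0
            continue
        lr_wait += 1
        stop_wait += 1
        if lr_wait >= lr_patience:
            lr = max(lr * factor, min_lr)   # halve, but never below the floor
            lr_wait = 0
        if stop_wait >= stop_patience:
            last_epoch = epoch              # training stops here
            break
    return best_epoch, last_epoch, lr


# Hypothetical trace: the loss improves once, then plateaus for 19 epochs.
plateau = [1.0] * 20
best_epoch, last_epoch, final_lr = simulate_schedule(plateau)
print(best_epoch, last_epoch, final_lr)
```

In this simplified model, with a 20-epoch cap and a patience of 10, early stopping can only cut training short when the last improvement falls in the first half of the run; the learning rate is halved several times before that point.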

2.4. Transfer Learning

- Global Average Pooling (GAP) condenses feature maps into a 1280-dimensional vector, reducing computational complexity.

- A dense layer with 256 neurons uses ReLU activation and a 0.5 dropout rate to enhance generalization and prevent overfitting.

- A final output layer with 3 neurons and softmax activation provides class probabilities for lane dividers, pedestrian crossings, and speed bumps.

| Component | Output Shape | Parameters |
|---|---|---|
| EfficientNetB0 (Base, Frozen) | (None, 7, 7, 1280) | 4,049,571 (non-trainable) |
| Global Average Pooling (GAP) | (None, 1280) | 0 |
| Dense (256 neurons, ReLU, Dropout 0.5) | (None, 256) | 327,936 |
| Dense (3 neurons, Softmax) | (None, 3) | 771 |
| Total Parameters | 4,378,278 | |
| Trainable Parameters | 328,707 | |
| Non-trainable Parameters | 4,049,571 | |
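The pooling step and the head's parameter counts in this table can be reproduced with a few lines of plain Python. The sketch below is illustrative only: it uses a toy 7x7x4 feature map in place of the real 7x7x1280 one, and the helper names are not taken from the paper.

```python
def global_average_pool(feature_map):
    """Average an H x W x C nested list over its spatial axes, giving C values."""
    height = len(feature_map)
    width = len(feature_map[0])
    channels = len(feature_map[0][0])
    totals = [0.0] * channels
    for row in feature_map:
        for pixel in row:
            for c, value in enumerate(pixel):
                totals[c] += value
    return [t / (height * width) for t in totals]


def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


# Toy map: every spatial position holds the channel index as its value.
toy_map = [[[float(c) for c in range(4)] for _ in range(7)] for _ in range(7)]
pooled = global_average_pool(toy_map)

frozen_base = 4_049_571                                   # from the table
trainable_head = dense_params(1280, 256) + dense_params(256, 3)
total = frozen_base + trainable_head

print(pooled, trainable_head, total)
```

The head computed this way has 328,707 trainable parameters, matching the table, so only about 7.5% of the network's weights are updated during training.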

- Optimizer: Adam with a learning rate of 0.0001 ensures stable and efficient training.

- Batch Size: 32 balances memory usage and training speed, suitable for the dataset size.

- Epochs: Up to 10, with early stopping after 7 epochs if validation performance plateaus.

- Callbacks:

  - EarlyStopping: Tracks validation loss, stopping training after 10 epochs without improvement and restoring the best weights.

  - ModelCheckpoint: Saves the model with the highest validation accuracy.

  - ReduceLROnPlateau: Reduces the learning rate by half if validation loss stalls for 3 epochs, with a minimum of 0.000001.

2.5. Experimental Setup

3. Results

4. Discussion

| Class | Training Images | Validation Images | Test Images |
|---|---|---|---|
| Lane Divider | ∼159 | ∼68 | 25 |
| Pedestrian Crossing | ∼162 | ∼69 | 25 |
| Speed Bump | ∼174 | ∼76 | 25 |
| Total | ∼495 | ∼213 | 75 |
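The split proportions implied by these counts can be worked out directly. The short sketch below treats the approximate (∼) counts as exact for the purpose of the arithmetic, which is an assumption; it only summarizes the table and does not reproduce the authors' splitting procedure.

```python
# (training, validation, test) image counts per class, copied from the table.
splits = {
    "Lane Divider": (159, 68, 25),
    "Pedestrian Crossing": (162, 69, 25),
    "Speed Bump": (174, 76, 25),
}

for name, (train, val, _test) in splits.items():
    share = train / (train + val)
    print(f"{name}: training share of train+val = {share:.3f}")

total_train = sum(train for train, _, _ in splits.values())
total_val = sum(val for _, val, _ in splits.values())
total_test = sum(test for _, _, test in splits.values())
overall_share = total_train / (total_train + total_val)

print(total_train, total_val, total_test, round(overall_share, 3))
```

The per-class and overall training shares all come out close to 0.70, which is consistent with an approximately 70/30 training/validation split alongside a fixed test set of 25 images per class.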

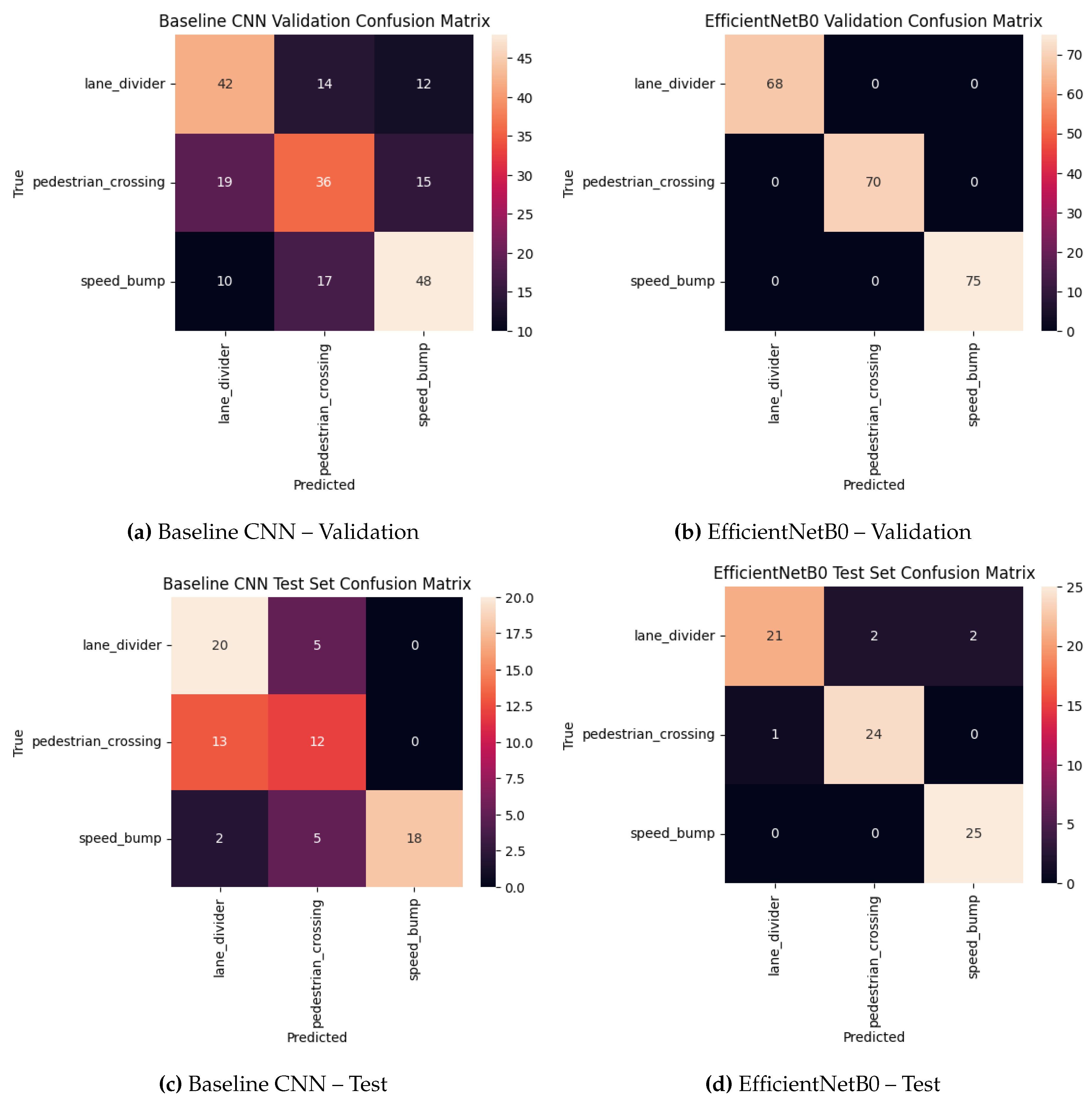

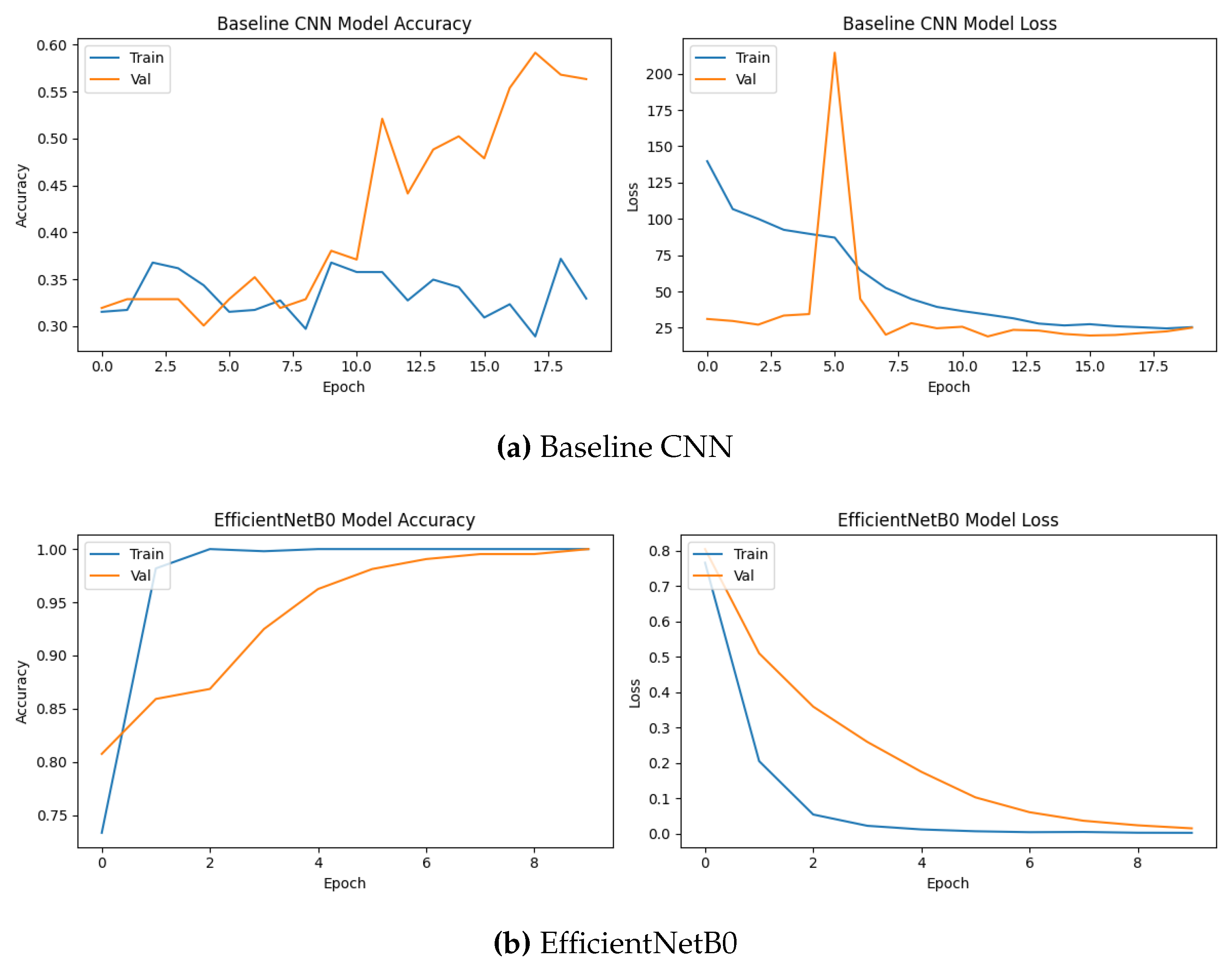

| Model | Val Acc | Val Macro F1 | Test Acc | Test Macro F1 |
|---|---|---|---|---|
| Baseline CNN | 0.563 | 0.590 | 0.667 | 0.672 |
| EfficientNetB0 (TL) | 1.000 | 1.000 | 0.933 | 0.932 |
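For readers less familiar with the two reported metrics, the sketch below computes accuracy and macro F1 from a confusion matrix. The matrix is hypothetical and chosen only so that 70 of 75 test images are correct (an accuracy of about 0.933); it is not the paper's confusion matrix, and the function names are illustrative.

```python
def accuracy(confusion):
    """Fraction of samples on the diagonal; rows are true classes."""
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total


def macro_f1(confusion):
    """Unweighted mean of per-class F1; rows are true classes, columns predictions."""
    n = len(confusion)
    scores = []
    for k in range(n):
        tp = confusion[k][k]
        fp = sum(confusion[i][k] for i in range(n)) - tp
        fn = sum(confusion[k]) - tp
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / n


# Hypothetical 3-class matrix with 25 test images per class (not from the paper).
hypothetical = [
    [25, 0, 0],   # lane divider
    [2, 22, 1],   # pedestrian crossing
    [0, 2, 23],   # speed bump
]
acc = accuracy(hypothetical)
f1 = macro_f1(hypothetical)
print(round(acc, 3), round(f1, 3))
```

Because each class has the same number of test images, accuracy and macro F1 tend to stay close to each other, as they do in the table above.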

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).