Submitted: 12 November 2025

Posted: 19 November 2025


Abstract

Keywords:

1. Introduction

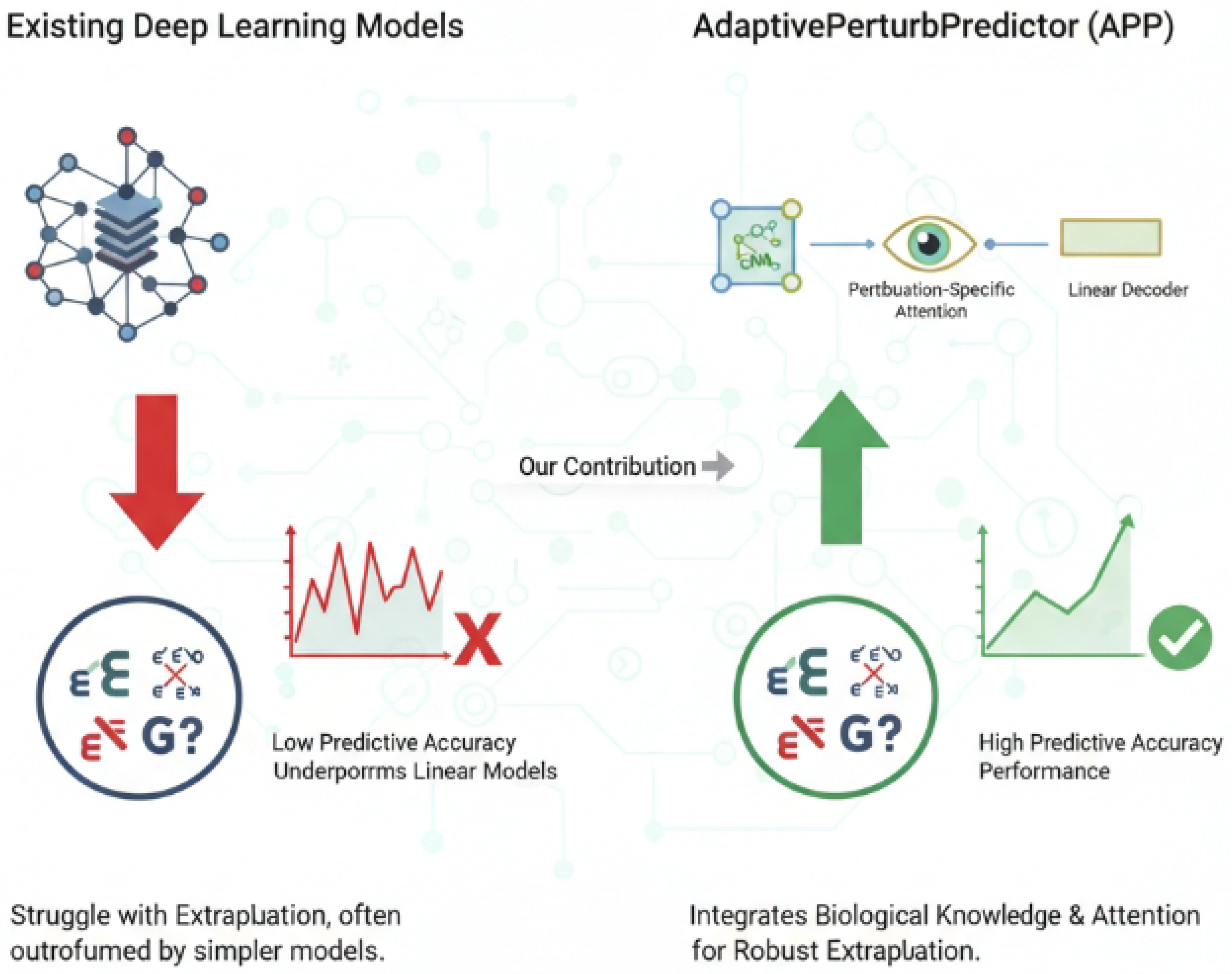

- We propose AdaptivePerturbPredictor (APP), a lightweight deep learning framework that encodes gene regulatory networks with graph neural networks (GNNs) and uses a perturbation-specific attention mechanism to predict the transcriptional effects of gene perturbations.

- We demonstrate that APP outperforms both simple linear baselines and existing deep learning "foundation models" in predicting the effects of double gene perturbations, indicating better extrapolation to gene combinations not seen during training.

- We show that APP achieves state-of-the-art performance in predicting the effects of unseen single gene perturbations, i.e., perturbations of genes not encountered during training, which indicates improved generalization.

2. Related Work

2.1. Computational Models for Gene Perturbation Prediction

2.2. Graph Neural Networks and Attention Mechanisms in Computational Biology

3. Method

3.1. Overall Architecture of AdaptivePerturbPredictor (APP)

- Gene Embedding via Graph Neural Networks (GNNs): First, a multi-layer GNN processes the biological network and learns a contextual embedding for each gene by aggregating information from its network neighbors. Unlike a plain gene identity, each embedding encodes the gene's position and influence within the network topology, capturing its relationships and functional role in the broader biological system.

- Perturbation-Specific Attention Module: Next, the contextual gene embeddings are passed, together with the identities of the perturbed genes, to a perturbation-specific attention module. The module computes attention scores that depend on the particular perturbation, so each gene's influence is weighted according to which genes were perturbed. The weighted embeddings are combined into a single cell state vector that summarizes the predicted global impact of the perturbation.

- Linear Prediction Decoder: Finally, the perturbation-aware cell state vector is passed to a lightweight linear decoder, which maps it to the predicted log-fold changes in expression of every gene in the system. This output represents the cell's transcriptional response to the specific genetic perturbation.
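
To make the data flow through the three components concrete, the following is a minimal pure-Python sketch of one forward pass. The specific operators are assumptions, since this section does not specify them: a mean-aggregation (GCN-style) message-passing layer with ReLU, scaled dot-product attention whose query is the mean embedding of the perturbed genes, and an affine decoder with one output per gene. All function names (`gnn_layer`, `perturbation_attention`, `linear_decoder`, `app_forward`) and the toy five-gene network are hypothetical.

```python
import math
import random


def matvec(W, x):
    """Multiply a matrix W (list of rows) by a vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def random_matrix(rows, cols, rng, scale=0.1):
    """Gaussian-initialised matrix, used here only to get toy weights."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def gnn_layer(H, neighbors, W):
    """One mean-aggregation message-passing layer (assumed GCN-style variant).

    Each gene averages its own embedding with those of its network
    neighbors, then applies a shared linear map W followed by ReLU.
    """
    out = []
    for i, h_i in enumerate(H):
        group = [h_i] + [H[j] for j in neighbors.get(i, [])]
        mean = [sum(col) / len(group) for col in zip(*group)]
        out.append([max(0.0, v) for v in matvec(W, mean)])
    return out


def softmax(scores):
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def perturbation_attention(H, perturbed):
    """Scaled dot-product attention conditioned on the perturbed genes.

    Assumed form: the query is the mean embedding of the perturbed genes,
    and every gene embedding serves as both key and value. Returns the
    cell state vector and the attention weight of each gene.
    """
    d = len(H[0])
    query = [sum(H[p][k] for p in perturbed) / len(perturbed) for k in range(d)]
    scores = [sum(q * h for q, h in zip(query, h_i)) / math.sqrt(d) for h_i in H]
    weights = softmax(scores)
    cell_state = [sum(w * h_i[k] for w, h_i in zip(weights, H)) for k in range(d)]
    return cell_state, weights


def linear_decoder(cell_state, W_out, b_out):
    """Affine map from the cell state to one predicted log-fold change per gene."""
    return [v + b for v, b in zip(matvec(W_out, cell_state), b_out)]


def app_forward(X, neighbors, gnn_weights, W_out, b_out, perturbed):
    """GNN embeddings -> perturbation-specific attention -> linear decoder."""
    H = X
    for W in gnn_weights:
        H = gnn_layer(H, neighbors, W)
    cell_state, weights = perturbation_attention(H, perturbed)
    return linear_decoder(cell_state, W_out, b_out), weights


# Toy example: 5 genes, 4-dimensional embeddings, 2 GNN layers,
# and a double perturbation of genes 0 and 3.
rng = random.Random(0)
n_genes, d = 5, 4
neighbors = {0: [1, 2], 1: [0, 3], 2: [0], 3: [1, 4], 4: [3]}
X = random_matrix(n_genes, d, rng, scale=1.0)
gnn_weights = [random_matrix(d, d, rng) for _ in range(2)]
W_out = random_matrix(n_genes, d, rng)
b_out = [0.0] * n_genes
lfc, attn = app_forward(X, neighbors, gnn_weights, W_out, b_out, perturbed=[0, 3])
print(len(lfc), round(sum(attn), 6))  # 5 1.0
```

Using the mean of the perturbed genes' embeddings as the query is one simple way to let a single module handle both single and double perturbations; the actual APP formulation may differ.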

3.2. Gene Embedding via Graph Neural Networks

3.3. Perturbation-Specific Attention Module

3.4. Linear Prediction Decoder

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets

4.1.2. Preprocessing and Evaluation Metrics

4.1.3. Training and Test Splits

4.1.4. Baselines and Compared Deep Learning Models

4.2. Main Results

4.3. Ablation Study

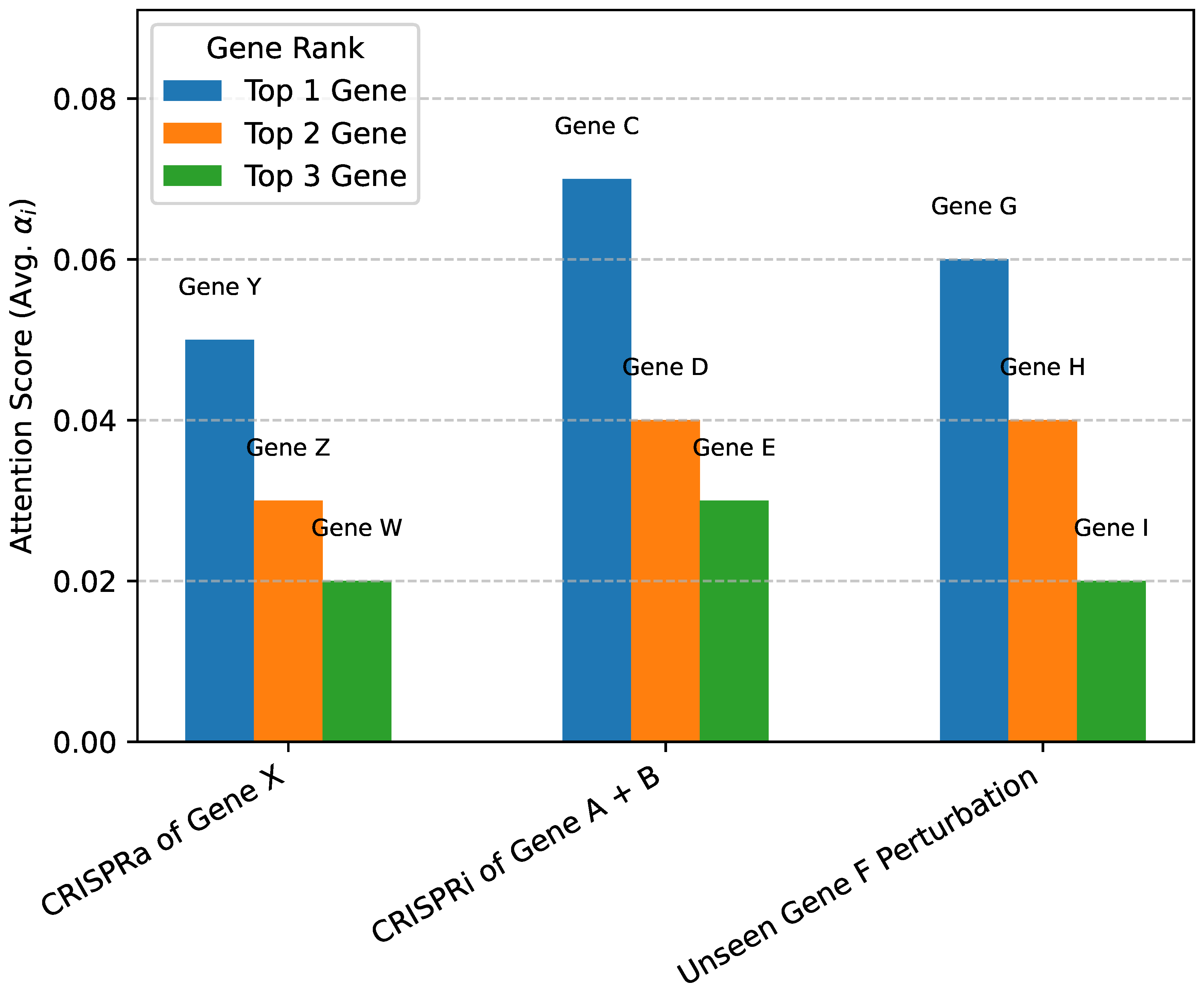

4.4. Interpretability of Perturbation-Specific Attention

4.5. In-depth Analysis of Extrapolation Capabilities

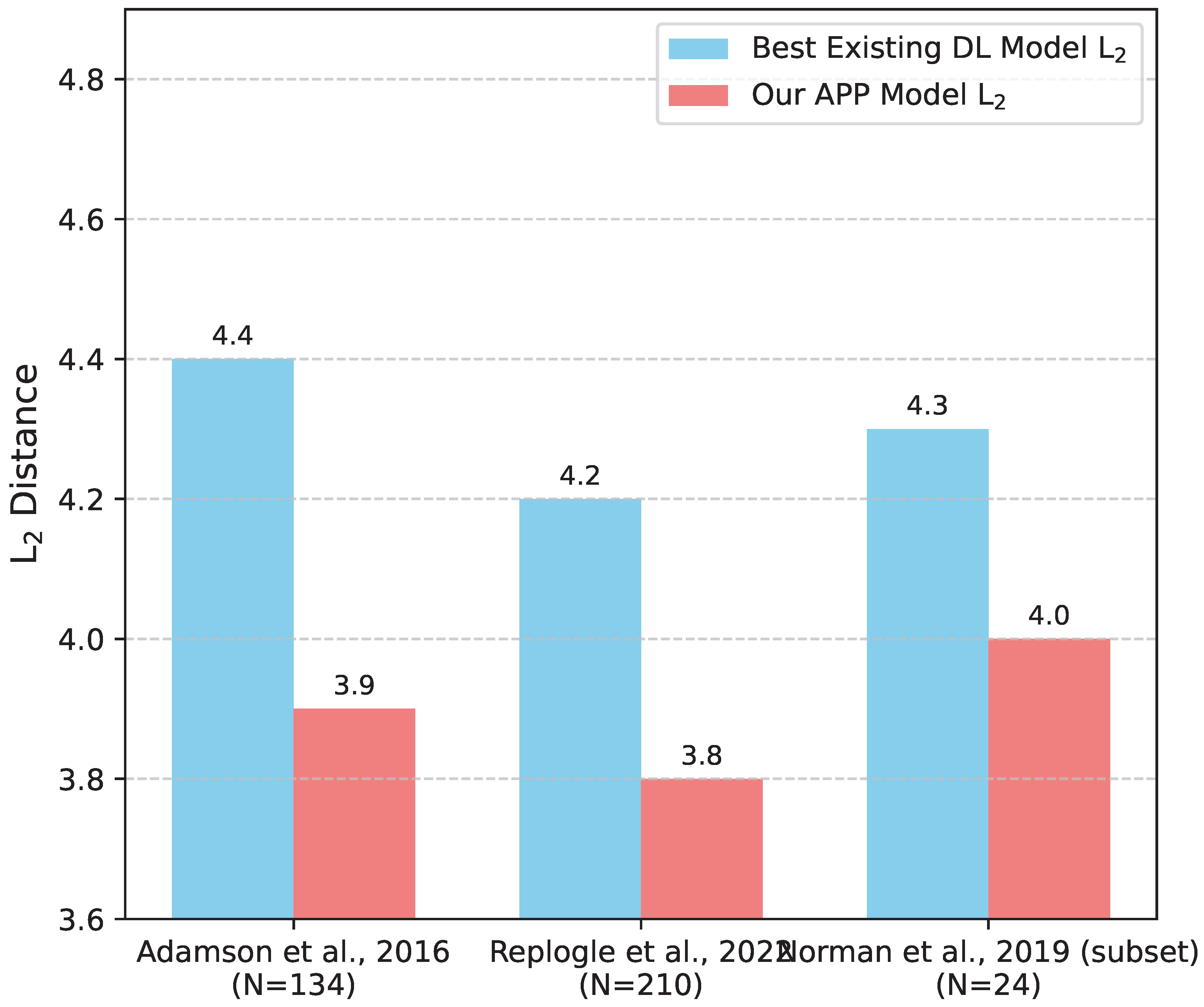

4.5.1. Unseen Single Gene Perturbations

4.5.2. Novel Double Gene Perturbations

4.6. Impact of Biological Network Quality

4.7. Computational Efficiency and Scalability

5. Conclusions


| Task Type | No. of Test Perturbations | Baseline Model | Baseline L2 | Best Existing DL Model L2 | Our APP Model L2 |
|---|---|---|---|---|---|
| Double Gene Perturbation | 62 | Additive | | | |
| Single Gene Perturbation (Unseen) | 134 / 210 / 24 | Linear Model (LM) | | | |
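
The L2 metric and the Additive baseline are not defined in this version of the text. The sketch below assumes that L2 is the Euclidean distance between predicted and observed log-fold-change (LFC) vectors, and that the Additive baseline predicts a double perturbation as the sum of the two single-perturbation LFCs, which is the usual definition of this baseline. The function names and toy numbers are hypothetical.

```python
import math


def additive_prediction(lfc_a, lfc_b):
    """Additive baseline: double-perturbation LFC = sum of the single-perturbation LFCs."""
    return [a + b for a, b in zip(lfc_a, lfc_b)]


def l2_distance(predicted, observed):
    """Euclidean (L2) distance between predicted and observed LFC vectors."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)))


# Toy LFCs for three genes (illustrative numbers only).
lfc_a = [0.5, -1.0, 0.0]
lfc_b = [0.25, 0.5, -0.5]
observed_ab = [1.0, -0.5, -0.5]

pred_ab = additive_prediction(lfc_a, lfc_b)  # [0.75, -0.5, -0.5]
print(l2_distance(pred_ab, observed_ab))     # 0.25
```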

| Model Variant | Double Gene Perturbation | Single Gene Perturbation (Unseen) |
|---|---|---|
| APP (Full Model) | | |
| APP w/o GNNs (Random Embeddings) | | |
| APP w/o GNNs (Identity Embeddings) | | |
| APP w/o Perturbation-Specific Attention | | |
| APP w/o Lightweight Decoder (MLP Decoder) | | |
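
The two "w/o GNNs" variants replace the GNN-derived embeddings with inputs that carry no network information. A minimal sketch of such replacements follows, assuming that "identity embeddings" means one-hot gene indicators and "random embeddings" means a fixed Gaussian vector per gene; both readings and the function names are hypothetical.

```python
import random


def identity_embeddings(n_genes):
    """One-hot vector per gene: encodes gene identity only, no network topology."""
    return [[1.0 if i == j else 0.0 for j in range(n_genes)] for i in range(n_genes)]


def random_embeddings(n_genes, dim, seed=0):
    """Fixed Gaussian vector per gene, drawn independently of the network."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_genes)]


print(identity_embeddings(3))
# [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(len(random_embeddings(3, 8)), len(random_embeddings(3, 8)[0]))  # 3 8
```

If the attention module and decoder are otherwise left unchanged in these variants, the gap relative to the full model isolates the contribution of the network-derived embeddings.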

| Model | L2 on Novel Double Gene Perturbations | Improvement over Additive Baseline |
|---|---|---|
| Additive Baseline | | — |
| Best Existing DL Model | | -10.9% (worse) |
| Our APP Model | | +3.6% (better) |
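
The improvement column is not defined in this version of the text. Assuming it is the relative change in L2 error with respect to the additive baseline, signed so that positive values mean lower error, it would be computed as:

$$
\text{Improvement} = \frac{\mathrm{L2}_{\text{Additive}} - \mathrm{L2}_{\text{model}}}{\mathrm{L2}_{\text{Additive}}} \times 100\%
$$

Under this reading, -10.9% means the best existing deep learning model has an L2 error 10.9% higher than the additive baseline, while +3.6% means APP's error is 3.6% lower.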

| Network Condition | Double Gene Perturbation L2 | Unseen Single Gene Perturbation L2 |
|---|---|---|
| Original Network (Full APP) | | |
| 10% Random Edges Removed | | |
| 25% Random Edges Removed | | |
| 10% Random Edges Added (Noise) | | |
| 25% Random Edges Added (Noise) | | |
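
The procedure used to degrade the network is not described here. A minimal sketch of one plausible version follows, assuming that the percentage is taken relative to the original edge count, that removed edges are sampled uniformly, and that added "noise" edges connect uniformly random gene pairs that were not already linked. The function names and the toy ring network are hypothetical.

```python
import random


def remove_random_edges(edges, fraction, seed=0):
    """Drop round(fraction * |E|) edges chosen uniformly at random."""
    rng = random.Random(seed)
    n_remove = round(fraction * len(edges))
    dropped = set(rng.sample(range(len(edges)), n_remove))
    return [e for i, e in enumerate(edges) if i not in dropped]


def add_random_edges(edges, n_genes, fraction, seed=0):
    """Add round(fraction * |E|) new undirected edges between random gene pairs."""
    rng = random.Random(seed)
    existing = {frozenset(e) for e in edges}
    n_add = round(fraction * len(edges))
    if n_add > n_genes * (n_genes - 1) // 2 - len(existing):
        raise ValueError("not enough unconnected gene pairs to add")
    new_edges = []
    while len(new_edges) < n_add:
        u, v = rng.sample(range(n_genes), 2)  # two distinct genes
        if frozenset((u, v)) not in existing:
            existing.add(frozenset((u, v)))
            new_edges.append((u, v))
    return list(edges) + new_edges


# Toy ring network of 20 genes (20 edges).
edges = [(i, (i + 1) % 20) for i in range(20)]
print(len(remove_random_edges(edges, 0.25)))   # 15
print(len(add_random_edges(edges, 20, 0.10)))  # 22
```

Fixing the random seed makes each degraded network reproducible; averaging results over several seeds would reduce the variance introduced by any single random draw.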

| Model | Total Parameters (Millions) | Avg. Training Time (hours) | Avg. Inference Time (ms/perturbation) |
|---|---|---|---|
| APP | | | |
| scFoundation + Decoder | | | |
| scBERT + Decoder | | | |
| Geneformer + Decoder | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).