Submitted: 11 November 2025

Posted: 12 November 2025

Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodology

4. Algorithm and Model

4.1. HEMA-RCL Architecture Overview

4.2. Hierarchical Expert System with LLM Specialization

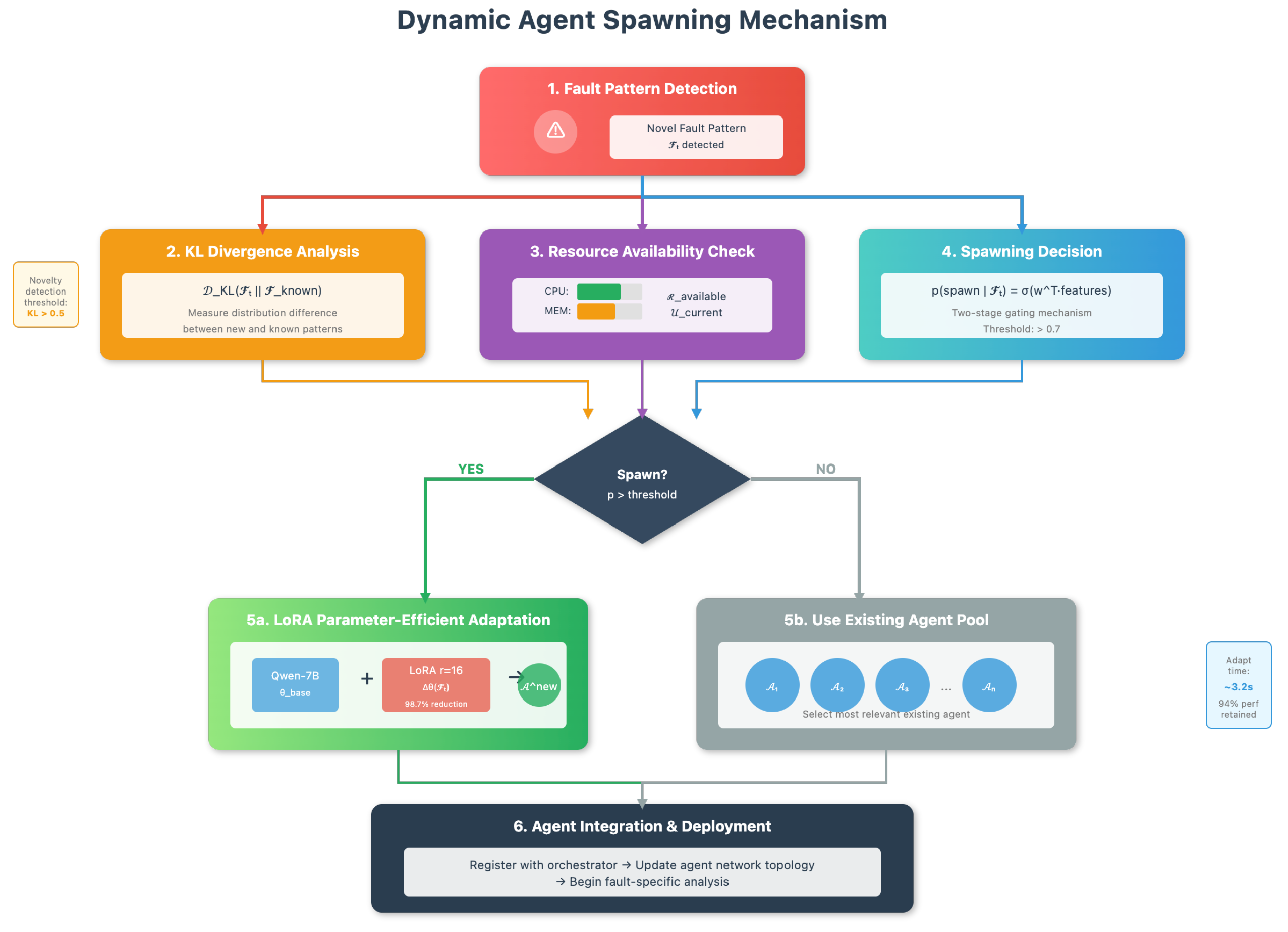

4.3. Dynamic Agent Spawning Mechanism

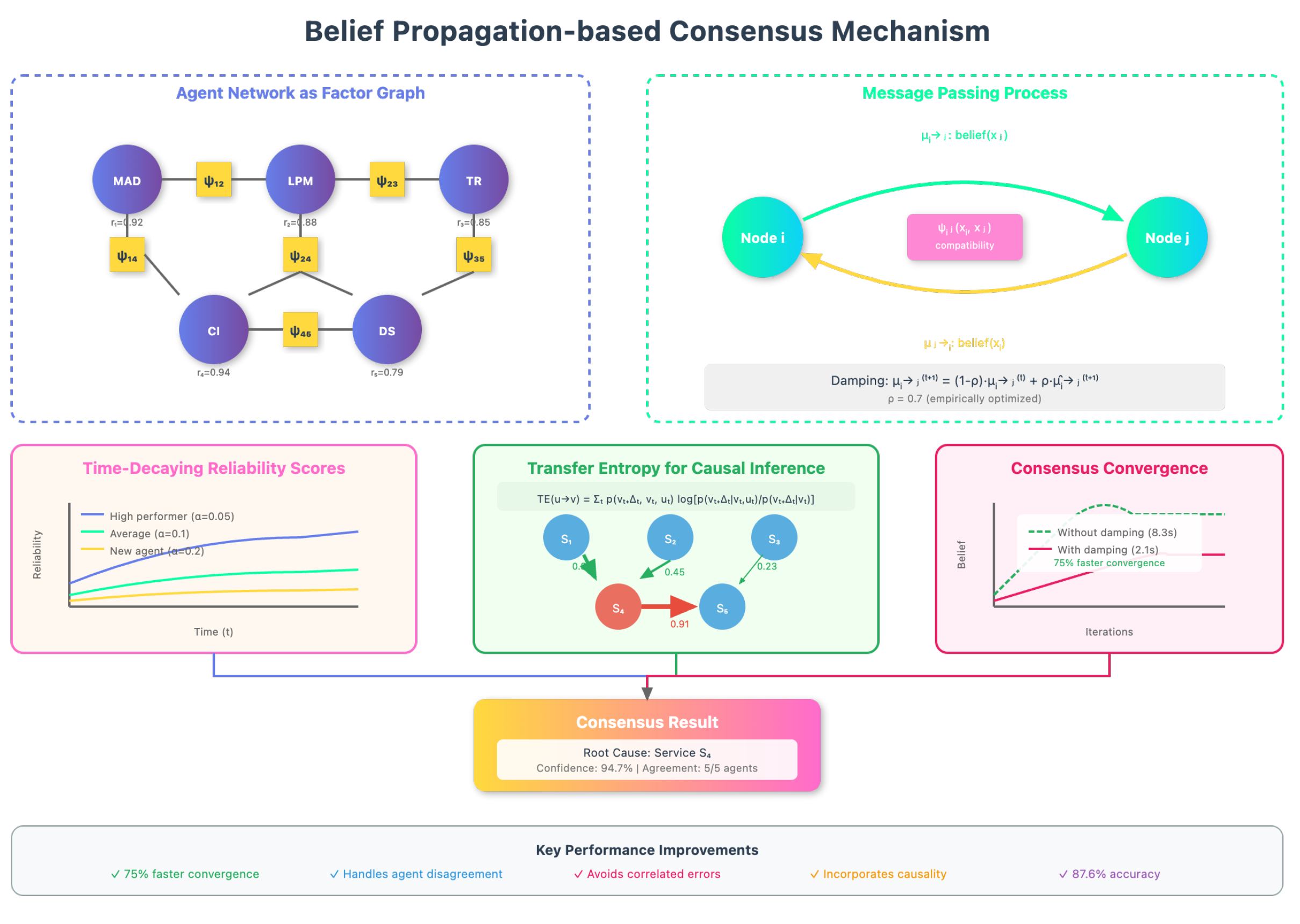

4.4. Belief Propagation-based Consensus Mechanism

4.5. Causal Inference Enhancement

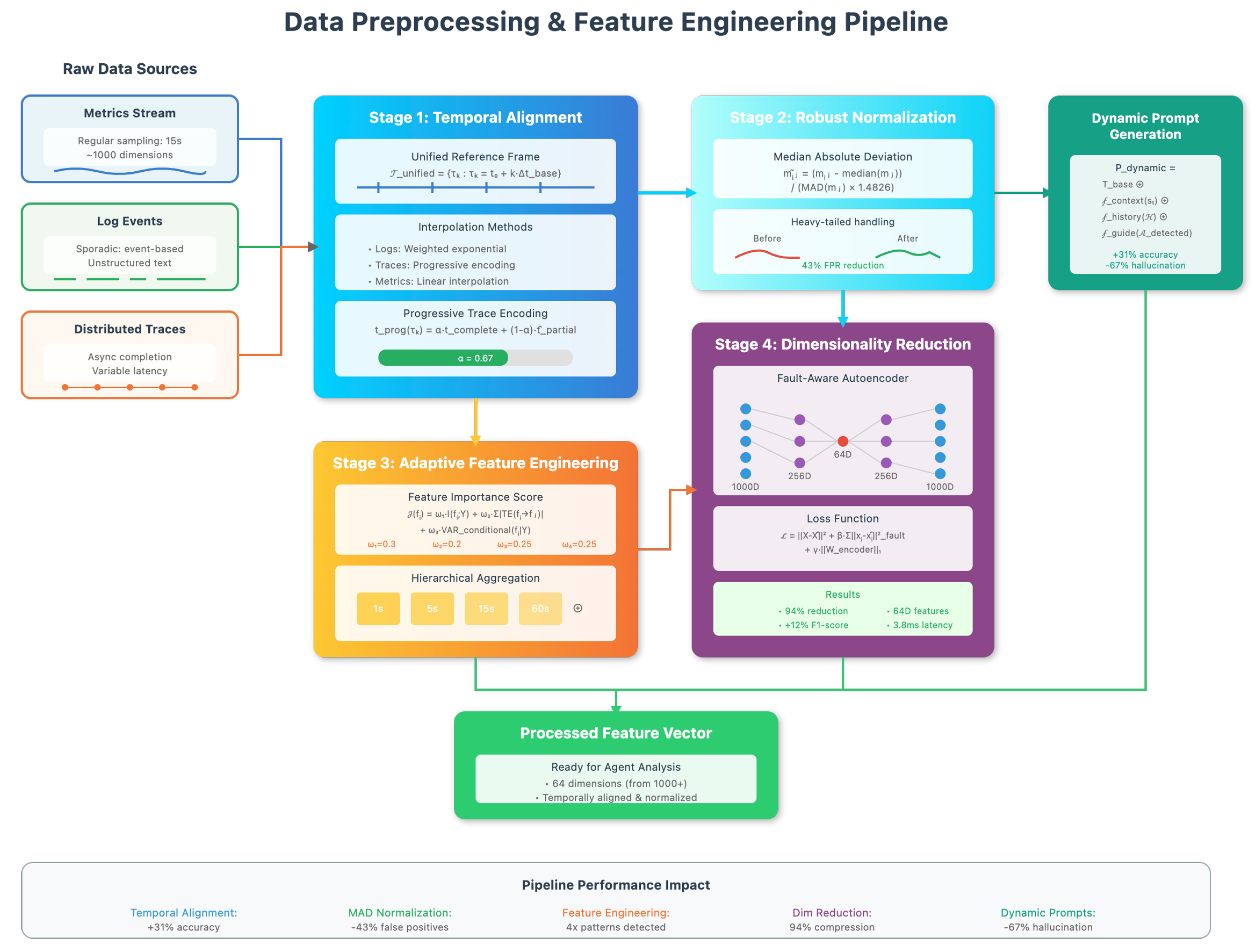

4.6. Data Preprocessing

4.6.1. Multi-modal Data Alignment and Temporal Synchronization

4.6.2. Adaptive Feature Engineering and Dimensionality Reduction

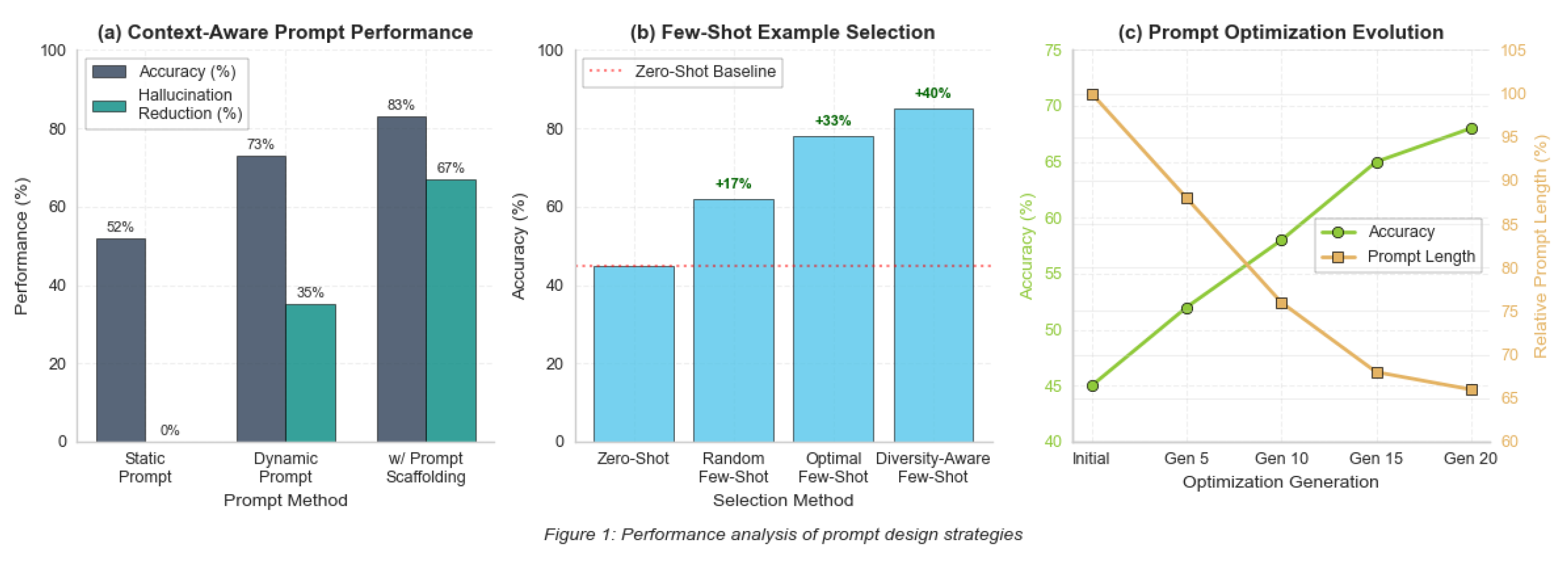

4.7. Prompt Design

4.7.1. Context-Aware Dynamic Prompt Generation

4.7.2. Few-Shot Learning and Prompt Optimization

4.8. Evaluation Metrics

4.8.1. Primary Metrics

4.8.2. Secondary Metrics

5. Experiment Results

5.1. Experimental Setup

5.2. Main Results and Comparisons

5.3. Ablation Studies and Scalability

5.4. Real-Time Performance

6. Conclusions

| Method/Fault Type | Acc@1 | Acc@3 | NDCG@5 | MTTD (s) | F1-Score |
|---|---|---|---|---|---|
| Baseline Methods | | | | | |
| MicroRCA | 0.542 | 0.721 | 0.687 | 142.3 | 0.521 |
| CloudRanger | 0.618 | 0.793 | 0.742 | 98.7 | 0.598 |
| TraceDiag | 0.695 | 0.851 | 0.812 | 68.4 | 0.678 |
| HEMA-RCL (full) | 0.876 | 0.952 | 0.928 | 38.7 | 0.859 |
| HEMA-RCL by Fault Type | | | | | |
| Resource Exhaustion | 0.913 | 0.968 | 0.947 | 32.4 | 0.905 |
| Network Issues | 0.856 | 0.941 | 0.912 | 41.2 | 0.848 |
| Application Errors | 0.892 | 0.959 | 0.934 | 35.8 | 0.900 |
| Configuration Drift | 0.821 | 0.923 | 0.898 | 48.6 | 0.807 |
| Cascading Failures | 0.847 | 0.938 | 0.916 | 44.3 | 0.854 |
| Byzantine Faults | 0.783 | 0.901 | 0.876 | 52.1 | 0.769 |
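
The Acc@k and NDCG@5 columns above are ranking metrics. The text defining them is not available here, so the following is only a minimal sketch under the commonly used definitions, assuming one true root cause per incident and binary relevance. The function names `acc_at_k` and `ndcg_at_k` and the toy service names are hypothetical and are not taken from the paper.

```python
import math


def acc_at_k(rankings, truths, k):
    """Fraction of incidents whose true root cause is among the top-k candidates."""
    hits = sum(1 for ranked, truth in zip(rankings, truths) if truth in ranked[:k])
    return hits / len(truths)


def ndcg_at_k(ranked, truth, k):
    """NDCG@k for a single relevant item with binary relevance.

    With exactly one relevant item the ideal DCG is 1, so the score reduces
    to the discounted gain at the position where the true root cause appears.
    """
    for position, candidate in enumerate(ranked[:k], start=1):
        if candidate == truth:
            return 1.0 / math.log2(position + 1)
    return 0.0


# Toy data (assumed): three incidents, each with a ranked list of suspect services.
rankings = [
    ["cart", "db", "auth"],
    ["auth", "cart", "db"],
    ["db", "auth", "cart"],
]
truths = ["cart", "cart", "cart"]

acc1 = acc_at_k(rankings, truths, 1)
acc3 = acc_at_k(rankings, truths, 3)
mean_ndcg5 = sum(ndcg_at_k(r, t, 5) for r, t in zip(rankings, truths)) / len(truths)
print(f"Acc@1={acc1:.3f} Acc@3={acc3:.3f} NDCG@5={mean_ndcg5:.3f}")
```

Under these assumptions, a method that always ranks the true root cause first scores 1.0 on every column, and NDCG@5 penalizes a correct candidate more the further down the list it appears.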

| Configuration | Acc@1 | MTTD (s) | ΔAcc@1 (relative) | GPU-Hours |
|---|---|---|---|---|
| Component Ablation | | | | |
| Full Model | 0.876 | 38.7 | – | 2.18 |
| w/o Dynamic Spawning | 0.812 | 45.2 | -7.3% | 1.92 |
| w/o Belief Propagation | 0.831 | 42.1 | -5.1% | 2.05 |
| w/o Hierarchical Structure | 0.798 | 51.3 | -8.9% | 2.76 |
| w/o Multi-modal Fusion | 0.789 | 48.7 | -9.9% | 1.84 |
| Single LLM (DeepSeek-V2) | 0.751 | 67.3 | -14.3% | 1.12 |
| Scalability (Service Count) | | | | |
| 50 services | 0.912 | 24.3 | +4.1% | 0.82 |
| 100 services | 0.891 | 31.7 | +1.7% | 1.43 |
| 150 services | 0.876 | 38.7 | 0.0% | 2.18 |
| 200 services | 0.863 | 46.2 | -1.5% | 3.02 |
| 250 services | 0.851 | 54.8 | -2.9% | 3.91 |
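
The percentage column in the ablation and scalability table is consistent with the relative change in Acc@1 against the full model (Acc@1 = 0.876, which is also the 150-service row). The sketch below checks that reading against the table values. The interpretation is inferred from the numbers rather than stated in the text, and the helper name `relative_change_pct` is hypothetical.

```python
FULL_MODEL_ACC1 = 0.876  # Acc@1 of the full model (150 services) from the table

# Acc@1 values copied from the table.
acc1_by_config = {
    "w/o Dynamic Spawning": 0.812,
    "w/o Belief Propagation": 0.831,
    "w/o Hierarchical Structure": 0.798,
    "w/o Multi-modal Fusion": 0.789,
    "Single LLM (DeepSeek-V2)": 0.751,
    "50 services": 0.912,
    "100 services": 0.891,
    "200 services": 0.863,
    "250 services": 0.851,
}


def relative_change_pct(acc1, reference=FULL_MODEL_ACC1):
    """Relative change of acc1 versus the reference, in percent, to one decimal."""
    return round((acc1 - reference) / reference * 100, 1)


for name, acc1 in acc1_by_config.items():
    print(f"{name:28s} {relative_change_pct(acc1):+.1f}%")
```

For example, removing dynamic spawning gives (0.812 - 0.876) / 0.876 ≈ -7.3%, which matches the reported entry. The absolute drop in that row is 6.4 percentage points.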

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).