Submitted: 01 November 2025

Posted: 05 November 2025


Abstract

Keywords:

1. Introduction

2. Related Work

3. Datasets and Cross-Dataset Mapping

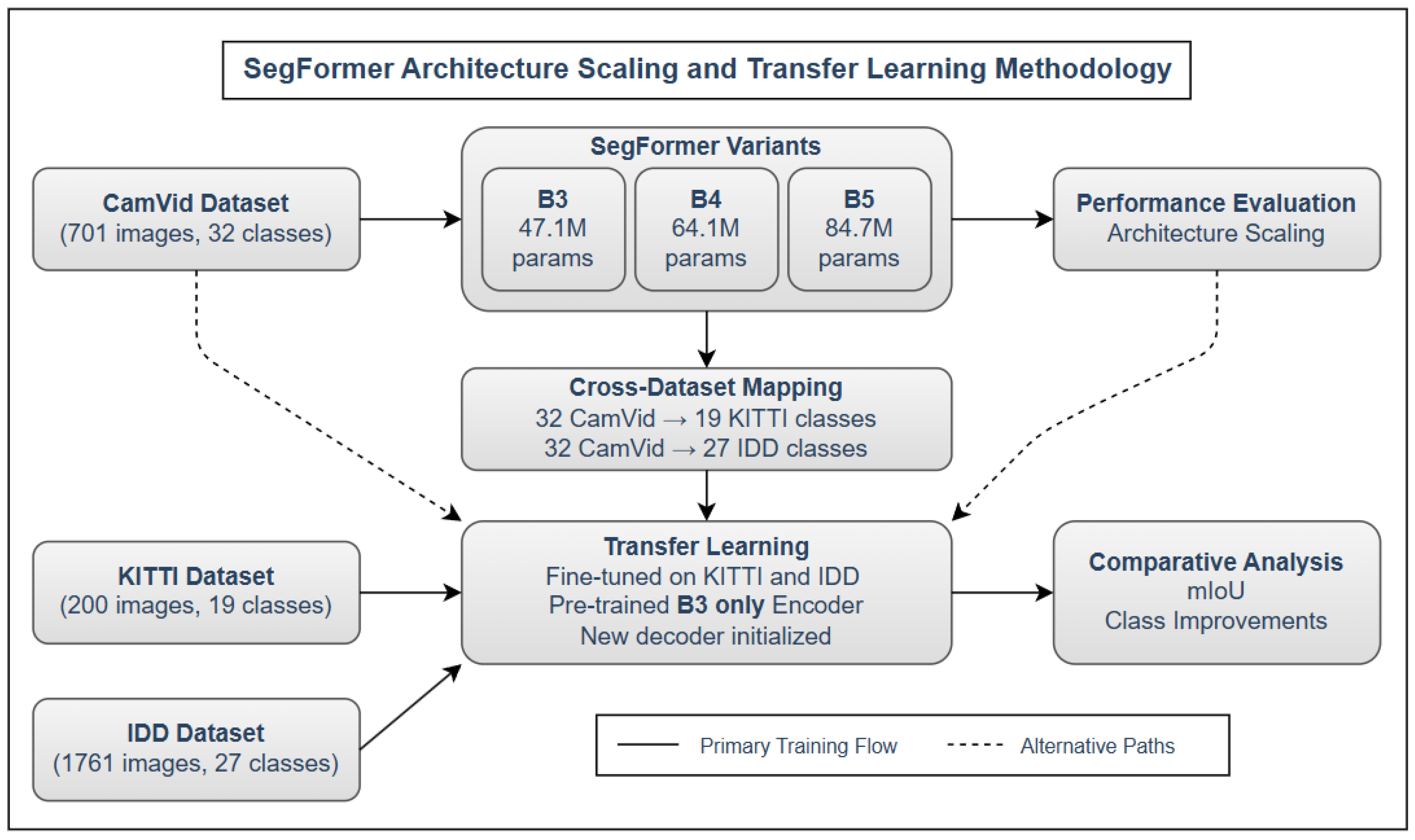

4. Methodology

4.1. SegFormer Architecture Overview

- B3: With 47.1M parameters, this variant uses a hierarchical Mix Transformer (MiT) encoder with 28 transformer blocks distributed across four stages (3, 4, 18, 3). The stages operate at progressively reduced spatial resolutions of 1/4, 1/8, 1/16, and 1/32 of the input, with channel widths of 64, 128, 320, and 512. The reduction ratio of its efficient self-attention shrinks from stage to stage and reaches 1 in the deepest stage, where full self-attention is applied.

- B4: The intermediate variant contains 64.1M parameters. It keeps the same stage widths as B3 but deepens the encoder to 41 transformer blocks in a (3, 8, 27, 3) configuration, increasing its capacity to model complex relationships while keeping computational demands moderate.

- B5: The largest variant, with 84.7M parameters, B5 uses a (3, 6, 40, 3) distribution of 52 transformer blocks with the same stage widths. The additional depth, concentrated in the 1/16-resolution third stage, provides substantially greater representational capacity for nuanced feature extraction and relationship modeling.
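The hierarchical design matters because each stage works on a token grid that is 4, 8, 16, and 32 times smaller than the input along each side, and the efficient self-attention further shrinks the key/value grid. The sketch below counts tokens per stage under stated assumptions: a 512×512 input, padded overlapping patch embeddings, and per-side key/value reduction factors of 8, 4, 2, and 1 (the values in the public MiT reference configuration, treated here as an assumption). The function name `stage_token_counts` is hypothetical.

```python
import math

# Cumulative downsampling of each encoder stage relative to the input (1/4 ... 1/32).
STAGE_STRIDES = (4, 8, 16, 32)
# Assumed per-side key/value reduction factors for each stage's efficient
# self-attention; the last stage applies no reduction (ratio 1).
SR_RATIOS = (8, 4, 2, 1)


def stage_token_counts(height, width):
    """Return (h, w, query_tokens, key_value_tokens) for each encoder stage."""
    stages = []
    for stride, sr in zip(STAGE_STRIDES, SR_RATIOS):
        # Padded overlapping patch embeddings give ceil(input / stride) per side.
        h, w = math.ceil(height / stride), math.ceil(width / stride)
        # Keys and values come from a grid shrunk by `sr` along each side.
        kv_tokens = (h // sr) * (w // sr)
        stages.append((h, w, h * w, kv_tokens))
    return stages


if __name__ == "__main__":
    # Attention cost per stage scales with query_tokens * key_value_tokens.
    for idx, (h, w, q, kv) in enumerate(stage_token_counts(512, 512), start=1):
        print(f"stage {idx}: {h}x{w} grid, {q} queries, {kv} keys/values")
```

Under these assumptions every stage attends over 256 key/value tokens for a 512×512 input, whereas unreduced attention in the first stage would compare 16,384 queries against 16,384 keys.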

4.2. Cross-Dataset Transfer Learning

- Initialize the encoder using CamVid-pretrained weights.

- Re-initialize the decoder to match the target dataset’s class taxonomy.

- Adapt input-output pipelines using custom class mappings.

- Fine-tune the model with a reduced learning rate to avoid catastrophic forgetting.

- Direct Mappings (e.g., road → road)

- Semantic Mappings (e.g., bicyclist → rider)

- Novel Classes (e.g., autorickshaw in IDD)
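The transfer steps listed above can be sketched without any framework by treating a checkpoint as a mapping from parameter names to tensors. This is a minimal sketch under stated assumptions: the `encoder.` prefix (and `decode_head.` in the example) are hypothetical module names, and the 0.1 encoder learning-rate scale is an illustrative choice, since the text only calls for a "reduced learning rate". Giving the pretrained encoder a smaller rate than the freshly initialised head is one common way to realise that step, not necessarily the authors' exact schedule.

```python
# Hypothetical parameter-name prefix; real checkpoints may name modules differently.
ENCODER_PREFIX = "encoder."


def transfer_encoder_weights(source_state):
    """Keep only the encoder entries of a source (e.g. CamVid) checkpoint.

    Decoder entries are dropped so the target model keeps its freshly
    initialised head, sized for the target dataset's class taxonomy.
    """
    return {name: value for name, value in source_state.items()
            if name.startswith(ENCODER_PREFIX)}


def build_param_groups(param_names, base_lr, encoder_lr_scale=0.1):
    """Split parameters so the pretrained encoder fine-tunes at a reduced rate."""
    encoder = [n for n in param_names if n.startswith(ENCODER_PREFIX)]
    head = [n for n in param_names if not n.startswith(ENCODER_PREFIX)]
    return [
        {"params": encoder, "lr": base_lr * encoder_lr_scale},
        {"params": head, "lr": base_lr},
    ]


if __name__ == "__main__":
    camvid_ckpt = {
        "encoder.stage1.attn.weight": "w1",
        "decode_head.classifier.weight": "w_camvid_classes",
    }
    print(transfer_encoder_weights(camvid_ckpt))
    names = ["encoder.stage1.attn.weight", "decode_head.classifier.weight"]
    print(build_param_groups(names, base_lr=6e-5))
```

In PyTorch, the same group layout (a list of dicts with `params` and `lr` keys) can be passed to an optimizer such as `torch.optim.AdamW`, with parameter tensors in place of the names used here.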

4.3. Transfer Learning Algorithm

Algorithm 1. Cross-Dataset Knowledge Transfer for Semantic Segmentation

4.4. Loss Function

4.5. Explainability via Confidence Heatmaps

- High-confidence regions (well-learned objects)

- Uncertain predictions (occlusions, rare classes)

- Model confusion at boundaries
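The text does not give the heatmap formula, so the sketch below assumes a common choice: per-pixel confidence is the highest softmax probability over the classes, and pixels below a threshold are flagged as uncertain. The 0.6 threshold and the helper names are hypothetical and only illustrate how low-confidence regions such as occlusions or class boundaries would surface.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one pixel's class logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_map(logit_grid):
    """Per-pixel confidence: the highest class probability at each location."""
    return [[max(softmax(pixel)) for pixel in row] for row in logit_grid]


def uncertain_mask(conf_grid, threshold=0.6):
    """True where confidence falls below the (assumed) threshold."""
    return [[c < threshold for c in row] for row in conf_grid]


if __name__ == "__main__":
    # One row with two pixels and three classes: a clear winner, then a tie.
    logits = [[[5.0, 0.0, 0.0], [1.0, 1.0, 1.0]]]
    conf = confidence_map(logits)
    print(conf)
    print(uncertain_mask(conf))
```

A tie between classes gives the minimum possible confidence (1/3 with three classes), which is the kind of pixel a heatmap would render as uncertain.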

5. Evaluation Metrics and Results

5.1. Mathematical Formulation of Evaluation Metrics

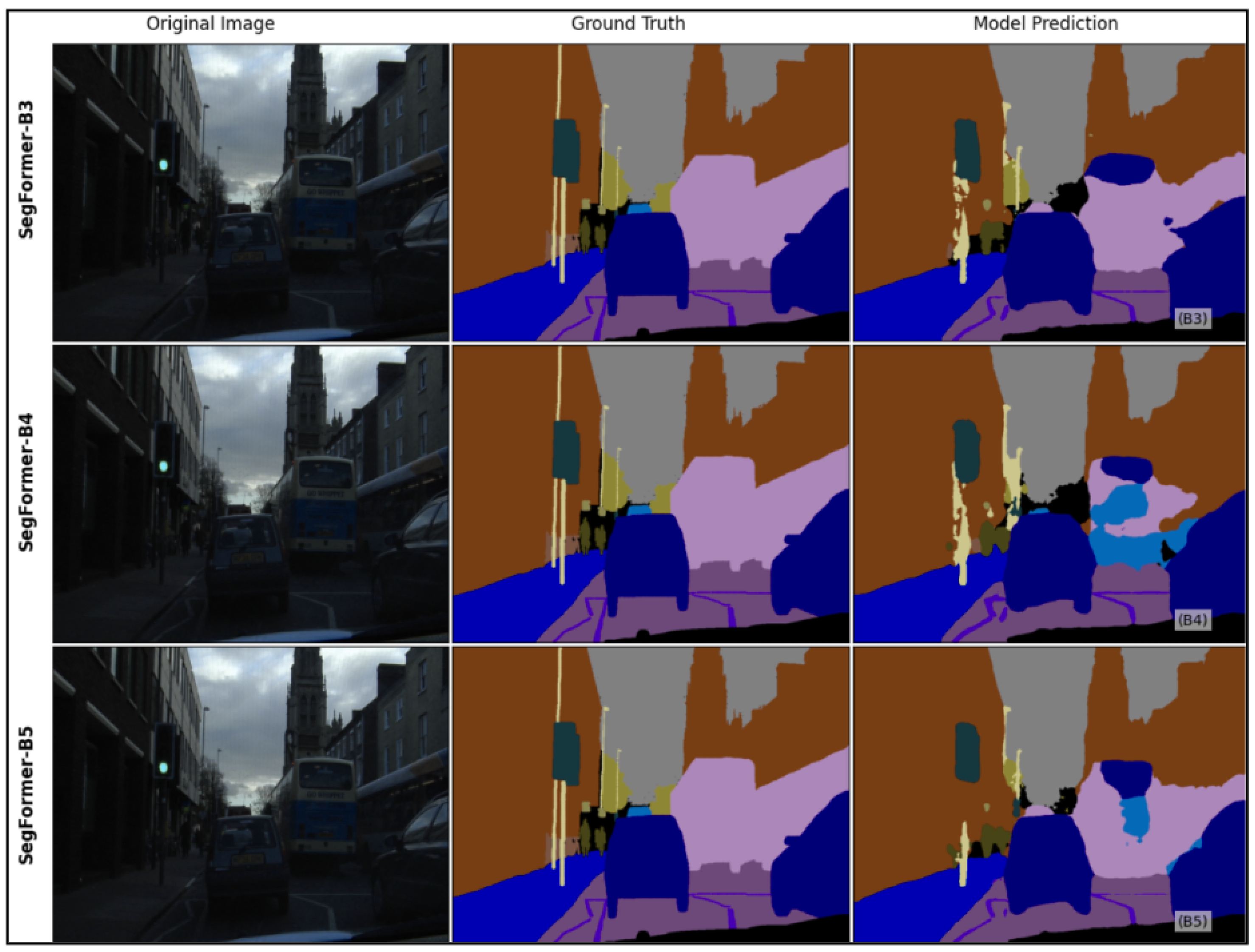
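The results below report mean IoU (mIoU) and pixel accuracy (PA). Under the standard definitions, assumed here to match the formulation above, IoU for class c is TP_c / (TP_c + FP_c + FN_c), mIoU averages IoU over classes, and PA is the fraction of labelled pixels classified correctly. The sketch computes all three from a confusion matrix; the ignore label 255 and the choice to skip classes absent from both ground truth and prediction are assumptions.

```python
def confusion_matrix(gt, pred, num_classes, ignore_index=255):
    """cm[g][p] counts pixels with ground truth g predicted as p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for g, p in zip(gt, pred):
        if g == ignore_index:  # unlabelled pixels do not count
            continue
        cm[g][p] += 1
    return cm


def segmentation_metrics(cm):
    """Return (per-class IoU, mIoU, pixel accuracy) from a confusion matrix."""
    n = len(cm)
    ious = []
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                        # pixels of class c missed
        fp = sum(cm[r][c] for r in range(n)) - tp   # other classes predicted as c
        denom = tp + fp + fn
        ious.append(tp / denom if denom else None)  # None: class absent everywhere
    present = [iou for iou in ious if iou is not None]
    miou = sum(present) / len(present)
    total = sum(sum(row) for row in cm)
    pixel_acc = sum(cm[c][c] for c in range(n)) / total
    return ious, miou, pixel_acc
```

Accumulating a single confusion matrix over the whole evaluation set before computing IoU is the usual practice, rather than averaging per-image scores.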

5.2. Architecture Scaling Analysis on CamVid

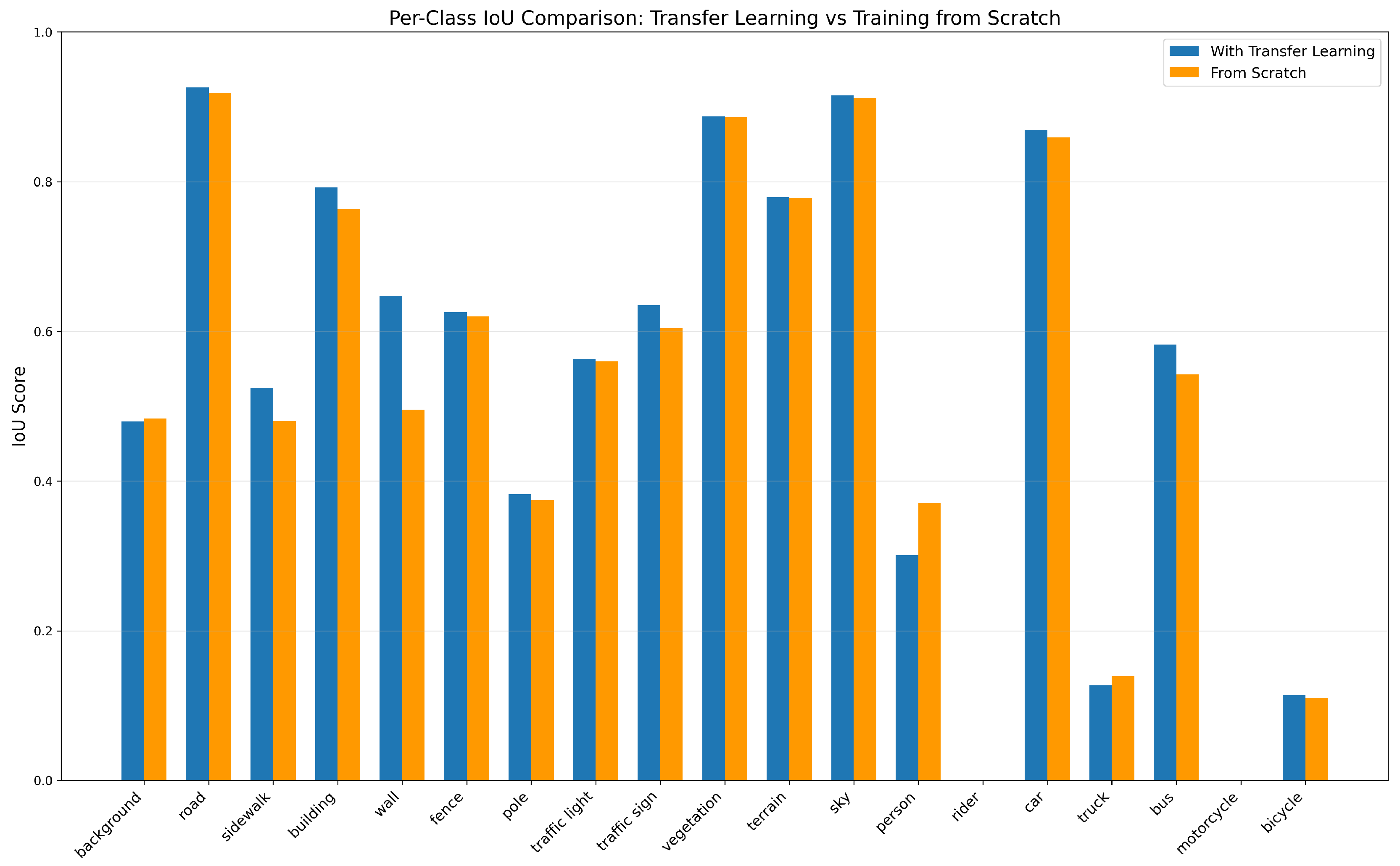

5.3. KITTI Transfer Performance

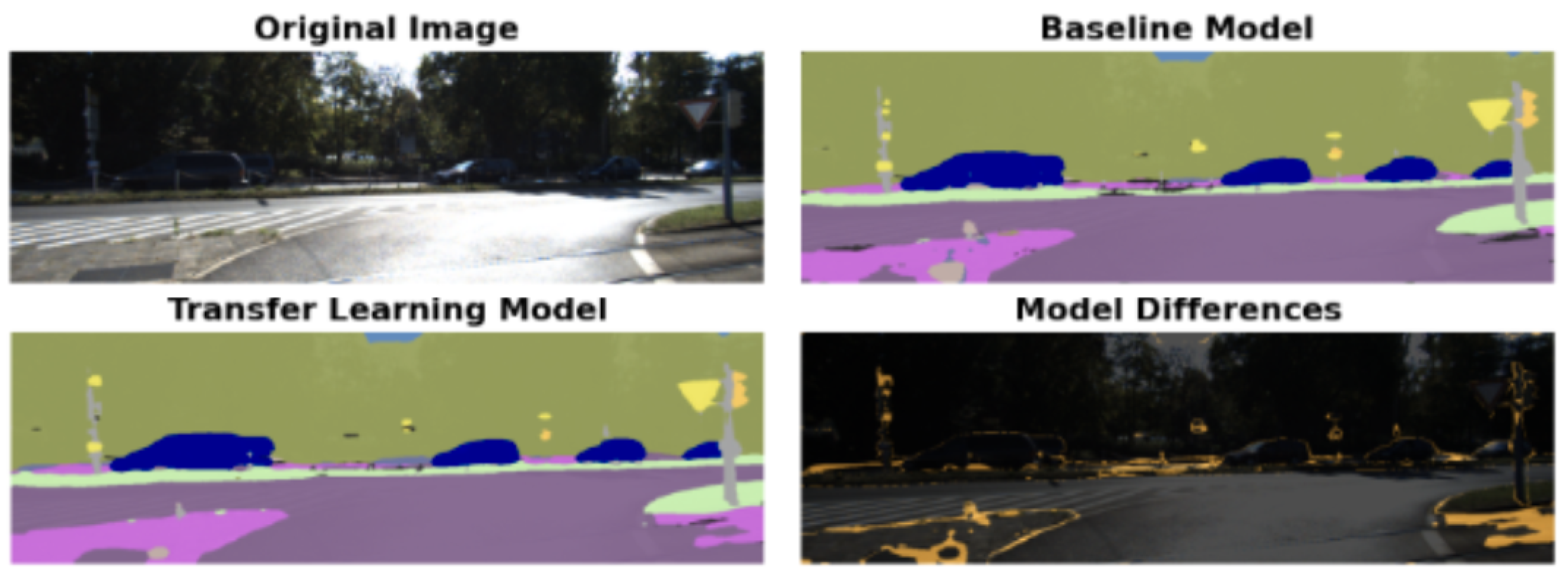

5.4. IDD Transfer Performance

6. Explainability and Interpretability

6.1. Visual Results with Prediction Overlay

7. Discussion and Future Work

8. Conclusion


| Mapping Type | Description | Examples |
|---|---|---|
| Direct Mappings | Classes with equivalent semantics and visual representation across datasets | Road → Road, Building → Building, Sky → Sky |
| Semantic Mappings | Classes whose labels differ but whose visual category is similar | Bicyclist (CamVid) → Rider (KITTI, IDD), Pedestrian → Person, Vegetation and Tree → Vegetation |
| Unique/Novel Classes | Classes present only in the target dataset, with no source equivalent | KITTI-specific: Train, Motorcycle, Terrain; IDD-specific: Autorickshaw, Billboard, Animal |
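The three mapping types in this table can be turned into a label-remapping step for the data pipeline. The sketch below is illustrative only: it covers a few example classes from the table using lowercase names, and it assumes that classes without a counterpart are written as an ignore label of 255 (a common convention, not one stated in the text). The helper names are hypothetical.

```python
IGNORE_INDEX = 255  # assumed label for classes with no counterpart

# Illustrative subset of the mappings in the table (lowercase class names).
DIRECT = {"road": "road", "building": "building", "sky": "sky"}
SEMANTIC = {"bicyclist": "rider", "pedestrian": "person", "tree": "vegetation"}


def map_source_class(name):
    """Return (mapping_type, target_name) for a source (CamVid) class name."""
    if name in DIRECT:
        return "direct", DIRECT[name]
    if name in SEMANTIC:
        return "semantic", SEMANTIC[name]
    return "unmapped", None


def build_id_lookup(source_names, target_names):
    """Map source label ids to target label ids; unmapped ids get IGNORE_INDEX."""
    target_ids = {name: i for i, name in enumerate(target_names)}
    lookup = {}
    for src_id, name in enumerate(source_names):
        _, target = map_source_class(name)
        lookup[src_id] = target_ids.get(target, IGNORE_INDEX)
    return lookup


def novel_classes(source_names, target_names):
    """Target classes that no source class maps onto, e.g. autorickshaw in IDD."""
    covered = {map_source_class(n)[1] for n in source_names}
    return sorted(set(target_names) - covered)
```

Novel classes have no source weights to reuse, which is why the decoder for the target taxonomy is re-initialised rather than copied.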

| Model | Params (M) | mIoU (%) | PA (%) | Inference Time (ms) |
|---|---|---|---|---|
| SegFormer-B3 | 47.1 | 77.9 | 94.3 | 25.3 |
| SegFormer-B4 | 64.1 | 78.5 | 94.7 | 28.5 |
| SegFormer-B5 | 84.7 | 82.4 | 95.6 | 32.8 |
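The accuracy/latency trade-off in this table can be made explicit with simple arithmetic. The sketch below copies the table's values and, assuming the reported inference time is per image at batch size 1, converts it to frames per second and measures each variant's gain over B3. The names `RESULTS` and `tradeoffs` are hypothetical.

```python
# Values copied from the architecture-scaling table.
RESULTS = {
    "SegFormer-B3": {"params_m": 47.1, "miou": 77.9, "latency_ms": 25.3},
    "SegFormer-B4": {"params_m": 64.1, "miou": 78.5, "latency_ms": 28.5},
    "SegFormer-B5": {"params_m": 84.7, "miou": 82.4, "latency_ms": 32.8},
}


def tradeoffs(results, baseline="SegFormer-B3"):
    """Throughput and gains relative to a baseline variant."""
    base = results[baseline]
    rows = {}
    for name, r in results.items():
        delta_ms = r["latency_ms"] - base["latency_ms"]
        delta_miou = r["miou"] - base["miou"]
        rows[name] = {
            "fps": 1000.0 / r["latency_ms"],  # assumes per-image latency
            "delta_miou": delta_miou,
            "delta_ms": delta_ms,
            # mIoU points gained per extra millisecond (undefined for the baseline).
            "miou_per_ms": delta_miou / delta_ms if delta_ms else None,
        }
    return rows


if __name__ == "__main__":
    for name, row in tradeoffs(RESULTS).items():
        print(name, {k: (round(v, 3) if v is not None else None) for k, v in row.items()})
```

Relative to B3, B4 adds 0.6 mIoU points for 3.2 ms, while B5 adds 4.5 points for 7.5 ms, so the largest variant yields more accuracy per millisecond of added latency.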

| Class | IoU (Scratch) | IoU (Transfer) | Relative Gain (%) |
|---|---|---|---|
| Wall | 0.4953 | 0.6476 | +30.75 |
| Sidewalk | 0.4800 | 0.5241 | +9.18 |
| Bus | 0.5421 | 0.5824 | +7.44 |
| Traffic Sign | 0.6039 | 0.6353 | +5.19 |
| Bicycle | 0.1100 | 0.1143 | +3.95 |

| Class | IoU (Scratch) | IoU (Transfer) | Relative Gain (%) |
|---|---|---|---|
| Motorcycle | 0.1748 | 0.3019 | +72.74 |
| Rider | 0.2156 | 0.3103 | +43.91 |
| Traffic Light | 0.3351 | 0.4145 | +23.69 |
| Autorickshaw | 0.2925 | 0.3389 | +15.87 |
| Curb | 0.3937 | 0.5088 | +29.27 |
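The gain column in the per-class tables is the improvement of the transfer IoU over the scratch IoU, expressed as a percentage of the scratch IoU. The sketch below reproduces it for two rows copied from the tables; the helper name is hypothetical.

```python
# (class, scratch IoU, transfer IoU) rows copied from the per-class tables.
ROWS = [
    ("Wall", 0.4953, 0.6476),
    ("Traffic Light", 0.3351, 0.4145),
]


def relative_gain(scratch_iou, transfer_iou):
    """Improvement of transfer over scratch, as a percentage of the scratch IoU."""
    return (transfer_iou - scratch_iou) / scratch_iou * 100.0


if __name__ == "__main__":
    for name, scratch, transfer in ROWS:
        print(f"{name}: {relative_gain(scratch, transfer):+.2f}%")
```

Recomputing other rows from the four-digit IoUs can differ in the second decimal place (for example, Bicycle gives about +3.91 versus the reported +3.95), which would be expected if the reported gains were computed from unrounded IoU values.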

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).