In this section, we present a comprehensive empirical evaluation of the proposed WinStat family of positional encodings across all available heterogeneous benchmarks. Our experimental design aims to identify overall trends that may indicate the most effective encoding variant across diverse datasets. To this end, we evaluate all five proposed variants on each dataset—HPC, the ETT hourly variants, NYC, and TINA—alongside established baselines, using identical architectural configurations and training protocols to ensure fair comparison. Performance is assessed through MSE and MAE metrics across multiple independent runs. To validate that improvements stem from meaningful positional structure rather than incidental artifacts, we include decoder-input shuffling tests that systematically disrupt temporal order. This ablation approach allows us to establish a causal link between the proposed local semantic encodings and forecasting accuracy, while the learned mixture weights provide interpretable insights into which positional components contribute most effectively to each domain.
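As a minimal sketch of how the reported metrics could be aggregated over independent runs, the snippet below computes MSE and MAE per run and then their mean and sample standard deviation. The function names and the `(y_true, y_pred)` layout are illustrative assumptions, not the evaluation code used for the experiments.

```python
from statistics import mean, stdev


def mse(y_true, y_pred):
    """Mean squared error between two equal-length sequences."""
    return mean((t - p) ** 2 for t, p in zip(y_true, y_pred))


def mae(y_true, y_pred):
    """Mean absolute error between two equal-length sequences."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def _spread(values):
    """Sample standard deviation, or 0.0 when only one run is available."""
    return stdev(values) if len(values) > 1 else 0.0


def summarize_runs(runs):
    """Aggregate MSE/MAE over independent runs.

    runs: list of (y_true, y_pred) pairs, one pair per run (hypothetical layout).
    """
    mses = [mse(t, p) for t, p in runs]
    maes = [mae(t, p) for t, p in runs]
    return {
        "mse_mean": mean(mses), "mse_std": _spread(mses),
        "mae_mean": mean(maes), "mae_std": _spread(maes),
    }
```

Reporting the standard deviation next to the mean is what makes statements about stability across runs checkable.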

5.1. Comparative Results

We use the five previously introduced datasets as a comprehensive testing ground to evaluate the proposed encodings (WinStat, WinStatLag, WinStatFlex, WinStatTPE, WinStatSPE) against established baselines under identical architectural configurations and data splits. Collectively, these datasets constitute a heterogeneous benchmark environment, capturing a wide spectrum of temporal properties such as seasonality, stationarity, locality, and the coexistence of high- and low-frequency information. This diversity provides a rigorous basis for assessing the robustness and generality of the proposed methods across problems with distinct structural and semantic characteristics.

Table 3 consolidates all results across datasets and methods, including Informer (with timeF), sinusoidal APE only, a no-PE ablation, and the WinStat family. Informer serves as the reference baseline, with removal of PE leading to substantial performance degradation (higher MSE/MAE), whereas sinusoidal APE shows moderate gains.

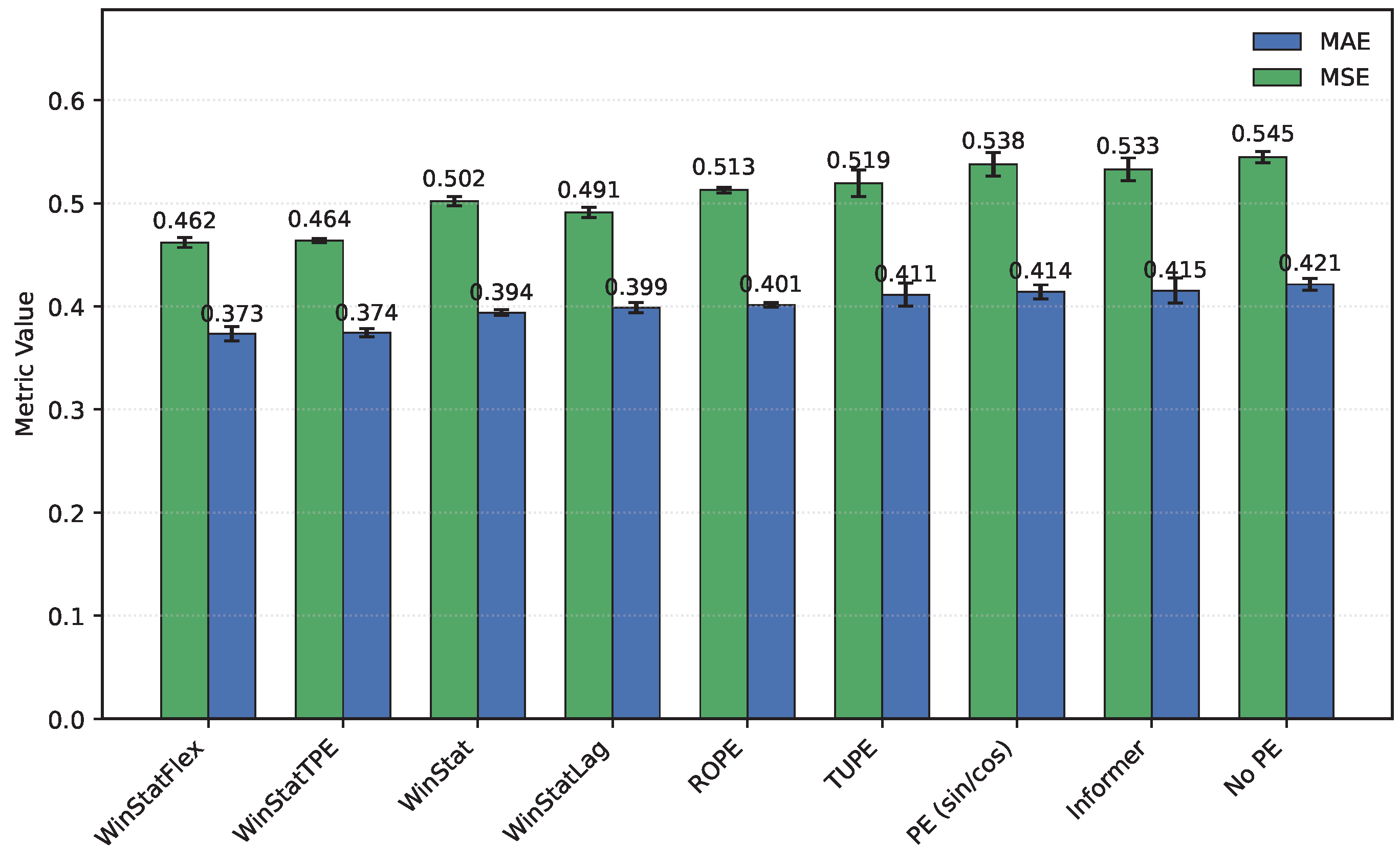

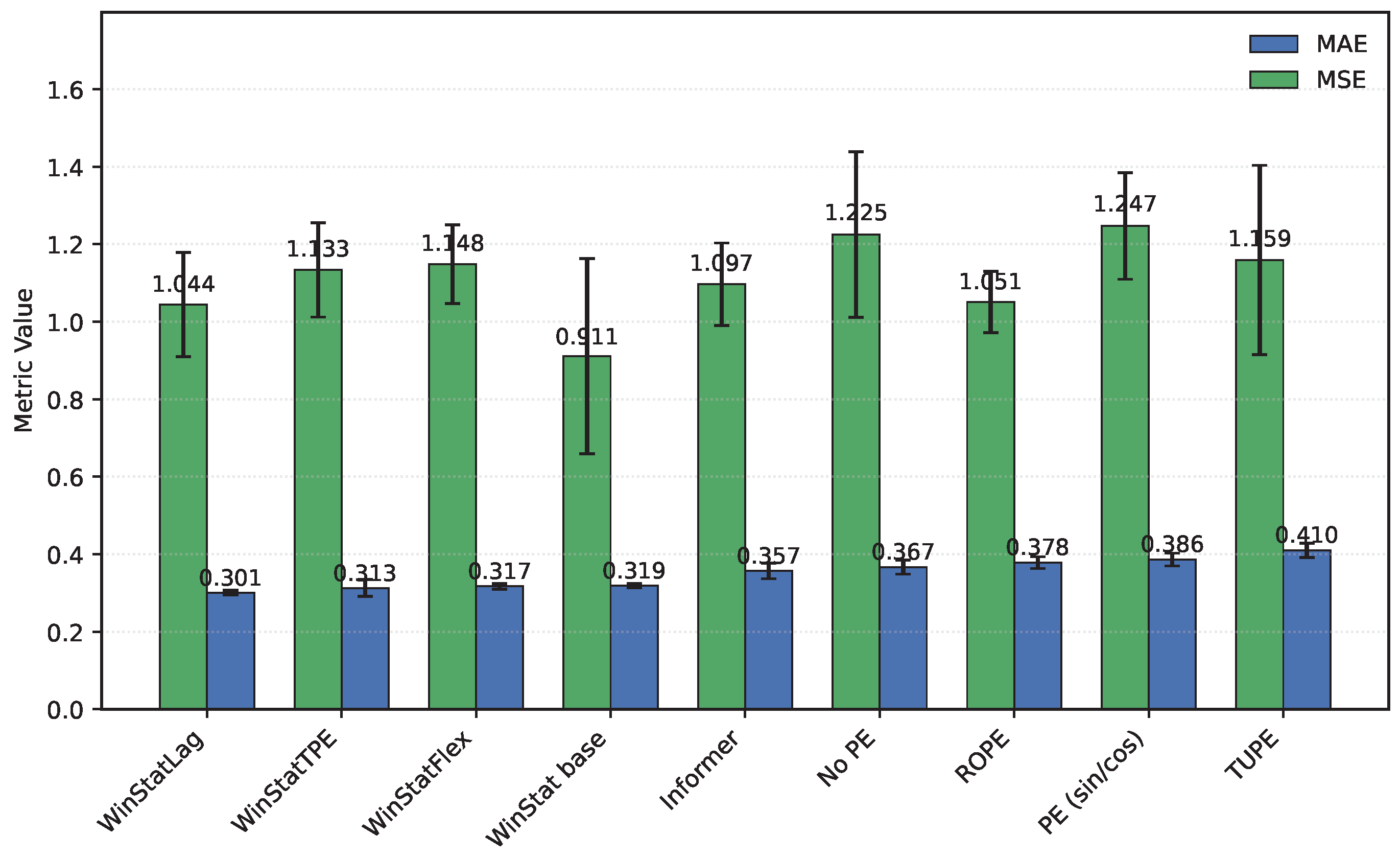

Figure 1 shows that, among the WinStat variants, WinStatFlex and WinStatTPE consistently achieve the lowest errors and most stable variances in HPC. Additionally, the results for TUPE and ROPE (representative state-of-the-art encodings) confirm that the observed patterns generalize beyond the HPC dataset.

On the ETT hourly benchmarks (ETTh1/ETTh2), both WinStatFlex and WinStatTPE markedly outperform Informer, while the no-PE ablation consistently ranks last, underscoring the necessity of explicit positional information.

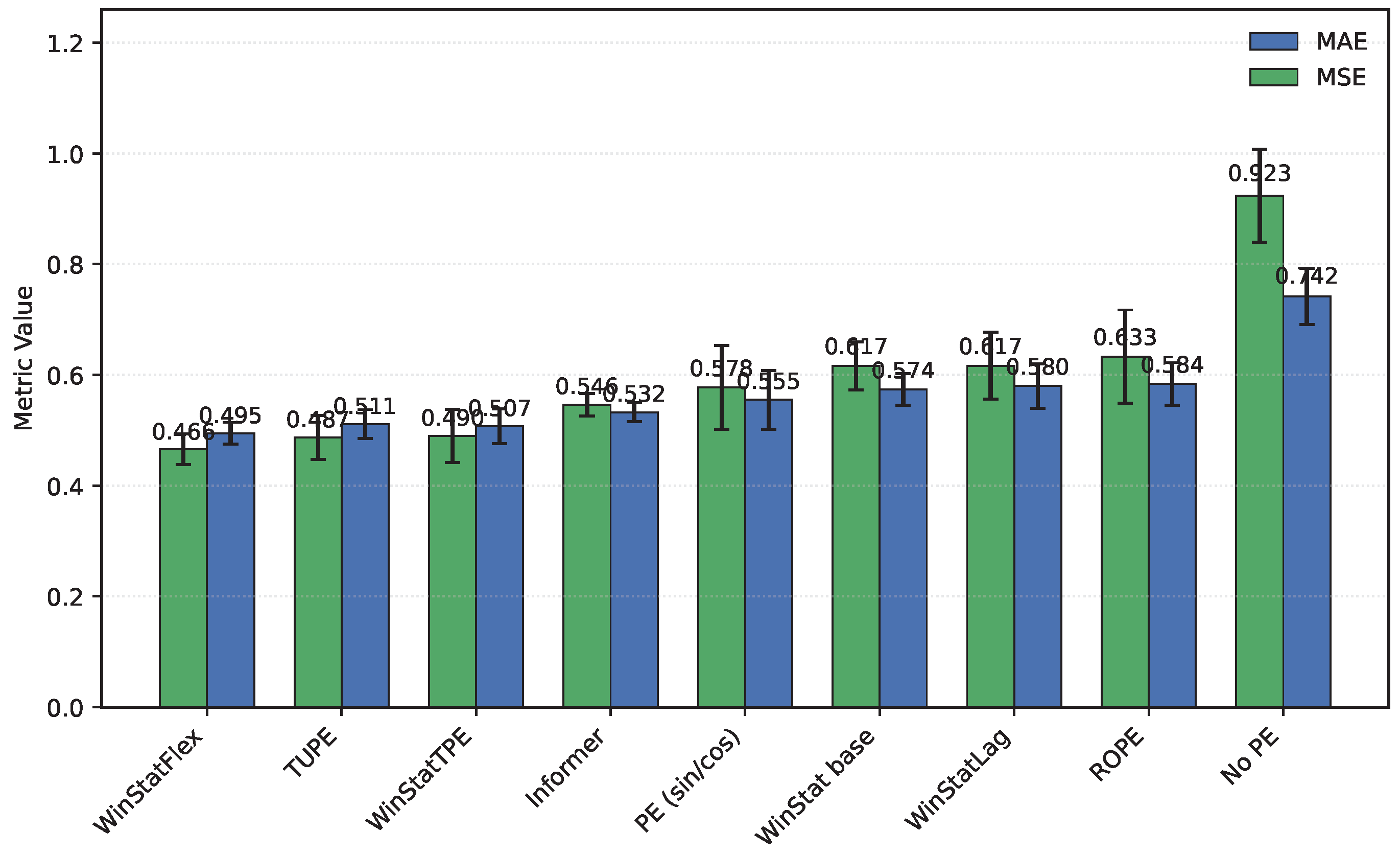

Figure 2 shows the performance in

ETTh1: TUPE situates between WinStatFlex and WinStatTPE, highlighting its intermediate effectiveness. Sinusoidal APE behaves poorly, in some cases even worse than the shuffled WinStat variants, suggesting that purely geometric absolute signals may misalign with the true temporal dependencies of this subset unless complemented by local or semantic components. As shown in

Table 3, the ranking for

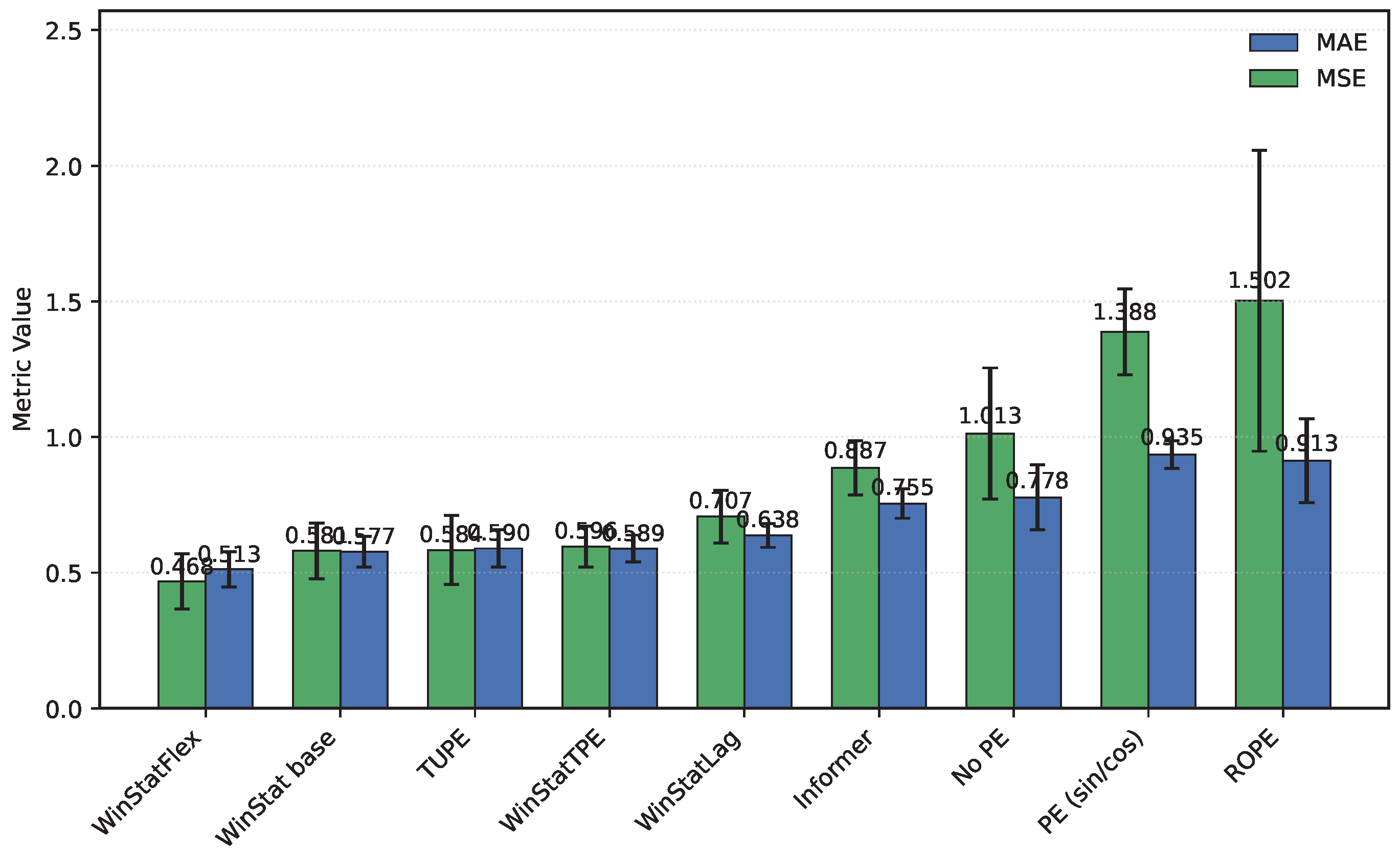

ETTh2, from best to worst, is: WinStatFlex > WinStat base > TUPE > WinStatTPE > WinStatLag, with ROPE performing particularly poorly and occupying the last position. As illustrated in

Figure 3, the visual comparison clearly emphasizes this gap, and reveals the high variance in ROPE’s results, underscoring its instability across runs. This confirms that semantic and local positional information, as captured by the WinStat variants, remains critical for accurately modeling long-range dependencies in these datasets.

Regarding the hourly

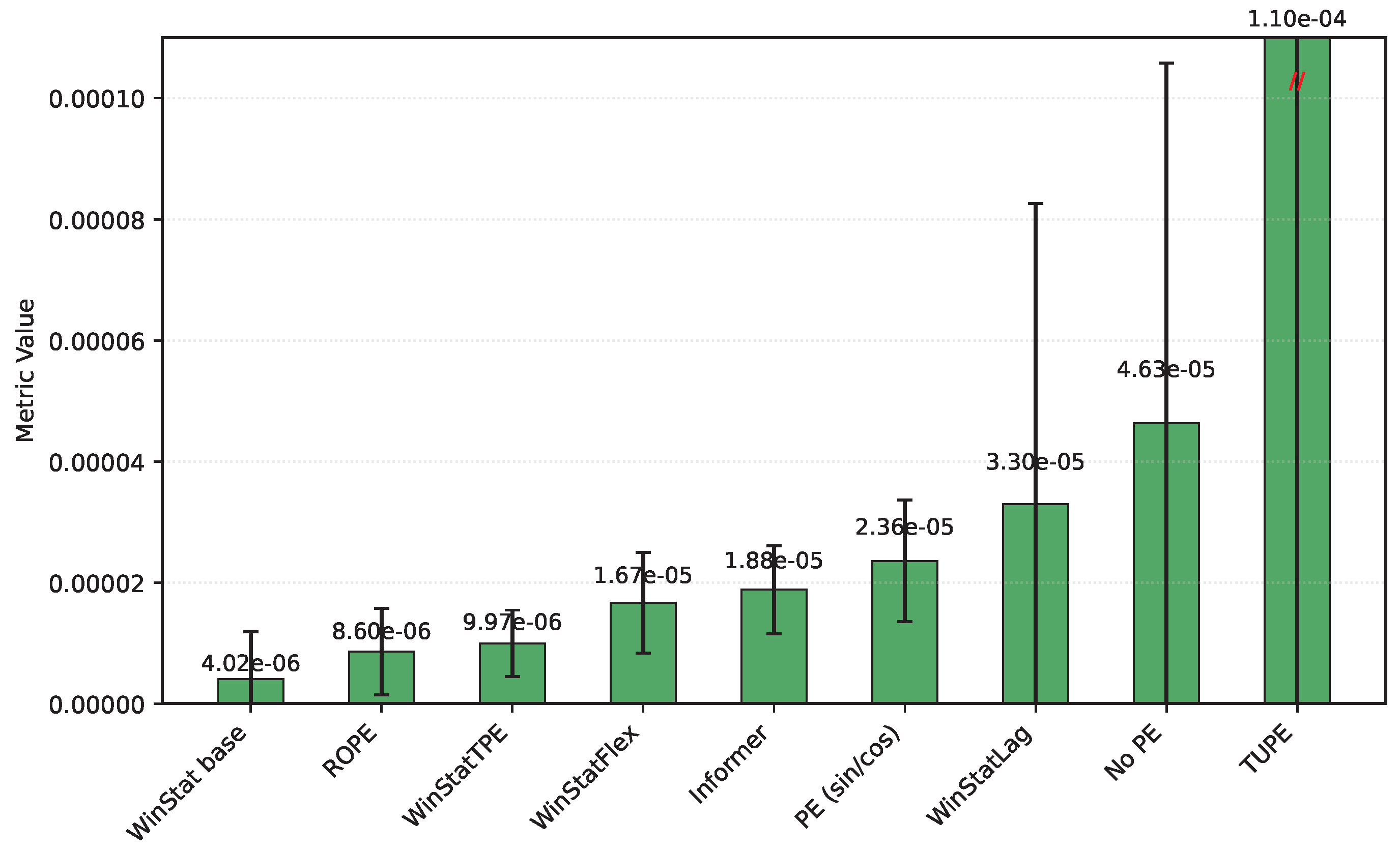

NYC aggregates, we caution that the series was artificially extended, which may bias the metrics toward optimistic values due to the smoothing effects of generative augmentation. With that caveat, WinStat base achieves the best performance overall. WinStatTPE and ROPE show very similar results, while TUPE performs particularly poorly—worse even than the

no-PE ablation. WinStatFlex still surpasses Informer and sinusoidal APE, but the ranking highlights that, in this dataset, the effectiveness of positional encoding varies substantially across methods. As shown in

Table 3 and

Figure 4 and

Figure 5, this variability is visually evident, revealing marked contrasts in performance stability between the WinStat family and methods like TUPE. Due to this reason, the figure has been divided to better read the results.

The fourth benchmark, TINA, an industrial, high-dimensional dataset originally curated for anomaly analysis, poses a stringent test of computational efficiency and robustness. Even after careful optimization, its scale requires substantial hardware and long runs; however, its breadth provides a valuable stress test for the positional mechanisms. As summarized in Table 3, the results indicate that, in terms of MSE, WinStat base achieves the best performance, followed closely by ROPE, with Informer slightly behind; WinStatTPE, WinStatFlex, and TUPE all perform worse than Informer. However, when considering MAE, the ranking shifts: WinStatLag becomes the top performer, while WinStatTPE, WinStatFlex, and WinStat base exhibit very similar results, and TUPE ranks last, even below the no-PE ablation. These fluctuations highlight that the relative effectiveness of positional encodings can vary substantially due to the particular characteristics of this dataset. Graphically, the behavior of the encodings can be observed in Figure 6, where WinStatFlex and WinStatTPE clearly stand out, achieving substantially better results than the other methods. For the remaining models, however, it becomes difficult to establish a clear hierarchy, as the relative performance varies depending on the metric considered.

5.2. Analysis of the Learned Mixture Weights

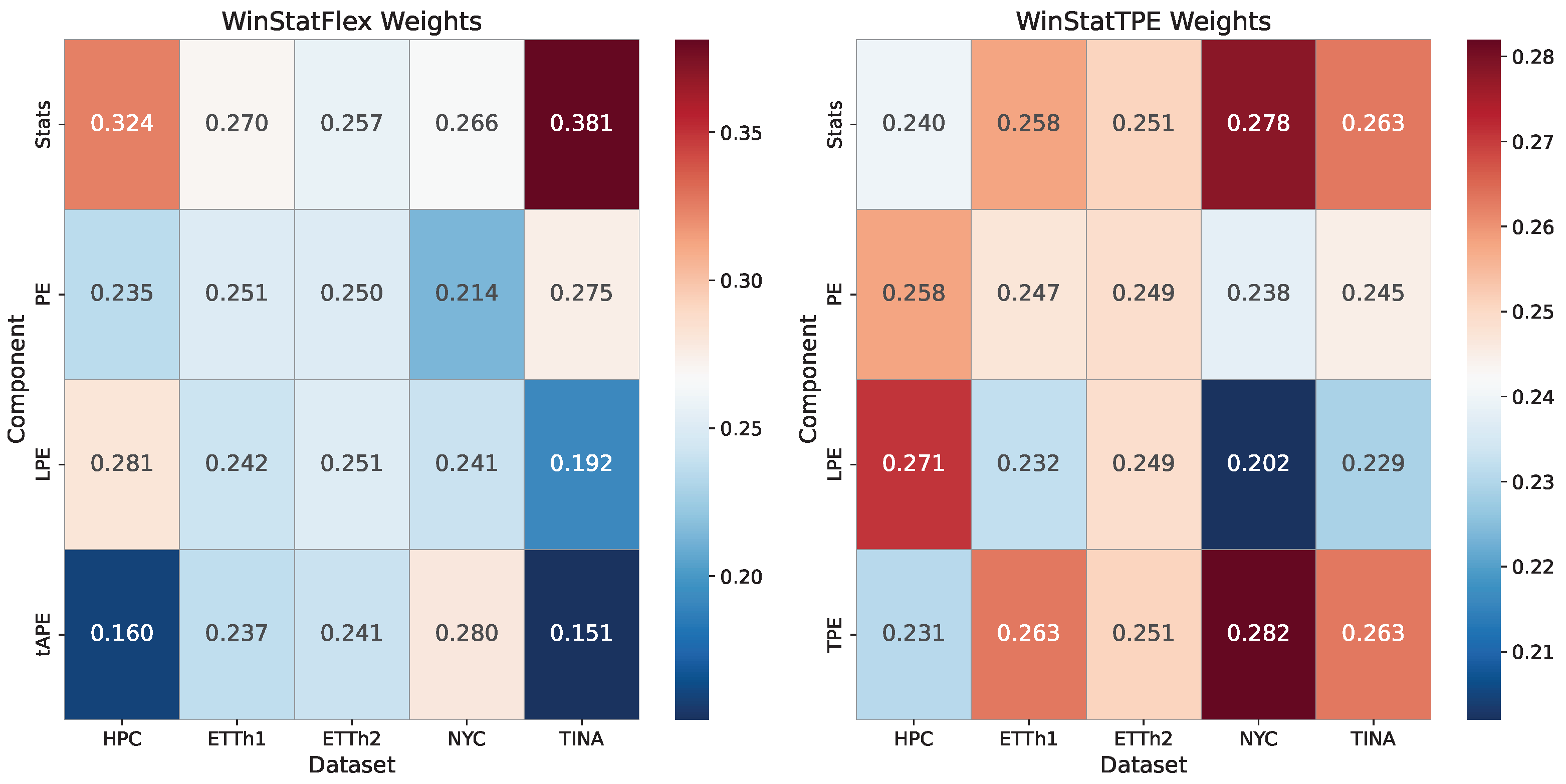

To gain a clearer insight into the internal behavior of the proposed encodings, we analyze the relative contribution of each component within the normalized weighting scheme of WinStatFlex and WinStatTPE. For this purpose, we compile tables reporting the normalized weights associated with each component, enabling us to assess their relative importance in the overall computation. This analysis allows us to identify which parts dominate the representation and to evaluate whether the observed weighting patterns align with the intended design of the encodings.
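To make the notion of normalized weights concrete, the following sketch maps raw per-component scores to positive weights that sum to one. A softmax is used purely as an assumption for illustration; the text only states that the weights are normalized, and the component scores shown are hypothetical.

```python
import math


def normalize_weights(raw):
    """Map raw component scores to positive weights that sum to 1.

    A softmax is assumed here; the actual normalization may differ.
    """
    peak = max(raw.values())  # subtract the maximum for numerical stability
    exps = {name: math.exp(score - peak) for name, score in raw.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}


# Hypothetical raw scores for the four WinStatFlex components.
raw_scores = {"Stats": 0.0, "PE": 0.0, "LPE": 0.0, "tAPE": 0.0}
weights = normalize_weights(raw_scores)  # equal scores yield equal weights
```

Under this assumption, a weight close to one-fourth for every component corresponds to raw scores that barely differ from one another.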

HPC

The learned mixture weights, as reported in Table 4, indicate that the strongest variants assign non-trivial mass to the local-statistics component together with absolute/learnable encodings (and, for TPE, a semantic term), rationalizing their superiority over fixed APE.

ETT

In the case of the ETT benchmarks, both datasets exhibit a fairly similar and balanced behavior. For the first subset, ETTh1 (Table 5), the statistical component of the WinStatFlex variant is marginally higher than the others, although the weights remain close to one-fourth across all components. For WinStatTPE, this slight increase shifts toward the TPE component itself, potentially due to its semantic contribution, which facilitates the modeling of outcomes. Overall, however, the weighting can be described as nearly uniform across components for both variants. This effect becomes even more pronounced in ETTh2 (Table 6), where both models reach weights that are close to equal across components.

Yellow Trip Data (NYC): Aggregated Urban Mobility

Learned mixture weights, as reported in Table 7, show non-trivial mass on Stats and TPE, indicating that local statistics and semantic similarity are the dominant contributors in this domain. In this case, the differences among components are more pronounced than in the previous datasets. For the WinStatFlex variant, Stats and tAPE gain greater relevance, reducing the proportional weight of the fixed encoding, which appears to contribute little useful information. For WinStatTPE, the emphasis again falls on the semantic components, namely Stats and TPE. Interestingly, however, the learnable encoding becomes the least relevant component in this setting, which slightly increases the relative importance of the fixed PE.

TINA (Industrial): High-Dimensional, Long Sequences

In the particular case of the TINA dataset, the differences among variants are even more pronounced. For WinStatFlex, the largest share of the weighting falls on the concatenated statistical values, as the high complexity of the time series and its specific characteristics make the semantic information provided by this component especially critical. The tAPE component, despite being specifically designed to adapt the traditional encoding to the properties of the series, does not appear to achieve the intended effect, possibly introducing desynchronization and thus ranking last in relevance. For WinStatTPE, however, the differences among components are minimal, potentially due to the strong semantic contribution of the involved components, but also as a result of the particular characteristics of this dataset.

Table 8. TINA: learned mixture weights in WinStat variants (normalized). The symbol * identifies a component not considered in the weighting definition.


| Component | WinStatFlex | WinStatTPE |
|---|---|---|
| Stats | 0.3814 | 0.2630 |
| PE | 0.2752 | 0.2450 |
| LPE | 0.1918 | 0.2290 |
| tAPE | 0.1515 | * |
| TPE | * | 0.2630 |
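The values in Table 8 can be checked and ranked with a few lines of code. The sketch below copies the weights from the table; the helper names are illustrative, and the spread (largest minus smallest weight) is only one possible way to quantify how uneven a weighting is.

```python
# Normalized weights copied from Table 8 (TINA).
TINA_WEIGHTS = {
    "WinStatFlex": {"Stats": 0.3814, "PE": 0.2752, "LPE": 0.1918, "tAPE": 0.1515},
    "WinStatTPE": {"Stats": 0.2630, "PE": 0.2450, "LPE": 0.2290, "TPE": 0.2630},
}


def rank_components(weights):
    """Component names ordered from largest to smallest weight."""
    return sorted(weights, key=weights.get, reverse=True)


def weight_spread(weights):
    """Gap between the largest and the smallest weight."""
    return max(weights.values()) - min(weights.values())


for variant, weights in TINA_WEIGHTS.items():
    print(variant, rank_components(weights), round(weight_spread(weights), 4))
```

For WinStatFlex the spread is about 0.23, with Stats first and tAPE last, whereas for WinStatTPE it is about 0.03, which matches the description of nearly uniform weights for that variant.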

Lessons learned from weight training

After estimating the weights associated with each of the methods integrated into WinStat, several noteworthy conclusions can be drawn. First, the results highlight the high adaptability of WinStat, which stems from the diversity of its constituent components. This adaptability is clearly illustrated by the heatmap in Figure 7, which highlights the substantial variation in weights across different datasets. The visualization makes it evident that the algorithm is capable of dynamically adjusting its weighting strategy according to the characteristics of each specific scenario. Such flexibility is a desirable property in practical applications, as it enhances the robustness and generalizability of the method across heterogeneous contexts.

In addition, the statistical computation component emerges as particularly influential, consistently receiving a relatively high share of importance. For instance, in both the TINA and HPC datasets, this component accounts for more than 30% of the total weight, thereby reinforcing its central role in the overall performance of the framework. The prominence of this component indicates that the extraction and processing of statistical information remain a key driver of predictive capacity within the WinStat architecture.

At the same time, a certain degree of uniformity in the choice of weights is observed across several datasets. This tendency suggests that, while the algorithm adapts to scenario-specific requirements, it also identifies stable patterns of relevance among its components. Taken together with the strong performance metrics consistently achieved by the proposed method, these observations emphasize not only the complementary nature of the individual components but also their collective contribution to the effectiveness of WinStat. Consequently, the results provide empirical support for the design choices underlying the framework and point to its potential applicability in a wide range of real-world tasks.

5.3. Comparing the Semantic Quality of the Encoding

In this section, we highlight the semantic role of positional encodings and their importance for evaluating encoding quality. Although semantics lie outside the architecture, whose layers process only numerical tensors, such information may be implicitly captured through locality and patterns, which our proposed encodings aim to exploit.

A straightforward way to assess this is to shuffle the decoder inputs during inference and compare the performance with intact sequences, as suggested in [6]. This procedure provides an intuitive measure of whether an encoding preserves order and locality, as a significant drop in performance indicates that the model relies on sequential structure to make accurate predictions. In contrast, if shuffling has little effect, it suggests that the encoding does not effectively capture temporal dependencies.
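A minimal sketch of such a shuffling step is given below. It permutes the time axis of a decoder input while keeping the features of each time step together. The function name, the list-of-lists layout, and the choice to permute the entire sequence are assumptions for illustration; the exact protocol of [6] may differ.

```python
import random


def shuffle_decoder_input(dec_inp, seed=0):
    """Return a time-shuffled copy of the decoder input and the permutation used.

    dec_inp: list of time steps, each a list of feature values (assumed layout).
    The input is not modified; a fixed seed makes the test repeatable.
    """
    rng = random.Random(seed)
    perm = list(range(len(dec_inp)))
    rng.shuffle(perm)  # random reordering of the time indices
    shuffled = [list(dec_inp[i]) for i in perm]
    return shuffled, perm


# Toy sequence: 8 time steps with 2 features each.
sequence = [[float(t), 10.0 * t] for t in range(8)]
shuffled_sequence, permutation = shuffle_decoder_input(sequence, seed=42)
```

The same trained model is then evaluated once on `sequence` and once on `shuffled_sequence`, and the two error values are compared.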

To examine this property, we employed the five datasets evaluated previously, selected to study the impact of input shuffling across problems with diverse temporal and semantic characteristics, particularly in terms of seasonality and stationary patterns. The decoder shuffling procedure was applied to the WinStat family, as well as to TUPE, ROPE, and the Informer model itself, enabling a direct comparison of its effect across these different approaches.

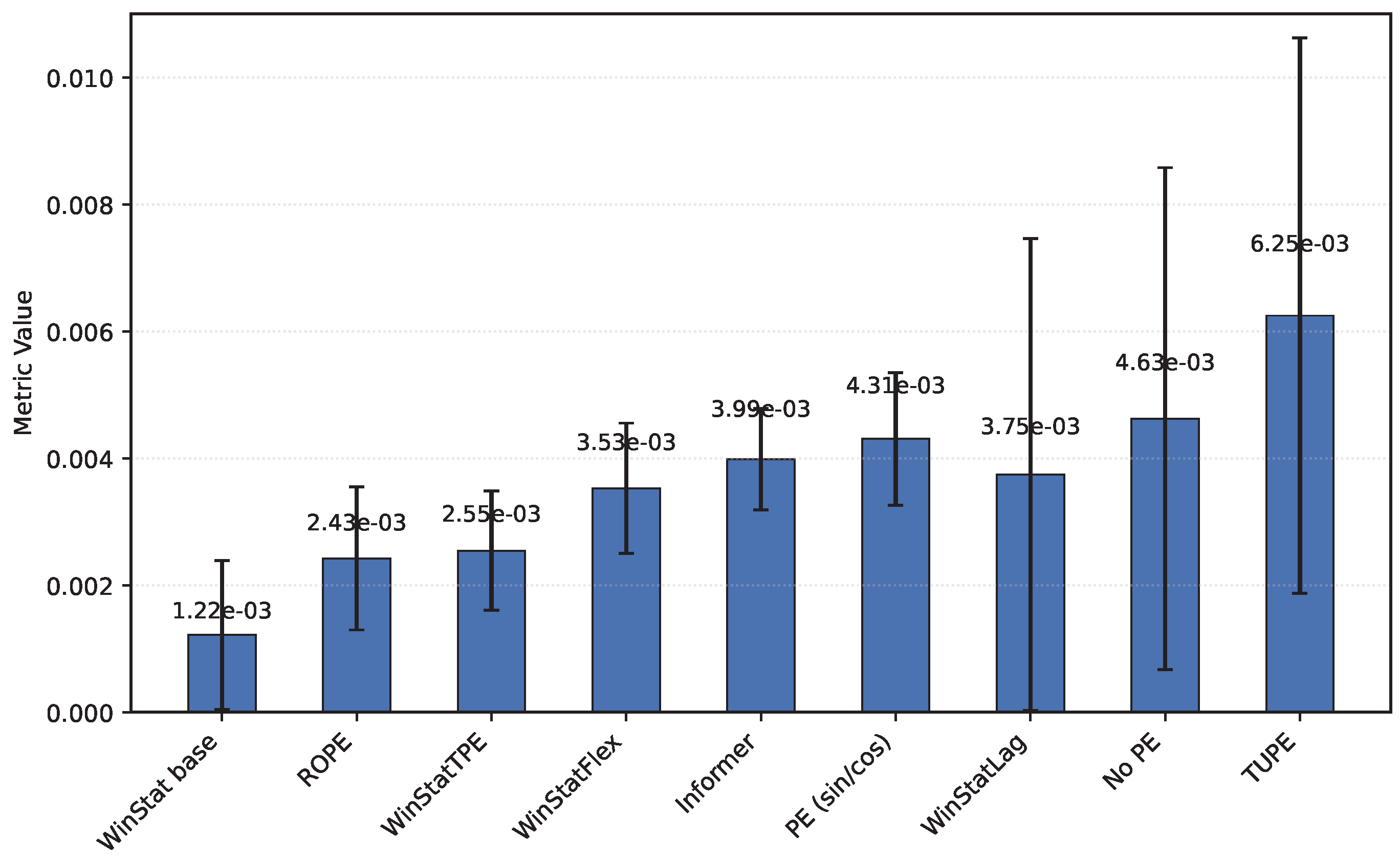

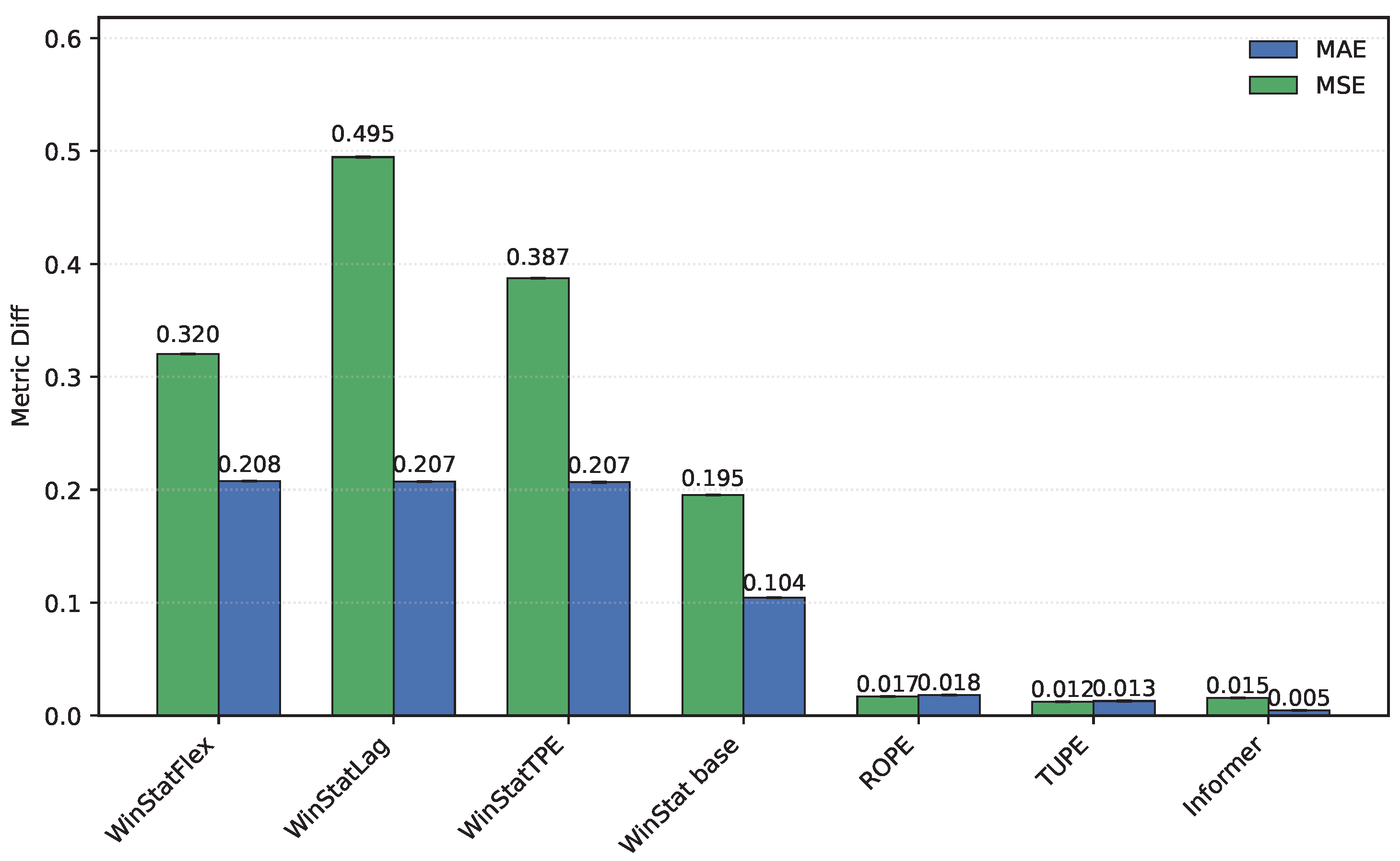

As shown in Table 9, the performance of the original Informer and its shuffled variant is nearly identical, which is consistent with the findings of the original study in [6]. The version without positional encoding performs at an intermediate level, leading to overall similar results across all three models and underscoring the limited semantics and weak-order preservation provided by the traditional sinusoidal scheme.

In contrast, WinStatFlex exhibits a marked drop in performance when inputs are shuffled, a desirable behavior that reflects its ability to capture both contextual dependencies and the intrinsic sequential structure of the data.

A similar but more pronounced effect is observed for WinStatTPE, whose degradation under shuffling clearly exceeds that of the baseline Informer. This outcome indicates that the semantic information captured by T-PE is even more sensitive to disorder, highlighting its superior ability to encode a meaningful temporal structure compared to the traditional approach.

Although these two methods achieved the best results in the previous experiments within our model family, the remaining variants exhibit a consistent pattern: all experience a substantial performance degradation when input shuffling is applied. This provides empirical evidence, in a general sense, that our proposed encodings are significantly sensitive to structural changes in the data. Consequently, it demonstrates that they successfully inject meaningful positional information with a strong semantic component into the datasets.

Across the evaluated benchmarks, WinStat base consistently demonstrates superior performance on the TINA and NYC datasets, reflecting the particular temporal characteristics of these series. In TINA, the time series exhibits complex, low-frequency fluctuations over a wide temporal range, making the statistical component especially critical for capturing relevant patterns. In NYC, the synthetic extension of the series similarly emphasizes the importance of statistical features, helping to stabilize learning despite the artificial augmentation. In contrast, TUPE and ROPE consistently display a counterintuitive behavior on these same datasets: applying input shuffling leads to better performance than the unshuffled model, contrary to the expected clear degradation. This can be attributed both to the distinct nature of these mechanisms (they are more strongly driven by attention computations than by the explicit encoding itself) and to the specific characteristics of the datasets. The smoothing effects in NYC and the wide-ranging fluctuations in TINA reduce the direct impact of positional information on performance, highlighting a fundamental difference compared to the WinStat family, whose encodings are more robustly anchored in the statistical and semantic structure of the data.

We can analyze the differential behavior graphically by examining visualizations that compare the original and shuffled models across the evaluated datasets. In these representations, higher values (a larger error increase under shuffling) indicate that semantic and ordering information is being effectively injected into the model. These visual comparisons highlight how the encoding methods from the WinStat family affect the model’s ability to capture meaningful temporal or structural dependencies across datasets.
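The quantity compared in these visualizations can be sketched as the shuffled error minus the original error, a sign convention assumed here because the discussion treats a negative difference as better performance under shuffling. The metric values in the example are placeholders, not results from Table 9.

```python
def shuffle_delta(original, shuffled):
    """Per-metric difference: error with shuffled inputs minus error with intact inputs.

    Positive values mean shuffling hurt the model, so the model was using order.
    Negative values mean the shuffled model did better.
    """
    return {metric: shuffled[metric] - original[metric] for metric in original}


def relies_on_order(delta, tolerance=0.0):
    """True when every metric degrades by more than `tolerance` under shuffling."""
    return all(value > tolerance for value in delta.values())


# Placeholder numbers for illustration only.
original_scores = {"mse": 0.50, "mae": 0.40}
shuffled_scores = {"mse": 0.80, "mae": 0.60}
delta = shuffle_delta(original_scores, shuffled_scores)
order_sensitive = relies_on_order(delta)
```

A small positive `tolerance` can be used to avoid reading run-to-run noise as evidence of order sensitivity.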

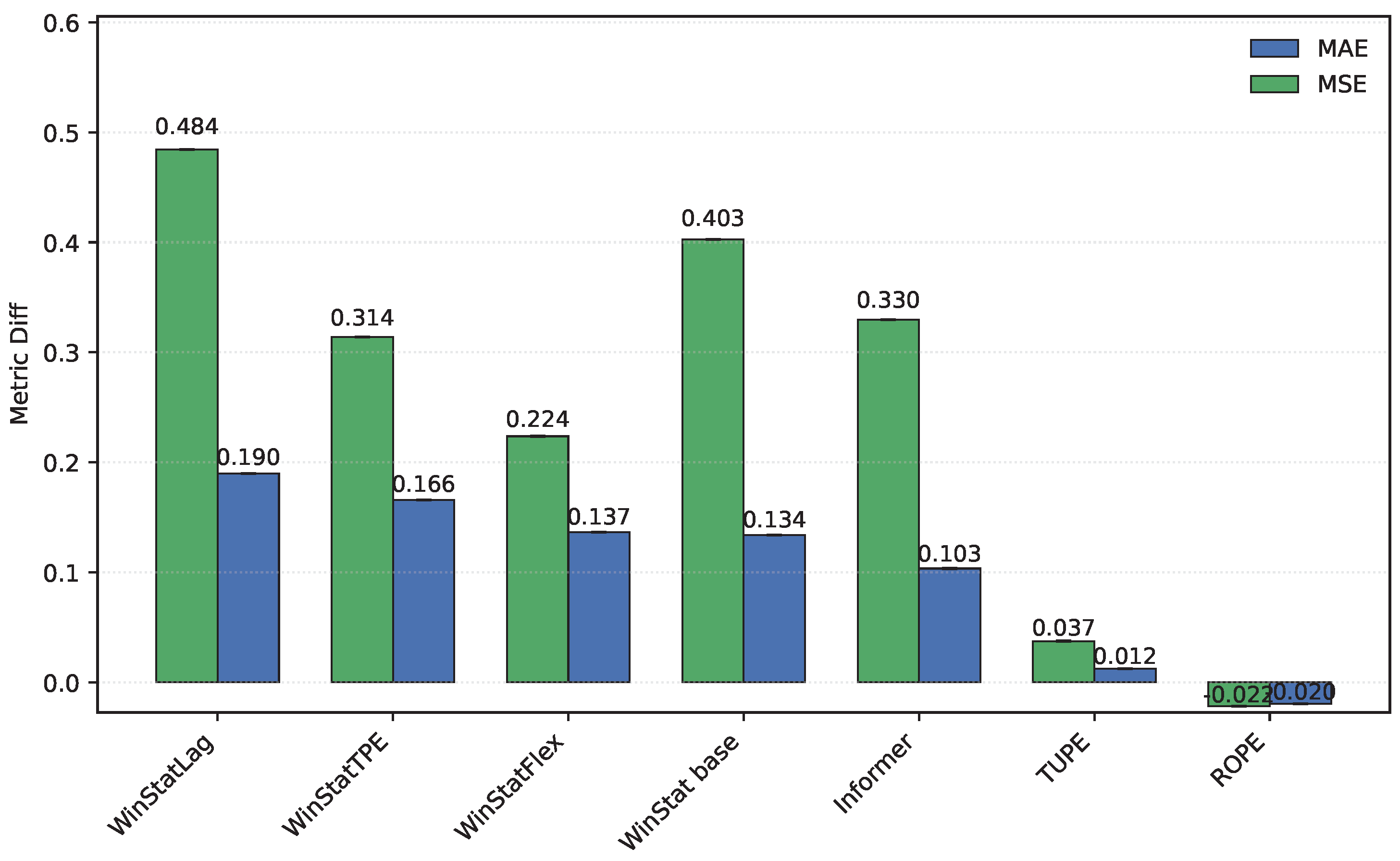

First, the HPC dataset exhibits minimal differences between the original and shuffled Informer model, as illustrated in Figure 8, which is particularly noteworthy and indicates the limited ordinal information contributed by this approach. A similar behavior is observed for the other tested methods, TUPE and ROPE. In contrast, all four WinStat variants show a substantially larger differential, highlighting a strong ordinal contribution to the model that is effectively disrupted when input shuffling is applied.

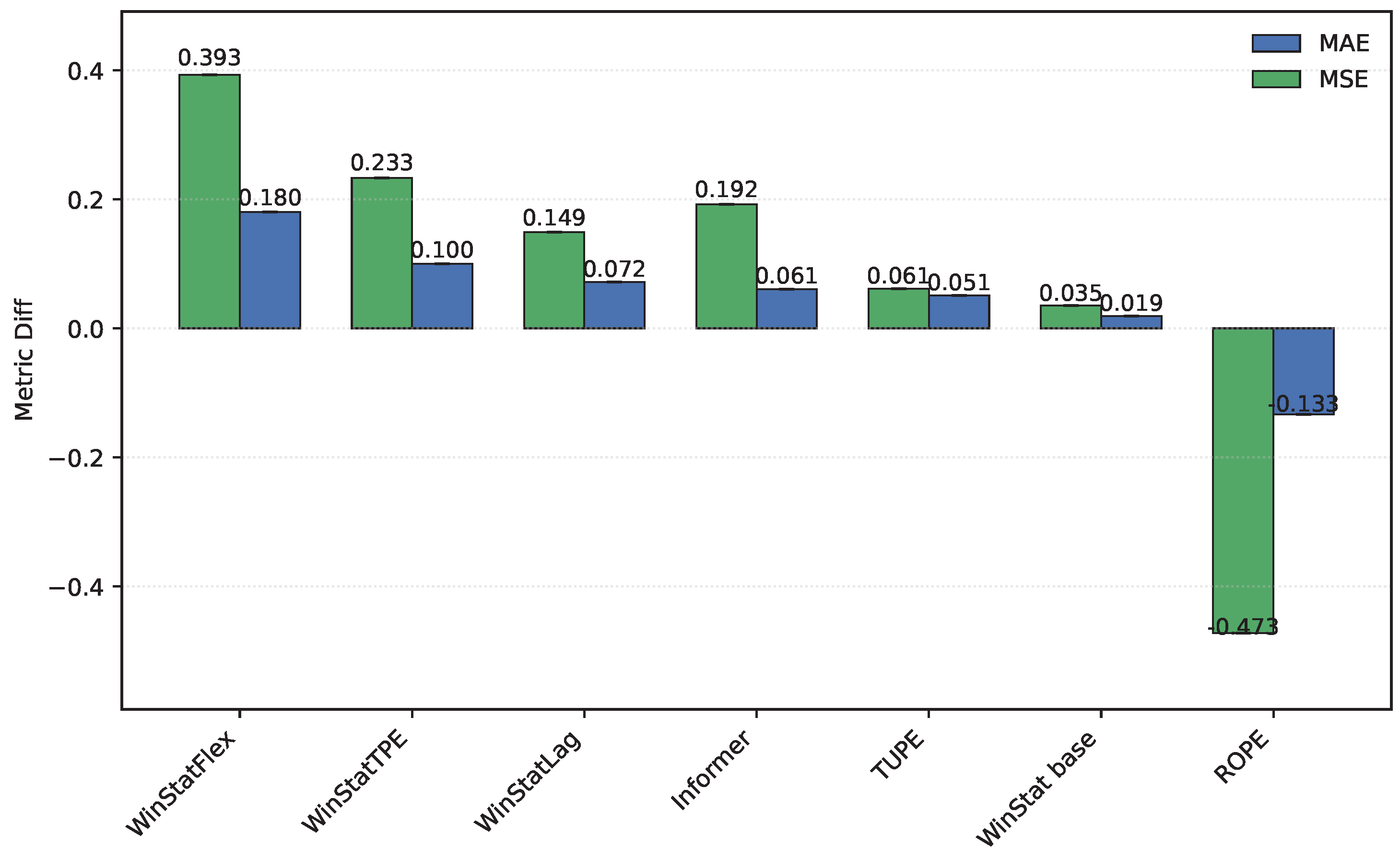

For ETTh1 and ETTh2, the observed results differ considerably. In ETTh1, as shown in Figure 9, input shuffling imposes a substantial penalty on Informer, although this effect is smaller in terms of MAE compared to the WinStat family. TUPE, in contrast, exhibits almost negligible degradation, while ROPE even shows a negative difference, indicating better performance under shuffling than in the original configuration, which suggests that this method is not well suited for this dataset. In ETTh2, illustrated in Figure 10, the behavior shifts: WinStatFlex now suffers the largest degradation (in contrast to WinStatLag in ETTh1). Most notably, ROPE exhibits a pronounced negative delta, reflecting a substantial improvement under shuffling. As these experiments were repeated multiple times, this is not an artifact, but rather a clear indication that this mechanism is ineffective for the ETTh2 dataset.

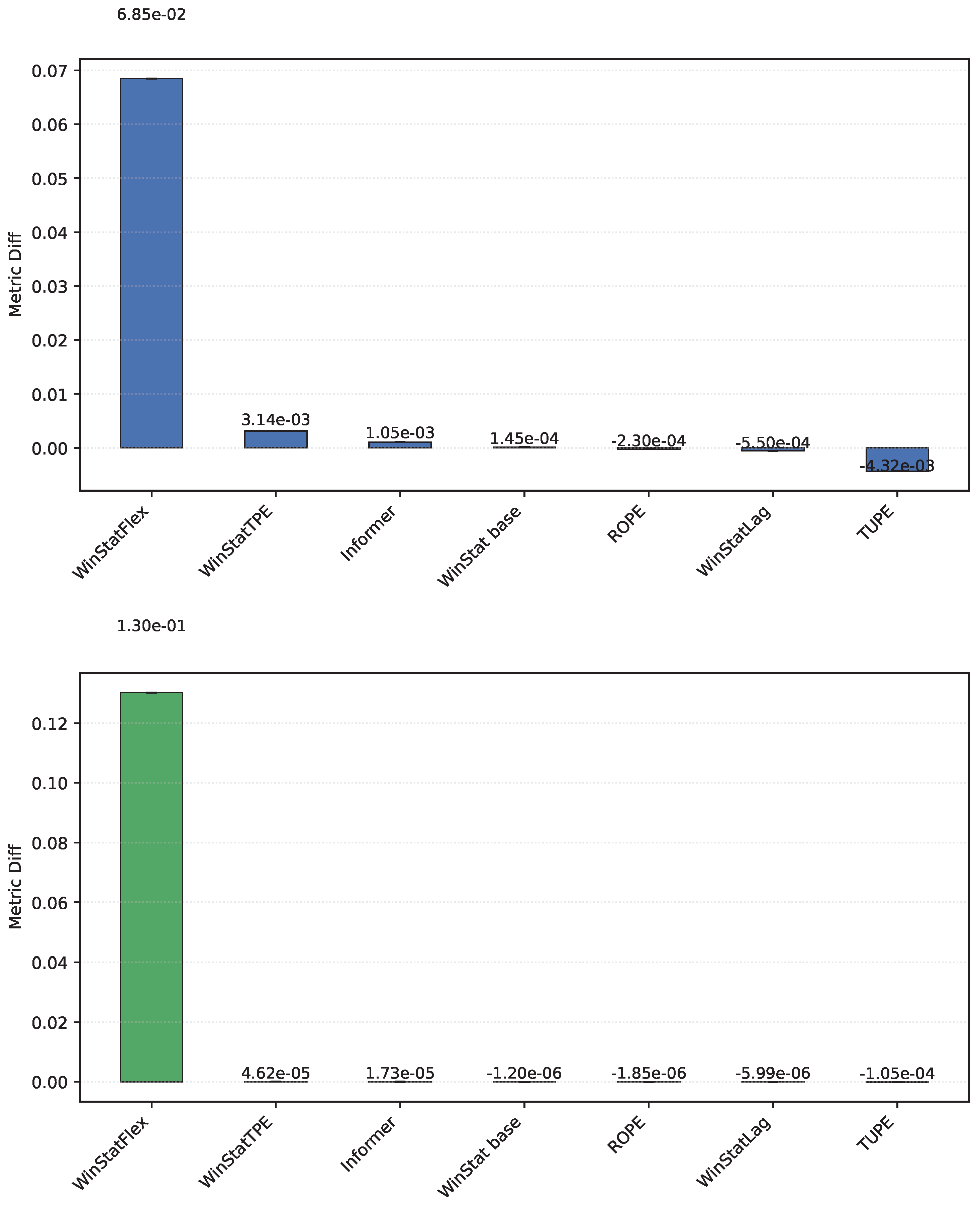

The NYC dataset, shown in Figure 11, exhibits one of the largest discrepancies observed in this experiment, with the WinStatFlex encoding achieving a difference on the order of

. This represents, without doubt, the most pronounced result across all benchmarks. Although similar trends can be identified in other models within the WinStat family, the magnitude of the difference in this case is considerably greater, highlighting the distinct sensitivity of this dataset to the proposed encoding.

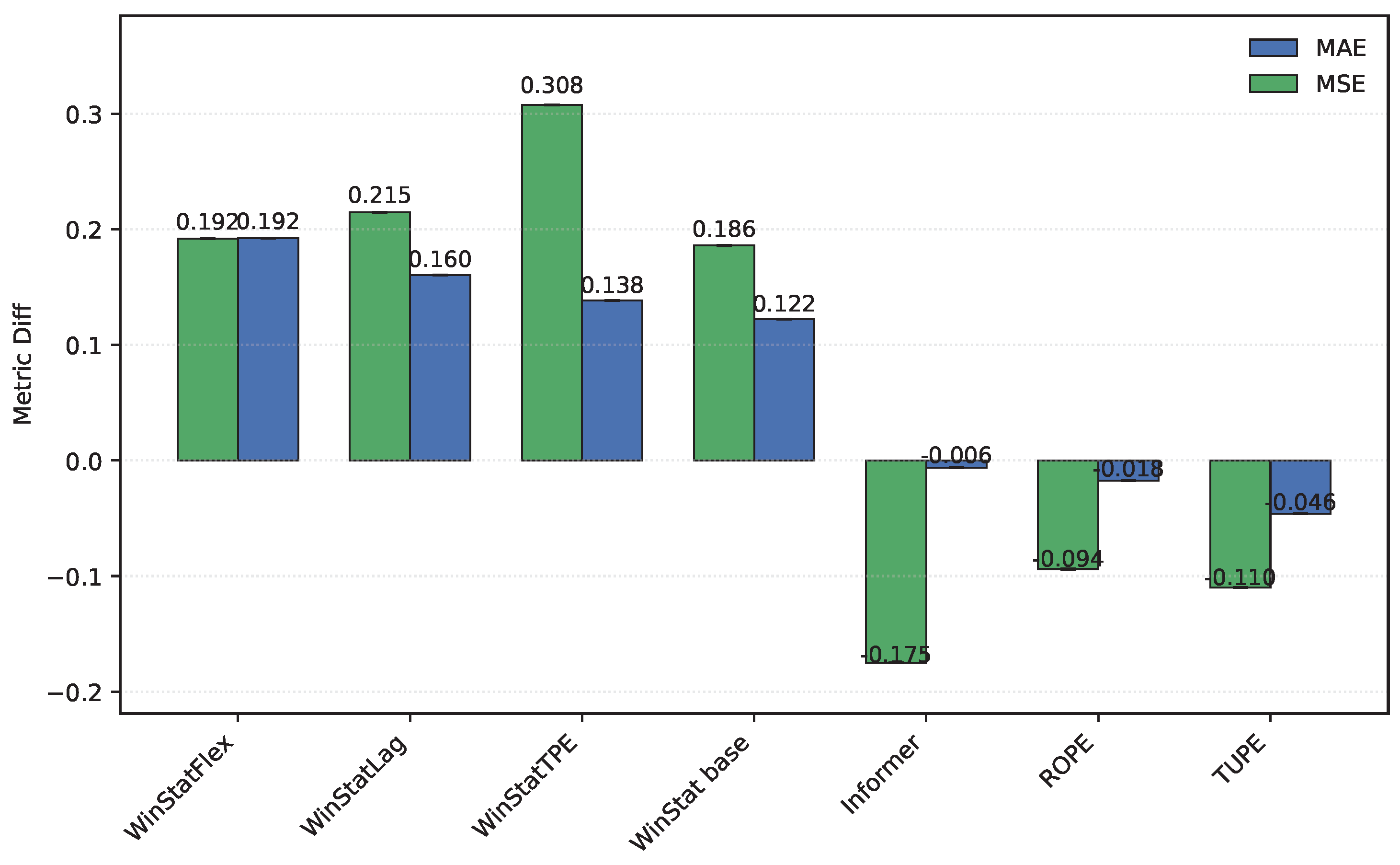

Finally, as illustrated in Figure 12, in the TINA dataset, several encodings, such as ROPE, TUPE, and the original Informer, exhibit negative differences, meaning that the shuffled models actually outperform their unshuffled counterparts. While this behavior is theoretically counterintuitive, it reinforces the notion that these positional encoding mechanisms are not well suited to the specific characteristics of this dataset. In contrast, all variants of the WinStat family display the expected degradation under shuffling, most notably WinStatTPE, thereby confirming their stronger capacity to encode meaningful positional and semantic information.