Submitted: 20 October 2025

Posted: 21 October 2025

Abstract

Keywords:

1. Introduction

| Key | Value | Note |
|---|---|---|
| Per-packet time budget | 67.2 ns | 201 cycles @ 3 GHz; at 10 Gbps / 14.8 Mpps, time available for processing a single packet |
| CPU cache miss | 32 ns | CPU cache miss time |
| CPU cache miss x 2 | 64 ns | Nearly exhausts the available per-packet delay |
| socket buffer: s_buf | fast | Hits L3/L2 cache in most cases |
| placed to L3 cache directly | packet cache | On Intel E5-xx, via Data Direct I/O (DDIO) or DCA |
| L2 access cost | 4.3 ns | lat_mem_rd 1024 128 |
| L3 access cost | 7.9 ns | lat_mem_rd 1024 128 |
| atomic lock | 8.2 ns | 17–19 cycles |
| optimized spin lock | 16.1 ns | 34–39 cycles |
| system call overhead | too large | A few system call invocations alone consume more than 67.2 ns |
| Synchronization cost | | |
| spin_[lock/unlock] | 34 cycles (13.943 ns) | simple |
| local_bh_[disable/enable] | 18 cycles (7.410 ns) | SW interrupt |
| local_irq_[disable/enable] | 7 cycles (2.860 ns) | HW interrupt |
| local_irq_[save/restore] | 37 cycles (14.837 ns) | HW interrupt + status |
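The per-packet budget in the first row of the table follows from line-rate arithmetic. The sketch below reproduces it under an assumption the table does not state explicitly: that the 14.8 Mpps figure refers to minimum-size 64-byte Ethernet frames, each occupying an additional 20 bytes on the wire (preamble, start-of-frame delimiter, and inter-frame gap). The function and constant names are illustrative only.

```python
# Assumption: per-frame wire overhead in bytes
# (7 preamble + 1 start-of-frame delimiter + 12 inter-frame gap).
WIRE_OVERHEAD_BYTES = 20


def packet_rate_pps(link_bps: float, frame_bytes: int = 64) -> float:
    """Maximum packets per second for a given link speed and frame size."""
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


def budget_ns(link_bps: float, frame_bytes: int = 64) -> float:
    """Time available to process one packet at line rate, in nanoseconds."""
    return 1e9 / packet_rate_pps(link_bps, frame_bytes)


def budget_cycles(link_bps: float, cpu_hz: float, frame_bytes: int = 64) -> float:
    """The same budget expressed in CPU cycles at the given clock frequency."""
    return budget_ns(link_bps, frame_bytes) * cpu_hz / 1e9


def events_that_fit(link_bps: float, cost_ns: float, frame_bytes: int = 64) -> int:
    """How many events of cost_ns each (e.g. cache misses) fit into one budget."""
    return int(budget_ns(link_bps, frame_bytes) // cost_ns)


if __name__ == "__main__":
    print(f"rate:   {packet_rate_pps(10e9) / 1e6:.2f} Mpps")
    print(f"budget: {budget_ns(10e9):.1f} ns")
    print(f"cycles: {budget_cycles(10e9, 3e9):.1f} @ 3 GHz")
    print(f"32 ns cache misses that fit: {events_that_fit(10e9, 32.0)}")
```

Under these assumptions the budget is 67.2 ns, or about 201.6 cycles at 3 GHz, so two 32 ns cache misses (64 ns) leave roughly 3 ns of headroom, which matches the "CPU cache miss x 2" row.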

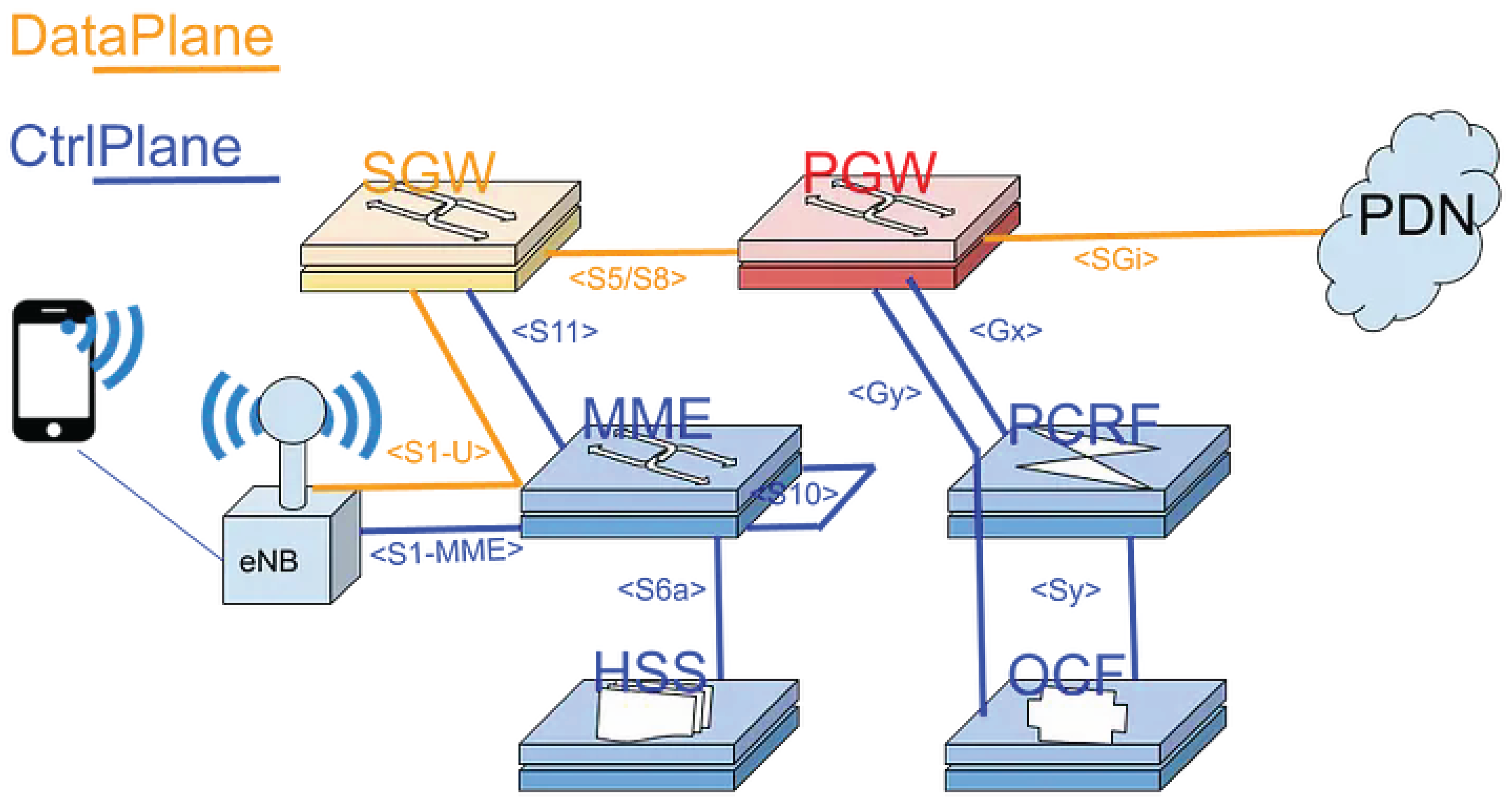

2. PGW

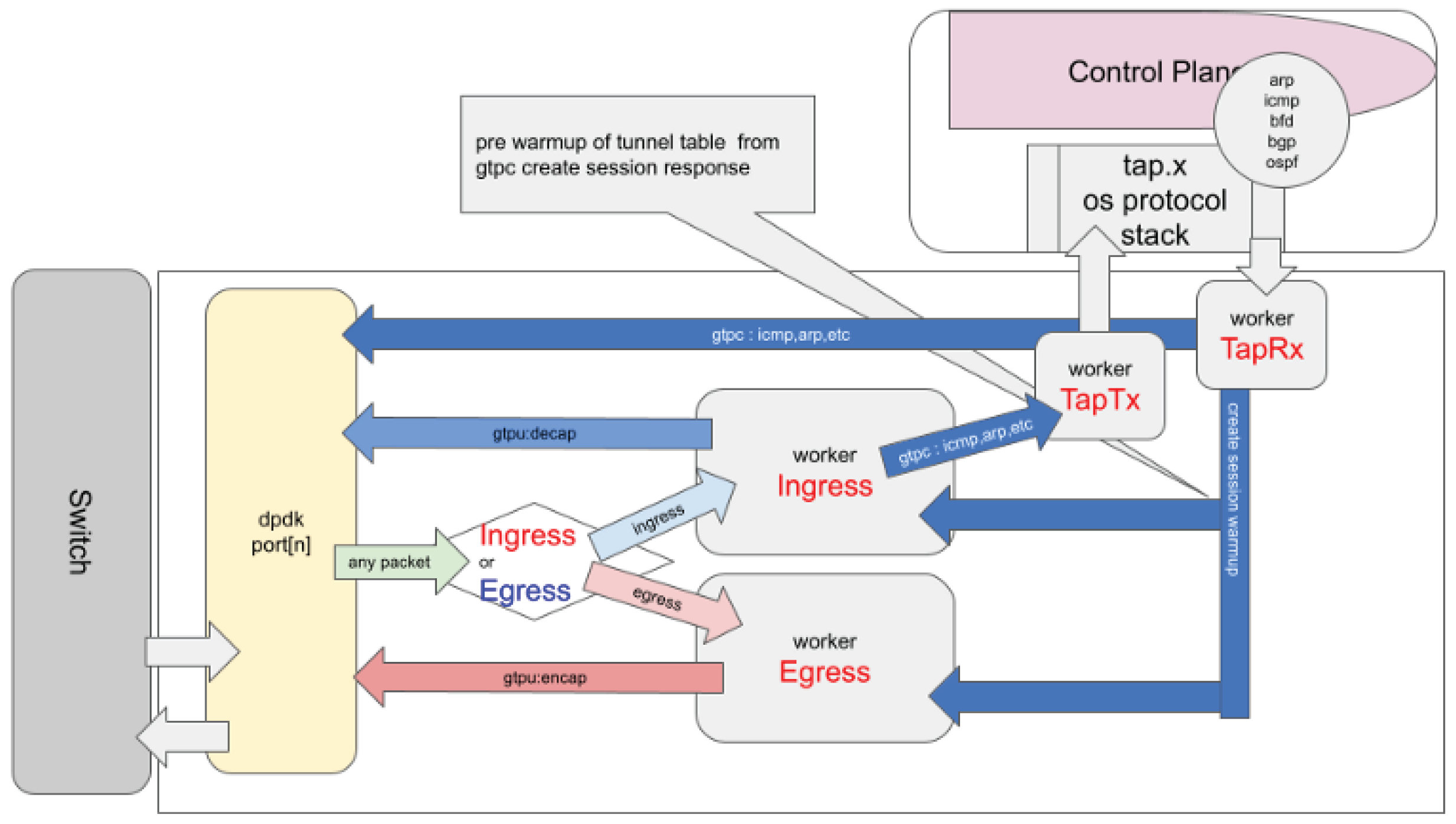

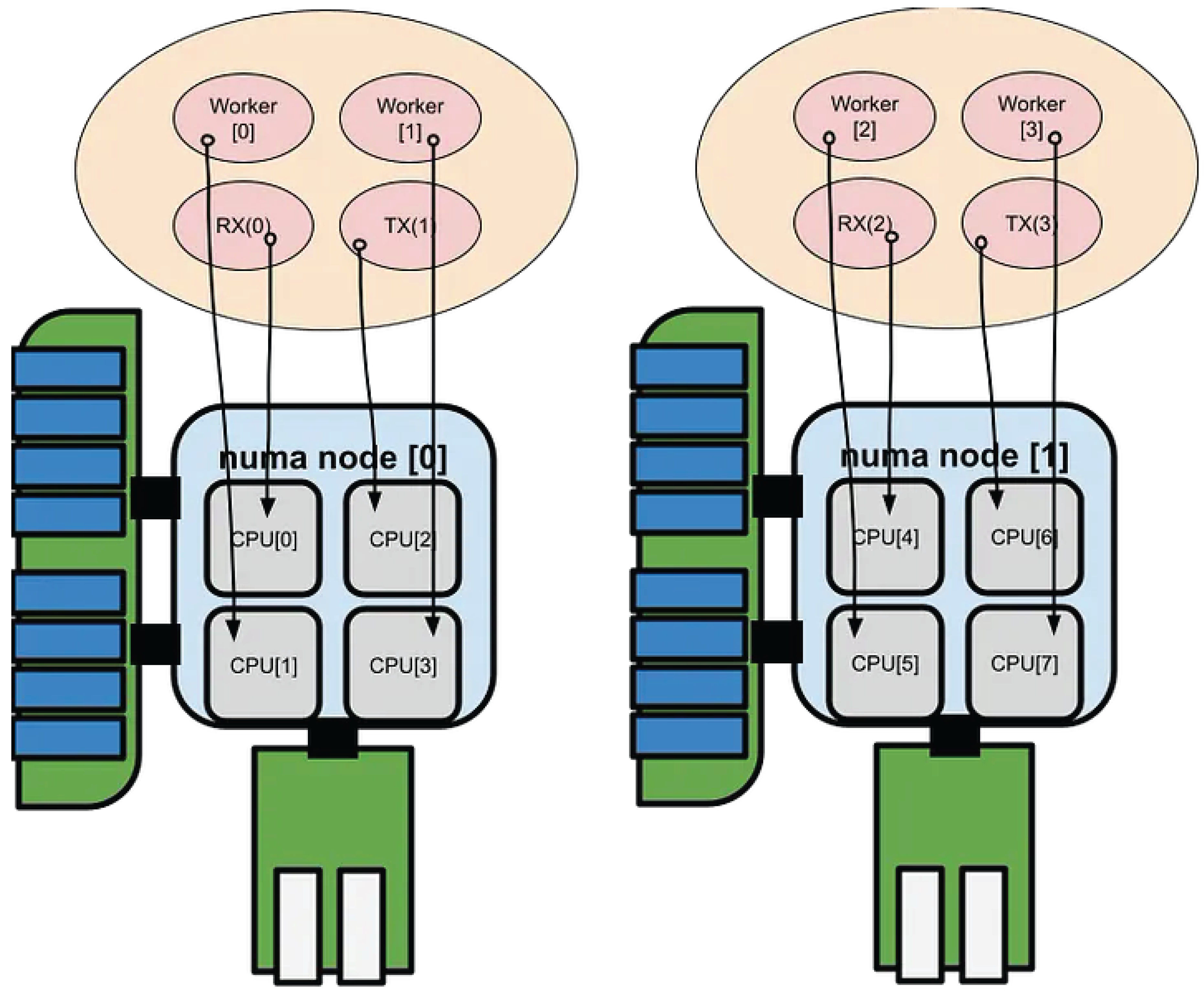

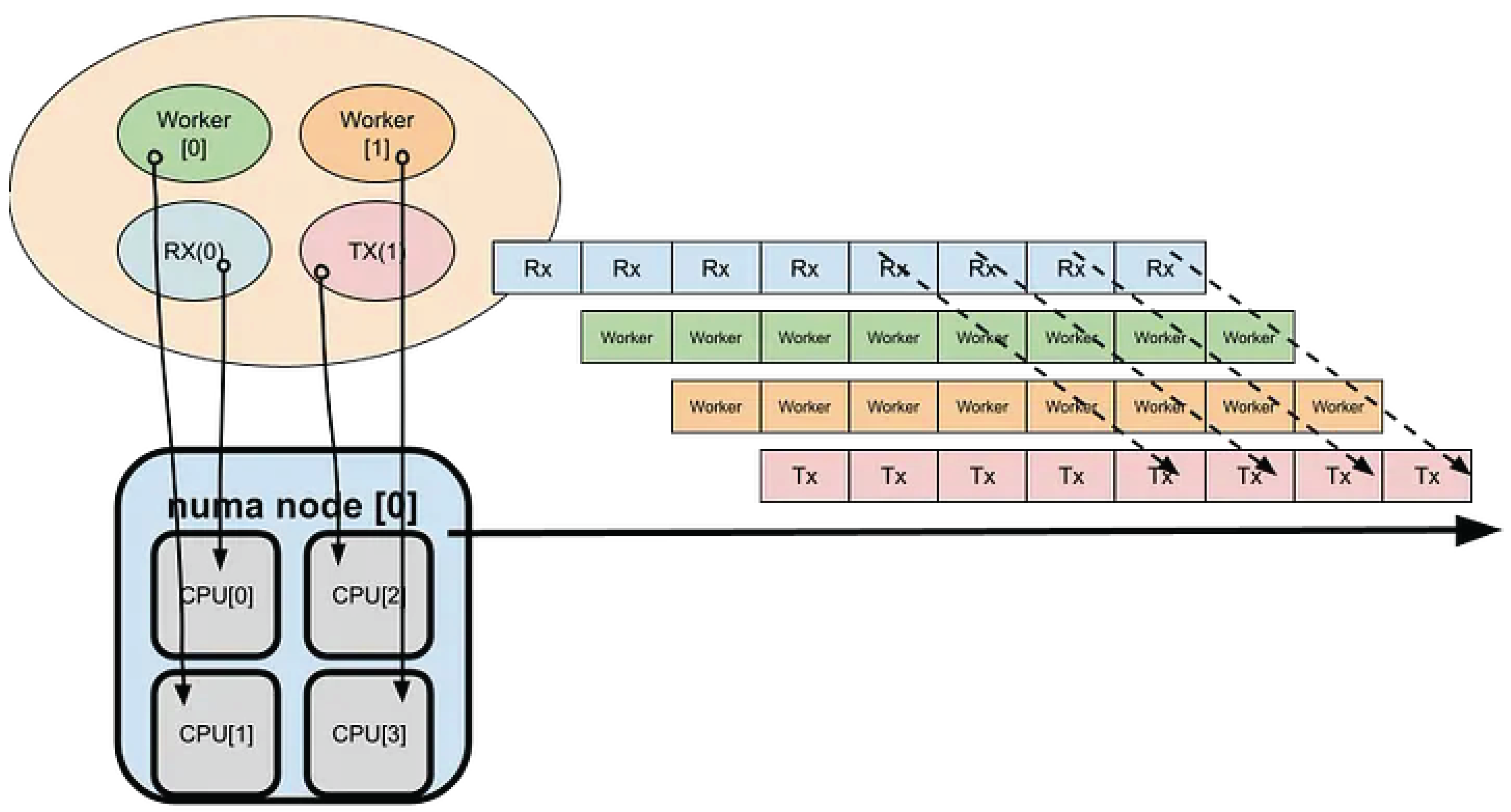

2.1. Pipeline Stage

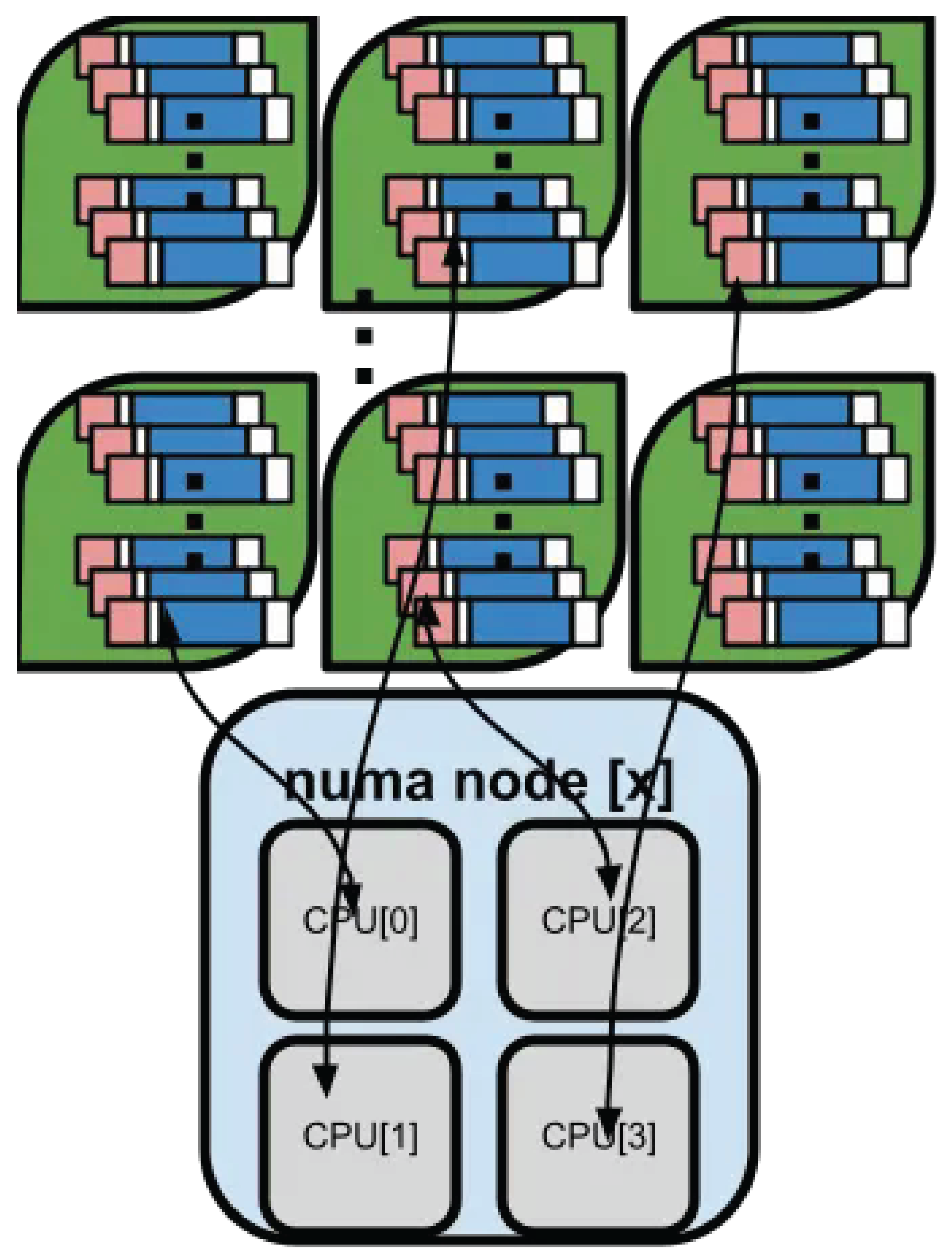

2.2. mbuf Pools

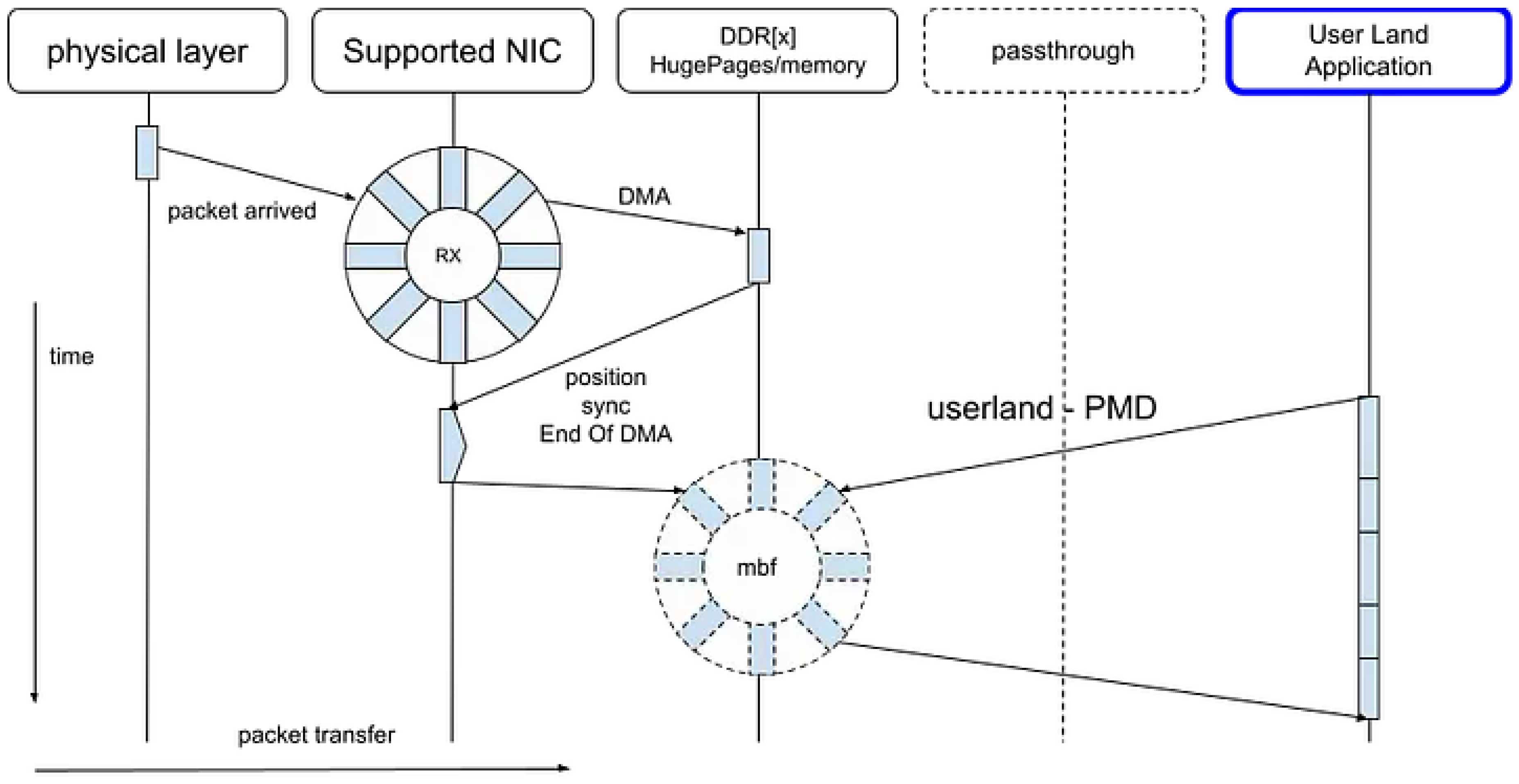

2.3. Poll Mode Driver

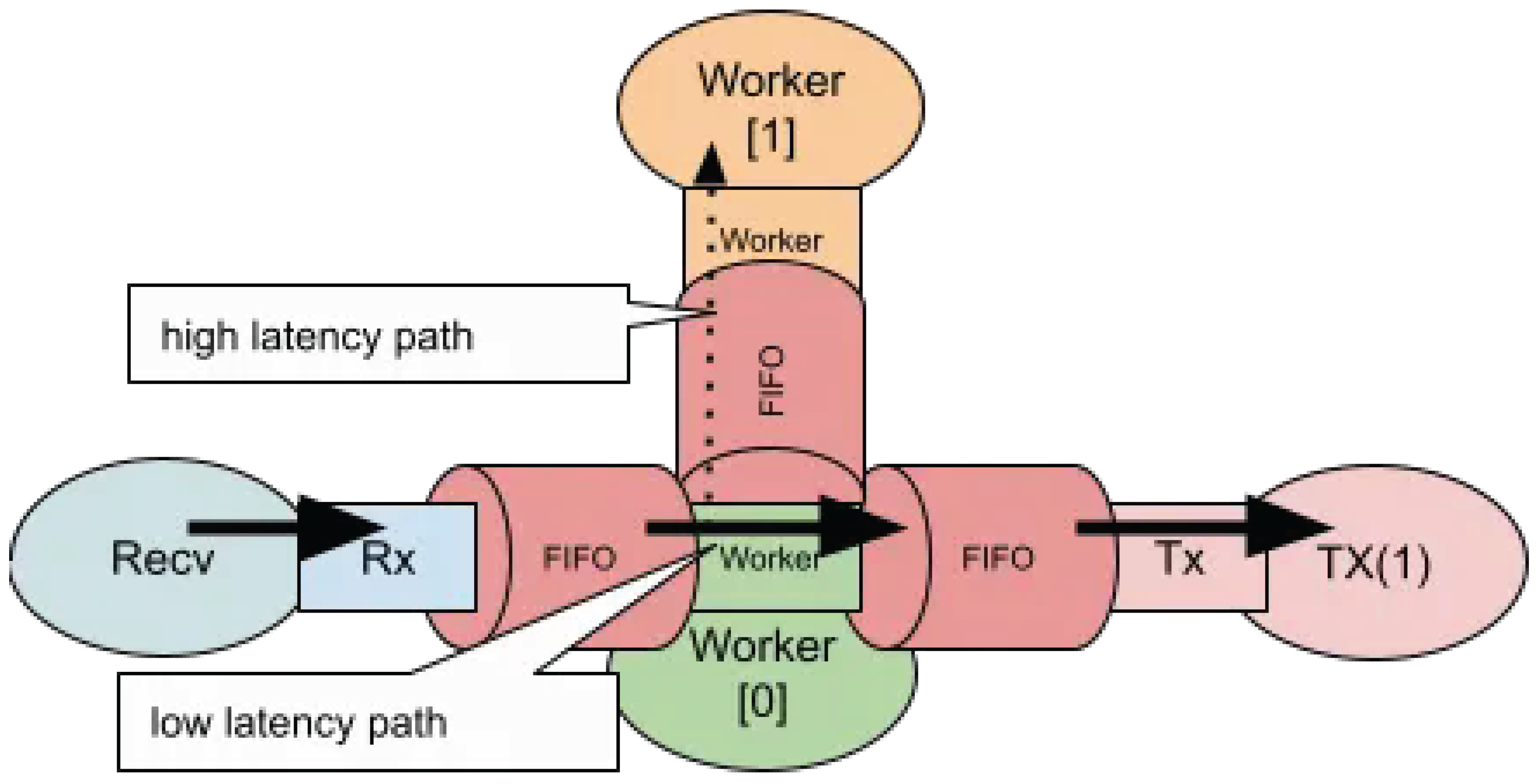

2.4. Mixed Latency Path

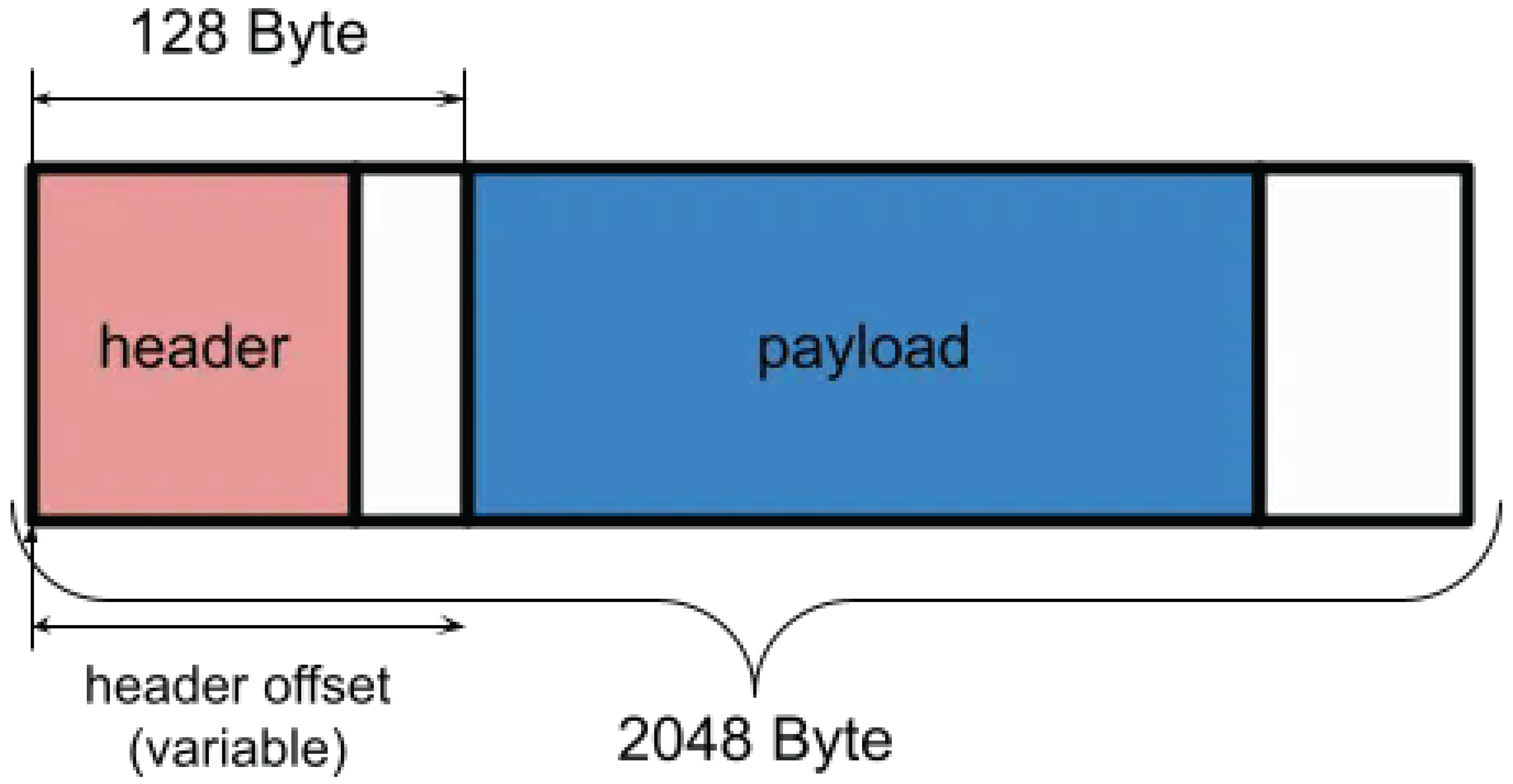

2.5. mbuf Structure

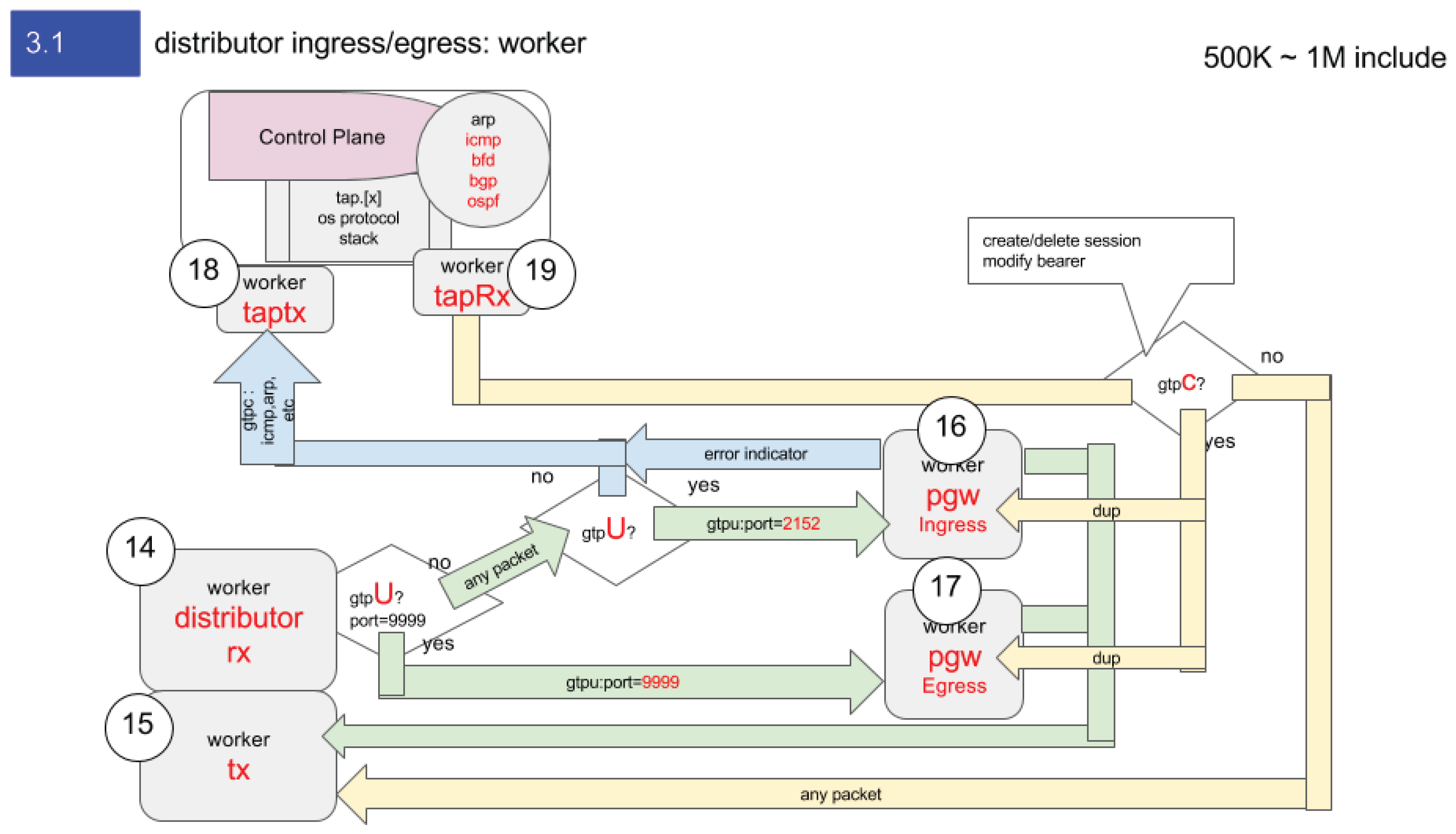

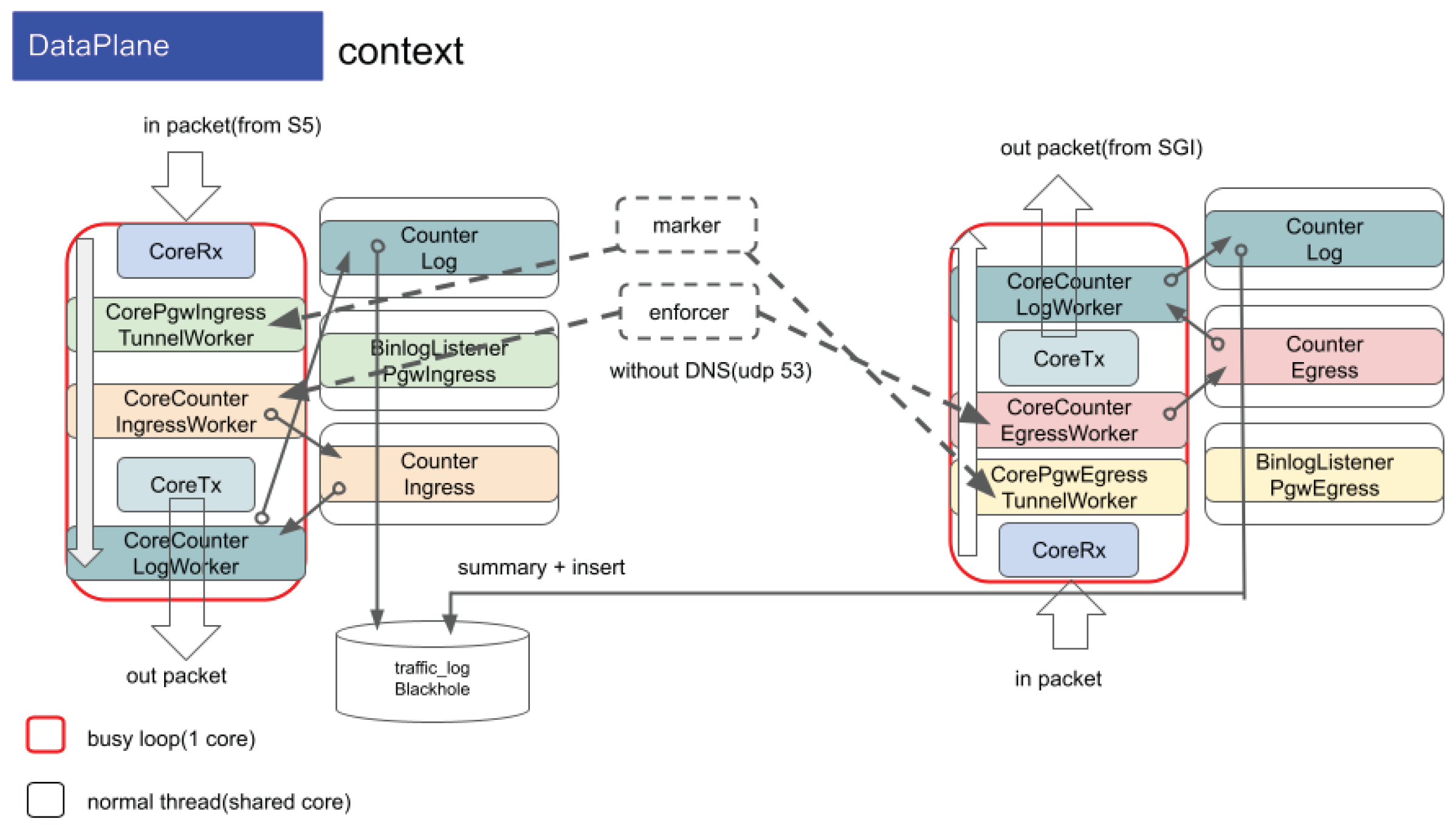

2.6. PGW-Dataplane

2.7. User Fairness

3. Accelerated Network Application

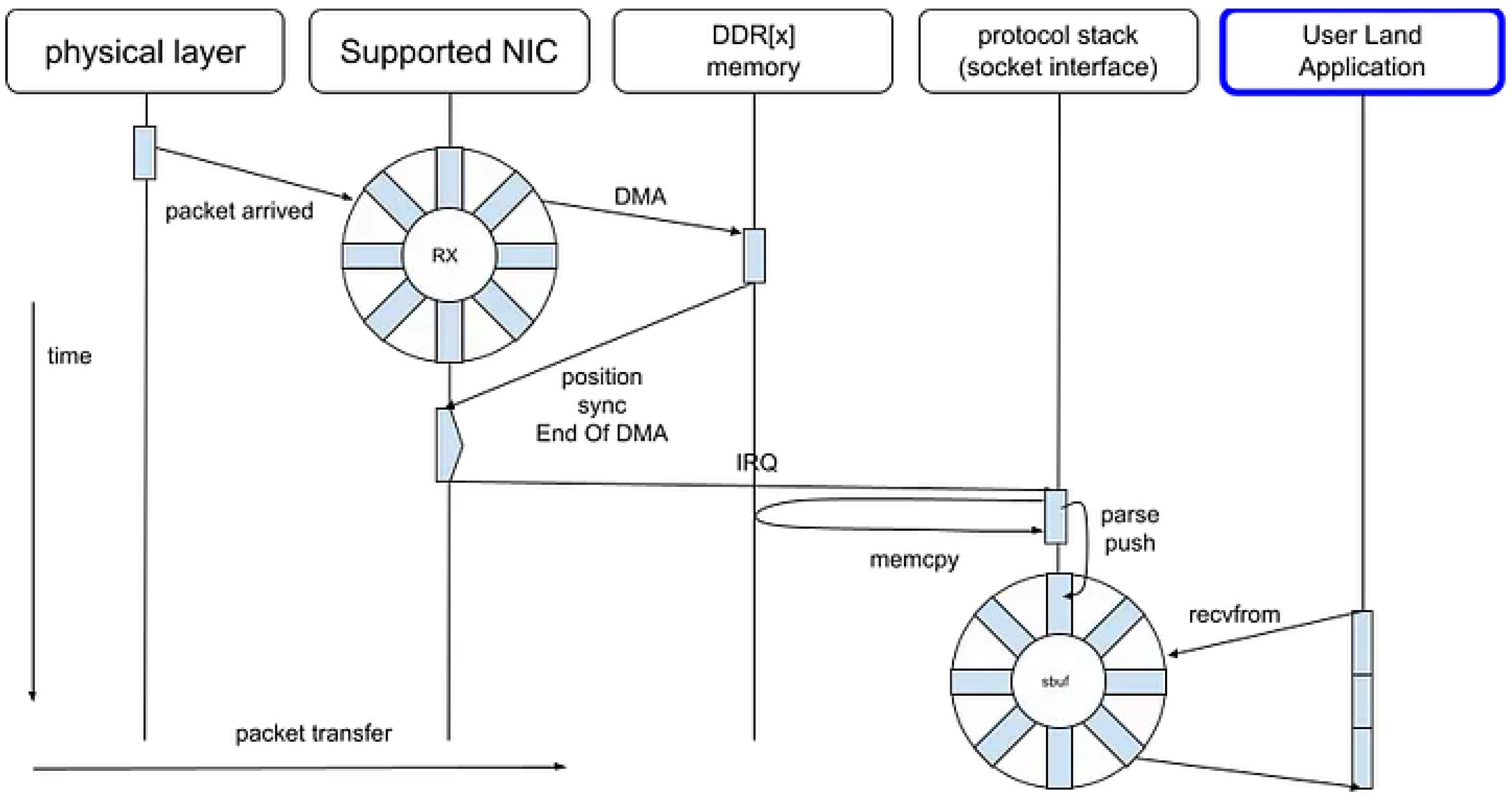

3.1. Legacy Socket

| Name | Description |
|---|---|
| libevent | Event-driven I/O abstraction based on callback-oriented socket operations. |
| socket(2) + select(2) | Legacy synchronous socket API using select(2) for multiplexing. |
| fread(3) | Buffered binary stream I/O abstraction layered on top of system calls. |
| FUdpSocketReceiver | UDP socket wrapper within Unreal Engine 4's networking subsystem. |
| IOCP | Windows-specific asynchronous I/O mechanism based on I/O Completion Ports. |
| Any runtime socket wrapper | Language-level abstractions of socket primitives (e.g., Python, Go, Rust). |
| Netty | High-performance asynchronous network framework for the Java runtime. |
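To make the legacy baseline concrete, the following is a minimal sketch of the socket(2) + select(2) pattern from the table, written with Python's standard `socket` and `select` modules. It is an illustration, not code from the system described here; the helper names `recv_one_datagram` and `demo` and the 2048-byte read size are assumptions. The point to notice is that receiving a single datagram costs at least two system calls, and the Introduction's table notes that a few system call invocations already exceed the 67.2 ns per-packet budget.

```python
import select
import socket


def recv_one_datagram(sock: socket.socket, timeout_s: float = 1.0):
    """Block in select(2) until sock is readable, then read one datagram."""
    # System call 1: wait for readiness (multiplexing step).
    readable, _, _ = select.select([sock], [], [], timeout_s)
    if not readable:
        return None  # timed out, nothing arrived
    # System call 2: copy the datagram from the kernel socket buffer.
    data, _addr = sock.recvfrom(2048)
    return data


def demo():
    """Send one UDP datagram over loopback and receive it via select(2)."""
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        rx.bind(("127.0.0.1", 0))  # port 0: let the kernel pick a free port
        tx.sendto(b"ping", rx.getsockname())
        return recv_one_datagram(rx)
    finally:
        tx.close()
        rx.close()


if __name__ == "__main__":
    print(demo())
```

Every wrapper listed in the table ultimately sits on top of the same kernel socket path, so the per-packet system call cost shown here applies to them as well, whatever the API shape.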

3.2. DPDK PMD

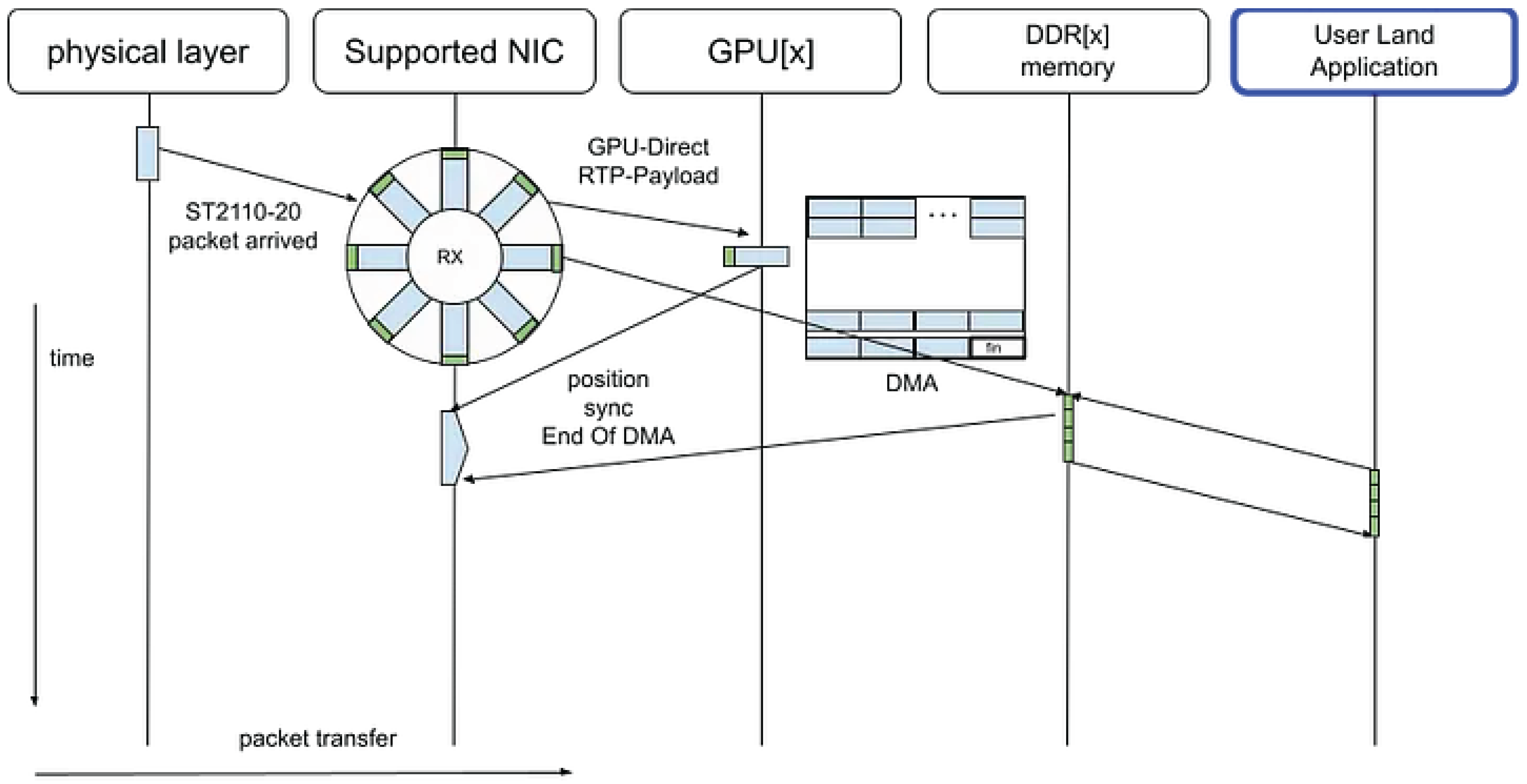

3.3. ConnectX GPU Direct

4. Related Work

4.1. netmap

4.2. fd.io/VPP (Vector Packet Processing)

4.3. NetVM

- (i) DMA from the NIC into shared huge pages

- (ii) lightweight descriptor rings between the hypervisor and each VM

- (iii) shared page references across trusted VMs

- System boundaries: NetVM optimizes inter-VM communication through shared huge pages and hypervisor switching. Our work instead optimizes a single-process, multi-threaded data path, eliminating context-switch and IOTLB overheads entirely.

- NUMA granularity: Although NetVM considers NUMA locality, our design formalizes it at the mbuf pool and core level, detailing explicit placement and synchronization strategies for deterministic latency.

- Pipeline composition: NetVM supports flexible VM service chaining; our pipeline focuses on mixed-latency flow handling, offset-variable header processing, and copy-minimized encapsulation paths.

- Target domain: NetVM is suitable for NFV and multi-tenant environments, while our system addresses deterministic, carrier-grade data-plane workloads (e.g., PGW) where predictable per-packet latency is paramount.
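Mechanisms (i) to (iii) listed earlier in this subsection share one idea: packet data stays in a shared memory region while only small descriptors move between producer and consumer. The sketch below illustrates that idea in a single process. It is not NetVM's implementation; the `DescriptorRing` class, the `pool` bytearray standing in for shared huge pages, and the 2048-byte buffer size are all hypothetical.

```python
class DescriptorRing:
    """Fixed-size single-producer/single-consumer ring of (buffer_index, length)."""

    def __init__(self, size: int):
        if size <= 0 or size & (size - 1):
            raise ValueError("size must be a power of two")
        self._slots = [None] * size
        self._mask = size - 1
        self._head = 0  # next slot to write, advanced only by the producer
        self._tail = 0  # next slot to read, advanced only by the consumer

    def enqueue(self, buf_index: int, length: int) -> bool:
        if self._head - self._tail == len(self._slots):
            return False  # ring full, caller must retry or drop
        self._slots[self._head & self._mask] = (buf_index, length)
        self._head += 1
        return True

    def dequeue(self):
        if self._head == self._tail:
            return None  # ring empty
        desc = self._slots[self._tail & self._mask]
        self._tail += 1
        return desc


BUF_SIZE = 2048                  # hypothetical per-packet buffer size
pool = bytearray(4 * BUF_SIZE)   # stands in for the shared huge-page region


def buffer_view(index: int, length: int) -> memoryview:
    """Return a zero-copy view of one packet buffer inside the shared pool."""
    start = index * BUF_SIZE
    return memoryview(pool)[start:start + length]


def demo():
    ring = DescriptorRing(4)
    payload = b"packet-0"
    pool[0:len(payload)] = payload      # role played by NIC DMA in (i)
    ring.enqueue(0, len(payload))       # only the descriptor travels, as in (ii)
    index, length = ring.dequeue()
    view = buffer_view(index, length)   # consumer references the data, as in (iii)
    return bytes(view), view.obj is pool


if __name__ == "__main__":
    print(demo())
```

A real implementation would additionally need memory barriers or atomic index updates between producer and consumer cores; the Python version omits these because it runs in a single thread.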

4.4. Barrelfish Operating System

5. Conclusion

References

- J. Heinanen and R. Guerin, "A Single Rate Three Color Marker (SRTCM)," RFC 2697, IETF Network Working Group, September 1999. Available at: https://datatracker.ietf.org/doc/html/rfc2697.

- Intel Corporation. DPDK: Data Plane Development Kit Programmers Guide. Intel Corporation, latest edition. https://www.dpdk.org/.

- Rizzo, L., Netmap: A Novel Framework for Fast Packet I/O. In USENIX Annual Technical Conference (USENIX ATC 2012), 2012. https://www.usenix.org/conference/atc12/technical-sessions/presentation/rizzo.

- Jesper Dangaard Brouer, “Network Stack Challenges at Increasing Speeds: The 100 Gbit/s Challenge,” LinuxCon North America, August 2015. Available at: http://events17.linuxfoundation.org/sites/events/files/slides/net_stack_challenges_100G_1.pdf.

- Cisco Systems, “FD.io / VPP (Vector Packet Processing),” 2016. Available: https://fd.

- J. Hwang, K. K. Ramakrishnan, and T. Wood, “NetVM: High Performance and Flexible Networking Using Virtualization on Commodity Platforms,” in Proc. USENIX NSDI, 2014. https://www.usenix.org/system/files/conference/nsdi14/nsdi14-paper-hwang.pdf.

- “Barrelfish: Exploring a Multicore OS,” Microsoft Research Blog, 7 July 2011. URL: https://www.microsoft.com/en-us/research/blog/barrelfish-exploring-multicore-os/.

- NVIDIA Corporation, NVIDIA Rivermax SDK Documentation. Available: https://developer.nvidia.com/networking/rivermax.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).