Submitted:

24 September 2025

Posted:

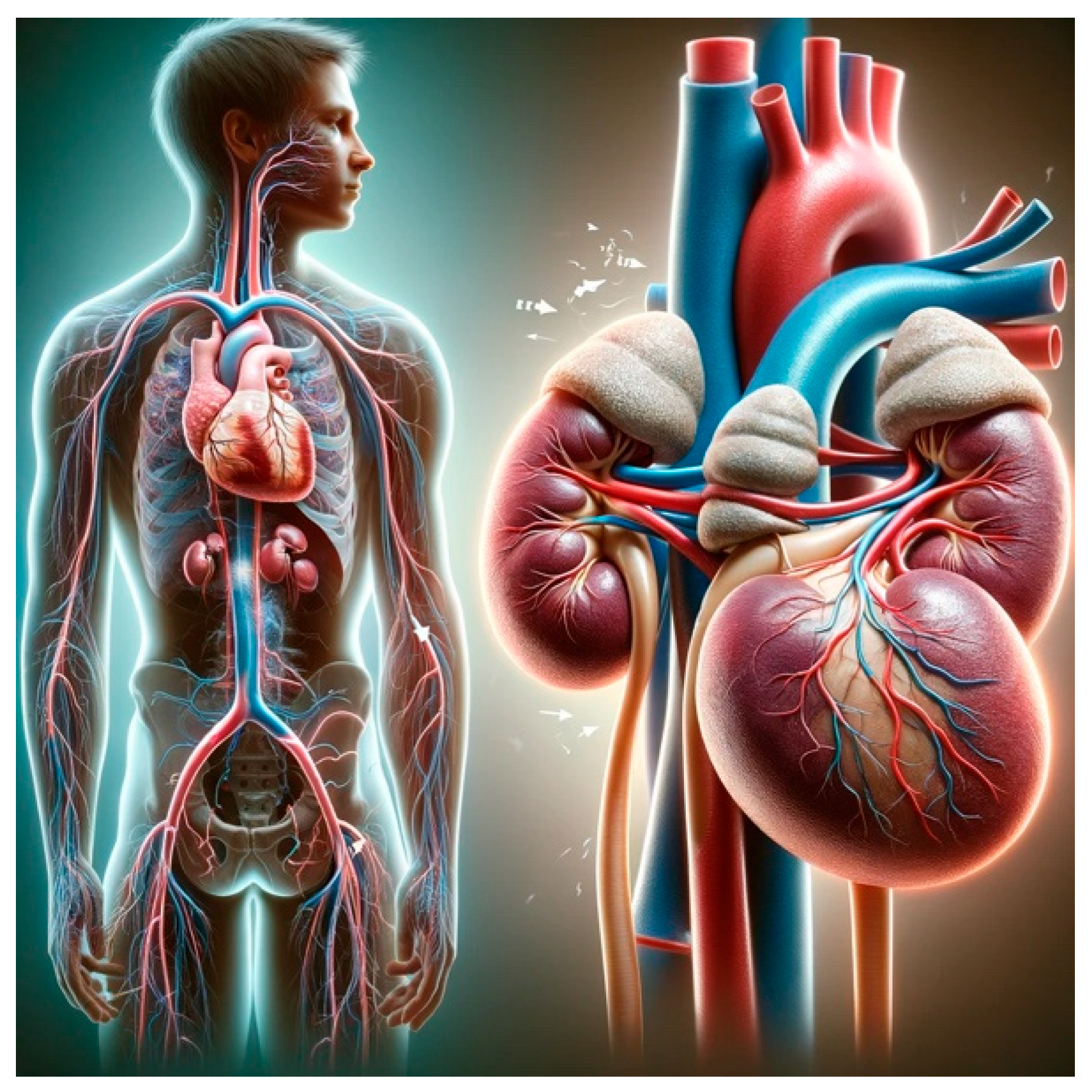

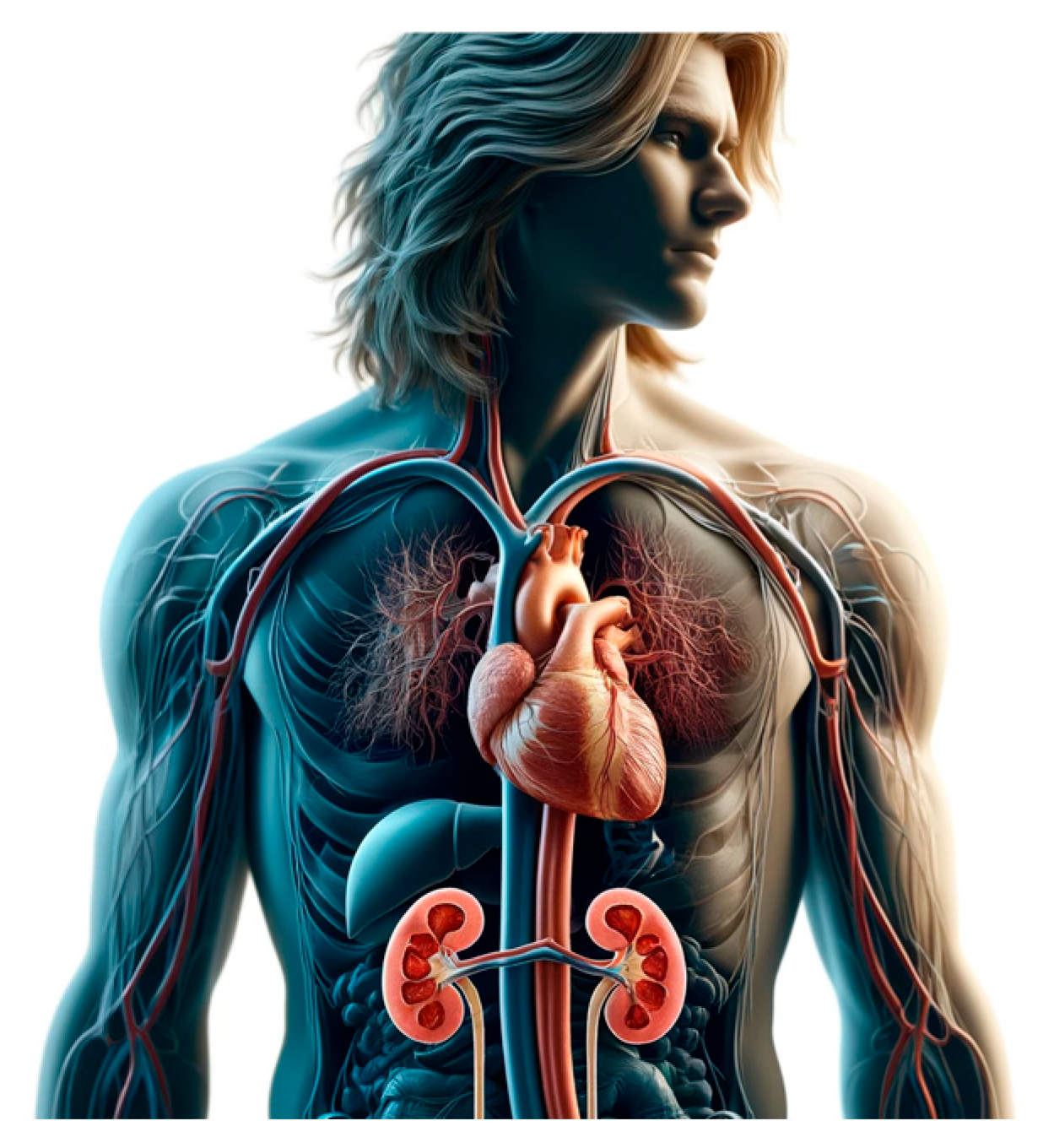

14 October 2025

Abstract

Keywords:

1. Introduction

2. ChatGPT for Generating Scientific Illustration

3. A Practical Example

4. The Black Box Biases (ChatGPT) While Generating Scientific Illustrations

- Representation Bias: When certain groups, phenomena, or attributes are underrepresented or overrepresented in the training data.

- Confirmation Bias: When the model tends to confirm pre-existing hypotheses or popular beliefs in the scientific community, rather than providing objective outputs.

- Algorithmic Bias: When the model’s architecture or training process systematically favors certain outcomes over others.
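Representation bias, as defined above, can be made measurable by comparing how often each group appears in a dataset with how often it would be expected to appear. The following is a minimal sketch under stated assumptions, not a method taken from the article: the function name `representation_ratios`, the sex labels, and the 50/50 reference shares are all hypothetical and chosen only for illustration.

```python
from collections import Counter


def representation_ratios(samples, reference):
    """Return observed share divided by reference share for each group.

    A ratio below 1 suggests under-representation and a ratio above 1
    suggests over-representation, relative to the assumed reference.
    """
    counts = Counter(samples)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("samples must not be empty")
    return {
        group: (counts.get(group, 0) / total) / share
        for group, share in reference.items()
    }


# Hypothetical data: sex labels attached to ten training images,
# compared against an assumed 50/50 reference population.
labels = ["male"] * 8 + ["female"] * 2
ratios = representation_ratios(labels, {"male": 0.5, "female": 0.5})
print(ratios)
```

In this toy example the ratio for `female` is well below 1, which would flag the group as under-represented relative to the assumed reference. The choice of reference population is itself a judgment call and should be stated explicitly.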

5. OpenAI vs. Human Generated Content

- Use diverse training data: ensure that the training data includes a wide range of perspectives and voices. This includes balancing the representation of genders in medical case studies, research, and literature used in training the AI.

- Use algorithms and methods to detect and mitigate biases in the training data and the model’s outputs. This can involve both automatic methods, such as bias detection algorithms, and manual reviews by diverse human teams.

- Revalidate and retrain the algorithms currently in use: continuously update the AI model with new data, research findings, and balanced perspectives to reflect the most current and comprehensive understanding of gender issues in medicine. Re-evaluate the model periodically to assess bias and the effectiveness of mitigation strategies.

- Adopt strict ethical guidelines and standards that specifically address bias in AI, with a focus on bias elimination, to sustain the impartiality of AI tools in medical contexts.

- Foster a culture of transparent feedback to refine AI models continually: build robust feedback mechanisms that allow users to report perceived biases or errors in the AI’s responses, and use this feedback to make improvements.

- Involve multidisciplinary teams, including ethicists, gender studies experts, medical professionals, and AI developers, in the design, training, and deployment processes of AI systems. This ensures a more holistic approach to recognizing and addressing potential biases.

- Provide training to educate users on the potential for bias and encourage them to use AI tools as one of many informational resources, not as the sole decision-maker, especially in critical fields like medicine.
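One of the recommendations above is to detect bias in the model's outputs with automatic methods. As a hedged illustration, the sketch below computes a demographic parity gap: the difference between the highest and lowest rate of a favorable outcome across groups. The outcome definition (for example, whether a reviewer judged a generated illustration to be accurate), the group labels `A` and `B`, and the function names are assumptions introduced here, not part of the original article.

```python
def positive_rate_by_group(outcomes, groups):
    """Return the share of favorable outcomes (truthy values) per group."""
    totals, positives = {}, {}
    for outcome, group in zip(outcomes, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if outcome else 0)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(outcomes, groups):
    """Return the largest difference in favorable-outcome rate between groups.

    A gap of 0 means every group receives favorable outcomes at the
    same rate; larger gaps suggest the outputs deserve manual review.
    """
    rates = positive_rate_by_group(outcomes, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical review results: 1 = output judged acceptable, 0 = not.
outcomes = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A"] * 4 + ["B"] * 4
rates = positive_rate_by_group(outcomes, groups)
gap = demographic_parity_gap(outcomes, groups)
print(rates, gap)
```

A metric like this only flags a disparity; deciding whether the disparity reflects bias still calls for the manual review by diverse human teams that the list recommends.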

| Category | Strategy | Description |
|---|---|---|
| Data Auditing | Diverse and Representative Data | Ensuring that training datasets are comprehensive and representative of all relevant variables and populations. |
| Data Auditing | Bias Detection | Implementing methods to detect and quantify biases in the training data. |
| Model Transparency | Explainability Tools | Using tools and techniques to make the model’s decision-making process more interpretable. |
| Model Transparency | Open Practices | Sharing model architectures, training methods, and datasets openly to allow for external scrutiny and validation. |
| Ethical AI Practices | Bias Mitigation Techniques | Employing techniques such as reweighting, adversarial debiasing, and fairness constraints during model training. |
| Ethical AI Practices | Continuous Monitoring | Regularly assessing and updating models to address and correct biases. |
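Of the bias mitigation techniques named in the table, reweighting is the simplest to illustrate. The sketch below uses one common formulation (often called reweighing), in which each training sample receives the weight P(group) × P(label) / P(group, label), so that group membership and label become statistically independent in the weighted data. This is an illustrative assumption about how reweighting could be applied, not the authors' procedure; the function name and the toy data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label).

    Samples from (group, label) combinations that are rarer than
    independence would predict receive weights above 1.
    """
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(group, groups, labels, weights):
    """Return the weighted share of positive labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Hypothetical data: group A is mostly labeled 1, group B mostly 0.
groups = ["A"] * 4 + ["B"] * 4
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate("A", groups, labels, weights)
rate_b = weighted_positive_rate("B", groups, labels, weights)
print(weights, rate_a, rate_b)
```

The resulting weights would typically be passed to a training routine that accepts per-sample weights. Adversarial debiasing and fairness constraints, also named in the table, modify the training objective itself and are not shown here.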

6. Conclusions

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

List of Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| ChatGPT | Chat Generative Pre-trained Transformer |
| CCM | Critical Care Medicine |
| ICU | Intensive Care Unit |
| ML | Machine Learning |
| NLP | Natural Language Processing |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).