Submitted:

13 October 2025

Posted:

14 October 2025

Abstract

Keywords:

1. Introduction

1.1. Definition of Convolution and Its Importance in CNNs

1.2. Mathematical Foundations of Convolution

1.2.1. The Simple Intuition

1.2.2. Historical Development and Mathematical Formalization

1.2.3. Formal Mathematical Definition

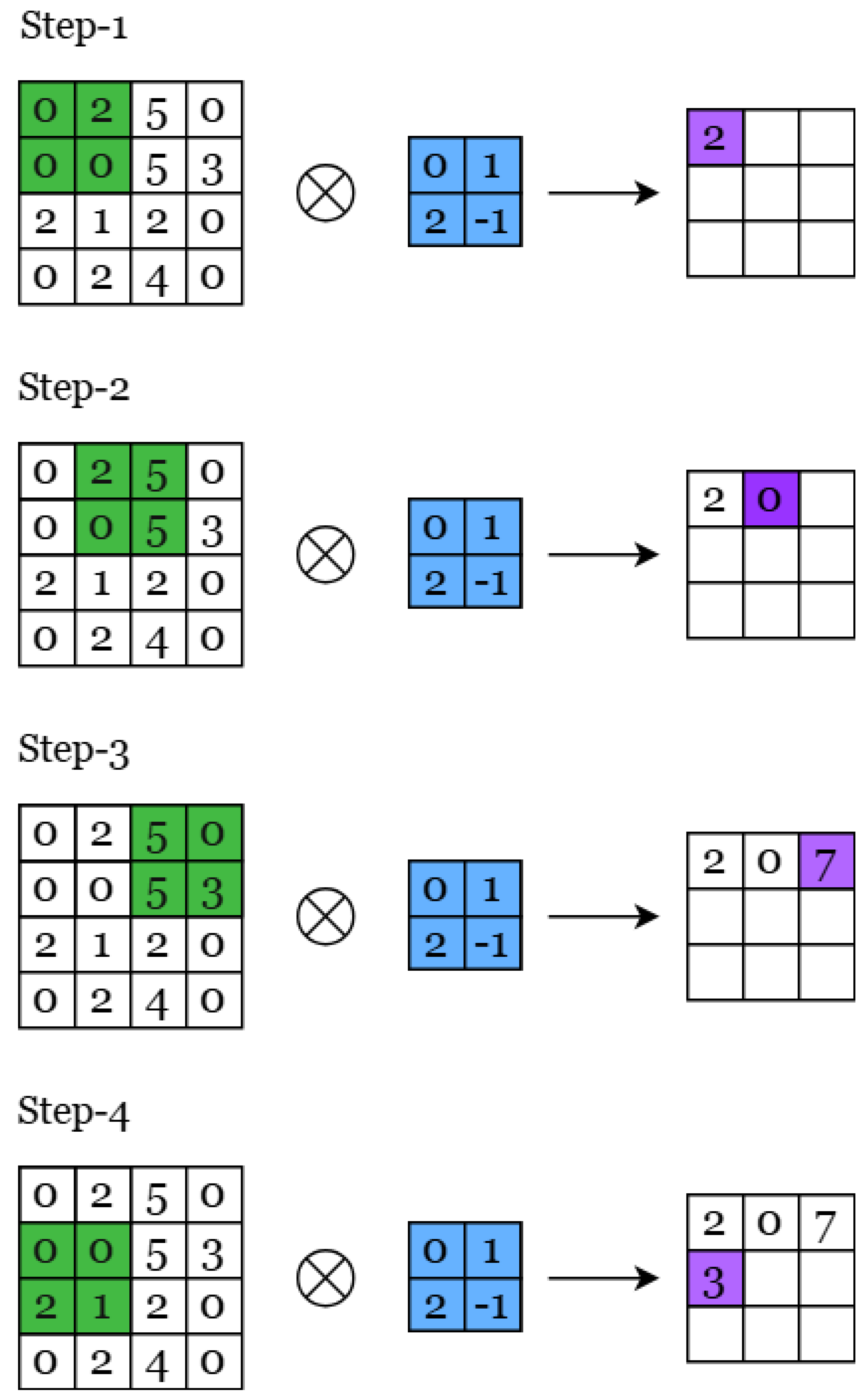

1.2.4. 2D Convolution for Image Processing

1.2.5. Tensor Formulation for Multi-Channel Images

1.3. Biological Inspiration: Neurons, Receptive Fields, and Feature Extraction

2. Fundamentals of Convolutions

2.1. What Convolution Actually Does in Neural Networks

2.2. Core Properties That Make Convolution Powerful

2.2.1. Translation Invariance: The Foundation of Robust Pattern Recognition

2.2.2. Weight Sharing: Computational Efficiency Through Parameter Reuse

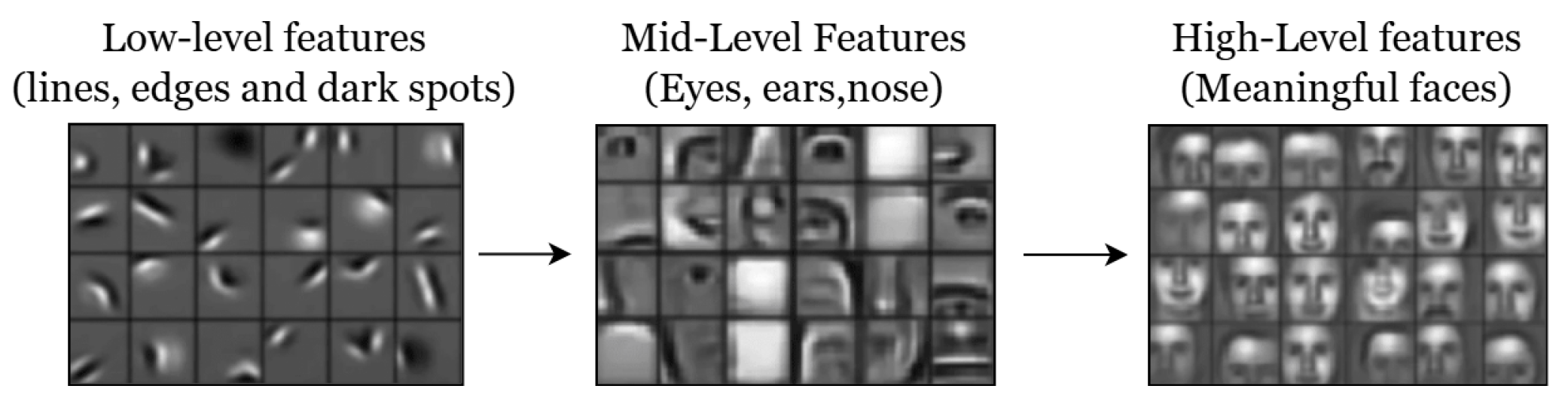

2.2.3. Hierarchical Feature Learning: From Edges to Objects

2.3. Dimensional Variants: Adapting Convolution to Data Structure

2.3.1. 1D Convolutions for Sequential Data Processing

2.3.2. 2D Convolutions: The Foundation of Computer Vision

2.3.3. 3D Convolutions: Spatiotemporal and Volumetric Processing

2.3.4. Transposed Convolutions: Learnable Upsampling Operations

2.3.5. Complex-Valued Convolutions: Phase-Aware Signal Processing

2.4. Activation Functions: Enabling Non-Linear Feature Composition

2.4.1. Sigmoid Function

2.4.2. Hyperbolic Tangent (Tanh)

2.4.3. Rectified Linear Unit (ReLU)

2.4.4. Leaky ReLU

2.4.5. Noisy ReLU

2.4.6. Parametric Linear Units (PReLU)

2.5. Evolution Beyond Hand-Crafted Features

2.6. Chapter Summary

3. Types of Convolutions in CNNs

3.1. Standard Convolutions: The Foundation

3.2. Expanding Receptive Fields: Dilated Convolutions

3.3. Computational Efficiency: Depthwise Separable Convolutions

3.4. Parallel Processing: Grouped Convolutions

3.5. Channel Transformation: Pointwise Convolutions

3.6. Spatial Downsampling: Strided Convolutions

3.7. Spatial Upsampling: Transposed Convolutions

3.8. Convolution Types: A Unified Perspective

4. Evolution of CNN Architectures

4.1. The Foundation: LeNet-5 and Early CNN Development

4.2. The Revolution: AlexNet and the Deep Learning Breakthrough

4.2.1. Why AlexNet Achieved the Breakthrough

4.2.2. Architecture and Design Principles

4.2.3. Key Innovations

4.2.4. Impact on Future Architectures

4.3. Systematic Depth: VGG and the Power of Simplicity

4.4. Multi-Scale Processing: GoogLeNet and Inception Modules

4.5. Solving the Depth Problem: ResNet and Residual Learning

4.5.1. The Transformative Impact

4.6. Maximum Connectivity: DenseNet and Feature Reuse

4.7. Mobile Efficiency: MobileNet and Resource-Constrained Architectures

4.7.1. Evolutionary Improvements

4.8. Architectural Evolution Summary

5. Advanced Convolutional Techniques

5.1. Attention Mechanisms in CNNs

5.2. Self-supervised and Contrastive Learning with CNNs

6. GANs and CNN-Based Generative Models

6.1. How CNNs are Used in GANs

6.2. DCGAN and Deep Convolutional Architectures

6.3. StyleGAN and High-resolution Image Generation

7. CNNs Beyond Image Processing

7.1. CNNs in Audio Processing

7.2. CNNs in Video Analysis and Generation

7.3. CNNs in Biomedical Imaging and Scientific Applications

7.4. CNNs in NLP

7.5. CNNs in Finance

8. Discussion

8.1. Limitations and Challenges of CNNs

8.2. Comparison with Alternative Architectures

9. Conclusion

9.1. Future Trends in CNN Architectures

9.2. The Role of CNNs in Modern AI Research

Acknowledgments

References

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2016; pp. 770–778.

- Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-Level Classification of Skin Cancer with Deep Neural Networks. Nature 2017, 542, 115–118.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep Learning. Nature 2015, 521, 436–444.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. In Adv. Neural Inf. Process. Syst., 2012; pp. 1097–1105.

- Tan, M.; Le, Q.V. EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. In Proceedings of the Int. Conf. Mach. Learn., 2019; pp. 6105–6114.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929. https://arxiv.org/abs/2010.11929

- Euler, L. Institutionum Calculi Integralis; Impensis Academiae Imperialis Scientiarum, 1768.

- Laplace, P.S. Mémoire sur les probabilités. Mémoires de l’Académie royale des sciences de Paris 1781.

- Fourier, J. Théorie analytique de la chaleur; Firmin Didot, 1822.

- Cajori, F. A History of Mathematical Notations; Open Court Publishing Company, 1929.

- Dumoulin, V.; Visin, F. A Guide to Convolution Arithmetic for Deep Learning. arXiv 2016, arXiv:1603.07285. https://arxiv.org/abs/1603.07285

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Proceedings of Med. Image Comput. Comput. Assist. Interv., 2015; pp. 234–241.

- Hubel, D.H.; Wiesel, T.N. Receptive Fields, Binocular Interaction and Functional Architecture in the Cat’s Visual Cortex. J. Physiol. 1962, 160, 106–154.

- Fukushima, K. Neocognitron: A Self-organizing Neural Network Model for a Mechanism of Pattern Recognition Unaffected by Shift in Position. Biological Cybernetics 1980, 36, 193–202.

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based Learning Applied to Document Recognition. Proceedings of the IEEE 1998, 86, 2278–2324.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. arXiv 2014, arXiv:1409.1556. https://arxiv.org/abs/1409.1556

- Yamins, D.L.K.; Hong, H.; Cadieu, C.F.; Solomon, E.A.; Seibert, D.; DiCarlo, J.J. Performance-Optimized Hierarchical Models Predict Neural Responses in Higher Visual Cortex. Proc. Natl. Acad. Sci. U.S.A. 2014, 111, 8619–8624.

- Yosinski, J.; Clune, J.; Bengio, Y.; Lipson, H. How Transferable are Features in Deep Neural Networks? In Adv. Neural Inf. Process. Syst., 2014; pp. 3320–3328.

- Zeiler, M.D.; Fergus, R. Visualizing and Understanding Convolutional Networks. In Proceedings of the Eur. Conf. Comput. Vis.; Springer, 2014; pp. 818–833.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization. In Proceedings of the IEEE Int. Conf. Comput. Vis., 2017; pp. 618–626.

- Goodfellow, I.J.; Shlens, J.; Szegedy, C. Explaining and Harnessing Adversarial Examples. arXiv 2014, arXiv:1412.6572. https://arxiv.org/abs/1412.6572

- Xie, S.; Girshick, R.; Dollár, P.; Tu, Z.; He, K. Aggregated Residual Transformations for Deep Neural Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2017; pp. 1492–1500.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:1704.04861. https://arxiv.org/abs/1704.04861

- Lavin, A.; Gray, S. Fast Algorithms for Convolutional Neural Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2016; pp. 4013–4021.

- Trabelsi, C.; Bilaniuk, O.; Zhang, Y.; Serdyuk, D.; Subramanian, S.; Santos, J.F.; Mehri, S.; Rostamzadeh, N.; Bengio, Y.; Pal, C.J. Deep Complex Networks. In Proceedings of the Int. Conf. Learn. Represent., 2018.

- Yu, F.; Koltun, V. Multi-Scale Context Aggregation by Dilated Convolutions. arXiv 2015, arXiv:1511.07122. https://arxiv.org/abs/1511.07122

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848.

- Chollet, F. Xception: Deep Learning with Depthwise Separable Convolutions. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2017; pp. 1251–1258.

- Lin, M.; Chen, Q.; Yan, S. Network in Network. arXiv 2013, arXiv:1312.4400. https://arxiv.org/abs/1312.4400

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going Deeper with Convolutions. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2015; pp. 1–9.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2018; pp. 4510–4520.

- Springenberg, J.T.; Dosovitskiy, A.; Brox, T.; Riedmiller, M. Striving for Simplicity: The All Convolutional Net. arXiv 2014, arXiv:1412.6806. https://arxiv.org/abs/1412.6806

- Zeiler, M.D.; Krishnan, D.; Taylor, G.W.; Fergus, R. Deconvolutional Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2010; pp. 2528–2535.

- Radford, A.; Metz, L.; Chintala, S. Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks. arXiv 2015, arXiv:1511.06434. https://arxiv.org/abs/1511.06434

- Nair, V.; Hinton, G.E. Rectified Linear Units Improve Restricted Boltzmann Machines. In Proceedings of the Int. Conf. Mach. Learn., 2010; pp. 807–814.

- Huang, G.; Liu, Z.; van der Maaten, L.; Weinberger, K.Q. Densely Connected Convolutional Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2017; pp. 4700–4708.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-Excitation Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2018; pp. 7132–7141.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional Block Attention Module. In Proceedings of the Eur. Conf. Comput. Vis., 2018; pp. 3–19.

- Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-Local Neural Networks. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2018; pp. 7794–7803.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A Simple Framework for Contrastive Learning of Visual Representations. In Proceedings of the Int. Conf. Mach. Learn., 2020; pp. 1597–1607.

- He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum Contrast for Unsupervised Visual Representation Learning. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2020; pp. 9729–9738.

- Grill, J.B.; Strub, F.; Altché, F.; Tallec, C.; Richemond, P.H.; Buchatskaya, E.; Doersch, C.; Pires, B.A.; Guo, Z.D.; Azar, M.G.; et al. Bootstrap Your Own Latent: A New Approach to Self-Supervised Learning. arXiv 2020, arXiv:2006.07733. https://arxiv.org/abs/2006.07733

- Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative Adversarial Nets. In Adv. Neural Inf. Process. Syst., 2014; pp. 2672–2680.

- Karras, T.; Laine, S.; Aittala, M.; Hellsten, J.; Lehtinen, J.; Aila, T. Analyzing and Improving the Image Quality of StyleGAN. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2020; pp. 8110–8119.

- Ji, S.; Xu, W.; Yang, M.; Yu, K. 3D Convolutional Neural Networks for Human Action Recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 221–231.

- Tran, D.; Bourdev, L.; Fergus, R.; Torresani, L.; Paluri, M. Learning Spatiotemporal Features with 3D Convolutional Networks. In Proceedings of the IEEE Int. Conf. Comput. Vis., 2015; pp. 4489–4497.

- Kim, Y. Convolutional Neural Networks for Sentence Classification. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), 2014; pp. 1746–1751.

- Tsantekidis, A.; Passalis, N.; Tefas, A.; Kanniainen, J.; Gabbouj, M.; Iosifidis, A. Forecasting Stock Prices from the Limit Order Book Using Convolutional Neural Networks. In Proceedings of the IEEE 19th Conf. Business Informatics (CBI), Vol. 1, 2017; pp. 7–12.

- Kipf, T.N.; Welling, M. Semi-Supervised Classification with Graph Convolutional Networks. arXiv 2016, arXiv:1609.02907. https://arxiv.org/abs/1609.02907

- Wu, H.; Xiao, B.; Codella, N.; Liu, M.; Dai, X.; Yuan, L.; Zhang, L. CvT: Introducing Convolutions to Vision Transformers. arXiv 2021, arXiv:2103.15808. https://arxiv.org/abs/2103.15808

- Li, Y.; Cheng, K.; Liu, Y.; Wang, Y.; Zhang, Q.; Cheng, Y.; He, Z. Conformer-Based Self-Supervised Learning for Non-Speech Audio Tasks. arXiv 2021, arXiv:2110.07313. https://arxiv.org/abs/2110.07313

- Wu, B.; Wan, A.; Yue, X.; Jin, P.; Zhao, S.; Golmant, N.; Gholaminejad, A.; Gonzalez, J.; Keutzer, K. Shift: A Zero FLOP, Zero Parameter Alternative to Spatial Convolutions. In Proceedings of the IEEE Conf. Comput. Vis. Pattern Recognit., 2018; pp. 9127–9135.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).