4.1. Datasets and Evaluation Metrics

Datasets: We conduct a comprehensive evaluation of the proposed method on both synthetic and real-world image datasets. Realistic Single Image Dehazing (RESIDE) [35] is a widely used benchmark dataset, consisting of the Indoor Training Set (ITS), the Outdoor Training Set (OTS), the Synthetic Objective Testing Set (SOTS), the Real-world Task-driven Testing Set (RTTS), and the Hybrid Subjective Testing Set (HSTS). In the synthetic experiments, we train our model on ITS and OTS, and evaluate it on SOTS-indoor and SOTS-outdoor, respectively. For real-world scenarios, we adopt the Dense-Haze [36], NH-Haze [37], and RTTS datasets to assess the performance of our method on real hazy images. Detailed settings are summarized in Table 1.

Evaluation Metrics: For dehazing performance evaluation, we employ a total of seven widely used image quality assessment metrics, categorized into two types. The full-reference metrics include Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM) [38], and CIEDE2000 color difference [39]. The no-reference metrics consist of Fog Aware Density Evaluation (FADE) [40], Natural Image Quality Evaluator (NIQE) [41], Perception-based Image Quality Evaluator (PIQE) [42], and Blind/Referenceless Image Spatial Quality Evaluator (BRISQUE) [43]. These metrics are widely adopted in the field of computer vision to measure visual quality and perceptual consistency of images. For network performance evaluation, we report the number of model parameters (Param, in millions), computational complexity (MACs, in billions of multiply-accumulate operations), and inference latency (Latency, in milliseconds). To ensure fairness, all experiments are conducted on images with a resolution of 250×250.
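As a point of reference for the full-reference metrics, PSNR follows directly from the mean squared error between the restored image and its ground truth. The snippet below is a minimal sketch using only the Python standard library; the function name `psnr`, the flat-list image representation, and the sample pixel values are illustrative assumptions, not the evaluation code used in the experiments.

```python
import math


def psnr(reference, restored, max_value=255.0):
    """PSNR in dB between two images given as flat sequences of pixel values."""
    if len(reference) != len(restored) or len(reference) == 0:
        raise ValueError("images must be non-empty and of equal size")
    # Mean squared error over all pixel values.
    mse = sum((r - d) ** 2 for r, d in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical 8-pixel example (values chosen only for illustration).
clear = [52, 55, 61, 59, 79, 61, 76, 61]
dehazed = [54, 55, 60, 59, 77, 63, 76, 60]
print(f"PSNR: {psnr(clear, dehazed):.2f} dB")
```

Higher PSNR indicates a smaller pixel-wise error; SSIM and CIEDE2000 complement it by measuring structural and color differences, respectively.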

4.2. Implementation Details

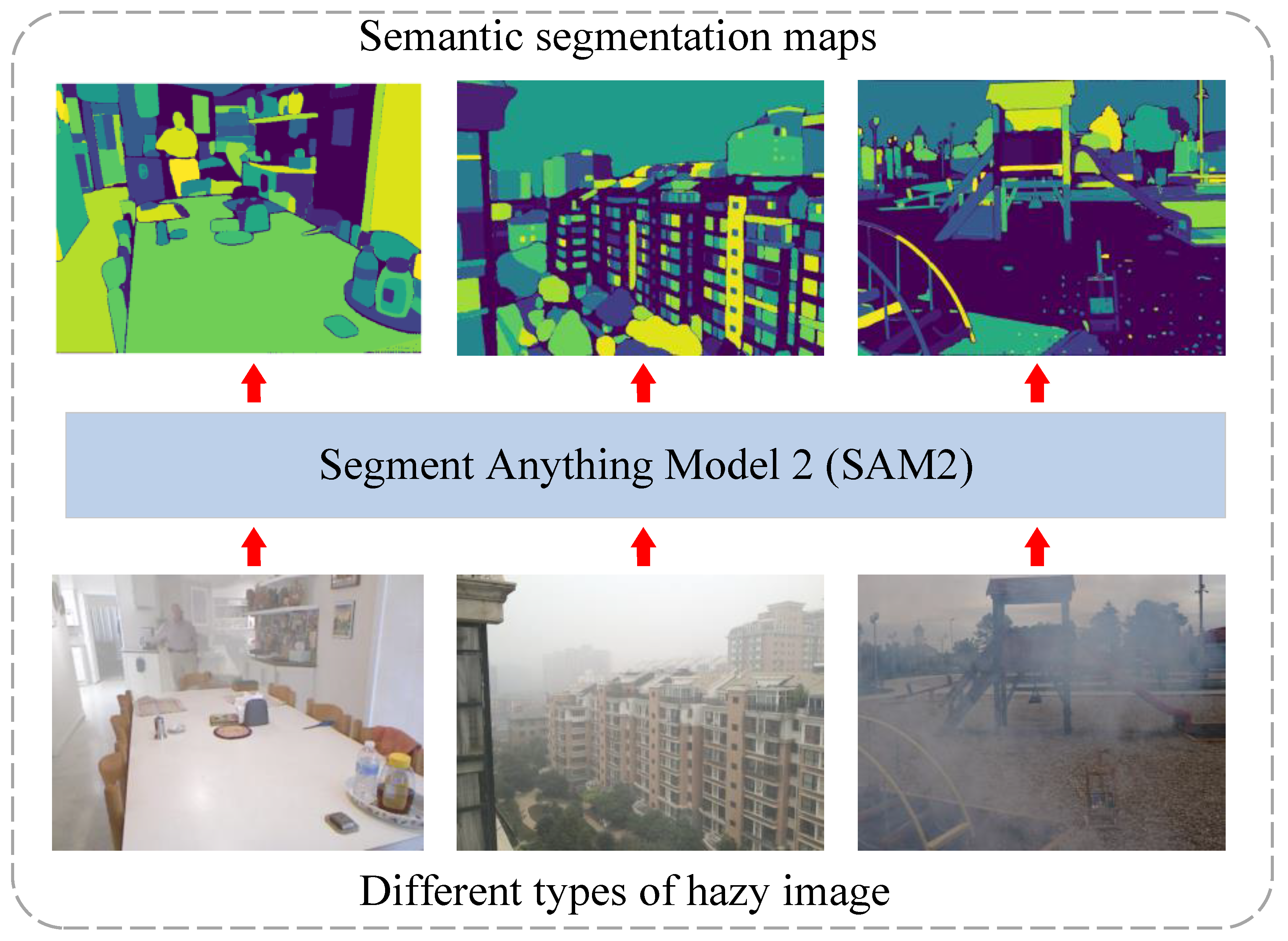

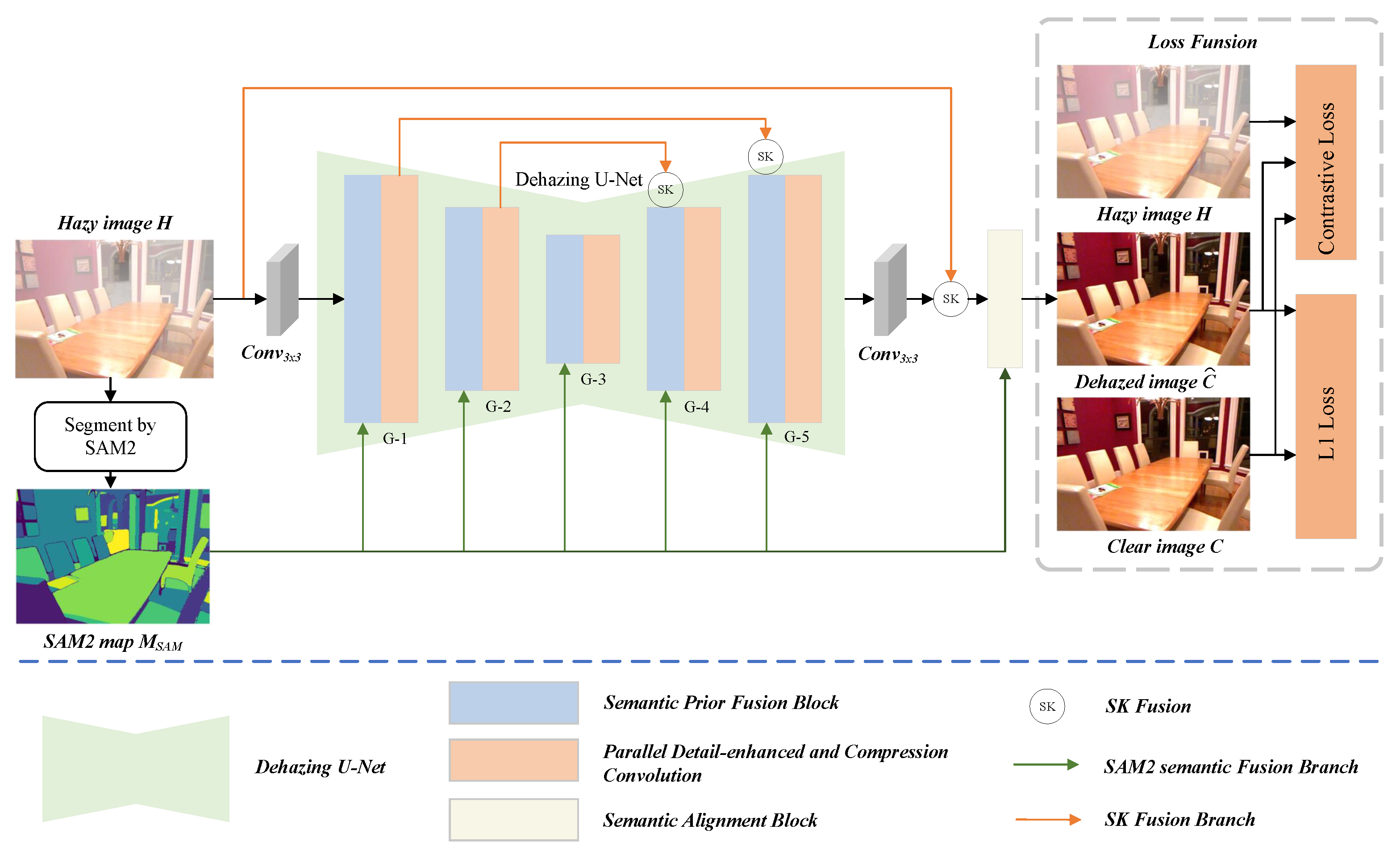

We use the pretrained SAM2 model for segmentation. Compared with the SAM segmentation model, SAM2 demonstrates significant improvements in segmentation accuracy, speed, interaction efficiency, and applicability across various scenarios. In particular, it shows stronger information processing capabilities and generalization in zero-shot segmentation tasks. All experiments are conducted on an NVIDIA RTX A6000 GPU (48 GB VRAM), and the model is implemented in PyTorch 2.4.1. During training, we optimize the network using the AdamW optimizer, with decay parameters set to

and

. The initial learning rate is set to

, and it is gradually decreased to

using a cosine annealing strategy to ensure training stability. During training, input images are randomly cropped into 256 × 256 patches. We design three variants of SAM2-Dehaze, named S (Small), B (Basic), and L (Large). Table 2 presents detailed configurations of each variant, with two key columns indicating the number of G-blocks in the network and their corresponding embedding dimensions.
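The cosine annealing schedule and the paired random cropping described above can be sketched as follows. This is an illustrative standard-library sketch, not the training code: the function names, the nested-list image layout, and in particular the `LR_MAX` and `LR_MIN` values are placeholders chosen for the example and are not the learning rates used in the experiments.

```python
import math
import random


def cosine_annealed_lr(step, total_steps, lr_max, lr_min):
    """Learning rate at `step`, decaying from lr_max to lr_min along a half cosine."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


def paired_random_crop(hazy, clear, size, rng=random):
    """Crop the same size x size window from a hazy image and its clear target.

    Images are nested lists indexed as image[row][col].
    """
    height, width = len(hazy), len(hazy[0])
    if height < size or width < size:
        raise ValueError("image is smaller than the crop size")
    top = rng.randint(0, height - size)
    left = rng.randint(0, width - size)

    def crop(image):
        return [row[left:left + size] for row in image[top:top + size]]

    return crop(hazy), crop(clear)


# Placeholder values for illustration only (NOT the values used in the paper).
LR_MAX, LR_MIN, TOTAL_STEPS = 1e-3, 1e-6, 1000
for step in (0, 250, 500, 750, 1000):
    print(step, cosine_annealed_lr(step, TOTAL_STEPS, LR_MAX, LR_MIN))
```

Sharing one crop window between the hazy input and its clear target keeps the training pair pixel-aligned, which the full-reference losses require.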

4.3. Comparison with State-of-the-Arts

In this section, we compare our SAM2-Dehaze with eleven dehazing methods, including DCP [12], MSCNN [16], AOD-Net [17], GridDehazeNet [20], FFA-Net [7], AECR-Net [21], Dehamer [44], MIT-Net [45], RIDCP [46], C2PNet [47], and DEA-Net [23], on the synthetic haze datasets SOTS-indoor and SOTS-outdoor. For real-world datasets, we compare seven methods (DCP, AOD-Net, FFA-Net, Dehamer, MIT-Net, RIDCP, MixDehazeNet [48]) on the Dense-Haze and NH-Haze datasets. For the RTTS dataset, we compare our method with seven existing approaches, including GridDehazeNet, FFA-Net, Dehamer, C2PNet, MIT-Net, DEA-Net, and IPC-Dehaze [49]. On the synthetic datasets, we report results for three variants of SAM2-Dehaze (-S, -B, -L), while on the real-world datasets, only the SAM2-Dehaze-L variant is evaluated. To ensure a fair comparison, for other methods, we either use their officially released code or publicly reported evaluation results. If these are not available, we retrain the models using the same training datasets.

Results on Synthetic Datasets: Table 3 presents the quantitative evaluation results of our SAM2-Dehaze and other state-of-the-art methods on the SOTS dataset. As shown, our SAM2-Dehaze-L achieves the best performance on the SOTS-indoor dataset, with a PSNR of 42.83 dB and an SSIM of 0.997, outperforming all other methods. Even the lightweight SAM2-Dehaze-S ranks second when our other variants are set aside, with a PSNR of 41.41 dB and an SSIM of 0.996. On the SOTS-outdoor dataset, our method does not achieve the best result but still ranks in the upper-middle tier overall. The best-performing variant, SAM2-Dehaze-L, reaches a PSNR of 36.22 dB and an SSIM of 0.989. In addition, as shown in Table 4, we report Params, MACs, and Latency as the main metrics for computational efficiency. Compared to recent advanced methods, our SAM2-Dehaze achieves a balanced trade-off between accuracy and efficiency. Given the performance improvements, the slight increase in computational cost is acceptable. It is worth noting that Params, MACs, and Latency are evaluated based on 256 × 256 RGB input images.
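To make the Params and MACs columns concrete, the sketch below counts both quantities for a single standard 2D convolution, which is how such totals are typically accumulated layer by layer. The layer shape (3 input channels, 24 output channels, 3 × 3 kernel, stride 1 with same padding) is a hypothetical example and does not describe any layer of SAM2-Dehaze.

```python
def conv2d_params(c_in, c_out, kernel, bias=True):
    """Number of learnable parameters of a standard 2D convolution."""
    return c_out * (c_in * kernel * kernel + (1 if bias else 0))


def conv2d_macs(c_in, c_out, kernel, out_h, out_w):
    """Multiply-accumulate operations of a standard 2D convolution.

    Each output element needs c_in * kernel * kernel multiply-accumulates.
    """
    return c_in * kernel * kernel * c_out * out_h * out_w


# Hypothetical first layer applied to a 256 x 256 RGB input (illustration only).
params = conv2d_params(c_in=3, c_out=24, kernel=3)
macs = conv2d_macs(c_in=3, c_out=24, kernel=3, out_h=256, out_w=256)
print(f"Params: {params / 1e6:.6f} M, MACs: {macs / 1e9:.6f} G")
```

Because MACs scale with the output resolution while Params do not, efficiency figures are only comparable when all methods are measured at the same input size.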

Figure 6 and Figure 7 show the visual comparison results of our proposed method against several state-of-the-art dehazing methods on the synthetic datasets SOTS-indoor and SOTS-outdoor. To evaluate each method more comprehensively, we complement the subjective visual analysis with the RGB histogram distribution of the images, which allows color consistency to be assessed quantitatively. On the SOTS-indoor dataset, traditional methods such as DCP and RIDCP perform poorly, with noticeable color distortions and residual artifacts in the restored images. AOD-Net also fails to remove most of the haze effectively. Although GridDehazeNet, FFA-Net, Dehamer, and MIT-Net produce visually plausible results, the histogram analysis shows that our method achieves a distribution in the RGB channels that is more consistent with the ground-truth clear images, indicating its superiority in both color restoration and detail preservation. For the SOTS-outdoor scenario, DCP often introduces artifacts in sky regions, and although AOD-Net retains some structural information, noticeable haze still remains. It is worth noting that SOTS-outdoor is a synthetic dataset generated using the atmospheric scattering model, and some of the so-called "haze-free" images may still contain traces of real haze, which can introduce noise into model training and evaluation. While methods like GridDehazeNet, FFA-Net, Dehamer, MIT-Net, and RIDCP perform well on synthetic haze, they still exhibit limitations in handling residual real haze embedded in images. In contrast, our proposed method not only effectively removes synthetic haze but also demonstrates greater robustness and adaptability in dealing with residual real haze, achieving superior performance in both visual quality and detail preservation.
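The histogram-based color check can be illustrated with a small sketch. The text does not specify how histogram agreement is scored, so the histogram-intersection measure below is an assumption made for illustration, as are the function names, the 16-bin setting, and the representation of an image as a list of `(r, g, b)` tuples.

```python
def channel_histogram(values, bins=16, max_value=255):
    """Normalised histogram of one colour channel given as a flat list of ints."""
    counts = [0] * bins
    for v in values:
        index = min(v * bins // (max_value + 1), bins - 1)
        counts[index] += 1
    total = len(values)
    return [c / total for c in counts]


def histogram_intersection(h1, h2):
    """Overlap of two normalised histograms: 1.0 is identical, 0.0 is disjoint."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def rgb_histogram_similarity(image_a, image_b, bins=16):
    """Mean histogram intersection over the R, G and B channels."""
    scores = []
    for channel in range(3):
        hist_a = channel_histogram([p[channel] for p in image_a], bins)
        hist_b = channel_histogram([p[channel] for p in image_b], bins)
        scores.append(histogram_intersection(hist_a, hist_b))
    return sum(scores) / 3


# Hypothetical pixels: a ground-truth patch and a slightly colour-shifted result.
ground_truth = [(10, 120, 200), (40, 130, 210), (90, 140, 220), (200, 150, 230)]
restored = [(12, 118, 205), (45, 131, 215), (95, 150, 225), (180, 149, 228)]
print(rgb_histogram_similarity(ground_truth, restored))
```

A score close to 1 means the restored image distributes its intensities across the RGB channels much like the ground truth, which is the property the histogram plots are used to inspect.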

Results on Real-World Datasets: Table 5 shows the quantitative evaluation results of our SAM2-Dehaze-L compared with other state-of-the-art methods on the Dense-Haze and NH-Haze datasets. As observed, our method achieves competitive results on both datasets. On the Dense-Haze dataset, our method achieves the best performance with 20.61 dB in PSNR, 0.725 in SSIM, and 8.5909 in CIEDE2000. Compared with the baseline MixDehazeNet-L, our method improves PSNR by 4.71 dB, SSIM by 0.146, and CIEDE2000 by 28.99%. On the NH-Haze dataset, our method also achieves the best results, with a PSNR of 22.02 dB, an SSIM of 0.831, and a CIEDE2000 of 8.5108. Compared with the baseline, the improvements are 1.01 dB in PSNR, 0.004 in SSIM, and 10.53% in CIEDE2000. It is worth noting that the performance gain on the NH-Haze dataset is relatively smaller. We attribute this to the fact that the Dense-Haze dataset contains a higher concentration of haze and more severe image degradation, which poses greater challenges for traditional methods in feature extraction. In contrast, our method leverages semantic prior information from SAM2, which significantly enhances the network’s structural perception and semantic representation capabilities under dense haze conditions, thereby leading to more substantial performance improvements.
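The reported gains mix absolute differences (PSNR, SSIM) with a relative reduction (CIEDE2000, where lower is better). The sketch below shows how the two kinds of figures relate, assuming the percentage is computed as (baseline − ours) / baseline. The helper names are hypothetical, and the baseline values it prints are back-calculated from the numbers quoted above rather than read from Table 5.

```python
def relative_reduction(baseline, ours):
    """Percentage reduction of a lower-is-better metric relative to the baseline."""
    return (baseline - ours) / baseline * 100.0


def implied_baseline(ours, reduction_percent):
    """Baseline value implied by our score and a reported relative reduction."""
    return ours / (1.0 - reduction_percent / 100.0)


# Values quoted in the text for Dense-Haze and NH-Haze.
reported = {
    "Dense-Haze": {"psnr": 20.61, "psnr_gain": 4.71, "ciede": 8.5909, "ciede_red": 28.99},
    "NH-Haze": {"psnr": 22.02, "psnr_gain": 1.01, "ciede": 8.5108, "ciede_red": 10.53},
}

for name, r in reported.items():
    base_psnr = round(r["psnr"] - r["psnr_gain"], 2)       # absolute gain in dB
    base_ciede = implied_baseline(r["ciede"], r["ciede_red"])  # relative reduction
    print(f"{name}: implied baseline PSNR {base_psnr} dB, CIEDE2000 {base_ciede:.4f}")
```

Reading the gains this way makes the contrast between the two datasets explicit: the same method yields a much larger relative reduction in color error on Dense-Haze than on NH-Haze.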

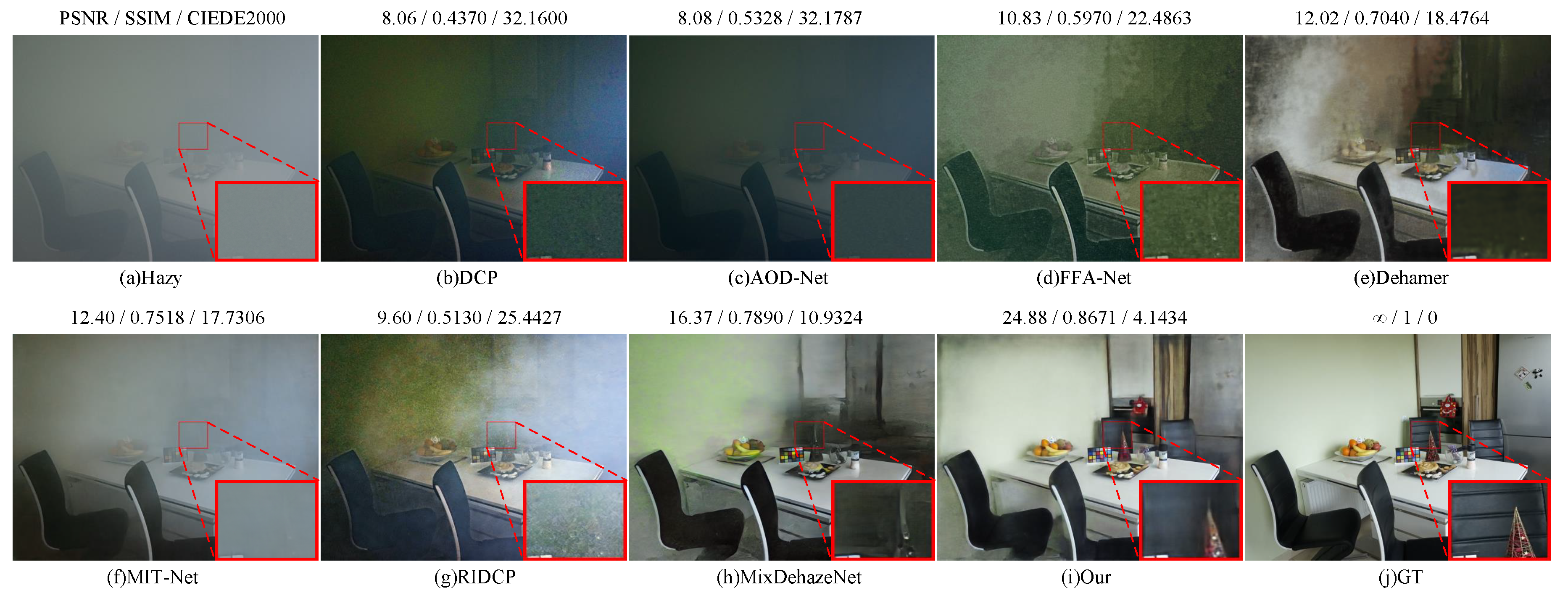

Figure 8 and Figure 9 show the qualitative comparison results of our method on the Dense-Haze and NH-Haze real-world haze datasets. It can be observed that traditional methods such as DCP and RIDCP exhibit noticeable color distortions and residual haze on both datasets. Lightweight deep models like AOD-Net show limited dehazing capability. Although FFA-Net and MIT-Net perform better, they struggle with detail preservation, resulting in blurry regions in local areas. Dehamer improves image brightness, but introduces significant artifacts and unnatural regions. MixDehazeNet demonstrates overall improvement, but still fails to fully handle regions with dense haze. In contrast, our proposed method achieves superior dehazing performance on both datasets. It effectively restores realistic color and fine textures while suppressing noise and artifacts, producing results that are noticeably clearer, more natural, and more visually consistent with the ground truth images.

Results on the RTTS Dataset: Table 6 presents the quantitative comparison between our method and several state-of-the-art dehazing approaches on the RTTS dataset, evaluated using four no-reference image quality metrics: FADE, NIQE, PIQE, and BRISQUE. As shown in the table, although IPC-Dehaze achieves the best overall performance, our method outperforms most existing methods across all metrics.

We conduct a qualitative comparison on the RTTS dataset, as shown in Figure 10. Overall, FFA-Net, Dehamer, GridDehazeNet, and MIT-Net exhibit limited dehazing performance across various complex scenes, with noticeable haze residuals remaining in the restored images. IPC-Dehaze demonstrates a certain level of effectiveness in some samples, but still falls short in terms of structural detail recovery and color fidelity. In contrast, our method consistently delivers superior visual quality across all test images. The restored results are significantly cleaner and more visually pleasing, with natural color reproduction and well-preserved details, without introducing noticeable artifacts or over-enhancement effects.

4.4. Ablation Study

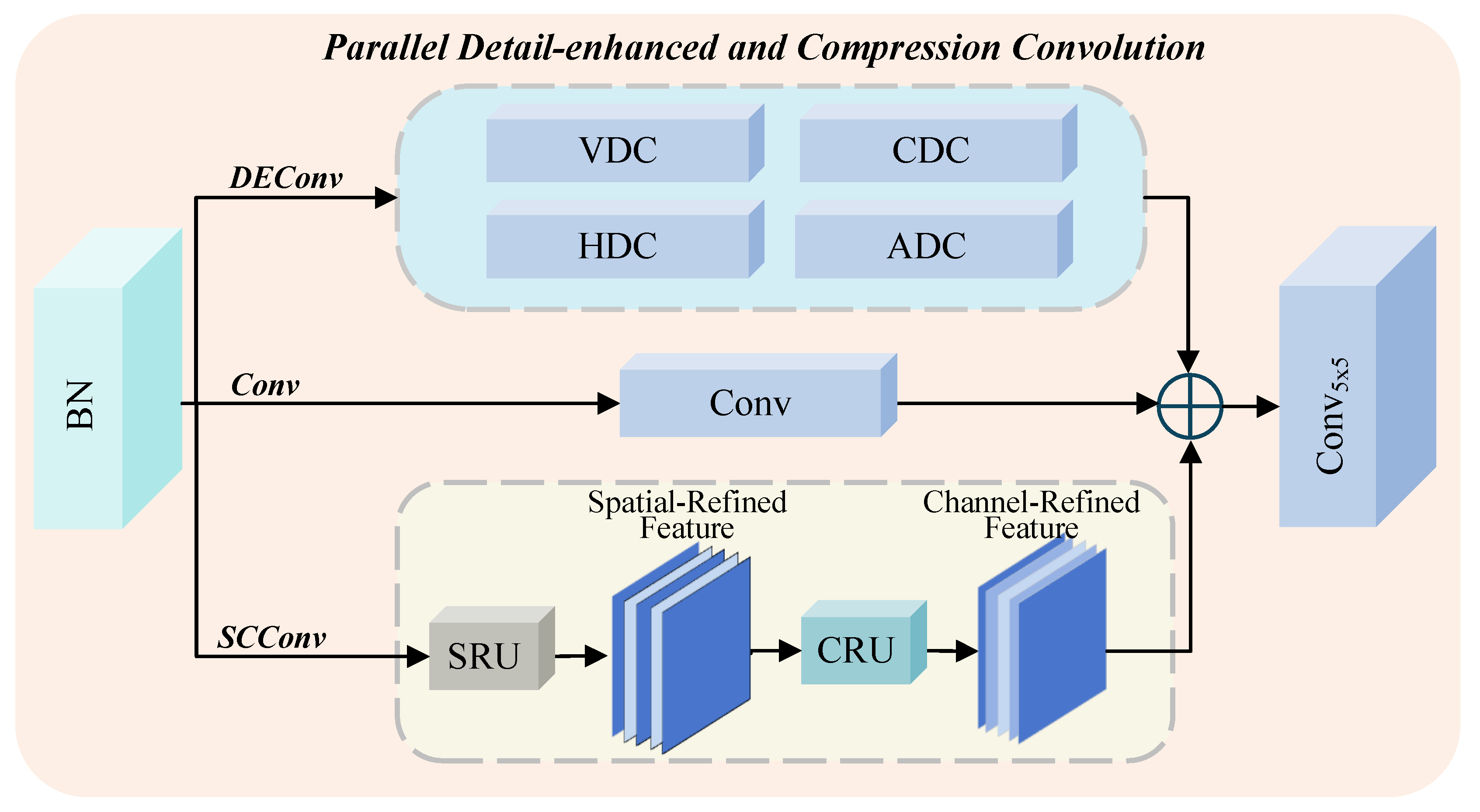

Impact of Different Components in the Network. To further validate the effectiveness of each proposed component, we conduct ablation studies to analyze the contributions of the key modules, including the SPFB, the PDCC, and the SAB. We take MixDehazeNet-S as the baseline network and construct four variants based on it as follows:

- (1) Base + SPFB → V1

- (2) Base + HEConv → V2

- (3) Base + SPFB + HEConv → V3

- (4) Base + SPFB + HEConv + SAB → V4

All models are trained using the same training strategy, and the “S” variant is evaluated on the ITS-indoor test set. The experimental results are shown in

Table 7 and

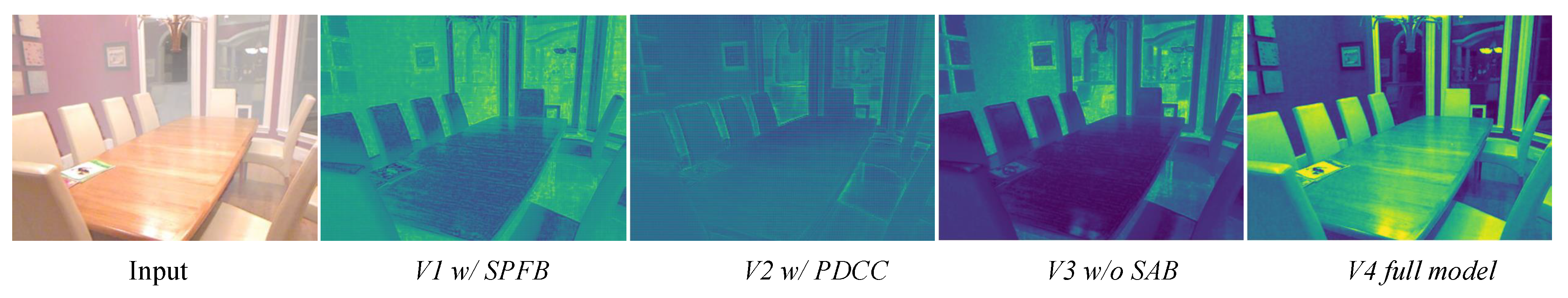

Figure 11. As illustrated in

Table 7, each proposed module contributes to performance improvements in dehazing quality. In particular, the SPFB module improves PSNR by 1.77 dB, respectively, over the baseline. The PDCC module also brings notable gains. Overall, each component contributes to better dehazing performance, verifying the effectiveness of our design. We also visualize the feature maps of each module to further demonstrate their impact. As shown:

enhances some edge features but still suffers from coarse outputs and insufficient detail recovery;

improves edge and structure clarity to some extent but lacks fine-grained textures;

yields moderate global improvement, but fails to preserve table textures and background structures, resulting in blurry features. In contrast, the

, which integrates SPFB, PDCC, and SAB, produces much clearer features with better spatial sharpness and detail recovery, including more precise object boundaries. These visualizations further confirm the effectiveness of each proposed component.

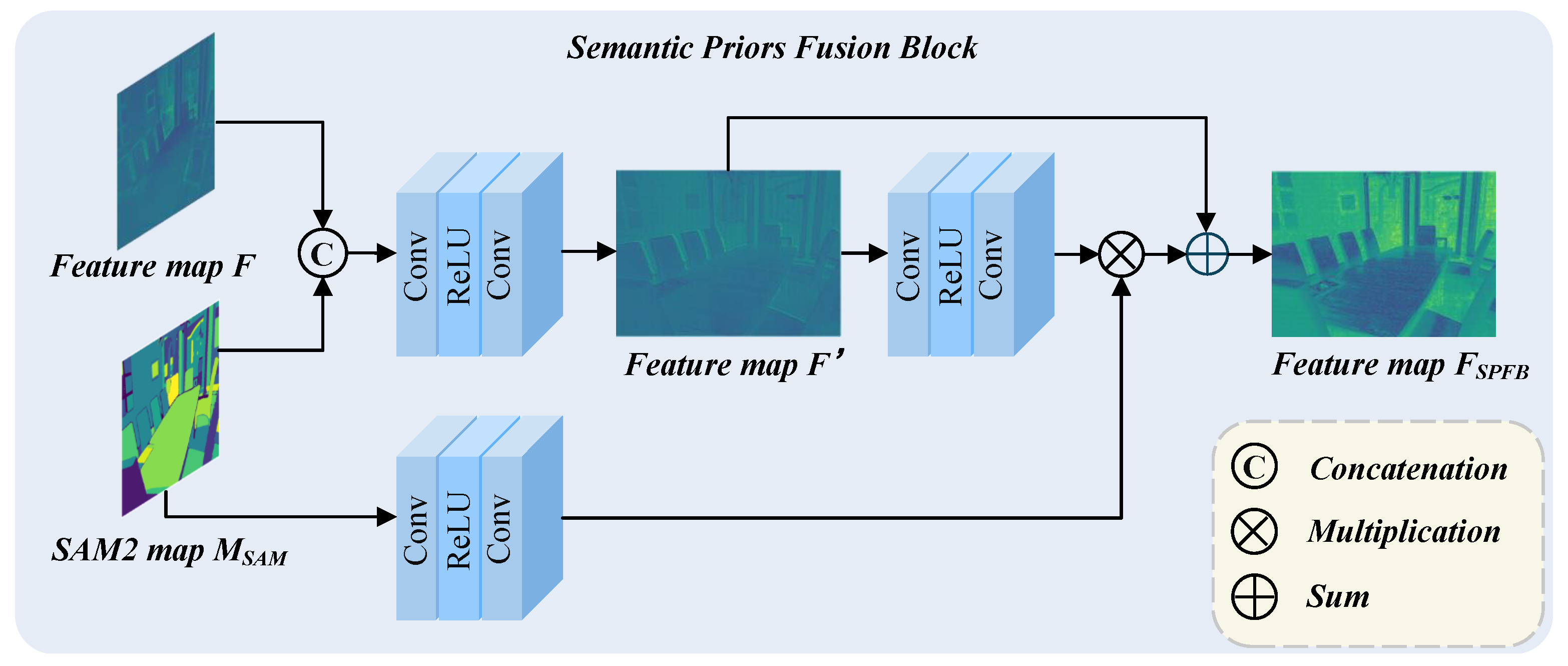

Ablation Study on SPFB Module. To thoroughly validate the effectiveness of the proposed SPFB module, we designed a set of detailed ablation experiments from two perspectives: external fusion strategies and internal structural variations of the SPFB module. For the fusion strategy, we considered the following baselines: (1) SPFB-N1: The SPFB module is not used; instead, feature fusion is performed using simple element-wise addition. (2) SPFB-N2: The input RGB image is extended to four channels by appending the segmentation mask as the fourth channel and feeding it together into the dehazing network. For the internal structure of the SPFB module, we introduced the following variants: (1) SPFB-F1: The fusion with input feature F is removed, and only the intermediate semantic attention map

is fed into the function

. (2) SPFB-F2: The feature extraction branch used to obtain the semantic prior from

is removed. As shown in the results table, none of these alternatives, whether fusion strategies or SPFB structural variants, can match the performance of our original SPFB design. This demonstrates the superior capability of our SPFB module in both structural fusion and semantic guidance. Moreover, we analyze the impact of inserting the SPFB module at different positions in the network. As illustrated in Table 8, increasing the number of inserted SPFB modules consistently improves the network performance. This progressive enhancement trend further confirms the effectiveness of the SPFB module, especially in boosting the modeling capacity of multi-level features through structural and semantic reinforcement.
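The two external fusion baselines are simple enough to sketch directly. The code below illustrates the idea behind SPFB-N1 (element-wise addition of two feature maps) and SPFB-N2 (appending the segmentation mask to the RGB input as a fourth channel) on nested Python lists. The function names and data layout are assumptions made for this illustration and do not reproduce the tensor code of the actual network.

```python
def add_features(features_a, features_b):
    """SPFB-N1 style fusion: element-wise addition of two equally sized 2D maps."""
    if len(features_a) != len(features_b):
        raise ValueError("feature maps must have the same height")
    return [
        [a + b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(features_a, features_b)
    ]


def append_mask_channel(rgb_image, mask):
    """SPFB-N2 style input: turn each (r, g, b) pixel into (r, g, b, mask)."""
    if len(rgb_image) != len(mask) or any(
        len(img_row) != len(mask_row) for img_row, mask_row in zip(rgb_image, mask)
    ):
        raise ValueError("image and mask must have the same spatial size")
    return [
        [(*pixel, m) for pixel, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(rgb_image, mask)
    ]


# Hypothetical 2 x 2 image and binary segmentation mask.
image = [[(10, 20, 30), (40, 50, 60)], [(70, 80, 90), (100, 110, 120)]]
mask = [[0, 1], [1, 0]]
four_channel = append_mask_channel(image, mask)
print(four_channel[0][1])
```

Both baselines inject the segmentation information without any learned interaction between semantic and image features, which is the capability the SPFB module is designed to add.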

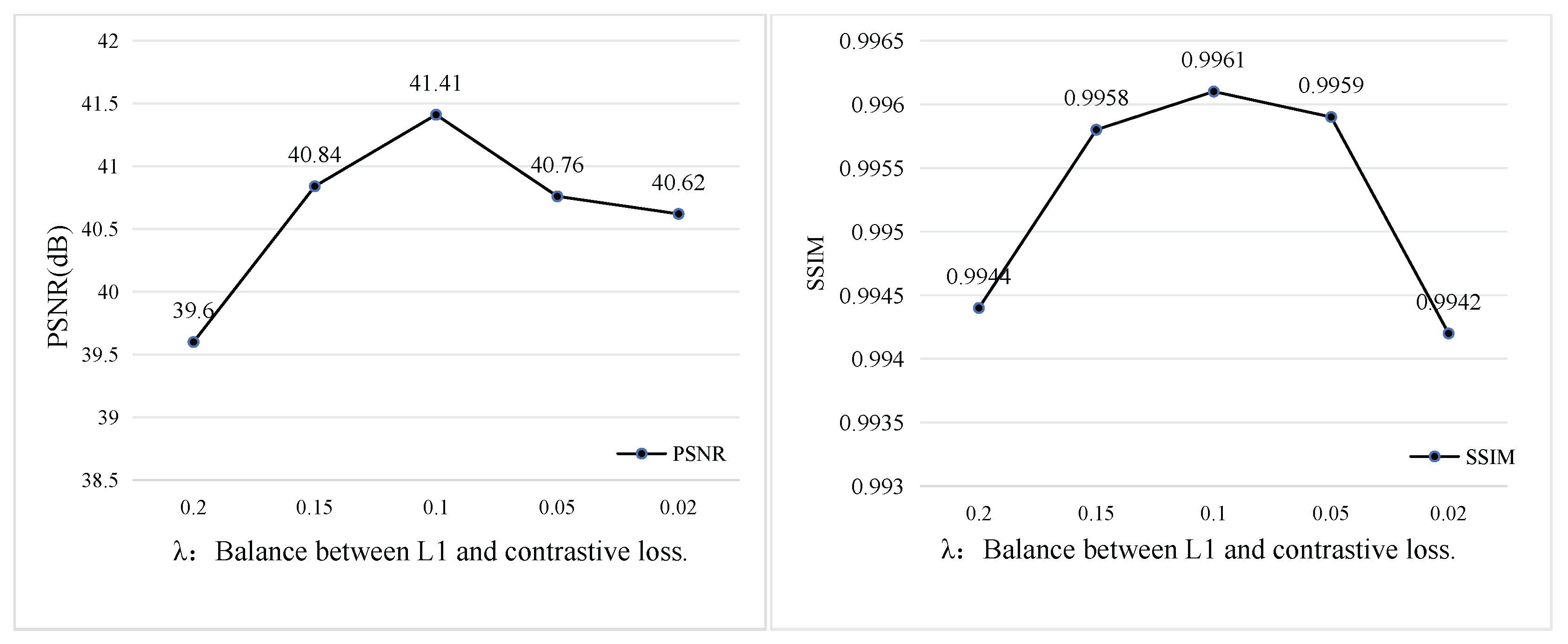

Impact of Loss Function Hyperparameters. To select the optimal hyperparameters, we conduct ablation experiments on the weight parameters of the contrastive loss and L1 loss. A penalty factor λ is introduced before the contrastive loss to determine its optimal contribution. As shown in Figure 12, when

, the model achieves the best performance in both PSNR and SSIM, indicating the most effective dehazing capability.
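The role of the penalty factor can be sketched as a weighted sum of the two loss terms, followed by a selection of the weight that gives the best validation score. The additive form below is an assumption based on the description of the weight being placed before the contrastive loss; the function names are hypothetical, and the selection helper expects measured PSNR values to be supplied by the caller.

```python
def l1_loss(prediction, target):
    """Mean absolute error between two flat sequences of pixel values."""
    return sum(abs(p - t) for p, t in zip(prediction, target)) / len(prediction)


def total_loss(l1_term, contrastive_term, lam):
    """Assumed objective: L1 loss plus the contrastive loss weighted by lam."""
    return l1_term + lam * contrastive_term


def best_lambda(psnr_by_lambda):
    """Return the weight whose measured validation PSNR is highest.

    `psnr_by_lambda` maps each candidate weight to a PSNR obtained by
    training and evaluating a model with that weight.
    """
    return max(psnr_by_lambda, key=psnr_by_lambda.get)


# Toy values to show the arithmetic only; they are not experimental results.
l1 = l1_loss([0.2, 0.5, 0.9], [0.25, 0.45, 1.0])
print(total_loss(l1, contrastive_term=0.3, lam=0.5))
```

A weight of zero reduces the objective to the plain L1 loss, so sweeping the weight shows how much the contrastive term contributes beyond pixel-wise reconstruction.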