Submitted: 10 October 2025

Posted: 14 October 2025


Abstract

Keywords:

1. Introduction

2. Background and Related Work

2.1. Brief History of Development Environments

2.2. Lexical Search

2.3. Semantic Search

2.3.1. Retrieval-Augmented Generation (RAG) for Code

2.3.2. Code Knowledge Graphs

2.4. Language Server Protocol (LSP)

2.5. Agentic Search

2.6. Multi-Agent and Sub-Agent Architectures

3. Study Design

3.1. Research Objectives

3.2. Research Approach

3.3. Study Methodology

3.3.1. Task Selection

| Language | Files | Lines | Blank | Comment | Code |
|---|---|---|---|---|---|
| JSON | 12 | 23,401 | 2 | 0 | 23,399 |
| Go | 97 | 12,334 | 1,693 | 348 | 10,293 |
| TypeScript JSX | 58 | 8,070 | 685 | 604 | 6,781 |
| Python | 63 | 5,762 | 1,109 | 349 | 4,304 |
| TypeScript | 26 | 2,700 | 326 | 292 | 2,082 |
| Markdown | 30 | 2,692 | 701 | 0 | 1,991 |
| JavaScript | 7 | 821 | 64 | 179 | 578 |
| SQL | 25 | 666 | 86 | 98 | 482 |
| Bourne Shell | 5 | 357 | 64 | 89 | 204 |
| CSS | 3 | 173 | 12 | 1 | 160 |
| Toml | 4 | 120 | 15 | 0 | 105 |
| Makefile | 1 | 76 | 9 | 8 | 59 |
| Protobuf | 2 | 88 | 25 | 21 | 42 |
| YAML | 1 | 41 | 8 | 0 | 33 |
| HTML | 1 | 20 | 3 | 0 | 17 |
| Docker | 1 | 38 | 10 | 11 | 17 |
| Autoconf | 1 | 3 | 0 | 0 | 3 |
| Plain Text | 1 | 4 | 1 | 0 | 3 |
| Total | 338 | 57,366 | 4,813 | 2,000 | 50,553 |

- Identifying the correct service directory where the GitHub connector is located across multiple folders and services

- Finding the location where the GitHub connector interface is defined across multiple files

- Understanding existing methods and validating whether they implement the GitHub connector interface

3.3.2. Agent Selection

- Adoption and community engagement (measured by GitHub stars, issue activity, and social media discussion),

- Diversity of retrieval approaches (agentic search, semantic indexing, LSP integration, multi-agent architectures), and

- Availability for analysis through either open-source code or comprehensive documentation.

3.3.3. Data Collection Protocol

- Qualitative observations: Retrieval strategy (tools invoked, search patterns, file exploration order), decision-making transparency (whether the agent’s retrieval logic is interpretable), and notable behaviors (iterative refinement, context re-gathering, tool failures).

- Quantitative metrics: Context window utilization as our primary metric (total tokens consumed including input, output, and reasoning). In addition, we recorded cost per run (based on model pricing), tool call counts (categorized by type: file read, search, navigation, execution), and task completion status.

- Execution traces: Trace of agent interactions, full chat logs or code files, and retrieved context snapshots (which files/snippets were included in prompts).
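As an illustration of the primary metric, the following minimal sketch computes context window utilization from per-run token counts. The `RunUsage` class and its field names are hypothetical and not part of the study's tooling; the sample figures are the Gemini CLI values reported in Appendix C.2, with the 200,000-token window taken from the utilization table.

```python
from dataclasses import dataclass


@dataclass
class RunUsage:
    """Token counts for one agent run (field names are illustrative)."""
    input_tokens: int
    output_tokens: int
    reasoning_tokens: int = 0
    context_window: int = 200_000

    @property
    def total_tokens(self) -> int:
        # Total consumption includes input, output, and reasoning tokens.
        return self.input_tokens + self.output_tokens + self.reasoning_tokens

    @property
    def utilization_pct(self) -> float:
        # Share of the context window consumed, rounded to one decimal place.
        return round(100 * self.total_tokens / self.context_window, 1)


# Gemini CLI figures from Appendix C.2.
gemini = RunUsage(input_tokens=99_973, output_tokens=763, reasoning_tokens=1_544)
print(gemini.total_tokens, gemini.utilization_pct)  # 102280 51.1
```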

3.3.4. Analysis Approach

3.4. Scope and Limitations

- Single task, single repository: Findings may not generalize across different task types (bug fixing, feature addition, documentation), repository characteristics (language, size, architecture), or domains.

- Subjective analysis: While we collect quantitative metrics, much of the analysis relies on qualitative interpretation of agent behavior. Different researchers might draw different conclusions.

- No variable isolation: Agents differ in multiple dimensions simultaneously (model, prompt, tools, architecture). We cannot make causal claims about which specific factors drive observed differences.

- Rapid ecosystem evolution: Coding agents and their retrieval mechanisms evolve quickly. Findings reflect the state of tools at the time of experimentation and may become outdated.

4. Analysis

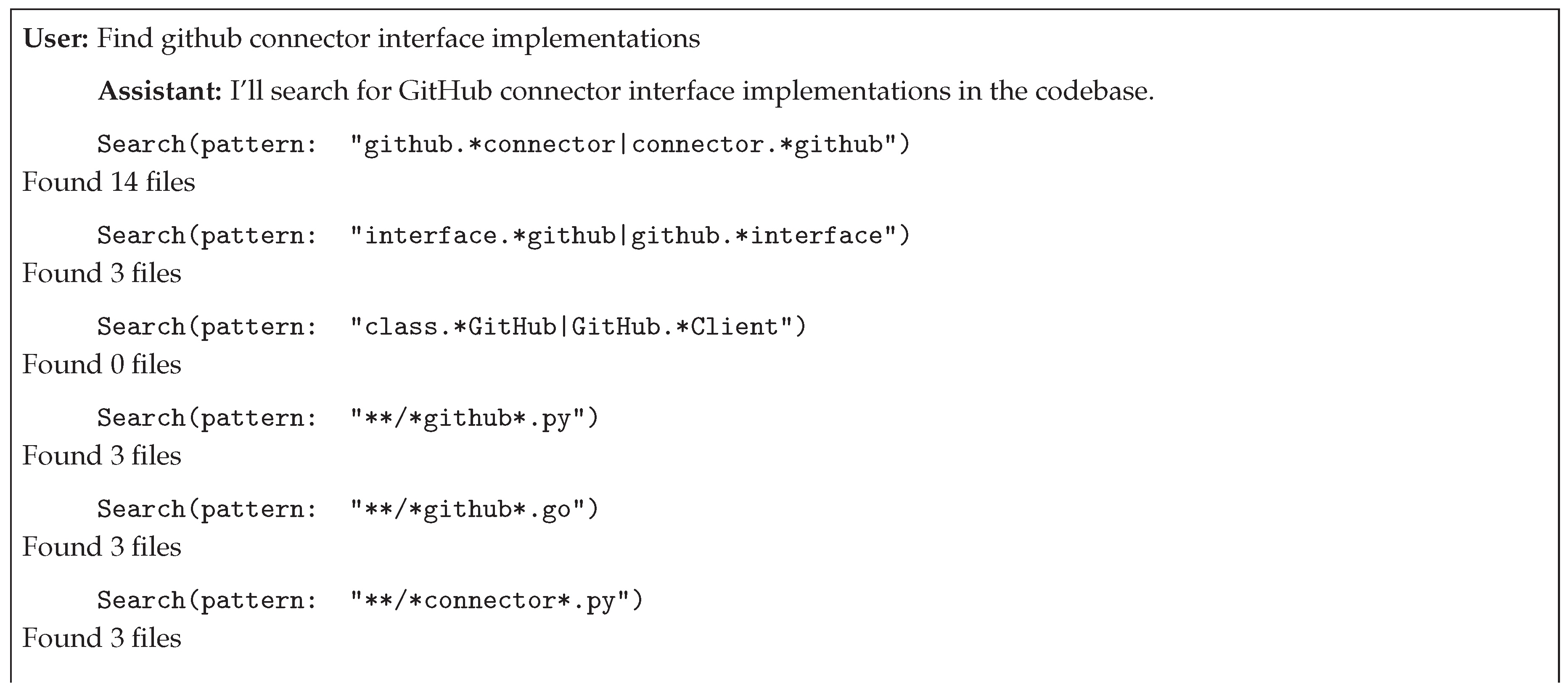

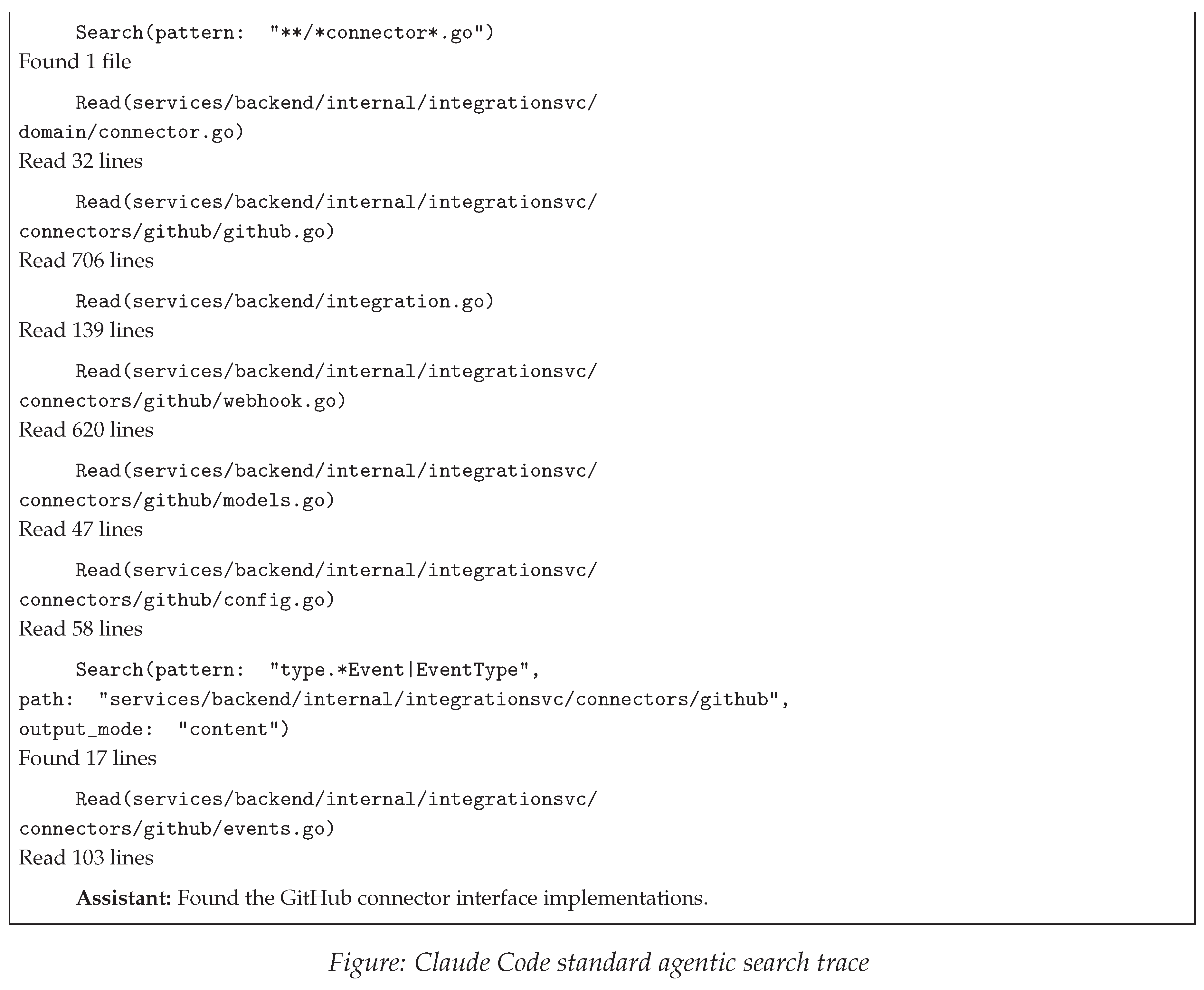

4.1. Claude Code

- Broad discovery phase: Initial pattern-based searches cast a wide net using general keywords to identify candidate locations across the codebase

- Progressive refinement: Iterative narrowing of search scope through more specific patterns and filters, reducing candidate sets from broad matches to focused targets

- Targeted examination: Selective file reading of high-confidence candidates to extract and validate findings
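The three phases above can be sketched roughly as follows. This is an illustrative approximation rather than Claude Code's actual tool implementation: `grep_files` and `three_phase_search` are hypothetical helpers, the repository is a small in-memory dictionary, and Python's `re` module stands in for the agent's grep tool.

```python
import re
from typing import Dict, Optional, Set


def grep_files(files: Dict[str, str], pattern: str,
               within: Optional[Set[str]] = None) -> Set[str]:
    """Return the paths whose content matches `pattern` (similar to `grep -l`)."""
    rx = re.compile(pattern, re.IGNORECASE)
    return {
        path for path, text in files.items()
        if (within is None or path in within) and rx.search(text)
    }


def three_phase_search(files: Dict[str, str], broad: str, narrow: str) -> Dict[str, str]:
    # Phase 1: broad discovery with a general keyword.
    candidates = grep_files(files, broad)
    # Phase 2: progressive refinement, restricted to the phase 1 candidates.
    focused = grep_files(files, narrow, within=candidates)
    # Phase 3: targeted examination, reading only the surviving files.
    return {path: files[path] for path in sorted(focused)}


# Hypothetical repository contents, keyed by path.
repo = {
    "services/agent/github.go": "type GitHubConnector struct{}\nfunc (g *GitHubConnector) Name() string",
    "services/web/README.md": "Connect your GitHub account in settings.",
    "services/agent/slack.go": "type SlackConnector struct{}",
}
hits = three_phase_search(repo, broad=r"github", narrow=r"type \w+Connector struct")
print(list(hits))  # ['services/agent/github.go']
```

Restricting the second search to the first search's candidates is what keeps the number of full-file reads small in this sketch.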

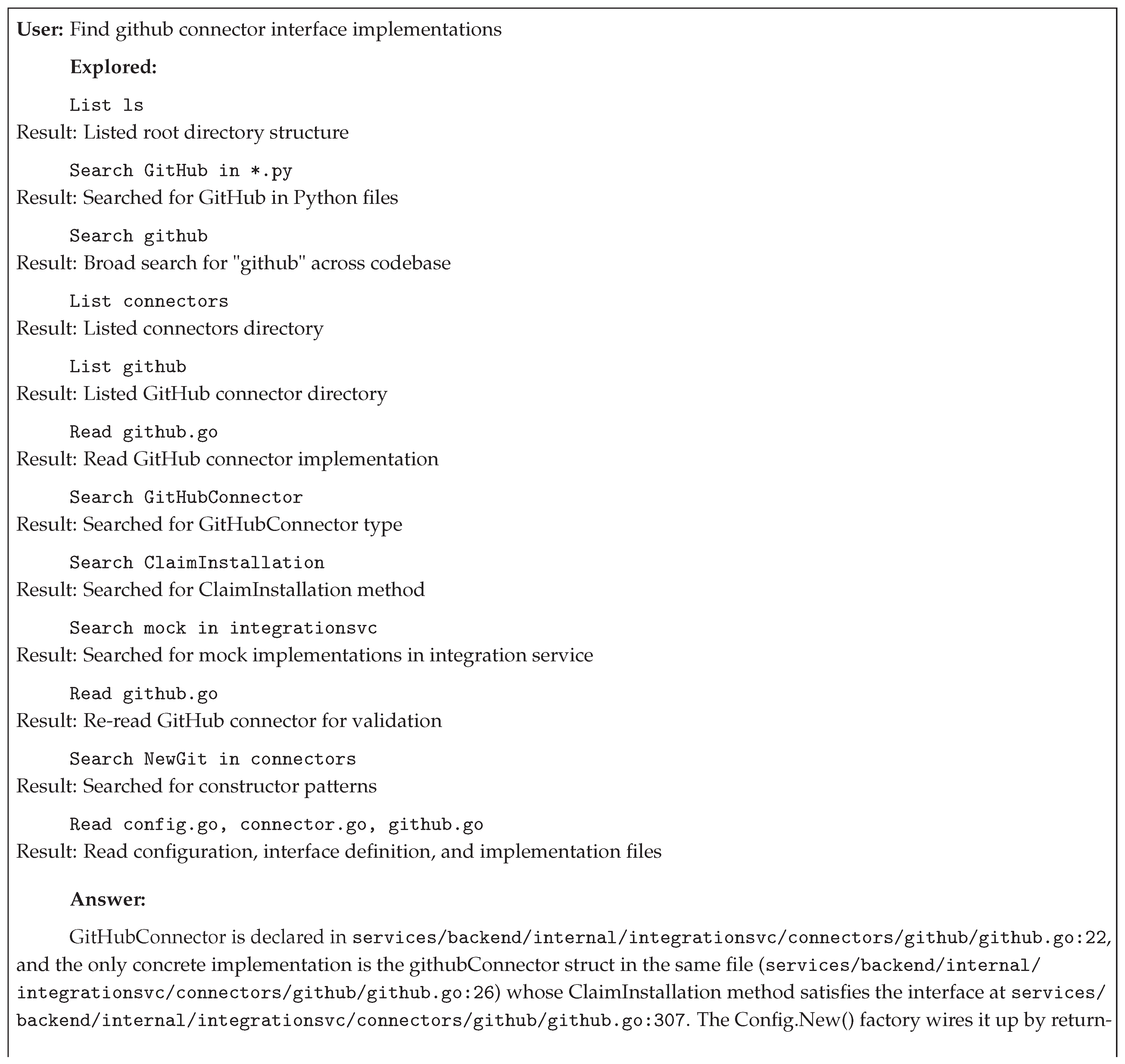

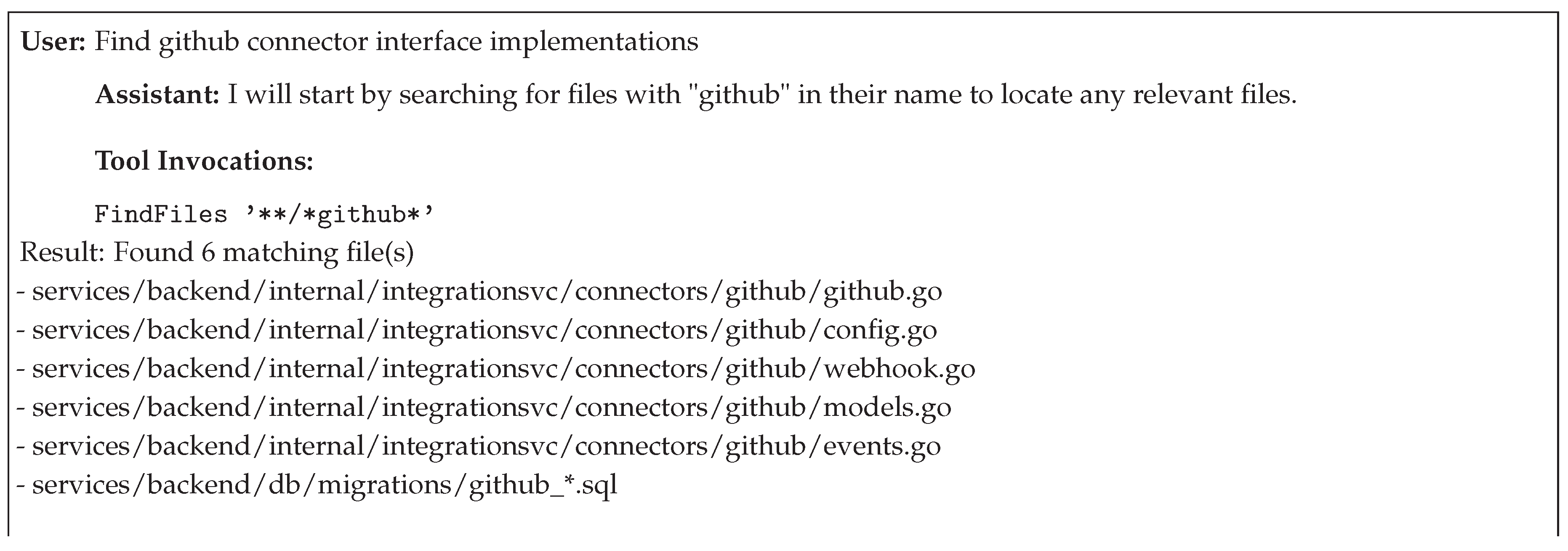

4.2. Codex CLI

- Broad keyword searches to identify initial candidate sets,

- Pattern-based refinement using regex to reduce candidates through structural constraints, and

- Targeted file reading with connector-specific validation.

The approach leverages negative evidence, where searches returning zero results inform architectural understanding and guide subsequent query formulation. Progressive pattern specificity enables spatial reasoning about codebase organization, narrowing from general terms to type-specific constructs.
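A minimal sketch of how zero-result searches can act as negative evidence during progressive refinement is given here. The `refine` function, file paths, and patterns are all hypothetical, and Python's `re` is used in place of the shell search commands that Codex CLI actually issues.

```python
import re
from typing import Dict, List, Set, Tuple


def refine(files: Dict[str, str], patterns: List[str]) -> Tuple[Set[str], List[str]]:
    """Apply patterns from general to specific, tracking zero-result queries."""
    candidates = set(files)
    negative_evidence = []
    for pattern in patterns:
        rx = re.compile(pattern)
        matched = {path for path in candidates if rx.search(files[path])}
        if matched:
            candidates = matched               # narrow the candidate set
        else:
            negative_evidence.append(pattern)  # zero hits: hypothesis ruled out
    return candidates, negative_evidence


# Hypothetical repository contents, keyed by path.
repo = {
    "internal/connectors/github.go": "func NewGitHub() domain.Connector { return &gitHub{} }",
    "internal/domain/connector.go": "type Connector interface { Name() string }",
    "docs/github.md": "GitHub setup guide",
}
patterns = [
    r"(?i)github",                            # general keyword
    r"class GitHubConnector",                 # wrong guess: no class-based definitions exist
    r"func New\w+\(\) domain\.Connector",     # type-specific construct
]
remaining, ruled_out = refine(repo, patterns)
print(remaining, ruled_out)
```

In this example the failed `class` query leaves the candidate set unchanged but records that the connector is not defined as a class, which motivates the function-based pattern that follows it.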

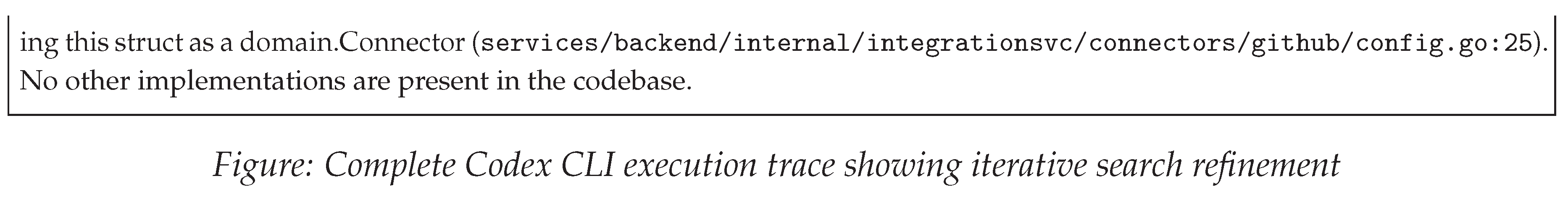

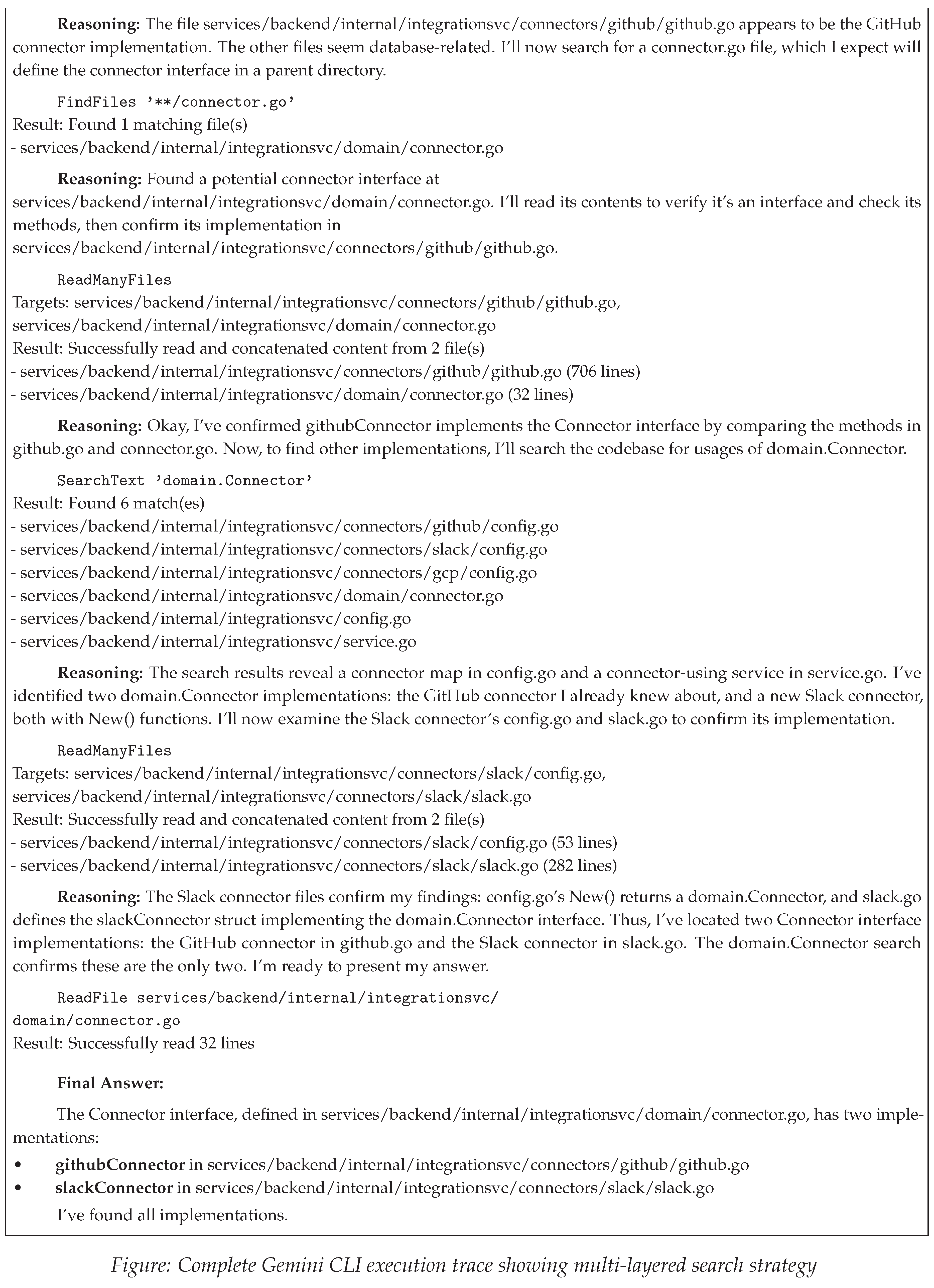

4.3. Gemini CLI

- Broad file pattern discovery using glob-based matching to identify candidate locations,

- Structural file location using targeted patterns to discover definitions,

- Parallel batch file reading to efficiently gather context from multiple sources, and

- Cross-reference searches to identify usage patterns and implementations.
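The glob discovery, parallel batch reading, and cross-reference steps can be approximated with the standard library as shown here. This is a sketch under stated assumptions: `find_files`, `read_many`, and `find_references` are hypothetical stand-ins for Gemini CLI's FindFiles, ReadManyFiles, and SearchText tools, and the file names are invented for the demonstration.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Dict, List


def find_files(root: Path, pattern: str) -> List[Path]:
    """Glob-based discovery, e.g. pattern='**/*connector*.go'."""
    return sorted(p for p in root.glob(pattern) if p.is_file())


def read_many(paths: List[Path], workers: int = 8) -> Dict[str, str]:
    """Read several files concurrently and return {file name: text}."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        texts = list(pool.map(lambda p: p.read_text(encoding="utf-8"), paths))
    return {p.name: t for p, t in zip(paths, texts)}


def find_references(texts: Dict[str, str], symbol: str) -> List[str]:
    """Cross-reference search: which of the files mention `symbol`?"""
    return sorted(name for name, text in texts.items() if symbol in text)


# Demonstration on a throwaway directory with hypothetical file names.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "domain").mkdir()
    (root / "domain" / "connector.go").write_text("type Connector interface{}", encoding="utf-8")
    (root / "github_connector.go").write_text("var _ domain.Connector = (*GitHub)(nil)", encoding="utf-8")
    (root / "notes.txt").write_text("unrelated", encoding="utf-8")

    batch = read_many(find_files(root, "**/*connector*.go"))
    refs = find_references(batch, "domain.Connector")

print(sorted(batch), refs)
```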

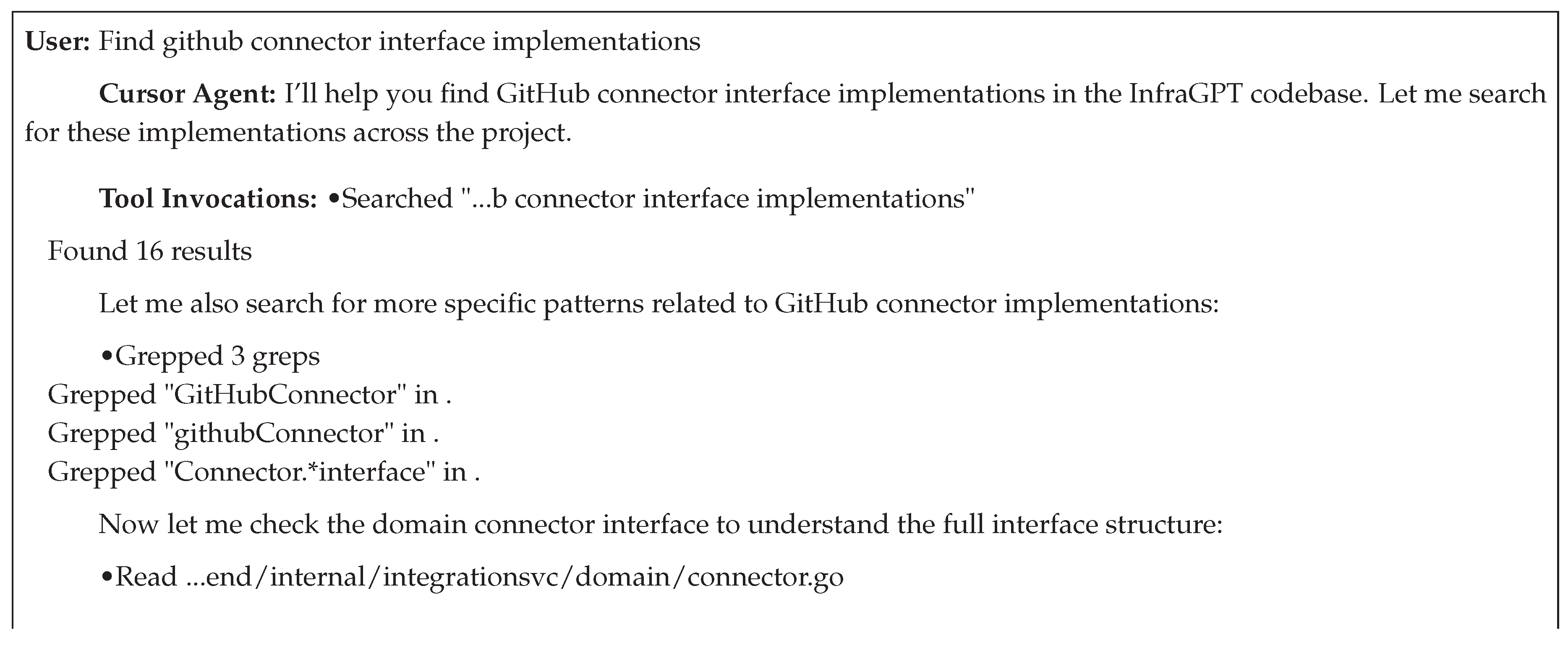

4.4. Cursor

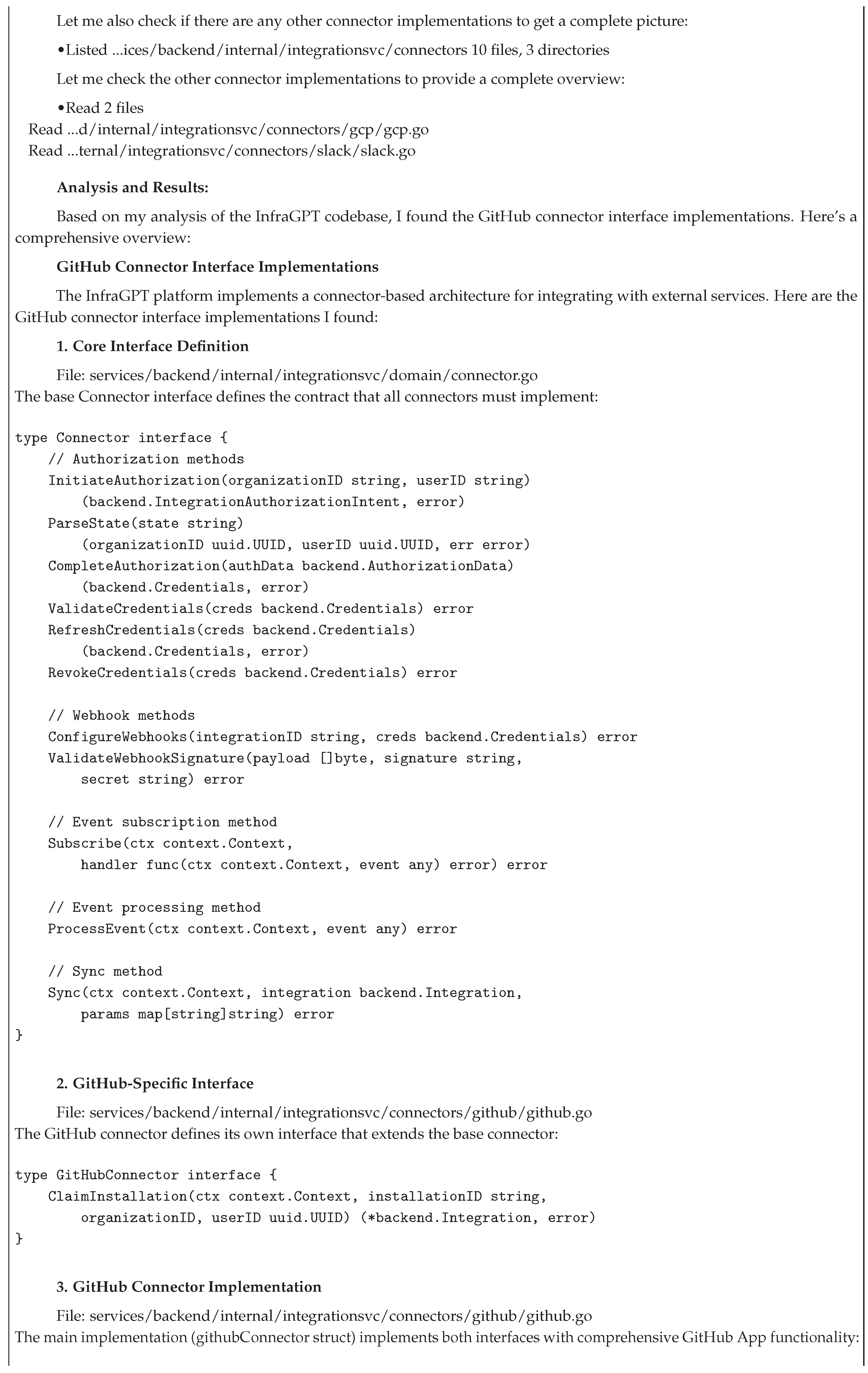

4.5. Amp

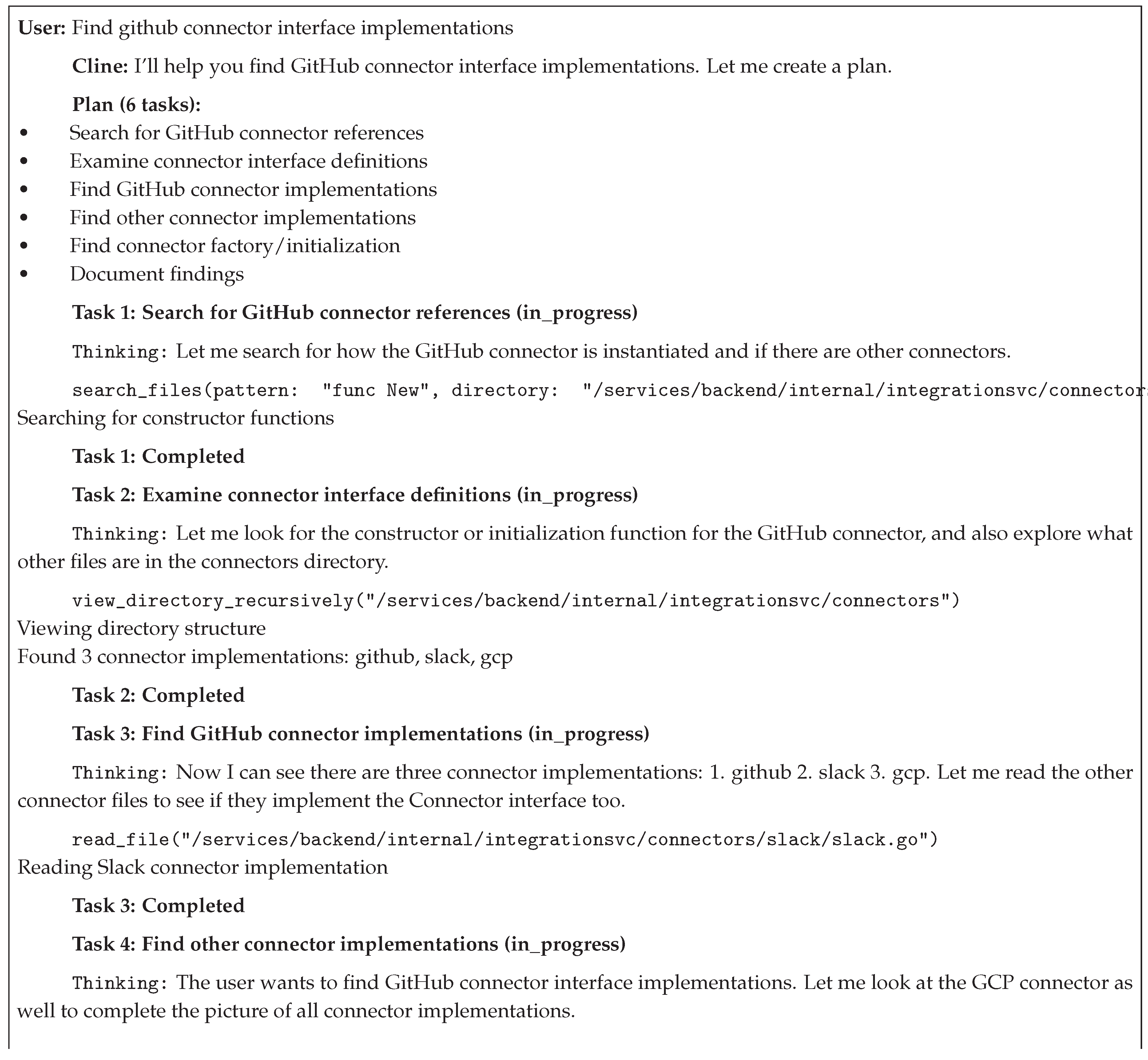

4.6. Cline

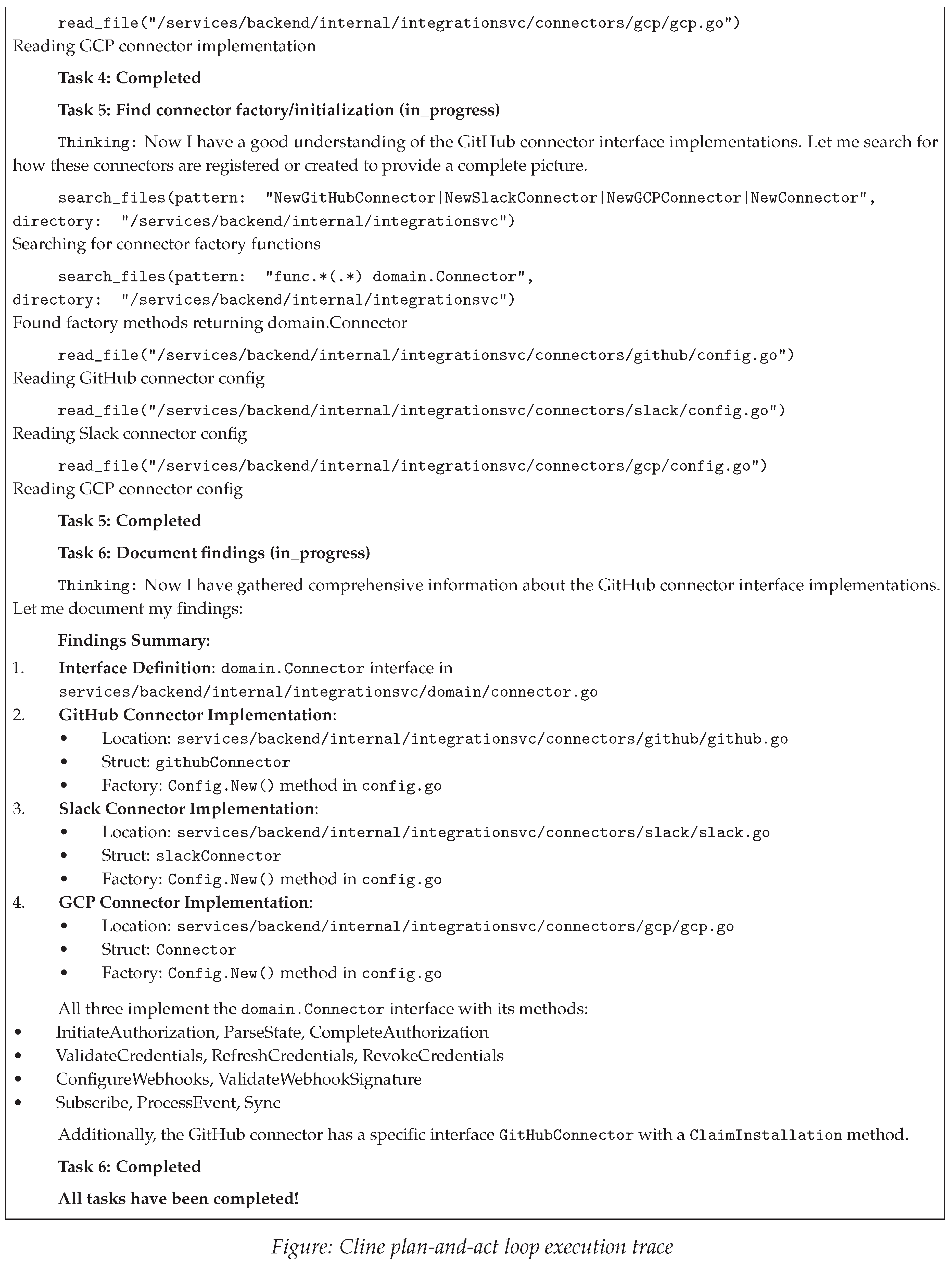

4.7. Aider

4.8. Resource Consumption Analysis

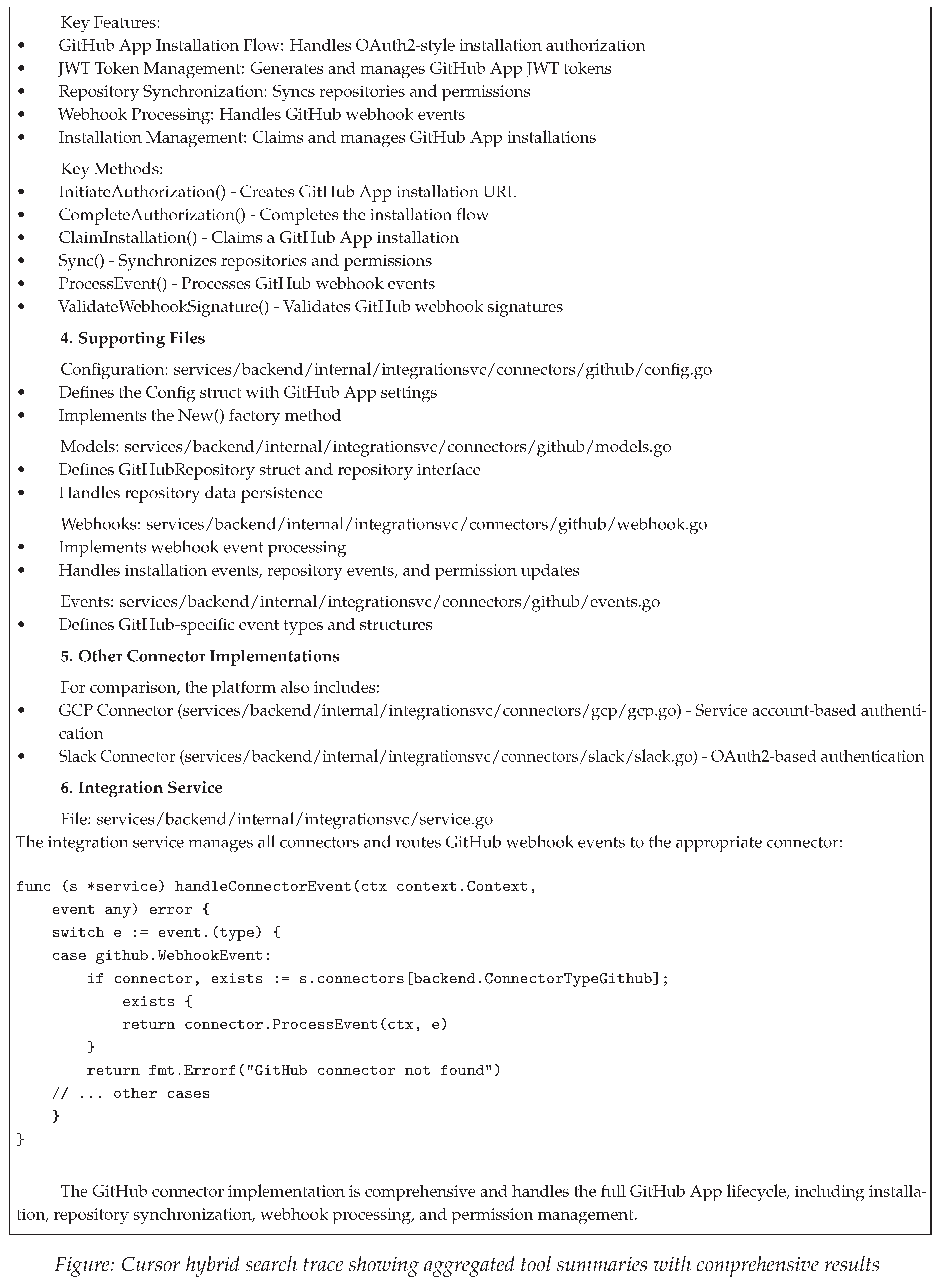

4.9. Comparison of Code Search Tools in Coding Agents

| Agent | Primary Retrieval Mechanism | Architectural Paradigm | Context Provisioning | Key Strengths | Noted Limitations |
|---|---|---|---|---|---|
| Claude Code | Agentic Search (grep) | CLI-Native | Explicit tools + Bash | Predictability and simplicity | High token consumption, potential for irrelevant context |
| Gemini CLI | Agentic Search (grep) | CLI-Native | Explicit tools + Bash | Parallel tool calls, fastest retrieval | High token consumption |
| Codex CLI | Shell Command Orchestration | CLI-Native | Explicit tools + Bash | Progressive search, directory-scoped pattern search | Highest token consumption, incorrect token usage reporting |
| Cursor | Hybrid Semantic-Lexical (Embeddings + Grep) | IDE-Integrated (Hybrid CLI) | Background indexing and explicit tools | Whole-codebase awareness, fast interactive queries | Partial transparency (aggregated tool summaries), initial indexing overhead |
| Cline | Hybrid Agentic Search (ripgrep + fzf + Tree-sitter AST) | IDE-Integrated (VS Code) | Explicit (plan-and-act loop) | Three-tier retrieval (lexical + fuzzy + AST), multi-language AST parsing, efficient context usage (35k tokens) | Limited AST depth (top-level only), 300 result limit, relies on effective agent planning |
| Aider CLI | Graph-Based AST Ranking (Tree-sitter + PageRank) | CLI-Native | Repo-map with symbol-level visibility | Graph topology preserves architecture, PageRank relevance scoring, deterministic offline operation, 40+ languages, lowest token usage (8.5k-13k), symbol signatures visible without full file retrieval | Indexing overhead |
| Amp | Multi-Agent Orchestration (Sub-Agent Delegation) | CLI-Native | Hybrid (explicit sub-agent + agentic search) | Modularity, parallel sub-agent execution, specialized prompting, efficient token usage (19k, 2% of 968k context) | Context isolation complexity, requires careful result marshaling |

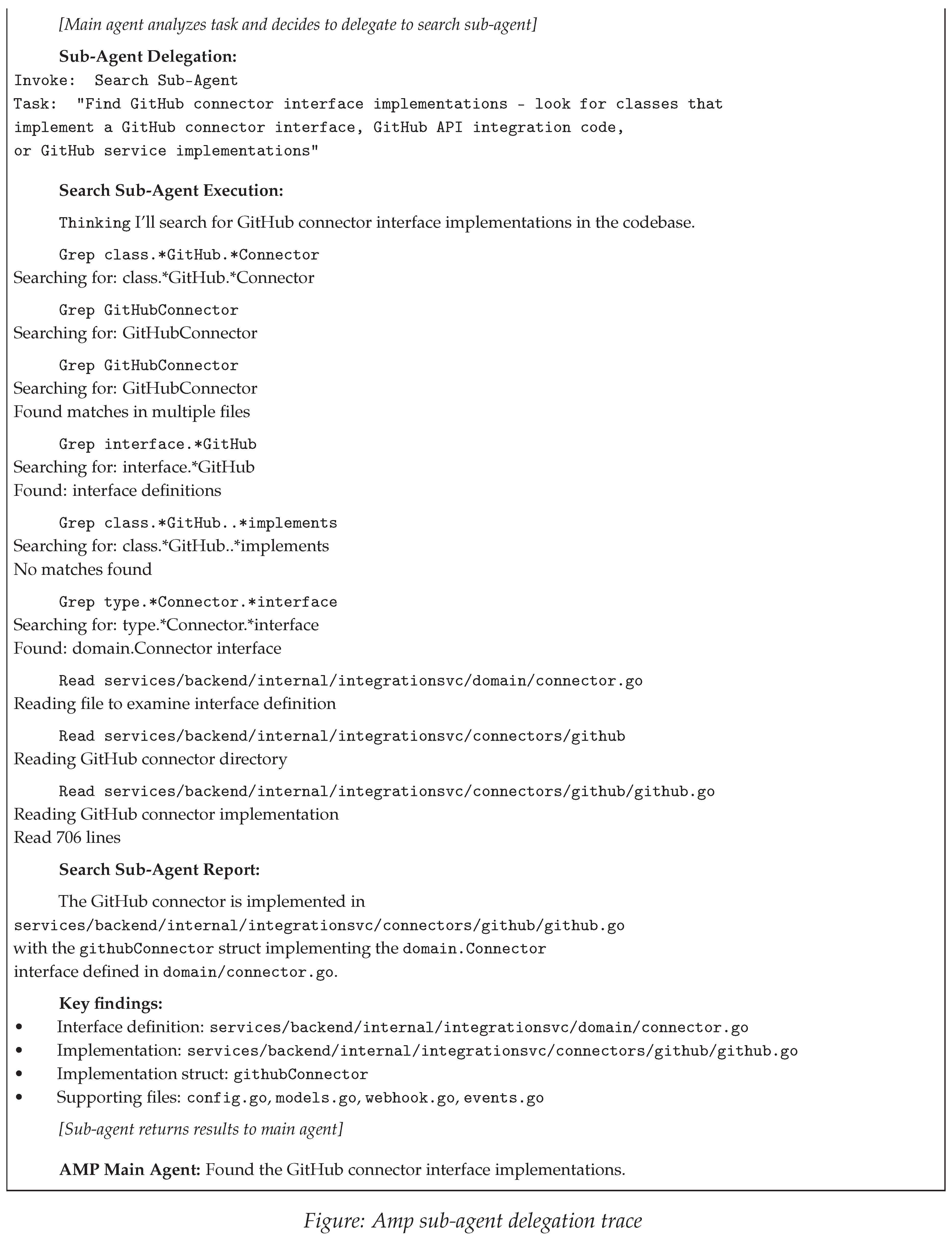

4.10. Code Search Transparency Comparison

5. Results

5.1. RQ1: Semantic Search vs. Lexical Search

5.2. RQ2: Human Developer Tools for Agents

5.3. RQ3: Specialized Retrieval Sub-Agents

5.4. Cross-Cutting Observations

6. Limitations

7. Challenges

7.1. Retrieval Quality and Context Management

7.2. Evaluation and Success Criteria

7.3. Architectural and Distributed Challenges

7.4. System and Tool Heterogeneity

8. Opportunities & Future Work

9. Conclusion

Appendix A. Claude Code: Complete Execution Traces

Appendix A.1. Standard Agentic Search

- Total context: 108k/200k tokens (54%)

- System prompt: 3.0k tokens (1.5%)

- System tools: 11.5k tokens (5.8%)

- MCP tools: 17.6k tokens (8.8%)

- Tool overhead (system + MCP): 29.1k tokens (14.6%) consumed before retrieval operations

- Messages: 30.2k tokens (15.1%)

- Autocompact buffer: 45k tokens (22.5%) reserved for conversation history

- Free space: 47k tokens (23.7%)

- Search operations: 7

- File reads: 6 (whole-file reads, not snippets)

- Total lines examined: 1,605

Appendix A.2. LSP-Augmented Search

- Total context: 117k/200k tokens (59%)

- System prompt: 3.0k tokens (1.5%)

- System tools: 11.5k tokens (5.8%)

- MCP tools: 17.6k tokens (8.8%)

- Messages: 39.8k tokens (19.9%)

- Free space: 38k tokens (18.9%)

- Search operations: 8 (5 grep/glob, 3 LSP)

- File reads: 8

- Total lines examined: 1,422

- LSP success rate: 1/3 (hover succeeded, definition and references failed)

Appendix A.3. Comparative Analysis

Appendix B. Codex CLI: Complete Execution Trace

Appendix B.1. Trace Analysis

Appendix B.2. Metrics

- Total tokens: 39,540 (input=36,874 + output=2,666)

- Cached tokens: 151,424 (still occupy context space, reduce computation cost)

- Reasoning tokens: 1,600 (excluded from context window for future turns)

- Real context consumption: 190,964 tokens (including cached)

- Displayed context remaining: 97% (based on non-cached tokens only)

- Actual context remaining: 32% (when cached tokens included)

- Search operations: 11 (List, Search, Read commands)

- File reads: 4 (github.go read twice, plus config.go, connector.go)
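The gap between reported and real context consumption follows directly from the figures above, as this short worked calculation shows. The 272,000-token window is the Codex CLI value listed in the utilization table; the variable and function names are illustrative.

```python
CONTEXT_WINDOW = 272_000          # Codex CLI window listed in the utilization table

input_tokens, output_tokens = 36_874, 2_666
cached_tokens = 151_424

reported = input_tokens + output_tokens    # what the CLI counts as "total"
real = reported + cached_tokens            # cached tokens still occupy the window


def pct(tokens: int) -> float:
    """Percentage of the context window, rounded to one decimal place."""
    return round(100 * tokens / CONTEXT_WINDOW, 1)


print(reported, pct(reported))   # 39540 14.5
print(real, pct(real))           # 190964 70.2
```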

Appendix C. Gemini CLI: Complete Execution Trace

Appendix C.1. Trace Analysis

Appendix C.2. Metrics

- Total tokens: 102,280 (input=99,973 + output=763 + thoughts=1,544)

- Cached tokens: 53,304 (52.1% cache hit rate)

- FindFiles operations: 3

- SearchText operations: 2

- ReadManyFiles/ReadFile operations: 3

- Total unique files examined: 4

- Total lines read: 1,105 (706+32+53+282+32)

- API execution time: 48.2s

- Wall clock time: 1h 32m 44s

Appendix D. Cursor: Complete Execution Trace

Appendix D.1. Trace Analysis

Appendix D.2. Metrics

- Context utilization: 14.7% (approximately 29,400 tokens out of 200,000)

- Tool operations: 1 semantic search, 3 grep operations, 3 file reads, 1 directory listing

- Tool visibility: Aggregated summaries (operation types and counts visible, specific patterns and results hidden)

- Response comprehensiveness: High (identified core interface, implementations, supporting files, and cross-references)

- Retrieval transparency: Partial (tool categories visible, retrieval logic and ranking hidden)

- Query complexity: Low (natural language, no regex/glob syntax required)

Appendix E. Amp: Complete Execution Trace

- Context window: 968k tokens

- Total tokens consumed: 19k (2% of context window)

- Sub-agent context: Isolated with 20k token budget

- Main agent overhead: Task delegation and result synthesis

- Search operations: Multiple grep patterns with progressive refinement

- File reads: 3+ files (connector.go, github.go, supporting files)

- Execution model: Sequential (main agent waits for sub-agent completion)
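One way to picture the isolated sub-agent budget and the sequential hand-off is the following sketch. It is not Amp's implementation: `SearchSubAgent`, `delegate_search`, and the four-characters-per-token estimate are assumptions made purely for illustration.

```python
from typing import Dict, List, Tuple


def estimate_tokens(text: str) -> int:
    """Crude heuristic (about 4 characters per token); an assumption, not Amp's tokenizer."""
    return max(1, len(text) // 4)


class SearchSubAgent:
    def __init__(self, budget_tokens: int = 20_000) -> None:
        self.budget_tokens = budget_tokens
        self.used_tokens = 0
        self.context: List[Tuple[str, str]] = []   # private to the sub-agent

    def read(self, path: str, text: str) -> bool:
        cost = estimate_tokens(text)
        if self.used_tokens + cost > self.budget_tokens:
            return False                      # would exceed the isolated budget
        self.context.append((path, text))
        self.used_tokens += cost
        return True

    def report(self) -> str:
        # Only this short summary is returned to the main agent.
        return "relevant files: " + ", ".join(path for path, _ in self.context)


def delegate_search(files: Dict[str, str], budget_tokens: int = 20_000) -> str:
    """Main agent blocks until the sub-agent finishes, then keeps only the report."""
    agent = SearchSubAgent(budget_tokens)
    for path, text in files.items():
        agent.read(path, text)
    return agent.report()


# Hypothetical file contents sized to cost roughly 100, 500, and 25,000 tokens.
files = {"connector.go": "x" * 400, "github.go": "y" * 2_000, "vendor.json": "z" * 100_000}
print(delegate_search(files))  # relevant files: connector.go, github.go
```

The file contents read by the sub-agent never enter the main agent's context in this sketch, which is the property that keeps the main agent's token consumption low.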

Appendix E.1. Sub-Agent Architecture Analysis

Appendix F. Cline: Complete Execution Trace

- Total context: 35k/200k tokens (17.5%)

- Model: Sonnet 4.5

- API cost: $0.0104 (first request)

- Task completion: 6/6 automated steps

- Search operations: 4 (ripgrep-based content search)

- File reads: 6 files examined

- Directory traversal: 1 recursive view

- Execution model: Sequential plan-and-act loop with visible checkpoints

Appendix F.1. Plan-and-Act Loop Analysis

- Ripgrep content search: Pattern matching with "func New" and "func.*(.*) domain.Connector"

- Directory traversal: Recursive viewing to understand codebase structure

- File reading: Targeted reads of implementation and config files

- Targeted file reads (6 files) instead of bulk ingestion

- Directory traversal for structural awareness without reading all files

- Pattern-based searches returning only relevant matches
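To show what the two ripgrep patterns from the trace select, the sketch here applies them to a few Go source lines. The Go lines are hypothetical and not taken from the studied repository, and Python's `re` is used as an approximation of ripgrep's regex engine, which behaves the same way for these simple patterns.

```python
import re

# The two patterns from the trace, used here with Python's `re` as a stand-in for ripgrep.
CONSTRUCTOR = re.compile(r"func New")
RETURNS_CONNECTOR = re.compile(r"func.*(.*) domain.Connector")

# Hypothetical Go source lines, not taken from the studied repository.
go_lines = [
    "func NewGitHubConnector(cfg Config) domain.Connector {",
    "func NewClient(token string) *Client {",
    "func (g *gitHubConnector) Name() string {",
]

constructors = [line for line in go_lines if CONSTRUCTOR.search(line)]
connector_factories = [line for line in go_lines if RETURNS_CONNECTOR.search(line)]

print(len(constructors))        # 2
print(connector_factories)      # only the first line
```

Note that the unescaped parentheses in the second pattern form a capture group rather than matching literal parentheses, and the unescaped dot matches any character, so the pattern is looser than it appears. It still isolates functions whose signature ends in a `domain.Connector` return type.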

Appendix G. Aider: Complete Execution Trace

Appendix G.1. Graph-Based Retrieval Analysis

- Files already in chat context (50x multiplier)

- Files explicitly mentioned by user query (10x)

- Identifiers matching query terms (10x)

- Well-named identifiers with snake_case/camelCase (10x)
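The effect of these multipliers on ranking can be illustrated with a small weighted PageRank. This is a simplified sketch and not Aider's code: it folds all four multipliers into a single edge weight, which may differ from how Aider applies them, and it uses a plain power iteration instead of a graph library. The function names and file names are hypothetical.

```python
from typing import Dict, Tuple

Edge = Tuple[str, str]  # (referencing file, defining file)


def edge_weight(in_chat: bool = False, mentioned: bool = False,
                ident_in_query: bool = False, well_named: bool = False) -> float:
    """Combine the listed multipliers into one edge weight (simplified)."""
    weight = 1.0
    if in_chat:
        weight *= 50
    for flag in (mentioned, ident_in_query, well_named):
        if flag:
            weight *= 10
    return weight


def pagerank(edges: Dict[Edge, float], damping: float = 0.85,
             iterations: int = 50) -> Dict[str, float]:
    """Weighted PageRank by power iteration; the returned scores sum to 1."""
    nodes = sorted({node for edge in edges for node in edge})
    out_weight = {node: 0.0 for node in nodes}
    for (src, _dst), weight in edges.items():
        out_weight[src] += weight
    rank = {node: 1.0 / len(nodes) for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing edges is spread uniformly.
        dangling = sum(rank[n] for n in nodes if out_weight[n] == 0.0)
        base = (1 - damping) / len(nodes) + damping * dangling / len(nodes)
        new_rank = {node: base for node in nodes}
        for (src, dst), weight in edges.items():
            new_rank[dst] += damping * rank[src] * weight / out_weight[src]
        rank = new_rank
    return rank


# main.go references both files; only connector.go defines an identifier from the query.
edges = {
    ("main.go", "connector.go"): edge_weight(ident_in_query=True),
    ("main.go", "util.go"): edge_weight(),
}
ranks = pagerank(edges)
print(max(ranks, key=ranks.get))  # connector.go
```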

Appendix G.2. Tree-sitter AST Parsing Architecture

- name.definition.function - Function definitions

- name.definition.class - Class definitions

- name.reference.call - Function calls

- name.reference.type - Type references

- First scan takes time (415 files at 240.86 files/second)

- Subsequent scans are instant (cache hit)

- Modified files are automatically re-parsed based on mtime

- Cache invalidation on Tree-sitter version upgrades
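The mtime-based caching behavior described above can be sketched as follows. This is an assumption-laden illustration rather than Aider's cache: `TagCache` is a hypothetical in-memory class, and a one-line regex (`parse_tags`) stands in for Tree-sitter parsing so that the example stays self-contained.

```python
import os
import re
import tempfile
from typing import Callable, Dict, List, Tuple


def parse_tags(text: str) -> List[str]:
    """Stand-in for Tree-sitter: collect names of top-level Go functions."""
    return re.findall(r"^func (\w+)", text, flags=re.MULTILINE)


class TagCache:
    def __init__(self, parse: Callable[[str], List[str]]) -> None:
        self._parse = parse
        self._entries: Dict[str, Tuple[float, List[str]]] = {}  # path -> (mtime, tags)
        self.parses = 0

    def tags(self, path: str) -> List[str]:
        mtime = os.path.getmtime(path)
        cached = self._entries.get(path)
        if cached is not None and cached[0] == mtime:
            return cached[1]                  # cache hit: file unchanged
        with open(path, encoding="utf-8") as fh:
            tags = self._parse(fh.read())     # cache miss: (re-)parse the file
        self.parses += 1
        self._entries[path] = (mtime, tags)
        return tags


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "github.go")
    with open(path, "w", encoding="utf-8") as fh:
        fh.write("func NewGitHub() {}\n")
    cache = TagCache(parse_tags)
    first = cache.tags(path)
    cache.tags(path)                          # second call is served from the cache
    parses_after_hit = cache.parses

    with open(path, "a", encoding="utf-8") as fh:
        fh.write("func Name() {}\n")
    stat = os.stat(path)
    os.utime(path, (stat.st_atime, stat.st_mtime + 10))  # make the mtime change unambiguous
    second = cache.tags(path)

print(first, parses_after_hit, second, cache.parses)
```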

References

- Anthropic. Introducing Claude Sonnet 4.5. https://www.anthropic.com/news/claude-sonnet-4-5, 2025. Accessed: 2025.

- OpenAI. Introducing GPT-5. https://openai.com/index/introducing-gpt-5/, 2025. Accessed: 2025.

- Comanici, G.; Bieber, E.; Schaekermann, M.; et al. Gemini 2.5: Pushing the Frontier with Advanced Reasoning, Multimodality, Long Context, and Next Generation Agentic Capabilities. arXiv preprint arXiv:2507.06261 2025.

- Google DeepMind. Gemini 2.5: Our Newest Gemini Model with Thinking. https://blog.google/technology/google-deepmind/gemini-model-thinking-updates-march-2025/, 2025. Accessed: 2025.

- Boekhoudt, C. The Big Bang Theory of IDEs. ACM Queue 2003, 1, 74–82. [CrossRef]

- Digital Museum. Integrated Development Environment. https://ide.digitalmuseum.jp/Integrated_development_environment, 2024. Accessed: 2025.

- Wikipedia. Language Server Protocol. https://en.wikipedia.org/wiki/Language_Server_Protocol, 2025. Accessed: 2025.

- Wikipedia. Grep. https://en.wikipedia.org/wiki/Grep, 2025. Accessed: 2025.

- Andrew Gallant. ripgrep. Fast line-oriented search tool. 2025. https://github.com/BurntSushi/ripgrep.

- Husain, H.; Wu, H.H.; Gazit, T.; Allamanis, M.; Brockschmidt, M. CodeSearchNet Challenge: Evaluating the State of Semantic Code Search. arXiv preprint arXiv:1909.09436 2020.

- Max Brunsfeld. Tree-sitter: An Incremental Parsing System for Programming Tools. Parser generator tool and incremental parsing library. 2025. https://tree-sitter.github.io/tree-sitter/.

- Codecademy. Retrieval Augmented Generation in AI. https://www.codecademy.com/article/retrieval-augmented-generation-in-ai, 2024. Accessed: 2025.

- Edicom Group. LLM RAG: Improving the Retrieval Phase with Hybrid Search. https://careers.edicomgroup.com/techblog/llm-rag-improving-the-retrieval-phase-with-hybrid-search/, 2025. Accessed: 2025.

- Amazon Web Services. What is Retrieval-Augmented Generation? https://aws.amazon.com/what-is/retrieval-augmented-generation/, 2024. Accessed: 2025.

- Google Cloud. Retrieval-Augmented Generation. https://cloud.google.com/use-cases/retrieval-augmented-generation, 2025. Accessed: 2025.

- Yang, Z.; Chen, S.; Gao, C.; Li, Z.; Hu, X.; Liu, K.; Xia, X. An Empirical Study of Retrieval-Augmented Code Generation: Challenges and Opportunities. arXiv preprint arXiv:2501.13742 2025.

- Gu, W.; Chen, J.; Wang, Y.; Jiang, T.; Li, X.; Liu, M.; Liu, X.; Ma, Y.; Zheng, Z. What to Retrieve for Effective Retrieval-Augmented Code Generation? An Empirical Study and Beyond. arXiv preprint arXiv:2503.20589 2025.

- Pash, N. 3 Seductive Traps in Agent Building. Cline Blog, 2025. Accessed: 2025.

- Liu, J.; Ng, J. RAG for Coding Agents: Lightning Series. https://jxnl.co/writing/2025/06/19/rag-for-coding-agents-lightning-series/, 2025. Accessed: 2025.

- Chen, Z.; Li, S.; Wang, X.; Zhang, L.; Liu, M. Repository-Aware Knowledge Graphs for Software Repair: Bridging Code Understanding and Bug Localization. arXiv preprint arXiv:2503.21710v1 2025.

- Zhang, H.; Wang, Y.; Chen, J.; Li, Q.; Thompson, R. CodexGraph: Enhancing Large Language Models with Code Structure-Aware Retrieval. arXiv preprint arXiv:2408.03910v2 2024.

- Microsoft. Language Server Protocol Specification v3.17. https://microsoft.github.io/language-server-protocol/specifications/lsp/3.17/specification/, 2022. Accessed: 2025.

- MarsCode Agent: A Multi-Agent Collaborative Framework for Software Engineering. arXiv preprint arXiv:2409.00899v1 2024.

- Phan, H.N.; Nguyen, T.N.; Nguyen, P.X.; Bui, N.D.Q. HyperAgent: Generalist Software Engineering Agents to Solve Coding Tasks at Scale. arXiv preprint arXiv:2409.16299v3 2025.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. arXiv preprint arXiv:2210.03629 2023.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language Models Can Teach Themselves to Use Tools. arXiv preprint arXiv:2302.04761 2023.

- Shrivastava, D.; Larochelle, H.; Tarlow, D. Repository-Level Prompting for Large Language Models. arXiv preprint arXiv:2406.12276 2024.

- Wang, X.; Zhao, Y.; Chen, L. Specialized Retrieval Agents for Code Search: A Multi-Agent Approach. arXiv preprint arXiv:2404.15823 2024.

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Zhang, S.; Zhu, E.; Li, B.; Jiang, L.; Zhang, X.; Wang, C. AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation. arXiv preprint arXiv:2308.08155 2023.

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Zhang, C.; Wang, J.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework. arXiv preprint arXiv:2308.00352 2024.

- Liu, J.; Xia, C.S.; Wang, Y.; Zhang, L. Is Your AI Pair Programmer Better with Multiple Agents? An Empirical Study. arXiv preprint arXiv:2407.18085 2024.

- Cognition AI. Don’t Build Multi-Agents. https://cognition.ai/blog/dont-build-multi-agents, 2025. Accessed: 2025.

- Priyanshu Jain. InfraGPT: Open-Source DevOps Debugging Agent. 2025. https://github.com/priyanshujain/infragpt.

- OpenAI. Codex CLI: AI Coding Assistant. 2025. https://github.com/openai/codex.

- Google Gemini. Gemini CLI: AI-Powered Coding Assistant. 2025. https://github.com/google-gemini/gemini-cli.

- Cline. Cline: Autonomous Coding Agent. VS Code Extension. 2025. https://github.com/cline/cline.

- Aider AI. Aider: AI Pair Programming in Your Terminal. Command-line AI coding assistant. 2025. https://github.com/Aider-AI/aider.

- Anthropic. Claude Code Settings: Tools Available to Claude. https://docs.claude.com/en/docs/claude-code/settings, 2024. Accessed: 2025.

- Amp. Amp Owner’s Manual. https://ampcode.com/manual, 2024. Accessed: 2025.

- Cursor. Cursor Documentation: Codebase Indexing and Agent Tools. https://cursor.com/docs, 2024. Accessed: 2025.

- Isaac Phi. MCP Language Server. 2025. https://github.com/isaacphi/mcp-language-server.

- Anthropic. Model Context Protocol (MCP). https://modelcontextprotocol.io, 2024. Accessed: 2025.

- Junegunn Choi. fzf: A Command-Line Fuzzy Finder. 2025. https://github.com/junegunn/fzf.

- Page, L.; Brin, S.; Motwani, R.; Winograd, T. The PageRank Citation Ranking: Bringing Order to the Web. Technical Report 1999-66, Stanford InfoLab, 1999.

- Hagberg, A.A.; Schult, D.A.; Swart, P.J. Exploring Network Structure, Dynamics, and Function using NetworkX. Proceedings of the 7th Python in Science Conference 2008, pp. 11–15.

- Georg Brandl and contributors. Pygments: Python Syntax Highlighter. 2025. https://pygments.org/.

| 1 | Tailwind CSS is a utility-first CSS framework. Version 4 introduced breaking changes in configuration and class naming that require migration guides unavailable to models trained before its release. |

| 2 | While modern LLMs advertise context windows of 1M+ tokens, empirical evidence suggests effective utilization plateaus at approximately 100k tokens for reasoning-intensive tasks. |

| 3 | The Language Server Protocol is an open standard that decouples language-specific tooling (code completion, go-to-definition, error checking) from editors, enabling any language to provide IDE features in any LSP-compatible editor. |

| 4 | RAG is a technique that enhances LLM outputs by retrieving relevant external documents or code snippets and including them in the prompt context, grounding generation in authoritative sources. |

| 5 | Tree-sitter is a parser generator tool and incremental parsing library that builds concrete syntax trees for source code, enabling language-agnostic structural code analysis. |

| 6 | Voyage AI: https://www.voyageai.com/ |

| 7 | Boris Cherny, Head of Claude Code at Anthropic, discussed this architectural decision in a Latent Space podcast interview: https://www.youtube.com/watch?v=zDmW5hJPsvQ (2025). |

| 8 | The benchmark framework and evaluation infrastructure are available at https://github.com/73ai/code-retrieval-eval |

| Agent | Tokens Consumed | Context Window | Utilization % |
|---|---|---|---|
| Aider | 8,500–13,000 | 200,000 | 4.3–6.5% |
| Amp | 19,000 | 968,000 | 2.0% |
| Cursor | 29,400 | 200,000 | 14.7% |
| Cline | 35,000 | 200,000 | 17.5% |
| Codex CLI | 39,540 (190,964 with cached) | 272,000 | 14.5% (70.2% actual) |
| Gemini CLI | 102,280 | 200,000 | 51.1% |
| Claude Code (Standard) | 108,000 | 200,000 | 54.0% |
| Claude Code (LSP) | 117,000 | 200,000 | 58.5% |

| Agent | Query Visibility | File Visibility | Duration |
|---|---|---|---|
| Claude Code | Full | Full | Persistent |
| Gemini CLI | Full | Full | Persistent |
| Amp | Full | Full | Transient |
| Cline | Partial | Full | Persistent |
| Codex CLI | Partial | Full | Persistent |
| Cursor | Partial | Partial | Persistent |
| Aider | None | Partial | Persistent |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).