Submitted: 08 October 2025

Posted:

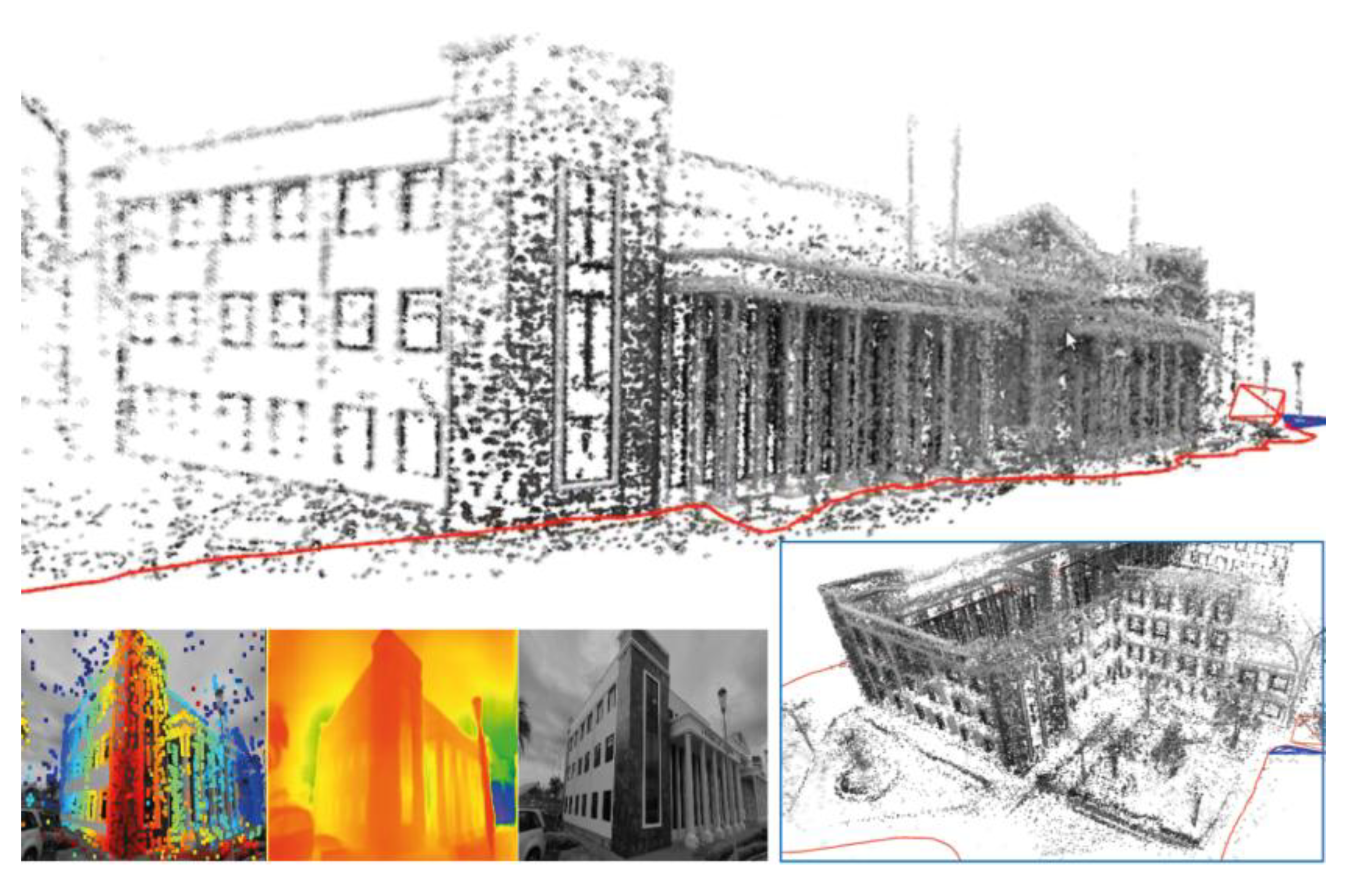

08 October 2025

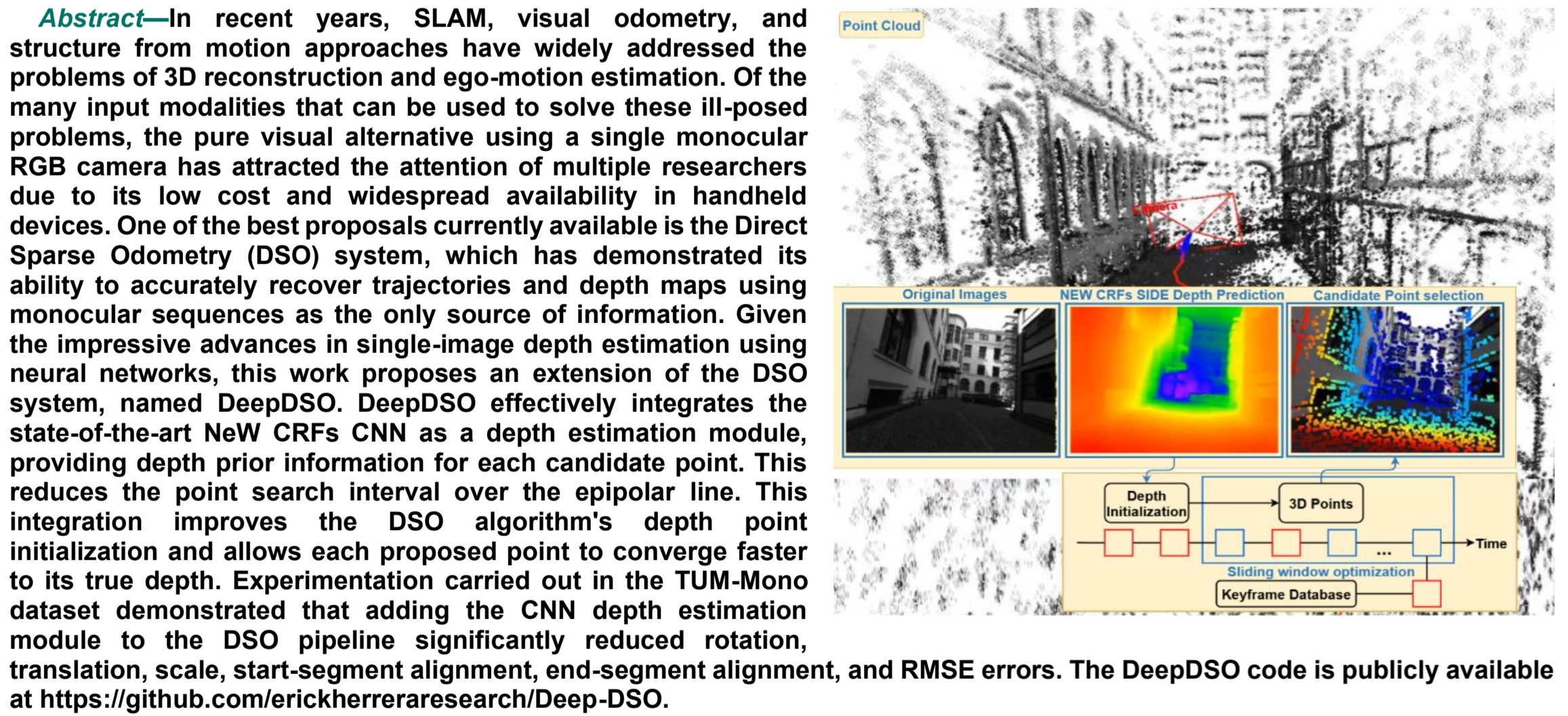

Abstract

Keywords:

1. Introduction

1.1. Related Works

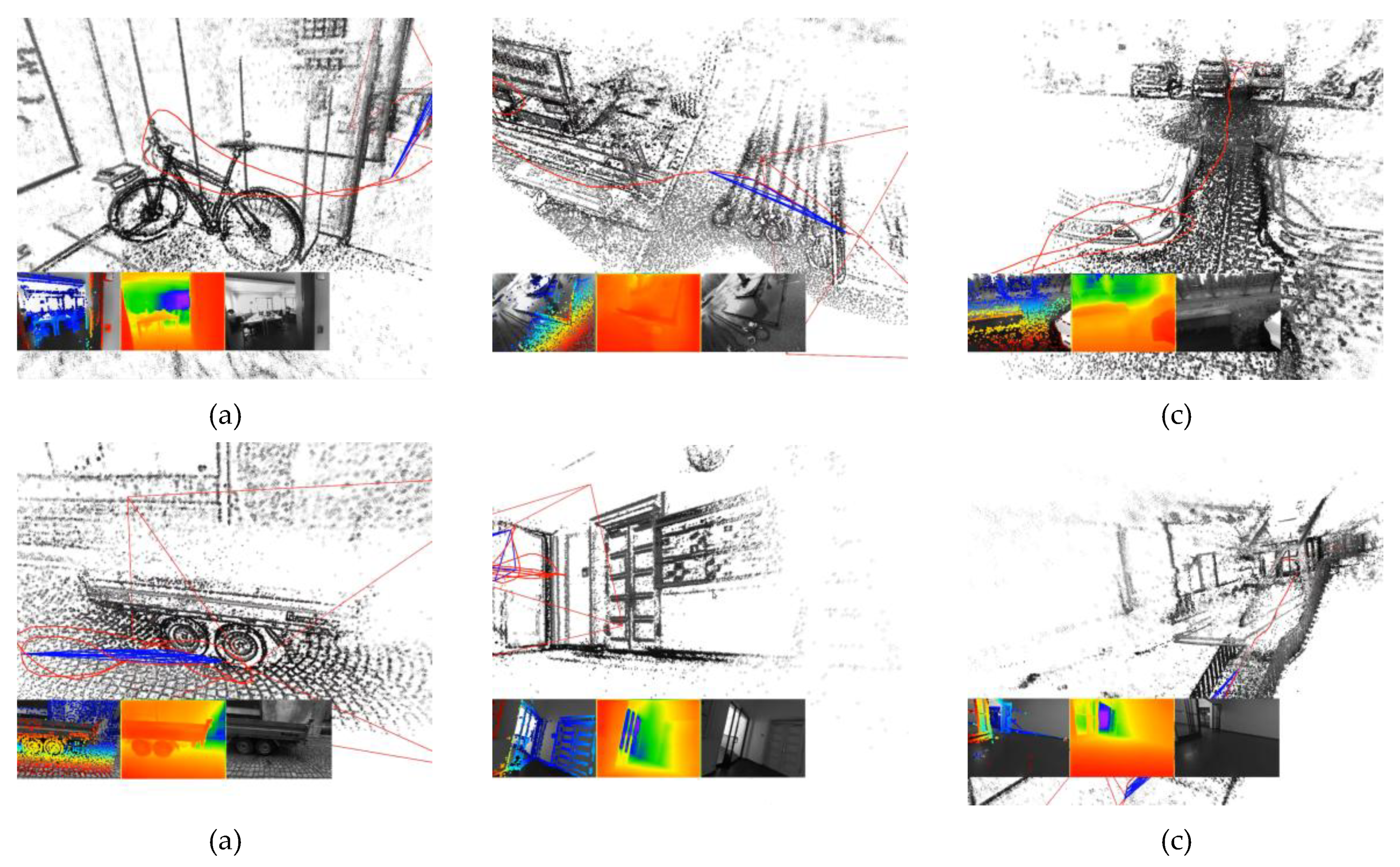

2. Materials and Methods

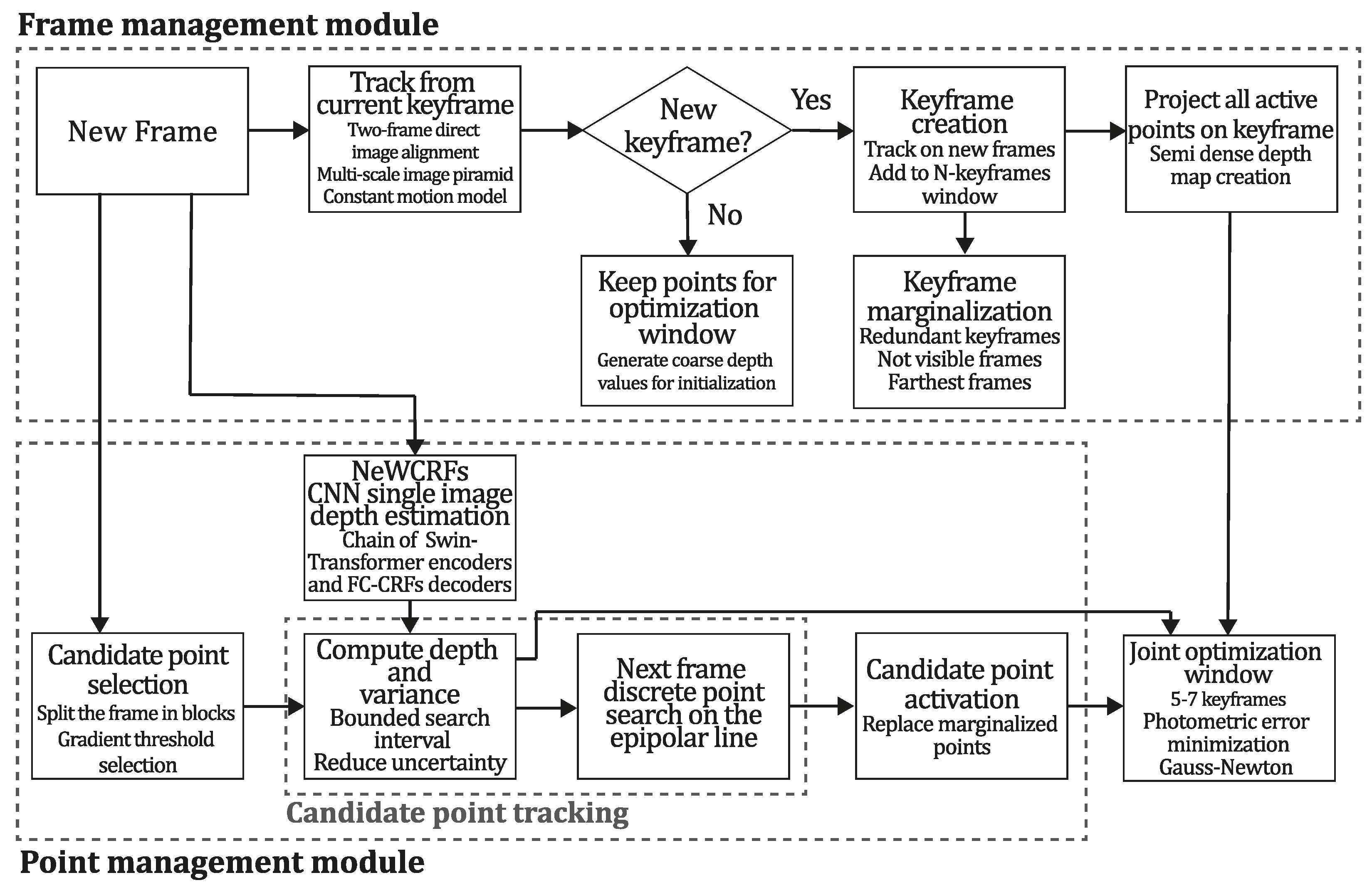

2.1. Review of the DSO Algorithm

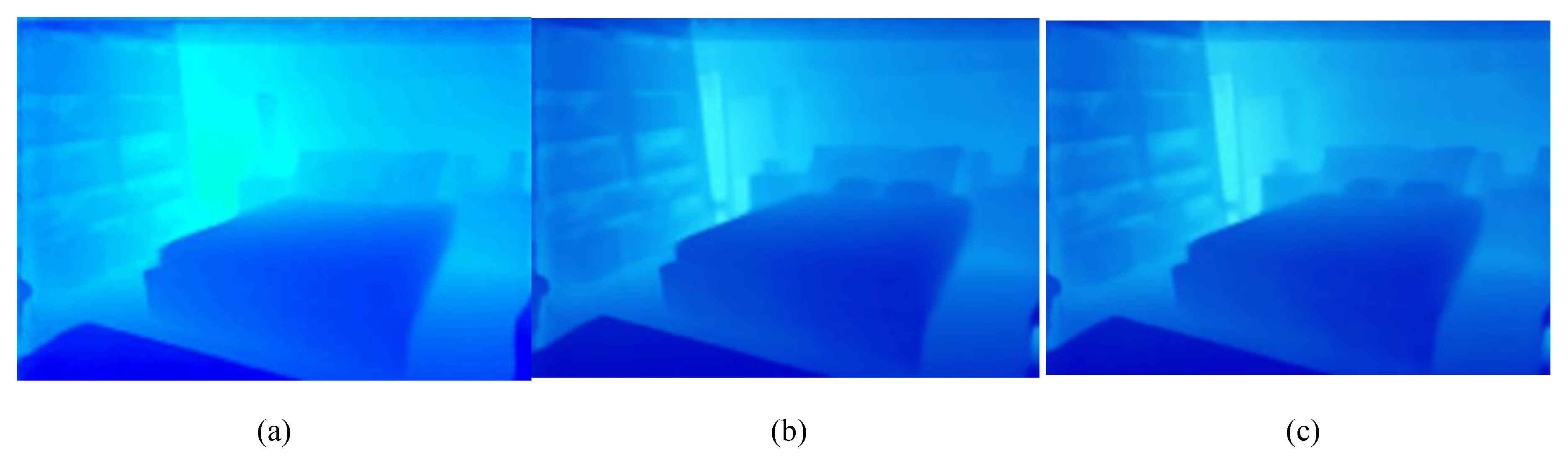

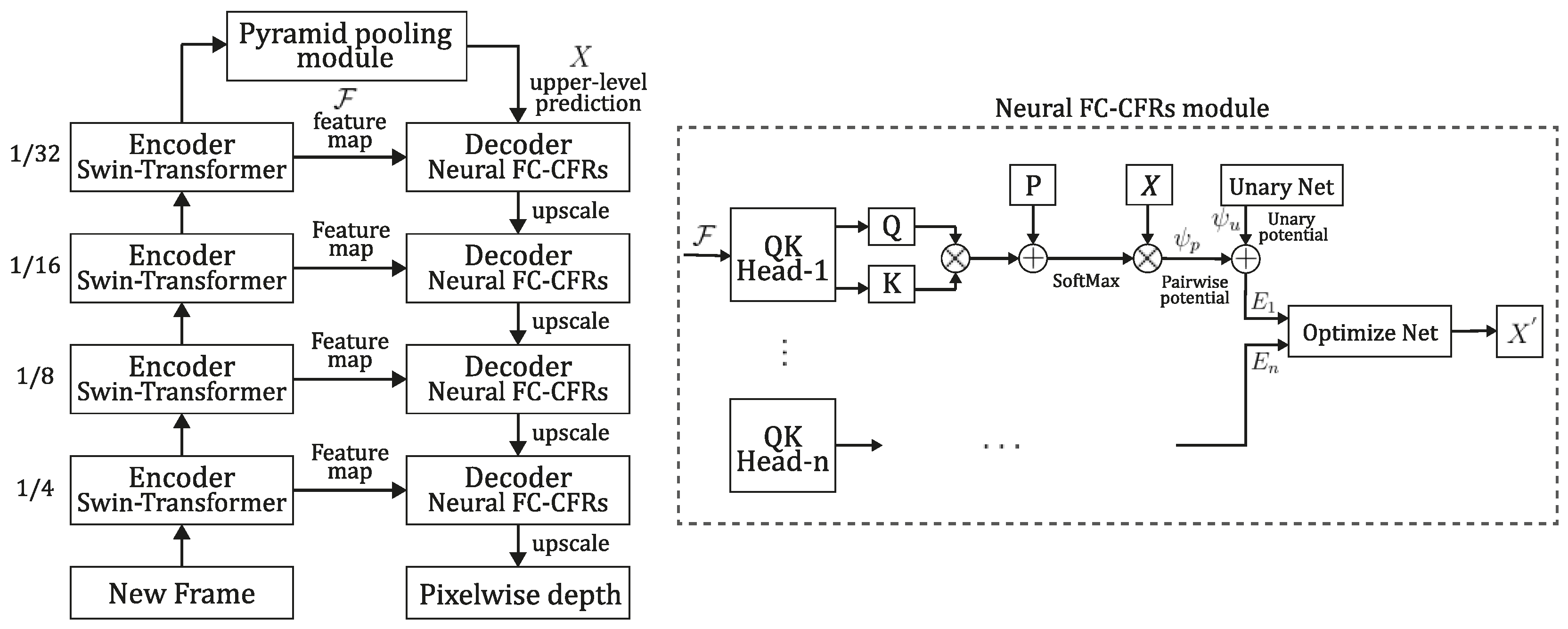

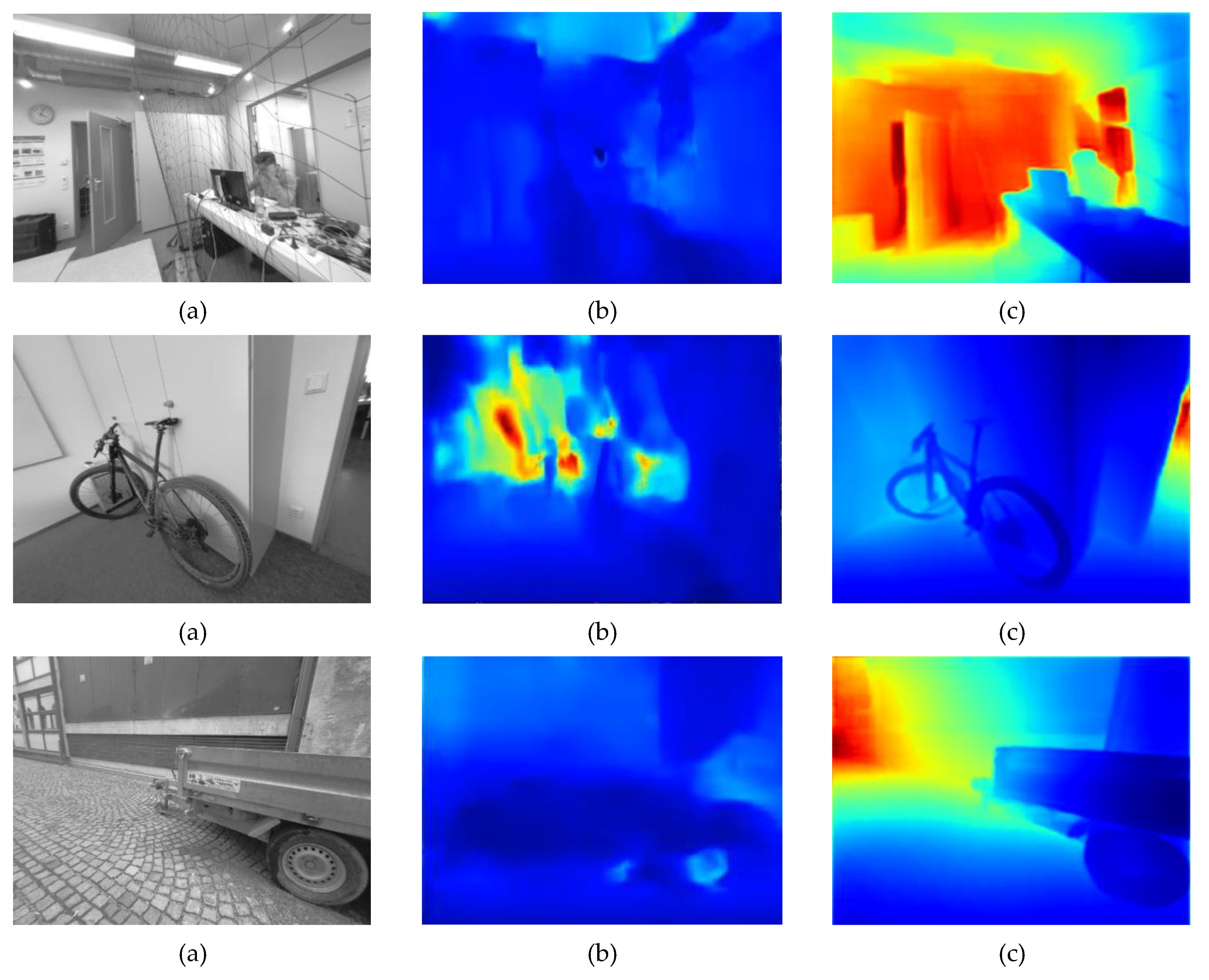

2.2. Review of NeW CRFs Single Image Depth Estimation

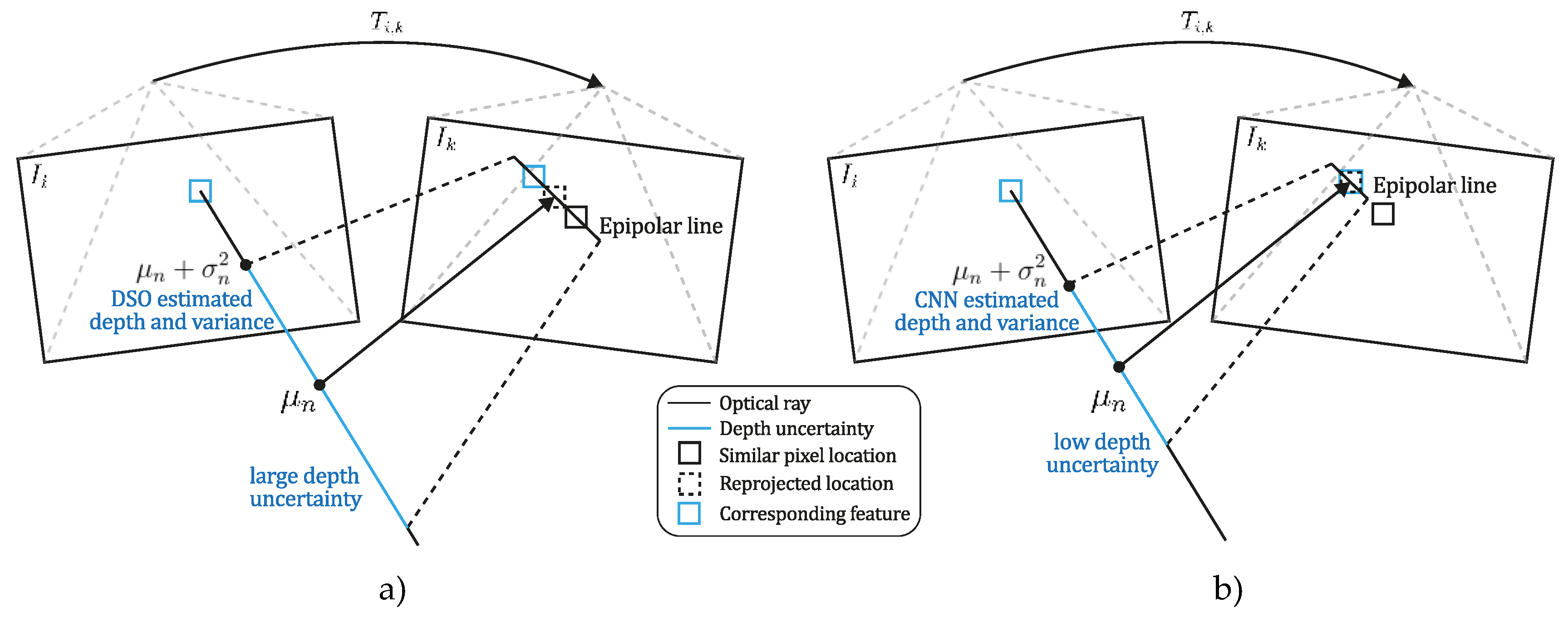

2.3. An Improved Point-Depth Initialization for the DSO Algorithm

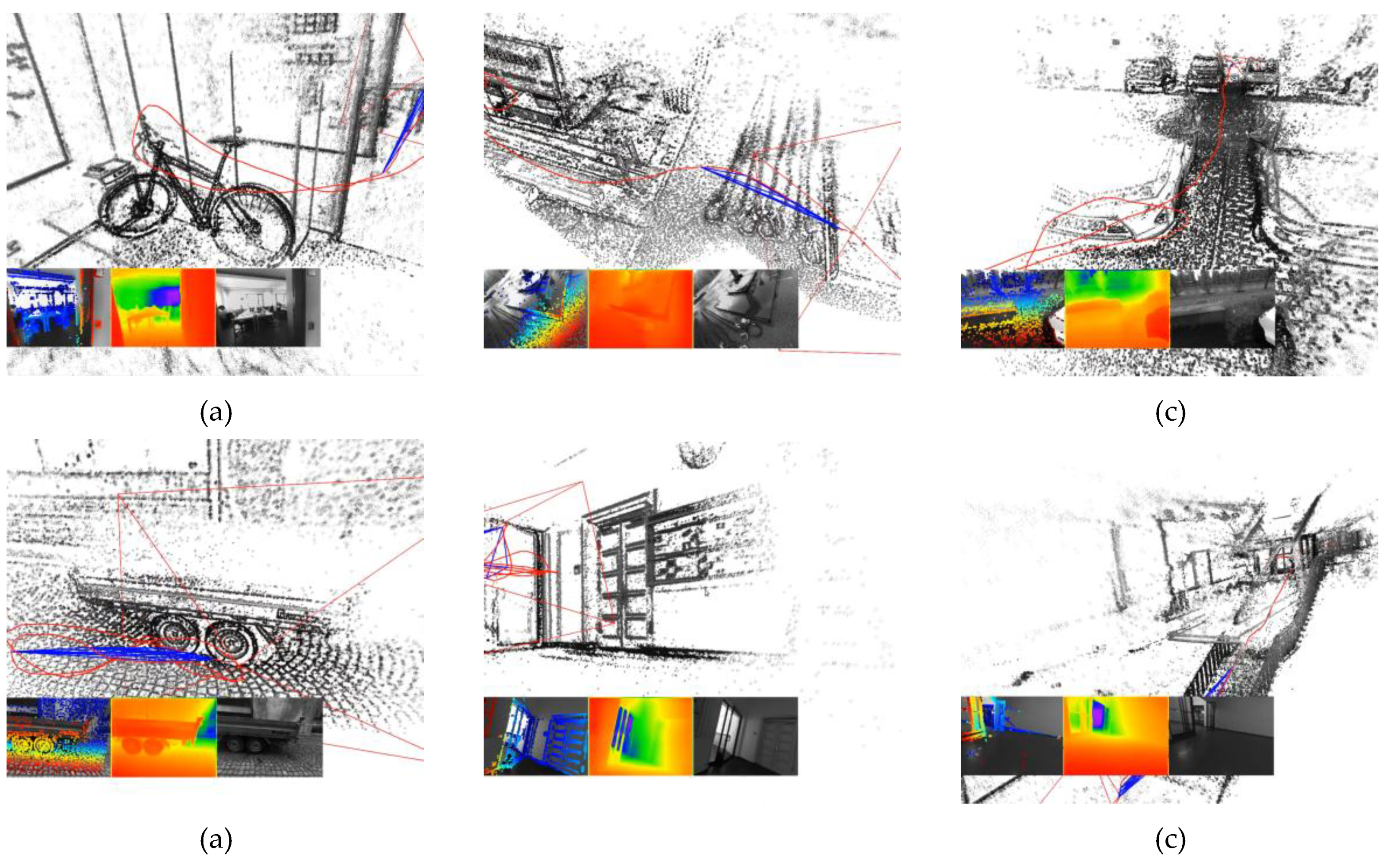

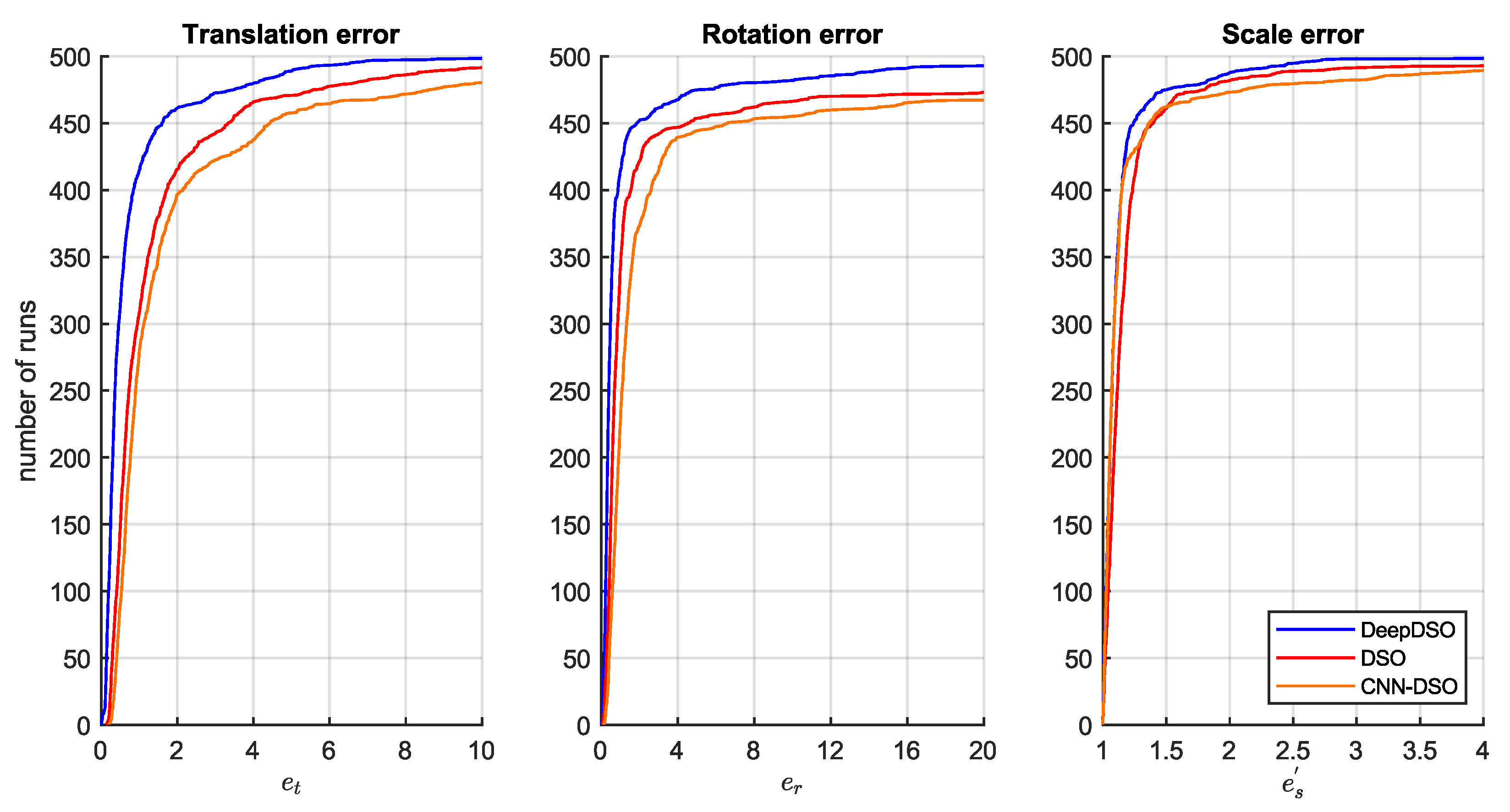

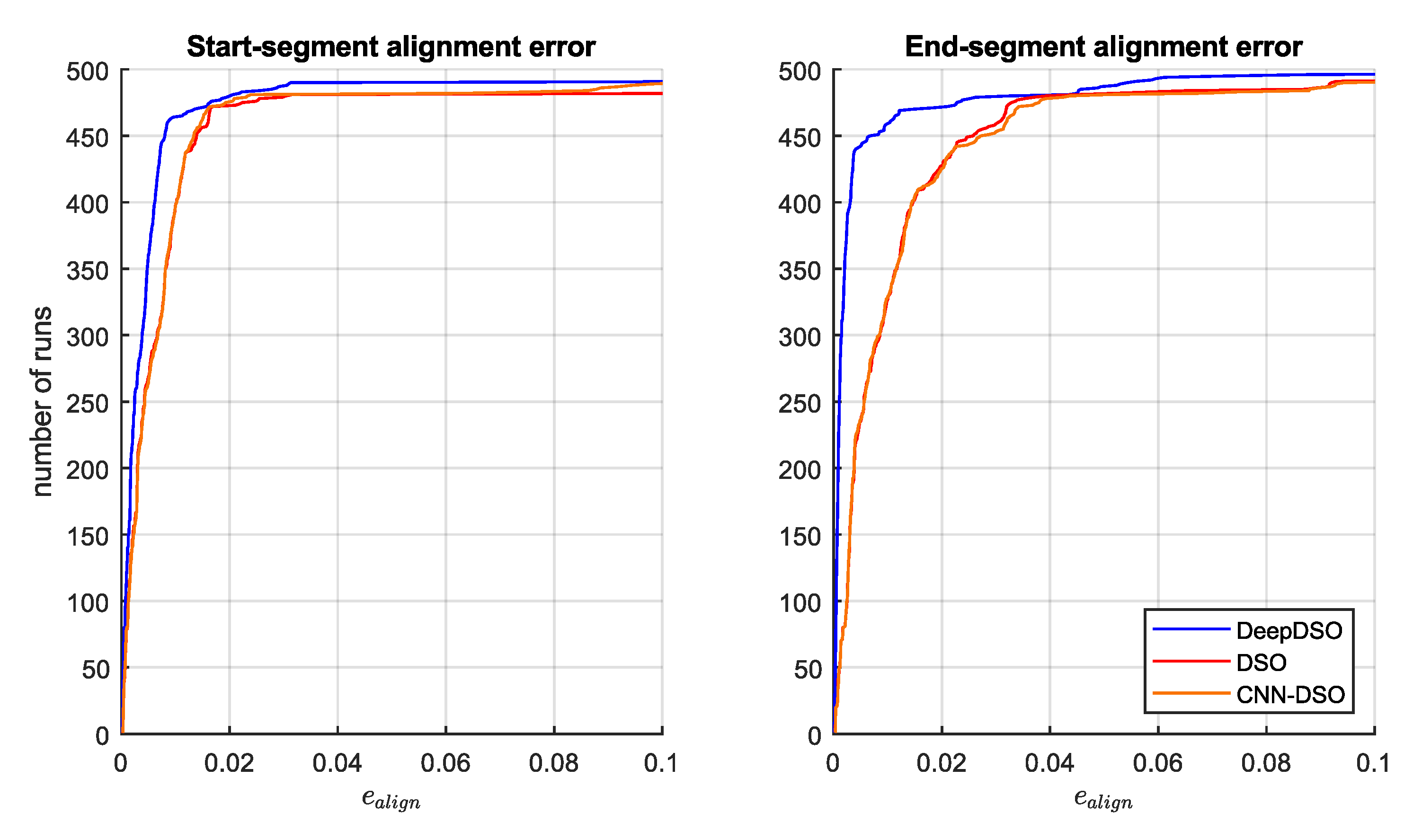

3. Results

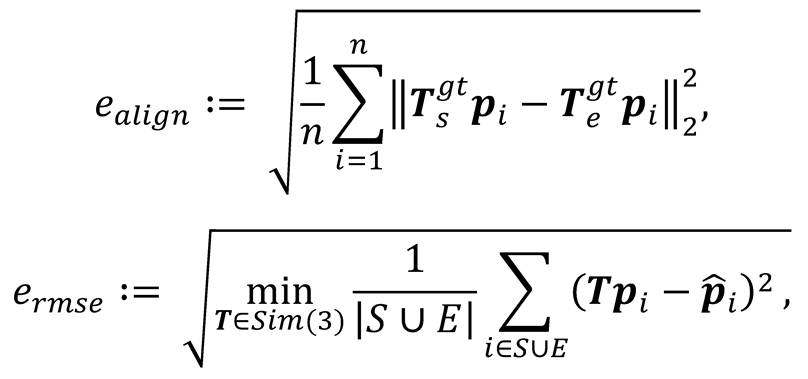

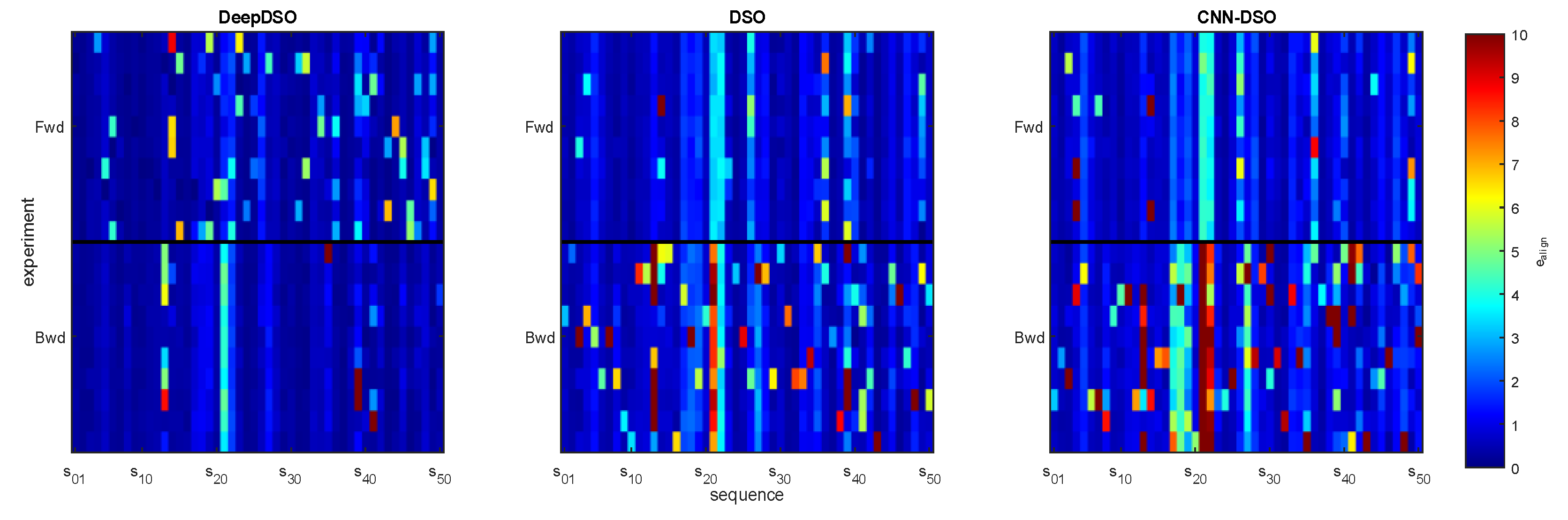

- Start and end segment alignment error: Each experimental trajectory was aligned with the ground-truth segments at both the start (first 10–20 seconds) and the end (last 10–20 seconds) of each sequence, computing the relative transformations through optimization:

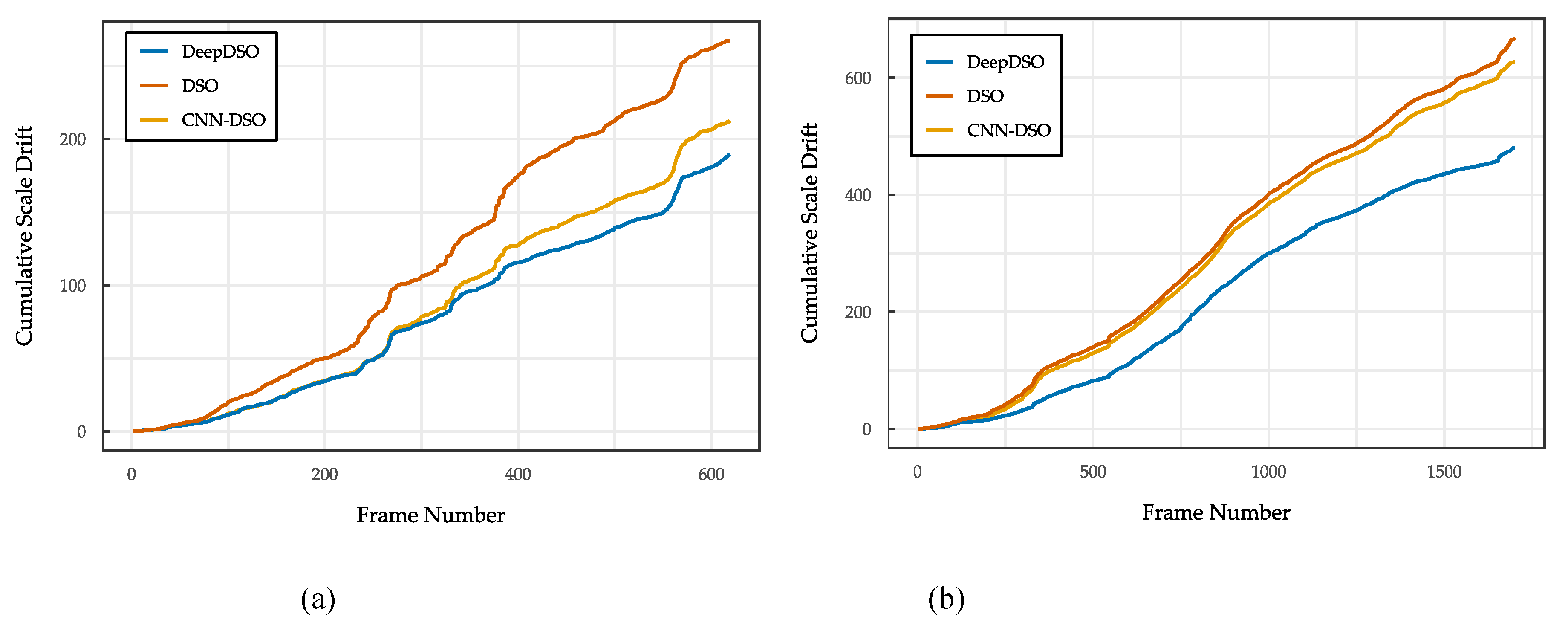

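- Translation, rotation and scale error: From these alignments, the accumulated drift was computed as $T_{drift} = T_e^{gt}\,(T_s^{gt})^{-1}$, where $T_s^{gt}$ and $T_e^{gt}$ are the start- and end-segment alignments, enabling the extraction of translation, rotation and scale error components across the complete trajectory.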

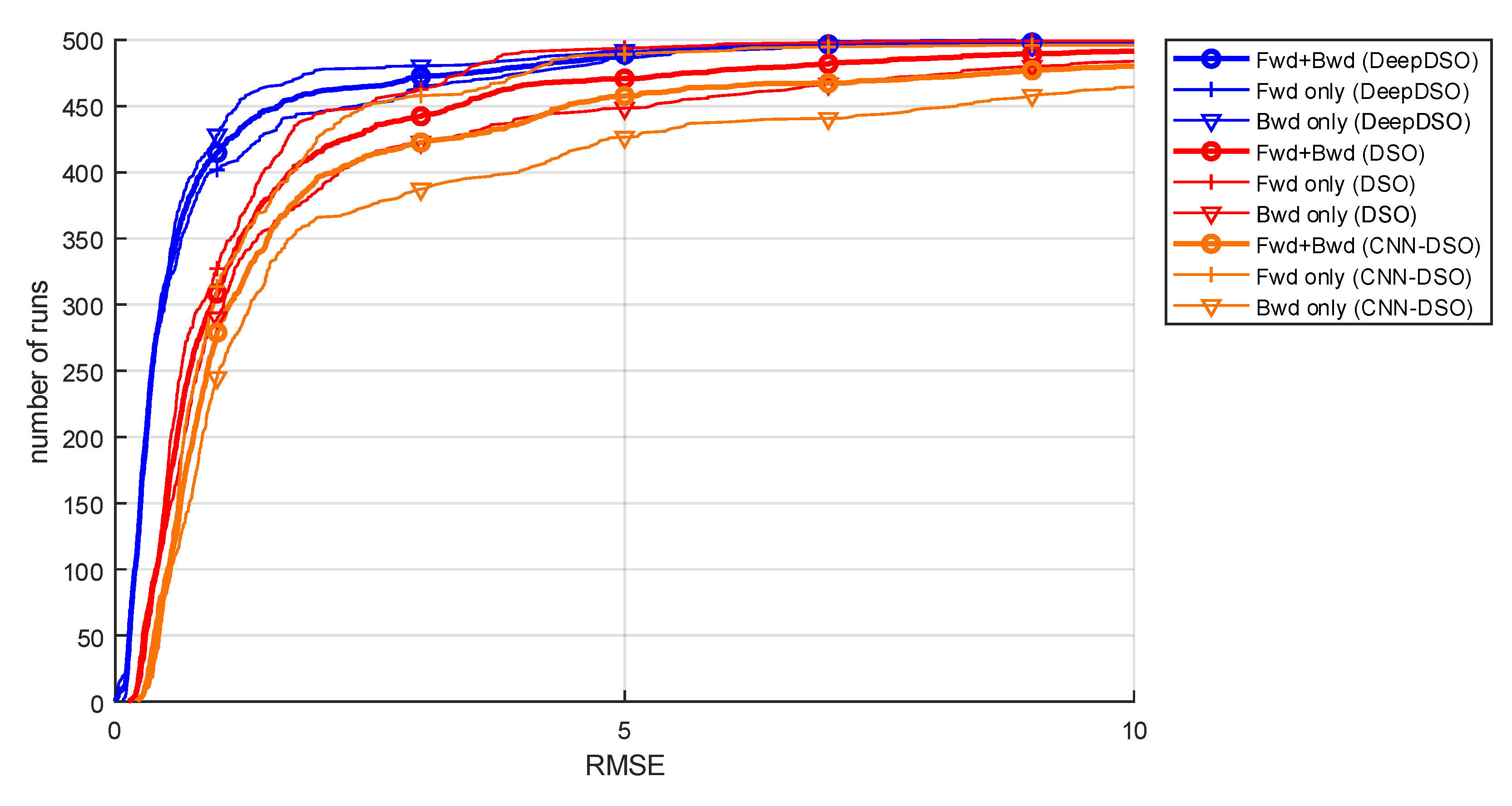

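- Translational RMSE: The TUM-Mono benchmark authors also established a metric that weighs translational, rotational, and scale effects equally. This metric, named the alignment error ($e_{align}$), can be computed for the start and end segments. Furthermore, when calculated for the combined start and end segments, $e_{align}$ is equivalent to the translational RMSE, where $p_i$ are the $n$ tracked positions and $T_s^{gt}$, $T_e^{gt}$ are the start- and end-segment alignments:

  $$e_{align} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left\| T_s^{gt}\,p_i - T_e^{gt}\,p_i \right\|_2^2}$$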
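The evaluation steps listed above can be sketched in a few lines of NumPy. This is a minimal illustration, not the benchmark's reference implementation: the closed-form Umeyama alignment is swapped in here as an assumed stand-in for the Sim(3) optimization, and the function names (`umeyama_sim3`, `apply_sim3`, `drift_and_alignment_error`) and the segment index ranges are hypothetical.

```python
import numpy as np

def umeyama_sim3(src, dst):
    """Closed-form least-squares similarity (Umeyama): dst ~ s * R @ src + t.
    Returns a 4x4 matrix whose upper-left 3x3 block is s * R."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst
    cov = dst_c.T @ src_c / len(src)          # cross-covariance of dst and src
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0                        # guard against reflections
    R = U @ S @ Vt
    var_src = (src_c ** 2).sum() / len(src)
    s = np.trace(np.diag(D) @ S) / var_src
    T = np.eye(4)
    T[:3, :3] = s * R
    T[:3, 3] = mu_dst - s * R @ mu_src
    return T

def apply_sim3(T, pts):
    """Apply a 4x4 similarity transform to an (n, 3) array of points."""
    return np.asarray(pts, dtype=float) @ T[:3, :3].T + T[:3, 3]

def drift_and_alignment_error(est, gt, start_idx, end_idx):
    """Align the estimate to ground truth on the start and end segments,
    then return the accumulated drift and the alignment error."""
    est, gt = np.asarray(est, dtype=float), np.asarray(gt, dtype=float)
    T_s = umeyama_sim3(est[start_idx], gt[start_idx])
    T_e = umeyama_sim3(est[end_idx], gt[end_idx])
    T_drift = T_e @ np.linalg.inv(T_s)
    diff = apply_sim3(T_s, est) - apply_sim3(T_e, est)
    e_align = np.sqrt((diff ** 2).sum(axis=1).mean())
    return T_drift, e_align

# Synthetic example (assumed data): a helix as ground truth and an estimate
# that differs from it only by a global similarity, so no drift is expected.
t = np.linspace(0.0, 4.0 * np.pi, 200)
gt = np.stack([np.cos(t), np.sin(t), 0.1 * t], axis=1)
est = 0.5 * gt - 1.0
T_drift, e_align = drift_and_alignment_error(est, gt, slice(0, 40), slice(160, 200))
scale_drift = np.cbrt(np.linalg.det(T_drift[:3, :3]))  # scale component of the drift
print(scale_drift, e_align)
```

The scale component is read off the drift as the cube root of the determinant of its upper-left block; rotation and translation components can be extracted from the same matrix after dividing that block by the scale.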

4. Discussion

5. Conclusions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Aqel, M.O.A.; Marhaban, M.H.; Saripan, M.I.; Ismail, N.B. Review of visual odometry: types, approaches, challenges, and applications. Springerplus 2016, 5, 1897. [Google Scholar] [CrossRef] [PubMed]

- Macario Barros, A.; Michel, M.; Moline, Y.; Corre, G.; Carrel, F. A Comprehensive Survey of Visual SLAM Algorithms. Robotics 2022, 11, 24. [Google Scholar] [CrossRef]

- Servières, M.; Renaudin, V.; Dupuis, A.; Antigny, N. Visual and Visual-Inertial SLAM: State of the Art, Classification, and Experimental Benchmarking. J. Sensors 2021, 2021, 1–26. [Google Scholar] [CrossRef]

- Zollhöfer, M.; Thies, J.; Garrido, P.; Bradley, D.; Beeler, T.; Pérez, P.; Stamminger, M.; Nießner, M.; Theobalt, C. State of the Art on Monocular 3D Face Reconstruction, Tracking, and Applications. Comput. Graph. Forum 2018, 37, 523–550. [Google Scholar] [CrossRef]

- Herrera-Granda, E.P.; Torres-Cantero, J.C.; Peluffo-Ordóñez, D.H. Monocular visual SLAM, visual odometry, and structure from motion methods applied to 3D reconstruction: A comprehensive survey. Heliyon 2024, 10, e37356. [Google Scholar] [CrossRef]

- S. Farooq Bhat, I. Alhashim, and P. Wonka, “AdaBins: Depth Estimation Using Adaptive Bins,” in 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jun. 2021, pp. 4008–4017. [CrossRef]

- R. Ranftl, A. Bochkovskiy, and V. Koltun, “Vision Transformers for Dense Prediction,” Mar. 2021. [CrossRef]

- N. Messikommer, G. Cioffi, M. Gehrig, and D. Scaramuzza, “Reinforcement Learning Meets Visual Odometry,” Jul. 2024, [Online]. Available: http://arxiv.org/abs/2407.15626.

- H. Liu, D. D. Huang, and Z. Y. Geng, “Visual Odometry Algorithm Based on Deep Learning,” in 2021 6th International Conference on Image, Vision and Computing (ICIVC), IEEE, Jul. 2021, pp. 322–327. [CrossRef]

- H. Jin, P. Favaro, and S. Soatto, “Real-time 3D motion and structure of point features: a front-end system for vision-based control and interaction,” in Proceedings IEEE Conference on Computer Vision and Pattern Recognition. CVPR 2000 (Cat. No.PR00662), IEEE, 2000, pp. 778–779 vol.2. [CrossRef]

- Davison, A.J.; Reid, I.D.; Molton, N.D.; Stasse, O. MonoSLAM: Real-Time Single Camera SLAM. IEEE Trans. Pattern Anal. Mach. Intell. 2007, 29, 1052–1067. [Google Scholar] [CrossRef]

- R. Castle, “PTAM-GPL: Parallel Tracking and Mapping,” GitHub repository. [Online]. Available: https://github.com/Oxford-PTAM/PTAM-GPL.

- Mur-Artal, R.; Montiel, J.M.M.; Tardos, J.D. ORB-SLAM: A Versatile and Accurate Monocular SLAM System. IEEE Trans. Robot. 2015, 31, 1147–1163. [Google Scholar] [CrossRef]

- Mur-Artal, R.; Tardos, J.D. ORB-SLAM2: An Open-Source SLAM System for Monocular, Stereo, and RGB-D Cameras. IEEE Trans. Robot. 2017, 33, 1255–1262. [Google Scholar] [CrossRef]

- Campos, C.; Elvira, R.; Rodriguez, J.J.G.; Montiel, J.M.M.; Tardos, J.D. ORB-SLAM3: An Accurate Open-Source Library for Visual, Visual–Inertial, and Multimap SLAM. IEEE Trans. Robot. 2021, 37, 1874–1890. [Google Scholar] [CrossRef]

- Valgaerts, L.; Bruhn, A.; Mainberger, M.; Weickert, J. Dense versus Sparse Approaches for Estimating the Fundamental Matrix. Int. J. Comput. Vis. 2011, 96, 212–234. [Google Scholar] [CrossRef]

- R. Ranftl, V. Vineet, Q. Chen, and V. Koltun, “Dense Monocular Depth Estimation in Complex Dynamic Scenes,” in 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jun. 2016, pp. 4058–4066. [CrossRef]

- J. Stühmer, S. Gumhold, and D. Cremers, “Real-Time Dense Geometry from a Handheld Camera,” in Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), vol. 6376 LNCS, Springer, Berlin, Heidelberg, 2010, pp. 11–20. [CrossRef]

- M. Pizzoli, C. Forster, and D. Scaramuzza, “REMODE: Probabilistic, monocular dense reconstruction in real time,” in 2014 IEEE International Conference on Robotics and Automation (ICRA), IEEE, May 2014, pp. 2609–2616. [CrossRef]

- J. Engel, T. Schöps, and D. Cremers, “LSD-SLAM: Large-Scale Direct Monocular SLAM,” in Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), vol. 8690 LNCS, no. PART 2, Springer Verlag, 2014, pp. 834–849. [CrossRef]

- Engel, J.; Koltun, V.; Cremers, D. Direct Sparse Odometry. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 611–625. [Google Scholar] [CrossRef] [PubMed]

- X. Gao, R. Wang, N. Demmel, and D. Cremers, “LDSO: Direct Sparse Odometry with Loop Closure,” in 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), IEEE, Oct. 2018, pp. 2198–2204. [CrossRef]

- Zubizarreta, J.; Aguinaga, I.; Montiel, J.M.M. Direct Sparse Mapping. IEEE Trans. Robot. 2020, 36, 1363–1370. [Google Scholar] [CrossRef]

- C. Forster, M. Pizzoli, and D. Scaramuzza, “SVO: Fast semi-direct monocular visual odometry,” in 2014 IEEE International Conference on Robotics and Automation (ICRA), IEEE, May 2014, pp. 15–22. [CrossRef]

- Bescos, B.; Facil, J.M.; Civera, J.; Neira, J. DynaSLAM: Tracking, Mapping, and Inpainting in Dynamic Scenes. IEEE Robot. Autom. Lett. 2018, 3, 4076–4083. [Google Scholar] [CrossRef]

- A. Steenbeek, “Sparse-to-Dense: Depth Prediction from Sparse Depth Samples and a Single Image,” GitHub repository. [Online]. Available: https://github.com/annesteenbeek/sparse-to-dense-ros.

- Sun, L.; Yin, W.; Xie, E.; Li, Z.; Sun, C.; Shen, C. Improving Monocular Visual Odometry Using Learned Depth. IEEE Trans. Robot. 2022, 38, 3173–3186. [Google Scholar] [CrossRef]

- B. Ummenhofer et al., “DeMoN: Depth and Motion Network for Learning Monocular Stereo,” in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jul. 2017, pp. 5622–5631. [CrossRef]

- Z. Teed and J. Deng, “DeepV2D: Video to Depth with Differentiable Structure from Motion,” Dec. 2018. [CrossRef]

- Z. Min and E. Dunn, “VOLDOR-SLAM: For the Times When Feature-Based or Direct Methods Are Not Good Enough,” CoRR, vol. abs/2104.0, Apr. 2021, [Online]. Available: https://arxiv.org/abs/2104.06800.

- Z. Teed and J. Deng, “DROID-SLAM: Deep Visual SLAM for Monocular, Stereo, and RGB-D Cameras,” in Advances in Neural Information Processing Systems, arXiv, 2021, pp. 16558–16569. doi: 10.48550/ARXIV.2108.10869. [CrossRef]

- C. Yang, Q. Chen, Y. Yang, J. Zhang, M. Wu, and K. Mei, “SDF-SLAM: A Deep Learning Based Highly Accurate SLAM Using Monocular Camera Aiming at Indoor Map Reconstruction With Semantic and Depth Fusion,” IEEE Access, vol. 10, pp. 10259–10272, 2022. [CrossRef]

- K. Tateno, F. Tombari, I. Laina, and N. Navab, “CNN-SLAM: Real-Time Dense Monocular SLAM with Learned Depth Prediction,” in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jul. 2017, pp. 6565–6574. [CrossRef]

- T. Laidlow, J. Czarnowski, and S. Leutenegger, “DeepFusion: Real-Time Dense 3D Reconstruction for Monocular SLAM using Single-View Depth and Gradient Predictions,” in 2019 International Conference on Robotics and Automation (ICRA), IEEE, May 2019, pp. 4068–4074. [CrossRef]

- M. Bloesch, J. Czarnowski, R. Clark, S. Leutenegger, and A. J. Davison, “CodeSLAM - Learning a Compact, Optimisable Representation for Dense Visual SLAM,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, IEEE, Jun. 2018, pp. 2560–2568. [CrossRef]

- Czarnowski, J.; Laidlow, T.; Clark, R.; Davison, A.J. DeepFactors: Real-Time Probabilistic Dense Monocular SLAM. IEEE Robot. Autom. Lett. 2020, 5, 721–728. [Google Scholar] [CrossRef]

- R. Cheng, C. Agia, D. Meger, and G. Dudek, “Depth Prediction for Monocular Direct Visual Odometry,” in 2020 17th Conference on Computer and Robot Vision (CRV), IEEE, May 2020, pp. 70–77. [CrossRef]

- N. Yang, L. von Stumberg, R. Wang, and D. Cremers, “D3VO: Deep Depth, Deep Pose and Deep Uncertainty for Monocular Visual Odometry,” in 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jun. 2020, pp. 1278–1289. [CrossRef]

- F. Wimbauer, N. Yang, L. von Stumberg, N. Zeller, and D. Cremers, “MonoRec: Semi-Supervised Dense Reconstruction in Dynamic Environments from a Single Moving Camera,” in 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jun. 2021, pp. 6108–6118. [CrossRef]

- Zhao, C.; Tang, Y.; Sun, Q.; Vasilakos, A. V. Deep Direct Visual Odometry. IEEE Trans. Intell. Transp. Syst. 2022, 23, 7733–7742. [Google Scholar] [CrossRef]

- S. Y. Loo, A. J. Amiri, S. Mashohor, S. H. Tang, and H. Zhang, “CNN-SVO: Improving the Mapping in Semi-Direct Visual Odometry Using Single-Image Depth Prediction,” in 2019 International Conference on Robotics and Automation (ICRA), IEEE, May 2019, pp. 5218–5223. [CrossRef]

- E. P. Herrera-Granda, J. C. Torres-Cantero, A. Rosales, and D. H. Peluffo-Ordóñez, “A Comparison of Monocular Visual SLAM and Visual Odometry Methods Applied to 3D Reconstruction,” Appl. Sci., vol. 13, no. 15, p. 8837, Jul. 2023. [CrossRef]

- J. Engel, V. Usenko, and D. Cremers, “A Photometrically Calibrated Benchmark For Monocular Visual Odometry,” Jul. 2016, Accessed: Jun. 11, 2021. [Online]. Available: http://arxiv.org/abs/1607.02555.

- S. Zhang, “DVSO: Deep Virtual Stereo Odometry,” GitHub repository. [Online]. Available: https://github.com/SenZHANG-GitHub/dvso.

- R. Cheng, “CNN-DVO,” 2020, McGill. [Online]. Available: http://www.cim.mcgill.ca/~mrl/ran/crv2020.

- Muskie, “CNN-DSO: A combination of Direct Sparse Odometry and CNN Depth Prediction,” GitHub repository. [Online]. Available: https://github.com/muskie82/CNN-DSO.

- C. Godard, O. Mac Aodha, and G. J. Brostow, “Unsupervised Monocular Depth Estimation with Left-Right Consistency,” in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, Jul. 2017, pp. 6602–6611. [CrossRef]

- E. P. Herrera-Granda, Real-time monocular 3D reconstruction of scenarios using artificial intelligence techniques. 2024. [Online]. Available: https://hdl.handle.net/10481/90846.

- S. Wang, “DF-ORB-SLAM,” GitHub repository. [Online]. Available: https://github.com/834810269/DF-ORB-SLAM.

- J. Zubizarreta, I. Aguinaga, and J. M. M. Montiel, “Direct Sparse Mapping,” IEEE Trans. Robot., vol. 36, no. 4, pp. 1363–1370, 2020. [CrossRef]

- E. P. Herrera-Granda, J. C. Torres-Cantero, and D. H. Peluffo-Ordoñez, “Monocular Visual SLAM, Visual Odometry, and Structure from Motion Methods Applied to 3D Reconstruction: A Comprehensive Survey,” Heliyon - First Look, vol. 8, no. 23, pp. 1–61, 2023. [CrossRef]

- J. Engel, J. Sturm, and D. Cremers, “Semi-dense Visual Odometry for a Monocular Camera,” in 2013 IEEE International Conference on Computer Vision, IEEE, Dec. 2013, pp. 1449–1456. [CrossRef]

- A. Saxena, S. H. Chung, and A. Y. Ng, “Learning Depth from Single Monocular Images,” in Proceedings of the 18th International Conference on Neural Information Processing Systems, in NIPS’05. Cambridge, MA, USA: MIT Press, 2005, pp. 1161–1168.

- S. Aich, J. M. Uwabeza Vianney, M. Amirul Islam, and M. K. Bingbing Liu, “Bidirectional Attention Network for Monocular Depth Estimation,” in 2021 IEEE International Conference on Robotics and Automation (ICRA), 2021, pp. 11746–11752. [CrossRef]

- D. Eigen, C. Puhrsch, and R. Fergus, “Depth Map Prediction from a Single Image using a Multi-Scale Deep Network,” Adv. Neural Inf. Process. Syst., vol. 27, Jun. 2014, arXiv:1406.2283.

- L. Huynh, P. Nguyen-Ha, J. Matas, E. Rahtu, and J. Heikkilä, “Guiding Monocular Depth Estimation Using Depth-Attention Volume,” in Computer Vision -- ECCV 2020, A. Vedaldi, H. Bischof, T. Brox, and J.-M. Frahm, Eds., Cham: Springer International Publishing, 2020, pp. 581–597.

- J. H. Lee, M.-K. Han, D. W. Ko, and I. H. Suh, “From Big to Small: Multi-Scale Local Planar Guidance for Monocular Depth Estimation,” 2019, arXiv.

- Lee, S.; Lee, J.; Kim, B.; Yi, E.; Kim, J. Patch-Wise Attention Network for Monocular Depth Estimation. Proc. AAAI Conf. Artif. Intell. 2021, 35, 1873–1881. [Google Scholar] [CrossRef]

- X. Qi, R. Liao, Z. Liu, R. Urtasun, and J. Jia, “GeoNet: Geometric Neural Network for Joint Depth and Surface Normal Estimation,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2018, pp. 283–291. [CrossRef]

- C. Godard, O. Mac Aodha, M. Firman, and G. Brostow, “Digging Into Self-Supervised Monocular Depth Estimation,” in 2019 IEEE/CVF International Conference on Computer Vision (ICCV), IEEE, Oct. 2019, pp. 3827–3837. [CrossRef]

- V. Guizilini, R. Ambruş, W. Burgard, and A. Gaidon, “Sparse Auxiliary Networks for Unified Monocular Depth Prediction and Completion,” in 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021, pp. 11073–11083. [CrossRef]

- M. Ochs, A. Kretz, and R. Mester, “SDNet: Semantically Guided Depth Estimation Network,” in Pattern Recognition, G. A. Fink, S. Frintrop, and X. Jiang, Eds., Cham: Springer International Publishing, 2019, pp. 288–302.

- S. Qiao, Y. Zhu, H. Adam, A. Yuille, and L.-C. Chen, “ViP-DeepLab: Learning Visual Perception with Depth-aware Video Panoptic Segmentation,” in 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021, pp. 3996–4007. [CrossRef]

- B. Li, C. Shen, Y. Dai, A. van den Hengel, and M. He, “Depth and surface normal estimation from monocular images using regression on deep features and hierarchical CRFs,” in 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2015, pp. 1119–1127. [CrossRef]

- Hua, Y.; Tian, H. Depth estimation with convolutional conditional random field network. Neurocomputing 2016, 214, 546–554. [Google Scholar] [CrossRef]

- W. Yuan, X. Gu, Z. Dai, S. Zhu, and P. Tan, “Neural Window Fully-connected CRFs for Monocular Depth Estimation,” in 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022, pp. 3906–3915. [CrossRef]

- Z. Liu et al., “Swin Transformer: Hierarchical Vision Transformer using Shifted Windows,” in 2021 IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 9992–10002. [CrossRef]

- S. Y. Loo, “CNN-SVO,” GitHub repository. [Online]. Available: https://github.com/yan99033/CNN-SVO. [CrossRef]

- Civera, J.; Davison, A.J.; Montiel, J. Inverse Depth Parametrization for Monocular SLAM. IEEE Trans. Robot. 2008, 24, 932–945. [Google Scholar] [CrossRef]

- J. Engel, “DSO: Direct Sparse Odometry,” GitHub repository. [Online]. Available: https://github.com/JakobEngel/dso.

- S. Lovegrove, “Pangolin,” GitHub repository. [Online]. Available: https://github.com/stevenlovegrove/Pangolin/tree/v0.5.

- S.-Y. Loo, “MonoDepth-cpp,” GitHub repository. [Online]. Available: https://github.com/yan99033/monodepth-cpp.

- M. Cordts et al., “The Cityscapes Dataset for Semantic Urban Scene Understanding,” in 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016, pp. 3213–3223. [CrossRef]

- A. Handa, T. Whelan, J. McDonald, and A. J. Davison, “A benchmark for RGB-D visual odometry, 3D reconstruction and SLAM,” in 2014 IEEE International Conference on Robotics and Automation (ICRA), IEEE, May 2014, pp. 1524–1531. [CrossRef]

- X. Huang et al., “The ApolloScape Dataset for Autonomous Driving,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), IEEE, Jun. 2018, pp. 1067–10676. [CrossRef]

- A. Geiger, P. Lenz, and R. Urtasun, “Are we ready for autonomous driving? The KITTI vision benchmark suite,” in 2012 IEEE Conference on Computer Vision and Pattern Recognition, IEEE, Jun. 2012, pp. 3354–3361. [CrossRef]

- Burri, M.; Nikolic, J.; Gohl, P.; Schneider, T.; Rehder, J.; Omari, S.; Achtelik, M.W.; Siegwart, R. The EuRoC micro aerial vehicle datasets. Int. J. Rob. Res. 2016, 35, 1157–1163. [Google Scholar] [CrossRef]

- D. J. Butler, J. Wulff, G. B. Stanley, and M. J. Black, “A Naturalistic Open Source Movie for Optical Flow Evaluation,” in European Conf. on Computer Vision (ECCV), A. Fitzgibbon et al., Eds., Part IV, LNCS 7577, Springer-Verlag, 2012, pp. 611–625. [CrossRef]

- A. R. Zamir, A. Sax, W. Shen, L. Guibas, J. Malik, and S. Savarese, “Taskonomy: Disentangling Task Transfer Learning,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, IEEE, Jun. 2018, pp. 3712–3722. doi: 10.1109/CVPR.2018.00391.

- Yin, W.; Liu, Y.; Shen, C. Virtual Normal: Enforcing Geometric Constraints for Accurate and Robust Depth Prediction. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 44, 7282–7295. [Google Scholar] [CrossRef]

- J. Cho, D. Min, Y. Kim, and K. Sohn, “A Large RGB-D Dataset for Semi-supervised Monocular Depth Estimation,” Apr. 2019. [CrossRef]

- K. Xian et al., “Monocular Relative Depth Perception with Web Stereo Data Supervision,” in 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, IEEE, Jun. 2018, pp. 311–320. [CrossRef]

- E. Mingachev et al., “Comparison of ROS-Based Monocular Visual SLAM Methods: DSO, LDSO, ORB-SLAM2 and DynaSLAM,” in Interactive Collaborative Robotics, A. Ronzhin, G. Rigoll, and R. Meshcheryakov, Eds., Cham: Springer International Publishing, 2020, pp. 222–233. [CrossRef]

- E. Mingachev, R. Lavrenov, E. Magid, and M. Svinin, “Comparative Analysis of Monocular SLAM Algorithms Using TUM and EuRoC Benchmarks,” in Smart Innovation, Systems and Technologies, vol. 187, Springer Science and Business Media Deutschland GmbH, 2021, pp. 343–355. [CrossRef]

- E. P. Herrera-Granda, “A Comparison of Monocular Visual SLAM and Visual Odometry Methods Applied to 3D Reconstruction,” GitHub repository. Accessed: Jun. 29, 2023. [Online]. Available: https://github.com/erickherreraresearch/MonocularPureVisualSLAMComparison.

- J. Sturm, N. Engelhard, F. Endres, W. Burgard, and D. Cremers, “A benchmark for the evaluation of RGB-D SLAM systems,” in 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems, IEEE, Oct. 2012, pp. 573–580. [CrossRef]

- Z. Zhang, R. Zhao, E. Liu, K. Yan, and Y. Ma, “Scale Estimation and Correction of the Monocular Simultaneous Localization and Mapping (SLAM) Based on Fusion of 1D Laser Range Finder and Vision Data,” Sensors, vol. 18, no. 6, p. 1948, Jun. 2018. [CrossRef]

- E. Sucar and J.-B. Hayet, “Bayesian Scale Estimation for Monocular SLAM Based on Generic Object Detection for Correcting Scale Drift,” Nov. 2017, [Online]. Available: http://arxiv.org/abs/1711.02768.

- H. Strasdat, J. M. M. Montiel, and A. Davison, “Scale Drift-Aware Large Scale Monocular SLAM,” in Robotics: Science and Systems VI, Robotics: Science and Systems Foundation, Jun. 2010, pp. 73–80. [CrossRef]

- A. Agarwal and C. Arora, “Depthformer: Multiscale Vision Transformer For Monocular Depth Estimation With Local Global Information Fusion,” Jul. 2022, [Online]. Available: http://arxiv.org/abs/2207.04535.

- A. Agarwal and C. Arora, “Attention Attention Everywhere: Monocular Depth Prediction with Skip Attention,” Oct. 2022, [Online]. Available: http://arxiv.org/abs/2210.09071.

| 1 | Scaled reconstruction addresses the inherent scale ambiguity problem in purely monocular methods, which can recover accurate scene geometry but at arbitrary scales unrelated to real-world dimensions. The SIDE-NN module provides pixel-wise depth priors that enable more accurate scale recovery compared to classical approaches. |

| Coefficient () | Translational RMSE† | Std. Dev. | Convergence Rate‡ |
|---|---|---|---|
| 2 | 0.4127 | 0.0856 | 73% |
| 3 | 0.3214 | 0.0523 | 87% |
| 4 | 0.2685 | 0.0347 | 94% |
| 5 | 0.2453 | 0.0218 | 98% |
| 6 | 0.2382 | 0.0163 | 100% |
| 7 | 0.2491 | 0.0197 | 100% |
| 8 | 0.2738 | 0.0284 | 100% |
| 9 | 0.3156 | 0.0412 | 100% |
| 10 | 0.3647 | 0.0569 | 100% |
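As a reading aid for the table above, the following sketch picks the coefficient with the lowest translational RMSE among rows that reach a given convergence rate. The values are copied from the table; the function name `best_coefficient` and the 100% convergence threshold are illustrative assumptions, not a selection rule stated in the source.

```python
# Rows copied from the table: (coefficient, translational RMSE, std. dev., convergence %).
ROWS = [
    (2, 0.4127, 0.0856, 73),
    (3, 0.3214, 0.0523, 87),
    (4, 0.2685, 0.0347, 94),
    (5, 0.2453, 0.0218, 98),
    (6, 0.2382, 0.0163, 100),
    (7, 0.2491, 0.0197, 100),
    (8, 0.2738, 0.0284, 100),
    (9, 0.3156, 0.0412, 100),
    (10, 0.3647, 0.0569, 100),
]

def best_coefficient(rows, min_convergence=100):
    """Return the row with the lowest RMSE among rows meeting the
    (assumed) minimum convergence rate, given in percent."""
    eligible = [row for row in rows if row[3] >= min_convergence]
    return min(eligible, key=lambda row: row[1])

coefficient, rmse, std_dev, convergence = best_coefficient(ROWS)
print(coefficient, rmse, std_dev, convergence)
```

With these values the same row also has the smallest standard deviation, so the choice does not depend on whether accuracy or stability is prioritized.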

| Component | Specifications |
|---|---|
| CPU | AMD Ryzen™ 7 3800X. 8 cores, 16 threads, 3.9–4.5 GHz. |
| GPU | NVIDIA GeForce RTX 3060. Ampere architecture, 1.78 GHz, 3584 CUDA cores, 12 GB GDDR6. Memory interface width 192-bit. 2nd generation Ray Tracing Cores and 3rd generation Tensor Cores. |
| RAM | 16 GB, DDR4, 3200 MHz. |
| ROM | SSD NVMe M.2 Western Digital 7300 MB/s |
| Power consumption | 750 W1 |

| Method | Translation error | Rotation error | Scale error | Start-segment align. error | End-segment align. error | RMSE |
|---|---|---|---|---|---|---|
| Kruskal-Wallis general test | | | | | | |
| DeepDSO | 0.3250961ᵃ | 0.3625576ᵃ | 1.062872ᵃ | 0.001659008ᵃ | 0.002170299ᵃ | 0.06243667ᵃ |
| DSO | 0.6472075ᵇ | 0.6144892ᵇ | 1.100516ᵇ | 0.003976057ᵇ | 0.004218454ᵇ | 0.19595997ᵇ |
| CNN-DSO | 0.7978954ᶜ | 0.9583970ᶜ | 1.078830ᶜ | 0.008847161ᶜ | 0.006651353ᶜ | 0.20758145ᵇ |
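To make the gaps in the comparison table easier to read, the sketch below computes the relative reduction of each error metric for DeepDSO with respect to a baseline. The numbers are copied from the table; the dictionary layout and the function name `relative_reduction` are assumptions made for illustration. The scale error is left out because its values sit close to 1, so a plain ratio may not be meaningful for it.

```python
# Values copied from the comparison table (group letters omitted).
RESULTS = {
    "DeepDSO": {"translation": 0.3250961, "rotation": 0.3625576,
                "start_align": 0.001659008, "end_align": 0.002170299,
                "rmse": 0.06243667},
    "DSO": {"translation": 0.6472075, "rotation": 0.6144892,
            "start_align": 0.003976057, "end_align": 0.004218454,
            "rmse": 0.19595997},
    "CNN-DSO": {"translation": 0.7978954, "rotation": 0.9583970,
                "start_align": 0.008847161, "end_align": 0.006651353,
                "rmse": 0.20758145},
}

def relative_reduction(results, method, baseline):
    """Fractional error reduction of `method` relative to `baseline`,
    per metric: (baseline - method) / baseline."""
    return {
        metric: (results[baseline][metric] - value) / results[baseline][metric]
        for metric, value in results[method].items()
    }

for baseline in ("DSO", "CNN-DSO"):
    reductions = relative_reduction(RESULTS, "DeepDSO", baseline)
    for metric, fraction in reductions.items():
        print(f"DeepDSO vs {baseline}, {metric}: {fraction:.1%}")
```

A positive fraction means DeepDSO has the lower error on that metric; statistical significance still has to be read from the group letters in the table, not from these ratios.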

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).