Submitted: 01 October 2025

Posted: 01 October 2025


Abstract

Keywords:

1. Introduction

- Position Society of Minds as a path to AGI: replace monolithic mastery with dialogical competence—capability that emerges from coordinated, critique-ready minds.

- Advance a Mind–Brain–Body triad for the digital world: Body as machine substrate and tools; Brain as pattern-learning AI; Mind as higher-order, ethical and explanatory oversight—the locus of reasoning, restraint, and purpose.

- Clarify the role of current models: training harvests global knowledge; true reasoning and memory live at the mind layer, where explanations are required and retained.

- Center interaction as the driver of augmented intelligence: minds—human and artificial—optimize action by exchanging discernible explanations grounded in extendable, explainable knowledge.

- Give the paradigm technical footing: adopt graph-structured memory to anchor shared context; use dialogical mechanisms to test, criticize, and extend knowledge—moving from incremental improvement to a transformative civic model of intelligence.

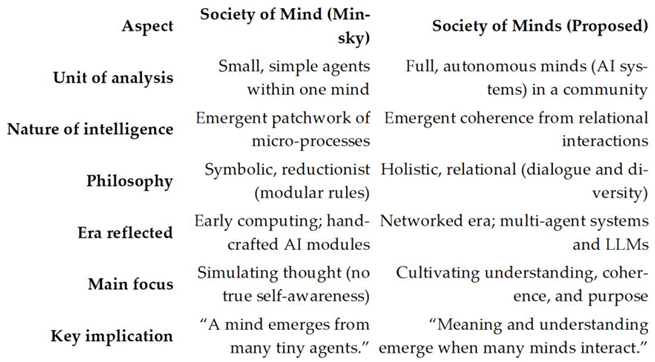

2. Society of Mind Framework

3. Society of Minds Paradigm

3.1. What’s New?

- Ethics Embedded in Architecture: We advocate moving beyond external “after-the-fact” guardrails and instead building in ethical reasoning and constraints from the start. Concretely, we propose constructs like Digital Genomes and Cognizing Oracles to encode values and enable self-monitoring within AI minds [9]. A digital genome is a structured knowledge representation that carries not just facts but also the purpose and context of those facts, essentially encoding a system’s goals and constraints in its knowledge base [9]. Meanwhile, a cognizing oracle is a mechanism for transparency: it “looks inside” black-box models to extract human-readable explanations or detect doubt. By incorporating these components, an AI mind can explain its reasoning and flag potential errors by itself, enforcing accountability internally. In our Society of Minds, every claim should come with a rationale (via the oracles), and every mind’s knowledge is accompanied by provenance and usage context (via digital genomes). This built-in explainability and value-tagging means ethics is not an afterthought but part of the AI’s core design. (In practice, these ideas draw on recent work by Mikkilineni and colleagues [9,10], linking our approach to emerging “mindful AI” prototypes.)

- Brain–Body–Mind Triad Model: “What is a computer?” and “Is the brain a computer?” [11] are questions fundamental to the evolution of AI. We frame AI systems in a layered triad analogous to the relationship between body, brain, and mind in philosophical terms. The Brain represents the data-driven learners (neural networks, probabilistic models) that process inputs and produce candidate outputs. The Body represents the machine’s interface to the world: sensors, actuators, and also any fixed rule-based code (the deterministic machinery). The Mind is the overarching reasoning and regulating entity that interprets the Brain’s outputs, applies judgment, and decides the machine’s actions. This triad offers a clear conceptual separation: the Body provides signals and actions, the Brain provides patterns and predictions, and the Mind provides meaning, goals, and their execution. By explicitly modeling these layers, we can address current shortcomings: today’s AI “brains” (LLMs, etc.) have raw power but no self-aware mind to guide them. Our architecture adds that missing mindful layer on top of existing AI, providing what Kant might call the understanding and judgment to complement the mechanical processing of a brain. This also aligns with the idea that true intelligence requires integrating multiple perspectives: the physical (Body), the computational (Brain), and the rational/ethical (Mind).

- Hegelian Dialectic of AI Evolution: We interpret the trajectory of AI through a Hegelian lens of thesis, antithesis, and synthesis [12]. The thesis has been human cognition as we know it: limited by biology but rich in common sense and values. The antithesis is machine intelligence as a brute-force simulation: enormously scalable and precise, yet lacking understanding and moral grounding. The synthesis, we argue, is a Society of Minds: a unification where human and machine minds interact in a shared system, combining their strengths while resolving their respective weaknesses. In practical terms, this means AI development should transition from humans and AIs working separately to humans and AIs working together in a principled way. Our framework explicitly enables such collaboration: human minds can be part of the society (e.g., a human expert might function as one “mind” in a problem-solving network), and the AI minds are designed to communicate in human-understandable terms (through explanations and dialogues) rather than operate opaquely. This dialectical progression also underscores why a Society of Minds is timely: it addresses the current tension between the incredible capability of AI (antithesis) and the urgent need for control and meaning (thesis) by synthesizing a new paradigm where control is achieved through interaction and transparency.

- “Mindful Machines” – An Evolved End-State: We view Mindful Machines as the achievable goal of the proposed paradigm. A Mindful Machine is not characterized by sheer speed or the size of its model, but by qualities of memory, reasoning, self-regulation, and self-awareness of its limits. It is an AI that remembers context over long periods, that can reason through novel problems, that regulates its own behavior according to ethical principles, and that knows when to act, when to ask for help, and when to refrain from action. This stands in stark contrast to today’s systems, which are often “superficially fluent but clueless underneath” [13]. To achieve this, the Society of Minds design includes a graph-structured memory as a shared knowledge store, enabling persistent context and collective learning. All interactions between minds update this memory graph, which acts like an evolving knowledge base the society can draw on, thereby giving the machine a form of long-term memory and continuity that typical neural networks lack. Additionally, the multi-mind setup means the machine can deliberate: multiple minds can debate a question or plan, yielding reasoning that is dialogical (many viewpoints considered) and discernible (steps can be traced). The result is an AI that behaves less like an “oracle” spitting out answers and more like a wise committee that can show its work. These Mindful Machines, we believe, would be safer and more reliable by design: they don’t just produce answers, they produce explanations, justifications, and safeguards as part of their output. In essence, the Society of Minds approach shifts the metric of progress from raw performance (e.g., benchmark scores) to coherent understanding and trustworthiness.
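The graph-structured memory and the requirement that every claim carry a rationale and provenance can be illustrated with a minimal Python sketch. This is not the authors’ implementation: the names `Claim`, `MemoryGraph`, `add_claim`, `link`, and `trace`, the field names, and the two relation labels are all assumptions chosen for illustration. The sketch only shows how a shared store could refuse unexplained claims and return a traceable record of who supported or criticized what.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Claim:
    """One node in the shared memory graph (all field names are illustrative)."""
    claim_id: str
    statement: str
    rationale: str  # the explanation that every claim is expected to carry
    author: str     # provenance: which mind contributed the claim
    purpose: str    # usage context, loosely modeled on the digital-genome idea


@dataclass
class MemoryGraph:
    """Shared store: claims are nodes, 'supports'/'critiques' links are edges."""
    claims: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)  # (source_id, relation, target_id)

    def add_claim(self, claim: Claim) -> None:
        # Reject any claim that arrives without an explanation.
        if not claim.rationale.strip():
            raise ValueError("a claim must come with a rationale")
        self.claims[claim.claim_id] = claim

    def link(self, source_id: str, relation: str, target_id: str) -> None:
        if relation not in {"supports", "critiques"}:
            raise ValueError(f"unknown relation: {relation}")
        if source_id not in self.claims or target_id not in self.claims:
            raise KeyError("both claims must already be in the graph")
        self.edges.append((source_id, relation, target_id))

    def trace(self, claim_id: str) -> list:
        """Return readable lines: the claim, then each claim linked to it."""
        target = self.claims[claim_id]
        lines = [f"{target.author} claims: {target.statement} "
                 f"(because {target.rationale})"]
        for source_id, relation, target_id in self.edges:
            if target_id == claim_id:
                source = self.claims[source_id]
                lines.append(f"{source.author} {relation}: {source.statement} "
                             f"(because {source.rationale})")
        return lines


# Two hypothetical minds, a planner and a critic, write to the same graph.
memory = MemoryGraph()
memory.add_claim(Claim("c1", "Route the request to a human expert",
                       "model confidence is below the agreed threshold",
                       author="planner", purpose="triage"))
memory.add_claim(Claim("c2", "The threshold was set for a different task",
                       "the calibration data came from another domain",
                       author="critic", purpose="triage"))
memory.link("c2", "critiques", "c1")
report = memory.trace("c1")
print("\n".join(report))
```

Because the critique is stored as an edge rather than overwriting the original claim, the disagreement itself stays in memory, which is one plausible reading of reasoning that is “dialogical” and “discernible”.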

3.2. Implications: So What?

- Conceptual Leap: This framework shifts the AI discourse from purely optimizing performance metrics to fostering relational understanding. It reframes AI progress not as a race for a single all-powerful model, but as the development of ecosystems of intelligent agents that learn to reason together. In doing so, it breaks the mold of thinking of intelligence as residing in one head (or one model), instead positioning intelligence as an emergent property of a network of interactions.

- Societal Value: By leveraging many minds, our approach offers a pathway to overcome the stagnation of human cognition in the face of accelerating machine capabilities. Rather than AI competing against humans, a Society of Minds is inherently a partnership model: it ensures that machine intelligence augments human intelligence, working with us in a transparent dialogue. This could help prevent scenarios where AI grows incomprehensible or uncontrollable – instead, humans remain in the loop as part of the society, and the combined system can tackle problems neither could solve alone.

- Organizational Impact: Practically, the Society of Minds reframes AI as a strategic operating system for organizations. Decision-making in complex domains (enterprise strategy, scientific R&D, governance) can be supported by a council of AI minds with different expertise, much like a diverse team of advisors. This means decisions are informed by multiple perspectives (financial, ethical, technical, human impact) all generated in tandem by the AI society powered by Mindful Machines. The outcome is a more robust and well-rounded analysis, reducing single-point failures in corporate or policy choices. In short, AI becomes less of a monolithic tool and more of a distributed, dialogical process embedded in organizational workflows.

- Ethics Reinvigorated: Our paradigm embeds ethical deliberation into the fabric of AI systems. Instead of relying solely on external compliance or post-hoc fixes, the Society of Minds has moral checks built in through its multi-mind governance. This reframes the AI alignment problem: alignment is no longer just tuning a single model’s behavior, but designing a society in which unethical proposals are caught and corrected by other members. It mirrors how pluralistic democratic societies prevent extreme actions through debate and checks, rather than trusting any one actor blindly. Such an AI would be easier to audit and regulate, since it produces explanations and divides power among units. It aligns closely with emerging principles of ethical AI by design, moving from external oversight to internal conscience.

- Philosophical Depth: By drawing on philosophical insights (Kant’s categories [14], Wittgenstein’s language-games [15], Habermas’s communicative rationality [16]), this framework grounds technical AI development in deeper theories of knowledge and meaning. Intelligence here is not just number-crunching; it’s relational, conversational, and normative. This helps bridge the gap between engineering and humanities in AI discourse. For instance, Wittgenstein argued that understanding is inherently social and contextual – our Society of Minds explicitly operationalizes that idea by requiring AI minds to negotiate meaning with each other and with humans. Habermas emphasized the importance of reason-giving in legitimate dialogue – our architecture ensures every decision can be questioned and explained. In effect, the paradigm connects AI’s future to enduring human questions about how knowledge is created, shared, and validated.

- Towards a Sustainable Future: We define “Mindful Machines” as AI systems [5,10] that are transparent, ethical, and collaborative by design, a stark contrast to the opaque, unyielding AI systems that dominate today. Embracing a Society of Minds vision steers AI development onto a more sustainable trajectory: one where progress is measured not just by capability, but by controllability and trustworthiness. It offers a hopeful vision where increasing machine intelligence does not equate to increasing risk, because greater capability is coupled with greater internal governance and wisdom. As AI pioneer Gary Marcus has implored, we should demand “a better form of AI”, one that earns our trust by how it operates, not just by its results. The Society of Minds paradigm is a direct response to that demand, suggesting that the path to wise AI is through constructing systems that inherently respect diverse perspectives, self-correct, and remain open to human guidance.
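The multi-mind governance described above, in which a proposal is caught and corrected by other members rather than trusted on its own, can be sketched as a simple review loop. The sketch below is a hedged illustration under stated assumptions, not a mechanism specified in this paper: `Proposal`, `deliberate`, the two reviewer functions, and their rules are hypothetical, and a human reviewer could be plugged in as just another callable.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Proposal:
    author: str
    action: str
    rationale: str


# A reviewer returns None to approve, or a string explaining its objection.
Reviewer = Callable[[Proposal], Optional[str]]


def ethics_reviewer(proposal: Proposal) -> Optional[str]:
    # Hypothetical rule: personal data may only be used with a consent rationale.
    if "personal data" in proposal.action and "consent" not in proposal.rationale:
        return "uses personal data without a consent-based rationale"
    return None


def explanation_reviewer(proposal: Proposal) -> Optional[str]:
    # Hypothetical rule: a rationale of fewer than three words cannot be audited.
    if len(proposal.rationale.split()) < 3:
        return "rationale is too thin to be questioned or audited"
    return None


def deliberate(proposal: Proposal,
               reviewers: dict[str, Reviewer]) -> tuple[bool, list[str]]:
    """Accept a proposal only if no reviewing mind objects; keep every reason."""
    objections = []
    for name, review in reviewers.items():
        reason = review(proposal)
        if reason is not None:
            objections.append(f"{name}: {reason}")
    return (not objections, objections)


council = {"ethics": ethics_reviewer, "explanation": explanation_reviewer}

risky = Proposal("marketing", "email offers using personal data",
                 "it should raise revenue")
accepted, objections = deliberate(risky, council)

revised = Proposal("marketing", "email offers using personal data",
                   "recipients gave consent at signup")
accepted_revised, objections_revised = deliberate(revised, council)

print(accepted, objections)
print(accepted_revised, objections_revised)
```

The design choice worth noting is that each objection is returned with its reason and the name of the objecting mind, so a rejected proposal leaves an auditable record instead of a silent veto.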

4. Discussion

4.1. Explanations, Epistemology, and Emergent Safety

4.2. Toward an Integrative AI Paradigm

5. Conclusions

5.1. The Implications of the Society of Minds Paradigm

5.2. The Choice Before Us

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Minsky, M. *The Society of Mind*; Simon & Schuster: New York, NY, USA, 1986.

- Marcus, G. Deep Learning: A Critical Appraisal. *arXiv* 2018, arXiv:1801.00631. Available online: https://arxiv.org/abs/1801.00631 (accessed on 24 September 2025).

- LeCun, Y. A Path Towards Autonomous Machine Intelligence. *Open Review* 2022. Available online: https://openreview.net/forum?id=BZ5a1r-kVsf (accessed on 24 September 2025).

- Hinton, G. The Future of Artificial Intelligence: Opportunities and Risks. *Philosophical Transactions of the Royal Society A* 2023, 381, 20220049. [CrossRef]

- Mikkilineni, R. General Theory of Information and Mindful Machines. *Proceedings* 2025, 126(1), 3. [CrossRef]

- Newell, A.; Simon, H.A. GPS, a Program that Simulates Human Thought. In *Computers and Thought*; Feigenbaum, E., Feldman, J., Eds.; McGraw-Hill: New York, NY, USA, 1963; pp. 279–293.

- Mikkilineni, R. General Theory of Information and Mindful Machines. *Proceedings* 2025, 126(1), 3. [CrossRef]

- Mikkilineni, R. Mark Burgin’s Legacy: The General Theory of Information, the Digital Genome, and the Future of Machine Intelligence. *Philosophies* 2023, 8, 107. [CrossRef]

- Kelly, W.P.; Coccaro, F.; Mikkilineni, R. General Theory of Information, Digital Genome, Large Language Models, and Medical Knowledge-Driven Digital Assistant. *Computer Sciences & Mathematics Forum* 2023, 8(1), 70. [CrossRef]

- Mikkilineni, R. A New Class of Autopoietic and Cognitive Machines. *Information* 2022, 13, 24. [CrossRef]

- TFPIS. What Is a Computer, and Is the Brain a Computer? 2024. Available online: https://tfpis.com/2024/04/21/what-is-a-computer-and-is-the-brain-a-computer/ (accessed on 24 September 2025).

- Hegel, G.W.F. *Phenomenology of Spirit*; Oxford University Press: Oxford, UK, 1977 (orig. 1807).

- OpenAI. GPT-4 Technical Report. *arXiv* 2023, arXiv:2303.08774. Available online: https://arxiv.org/abs/2303.08774 (accessed on 24 September 2025).

- Kant, I. *Critique of Pure Reason*; Cambridge University Press: Cambridge, UK, 1998 (orig. 1781).

- Wittgenstein, L. *Philosophical Investigations*; Blackwell: Oxford, UK, 1953.

- Habermas, J. *The Theory of Communicative Action*, Volume 1; Beacon Press: Boston, MA, USA, 1984.

- Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning Representations by Back-Propagating Errors. *Nature* 1986, 323, 533–536. [CrossRef]

- Nisa, U., Shirazi, M., Saip, M. A., & Mohd Pozi, M. S. (2025). Agentic AI: The age of reasoning—A review. Journal of Automation and Intelligence. [CrossRef]

- Popper, K. *Conjectures and Refutations: The Growth of Scientific Knowledge*; Routledge: London, UK, 1963.

- Deutsch, D. *The Fabric of Reality*; Penguin: London, UK, 1997.

- Deutsch, D. *The Beginning of Infinity: Explanations That Transform the World*, 1st American ed.; Viking: New York, NY, USA, 2011.

- Burgin, M.; Mikkilineni, R. On the Autopoietic and Cognitive Behavior of Digital Genomes. *EasyChair Preprint* No. 6261, 2021. Available online: https://easychair.org/preprints/preprint_download/hRr2 (accessed on 24 September 2025).

- Burgin, M. *Theory of Information: Fundamentality, Diversity and Unification*; World Scientific: Singapore, 2010. [CrossRef]

- Burgin, M. *Theory of Knowledge: Structures and Processes*; World Scientific Books: Singapore, 2016.

- Burgin, M. *Structural Reality*; Nova Science Publishers: New York, NY, USA, 2012.

- Burgin, M. Triadic Automata and Machines as Information Transformers. *Information* 2020, 11, 102. [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).